VxRail’s Latest Hardware Evolution

Thu, 04 Jan 2024 17:22:21 -0000

December is a time of celebration and anticipation, a month in which we may reflect on the events of the year and look ahead to what is yet to come. Charles Dickens’ “A Christmas Carol” – and its many stage and movie remakes – is one of those literary classics that helps showcase this season’s magic at its finest. It is even said that there is a special kind of magic—one full of excitement, innovation, and productivity—that finds a way to (hyper)converge the past, present, and future for data center administrators all around the world who have been good all year!

No, your wondering eyes do not deceive you. Appearing today are VxRail’s next generation platforms—the VE-660 and VP-760—in all-new, all-NVMe configurations! While Santa’s elves have spent the year building their backlog of toys and planning supply-chain delivery logistics that rival SLA standards of the world’s largest e-tailers, the VxRail team has been hard at work innovating our VxRail family portfolio to ensure that your workloads can run faster than ever before. So, let’s grab a glass of eggnog and invite the holiday spirits along for a tour of VxRail past, present, and future to better understand our latest portfolio addition.

Figure 1. VxRail VE-660

Figure 2. VxRail VP-760

Spirit of VxRail Past

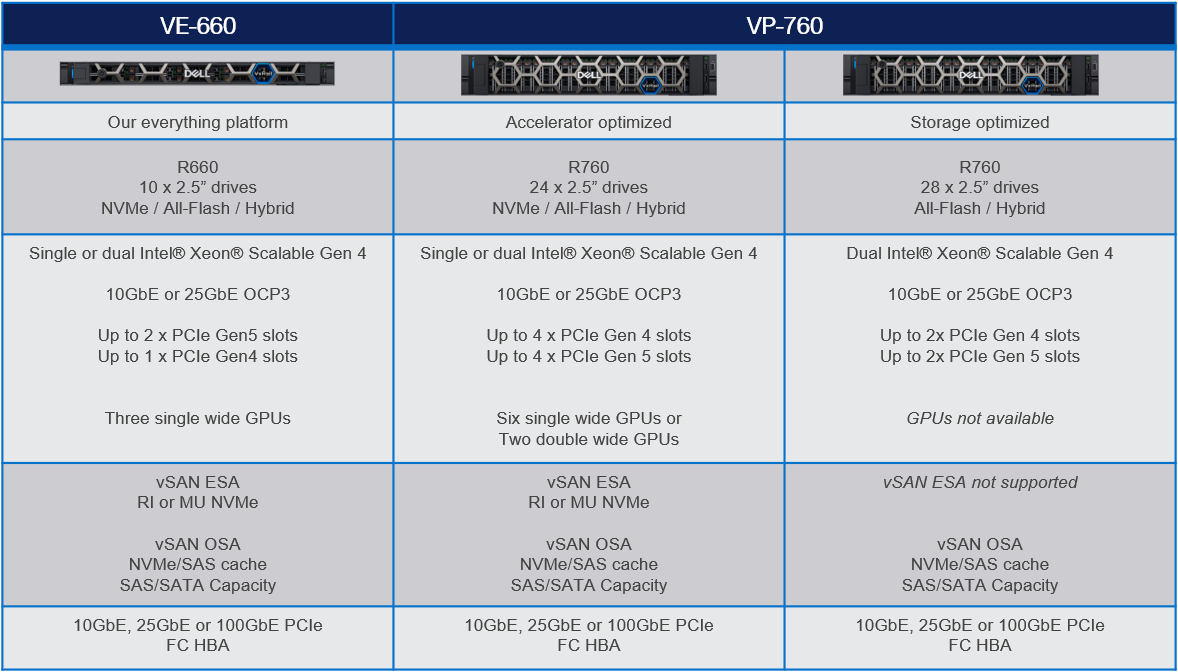

This was especially true earlier this summer when we launched the VE-660 and VP-760 VxRail platforms based on 16th Generation Dell PowerEdge servers. These next-gen successors to the VxRail E-Series and P-Series platforms not only contained the latest hardware innovations, but also represented a systemic change in the overall VxRail offering.

First, the mainline E- and P-series platforms were rechristened as the VE-660 and VP-760, respectively. This was done primarily to invite easier comparison with the underlying PowerEdge servers on which they’re based: the R660 and R760. Second, we tracked how the use of accelerators in the data center had evolved over the years and made the strategic decision to fold the capabilities of the V-Series platform into the P-Series by way of specific riser configurations. Now, customers can glean all the benefits of a high-performance 2U system with the choice of either storage-optimized (up to 28 total drive bays) or accelerator-optimized (up to 2x double-wide or 6x single-wide GPUs) chassis configurations, whichever best aligns with the specifics of their workload needs. And third, VxRail platforms dropped the storage type suffix from the model name. Hybrid and all-flash (and as of today, all-NVMe; more on this later) storage variants are now offered as part of the riser configuration selection options of these baseline platforms, where applicable.

These changes are representative of how the breadth and depth of customer needs have grown tremendously over the years. By taking these steps to streamline the VxRail portfolio, we charted an evolutionary path forward that continues our commitment to offer greater customer choice and flexibility.

Spirit of VxRail Present

These themes of greater choice and flexibility are amplified by the architectural improvements underpinning these new VxRail platforms. Primary among them is the introduction of Intel® 4th Generation Xeon® Scalable processors. Intel’s latest processors do more than bump VxRail core density per socket to 56 (112 max per node). They also come with built-in AMX accelerators (Advanced Matrix Extensions) that support AI and HPC workloads without the need for any additional drivers or hardware. For a deeper dive into the Intel® AMX capability set, the Spirit of VxRail Present invites you to read this blog: VxRail and Intel® AMX, Bringing AI Everywhere, authored by Una O’Herlihy.

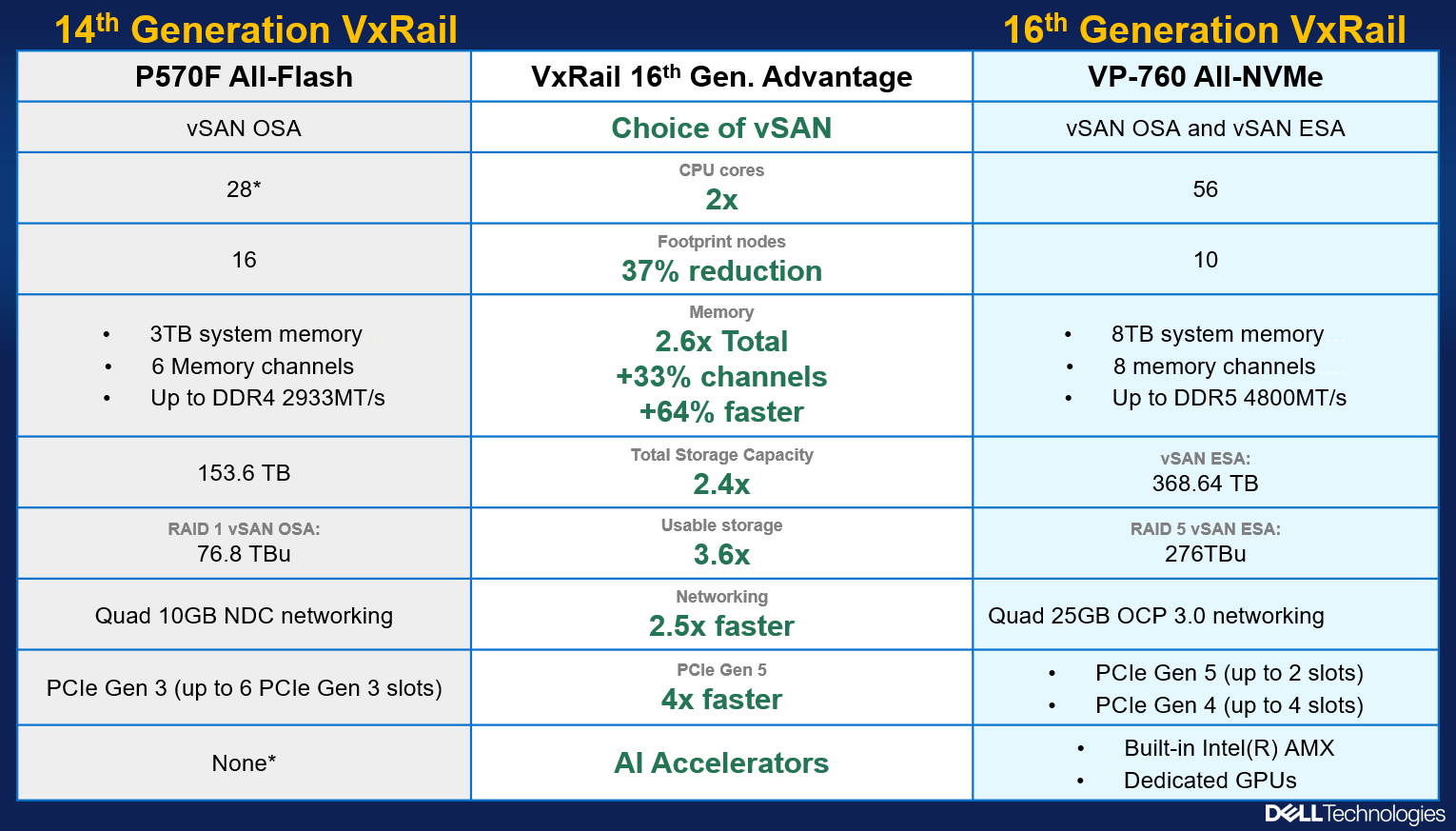

Intel’s latest processors also usher in support for DDR5 memory and PCIe Gen 5, two other architectural pillars that underpin significant jumps in performance. The following table offers a high-level overview and comparison of these pillars and a useful at-a-glance primer for those considering a technology refresh from earlier generation VxRail:

Table 1. VxRail 14th Generation to 16th Generation comparison

| | VxRail VE-660 & VP-760 | VxRail E560, P570 & V570 |
| --- | --- | --- |
| Intel Chipset | 4th Generation Xeon | 2nd Generation Xeon |
| Cores | 8 - 56 | 4 - 28 |
| TDP | 125W – 350W | 85W – 205W |
| Max DRAM Memory | 4TB per socket | 1.5TB per socket |
| Memory Channels | 8 (DDR5) | 6 (DDR4) |
| Memory Bandwidth | Up to 4800 MT/s | Up to 2933 MT/s |
| PCIe Generation | PCIe Gen 5 | PCIe Gen 3 |
| PCIe Lanes | 80 | 48 |
| PCIe Throughput (per lane) | 32 GT/s | 8 GT/s |

As the operational needs of a business change day-by-day, finding the right balance between workload density and load balance can often feel like an infinite war for resources. The adoption of DDR5 memory across the latest generation of VxRail platforms offers additional flexibility in the way system resources can be divvied up by virtue of two key benefits: greater memory density and faster bandwidth. The VE-660 and VP-760 wield eight memory channels per processor, with the ability to slot up to two 4800MT/s DIMMs per channel for a maximum memory capacity of 8TB per node. Compared to a VxRail P570, the density and speed improvements are staggering: 33% more memory channels per processor, 2.6x increase in per system total memory, and up to a 64% increase in memory speed! With faster and greater density compute and memory available for workloads, each node in a VxRail cluster can handle more VMs, and if there is ever a case of task bottlenecking, there are plenty of resources still available for optimal load balancing.
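The generational memory figures quoted here can be reproduced from the values in Table 1. The short sketch below is only an illustration: it assumes both generations are two-socket nodes (so the P570 tops out at 2 x 1.5TB per node), and the variable names are made up for this example.

```python
# Figures taken from Table 1 and the surrounding text.
# Assumption: both platforms are two-socket nodes, so per-node capacity is
# twice the per-socket maximum (3 TB for the P570, 8 TB for the VE-660/VP-760).
SOCKETS_PER_NODE = 2

p570 = {"channels": 6, "speed_mts": 2933, "tb_per_socket": 1.5}
vp760 = {"channels": 8, "speed_mts": 4800, "tb_per_socket": 4.0}


def percent_increase(new: float, old: float) -> float:
    """Return the relative increase of new over old, in percent."""
    return (new - old) / old * 100


channel_gain = percent_increase(vp760["channels"], p570["channels"])
speed_gain = percent_increase(vp760["speed_mts"], p570["speed_mts"])
capacity_ratio = (vp760["tb_per_socket"] * SOCKETS_PER_NODE) / (
    p570["tb_per_socket"] * SOCKETS_PER_NODE
)

print(f"Memory channels per processor: +{channel_gain:.0f}%")
print(f"Memory speed: +{speed_gain:.0f}%")
print(f"Total memory per node: {capacity_ratio:.2f}x")
```

Under these assumptions the capacity ratio works out to about 2.67x, which the text gives as 2.6x.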

When we consider the presence of PCIe Gen 5, we see an even greater increase in the overall performance envelope. PowerEdge’s Next-Generation Tech Note does a great job of contextualizing the capabilities of PCIe Gen 5. The main takeaway for VxRail, however, is that it increases the maximum bandwidth achievable from various peripheral components by roughly 25% when compared to PCIe Gen 4 and roughly 66% when compared to PCIe Gen 3. In particular, the jump in available PCIe lanes (48 lanes to a luxurious 80 lanes) and associated throughput (8 GT/s to 32 GT/s per lane) from Gen 3 to Gen 5 significantly reduces performance bottlenecks, resulting in faster storage transfer rates and more bandwidth for accelerators to process AI and ML workloads.
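As a rough check on the Gen 3 to Gen 5 comparison, the sketch below uses only the lane counts and per-lane transfer rates from Table 1. It works in raw GT/s, so it ignores encoding and protocol overhead, and the names are illustrative. Note that the roughly 66% figure lines up with the growth in lane count (48 to 80), while the per-lane transfer rate itself quadruples.

```python
# Lane counts and per-lane transfer rates from Table 1.
# Raw transfer rates only: encoding and protocol overhead are ignored.
gen3 = {"lanes": 48, "gt_per_s_per_lane": 8}
gen5 = {"lanes": 80, "gt_per_s_per_lane": 32}


def aggregate_gt_per_s(platform: dict) -> int:
    """Raw aggregate transfer rate across all lanes, in GT/s."""
    return platform["lanes"] * platform["gt_per_s_per_lane"]


lane_gain_pct = (gen5["lanes"] - gen3["lanes"]) / gen3["lanes"] * 100
per_lane_ratio = gen5["gt_per_s_per_lane"] / gen3["gt_per_s_per_lane"]
aggregate_ratio = aggregate_gt_per_s(gen5) / aggregate_gt_per_s(gen3)

print(f"Lane count increase: {lane_gain_pct:.1f}%")
print(f"Per-lane transfer rate: {per_lane_ratio:.0f}x")
print(f"Raw aggregate transfer rate: {aggregate_ratio:.2f}x")
```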

PCIe Gen 5 is also backwards compatible with previous generation peripherals, enabling a certain degree of flexibility with respect to VxRail’s component extensibility and longevity in the data center. Yesterday’s technologies can still be used, but the VE-660 and VP-760 can adapt to growing workload demands by taking full advantage of the latest peripherals as they are released. They are even equipped with an additional PCIe slot over their E- & P-Series predecessors, providing extra dimensions of configuration. These boons in flexibility ensure any investment into this generation of VxRail enjoys longer relevance as your infrastructure backbone.

Spirit of VxRail Future

Even with all these architectural improvements defining the VP-760 and VE-660, we knew we could find ways of improving the capability set. So, we made our list of desired features (and checked it twice!) and determined that the best way to augment these next-generation hardware enhancements would be with the introduction of all-NVMe storage options.

The Spirit of VxRail Past wishes to remind us that VxRail with all-NVMe storage is not new—NVMe first made its way to the VxRail lineup with the P580N and E560N almost four years ago and has been a mainstay facet of the VxRail with vSAN architecture ever since. However, what is most compelling about all-NVMe versions of the VE-660 and VP-760—what the Spirit of VxRail Future wishes to strongly communicate—is that NVMe opens the door to two very compelling benefits: additional flexibility of choice with respect to vSAN architecture and an associated increase in overall storage capacity with the addition of read intensive NVMe drives in sizes of up to 15.36TB.

The following figure outlines all of the generational advantages customers can benefit from when transitioning from existing 14th Generation VxRail environments to VP-760 all-NVMe platforms.

Figure 4. The VxRail 16th Generation all-NVMe advantage

In addition, VxRail on 16th Generation hardware can now support deployments with either vSAN Original Storage Architecture (OSA) or vSAN Express Storage Architecture (ESA). David Glynn provided a great summary of the core value vSAN ESA brings to the table for VxRail in his blog written nearly a year ago. With today’s launch, the VP-760 and VE-660 can now take advantage of vSAN ESA’s single-tier storage architecture that enables RAID-5 resiliency and capacity with RAID-1 performance. Customers who choose to deploy with vSAN OSA can also see the benefit of these new read intensive NVMe drives, with a total storage per node of up to 122.88TB in the VE-660 and 322.56TB in the VP-760. For those who deploy with vSAN ESA, maximum achievable storage is 153.6TB on the VE-660 and up to 368.64TB on the VP-760.
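To put the per-node maximums in context, the sketch below divides each quoted capacity by the 15.36TB drive size mentioned earlier. The resulting drive counts are derived values, not figures stated in this blog, and they rest on the assumption that the maximum configurations consist entirely of 15.36TB capacity drives.

```python
# Maximum capacities per node as quoted in the text, in TB.
DRIVE_TB = 15.36  # largest read intensive NVMe drive mentioned in the text

max_capacity_tb = {
    ("VE-660", "OSA"): 122.88,
    ("VP-760", "OSA"): 322.56,
    ("VE-660", "ESA"): 153.6,
    ("VP-760", "ESA"): 368.64,
}

# Assumption: every capacity drive is a 15.36 TB drive, so the implied
# drive count is simply capacity / drive size (rounded to absorb float noise).
implied_drives = {
    key: round(capacity / DRIVE_TB) for key, capacity in max_capacity_tb.items()
}

# Relative capacity gain when moving from OSA to ESA on the same platform.
esa_gain_pct = {
    model: (max_capacity_tb[(model, "ESA")] / max_capacity_tb[(model, "OSA")] - 1) * 100
    for model in ("VE-660", "VP-760")
}

for (model, arch), count in implied_drives.items():
    print(f"{model} {arch}: {count} x {DRIVE_TB} TB")
for model, gain in esa_gain_pct.items():
    print(f"{model}: ESA offers {gain:.1f}% more raw capacity than OSA")
```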

The Spirit of VxRail Future has seen the value of all-NVMe and is content knowing that VxRail will continue to underpin VMware mission-critical workloads for years to come.

Resources

Author: Mike Athanasiou, Sr. Engineering Technologist

Related Blog Posts

Learn About the Latest VMware Cloud Foundation 5.1.1 on Dell VxRail 8.0.210 Release

Tue, 26 Mar 2024 18:47:52 -0000

The latest VCF on VxRail release delivers GenAI-ready infrastructure, runs more demanding workloads, and is an excellent choice for supporting hardware tech refreshes and achieving higher consolidation ratios.

VMware Cloud Foundation 5.1.1 on VxRail 8.0.210 is a minor release from the perspective of versioning and new functionality but is significant in terms of support for the latest VxRail hardware platforms. This new release is based on the latest software bill of materials (BOM) featuring vSphere 8.0 U2b, vSAN 8.0 U2b, and NSX 4.1.2.3. Read on for more details…

VxRail hardware platform updates

16th generation VxRail VE-660 and VP-760 hardware platform support

Cloud Foundation on VxRail customers can now benefit from the latest, more scalable, and robust 16th generation hardware platforms. This includes a full spectrum of hybrid, all-flash, and all-NVMe options that have been qualified to run VxRail 8.0.210 software. This is fantastic news as these new hardware options bring many technical innovations, which my colleagues discussed in detail in previous blogs.

These new hardware platforms are based on Intel® 4th Generation Xeon® Scalable processors, which increase VxRail core density per socket to 56 (112 max per node). They also come with built-in Intel® AMX accelerators (Advanced Matrix Extensions) that support AI and HPC workloads without the need for additional drivers or hardware.

VxRail on the 16th generation hardware supports deployments with either vSAN Original Storage Architecture (OSA) or vSAN Express Storage Architecture (ESA). The VP-760 and VE-660 can take advantage of vSAN ESA’s single-tier storage architecture, which enables RAID-5 resiliency and capacity with RAID-1 performance.

This table summarizes the configurations of the newly added platforms:

To learn more about the VE-660 and VP-760 platforms, please check Mike Athanasiou’s VxRail’s Latest Hardware Evolution blog. To learn more about Intel® AMX capability set, make sure to check out the VxRail and Intel® AMX, Bringing AI Everywhere blog, authored by Una O’Herlihy.

VCF on VxRail LCM updates

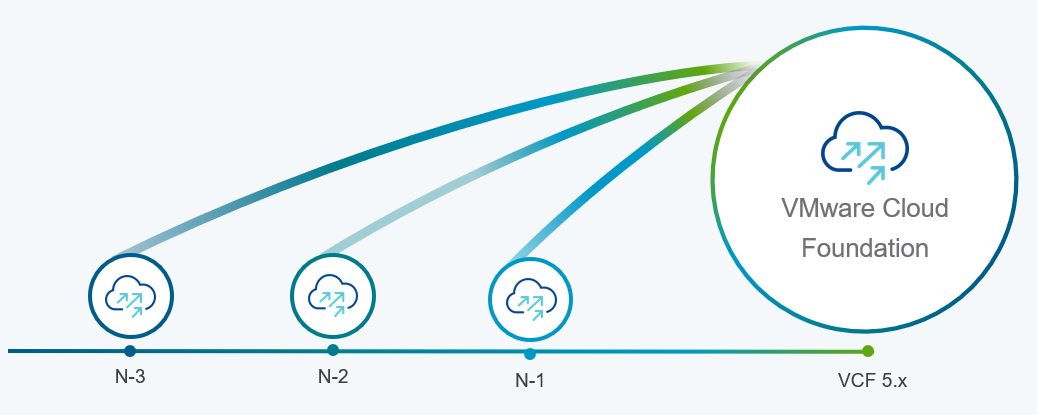

Support upgrades to VCF 5.1.1 from existing VCF 4.4.x and higher environments (N-3 upgrade support)

Customers who have already upgraded to VCF 5.x are familiar with the concept of the skip-level upgrade, which allows them to upgrade directly to the latest 5.x release without performing upgrades to the interim versions. It significantly reduces the time required to perform the upgrade and enhances the overall upgrade experience. VCF 5.1.1 introduces so-called “N-3” upgrade support (as illustrated in the following diagram), which extends the skip-level upgrade to VCF 4.4.x. This means customers can now perform a direct LCM upgrade operation from VCF 4.4.x, 4.5.x, 5.0.x, and 5.1.0 to VCF 5.1.1.
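The supported source versions listed above can be expressed as a simple eligibility check. This is a minimal sketch for planning purposes only: `can_upgrade_directly` is a hypothetical helper written for this example, not part of SDDC Manager or any VMware or Dell API, and it encodes only the versions named in this post.

```python
# Release lines from which a direct LCM upgrade to VCF 5.1.1 is described
# in this post: 4.4.x, 4.5.x, 5.0.x (any patch level) and exactly 5.1.0.
SKIP_LEVEL_LINES = {(4, 4), (4, 5), (5, 0)}
EXACT_VERSIONS = {(5, 1, 0)}


def can_upgrade_directly(version: str) -> bool:
    """Hypothetical helper: True if `version` is a listed source for 5.1.1."""
    parts = tuple(int(p) for p in version.split("."))
    # Pad short versions such as "5.1" to three components (5, 1, 0).
    parts = parts + (0,) * (3 - len(parts))
    return parts[:2] in SKIP_LEVEL_LINES or parts[:3] in EXACT_VERSIONS


for candidate in ("4.3.1", "4.4.1", "4.5.2", "5.0.0", "5.1.0", "5.1.1"):
    status = "direct upgrade" if can_upgrade_directly(candidate) else "not listed"
    print(f"VCF {candidate}: {status}")
```

Always confirm the actual upgrade path against the release notes for your environment before planning an upgrade.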

VCF licensing changes

Simplified licensing using a single solution license key

Starting with VCF 5.1.1, vCenter Server, ESXi, and TKG component licenses are now entered using a single “VCF Solution License” key. This helps to simplify the licensing by minimizing the number of individual component keys that require separate management. VMware NSX Networking, HCX, and VMware Aria Suite components are automatically entitled from the vCenter Server post-deployment. The single licensing key and existing keyed licenses will continue to work in parallel.

Removal of VCF+ cloud-connected subscriptions as a supported VCF licensing type

The other significant licensing change is the deprecation of VCF+ licensing, which the new subscription model has replaced.

Support for deploying or expanding VCF instances using Evaluation Mode

VMware Cloud Foundation 5.1.1 allows deploying a new VCF instance in evaluation mode without needing to enter license keys. An administrator has 60 days to enter licensing for the deployment, and SDDC Manager is fully functional during this time. The workflows for expanding a cluster, adding a new cluster, or creating a VI workload domain also provide an option to license later within a 60-day timeframe.

For more comprehensive information about changes in VCF licensing, please consult the VMware website.

Core VxRail enhancements

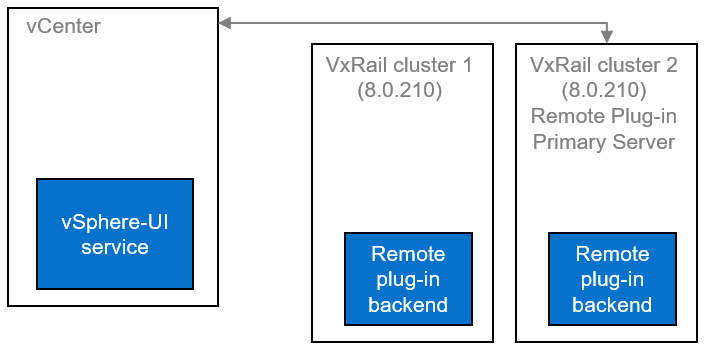

Support for remote vCenter plug-in

One of the notable enhancements in VxRail 8.0.210 is the adoption of the vSphere Client remote plug-in architecture. This follows the latest vSphere architecture guidelines, as local plug-ins are deprecated in vSphere 8.0 and won’t be supported in vSphere 9.0. The vSphere Client remote plug-in architecture allows plug-in functionality to be integrated without running inside vCenter Server. It’s a more robust architecture that separates vCenter Server from plug-ins and provides more security, flexibility, and scalability when choosing programming frameworks and introducing new features. Starting with 8.0.210, a new VxRail Manager remote plug-in is deployed in the VxRail Manager Appliance.

LCM enhancements, including improved VxRail pre-checks and self-remediation of iDRAC issues.

VxRail 8.0.210 also comes with several small features based on customer feedback that combine to improve the reliability of the LCM experience. These include:

- VxRail Manager root disk space precheck prevents upgrade errors related to lack of disk space (for rpm-based upgrades).

- Self-remediation of iDRAC issues during LCM upgrades provides a more reliable firmware upgrade experience. By clearing the iDRAC job queue and resetting the iDRAC, the process may recover from a firmware update failure.

Serviceability enhancements, including improved expansion pre-checks, external storage reporting, and improved troubleshooting capabilities.

Another group of features contributes to overall improved serviceability and visibility into the system:

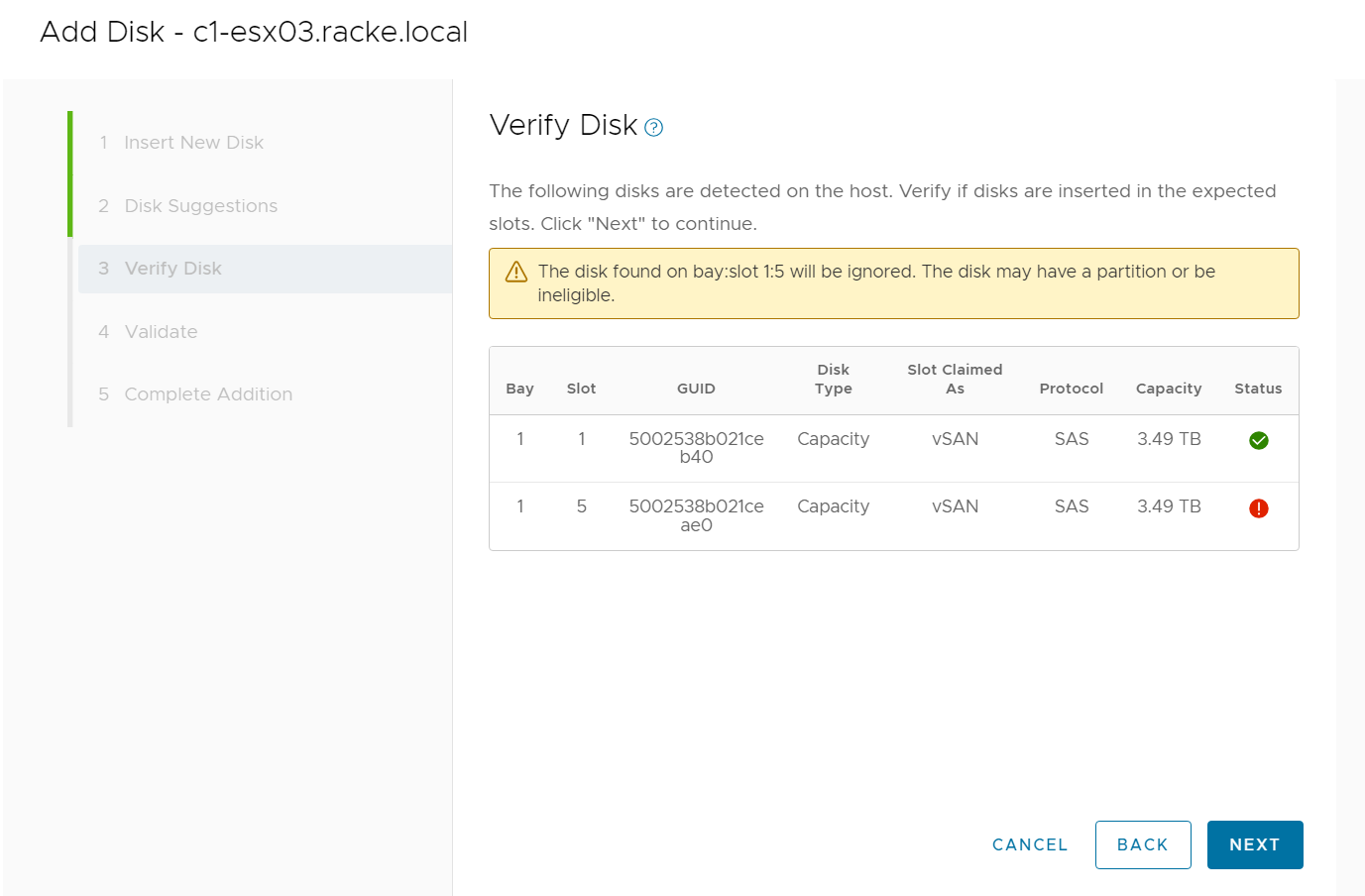

- The UI now implements new errors and warnings for incompatible disks when the user tries to add an incompatible disk during the disk addition process (see the following figure)

- The improved hardware views report on storage capacity and utilization for dynamic nodes, improving the overall visibility for the external storage attached to dynamic nodes directly from the vSphere Client.

- VxRail cluster troubleshooting efficiency has improved thanks to better standardization of log format and event grooming for disk exhaustion.

- The improved node-add health checks reduce the risk of adding a faulty or mismatched node to a VxRail cluster.

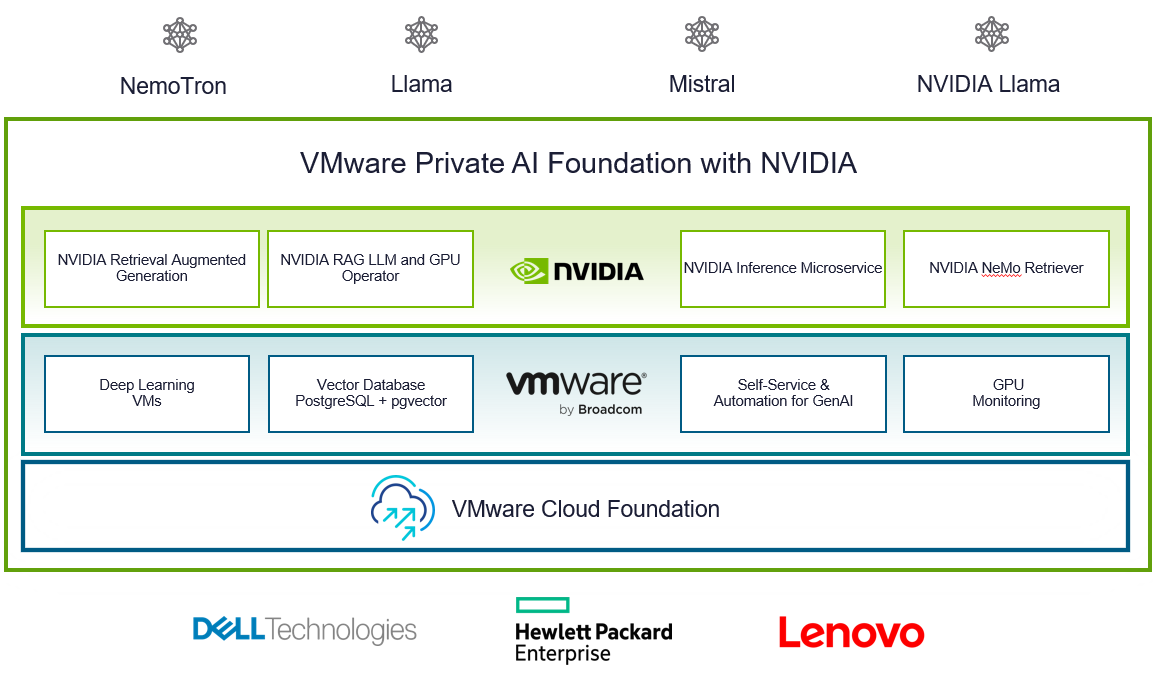

VMware Private AI Foundation with NVIDIA

With VCF 5.1.1, VMware introduces VMware Private AI Foundation with NVIDIA as Initial Access. Dell Technologies Engineering intends to validate this feature when it is generally available.

This solution aims to help enterprise customers adopt Generative AI capabilities more easily and securely by providing a cost-effective, high-performance, and secure environment for delivering business value from Large Language Models (LLMs) using their private data.

Summary

The new VCF 5.1.1 on VxRail 8.0.210 release is an excellent option for customers looking for a hardware refresh, Gen AI-ready infrastructure to run more demanding workloads, or to achieve higher consolidation ratios. Additional enhancements introduced in the core VxRail functionality improve the overall LCM experience, serviceability, and visibility into the system.

Thank you for your time, and please check the additional resources if you would like to learn more.

Resources

- VxRail’s Latest Hardware Evolution blog

- VxRail and Intel® AMX, Bringing AI Everywhere

- VxRail product page

- VxRail Infohub page

- VxRail Videos

- VMware Cloud Foundation on Dell VxRail Release Notes

- VCF on VxRail Interactive Demo

- VMware Product Lifecycle Matrix

Author: Karol Boguniewicz

Twitter: @cl0udguide

100 GbE Networking – Harness the Performance of vSAN Express Storage Architecture

Wed, 05 Apr 2023 12:48:50 -0000

For a few years, 25 GbE networking has been the mainstay of rack networking, with 100 GbE reserved for uplinks to spine or aggregation switches. 25 GbE provides a significant leap in bandwidth over 10 GbE, and today carries no outstanding price premium over 10 GbE, making it a clear winner for new buildouts. But should we still be continuing with this winning 25 GbE strategy? Is it time to look to a future of 100 GbE networking within the rack? Or is that future now?

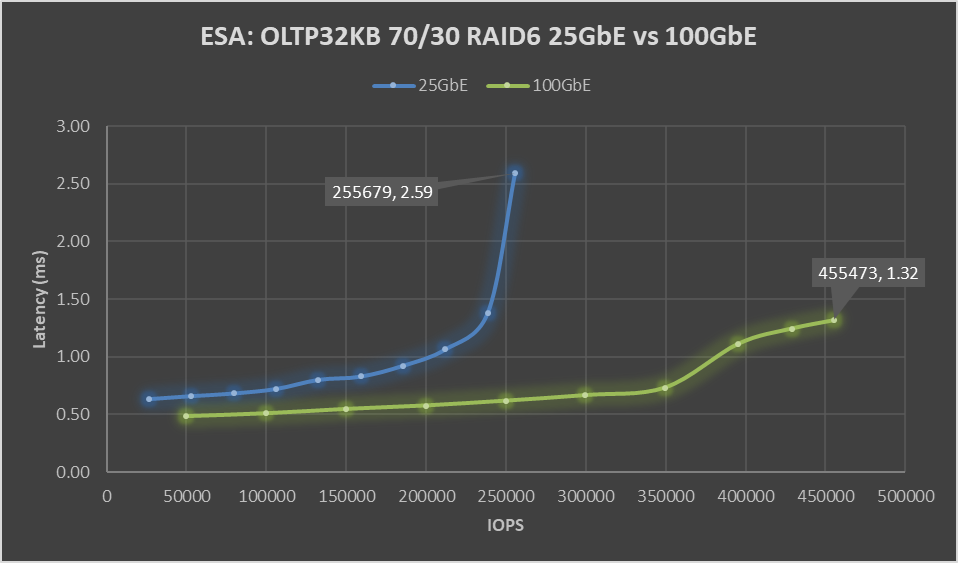

This question stems from my last blog post, VxRail with vSAN Express Storage Architecture (ESA), where I called out VMware’s recommendation of 100 GbE for maximum performance. But just how much more performance can vSAN ESA deliver with 100 GbE networking? VxRail is fortunate to have its own performance team, who stood up two six-node VxRail with vSAN ESA clusters that were identical except for the networking. One was configured with Broadcom 57414 25 GbE networking, and the other with Broadcom 57508 100 GbE networking. For more VxRail white papers, guides, and blog posts, visit the VxRail Info Hub.

When it comes to benchmark tests, there is a large variety to choose from. Some benchmark tests are ideal for generating headline hero numbers for marketing purposes – think quarter-mile drag racing. Others are good for helping with diagnosing issues. Finally, there are benchmark tests that are reflective of real-world workloads. OLTP32K is a popular one: it reflects online transaction processing with a 70/30 read-write split and a 32K block size, a profile in line with the aggregated results from thousands of Live Optics workload observations across millions of servers.

One more thing before we get to the results of the VxRail Performance Team's testing: the environment configuration. We used a storage policy of erasure coding with a failure tolerance of two and compression enabled.

When VMware announced vSAN with Express Storage Architecture, they published a series of blogs, all of which I encourage you to read. But as part of our 25 GbE vs 100 GbE testing, we also wanted to verify the astounding claims of RAID-5/6 with the Performance of RAID-1 using the vSAN Express Storage Architecture and vSAN 8 Compression - Express Storage Architecture. In short, forget the normal rules of storage performance; VMware threw that book out of the window. We didn’t throw our copy out of the window, well, not at first, but once our results validated their claims… it went out.

Let’s look at the data: Boom!

Figure 1. ESA: OLTP32KB 70/30 RAID6 25 GbE vs 100 GbE performance graph

Boom! A 78% increase in peak IOPS with a substantial 49% drop in latency. This is a HUGE increase in performance, and the sole difference is the use of the Broadcom 57508 100 GbE networking. Also, check out that latency ramp-up on the 25 GbE line: it’s just like hitting a wall, while the 100 GbE line stays almost flat.

But nobody runs constantly at 100%, or at least they shouldn’t. 60 to 70% of absolute max is typically a normal, comfortable day-to-day peak workload, leaving some headroom for spikes or node maintenance. At that range, there is an 88% increase in IOPS with a 19 to 21% drop in latency; the smaller drop in latency is attributable to the 25 GbE configuration not yet hitting a wall. As much as applications like high performance, what they need is performance delivered with consistent and predictable latency, and if that latency is low, all the better. If we focus on just latency, the 100 GbE networking enabled 350K IOPS to be delivered at 0.73 ms, while the 25 GbE networking can squeak out 106K IOPS at 0.72 ms. That may not be the fairest of comparisons, but it does highlight how much 100 GbE networking can benefit latency-sensitive workloads.

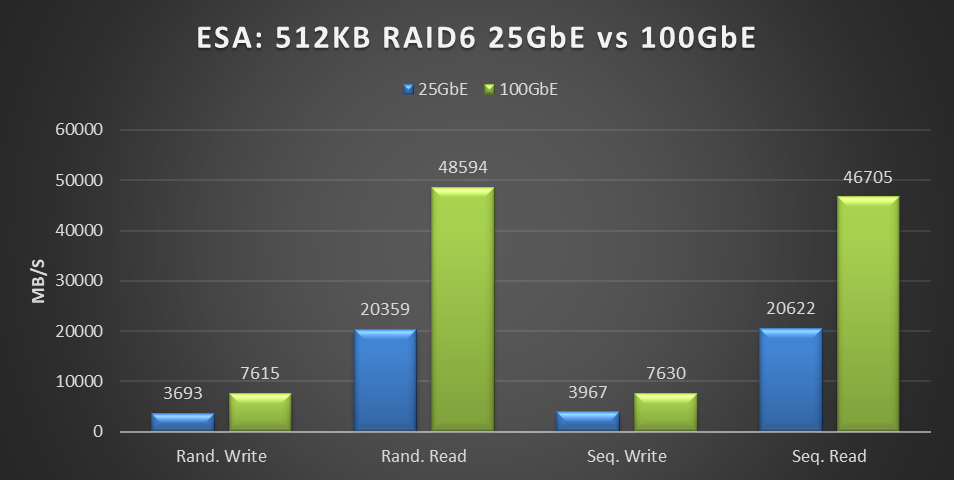

Boom, again! This benchmark is not reflective of real-world workloads but is a diagnostic test that stresses the network with its 100% read-and-write workloads. Can this find the bottleneck that 25 GbE hit in the previous benchmark?

Figure 2. ESA: 512KB RAID6 25 GbE vs 100 GbE performance graph

This testing was performed on a six-node cluster, with each node contributing one-sixth of the throughput shown in this graph. The 25 GbE cluster delivered 20,359 MB/s of random read throughput, or 3,393 MB/s per node, which is slightly above the theoretical maximum throughput of 3,125 MB/s that 25 GbE can deliver. This is the absolute maximum that 25 GbE can deliver! In the world of HCI, the virtual machine workload is co-resident with the storage. As a result, some of the IO is local to the workload, resulting in higher than theoretical throughput. For comparison, the 100 GbE cluster achieved 48,594 MB/s of random read throughput, or 8,099 MB/s per node out of a theoretical maximum of 12,500 MB/s.
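The per-node numbers and theoretical limits in this paragraph follow from simple arithmetic, sketched below. The sketch assumes decimal units (1 Gb/s = 1000 Mb/s, 8 bits per byte) and compares each node against a single link's line rate, as the text does; the function and variable names are illustrative.

```python
NODES = 6  # six-node clusters, as described in the text

# Measured cluster-wide random read throughput in MB/s, keyed by link speed.
cluster_read_mb_s = {25: 20359, 100: 48594}


def line_rate_mb_s(link_gbps: int) -> float:
    """Theoretical line rate of a single link in MB/s (decimal units)."""
    return link_gbps * 1000 / 8


for link_gbps, total in cluster_read_mb_s.items():
    per_node = total / NODES
    limit = line_rate_mb_s(link_gbps)
    print(
        f"{link_gbps} GbE: {per_node:,.0f} MB/s per node, "
        f"{per_node / limit:.0%} of the {limit:,.0f} MB/s line rate"
    )
```

A result above 100% for 25 GbE is consistent with the point about local IO: reads served from the node where the VM runs never cross the network.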

But this is just the first release of the Express Storage Architecture. In the past, VMware has added significant gains to vSAN, as seen in the lab-based performance analysis of Harnessing the Performance of Dell EMC VxRail 7.0.100. We can only speculate on what else they have in store to improve upon this initial release.

What about costs, you ask? Street pricing can vary greatly depending on the region, so it's best to reach out to your Dell account team for local pricing information. Using US list pricing as of March 2023, I got the following:

| Component | Dell PN | List price | Per port | 25GbE | 100GbE |
| --- | --- | --- | --- | --- | --- |
| Broadcom 57414 dual 25 Gb | 540-BBUJ | $769 | $385 | $385 | |
| S5248F-ON 48 port 25 GbE | 210-APEX | $59,216 | $1,234 | $1,234 | |
| 25 GbE Passive Copper DAC | 470-BBCX | $125 | $125 | $125 | |
| Broadcom 57508 dual 100Gb | 540-BDEF | $2,484 | $1,242 | | $1,242 |
| S5232F-ON 32 port 100 GbE | 210-APHK | $62,475 | $1,952 | | $1,952 |
| 100 GbE Passive Copper DAC | 470-ABOX | $360 | $360 | | $360 |
| Total per port | | | | $1,743 | $3,554 |

Overall, the per-port cost of the 100 GbE equipment was 2.04 times that of the 25 GbE equipment. However, this doubling of network cost provides four times the bandwidth, a 78% increase in storage performance, and a 49% reduction in latency.

If your workload is IOPS-bound or latency-sensitive and you had planned to address this issue by adding more VxRail nodes, consider this a wakeup call. Adding dual 100Gb came at a total list cost of $42,648 for the twelve ports used. This cost is significantly less than the list price of a single VxRail node and a fraction of the list cost of adding enough VxRail nodes to achieve the same level of performance increase.
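For readers who want to rerun the cost comparison with their own pricing, here is a minimal sketch of the per-port arithmetic using the March 2023 US list prices quoted in this post. It assumes each component's price is divided evenly across its ports and that a DAC cable counts as one port; the names are illustrative.

```python
# (list price in USD, number of ports the price is spread across)
components_25gbe = [(769, 2), (59216, 48), (125, 1)]    # NIC, switch, DAC
components_100gbe = [(2484, 2), (62475, 32), (360, 1)]  # NIC, switch, DAC

PORTS_USED = 12  # six nodes with dual-port NICs, per the text


def per_port_cost(components: list[tuple[int, int]]) -> float:
    """Sum each component's list price divided by its port count."""
    return sum(price / ports for price, ports in components)


cost_25 = per_port_cost(components_25gbe)
cost_100 = per_port_cost(components_100gbe)
cost_ratio = cost_100 / cost_25

# The post totals the rounded per-port figure, so round before multiplying.
total_100 = round(cost_100) * PORTS_USED

print(f"25 GbE per port:  ${cost_25:,.0f}")
print(f"100 GbE per port: ${cost_100:,.0f}")
print(f"Cost ratio: {cost_ratio:.2f}x for 4x the bandwidth")
print(f"100 GbE total for {PORTS_USED} ports: ${total_100:,}")
```

Swapping in local street pricing for the list prices is the only change needed to adapt the comparison.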

Reach out to your networking team; they would be delighted to help deploy the 100 Gb switches your savings funded. If decision-makers need further encouragement, point them to the white paper on this same topic, Dell VxRail Performance Analysis (similar content, just more formal), and to VMware's vSAN 8 Total Cost of Ownership white paper.

While 25 GbE has its place in the datacenter, when it comes to deploying vSAN Express Storage Architecture, it's clear that we're moving beyond it and onto 100 GbE. The future is now 100 GbE, and we thank Broadcom for joining us on this journey.