VxRail and Intel® AMX, Bringing AI Everywhere

Wed, 13 Dec 2023 22:54:31 -0000


We have seen exponential growth and adoption of Artificial Intelligence (AI) across nearly every sector in recent years, with many organizations implementing their AI strategies as soon as possible to tap into the benefits and efficiencies AI has to offer.

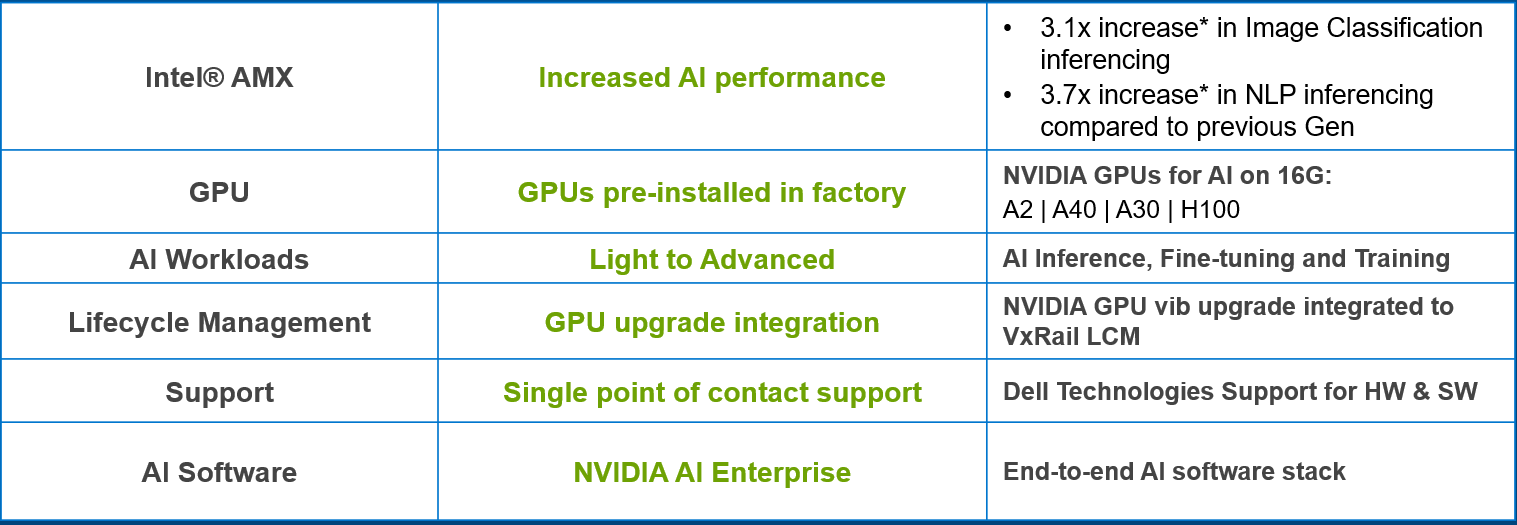

With our VxRail platforms, we have been supporting fully integrated and pre-installed GPUs for many years, with an array of NVIDIA GPUs available that already cater for high-performance compute, graphics, and, of course, AI workloads.

These GPUs are stood up as part of the first-run deployment of your VxRail system (though NVIDIA licensing is separate) and are displayed and managed in vCenter. On top of this integration with vSphere and VxRail Manager, GPU lifecycle management is handled through vLCM: the GPU VIB is added to the VxRail LCM bundle and upgraded as part of the VxRail upgrade process.

When considering the type of accelerator you need for your VxRail system, there is now an additional option beyond discrete GPUs, one that may offer just enough acceleration to cater to your AI workloads.

Figure 1. Embrace AI with VxRail


Our VxRail 16th Generation platforms, launched this past summer, come with a choice of 4th Generation Intel® Xeon® Scalable processors, all of which include built-in accelerators called Intel® Advanced Matrix Extensions (AMX) that are embedded in every core of the processor. The Intel® AMX accelerator, which benefits both AI and HPC workloads, is supported out of the box and comes as standard without any requirement for drivers, special hardware, or additional licensing.
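If you want to confirm that Intel® AMX is visible to a workload, one option is to look at the CPU feature flags from inside a Linux guest. The following is a minimal sketch, assuming a Linux VM whose virtual hardware exposes the host CPU features; the helper names `amx_flags_present` and `read_cpuinfo` are hypothetical, while the flag names are the ones the Linux kernel reports in `/proc/cpuinfo`.

```python
from pathlib import Path

# Feature flags the Linux kernel reports for Intel AMX in /proc/cpuinfo.
AMX_FLAGS = ("amx_tile", "amx_int8", "amx_bf16")


def amx_flags_present(cpuinfo_text: str) -> dict:
    """Return {flag: bool} for each AMX flag found in cpuinfo-style text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        # The "flags" line lists every CPU feature, separated by spaces.
        if line.startswith("flags") and ":" in line:
            flags.update(line.split(":", 1)[1].split())
    return {name: name in flags for name in AMX_FLAGS}


def read_cpuinfo(path: str = "/proc/cpuinfo") -> str:
    """Read the cpuinfo file; return an empty string if it does not exist."""
    p = Path(path)
    return p.read_text() if p.exists() else ""


if __name__ == "__main__":
    # On a system without AMX (or a non-Linux system) every value is False.
    print(amx_flags_present(read_cpuinfo()))
```

If all three flags come back `True`, the guest can see the tile registers and both supported data types. If they come back `False` on a 16th Generation node, the VM hardware version or guest kernel version would be the first things to check.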

In this blog, we will cover the performance testing carried out by our VxRail Performance team in conjunction with Intel, as well as the gains we can expect to see when running AI inferencing workloads on our VxRail 16th Generation platforms leveraging Intel® AMX.

But first – what is Intel® AMX and how does it work?

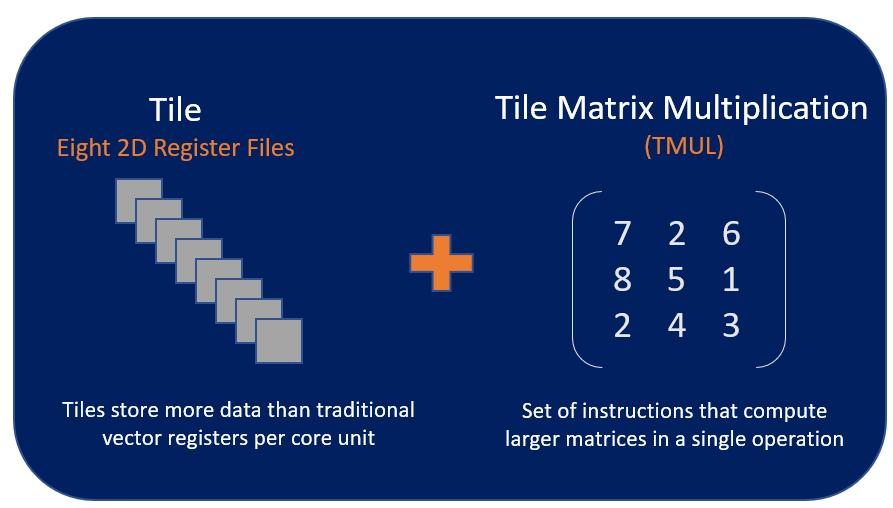

Intel® AMX’s architecture consists of two components:

- Tiles – eight two-dimensional registers, each 1 kilobyte in size, that store large chunks of data

- Tile Matrix Multiplication (TMUL) – an accelerator engine attached to the tiles that performs matrix-multiply computations for AI

The accelerator works by combining these larger 2D register files, called tiles, with a set of matrix multiplication instructions. This brings the type of matrix compute functionality that you commonly find in dedicated AI accelerators (such as GPUs) directly into the CPU cores, allowing AI workloads to run on the CPU instead of being offloaded to dedicated GPUs.
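To make the tile and TMUL idea more concrete, here is a small pure-Python model of one INT8 tile multiply. It is a sketch of the arithmetic only, not of how the hardware executes it. It assumes the maximum tile shape of 16 rows by 64 bytes (which gives the 1 kilobyte per tile mentioned above), and the function name `tile_matmul_int8` is hypothetical.

```python
TILE_ROWS = 16        # assumed maximum rows per tile
TILE_ROW_BYTES = 64   # assumed maximum bytes per tile row
NUM_TILES = 8         # eight tile registers per core

TILE_BYTES = TILE_ROWS * TILE_ROW_BYTES    # 1024 bytes = 1 KB per tile
TOTAL_TILE_BYTES = NUM_TILES * TILE_BYTES  # 8192 bytes = 8 KB of tile state


def tile_matmul_int8(a, b, c):
    """Accumulate a @ b into c.

    a: 16 x 64 INT8 values (one tile)
    b: 64 x 16 INT8 values (logical layout; real hardware packs this differently)
    c: 16 x 16 INT32 accumulators (16 * 16 * 4 bytes = one tile)
    """
    for i in range(TILE_ROWS):
        for j in range(TILE_ROWS):
            acc = c[i][j]
            for k in range(TILE_ROW_BYTES):
                acc += a[i][k] * b[k][j]
            c[i][j] = acc
    return c


if __name__ == "__main__":
    a = [[1] * TILE_ROW_BYTES for _ in range(TILE_ROWS)]
    b = [[2] * TILE_ROWS for _ in range(TILE_ROW_BYTES)]
    c = [[0] * TILE_ROWS for _ in range(TILE_ROWS)]
    tile_matmul_int8(a, b, c)
    print(TILE_BYTES, c[0][0])  # prints "1024 128"
```

The point of the model is the shape of the work: a single tile operation covers 16 x 16 x 64 multiply-accumulates, which is why matrix-heavy workloads benefit most.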

Figure 2. Intel AMX Architecture Tile and TMUL


With this functionality, the Intel® AMX accelerator works best with AI workloads that rely on matrix math, like natural language processing, recommendation systems, and image recognition. The Intel® AMX accelerator delivers acceleration for both deep learning inference and training on these workloads, providing a significant performance boost which we will cover shortly.

Intel® AMX supports two data types, INT8 and BF16, both of which can be used for the matrix multiplication I mentioned earlier.
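For readers less familiar with these two formats, the sketch below shows what each one does to a value. It is illustrative only: BF16 is modelled by truncating a float32 to its upper 16 bits (frameworks typically round rather than truncate), and INT8 by simple symmetric quantization with a caller-supplied `scale`. All function names are hypothetical.

```python
import struct


def float32_to_bf16_bits(x: float) -> int:
    """Return the 16-bit BF16 pattern for x by truncating its float32 encoding."""
    (bits,) = struct.unpack("<I", struct.pack("<f", x))
    return bits >> 16  # keep sign, 8 exponent bits, top 7 mantissa bits


def bf16_bits_to_float(bits: int) -> float:
    """Expand a 16-bit BF16 pattern back to a Python float."""
    (x,) = struct.unpack("<f", struct.pack("<I", bits << 16))
    return x


def quantize_int8(x: float, scale: float) -> int:
    """Symmetric INT8 quantization: round x / scale and clamp to [-128, 127]."""
    q = round(x / scale)
    return max(-128, min(127, q))


if __name__ == "__main__":
    print(hex(float32_to_bf16_bits(1.0)))                     # prints "0x3f80"
    print(bf16_bits_to_float(float32_to_bf16_bits(3.14159)))  # prints "3.140625"
    print(quantize_int8(3.0, 0.5))                            # prints "6"
```

BF16 keeps the full float32 exponent range and gives up mantissa precision, which is why it is a common choice for training. INT8 trades more precision for density and is typically used for inference.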

Some Intel® AMX workload use cases include:

- Image recognition

- Natural language processing

- Recommendation systems

- Media processing

- Machine translation

Did you say performance testing?

Yes, I did.

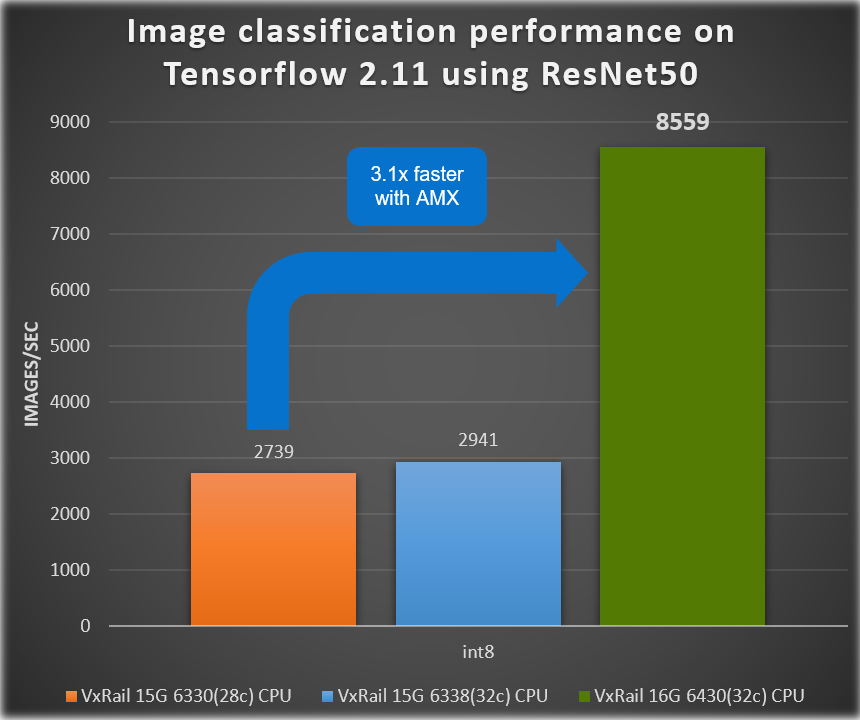

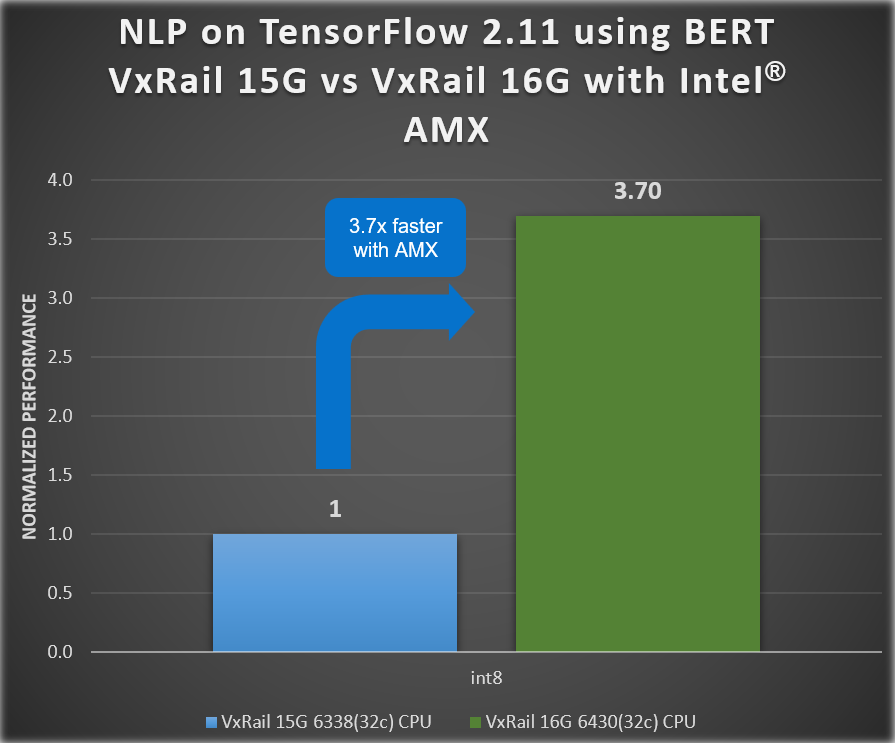

In this testing, two sets of benchmark results demonstrated the generation-to-generation inference performance gains delivered by our 16th generation VxRail VE-660 platform (with Intel® AMX!) compared to previous 15th generation VxRail platforms.

The testing focused on inferencing for two different AI tasks: image classification with the ResNet50 model and natural language processing with the BERT-large model. The following covers the details of the testing:

Benchmark Testing:

- ResNet50 for Image Classification

- BERT benchmark for Natural Language Processing

Framework: TensorFlow 2.11

Table 1. Tested VxRail hardware overview

| Generation | 16th Generation | 15th Generation | 15th Generation (different processor) |
| --- | --- | --- | --- |
| System Name | VxRail VE-660 | VxRail E660N | VxRail E660N |
| Number of Nodes | 4 | 4 | 4 |
| | Components per VE-660 node | Components per E660N node | Components per E660N node |
| Processor Model | Intel® Xeon® 6430 (32c) | Intel® Xeon® 6338 (32c) | Intel® Xeon® 6330 (28c) |
| Intel® AMX? | Yes | No | No |
| Processors per node | 2 | 2 | 2 |
| Core count per node | 64 | 64 | 56 |
| Processor Frequency | 2.1 GHz, 3.4 GHz Turbo boost | 2.0 GHz, 3.0 GHz Turbo boost | 2.0 GHz, 3.1 GHz Turbo boost |
| Memory per node | 512 GB RAM | 512 GB RAM | 512 GB RAM |
| Storage | 2 x disk groups (1 x cache, 3 x capacity) | 2 x disk groups (1 x cache, 4 x capacity) | 2 x disk groups (1 x cache, 4 x capacity) |
| vSAN version | vSAN OSA 8.0 U2 | vSAN OSA 8.0 | vSAN OSA 8.0 |
| VxRail version | Engineering pre-release VxRail code | 8.0.010 | 8.0.010 |

As the following figures show, ResNet50 image classification throughput increased by 3.1x, and the BERT benchmark for natural language processing (NLP) showed a 3.7x increase in AI performance.
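For anyone who wants to run a similar generation-to-generation comparison on their own systems, the multipliers above are simply a ratio of throughputs. The sketch below shows one way that might be measured and computed. It is not the harness our team used: `measure_throughput`, `speedup`, and the stand-in workload are all hypothetical, and the placeholder numbers in the usage lines are not measured results.

```python
import time


def measure_throughput(run_batch, batch_size: int, iterations: int) -> float:
    """Return items processed per second over `iterations` calls to run_batch()."""
    start = time.perf_counter()
    for _ in range(iterations):
        run_batch()
    # Guard against a zero reading on very fast workloads or coarse timers.
    elapsed = max(time.perf_counter() - start, 1e-9)
    return (batch_size * iterations) / elapsed


def speedup(new_throughput: float, baseline_throughput: float) -> float:
    """Generation-to-generation gain; 3.1 means 3.1x the baseline throughput."""
    if baseline_throughput <= 0:
        raise ValueError("baseline throughput must be positive")
    return new_throughput / baseline_throughput


if __name__ == "__main__":
    # Stand-in workload; in practice this would be one inference batch.
    items_per_sec = measure_throughput(lambda: sum(range(1000)), batch_size=8, iterations=100)
    print(f"{items_per_sec:.0f} items/sec")

    # Placeholder values only, chosen to illustrate the ratio.
    print(f"{speedup(310.0, 100.0):.1f}x")  # prints "3.1x"
```

Running the same model, batch size, and precision settings on both generations keeps the ratio meaningful.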

Figure 3. VxRail Generation-to-Generation – ResNet 50 inference results


Figure 4. VxRail Generation-to-Generation - BERT inference results


This exceptional increase in performance illustrates the type of AI performance gains you can achieve with Intel® AMX on VxRail without needing to invest in dedicated GPUs, enabling you to start your AI journey whenever you want.

Before we go, let’s review some highlights…

Intel® AMX and VxRail are…

- Already included in any Intel processor on VxRail 16th Generation VE-660 and VP-760 platforms

- Highly optimized for matrix operations common to AI workloads

- Cost-effective, allowing you to run AI workloads without the need for a dedicated GPU

- Integral to increased AI performance on VxRail 16th Generation platforms

  - 3.1x for Image Classification*

  - 3.7x for Natural Language Processing (NLP)*

Intel® AMX and VxRail support…

- Most popular AI frameworks, including TensorFlow, PyTorch, OpenVINO, and more

- INT8 and BF16 data types

- Deep Learning AI Inference and Training Workloads for:

  - Image recognition

  - Natural language processing

  - Recommendation systems

  - Media processing

  - Machine translation

(*Results based on engineering pre-release VxRail code)

Conclusion

Our VE-660 and VP-760 VxRail platforms come with built-in Intel® AMX accelerators which, in our testing against 15th generation platforms, improved AI inference performance by 3.1x for image classification and 3.7x for NLP. The combination of these 16th Generation VxRail platforms and 4th Generation Intel® Xeon® processors provides a cost-effective solution for customers who rely on Intel® AMX to meet their SLAs for AI workload acceleration.

Author: Una O’Herlihy