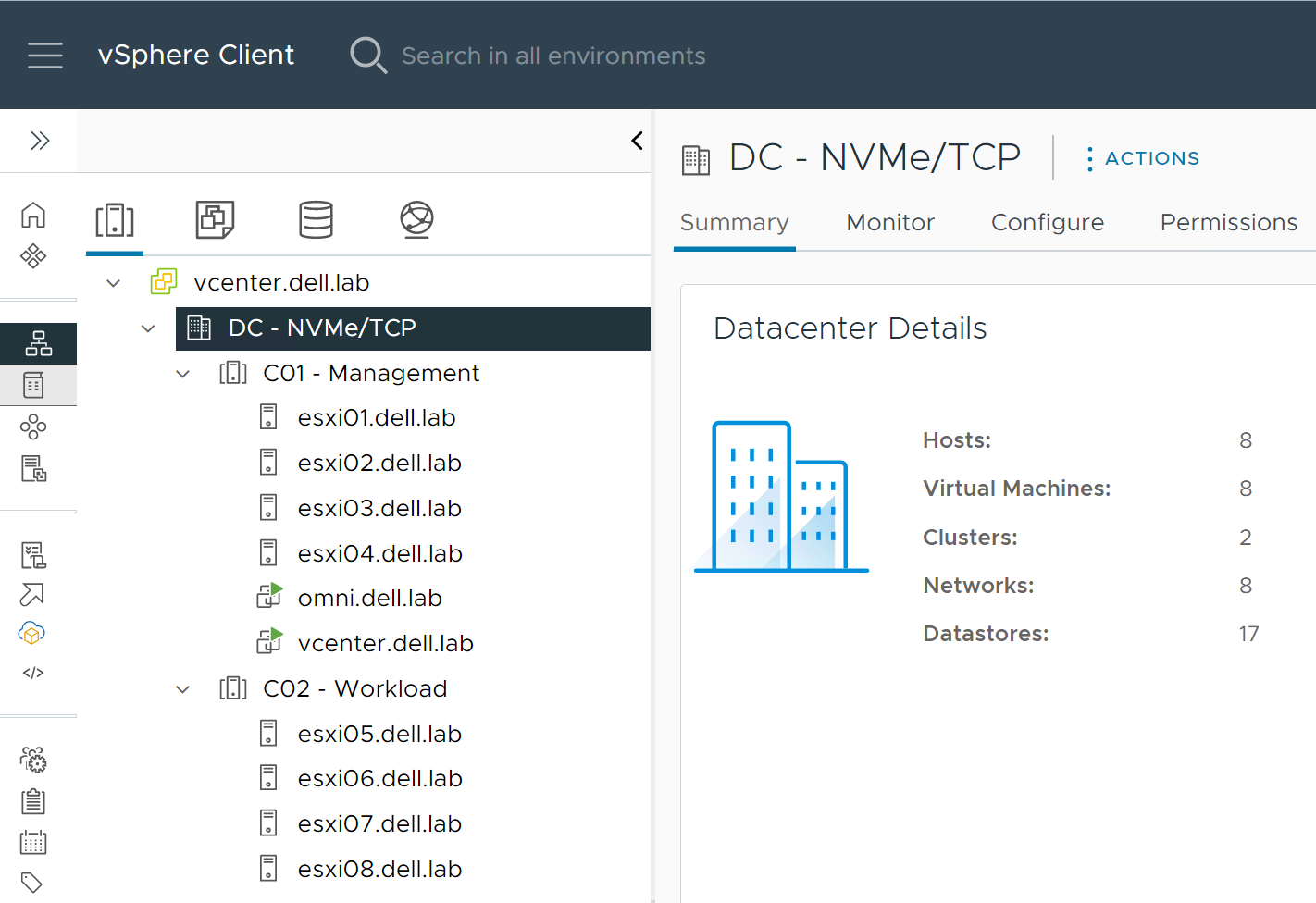

This guide assumes that vSphere vCenter and the hosts are already deployed, with the data center, clusters, and management virtual networking in place. The screens below depict the settings used.

In vSphere, configure your clusters, hosts, and VMs before deploying SFSS. The figure below shows the configuration used for the example in this guide.

Ensure that the hostname and domain of each host are changed from their default values, because they are used to form the host's NQN (NVMe Qualified Name). If you need to regenerate the NQN after changing the hostname or domain (FQDN), run the `esxcli nvme info set --hostnqn=default` command.
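To illustrate why the hostname and domain matter, here is a minimal Python sketch of how a host NQN could be derived from them. It assumes the commonly observed ESXi pattern `nqn.2014-08.<reversed domain>:nvme:<hostname>`; that pattern, the function names, and the `example.com` domain are assumptions for illustration and are not taken from this guide. Always confirm the actual value on the host, for example with `esxcli nvme info get`.

```python
# Sketch only: the NQN layout below is an ASSUMED pattern, not a documented API.
NQN_PREFIX = "nqn.2014-08"  # assumed fixed prefix seen in ESXi host NQNs


def expected_host_nqn(hostname: str, domain: str) -> str:
    """Build the NQN we would expect for a host, given its hostname and domain."""
    labels = [label for label in domain.strip(".").split(".") if label]
    if not hostname or not labels:
        raise ValueError("hostname and domain must both be non-empty")
    # The domain labels appear in reverse order (example.com -> com.example).
    reversed_domain = ".".join(reversed(labels))
    return f"{NQN_PREFIX}.{reversed_domain}:nvme:{hostname}"


def uses_default_identity(nqn: str) -> bool:
    """Flag an NQN that was built from the assumed defaults localhost/localdomain."""
    return nqn == expected_host_nqn("localhost", "localdomain")


if __name__ == "__main__":
    # Hypothetical host from this guide's naming scheme, with a placeholder domain.
    print(expected_host_nqn("esxi01", "example.com"))
```

Under these assumptions, every host left at its default hostname and domain would produce the same NQN, which is why the values should be changed before hosts are registered with SFSS.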

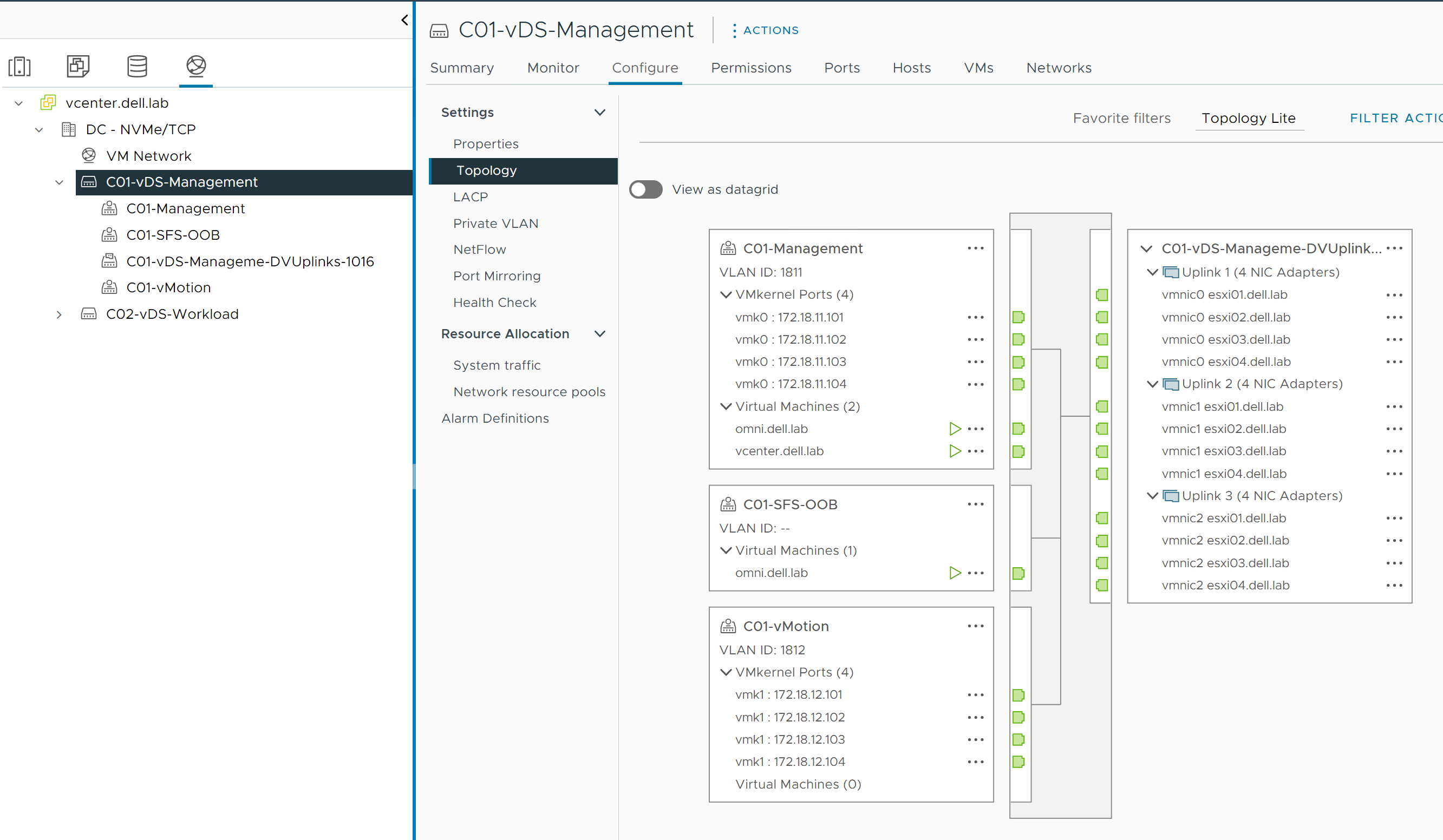

The figure below shows the topology of the virtual distributed switch of the Management Cluster. Hosts esxi01 to esxi04 connect to vDS C01-vDS-Management. Uplinks 1 and 2 connect to the network carrying management, vMotion, and SFSS control traffic. Uplink 3 connects the OMNI virtual machine to the switches in our solution through an out-of-band network. To align with the network MTU settings, the vDS that carries SFSS NVMe/TCP control traffic has an MTU of 9000.

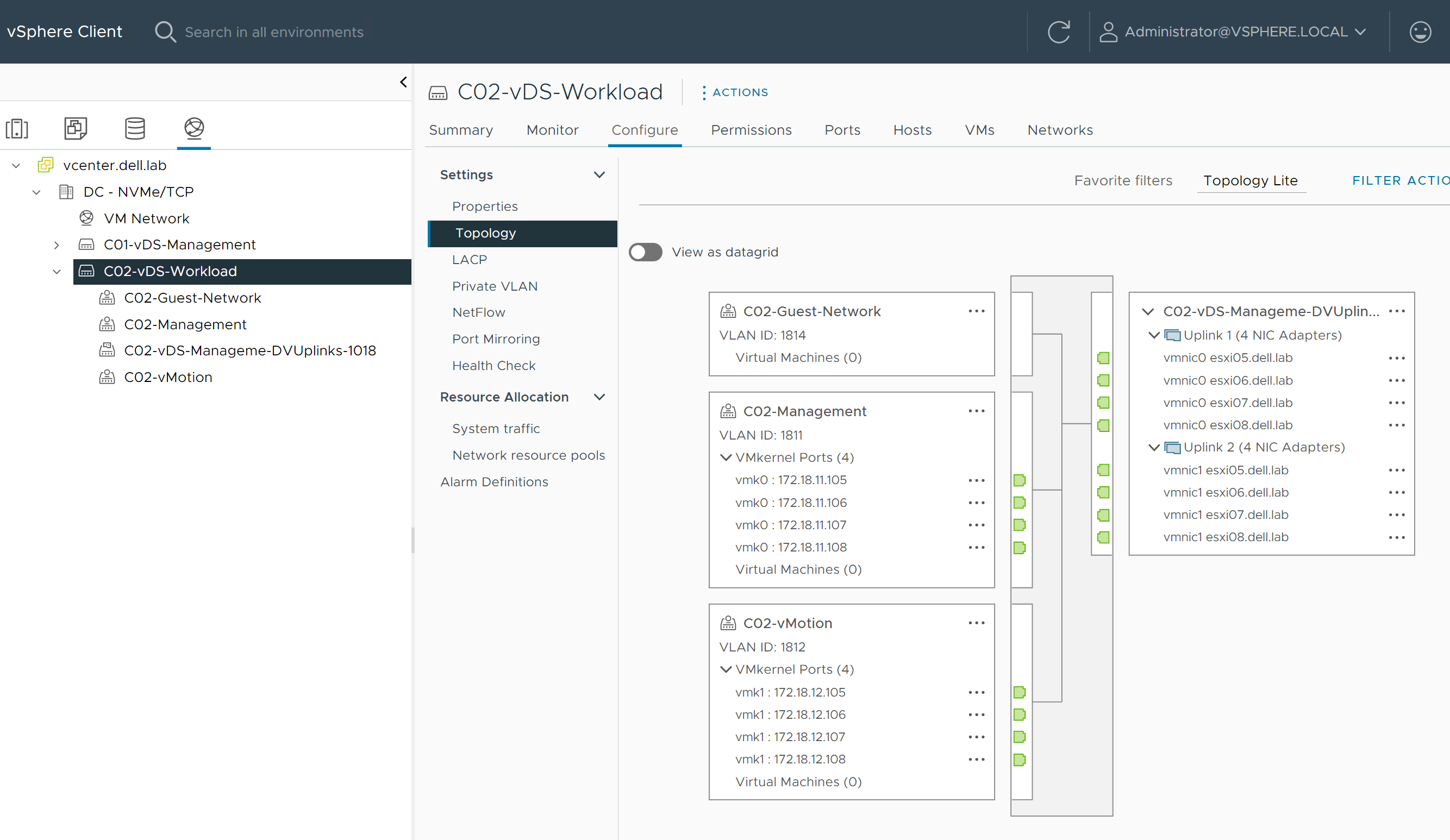
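The MTU of 9000 on the vDS only helps if every hop on the path also accepts jumbo frames. One common way to check this is a ping with the don't-fragment bit set and the largest payload that fits in a single frame. The sketch below works out that payload for IPv4 (MTU minus the 20-byte IP header and the 8-byte ICMP header) and builds a matching `vmkping` command line. The VMkernel interface name `vmk1` and the target address are hypothetical placeholders, not values from this guide.

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    payload = mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES
    if payload <= 0:
        raise ValueError(f"MTU {mtu} is too small for an ICMP echo")
    return payload


def vmkping_command(vmk: str, target_ip: str, mtu: int = 9000) -> str:
    """Build a vmkping line: -I selects the interface, -d sets don't-fragment, -s sets size."""
    return f"vmkping -I {vmk} -d -s {max_ping_payload(mtu)} {target_ip}"


if __name__ == "__main__":
    # Hypothetical interface and address; substitute the real values for your hosts.
    print(vmkping_command("vmk1", "192.0.2.10"))
```

If a ping at this size fails while a default-size ping succeeds, a device somewhere on the path is likely still configured with a smaller MTU.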

The figure below shows the vDS configuration for the workload cluster's two management uplink ports. Hosts esxi05 to esxi08 connect to C02-vDS-Workload for management, vMotion, and guest traffic. Uplinks 1 and 2 connect to the LAN. Later, we will create a vDS for NVMe/TCP that connects to the SAN switches.