Dell Technologies vSAN ESA AF-0 makes vSAN easier for small and medium businesses

Tue, 14 Nov 2023 21:26:35 -0000

|Read Time: 0 minutes

Dell Technologies and VMware have created a viable solution with low hardware entry requirements with the new VMware ESA profile, AF-0, to help customers ride the competitive edge, with lower total cost of ownership.

VMware has added the new ESA AF-0 to the ReadyNode profile with minimum hardware requirements for host CPU, memory, and networking, and with the full performance benefits of a single storage tier comprised of NVMe devices. With the vSAN ESA AF-0 profile, VMware and Dell Technologies offer customers simplified IT environments, plus the latest 16G server technology, performance, low failure, and maintenance domains, with a competitive advantage that flexibly aligns with business objectives.

At Dell Technologies, we understand this. And with VMware, our focus is to help you achieve your goals easily and on your terms. Dell Technologies ReadyNodes built on PowerEdge servers are tested and validated building blocks for the vSAN ESA solution.

The new ReadyNodes profile, AF-0, helps customers in a wide deployment use case, but not limited to:

- Customer environments that need to run small or modest workloads (leveraging existing data centers networking (10Gbps switches)), to dramatically reduce TCO and benefit from ESA features and capabilities.

- 2-node environments running just the required number of VMs for small data centers and edge environments. For example: a small or medium business that needs lower hardware entry requirements than in a similar OSA configuration.

Dell vSAN Express Storage Architecture ReadyNode AF-0 configuration

Table 1. An example of a Dell vSAN ESA ReadyNode AF-0 configuration

Components | Description | Quantity |

ESXi Pre-Installed | Yes |

|

System | vSAN Express Storage Architecture AF-0 | 2 |

CPU | Intel Xeon Gold 6434 3.7G, 8C/16T, 16GT/s, 23M Cache, Turbo, HT (195W) DDR5-4800 | 2 |

Memory | 16GB RDIMM, 4800MT/s Single Rank, 4800MT/s RDIMMs | 8 |

Storage Tier | 1.6TB Enterprise NVMe Mixed Use AG Drive U.2 Gen4 with carrier | 2 |

NIC | Broadcom 57414 Dual Port 10/25GbE SFP28, OCP NIC 3.0 | 1 |

Boot Device | BOSS-N1 controller card + with 2 M.2 480GB (RAID 1) | 1 |

Five enhancements for VMware vSAN ESA 8 Update 2

VMware vSAN ESA introduces several new enhancements. These deliver better performance and make improved data durability and resilience available across all ESA profiles, from AF-0 to AF-High Density for small and medium businesses to large enterprises.

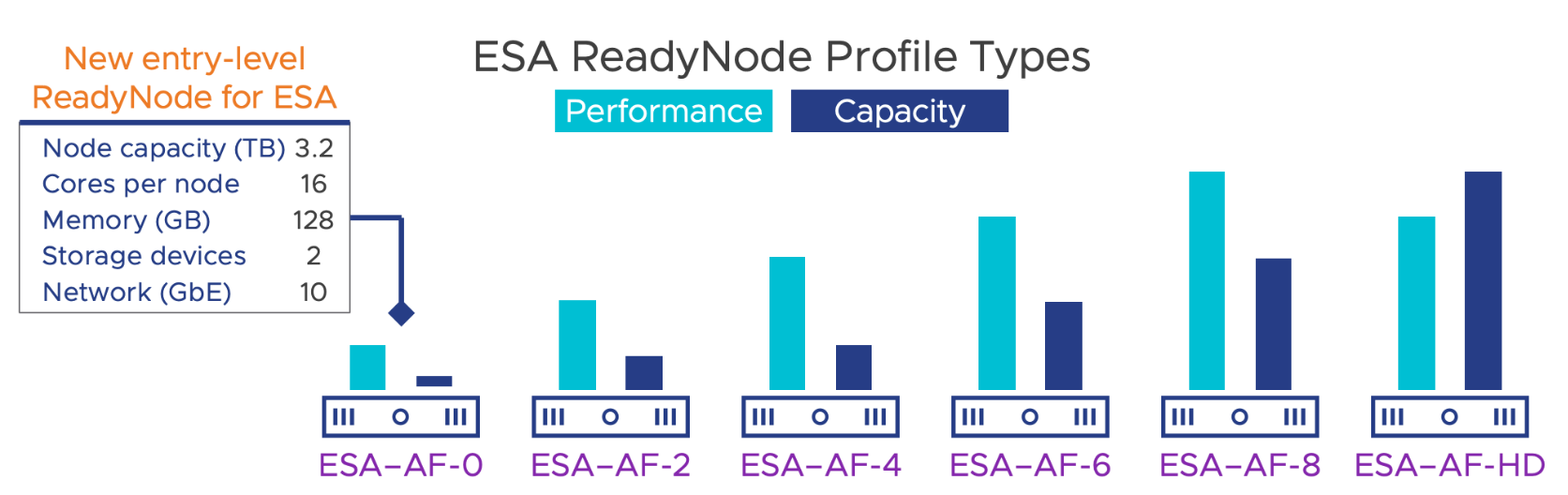

Figure 1. VMware vSAN ESA ReadyNode profiles

The five enhancements are:

- ESA brings Integrated file services to Cloud Native and Traditional workloads, when running vSAN file services, and supports NFS and SMB protocols for traditional and cloud native clients. Some additional enhancements include an improved Active Directory configuration check, improved network reconfigurations, and faster failover of protocol services containers in maintenance mode.

- ESA's Adaptive Write (writes data in an alternative, optimized way) Path helps ESA ingest and process data more quickly, to provide improved performance, higher throughput, and lower latency on write intensive workloads.

- Adaptive Write Path helps in disaggregated topologies, where VMs in a vSphere or vSAN cluster that are consuming the storage resources of another vSAN ESA or vSAN cluster can take advantage of this capability. This enhancement helps achieve higher throughput and lower latency, automatically in real-time without administrator intervention.

- ESA also provides optimized I/O processing in the upper layers in the vSAN ESA stack that occurs between the VM and its objects, drastically increasing performance. This improved parallelism is particularly important for resource intensive VMs that require higher IOPS and throughput, leveraging the latest and faster underlying ReadyNode hardware specifications.

- Lastly, with reduced efforts required to process I/O, ESA can now support more VMs per host: from 200 VMs to 500 VMs per host. This increase in VM density allows customers (especially small and medium business customers) to take advantage of the latest hardware from the PowerEdge portfolio, by running more VMs with fewer hosts, such as with the vSAN ESA AF-0 profile.

These enhancements to vSAN ESA, and the introduction of the AF-0 ReadyNode profile to the Dell PowerEdge server portfolio, provide a winning combination for small and medium businesses, small datacenters, and edge environments.

Dell PowerEdge Next Server Portfolio

Dell PowerEdge servers are built to support an organization’s quest to adopt and acquire the latest technologies for their IT requirements. Although providing these technological enhancements is imperative, it is important to note that business needs vary, and the latest technologies must flexibly align these enhancements across small and medium business to large organizations.

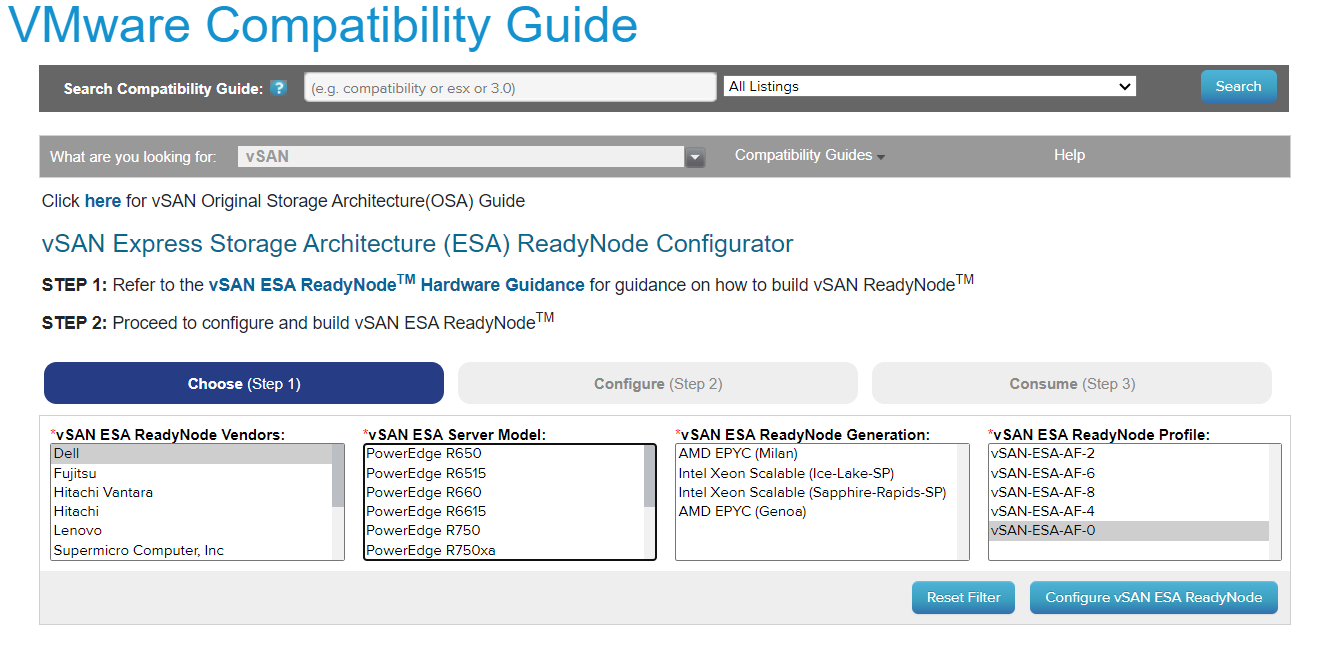

Figure 2. Dell vSAN ReadyNodes portfolio for the AF-0 profile

Dell PowerEdge servers are designed for the modern evolving data center, providing the productivity and performance for diverse workloads. Available in different form factors, they are engineered to run the most demanding applications across industry segments to address the challenges of digital transformation. The portfolio of PowerEdge servers configured for VMware vSAN Ready Nodes, is jointly engineered, validated, and certified, and one of the broadest in the industry. When you build a vSAN Cluster, Dell Technologies and VMware strongly recommend using tested and certified ReadyNodes that are validated to provide predictable performance and scalability.

With combined solutions featuring Dell PowerEdge servers, and VMware vSAN 8, customers get improved performance, reduced risks, a certified platform, and can scale as needed to accelerate their journey with a competitive edge towards digital transformation.

Resources

Author: Thomas MM

Related Blog Posts

Learn About the Latest Major VxRail Software Release: VxRail 8.0.210

Wed, 24 Apr 2024 12:09:24 -0000

|Read Time: 0 minutes

It’s springtime, VxRail customers! VxRail 8.0.210 is our latest software release to bloom. Come see for yourself what makes this software release shine.

VxRail 8.0.210 provides support for VMware vSphere 8.0 Update 2b. All existing platforms that support VxRail 8.0 can upgrade to VxRail 8.0.210. This is also the first VxRail 8.0 software to support the hybrid and all-flash models of the VE-660 and VP-760 nodes based on Dell PowerEdge 16th Generation platforms that were released last summer and the edge-optimized VD-4000 platform that was released early last year.

Read on for a deep dive into the release content. For a more comprehensive rundown of the feature and enhancements in VxRail 8.0.210, see the release notes.

Support for VD-4000

The support for VD-4000 includes vSAN Original Storage Architecture (OSA) and vSAN Express Storage Architecture (ESA). VD-4000 was launched last year with VxRail 7.0 support with vSAN OSA. Support in VxRail 8.0.210 carries over all previously supported configurations for VD-4000 with vSAN OSA. What may intrigue you even more is that VxRail 8.0.210 is introducing first-time support for VD-4000 with vSAN ESA.

In the second half of last year, VMware reduced the hardware requirements to run vSAN ESA to extend its adoption of vSAN ESA into edge environments. This change enabled customers to consider running the latest vSAN technology in areas where constraints from price points and infrastructure resources were barriers to adoption. VxRail added support for the reduced hardware requirements shortly after for existing platforms that already supported vSAN ESA, including E660N, P670N, VE-660 (all-NVMe), and VP-760 (all-NVMe). With VD-4000, the VxRail portfolio now has an edge-optimized platform that can run vSAN ESA for environments that also may have space, energy consumption, and environmental constraints. To top that off, it’s the first VxRail platform to support a single-processor node to run vSAN ESA, further reducing the price point.

It is important to set performance expectations when running workload applications on the VD-4000 platform. While our performance testing on vSAN ESA showed stellar gains to the point where we made the argument to invest in 100GbE to maximize performance (check it out here), it is essential to understand that the VD-4000 platform is running with an Intel Xeon-D processor with reduced memory and bandwidth resources. In short, while a VD-4000 running vSAN ESA won’t be setting any performance records, it can be a great solution for your edge sites if you are looking to standardize on the latest vSAN technology and take advantage of vSAN ESA’s data services and erasure coding efficiencies.

Lifecycle management enhancements

VxRail 8.0.210 offers support for a few vLCM feature enhancements that came with vSphere 8.0 Update 2. In addition, the VxRail implementation of these enhancements further simplifies the user experience.

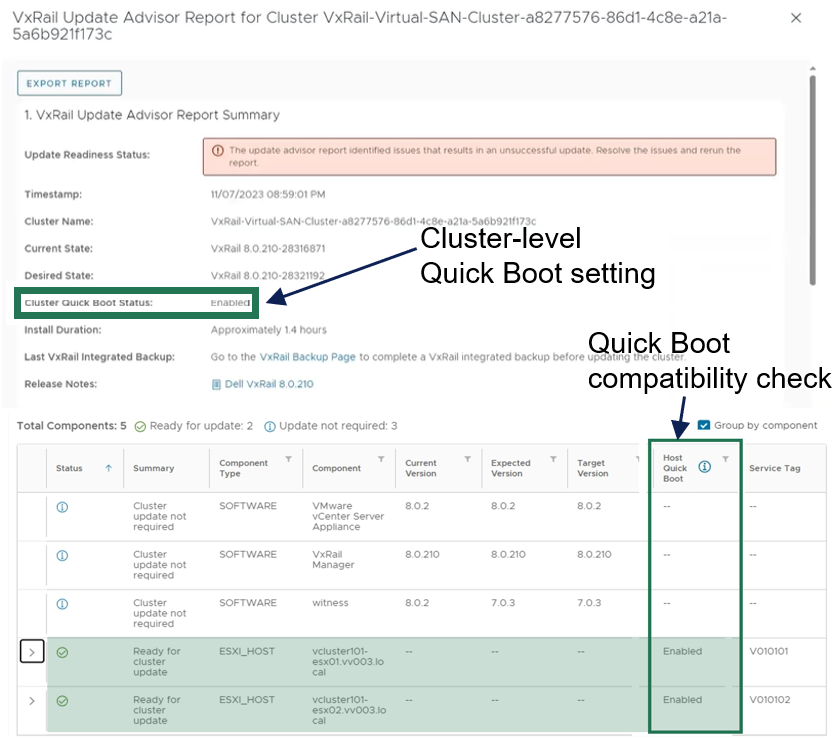

For vLCM-enabled VxRail clusters, we’ve made it easier to benefit from VMware ESXi Quick Boot. The VxRail Manager UI has been enhanced so that users can enable Quick Boot one time, and VxRail will maintain the setting whenever there is a Quick Boot-compatible cluster update. As a refresher for some folks not familiar with Quick Boot, it is an operating system-level reboot of the node that skips the hardware initialization. It can reduce the node reboot time by up to three minutes, providing significant time savings when updating large clusters. That said, any cluster update that involves firmware updates is not Quick Boot-compatible.

Using Quick Boot had been cumbersome in the past because it required several manual steps. To use Quick Boot for a cluster update, you would need to go to the vSphere Update Manager to enable the Quick Boot setting. Because the setting resets to Disabled after the reboot, this step had to be repeated for any Quick Boot-compatible cluster update. Now, the setting can be persisted to avoid manual intervention.

As shown in the following figure, the update advisor report now informs you whether a cluster update is Quick Boot-compatible so that the information is part of your update planning procedure. VxRail leverages the ESXi Quick Boot compatibility utility for this status check.

Figure 1. VxRail update advisor report highlighting Quick Boot information

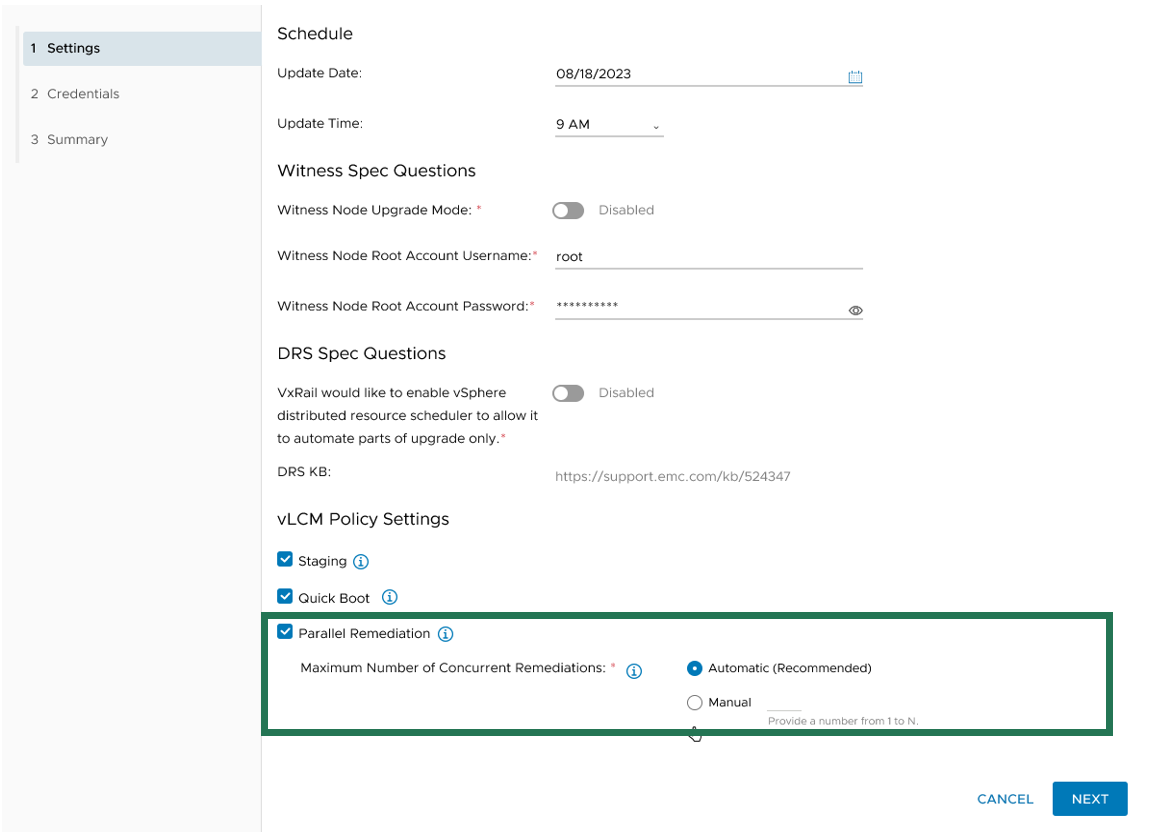

Another new vLCM feature enhancement that VxRail supports is parallel remediation. This enhancement allows you to update multiple nodes at the same time, which can significantly cut down on the overall cluster update time. However, this feature enhancement only applies to VxRail dynamic nodes because vSAN clusters still need to be updated one at a time to adhere to storage policy settings.

This feature offers substantial benefits in reducing the maintenance window, and VxRail’s implementation of the feature offers additional protections over how it can be used on vSAN Ready Nodes. For example, enabling parallel remediation with vSAN Ready Nodes means that you would be responsible for managing when nodes go into and out of maintenance mode as well as ensuring application availability because vCenter will not check whether the nodes that you select will disrupt application uptime. The VxRail implementation adds safety checks that help mitigate potential pitfalls, ensuring a smoother parallel remediation process.

VxRail Manager manages when nodes enter and exit maintenance modes and provides the same level of error checking that it already performs on cluster updates. You have the option of letting VxRail Manager automatically set the maximum number of nodes that it will update concurrently, or you can input your own number. The number for the manual setting is capped at the total node count minus two to ensure that the VxRail Manager VM and vCenter Server VM can continue to run on separate nodes during the cluster update.

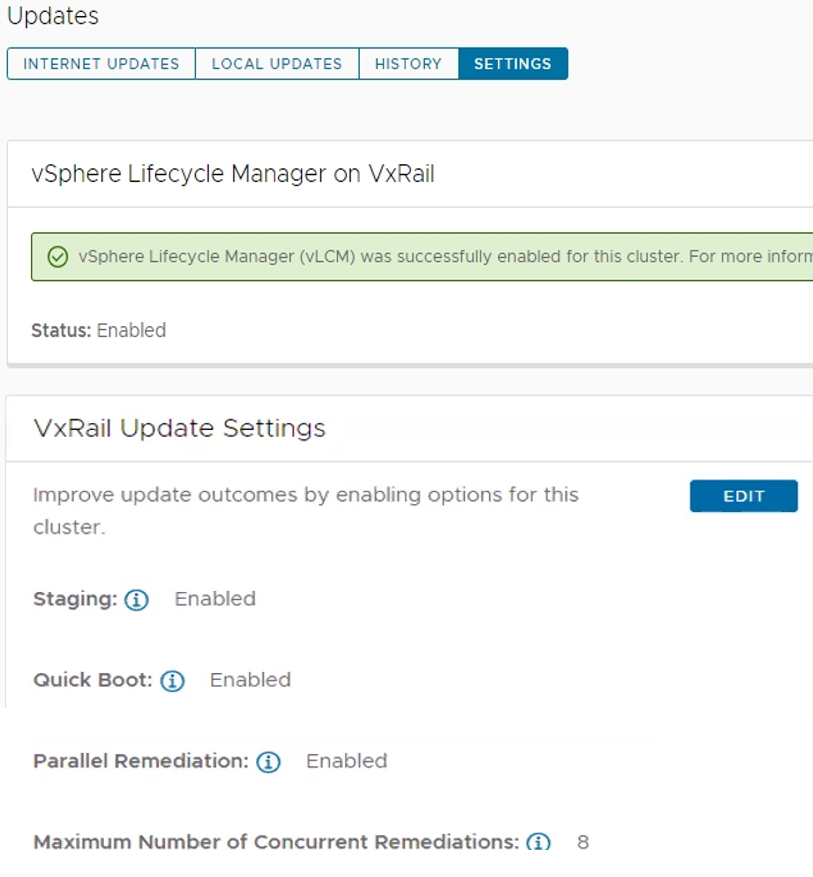

Figure 2. Options for setting the maximum number of concurrent node remediations

During the cluster update, VxRail Manager intelligently reduces the node count of concurrent updates if a node cannot enter maintenance mode or if the application workload cannot be migrated to another node to ensure availability. VxRail Manager will automatically defer that node to the next batch of node updates in the cluster update operation.

The last vLCM feature enhancement in VxRail 8.0.210 that I want to discuss is installation file pre-staging. The idea is to upload as many installation files for the node update as possible onto the node before it actually begins the update operation. Transfer times can be lengthy, so any reduction in the maintenance window would have a positive impact to the production environment.

To reap the maximum benefits of this feature, consider using the scheduling feature when setting up your cluster update. Initiating a cluster update with a future start time allows VxRail Manager the time to pre-stage the files onto the nodes before the update begins.

As you can see, the three vLCM feature enhancements can have varying levels of impact on your VxRail clusters. Automated Quick Boot enablement only benefits cluster updates that are Quick Boot-compatible, meaning there is not a firmware update included in the package. Parallel remediation only applies to VxRail dynamic node clusters. To maximize installation files pre-staging, you need to schedule cluster updates in advance.

That said, two commonalities across all three vLCM feature enhancements is that you must have your VxRail clusters running vLCM mode and that the VxRail implementation for these three feature enhancements makes them more secure and easy to use. As shown in the following figure, the Updates page on the VxRail Manager UI has been enhanced so that you can easily manage these vLCM features at the cluster level.

Figure 3. VxRail Update Settings for vLCM features

VxRail dynamic nodes

VxRail 8.0.210 also introduces an enhancement for dynamic node clusters with a VxRail-managed vCenter Server. In a recent VxRail software release, VxRail added an option for you to deploy a VxRail-managed vCenter Server with your dynamic node cluster as a Day 1 operation. The initial support was for Fiber-Channel attached storage. The parallel enhancement in this release adds support for dynamic node clusters using IP-attached storage for its primary datastore. That means iSCSI, NFS, and NVMe over TCP attached storage from PowerMax, VMAX, PowerStore, UnityXT, PowerFlex, and VMware vSAN cross-cluster capacity sharing is now supported. Just like before, you are still responsible for acquiring and applying your own vCenter Server license before the 60-day evaluation period expires.

Password management

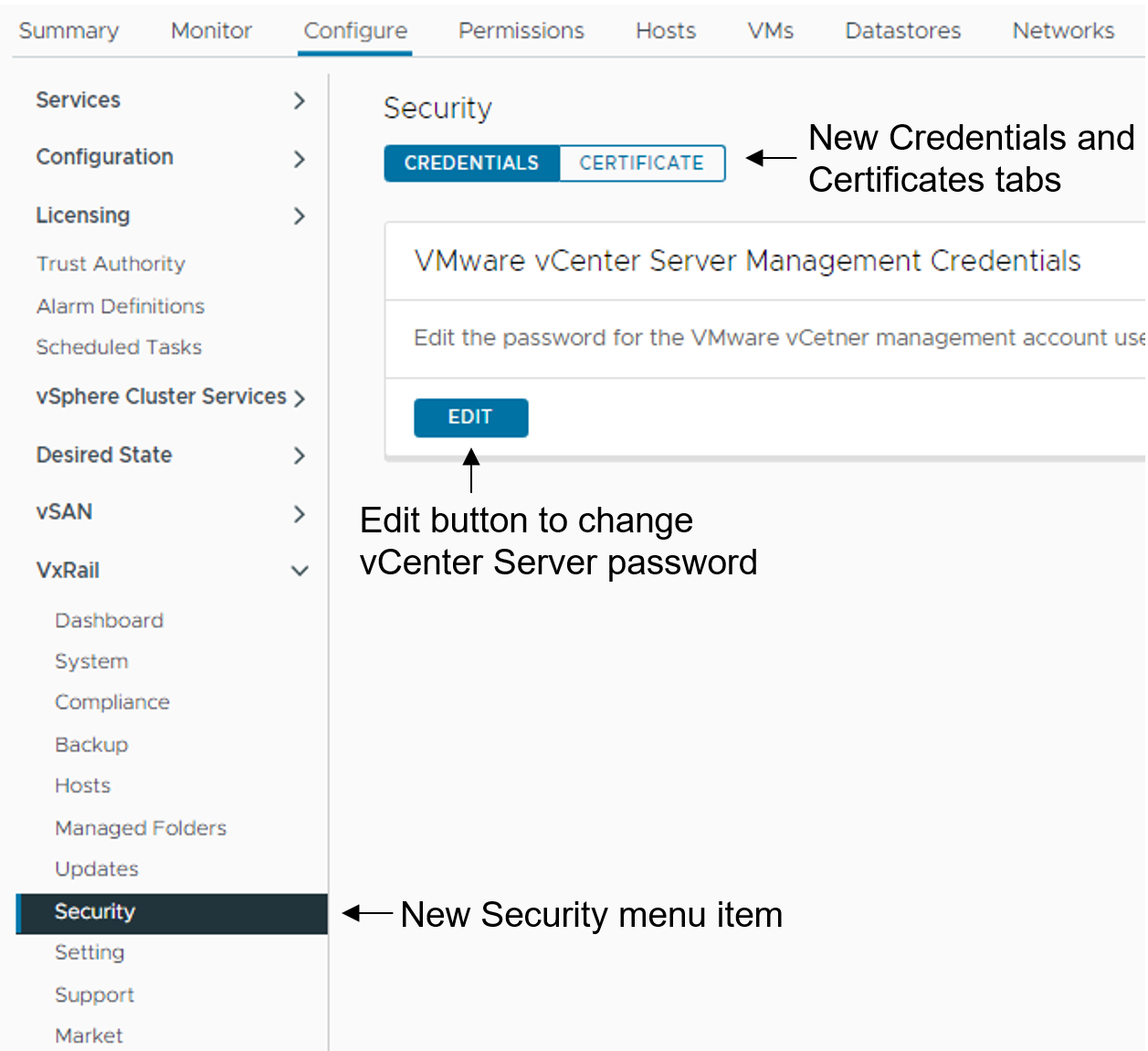

Password management is one of the key areas of focus in this software release. To reduce the manual steps to modify the vCenter Server management and iDRAC root account passwords, the VxRail Manager UI has been enhanced to allow you to make the changes via a wizard-driven workflow instead of having to change the password on the vCenter Server or iDRAC themselves and then go onto VxRail Manager UI to provide the updated password. The enhancement simplifies the experience and reduces potential user errors.

To update the vCenter Server management credentials, there is a new Security page that replaces the Certificates page. As illustrated in the following figure, a Certificate tab for the certificates management and a Credentials tab to change the vCenter Server management password are now present.

Figure 4. How to update the vCenter Server management credentials

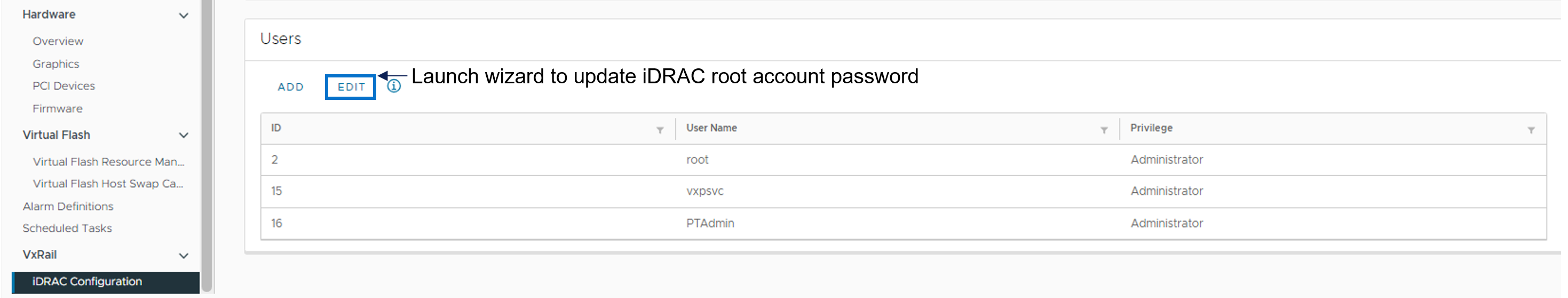

To update the iDRAC root account password, there is a new iDRAC Configuration page where you can click the Edit button to launch a wizard to the change password.

Figure 5. How to update the iDRAC root password

Deployment Flexibility

Lastly, I want to touch on two features in deployment flexibility.

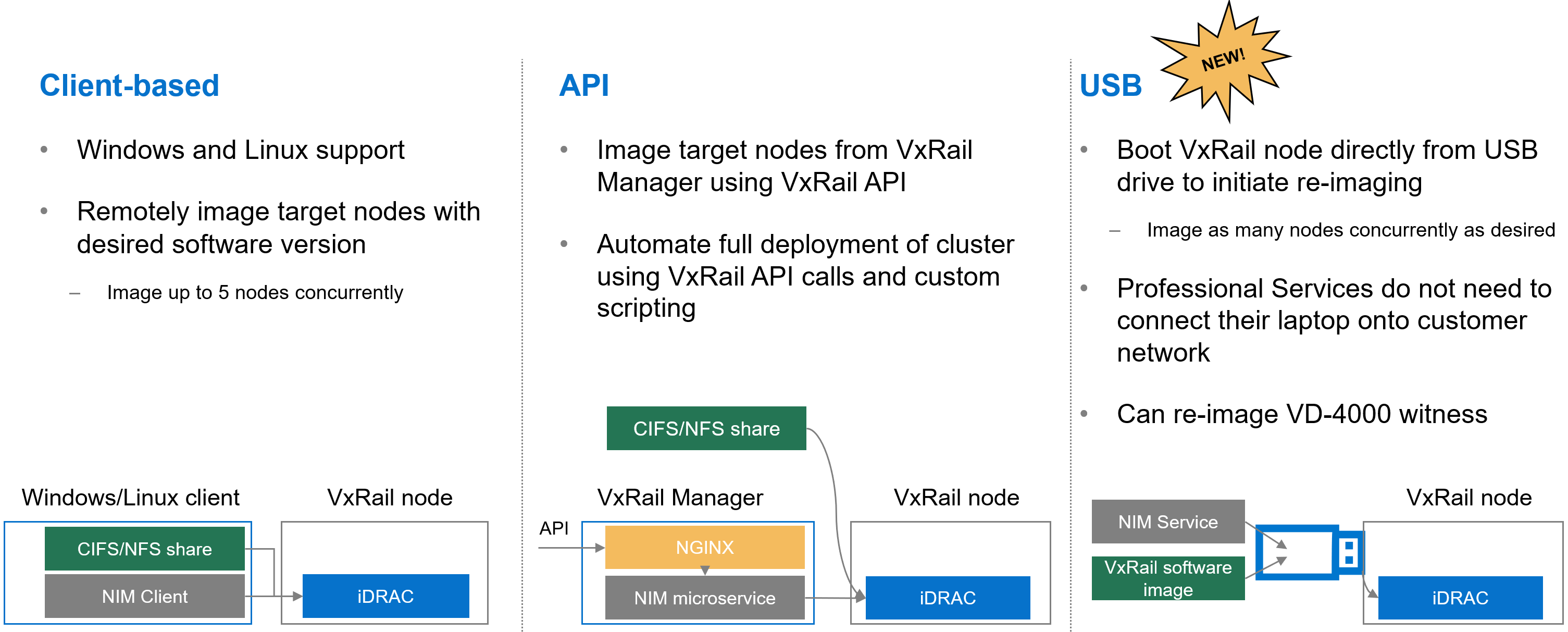

Over the past few years, the VxRail team has invested heavily in empowering you with the tools to recompose and rebuild the clusters on your own. One example is making our VxRail nodes customer-deployable with the VxRail Configuration Portal. Another is the node imaging tool.

Figure 6. Different options to use the node image management tool

Initially, the node imaging tool was Windows client-based where the workstation has the VxRail software ISO image stored locally or on a share. By connecting the workstation onto the local network where the target nodes reside, the imaging tool can be used to connect to the iDRAC of the target node. Users can reimage up to 5 nodes on the local network concurrently. In a more recent VxRail release, we added Linux client support for the tool.

We’ve also refactored the tool into a microservice within the VxRail HCI System Software so that it can be used via VxRail API. This method added more flexibility so that you can automate the full deployment of your cluster by using VxRail API calls and custom scripting.

In VxRail 8.0.210, we are introducing the USB version of the tool. Here, the tool can be self-contained on a USB drive so that users can plug the USB drive into a node, boot from it, and initiate reimaging. This provides benefits in scenarios where the 5-node maximum for concurrent reimage jobs is an issue. With this option, users can scale reimage jobs by setting up more USB drives. The USB version of the tool now allows an option to reimage the embedded witness on the VD-4000.

The final feature for deployment flexibility is support for IPv6. Whether your environment is exhausting the IPv4 address pool or there are requirements in your organization to future-proof your networking with IPv6, you will be pleasantly surprised by the level of support that VxRail offers.

You can deploy IPv6 in a dual or single network stack. In a dual network stack, you can have IPv4 and IPv6 addresses for your management network. In a single network stack, the management network is only on the IPv6 network. Initial support is for VxRail clusters running vSAN OSA with 3 or more nodes. Other than that, the feature set is on par with what you see with IPv4. Select the network stack at cluster deployment.

Conclusion

VxRail 8.0.210 offers a plethora of new features and platform support such that there is something for everyone. As you digest the information about this release, know that updating your cluster to the latest VxRail software provides you with the best return on your investment from a security and capability standpoint. Backed by VxRail Continuously Validated States, you can update your cluster to the latest software with confidence. For more information about VxRail 8.0.210, please refer to the release notes. For more information about VxRail in general, visit the Dell Technologies website.

Author: Daniel Chiu, VxRail Technical Marketing

https://www.linkedin.com/in/daniel-chiu-8422287/

VxRail’s Latest Hardware Evolution

Thu, 04 Jan 2024 17:22:21 -0000

|Read Time: 0 minutes

December is a time of celebration and anticipation, a month in which we may reflect on the events of the year and look ahead to what is yet to come. Charles Dickens’ “A Christmas Carol” – and its many stage and movie remakes – is one of those literary classics that helps showcase this season’s magic at its finest. It is even said that there is a special kind of magic—one full of excitement, innovation, and productivity—that finds a way to (hyper)converge the past, present, and future for data center administrators all around the world who have been good all year!

No, your wondering eyes do not deceive you. Appearing today are VxRail’s next generation platforms—the VE-660 and VP-760—in all-new, all-NVMe configurations! While Santa’s elves have spent the year building their backlog of toys and planning supply-chain delivery logistics that rival SLA standards of the world’s largest e-tailers, the VxRail team has been hard at work innovating our VxRail family portfolio to ensure that your workloads can run faster than ever before. So, let’s grab a glass of eggnog and invite the holiday spirits along for a tour of VxRail past, present, and future to better understand our latest portfolio addition.

Figure 1. VxRail VE-660

Figure 1. VxRail VE-660 Figure 2. VxRail VP-760

Figure 2. VxRail VP-760

Spirit of VxRail Past

This was especially true earlier this summer when we launched the VE-660 and VP-760 VxRail platforms based on 16th Generation Dell PowerEdge servers. These next-gen successors to the VxRail E-Series and P-Series platforms not only contained the latest hardware innovations, but also represented a systemic change in the overall VxRail offering.

First, the mainline E- and P-series platforms were respectively re-christened as the VE-660 and VP-760. This was done primarily to invite easier comparison points to the underlying PowerEdge servers on which they’re based – the R660 and R760. Second, we tracked how the use of accelerators in the data center had evolved over the years and made the strategic decision to fold the capabilities of the V-Series platform into the P-Series by way of specific riser configurations. Now, customers have the ability to glean all the benefits of a high-performant 2U system with the choice of either storage-optimized (up to 28 total drive bays) or accelerator-optimized (up to 2x double wide or 6x single wide GPUs) chassis configurations—whichever best aligns to the specifics of their workload needs. And third, VxRail platforms dropped the storage type suffix from the model name. Hybrid and all-flash (and as of today, all-NVME–more on this later) storage variants are now offered as part of the riser configuration selection options of these baseline platforms, where applicable.

These changes are representative of how the breadth and depth of customer needs have grown tremendously over the years. By taking these steps to streamline the VxRail portfolio, we charted an evolutionary path forward that continues our commitment to offer greater customer choice and flexibility.

Spirit of VxRail Present

These themes of greater choice and flexibility are amplified by the architectural improvements underpinning these new VxRail platforms. Primary among them is the introduction of Intel® 4th Generation Xeon® Scalable processors. Intel’s latest generation of processors do more than bump VxRail core density per socket to 56 (112 max per node). They also come with built-in AMX accelerators (Advanced Matrix Extensions) that support AI and HPC workloads without the need for any additional drivers or hardware. For a deeper dive into the Intel® AMX capability set, the Spirit of VxRail Present invites you to read this blog: VxRail and Intel® AMX, Bringing AI Everywhere, authored by Una O’Herlihy.

Intel’s latest processors also usher in support for DDR5 memory and PCIe Gen 5, two other architectural pillars that underpin significant jumps in performance. The following table offers a high-level overview and comparison of these pillars and a useful at-a-glance primer for those considering a technology refresh from earlier generation VxRail:

Table 1. VxRail 14th Generation to 16th Generation comparison

VxRail VE-660 & VP-760 | VxRail E560, P570 & V570 | |

Intel Chipset | 4th Generation Xeon | 2nd Generation Xeon |

Cores | 8 - 56 | 4 - 28 |

TDP | 125W – 350W | 85W – 205W |

Max DRAM Memory | 4TB per socket | 1.5TB per socket |

Memory Channels | 8 (DDR5) | 6 (DDR4) |

Memory Bandwidth | Up to 4800 MT/s | Up to 2933 MT/s |

PCIe Generation | PCIe Gen 5 | PCIe Gen 3 |

PCIe Lanes | 80 | 48 |

PCIe Throughput | 32 GT/s | 8 GT/s |

As the operational needs of a business change day-by-day, finding the right balance between workload density and load balance can often feel like an infinite war for resources. The adoption of DDR5 memory across the latest generation of VxRail platforms offers additional flexibility in the way system resources can be divvied up by virtue of two key benefits: greater memory density and faster bandwidth. The VE-660 and VP-760 wield eight memory channels per processor, with the ability to slot up to two 4800MT/s DIMMs per channel for a maximum memory capacity of 8TB per node. Compared to a VxRail P570, the density and speed improvements are staggering: 33% more memory channels per processor, 2.6x increase in per system total memory, and up to a 64% increase in memory speed! With faster and greater density compute and memory available for workloads, each node in a VxRail cluster can handle more VMs, and if there is ever a case of task bottlenecking, there are plenty of resources still available for optimal load balancing.

When we consider the presence of PCIe Gen 5, we see an even greater increase in the overall performance envelope. PowerEdge’s Next-Generation Tech Note does a great job of contextualizing the capabilities of PCIe Gen 5. The main takeaway for VxRail, however, is that it increases the maximum bandwidth achievable from various peripheral components by roughly 25% when compared to PCIe Gen 4 and roughly 66% when compared to PCIe Gen 3. In particular, the jump in available PCIe lanes (48 lanes to a luxurious 80 lanes) and associated throughput (8 GT/s to 32 GT/s per lane) from Gen 3 to Gen 5 significantly reduces performance bottlenecks, resulting in faster storage transfer rates and more bandwidth for accelerators to process AI and ML workloads.

PCIe Gen 5 is also backwards compatible with previous generation peripherals, enabling a certain degree of flexibility with respect to VxRail’s component extensibility and longevity in the data center. Yesterday’s technologies can still be used, but the VE-660 and VP-760 can adapt to growing workload demands by taking full advantage of the latest peripherals as they are released. They are even equipped with an additional PCIe slot over their E- & P-Series predecessors, providing extra dimensions of configuration. These boons in flexibility ensure any investment into this generation of VxRail enjoys longer relevance as your infrastructure backbone.

Spirit of VxRail Future

Even with all these architectural improvements defining the VP-760 and VE-660, we knew we could find ways of improving the capability set. So, we made our list of desired features (and checked it twice!) and determined that the best way to augment these next-generation hardware enhancements would be with the introduction of all-NVMe storage options.

The Spirit of VxRail Past wishes to remind us that VxRail with all-NVMe storage is not new—NVMe first made its way to the VxRail lineup with the P580N and E560N almost four years ago and has been a mainstay facet of the VxRail with vSAN architecture ever since. However, what is most compelling about all-NVMe versions of the VE-660 and VP-760—what the Spirit of VxRail Future wishes to strongly communicate—is that NVMe opens the door to two very compelling benefits: additional flexibility of choice with respect to vSAN architecture and an associated increase in overall storage capacity with the addition of read intensive NVMe drives in sizes of up to 15.36TB.

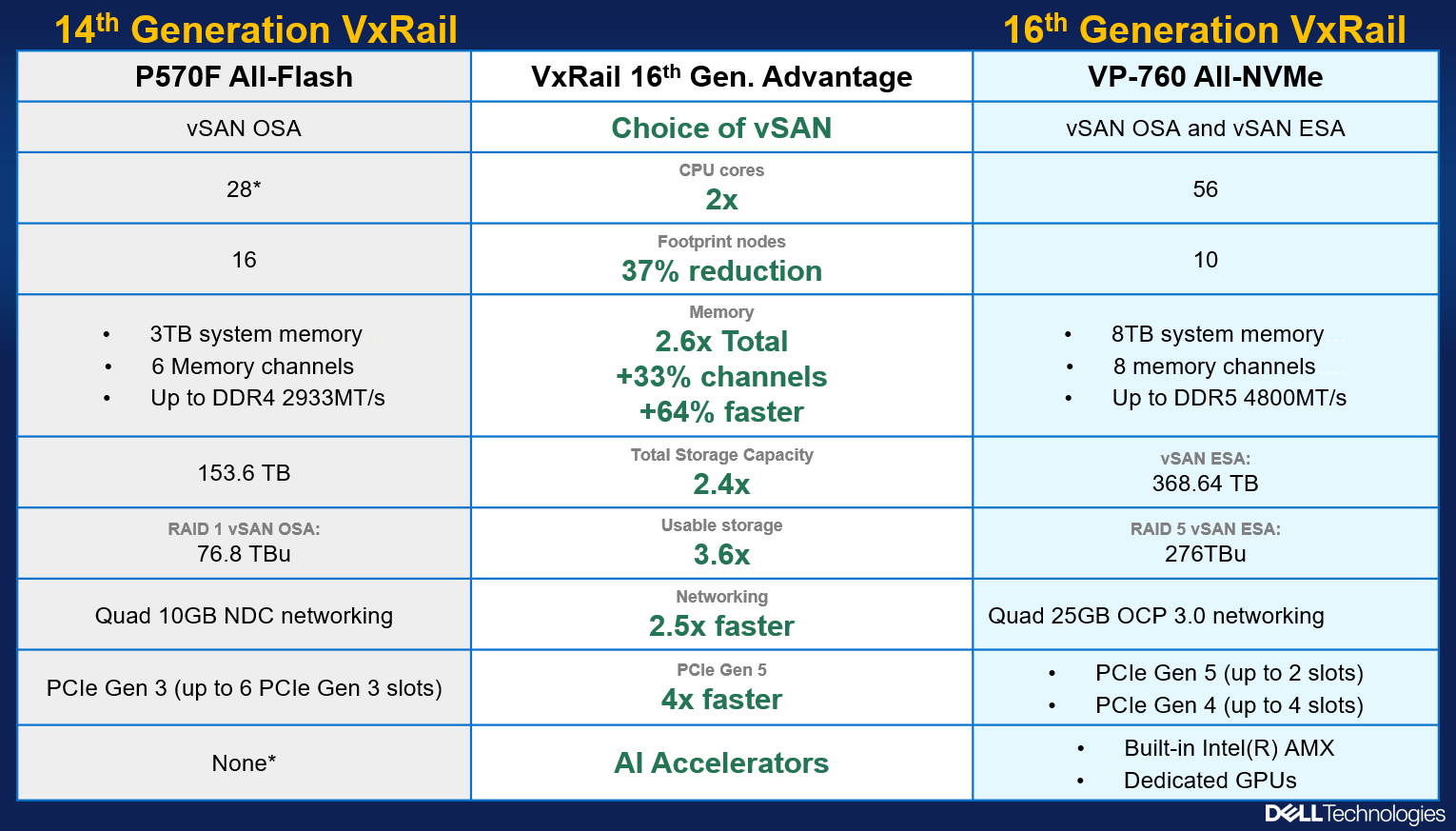

The following figure outlines all of the generational advantages customers can benefit from when transitioning from existing 14th Generation VxRail environments to VP-760 all-NVMe platforms.

Figure 4. The VxRail 16th Generation all-NVMe advantage

Figure 4. The VxRail 16th Generation all-NVMe advantage

In addition, VxRail on 16th Generation hardware can now support deployments with either vSAN Original Storage Architecture (OSA) or vSAN Express Storage Architecture (ESA). David Glynn provided a great summary of the core value vSAN ESA brings to the table for VxRail in his blog written nearly a year ago. With today’s launch, the VP-760 and VE-660 can now take advantage of vSAN ESA’s single-tier storage architecture that enables RAID-5 resiliency and capacity with RAID-1 performance. Customers who choose to deploy with vSAN OSA can also see the benefit of these new read intensive NVMe drives, with a total storage per node of up to 122.88TB in the VE-660 and 322.56TB in the VP-760. For those who deploy with vSAN ESA, maximum achievable storage is 153.6TB on the VE-660 and up to 368.64TB on the VP-760.

The Spirit of VxRail Future has seen the value of all-NVMe and is content knowing that VxRail will continue to underpin VMware mission-critical workloads for years to come.

Resources

Author: Mike Athanasiou, Sr. Engineering Technologist