Introducing APEX Navigator for Kubernetes: Resource onboarding

Mon, 20 May 2024 17:00:00 -0000

We are excited to announce the availability of Dell APEX Navigator for Kubernetes! This offering is part of the Dell Premier account experience that includes the APEX Navigator user interface and shares the management interface with APEX Navigator for Storage. In a three-part blog series, we will go through the key aspects of the APEX Navigator for Kubernetes:

- Onboarding Kubernetes clusters and storage resources

- Batch deployment of Container Storage Modules

- Application Mobility between clusters

UI Overview

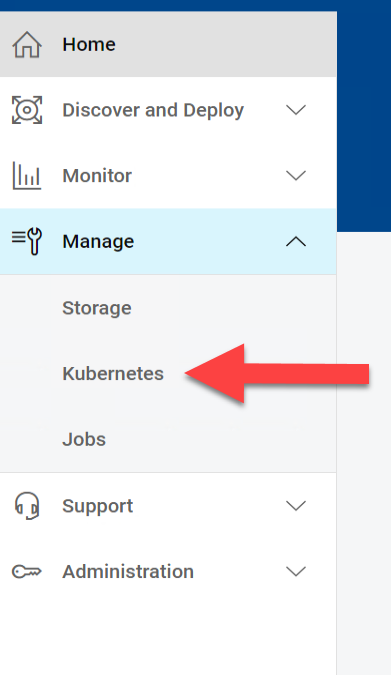

Once you log in as an ITOps user, navigate to the Kubernetes section under the Manage section of the left-side navigation bar:

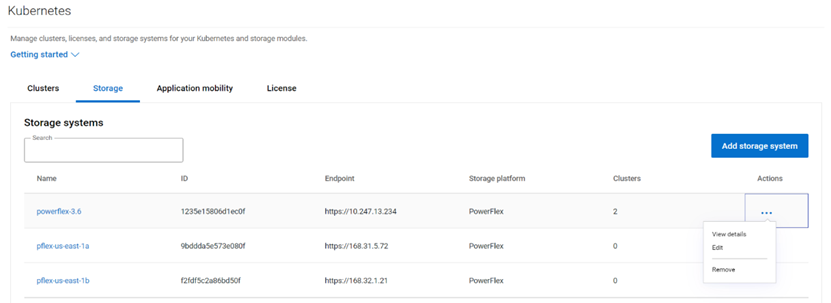

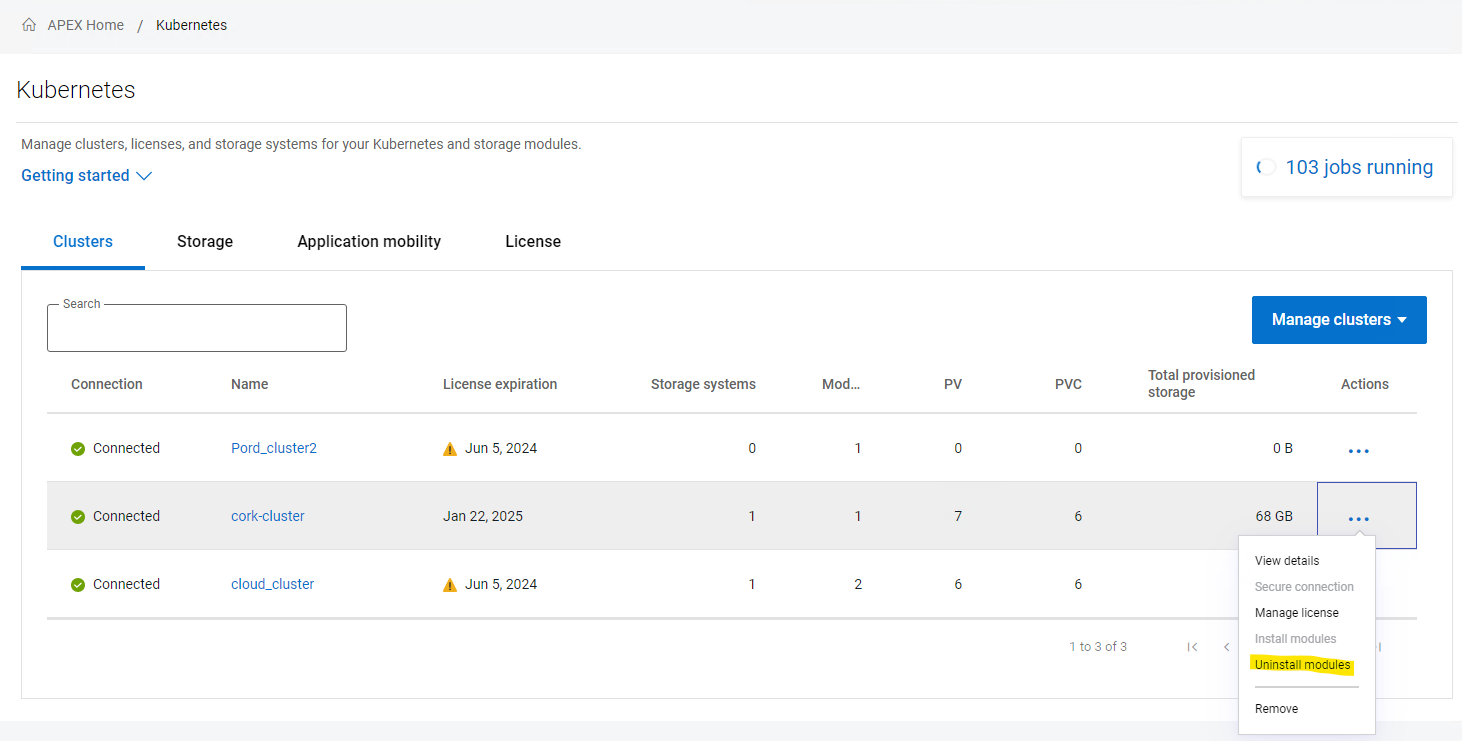

The details pane has four tabs:

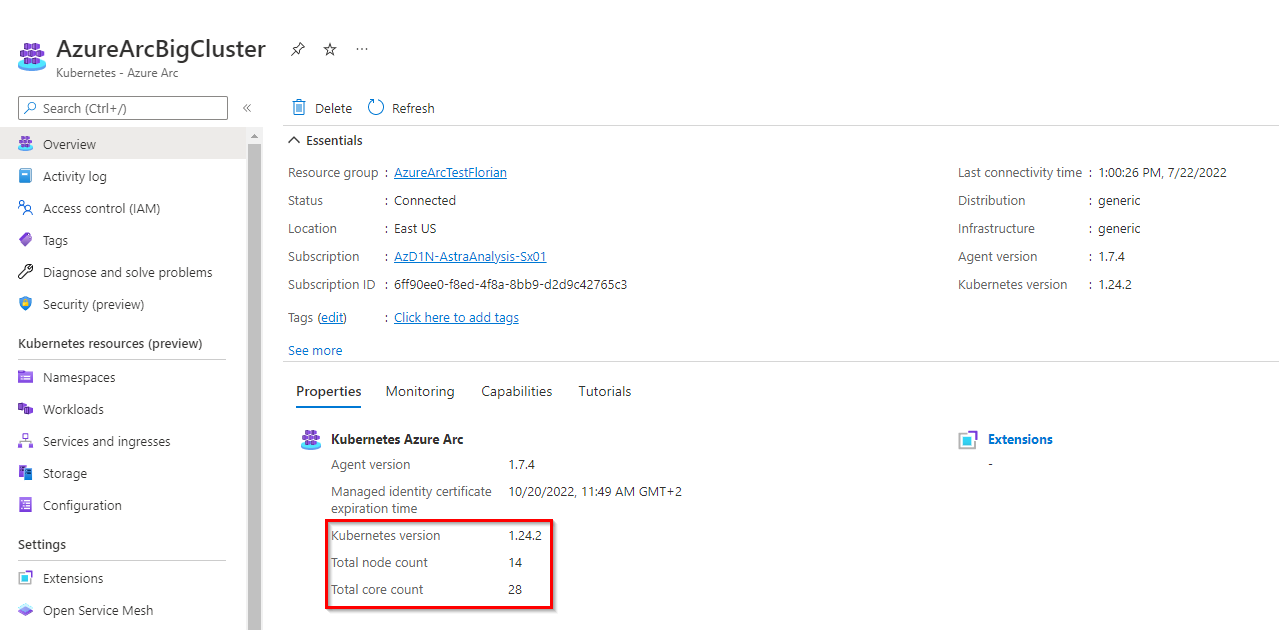

- The Clusters tab shows the onboarded Kubernetes clusters in a tabular form with various attributes of the clusters as columns. You can add additional clusters and manage the container storage modules.

- Similarly, the Storage tab shows the onboarded storage platforms.

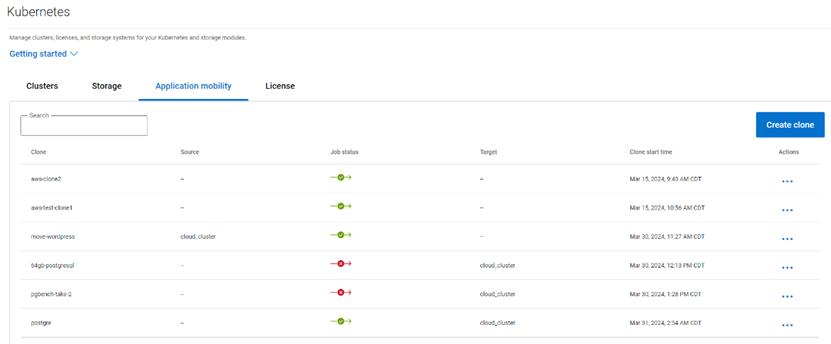

- The Application Mobility tab shows the Application Mobility jobs that have been initiated, along with details such as the source, the destination, and the status of each job.

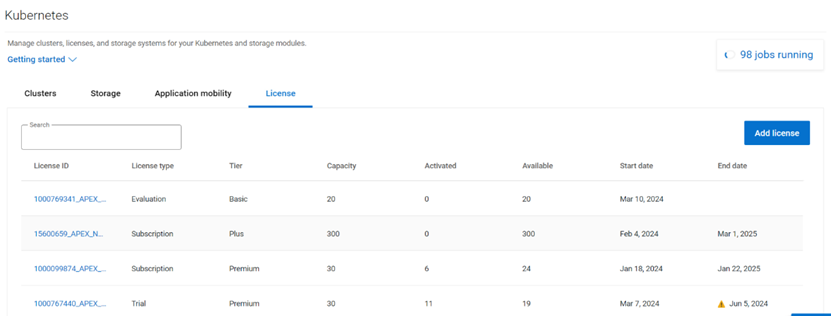

- The License tab lists the various licenses that have been added to the platform.

Multi-cloud Kubernetes Cluster onboarding

Kubernetes clusters both on-prem and on public clouds can be managed with APEX Navigator for Kubernetes.

Onboarding prerequisites

Before you onboard a cluster, please go through the following steps to make sure the cluster is ready to be onboarded.

Install the latest Dell CSM-operator

Dell CSM Operator is a Kubernetes Operator designed to manage the Dell Storage CSI drivers and Container Storage Modules. Install v1.4.4 or later using the instructions here.

Install the Dell Connectivity Client

The Dell Connectivity Client initiates a connection to https://connect-into.dell.com/ in order to communicate with APEX Navigator for Kubernetes. Therefore, the firewall and proxy between the Kubernetes cluster and that address must be opened.

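To do this, first make sure the following namespaces exist on the cluster. `kubectl create namespace` accepts one name per invocation, so create them one at a time:

$ kubectl create namespace karavi

$ kubectl create namespace dell-csm

$ kubectl create namespace dell-connectivity-client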
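If the namespace step may be rerun, for example when re-onboarding a cluster, a small wrapper can skip namespaces that already exist. This is a minimal sketch; `ensure_namespaces` is a hypothetical helper name, not part of kubectl or the Dell tooling.

```shell
# Sketch: create each namespace only if it is missing, so reruns are harmless.
# ensure_namespaces is a hypothetical helper name, not part of kubectl.
ensure_namespaces() {
  for ns in "$@"; do
    if kubectl get namespace "$ns" >/dev/null 2>&1; then
      echo "namespace/$ns already exists"
    else
      kubectl create namespace "$ns"
    fi
  done
}

# Usage:
#   ensure_namespaces karavi dell-csm dell-connectivity-client
```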

You can get the custom resource definition (CRD) YAML file to install the Dell Connectivity Client resource from the CSM Operator GitHub repo. Once you have the YAML file you can install the client service as follows:

$ kubectl apply -f dcm-client.yml

Verify the installation. The output should look similar to the following:

$ kubectl get pods -n dell-connectivity-client

NAME READY STATUS RESTARTS AGE

dell-connectivity-client-0 3/3 Running 0 70s
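In a script, polling the pod list by hand can be replaced with `kubectl wait`. The sketch below assumes the pod keeps the name shown in the sample output above; the helper name and the 120-second default timeout are illustrative choices.

```shell
# Sketch: block until the client pod reports Ready, or fail after a timeout.
# The pod name comes from the sample output above; the 120s default is an assumption.
wait_for_connectivity_client() {
  kubectl wait --for=condition=Ready pod/dell-connectivity-client-0 \
    --namespace dell-connectivity-client \
    --timeout="${1:-120s}"
}

# Usage:
#   wait_for_connectivity_client        # default timeout
#   wait_for_connectivity_client 300s   # longer timeout for slow image pulls
```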

Note: If you remove a cluster, you need to reinstall the Dell Connectivity Client before you onboard it again.

License for the cluster

On the License tab, you can add the different licenses that you have using APEX Navigator for Kubernetes. You will be assigning one of these licenses to the cluster once connected.

Connect to cluster

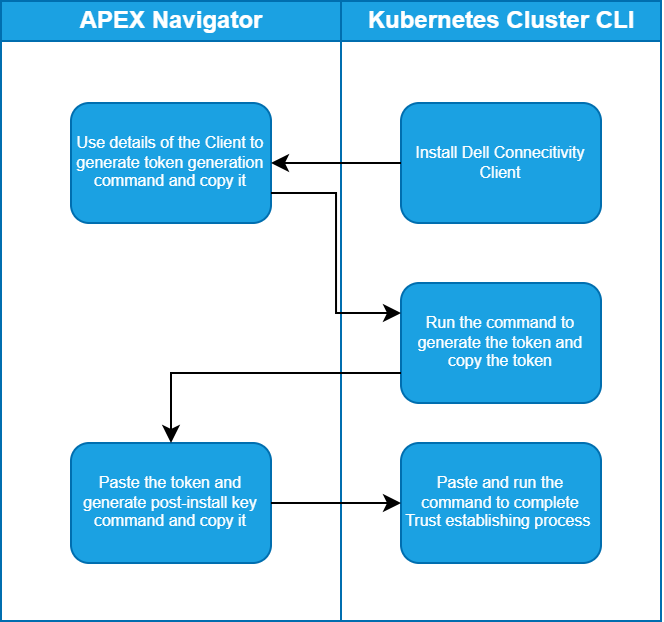

Once you have the CSM Operator and Dell Connectivity Client running on the cluster, you can connect to the cluster from the APEX Navigator UI. Here are the steps involved in establishing trust between the APEX Navigator and the Kubernetes cluster to onboard the cluster.

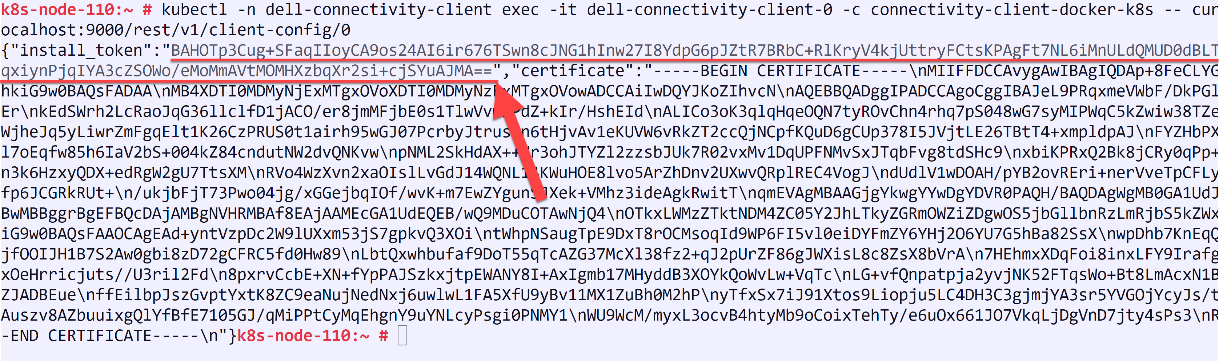

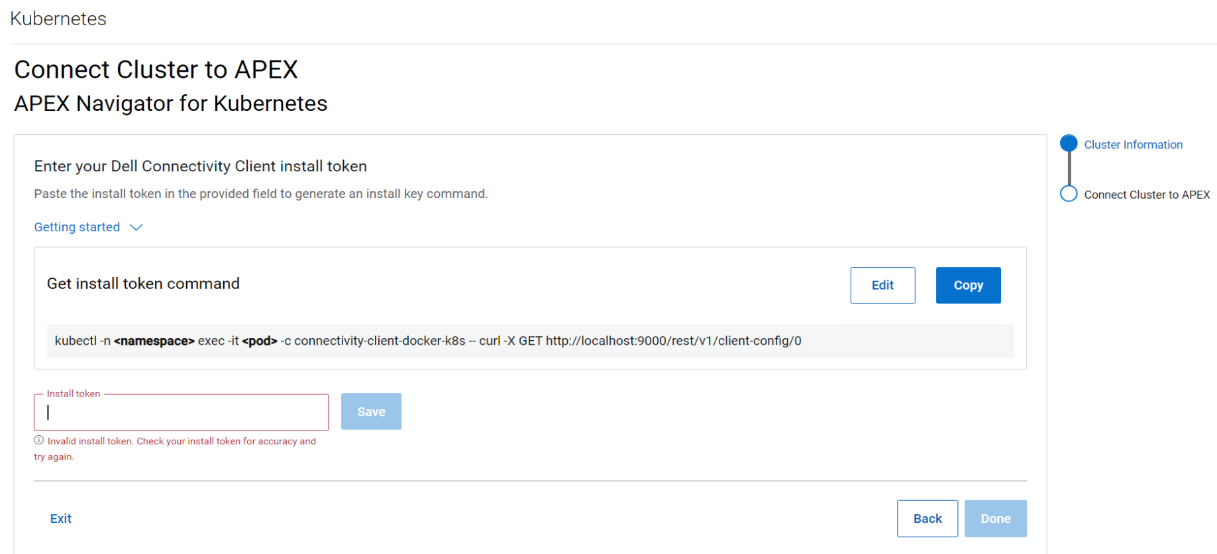

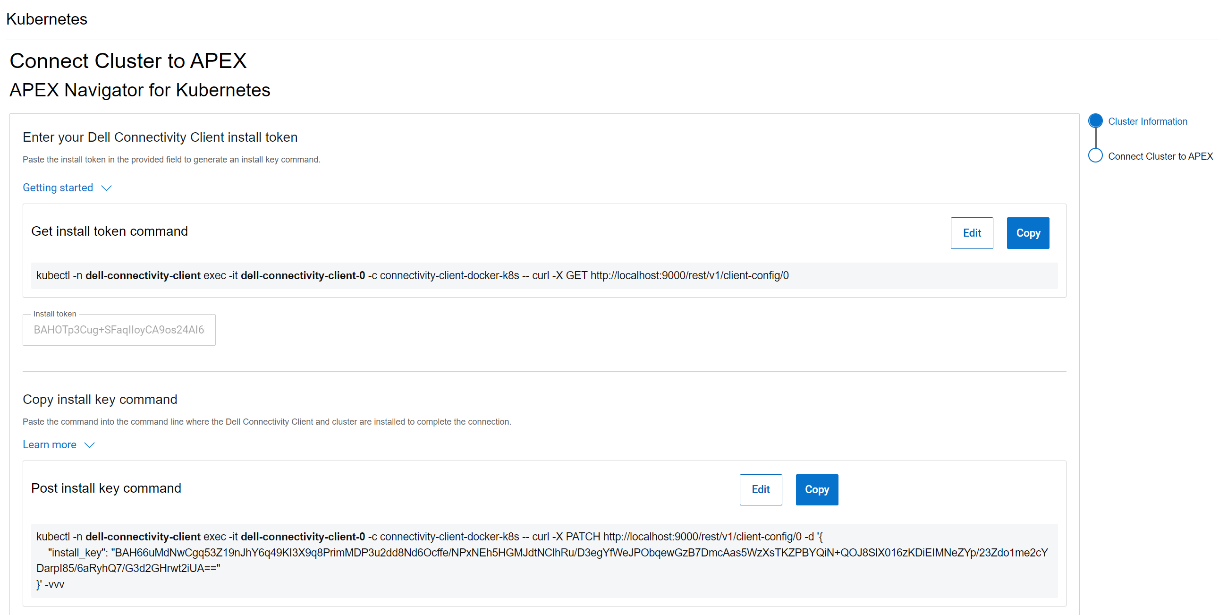

Follow the instructions on the UI to create the command that you need to run on your cluster to generate a token and then copy the token (underlined in the figure below) and paste it in the Install token field:

After this step, another command is generated that needs to be run on the cluster to complete the trusted connection process, as shown in the following figure:

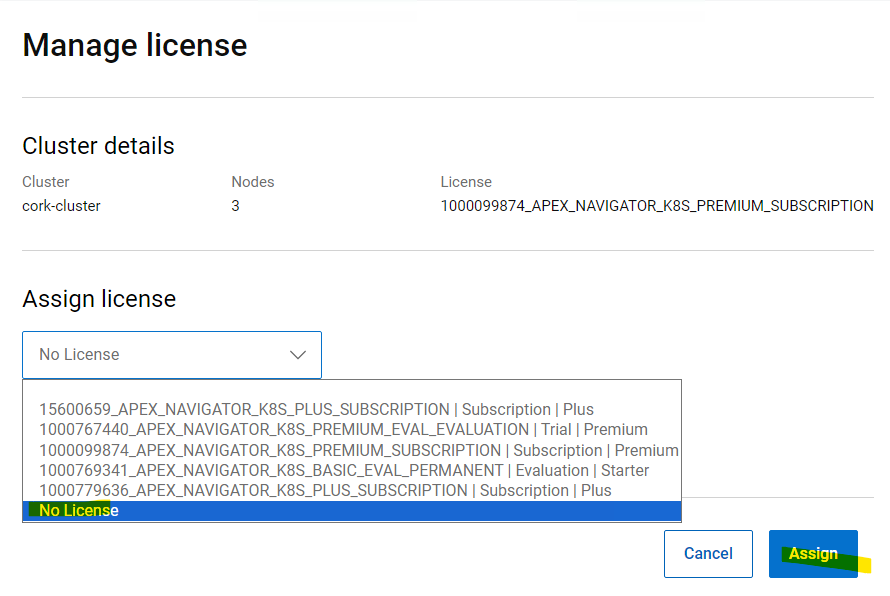

License for the cluster

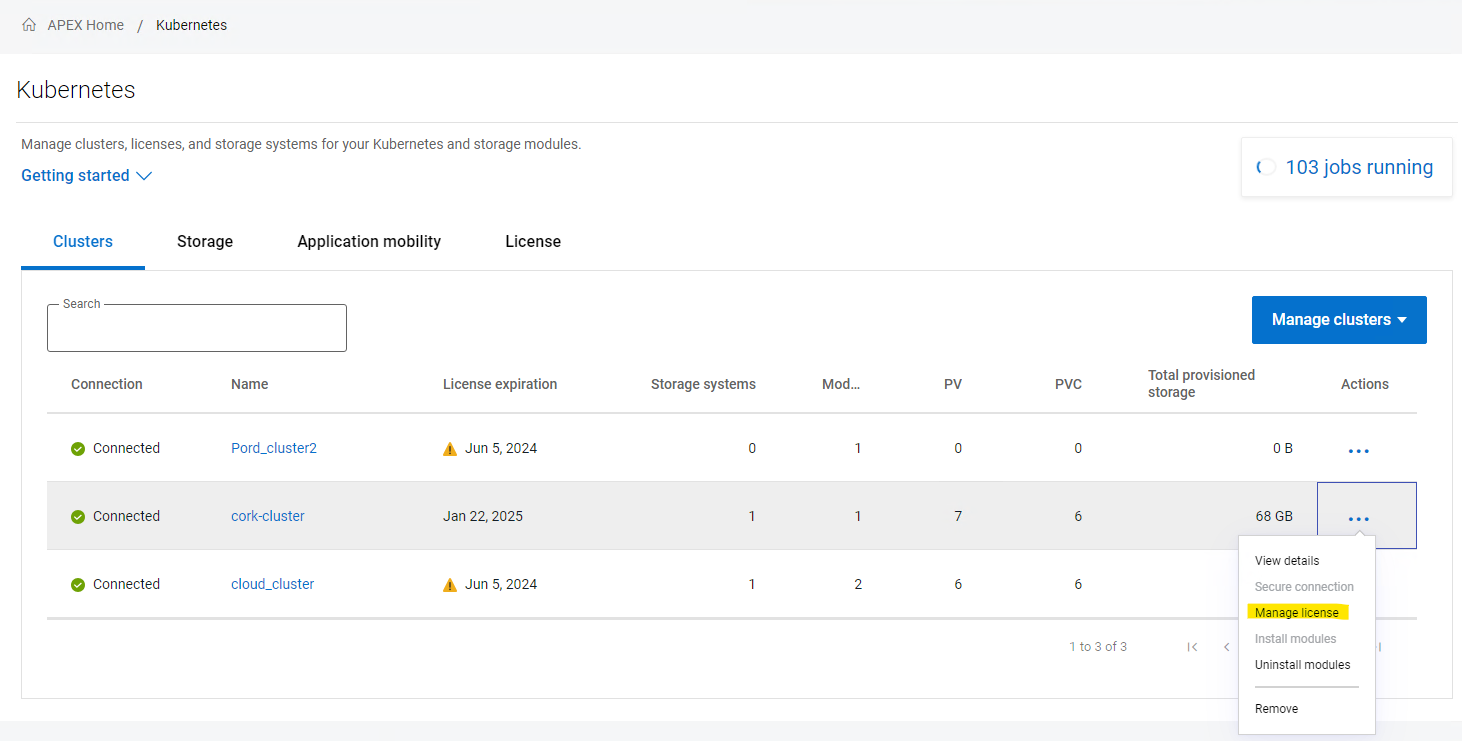

Once the cluster successfully connects, it may still be listed in grey, indicating that it requires a license. Click the ellipsis (…) button under the Actions column, on the right-hand side of the cluster row, and select “Manage license”. In the License selection dialog, select the license that you want to assign to the cluster. This step completes the onboarding of the cluster.

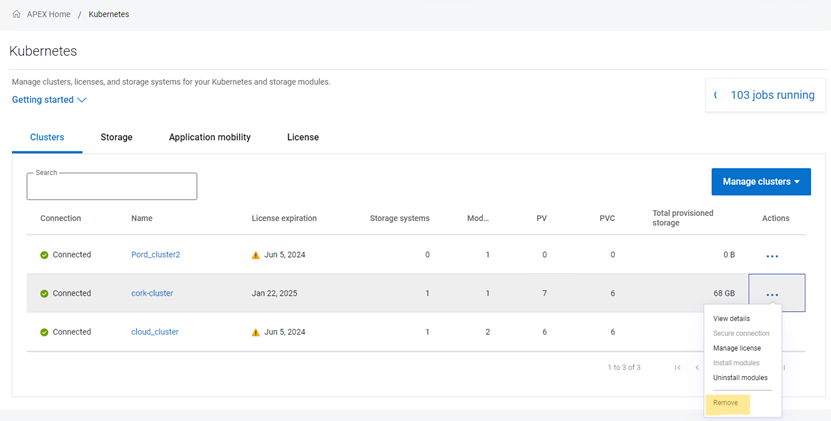

Removing a cluster

Following are the steps to remove a Kubernetes cluster from APEX Navigator for Kubernetes:

1. Uninstall all modules:

2. Unassign the license

3. Remove the cluster from the interface.

4. Uninstall the connectivity client on your cluster:

kubectl delete -n dell-connectivity-client apexconnectivityclients --all

After these four steps, the cluster is cleared of all Dell CSI, CSM, and APEX Navigator resources.

Multi cloud Storage support

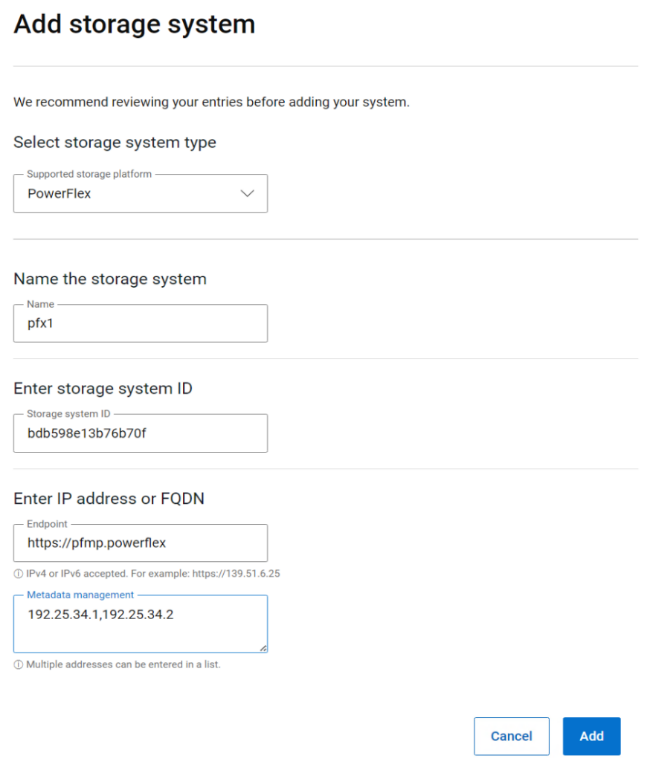

APEX Navigator for Kubernetes supports both on-prem and on-cloud Dell storage platforms. On-prem storage systems can be added using a simple dialog as shown below:

APEX Block storage on AWS

If you would like to use APEX Block Storage on AWS, make sure you have the required licenses for APEX Navigator for Storage and have onboarded your AWS account onto the APEX Navigator platform. You can deploy an APEX Block Storage cluster on AWS with just a few clicks (watch this demo video on YouTube) and start using the cloud storage for Kubernetes.

Authors:

Parasar Kodati, Engineering Technologist, Dell ISG

Florian Coulombel, Engineering Technologist, Dell ISG

Introducing APEX Navigator for Kubernetes: Batch deployment of CSMs

Mon, 20 May 2024 17:00:00 -0000

This is part 2 of the three-part blog post series introducing Dell APEX Navigator for Kubernetes. In this post, we will cover batch deployment of CSMs on any number of onboarded Kubernetes clusters.

A major advantage of using APEX Navigator for Kubernetes is the ability to deploy multiple CSMs onto multiple Kubernetes clusters which consume storage from different Dell storage systems (including Dell APEX Block Storage on AWS). Multiple install jobs are simultaneously launched on the clusters to enable parallel installation which saves time and effort for admins managing storage for a growing Kubernetes footprint. Let us see how this can be achieved.

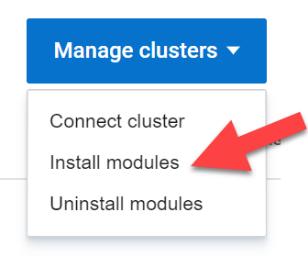

From the Clusters tab click on Manage Clusters and select Install modules:

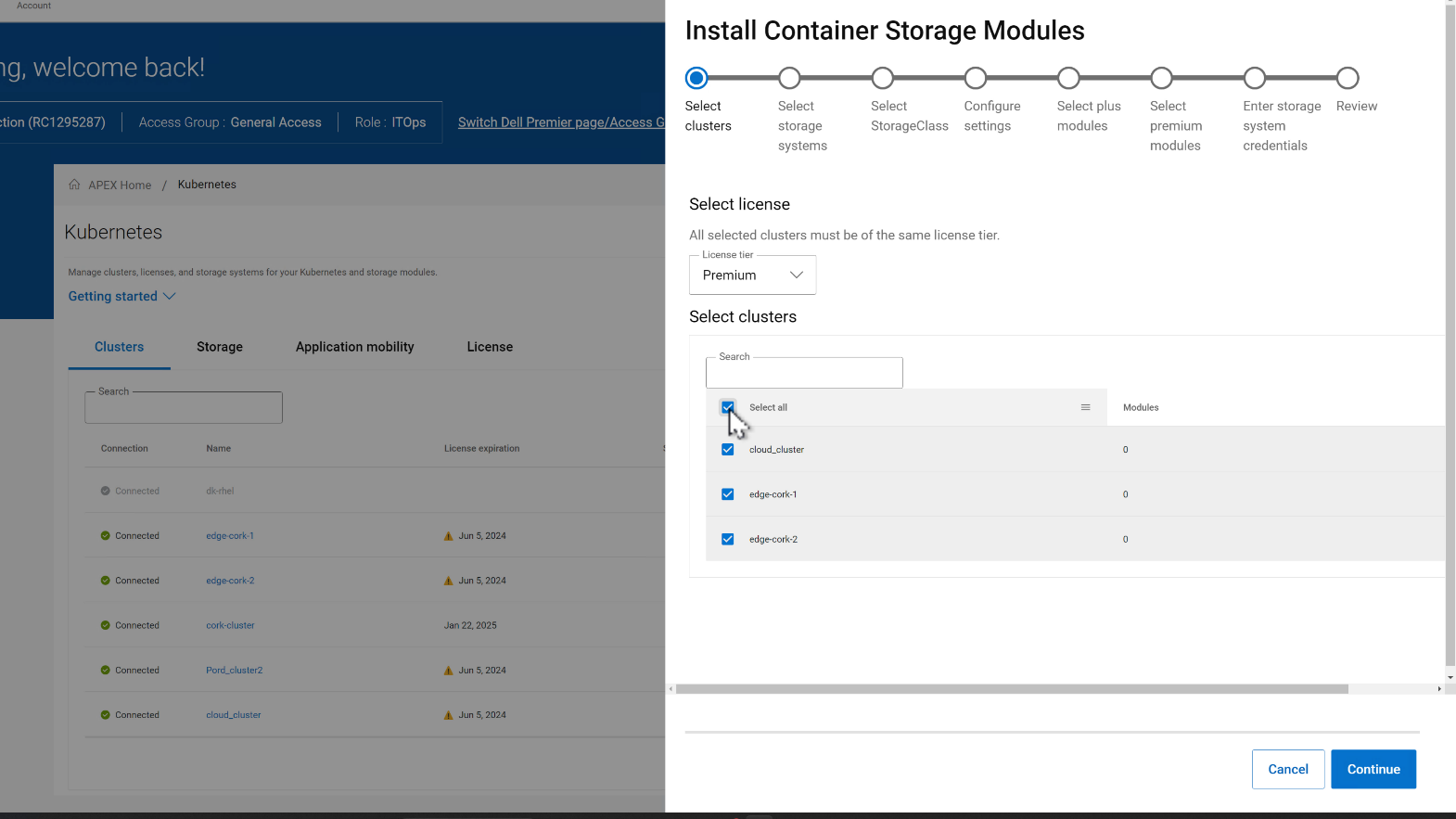

This launches the Module Installation wizard, where you can install specific Dell Container Storage Modules, and components such as the SDC client for PowerFlex storage, on an entire set of clusters. This ensures that the same storage class and other configuration parameters are used across all the clusters for consistency and standardization. In the first release of APEX Navigator for Kubernetes, only the Observability, Authorization, and Application Mobility CSMs are supported. More services will be added over time.

In the CSM deployment wizard, the first step is to select all the clusters where the CSMs need to be installed.

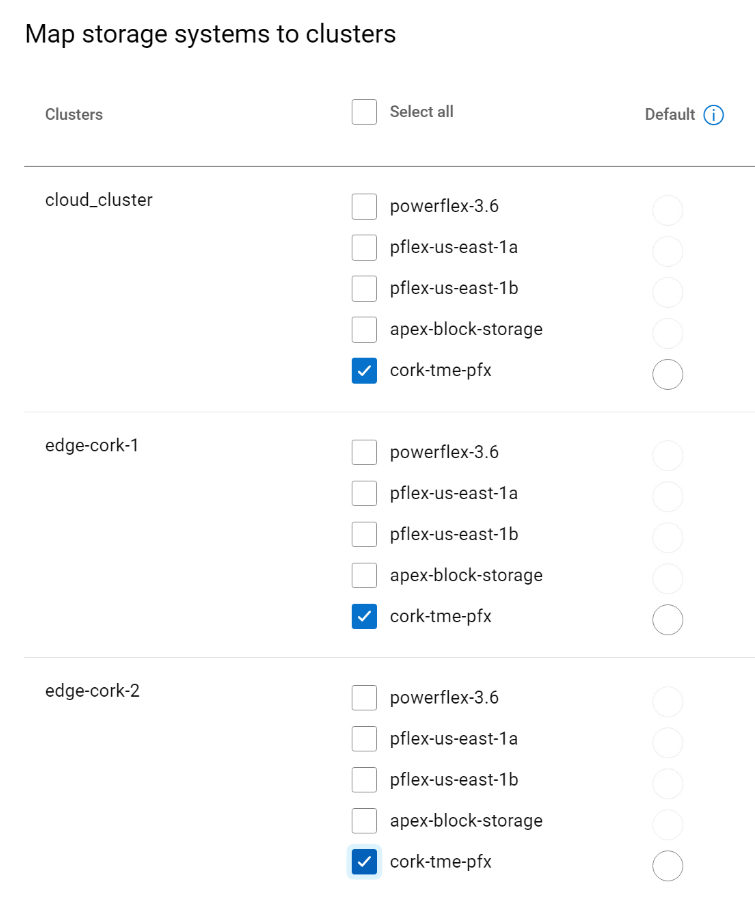

Then, you can select the storage systems for each of the clusters. In the figure below, the selected clusters are sharing the same storage.

In the next step, the Storage class is set for each cluster pair:

- Select the CSMs to install under Plus and Premium categories.

- Enter storage credentials for the storage platforms.

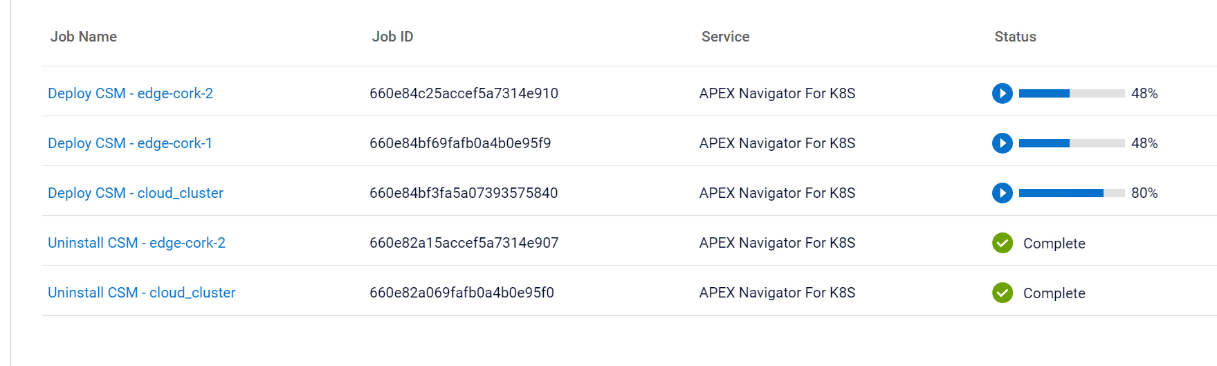

On the summary page of the wizard, you can review the install configurations and click Install to start the installation process. You can track the multiple parallel install jobs on multiple clusters:

Authors:

Parasar Kodati, Engineering Technologist, Dell ISG

Florian Coulombel, Engineering Technologist, Dell ISG

Introducing APEX Navigator for Kubernetes: Application Mobility

Mon, 20 May 2024 17:00:00 -0000

This is part 3 of the three-part blog series introducing Dell APEX Navigator for Kubernetes.

Application Mobility Overview

Data and application mobility is an essential element in maintaining the required availability and service level for a given application. From a workload standpoint, the application needs to have a redundant instance at a target site that can be used as a failover instance. For this to work for stateful applications, we need to ensure data availability on the target site. Data mobility can be achieved in two ways:

- Continuous replication at the storage level using the Replication Container Storage Module

- Point-in-time host-based backup using the Application Mobility Container Storage Module

The Replication Container Storage Module for Dell storage platforms orchestrates data replication using the storage platform’s native replication capabilities. The Application Mobility module, on the other hand, uses the host-based backup approach. While both approaches work for Dell storage platforms through the command line interface, the first release of the APEX Navigator for Kubernetes user interface supports only the host-based backup functionality provided by the Application Mobility module.

The following are the prerequisites for application mobility:

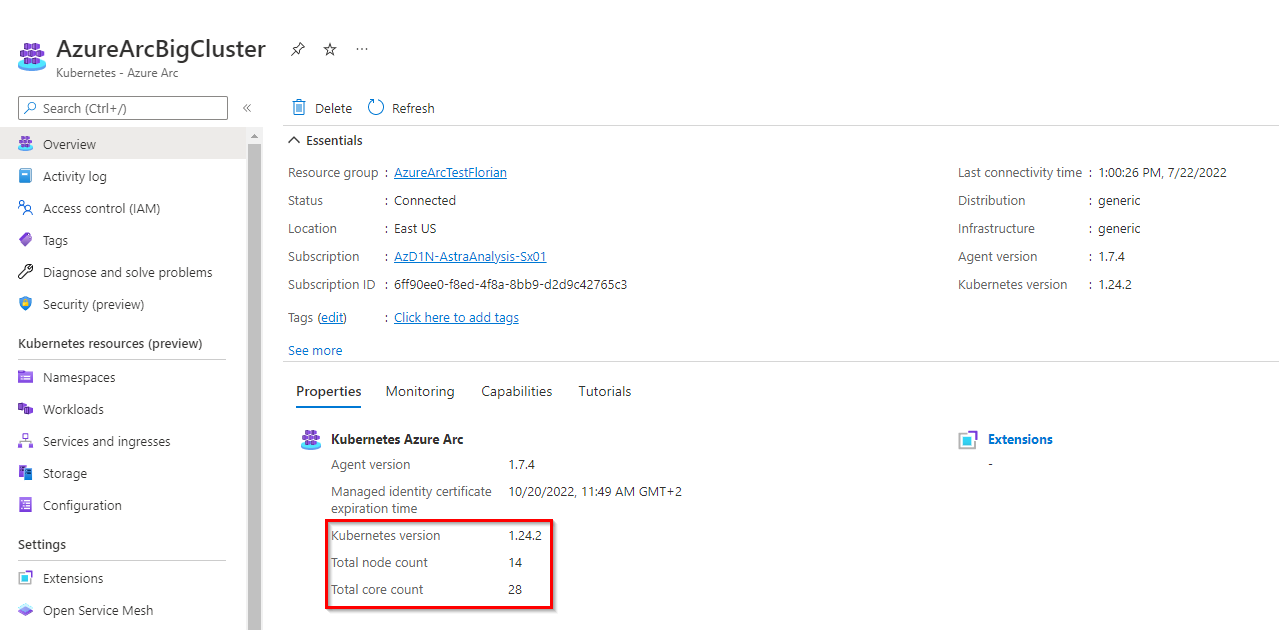

- Source and target K8s clusters onboarded and Application Mobility CSM installed.

- Source and target Dell storage systems onboarded.

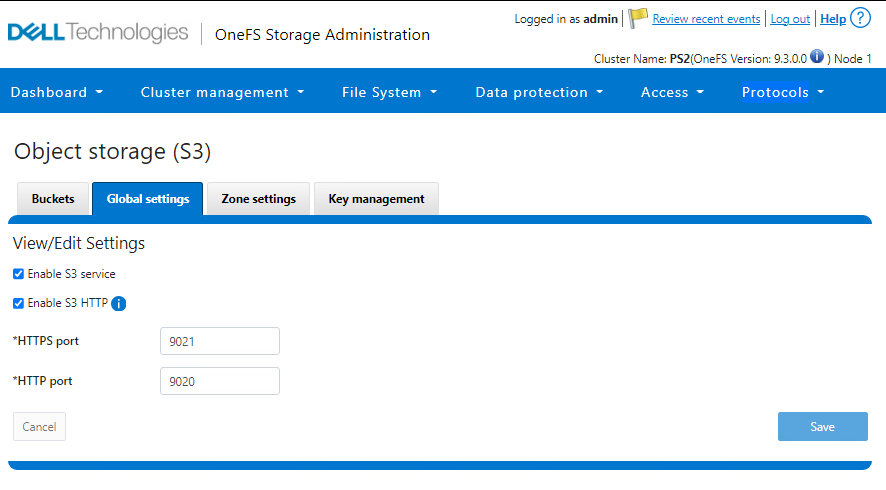

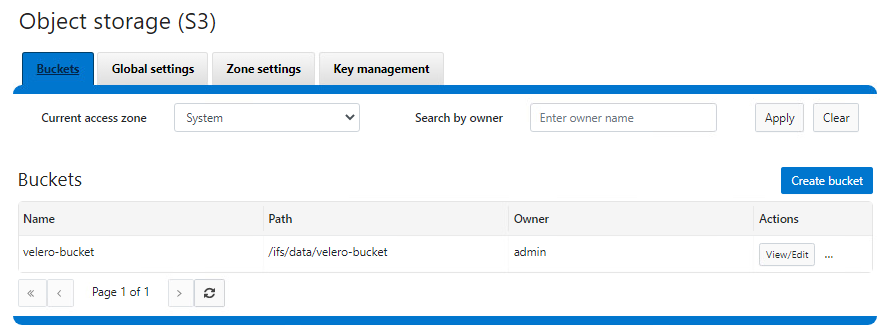

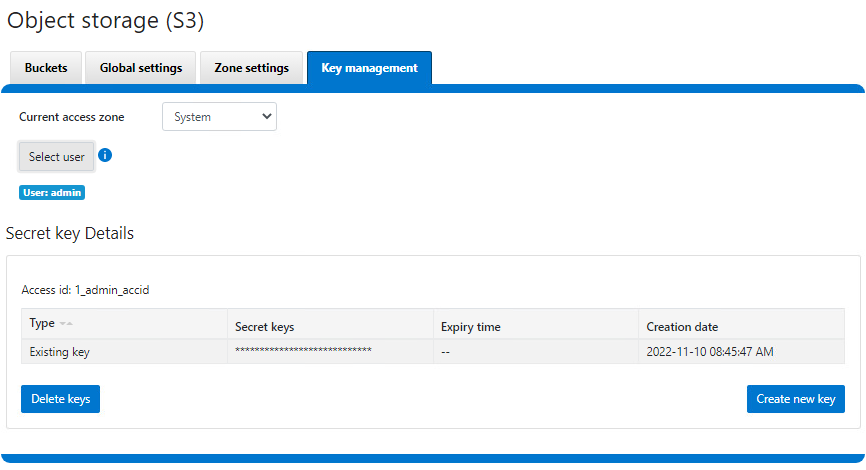

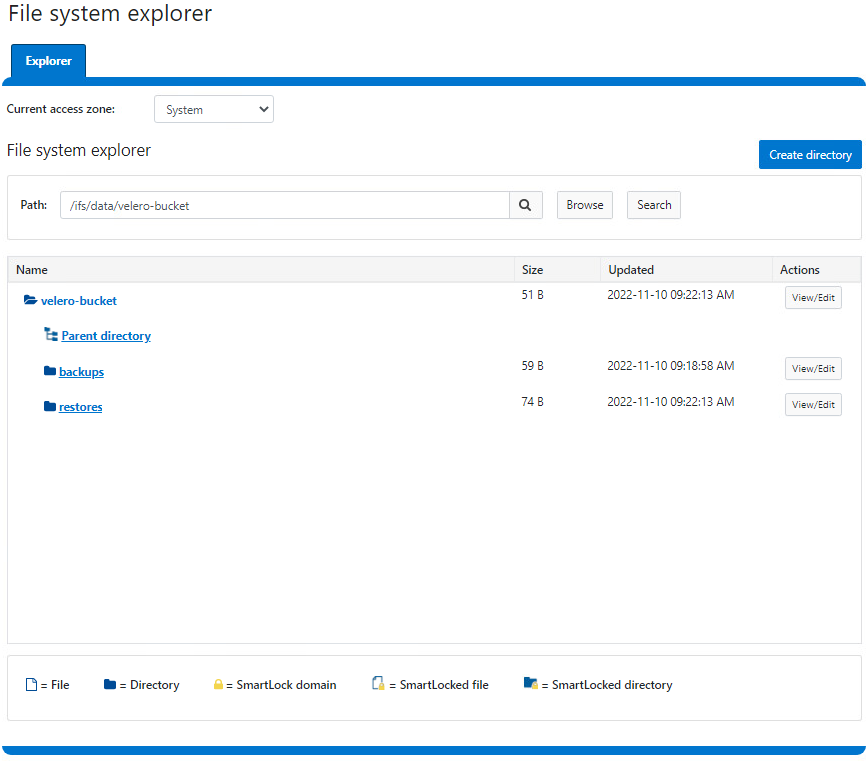

- S3 Object Store accessible from source and target for the intermediate backup copy of the application data

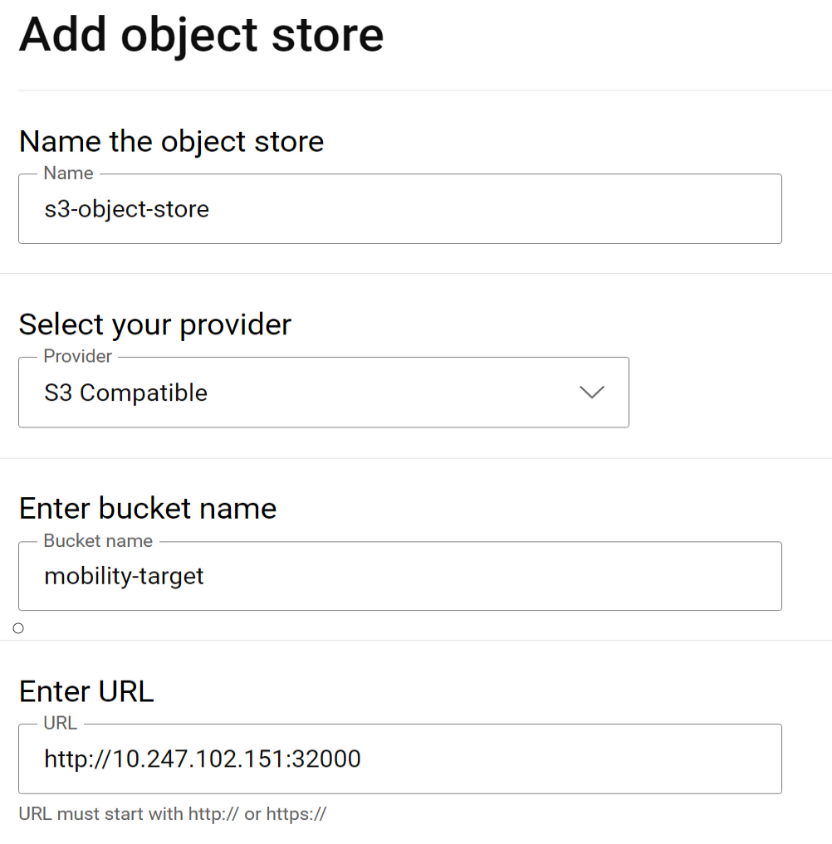

Adding Object Store

We already covered how to connect clusters and storage in previous sections. Let us see how to set up the S3 Object Store within the APEX Navigator for Kubernetes.

Navigate to the Storage tab and click on the “Add object store” button. This launches a dialog to add the details of the Object store:

If using Amazon Web Services (AWS) S3 for the object store, the region on the Kubernetes backup storage location object needs to be updated prior to creating a clone. On each Kubernetes cluster where Application Mobility is installed, run this command to update the region:

kubectl patch backupstoragelocation/default -n dell-csm --type='merge' -p '{"spec":{"config":{"region":"<region-name>"}}}'
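Because the patch has to be repeated on every cluster where Application Mobility is installed, it can be wrapped in a loop over kubeconfig contexts. This is a sketch under the assumption that each cluster is reachable through its own context; `patch_bsl_region` and the context names in the usage note are hypothetical.

```shell
# Sketch: apply the region patch on several clusters, one kubeconfig context each.
# patch_bsl_region and the context names are hypothetical; adjust to your kubeconfig.
patch_bsl_region() {
  region=$1
  shift
  for ctx in "$@"; do
    kubectl --context "$ctx" patch backupstoragelocation/default \
      -n dell-csm --type=merge \
      -p "{\"spec\":{\"config\":{\"region\":\"$region\"}}}"
  done
}

# Usage:
#   patch_bsl_region us-east-1 source-cluster target-cluster
```

The region is passed once and reused for every context, which keeps the source and target clusters pointed at the same bucket region.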

Application Mobility definition

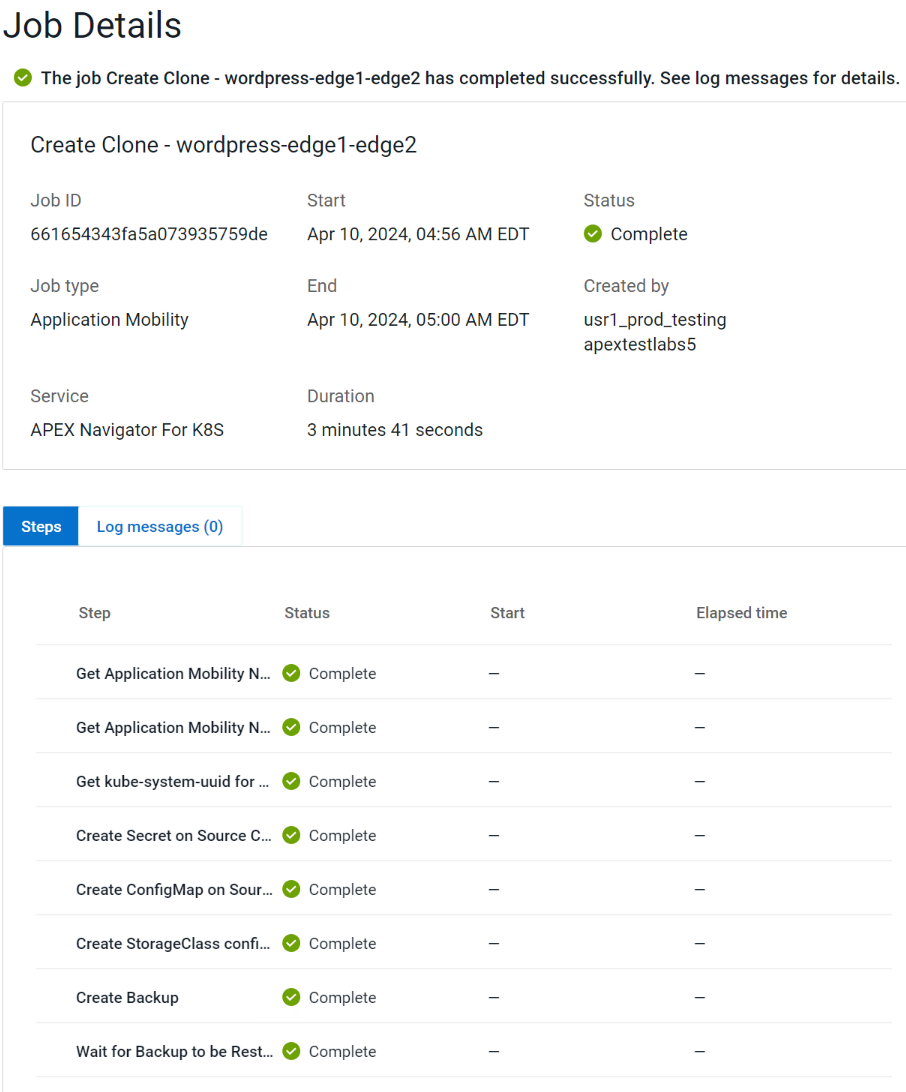

To start an Application Mobility job, go to the Application Mobility tab and click “Create Clone”. This launches a wizard that takes you through the following steps:

- Specify source cluster and the namespace of the application to be moved.

- Specify target cluster and the namespace.

- Specify the Object store details to be used for the intermediate backup copy.

- Review details and click Create clone to start the mobility job.

You can track mobility jobs under the Jobs section, as shown below:

Authors:

Parasar Kodati, Engineering Technologist, Dell ISG

Florian Coulombel, Engineering Technologist, Dell ISG

For the Year 2022: Ansible Integration Enhancements for the Dell Infrastructure Solutions Portfolio

Mon, 29 Apr 2024 19:20:40 -0000

The Dell infrastructure portfolio spans the entire hybrid cloud, from storage to compute to networking, and all the software functionality to deploy, manage, and monitor different application stacks from traditional databases to containerized applications deployed on Kubernetes. When it comes to integrating the infrastructure portfolio with 3rd party IT Operations platforms, Ansible is at the top of the list in terms of expanding the scope and depth of integration.

Here is a summary of the enhancements we made to the various Ansible modules across the Dell portfolio in 2022:

- Ansible plugin for PowerStore had four different releases (1.5, 1.6, 1.7, and 1.8) with the following capabilities:

- New modules:

- dellemc.powerstore.ldap_account – To manage LDAP account on Dell PowerStore

- dellemc.powerstore.ldap_domain - To manage LDAP domain on Dell PowerStore

- dellemc.powerstore.dns - To manage DNS on Dell PowerStore

- dellemc.powerstore.email - To manage email on Dell PowerStore

- dellemc.powerstore.ntp - To manage NTP on Dell PowerStore

- dellemc.powerstore.remote_support – To manage remote support: get the details, modify the attributes, verify the connection, and send a test alert

- dellemc.powerstore.remote_support_contact – To manage remote support contact on Dell PowerStore

- dellemc.powerstore.smtp_config – To manage SMTP config

- Added support for the host connectivity option to host and host group

- Added support for cluster creation and validating cluster creation attributes

- Data operations:

- Added support to clone, refresh, and restore a volume

- Added support to configure/remove the metro relationship for a volume

- Added support to modify the role of replication sessions

- Added support to clone, refresh, and restore a volume group

- File system:

- Added support to associate/disassociate a protection policy to/from a NAS server

- Added support to handle filesystem and NAS server replication sessions

- Ansible execution:

- Added an execution environment manifest file to support building an execution environment with Ansible Builder

- Enabled check_mode support for Info modules

- Updated modules to adhere to Ansible community guidelines

- The Info module is enhanced to list DNS servers, email notification destinations, NTP servers, remote support configuration, remote support contacts and SMTP configuration, LDAP domain, and LDAP accounts.

- Visit this GitHub page to go through release history: https://github.com/dell/ansible-powerstore/blob/main/CHANGELOG.rst

- Ansible plugin for PowerFlex had four different releases (1.2, 1.3, 1.4, and 1.5) with the following capabilities:

- New modules:

- dellemc.powerflex.replication_consistency_group – To manage replication consistency groups on Dell PowerFlex

- dellemc.powerflex.mdm_cluster – To manage an MDM cluster on Dell PowerFlex

- dellemc.powerflex.protection_domain – To manage a Protection Domain on Dell PowerFlex

- The info module is enhanced to support listing the replication consistency groups, volumes, and storage pools with the statistics data.

- Storage management:

- The storage pool module is enhanced to get the details with the statistics data.

- The volume module is enhanced to get the details with the statistics data.

- Ansible execution:

- Added an execution environment manifest file to support building an execution environment with Ansible Builder

- Enabled check_mode support for the Info module

- Updated modules to adhere to Ansible community guidelines

- Visit this GitHub page to go through release history: https://github.com/dell/ansible-powerflex/blob/main/CHANGELOG.rst

- The Ansible plugin for PowerMax had four different releases (1.7, 1.8, 2.0 and 2.1) with the following capabilities:

- New module: dellemc.powermax.initiator – To manage initiators

- Host operations:

- Added case-insensitive handling of the host WWN to the host and masking view modules.

- Enhanced the host module to add or remove initiators to or from the host using an alias.

- Data operations:

- Enhanced storage group module to support

- Moving volumes to destination storage groups.

- Making volume name an optional parameter while adding a new volume to a storage group.

- Setting host I/O limits for existing storage groups and added the ability to force move devices between storage groups with SRDF protection.

- Enhanced volume module to support

- A cylinders option to specify size while creating a LUN, and added the ability to create volumes with identifier_name and volume_id.

- Renaming volumes that were created without a name.

- Enhanced the RDF group module to get volume pair information for an SRDF group.

- Added an execution environment manifest file to support building an execution environment with Ansible Builder

- Added rotating file handler for log files

- Enhanced the info module to list the initiators, get volume details and masking view connection information

- Enhanced the verifycert parameter in all modules to support file paths for custom certificate location.

- Renamed items:

- Names of previously released modules have been changed from dellemc_powermax_<module name> to <module name>

- The Gatherfacts module is renamed to Info

- Renamed metro DR module input parameters

- Visit this GitHub page to go through release history: https://github.com/dell/ansible-powermax/blob/main/CHANGELOG.rst

- Ansible plugin for PowerScale had four different releases (1.5, 1.6, 1.7, and 1.8) with the following capabilities:

- New modules:

- dellemc.powerscale.nfs_alias – To manage NFS aliases on a PowerScale

- dellemc.powerscale.filepoolpolicy – To manage the file pool policy on PowerScale

- dellemc.powerscale.storagepooltier – To manage storage pool tiers on PowerScale

- dellemc.powerscale.networksettings – To manage Network settings on PowerScale

- dellemc.powerscale.smartpoolsettings – To manage Smartpool settings on PowerScale

- Security support:

- Support for security flavors while creating and modifying NFS export.

- Access Zone, SMB, SmartQuota, User and Group modules are enhanced to support the NIS authentication provider.

- The Filesystem module is enhanced to support ACL and container parameters.

- The ADS module is enhanced to support the machine_account and organizational_unit parameters while creating an ADS provider.

- File management:

- The Info module is enhanced to support the listing of NFS aliases.

- Support to create and modify additional parameters of an SMB share in the SMB module.

- Support for recursive force deletion of filesystem directories.

- Ansible execution

- Added an execution environment manifest file to support building an execution environment with Ansible Builder.

- Check mode is supported for the Info, Filepool Policy, and Storagepool Tier modules.

- Added rotating file handlers for log files.

- Removal of the dellemc_powerscale prefix from all module names.

- Other module enhancements:

- The SyncIQ Policy module is enhanced to support accelerated_failback and restrict_target_network of a policy.

- The Info module is enhanced to support NodePools and Storagepool Tiers Subsets.

- The SmartQuota module is enhanced to support container parameter and float values for Quota Parameters.

- Visit this GitHub page to go through release history: https://github.com/dell/ansible-powerscale/blob/main/CHANGELOG.rst

- The Ansible plugin for Dell OpenManage had 13 releases this year, some of which were major releases. Here is a brief summary:

- v7.1: Support for retrieving smart fabric and smart fabric uplink information, support for IPv6 addresses for OMSDK dependent iDRAC modules, and OpenManage Enterprise inventory plugin.

- v7.0: Rebranded from Dell EMC to Dell, enhanced the idrac_firmware module to support proxy, and added support to retrieve iDRAC local user details.

- v6.3: Support for the LockVirtualDisk operation and to configure Remote File Share settings using the idrac_virtual_media module.

- v6.2: Added support to clear pending BIOS attributes, reset BIOS to default settings, and configure BIOS attributes using Redfish in the idrac_bios module.

- v6.1: Support for device-specific operations on OpenManage Enterprise and configuring boot settings on iDRAC.

- v6.0: Added collection metadata for creating execution environments, deprecation of share parameters, and support for configuring iDRAC attributes using the idrac_attributes module.

- v5.5: Support to generate certificate signing request, import, and export certificates on iDRAC.

- v5.4: Enhanced the idrac_server_config_profile module to support export, import, and preview of the SCP configuration using Redfish and added support for check mode.

- v5.3: Added check mode support for redfish_storage_volume, idempotency for the ome_smart_fabric_uplink module, and support for debug logs added to ome_diagnostics.

- v5.2: Support to configure console preferences on OpenManage Enterprise.

- v5.1: Support for OpenManage Enterprise Modular server interface management.

- v5.0.1: Support to provide custom or organizational CA signed certificates for SSL validation from the environment variable.

- v5.0: HTTPS SSL support for all modules and quick deploy settings.

- Visit this GitHub page to go through release history: https://github.com/dell/dellemc-openmanage-ansible-modules/releases.

For all Ansible projects you can track the progress, contribute, or report issues on individual repositories.
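To pick up the releases summarized above, the collections can be upgraded from Ansible Galaxy. The sketch below assumes the collections are published under the `dellemc` namespace, as the module names in this post suggest (`dellemc.openmanage` is our assumption for the OpenManage collection name). The `GALAXY` variable and the helper name are illustrative.

```shell
# Sketch: upgrade the Dell collections discussed above from Ansible Galaxy.
# GALAXY can be overridden (for example GALAXY=echo) to preview the commands.
GALAXY="${GALAXY:-ansible-galaxy}"

upgrade_dell_collections() {
  for name in powerstore powerflex powermax powerscale openmanage; do
    "$GALAXY" collection install "dellemc.$name" --upgrade
  done
}

# Usage:
#   GALAXY=echo; upgrade_dell_collections   # dry run, prints the arguments
#   upgrade_dell_collections                # performs the upgrades
```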

You can also join our DevOps and Automation community at: https://www.dell.com/community/Automation/bd-p/Automation.

Happy New Year and happy upgrades!

Authors: Parasar Kodati and Florian Coulombel

Network Design for PowerScale CSI

Mon, 29 Apr 2024 18:55:46 -0000

Network connectivity is an essential part of any infrastructure architecture. When it comes to how Kubernetes connects to PowerScale, there are several options to configure the Container Storage Interface (CSI). In this post, we will cover the concepts and configuration you can implement.

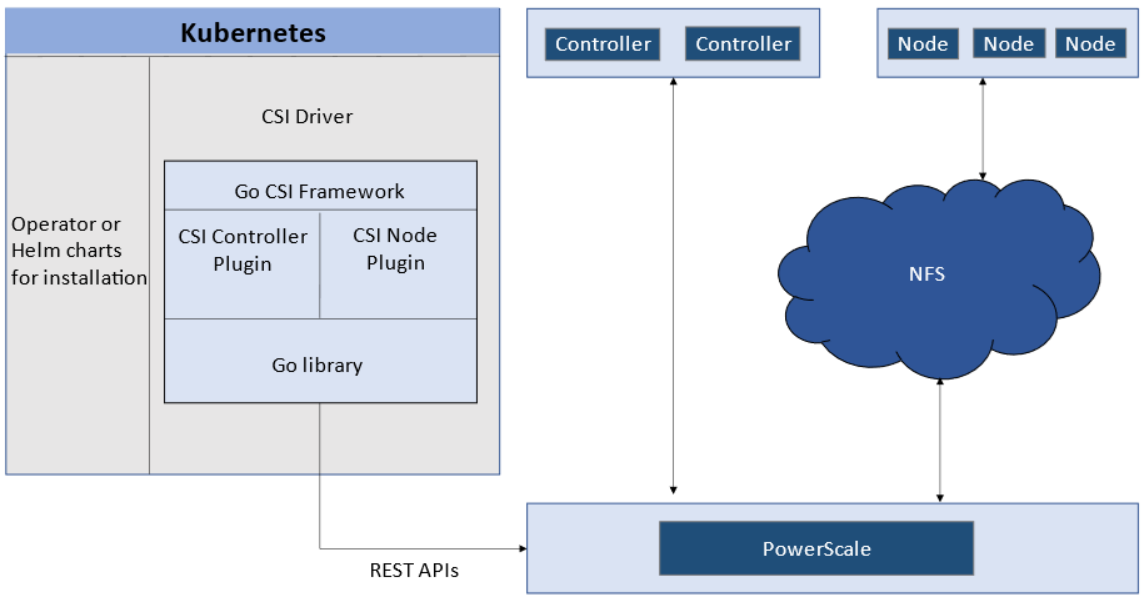

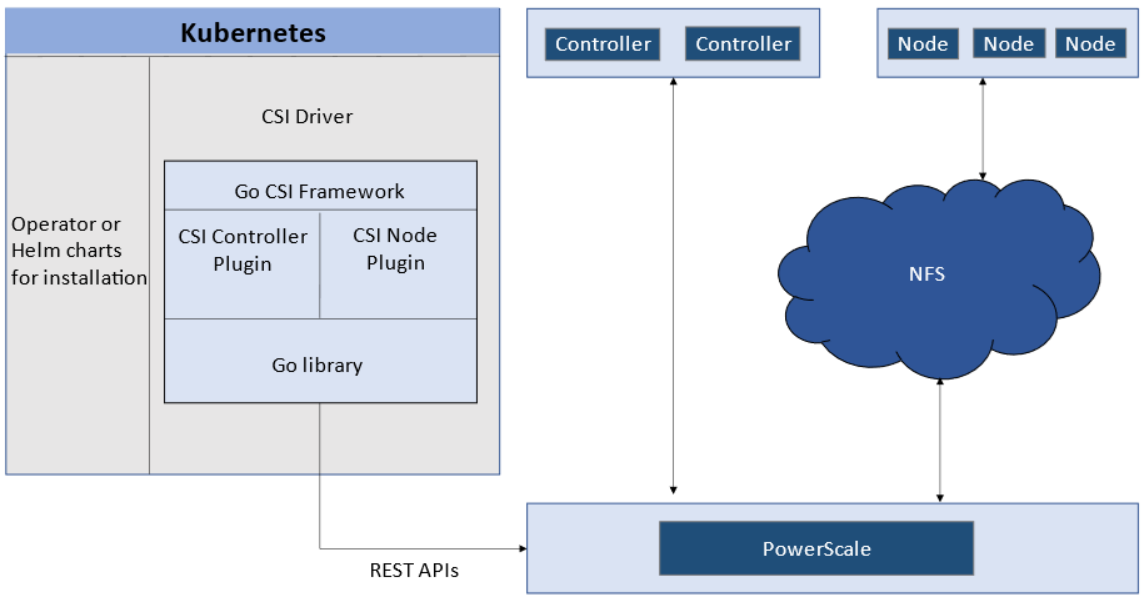

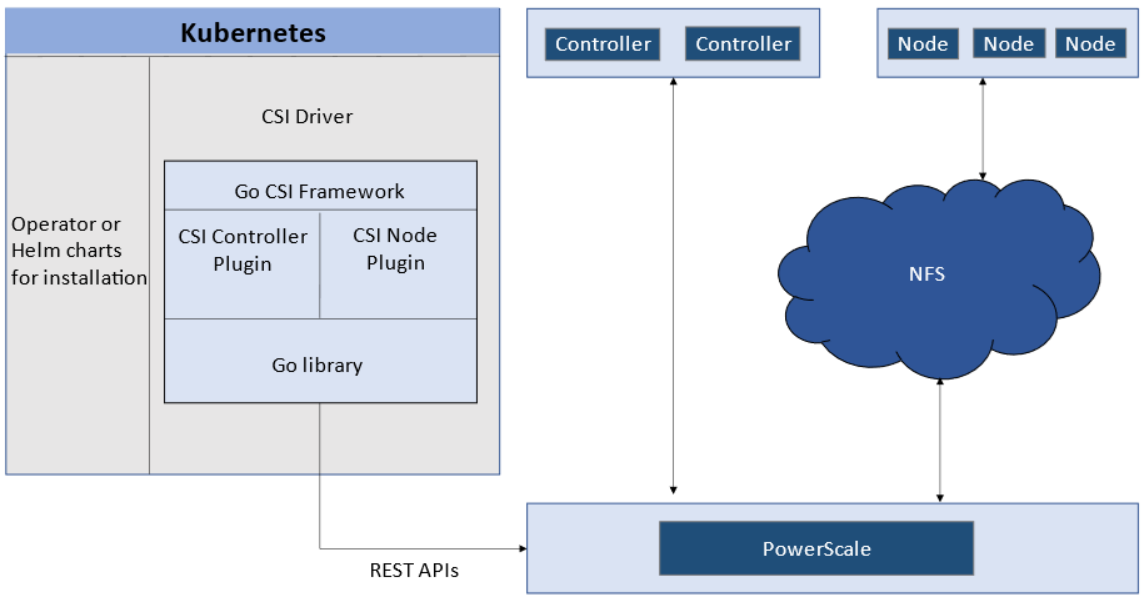

The story starts with CSI plugin architecture.

CSI plugins

Like all other Dell storage CSI drivers, PowerScale CSI follows the Kubernetes CSI standard by implementing functions in two components.

- CSI controller plugin

- CSI node plugin

The CSI controller plugin is deployed as a Kubernetes Deployment, typically with two or three replicas for high-availability, with only one instance acting as a leader. The controller is responsible for communicating with PowerScale, using Platform API to manage volumes (to PowerScale it’s to create/delete directories, NFS exports, and quotas), to update the NFS client list when a Pod moves, and so on.

A CSI node plugin is a Kubernetes DaemonSet, running on all nodes by default. It’s responsible for mounting the NFS export from PowerScale, to map the NFS mount path to a Pod as persistent storage, so that applications and users in the Pod can access the data on PowerScale.

Roles, privileges, and access zone

Because CSI needs to access both PAPI (PowerScale Platform API) and NFS data, a single user role typically isn’t secure enough: the role for PAPI access will need more privileges than normal users.

According to the PowerScale CSI manual, CSI requires a user that has the following privileges to perform all CSI functions:

| Privilege | Type |
| --- | --- |
| ISI_PRIV_LOGIN_PAPI | Read Only |
| ISI_PRIV_NFS | Read Write |
| ISI_PRIV_QUOTA | Read Write |
| ISI_PRIV_SNAPSHOT | Read Write |
| ISI_PRIV_IFS_RESTORE | Read Only |
| ISI_PRIV_NS_IFS_ACCESS | Read Only |
| ISI_PRIV_IFS_BACKUP | Read Only |

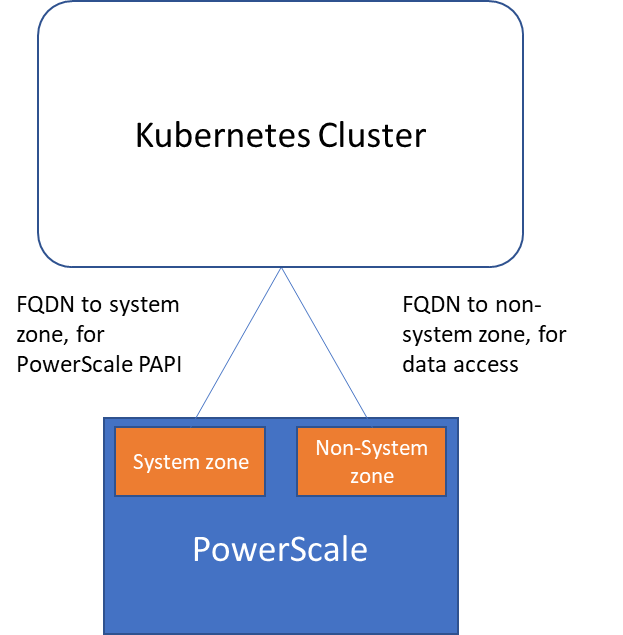

Among these privileges, ISI_PRIV_SNAPSHOT and ISI_PRIV_QUOTA are only available in the System zone. And this complicates things a bit. To fully utilize these CSI features, such as volume snapshot, volume clone, and volume capacity management, you have to allow the CSI to be able to access the PowerScale System zone. If you enable the CSM for replication, the user needs the ISI_PRIV_SYNCIQ privilege, which is a System-zone privilege too.

By contrast, there isn’t any specific role requirement for applications/users in Kubernetes to access data: the data is shared by the normal NFS protocol. As long as they have the right ACL to access the files, they are good. For this data accessing requirement, a non-system zone is suitable and recommended.

These two access zones are defined in different places in CSI configuration files:

- The PAPI access zone name (FQDN) needs to be set in the secret yaml file as “endpoint”, for example “f200.isilon.com”.

- The data access zone name (FQDN) needs to be set in the storageclass yaml file as “AzServiceIP”, for example “openshift-data.isilon.com”.
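As a rough illustration of the second setting, the helper below prints a StorageClass manifest whose `AzServiceIP` points at the data access zone. This is a sketch only: the provisioner name and the `AccessZone` parameter follow the driver's sample storage classes as we understand them, so verify them against your driver version. The function name and all values are placeholders.

```shell
# Sketch: print a StorageClass manifest that points data traffic at the
# non-System access zone. All values passed in are placeholders, and the
# provisioner/parameter names should be checked against your driver version.
render_storageclass() {
  cat <<EOF
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: $1
provisioner: csi-isilon.dellemc.com
parameters:
  AccessZone: "$2"
  AzServiceIP: "$3"
EOF
}

# Usage (review the output before piping it to `kubectl apply -f -`):
#   render_storageclass powerscale-data data-zone openshift-data.isilon.com
```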

If an admin really cannot expose their System zone to the Kubernetes cluster, they have to disable the snapshot and quota features in the CSI installation configuration file (values.yaml). In this way, the PAPI access zone can be a non-System access zone.

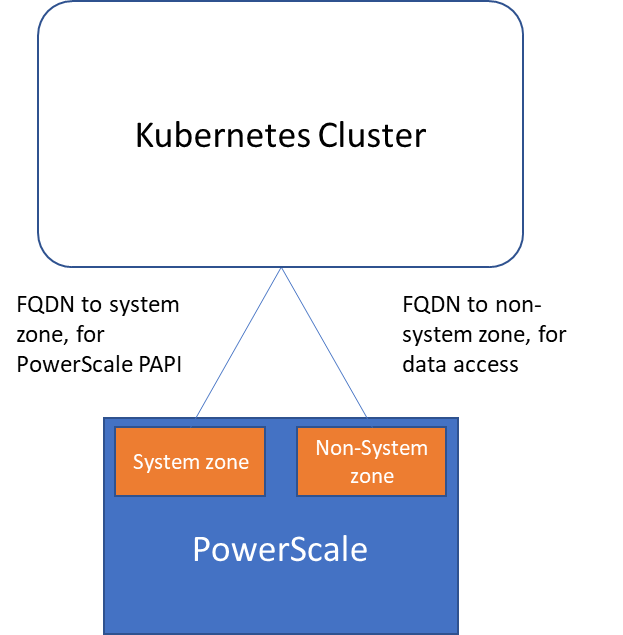

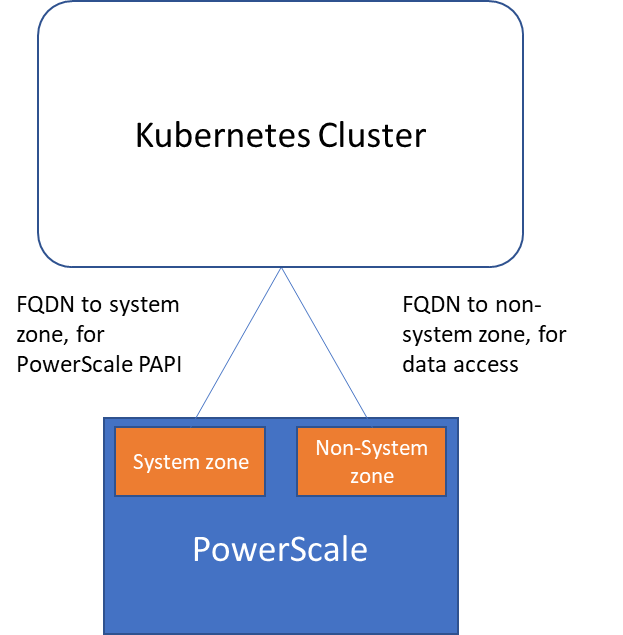

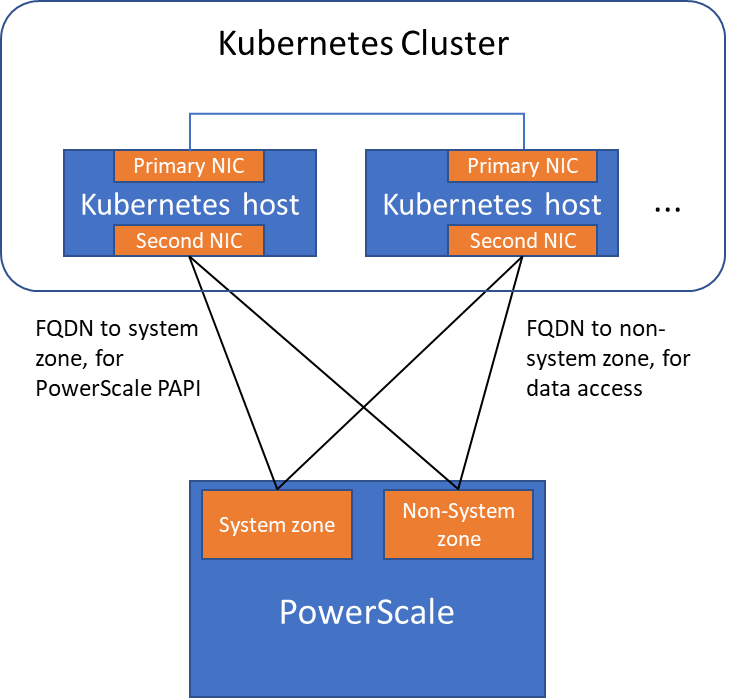

The following diagram shows how the Kubernetes cluster connects to PowerScale access zones.

Network

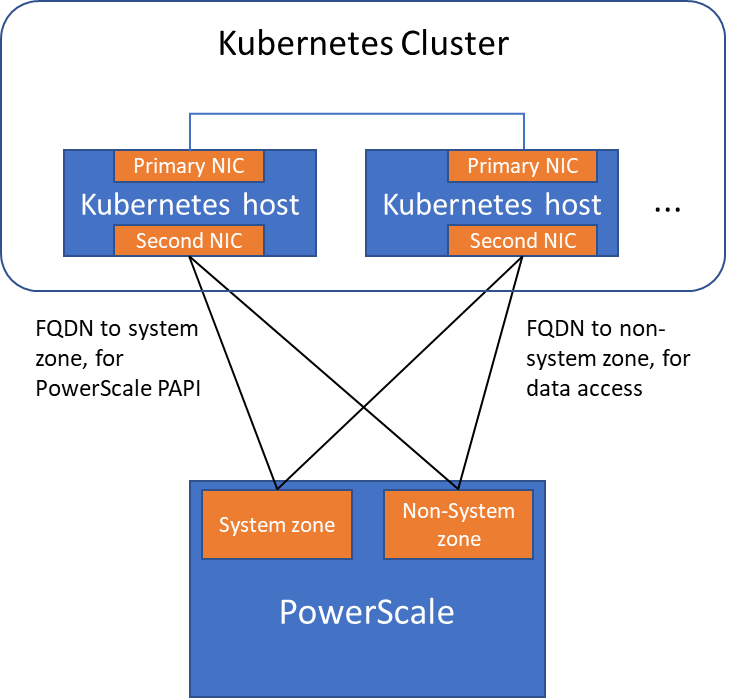

Normally a Kubernetes cluster comes with many networks: a pod inter-communication network, a cluster service network, and so on. Luckily, the PowerScale network doesn’t have to join any of them. The CSI pods can access a host’s network directly, without going through the Kubernetes internal network. This also has the advantage of providing a dedicated high-performance network for data transfer.

For example, on a Kubernetes host, there are two NICs: IP 192.168.1.x and 172.24.1.x. NIC 192.168.1.x is used for Kubernetes, and is aligned with its hostname. NIC 172.24.1.x isn’t managed by Kubernetes. In this case, we can use NIC 172.24.1.x for data transfer between Kubernetes hosts and PowerScale.

By default, the CSI driver uses the IP that is aligned with its hostname. To let CSI recognize the second NIC (172.24.1.x), we have to explicitly set the IP range in “allowedNetworks” in the values.yaml file used for the CSI driver installation. For example:

allowedNetworks: [172.24.1.0/24]
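To sanity-check which host address would match a given range, the CIDR test can be reproduced in plain shell. This is a sketch of the matching idea only, not the driver's own code; the helper names and the host addresses in the usage note are hypothetical.

```shell
# Sketch: test whether an IPv4 address falls inside a CIDR range.
# Helper names are hypothetical; this mirrors the idea behind allowedNetworks,
# not the CSI driver's actual implementation.
ip_to_int() {
  # Convert a dotted-quad IPv4 address to a 32-bit integer.
  old_ifs=$IFS
  IFS=.
  set -- $1
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

in_cidr() {
  # in_cidr IP CIDR: succeeds when IP is inside CIDR.
  addr=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  bits=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( addr & mask )) -eq $(( net & mask )) ]
}

# Usage: print only the host addresses that fall in the allowed range.
#   for a in 192.168.1.15 172.24.1.15; do
#     in_cidr "$a" 172.24.1.0/24 && echo "$a"
#   done
```

With the two example NICs from this post, only the 172.24.1.x address passes the check, which is the address the driver would register as the NFS client.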

Also, in this network configuration, it’s unlikely that the Kubernetes internal DNS can resolve the PowerScale FQDN. So, we also have to make sure the “dnsPolicy” has been set to “ClusterFirstWithHostNet” in the values.yaml file. With this dnsPolicy, the CSI pods will reach the DNS server in /etc/resolv.conf in the host OS, not the internal DNS server of Kubernetes.

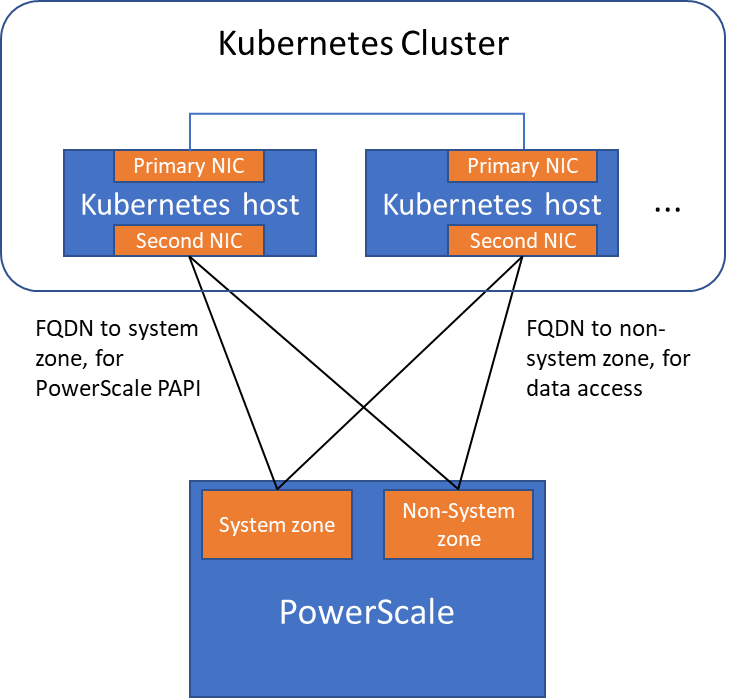

The following diagram shows the configuration mentioned above:

Please note that the “allowedNetworks” setting only affects the data access zone, and not the PAPI access zone. In fact, CSI just uses this parameter to decide which host IP should be set as the NFS client IP on the PowerScale side.

Regarding the network routing, CSI simply follows the OS route configuration. Because of that, if we want the PAPI access zone to go through the primary NIC (192.168.1.x), and have the data access zone to go through the second NIC (172.24.1.x), we have to change the route configuration of the Kubernetes host, not this parameter.
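Before changing routes, it helps to see which interface the host currently picks for each PowerScale address. The sketch below parses the output of `ip route get` from iproute2; the helper name and the destination addresses in the usage note are hypothetical.

```shell
# Sketch: report which interface the host routing table selects for a destination.
# Requires the iproute2 `ip` tool; addresses in the usage note are hypothetical.
route_device() {
  ip route get "$1" |
    awk '{ for (i = 1; i < NF; i++) if ($i == "dev") { print $(i + 1); exit } }'
}

# Usage:
#   route_device 192.168.1.50   # an address in the PAPI access zone
#   route_device 172.24.1.50    # an address in the data access zone
```

If both calls report the same interface, the host routes need adjusting before PAPI and data traffic will use separate NICs.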

Hopefully this blog helps you understand the network configuration for PowerScale CSI better. Stay tuned for more information on Dell Containers & Storage!

Authors: Sean Zhan, Florian Coulombel

Network Design for PowerScale CSI

Mon, 29 Apr 2024 18:53:12 -0000

|Read Time: 0 minutes

Network connectivity is an essential part of any infrastructure architecture. When it comes to how Kubernetes connects to PowerScale, there are several options to configure the Container Storage Interface (CSI). In this post, we will cover the concepts and configuration you can implement.

The story starts with CSI plugin architecture.

CSI plugins

Like all other Dell storage CSI, PowerScale CSI follows the Kubernetes CSI standard by implementing functions in two components.

- CSI controller plugin

- CSI node plugin

The CSI controller plugin is deployed as a Kubernetes Deployment, typically with two or three replicas for high-availability, with only one instance acting as a leader. The controller is responsible for communicating with PowerScale, using Platform API to manage volumes (to PowerScale it’s to create/delete directories, NFS exports, and quotas), to update the NFS client list when a Pod moves, and so on.

A CSI node plugin is a Kubernetes DaemonSet, running on all nodes by default. It’s responsible for mounting the NFS export from PowerScale, to map the NFS mount path to a Pod as persistent storage, so that applications and users in the Pod can access the data on PowerScale.

Roles, privileges, and access zone

Because CSI needs to access both PAPI (PowerScale Platform API) and NFS data, a single user role typically isn’t secure enough: the role for PAPI access will need more privileges than normal users.

According to the PowerScale CSI manual, CSI requires a user that has the following privileges to perform all CSI functions:

Privilege | Type |

ISI_PRIV_LOGIN_PAPI | Read Only |

ISI_PRIV_NFS | Read Write |

ISI_PRIV_QUOTA | Read Write |

ISI_PRIV_SNAPSHOT | Read Write |

ISI_PRIV_IFS_RESTORE | Read Only |

ISI_PRIV_NS_IFS_ACCESS | Read Only |

ISI_PRIV_IFS_BACKUP | Read Only |

Among these privileges, ISI_PRIV_SNAPSHOT and ISI_PRIV_QUOTA are only available in the System zone. And this complicates things a bit. To fully utilize these CSI features, such as volume snapshot, volume clone, and volume capacity management, you have to allow the CSI to be able to access the PowerScale System zone. If you enable the CSM for replication, the user needs the ISI_PRIV_SYNCIQ privilege, which is a System-zone privilege too.

By contrast, there isn’t any specific role requirement for applications/users in Kubernetes to access data: the data is shared by the normal NFS protocol. As long as they have the right ACL to access the files, they are good. For this data accessing requirement, a non-system zone is suitable and recommended.

These two access zones are defined in different places in CSI configuration files:

- The PAPI access zone name (FQDN) needs to be set in the secret yaml file as “endpoint”, for example “f200.isilon.com”.

- The data access zone name (FQDN) needs to be set in the storageclass yaml file as “AzServiceIP”, for example “openshift-data.isilon.com”.

If an admin really cannot expose their System zone to the Kubernetes cluster, they have to disable the snapshot and quota features in the CSI installation configuration file (values.yaml). In this way, the PAPI access zone can be a non-System access zone.

The following diagram shows how the Kubernetes cluster connects to PowerScale access zones.

Network

Normally a Kubernetes cluster comes with many networks: a pod inter-communication network, a cluster service network, and so on. Luckily, the PowerScale network doesn’t have to join any of them. The CSI pods can access a host’s network directly, without going through the Kubernetes internal network. This also has the advantage of providing a dedicated high-performance network for data transfer.

For example, on a Kubernetes host, there are two NICs: IP 192.168.1.x and 172.24.1.x. NIC 192.168.1.x is used for Kubernetes, and is aligned with its hostname. NIC 172.24.1.x isn’t managed by Kubernetes. In this case, we can use NIC 172.24.1.x for data transfer between Kubernetes hosts and PowerScale.

Because by default, the CSI driver will use the IP that is aligned with its hostname, to let CSI recognize the second NIC 172.24.1.x, we have explicitly set the IP range in “allowedNetworks” in the values.yaml file in the CSI driver installation. For example:

allowedNetworks: [172.24.1.0/24]

Also, in this network configuration, it’s unlikely that the Kubernetes internal DNS can resolve the PowerScale FQDN. So, we also have to make sure the “dnsPolicy” has been set to “ClusterFirstWithHostNet” in the values.yaml file. With this dnsPolicy, the CSI pods will reach the DNS server in /etc/resolv.conf in the host OS, not the internal DNS server of Kubernetes.

The following diagram shows the configuration mentioned above:

Please note that the “allowedNetworks” setting only affects the data access zone, and not the PAPI access zone. In fact, CSI just uses this parameter to decide which host IP should be set as the NFS client IP on the PowerScale side.

Regarding the network routing, CSI simply follows the OS route configuration. Because of that, if we want the PAPI access zone traffic to go through the primary NIC (192.168.1.x) and the data access zone traffic to go through the second NIC (172.24.1.x), we have to change the route configuration of the Kubernetes host, not this parameter.

Hopefully this blog helps you understand the network configuration for PowerScale CSI better. Stay tuned for more information on Dell Containers & Storage!

Authors: Sean Zhan, Florian Coulombel

Announcing CSM Release 1.6!

Mon, 29 Apr 2024 18:40:02 -0000

|Read Time: 0 minutes

Introduction

The first release of 2023 for Kubernetes CSI Driver & Dell Container Storage Modules (CSM) is here!

The official changelog is available in the CHANGELOG directory of the CSM repository.

CSI Features

Supported Kubernetes distributions

The newly supported Kubernetes distributions are:

- Kubernetes 1.26

- MKE 3.6

- RKE 1.4

Note: OpenShift 4.12 is not officially qualified yet. These modules have been tested against Kubernetes 1.25, on which OpenShift 4.12 is based, but you must install them using the Helm package manager, not the CSI or CSM Operators.

Installation Wizard

One of the major new features for CSI in CSM 1.6 is the Installation Wizard.

If you are a faithful reader of this blog series, you already know that Dell's CSI and CSM moved to pure Helm Charts and are distributed in our helm chart repository. This paved the way for the wizard installer!

Straight from the documentation portal, you can launch the wizard to configure and install the CSI and CSM modules for PowerStore and PowerMax. All the dependencies between CSI and CSM are managed.

The wizard doesn't aim to cover all use cases but gives an excellent default values.yaml, which can always be tuned later.

It has never been easier to install CSI and CSM!

cert-csi open-source

cert-csi is Dell's test framework to validate and qualify drivers against the Kubernetes distributions.

If all tests from cert-csi pass, we call a platform (Linux OS + Kubernetes distribution) certified and officially supported by the Dell Engineering and Support structure.

With cert-csi open-sourced, the community can validate a platform, even if it’s not in the support matrix yet.

For more details about how to use the cert-csi utility, see the documentation portal.

Other features

The dellctl images CLI prints all the container images needed by Dell CSI drivers.

PowerMax Metro volumes are now fully compliant with the CSI specification for volume expansion, clone, and snapshot.

CSM Features

CSM Operator adds PowerStore support

The CSM Operator is the future of the Operator framework for Dell CSI drivers and Dell Container Storage Modules, and it now integrates the modules for PowerStore.

CSM Resiliency PowerStore support

Kubernetes is notably conservative with StatefulSets on node failures: it won't reschedule them automatically and requires an administrator to force the deletion of the pods.

CSM resiliency solves that problem (and more) by querying the backend storage and getting the volumes' status to allow rescheduling in a few seconds after a node is NotReady for Kubernetes.

PowerStore is now part of the supported storage backends!

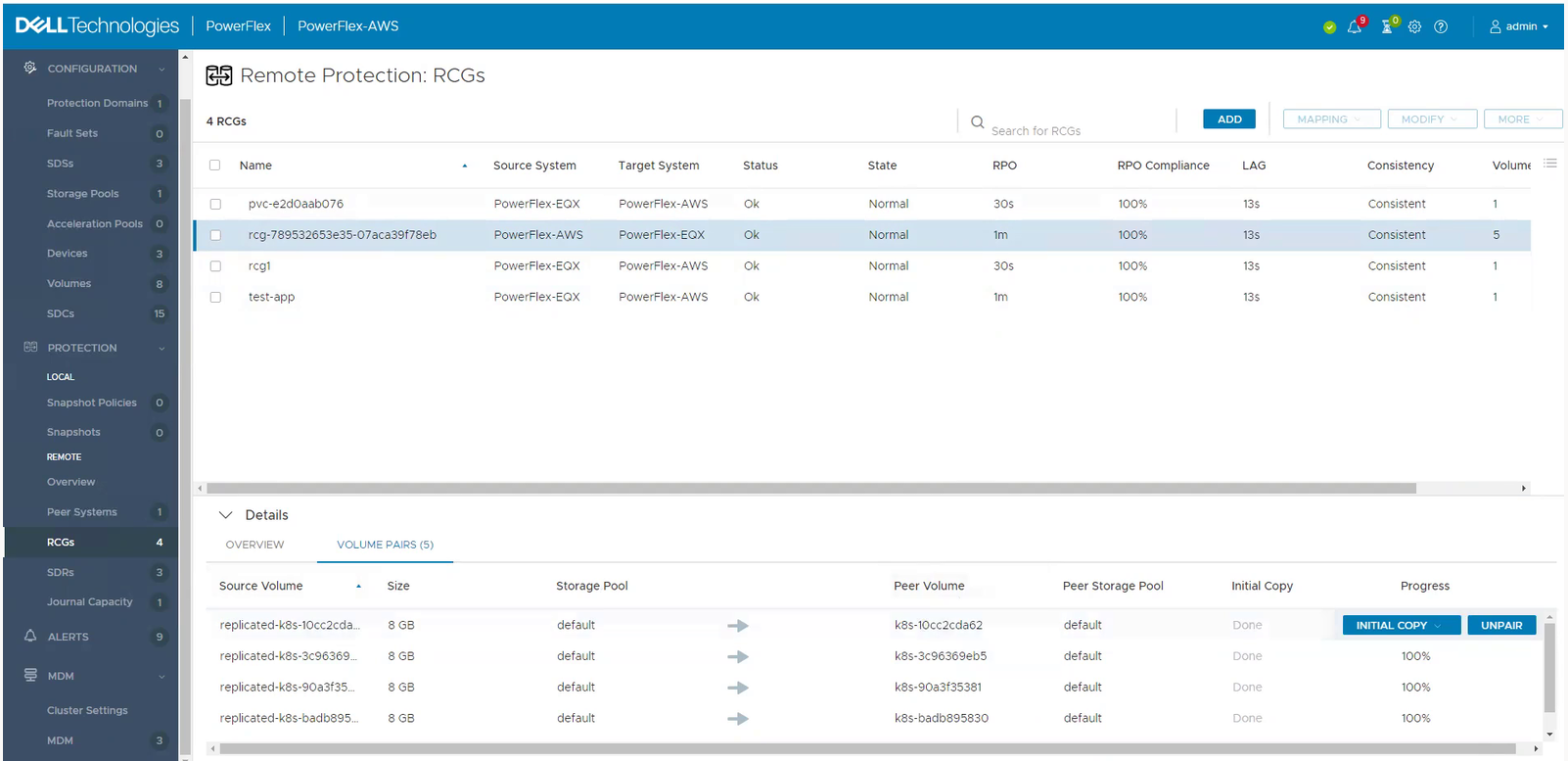

CSM Replication PowerFlex support

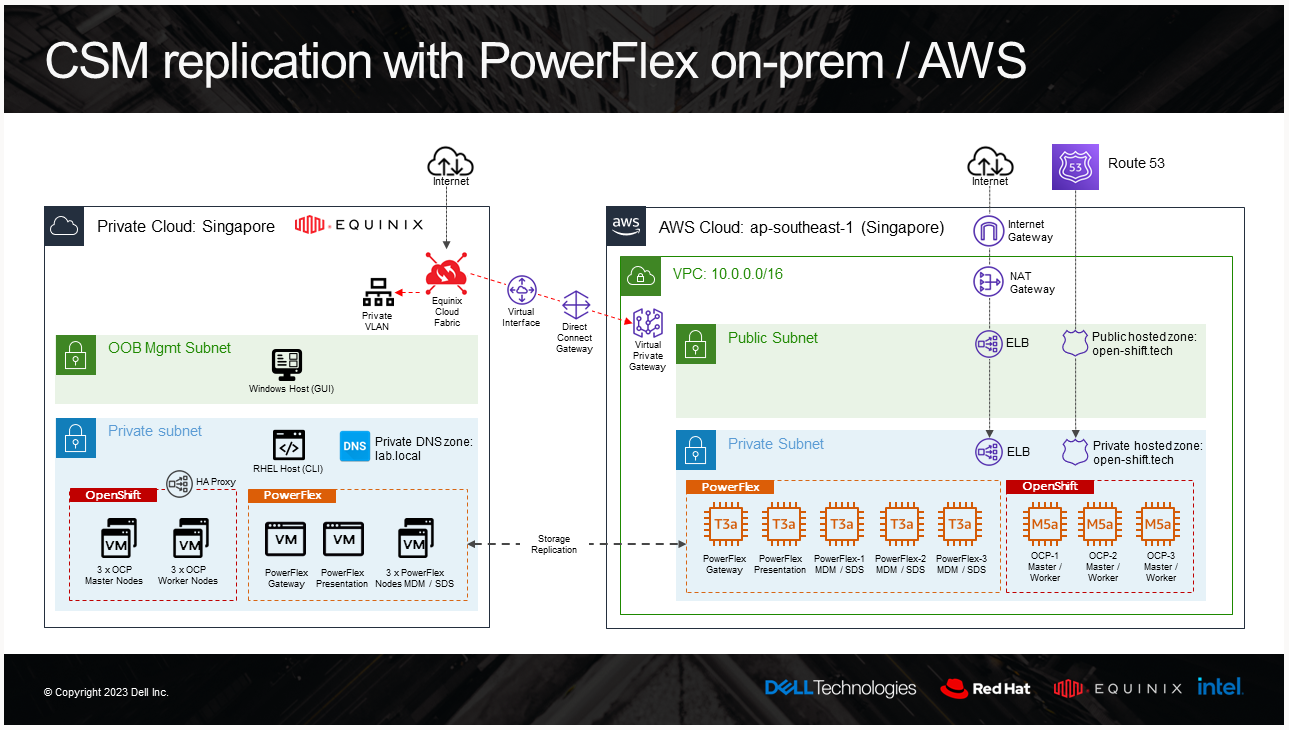

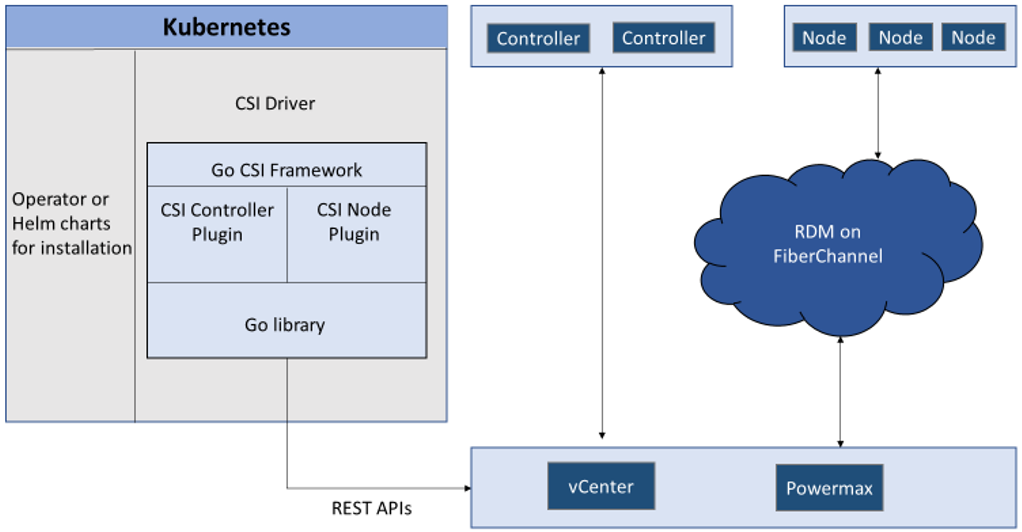

CSM replication supports PowerFlex and it is now possible to combine it with an offering of PowerFlex in AWS. For these types of designs, it is recommended to have low latency between the source and the target. For example, here is the architecture of our lab:

And the result of a replicated volume in PowerFlex UI in AWS looks like this:

To learn more about PowerFlex in AWS, see the video Dell Project Alpine Gets Real with PowerFlex on AWS and the blog Dell PowerFlex is now available on AWS.

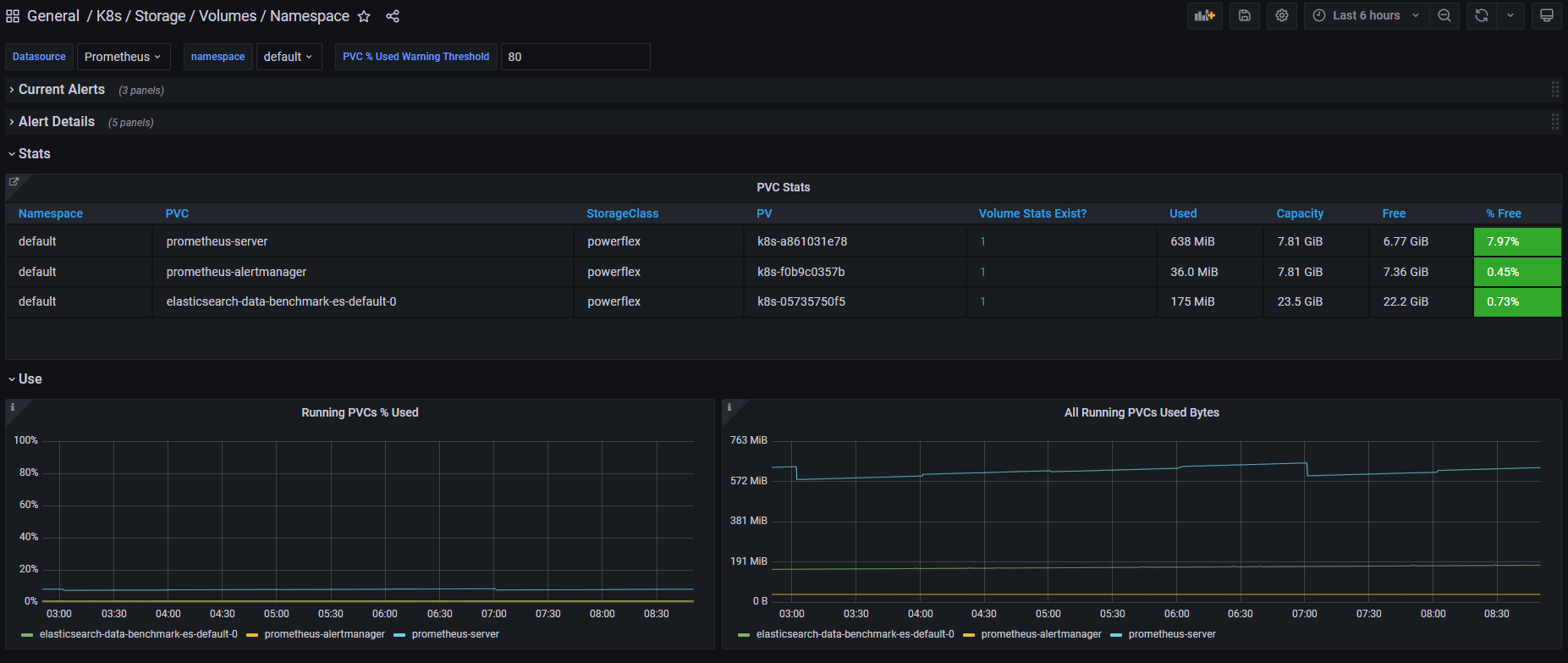

CSM Observability PowerMax support

CSM Observability can collect PowerMax metrics, including the performance of the storage groups that back the PVC, the capacity of the storage resource pool, and more.

Useful links

Stay informed of the latest updates of the Dell CSM ecosystem by subscribing to:

- The Dell CSM GitHub repository

- Our DevOps & Automation YouTube playlist

- The Slack Workspaces at Dell Technologies

- Demo of CSM replication with PowerFlex

Author: Florian Coulombel

CSI drivers 2.0 and Dell EMC Container Storage Modules GA!

Mon, 29 Apr 2024 18:38:49 -0000

|Read Time: 0 minutes

The quarterly update for Dell CSI Driver is here! But today marks a significant milestone because we are also announcing the availability of Dell EMC Container Storage Modules (CSM). Here’s what we’re covering in this blog:

Container Storage Modules

Dell Container Storage Modules is a set of modules that aims to extend Kubernetes storage features beyond what is available in the CSI specification.

The CSM modules will expose storage enterprise features directly within Kubernetes, so developers are empowered to leverage them for their deployment in a seamless way.

Most of these modules are released as sidecar containers that work with the CSI driver for the Dell storage array technology you use.

CSM modules are open-source and freely available from https://github.com/dell/csm.

Volume Group Snapshot

Many stateful apps can run on top of multiple volumes. For example, we can have a transactional DB like Postgres with a volume for its data and another for the redo log, or Cassandra that is distributed across nodes, each having a volume, and so on.

When you want to take a recoverable snapshot of such an application, it is vital to snapshot all of its volumes consistently, at the exact same time.

Dell CSI Volume Group Snapshotter solves that problem for you. With the help of a CustomResourceDefinition, an additional sidecar to the Dell CSI drivers, and leveraging vanilla Kubernetes snapshots, you can manage the life cycle of crash-consistent snapshots. This means you can create a group of volumes for which the drivers create snapshots, restore them, or move them with one shot simultaneously!

To take a crash-consistent snapshot, you can either use labels on your PersistentVolumeClaim, or be explicit and pass the list of PVCs that you want to snap. For example:

apiVersion: volumegroup.storage.dell.com/v1alpha2
kind: DellCsiVolumeGroupSnapshot
metadata:
  # Name must be 13 characters or less in length
  name: "vg-snaprun1"
spec:
  driverName: "csi-vxflexos.dellemc.com"
  memberReclaimPolicy: "Retain"
  volumesnapshotclass: "poweflex-snapclass"
  pvcLabel: "vgs-snap-label"
  # pvcList:
  #   - "pvcName1"
  #   - "pvcName2"

For the first release, CSI for PowerFlex supports Volume Group Snapshot.

Observability

The CSM Observability module is delivered as an OpenTelemetry agent that collects array-level metrics and exposes them so that they can be scraped and stored in a Prometheus database.

The integration is as easy as creating a ServiceMonitor for Prometheus. For example:

apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: otel-collector
  namespace: powerstore
spec:
  endpoints:
  - path: /metrics
    port: exporter-https
    scheme: https
    tlsConfig:
      insecureSkipVerify: true
  selector:
    matchLabels:
      app.kubernetes.io/instance: karavi-observability
      app.kubernetes.io/name: otel-collector

With the observability module, you will gain visibility on the capacity of the volume you manage with Dell CSI drivers and their performance, in terms of bandwidth, IOPS, and response time.

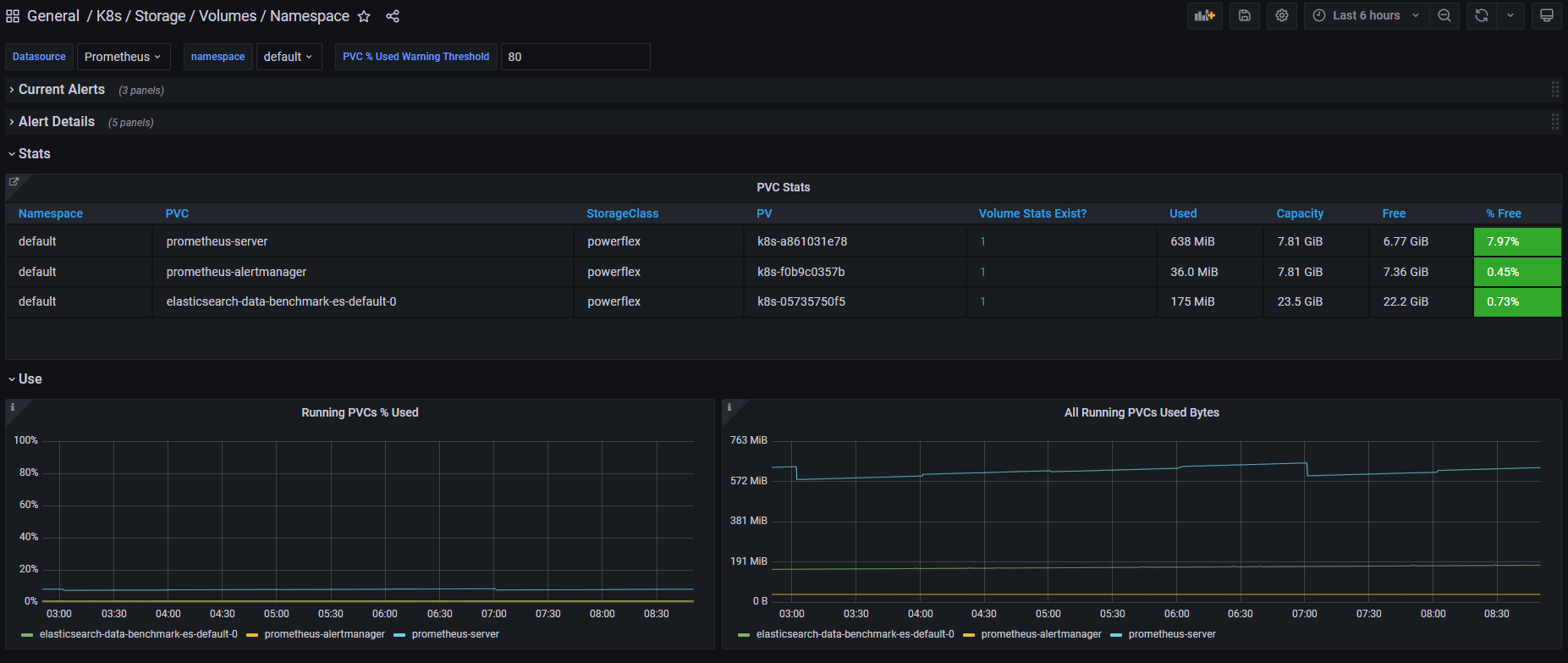

Thanks to pre-canned Grafana dashboards, you will be able to go through the history of these metrics and follow the topology from a Kubernetes PersistentVolume (PV) down to the corresponding LUN or file share in the backend array.

The Kubernetes admin can also collect array-level metrics to check the overall capacity and performance directly from the familiar Prometheus/Grafana tools.

For the first release, Dell EMC PowerFlex and Dell EMC PowerStore support CSM Observability.

Replication

Each Dell storage array supports replication capabilities. It can be asynchronous with an associated recovery point objective, synchronous replication between sites, or even active-active.

Each replication type serves a different purpose related to the use-case or the constraint you have on your data centers.

The Dell CSM replication module allows creating a persistent volume that can be of any of three replication types -- synchronous, asynchronous, and metro -- assuming the underlying storage box supports it.

The Kubernetes architecture can build on a stretched cluster between two sites or on two or more independent clusters. The module itself is composed of three main components:

- The Replication controller whose role is to manage the CustomResourceDefinition that abstracts the concept of Replication across the Kubernetes cluster

- The Replication sidecar for the CSI driver that will convert the Replication controller request to an actual call on the array side

- The repctl utility, to simplify managing replication objects across multiple Kubernetes clusters

The usual workflow is to create a PVC that is replicated with a classic Kubernetes directive by just picking the right StorageClass. You can then use repctl or edit the DellCSIReplicationGroup CRD to launch operations like Failover, Failback, Reprotect, Suspend, Synchronize, and so on.
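To illustrate the first half of that workflow, here is a minimal sketch of a PVC that simply picks a replication-enabled StorageClass. The StorageClass name, namespace, and size are hypothetical; the replication settings themselves are assumed to live in the StorageClass prepared by the administrator.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: replicated-data              # hypothetical name
  namespace: demo                    # hypothetical namespace
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi
  # Hypothetical StorageClass; replication is assumed to be enabled in it.
  storageClassName: powerstore-replication
```

Nothing in the claim itself mentions replication, which is the point: the application manifest stays a plain Kubernetes object.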

For the first release, Dell EMC PowerMax and Dell EMC PowerStore support CSM Replication.

Authorization

With CSM Authorization we are giving back more control of storage consumption to the storage administrator.

The authorization module is an independent service, installed and owned by the storage admin.

Within that module, the storage administrator will create access control policies and storage quotas to make sure that Kubernetes consumers are not overconsuming storage or trying to access data that doesn’t belong to them.

CSM Authorization makes multi-tenant architecture real by enforcing Role-Based Access Control on storage objects coming from multiple and independent Kubernetes clusters.

The authorization module acts as a proxy between the CSI driver and the backend array. Access is granted with an access token that can be revoked at any point in time. Quotas can be changed on the fly to limit or increase storage consumption from the different tenants.

For the first release, Dell EMC PowerMax and Dell EMC PowerFlex support CSM Authorization.

Resiliency

When dealing with stateful applications, vanilla Kubernetes is pretty conservative if a node goes down.

Indeed, from the Kubernetes control plane, the failing node is seen as not ready. It can be because the node is down, or because of network partitioning between the control plane and the node, or simply because the kubelet is down. In the latter two scenarios, the stateful application is still running and possibly writing data on disk. Therefore, Kubernetes won’t take action and lets the admin manually trigger a Pod deletion if desired.

The CSM Resiliency module (sometimes named PodMon) aims to improve that behavior with the help of collected metrics from the array.

Because the driver has access to the storage backend from pretty much all other nodes, we can see the volume status (mapped or not) and its activity (are there IOPS or not). So when a node goes into NotReady state, and we see no IOPS on the volume, Resiliency will relocate the Pod to a new node and clean whatever leftover objects might exist.

The entire process happens in seconds between the moment a node is seen down and the rescheduling of the Pod.

To protect an app with the resiliency module, you only have to add the label podmon.dellemc.com/driver to it, and it is then protected.
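As a minimal sketch, the label can be set on the Pod template of a StatefulSet. The label value (csi-vxflexos, assumed here to identify the PowerFlex driver), the application, and the StorageClass name are assumptions for illustration; the module documentation gives the value expected for each driver.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: demo-db                      # hypothetical application
spec:
  serviceName: demo-db
  replicas: 1
  selector:
    matchLabels:
      app: demo-db
  template:
    metadata:
      labels:
        app: demo-db
        # Label watched by CSM Resiliency; the value is an assumption.
        podmon.dellemc.com/driver: csi-vxflexos
    spec:
      containers:
        - name: db
          image: postgres:14
          volumeMounts:
            - name: data
              mountPath: /var/lib/postgresql/data
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: ["ReadWriteOnce"]
        storageClassName: vxflexos   # hypothetical StorageClass
        resources:
          requests:
            storage: 8Gi
```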

For more details on the module’s design, you can check the documentation here.

For the first release, Dell EMC PowerFlex and Dell EMC Unity support CSM Resiliency.

Installer

Each module above is released either as an independent Helm chart or as an option within the CSI Drivers.

For more complex deployments, which may involve multiple Kubernetes clusters or a mix of modules, it is possible to use the CSM Installer.

The CSM Installer, built on top of Carvel, gives users a single command line to create their CSM-CSI applications and to manage them from outside the Kubernetes cluster.

For the first release, all drivers and modules support the CSM Installer.

New CSI features

Across portfolio

For each driver, this release provides:

- Support of OpenShift 4.8

- Support of Kubernetes 1.22

- Support of Rancher Kubernetes Engine 2

- Normalized configurations between drivers

- Dynamic Logging Configuration

- New CSM installer

VMware Tanzu Kubernetes Grid

VMware Tanzu offers storage management by means of its CNS-CSI driver, but it doesn’t support ReadWriteMany access mode.

If your workload needs concurrent access to the filesystem, you can now rely on CSI Driver for PowerStore, PowerScale and Unity through the NFS protocol. The three platforms are officially supported and qualified on Tanzu.
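For illustration, a claim for shared access only needs the ReadWriteMany access mode and an NFS-backed StorageClass served by one of these drivers. The names below are hypothetical.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: shared-content               # hypothetical name
spec:
  accessModes:
    - ReadWriteMany                  # several Pods on different nodes can mount it
  resources:
    requests:
      storage: 50Gi
  storageClassName: powerscale-nfs   # hypothetical NFS-backed StorageClass
```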

NFS behind NAT

The NFS drivers for PowerStore, PowerScale, and Unity have all been tested and work when the Kubernetes cluster is behind a private network.

PowerScale

By default, the CSI driver creates volumes with 777 POSIX permission on the directory.

Now with the isiVolumePathPermissions parameter, you can use ACLs or any more restrictive POSIX permissions.

The isiVolumePathPermissions can be configured as part of the ConfigMap with the PowerScale settings or at the StorageClass level. The accepted parameter values are: private_read, private, public_read, public_read_write, and public for the ACL or any combination of [POSIX Mode].
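A StorageClass-level setting could be sketched as follows. The parameter key is written exactly as it appears in the text above; its capitalization in a real StorageClass, as well as the StorageClass name and the chosen mode, are assumptions to verify against the samples of your driver version.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: powerscale-restricted        # hypothetical name
provisioner: csi-isilon.dellemc.com
parameters:
  # Assumption: key spelled as in the text; check the driver samples.
  # Either a POSIX mode such as "0750" or an ACL keyword such as "private_read".
  isiVolumePathPermissions: "0750"
```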

Useful links

For more details you can:

- Watch CSM demos on our VP YouTube channel: https://www.youtube.com/user/itzikreich/

- Ask for help from the Dell container community website

- Subscribe to GitHub notifications and be informed of the latest releases on: https://github.com/dell/csm

- Chat with us on Slack

Author: Florian Coulombel

Looking Ahead: Dell Container Storage Modules 1.2

Mon, 29 Apr 2024 18:36:49 -0000

|Read Time: 0 minutes

The quarterly update for Dell CSI Drivers & Dell Container Storage Modules (CSM) is here! Here’s what we’re planning.

CSM Features

New CSM Operator!

Dell Container Storage Modules (CSM) add data services and features that are not in the scope of the CSI specification today. The new CSM Operator simplifies the deployment of CSMs. With an ever-growing ecosystem and added features, deploying a driver and its affiliated modules needs to be carefully studied before beginning the deployment.

The new CSM Operator:

- Serves as a one-stop-shop for deploying all Dell CSI drivers and Container Storage Modules

- Simplifies the install and upgrade operations

- Leverages the Operator framework to give a clear status of the deployment of the resources

- Is certified by Red Hat OpenShift

In the short/middle term, the CSM Operator will deprecate the experimental CSM Installer.

Replication support with PowerScale

For disaster recovery protection, PowerScale implements data replication between appliances by means of the SyncIQ feature. SyncIQ replicates the data between two sites, where one is read-write while the other is read-only, similar to Dell storage backends with async or sync replication.

The role of the CSM replication module and underlying CSI driver is to provision the volume within Kubernetes clusters and prepare the export configurations, quotas, and so on.

CSM Replication for PowerScale has been designed and implemented in such a way that it won’t collide with your existing Superna Eyeglass DR utility.

A live-action demo will be posted in the coming weeks on our VP YouTube channel: https://www.youtube.com/user/itzikreich/.

CSI features

Across the portfolio

In this release, each CSI driver:

- Supports OpenShift 4.9

- Supports Kubernetes 1.23

- Supports the CSI Spec 1.5

- Updates to the latest UBI-minimal image

- Supports fsGroupPolicy

fsGroupPolicy support

Kubernetes v1.19 introduced the fsGroupPolicy to give more control to the CSI driver over the permission sets in the securityContext.

There are three possible options:

- None -- which means that the fsGroup directive from the securityContext will be ignored

- File -- which means that the fsGroup directive will be applied on the volume. This is the default setting for NAS systems such as PowerScale or Unity-File.

- ReadWriteOnceWithFSType -- which means that the fsGroup directive will be applied on the volume if it has fsType defined and is ReadWriteOnce. This is the default setting for block systems such as PowerMax and PowerStore-Block.

In all cases, Dell CSI drivers let kubelet perform the change ownership operations and do not do it at the driver level.
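The sketch below shows where the two pieces meet: the fsGroupPolicy field of the CSIDriver object and the fsGroup directive of a Pod. The CSIDriver object is normally created by the driver installation and is shown here only for illustration; the Pod, PVC name, and group ID are hypothetical.

```yaml
apiVersion: storage.k8s.io/v1
kind: CSIDriver
metadata:
  name: csi-isilon.dellemc.com
spec:
  # File: kubelet applies the Pod's fsGroup to the volume.
  fsGroupPolicy: File
---
apiVersion: v1
kind: Pod
metadata:
  name: fsgroup-demo                 # hypothetical Pod
spec:
  securityContext:
    fsGroup: 2000                    # group ownership kubelet applies to the volume
  containers:
    - name: app
      image: busybox:1.36
      command: ["sh", "-c", "ls -ld /data && sleep 3600"]
      volumeMounts:
        - name: data
          mountPath: /data
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: demo-pvc          # hypothetical PVC
```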

Standalone Helm install

Drivers for PowerFlex and Unity can now be installed with the help of the install scripts we provide under the dell-csi-installer directory.

A standalone Helm chart makes it easy to integrate the driver installation with Continuous Deployment agents such as Flux or Argo CD.
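As an example of such an integration, an Argo CD Application can point at a Helm chart and carry the values inline. This is only a sketch: the repository URL, chart name, chart version, and namespaces are placeholders, not the actual coordinates of the Dell charts.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: csi-driver                   # hypothetical application name
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://example.com/helm-charts   # placeholder chart repository
    chart: csi-driver-chart                    # placeholder chart name
    targetRevision: 1.0.0                      # placeholder chart version
    helm:
      values: |
        # driver-specific values go here
  destination:
    server: https://kubernetes.default.svc
    namespace: csi-driver            # hypothetical target namespace
  syncPolicy:
    syncOptions:
      - CreateNamespace=true
```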

Note: To ensure that you install the driver on a supported Kubernetes version, the Helm charts take advantage of the kubeVersion field. Some Kubernetes distributions use labels in kubectl version (such as v1.21.3-mirantis-1 and v1.20.7-eks-1-20-7) that require manual editing.
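One way to accommodate such labels, sketched here as an assumption about the manual edit the note refers to, is to add a -0 pre-release suffix to the bounds of the kubeVersion constraint. Helm's semantic version matching skips versions that carry a pre-release label, such as v1.21.3-mirantis-1, unless the constraint itself names a pre-release. The chart name and version bounds below are hypothetical.

```yaml
# Chart.yaml (excerpt), illustrative only
apiVersion: v2
name: csi-example                    # hypothetical chart name
version: 0.1.0
# The "-0" suffix lets versions with a label after the patch number match.
kubeVersion: ">= 1.21.0-0 < 1.24.0-0"
```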

Volume Health Monitoring support

Drivers for PowerFlex and Unity implement Volume Health Monitoring.

This feature is currently in alpha in Kubernetes (in Q1-2022), and is disabled with a default installation.

Once enabled, the drivers will expose the standard storage metrics, such as capacity usage and inode usage, through the Kubernetes /metrics endpoint. The metrics will flow natively into popular dashboards like the ones built into OpenShift Monitoring:

Pave the way for full open source!

All Dell drivers and dependencies like gopowerstore, gobrick, and more are now on GitHub and will be fully open-sourced. The umbrella project is and remains https://github.com/dell/csm, from which you can open tickets and see the roadmap.

Google Anthos 1.9

The Dell partnership with Google continues, and the latest CSI drivers for PowerScale and PowerStore support Anthos v1.9.

NFSv4 POSIX and ACL support

Both CSI PowerScale and PowerStore now allow setting the default permissions for the newly created volume. To do this, you can use POSIX octal notation or ACL.

- In PowerScale, you can use plain ACL or built-in values such as private_read, private, public_read, public_read_write, public or custom ones;

- In PowerStore, you can use the custom ones such as A::OWNER@:RWX, A::GROUP@:RWX, and A::OWNER@:rxtncy.

Useful links

For more details you can:

- Watch these great CSM demos on our VP YouTube channel: https://www.youtube.com/user/itzikreich/

- Subscribe to GitHub notifications and be informed of the latest releases on: https://github.com/dell/csm

- Ask for help or chat with us on Slack

Author: Florian Coulombel

How to Build a Custom Dell CSI Driver

Mon, 29 Apr 2024 18:15:25 -0000

|Read Time: 0 minutes

With all the Dell Container Storage Interface (CSI) drivers and dependencies being open-source, anyone can tweak them to fit a specific use case.

This blog shows how to create a patched version of a Dell CSI Driver for PowerScale.

The premise

As a practical example, the following steps show how to create a patched version of Dell CSI Driver for PowerScale that supports a longer mounted path.

The CSI Specification defines that a driver must accept a maximum path length of at least 128 bytes:

// SP SHOULD support the maximum path length allowed by the operating

// system/filesystem, but, at a minimum, SP MUST accept a max path

// length of at least 128 bytes.

Dell drivers use the gocsi library as a common boilerplate for CSI development. That library enforces the 128 bytes maximum path length.

The PowerScale hardware supports path lengths up to 1023 characters, as described in the File system guidelines chapter of the PowerScale spec. We’ll therefore build a csi-powerscale driver that supports that maximum length path value.

Steps to patch a driver

Dependencies

The Dell CSI drivers are all built with golang and, obviously, run as a container. As a result, the prerequisites are relatively simple. You need:

- Golang (v1.16 minimum at the time this post was published)

- Podman or Docker

- And optionally make to run our Makefile

Clone, branch, and patch

The first thing to do is to clone the official csi-powerscale repository in your GOPATH source directory.

cd $GOPATH/src/github.com/
git clone git@github.com:dell/csi-powerscale.git dell/csi-powerscale
cd dell/csi-powerscale

You can then pick the version of the driver you want to patch; git tag gives the list of versions.

In this example, we pick the v2.1.0 with git checkout v2.1.0 -b v2.1.0-longer-path.

The next step is to obtain the library we want to patch.

gocsi and every other open-source component maintained for Dell CSI are available on https://github.com/dell.

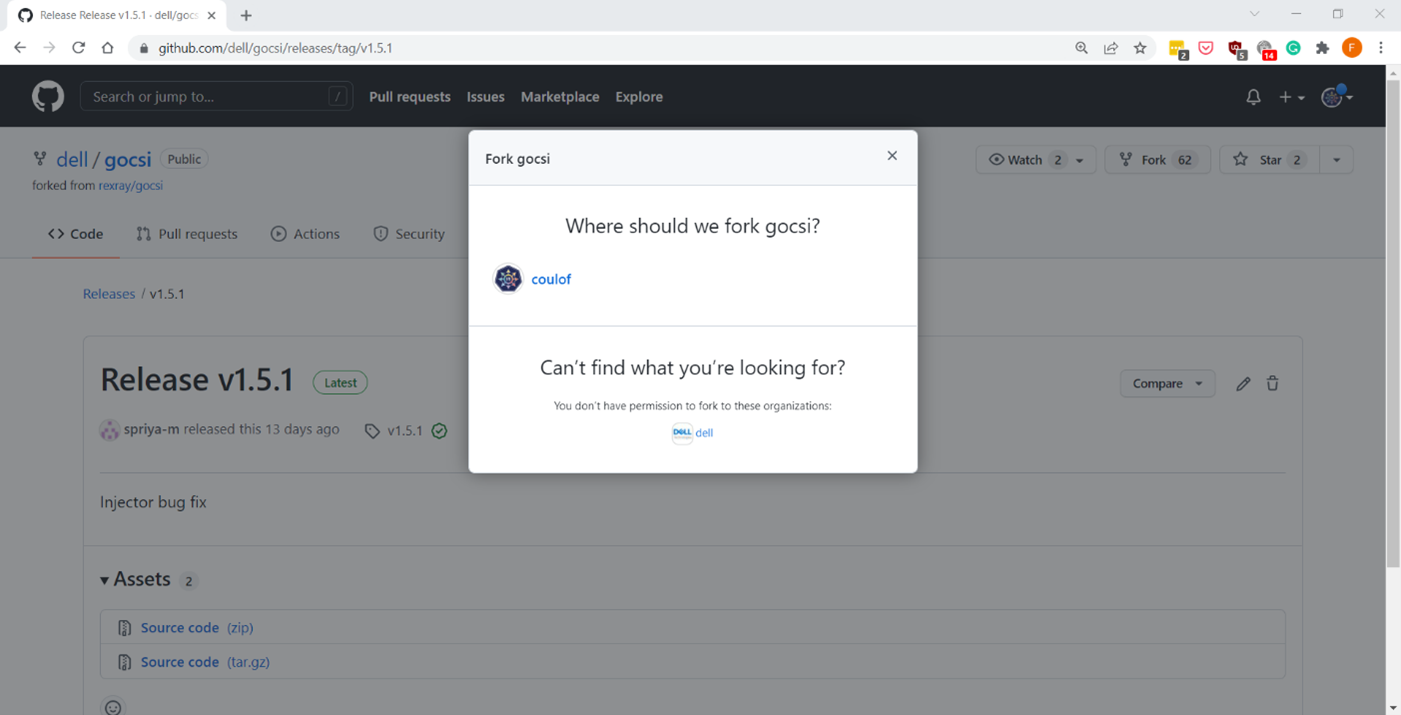

The following figure shows how to fork the repository on your private github:

Now we can get the library with:

cd $GOPATH/src/github.com/
git clone git@github.com:coulof/gocsi.git coulof/gocsi
cd coulof/gocsi

To simplify the maintenance and merge of future commits, it is wise to add the original repo as an upstream remote with:

git remote add upstream git@github.com:dell/gocsi.git

The next important step is to pick the correct library version used by our version of the driver.

We can check the csi-powerscale dependency file with: grep gocsi $GOPATH/src/github.com/dell/csi-powerscale/go.mod and create a branch of that version. In this case, the version is v1.5.0, and we can branch it with: git checkout v1.5.0 -b v1.5.0-longer-path.

Now it’s time to hack our patch! Which is… just a one-liner:

--- a/middleware/specvalidator/spec_validator.go
+++ b/middleware/specvalidator/spec_validator.go
@@ -770,7 +770,7 @@ func validateVolumeCapabilitiesArg(
 }

 const (
-       maxFieldString = 128
+       maxFieldString = 1023
        maxFieldMap    = 4096
        maxFieldNodeId = 256
 )

We can then commit and push our patched library with a nice tag:

git commit -a -m 'increase path limit'
git push --set-upstream origin v1.5.0-longer-path
git tag -a v1.5.0-longer-path
git push --tags

Build

With the patch committed and pushed, it’s time to build the CSI driver binary and its container image.

Let’s go back to the csi-powerscale main repo: cd $GOPATH/src/github.com/dell/csi-powerscale

As mentioned in the introduction, we can take advantage of the replace directive in the go.mod file to point to the patched lib. In this case we add the following:

diff --git a/go.mod b/go.mod
index 5c274b4..c4c8556 100644
--- a/go.mod
+++ b/go.mod
@@ -26,6 +26,7 @@ require (
 )

 replace (
+       github.com/dell/gocsi => github.com/coulof/gocsi v1.5.0-longer-path
        k8s.io/api => k8s.io/api v0.20.2
        k8s.io/apiextensions-apiserver => k8s.io/apiextensions-apiserver v0.20.2
        k8s.io/apimachinery => k8s.io/apimachinery v0.20.2

When that is done, we obtain the new module from the online repo with: go mod download

Note: If you want to test the changes locally only, we can use the replace directive to point to the local directory with:

replace github.com/dell/gocsi => ../../coulof/gocsi

We can then build our new driver binary locally with: make build

After compiling it successfully, we can create the image. The shortest path to do that is to replace the csi-isilon binary from the dellemc/csi-isilon docker image with:

cat << EOF > Dockerfile.patch
FROM dellemc/csi-isilon:v2.1.0
COPY "csi-isilon" .
EOF
docker build -t coulof/csi-isilon:v2.1.0-long-path -f Dockerfile.patch .

Alternatively, you can rebuild an entire docker image using the provided Makefile.

By default, the driver uses a Red Hat Universal Base Image minimal. That base image sometimes misses dependencies, so you can use another flavor, such as:

BASEIMAGE=registry.fedoraproject.org/fedora-minimal:latest REGISTRY=docker.io IMAGENAME=coulof/csi-powerscale IMAGETAG=v2.1.0-long-path make podman-build

The image is ready to be pushed to whatever image registry you prefer. In this case, this is hub.docker.com: docker push coulof/csi-isilon:v2.1.0-long-path.

Update CSI Kubernetes deployment

The last step is to replace the driver image used in your Kubernetes with your custom one.

Again, multiple solutions are possible, and the one to choose depends on how you deployed the driver.

If you used the helm installer, you can add the following block at the top of the myvalues.yaml file:

images:
  driver: docker.io/coulof/csi-powerscale:v2.1.0-long-path

Then update or uninstall/reinstall the driver as described in the documentation.

If you decided to use the Dell CSI Operator, you can simply point to the new image:

apiVersion: storage.dell.com/v1
kind: CSIIsilon
metadata:
  name: isilon
spec:
  driver:
    common:
      image: "docker.io/coulof/csi-powerscale:v2.1.0-long-path"
...

Or, if you want to do a quick and dirty test, you can create a patch file (here named path_csi-isilon_controller_image.yaml) with the following content:

spec:
  template:
    spec:
      containers:
      - name: driver
        image: docker.io/coulof/csi-powerscale:v2.1.0-long-path

You can then apply it to your existing install with: kubectl patch deployment -n powerscale isilon-controller --patch-file path_csi-isilon_controller_image.yaml

In all cases, you can check that everything works by first making sure that the Pod is started:

kubectl get pods -n powerscale

and that the logs are clean:

kubectl logs -n powerscale -l app=isilon-controller -c driver

Wrap-up and disclaimer

As demonstrated, thanks to open source, it’s easy to fix and improve Dell CSI drivers or Dell Container Storage Modules.

Keep in mind that Dell officially supports (through tickets, Service Requests, and so on) the image and binary, but not the custom build.

Thanks for reading and stay tuned for future posts on Dell Storage and Kubernetes!

Author: Florian Coulombel

Looking Ahead: Dell Container Storage Modules 1.2

Mon, 29 Apr 2024 18:11:07 -0000

|Read Time: 0 minutes

The quarterly update for Dell CSI Drivers & Dell Container Storage Modules (CSM) is here! Here’s what we’re planning.

CSM Features

New CSM Operator!

Dell Container Storage Modules (CSM) add data services and features that are not in the scope of the CSI specification today. The new CSM Operator simplifies the deployment of CSMs. With an ever-growing ecosystem and added features, deploying a driver and its affiliated modules need to be carefully studied before beginning the deployment.

The new CSM Operator:

- Serves as a one-stop shop for deploying all Dell CSI drivers and Container Storage Modules

- Simplifies the install and upgrade operations

- Leverages the Operator framework to give a clear status of the deployment of the resources

- Is certified by Red Hat OpenShift

In the short to medium term, the CSM Operator will replace the experimental CSM Installer, which will be deprecated.

Replication support with PowerScale

For disaster recovery protection, PowerScale implements data replication between appliances by means of the SyncIQ feature. SyncIQ replicates the data between two sites, where one is read-write while the other is read-only, similar to Dell storage backends with async or sync replication.

The role of the CSM replication module and underlying CSI driver is to provision the volume within Kubernetes clusters and prepare the export configurations, quotas, and so on.

CSM Replication for PowerScale has been designed and implemented in such a way that it won’t collide with your existing Superna Eyeglass DR utility.

A live-action demo will be posted in the coming weeks on our VP YouTube channel: https://www.youtube.com/user/itzikreich/.

CSI features

Across the portfolio

In this release, each CSI driver:

- Supports OpenShift 4.9

- Supports Kubernetes 1.23

- Supports the CSI Spec 1.5

- Updates to the latest UBI-minimal image

- Supports fsGroupPolicy

fsGroupPolicy support

Kubernetes v1.19 introduced the fsGroupPolicy to give more control to the CSI driver over the permission sets in the securityContext.

There are three possible options:

- None -- which means that the fsGroup directive from the securityContext will be ignored

- File -- which means that the fsGroup directive will be applied on the volume. This is the default setting for NAS systems such as PowerScale or Unity-File.

- ReadWriteOnceWithFSType -- which means that the fsGroup directive will be applied on the volume if it has fsType defined and is ReadWriteOnce. This is the default setting for block systems such as PowerMax and PowerStore-Block.

In all cases, Dell CSI drivers let kubelet perform the change ownership operations and do not do it at the driver level.
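As a rough illustration of how the two sides meet, the sketch below shows a CSIDriver object that declares its fsGroupPolicy and a Pod that requests an fsGroup. The Dell installers normally create the CSIDriver object for you, so it is shown here only to make the field visible. The driver name is an assumption about a typical PowerScale install, and the Pod name, image, group ID, and PVC name are hypothetical.

```yaml
# Illustrative only: the driver installation normally creates this object.
apiVersion: storage.k8s.io/v1
kind: CSIDriver
metadata:
  name: csi-isilon.dellemc.com    # assumed driver name, check your install
spec:
  fsGroupPolicy: File             # one of: None | File | ReadWriteOnceWithFSType
---
apiVersion: v1
kind: Pod
metadata:
  name: fsgroup-demo              # hypothetical
spec:
  securityContext:
    fsGroup: 2000                 # group that kubelet applies when the policy allows it
  containers:
    - name: app
      image: busybox              # placeholder image
      command: ["sleep", "3600"]
      volumeMounts:
        - name: data
          mountPath: /data
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: demo-pvc       # hypothetical PVC backed by a Dell CSI volume
```

With File, kubelet changes the group ownership of the volume to 2000 at mount time. With None, the fsGroup value above would simply be ignored.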

Standalone Helm install

Drivers for PowerFlex and Unity can now be installed with the help of the install scripts we provide under the dell-csi-installer directory.

A standalone Helm chart makes it easier to integrate the driver installation with Continuous Deployment agents such as Flux or Argo CD.

Note: To ensure that you install the driver on a supported Kubernetes version, the Helm charts take advantage of the kubeVersion field. Some Kubernetes distributions use labels in kubectl version (such as v1.21.3-mirantis-1 and v1.20.7-eks-1-20-7) that require manual editing.
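To make the note above more concrete, here is a minimal sketch of a Chart.yaml excerpt. The chart name and version bounds are hypothetical and not copied from a Dell chart. It relies on the usual semver behavior in Helm, where a suffix such as -eks-1-20-7 is read as a pre-release tag and a plain range does not match it.

```yaml
# Chart.yaml (excerpt), illustrative values only
apiVersion: v2
name: csi-example                 # hypothetical chart name
version: 0.1.0
# A plain range such as ">= 1.21.0 < 1.24.0" does not match versions that carry
# a suffix like v1.21.3-mirantis-1, because the suffix is parsed as a
# pre-release tag.
# Appending "-0" to each bound lets suffixed versions fall inside the range.
kubeVersion: ">= 1.21.0-0 < 1.24.0-0"
```

This is the kind of manual edit the note refers to; adjust the bounds to the versions your driver release actually supports.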

Volume Health Monitoring support

Drivers for PowerFlex and Unity implement Volume Health Monitoring.

This feature is currently alpha in Kubernetes (as of Q1 2022) and is disabled in a default installation.

Once enabled, the drivers expose standard storage metrics, such as capacity usage and inode usage, through the Kubernetes /metrics endpoint. The metrics flow natively into popular dashboards such as the ones built into OpenShift Monitoring.

Pave the way for full open source!

All Dell drivers and dependencies like gopowerstore, gobrick, and more are now on GitHub and will be fully open-sourced. The umbrella project is and remains https://github.com/dell/csm, from which you can open tickets and see the roadmap.

Google Anthos 1.9

The Dell partnership with Google continues, and the latest CSI drivers for PowerScale and PowerStore support Anthos v1.9.

NFSv4 POSIX and ACL support

Both CSI PowerScale and PowerStore now allow setting the default permissions for the newly created volume. To do this, you can use POSIX octal notation or ACL.

- In PowerScale, you can use plain ACL or built-in values such as private_read, private, public_read, public_read_write, public or custom ones;

- In PowerStore, you can use the custom ones such as A::OWNER@:RWX, A::GROUP@:RWX, and A::OWNER@:rxtncy.

Useful links

For more details you can:

- Watch these great CSM demos on our VP YouTube channel: https://www.youtube.com/user/itzikreich/

- Subscribe to GitHub notifications and be informed of the latest releases on: https://github.com/dell/csm

- Ask for help or chat with us on Slack

Author: Florian Coulombel

CSI drivers 2.0 and Dell EMC Container Storage Modules GA!

Mon, 29 Apr 2024 17:44:07 -0000

The quarterly update for Dell CSI Drivers is here! But today marks a significant milestone because we are also announcing the availability of Dell EMC Container Storage Modules (CSM). Here’s what we’re covering in this blog:

Container Storage Modules

Dell Container Storage Modules is a set of modules that aims to extend Kubernetes storage features beyond what is available in the CSI specification.

The CSM modules will expose storage enterprise features directly within Kubernetes, so developers are empowered to leverage them for their deployment in a seamless way.

Most of these modules are released as sidecar containers that work with the CSI driver for the Dell storage array technology you use.

CSM modules are open-source and freely available from https://github.com/dell/csm.

Volume Group Snapshot

Many stateful apps can run on top of multiple volumes. For example, we can have a transactional DB like Postgres with a volume for its data and another for the redo log, or Cassandra that is distributed across nodes, each having a volume, and so on.

When you want a recoverable snapshot of such an app, it is vital to snapshot all of its volumes consistently, at the exact same time.

Dell CSI Volume Group Snapshotter solves that problem for you. With the help of a CustomResourceDefinition, an additional sidecar to the Dell CSI drivers, and leveraging vanilla Kubernetes snapshots, you can manage the life cycle of crash-consistent snapshots. This means you can create a group of volumes for which the drivers create snapshots, restore them, or move them with one shot simultaneously!

To take a crash-consistent snapshot, you can either use labels on your PersistentVolumeClaim, or be explicit and pass the list of PVCs that you want to snap. For example:

    apiVersion: volumegroup.storage.dell.com/v1alpha2
    kind: DellCsiVolumeGroupSnapshot
    metadata:
      # Name must be 13 characters or less in length
      name: "vg-snaprun1"
    spec:
      driverName: "csi-vxflexos.dellemc.com"
      memberReclaimPolicy: "Retain"
      volumesnapshotclass: "poweflex-snapclass"
      pvcLabel: "vgs-snap-label"
      # pvcList:
      #   - "pvcName1"
      #   - "pvcName2"
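If you go the label route, each PVC in the group has to carry a label that matches the pvcLabel value. The sketch below assumes the label key is volume-group, which is not stated in this post, so check the module documentation for the exact key. The PVC name, namespace, size, and StorageClass name are hypothetical.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pg-data                   # hypothetical
  namespace: demo                 # hypothetical
  labels:
    volume-group: vgs-snap-label  # assumed label key; value matches pvcLabel
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: vxflexos      # hypothetical PowerFlex StorageClass
  resources:
    requests:
      storage: 8Gi
```

A second PVC, for example one holding the redo log, would carry the same label so that both volumes are snapped together.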

For the first release, CSI for PowerFlex supports Volume Group Snapshot.

Observability

The CSM Observability module is delivered as an OpenTelemetry agent that collects array-level metrics and exposes them so that they can be scraped and stored in a Prometheus database.

The integration is as easy as creating a ServiceMonitor for Prometheus. For example:

    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      name: otel-collector
      namespace: powerstore
    spec:
      endpoints:
      - path: /metrics
        port: exporter-https
        scheme: https
        tlsConfig:
          insecureSkipVerify: true
      selector:
        matchLabels:
          app.kubernetes.io/instance: karavi-observability
          app.kubernetes.io/name: otel-collector

With the observability module, you will gain visibility into the capacity of the volumes you manage with Dell CSI drivers and their performance, in terms of bandwidth, IOPS, and response time.

Thanks to pre-canned Grafana dashboards, you will be able to go through the history of these metrics and see the topology from a Kubernetes PersistentVolume (PV) down to its translation as a LUN or fileshare in the backend array.

The Kubernetes admin can also collect array-level metrics to check the overall capacity and performance directly from the familiar Prometheus/Grafana tools.

For the first release, Dell EMC PowerFlex and Dell EMC PowerStore support CSM Observability.

Replication

Each Dell storage array supports replication capabilities. It can be asynchronous with an associated recovery point objective, synchronous replication between sites, or even active-active.

Each replication type serves a different purpose related to the use-case or the constraint you have on your data centers.

The Dell CSM replication module allows creating a persistent volume that can be of any of three replication types -- synchronous, asynchronous, and metro -- assuming the underlying storage box supports it.

The Kubernetes architecture can build on a stretched cluster between two sites or on two or more independent clusters. The module itself is composed of three main components:

- The Replication controller whose role is to manage the CustomResourceDefinition that abstracts the concept of Replication across the Kubernetes cluster

- The Replication sidecar for the CSI driver that will convert the Replication controller request to an actual call on the array side

- The repctl utility, to simplify managing replication objects across multiple Kubernetes clusters

The usual workflow is to create a PVC that is replicated with a classic Kubernetes directive by just picking the right StorageClass. You can then use repctl or edit the DellCSIReplicationGroup CRD to launch operations like Failover, Failback, Reprotect, Suspend, Synchronize, and so on.
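As a minimal sketch of that workflow, the claim below is an ordinary PVC. The only replication-specific part is the StorageClass it points to, which is assumed to have been created beforehand with replication enabled. All names and the size are hypothetical.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: replicated-data                      # hypothetical
  namespace: demo                            # hypothetical
spec:
  accessModes:
    - ReadWriteOnce
  # Hypothetical StorageClass that the admin configured for replication;
  # nothing else in the claim refers to replication.
  storageClassName: powerstore-replication
  resources:
    requests:
      storage: 10Gi
```

From the developer's point of view nothing else changes; failover and the other operations are then driven through repctl or the DellCSIReplicationGroup object.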

For the first release, Dell EMC PowerMax and Dell EMC PowerStore support CSM Replication.

Authorization

With CSM Authorization we are giving back more control of storage consumption to the storage administrator.

The authorization module is an independent service, installed and owned by the storage admin.

Within that module, the storage administrator will create access control policies and storage quotas to make sure that Kubernetes consumers are not overconsuming storage or trying to access data that doesn’t belong to them.

CSM Authorization makes multi-tenant architecture real by enforcing Role-Based Access Control on storage objects coming from multiple and independent Kubernetes clusters.

The authorization module acts as a proxy between the CSI driver and the backend array. Access is granted with an access token that can be revoked at any point in time. Quotas can be changed on the fly to limit or increase storage consumption from the different tenants.

For the first release, Dell EMC PowerMax and Dell EMC PowerFlex support CSM Authorization.

Resiliency

When dealing with stateful applications, vanilla Kubernetes is pretty conservative if a node goes down.

Indeed, from the point of view of the Kubernetes control plane, the failing node is seen as NotReady. It can be because the node is down, or because of network partitioning between the control plane and the node, or simply because the kubelet is down. In the latter two scenarios, the stateful application is still running and possibly writing data on disk. Therefore, Kubernetes won’t take action and lets the admin manually trigger a Pod deletion if desired.

The CSM Resiliency module (sometimes named PodMon) aims to improve that behavior with the help of collected metrics from the array.

Because the driver has access to the storage backend from pretty much all other nodes, we can see the volume status (mapped or not) and its activity (are there IOPS or not). So when a node goes into the NotReady state, and we see no IOPS on the volume, Resiliency will relocate the Pod to a new node and clean up whatever leftover objects might exist.

The entire process happens in seconds between the moment a node is seen down and the rescheduling of the Pod.

To protect an app with the resiliency module, you only have to add the label podmon.dellemc.com/driver to it.
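As an illustration, the label would typically go on the Pod template of the workload. In the sketch below, the label key comes from the text above, while the label value, the application, and the StorageClass name are assumptions to be adapted to your driver and environment.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: demo-db                               # hypothetical
spec:
  serviceName: demo-db
  replicas: 1
  selector:
    matchLabels:
      app: demo-db
  template:
    metadata:
      labels:
        app: demo-db
        # Key from the text above; the value is an assumption for PowerFlex.
        podmon.dellemc.com/driver: csi-vxflexos
    spec:
      containers:
        - name: db
          image: postgres:14                  # placeholder image
          volumeMounts:
            - name: data
              mountPath: /var/lib/postgresql/data
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: ["ReadWriteOnce"]
        storageClassName: vxflexos            # hypothetical
        resources:
          requests:
            storage: 8Gi
```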

For more details on the module’s design, you can check the documentation here.

For the first release, Dell EMC PowerFlex and Dell EMC Unity support CSM Resiliency.

Installer

Each module above is released either as an independent Helm chart or as an option within the CSI Drivers.

For more complex deployments, which may involve multiple Kubernetes clusters or a mix of modules, it is possible to use the CSM Installer.

The CSM Installer, built on top of Carvel, gives the user a single command line to create their CSM-CSI applications and to manage them outside the Kubernetes cluster.

For the first release, all drivers and modules support the CSM Installer.

New CSI features

Across the portfolio

For each driver, this release provides:

- Support of OpenShift 4.8

- Support of Kubernetes 1.22

- Support of Rancher Kubernetes Engine 2

- Normalized configurations between drivers

- Dynamic Logging Configuration

- New CSM installer

VMware Tanzu Kubernetes Grid

VMware Tanzu offers storage management by means of its CNS-CSI driver, but it doesn’t support ReadWriteMany access mode.