Use the Go Debugger Delve with Kubernetes and CSI PowerFlex

Wed, 15 Mar 2023 14:41:14 -0000

Some time ago, I faced a bug where it was important to understand the precise workflow.

One of the beauties of open source is that the user can also take the pilot seat!

In this post, we will see how to compile the Dell CSI driver for PowerFlex with a debugger, configure the driver to allow remote debugging, and attach an IDE.

Compilation

Base image

First, it is important to know that Dell and Red Hat are partners, and all CSI/CSM containers are certified by Red Hat.

This comes with a couple of constraints, one being that all containers use the Red Hat UBI Minimal image as a base image and, to be certified, extra packages must come from a Red Hat official repo.

CSI PowerFlex needs the e4fsprogs package to format file systems in ext4, and that package is missing from the default UBI repo. To install it, you have these options:

- If you build the image from a registered and subscribed RHEL host, the repos of the server are automatically accessible from the UBI image. This only works with podman build.

- If you have a Red Hat Satellite subscription, you can update the Dockerfile to point to that repo.

- You can use a third-party repository.

- You can go the old way and compile the package yourself (the source of that package is in the UBI source-code repo).

Here we’ll use an Oracle Linux mirror, which allows us to access binary-compatible packages without the need for registration or payment of a Satellite subscription.

The Oracle Linux 8 repo is:

[oracle-linux-8-baseos]
name=Oracle Linux 8 - BaseOS
baseurl=http://yum.oracle.com/repo/OracleLinux/OL8/baseos/latest/x86_64
gpgcheck=0
enabled=1

We add it to the final image in the Dockerfile with a COPY directive:

# Stage to build the driver image

FROM $BASEIMAGE@${DIGEST} AS final

# install necessary packages

# alphabetical order for easier maintenance

COPY ol-8-base.repo /etc/yum.repos.d/

RUN microdnf update -y && \

...

Delve

There are several debugger options available for Go. You can use the venerable GDB, a native solution like Delve, or an integrated debugger in your favorite IDE.

For our purposes, we prefer to use Delve because it allows us to connect to a remote Kubernetes cluster.

Our Dockerfile uses a multi-stage build. The first stage, named builder, is based on the Golang image and compiles the driver; we add Delve to it with the directive:

RUN go install github.com/go-delve/delve/cmd/dlv@latest

And then compile the driver.

On the final image that is our driver, we add the binary as follows:

# copy in the driver
COPY --from=builder /go/src/csi-vxflexos /
COPY --from=builder /go/bin/dlv /

To achieve better results with the debugger, it is important to disable compiler optimizations (-N) and inlining (-l) when compiling the code.

This is done in the Makefile with:

CGO_ENABLED=0 GOOS=linux GO111MODULE=on go build -gcflags "all=-N -l"

After rebuilding the image with make docker and pushing it to your registry, you need to expose the Delve port on the driver container. To do this, add the following lines to the driver container of the Controller Deployment in your Helm chart:

ports:
  - containerPort: 40000

Alternatively, you can use the kubectl edit -n powerflex deployment command to modify the Kubernetes deployment directly.

Usage

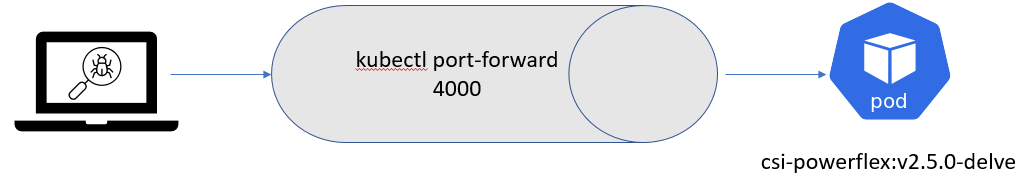

Assuming that the build has been completed successfully and the driver is deployed on the cluster, we can expose the debugger socket locally by running the following command:

kubectl port-forward -n powerflex pod/csi-powerflex-controller-uid 40000:40000

Next, we can open the project in our favorite IDE and ensure that we are on the same branch that was used to build the driver.

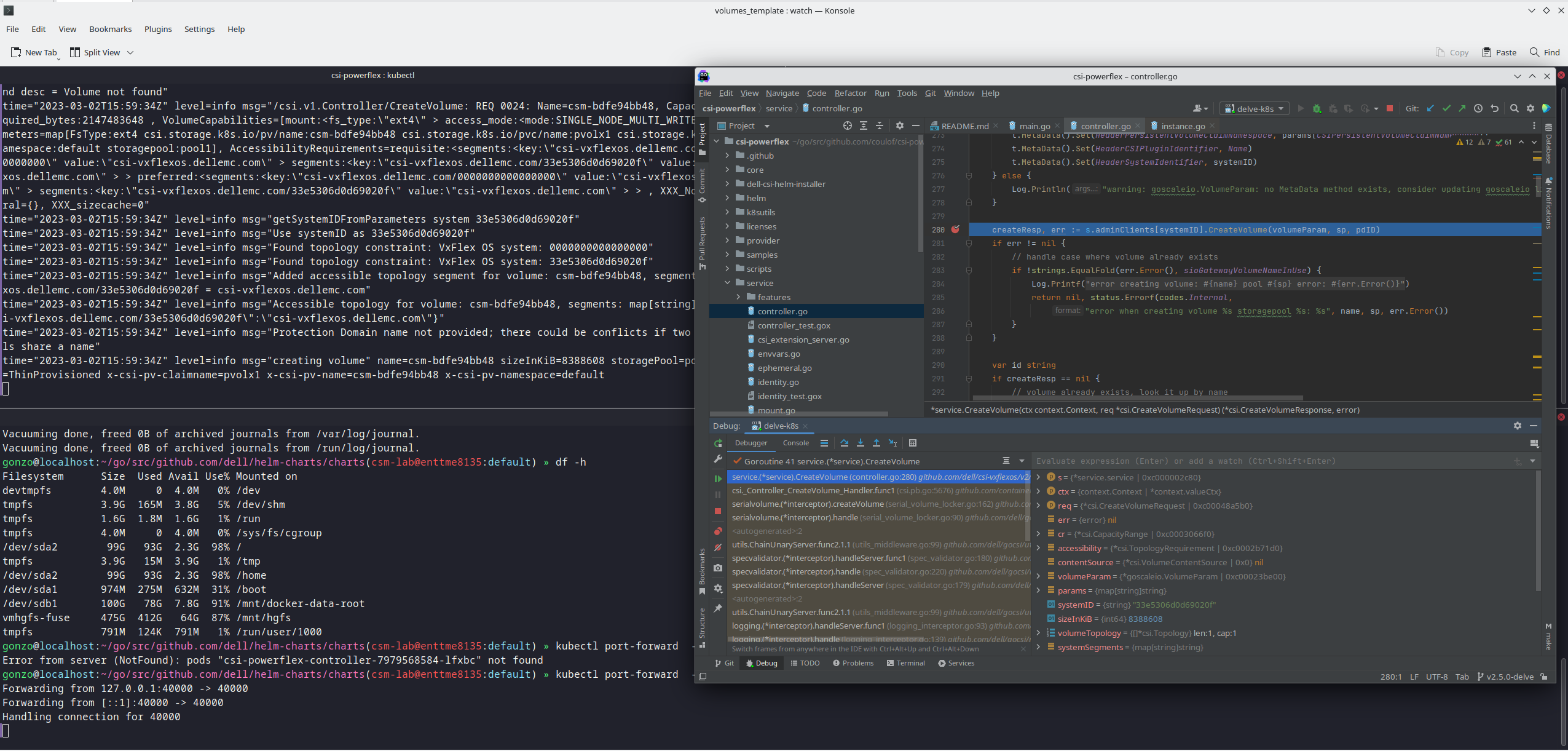

In the following screenshot I used GoLand, but VS Code can do remote debugging too.

We can now connect the IDE to that forwarded socket and run the debugger live:

And here is the result of a breakpoint on the CreateVolume call:

The full code is here: https://github.com/dell/csi-powerflex/compare/main...coulof:csi-powerflex:v2.5.0-delve.

If you liked this information and need more deep-dive details on Dell CSI and CSM, feel free to reach out at https://dell-iac.slack.com.

Author: Florian Coulombel

Related Blog Posts

CSM 1.8 Release is Here!

Fri, 22 Sep 2023 21:29:12 -0000

Introduction

This is already the third release of Dell Container Storage Modules (CSM) in 2023!

The official changelog is available in the CHANGELOG directory of the CSM repository.

CSI Features

Supported Kubernetes distributions

The newly supported Kubernetes distributions are:

- Kubernetes 1.28

- OpenShift 4.13

SD-NAS support for PowerMax and PowerFlex

Historically, PowerMax and PowerFlex have been Dell’s high-end array and software-defined storage (SDS) platform for block storage. Both backends recently introduced support for software-defined NAS.

This means that the respective CSI drivers can now provision PVCs with the ReadWriteMany access mode for the file volume type. In other words, thanks to the NFS protocol, different nodes of the Kubernetes cluster can access the same volume concurrently. This feature is particularly useful for applications, such as log management tools like Splunk or Elasticsearch, that need to process logs coming from multiple Pods.

CSI Specification compliance

Storage capacity tracking

Like PowerScale in v1.7.0, PowerMax and Dell Unity now allow you to check the available storage capacity before deploying storage to a node. This is less relevant for shared storage, which generally shows the same capacity to every node in the cluster, but it can prove useful if the array lacks available storage.

With this feature, the CSI driver creates one object of type CSIStorageCapacity per StorageClass, in the same namespace as the driver.

An example:

kubectl get csistoragecapacities -n unity # This shows one object per storageClass.

Volume Limits

The Volume Limits feature is added to both PowerStore and PowerFlex. All Dell storage platforms now implement this feature.

This option limits the maximum number of volumes to which a Kubernetes worker node can connect. This can be configured on a per-node basis, or cluster-wide. Setting this variable to zero disables the limit.

Here are some PowerStore examples.

Per node:

kubectl label node <node name> max-powerstore-volumes-per-node=<volume_limit>

For the entire cluster (all worker nodes):

Specify maxPowerstoreVolumesPerNode or maxVxflexVolumesPerNode in the values.yaml file upon Helm installation.

If you opted for the CSM Operator deployment, you can control it by specifying X_CSI_MAX_VOLUMES_PER_NODES in the CRD.
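The semantics described above can be sketched in a few lines of Go. This is an illustration only, not the driver's code: the function name effectiveVolumeLimit is hypothetical, and the sketch assumes that a valid per-node label (such as max-powerstore-volumes-per-node) overrides the cluster-wide value (such as maxPowerstoreVolumesPerNode), and that an unparsable label falls back to the cluster-wide value.

```go
package main

import (
	"fmt"
	"strconv"
)

// effectiveVolumeLimit is a hypothetical helper. It returns the volume limit
// that applies to one worker node and whether a limit is enforced at all.
// Assumptions: a valid, non-negative node label wins over the cluster-wide
// value, and a value of zero means "no limit".
func effectiveVolumeLimit(nodeLabel string, clusterWide int64) (int64, bool) {
	limit := clusterWide
	if nodeLabel != "" {
		// Ignore labels that are not non-negative integers.
		if v, err := strconv.ParseInt(nodeLabel, 10, 64); err == nil && v >= 0 {
			limit = v
		}
	}
	return limit, limit > 0
}

func main() {
	// Node without a label: the cluster-wide value applies.
	fmt.Println(effectiveVolumeLimit("", 20))
	// Node with a label: the label value applies.
	fmt.Println(effectiveVolumeLimit("5", 20))
	// Zero disables the limit.
	fmt.Println(effectiveVolumeLimit("", 0))
}
```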

Useful links

Stay informed of the latest updates of the Dell CSM ecosystem by subscribing to:

- The Dell CSM GitHub repository

- Our DevOps & Automation YouTube playlist

- Slack (under the Dell Infrastructure namespace)

- Live streaming on Twitch

Author: Florian Coulombel

CSM 1.7 Release is Here!

Fri, 30 Jun 2023 13:42:36 -0000

Introduction

The second release of 2023 for Kubernetes CSI Driver & Dell Container Storage Modules (CSM) is here!

The official changelog is available in the CHANGELOG directory of the CSM repository.

As you may know, Dell Container Storage Modules (CSM) bring powerful enterprise storage features and functionality to your Kubernetes workloads running on Dell primary storage arrays, and provide easier adoption of cloud native workloads, improved productivity, and scalable operations. Read on to learn more about what’s in this latest release.

CSI features

Supported Kubernetes distributions

The newly supported Kubernetes distributions are:

- Kubernetes 1.27

- OpenShift 4.12

- Amazon EKS Anywhere

- k3s on Debian

CSI PowerMax

For the last couple of versions, the CSI PowerMax reverseproxy has been enabled by default. The TLS certificate secret is now created automatically with cert-manager, which avoids manual steps for the administrator.

A volume can be mounted to a Pod as `readOnly`. This is the default behavior for a `configMap` or `secret`. That option is now also supported for RawBlock devices.

apiVersion: v1
kind: Pod
metadata:
  name: task-pv-pod
spec:
  volumes:
    - name: task-pv-storage
      persistentVolumeClaim:
        claimName: task-pv-claim
        # Whatever the accessMode is, the volume will be read-only for the Pod
        readOnly: true
...

CSM v1.5 introduced the capacity to provision Fibre Channel LUNs to Kubernetes worker nodes through VMware Raw Device Mapping. One limitation of the RDM/LUN was that it was sticky to a single ESXi host, meaning that the Pod could not move to another worker node.

The auto-RDM feature works at the HostGroup level in PowerMax and therefore supports clusters with multiple ESXi hosts.

The host I/O limits of the storage groups are now exposed as parameters of the StorageClass. The host I/O limit implements QoS at the worker node level and prevents noisy neighbor behavior.

CSI PowerScale

Storage Capacity Tracking is used by the Kubernetes scheduler to make sure that the node and backend storage have enough capacity for the Pod/PVC.

The user can now set quota limit parameters from the PVC and StorageClass requests. This gives the user better control of the quota parameters (including soft limit, advisory limit, and soft grace period) attached to each PVC.

The PVC settings take precedence if quota limit values are specified in both StorageClass and PVC.
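This precedence rule amounts to a key-by-key merge in which StorageClass values act as defaults. The sketch below illustrates the idea only; it is not the driver's implementation, and the function name mergeQuotaParams and the parameter keys (softLimit, advisoryLimit) are hypothetical placeholders rather than the driver's actual parameter names.

```go
package main

import "fmt"

// mergeQuotaParams is a hypothetical helper. It returns the quota settings
// for one volume: StorageClass values are the defaults, and any value set on
// the PVC overrides the StorageClass value for the same key.
func mergeQuotaParams(storageClass, pvc map[string]string) map[string]string {
	merged := make(map[string]string, len(storageClass)+len(pvc))
	for k, v := range storageClass {
		merged[k] = v
	}
	for k, v := range pvc {
		merged[k] = v // the PVC value wins
	}
	return merged
}

func main() {
	// Hypothetical keys and values, for illustration only.
	sc := map[string]string{"softLimit": "80", "advisoryLimit": "50"}
	pvc := map[string]string{"softLimit": "90"}
	fmt.Println(mergeQuotaParams(sc, pvc))
}
```

Here softLimit comes from the PVC, while advisoryLimit, which the PVC does not set, keeps its StorageClass value.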

CSM features

CSM Operator

One can now use the CSM Operator to install Dell Unity and PowerMax CSI drivers and affiliated modules.

The CSM Operator now provides CSM Resiliency and CSM Replication for CSI PowerFlex.

A detailed matrix of supported CSM components is available here.

CSM Installation Wizard

The CSM Installation Wizard is the easiest and most straightforward way to install the Dell CSI drivers and Container Storage Modules.

In this release, we are adding support for Dell Unity, PowerScale, and PowerFlex.

To keep it simple, we removed the option to install the driver and modules in separate namespaces.

CSM Authorization

In this release of CSM, Secrets Encryption is enabled by default.

- After installation or upgrade, all secrets are encrypted.

- AES-CBC is the default, and the only supported, key type.

CSM Replication

When you use CSM replication, two volumes are created: the active volume and the replica. Prior to CSM v1.7, if you removed the two PVs, the physical replica wasn't deleted.

Now on PV deletion, we cascade the removal to all objects, including the replica block volumes in PowerStore, PowerMax, and PowerFlex, so that there are no more orphan volumes.

Useful links

Stay informed of the latest updates of the Dell CSM ecosystem by subscribing to:

- The Dell CSM GitHub repository

- Our DevOps & Automation YouTube playlist

- Slack (under the Dell Infrastructure namespace)

Author: Florian Coulombel