Dell Container Storage Modules—A GitOps-Ready Platform!

Mon, 26 Sep 2022 15:17:45 -0000


One of the first things I do after deploying a Kubernetes cluster is to install a CSI driver to provide persistent storage to my workloads. Coupled with a GitOps workflow, it takes only seconds to be able to run stateful workloads.

The GitOps process is nothing more than a few principles:

- Git as a single source of truth

- Explicitly declarative resources

- Pull-based reconciliation

Nonetheless, to ensure that the process runs smoothly, you must make certain that the application you will manage with GitOps complies with these principles.

This article describes how to use the Microsoft Azure Arc GitOps solution to deploy the Dell CSI driver for Dell PowerMax and affiliated Container Storage Modules (CSMs).

The platform we will use to implement the GitOps workflow is Azure Arc with GitHub. Still, other solutions are possible using Kubernetes agents such as Argo CD, Flux CD, and GitLab.

Azure GitOps itself is built on top of Flux CD.

Install Azure Arc

The first step is to onboard your existing Kubernetes cluster within the Azure portal.

The Azure agent needs to connect to the Internet. In my case, installing the Arc agent from the Dell network failed with the error described here: https://docs.microsoft.com/en-us/answers/questions/734383/connect-openshift-cluster-to-azure-arc-secret-34ku.html

Certain URLs (even when bypassing the corporate proxy) don't play well when communicating with Azure. I have seen some services get a self-signed certificate, causing the issue.

The solution for me was to put an intermediate transparent proxy between the Kubernetes cluster and the corporate proxy. That way, we have better control over the responses given by the proxy.

In this example, we install Squid on a dedicated box with the help of Docker. To make it work, I used the Squid image by Ubuntu and made sure that Kubernetes requests were direct with the help of always_direct:

docker run -d --name squid-container ubuntu/squid:5.2-22.04_beta
docker cp squid-container:/etc/squid/squid.conf ./
egrep -v '^#' squid.conf > my_squid.conf
docker rm -f squid-container

Then add the following section:

acl k8s port 6443 # k8s https
always_direct allow k8s
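As a rough sketch, the two steps above can be combined into one script. The sample squid.conf written at the top is a hypothetical stand-in for the file copied out of the container, included only so that the snippet runs without Docker; with a real file, skip that first command.

```shell
#!/bin/sh
# Hypothetical stand-in for the squid.conf copied out of the container.
cat > squid.conf <<'EOF'
# WELCOME TO SQUID
acl localnet src 10.0.0.0/8
# Allow the local network
http_access allow localnet
http_port 3128
EOF

# Keep only the lines that are not full-line comments.
grep -Ev '^#' squid.conf > my_squid.conf

# Append the rule that makes requests to the Kubernetes API port go direct.
cat >> my_squid.conf <<'EOF'
acl k8s port 6443 # k8s https
always_direct allow k8s
EOF

cat my_squid.conf
```

One option is then to bind-mount my_squid.conf over /etc/squid/squid.conf (the path used by docker cp above) when starting the Squid container.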

You can now install the agent per the following instructions: https://docs.microsoft.com/en-us/azure/azure-arc/kubernetes/quickstart-connect-cluster?tabs=azure-cli#connect-using-an-outbound-proxy-server.

export HTTP_PROXY=http://mysquid-proxy.dell.com:3128
export HTTPS_PROXY=http://mysquid-proxy.dell.com:3128
export NO_PROXY=https://kubernetes.local:6443

az connectedk8s connect --name AzureArcCorkDevCluster \
  --resource-group AzureArcTestFlorian \
  --proxy-https http://mysquid-proxy.dell.com:3128 \
  --proxy-http http://mysquid-proxy.dell.com:3128 \
  --proxy-skip-range 10.0.0.0/8,kubernetes.default.svc,.svc.cluster.local,.svc \
  --proxy-cert /etc/ssl/certs/ca-bundle.crt

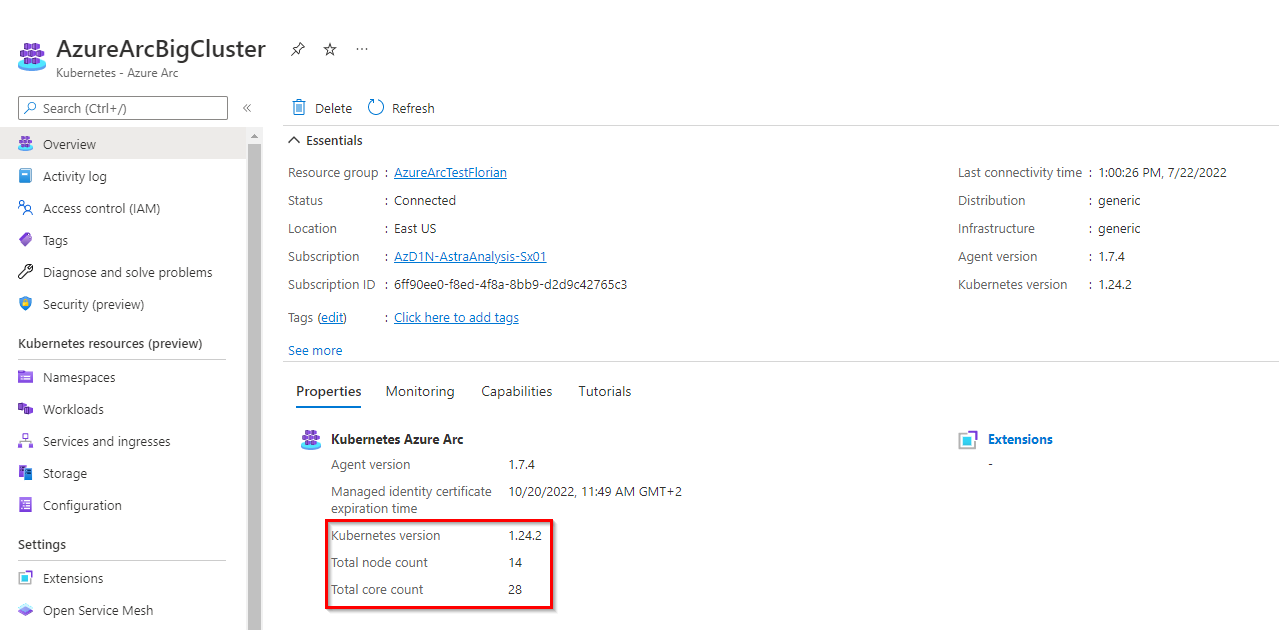

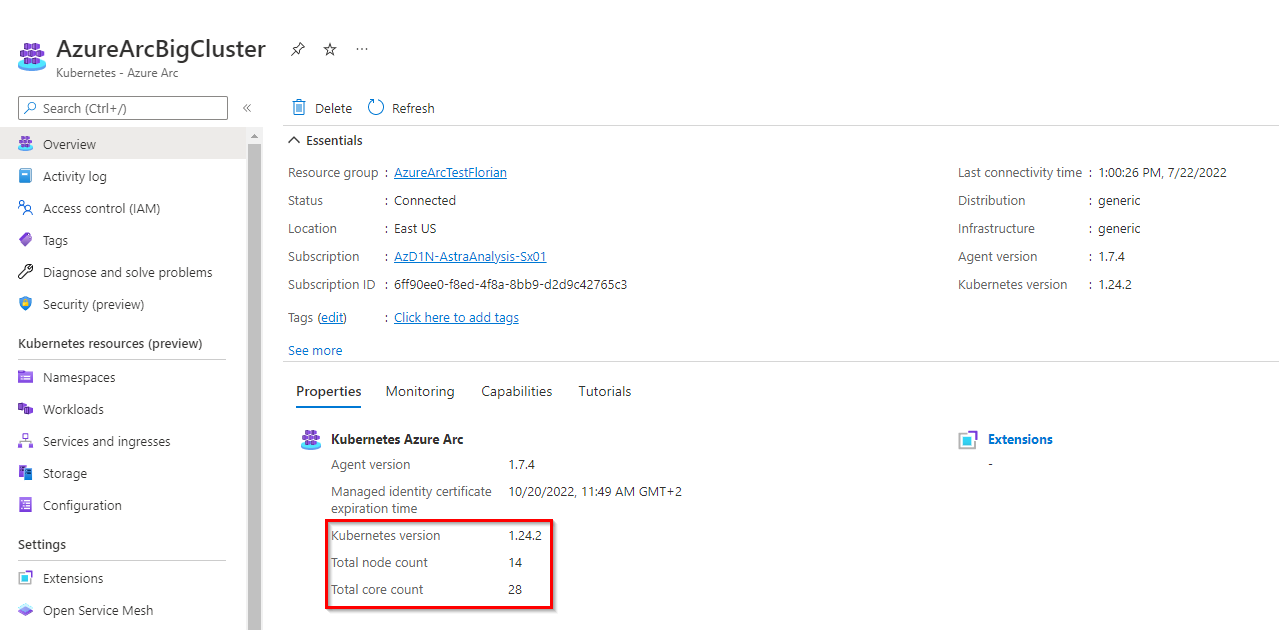

If everything worked well, you should see the cluster with detailed info from the Azure portal:

Add a service account for more visibility in Azure portal

To benefit from all the features that Azure Arc offers, give the agent the privileges to access the cluster.

The first step is to create a service account:

kubectl create serviceaccount azure-user
kubectl create clusterrolebinding demo-user-binding --clusterrole cluster-admin --serviceaccount default:azure-user
kubectl apply -f - <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: azure-user-secret
  annotations:
    kubernetes.io/service-account.name: azure-user
type: kubernetes.io/service-account-token
EOF

Then, from the Azure UI, when you are prompted to give a token, you can obtain it as follows:

kubectl get secret azure-user-secret -o jsonpath='{$.data.token}' | base64 -d | sed $'s/$/\\\n/g'

Then paste the token in the Azure UI.

Install the GitOps agent

The GitOps agent installation can be done with a CLI or in the Azure portal.

As of now, the Microsoft documentation covers the CLI-based deployment in detail, so this article uses the Azure portal instead.

Organize the repository

The Git repository organization is a crucial part of the GitOps architecture. It depends heavily on how internal teams are organized, the level of information you want to expose and share, the location of the different clusters, and so on.

In our case, the requirement is to connect multiple Kubernetes clusters owned by different teams to a couple of PowerMax systems using only the latest and greatest CSI driver and affiliated CSM for PowerMax.

Therefore, a monorepo approach is well suited.

The organization follows this structure:

.
├── apps
│   ├── base
│   └── overlays
│       ├── cork-development
│       │   ├── dev-ns
│       │   └── prod-ns
│       └── cork-production
│           └── prod-ns
├── clusters
│   ├── cork-development
│   └── cork-production
└── infrastructure
    ├── cert-manager
    ├── csm-replication
    ├── external-snapshotter
    └── powermax

- apps: Contains the applications to be deployed on the clusters.

  - We have different overlays per cluster.

- clusters: Usually contains the cluster-specific Flux CD main configuration; with Azure Arc, none is needed.

- infrastructure: Contains the deployments that are used to run the infrastructure services; they are common to every cluster.

  - cert-manager: A dependency of the powermax reverse proxy

  - csm-replication: A dependency of powermax to support SRDF replication

  - external-snapshotter: A dependency of powermax to take snapshots

  - powermax: Contains the driver installation
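For readers starting from an empty repository, the layout above could be scaffolded with a short script such as the following sketch. The top-level directory name gitops-repo and the .gitkeep placeholder files are assumptions made for illustration; they are not part of the original repository.

```shell
#!/bin/sh
# Create the monorepo skeleton under a hypothetical directory name.
repo=gitops-repo

for dir in \
  apps/base \
  apps/overlays/cork-development/dev-ns \
  apps/overlays/cork-development/prod-ns \
  apps/overlays/cork-production/prod-ns \
  clusters/cork-development \
  clusters/cork-production \
  infrastructure/cert-manager \
  infrastructure/csm-replication \
  infrastructure/external-snapshotter \
  infrastructure/powermax
do
  mkdir -p "$repo/$dir"
  # Git does not track empty directories, so drop a placeholder in each leaf.
  touch "$repo/$dir/.gitkeep"
done

# List the directories that were created.
find "$repo" -type d | sort
```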

You can see all files in https://github.com/coulof/fluxcd-csm-powermax.

Note: The GitOps agent comes with multi-tenancy support; therefore, we cannot cross-reference objects between namespaces. The Kustomization and HelmRelease must be created in the same namespace as the agent (here, flux-system) and must set targetNamespace to the namespace in which the resources are to be installed.
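To make the note concrete, here is a minimal sketch of what such a Kustomization could look like, written to a file with a heredoc. The source name csm-repo, the target namespace powermax, the interval, and the apiVersion (which depends on the Flux release bundled with the agent) are all assumptions; check the linked repository for the actual manifests.

```shell
#!/bin/sh
# Write an illustrative Flux Kustomization for the PowerMax driver.
# Assumptions: the GitRepository name (csm-repo), the target namespace
# (powermax), the interval, and the apiVersion for your Flux release.
cat > powermax-kustomization.yaml <<'EOF'
apiVersion: kustomize.toolkit.fluxcd.io/v1
kind: Kustomization
metadata:
  name: powermax
  namespace: flux-system        # same namespace as the GitOps agent
spec:
  interval: 10m
  path: ./infrastructure/powermax
  prune: true
  sourceRef:
    kind: GitRepository
    name: csm-repo              # hypothetical source name
  targetNamespace: powermax     # where the driver resources are installed
EOF

cat powermax-kustomization.yaml
```

A HelmRelease follows the same pattern: metadata.namespace stays flux-system, and spec.targetNamespace names the namespace to install into.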

Conclusion

This article is the first of a series exploring the GitOps workflow. Next, we will see how to manage applications and persistent storage with the GitOps workflow, how to upgrade the modules, and so on.

Resources

- Tutorial: Implement CI/CD with GitOps (Flux v2)

- GitOps Flux v2 configurations with AKS and Azure Arc-enabled Kubernetes

Related Blog Posts

How to Build a Custom Dell CSI Driver

Wed, 20 Apr 2022 21:28:38 -0000


With all the Dell Container Storage Interface (CSI) drivers and dependencies being open-source, anyone can tweak them to fit a specific use case.

This blog shows how to create a patched version of a Dell CSI Driver for PowerScale.

The premise

As a practical example, the following steps show how to create a patched version of Dell CSI Driver for PowerScale that supports a longer mounted path.

The CSI Specification states that a driver must accept a maximum path length of at least 128 bytes:

// SP SHOULD support the maximum path length allowed by the operating

// system/filesystem, but, at a minimum, SP MUST accept a max path

// length of at least 128 bytes.

Dell drivers use the gocsi library as a common boilerplate for CSI development. That library enforces a maximum path length of 128 bytes.

The PowerScale hardware supports path lengths up to 1023 characters, as described in the File system guidelines chapter of the PowerScale spec. We’ll therefore build a csi-powerscale driver that supports that maximum path length.
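To see what the two limits mean in practice, the sketch below measures a path in bytes and compares it against 128 and 1023. It only illustrates the limits; it is not the gocsi validation code, and the /ifs/data/ prefix and the path_fits helper are hypothetical.

```shell
#!/bin/sh
# Return success when the path length in bytes is within the limit.
# Illustration only; this is not the gocsi validation code.
path_fits() {
  limit=$1
  path=$2
  [ "$(printf '%s' "$path" | wc -c)" -le "$limit" ]
}

# Hypothetical mount path: a 10-byte prefix followed by 200 zeros (210 bytes).
long_path="/ifs/data/$(printf '%0200d' 0)"

if path_fits 128 "$long_path"; then echo "128: accepted"; else echo "128: rejected"; fi
if path_fits 1023 "$long_path"; then echo "1023: accepted"; else echo "1023: rejected"; fi
```

With a 210-byte path, the first check is rejected and the second is accepted, which is the gap the patched driver is meant to close.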

Steps to patch a driver

Dependencies

The Dell CSI drivers are all built with Go and run as containers. As a result, the prerequisites are relatively simple. You need:

- Go (v1.16 minimum at the time this post was published)

- Podman or Docker

- Optionally, make to run our Makefile

Clone, branch, and patch

The first thing to do is to clone the official csi-powerscale repository in your GOPATH source directory.

cd $GOPATH/src/github.com/

git clone git@github.com:dell/csi-powerscale.git dell/csi-powerscale

cd dell/csi-powerscale

You can then pick the version of the driver you want to patch; git tag gives the list of versions.

In this example, we pick v2.1.0 with git checkout v2.1.0 -b v2.1.0-longer-path.

The next step is to obtain the library we want to patch.

gocsi and every other open-source component maintained for Dell CSI are available on https://github.com/dell.

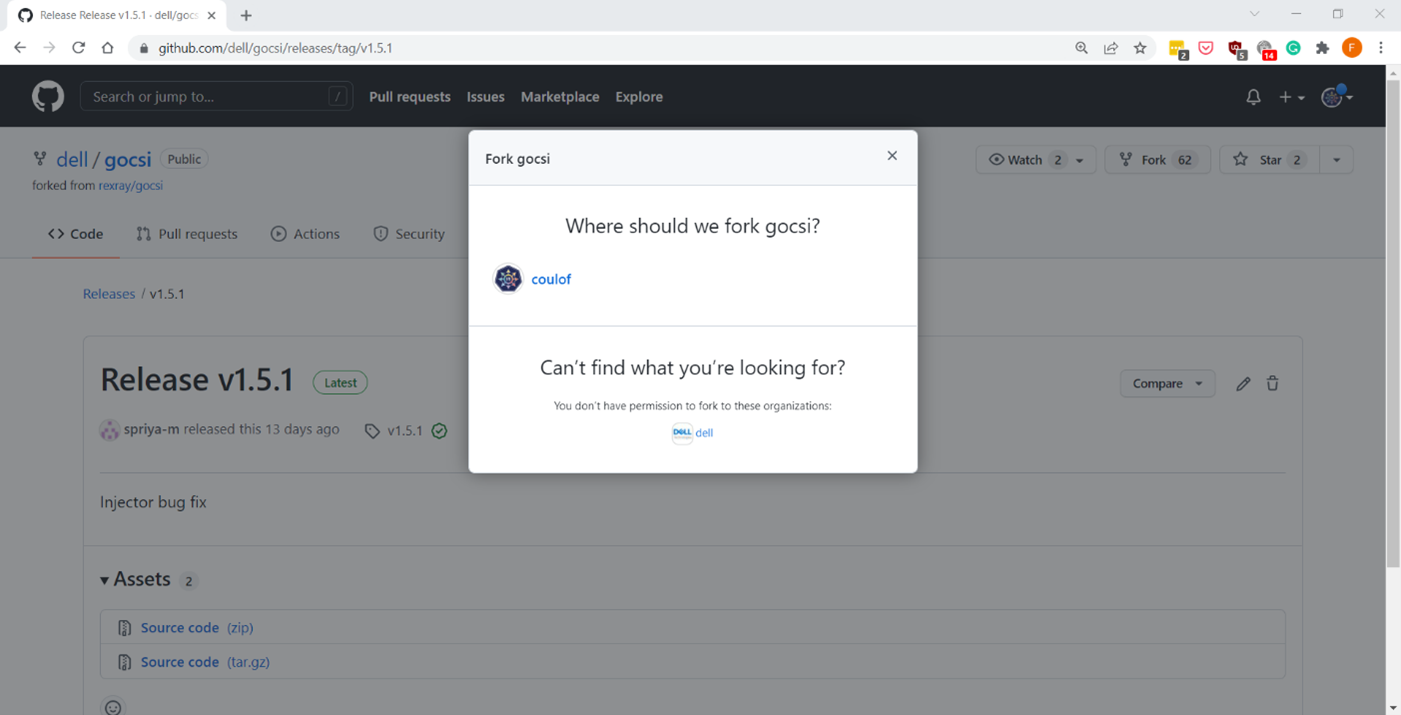

The following figure shows how to fork the repository on your private github:

Now we can get the library with:

cd $GOPATH/src/github.com/

git clone git@github.com:coulof/gocsi.git coulof/gocsi

cd coulof/gocsi

To simplify the maintenance and merge of future commits, it is wise to add the original repo as an upstream remote with:

git remote add upstream git@github.com:dell/gocsi.git

The next important step is to pick the correct library version used by our version of the driver.

We can check the csi-powerscale dependency file with: grep gocsi $GOPATH/src/github.com/dell/csi-powerscale/go.mod and create a branch of that version. In this case, the version is v1.5.0, and we can branch it with: git checkout v1.5.0 -b v1.5.0-longer-path.
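If you prefer to extract the version rather than read it from the grep output, a small awk filter is one way to do it. The go.mod.sample file below is a hypothetical excerpt written only so that the snippet is self-contained; in practice, point awk at the driver's real go.mod.

```shell
#!/bin/sh
# Hypothetical excerpt of the driver's go.mod; only the gocsi line matters here.
cat > go.mod.sample <<'EOF'
module github.com/dell/csi-powerscale

go 1.16

require (
    github.com/dell/gocsi v1.5.0
    example.com/some/dependency v0.1.0
)
EOF

# Print the version pinned for gocsi (second field of the matching line).
gocsi_version=$(awk '$1 == "github.com/dell/gocsi" { print $2; exit }' go.mod.sample)

# Show the branch command to run inside the gocsi clone.
echo "git checkout ${gocsi_version} -b ${gocsi_version}-longer-path"
```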

Now it’s time to hack our patch! Which is… just a one-liner:

--- a/middleware/specvalidator/spec_validator.go
+++ b/middleware/specvalidator/spec_validator.go
@@ -770,7 +770,7 @@ func validateVolumeCapabilitiesArg(
 }
 
 const (
-       maxFieldString = 128
+       maxFieldString = 1023
        maxFieldMap    = 4096
        maxFieldNodeId = 256
 )

We can then commit and push our patched library with a nice tag:

git commit -a -m 'increase path limit'
git push --set-upstream origin v1.5.0-longer-path
git tag -a v1.5.0-longer-path
git push --tags

Build

With the patch committed and pushed, it’s time to build the CSI driver binary and its container image.

Let’s go back to the csi-powerscale main repo: cd $GOPATH/src/github.com/dell/csi-powerscale

As mentioned in the introduction, we can take advantage of the replace directive in the go.mod file to point to the patched lib. In this case we add the following:

diff --git a/go.mod b/go.mod
index 5c274b4..c4c8556 100644
--- a/go.mod
+++ b/go.mod
@@ -26,6 +26,7 @@ require (
 )
 
 replace (
+       github.com/dell/gocsi => github.com/coulof/gocsi v1.5.0-longer-path
        k8s.io/api => k8s.io/api v0.20.2
        k8s.io/apiextensions-apiserver => k8s.io/apiextensions-apiserver v0.20.2
        k8s.io/apimachinery => k8s.io/apimachinery v0.20.2

When that is done, we obtain the new module from the online repo with: go mod download

Note: If you want to test the changes locally only, you can use the replace directive to point to the local directory with:

replace github.com/dell/gocsi => ../../coulof/gocsi

We can then build our new driver binary locally with: make build

After compiling it successfully, we can create the image. The shortest path to do that is to replace the csi-isilon binary from the dellemc/csi-isilon docker image with:

cat << EOF > Dockerfile.patch
FROM dellemc/csi-isilon:v2.1.0
COPY "csi-isilon" .
EOF

docker build -t coulof/csi-isilon:v2.1.0-long-path -f Dockerfile.patch .

Alternatively, you can rebuild an entire Docker image using the provided Makefile.

By default, the driver uses a Red Hat Universal Base Image minimal. That base image sometimes misses dependencies, so you can use another flavor, such as:

BASEIMAGE=registry.fedoraproject.org/fedora-minimal:latest REGISTRY=docker.io IMAGENAME=coulof/csi-powerscale IMAGETAG=v2.1.0-long-path make podman-build

The image is ready to be pushed in whatever image registry you prefer. In this case, this is hub.docker.com: docker push coulof/csi-isilon:v2.1.0-long-path.

Update CSI Kubernetes deployment

The last step is to replace the driver image used in your Kubernetes cluster with your custom one.

Again, multiple solutions are possible, and the one to choose depends on how you deployed the driver.

If you used the helm installer, you can add the following block at the top of the myvalues.yaml file:

images:
  driver: docker.io/coulof/csi-powerscale:v2.1.0-long-path

Then update or uninstall/reinstall the driver as described in the documentation.

If you decided to use the Dell CSI Operator, you can simply point to the new image:

apiVersion: storage.dell.com/v1
kind: CSIIsilon
metadata:
  name: isilon
spec:
  driver:
    common:
      image: "docker.io/coulof/csi-powerscale:v2.1.0-long-path"
...

Or, if you want to do a quick and dirty test, you can create a patch file (here named path_csi-isilon_controller_image.yaml) with the following content:

spec:
  template:
    spec:
      containers:
      - name: driver
        image: docker.io/coulof/csi-powerscale:v2.1.0-long-path

You can then apply it to your existing install with: kubectl patch deployment -n powerscale isilon-controller --patch-file path_csi-isilon_controller_image.yaml

In all cases, you can check that everything works by first making sure that the Pod is started:

kubectl get pods -n powerscale

and that the logs are clean:

kubectl logs -n powerscale -l app=isilon-controller -c driver

Wrap-up and disclaimer

As demonstrated, thanks to open source, it’s easy to fix and improve Dell CSI drivers or Dell Container Storage Modules.

Keep in mind that Dell officially supports (through tickets, Service Requests, and so on) the image and binary, but not the custom build.

Thanks for reading and stay tuned for future posts on Dell Storage and Kubernetes!

Author: Florian Coulombel