CSI drivers 2.0 and Dell EMC Container Storage Modules GA!

Mon, 29 Apr 2024 18:38:49 -0000


The quarterly update for Dell CSI Driver is here! But today marks a significant milestone because we are also announcing the availability of Dell EMC Container Storage Modules (CSM). Here’s what we’re covering in this blog:

Container Storage Modules

Dell Container Storage Modules is a set of modules that aims to extend Kubernetes storage features beyond what is available in the CSI specification.

The CSM modules will expose storage enterprise features directly within Kubernetes, so developers are empowered to leverage them for their deployment in a seamless way.

Most of these modules are released as sidecar containers that work with the CSI driver for the Dell storage array technology you use.

CSM modules are open source and freely available from https://github.com/dell/csm.

Volume Group Snapshot

Many stateful apps can run on top of multiple volumes. For example, we can have a transactional DB like Postgres with a volume for its data and another for the redo log, or Cassandra that is distributed across nodes, each having a volume, and so on.

When you want to take a recoverable snapshot, it is vital to snapshot all of these volumes consistently, at the exact same time.

Dell CSI Volume Group Snapshotter solves that problem for you. With the help of a CustomResourceDefinition, an additional sidecar to the Dell CSI drivers, and vanilla Kubernetes snapshots, you can manage the life cycle of crash-consistent snapshots. This means you can create a group of volumes for which the drivers create snapshots, restore them, or move them, all in one shot!

To take a crash-consistent snapshot, you can either use labels on your PersistentVolumeClaim, or be explicit and pass the list of PVCs that you want to snap. For example:

apiVersion: volumegroup.storage.dell.com/v1alpha2
kind: DellCsiVolumeGroupSnapshot
metadata:
  # Name must be 13 characters or less in length
  name: "vg-snaprun1"
spec:
  driverName: "csi-vxflexos.dellemc.com"
  memberReclaimPolicy: "Retain"
  volumesnapshotclass: "poweflex-snapclass"
  pvcLabel: "vgs-snap-label"
  # pvcList:
  #   - "pvcName1"
  #   - "pvcName2"
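To make the two selection modes concrete, here is a minimal Go sketch. It is not the snapshotter's code: the PVC type, the selectMembers helper, and the volume-group label key are assumptions made for illustration, so check the module documentation for the real label key. It only shows the idea that an explicit list takes precedence and that a label otherwise decides group membership.

```go
package main

import "fmt"

// PVC is a simplified, hypothetical stand-in for a PersistentVolumeClaim.
type PVC struct {
	Name   string
	Labels map[string]string
}

// labelKey is an assumed label key, used here only for illustration.
const labelKey = "volume-group"

// selectMembers returns the names of the PVCs that belong to the group.
// An explicit pvcList wins; otherwise PVCs are matched on the label value.
func selectMembers(pvcs []PVC, pvcLabel string, pvcList []string) []string {
	wanted := make(map[string]bool, len(pvcList))
	for _, name := range pvcList {
		wanted[name] = true
	}
	var members []string
	for _, p := range pvcs {
		if len(pvcList) > 0 {
			if wanted[p.Name] {
				members = append(members, p.Name)
			}
			continue
		}
		if pvcLabel != "" && p.Labels[labelKey] == pvcLabel {
			members = append(members, p.Name)
		}
	}
	return members
}

func main() {
	pvcs := []PVC{
		{Name: "pg-data", Labels: map[string]string{labelKey: "vgs-snap-label"}},
		{Name: "pg-redo", Labels: map[string]string{labelKey: "vgs-snap-label"}},
		{Name: "scratch", Labels: map[string]string{}},
	}
	fmt.Println(selectMembers(pvcs, "vgs-snap-label", nil))
	fmt.Println(selectMembers(pvcs, "", []string{"scratch"}))
}
```

All members selected this way would then be snapped together, which is what makes the result crash-consistent.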

For the first release, CSI for PowerFlex supports Volume Group Snapshot.

Observability

The CSM Observability module is delivered as an OpenTelemetry agent that collects array-level metrics and exposes them so that Prometheus can scrape and store them.

The integration is as easy as creating a ServiceMonitor for Prometheus. For example:

apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: otel-collector
  namespace: powerstore
spec:
  endpoints:
  - path: /metrics
    port: exporter-https
    scheme: https
    tlsConfig:
      insecureSkipVerify: true
  selector:
    matchLabels:
      app.kubernetes.io/instance: karavi-observability
      app.kubernetes.io/name: otel-collector

With the observability module, you gain visibility into the capacity of the volumes you manage with Dell CSI drivers and into their performance, in terms of bandwidth, IOPS, and response time.

Thanks to pre-canned Grafana dashboards, you can browse the history of these metrics and trace the topology from a Kubernetes PersistentVolume (PV) down to the LUN or file share that backs it on the array.

The Kubernetes admin can also collect array-level metrics to check overall capacity and performance directly from the familiar Prometheus/Grafana tools.

For the first release, Dell EMC PowerFlex and Dell EMC PowerStore support CSM Observability.

Replication

Each Dell storage array supports replication capabilities. It can be asynchronous with an associated recovery point objective, synchronous replication between sites, or even active-active.

Each replication type serves a different purpose related to the use-case or the constraint you have on your data centers.

The Dell CSM replication module allows creating a persistent volume that can be of any of three replication types -- synchronous, asynchronous, and metro -- assuming the underlying storage box supports it.

The Kubernetes architecture can build on a stretched cluster between two sites or on two or more independent clusters. The module itself is composed of three main components:

- The Replication controller whose role is to manage the CustomResourceDefinition that abstracts the concept of Replication across the Kubernetes cluster

- The Replication sidecar for the CSI driver that will convert the Replication controller request to an actual call on the array side

- The repctl utility, to simplify managing replication objects across multiple Kubernetes clusters

The usual workflow is to create a PVC that is replicated with a classic Kubernetes directive by just picking the right StorageClass. You can then use repctl or edit the DellCSIReplicationGroup CRD to launch operations like Failover, Failback, Reprotect, Suspend, Synchronize, and so on.

For the first release, Dell EMC PowerMax and Dell EMC PowerStore support CSM Replication.

Authorization

With CSM Authorization we are giving back more control of storage consumption to the storage administrator.

The authorization module is an independent service, installed and owned by the storage admin.

Within that module, the storage administrator will create access control policies and storage quotas to make sure that Kubernetes consumers are not overconsuming storage or trying to access data that doesn’t belong to them.

CSM Authorization makes multi-tenant architecture real by enforcing Role-Based Access Control on storage objects coming from multiple and independent Kubernetes clusters.

The authorization module acts as a proxy between the CSI driver and the backend array. Access is granted with an access token that can be revoked at any point in time. Quotas can be changed on the fly to limit or increase storage consumption from the different tenants.

For the first release, Dell EMC PowerMax and Dell EMC PowerFlex support CSM Authorization.

Resiliency

When dealing with stateful applications, vanilla Kubernetes is pretty conservative if a node goes down.

Indeed, from the Kubernetes control plane, the failing node is seen as not ready. It can be because the node is down, or because of network partitioning between the control plane and the node, or simply because the kubelet is down. In the latter two scenarios, the stateful application is still running and possibly writing data to disk. Therefore, Kubernetes won’t take action and lets the admin manually trigger a Pod deletion if desired.

The CSM Resiliency module (sometimes named PodMon) aims to improve that behavior with the help of collected metrics from the array.

Because the driver has access to the storage backend from pretty much all other nodes, we can see the volume status (mapped or not) and its activity (are there IOPS or not). So when a node goes into NotReady state, and we see no IOPS on the volume, Resiliency will relocate the Pod to a new node and clean whatever leftover objects might exist.

The entire process happens in seconds between the moment a node is seen down and the rescheduling of the Pod.
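The decision described above can be summarized in a few lines of Go. This is a simplified sketch under stated assumptions, not the PodMon code: NodeState, VolumeState, and shouldRelocate are hypothetical names, and the real module checks more conditions than these two.

```go
package main

import "fmt"

// NodeState is a hypothetical, simplified view of what the control plane reports.
type NodeState struct {
	Ready bool
}

// VolumeState is a hypothetical, simplified view of what the array reports.
type VolumeState struct {
	MappedToNode bool    // the array still maps the volume to the node
	IOPS         float64 // recent I/O activity seen by the array
}

// shouldRelocate reports whether a Pod can be moved safely: the node must be
// NotReady and none of the Pod's volumes may still show I/O from that node.
func shouldRelocate(node NodeState, volumes []VolumeState) bool {
	if node.Ready {
		return false
	}
	for _, v := range volumes {
		if v.MappedToNode && v.IOPS > 0 {
			return false // the app may still be writing, so stay conservative
		}
	}
	return true
}

func main() {
	down := NodeState{Ready: false}
	idle := []VolumeState{{MappedToNode: true, IOPS: 0}}
	busy := []VolumeState{{MappedToNode: true, IOPS: 250}}
	fmt.Println(shouldRelocate(down, idle))
	fmt.Println(shouldRelocate(down, busy))
}
```

The array-side view is what lets the module tell a dead node apart from a partitioned one that is still writing.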

To protect an app with the resiliency module, you only have to add the label podmon.dellemc.com/driver to it.

For more details on the module’s design, you can check the documentation here.

For the first release, Dell EMC PowerFlex and Dell EMC Unity support CSM Resiliency.

Installer

Each module above is released either as an independent Helm chart or as an option within the CSI drivers.

For more complex deployments, which may involve multiple Kubernetes clusters or a mix of modules, it is possible to use the CSM Installer.

The CSM Installer, built on top of Carvel, gives the user a single command line to create their CSM-CSI applications and to manage them from outside the Kubernetes cluster.

For the first release, all drivers and modules support the CSM Installer.

New CSI features

Across portfolio

For each driver, this release provides:

- Support of OpenShift 4.8

- Support of Kubernetes 1.22

- Support of Rancher Kubernetes Engine 2

- Normalized configurations between drivers

- Dynamic Logging Configuration

- New CSM installer

VMware Tanzu Kubernetes Grid

VMware Tanzu offers storage management by means of its CNS-CSI driver, but it doesn’t support ReadWriteMany access mode.

If your workload needs concurrent access to the filesystem, you can now rely on CSI Driver for PowerStore, PowerScale and Unity through the NFS protocol. The three platforms are officially supported and qualified on Tanzu.

NFS behind NAT

The NFS drivers for PowerStore, PowerScale, and Unity have all been tested and work when the Kubernetes cluster is behind a private network.

PowerScale

By default, the CSI driver creates volumes with 777 POSIX permission on the directory.

Now with the isiVolumePathPermissions parameter, you can use ACLs or any other POSIX permissions.

The isiVolumePathPermissions parameter can be configured as part of the ConfigMap with the PowerScale settings or at the StorageClass level. The accepted values are private_read, private, public_read, public_read_write, and public for the ACL, or any POSIX mode.
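As a quick way to sanity-check a value before putting it in a StorageClass, the rule above can be sketched in Go. The isValidPathPermission helper is hypothetical and the octal-mode check (three or four digits, 000 to 777) is an assumption about what counts as a POSIX mode here; the driver's own validation may differ.

```go
package main

import (
	"fmt"
	"strconv"
)

// aclNames lists the named ACL values quoted in this post.
var aclNames = map[string]bool{
	"private_read":      true,
	"private":           true,
	"public_read":       true,
	"public_read_write": true,
	"public":            true,
}

// isValidPathPermission accepts one of the named ACLs or an octal POSIX
// mode between 000 and 777, with an optional leading zero as in 0755.
func isValidPathPermission(value string) bool {
	if aclNames[value] {
		return true
	}
	if len(value) < 3 || len(value) > 4 {
		return false
	}
	mode, err := strconv.ParseUint(value, 8, 32)
	return err == nil && mode <= 0o777
}

func main() {
	for _, v := range []string{"private_read", "0755", "777", "888", "rwx"} {
		fmt.Printf("%s: %v\n", v, isValidPathPermission(v))
	}
}
```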

Useful links

For more details you can:

- Watch CSM demos on our VP's YouTube channel: https://www.youtube.com/user/itzikreich/

- Ask for help from the Dell container community website

- Subscribe to GitHub notifications and be informed of the latest releases on: https://github.com/dell/csm

- Chat with us on Slack

Author: Florian Coulombel

Related Blog Posts

How to Build a Custom Dell CSI Driver

Mon, 29 Apr 2024 18:15:25 -0000


With all the Dell Container Storage Interface (CSI) drivers and dependencies being open-source, anyone can tweak them to fit a specific use case.

This blog shows how to create a patched version of a Dell CSI Driver for PowerScale.

The premise

As a practical example, the following steps show how to create a patched version of Dell CSI Driver for PowerScale that supports a longer mounted path.

The CSI specification requires a driver to accept a maximum path length of at least 128 bytes:

// SP SHOULD support the maximum path length allowed by the operating

// system/filesystem, but, at a minimum, SP MUST accept a max path

// length of at least 128 bytes.

Dell drivers use the gocsi library as a common boilerplate for CSI development. That library enforces a maximum path length of 128 bytes.

The PowerScale hardware supports path lengths up to 1023 characters, as described in the File system guidelines chapter of the PowerScale spec. We’ll therefore build a csi-powerscale driver that supports that maximum length path value.
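To see why the limit matters, here is a minimal Go sketch of a length check of this kind. The checkPathLength helper is hypothetical and is not gocsi's actual validator; it only illustrates how the same path is rejected under a 128-byte limit and accepted under a 1023-byte one.

```go
package main

import (
	"fmt"
	"strings"
)

// checkPathLength mimics a spec validator that rejects paths longer than
// maxLen bytes. It is a hypothetical helper, not gocsi's actual function.
func checkPathLength(path string, maxLen int) error {
	if len(path) > maxLen {
		return fmt.Errorf("path is %d bytes, limit is %d", len(path), maxLen)
	}
	return nil
}

func main() {
	// A 200-byte path: fine for the array, too long for a 128-byte check.
	path := "/" + strings.Repeat("d", 199)
	fmt.Println(checkPathLength(path, 128))
	fmt.Println(checkPathLength(path, 1023))
}
```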

Steps to patch a driver

Dependencies

The Dell CSI drivers are all built with Go and run as containers. As a result, the prerequisites are relatively simple. You need:

- Go (v1.16 minimum at the time this post was published)

- Podman or Docker

- And optionally make to run our Makefile

Clone, branch, and patch

The first thing to do is to clone the official csi-powerscale repository in your GOPATH source directory.

cd $GOPATH/src/github.com/
git clone git@github.com:dell/csi-powerscale.git dell/csi-powerscale
cd dell/csi-powerscale

You can then pick the version of the driver you want to patch; git tag gives the list of versions.

In this example, we pick v2.1.0 with git checkout v2.1.0 -b v2.1.0-longer-path.

The next step is to obtain the library we want to patch.

gocsi and every other open-source component maintained for Dell CSI are available on https://github.com/dell.

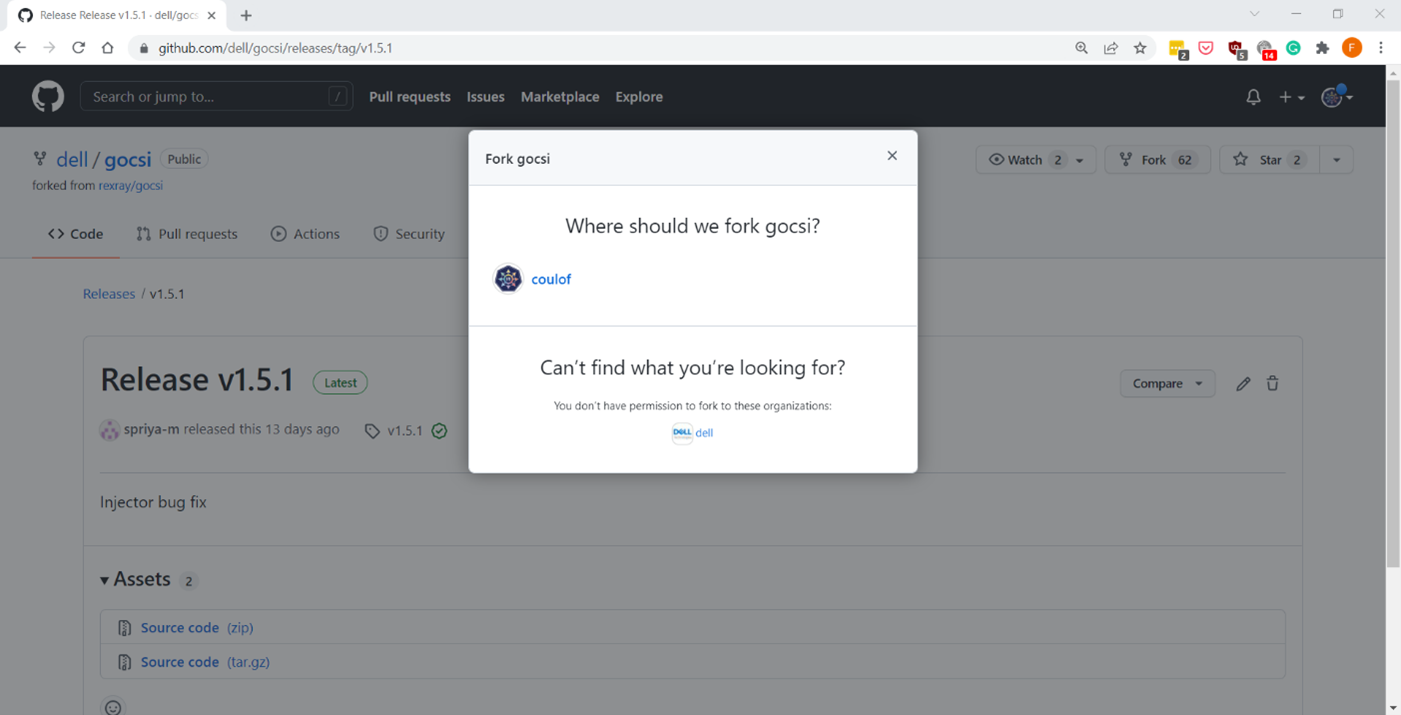

The following figure shows how to fork the repository on your private github:

Now we can get the library with:

cd $GOPATH/src/github.com/
git clone git@github.com:coulof/gocsi.git coulof/gocsi
cd coulof/gocsi

To simplify the maintenance and merge of future commits, it is wise to add the original repo as an upstream remote with:

git remote add upstream git@github.com:dell/gocsi.git

The next important step is to pick the library version used by our version of the driver.

We can check the csi-powerscale dependency file with: grep gocsi $GOPATH/src/github.com/dell/csi-powerscale/go.mod and create a branch of that version. In this case, the version is v1.5.0, and we can branch it with: git checkout v1.5.0 -b v1.5.0-longer-path.
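If you prefer to script that lookup rather than grep by hand, the same check can be sketched in Go. The findModuleVersion helper and the sample go.mod content are hypothetical and written for this post; the parser is deliberately naive and only handles plain require lines.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
)

// findModuleVersion scans go.mod content and returns the version required
// for modulePath, or "" when the module is not listed.
func findModuleVersion(goMod, modulePath string) string {
	scanner := bufio.NewScanner(strings.NewReader(goMod))
	for scanner.Scan() {
		fields := strings.Fields(scanner.Text())
		// Accept both "require path version" and a line inside a require block.
		if len(fields) > 0 && fields[0] == "require" {
			fields = fields[1:]
		}
		// Skip replace directives, where the second field is "=>".
		if len(fields) >= 2 && fields[0] == modulePath && fields[1] != "=>" {
			return fields[1]
		}
	}
	return ""
}

func main() {
	// Sample content for illustration, not the driver's real go.mod.
	sample := `module github.com/dell/csi-powerscale

go 1.16

require (
	github.com/dell/gocsi v1.5.0
	k8s.io/api v0.20.2
)
`
	fmt.Println(findModuleVersion(sample, "github.com/dell/gocsi"))
}
```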

Now it’s time to hack our patch! Which is… just a one-liner:

--- a/middleware/specvalidator/spec_validator.go
+++ b/middleware/specvalidator/spec_validator.go
@@ -770,7 +770,7 @@ func validateVolumeCapabilitiesArg(
 }

 const (
-       maxFieldString = 128
+       maxFieldString = 1023
        maxFieldMap    = 4096
        maxFieldNodeId = 256
 )

We can then commit and push our patched library with a nice tag:

git commit -a -m 'increase path limit'
git push --set-upstream origin v1.5.0-longer-path
git tag -a v1.5.0-longer-path
git push --tags

Build

With the patch committed and pushed, it’s time to build the CSI driver binary and its container image.

Let’s go back to the csi-powerscale main repo: cd $GOPATH/src/github.com/dell/csi-powerscale

As mentioned in the introduction, we can take advantage of the replace directive in the go.mod file to point to the patched lib. In this case we add the following:

diff --git a/go.mod b/go.mod
index 5c274b4..c4c8556 100644
--- a/go.mod
+++ b/go.mod
@@ -26,6 +26,7 @@ require (
 )

 replace (
+       github.com/dell/gocsi => github.com/coulof/gocsi v1.5.0-longer-path
        k8s.io/api => k8s.io/api v0.20.2
        k8s.io/apiextensions-apiserver => k8s.io/apiextensions-apiserver v0.20.2
        k8s.io/apimachinery => k8s.io/apimachinery v0.20.2

When that is done, we obtain the new module from the online repo with: go mod download

Note: If you only want to test the changes locally, you can use the replace directive to point to the local directory with:

replace github.com/dell/gocsi => ../../coulof/gocsi

We can then build our new driver binary locally with: make build

After compiling it successfully, we can create the image. The shortest path to do that is to replace the csi-isilon binary from the dellemc/csi-isilon docker image with:

cat << EOF > Dockerfile.patch
FROM dellemc/csi-isilon:v2.1.0
COPY "csi-isilon" .
EOF

docker build -t coulof/csi-isilon:v2.1.0-long-path -f Dockerfile.patch .

Alternatively, you can rebuild an entire docker image using the provided Makefile.

By default, the driver uses a Red Hat Universal Base Image minimal. That base image sometimes misses dependencies, so you can use another flavor, such as:

BASEIMAGE=registry.fedoraproject.org/fedora-minimal:latest REGISTRY=docker.io IMAGENAME=coulof/csi-powerscale IMAGETAG=v2.1.0-long-path make podman-build

The image is ready to be pushed to whatever image registry you prefer. In this case, this is hub.docker.com: docker push coulof/csi-isilon:v2.1.0-long-path.

Update CSI Kubernetes deployment

The last step is to replace the driver image used in your Kubernetes with your custom one.

Again, multiple solutions are possible, and the one to choose depends on how you deployed the driver.

If you used the Helm installer, you can add the following block at the top of the myvalues.yaml file:

images:
  driver: docker.io/coulof/csi-powerscale:v2.1.0-long-path

Then update or uninstall/reinstall the driver as described in the documentation.

If you decided to use the Dell CSI Operator, you can simply point to the new image:

apiVersion: storage.dell.com/v1
kind: CSIIsilon
metadata:
  name: isilon
spec:
  driver:
    common:
      image: "docker.io/coulof/csi-powerscale:v2.1.0-long-path"
...

Or, if you want to do a quick and dirty test, you can create a patch file (here named path_csi-isilon_controller_image.yaml) with the following content:

spec:
  template:
    spec:
      containers:
      - name: driver
        image: docker.io/coulof/csi-powerscale:v2.1.0-long-path

You can then apply it to your existing install with: kubectl patch deployment -n powerscale isilon-controller --patch-file path_csi-isilon_controller_image.yaml

In all cases, you can check that everything works by first making sure that the Pod is started:

kubectl get pods -n powerscale

and that the logs are clean:

kubectl logs -n powerscale -l app=isilon-controller -c driver

Wrap-up and disclaimer

As demonstrated, thanks to open source, it’s easy to fix and improve Dell CSI drivers or Dell Container Storage Modules.

Keep in mind that Dell officially supports (through tickets, Service Requests, and so on) the image and binary, but not the custom build.

Thanks for reading and stay tuned for future posts on Dell Storage and Kubernetes!

Author: Florian Coulombel
