Meeting the Service Level Agreements (SLAs), performance, and automation needs of the 5G Core

Thu, 01 Aug 2024 16:35:33 -0000

As discussed in our second blog, the 5G Core is the brain of the 5G network. It is responsible for managing a wide range of functions within the mobile network, including mobility management, authentication, authorization, data management, policy management, and Quality of Service (QoS) for end users. We also discussed how solutions like Dell Telecom Infrastructure Blocks for Red Hat play an essential role in supporting these network functions. In our fifth and final blog in this series, we take a deeper look into how Dell Technologies helps Communication Service Providers (CSPs) meet their Service Level Agreements (SLAs), performance, and automation needs for their 5G Core.

Increasing revenue and decreasing costs are at the top of every CSP’s priorities, and the 5G Core plays an important role in achieving these outcomes. Its service-based architecture activates new revenue streams for CSPs by enabling mobile edge computing, network slicing, slicing orchestration, and service assurance. Its future-proof design also allows for improved efficiencies such as fixed mobile convergence, collapsing all 3GPP and non-3GPP wireless and fixed accesses into a single core network.

However, embracing a 5G Core network means also embracing a cloud transformation strategy to achieve these outcomes. Cloud-native network functions with microservices architecture require a container-based cloud native architecture built around a common automation and operational framework to achieve the desired service agility that would allow CSPs to introduce new features and service improvements faster. Moreover, this should be a multi-tenant cloud platform to maximize infrastructure utilization and reuse.

Cloud transformation is also vital to ensuring that CSPs meet their SLAs as they transition from legacy purpose-built physical appliances to a modernized network infrastructure with an open, disaggregated, cloud-native architecture for their 5G Core. Moreover, cloud transformation allows CSPs to support multiple customized SLAs for different services and customer segments. These SLAs offer the following benefits:

- Performance Assurance: SLAs provide a clear benchmark for service performance, giving customers peace of mind that they will receive the expected quality of service.

- Accountability: By defining responsibilities and performance metrics, SLAs establish clear lines of accountability and provide a basis for addressing service failures.

- Dispute Resolution: In the event of disputes or service failures, SLAs serve as a reference point for resolving conflicts, helping to avoid lengthy legal battles.

- Continuous Improvement: Monitoring and reporting SLA metrics encourage CSPs to continuously improve their services, ensuring reliability and efficiency.

Building a container-based cloud platform for network workloads is certainly doable. Several CSPs have successfully done this while leveraging Dell PowerEdge servers. For example, Dell Technologies offers telecom-optimized PowerEdge XR Edge servers to ensure that CSPs have the right form factor for every location from core sites to the network edge.

Nevertheless, many CSPs do not want to build this telecom cloud infrastructure themselves for a variety of reasons such as time constraints, integration complexity, lack of cloud competencies and experience, and limited budgets. Building and maintaining cloud platforms is not a CSP’s core competence after all.

This is where Dell Technologies’ engineered systems for 5G telecom cloud networks come in. Dell Telecom Infrastructure Blocks for Red Hat were developed in close partnership with Red Hat, a leader in container management with Red Hat® OpenShift®, according to the 2023 Gartner® Magic Quadrant™ for Container Management.[1] The Infrastructure Blocks are built around automation for these reasons and are in line with Dell’s philosophy of making cloud transformation not only open, but also consumable.

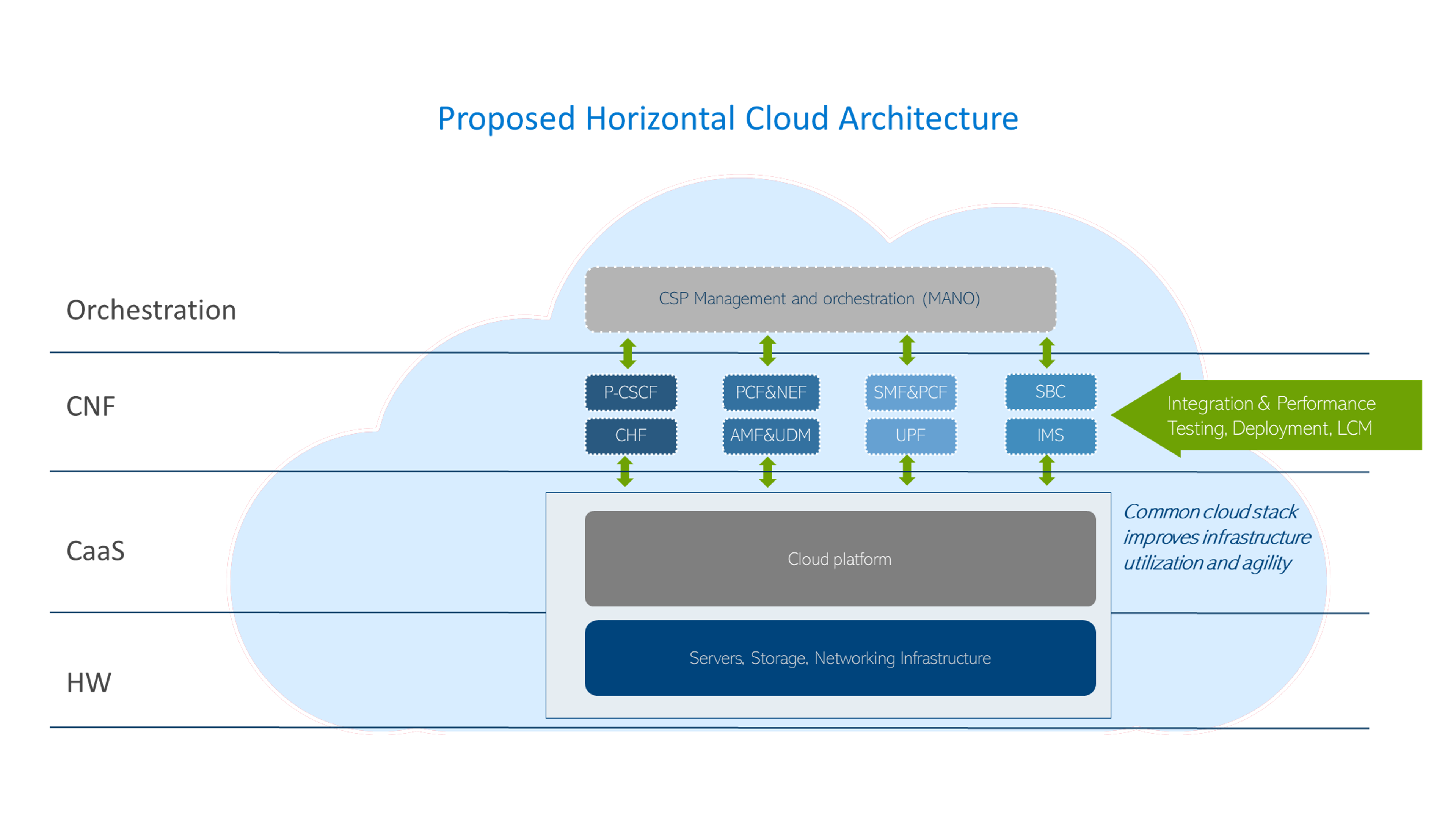

These engineered systems introduce a horizontal cloud architecture that effectively dismantles technology silos, offering CSPs a streamlined approach to deployment while substantially lowering their Total Cost of Ownership (TCO).[2]

Key advantages of Dell's pre-validated, factory-integrated solution include automated workflows and a single-call support model for the entire cloud platform. These can help accelerate the time-to-market for new services, enabling CSPs to maintain a competitive edge in the fast-paced 5G landscape.

The robust Dell and Red Hat cloud foundation stack, which uses performant, telecom-optimized servers and Red Hat OpenShift software, means CSPs can confidently make the leap from legacy systems to a modern, cloud-native telecom cloud for their 5G Core workloads. The platform is designed to support strict adherence to Service Level Agreements (SLAs) and deliver superior performance, giving CSPs peace of mind as they navigate this transition.

Dell Technologies also works closely with a wide range of Network Equipment Providers (NEPs) and Independent Software Vendors (ISVs) to certify their workloads on Dell Telecom Infrastructure Blocks. This is made possible by the Open Telecom Ecosystem Labs where CSPs, hardware, CaaS, and application vendors come together to accelerate cloud transformation.

Dell Telecom Infrastructure Blocks for Red Hat stand out as a unique solution for CSPs. By leveraging this jointly engineered telecom cloud solution, providers can swiftly respond to market demands and stay ahead of their competition in the 5G race.

Below are links to the first four blogs in our five-part blog series on moving to a cloud-native architecture for deploying 5G Core with Red Hat OpenShift.

- Cloud-native or Bust: Telco Cloud Platforms and 5G Core Migration

- The 5G Core Network Demystified

- Simplifying 5G Network Deployment with Dell Telecom Infrastructure Blocks for Red Hat

- Distribution of 5G Core to Network Edge

Learn more about Dell Telecom Infrastructure Blocks for Red Hat here.

Footnotes

1. Source: Red Hat Blog, “Red Hat Named a Leader in 2023 Gartner Magic Quadrant for Container Management”, September 2023

2. Source: ACG Research Report, “Examining the Impact on Total Cost of Ownership when deploying Telecom Infrastructure Blocks for Red Hat from Core to RAN”, February 2024

Distribution of 5G Core to Network Edge

Wed, 08 May 2024 18:35:27 -0000

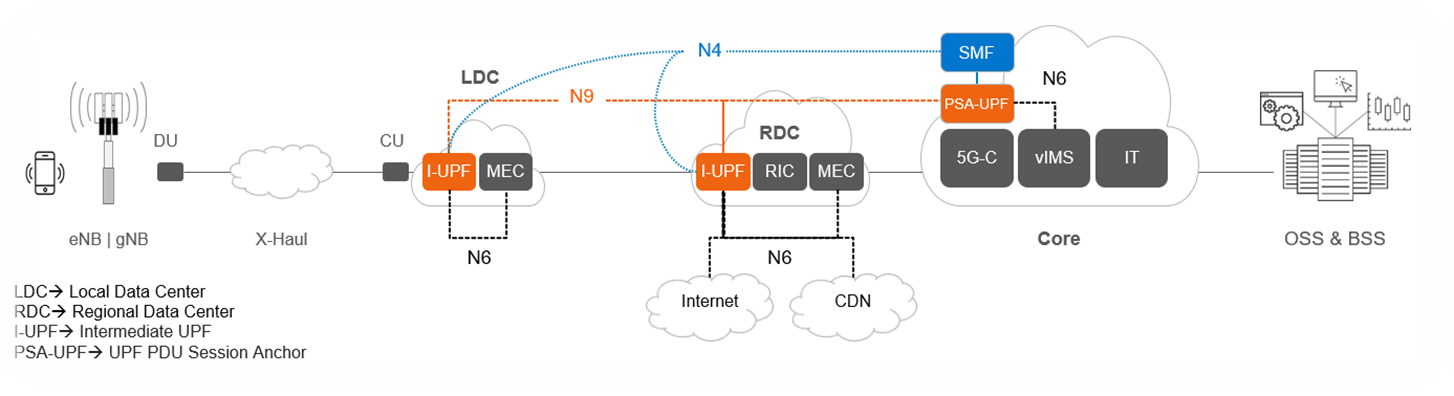

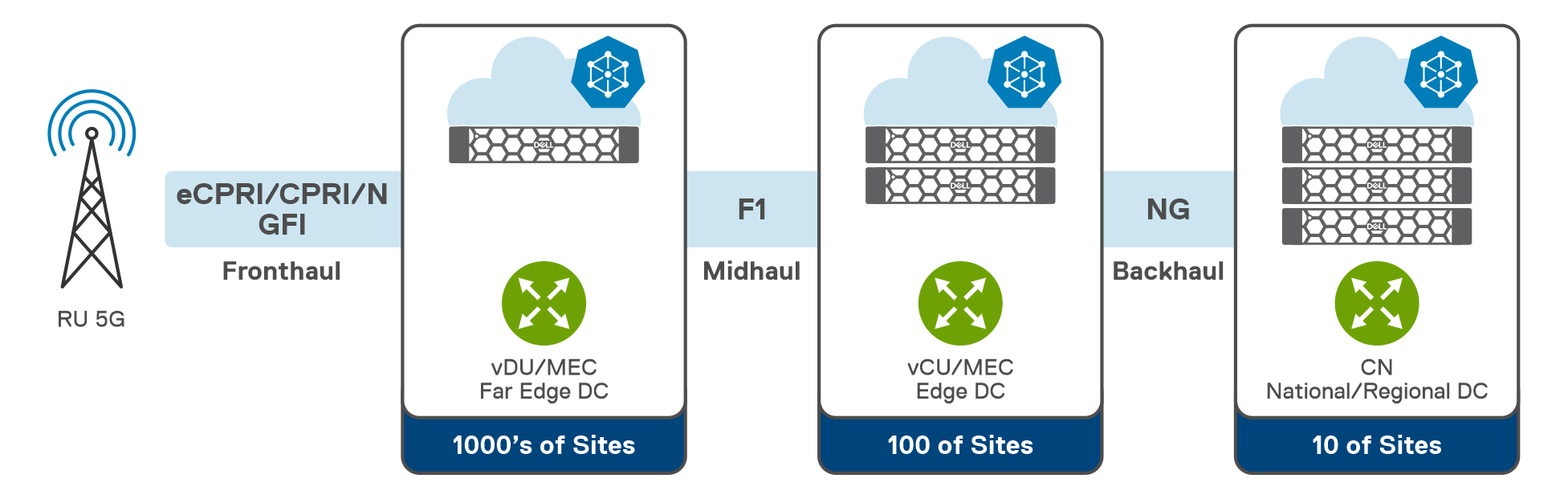

Thus far in our blog series, we have discussed migrating to an open cloud-native ecosystem, the 5G Core and its architecture, and how Dell Telecom Infrastructure Blocks for Red Hat can help simplify 5G network deployment for Red Hat® OpenShift®. Now, we would like to introduce a key use case for distributing 5G core User Plane functions from the centralized data center to the network edge.

Distributing Core Functions in 5G networks

The evolution of communication technology has brought us to the era of 5G networks, promising faster speeds, lower latency, and the ability to connect billions of devices simultaneously. However, to achieve these ambitious goals, the architecture of 5G networks needs to be more flexible, scalable, and efficient than ever before. With the advent of CUPS, or Control and User Plane Separation, in later LTE releases, the telecommunications industry had high expectations for a prototypical distributed control-user plane architecture. This development was seen as a stepping stone towards the more advanced 5G networks that were on the horizon. CUPS aimed to separate the control plane and user plane functionalities: the Control Plane (specifically the Session Management Function, or SMF) is typically centralized, while the User Plane Function (UPF) can be located alongside the Control Plane or distributed to other locations in the network as demanded by specific use cases.

Understanding the need for Distributed UPF

The UPF is a key component in 5G networks, responsible for handling user data traffic. Distributed User Plane Function (D-UPF) is an advanced network architecture that distributes the UPF functionality across multiple nodes closer to the user and enables local breakout (LBO) to manage use cases that require lower latency or more privacy, enabling a more scalable and flexible networking environment. With D-UPF, operators can handle increasing data volumes, reduce latency, and enhance overall network performance. By distributing the UPF, operators can effectively manage the increasing data demands across different consumer and enterprise use cases in a cost-effective manner.

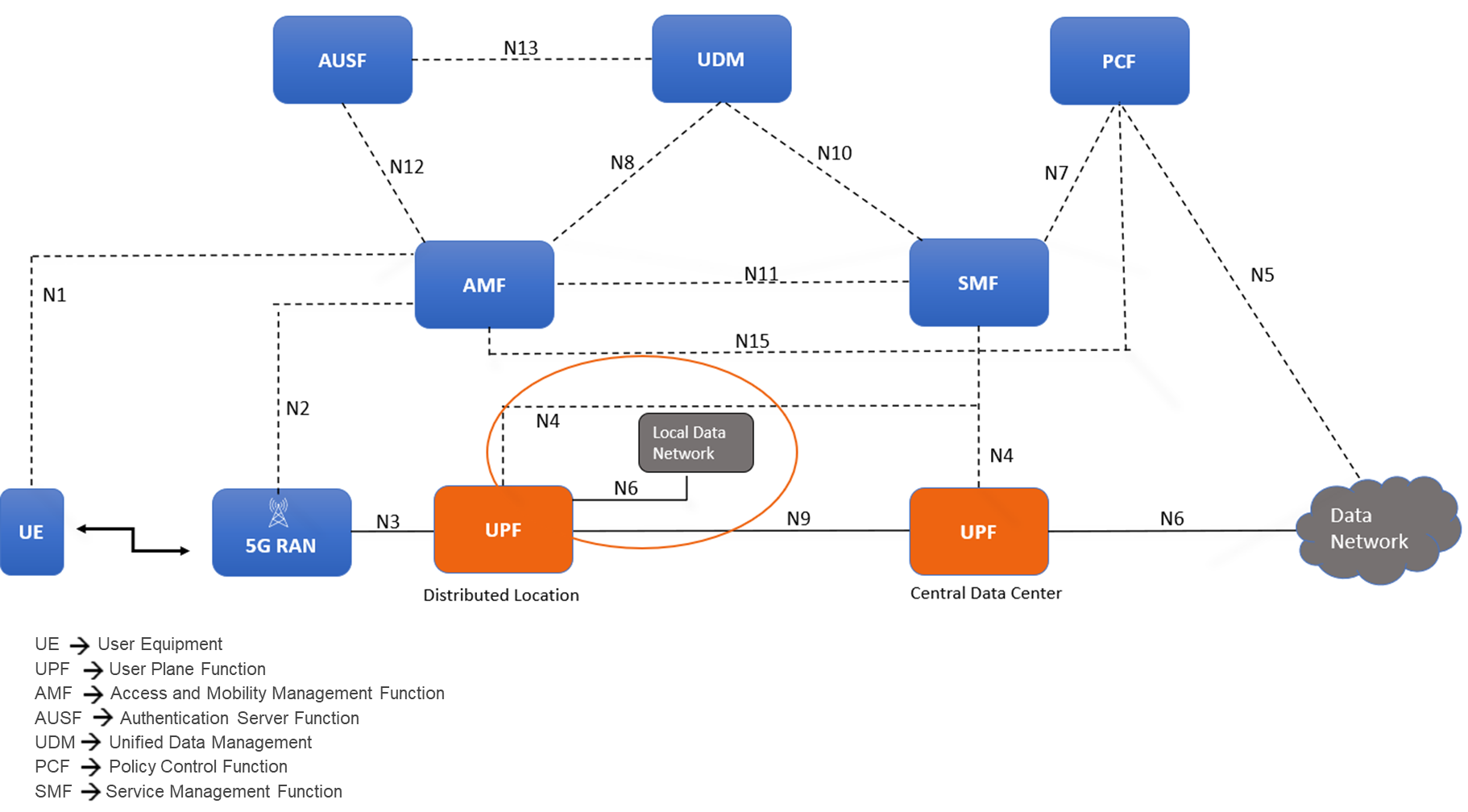

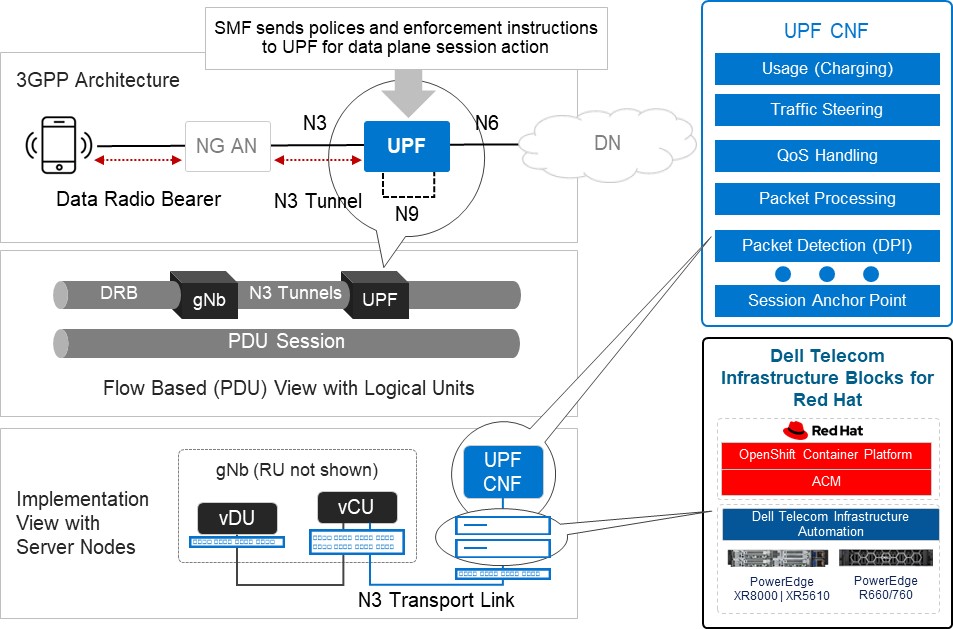

Figure 1: Distributed User Plane function in 5G Core Architecture

D-UPF also plays a crucial role in enabling edge computing in 5G networks. By distributing the user plane traffic closer to the network edge, D-UPF reduces the latency associated with data transmission to and from centralized data centers. This opens opportunities for real-time applications, such as autonomous vehicles, augmented reality, and industrial automation, where low latency is critical for their proper functioning.
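To make the latency argument concrete, the following is a rough sketch of how propagation delay alone changes when the user plane moves closer to the cell site. The two distances are hypothetical values chosen for illustration, and the figure of roughly 5 microseconds per kilometer is a common approximation for one-way delay in optical fiber. Real end-to-end latency also includes radio, queuing, and processing delays that this sketch ignores.

```python
# Illustrative only: distances are hypothetical, and only fiber propagation
# delay is modeled (no radio, queuing, or processing delay).
FIBER_DELAY_US_PER_KM = 5.0  # approximate one-way propagation delay in optical fiber


def round_trip_propagation_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds over a fiber path."""
    return 2 * distance_km * FIBER_DELAY_US_PER_KM / 1000.0


central_dc_km = 400.0  # hypothetical distance from the cell site to a central data center
edge_site_km = 20.0    # hypothetical distance from the cell site to an edge site with a D-UPF

central_rtt = round_trip_propagation_ms(central_dc_km)
edge_rtt = round_trip_propagation_ms(edge_site_km)
print(f"central UPF: {central_rtt:.1f} ms, edge D-UPF: {edge_rtt:.1f} ms")
```

Under these assumed distances, the propagation component of the round trip shrinks by the same factor as the distance, which is why placing the UPF at the edge matters most for latency-sensitive applications.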

Distributed UPF deployment options

Figure 2: D-UPF deployment and functionality

The above diagram provides an overview of the different roles D-UPF may play in a 5G architecture. For example:

- Centralized UPF/PSA-UPF: In the simplest scenario, the UPF is centralized, and the session is anchored within the data center, which takes care of long-term stable IP address assignment. One example is a VoLTE or NR call, where the PDU Session Anchor (PSA) UPF steers traffic to the IMS.

- Intermediate UPF (I-UPF): An intermediate UPF (I-UPF) can be inserted on the User Plane path between the RAN and a PSA. Here are two possible reasons to do that:

- If, due to mobility, the UE moves to a new RAN node and the new RAN node cannot support an N3 tunnel to the old PSA, then an I-UPF is inserted. This I-UPF has an N3 interface towards the RAN and an N9 interface towards the PSA UPF. This process of linking multiple UPFs is called UPF chaining, which involves directing user data flows through a series of UPFs, each performing specific functions.

- You might want to deploy a UPF within the Local Data Center/Edge for a low-latency use case, either to steer data traffic to a co-deployed MEC for edge services or to break out traffic to the local data network.
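The mobility case above can be sketched as a small path-building routine. This is a toy model rather than an implementation of any 3GPP procedure: the `Upf` class, the `build_user_plane_path` function, and the node names are all hypothetical, and in a real network the SMF performs UPF selection using far richer criteria.

```python
from dataclasses import dataclass, field


@dataclass
class Upf:
    """Hypothetical UPF model: a name plus the RAN nodes it can reach over N3."""
    name: str
    reachable_ran_nodes: set[str] = field(default_factory=set)


def build_user_plane_path(ran_node: str, psa: Upf, candidate_i_upfs: list[Upf]):
    """Return the user plane hops as (source, interface, destination) tuples."""
    # Direct case: the RAN node can terminate an N3 tunnel on the PSA UPF.
    if ran_node in psa.reachable_ran_nodes:
        return [(ran_node, "N3", psa.name)]
    # Otherwise insert an I-UPF: N3 towards the RAN, N9 towards the PSA UPF.
    for i_upf in candidate_i_upfs:
        if ran_node in i_upf.reachable_ran_nodes:
            return [(ran_node, "N3", i_upf.name), (i_upf.name, "N9", psa.name)]
    raise LookupError(f"no UPF can serve RAN node {ran_node!r}")


psa = Upf("psa-upf-central", {"gnb-1"})
i_upf = Upf("i-upf-edge", {"gnb-2"})

path_before = build_user_plane_path("gnb-1", psa, [i_upf])  # UE on the original RAN node
path_after = build_user_plane_path("gnb-2", psa, [i_upf])   # UE moved to a new RAN node
print(path_before)
print(path_after)
```

After the simulated move to `gnb-2`, the path contains two hops chained through the I-UPF, while the PSA UPF (and therefore the session anchor) stays unchanged.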

Challenges and considerations for D-UPF deployments

Now that we have reviewed the need for D-UPF and the different deployment scenarios, let’s consider some of the obstacles you will encounter along the way. As we all know, these network functions have their own needs, especially when it comes to the amount of data being inspected, routed, and forwarded across from the core to the edge, and back again. Below are four areas for consideration:

- Resource Constraints: Edge or remote locations often have limited physical space available for deploying network equipment. The challenge lies in accommodating the necessary hardware, cooling systems, and other infrastructure within these space-constrained environments. Remote locations may also have limited or unreliable power supply infrastructure. As UPFs are extended to the edge, it becomes important to choose infrastructure that offers power efficiency, high density, serviceability, a ruggedized exterior, and edge-optimized form factors.

- Performance Requirements: Low-latency infrastructure is critical to ensure real-time responsiveness and a seamless user experience when deploying core functions to the edge. Processing data at the network edge also reduces the need for high-bandwidth links to carry that data to the centralized core, which helps optimize network bandwidth and lower operating costs. This ultimately reduces the CSP’s reliance on centralized core infrastructure for time-sensitive operations.

- Orchestration and Automation: Deploying and managing UPFs distributed across edge locations is a complex challenge. This includes tasks such as workload placement, resource allocation, and automated management of edge infrastructure. Choosing a horizontal telco cloud platform that supports automated distributed core deployment and provides the capability to expand and scale the compute and storage resources to accommodate the varying demands at the edge is a must.

- Lifecycle Management and Operating Cost: Another significant factor is the increased cost of first deploying and then operating remote sites. The large number of these locations, coupled with their limited accessibility, makes them more expensive to construct and maintain. To address this, zero-touch provisioning at network setup and sustainable lifecycle management are necessary to optimize the economics of the edge.

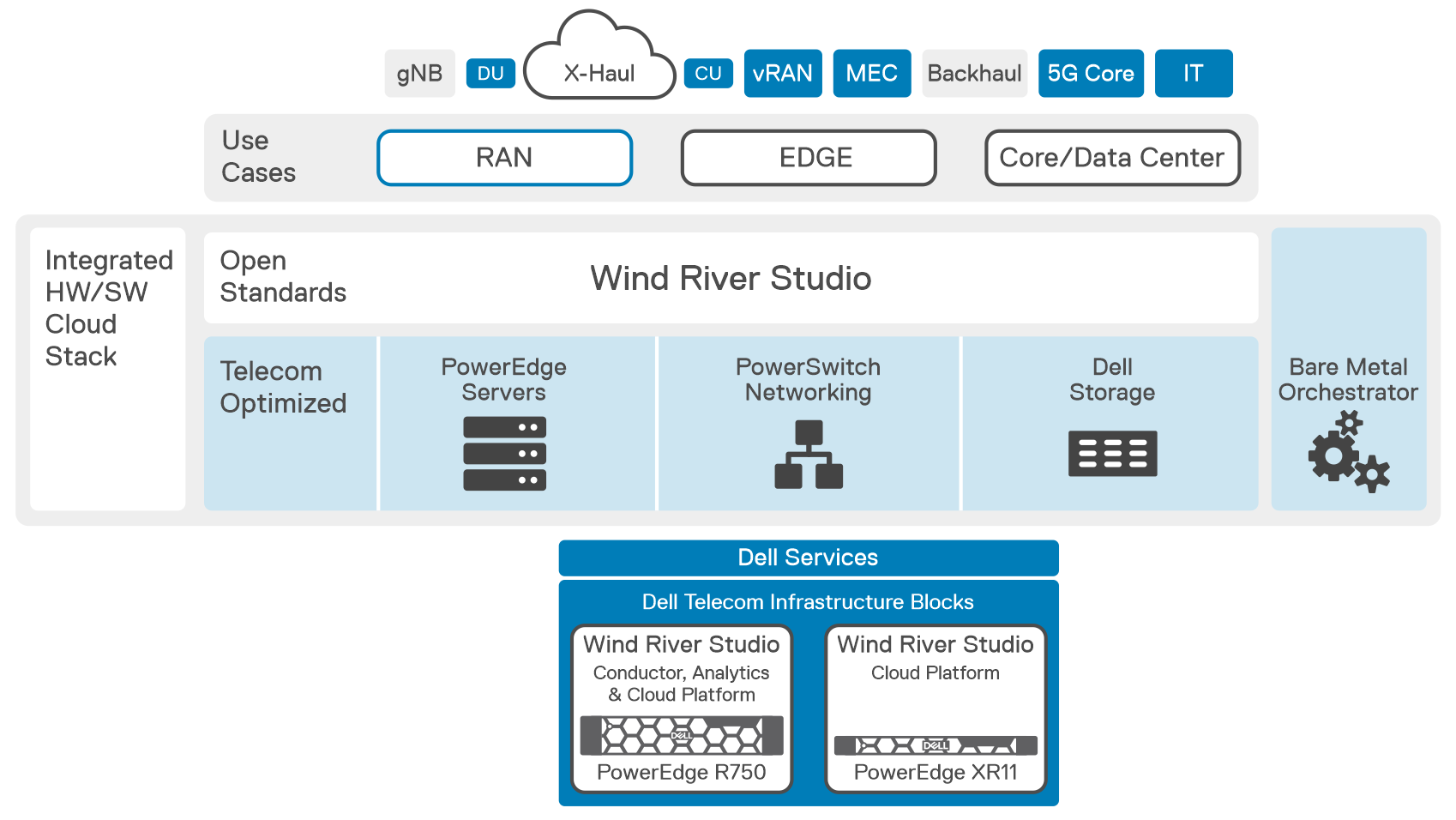

The Horizontal Cloud Platform: Dell Telecom Infrastructure Blocks

Figure 3: An Implementation View of Dell Telecom Infrastructure Blocks for Red Hat running the UPF

Dell Technologies is at the forefront of providing cutting-edge cloud-native solutions for the 5G telecom industry. As discussed in our previous blog, Telecom Infrastructure Blocks for Red Hat is one of those solutions, helping operators break down technology silos and empowering them to deploy a common cloud platform from Core to Edge to RAN. These are engineered systems, based on high-performance telecom edge-optimized Dell PowerEdge servers, that have been pre-validated and integrated with Red Hat OpenShift ecosystem software. This makes them a perfect solution for tackling the D-UPF challenges outlined in this blog.

- Resource Constraints:

- Space-efficient, modular server options for telecom environments, such as the Dell PowerEdge XR8000 series servers, allow providers to mix and match components based on workload needs. They can run multiple workloads, such as CU/DU and UPF, in the same chassis.

- Smart cooling designs support harsh edge environments and keep systems at optimal temperatures without using more energy than is needed.[1]

- Rugged and flexible server options that are less than half the length of traditional servers and offer front or rear connectivity make installation in small enclosures at the base of cell towers easier.[2]

- Performance Requirements:

- Orchestration and Automation:

- An engineered horizontal cloud stack based on Red Hat OpenShift allows operators to pool resources to meet changing workload requirements. This is achieved by automating server discovery, creating and maintaining a server inventory, and adding the ability to configure and reconfigure the full hardware and software stack to meet evolving workload requirements.

- Servers leverage dynamic resource allocation to ensure that computing resources are allocated precisely where and when they are needed. This real-time optimization minimizes resource waste and maximizes network efficiency.[3]

- Lifecycle Management and Operating Cost:

- Includes Dell Telecom Infrastructure Automation software as a fundamental component to automate deployment and lifecycle management.

- Backed by a unified support model from Dell with options that meet carrier grade SLAs, CSPs do not have to worry about multi-vendor management for the cloud infrastructure support (for both the hardware and cloud platform software), as Dell becomes the single point of contact in the support of telco cloud platform and works with its partners to resolve issues.

Summary

In summary, the need for D-UPF in 5G networks arises from the requirements of handling massive data volumes, improving network efficiency, reducing latency, enabling edge computing, and supporting advanced 5G services. Selecting among the different deployment scenarios possible will require ensuring you have an infrastructure capable of meeting your changing objectives for today and the flexibility and scalability to see you through your long-term goals. For example, you can host and support the deployment and management of content delivery network (CDN) at the network edge, where Dell Telecom Infrastructure Blocks for Red Hat can also serve as a telco cloud building block. By implementing this engineered telco cloud platform solution from Dell, we believe CSPs will be able to streamline the process and reduce costs associated with the deployment and maintenance of UPF across edge locations.

To learn more about Telecom Infrastructure Blocks for Red Hat, visit our website Dell Telecom Multicloud Foundation solutions.

[1] Source: ACG Report, “The TCO Benefits of Dell’s Next-Generation Telco Servers”, February 2023

[2] Source: Dell Technologies, “Introducing New Dell OEM PowerEdge XR Servers”, March 2023

[3] Source: Dell Technologies, “Competing in the new world of Open RAN in Telecom”, February 2024

Cloud-native or Bust: Telco Cloud Platforms and 5G Core Migration

Thu, 25 Apr 2024 16:23:22 -0000

Breaking down barriers with an open, disaggregated, and cloud-native 5G Core

As 5G network rollouts accelerate, communication service providers (CSPs) around the world are shifting away from purpose-built, vertically integrated solutions in favor of open, disaggregated, and cloud-native architectures running containerized network functions. This allows them to take advantage of modern DevSecOps practices and an emerging ecosystem of telecom hardware and software suppliers delivering cloud-native solutions based on open APIs, open-source software, and industry-standard hardware to boost innovation, streamline network operations, and reduce costs.

To take advantage of the benefits of cloud-native architectures, many CSPs are moving their 5G Core network functions onto commercially available cloud native application platforms like Red Hat OpenShift, the industry's leading enterprise Kubernetes platform. However, building an open, disaggregated telco cloud for 5G Core is not easy and it comes with its own set of challenges that need to be tackled before large scale deployments.

In a disaggregated network, the system integration and support tasks become the CSP's responsibility. To achieve their objectives for 5G, they must:

- Accelerate the introduction and management of new technologies by simplifying and streamlining processes from Day 0 network design and integration tasks, to Day 1 deployment, and Day 2 lifecycle management and operations.

- Break down digital silos to deploy a horizontal cloud platform to reduce CapEx and OpEx while lowering power consumption.

- Deploy architectures and technologies that consistently meet strict telecom service level agreements (SLAs).

Digging into the challenges of deploying 5G Core network functions on cloud infrastructure

This will be a five-part blog series that addresses the challenges when deploying 5G Core network functions on a telco cloud.

- In this first blog, we will highlight CSPs’ key challenges as they migrate to an open, disaggregated, and cloud-native ecosystem;

- The next blog will explore the 3GPP 5G Core network architecture and its components;

- The third blog in the series will discuss how Dell Technologies is working with Red Hat to streamline operator processes from initial technology onboarding through Day 2 operations when deploying a telco cloud to support core network functions;

- The fourth blog will focus on distributing User Plane functionality from the centralized data center to the network edge, so operators can create a more scalable and flexible 5G network environment;

- The final blog in the series will discuss how Dell is integrating Intel technology that consistently meets CSP SLAs for 5G Core network functions.

Accelerating the introduction and simplifying the management of 5G Core network functions on cloud infrastructure

Cloud native architectures offer the potential to achieve superior performance, agility, flexibility, and scalability, resulting in easily updated, scaled, and maintained Core network functions with improved network performance and lower operational costs. Nevertheless, operating 5G Core network functions on a telco cloud can be difficult due to new challenges operators face in integration, deployment, lifecycle management, and developing and maintaining the right skill sets.

Different integration and validation requirements

Open multi-vendor cloud-native architectures require the CSP to take on more ownership of design, integration, validation, and management of many complex components, such as compute, storage, networking hardware, the virtualization software, and the 5G Core workload that runs on top. This increases the complexity of deployment and lifecycle management processes while requiring investment in development of new skill sets.

Complexity of the deployment process demands automation

5G Core deployment on a telco cloud platform can be a complex process that requires integrating multiple systems and components into a unified whole with automated deployment from the hardware up through the Core network functions. This complexity creates the need for automation that not only streamlines processes but also ensures a consistent deployment or upgrade each time, aligned with established configuration best practices. Many CSPs may lack deployment experience with automation and cloud-native tools, making this a difficult task.

Lifecycle management and orchestration of a disaggregated 5G Core

The size and complexity of the 5G Core can make lifecycle management and orchestration challenging. Every change to one of the components starts a new validation cycle and increases the risk of introducing security vulnerabilities and configuration issues into the environment.

Lack of cloud-native skills and experience

Managing a telco cloud requires a different set of skills and expertise than operating traditional network environments. CSPs often need to acquire additional staff and invest in cloud-native training and development to obtain the skills and experience to put cloud-native principles into practice as they build, deploy, and manage cloud-native applications and services.

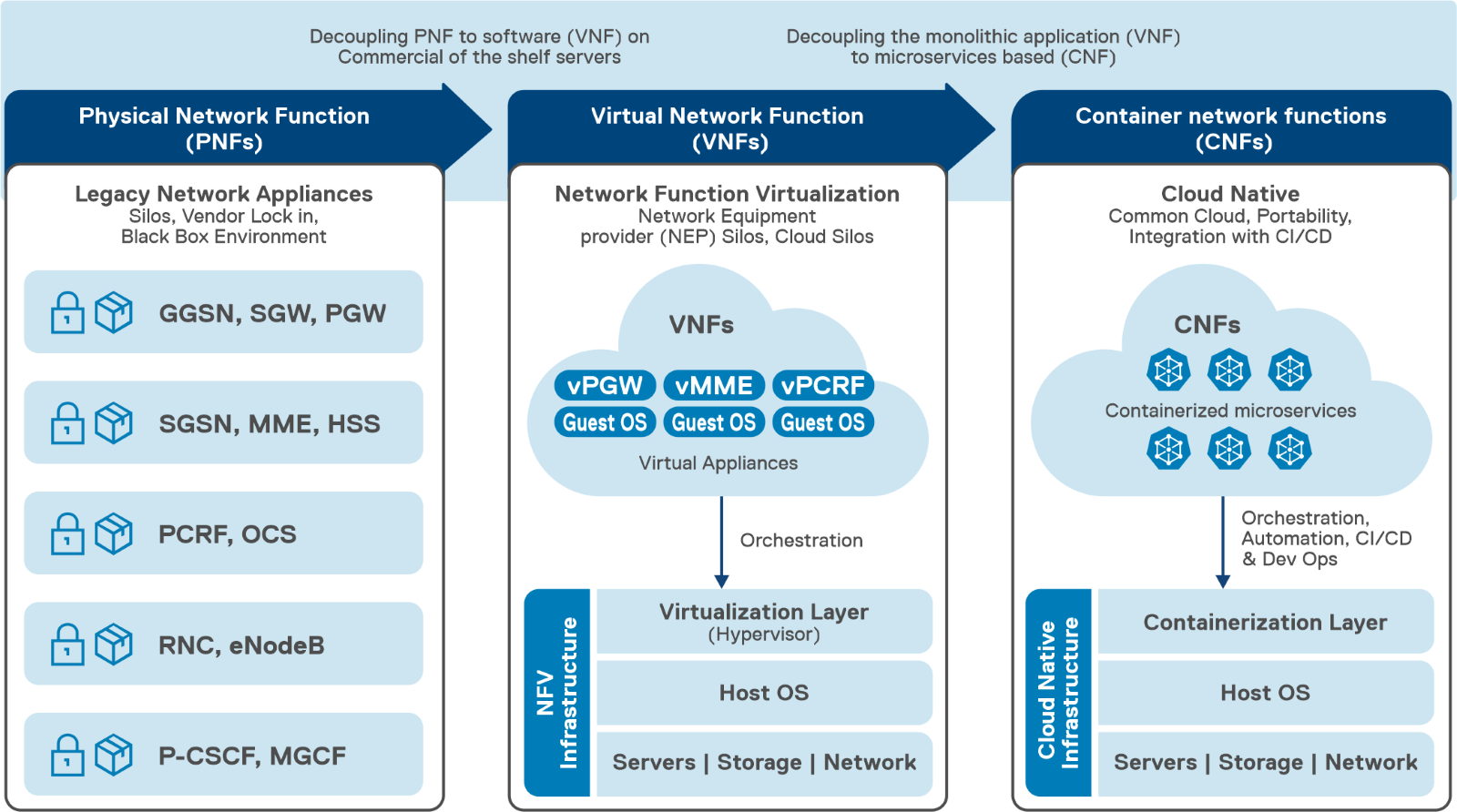

Breaking down vertical silos with a horizontal, 5G telco cloud platform

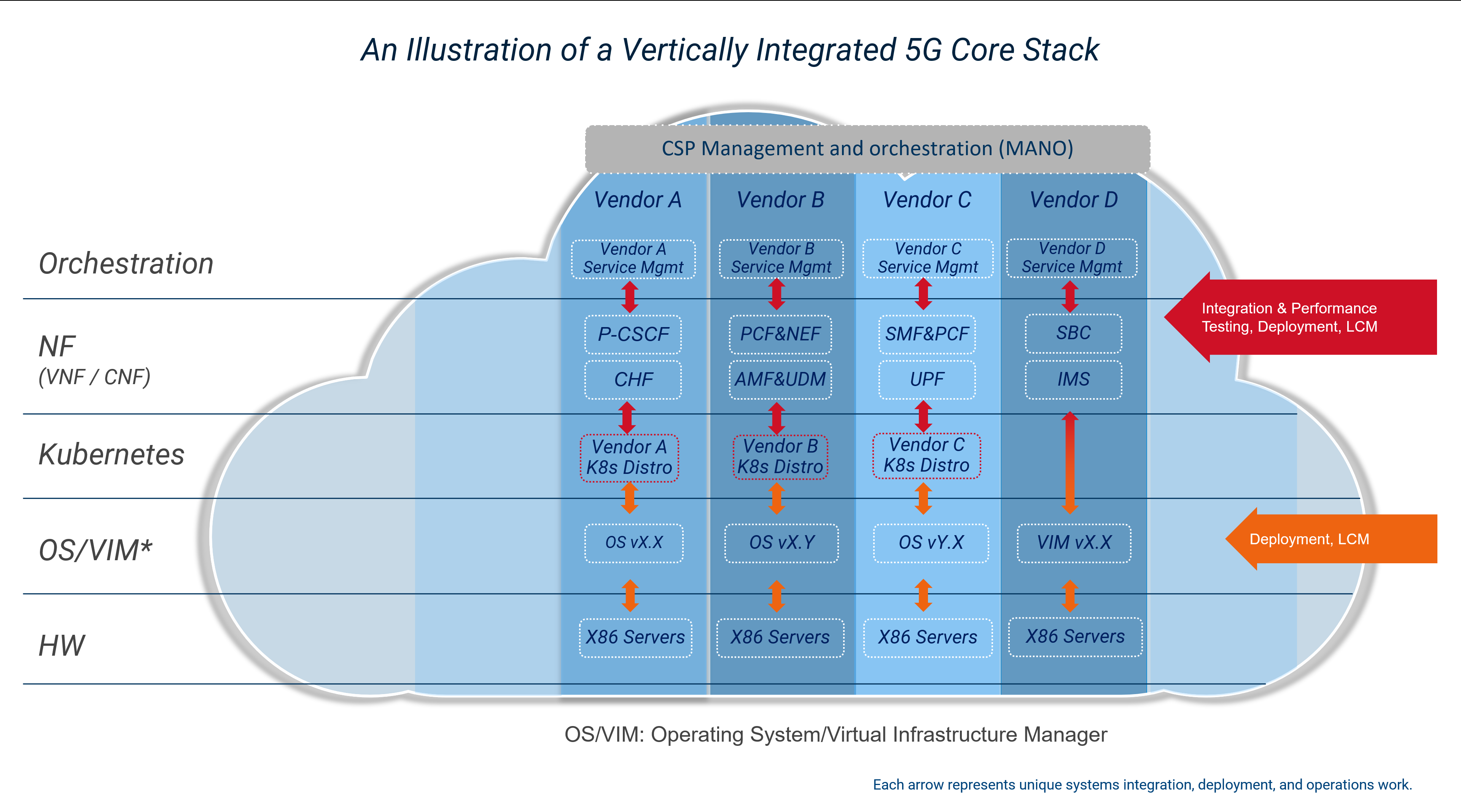

In recent years, many CSPs embarked on a journey away from vertically integrated, proprietary appliances to virtualized network functions (VNFs). One of the goals when adopting network functions virtualization was to obtain greater freedom in selecting hardware and software components from multiple suppliers, making services more cost-effective and scalable. However, CSPs often experience difficulties in designing, integrating, and testing their individual stacks, resulting in higher integration costs, interoperability issues, and regression testing delays that lead to less efficient operations.

Despite efforts to move to virtualized network functions, silos of vertically integrated cloud deployments can emerge where the virtual network function suppliers define their own cloud stack to simplify their process of meeting the requirements for each workload. These vertical silos prevent CSPs from pooling resources, which can reduce infrastructure utilization rates and increase power consumption. They also increase the complexity of lifecycle management, as each layer of the stack for each silo needs to be validated whenever a change to a component of the stack is made.
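The effect of pooling on utilization can be illustrated with a small worked example. The demand figures below are invented for illustration, and the sizing rule (capacity equals peak demand, with no headroom or redundancy) is a deliberate simplification rather than a real dimensioning method.

```python
# Hypothetical demand (in arbitrary capacity units) for three workloads over four time periods.
demand = {
    "workload_a": [10, 40, 20, 10],
    "workload_b": [30, 10, 10, 20],
    "workload_c": [10, 10, 40, 20],
}

# Siloed: each workload's stack is sized for that workload's own peak.
siloed_capacity = sum(max(series) for series in demand.values())

# Pooled: one shared platform is sized for the peak of the combined demand.
combined = [sum(period) for period in zip(*demand.values())]
pooled_capacity = max(combined)

average_demand = sum(combined) / len(combined)
siloed_utilization = average_demand / siloed_capacity
pooled_utilization = average_demand / pooled_capacity

print(f"siloed: capacity {siloed_capacity}, utilization {siloed_utilization:.0%}")
print(f"pooled: capacity {pooled_capacity}, utilization {pooled_utilization:.0%}")
```

Because the assumed peaks do not coincide, the pooled platform needs less total capacity to serve the same demand, so its average utilization is higher. If every workload peaked at the same time, the two approaches would need the same capacity under this simple model.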

Vertically integrated 5G Core stack on a telco cloud

CSPs are now looking to implement a horizontal platform that can provide a common cloud infrastructure to help break down these silos to lower costs, reduce power consumption, improve operational efficiency, and minimize complexity allowing CSPs to adopt cloud native infrastructure from the core to the radio access network (RAN).

Horizontal platform for 5G telco workloads

Maintaining compliance with telecom industry SLAs

Creating and managing a geographically dispersed telco cloud based on a broad range of suppliers while consistently adhering to CSP SLAs takes a lot of effort, resources, and time and can introduce new complications and risks. To meet these SLAs and accelerate the introduction of new technologies, CSPs will need a novel approach when working with vendors that reduces integration and deployment times and costs while simplifying ongoing operations. This will include developing a tighter relationship with their supply base to offload integration tasks while maintaining the flexibility provided by an open telecom ecosystem. As an example, Vodafone recently published a paper outlining their vision for a new operating model to improve systems integration with their supply base to help achieve these objectives. It would also include following a proven path in enterprise IT by adopting engineered systems, similar to the converged and hyperconverged systems used by IT today, that have been optimized for telecom use cases to simplify deployment, scaling, and management.

Short-term total cost of ownership (TCO)

When it comes to optimizing short-term TCO, there are several options available to CSPs. One such option is to work closely with vendors in order to reduce integration, deployment times and costs while simplifying ongoing operations. This approach can help CSPs leverage the expertise of vendors who specialize in the software and hardware components required for a disaggregated telco cloud. By working with skilled vendors, CSPs can reduce the risk of validating and integrating components themselves, which can lead to cost savings in the short term.

Another option that CSPs can consider is to adopt a phased approach to implementation. This involves deploying disaggregated telco cloud technologies in stages, starting with the most critical components and gradually expanding to include additional components over time. This approach can help to mitigate the initial costs associated with disaggregated telco cloud adoption while still realizing the benefits of increased flexibility, scalability, and cost efficiency.

CSPs can also take advantage of initiatives like Vodafone's new operating model for improving systems integrations with their supply base. This model aims to simplify the process of integrating components from multiple vendors by providing a standardized framework for testing and validation. By adopting frameworks like this, CSPs can reduce the time and costs associated with integrating components from multiple vendors, which can help to optimize short-term TCO.

Although implementing a disaggregated telco cloud can increase investment in the short term, there are several options available to CSPs for optimizing short-term TCO. Whether it's working closely with trusted vendors, adopting a phased approach, or leveraging standardized frameworks, CSPs can take steps to reduce costs and maximize the benefits of a disaggregated telco cloud.

Your partners for simplifying telco cloud platform design, deployment, and operations

Dell and Red Hat are leading experts in cloud-native technology used in building 5G networks and are working together to simplify their deployment and management for CSPs. Dell Telecom Infrastructure Blocks for Red Hat is a solution that combines Dell's hardware and software with Red Hat OpenShift, providing a pre-integrated and validated solution for deploying and managing 5G Core workloads. This offering enables CSPs to quickly launch and scale 5G networks to meet market demand for new services while minimizing the complexity and risk associated with deploying cloud-native infrastructure.

Next steps

In the next blog, we will dive deeper into the service-based architecture of the 5G Core and how it was developed to support cloud-native principles. To learn more about how Dell Technologies and Red Hat are partnering to simplify the deployment and management of a telco cloud platform built to support 5G Core workloads, see the ACG Research Industry Directions Brief: Extending the Value of Open Cloud Foundations to the 5G Network Core with Telecom Infrastructure Blocks for Red Hat.

Authored by:

Gaurav Gangwal

Senior Principal Engineer – Technical Marketing, Product Management

About the author:

Gaurav Gangwal works in Dell's Telecom Systems Business (TSB) as a Technical Marketing Engineer on the Product Management team. He is currently focused on 5G products and solutions for RAN, Edge, and Core. Prior to joining Dell in July 2022, he worked for AT&T for over ten years and previously with Viavi, Alcatel-Lucent, and Nokia. Gaurav has an Engineering degree in Electronics and Telecommunications and has worked in the telecommunications industry for more than 14 years. He currently resides in Bangalore, India.

Kevin Gray

Senior Consultant, Product Marketing – Product Marketing

About the author:

Kevin Gray leads marketing for Dell Technologies Telecom Systems Business Foundations solutions. He has more than 25 years of experience in telecommunications and enterprise IT sectors. His most recent roles include leading marketing teams for Dell’s telecommunications, enterprise solutions and hybrid cloud businesses. He received his Bachelor of Science in Electrical Engineering from the University of Massachusetts in Amherst and his MBA from Bentley University. He was born and raised in the Boston area and is a die-hard Boston sports fan.

Simplifying 5G Network Deployment with Dell Telecom Infrastructure Blocks for Red Hat

Fri, 19 Jan 2024 15:08:05 -0000

Welcome back to our 5G Core blog series. In the second blog post of the series, we discussed the 5G Core, its architecture, and how it stands apart from its predecessors, the role of cloud-native architectures, the concept of network slicing, and how these elements come together to define the 5G Network Architecture.

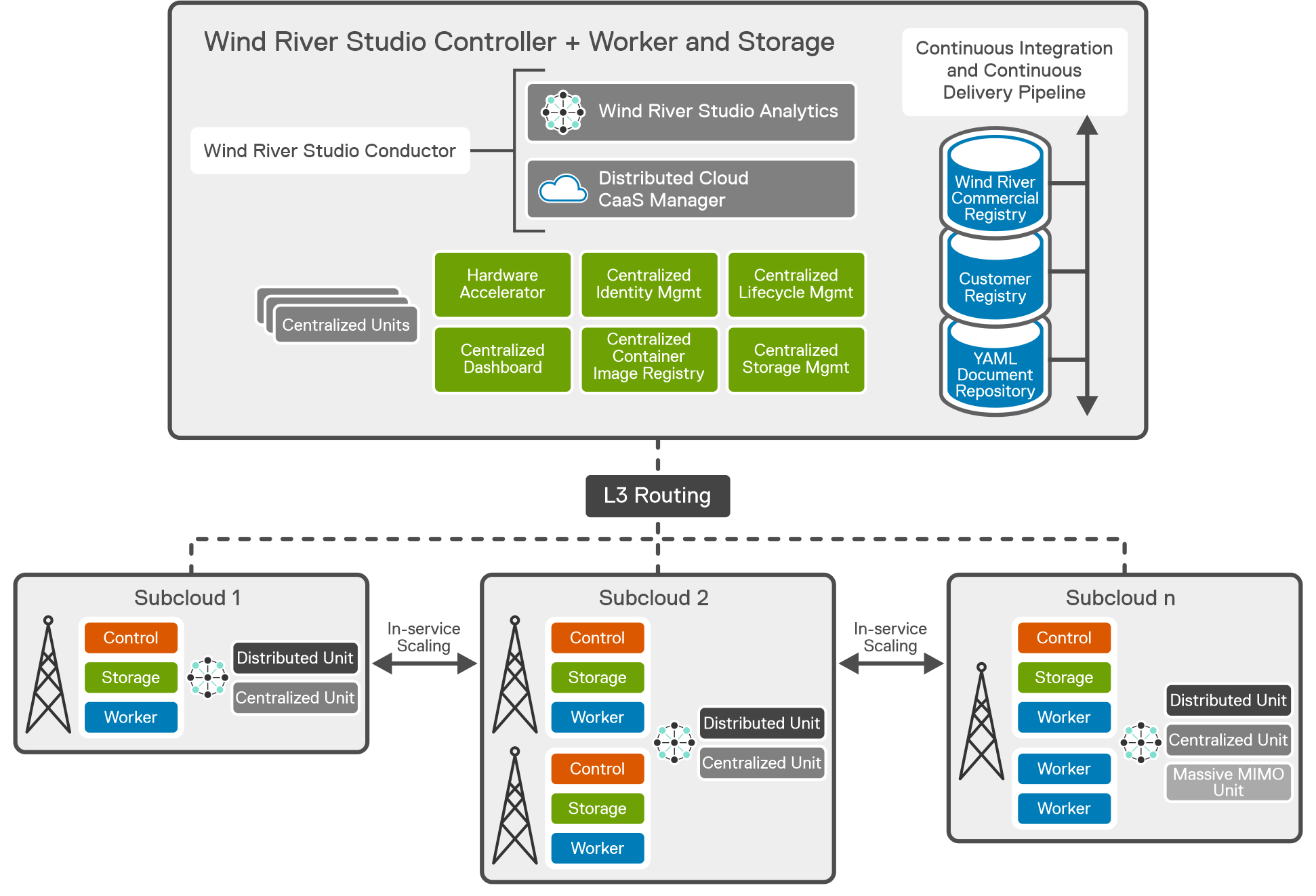

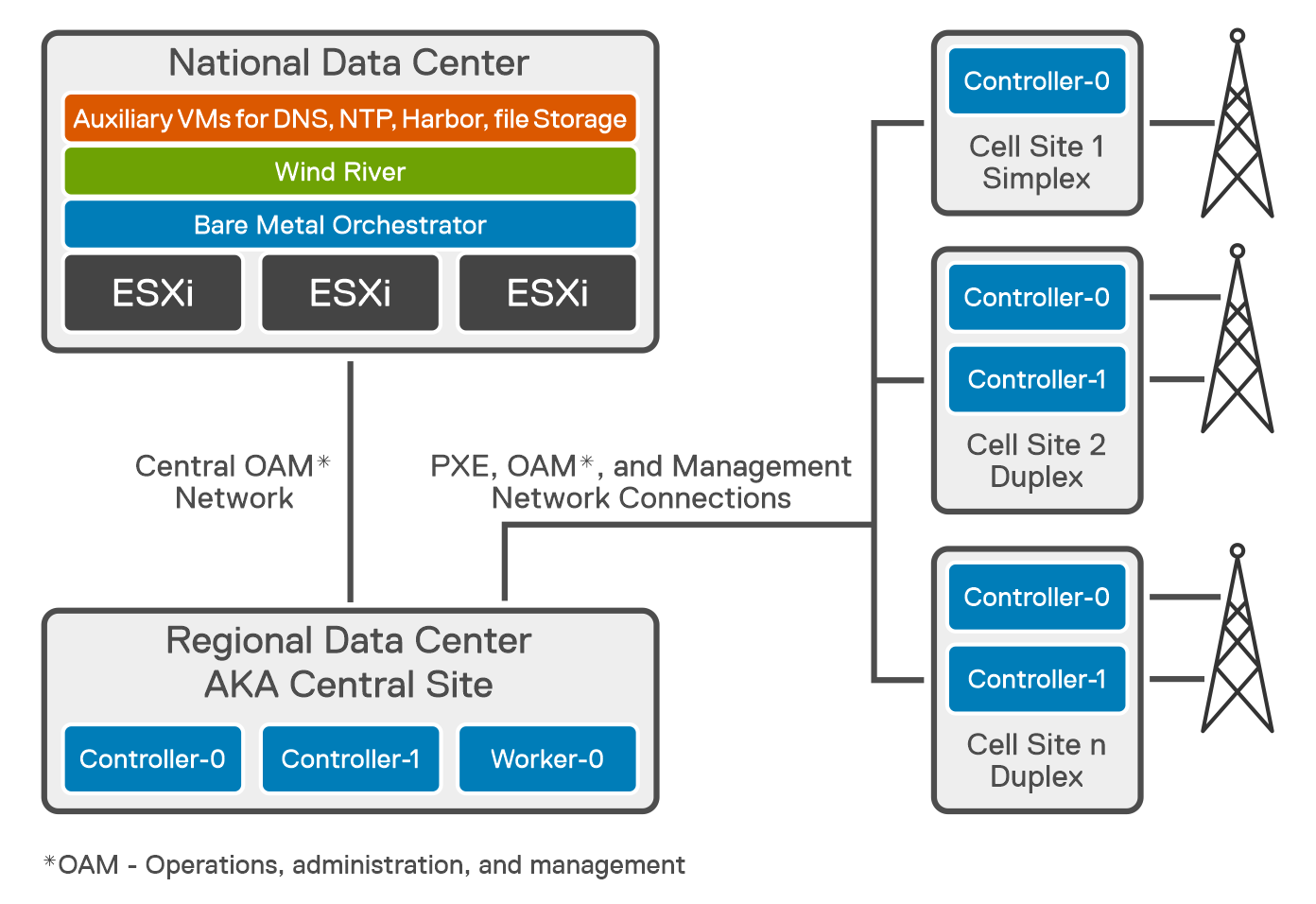

In this third blog, we look at Dell Technologies’ and Red Hat's collaboration on their latest offering, Dell Telecom Infrastructure Blocks for Red Hat. We explore how Infrastructure Blocks streamline Communications Service Providers’ (CSPs) processes for a Telco cloud used with 5G core, from initial technology onboarding at day 0/1 to day 2 life cycle management.

Helping CSPs transition to a cloud-native 5G core

Building a cloud-native 5G core network is not easy. It requires careful planning, implementation, and expertise in cloud-native architectures. The network needs to be designed and deployed in a way that ensures high availability, resiliency, low latency, efficient resource utilization, and flawless component interoperability. CSPs may feel overwhelmed when considering the transition from legacy architectures to an open, best-of-breed cloud-native architecture. This can lead to delays in design, deployment, and life cycle management processes that stall projects and reduce a CSP’s ability to effectively deploy and manage their disaggregated cloud-native network.

Automation plays a critical role in managing deployment and life cycle management processes. Many projects stall or fail due to poorly defined automation strategies that make it difficult to ensure compatibility between hardware and software configurations across a large, distributed network. This is especially true when trying to deploy and manage a cloud platform running on bare metal.

Dell Telecom Infrastructure Blocks for Red Hat are foundational building blocks for creating a Telco cloud that is based on Red Hat OpenShift. They aim to reduce the time, cost, and risk of designing, deploying, and maintaining 5G networks using open software and industry standard infrastructure. The current release of Telecom Infrastructure Blocks for Red Hat supports the creation of management and workload clusters for 5G core network functions running Red Hat OpenShift on bare metal servers.

There are a number of challenges in building and maintaining Kubernetes clusters on bare metal to run 5G network functions:

- Ensuring interoperability and fault tolerance in a disaggregated network is not an easy task. Deploying and managing Kubernetes clusters on bare metal requires extensive design, planning, and interoperability testing to ensure a reliable, fault tolerant, and performant system.

- Automating the deployment and life cycle management of hardware resources and cloud software in a bare metal environment can be complex. It involves deploying and updating a fleet of bare metal servers at scale.

- There is a lack of pre-built software integrations specifically designed for deploying Kubernetes clusters on bare metal servers and bringing those cluster configurations to a state where they are ready to run workloads. This means that configuring and deploying Kubernetes on bare metal frequently requires more manual effort to build and maintain the automation needed to manage deployments and upgrades at scale. This manual effort can be time-consuming and add complexity that introduces risk to the process.

- This lack of consistent, easy-to-manage automation to deploy and update the cloud stack to meet workload requirements also makes it harder to implement a unified cloud platform across all workloads. This leads to infrastructure silos that limit the ability to pool resources to improve infrastructure utilization rates, which in turn reduces network TCO efficiency.

These challenges are amplified when running 5G network functions, which require low latency and high reliability to meet carrier-grade service level agreements (SLAs). This collaboration between Dell and Red Hat aims to offer a comprehensive solution for CSPs that addresses the challenges associated with building and maintaining carrier-grade cloud infrastructure for 5G core network functions.

Key objectives of Dell Telecom Infrastructure Blocks for Red Hat

Implement a “shift left” approach

In software development, the term “shift left” refers to the ability to move tasks to an earlier stage in the development or production process to reduce time to value for those processes. The shift left approach being offered with Infrastructure Blocks moves much of the testing and integration work performed by the CSP into the supply chain prior to onboarding the new technology. This method provides CSPs with a speedy path to value by shortening the preparation and validation phase for a new network deployment. It also simplifies the procurement process by reducing the number of suppliers the CSP needs to work with and simplifies support by providing one call support for the full cloud stack. Proactive problem-solving, reduction of field touch points, risk minimization, and operational simplification are byproducts of the Infrastructure Block approach that hasten the introduction of new technology into a CSP’s network. By adopting this approach, CSPs can obtain faster rollout times and reduced operational costs. Dell does three things to help CSPs shift the technology onboarding processes left:

Engineering

Telecom Infrastructure Blocks are foundational building blocks that are co-designed with Red Hat to help CSPs build and scale out their network. These building blocks are purpose-built to meet specific workload requirements. Dell collaborates with Red Hat to maintain a roadmap of feature enhancements and perform continuous design and integration testing to accelerate the adoption of new technologies and software upgrades. The design planning and extensive interoperability testing performed by Dell simplifies the processes of building and maintaining a fault tolerant and performant cloud platform to run 5G core workloads.

Automation

Many CSPs today rely on procedural automation that they build and maintain on their own to automate the deployment and life cycle management of their cloud platform at scale. Procedural automation requires an understanding of the current state of their cloud stack and the maintenance of scripts or playbooks to define the steps needed to update the configuration to the desired state. When deploying Kubernetes on bare metal to support 5G core workloads, there are a number of items with dependencies that must be configured appropriately, including the following properties:

- Cloud platform software version

- BIOS version and settings

- Firmware versions for network interface cards (NICs) and other Peripheral Component Interconnect Express (PCIe) cards

- Single root I/O virtualization (SR-IOV) / Data Plane Development Kit (DPDK) configurations

- RAID configurations

- Site-specific data

Building and maintaining these scripts and automation playbooks is no easy task. It requires an up-to-date view of the current configuration of the infrastructure, an understanding of the dependencies between hardware and software that must be met to perform an update, and people with specialized skills that include knowledge of server hardware and the tools to manage them, the cloud software, and how to write or update playbooks to execute deployments and upgrades.

Managing this across a large, distributed network with a range of workloads that frequently require unique configurations of the cloud stack is a difficult and time-consuming process. Also consider that, in an open ecosystem environment, there is always a new version of software, BIOS, or firmware coming and the people with the needed skill sets are in short supply, resulting in a herculean effort with mixed results.

Telecom Infrastructure Blocks include purpose-built automation software that is easy to use and maintain. The blocks integrate with Red Hat Advanced Cluster Management and Red Hat OpenShift to automate deployment and life cycle management of the hardware and software stack used in a Telco cloud. This software uses declarative automation to simplify the deployment and upgrade of the cloud platform hardware and software to align with approved configuration profiles. With declarative automation, the CSP simply defines the desired state of the cloud stack, and the automation software determines the steps required to achieve the desired state and executes those steps to align the system with the approved configuration.

This infrastructure automation software uses a declarative data model that defines the desired state of the system and its resource properties, and maintains a record of the current state of the cloud configuration and inventory, which significantly simplifies the deployment and life cycle management of the cloud stack. Infrastructure Blocks come with Topology and Orchestration Specification for Cloud Applications (TOSCA) workflows that define the configurations needed to update the system based on the extensive design and validation testing performed by Dell and Red Hat. Dell provides regular updates that simplify the process of upgrading to the latest release. CSPs simply update a Customer Input Questionnaire and the automation software updates the hardware and the cloud platform software, and brings them to a workload-ready state with a single click of a button.
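
The declarative approach described above can be illustrated with a minimal Python sketch. This is not Dell's automation software: the property names (`bios_version`, `nic_firmware`, `platform_version`), the version strings, and the `plan_changes` and `apply_plan` helpers are all hypothetical, chosen only to show how a tool might derive the required steps by comparing a desired state with a recorded current state.

```python
from typing import Dict, List


def plan_changes(current: Dict[str, str], desired: Dict[str, str]) -> List[str]:
    """Return the steps needed to move `current` to `desired`.

    Only properties that differ produce a step, so planning against an
    already-aligned system yields no work.
    """
    steps = []
    for key in sorted(desired):
        have = current.get(key)
        want = desired[key]
        if have != want:
            steps.append(f"set {key}: {have} -> {want}")
    return steps


def apply_plan(current: Dict[str, str], desired: Dict[str, str]) -> Dict[str, str]:
    """Return the state after alignment, without mutating the input."""
    new_state = dict(current)
    new_state.update(desired)
    return new_state


# Hypothetical recorded state and approved configuration profile.
current = {"bios_version": "1.8.2", "nic_firmware": "22.0.9", "platform_version": "4.12"}
desired = {"bios_version": "1.9.0", "nic_firmware": "22.0.9", "platform_version": "4.14"}

for step in plan_changes(current, desired):
    print(step)
```

The operator only edits `desired`; the ordering and content of the steps are computed rather than scripted by hand, which is the main contrast with procedural playbooks.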

Integration

Dell ships fully integrated systems that are designed, optimized, and tested to meet the requirements of a range of telecom use cases and workloads. They include all the hardware, software, and licenses needed to build and scale out a Telco cloud. Delivering fully integrated building blocks from Dell’s factory significantly reduces the time spent configuring infrastructure onsite or in a network configuration center. They are also backed by Dell Technologies one-call support for the full cloud stack that meets telecom SLAs. Dell has established escalation paths with Red Hat to ensure the highest levels of support for customers.

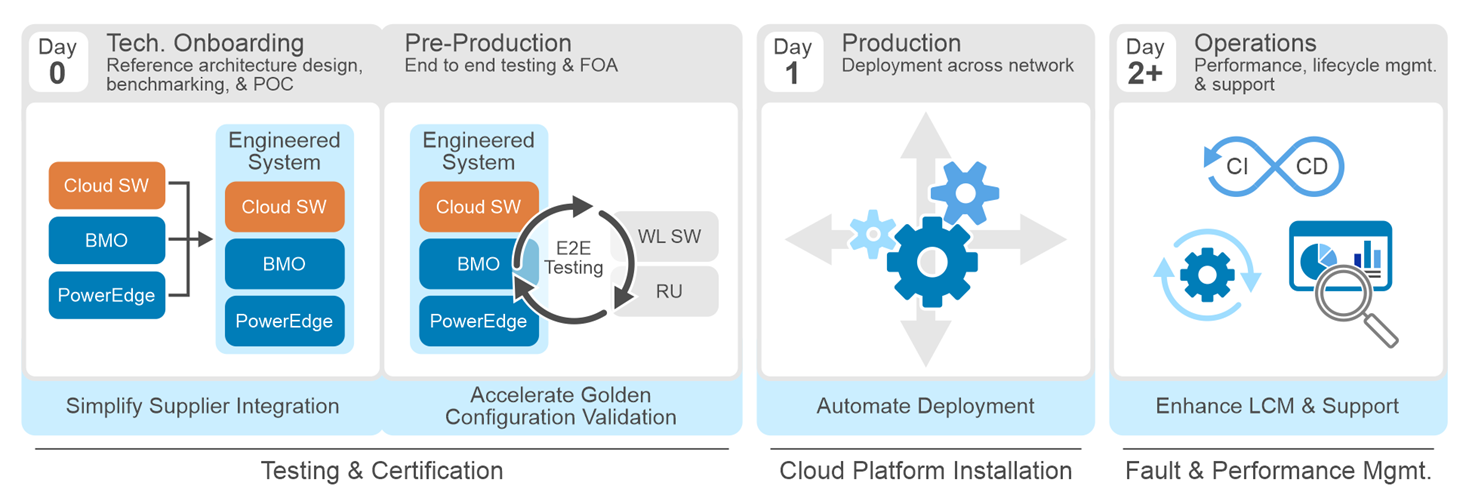

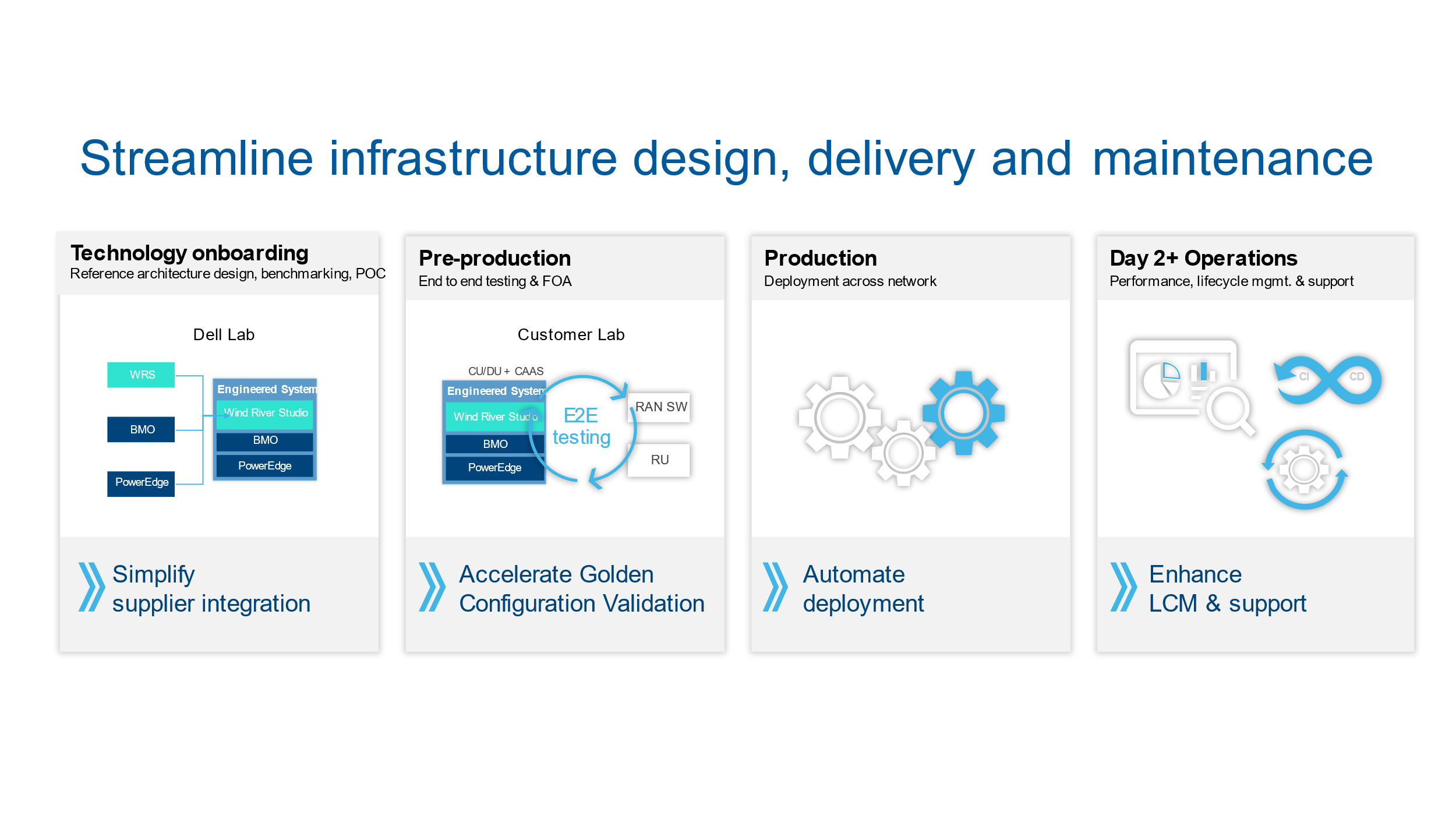

Streamlining Day 0 through Day 2 tasks with Telecom Infrastructure Blocks

In a typical CSP network operating model, there are four stages a CSP goes through from initial technology onboarding through managing ongoing operations. These stages are:

- Stage 1: Technology onboarding (Day 0)

- Stage 2: Pre-production (Day 0)

- Stage 3: Production (Day 1)

- Stage 4: Operations and lifecycle management (Day 2+)

Dell Telecom Infrastructure Blocks were built to streamline each stage of the processes to reduce the time and risk of building and maintaining a Telco cloud. We do this by proactively working with Red Hat to create an engineered system that meets telecom SLAs, includes automation that delivers zero-touch provisioning, and simplifies life cycle management through continuous design and integration testing.

Let's look at how Dell Telecom Infrastructure Blocks affect CSP processes from Day 0 to Day 2+.

Stage 1: Day 0 technology onboarding

Dell and Red Hat collaborate to design an engineered system that is validated through an extensive array of test cases to ensure its reliability and performance. These test cases cover aspects such as functionality, interoperability, security, reliability, scalability, and infrastructure-specific requirements for 5G Core workloads. This testing is aimed at ensuring optimal performance across a diverse array of performance metrics and scale points, thereby guaranteeing performant system operation.

Some of our design and validation test cases include:

Cloud Infrastructure Cluster testing: Cloud Infrastructure Cluster testing refers to the process of testing the infrastructure components of a cloud cluster to ensure their proper functioning, performance, and scalability. It involves validating the networking, storage, compute resources, and other infrastructure elements within a cluster. These test cases include:

- Installation and validation of Infrastructure Block plugins and automation.

- Cluster storage (Red Hat OpenShift Data Foundation) validation and testing.

- Validation of cluster network configurations

- Verification of Container Network Interface (CNI) plugin configurations in the cluster.

- Validation of high availability configurations

- Scalability and performance testing

Performance Benchmarking testing: Performance Benchmarking testing usually includes several steps. First, test scenarios are created to simulate real-world usage. Second, performance tests are conducted and the resulting performance data is collected and analyzed. Finally, the results are compared to established benchmarks. Some of the testing performed with Infrastructure Blocks includes:

- CPU benchmarking

- Memory benchmarking

- Storage benchmarking

- Network benchmarking

- Container as a Service (CaaS) benchmarking

- Interoperability tests between the Infrastructure and CaaS layers
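
The final benchmarking step, comparing collected results against established benchmarks, can be sketched as follows. The metric names, baseline values, and the 5% tolerance are hypothetical and are not taken from Dell's test plans; the sketch only shows how pass/fail might be decided for metrics where higher is better (throughput) and where lower is better (latency).

```python
from typing import Dict, NamedTuple


class Benchmark(NamedTuple):
    baseline: float
    higher_is_better: bool


# Hypothetical baselines for two of the benchmark categories.
BASELINES: Dict[str, Benchmark] = {
    "network_throughput_gbps": Benchmark(90.0, True),
    "storage_latency_ms": Benchmark(2.0, False),
}


def evaluate(measured: Dict[str, float], tolerance: float = 0.05) -> Dict[str, bool]:
    """Return pass/fail per metric, allowing `tolerance` relative deviation."""
    results = {}
    for name, bench in BASELINES.items():
        value = measured[name]
        if bench.higher_is_better:
            results[name] = value >= bench.baseline * (1 - tolerance)
        else:
            results[name] = value <= bench.baseline * (1 + tolerance)
    return results
```

For instance, a measured throughput of 88.0 Gbps would pass against the 90.0 baseline with this tolerance, while a storage latency of 2.5 ms would fail against the 2.0 ms baseline.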

At this step, we define the automation workflows and configuration templates that will be used by the automation software during the deployment. Dell works proactively with its customers and partners to understand their best practices and incorporate those into the blueprints included with every Telecom Infrastructure Block.

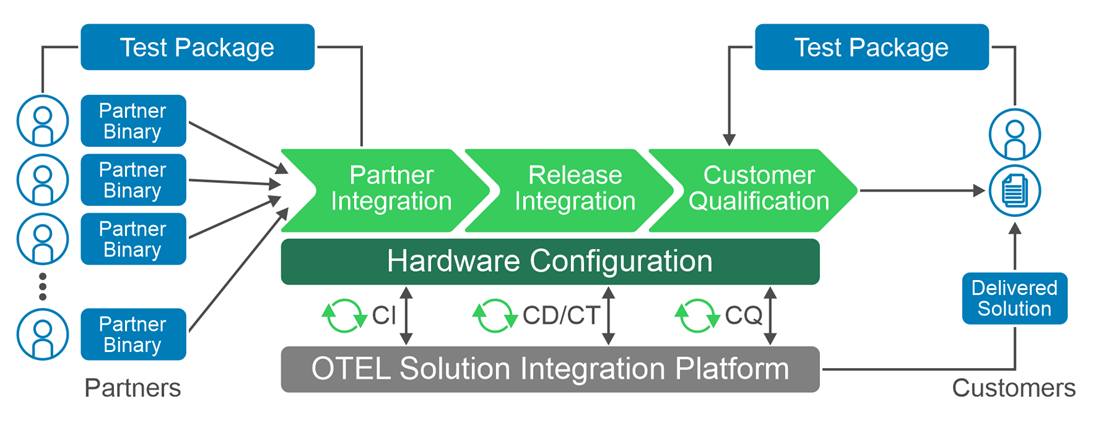

This process produces an engineered system that streamlines the CSP’s reference architecture design, benchmarking, and proof of concept (POC) processes to reduce engineering costs and accelerate the onboarding of new technology. To further streamline the design, validation, and certification process, we provide Dell Open Telecom Ecosystem Lab (OTEL), which can act as an extension of a CSP’s lab to validate workloads on Infrastructure Blocks that meet the CSP’s defined requirements.

Stage 2: Pre-production

The main objective in stage 2 is to onboard network functions onto the cloud infrastructure, define the network golden configuration, and prepare it for production deployment. Infrastructure Blocks eliminate some of the touch steps in the onboarding process by:

- Delivering an integrated and validated building block direct from Dell’s factory

- Delivering a deployment guide that simplifies onboarding

- Providing Customer Input Questionnaires that are configuration input templates used by the automation software to streamline deployment

CSPs can also leverage the Red Hat test line in Dell’s Open Telecom Ecosystem Lab (OTEL) to validate and certify the CSP’s workloads on Infrastructure Blocks. OTEL can play a significant role in enabling CSPs and partners by developing custom blueprints or performing custom tests required by the CSP using Dell’s Solution Integration Platform (SIP), which is part of OTEL. SIP is an advanced automation and service integration platform developed by Dell. It supports multi-vendor system integration and life cycle management testing at scale. It uses industry standard components, toolkits, and solutions such as GitOps.

Dell Services also offers tailor-made configurations to cater to specific operator needs. These are carried out at a Dell second-touch configuration facility. Here, configurations customized to the customer's specifications are pre-installed and then dispatched directly to the customer's location or configuration facility.

Stage 3: Production

At the production stage, Dell integrates and configures all hardware and settings to support the discovery and installation of the validated version of the cloud platform software, which eliminates the need to configure hardware on site or in a configuration center. Dell’s infrastructure automation software then deploys the validated versions of Red Hat Advanced Cluster Management and Red Hat OpenShift on the servers used in the management and workload clusters and brings those clusters to a workload-ready state. This process ensures a consistent and reliable installation process that reduces the risk of configuration errors or compatibility issues. Dell's automation enables zero-touch provisioning that configures hundreds of servers at the same time with full visibility into the health and status of the server infrastructure before and after deployment. Should the CSP need assistance with deployment, Dell's professional services team is standing by to assist. Dell ProDeploy for Telecom provides on-site support to rack, stack, and integrate servers into their network or remote support for deployment.

Stage 4: Operations and lifecycle management

In Day 2+ operations, CSPs must sustain network performance while adapting to changes to the network over time. This includes ensuring software and infrastructure compatibility as updates are made to the network, performing rolling updates, fault and performance management, scaling resources to meet network demands, ensuring efficient use of network resources, and adapting to technology evolution.

Infrastructure Blocks simplify Day 2+ operations in several ways:

- Dell works with Red Hat, its customers, and other partners to capture updates and new requirements necessary to evolve Infrastructure Blocks to support new technologies and software enhancements over time. This requires proactive collaboration to ensure continuous roadmap alignment across parties. Dell then performs extensive design and validation testing on these enhancements before integrating them into Infrastructure Blocks to deliver a resilient and performant design. This helps CSPs stay on the leading edge of the technology curve while minimizing the risk of encountering faults and performance issues in production.

- Today, Telecom Infrastructure Blocks offers support for three releases per year. In every release, we prioritize the introduction of new capabilities, features, components, and solution enhancements. In addition, there are six patch releases per year that prioritize sub features to ensure compatibility across different releases. Long Term Support releases are provided at the end of the twelve-month release cycle, with a focus on fixing any solution defects that may arise.

- The out-of-the-box automation provided with Infrastructure Blocks ensures a consistent, carrier-grade deployment or upgrade of the hardware and cloud platform software each time. This eliminates configuration errors to further reduce issues found in production.

- When bringing together various hardware and software components, CSPs frequently manage different release cycles to support a range of workload requirements. To address any difficulties with software compatibility and life cycle management, Dell Technologies has created a system release cadence process. It includes testing, validating, and locking the release compatibility matrixes for all Infrastructure Block components. This helps to resolve deployment problems affecting software compatibility and Day 2+ life cycle management procedures.

- Dell Professional Services can also provide custom integrations into a CSP’s CI/CD pipeline, providing the CSP with validated updates to the cloud infrastructure that pass directly into the CSP’s CI/CD tool chains to enhance DevOps processes.

- In addition, Dell offers single-call, carrier-grade support that meets telecom-grade SLAs with guaranteed response and service restoration times for the entire cloud stack (hardware and software).

- The declarative automation provided with Infrastructure Blocks eliminates the time spent updating scripts and playbooks to push out system updates and minimizes the risk of configuration errors that lead to fault or performance issues.
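
The idea of a locked release compatibility matrix mentioned in the list above can be sketched as a simple lookup and comparison. The release names, component names, and version strings below are hypothetical placeholders, not actual Infrastructure Block releases; the sketch only shows how an installed configuration might be checked against the approved versions for a given release.

```python
from typing import Dict, List

# Hypothetical locked compatibility matrix: release name -> approved versions.
COMPATIBILITY_MATRIX: Dict[str, Dict[str, str]] = {
    "release-A": {"platform": "4.12", "bios": "1.8.2", "nic_firmware": "22.0.9"},
    "release-B": {"platform": "4.14", "bios": "1.9.0", "nic_firmware": "22.5.7"},
}


def find_mismatches(release: str, installed: Dict[str, str]) -> List[str]:
    """List components whose installed version differs from the locked one."""
    approved = COMPATIBILITY_MATRIX[release]
    return [
        f"{component}: installed {installed.get(component)}, approved {version}"
        for component, version in sorted(approved.items())
        if installed.get(component) != version
    ]
```

An empty result means the server matches the locked release; any entries returned identify exactly which component would need to be updated before the cluster is considered compliant.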

Summary

Dell Telecom Infrastructure Blocks for Red Hat offers a streamlined and efficient way to build and manage Telco cloud infrastructure. From initial technology onboarding to Day 2+ operations, they simplify every step of the process. This makes it easier for CSPs to transition from their vertically integrated, legacy architectures of today to an open cloud-native software platform running on industry standard hardware that delivers reliable and high-quality services to their customers.

This blog post is a collaborative effort from the staff of Dell Technologies and Red Hat.

Monetizing Network Exposure Through Open APIs

Fri, 08 Dec 2023 19:40:56 -0000

Market Background

5G, especially 5G standalone, has not yet developed to fulfill expectations. End users are not yet seeing significant differences in comparison to 4G, and CSPs are not yet seeing new revenue streams. To address these challenges, we have previously presented two blogs (Network Slicing and Network Edge). In this third blog, we continue to describe a realistic view of how CSPs could maximize the 5G standalone experience and go beyond being merely connectivity providers. This blog focuses on exposing the network capabilities (services and user/network information) that service providers and enterprises can use to enable innovative and monetizable services.

Figure 1: Monetizing Network Exposure

The success of numerous innovative mobile applications can be traced to the availability of mobile Software Development Kits (SDKs). SDKs are available for both iOS and Android mobile platforms. These SDKs provide open tools, libraries, and documentation that allow application developers to easily create mobile applications that rely upon the capabilities of existing mobile platforms (such as notifications and analytics) and device hardware (like GPS and camera). Most importantly, these two mobile platforms alone currently support over four billion users. The next step is to use the same principles on the network side by using Open APIs that allow unified access to network capabilities for increased network exposure.

The concept of network exposure is not new. There have been a few less-than-successful attempts in the past, such as Service Capabilities Exposure Function (SCEF) and APIs for the IMS/Voice. These solutions were not able to scale sufficiently to attract a significant number of application developers. The specifications have been too complicated for anybody outside of the telecom world to understand or implement. The integration of network exposure into the 5G design is groundbreaking. API exposure is now fundamental to 5G and is natively built into the architecture, enabling applications to seamlessly interact with the network.

Monetizing mobile networks using Open APIs relies on the implementation of communication APIs for voice, video, and messaging, as well as network APIs for location, authentication, and quality of service. By exposing these capabilities through Open APIs, CSPs can establish partnerships by facilitating the creation of tailored, high-value services for businesses, thereby enabling them to monetize 5G beyond traditional connectivity and bundled offerings. These new revenue streams are paramount as the traditional revenue streams from mobile broadband services are flat while costs continue to rise. Moreover, the deployment of a cloud-native 5G standalone network requires substantial investments, making it crucial to identify new revenue streams that can justify the business case.

Technical Background and Standardization

5G standalone was specified in 3GPP release 15 and its architecture standardized the Network Exposure Function (NEF). One of the 5G core network functions, NEF allows applications to subscribe to network changes, issue instructions to the network, and extract network information and capabilities. NEF enables an extensive set of network exposure capabilities, but it lacks the scale, agility, and simplicity that application developers require. GSMA’s Open Gateway Initiative, the CAMARA project, and TM Forum’s Open APIs all aim to address this gap.

- GSMA’s Open Gateway Initiative achieves scale by committing CSPs to implement the common system framework in a unified manner.

- The actual Service APIs are defined under the CAMARA project, where the work is done as an open-source project at the Linux Foundation.

- TM Forum’s Open APIs are used in this framework for Operation, Administration, and Management (OAM).

The use case is well described in the GSMA’s Open Gateway white paper.

Network APIs

Open APIs and network capabilities in this new concept have much to offer. The CAMARA project has already defined 18 Service APIs such as Quality on Demand, Device Location, Device Status, Number Verification, Simple Edge Discovery, One Time Password SMS, Carrier Billing, and SIM swap. Three of the most popular elements are described in more detail below:

Quality on Demand: It is easy to imagine that multiple applications can benefit from better quality (bandwidth and latency). The challenge is to address how the network can fulfill this request instantaneously and cost-effectively. Some Proofs of Concept (PoCs) demonstrate that implementing Quality on Demand improvements can trigger either a new Network Slice or a different Quality of Service Class Identifier (QCI). For more information, see our Network Slicing blog.

Device Location: This API verifies that the device is in a specific geographical area. The main benefits of the network-based request are that it can be used when a GPS signal is not available, and it is considered more trustworthy (location info cannot be spoofed).

Device Status: This API provides a very simple and straightforward request to determine whether the subscriber is roaming.
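
To make the Device Location idea more concrete, the following Python sketch checks whether a position falls inside a circular area. It does not use the CAMARA API or any real network interface: the function names are hypothetical, and it assumes the network's position estimate for the device is already available as latitude and longitude in degrees. The distance uses the standard haversine formula with a mean Earth radius of 6371 km.

```python
import math


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometers between two points in degrees."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def verify_location(device_pos, center, radius_km: float) -> bool:
    """Return True if the (assumed) network-estimated position is in the area."""
    return haversine_km(*device_pos, *center) <= radius_km
```

An application would supply only the area (center and radius) and receive a yes/no answer, which is what makes this kind of request harder to spoof than a position reported by the device itself.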

None of these Service APIs offer anything unique that the market has not seen before. Their intrinsic value comes from being part of a unified platform that enables a consistent way of accessing network capabilities and information, similar to how mobile SDKs became a catalyst to the thriving mobile device ecosystem we know today. Only time will tell how much value-add application developers will see from these Open APIs.

Use Cases and Commercial Models

The value of new features and applications is considered whenever 5G monetization is discussed. We are still in the early phase of Open APIs, but the TM Forum’s Catalyst Program and CAMARA Open API showcases can give good insights into what the coming commercial deployments could look like. These programs have triggered several PoCs where the related use cases have required optimized performance (Quality on Demand), user location/roaming information, and feedback on consumer experience. In these PoCs, the service providers have been able to consume the Open APIs directly or through a hyperscaler marketplace. As an example, in one PoC, guaranteed Quality of Delivery was needed for a 360-degree 8K live streaming service with content monetization through APIs (with CSPs curating markets at the edge). Another PoC included an end-to-end implementation of a marketplace from which one could consume network services from multiple CSP networks (Simple hyperscaler integrated network experience).

We can expect several commercial models for these Open APIs, because these APIs can be utilized in various ways such as providing network/subscriber information, optimizing functionalities/features, and allocating network capacity/resources. It is yet to be determined how these Open APIs can be consumed easily. Service providers are unlikely to integrate and set up individual contracts with every other service provider in the world. Therefore, there must be a place for aggregation in order to hide the complexity behind a portal. This role can be assumed by a group of service providers or hyperscalers who can onboard these services onto their marketplaces.
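
The aggregation role described above can be sketched as a thin layer that hides several operator backends behind one entry point. Everything in this example is hypothetical: the `Aggregator` class, the operator names, and the idea that each backend is a simple callable answering a roaming query. It is only meant to show why an application integrates once with the aggregator instead of once per CSP.

```python
from typing import Callable, Dict

# Hypothetical backend signature: phone number -> roaming flag.
Backend = Callable[[str], bool]


class Aggregator:
    """Single entry point that forwards a request to the subscriber's operator."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}
        self._home_operator: Dict[str, str] = {}

    def onboard(self, operator: str, backend: Backend) -> None:
        """Register an operator's backend with the marketplace."""
        self._backends[operator] = backend

    def assign(self, phone_number: str, operator: str) -> None:
        """Record which operator serves a given subscriber."""
        self._home_operator[phone_number] = operator

    def is_roaming(self, phone_number: str) -> bool:
        """Route the query to the right operator; the caller never sees which."""
        operator = self._home_operator[phone_number]
        return self._backends[operator](phone_number)
```

The application calls `is_roaming` with a phone number and never needs a contract or integration with the individual operator behind it.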

Challenges and The Road Ahead

One of the main Key Performance Indicators (KPIs) that define success for service providers is the ability to scale and have a global reach. It is critical that there be no fragmentation and that the community work towards a unified approach. Jointly agreed upon solutions and specifications require more time to develop; therefore, another year may pass before we start to see commercial use case launches (as forecast by Borje Ekholm, Ericsson CEO during the Q3-2023 earnings call).

The journey to unified 5G is not easy, and it presents various challenges:

- Technology migration poses a challenge as mobile operators need to transition from existing systems to effectively utilize the potential of the 5G NEF and Open APIs.

- Another significant obstacle is bridging the gap between software developers and mobile operators. Developers require clear, simple, unified, and well-documented APIs to leverage the network capabilities effectively.

- At the same time, mobile operators must ensure that exposing their network does not compromise the security of the network while ensuring that end users have full control of where and how their information is stored and used.

Some service providers have already launched platforms with a few Service APIs. Early deployments can introduce a risk of fragmentation. However, the risk is outweighed by the positive impact of testing the concept in the real world and constructing more concrete requirements from actual user experiences with these services.

Regardless of how much commercial success these new Network and Service APIs realize in the coming years, they will have made an important step towards more Open, Agile, and Programmable networks. Similarly, Dell has been embracing this vision in our Telecom strategy as reflected in our Multi-Cloud Foundation Concept, Bare Metal Orchestration, and Open RAN development projects. In our vision, Open APIs are needed in all layers (Infrastructure, Network, Operations, and Services). Stay tuned for more to come from Dell about the open infrastructure ecosystem and automation (#MWC24).

The 5G Core Network Demystified

Thu, 17 Aug 2023 19:29:23 -0000

In the first blog of this 5G Core series, we looked at the concept of cloud-native design, its applications in the 5G network, the benefits, and how Dell and Red Hat are simplifying the deployment and management of cloud-native 5G networks.

With this second blog post we aim to demystify the 5G Core network, its architecture, and how it stands apart from its predecessors. We will delve into the core network functions, the role of Cloud-Native architecture, the concept of network slicing, and how these elements come together to define the 5G Network Architecture.

The essence of 5G Core

5G Core, often abbreviated as 5GC, is the heart of the 5G network. It is the control center that governs all the protocols, network interfaces, and services that make the 5G system function seamlessly. The 5G Core is the brainchild of 3GPP (3rd Generation Partnership Project), a standards organization whose specifications cover cellular telecommunications technologies, including radio access, core network and service capabilities, which provide a complete system description for mobile telecommunications.

The 5G Core is not just an upgrade from the 4G core network; it is a radical transformation designed to revolutionize the mobile network landscape. It is built to handle a broader audience, extending its reach to all industry sectors and time-critical applications, such as autonomous driving. The 5G core is responsible for managing a wide variety of functions within the mobile network that make it possible for users to communicate. These functions include mobility management, authentication, authorization, data management, policy management, and quality of service (QoS) for end users.

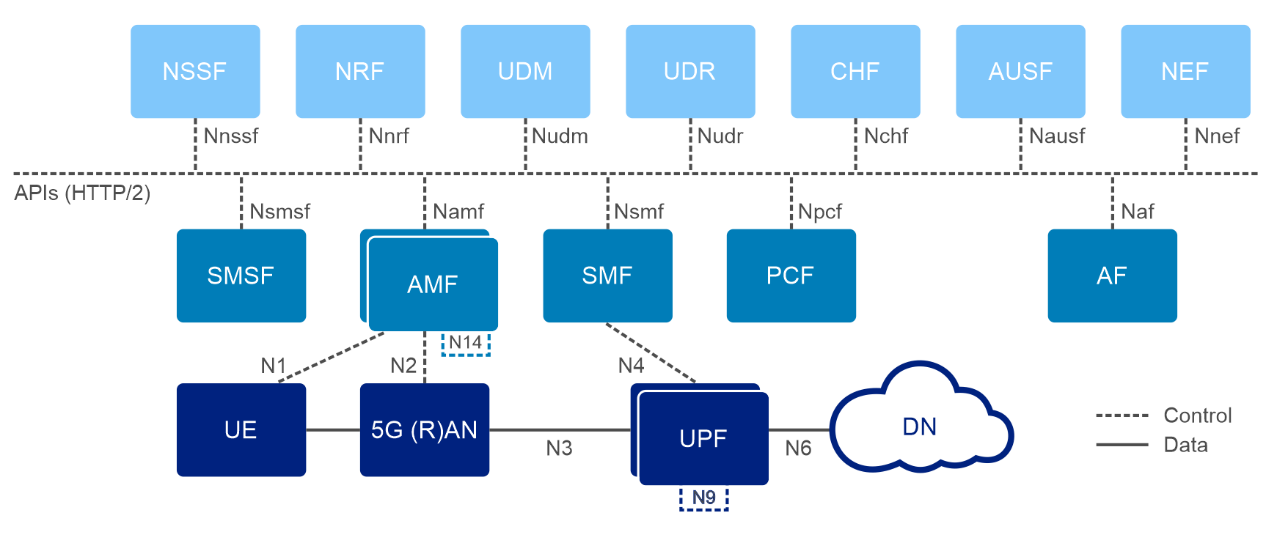

5G Network Architecture: What You Need to Know

5G was built from the ground up, with network functions divided by service. As a result, this architecture is also known as the 5G core Service-Based Architecture (SBA). The 5G core is a network of interconnected services, as illustrated in the figure below.

3GPP defines the 5G Core Network as a decomposed network architecture with a Service-Based Architecture (SBA) where each 5G Network Function (NF) can subscribe to and register for services from other NFs, using HTTP/2 as a baseline communication protocol.
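
The register, discover, and subscribe pattern behind a service-based architecture can be sketched with a small in-memory registry. This is a toy illustration, not a 3GPP interface: the `ServiceRegistry` class, the service name, and the instance name `smf-1` are hypothetical, and real network functions exchange these messages over HTTP/2 rather than through in-process calls.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class ServiceRegistry:
    """Toy registry where NFs register services and others discover them."""

    def __init__(self) -> None:
        self._providers: Dict[str, List[str]] = defaultdict(list)
        self._subscribers: Dict[str, List[Callable[[str, str], None]]] = defaultdict(list)

    def register(self, nf_name: str, service: str) -> None:
        """Record a provider and notify anyone subscribed to that service."""
        self._providers[service].append(nf_name)
        for callback in self._subscribers[service]:
            callback(service, nf_name)

    def discover(self, service: str) -> List[str]:
        """Return the names of NFs currently offering the service."""
        return list(self._providers.get(service, []))

    def subscribe(self, service: str, callback: Callable[[str, str], None]) -> None:
        """Ask to be told when a new provider of the service registers."""
        self._subscribers[service].append(callback)
```

Because consumers look up providers by service name instead of by fixed address, new instances of a network function can be added without reconfiguring the functions that depend on them.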

A second concept in the architecture of 5G is to decrease dependencies between the Access Network (AN) and the Core Network (CN) by employing a unified access-agnostic core network with a common interface between the Access Network and Core Network that integrates diverse 3GPP and non-3GPP access types.

In addition, the 5G core decouples the user plane (UP) (or data plane) from the control plane (CP). This function, which is known as CUPS (Control & User Plane Separation), was first introduced in 3GPP release 14. An important characteristic of this function is that, in case of a traffic peak, you can dynamically scale the CP functions without affecting user plane operations. It also allows deployment of UP functions (UPF) closer to the RAN and User Equipment (UE) to support use cases like Ultra-Reliable Low-Latency Communication (URLLC) and achieve benefits in both Capex and Opex.

5G Core Network Functions and What They Do

The 5G Core Network is composed of various network functions, each serving a unique purpose. These functions communicate internally and externally over well-defined standard interfaces, making the 5G network highly flexible and agile. Let's take a closer look at some of the critical 5G Core Network functions:

User Plane Function (UPF)

The User Plane Function is a critical component of the 5G core network architecture. It oversees the management of user data during the data transmission process. The UPF serves as a connection point between the RAN and the data network. It takes user data from the RAN and performs a variety of functions such as packet inspection, traffic routing, packet processing, and QoS enforcement before delivering it to the Data Network or Internet. This function allows the data plane to be shifted closer to the network edge, resulting in faster data rates and shorter latencies. The UPF combines the user traffic transport functions previously performed by the Serving Gateway (S-GW) and Packet Data Network Gateway (P-GW) in the 4G Evolved Packet Core (EPC).

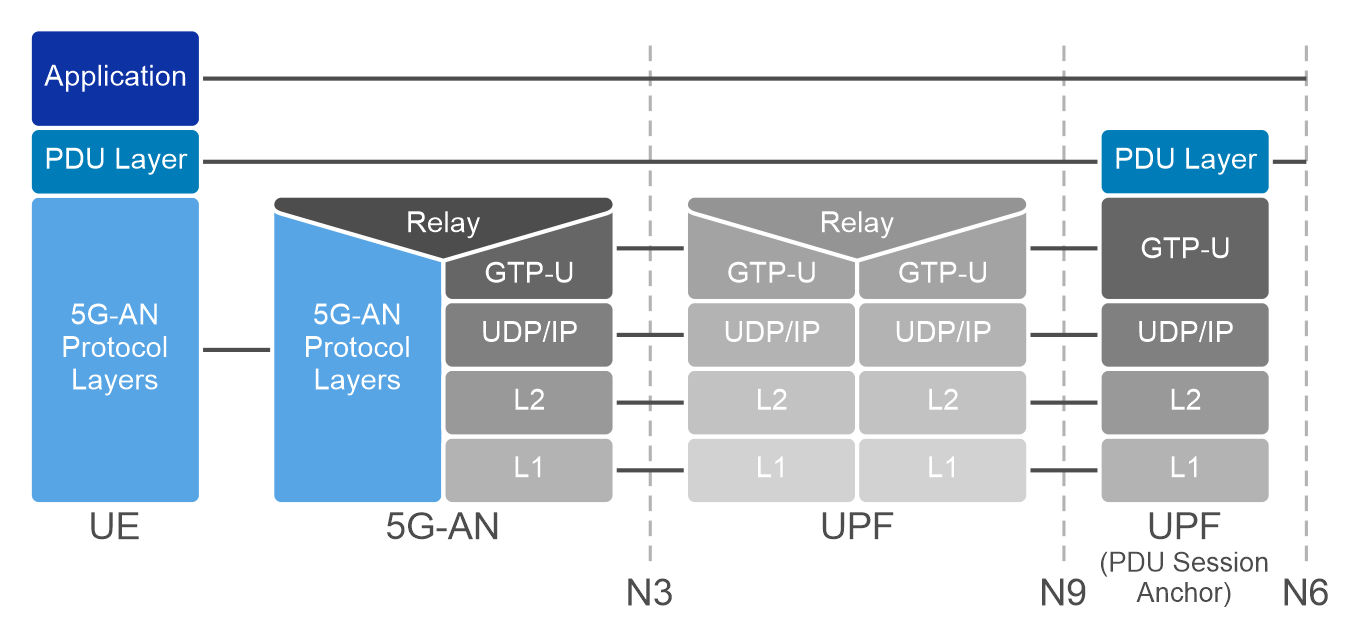

UPF Interfaces/reference points with employed protocols:

- N3 (GTP-U): Interface between the RAN (gNB) and the UPF

- N9 (GTP-U): Interface between two UPFs (i.e., the Intermediate UPF (I-UPF) and the UPF Session Anchor)

- N6 (plain IP, no GTP-U tunnel): Interface between the Data Network (DN) and the UPF

- N4 (PFCP): Interface between the Session Management Function (SMF) and the UPF

Session Management Function (SMF)

The Session Management Function (SMF) is a crucial element of the 5G Core Network, responsible for establishing, maintaining, and terminating network sessions for User Equipment (UE). The SMF carries out these tasks using the Packet Forwarding Control Protocol (PFCP) toward the UPF and its own service-based interface (Nsmf) toward other network functions.

SMF communicates with other network functions like the Policy Control Function (PCF), Access and Mobility Management Function (AMF), and the UPF to ensure seamless data flow, effective policy enforcement, and efficient use of network resources. It also plays a significant role in handling Quality of Service (QoS) parameters, routing information, and charging characteristics for individual network sessions.

The SMF takes over some of the control plane functionality of the serving gateway control plane (SGW-C) and packet gateway control plane (PGW-C), in addition to the session management functionality of the 4G Mobility Management Entity (MME).

Access and Mobility Management Function (AMF)

The Access and Mobility Management Function (AMF) oversees the management of connections and mobility. It receives policy control, session-related, and authentication information from the end devices and passes the session information to the PCF, SMF and other network functions. In the 4G/EPC network, the corresponding network element to the AMF is the Mobility Management Entity. While the MME's functionality has been decomposed in the 5G core network, the AMF retains some of these roles, focusing primarily on connection and mobility management, and forwarding session management messages to the SMF.

Additionally, the AMF retrieves subscription information and supports short message service (SMS). It identifies a network slice using the Single Network Slice Selection Assistance Information (S-NSSAI), which includes the Slice/Service Type (SST) and Slice Differentiator (SD). The AMF's operations enable the management of Registration, Reachability, Connection, and Mobility of UE, making it an essential component of the 5G Core Network.
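To make the S-NSSAI structure concrete, here is a minimal Python sketch. It assumes the field widths defined by 3GPP (an 8-bit SST and an optional 24-bit SD) and lists only a subset of the standardized SST values. The `SNssai` class and its hex-string layout are illustrative choices for this post, not an API from any 5G Core product.

```python
from dataclasses import dataclass
from typing import Optional

# Subset of the standardized SST values; other values exist.
SST_NAMES = {1: "eMBB", 2: "URLLC", 3: "MIoT"}


@dataclass(frozen=True)
class SNssai:
    """Hypothetical container for an S-NSSAI (illustration only)."""
    sst: int                  # Slice/Service Type, 8 bits
    sd: Optional[int] = None  # Slice Differentiator, 24 bits, optional

    def __post_init__(self):
        if not 0 <= self.sst <= 0xFF:
            raise ValueError("SST must fit in 8 bits")
        if self.sd is not None and not 0 <= self.sd <= 0xFFFFFF:
            raise ValueError("SD must fit in 24 bits")

    def to_hex(self) -> str:
        """Render SST as 2 hex digits, followed by SD as 6 hex digits if present."""
        text = f"{self.sst:02x}"
        if self.sd is not None:
            text += f"{self.sd:06x}"
        return text

    @classmethod
    def from_hex(cls, text: str) -> "SNssai":
        """Parse the 2-digit (SST only) or 8-digit (SST + SD) form."""
        if len(text) not in (2, 8):
            raise ValueError("expected 2 or 8 hex digits")
        sst = int(text[:2], 16)
        sd = int(text[2:], 16) if len(text) == 8 else None
        return cls(sst, sd)


# Example values are made up for illustration.
urllc_slice = SNssai(sst=2, sd=0x00ABCD)
print(urllc_slice.to_hex(), SST_NAMES.get(urllc_slice.sst))
```

The SD is optional because two slices can share a service type (for example, two URLLC slices for different enterprise customers) and only then need a differentiator to tell them apart.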

Policy Control Function (PCF)

The Policy Control Function (PCF) provides the framework for creating policies to be consumed by the other control plane network functions. These policies can include aspects like QoS, subscriber spending/usage monitoring, network slicing management, and management of subscribers, applications, and network resources. The PCF in the 5G network serves as a policy decision point, similar to the PCRF (Policy and Charging Rules Function) in the 4G/EPC network. It communicates with other network elements such as the AMF, SMF, and Unified Data Management (UDM) to acquire critical information and make sound policy decisions.

Unified Data Management (UDM) and Unified Data Repository (UDR)

The Unified Data Management (UDM) and Unified Data Repository (UDR) are critical components of the 5G core network. The UDM maintains subscriber data, policies, and other associated information, while the UDR stores this data. They collaborate to conduct data management responsibilities that were previously handled by the HSS (Home Subscriber Server) in the 4G EPC. When compared to the HSS, the UDM and UDR provide greater flexibility and efficiency, supporting the enhanced capabilities of the 5G network.

Network Exposure Function (NEF)

The Network Exposure Function (NEF) is another key component of the 5G core network. It enables network operators to securely expose network functionality and interfaces at a granular level by creating a bridge between the 5G core network and external applications (e.g., internal exposure/re-exposure, edge computing). The NEF also provides a means for Application Functions (AFs) to securely provide information to the 3GPP network (e.g., Expected UE Behavior).

The NEF northbound interface is between the NEF and the AF. It specifies RESTful APIs that allow the AF to access the services and capabilities provided by 3GPP network entities and securely exposed by the NEF. Requests arriving over the northbound API are carried out toward each NF through a southbound interface. These southbound interfaces are 3GPP interfaces, such as the N29 interface between the NEF and the Session Management Function (SMF) and the N30 interface between the NEF and the Policy Control Function (PCF).

By opening the network's capabilities to third-party applications, NEF enables a seamless connection between network capabilities and business requirements, optimizing network resource allocation and enhancing the overall business experience.

Network Repository Function (NRF)

The Network Repository Function (NRF) is a critical component required to implement the new service-based architecture in the 5G core network. It serves as a centralized repository for all NF instances. It is in charge of managing the lifecycle of NF profiles, which includes registering new profiles, updating existing ones, and deregistering those that are no longer in use. The NRF offers a standards-based API for 5G NF registration and discovery.

Technically, NRF operates by storing data about all Network Function (NF) instances, including their supported functionalities, services, and capacities. When a new NF instance is instantiated, it registers with the NRF, providing all the necessary details. Subsequently, any NF that needs to communicate with another NF can query the NRF for the target NF's instance details. Upon receiving this query, the NRF responds with the most suitable NF instance information based on the requested service and capacity.
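The register-then-discover flow described above can be sketched as a small in-memory model. This is a toy illustration under stated assumptions, not the standardized NRF API: `NFProfile`, `ToyNRF`, the instance IDs, and the capacity numbers are all hypothetical, and "most suitable" is simplified here to "highest advertised capacity among instances offering the requested service."

```python
from dataclasses import dataclass


@dataclass
class NFProfile:
    """Hypothetical, heavily reduced NF profile."""
    instance_id: str
    nf_type: str    # for example "SMF" or "UPF"
    services: set   # service names this instance offers
    capacity: int   # relative capacity; higher means more headroom


class ToyNRF:
    """In-memory stand-in for an NRF (illustration only)."""

    def __init__(self):
        self._profiles = {}

    def register(self, profile):
        # A newly instantiated NF announces itself with its full profile.
        self._profiles[profile.instance_id] = profile

    def update(self, instance_id, **changes):
        # Overwrite selected fields of an existing profile.
        profile = self._profiles[instance_id]
        for name, value in changes.items():
            setattr(profile, name, value)

    def deregister(self, instance_id):
        # Remove an instance that is no longer in use.
        self._profiles.pop(instance_id, None)

    def discover(self, nf_type, service):
        # Return the matching instance with the highest capacity, or None.
        candidates = [
            p for p in self._profiles.values()
            if p.nf_type == nf_type and service in p.services
        ]
        return max(candidates, key=lambda p: p.capacity, default=None)


nrf = ToyNRF()
nrf.register(NFProfile("smf-1", "SMF", {"nsmf-pdusession"}, capacity=40))
nrf.register(NFProfile("smf-2", "SMF", {"nsmf-pdusession"}, capacity=90))

best = nrf.discover("SMF", "nsmf-pdusession")
print(best.instance_id)
```

Because consumers look up producers at run time instead of relying on static configuration, instances can be added, updated, or removed without reconfiguring the NFs that depend on them.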

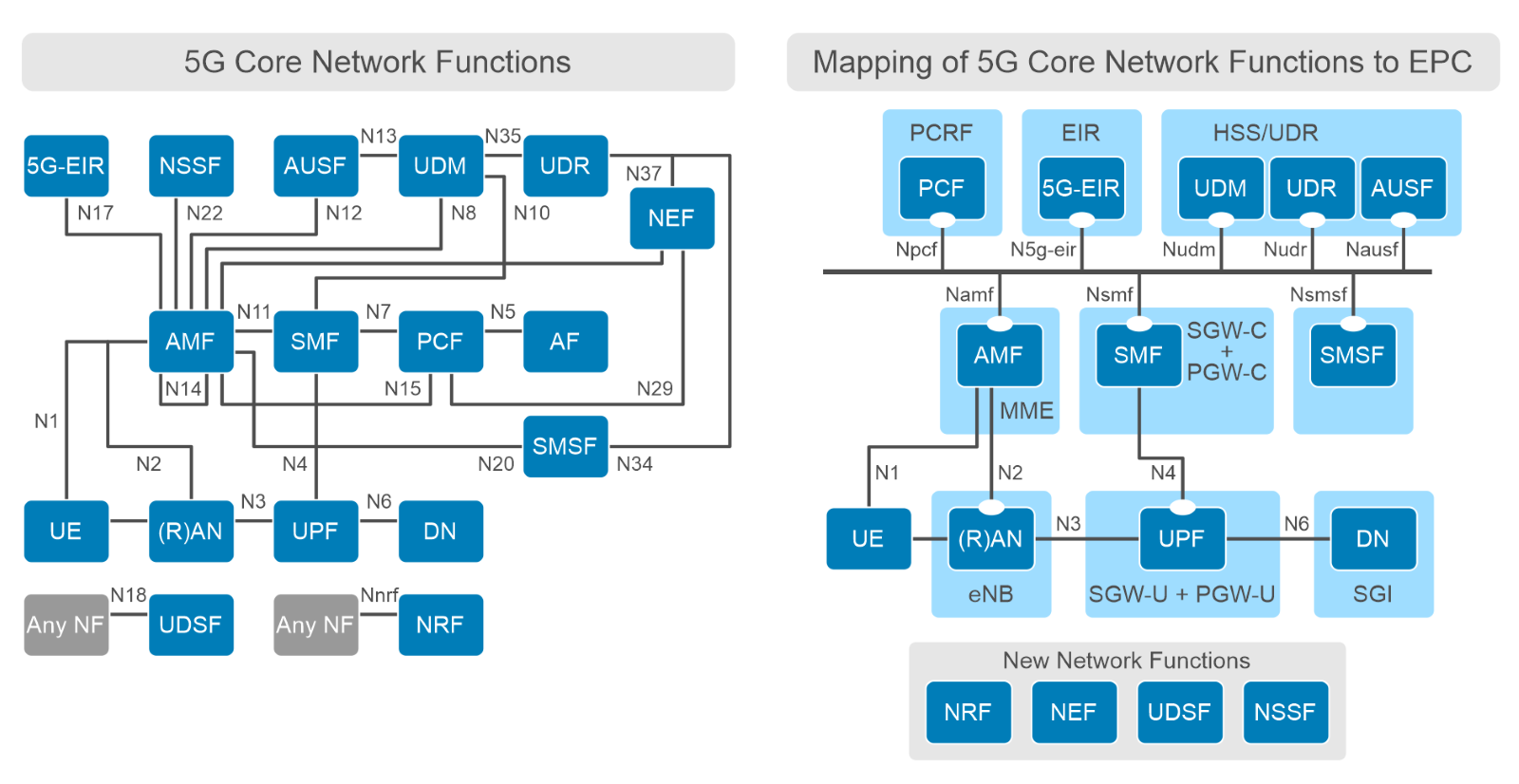

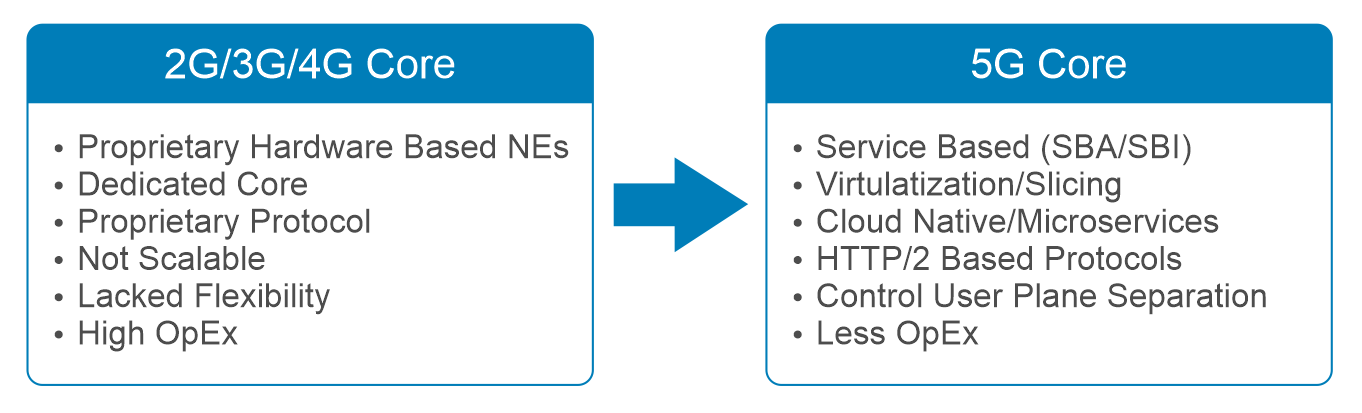

How Does the 5G Core Differ from Previous Generations?

The primary architectural distinction between the 5G Core and the 4G EPC is that the 5G Core uses the Service-Based Architecture (SBA), with cloud-native, flexible configurations of loosely coupled, independent NFs deployed as containerized microservices. The microservices-based architecture lets NFs scale and be upgraded independently of each other, which is a significant benefit to CSPs. The 4G EPC, on the other hand, employs a flat architecture for efficient data handling, with network components deployed as physical network elements in most cases. Its interfaces between core network elements were specified as point-to-point links running proprietary protocols and were not scalable.

Another significant distinction between 5G Core and EPC is the formation of the control plane (CP). The control plane functionality is more intelligently shared between Access and Mobility Management Functions (AMF) and Session Management Functions (SMF) in the 5G Core than the MME and SGW/PGW in the 4G/EPC. This separation allows for more efficient scaling of network resources and improved network performance.

In addition to the design and functional updates, business priorities have also changed with 5G. With 5GC, CSPs are moving away from proprietary, vertically integrated systems and shifting to cloud-native, open source-based platforms like Red Hat OpenShift Container Platform that run on industry-standard hardware. Improving responsiveness while cutting operating expenses will be the primary focus for CSPs going forward with the 5G Core.

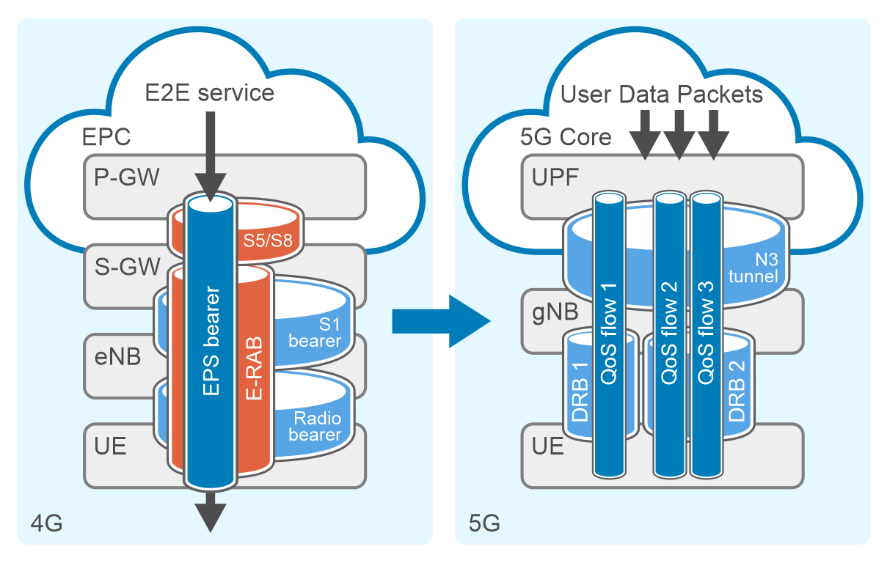

Key distinctions between the 4G LTE and 5G QoS models

The key distinctions between 4G LTE and 5G QoS models primarily lie in their approach to quality-of-service enforcement and their level of complexity. In 4G LTE, QoS is enforced at the EPS bearer level (S5/S8 + E-RAB) with each bearer assigned an EPS bearer ID. On the other hand, 5G QoS is a more flexible approach that enforces QoS at the QoS flow level. Each QoS flow is identified by a QoS Flow ID (QFI).

Furthermore, the process of ensuring end-to-end QoS for a Protocol Data Unit (PDU) session in 5G involves packet classification, user plane marking, and mapping to radio resources. Data packets are classified into QoS flows by the UPF using Packet Detection Rules (PDRs) for downlink and by the UE using QoS rules for uplink.

5G leverages the Service Data Adaptation Protocol (SDAP) for mapping between a QoS flow from the 5G core network and a data radio bearer (DRB). This level of control and adaptability provides an improved QoS model in 5G as compared to 4G networks.
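The two downlink steps described above, classification into a QoS flow and mapping of that flow to a radio bearer, can be sketched as follows. This is a simplified illustration: real PDRs match on much richer packet filters, and the class name, rule values, QFIs, and DRB numbers below are hypothetical. The sketch also assumes that a lower precedence value is evaluated first.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PacketDetectionRule:
    """Hypothetical, reduced PDR that matches on destination port only."""
    precedence: int          # assumption: lower value is evaluated first
    dst_port: Optional[int]  # None acts as a wildcard
    qfi: int                 # QoS Flow ID marked on matching packets


def classify_downlink(dst_port, pdrs):
    """UPF side: return the QFI of the first matching rule."""
    for rule in sorted(pdrs, key=lambda r: r.precedence):
        if rule.dst_port is None or rule.dst_port == dst_port:
            return rule.qfi
    raise LookupError("no PDR matched")


def map_to_drb(qfi, qfi_to_drb, default_drb):
    """RAN side (SDAP role): map a QoS flow to a data radio bearer."""
    return qfi_to_drb.get(qfi, default_drb)


# Made-up configuration: signaling traffic on port 5060 gets its own flow,
# everything else falls through to a catch-all rule.
pdrs = [
    PacketDetectionRule(precedence=10, dst_port=5060, qfi=5),
    PacketDetectionRule(precedence=255, dst_port=None, qfi=9),
]
qfi_to_drb = {5: 2}  # QFI 5 has a dedicated DRB; other flows share the default

qfi = classify_downlink(5060, pdrs)
drb = map_to_drb(qfi, qfi_to_drb, default_drb=1)
print(qfi, drb)
```

Note that several QoS flows can share one DRB, which is part of what makes the flow-based model more flexible than the one-to-one bearer model in 4G.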

The Power of Cloud-Native Architecture in 5G Core

One of the standout features of the 5G Core is its cloud-native architecture. This architecture allows the 5G core network to be built with microservices that can be reused for supporting other network functions. The 5G core leverages technologies like microservices, containers, orchestration, CI/CD pipelines, APIs, and service meshes, making it more agile and flexible.

With Cloud-Native architecture, 5G Core can be easily deployed and operated, offering a cost-effective solution that complies with regulatory requirements and supports a wide range of use cases. The adherence to cloud-native principles is of utmost importance as it allows for the independent scaling of components and their dynamic placement based on service demands and resource availability. This architecture also allows for network slicing, which enables the creation of end-to-end virtual networks on top of a shared infrastructure.

Network Slicing: Enabling a Range of 5G Services

3GPP considers network slicing one of the key features of 5G. A network slice can be viewed as a logical end-to-end network that can be dynamically created. A UE may access multiple slices over the same gNB. Within a network slice, UEs can create PDU sessions to different gateways via Data Network Names (DNNs). This architecture allows operators to provide a custom Quality of Service (QoS) for different services and/or customers under an agreed-upon Service Level Agreement (SLA).

The Network Slice Selection Function (NSSF) plays a vital role in the network slicing architecture of the 5G Core. It facilitates the process of selecting the appropriate network slice for a device based on the Network Slice Selection Assistance Information (NSSAI) specified by the device. When a device sends a registration request, it includes the NSSAI, thereby indicating its network slice preference. The NSSF uses this information to determine which network slice would best meet the device's requirements and assigns the device to that network slice accordingly. This ability to customize network slices based on specific needs is a defining feature of 5G network slicing, enabling a single physical network infrastructure to cater to a diverse range of services with contrasting QoS requirements. To learn more about network slicing, see the blog post To slice or not to slice | Dell Technologies Info Hub.
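As a rough sketch of the selection step, the function below filters the slices a device requests down to those it is subscribed to and that are available where it is registering. The function name, the fallback to a default slice, and the example values are assumptions made for illustration. An actual NSSF applies operator policy that this sketch does not model.

```python
from typing import List, Set, Tuple

Slice = Tuple[int, int]  # (SST, SD) pair standing in for an S-NSSAI


def select_slices(requested: List[Slice],
                  subscribed: Set[Slice],
                  available: Set[Slice],
                  default: Slice) -> List[Slice]:
    """Return the requested slices the device may use, in request order.

    Falls back to the default slice when no requested slice qualifies
    (an assumption of this sketch).
    """
    allowed = [s for s in requested if s in subscribed and s in available]
    if not allowed and default in available:
        allowed = [default]
    return allowed


# Made-up slices: SST 1 = eMBB, SST 2 = URLLC; SD values are arbitrary.
EMBB = (1, 0x000001)
URLLC = (2, 0x000001)
PRIVATE = (1, 0x00BEEF)

print(select_slices(
    requested=[URLLC, PRIVATE],
    subscribed={EMBB, URLLC},
    available={EMBB, URLLC, PRIVATE},
    default=EMBB,
))
```

Here the device asks for a URLLC slice and a private slice, but only the URLLC slice is in its subscription, so only that slice is returned.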

Next steps

To learn how Dell and Red Hat are helping CSPs in their cloud-native journey, see the blog Cloud-native or Bust: Telco Cloud Platforms and 5G Core Migration on Info Hub. In the next blog of the 5G Core series, we will explore the collaboration between Dell Technologies and Red Hat to simplify operator processes, starting from the initial technology onboarding all the way to Day 2 operations. The focus is on deploying a telco cloud that supports 5G core network functions using Dell Telecom Infrastructure Blocks for Red Hat.

To learn more about Telecom Infrastructure Blocks for Red Hat, visit our website Dell Telecom Multi-Cloud Foundation solutions.

1 The 5G architecture shown here is a simplified version. Other 5G NFs, such as UDSF, SCP, BSF, SEPP, NWDAF, and N3IWF, are not shown.

2 CUPS is a pre-5G technology (5G Standalone (SA) was introduced in 3GPP Rel-15). 5G SA offers more innovation with the ability to change anchors (SSC Mode 3), daisy chain UPFs, and connect to multiple UPFs.

3 The Network Functions mentioned in this section are a subset of the standardized NFs in 5G Core network.

Authored by:

Gaurav Gangwal

Senior Principal Engineer – Technical Marketing, Product Management

About the author:

Gaurav Gangwal works in Dell's Telecom Systems Business (TSB) as a Technical Marketing Engineer on the Product Management team. He is currently focused on 5G products and solutions for RAN, Edge, and Core. Prior to joining Dell in July 2022, he worked for AT&T for over ten years and previously with Viavi, Alcatel-Lucent, and Nokia. Gaurav has an Engineering degree in Electronics and Telecommunications and has worked in the telecommunications industry for more than 14 years. He currently resides in Bangalore, India.

Kevin Gray

Senior Consultant, Product Marketing – Product Marketing

About the author:

Kevin Gray leads marketing for Dell Technologies Telecom Systems Business Foundations solutions. He has more than 25 years of experience in telecommunications and enterprise IT sectors. His most recent roles include leading marketing teams for Dell’s telecommunications, enterprise solutions and hybrid cloud businesses. He received his Bachelor of Science in Electrical Engineering from the University of Massachusetts in Amherst and his MBA from Bentley University. He was born and raised in the Boston area and is a die-hard Boston sports fan.

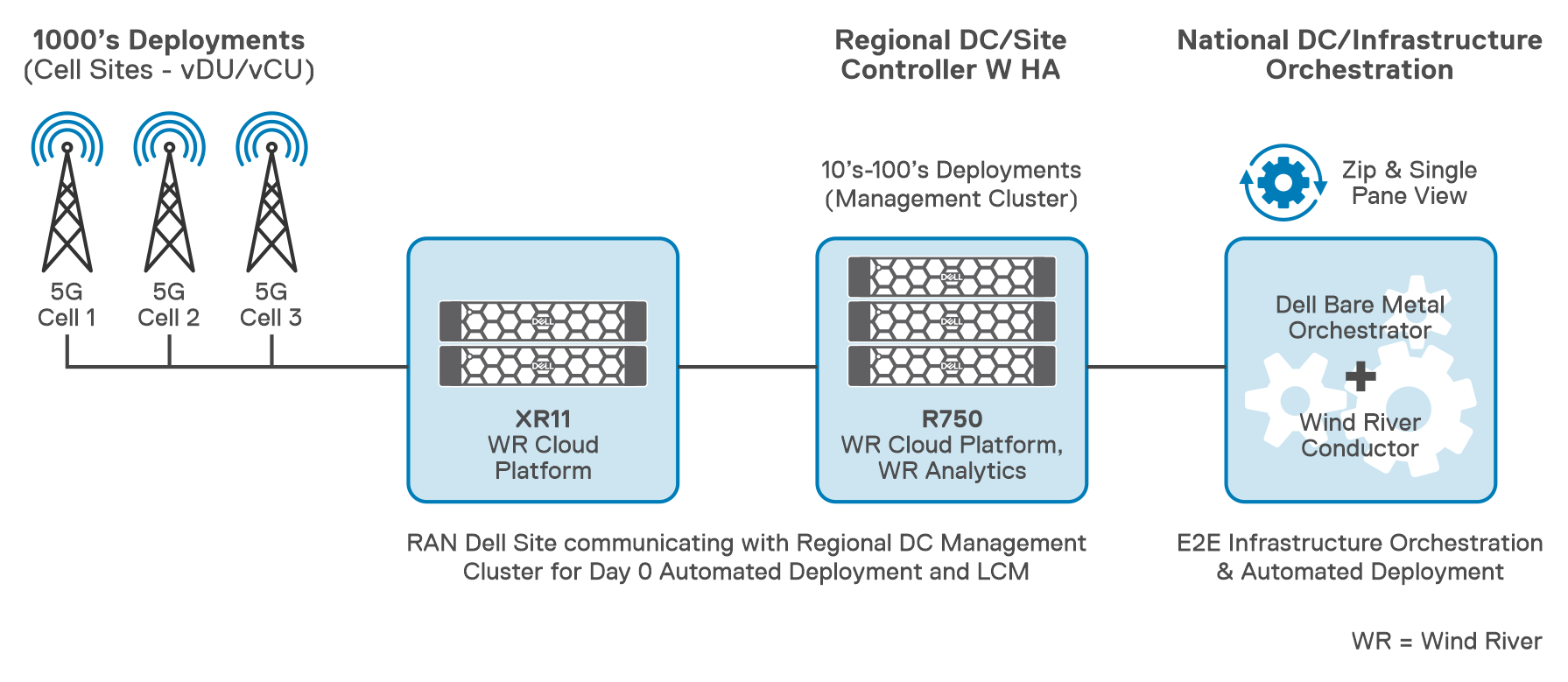

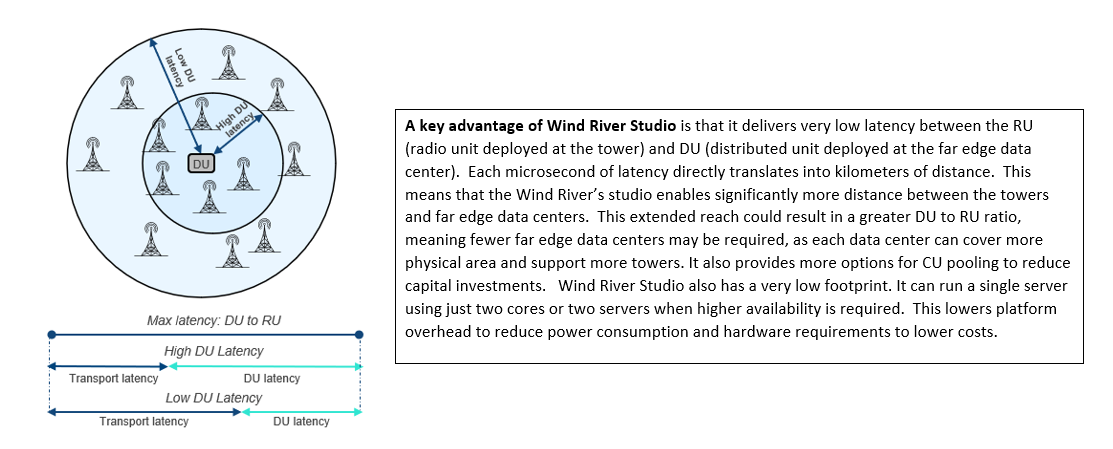

How Dell Telecom Infrastructure Blocks are Simplifying 5G RAN Cloud Transformation

Thu, 08 Dec 2022 20:01:48 -0000

|Read Time: 0 minutes