Accelerate Telecom Cloud Deployments with Dell Telecom Infrastructure Blocks

Mon, 31 Oct 2022 16:48:10 -0000

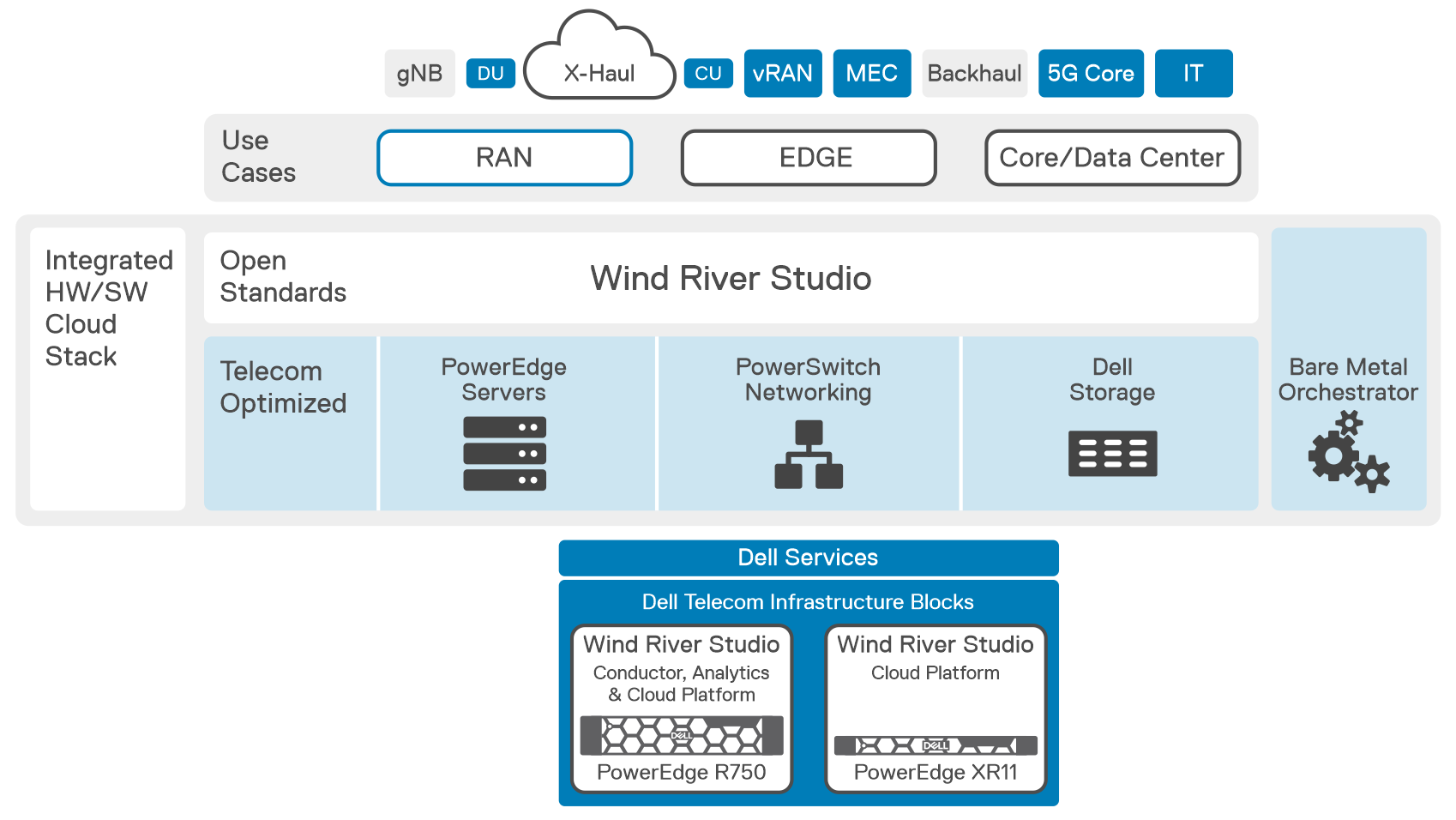

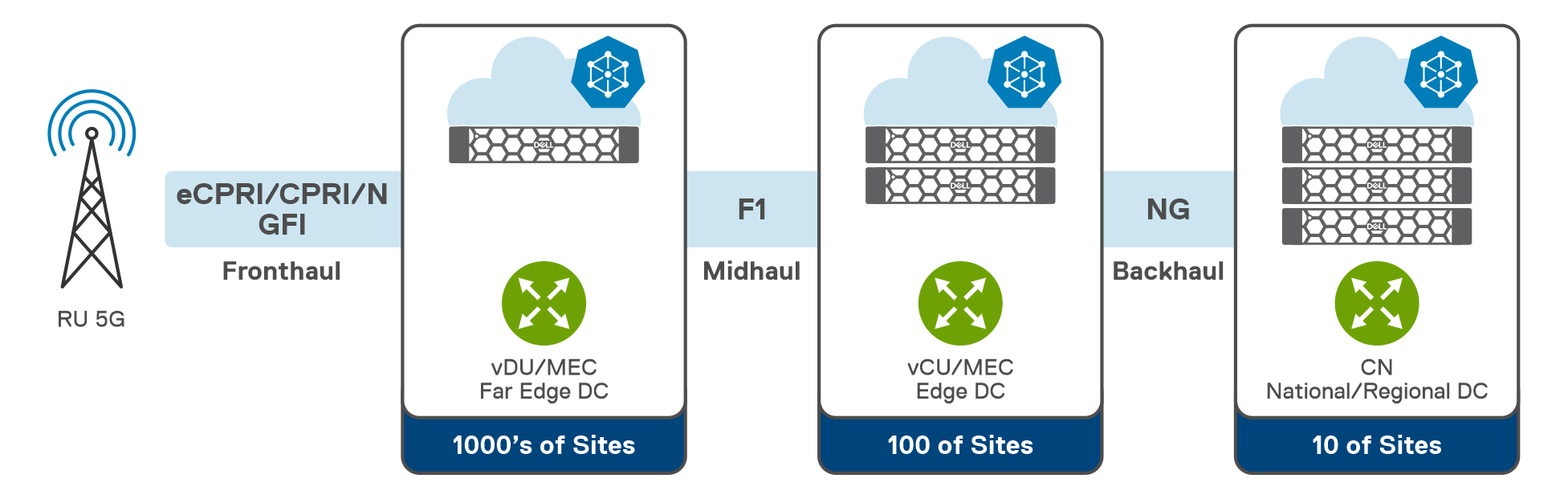

During MWC Vegas, Dell Technologies announced Dell’s first Telecom Infrastructure Blocks co-engineered with our partner Wind River to help communication service providers (CSPs) reduce complexity, accelerate network deployments, and simplify life cycle management of 5G network infrastructure. Their first use cases will be focused on infrastructure for virtual Radio Access Network (vRAN) and Open RAN workloads.

Deploying and supporting open, virtualized, and cloud-native 5G RANs is one of the key requirements to accelerate 5G adoption. The number of options available in 5G RAN design makes it imperative that infrastructures supporting them are flexible, fully automated for distributed operations, and maximally efficient in terms of power, cost, the resources they consume, and the performance they deliver.

Dell Telecom Infrastructure Blocks for Wind River are engineered to provide a turnkey experience, with fully integrated hardware and software stacks from Dell and Wind River that are RAN workload-ready and aligned with workload requirements. This means the engineered system, once delivered, is ready for onboarding RAN network functions through a simple, standard workflow, avoiding the integration and lifecycle management complexities normally expected from a fully disaggregated network deployment.

The Dell Telecom Infrastructure Blocks for Wind River are a part of the Dell Technologies Multi-Cloud Foundation, a telecom cloud designed specifically to assist CSPs in providing network services on a large scale by lowering the cost, time, complexity, and risk of deploying and maintaining a distributed telco-cloud. Dell Telecom Infrastructure Blocks for Wind River are comprised of:

- Dell hardware that has been validated and optimized for RAN

- Dell Bare Metal Orchestrator and a Bare Metal Orchestrator Module (a combination of a Bare Metal Orchestrator plug-in and a Wind River Conductor integration plug-in)

- Wind River Studio, which is comprised of:

  - Wind River Conductor

  - Wind River Cloud Platform

  - Wind River Analytics

How do Dell Telecom Infrastructure Blocks for Wind River make infrastructure design, delivery, and lifecycle management of a telecom cloud better and easier?

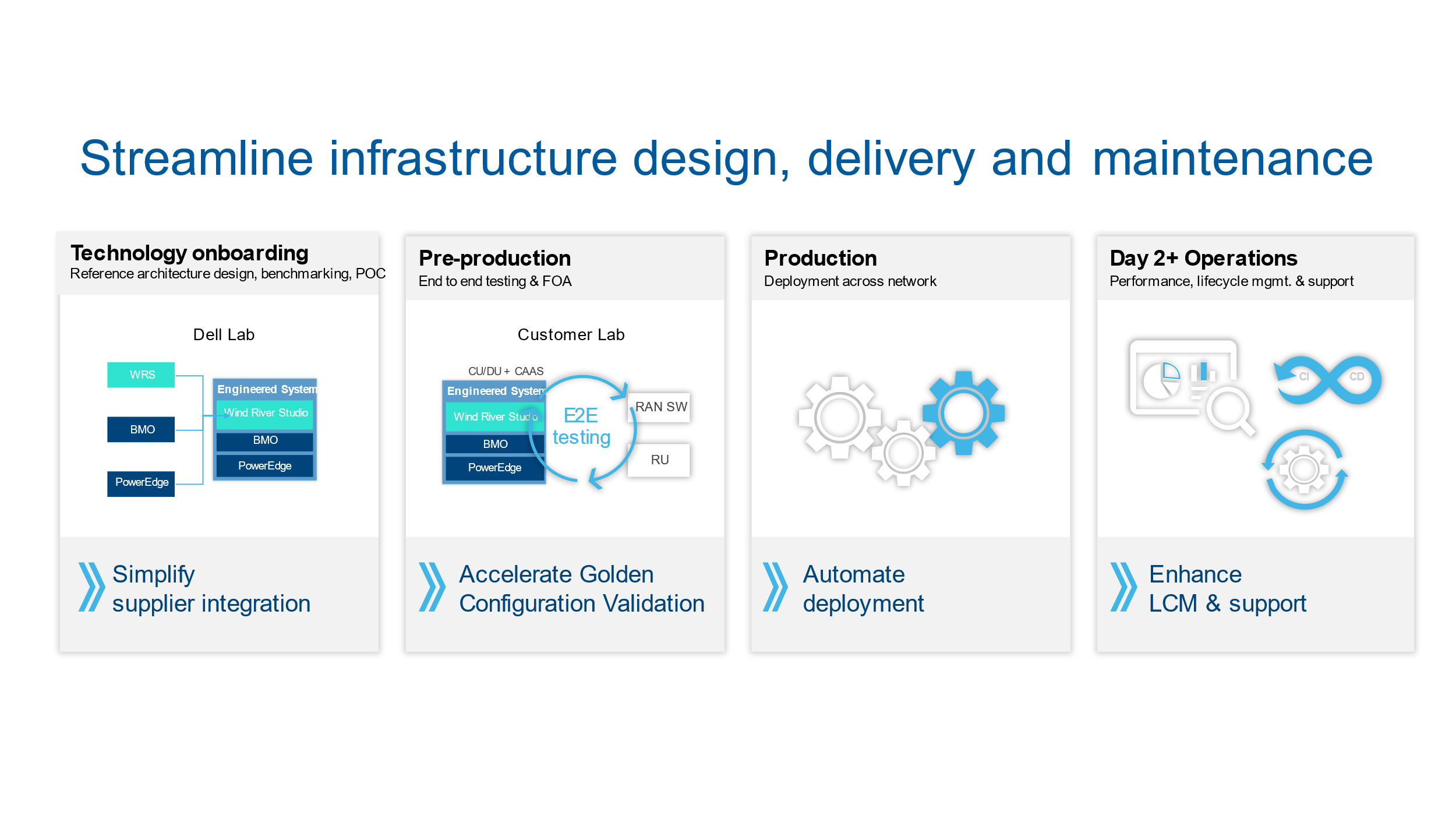

From technology onboarding to Day 2+ operations, Dell Telecom Infrastructure Blocks streamline the processes CSPs use for technology acquisition, design, and management. We have broken these processes down into four stages. Let us examine how Dell Telecom Infrastructure Blocks for Wind River can impact each stage of this journey.

Stage 1: Technology onboarding | Faster Time to Market

The first stage is technology onboarding, where Dell Technologies works with Wind River in Dell’s Solution Engineering Lab to develop the engineered system. Together we design, validate, build, and run a broad range of test cases to create an optimized engineered system for 5G RAN vCU/vDU and Telecom Multi-Cloud Foundation Management clusters. During this stage, we conduct extensive solution testing with Wind River, performing more than 650 test cases. These cover functionality, interoperability, security, scalability, high availability, and the workload’s specific infrastructure requirements, to ensure the system operates reliably across a range of scale and performance points.

We also launched our OTEL Lab (Open Telecom Ecosystem Lab) to allow telecom ecosystem suppliers (ISVs) to integrate or certify their workload applications on Dell infrastructure including Telecom Infrastructure Blocks. Customers and partners working in OTEL can fine-tune the Infrastructure Block to a given CSP’s needs, marrying the efficiency of Infrastructure Block development with the nuances presented in meeting a CSP’s specific requirements.

Continuous improvement in the design of Infrastructure Blocks is enabled by ongoing feedback on the process throughout the life of the solution, which can further streamline design, validation, and certification. This extensive process produces an engineered system that streamlines the operator’s reference architecture design, benchmarking, proof of concept, and end-to-end validation processes to reduce engineering costs and accelerate the onboarding of new technology.

All hardware and software required for this engineered system are integrated in Dell’s factory and sold and supported as a single system to simplify procurement, reduce configuration time, and streamline product support.

This "shift left" in the design, development, validation, and integration of the stacks means readiness testing and integration are finished sooner in the development cycle than they would have been with more traditional and segregated development and test processes. For CSPs, this method speeds up time to value by reducing the time needed to prepare and validate a new solution for deployment.

Next, we move from technology onboarding to the second stage, pre-production.

Stage 2: Pre-production | Accelerated onboarding

From Dell’s Solution Engineering Labs, the engineered system moves into the CSP’s pre-production environment, where the golden configuration is defined. Rather than receiving a collection of disaggregated components (infrastructure, cloud stacks, automation, and so on), CSPs start with a factory-integrated, engineered system that can be quickly deployed in their pre-production test lab. At this stage, customers leverage best practices, design guidance, and lessons learned to create a fully validated stack for their workload. The next step is to pre-stage the telco cloud stack, including the workload, and start preparing for Day 1 and Day 2 by integrating with the customer’s CI/CD pipeline and agreeing on the lifecycle management process to support the first office application deployment.

Stage 3: Production | Automation enables faster deployment

Advancing the flow, deployment into production is accelerated by:

- Factory integration that reduces procurement, installation, and integration time on-site.

- Embedded automation that reduces time spent configuring hardware or software. This includes validating configurations and streamlining processes with Customer Information Questionnaires (CIQs). CIQs are YAML files that list the credentials, management networks, storage details, physical locations, and other data needed to set up the telco cloud stack at each of a CSP's physical locations.

- Streamlining support with a unified single call carrier-grade support model for the full cloud stack.
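To make the CIQ idea concrete, here is a minimal sketch of the kind of pre-deployment check that automation could run against one. The field names (`site_name`, `management_network`, and so on) and the `validate_ciq` helper are hypothetical, since the actual CIQ schema is not described here. The CIQ is shown as an already-parsed dictionary, which is what a YAML loader would typically return.

```python
import ipaddress

# Hypothetical required fields; the real CIQ schema is not shown in this post.
REQUIRED_FIELDS = ("site_name", "location", "management_network", "credentials", "storage")


def validate_ciq(ciq: dict) -> list:
    """Return a list of problems found in a parsed CIQ (an empty list means it passed)."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in ciq]

    network = ciq.get("management_network") or {}
    try:
        subnet = ipaddress.ip_network(network.get("subnet", ""))
        gateway = ipaddress.ip_address(network.get("gateway", ""))
    except ValueError:
        problems.append("management_network has an invalid subnet or gateway")
    else:
        if gateway not in subnet:
            problems.append("gateway is outside the management subnet")
    return problems


# Example CIQ for one hypothetical cell site, as a YAML loader would return it.
site_ciq = {
    "site_name": "cell-site-001",
    "location": "rack 4, shelter B",
    "management_network": {"subnet": "10.20.30.0/24", "gateway": "10.20.30.1"},
    "credentials": {"bmc_user": "admin"},
    "storage": {"root_disk_gb": 500},
}
print(validate_ciq(site_ciq))  # prints [] because every check passes
```

Catching a wrong gateway or a missing field at this point is far cheaper than discovering it after servers have been shipped to a remote site.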

Automating deployment eliminates manual configuration errors to accelerate product delivery. Should the CSP need assistance with deployment, Dell's professional services team is standing by to assist. Dell provides on-site services to rack, stack, and integrate servers into the CSP's network.

Stage 4: Day 2+ Operations | Performance, lifecycle management, and support

Day 2+ operations are simplified in several ways. First, the automation provided, combined with the extensive validation testing Dell and Wind River perform, ensures a consistent, telco-grade deployment or upgrade each time. This streamlines daily fault, configuration, performance, and security management in the fully distributed cloud. In addition, Dell Bare Metal Orchestrator automates the detection of configuration drift and its remediation, and Wind River Studio Analytics uses machine learning to proactively detect issues before they become a problem.
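As an illustration of what configuration drift detection means in principle, the sketch below compares a golden configuration with the configuration a server reports and lists every setting that differs. This is not how Bare Metal Orchestrator is implemented; the `find_drift` helper and the setting names are assumptions made for the example.

```python
def find_drift(desired: dict, actual: dict, prefix: str = "") -> dict:
    """Return {dotted.path: (desired_value, actual_value)} for every setting that differs."""
    drift = {}
    for key in sorted(set(desired) | set(actual)):
        path = f"{prefix}{key}"
        want, have = desired.get(key), actual.get(key)
        if isinstance(want, dict) and isinstance(have, dict):
            # Recurse into nested sections such as BIOS settings.
            drift.update(find_drift(want, have, prefix=f"{path}."))
        elif want != have:
            drift[path] = (want, have)
    return drift


# Hypothetical golden configuration and the state reported by one server.
golden = {"bios": {"sriov": "enabled", "c_states": "disabled"}, "firmware": "1.2.3"}
reported = {"bios": {"sriov": "enabled", "c_states": "enabled"}, "firmware": "1.2.3"}

drift = find_drift(golden, reported)
print(drift)  # {'bios.c_states': ('disabled', 'enabled')}

# Remediation here simply means re-applying the golden value for each drifted path.
remediation = {path: want for path, (want, _have) in drift.items()}
```

Run on a schedule across thousands of sites, a comparison like this turns silent misconfiguration into an actionable list.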

Second, Dell’s Solutions Engineering lab validates all new feature enhancements to the software and hardware, including updates, upgrades, bug fixes, and security patches. Once we have updated the engineered system, we push it through Dell’s CI/CD pipeline to the Dell factory and OTEL Lab. We can also push the update to the CSP's CI/CD pipeline using integrations set up by Dell Services, which reduces the testing our customers must perform in their own labs.

We complement all this by providing unified, single-call support for the entire cloud stack with options for carrier-grade SLAs for service response and restoration times.

Proprietary appliance-based networks are being replaced by best-of-breed, multivendor cloud networks as CSPs adapt their network designs for 5G RAN. As CSPs adopt disaggregated, cloud-native architectures, Dell Technologies is ready to lend a helping hand. With Dell Telecom Multi-Cloud Foundation, we provide an automated, validated, and continuously integrated foundation for deploying and managing disaggregated, cloud-native telecom networks.

To learn more about our solution, please visit the Dell Telecom Multi-Cloud Foundation solutions site.

Authored by:

Gaurav Gangwal

Senior Principal Engineer – Technical Marketing, Product Management

About the author:

Gaurav Gangwal works in Dell's Telecom Systems Business (TSB) as a Technical Marketing Engineer on the Product Management team. He is currently focused on 5G products and solutions for RAN, Edge, and Core. Prior to joining Dell in July 2022, he worked for AT&T for over ten years and previously with Viavi, Alcatel-Lucent, and Nokia. Gaurav has an engineering degree in electronics and telecommunications and has worked in the telecommunications industry for about 14 years. He currently resides in Bangalore, India.

Related Blog Posts

How Dell Telecom Infrastructure Blocks are Simplifying 5G RAN Cloud Transformation

Thu, 08 Dec 2022 20:01:48 -0000

5G is a technology that is transforming industry, society, and how we communicate and live in ways we’ve yet to imagine. Communication Service Providers (CSPs) are at the heart of this technological transformation. Although 5G builds on existing 4G infrastructure, 5G networks deployed at scale will require a complete redesign of communication infrastructure. This transformation is underway: more than 220 operators in more than 85 countries have already launched services, and they have realized that operational agility and an accelerated deployment model are must-haves in such a decentralized, cloud-native landscape to meet customer demands for innovative capabilities, services, and digital experiences for both telecom and vertical industries. This is accompanied by the promise of cloud-native architectures and open, flexible deployments, which enable CSPs to scale and to build new data-driven architectures in an open ecosystem.

While the initial deployments of 5G are based on the Virtualized Radio Access Network (vRAN), which offers CSPs enhanced operational efficiency and flexibility to fulfill the needs of 5G customers, Open RAN (O-RAN) extends vRAN's design concepts and goals and is considered the future. O-RAN disaggregates the network, giving network operators more flexibility in how their networks are built and the benefits of interoperability. The trade-off for this flexibility is typically increased operational complexity, which incurs the additional cost of continuously testing, validating, and integrating 5G RAN system components that are now provided by a diverse set of suppliers.

Another aspect of this growing complexity is the need for denser networks. Although powerful, new 5G antennas and RAN gear required to attain maximum bandwidth cover substantially less distance than 4G macro cells operating at lower frequencies. This means similar coverage requires more 5G hardware and supporting software. Adding the essential gear for 5G networks can dramatically raise operational costs, but the hardware is only a portion of these costs. The expenses of maintaining a network include the time and money spent on configuration changes, testing, monitoring, repairs, and upgrades.

For most nationwide operators, Edge and RAN cell sites are widely deployed and geographically dispersed across the nation. As network densification increases, it becomes impractical to manually onboard thousands of servers across multiple sites. CSPs need a strategy for incorporating greater automation into their networks while continuing service operations, so that they can ensure robust connectivity, manage growing network complexity, and preserve cost efficiency without resorting to a complete "rip and replace" approach.

As CSPs migrate to an edge-computing architecture, a new set of requirements emerges. As workloads move closer to the network's edge, CSPs must still maintain ultra-high availability, often five to six nines (99.999% to 99.9999%). Legacy technology is incapable of attaining this degree of availability.

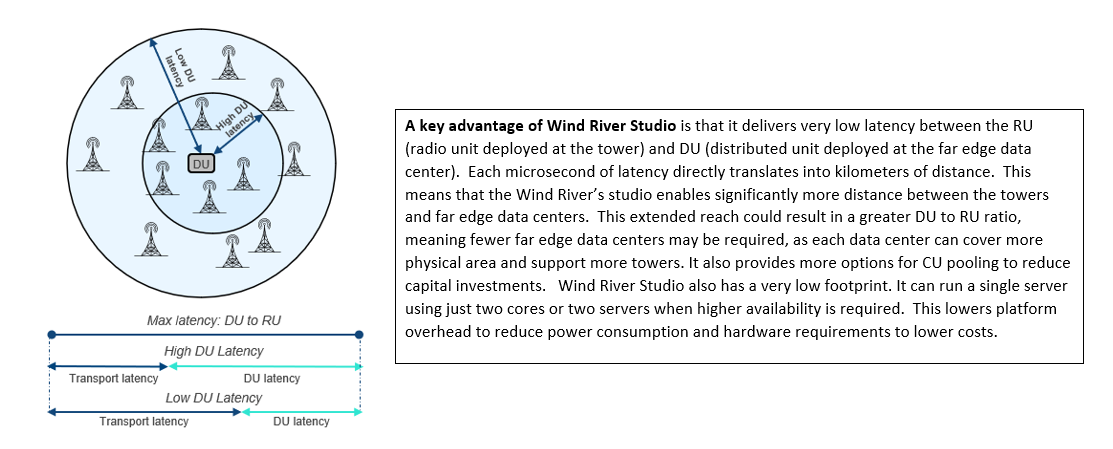

Scalability is another requirement, specifically the ability to scale down to a single node with a small footprint at the edge. When a single network reaches tens of thousands of cell sites, you simply cannot afford a significant physical footprint with many servers at each one. As a result, the need has grown for a new architecture that can scale up and down. As applications become more real-time, ultra-low latency at the edge is required. CSPs also need built-in lifecycle management to perform live software upgrades and manage this environment. Finally, CSPs are demanding more and more open-source software for their networks. Wind River Studio addresses each of these network issues.

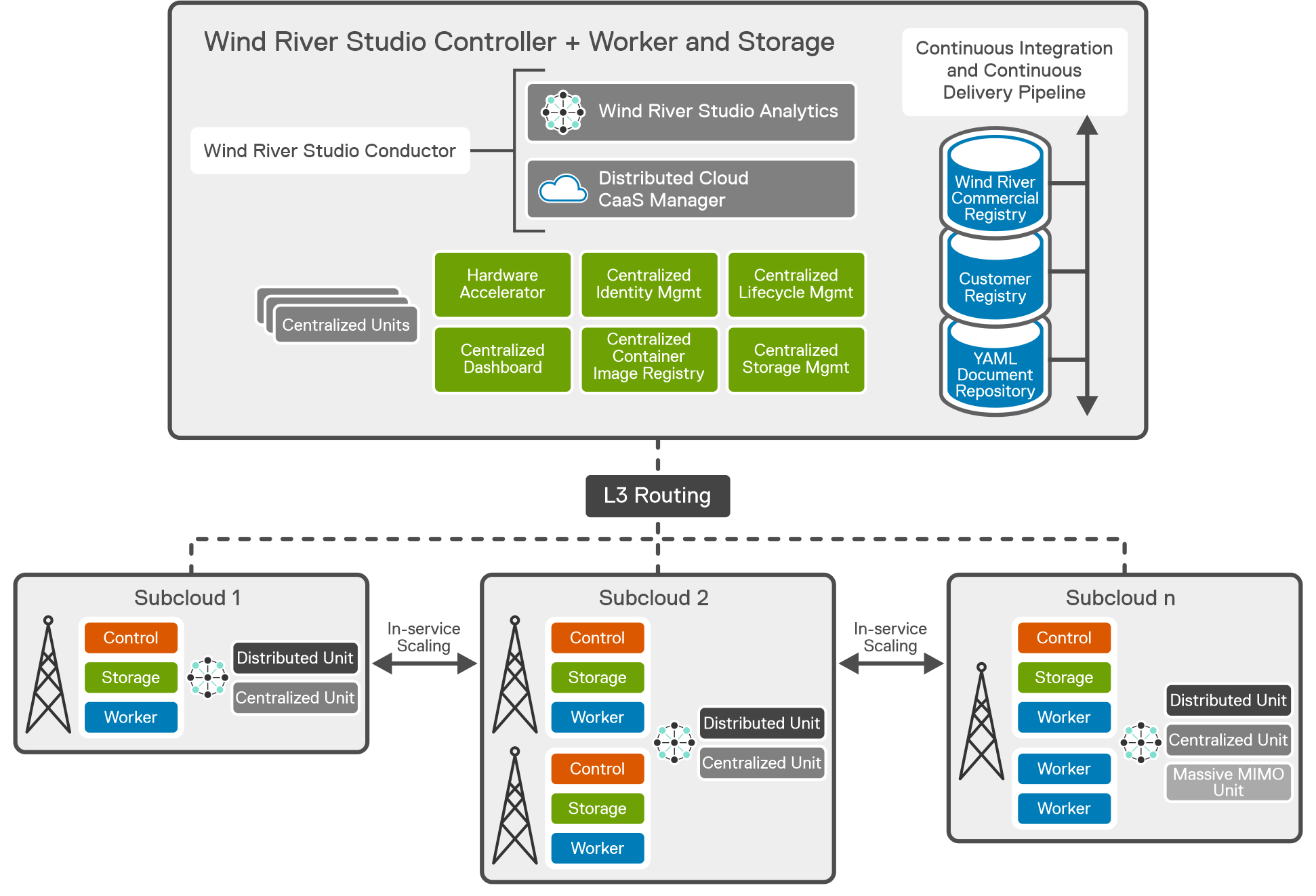

Wind River Studio Cloud Platform, which is the StarlingX project with commercial support, provides a production-grade distributed Kubernetes solution for managing edge cloud infrastructure. In addition to the Kubernetes-based Wind River Studio Cloud Platform, Studio also provides orchestration (Wind River Studio Conductor) and analytics (Wind River Studio Analytics) capabilities so operators can deploy and manage their intelligent 5G edge networks globally.

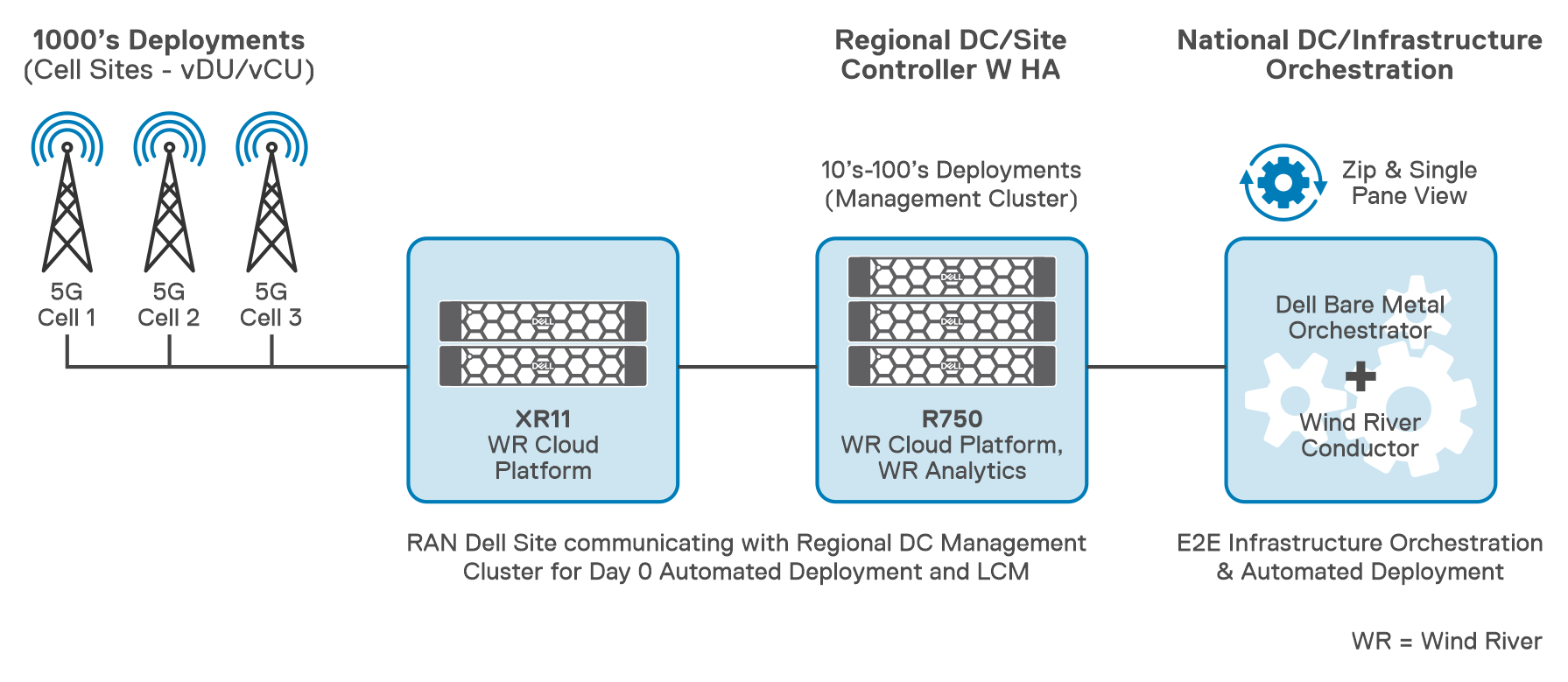

Mobile Network Operators who adopt vRAN and Open RAN must integrate cloud platform software on optimized and tuned hardware to create a cloud platform for vRAN and Open RAN applications. Dell and Wind River have worked together to create a fully engineered, pre-integrated solution designed to streamline 5G vRAN and Open RAN design, deployment, and lifecycle management. Dell Telecom Infrastructure Blocks for Wind River integrate Dell Bare Metal Orchestrator (BMO) and Wind River Studio on Dell PowerEdge servers to provide factory-integrated building blocks for deploying ultra-low latency vRAN and Open RAN networks with centralized, zero-touch provisioning and management capabilities.

Key Advantages:

- Reduces the complexity of integration and lifecycle management in a highly distributed, disaggregated network, lowering operating costs, reducing the time to deploy new services, and accelerating innovation.

- Dell's comprehensive, factory-integrated solution simplifies supply chain management by reducing the number of components and suppliers needed to build out the network. It also optimizes backhaul use by preloading all software needed for Day 0 and Day 1 automation.

- It has been thoroughly tested and includes design guidance for building and scaling out a network that provides low latency, redundancy, and High Availability (HA) for carrier-grade RAN Applications.

- Simplified support with Dell providing single contact support for the whole stack including all hardware and software from Dell and Wind River with an option for carrier-grade support.

- Reduces the total cost of ownership (TCO) for CSPs by deploying a fully integrated, validated, and production-ready vRAN/O-RAN cloud infrastructure solution with a smaller footprint, low latency, and operational simplicity.

Wind River Studio Cloud Platform Architecture

The Distributed Cloud configuration of Wind River Studio Cloud Platform supports edge computing by providing central management and orchestration for a geographically distributed network of cloud platform systems. It offers easy installation, with support for complete Zero Touch Provisioning of the entire cloud, from the Central Region to all the sub-clouds.

The architecture features a synchronized distributed control plane for reduced latency. The control plane is autonomous, so all sub-cloud local services remain operational even during a loss of northbound connectivity to the Central Region (the location of the distributed cloud system controller cluster). This is important because it allows Studio Cloud Platform to scale horizontally or vertically, independently of the main cloud in the regional data center (RDC) or the national data center (NDC).

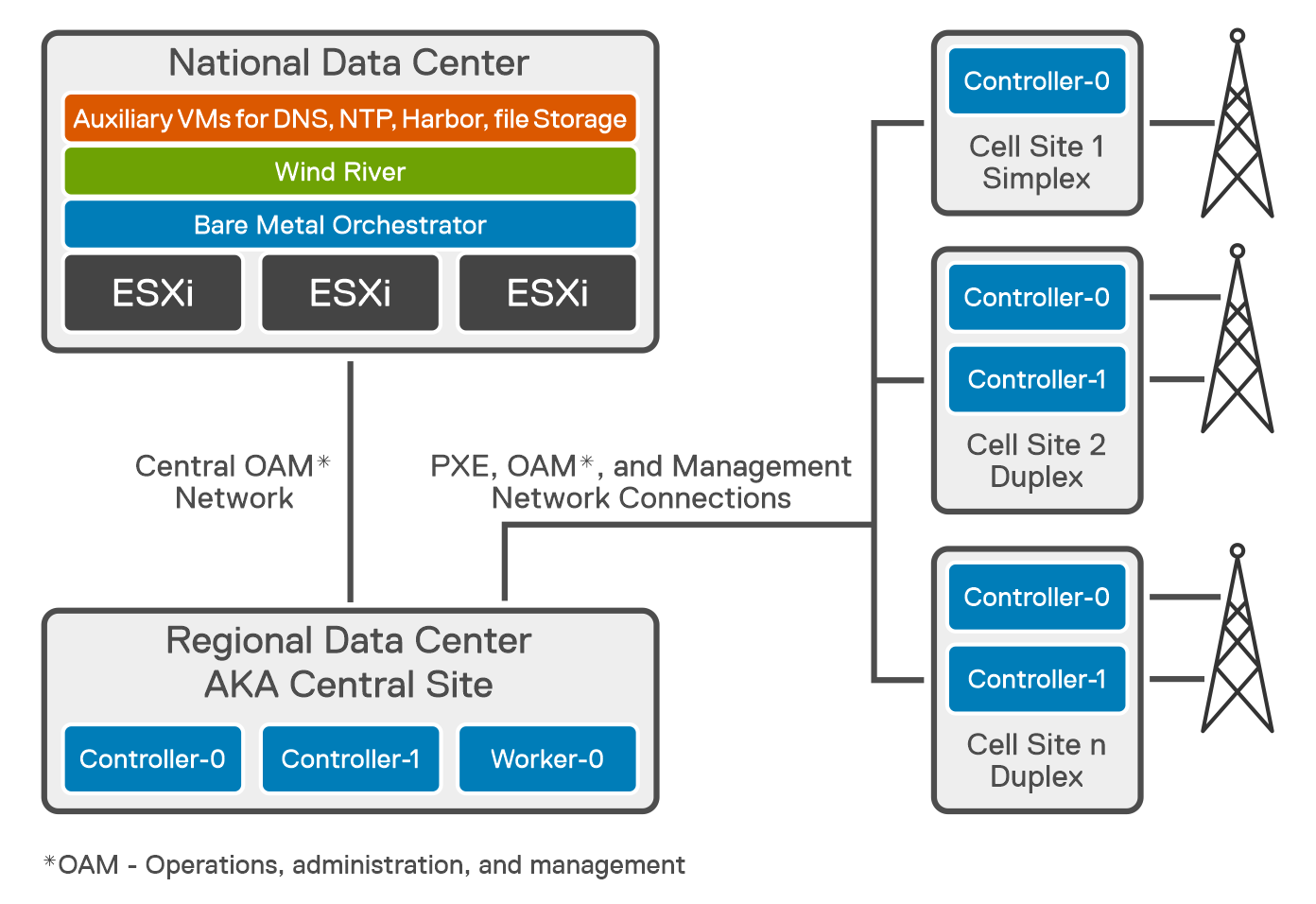

Cell sites, or sub-clouds, are geographically dispersed edge sites of varying sizes. Dell Telecom Infrastructure Blocks for Wind River cell site installations can be All-in-One Simplex (AIO-SX), All-in-One Duplex (AIO-DX), or AIO-DX + workers. A typical AIO-SX deployment needs at least one server in a sub-cloud. Remote worker sites running Bare Metal Orchestrator are where sub-clouds are set up.

- AIO-SX (All-In-One Simplex) - A complete hyper-converged cloud with no HA. The cloud platform itself has an ultra-low overhead of 2 physical cores, 64 GB of memory, and 500 GB of disk; the rest of the CPU cores, memory, and disk are available to applications.

- AIO-DX (All-In-One Duplex) - Same as AIO-SX except that it runs on 2 servers to provide High Availability (HA) of up to six nines.

- AIO-DX (All-In-One Duplex) +Workers - Two nodes plus a set of worker nodes (starting small and growing as workload demands increase)
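Availability targets quoted in "nines" are easier to reason about as a yearly downtime budget. The short calculation below converts one into the other; the function name is ours, and the figures follow directly from the definition of availability rather than from any product measurement.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000 seconds in a non-leap year


def downtime_budget_seconds(nines: int) -> float:
    """Yearly downtime allowed by an availability target of `nines` nines."""
    unavailability = 10.0 ** -nines  # for example, 5 nines -> 0.00001
    return SECONDS_PER_YEAR * unavailability


for nines in (5, 6):
    print(f"{nines} nines: about {downtime_budget_seconds(nines):.1f} seconds per year")
```

Five nines allows roughly 5.3 minutes of downtime per year, while six nines allows only about 32 seconds, which is why a single-server AIO-SX site cannot offer HA and the duplex configurations exist.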

The Central Site at the RDC is deployed as a standard cluster across three Dell PowerEdge R750 servers, two of which are controller nodes and one of which is a worker node. The Central Site, also known as the system controller, provides orchestration and synchronization services for up to 1000 distributed sub-clouds, or cell sites. Controller-0, Controller-1, and Worker-0 through Worker-n are the nodes in the system. To implement AIO-DX, both Controller-0 and Controller-1 are required.

Wind River Studio Conductor runs in the National Data Center (NDC) as an orchestrator and infrastructure automation manager. It integrates with Dell's Bare Metal Orchestrator (BMO) to provide complete end-to-end automation for the full hardware and software stack. Additionally, it provides a centralized point of control for managing and automating application deployment in an environment that is large-scale and distributed.

Studio Conductor receives information from Bare Metal Orchestrator as new cell sites come online. Studio Conductor then instructs the system controller (CaaS manager) to install, bootstrap, and deploy Studio Cloud Platform at the cell sites. It supports blueprint modeling based on TOSCA (Topology and Orchestration Specification for Cloud Applications). (Blueprints are policies that enable orchestration modeling.) Studio Conductor uses blueprints to map services to all distributed clouds and determine the right place to deploy them. It also includes built-in secret storage to securely store passwords and keys internally, reducing threat opportunities.
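The idea of mapping a service to "the right place to deploy" can be pictured as filtering an inventory of sub-clouds against a service's requirements. The sketch below is only an illustration of that idea: it does not use TOSCA syntax or any Studio Conductor API, and the `SubCloud` fields, the `eligible_sub_clouds` helper, and the requirement names are all hypothetical.

```python
from dataclasses import dataclass

HA_DEPLOYMENTS = {"AIO-DX", "AIO-DX+workers"}  # AIO-SX runs on one server, so no HA


@dataclass
class SubCloud:
    name: str
    deployment: str   # "AIO-SX", "AIO-DX", or "AIO-DX+workers"
    free_cores: int   # cores left for applications after platform overhead
    online: bool


def eligible_sub_clouds(sub_clouds, min_cores: int, needs_ha: bool) -> list:
    """Names of sub-clouds that satisfy a service's (hypothetical) requirements."""
    return [
        sc.name
        for sc in sub_clouds
        if sc.online
        and sc.free_cores >= min_cores
        and (sc.deployment in HA_DEPLOYMENTS or not needs_ha)
    ]


inventory = [
    SubCloud("cell-001", "AIO-SX", free_cores=30, online=True),
    SubCloud("cell-002", "AIO-DX", free_cores=60, online=True),
    SubCloud("cell-003", "AIO-DX+workers", free_cores=8, online=True),
    SubCloud("cell-004", "AIO-DX", free_cores=60, online=False),
]
print(eligible_sub_clouds(inventory, min_cores=16, needs_ha=True))  # ['cell-002']
```

A real orchestrator weighs many more factors, but the principle is the same: requirements are declared once and evaluated against every site automatically.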

Studio Conductor can adapt and integrate with existing orchestration solutions. The plug-in architecture allows it to accommodate new and old technologies, so it can easily be extended to accommodate evolving requirements.

Wind River Studio Analytics is an integrated data collection, monitoring, analysis, and reporting tool used to optimize distributed network operations. Studio Analytics specifically solves a unique use case for the distributed edge. It provides visibility and operational insights into the Studio Cloud Platform from a Kubernetes and application workload perspective. Studio Analytics has a built-in alerting system with the ability to integrate with several third-party monitoring systems. Studio Analytics uses technology from Elastic.co as a foundation to take data reliably and securely from any source and format, then search, analyze, and visualize it in real time. Studio Analytics also uses the Kibana product from Elastic as an open user interface to visually display the data in a dashboard.

Dell Telecom Multi-Cloud Foundation Infrastructure Blocks provide a validated, automated, and factory-integrated engineered system that paves the way from zero-touch deployment of 5G telco cloud infrastructure to the operation and lifecycle management of vRAN and Open RAN sites. Together, these contribute to a high-performing network and lessen the cost, time, complexity, and risk of deploying and maintaining a telco cloud for the delivery of 5G services.

To learn more about our solution, please visit the Dell Telecom Multi-Cloud Foundation solutions site.

Authored by:

Gaurav Gangwal

Senior Principal Engineer – Technical Marketing, Product Management

Distribution of 5G Core to Network Edge

Mon, 06 May 2024 12:52:39 -0000

Thus far in our blog series, we have discussed migrating to an open cloud-native ecosystem, the 5G Core and its architecture, and how Dell Telecom Infrastructure Block for Red Hat can help simplify 5G network deployment for Red Hat® OpenShift®. Now, we would like to introduce a key use case for distributing 5G core User Plane functions from the centralized data center to the network edge.

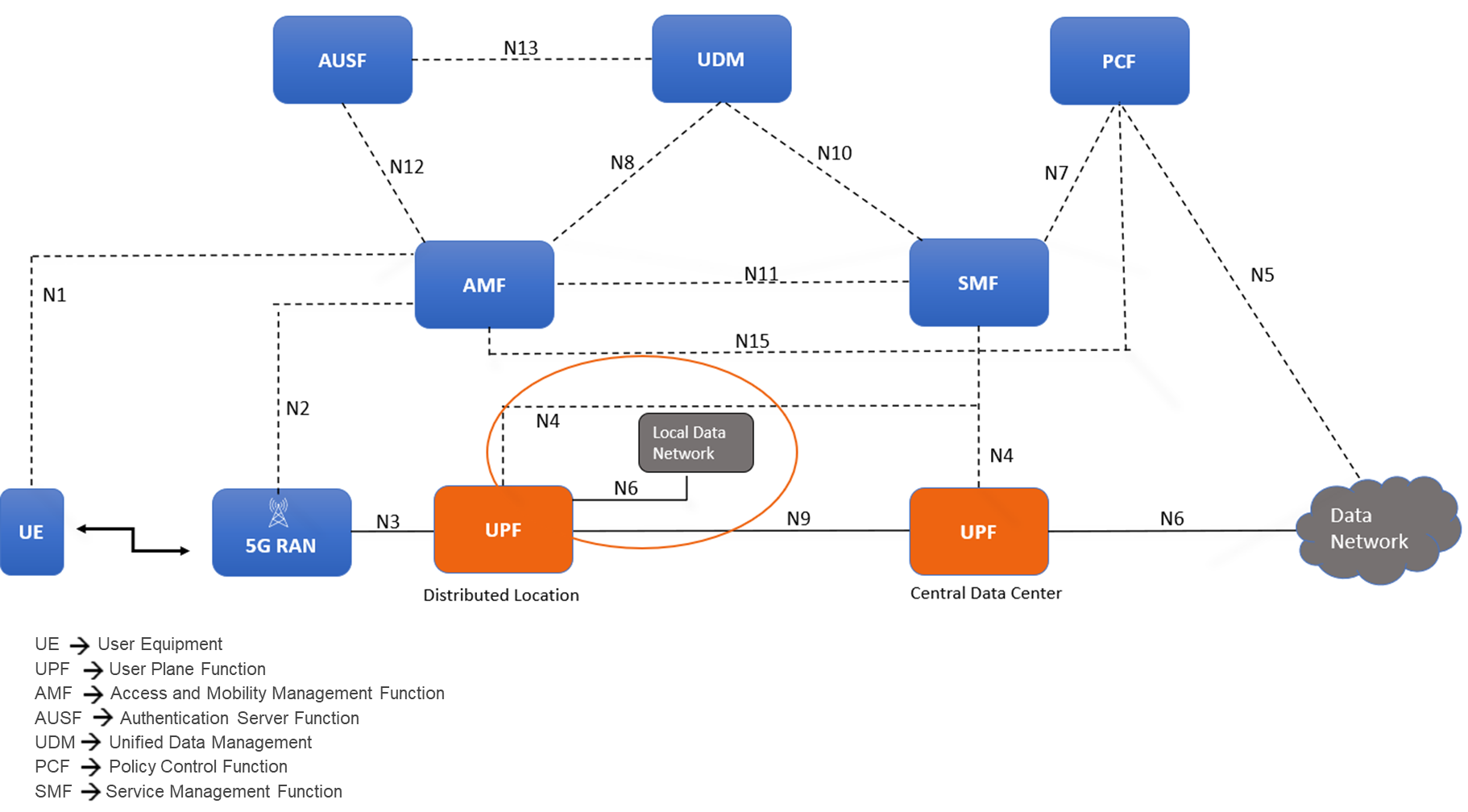

Distributing Core Functions in 5G networks

The evolution of communication technology has brought us to the era of 5G networks, promising faster speeds, lower latency, and the ability to connect billions of devices simultaneously. However, to achieve these ambitious goals, the architecture of 5G networks needs to be more flexible, scalable, and efficient than ever before. With the advent of CUPS, or Control and User Plane Separation, in later LTE releases, the telecommunications industry had high expectations for a prototypical distributed control-user plane architecture. This development was seen as a stepping stone towards the more advanced 5G networks that were on the horizon. CUPS aimed to separate the control plane and user plane functionalities: the Control Plane (specifically the Session Management Function, or SMF) is typically centralized, while the User Plane Function (UPF) can be located alongside the Control Plane or distributed to other locations in the network as demanded by specific use cases.

Understanding the need for Distributed UPF

The UPF is a key component in 5G networks, responsible for handling user data traffic. Distributed User Plane Function (D-UPF) is an advanced network architecture that distributes the UPF functionality across multiple nodes closer to the user and enables local breakout (LBO) for use cases that require lower latency or more privacy, creating a more scalable and flexible networking environment. With D-UPF, operators can handle increasing data volumes, reduce latency, and enhance overall network performance. By distributing the UPF, operators can manage the increasing data demands of different consumer and enterprise use cases in a cost-effective manner.

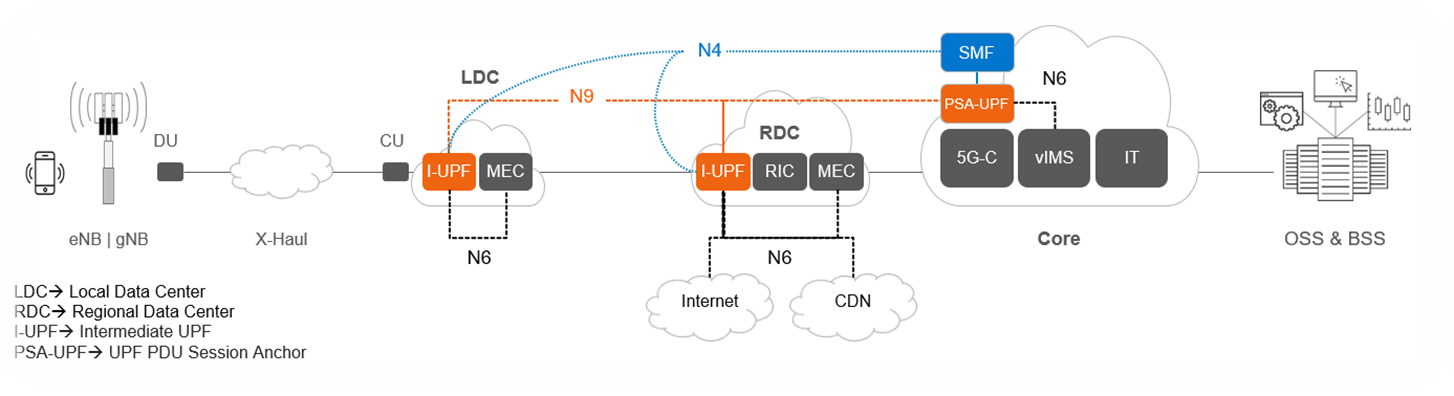

Figure 1: Distributed User Plane function in 5G Core Architecture

D-UPF also plays a crucial role in enabling edge computing in 5G networks. By distributing the user plane traffic closer to the network edge, D-UPF reduces the latency associated with data transmission to and from centralized data centers. This opens opportunities for real-time applications, such as autonomous vehicles, augmented reality, and industrial automation, where low latency is critical for their proper functioning.
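A rough calculation shows why distance matters for latency. Light travels through optical fiber at roughly 200 km per millisecond, so propagation delay alone sets a floor on round-trip time. The distances below are hypothetical, and the sketch ignores queuing, switching, and processing delays.

```python
FIBER_KM_PER_MS = 200.0  # light covers roughly 200 km per millisecond in optical fiber


def round_trip_ms(distance_km: float) -> float:
    """Round-trip propagation delay over fiber, ignoring queuing and processing."""
    return 2 * distance_km / FIBER_KM_PER_MS


central_km, edge_km = 500.0, 10.0  # hypothetical distances to each UPF
print(round_trip_ms(central_km))  # 5.0
print(round_trip_ms(edge_km))     # 0.1
```

Under these assumptions, moving the UPF from a data center 500 km away to an edge site 10 km away cuts the propagation floor from 5 ms to 0.1 ms, before any other optimization.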

Distributed UPF deployment options

Figure 2: D-UPF deployment and functionality

The above diagram provides an overview of the different roles D-UPF may play in a 5G architecture. For example:

- Centralized UPF/PSA-UPF: In the simplest scenario, the UPF is centralized, and session anchoring occurs within the data center, which takes care of long-term stable IP address assignment. One example is a VoLTE/NR call, where the PDU Session Anchor (PSA) UPF steers traffic to the IMS.

- Intermediate UPF (I-UPF): An intermediate UPF (I-UPF) can be inserted on the User Plane path between the RAN and a PSA. Here are two possible reasons to do that:

  - If, due to mobility, the UE moves to a new RAN node that cannot support an N3 tunnel to the old PSA, an I-UPF is inserted. This I-UPF has an N3 interface towards the RAN and an N9 interface towards the PSA UPF. Linking multiple UPFs in this way is called UPF chaining, which directs user data flows through a series of UPFs, each performing specific functions.

  - You might want to deploy a UPF within the local data center or edge for a low-latency use case, either to steer data traffic to a co-deployed MEC for edge services or to break out traffic to the local data network.
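The mobility case for inserting an I-UPF can be sketched as a simple path decision: use a direct N3 tunnel when the RAN node can reach the PSA, otherwise chain through an I-UPF over N3 and N9. The node names, the `n3_reachable` map, and the `user_plane_path` helper are hypothetical; in a real network the SMF makes this selection.

```python
def user_plane_path(ran_node, psa_upf, n3_reachable, i_upf=None):
    """Return the user-plane path as (source, interface, destination) hops."""
    reachable = n3_reachable.get(ran_node, set())
    if psa_upf in reachable:
        # The RAN node can terminate N3 directly on the session anchor.
        return [(ran_node, "N3", psa_upf)]
    if i_upf is None or i_upf not in reachable:
        raise ValueError(f"no I-UPF reachable over N3 from {ran_node}")
    # Chain through the I-UPF: N3 towards the RAN, N9 towards the PSA.
    return [(ran_node, "N3", i_upf), (i_upf, "N9", psa_upf)]


# Hypothetical topology: which UPFs each RAN node can reach over N3.
n3_reachable = {"gnb-old": {"psa-upf-1"}, "gnb-new": {"i-upf-edge"}}

print(user_plane_path("gnb-old", "psa-upf-1", n3_reachable))
print(user_plane_path("gnb-new", "psa-upf-1", n3_reachable, i_upf="i-upf-edge"))
```

The session anchor, and therefore the UE's IP address, stays the same in both cases; only the path to reach it changes.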

Challenges and considerations for D-UPF deployments

Now that we have reviewed the need for D-UPF and the different deployment scenarios, let’s consider some of the obstacles you will encounter along the way. As we all know, these network functions have their own needs, especially when it comes to the amount of data being inspected, routed, and forwarded from the core to the edge and back again. Below are four areas for consideration:

- Resource Constraints: Edge or remote locations often have limited physical space available for deploying network equipment. The challenge lies in accommodating the necessary hardware, cooling systems, and other infrastructure within these space-constrained environments. Remote locations may also have limited or unreliable power supply infrastructure. As UPFs are extended to the edge, it becomes important to choose infrastructure that offers power efficiency, high density, serviceability, a ruggedized exterior, and form factors optimized for the edge.

- Performance Requirements: Low-latency infrastructure is critical to ensure real-time responsiveness and a seamless user experience when deploying core functions to the edge. Processing data at the network edge with minimal latency also reduces the need for high-bandwidth links to carry data to the centralized core, which helps optimize network bandwidth and lower operating costs. Ultimately, this reduces the CSP’s reliance on centralized core infrastructure for time-sensitive operations.

- Orchestration and Automation: Deploying and managing UPFs distributed across edge locations is a complex challenge. This includes tasks such as workload placement, resource allocation, and automated management of edge infrastructure. Choosing a horizontal telco cloud platform that supports automated distributed core deployment and provides the capability to expand and scale the compute and storage resources to accommodate the varying demands at the edge is a must.

- Lifecycle Management and Operating Cost: Another significant factor is the increased cost of first deploying and then operating remote sites. The large number of these locations, coupled with their limited accessibility, makes them more expensive to construct and maintain. To address this, zero-touch provisioning at network setup and sustainable lifecycle management are necessary to optimize the economics of the edge.

The Horizontal Cloud Platform: Dell Telecom Infrastructure Blocks

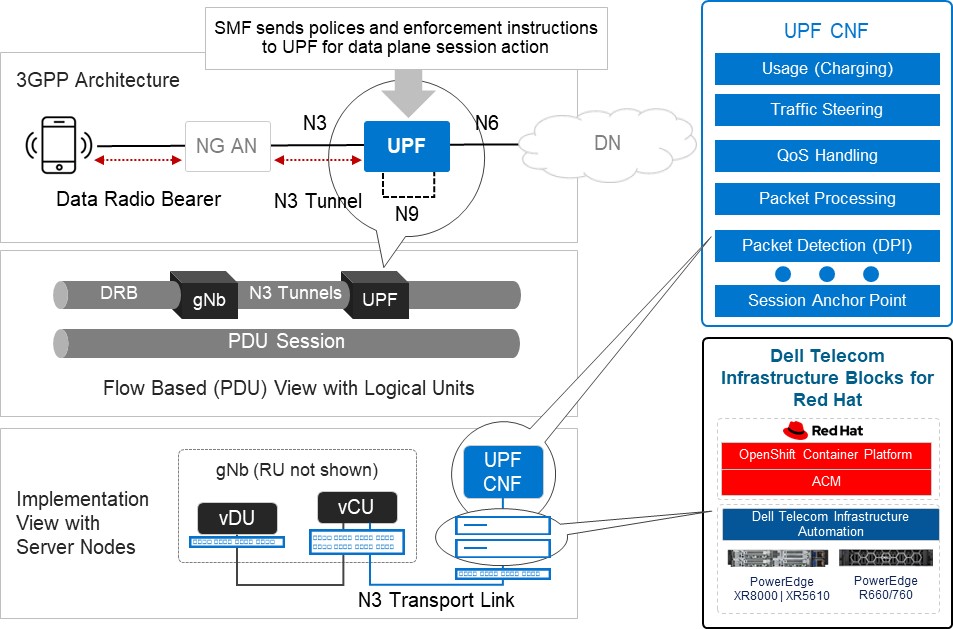

Figure 3: An Implementation View of Dell Telecom Infrastructure Blocks for Red Hat running the UPF

Dell Technologies is at the forefront of providing cutting-edge cloud-native solutions for the 5G telecom industry. As discussed in our previous blog, Telecom Infrastructure Blocks for Red Hat is one of those solutions, helping operators break down technology silos and empowering them to deploy a common cloud platform from Core to Edge to RAN. These are engineered systems, based on high-performance telecom edge-optimized Dell PowerEdge servers, that have been pre-validated and integrated with Red Hat OpenShift ecosystem software. This makes them a perfect solution for tackling the D-UPF challenges outlined in this blog.

- Resource Constraints:

  - Space-efficient, modular server options designed for telecom environments, such as the Dell PowerEdge XR8000 series servers, allow providers to mix and match components based on workload needs. They can run multiple workloads, such as CU/DU and UPF, in the same chassis.

  - Smart cooling designs support harsh edge environments and keep systems at optimal temperatures without using more energy than is needed.[1]

  - Rugged and flexible server options that are less than half the length of traditional servers and offer front or rear connectivity make installation in small enclosures at the base of cell towers easier.[2]

- Performance Requirements:

- Orchestration and Automation:

  - A horizontal cloud stack platform, engineered on Red Hat OpenShift, allows operators to pool resources to meet changing workload requirements. This is achieved by automating server discovery, creating and maintaining a server inventory, and adding the ability to configure and reconfigure the full hardware and software stack as workload requirements evolve.

  - Servers leverage dynamic resource allocation to ensure that computing resources are allocated precisely where and when they are needed. This real-time optimization minimizes resource waste and maximizes network efficiency.[3]

- Lifecycle Management and Operating Cost:

  - Dell Telecom Infrastructure Automation software is included as a fundamental component to automate deployment and lifecycle management.

  - The solution is backed by a unified support model from Dell, with options that meet carrier-grade SLAs. CSPs do not have to worry about multi-vendor management for cloud infrastructure support (for both the hardware and the cloud platform software), because Dell becomes the single point of contact for the telco cloud platform and works with its partners to resolve issues.

Summary

In summary, the need for D-UPF in 5G networks arises from the requirements of handling massive data volumes, improving network efficiency, reducing latency, enabling edge computing, and supporting advanced 5G services. Selecting among the possible deployment scenarios requires an infrastructure that can meet your changing objectives today and has the flexibility and scalability to see you through your long-term goals. For example, you can host and manage a content delivery network (CDN) at the network edge, where Dell Telecom Infrastructure Blocks for Red Hat can also serve as a telco cloud building block. By implementing this engineered telco cloud platform solution from Dell, we believe CSPs will be able to streamline the process and reduce the costs associated with deploying and maintaining UPFs across edge locations.

To learn more about Telecom Infrastructure Blocks for Red Hat, visit our website Dell Telecom Multicloud Foundation solutions.

[1] Source: ACG Report, “The TCO Benefits of Dell’s Next-Generation Telco Servers”, February 2023

[2] Source: Dell Technologies, “Introducing New Dell OEM PowerEdge XR Servers”, March 2023

[3] Source: Dell Technologies, “Competing in the new world of Open RAN in Telecom”, February 2024