What is Happening in the Network Edge

Mon, 26 Jun 2023 10:59:44 -0000

Where is the Network Edge in Mobile Networks

The notion of ‘Edge’ can take on different meanings depending on the context, so it’s important to first define what we mean by Network Edge. The Edge can be broadly classified into two categories: Enterprise Edge and Network Edge. The former refers to infrastructure hosted by the company using the service, while the latter refers to infrastructure hosted by the Mobile Network Operator (MNO) providing the service.

This article focuses on the Network Edge, which can be located anywhere from the Radio Access Network (RAN) to next to the Core Network (CN). Network Edge sites collocated with the RAN are often referred to as Far Edge.

What is in the Network Edge

In a 5G Standalone (5G SA) Network, a Network Edge site typically contains a cloud platform that hosts a User Plane Function (UPF) to enable local breakout (LBO). It may include a suite of consumer and enterprise applications, for example, those that require lower latency or more privacy. It can also benefit the transport network when large content such as Video-on-Demand is brought closer to the end users.

Modern cloud platforms are envisioned to be open and disaggregated to enable MNOs to rapidly onboard new applications from different Independent Software Vendors (ISVs), thus accelerating technology adoption. These modern cloud platforms are typically composed of Commercial-off-the-Shelf (COTS) hardware, multi-tenant Container-as-a-Service (CaaS) platforms, and multi-cloud Management and Orchestration solutions.

Similarly, modern applications are designed to be cloud-native to maximize service agility. By having microservices architectures and supporting containerized deployments, MNOs can rapidly adapt their services to meet changing market demands.

What contributes to Network Latency

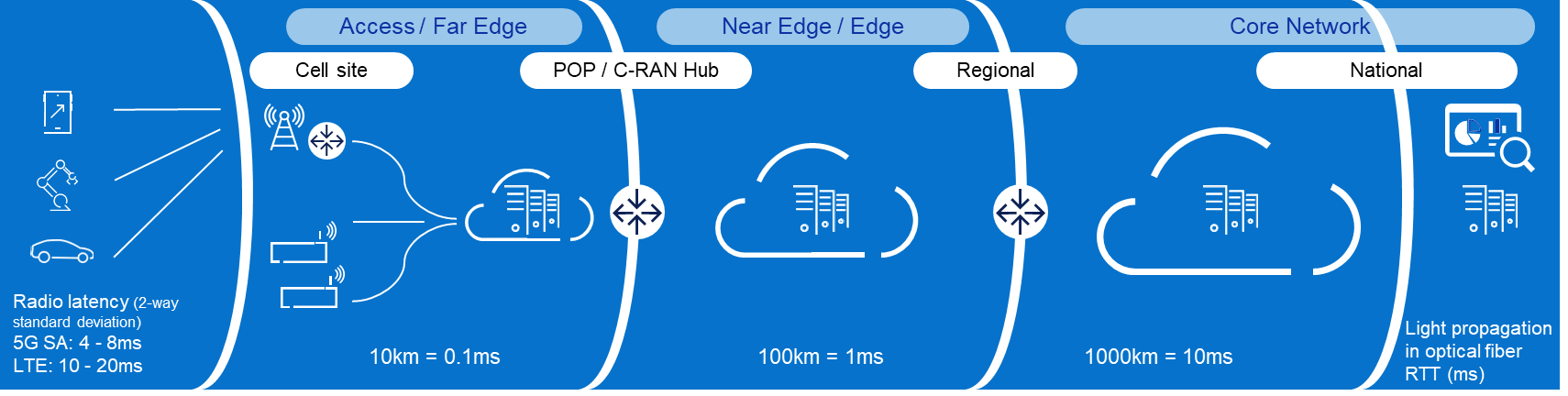

The appeal of Network Edge or Multi-access Edge Computing (MEC) is commonly associated with lower latency or more privacy. While moving applications from beyond the CN to near the RAN does eliminate up to tens of milliseconds of delay, it is also important to understand that there are many other contributors to network latency which can be optimized. In fact, latency is added at every stage from the User Equipment (UE) to the application and back.

RAN is typically the biggest contributor to network latency and jitter, the latter being a measure of fluctuations in delay. Accordingly, 3GPP has introduced many enhancements in 5G New Radio (5G NR) to reduce latency and jitter in the air interface. There are three primary categories where latency can be reduced:

- Transmission time: reduce symbol duration with higher subcarrier spacing or with mini slots

- Waiting time: improve scheduling (optimize handshaking), simultaneous transmit/receive, and uplink/downlink switching with TDD

- Processing time: reduce UE and gNB processing and queuing with enhanced coding and modulation
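The transmission-time item above can be made concrete with the 5G NR numerology, where the subcarrier spacing is 15 kHz × 2^μ and a slot of 14 symbols (normal cyclic prefix) lasts 1 ms / 2^μ. The following is a minimal sketch; the function names are illustrative, and the mini-slot duration is approximated as a simple fraction of the slot, ignoring small differences in cyclic prefix length between symbols.

```python
SYMBOLS_PER_SLOT = 14  # normal cyclic prefix


def subcarrier_spacing_khz(mu: int) -> int:
    """Subcarrier spacing for NR numerology mu: 15 kHz * 2**mu."""
    if mu < 0:
        raise ValueError("mu must be non-negative")
    return 15 * 2 ** mu


def slot_duration_ms(mu: int) -> float:
    """Slot duration for NR numerology mu: 1 ms / 2**mu."""
    if mu < 0:
        raise ValueError("mu must be non-negative")
    return 1.0 / (2 ** mu)


def mini_slot_duration_ms(mu: int, symbols: int) -> float:
    """Approximate duration of a mini slot spanning `symbols` OFDM symbols.

    Approximation: every symbol is treated as 1/14 of the slot.
    """
    if not 1 <= symbols <= SYMBOLS_PER_SLOT:
        raise ValueError("symbols must be between 1 and 14")
    return slot_duration_ms(mu) * symbols / SYMBOLS_PER_SLOT


if __name__ == "__main__":
    for mu in range(4):
        print(
            f"mu={mu}: {subcarrier_spacing_khz(mu)} kHz, "
            f"slot {slot_duration_ms(mu):.3f} ms, "
            f"2-symbol mini slot {mini_slot_duration_ms(mu, 2):.4f} ms"
        )
```

Doubling the subcarrier spacing halves the slot duration, and a mini slot shortens the transmission further without changing the numerology.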

Transport latency is relatively simple to understand as it is mainly due to light propagation in optical fiber. The industry rule of thumb is 1 millisecond round trip latency for every 100 kilometers. The number of hops along the path also impacts latency as every transport equipment adds a bit of delay.
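As a rough illustration of this rule of thumb, the sketch below estimates transport round-trip latency from fiber distance and hop count. The per-hop delay is a hypothetical input, not a figure from this article; real equipment delay varies by vendor and configuration.

```python
def fiber_round_trip_ms(distance_km: float, hops: int = 0, per_hop_ms: float = 0.0) -> float:
    """Estimate transport round-trip latency in milliseconds.

    Uses the rule of thumb of 1 ms round trip per 100 km of fiber.
    `per_hop_ms` is a hypothetical one-way delay added by each piece of
    transport equipment along the path.
    """
    if distance_km < 0 or hops < 0 or per_hop_ms < 0:
        raise ValueError("inputs must be non-negative")
    propagation_ms = distance_km / 100.0   # 1 ms RTT per 100 km
    equipment_ms = 2 * hops * per_hop_ms   # each hop is crossed in both directions
    return propagation_ms + equipment_ms


if __name__ == "__main__":
    # Example: a site 250 km away, reached over 4 hops of 0.05 ms each (assumed values).
    print(f"{fiber_round_trip_ms(250, hops=4, per_hop_ms=0.05):.2f} ms")
```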

Typically, CN adds less than 1 millisecond to the latency. The challenge for the CN is more about keeping the latency low for mobile UEs, by seamlessly changing anchors to the nearest Edge UPF through a new procedure called ‘make before break’. Also, the UPF architecture and Gi/SGi services (e.g., Deep Packet Inspection, Network Address Translation, and Content Optimization) may add a few additional milliseconds to the overall latency, depending on whether these functions are integrated or independent.

Architectural and Business approaches for the Network Edge

The physical locations that host RAN and Network Edge functionalities are widely recognized to be some of the MNOs’ most valuable assets. Few other entities today have the real estate and associated infrastructure (e.g., power, fiber) to bring cloud capabilities this close to the end clients. Consequently, monetization of the Network Edge is an important component of most MNOs’ strategy for maximizing their investment in the mobile network and, specifically, in 5G. In almost all cases, the Network Edge monetization strategy includes making Network Edge available for Enterprise customers to use as an “Edge Cloud.” However, doing so involves making architectural and business model choices across several dimensions:

- Connectivity or Cloud: should the MNO offer a cloud service or just the connectivity to a cloud service provided by a third party (and potentially hosted at a third party’s site).

- aaS model: in principle, the full range of as-a-Service models is available to the MNO to offer at the network edge. This includes co-location services, Bare-Metal-as-a-Service, Infrastructure-as-a-Service (IaaS), Containers-as-a-Service (CaaS), and Platform and Software-as-a-Service (PaaS and SaaS). Going up this value chain (up being from co-lo to SaaS) allows the MNO to capture more of the value provided to the Enterprise. However, it also requires the MNO to take on significantly more responsibility and puts it in direct competition with well-established players in this space – e.g., the cloud hyperscale companies. The right mix of offerings – and it is invariably a mix – thus involves a complex set of technical and business case tradeoffs. The end result will be different for every MNO and how each arrives there will also be unique.

- Management framework: our industry’s initial approach to exposing the Network Edge to enterprises involved a management framework that is tightly coupled to how the MNO manages its network functions (e.g., the ETSI MEC family of standards). However, this approach comes with several drawbacks from an Enterprise point of view. As a result, a loosely coupled approach, where the Enterprise manages its Edge Cloud applications using typical cloud management solutions, appears to be gaining significant traction, with solutions such as Amazon’s Wavelength as an example. This approach, of course, has its own drawbacks, and managing the interplay between the two is an important consideration in Network Edge (and one that is intertwined with the selection of aaS model).

- Network-as-a-Service: a unique aspect of the Network Edge is the MNO’s ability to expose network information to applications as well as the ability to provide those applications with (highly curated) means of controlling the network. How and if this makes sense is again both an issue of the business case – for the MNO and the Enterprise – as well as a technical/architectural issue.

Certainly, the likely end state is a complex mixture of services and go-to-market models focused on the Enterprise (B2B) segment. The exposition of operational automation and the features of 5G designed to address this make it likely that this is a huge opportunity for MNOs. Navigating the complexities of this space requires a deep understanding of both what services the Enterprises are looking for and how they are looking to consume these. It also requires an architectural approach that can handle the variable mix of what is needed in a way that is highly scalable.

As the long-time leader in Enterprise IT services, Dell is uniquely positioned to address this space – stay tuned for more details in an upcoming blog!

Building the Network Edge

There are several factors to consider when moving workloads from central sites to edge locations. Limited space and power are at the top of the list. Edge locations are often far from the main cities and generally more exposed to the elements, which requires a new class of denser, easier-to-service, and even ruggedized form factors. Thanks to the popularity of Open RAN and Enterprise Edge, there are already solutions in the market today that can also be used for Network Edge. Read more in the Edge blog series: Computing on the Edge | Dell Technologies Info Hub.

Higher deployment and operating costs are another major factor. The sheer number of edge locations, combined with their degraded accessibility, makes them more expensive to build and maintain. The economics of the Network Edge thus necessitate automation and pre-integration. Dell’s answer is a newly engineered cloud-native solution with automated deployment and life-cycle management at its core. More on this approach here: Dell Telecom MultiCloud Foundation | Dell USA.

Last is the lower cost of running applications centrally. Central sites have the advantage of pooling compute and sharing facilities such as power, connectivity, and cooling. It is therefore important to reduce overhead wherever possible, such as opting for containerized over VM-based cloud platforms. Moreover, having an open and disaggregated horizontal cloud platform not only allows for multitenancy at edge locations, which significantly reduces overhead, but also enables application portability across the network to maximize efficiency.

The ideal situation is where Open/Cloud RAN and Network Edge share sites, thus splitting several of the deployment and operations costs. Due to the latency requirements, the Distributed Unit (DU) must be placed within 20 kilometers of the Radio Unit (RU). Latency requirements for the mid-haul interface between the DU and the Central Unit (CU) are less stringent, and the CU could be placed roughly 80-100 kilometers from the DU. In addition, the Near-Real Time Radio Intelligent Controller (Near-RT RIC) and the related xApps must be placed within 10 ms RTT. This makes it possible to collocate Network Edge sites with the CU sites and Near-RT RIC.
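The placement limits quoted above can be captured in a simple planning check. This is a minimal sketch, assuming the 20 km, 100 km, and 10 ms figures from the text as hard limits; the `SitePlan` type and `placement_violations` function are hypothetical names for illustration, and real planning would work from measured latency rather than distance alone.

```python
from dataclasses import dataclass
from typing import List

# Limits taken from the text above; treated here as hard limits for illustration.
MAX_RU_DU_KM = 20.0      # RU to DU distance
MAX_DU_CU_KM = 100.0     # DU to CU distance, upper end of the 80-100 km range
MAX_RIC_RTT_MS = 10.0    # round-trip time to the Near-RT RIC and its xApps


@dataclass
class SitePlan:
    """Hypothetical description of a candidate site layout."""
    ru_du_km: float
    du_cu_km: float
    ric_rtt_ms: float


def placement_violations(plan: SitePlan) -> List[str]:
    """Return a human-readable list of limits that the plan exceeds."""
    violations = []
    if plan.ru_du_km > MAX_RU_DU_KM:
        violations.append(f"RU-DU distance {plan.ru_du_km} km exceeds {MAX_RU_DU_KM} km")
    if plan.du_cu_km > MAX_DU_CU_KM:
        violations.append(f"DU-CU distance {plan.du_cu_km} km exceeds {MAX_DU_CU_KM} km")
    if plan.ric_rtt_ms > MAX_RIC_RTT_MS:
        violations.append(f"Near-RT RIC RTT {plan.ric_rtt_ms} ms exceeds {MAX_RIC_RTT_MS} ms")
    return violations


if __name__ == "__main__":
    candidate = SitePlan(ru_du_km=15, du_cu_km=90, ric_rtt_ms=4)
    problems = placement_violations(candidate)
    print("OK to collocate" if not problems else "\n".join(problems))
```

A plan with no violations is a candidate for collocating the Network Edge with the CU and Near-RT RIC.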

Future

Over the past few years, several MNOs have already moved away from having 2-3 national DCs for their entire CN to deploying 5-10 regional DCs where some network functions such as the UPF are distributed. One example of this is AT&T’s dozen “5G Edge Zones”, which were introduced in major metropolitan areas: AT&T Launching a Dozen 5G “Edge Zones” Across the U.S. (att.com).

This approach already suffices for the majority of “low latency” use cases, and for smaller countries even the traditional 2-3 national DCs can offer sufficiently low transport latency. However, critical use cases with more stringent requirements need latency that is consistently very low. For these, moving the applications to the Far Edge sites becomes a necessity, in tandem with 5G SA enhancements such as network slicing and an optimized air interface.

The challenge with consumer use cases such as cloud gaming is supporting the required Service Level (i.e., low latency) countrywide. And since enabling the network to support this requires a substantial initial investment, we are seeing the classic chicken-and-egg problem where independent software vendors opt not to develop these more demanding applications while MNOs keep waiting for these “killer use cases” to justify the initial investment for the Network Edge. As a result, we expect geographically limited enterprise use cases to gain market traction first and serve as catalysts for initially limited Network Edge deployments.

For use cases where assured speeds and low latency are critical, end-to-end Network Slicing is essential. In order to adopt a new more service-oriented approach, MNOs will need Network Edge and low latency enhancements together with Network Slicing in their toolbox. For more on this approach and Network Slicing, please check out our previous blog To slice or not to slice | Dell Technologies Info Hub.

About the author: Tomi Varonen

Tomi Varonen is a Telecom Network Architect in Dell’s Telecom Systems Business Unit. He is based in Finland and works with Cloud, Core Network, and OSS&BSS customer cases in the EMEA region. Tomi has over 23 years of experience in the Telecom sector in various technical and sales positions. He has wide expertise in end-to-end mobile networks and enjoys creating solutions for new technology areas. He has a passion for outdoor activities with family and friends, including skiing, golf, and bicycling.

About the author: Arthur Gerona

Arthur is a Principal Global Enterprise Architect at Dell Technologies. He is working on the Telecom Cloud and Core area for the Asia Pacific and Japan region. He has 19 years of experience in Telecommunications, holding various roles in delivery, technical sales, product management, and field CTO. When not working, Arthur likes to keep active and travel with his family.

About the author: Alex Reznik

Alex Reznik is a Global Principal Architect in Dell Technologies’ Telco Solutions Business organization. In this role, he is focused on helping Dell’s Telco and Enterprise partners navigate the complexities of Edge Cloud strategy and turn the potential of 5G Edge transformation into the reality of business outcomes. Alex is a recognized industry expert in the area of edge computing and a frequent speaker on the subject. He is a co-author of the book "Multi-Access Edge Computing in Action." From March 2017 through February 2021, Alex served as Chair of ETSI’s Multi-Access Edge Computing (MEC) ISG – the leading international standards group focused on enabling edge computing in access networks.

Prior to joining Dell, Alex was a Distinguished Technologist in HPE’s North American Telco organization. In this role, he was involved in various aspects of helping Tier 1 CSPs deploy state-of-the-art flexible infrastructure capable of delivering on the full promises of 5G. Prior to HPE Alex was a Senior Principal Engineer/Senior Director at InterDigital, leading the company’s research and development activities in the area of wireless internet evolution. Since joining InterDigital in 1999, he has been involved in a wide range of projects, including leadership of 3G modem ASIC architecture, design of advanced wireless security systems, coordination of standards strategy in the cognitive networks space, development of advanced IP mobility and heterogeneous access technologies and development of new content management techniques for the mobile edge.

Alex earned his B.S.E.E. Summa Cum Laude from The Cooper Union, S.M. in Electrical Engineering and Computer Science from the Massachusetts Institute of Technology, and Ph.D. in Electrical Engineering from Princeton University. He held a visiting faculty appointment at WINLAB, Rutgers University, where he collaborated on research in cognitive radio, wireless security, and future mobile Internet. He served as the Vice-Chair of the Services Working Group at the Small Cells Forum. Alex is an inventor of over 160 granted U.S. patents and has been awarded numerous awards for Innovation at InterDigital.

Related Blog Posts

Cloud-native or Bust: Telco Cloud Platforms and 5G Core Migration

Thu, 25 Apr 2024 16:23:22 -0000

Breaking down barriers with an open, disaggregated, and cloud-native 5G Core

As 5G network rollouts accelerate, communication service providers (CSPs) around the world are shifting away from purpose-built, vertically integrated solutions in favor of open, disaggregated, and cloud-native architectures running containerized network functions. This allows them to take advantage of modern DevSecOps practices and an emerging ecosystem of telecom hardware and software suppliers delivering cloud-native solutions based on open APIs, open-source software, and industry-standard hardware to boost innovation, streamline network operations, and reduce costs.

To take advantage of the benefits of cloud-native architectures, many CSPs are moving their 5G Core network functions onto commercially available cloud native application platforms like Red Hat OpenShift, the industry's leading enterprise Kubernetes platform. However, building an open, disaggregated telco cloud for 5G Core is not easy and it comes with its own set of challenges that need to be tackled before large scale deployments.

In a disaggregated network, the system integration and support tasks become the CSP's responsibility. To achieve their objectives for 5G, they must:

- Accelerate the introduction and management of new technologies by simplifying and streamlining processes from Day 0 network design and integration tasks, to Day 1 deployment, to Day 2 lifecycle management and operations.

- Break down digital silos to deploy a horizontal cloud platform to reduce CapEx and OpEx while lowering power consumption.

- Deploy architectures and technologies that consistently meet strict telecom service level agreements (SLAs).

Digging into the challenges of deploying 5G Core network functions on cloud infrastructure

This will be a five-part blog series that addresses the challenges when deploying 5G Core network functions on a telco cloud.

- In this first blog, we will highlight CSPs’ key challenges as they migrate to an open, disaggregated, and cloud-native ecosystem;

- The next blog will explore the 3GPP 5G Core network architecture and its components;

- The third blog in the series will discuss how Dell Technologies is working with Red Hat to streamline operator processes from initial technology onboarding through Day 2 operations when deploying a telco cloud to support core network functions;

- The fourth blog will focus on distributing User Plane functionality from the centralized data center to the network edge, so operators can create a more scalable and flexible 5G network environment;

- The final blog in the series will discuss how Dell is integrating Intel technology that consistently meets CSP SLAs for 5G Core network functions.

Accelerating the introduction and simplifying the management of 5G Core network functions on cloud infrastructure

Cloud native architectures offer the potential to achieve superior performance, agility, flexibility, and scalability, resulting in easily updated, scaled, and maintained Core network functions with improved network performance and lower operational costs. Nevertheless, operating 5G Core network functions on a telco cloud can be difficult due to new challenges operators face in integration, deployment, lifecycle management, and developing and maintaining the right skill sets.

Different integration and validation requirements

Open multi-vendor cloud-native architectures require the CSP to take on more ownership of design, integration, validation, and management of many complex components, such as compute, storage, networking hardware, the virtualization software, and the 5G Core workload that runs on top. This increases the complexity of deployment and lifecycle management processes while requiring investment in development of new skill sets.

Complexity of the deployment process demands automation

5G Core deployment on a telco cloud platform can be a complex process that requires integrating multiple systems and components into a unified whole with automated deployment from the hardware up through the Core network functions. This complexity creates the need for automation that not only streamlines processes, but also ensures a consistent deployment or upgrade each time that aligns with established configuration best practices. Many CSPs may lack deployment experience with automation and cloud native tools, making this a difficult task.
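One small piece of such automation is checking that what was deployed matches the intended configuration. The sketch below is a generic illustration in Python, not a description of any Dell or Red Hat tooling; the configuration keys are hypothetical and stand in for whatever baseline a CSP defines.

```python
_MISSING = object()  # sentinel for keys present on only one side


def find_drift(baseline: dict, actual: dict, path: str = "") -> list:
    """Return dotted key paths where `actual` differs from `baseline`.

    Nested dictionaries are compared recursively; keys that exist on only
    one side are reported as drift as well.
    """
    drift = []
    for key in sorted(set(baseline) | set(actual)):
        full_key = f"{path}.{key}" if path else str(key)
        expected = baseline.get(key, _MISSING)
        found = actual.get(key, _MISSING)
        if isinstance(expected, dict) and isinstance(found, dict):
            drift.extend(find_drift(expected, found, full_key))
        elif expected != found:
            drift.append(full_key)
    return drift


if __name__ == "__main__":
    # Hypothetical baseline and observed settings for one server.
    baseline = {"bios": {"hyperthreading": True, "sriov": True}, "hugepages_gb": 32}
    actual = {"bios": {"hyperthreading": True, "sriov": False}, "hugepages_gb": 32}
    print(find_drift(baseline, actual))
```

Running the same comparison after every deployment or upgrade is one way to confirm that each site ends up in the same validated state.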

Lifecycle management and orchestration of a disaggregated 5G Core

The size and complexity of the 5G Core can make lifecycle management and orchestration challenging. Every change to one of the components starts a new validation cycle and increases the risk of introducing security vulnerabilities and configuration issues into the environment.

Lack of cloud-native skills and experience

Managing a telco cloud requires a different set of skills and expertise than operating traditional network environments. CSPs often need to acquire additional staff and invest in cloud native training and development to obtain the skills and experience to put cloud native principles into practice as they build, deploy, and manage cloud-native applications and services.

Breaking down vertical silos with a horizontal, 5G telco cloud platform

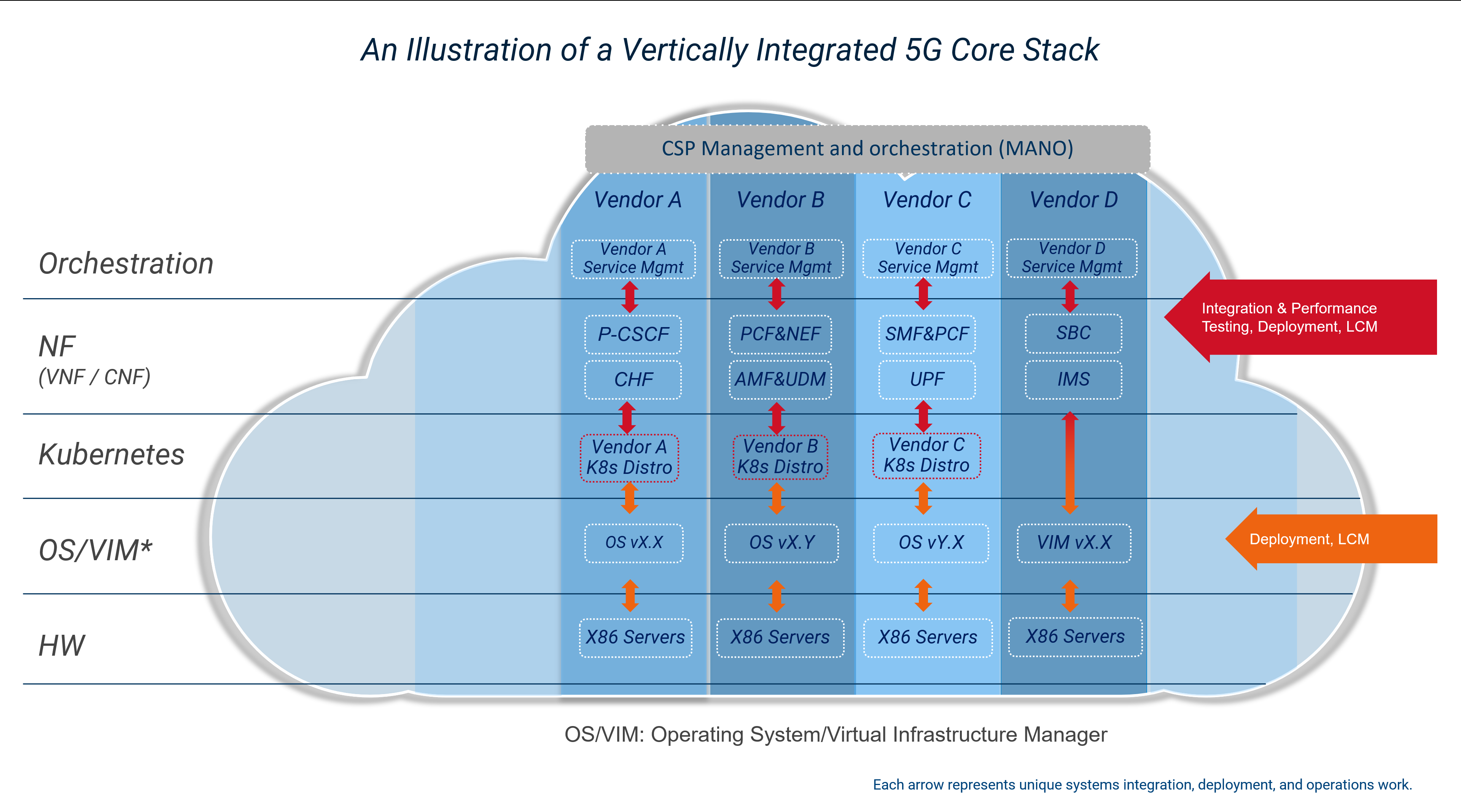

In recent years, many CSPs embarked on a journey away from vertically integrated, proprietary appliances to virtualized network functions (VNFs). One of the goals when adopting network functions virtualization was to obtain greater freedom in selecting hardware and software components from multiple suppliers, making services more cost-effective and scalable. However, CSPs often experience difficulties in designing, integrating, and testing their individual stacks, resulting in higher integration costs, interoperability issues, and regression testing delays that lead to less efficient operations.

Despite efforts to move to virtualized network functions, silos of vertically integrated cloud deployments can emerge where the virtual network function suppliers define their own cloud stack to simplify their process of meeting the requirements for each workload. These vertical silos prevent CSPs from pooling resources, which can reduce infrastructure utilization rates and increase power consumption. They also increase the complexity of lifecycle management, as each layer of the stack for each silo needs to be validated whenever a change to a component of the stack is made.

Vertically integrated 5G Core stack on a telco cloud

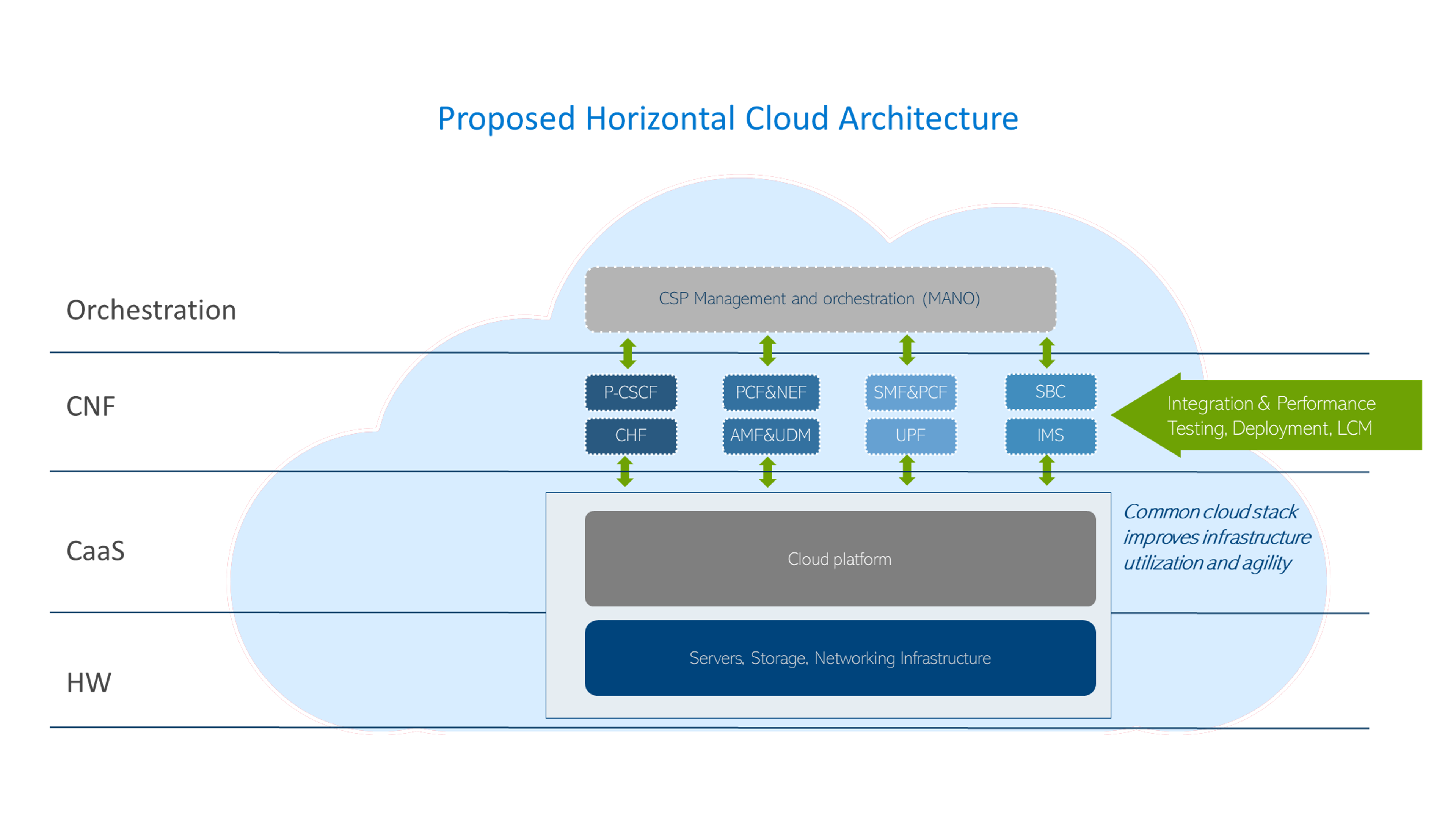

CSPs are now looking to implement a horizontal platform that can provide a common cloud infrastructure to help break down these silos to lower costs, reduce power consumption, improve operational efficiency, and minimize complexity, allowing CSPs to adopt cloud native infrastructure from the core to the radio access network (RAN).

Horizontal platform for 5G telco workloads

Maintaining compliance with telecom industry SLAs

Creating and managing a geographically dispersed telco cloud based on a broad range of suppliers while consistently adhering to CSP SLAs takes a lot of effort, resources and time and can introduce new complications and risks. To meet these SLAs and accelerate the introduction of new technologies, CSPs will need a novel approach when working with vendors that reduces integration and deployment times and costs while simplifying ongoing operations. This will include developing a tighter relationship with their supply base to offload integration tasks while maintaining the flexibility provided by an open telecom ecosystem. As an example, Vodafone recently introduced a paper outlining their vision for a new operating model to improve systems integrations with their supply base to help achieve these objectives. It would also include following a proven path in enterprise IT by adopting engineered systems, similar to the converged and hyper converged systems used by IT today, that have been optimized for telecom use cases to simplify deployment, scaling and management.

Short-term total cost of ownership (TCO)

When it comes to optimizing short-term TCO, there are several options available to CSPs. One such option is to work closely with vendors in order to reduce integration and deployment times and costs while simplifying ongoing operations. This approach can help CSPs leverage the expertise of vendors who specialize in the software and hardware components required for a disaggregated telco cloud. By working with skilled vendors, CSPs can reduce the risk of validating and integrating components themselves, which can lead to cost savings in the short term.

Another option that CSPs can consider is to adopt a phased approach to implementation. This involves deploying disaggregated telco cloud technologies in stages, starting with the most critical components and gradually expanding to include additional components over time. This approach can help to mitigate the initial costs associated with disaggregated telco cloud adoption while still realizing the benefits of increased flexibility, scalability, and cost efficiency.

CSPs can also take advantage of initiatives like Vodafone's new operating model for improving systems integrations with their supply base. This model aims to simplify the process of integrating components from multiple vendors by providing a standardized framework for testing and validation. By adopting frameworks like this, CSPs can reduce the time and costs associated with integrating components from multiple vendors, which can help to optimize short-term TCO.

Although implementing a disaggregated telco cloud can require increased investment in the short term, there are several options available to CSPs for optimizing short-term TCO. Whether it's working closely with trusted vendors, adopting a phased approach, or leveraging standardized frameworks, CSPs can take steps to reduce costs and maximize the benefits of a disaggregated telco cloud.

Your partners for simplifying telco cloud platform design, deployment, and operations

Dell and Red Hat are leading experts in cloud-native technology used in building 5G networks and are working together to simplify their deployment and management for CSPs. Dell Telecom Infrastructure Blocks for Red Hat is a solution that combines Dell's hardware and software with Red Hat OpenShift, providing a pre-integrated and validated solution for deploying and managing 5G Core workloads. This offering enables CSPs to quickly launch and scale 5G networks to meet market demand for new services while minimizing the complexity and risk associated with deploying cloud-native infrastructure.

Next steps

In the next blog, we will dive deeper into the service-based architecture of the 5G Core and how it was developed to support cloud native principles. To learn more about how Dell Technologies and Red Hat are partnering to simplify the deployment and management of a telco cloud platform built to support 5G Core workloads, see the ACG Research Industry Directions Brief: Extending the Value of Open Cloud Foundations to the 5G Network Core with Telecom Infrastructure Blocks for Red Hat.

Authored by:

Gaurav Gangwal

Senior Principal Engineer – Technical Marketing, Product Management

About the author:

Gaurav Gangwal works in Dell's Telecom Systems Business (TSB) as a Technical Marketing Engineer on the Product Management team. He is currently focused on 5G products and solutions for RAN, Edge, and Core. Prior to joining Dell in July 2022, he worked for AT&T for over ten years and previously with Viavi, Alcatel-Lucent, and Nokia. Gaurav has an Engineering degree in Electronics and Telecommunications and has worked in the telecommunications industry for more than 14 years. He currently resides in Bangalore, India.

Kevin Gray

Senior Consultant, Product Marketing – Product Marketing

About the author:

Kevin Gray leads marketing for Dell Technologies Telecom Systems Business Foundations solutions. He has more than 25 years of experience in telecommunications and enterprise IT sectors. His most recent roles include leading marketing teams for Dell’s telecommunications, enterprise solutions and hybrid cloud businesses. He received his Bachelor of Science in Electrical Engineering from the University of Massachusetts in Amherst and his MBA from Bentley University. He was born and raised in the Boston area and is a die-hard Boston sports fan.

Monetizing Network Exposure Through Open APIs

Fri, 08 Dec 2023 19:40:56 -0000

Market Background

5G, especially 5G standalone, has not yet developed to fulfill expectations. End users are not yet seeing significant differences in comparison to 4G, and CSPs are not yet seeing new revenue streams. To address these challenges, we have previously presented two blogs (Network Slicing and Network Edge). In this third blog, we continue to describe a realistic view of how CSPs could maximize the 5G standalone experience and go beyond being merely connectivity providers. This blog focuses on exposing the network capabilities (services and user/network information) that service providers and enterprises can use to enable innovative and monetizable services.

Figure 1: Monetizing Network Exposure

The success of numerous innovative mobile applications can be traced to the availability of mobile Software Development Kits (SDKs). SDKs are available for both iOS and Android mobile platforms. These SDKs provide open tools, libraries, and documentation that allow application developers to easily create mobile applications that rely upon the capabilities of existing mobile platforms (such as notifications and analytics) and device hardware (like GPS and camera). Most importantly, these two mobile platforms alone currently support over four billion users. The next step is to use the same principles on the network side by using Open APIs that allow unified access to network capabilities for increased network exposure.

The concept of network exposure is not new. There have been a few less-than-successful attempts in the past, such as Service Capabilities Exposure Function (SCEF) and APIs for the IMS/Voice. These solutions were not able to scale sufficiently to attract a significant number of application developers. The specifications have been too complicated for anybody outside of the telecom world to understand or implement. The integration of network exposure into the 5G design is groundbreaking. API exposure is now fundamental to 5G and is natively built into the architecture, enabling applications to seamlessly interact with the network.

Monetizing mobile networks using Open APIs relies on the implementation of communication APIs for voice, video, and messaging, as well as network APIs for location, authentication, and quality of service. By exposing these capabilities through Open APIs, CSPs can establish partnerships by facilitating the creation of tailored, high-value services for businesses, thereby enabling them to monetize 5G beyond traditional connectivity and bundled offerings. These new revenue streams are paramount as the traditional revenue streams from mobile broadband services are flat while costs continue to rise. Moreover, the deployment of a cloud-native 5G standalone network requires substantial investments, making it crucial to identify new revenue streams that can justify the business case.

Technical Background and Standardization

5G standalone was specified in 3GPP Release 15, and its architecture standardized the Network Exposure Function (NEF). One of the 5G core network functions, NEF allows applications to subscribe to network changes, instruct the network, or extract network information and capabilities. NEF enables an extensive set of network exposure capabilities, but it lacks the scale, agility, and simplicity that application developers require. GSMA’s Open Gateway Initiative, the CAMARA project, and TM Forum’s Open APIs all aim to address this gap.

- GSMA’s Open Gateway Initiative achieves scale by committing CSPs to implement the common system framework in a unified manner.

- Actual Service APIs are defined under the CAMARA project, where the work is done as an open-source project at the Linux Foundation.

- TM Forum’s Open APIs are used in this framework for Operation, Administration, and Management (OAM).

The use case is well described in the GSMA’s Open Gateway white paper.

Network APIs

Open APIs and network capabilities in this new concept have much to offer. The CAMARA project has already defined 18 Service APIs such as Quality on Demand, Device Location, Device Status, Number Verification, Simple Edge Discovery, One Time Password SMS, Carrier Billing, and SIM swap. Three of the most popular elements are described in more detail below:

Quality on Demand: It is easy to imagine that multiple applications can benefit from better quality (bandwidth and latency). The challenge is how the network can fulfill this request instantaneously and cost-effectively. Some Proofs of Concept (PoCs) demonstrate that a Quality on Demand request can trigger either a new Network Slice or a different Quality of Service Class Identifier (QCI). For more information, see our Network Slicing blog.

Device Location: This API verifies that the device is in a specific geographical area. The main benefits of the network-based request are that it can be used when a GPS signal is not available, and it is considered more trustworthy (location info cannot be spoofed).

Device Status: This API provides a very simple and straightforward request to determine whether the subscriber is roaming.
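To make the Device Location idea more concrete, here is a minimal sketch of the check such a service could perform once the network has estimated a device position: is that position inside a requested circular area? This is not the CAMARA API itself. The `Circle` type, the `verify_location` function, and the coordinates are hypothetical names and values chosen for illustration, and the haversine formula is simply one common way to compute the distance.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


@dataclass(frozen=True)
class Circle:
    """Hypothetical circular area: centre in decimal degrees, radius in metres."""
    latitude: float
    longitude: float
    radius_m: float


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def verify_location(network_lat: float, network_lon: float, area: Circle) -> bool:
    """Return True if the network-derived position lies inside the area.

    Assumption: the position comes from the operator's network, not from
    the device, which is why the article calls it harder to spoof.
    """
    distance = haversine_m(network_lat, network_lon, area.latitude, area.longitude)
    return distance <= area.radius_m


# Example: a 2 km circle around an arbitrary point
area = Circle(latitude=48.8566, longitude=2.3522, radius_m=2000)
inside = verify_location(48.8666, 2.3522, area)    # roughly 1.1 km north of the centre
outside = verify_location(51.5074, -0.1278, area)  # hundreds of kilometres away
```

A real service would also have to account for the accuracy of the network position estimate, which this sketch ignores.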

None of these Service APIs offer anything unique that the market has not seen before. Their intrinsic value comes from being part of a unified platform that enables a consistent way of accessing network capabilities and information, similar to how mobile SDKs became a catalyst to the thriving mobile device ecosystem we know today. Only time will tell how much value-add application developers will see from these Open APIs.

Use Cases and Commercial Models

The value of new features and applications is considered whenever 5G monetization is discussed. We are still in the early phase of Open APIs, but the TM Forum’s Catalyst Program and CAMARA Open API showcases can give good insights into what the coming commercial deployments could look like. These programs have triggered several PoCs where the related use cases have required optimized performance (Quality on Demand), user location/roaming information, and feedback on consumer experience. In these PoCs, the service providers have been able to consume the Open APIs directly or through a hyperscaler marketplace. As an example, in one PoC, guaranteed Quality of Delivery was needed for a 360-degree 8K live streaming service with content monetization through APIs (with CSPs curating markets at the edge). Another PoC included an end-to-end implementation of a marketplace from which one could consume network services from multiple CSP networks (Simple hyperscaler integrated network experience).

We can expect several commercial models for these Open APIs, because these APIs can be utilized in various ways such as providing network/subscriber information, optimizing functionalities/features, and allocating network capacity/resources. It is yet to be determined how these Open APIs can be consumed easily. Service providers are unlikely to integrate and set up individual contracts with every other service provider in the world. Therefore, there must be a place for aggregation in order to hide the complexity behind a portal. This role can be assumed by a group of service providers or hyperscalers who can onboard these services onto their marketplaces.
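The aggregation role can be pictured as a thin routing layer: the application calls one entry point, and the aggregator forwards the request to whichever CSP serves the subscriber. The sketch below is illustrative only. The `Aggregator` class, the adapter signature, and routing by phone number prefix are all assumptions made for this example. A real aggregator would need a more reliable way to resolve the serving operator, since number portability breaks prefix-based routing.

```python
from typing import Callable, Dict

# Hypothetical adapter signature: takes a phone number and returns
# True if that subscriber is currently roaming.
RoamingCheck = Callable[[str], bool]


class Aggregator:
    """Single entry point that hides per-CSP integrations from the caller."""

    def __init__(self) -> None:
        self._adapters: Dict[str, RoamingCheck] = {}

    def register(self, prefix: str, adapter: RoamingCheck) -> None:
        """Associate a phone number prefix with one CSP's adapter."""
        self._adapters[prefix] = adapter

    def is_roaming(self, phone_number: str) -> bool:
        """Forward the request to the adapter with the longest matching prefix."""
        for prefix in sorted(self._adapters, key=len, reverse=True):
            if phone_number.startswith(prefix):
                return self._adapters[prefix](phone_number)
        raise LookupError(f"no CSP registered for {phone_number}")


# Example with two stand-in CSP adapters that return fixed answers
aggregator = Aggregator()
aggregator.register("+46", lambda number: False)
aggregator.register("+1", lambda number: True)

status_a = aggregator.is_roaming("+46700000000")
status_b = aggregator.is_roaming("+12025550100")
```

The application only ever talks to `aggregator`. Adding another CSP is a single `register` call on the aggregator side rather than a new contract and integration for every application.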

Challenges and The Road Ahead

One of the main Key Performance Indicators (KPIs) that define success for service providers is the ability to scale and have a global reach. It is critical that there be no fragmentation and that the community work towards a unified approach. Jointly agreed upon solutions and specifications require more time to develop; therefore, another year may pass before we start to see commercial use case launches (as forecast by Börje Ekholm, Ericsson CEO, during the Q3-2023 earnings call).

The journey to unified 5G is not easy, and it presents various challenges:

- Technology migration poses a challenge as mobile operators need to transition from existing systems to effectively utilize the potential of the 5G NEF and Open APIs.

- Another significant obstacle is bridging the gap between software developers and mobile operators. Developers require clear, simple, unified, and well-documented APIs to leverage the network capabilities effectively.

- At the same time, mobile operators must ensure that exposing their network does not compromise the security of the network while ensuring that end users have full control of where and how their information is stored and used.

Some service providers have already launched platforms with a few Service APIs. Early deployments can introduce a risk of fragmentation. However, the risk is outweighed by the positive impact of testing the concept in the real world and constructing more concrete requirements from actual user experiences with these services.

Regardless of how much commercial success these new Network and Service APIs realize in the coming years, they will have made an important step towards more Open, Agile, and Programmable networks. Similarly, Dell has been embracing this vision in our Telecom strategy, as reflected in our Multi-Cloud Foundation Concept, Bare Metal Orchestration, and Open RAN development projects. In our vision, Open APIs are needed in all layers (Infrastructure, Network, Operations, and Services). Stay tuned for more to come from Dell about the open infrastructure ecosystem and automation (#MWC24).