Distribution of 5G Core to Network Edge

Mon, 06 May 2024 12:52:39 -0000

Thus far in our blog series, we have discussed migrating to an open cloud-native ecosystem, the 5G Core and its architecture, and how Dell Telecom Infrastructure Block for Red Hat can help simplify 5G network deployment for Red Hat® OpenShift®. Now, we would like to introduce a key use case for distributing 5G core User Plane functions from the centralized data center to the network edge.

Distributing Core Functions in 5G networks

The evolution of communication technology has brought us to the era of 5G networks, promising faster speeds, lower latency, and the ability to connect billions of devices simultaneously. However, to achieve these ambitious goals, the architecture of 5G networks needs to be more flexible, scalable, and efficient than ever before. When later LTE releases introduced Control and User Plane Separation (CUPS), the telecommunications industry had high expectations for it as a prototype of a distributed control and user plane architecture. It was seen as a stepping stone toward the more advanced 5G networks that were on the horizon. CUPS separates control plane and user plane functionality. In the 5G Core, which carries this separation forward, the control plane (specifically the Session Management Function, or SMF) is typically centralized, while the User Plane Function (UPF) can be located alongside the control plane or distributed to other locations in the network as specific use cases demand.

Understanding the need for Distributed UPF

The UPF is a key component in 5G networks, responsible for handling user data traffic. Distributed User Plane Function (D-UPF) is a network architecture that distributes UPF functionality across multiple nodes closer to the user and enables local breakout (LBO) for use cases that require lower latency or more privacy, resulting in a more scalable and flexible networking environment. With D-UPF, operators can handle increasing data volumes, reduce latency, and enhance overall network performance. By distributing the UPF, operators can manage growing data demands across consumer and enterprise use cases in a cost-effective manner.

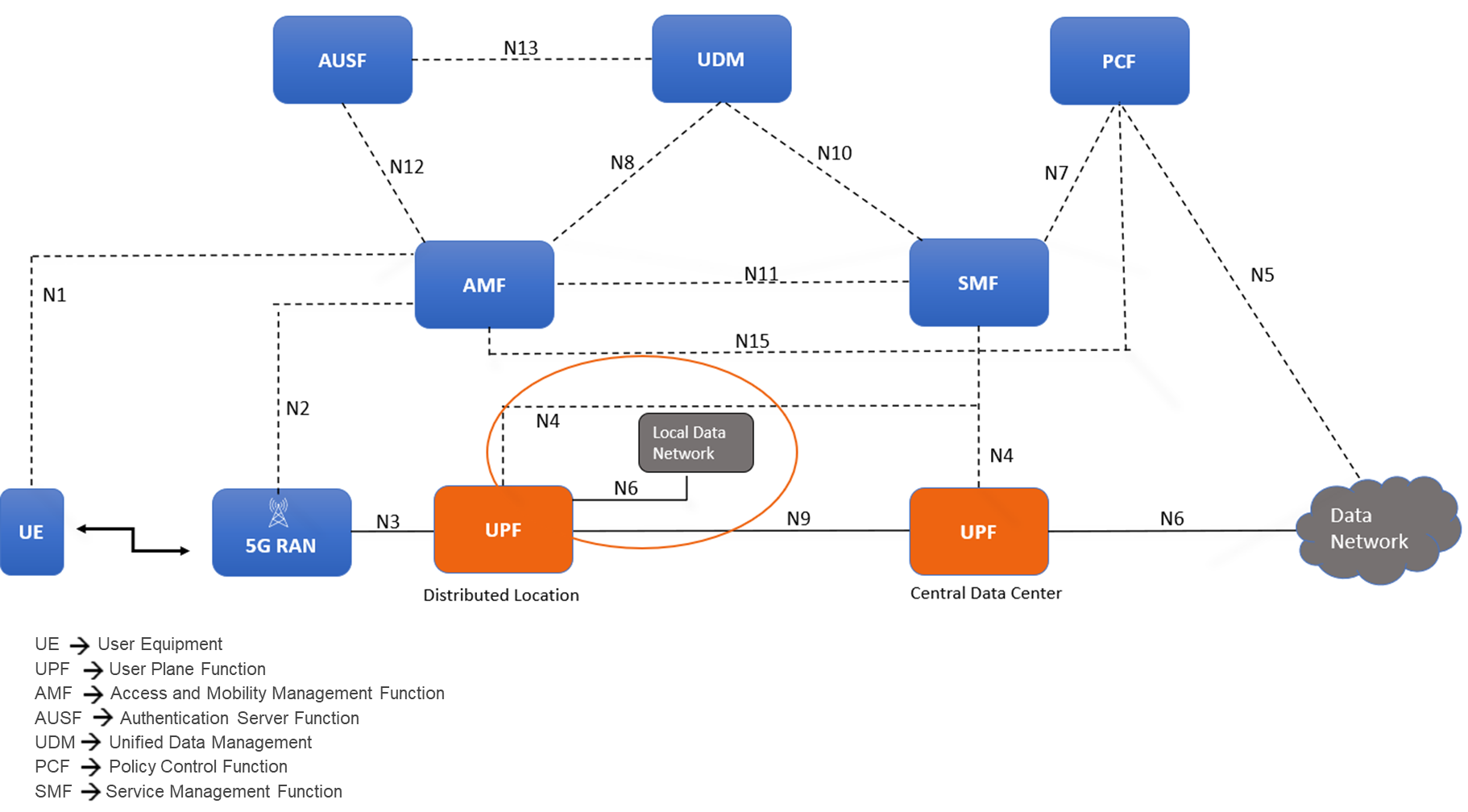

Figure 1: Distributed User Plane function in 5G Core Architecture

D-UPF also plays a crucial role in enabling edge computing in 5G networks. By distributing the user plane traffic closer to the network edge, D-UPF reduces the latency associated with data transmission to and from centralized data centers. This opens opportunities for real-time applications, such as autonomous vehicles, augmented reality, and industrial automation, where low latency is critical for their proper functioning.
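To put a rough number on the latency benefit, the sketch below estimates round-trip propagation delay over fiber for a centralized UPF and for an edge-hosted D-UPF. It assumes roughly 5 microseconds of one-way delay per kilometer of fiber, and the two distances are hypothetical values chosen for illustration. Queuing, processing, and radio delays are ignored, so real-world figures will be higher.

```python
# Rough propagation-delay estimate. Distances are illustrative assumptions,
# not measurements from any specific network.
FIBER_DELAY_US_PER_KM = 5.0  # about 5 microseconds per km in optical fiber


def round_trip_propagation_ms(distance_km: float) -> float:
    """Return round-trip propagation delay in ms for a one-way fiber distance."""
    return 2 * distance_km * FIBER_DELAY_US_PER_KM / 1000.0


central_km = 800.0  # hypothetical distance from cell site to a central data center
edge_km = 20.0      # hypothetical distance from cell site to an edge site with a D-UPF

central_rtt = round_trip_propagation_ms(central_km)
edge_rtt = round_trip_propagation_ms(edge_km)
print(f"central: {central_rtt:.1f} ms, edge: {edge_rtt:.1f} ms")
# prints "central: 8.0 ms, edge: 0.2 ms"
```

Under these assumptions, moving the UPF to the edge removes several milliseconds of propagation delay alone, which matters for applications with single-digit millisecond latency budgets.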

Distributed UPF deployment options

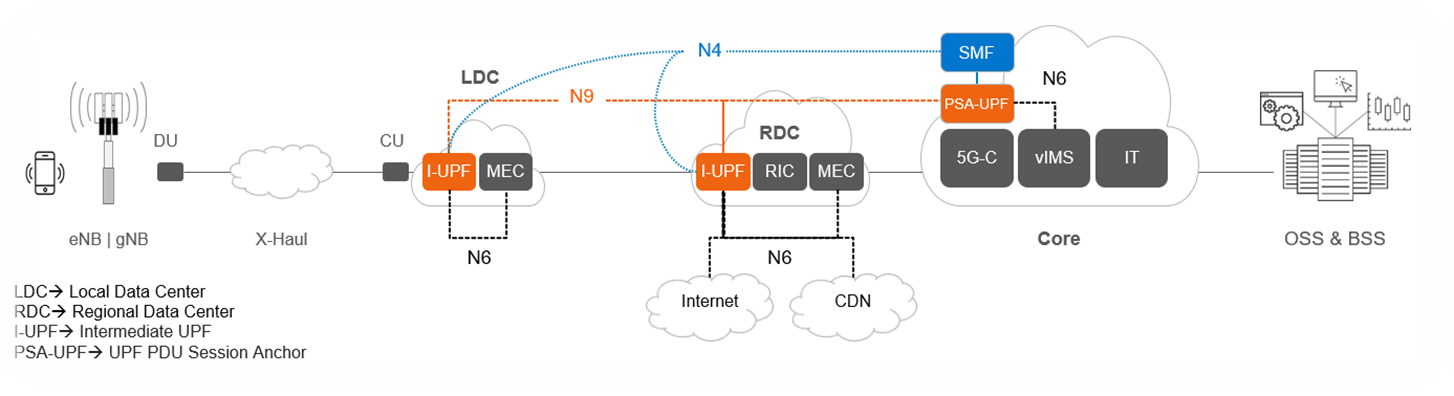

Figure 2: D-UPF deployment and functionality

The above diagram provides an overview of the different roles D-UPF may play in a 5G architecture. For example:

- Centralized UPF/PSA-UPF: In the simplest scenario, the UPF is centralized, and session anchoring occurs within the data center, where the UPF also takes care of long-term stable IP address assignment. One example is a VoLTE/NR call, where the PDU Session Anchor (PSA)-UPF steers traffic to the IMS.

- Intermediate UPF (I-UPF): An I-UPF can be inserted on the user plane path between the RAN and a PSA. Here are two possible reasons to do that:

- If the UE moves to a new RAN node because of mobility and the new RAN node cannot support an N3 tunnel to the old PSA, an I-UPF is inserted. This I-UPF has an N3 interface toward the RAN and an N9 interface toward the PSA-UPF. This process of linking multiple UPFs is called UPF chaining, which directs user data flows through a series of UPFs, each performing specific functions.

- You might want to deploy a UPF within the local data center or edge for a low-latency use case, either to steer data traffic to a co-deployed MEC for edge services or to break out traffic to the local data network.
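The mobility case above can be sketched as a small path-building routine. This is a simplified illustration, not how any particular SMF implements UPF selection. The `Upf` class, the `build_user_plane_path` function, and the node names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Upf:
    """Hypothetical, simplified view of a UPF for illustration only."""
    name: str
    reachable_from: frozenset  # RAN nodes that can set up an N3 tunnel to this UPF


def build_user_plane_path(ran_node: str, psa: Upf, candidates: list) -> list:
    """Return the user plane hops, each labeled with the interface used to reach it."""
    if ran_node in psa.reachable_from:
        # The RAN node can reach the session anchor directly over N3.
        return [ran_node, f"N3->{psa.name}"]
    for upf in candidates:
        if ran_node in upf.reachable_from:
            # Insert an I-UPF: N3 toward the RAN, N9 toward the PSA-UPF.
            return [ran_node, f"N3->{upf.name}", f"N9->{psa.name}"]
    raise LookupError(f"no UPF reachable from {ran_node}")


psa = Upf("psa-upf-central", frozenset({"gnb-1"}))
i_upf = Upf("i-upf-edge", frozenset({"gnb-2"}))

path_before_move = build_user_plane_path("gnb-1", psa, [i_upf])
path_after_move = build_user_plane_path("gnb-2", psa, [i_upf])
print(path_before_move)  # ['gnb-1', 'N3->psa-upf-central']
print(path_after_move)   # ['gnb-2', 'N3->i-upf-edge', 'N9->psa-upf-central']
```

The session anchor stays the same in both paths, which is what keeps the UE's IP address stable while the I-UPF is chained in after the move.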

Challenges and considerations for D-UPF deployments

Now that we have reviewed the need for D-UPF and the different deployment scenarios, let’s consider some of the obstacles you will encounter along the way. As we all know, these network functions have their own needs, especially when it comes to the amount of data being inspected, routed, and forwarded across from the core to the edge, and back again. Below are four areas for consideration:

- Resource Constraints: Edge or remote locations often have limited physical space available for deploying network equipment. The challenge lies in accommodating the necessary hardware, cooling systems, and other infrastructure within these space-constrained environments. Remote locations may also have limited or unreliable power supply infrastructure. Choosing infrastructure that offers power efficiency, high density, serviceability, a ruggedized exterior, and edge-optimized form factors becomes important as UPFs are extended to the edge.

- Performance Requirements: Low-latency infrastructure is critical to ensure real-time responsiveness and a seamless user experience when deploying core functions to the edge. Also, processing data at the network edge reduces the need for high-bandwidth links to carry that data to the centralized core. This helps optimize network bandwidth and lower operating costs, and ultimately reduces the CSP’s reliance on centralized core infrastructure for time-sensitive operations.

- Orchestration and Automation: Deploying and managing UPFs distributed across edge locations is a complex challenge. This includes tasks such as workload placement, resource allocation, and automated management of edge infrastructure. Choosing a horizontal telco cloud platform that supports automated distributed core deployment and provides the capability to expand and scale the compute and storage resources to accommodate the varying demands at the edge is a must.

- Lifecycle Management and Operating Cost: Another significant factor is the increased costs associated with first deploying and then operating remote deployments. The large number of these locations coupled with their limited accessibility makes them more expensive to construct and maintain. To address this, zero-touch provisioning at network setup and sustainable lifecycle management are necessary to optimize the economics of the edge.

The Horizontal Cloud Platform: Dell Telecom Infrastructure Blocks

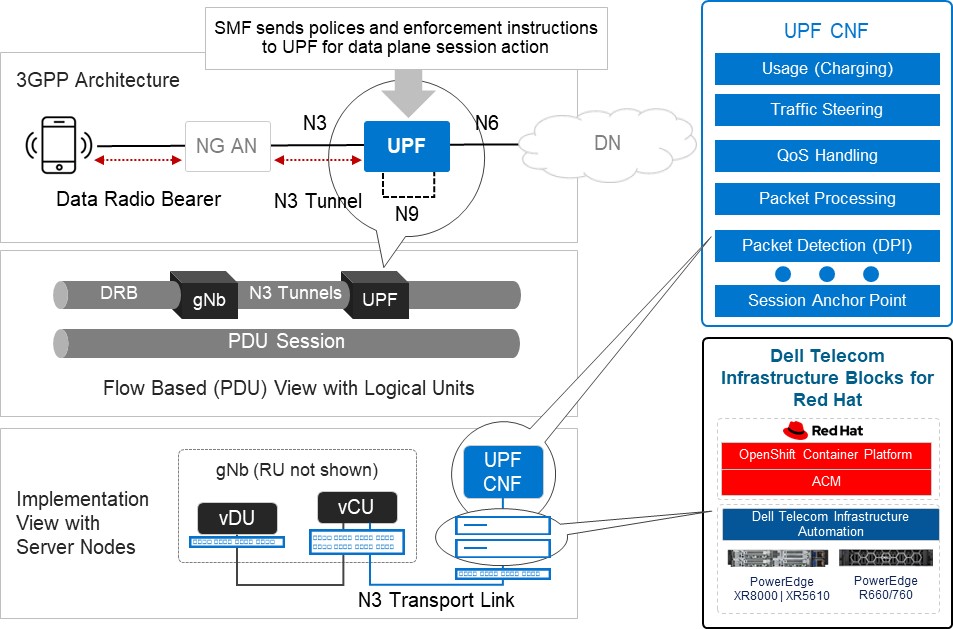

Figure 3: An Implementation View of Dell Telecom Infrastructure Blocks for Red Hat running the UPF

Dell Technologies is at the forefront of providing cutting-edge cloud-native solutions for the 5G telecom industry. As discussed in our previous blog, Telecom Infrastructure Blocks for Red Hat is one of those solutions, helping operators break down technology silos and empowering them to deploy a common cloud platform from Core to Edge to RAN. These are engineered systems, based on high-performance telecom edge-optimized Dell PowerEdge servers, that have been pre-validated and integrated with Red Hat OpenShift ecosystem software. This makes them a perfect solution for tackling the D-UPF challenges outlined in this blog.

- Resource Constraints:

- Space-efficient, modular server options designed for telecom environments, such as the Dell PowerEdge XR8000 series servers, allow providers to mix and match components based on workload needs. They can run multiple workloads, such as CU/DU and UPF, in the same chassis.

- Smart cooling designs support harsh edge environments and keep systems at optimal temperatures without using more energy than is needed.[1]

- Rugged and flexible server options that are less than half the length of traditional servers and offer front or rear connectivity make installation in small enclosures at the base of cell towers easier.[2]

- Performance Requirements:

- Orchestration and Automation:

- Horizontal cloud stack engineered platform based on Red Hat OpenShift allows operators to pool resources to meet changing workload requirements. This is achieved by automating server discovery, creating and maintaining a server inventory, and adding the ability to configure and reconfigure the full hardware and software stack to meet evolving workload requirements.

- Servers leverage dynamic resource allocation to ensure that computing resources are allocated precisely where and when they are needed. This real-time optimization minimizes resource waste and maximizes network efficiency.[3]

- Lifecycle Management and Operating Cost:

- Dell Telecom Infrastructure Automation software is included as a fundamental component to automate deployment and life cycle management.

- Backed by a unified support model from Dell with options that meet carrier grade SLAs, CSPs do not have to worry about multi-vendor management for the cloud infrastructure support (for both the hardware and cloud platform software), as Dell becomes the single point of contact in the support of telco cloud platform and works with its partners to resolve issues.

Summary

In summary, the need for D-UPF in 5G networks arises from the requirements of handling massive data volumes, improving network efficiency, reducing latency, enabling edge computing, and supporting advanced 5G services. Selecting among the possible deployment scenarios requires an infrastructure that can meet your objectives today and has the flexibility and scalability to see you through your long-term goals. For example, you can host and manage a content delivery network (CDN) at the network edge, where Dell Telecom Infrastructure Blocks for Red Hat can also serve as a telco cloud building block. By implementing this engineered telco cloud platform solution from Dell, we believe CSPs will be able to streamline the process and reduce costs associated with the deployment and maintenance of UPF across edge locations.

To learn more about Telecom Infrastructure Blocks for Red Hat, visit our website Dell Telecom Multicloud Foundation solutions.

[1] Source: ACG Report, “The TCO Benefits of Dell’s Next-Generation Telco Servers”, February 2023

[2] Source: Dell Technologies, “Introducing New Dell OEM PowerEdge XR Servers”, March 2023

[3] Source: Dell Technologies, “Competing in the new world of Open RAN in Telecom”, February 2024

Related Blog Posts

Simplifying 5G Network Deployment with Dell Telecom Infrastructure Blocks for Red Hat

Fri, 19 Jan 2024 15:08:05 -0000

Welcome back to our 5G Core blog series. In the second blog post of the series, we discussed the 5G Core, its architecture, and how it stands apart from its predecessors, the role of cloud-native architectures, the concept of network slicing, and how these elements come together to define the 5G Network Architecture.

In this third blog, we look at the collaboration between Dell Technologies and Red Hat on their latest offering, Dell Telecom Infrastructure Blocks for Red Hat. We explore how Infrastructure Blocks streamline Communications Service Providers’ (CSPs) processes for a Telco cloud used with 5G core, from initial technology onboarding at day 0/1 to day 2 life cycle management.

Helping CSPs transition to a cloud-native 5G core

Building a cloud-native 5G core network is not easy. It requires careful planning, implementation, and expertise in cloud-native architectures. The network needs to be designed and deployed in a way that ensures high availability, resiliency, low latency, efficient resource utilization, and flawless component interoperability. CSPs may feel overwhelmed when considering the transition from legacy architectures to an open, best-of-breed cloud-native architecture. This can lead to delays in design, deployment, and life cycle management processes that stall projects and reduce a CSP’s ability to effectively deploy and manage their disaggregated cloud-native network.

Automation plays a critical role in managing deployment and life cycle management processes. Many projects stall or fail due to poorly defined automation strategies that make it difficult to ensure compatibility between hardware and software configurations across a large, distributed network. This is especially true when trying to deploy and manage a cloud platform running on bare metal.

Dell Telecom Infrastructure Blocks for Red Hat are foundational building blocks for creating a Telco cloud that is based on Red Hat OpenShift. They aim to reduce the time, cost, and risk of designing, deploying, and maintaining 5G networks using open software and industry standard infrastructure. The current release of Telecom Infrastructure Blocks for Red Hat supports the creation of management and workload clusters for 5G core network functions running Red Hat OpenShift on bare metal servers.

Building and maintaining Kubernetes clusters on bare metal to run 5G network functions presents a number of challenges:

- Ensuring interoperability and fault tolerance in a disaggregated network is not an easy task. Deploying and managing Kubernetes clusters on bare metal requires extensive design, planning, and interoperability testing to ensure a reliable, fault tolerant, and performant system.

- Automating the deployment and life cycle management of hardware resources and cloud software in a bare metal environment can be complex. It involves deploying and updating a fleet of bare metal servers at scale.

- There is a lack of pre-built software integrations specifically designed for deploying Kubernetes clusters on bare metal servers and bringing those cluster configurations to a state where they are ready to run workloads. This means that configuring and deploying Kubernetes on bare metal frequently requires more manual effort to build and maintain the automation needed to manage deployments and upgrades at scale. This manual effort can be time-consuming and add complexity that introduces risk to the process.

- This lack of consistent, easy-to-manage automation to deploy and update the cloud stack to meet workload requirements also makes it harder to implement a unified cloud platform across all workloads. This leads to infrastructure silos that limit the ability to pool resources to improve infrastructure utilization rates, which in turn reduces network TCO efficiency.

These challenges are amplified when running 5G network functions, which require low latency and high reliability to meet carrier-grade service level agreements (SLAs). This collaboration between Dell and Red Hat aims to offer a comprehensive solution for CSPs that addresses the challenges associated with building and maintaining carrier-grade cloud infrastructure for 5G core network functions.

Key objectives of Dell Telecom Infrastructure Blocks for Red Hat

Implement a “shift left” approach

In software development, the term “shift left” refers to the ability to move tasks to an earlier stage in the development or production process to reduce time to value for those processes. The shift left approach being offered with Infrastructure Blocks moves much of the testing and integration work performed by the CSP into the supply chain prior to onboarding the new technology. This method provides CSPs with a speedy path to value by shortening the preparation and validation phase for a new network deployment. It also simplifies the procurement process by reducing the number of suppliers the CSP needs to work with and simplifies support by providing one call support for the full cloud stack. Proactive problem-solving, reduction of field touch points, risk minimization, and operational simplification are byproducts of the Infrastructure Block approach that hasten the introduction of new technology into a CSP’s network. By adopting this approach, CSPs can obtain faster rollout times and reduced operational costs. Dell does three things to help CSPs shift the technology onboarding processes left:

Engineering

Telecom Infrastructure Blocks are foundational building blocks that are co-designed with Red Hat to help CSPs build and scale out their network. These building blocks are purpose-built to meet specific workload requirements. Dell collaborates with Red Hat to maintain a roadmap of feature enhancements and perform continuous design and integration testing to accelerate the adoption of new technologies and software upgrades. The design planning and extensive interoperability testing performed by Dell simplifies the processes of building and maintaining a fault tolerant and performant cloud platform to run 5G core workloads.

Automation

Many CSPs today rely on procedural automation that they build and maintain on their own to automate the deployment and life cycle management of their cloud platform at scale. Procedural automation requires an understanding of the current state of their cloud stack and the maintenance of scripts or playbooks to define the steps needed to update the configuration to the desired state. When deploying Kubernetes on bare metal to support 5G core workloads, there are a number of items with dependencies that must be configured appropriately, including the following properties:

- Cloud platform software version

- BIOS version and settings

- Firmware versions for network interface cards (NICs) and other Peripheral Component Interconnect Express (PCIe) cards

- Single root I/O virtualization (SR-IOV) / Data Plane Development Kit (DPDK) configurations

- RAID configurations

- Site-specific data

Building and maintaining these scripts and automation playbooks is no easy task. It requires an up-to-date view of the current configuration of the infrastructure, an understanding of the dependencies between hardware and software that must be met to perform an update, and people with specialized skills that include knowledge of server hardware and the tools to manage them, the cloud software, and how to write or update playbooks to execute deployments and upgrades.

Managing this across a large, distributed network with a range of workloads that frequently require unique configurations of the cloud stack is a difficult and time-consuming process. Also consider that, in an open ecosystem environment, there is always a new version of software, BIOS, or firmware coming and the people with the needed skill sets are in short supply, resulting in a herculean effort with mixed results.

Telecom Infrastructure Blocks include purpose-built automation software that is easy to use and maintain. The blocks integrate with Red Hat Advanced Cluster Management and Red Hat OpenShift to automate deployment and life cycle management of the hardware and software stack used in a Telco cloud. This software uses declarative automation to simplify the deployment and upgrade of the cloud platform hardware and software to align with approved configuration profiles. With declarative automation, the CSP simply defines the desired state of the cloud stack, and the automation software determines the steps required to achieve the desired state and executes those steps to align the system with the approved configuration.

This infrastructure automation software uses a declarative data model that defines the desired state of the system and the resource properties, and keeps a record of the current state of the cloud configuration and inventory, which significantly simplifies the deployment and life cycle management of the cloud stack. Infrastructure Blocks come with Topology and Orchestration Specification for Cloud Applications (TOSCA) workflows that define the configurations needed to update the system based on the extensive design and validation testing performed by Dell and Red Hat. Dell provides regular updates that simplify the process of upgrading to the latest release. CSPs simply update a Customer Input Questionnaire and the automation software updates the hardware and the cloud platform software, and brings them to a workload-ready state with a single click of a button.
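The difference between procedural and declarative automation can be illustrated with a minimal sketch. It is not the Dell automation software or its data model. The `plan_changes` function, the property names, and the version strings are hypothetical placeholders. The operator supplies only the desired state, and the routine derives the steps by comparing it with the recorded current state.

```python
def plan_changes(desired: dict, current: dict) -> list:
    """Derive the steps needed to move the current state to the desired state."""
    steps = []
    for key, want in sorted(desired.items()):
        have = current.get(key)
        if have != want:
            steps.append(f"set {key}: {have!r} -> {want!r}")
    return steps


# Hypothetical approved configuration profile (placeholder values).
desired = {
    "bios_version": "2.1.0",
    "nic_firmware": "22.5",
    "platform_version": "1.0",
    "sriov_enabled": True,
}

# Hypothetical inventory record for one server.
current = {
    "bios_version": "2.0.3",
    "nic_firmware": "22.5",
    "platform_version": "1.0",
    "sriov_enabled": False,
}

steps = plan_changes(desired, current)
for step in steps:
    print(step)
# set bios_version: '2.0.3' -> '2.1.0'
# set sriov_enabled: False -> True
```

Running the same plan against a server that already matches the profile yields no steps, which is the property that makes declarative updates safe to repeat across a large fleet.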

Integration

Dell ships fully integrated systems that are designed, optimized, and tested to meet the requirements of a range of telecom use cases and workloads. They include all the hardware, software, and licenses needed to build and scale out a Telco cloud. Delivering fully integrated building blocks from Dell’s factory significantly reduces the time spent configuring infrastructure onsite or in a network configuration center. They are also backed by Dell Technologies one-call support for the full cloud stack that meets telecom SLAs. Dell has established escalation paths with Red Hat to ensure the highest levels of support for customers.

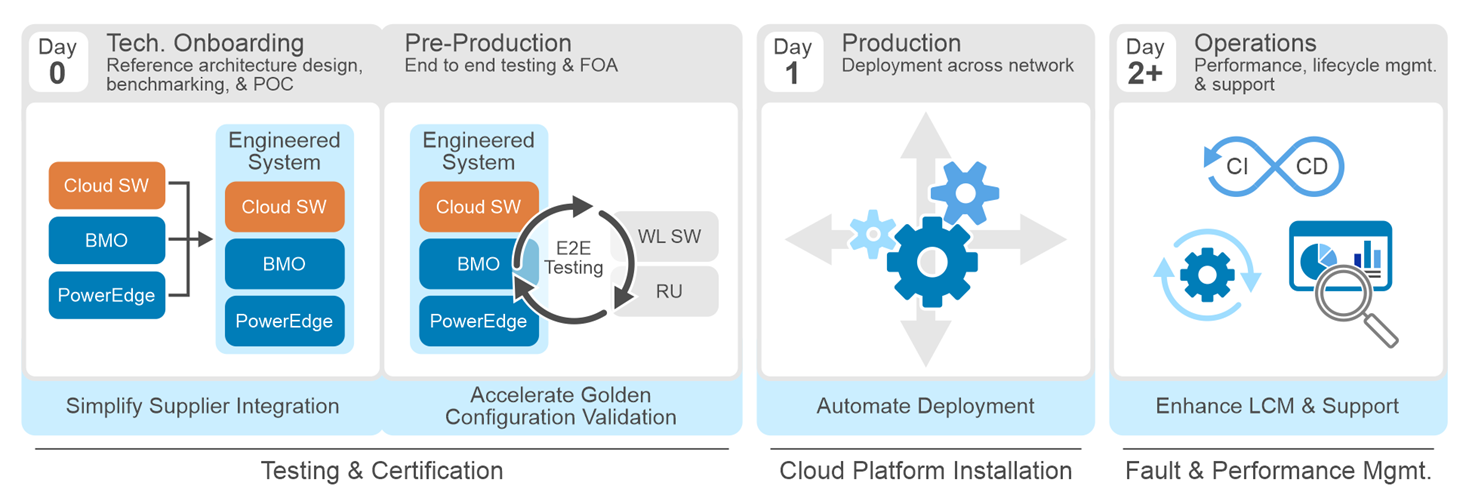

Streamlining Day 0 through Day 2 tasks with Telecom Infrastructure Blocks

In a typical CSP network operating model, there are four stages a CSP goes through from initial technology onboarding through managing ongoing operations. These stages are:

- Stage 1: Technology onboarding (Day 0)

- Stage 2: Pre-production (Day 0)

- Stage 3: Production (Day 1)

- Stage 4: Operations and lifecycle management (Day 2+)

Dell Telecom Infrastructure Blocks were built to streamline each stage of the processes to reduce the time and risk of building and maintaining a Telco cloud. We do this by proactively working with Red Hat to create an engineered system that meets telecom SLAs, includes automation that delivers zero-touch provisioning, and simplifies life cycle management through continuous design and integration testing.

Let's look at how Dell Telecom Infrastructure Blocks affect CSP processes from Day 0 to Day 2+.

Stage 1: Day 0 technology onboarding

Dell and Red Hat collaborate to design an engineered system that is validated through an extensive array of test cases to ensure its reliability and performance. These test cases cover aspects such as functionality, interoperability, security, reliability, scalability, and infrastructure-specific requirements for 5G Core workloads. This testing is aimed at ensuring optimal performance across a diverse array of performance metrics and scale points, thereby guaranteeing performant system operation.

Some of our design and validation test cases include:

Cloud Infrastructure Cluster testing: Cloud Infrastructure Cluster testing refers to the process of testing the infrastructure components of a cloud cluster to ensure their proper functioning, performance, and scalability. It involves validating the networking, storage, compute resources, and other infrastructure elements within a cluster. These test cases include:

- Installation and validation of Infrastructure Block plugins and automation.

- Cluster storage (Red Hat OpenShift Data Foundation) validation and testing.

- Validation of cluster network configurations

- Verification of Container Network Interface (CNI) plugin configurations in the cluster.

- Validation of high availability configurations

- Scalability and performance testing

Performance Benchmarking testing: Performance Benchmarking testing usually includes several steps. First, test scenarios are created to simulate real-world usage. Second, performance tests are conducted and the resulting performance data is collected and analyzed. Finally, the results are compared to established benchmarks. Some of the testing performed with Infrastructure Blocks includes:

- CPU benchmarking

- Memory benchmarking

- Storage benchmarking

- Network benchmarking

- Container as a Service (CaaS) benchmarking

- Interoperability tests between the Infrastructure and CaaS layers
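The final benchmarking step, comparing collected results to established benchmarks, can be sketched as follows. The metric names, thresholds, and measured values are invented for illustration and do not come from Dell's test suites.

```python
def evaluate(results: dict, baselines: dict) -> dict:
    """Return pass/fail per metric.

    baselines maps a metric name to (threshold, higher_is_better).
    """
    report = {}
    for metric, (threshold, higher_is_better) in baselines.items():
        value = results[metric]
        if higher_is_better:
            report[metric] = value >= threshold
        else:
            report[metric] = value <= threshold
    return report


# Hypothetical baselines: throughput should meet or exceed the threshold,
# latency should stay at or below it.
baselines = {
    "network_throughput_gbps": (90.0, True),
    "storage_latency_ms": (1.5, False),
}

# Hypothetical measurements from one test run.
results = {
    "network_throughput_gbps": 94.2,
    "storage_latency_ms": 2.1,
}

report = evaluate(results, baselines)
print(report)
# {'network_throughput_gbps': True, 'storage_latency_ms': False}
```

Recording the direction of each metric alongside its threshold avoids the common mistake of treating a lower latency as a regression or a lower throughput as an improvement.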

At this step, we define the automation workflows and configuration templates that will be used by the automation software during the deployment. Dell works proactively with its customers and partners to understand their best practices and incorporate those into the blueprints included with every Telecom Infrastructure Block.

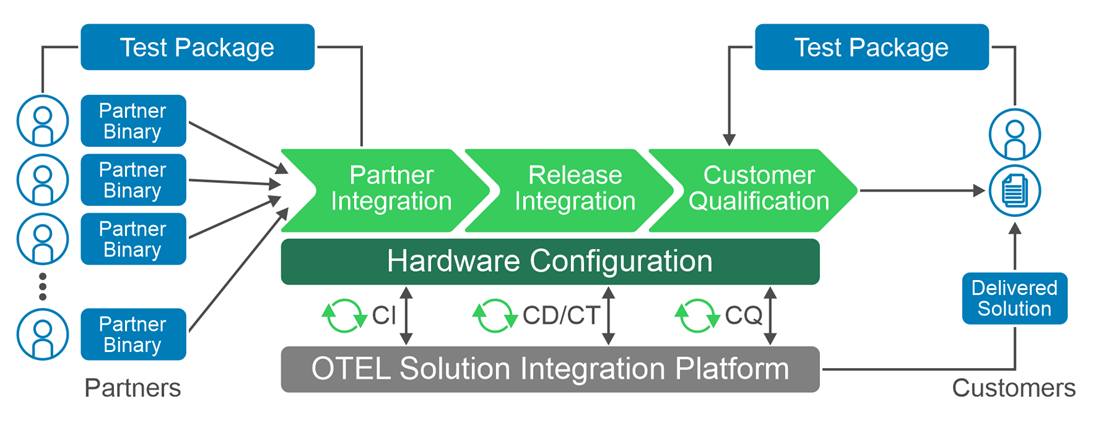

This process produces an engineered system that streamlines the CSP’s reference architecture design, benchmarking, and proof of concept (POC) processes to reduce engineering costs and accelerate the onboarding of new technology. To further streamline the design, validation, and certification process, we provide Dell Open Telecom Ecosystem Lab (OTEL), which can act as an extension of a CSP’s lab to validate workloads on Infrastructure Blocks that meet the CSP’s defined requirements.

Stage 2: Pre-production

The main objective in stage 2 is to onboard network functions onto the cloud infrastructure, define the network golden configuration, and prepare it for production deployment. Infrastructure Blocks eliminate some of the touch steps in the onboarding process by:

- Delivering an integrated and validated building block direct from Dell’s factory

- Delivering a deployment guide that simplifies onboarding

- Providing Customer Input Questionnaires that are configuration input templates used by the automation software to streamline deployment

CSPs can also leverage the Red Hat test line in Dell’s Open Telecom Ecosystem Lab (OTEL) to validate and certify the CSP’s workloads on Infrastructure Blocks. OTEL can play a significant role in enabling CSPs and partners by developing custom blueprints or performing custom tests required by the CSP using Dell’s Solution Integration Platform (SIP) which is part of OTEL. SIP is an advanced automation and service integration platform developed by Dell. It supports multi-vendor system integration and life cycle management testing at scale. It uses industry standard components, toolkits, and solutions such as GitOps.

Dell Services also offers tailor-made configurations to cater to specific operator needs. These are carried out at a Dell second-touch configuration facility. Here, configurations customized to the customer's specifications are pre-installed and then dispatched directly to the customer's location or configuration facility.

Stage 3: Production

At the production stage, Dell integrates and configures all hardware and settings to support the discovery and installation of the validated version of the cloud platform software, which eliminates the need to configure hardware on site or in a configuration center. Dell’s infrastructure automation software then deploys the validated versions of Red Hat Advanced Cluster Management and Red Hat OpenShift on the servers used in the management and workload clusters and brings those clusters to a workload-ready state. This process ensures a consistent and reliable installation that reduces the risk of configuration errors or compatibility issues. Dell's automation enables zero-touch provisioning that configures hundreds of servers at the same time with full visibility into the health and status of the server infrastructure before and after deployment. Should the CSP need assistance with deployment, Dell's professional services team is standing by to assist. Dell ProDeploy for Telecom provides on-site support to rack, stack, and integrate servers into their network or remote support for deployment.

Stage 4: Operations and lifecycle management

In Day 2+ operations, CSPs must sustain network performance while adapting to changes to the network over time. This includes ensuring software and infrastructure compatibility as updates are made to the network, performing rolling updates, fault and performance management, scaling resources to meet network demands, ensuring efficient use of network resources, and adapting to technology evolution.

Infrastructure Blocks simplify Day 2+ operations in several ways:

- Dell works with Red Hat, its customers, and other partners to capture updates and new requirements necessary to evolve Infrastructure Blocks to support new technologies and software enhancements over time. This requires proactive collaboration to ensure continuous roadmap alignment across parties. Dell then performs extensive design and validation testing on these enhancements before integrating them into Infrastructure Blocks to deliver a resilient and performant design. This helps CSPs stay on the leading edge of the technology curve while minimizing the risk of encountering faults and performance issues in production.

- Today, Telecom Infrastructure Blocks offers support for three releases per year. In every release, we prioritize the introduction of new capabilities, features, components, and solution enhancements. In addition, there are six patch releases per year that prioritize sub features to ensure compatibility across different releases. Long Term Support releases are provided at the end of the twelve-month release cycle, with a focus on fixing any solution defects that may arise.

- The out-of-the box automation provided with Infrastructure Blocks ensures a consistent, carrier-grade deployment or upgrade of the hardware and cloud platform software each time. This eliminates configuration errors to further reduce issues found in production.

- When bringing together various hardware and software components, CSPs frequently manage different release cycles to support a range of workload requirements. To address any difficulties with software compatibility and life cycle management, Dell Technologies has created a system release cadence process. It includes testing, validating, and locking the release compatibility matrixes for all Infrastructure Block components. This helps to resolve deployment problems affecting software compatibility and Day 2+ life cycle management procedures.

- Dell Professional Services can also provide custom integrations into a CSP’s CI/CD pipeline, providing the CSP with validated updates to the cloud infrastructure that pass directly into the CSP’s CI/CD tool chains to enhance DevOps processes.

- In addition, Dell offers single-call, carrier grade support that meets telecom grade SLAs with guaranteed response and service restoration times for the entire cloud stack (hardware & software).

- The declarative automation provided with Infrastructure Blocks eliminates the time spent updating scripts and playbooks to push out system updates and minimizes the risk of configuration errors that lead to fault or performance issues.
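The locked release compatibility matrix mentioned in the list above lends itself to a simple automated check before an upgrade. The sketch below is an assumption about how such a check could look, not a description of Dell's tooling. The release name, component names, and versions are hypothetical.

```python
# Hypothetical locked compatibility matrix: for each release, the set of
# validated versions per component.
LOCKED_MATRIX = {
    "release-A": {
        "platform": {"1.0"},
        "bios": {"2.1.0"},
        "nic_firmware": {"22.5", "22.6"},
    },
}


def incompatible_components(release: str, installed: dict) -> list:
    """Return the installed components whose versions are not validated for the release."""
    allowed = LOCKED_MATRIX[release]
    return sorted(
        name
        for name, version in installed.items()
        if version not in allowed.get(name, set())
    )


# Hypothetical server inventory.
installed = {"platform": "1.0", "bios": "2.0.3", "nic_firmware": "22.6"}

mismatches = incompatible_components("release-A", installed)
print(mismatches)  # ['bios']
```

A component that does not appear in the matrix at all is reported as incompatible, which errs on the side of flagging unvalidated combinations rather than silently accepting them.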

Summary

Dell Telecom Infrastructure Blocks for Red Hat offers a streamlined and efficient way to build and manage Telco cloud infrastructure. From initial technology onboarding to Day 2+ operations, they simplify every step of the process. This makes it easier for CSPs to transition from their vertically integrated, legacy architectures of today to an open cloud-native software platform running on industry standard hardware that delivers reliable and high-quality services to their customers.

This blog post is a collaborative effort from the staff of Dell Technologies and Red Hat.

Monetizing Network Exposure Through Open APIs

Fri, 08 Dec 2023 19:40:56 -0000

Market Background

5G, especially 5G standalone, has not yet developed to fulfill expectations. End users are not yet seeing significant differences in comparison to 4G, and CSPs are not yet seeing new revenue streams. To address these challenges, we have previously presented two blogs (Network Slicing and Network Edge). In this third blog, we continue to describe a realistic view of how CSPs could maximize the 5G standalone experience and go beyond being merely connectivity providers. This blog focuses on exposing the network capabilities (services and user/network information) that service providers and enterprises can use to enable innovative and monetizable services.

Figure 1: Monetizing Network Exposure

The success of numerous innovative mobile applications can be traced to the availability of mobile Software Development Kits (SDKs). SDKs are available for both iOS and Android mobile platforms. These SDKs provide open tools, libraries, and documentation that allow application developers to easily create mobile applications that rely upon the capabilities of existing mobile platforms (such as notifications and analytics) and device hardware (such as GPS and camera). Most importantly, these two mobile platforms alone currently support over four billion users. The next step is to apply the same principles on the network side by using Open APIs that allow unified access to network capabilities for increased network exposure.

The concept of network exposure is not new. There have been a few less-than-successful attempts in the past, such as Service Capabilities Exposure Function (SCEF) and APIs for the IMS/Voice. These solutions were not able to scale sufficiently to attract a significant number of application developers. The specifications have been too complicated for anybody outside of the telecom world to understand or implement. The integration of network exposure into the 5G design is groundbreaking. API exposure is now fundamental to 5G and is natively built into the architecture, enabling applications to seamlessly interact with the network.

Monetizing mobile networks using Open APIs relies on the implementation of communication APIs for voice, video, and messaging, as well as network APIs for location, authentication, and quality of service. By exposing these capabilities through Open APIs, CSPs can establish partnerships by facilitating the creation of tailored, high-value services for businesses, thereby enabling them to monetize 5G beyond traditional connectivity and bundled offerings. These new revenue streams are paramount as the traditional revenue streams from mobile broadband services are flat while costs continue to rise. Moreover, the deployment of a cloud-native 5G standalone network requires substantial investments, making it crucial to identify new revenue streams that can justify the business case.

Technical Background and Standardization

5G standalone was specified in 3GPP Release 15, and its architecture standardized the Network Exposure Function (NEF). One of the 5G core network functions, NEF allows applications to subscribe to network changes or to request network information and capabilities. NEF enables an extensive set of network exposure capabilities, but it lacks the scale, agility, and simplicity that application developers require. GSMA’s Open Gateway Initiative, the CAMARA project, and TM Forum’s Open APIs all aim to address this gap.

- GSMA’s Open Gateway Initiative achieves scale by committing CSPs to implement the common system framework in a unified manner.

- The actual Service APIs are defined under the CAMARA project, where the work is done as an open-source project at the Linux Foundation.

- TM Forum’s Open APIs are used in this framework for Operation, Administration, and Management (OAM).

The use case is well described in the GSMA’s Open Gateway white paper.

Network APIs

Open APIs and network capabilities in this new concept have much to offer. The CAMARA project has already defined 18 Service APIs such as Quality on Demand, Device Location, Device Status, Number Verification, Simple Edge Discovery, One Time Password SMS, Carrier Billing, and SIM swap. Three of the most popular elements are described in more detail below:

Quality on Demand: It is easy to imagine that multiple applications can benefit from better quality (bandwidth and latency). The challenge is how the network can fulfill this request instantaneously and cost-effectively. Some proofs of concept (PoCs) demonstrate that a Quality on Demand request can trigger either a new Network Slice or a different Quality of Service Class Identifier (QCI). For more information, see our Network Slicing blog.

Device Location: This API verifies that the device is in a specific geographical area. The main benefits of the network-based request are that it can be used when a GPS signal is not available, and it is considered more trustworthy (location info cannot be spoofed).

Device Status: This API provides a very simple and straightforward request to determine whether the subscriber is roaming.
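To make the Device Location and Device Status checks more concrete, here is a minimal sketch of the logic such requests boil down to. It is not the CAMARA API definition: the names `Circle`, `verify_location`, and `is_roaming` are hypothetical, the network-derived position is assumed to be already known, and roaming is simplified to a plain comparison of home and serving network codes.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


@dataclass(frozen=True)
class Circle:
    """Hypothetical circular area: centre in decimal degrees, radius in metres."""
    lat: float
    lon: float
    radius_m: float


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def verify_location(network_lat: float, network_lon: float, area: Circle) -> bool:
    """Return True if the network-derived position lies inside the requested area."""
    distance = haversine_m(network_lat, network_lon, area.lat, area.lon)
    return distance <= area.radius_m


def is_roaming(home_plmn: str, serving_plmn: str) -> bool:
    """Simplified assumption: the device is roaming when the serving network
    code differs from the home network code."""
    return home_plmn != serving_plmn


if __name__ == "__main__":
    # Illustrative coordinates and network codes only.
    venue = Circle(lat=60.1699, lon=24.9384, radius_m=2_000)
    print(verify_location(60.2055, 24.6559, venue))  # device roughly 16 km away
    print(is_roaming("24405", "26201"))
```

The application only ever sees a yes/no answer rather than raw coordinates or network identifiers, which fits the trust and privacy points made about the network-based request.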

None of these Service APIs offer anything unique that the market has not seen before. Their intrinsic value comes from being part of a unified platform that enables a consistent way of accessing network capabilities and information, similar to how mobile SDKs became a catalyst to the thriving mobile device ecosystem we know today. Only time will tell how much value-add application developers will see from these Open APIs.

Use Cases and Commercial Models

The value of new features and applications is considered whenever 5G monetization is discussed. We are still in the early phase of Open APIs, but the TM Forum’s Catalyst Program and CAMARA Open API showcases can give good insights into what the coming commercial deployments could look like. These programs have triggered several PoCs where the related use cases have required optimized performance (Quality on Demand), user location/roaming information, and feedback on consumer experience. In these PoCs, the service providers have been able to consume the Open APIs directly or through a hyperscaler marketplace. As an example, in one PoC, guaranteed Quality of Delivery was needed for a 360-degree 8K live streaming service with content monetization through APIs (with CSPs curating markets at the edge). Another PoC included an end-to-end implementation of a marketplace from which one could consume network services from multiple CSP networks (Simple hyperscaler integrated network experience).

We can expect several commercial models for these Open APIs, because these APIs can be utilized in various ways such as providing network/subscriber information, optimizing functionalities/features, and allocating network capacity/resources. However, it is yet to be determined how these Open APIs can be consumed easily. Service providers are unlikely to integrate and set up individual contracts with every other service provider in the world. Therefore, there must be a place for aggregation in order to hide the complexity behind a portal. This role can be assumed by a group of service providers or hyperscalers who can onboard these services onto their marketplaces.
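The aggregation role can be pictured as a thin facade in front of many CSP backends. The sketch below is purely illustrative: the `Aggregator` class, its `onboard` method, and routing by phone-number prefix are assumptions made for the example, and a real marketplace would need far more than a prefix to identify the serving operator (number portability alone rules that out).

```python
from typing import Callable, Dict

# A backend is any callable that answers a roaming query for a phone number.
# In practice this would wrap one CSP's own API endpoint and contract.
Backend = Callable[[str], bool]


class Aggregator:
    """Hypothetical single entry point that hides per-CSP integrations."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def onboard(self, prefix: str, backend: Backend) -> None:
        """Register a CSP backend for numbers starting with the given prefix."""
        self._backends[prefix] = backend

    def is_roaming(self, phone_number: str) -> bool:
        """Route the request to the backend with the longest matching prefix."""
        matches = [p for p in self._backends if phone_number.startswith(p)]
        if not matches:
            raise LookupError(f"no onboarded CSP serves {phone_number}")
        return self._backends[max(matches, key=len)](phone_number)


if __name__ == "__main__":
    portal = Aggregator()
    # Stub backends standing in for two different CSP networks.
    portal.onboard("+358", lambda number: False)
    portal.onboard("+49", lambda number: True)
    print(portal.is_roaming("+358401234567"))
```

The application developer integrates once with the portal, while contracts and technical integrations with individual CSPs stay behind the `onboard` step.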

Challenges and The Road Ahead

One of the main Key Performance Indicators (KPIs) that define success for service providers is the ability to scale and have a global reach. It is critical that there be no fragmentation and that the community work towards a unified approach. Jointly agreed upon solutions and specifications require more time to develop; therefore, another year may pass before we start to see commercial use case launches (as forecast by Börje Ekholm, Ericsson CEO, during the Q3-2023 earnings call).

The journey to unified 5G is not easy, and it presents various challenges:

- Technology migration poses a challenge as mobile operators need to transition from existing systems to effectively utilize the potential of the 5G NEF and Open APIs.

- Another significant obstacle is bridging the gap between software developers and mobile operators. Developers require clear, simple, unified, and well-documented APIs to leverage the network capabilities effectively.

- At the same time, mobile operators must ensure that exposing their network does not compromise the security of the network while ensuring that end users have full control of where and how their information is stored and used.

Some service providers have already launched platforms with a few Service APIs. Early deployments can introduce a risk of fragmentation. However, the risk is outweighed by the positive impact of testing the concept in the real world and constructing more concrete requirements from actual user experiences with these services.

Regardless of how much commercial success these new Network and Service APIs realize in the coming years, they will have made an important step towards more Open, Agile, and Programmable networks. Similarly, Dell has been embracing this vision in our Telecom strategy, as reflected in our Multi-Cloud Foundation Concept, Bare Metal Orchestration, and Open RAN development projects. In our vision, Open APIs are needed in all layers (Infrastructure, Network, Operations, and Services). Stay tuned for more to come from Dell about the open infrastructure ecosystem and automation (#MWC24).