Defining the future of O-RAN Management with Vodafone, Amdocs, and Dell Technologies

Thu, 22 Feb 2024 13:08:00 -0000


Seizing the initiative to define the future of Open RAN management

The transformative journey of communication service provider (CSP) networks has reached a new, exciting stage. As operators increasingly adopt cloud technologies and embrace disaggregated architecture, the O-RAN Alliance is leading an expansion into the radio access network (RAN) realm. By disrupting the traditional RAN landscape, O-RAN is driving the industry towards a software-driven approach that leverages diverse software and hardware from multiple vendors to achieve the best possible outcomes. The goal is to create integrated, tested and certified solutions that deliver lower total cost of ownership (TCO) and amplified innovation.

With over 40 years of industry expertise, Amdocs is a leading provider of software and services to communications and media companies. The company offers market-leading capabilities for service providers’ operations support systems (OSS) and radio access networks (RANs), and has delivered proven solutions in network management, planning, and optimization. To meet emerging challenges, Amdocs also collaborates closely with leading industry organizations such as the Telecom Infra Project and the O-RAN Alliance.

Dell Technologies is a global leader in digital transformation and infrastructure. Its products are widely utilized by global telecom operators in network and IT infrastructure, ranging from purpose-built telecom servers to cloud-native orchestration and infrastructure automation solutions. The company also offers bundled solutions developed in close collaboration with a diverse ecosystem of partners in O-Cloud and workload layers, and has extensive representation in key industry forums, including the O-RAN Alliance, Telecom Infra Project, and 3GPP.

To advance a shared vision for O-RAN management, our two companies have partnered to enable cloud transformations throughout the industry. One example is Amdocs Service Management and Orchestration (SMO) for O-RAN, whose capabilities include orchestration, inventory, and assurance for any managed element, including xApps and rApps.

While the Amdocs offering supports any O-Cloud, across bare metal and CaaS, when integrated with Dell Telecom Infrastructure Automation Suite it supports deployments on Dell Technologies’ industry-leading telecom servers, as well as O-Cloud layer software provided by partner organizations. This integration enables CSPs to rapidly provision, manage, and monitor their O-Cloud infrastructure, and to simplify the lifecycle management of infrastructure nodes in a dynamic, disaggregated network. A proof of concept (PoC) showcasing this solution's capabilities is currently underway at Vodafone Group, covering both immediate use cases and a roadmap of forward-looking scenarios.

Bringing efficiencies to O-RAN with Service Management and Orchestration (SMO)

Service Management and Orchestration (SMO) is a key pillar in service and network orchestration, addressing specific CSP needs. By operating across multiple hierarchies, SMO efficiently manages multi-vendor, multi-technology entities with varying lifecycles. Furthermore, because it focuses on cloud infrastructure and on virtualized and containerized cloud-native functions (CNFs), it is fully aligned with the industry’s evolving architecture, integrating with, and actively contributing to, O-RAN standards and interfaces.

Amdocs SMO provides all the capabilities required to manage O-RAN. It supports the end-to-end lifecycle of the network, including design and onboarding, orchestration and management, inventory, and assurance processes. It also embraces the openness and disaggregation of O-RAN, supporting heterogeneous multi-technology, multi-vendor networks, which brings CSPs cost efficiencies and empowers innovation.

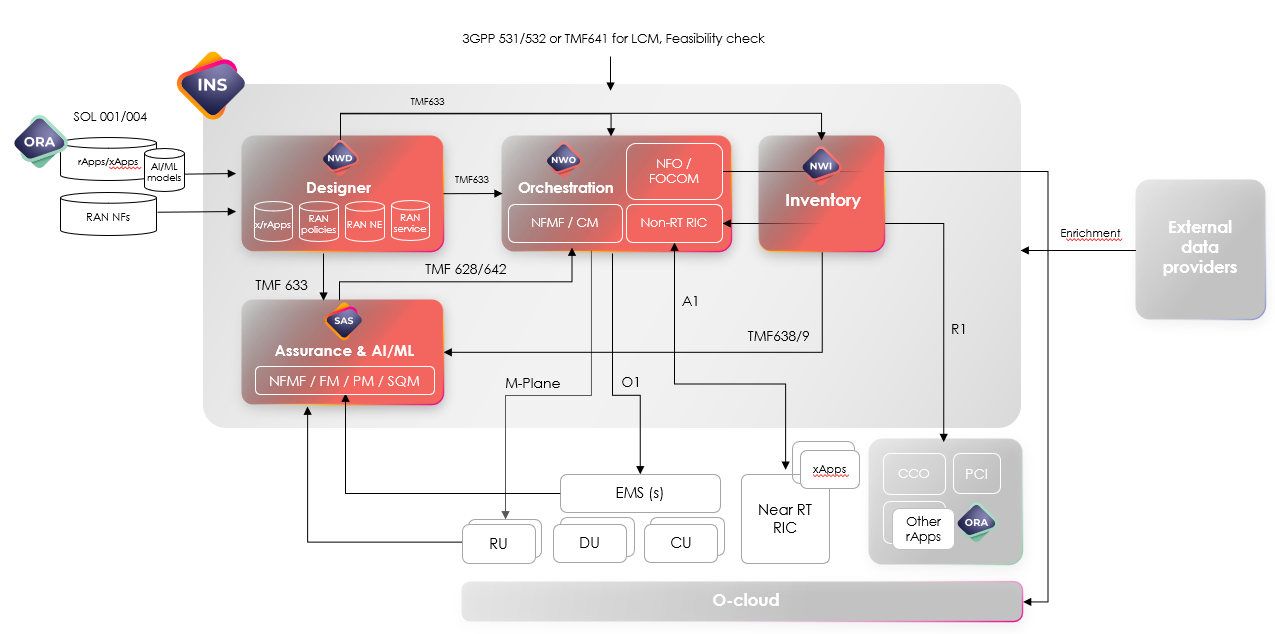

Figure 1 Amdocs Service Management and Orchestration Solution Overview

Amdocs’ SMO supports a diverse set of use cases, from O-RAN network rollout, network slicing and O-RAN energy efficiency savings, to assurance and closed-loop operations. Furthermore, it’s instrumental in simplifying the rollout process, addressing challenges presented by the disaggregated, multi-vendor nature of O-RAN.

After rollout, SMO plays a pivotal role in managing each individual network slice, ensuring RAN performance, maintaining service-level objectives, and taking corrective actions. It does so by leveraging standard fault management (FM), performance management (PM), and service quality management (SQM) capabilities, as well as O-RAN apps deployed within both the Non-RT RIC (rApps) and the Near-RT RIC (xApps) to support different optimization use cases. Throughout, the solution fully adheres to O-RAN specifications and standards.
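To make the closed loop described above more concrete, here is a minimal Python sketch of the assurance step: compare performance-management (PM) samples against service-level objectives and map each breach to a corrective action. The KPI names, thresholds, and action names are hypothetical illustrations, not Amdocs SMO interfaces; in practice the corrective action could be carried out by an rApp or xApp.

```python
from dataclasses import dataclass


@dataclass
class KpiSample:
    """One performance-management (PM) sample for a cell (hypothetical schema)."""
    cell_id: str
    name: str
    value: float


# Hypothetical service-level objectives: KPI name -> (bound type, threshold).
SLOS = {
    "dl_throughput_mbps": ("min", 100.0),
    "prb_utilization_pct": ("max", 85.0),
}


def find_breaches(samples, slos=SLOS):
    """Return the samples that violate their service-level objective."""
    breaches = []
    for s in samples:
        if s.name not in slos:
            continue  # no objective defined for this KPI
        bound, threshold = slos[s.name]
        if (bound == "min" and s.value < threshold) or (bound == "max" and s.value > threshold):
            breaches.append(s)
    return breaches


def corrective_actions(breaches):
    """Map each breach to a hypothetical corrective action."""
    actions = []
    for s in breaches:
        if s.name == "prb_utilization_pct":
            actions.append((s.cell_id, "rebalance_load"))  # e.g., steer traffic to neighbor cells
        else:
            actions.append((s.cell_id, "raise_alarm"))     # hand over to fault management
    return actions


samples = [
    KpiSample("cell-1", "dl_throughput_mbps", 80.0),
    KpiSample("cell-2", "prb_utilization_pct", 92.0),
    KpiSample("cell-3", "dl_throughput_mbps", 150.0),
]
print(corrective_actions(find_breaches(samples)))
# [('cell-1', 'raise_alarm'), ('cell-2', 'rebalance_load')]
```

Keeping detection (`find_breaches`) separate from remediation (`corrective_actions`) mirrors the split between assurance and the apps that act on the network.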

Streamlining with Infrastructure and O-Cloud automation

Dell Telecom Infrastructure Automation Suite helps simplify and automate infrastructure management in disaggregated networks, allowing CSPs to seamlessly provision, manage, and monitor their infrastructure. In addition to supporting the O-RAN O2 infrastructure management services (O2 IMS) and deployment management services (O2 DMS) APIs, the suite provides an open, model-driven framework that acts as a single point of control across the infrastructure. It serves as the unified entry and exit point for automated deployment and orchestration of multi-site, multi-vendor infrastructure, as well as streamlined Day 2 lifecycle management, including updates and upgrades.

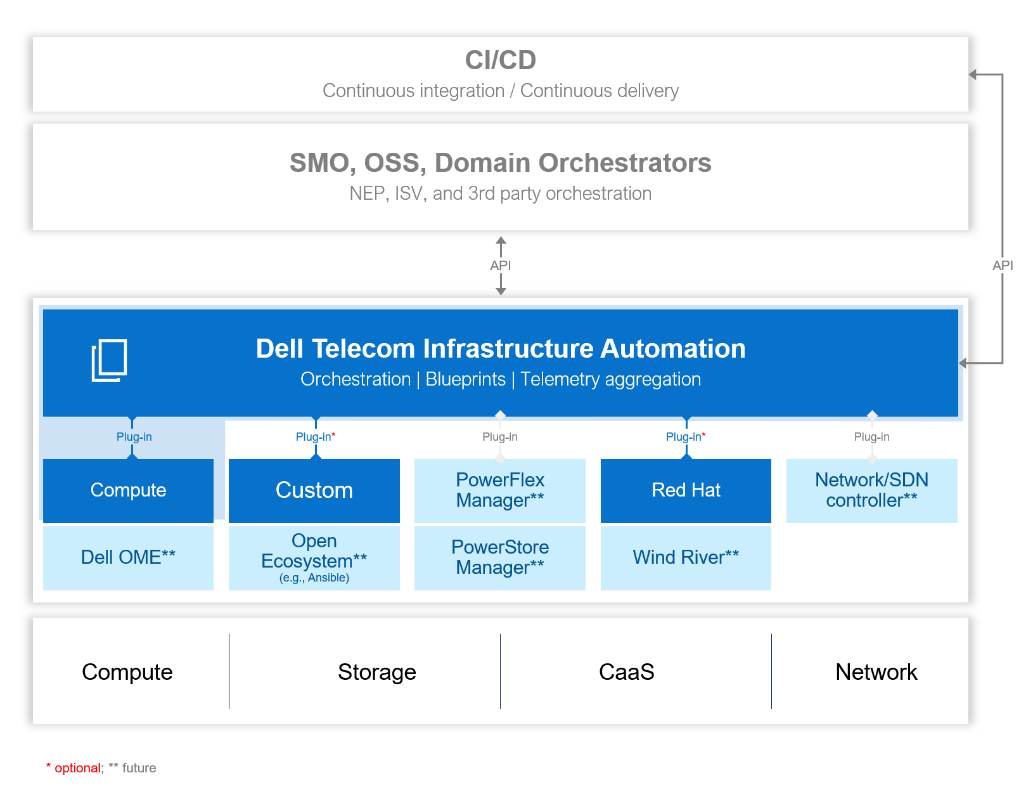

Figure 2 Dell Telecom Infrastructure Automation Suite

Dell Telecom Infrastructure Automation Suite’s open and extensible architecture is the driving force behind O-RAN infrastructure automation. Its components span full orchestration, data-driven telemetry of the cloud infrastructure, resource controllers, API adaptors, a user interface, and a single pane of glass for the complete cloud infrastructure.

Importantly, with its open declarative automation framework, the suite also supports cloud infrastructure operations and delivers lower infrastructure total cost of ownership (TCO), accelerated time to market (TTM) and time to repair (TTR), and a modular, extensible architecture that avoids vendor lock-in.
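The declarative principle can be illustrated with a reconciliation step: the operator declares the desired state of each node, and the automation compares it with what is actually observed and derives the actions needed to converge. The Python sketch below shows only that diffing step; the node names and attributes are hypothetical, and this is not the suite's actual data model or API.

```python
def reconcile(desired: dict[str, dict], observed: dict[str, dict]) -> list[tuple[str, str]]:
    """Diff the declared (desired) node state against the observed state.

    Returns the actions an automation engine would need to take so that the
    observed infrastructure converges on the declaration.
    """
    actions = []
    for node, spec in sorted(desired.items()):
        if node not in observed:
            actions.append(("provision", node))     # declared but missing
        elif observed[node] != spec:
            actions.append(("update", node))        # present but drifted (e.g., firmware)
    for node in sorted(observed):
        if node not in desired:
            actions.append(("decommission", node))  # running but no longer declared
    return actions


desired = {
    "xr8000-01": {"firmware": "2.1", "caas": "4.14"},
    "xr8000-02": {"firmware": "2.1", "caas": "4.14"},
}
observed = {
    "xr8000-01": {"firmware": "2.0", "caas": "4.14"},
    "xr5610-09": {"firmware": "1.9", "caas": "4.12"},
}
print(reconcile(desired, observed))
# [('update', 'xr8000-01'), ('provision', 'xr8000-02'), ('decommission', 'xr5610-09')]
```

Running the same comparison repeatedly is idempotent: once observed state matches the declaration, `reconcile` returns an empty list and nothing further happens.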

A ground-breaking proof of concept with Vodafone

A key takeaway from our collaboration with Vodafone was that replacing manual processes with zero-touch operations would be a real game changer. To showcase this vision, Amdocs and Dell Technologies set the goal of building a PoC that demonstrates it. Taking an end-to-end, distributed, zero-touch deployment approach, we set out to build a model that significantly reduces the time needed to bring new sites and services online. Ultimately, Vodafone also seeks to automate radio network rollout and to validate the joint solution’s ability to manage a hybrid, multi-vendor, disaggregated O-RAN network.

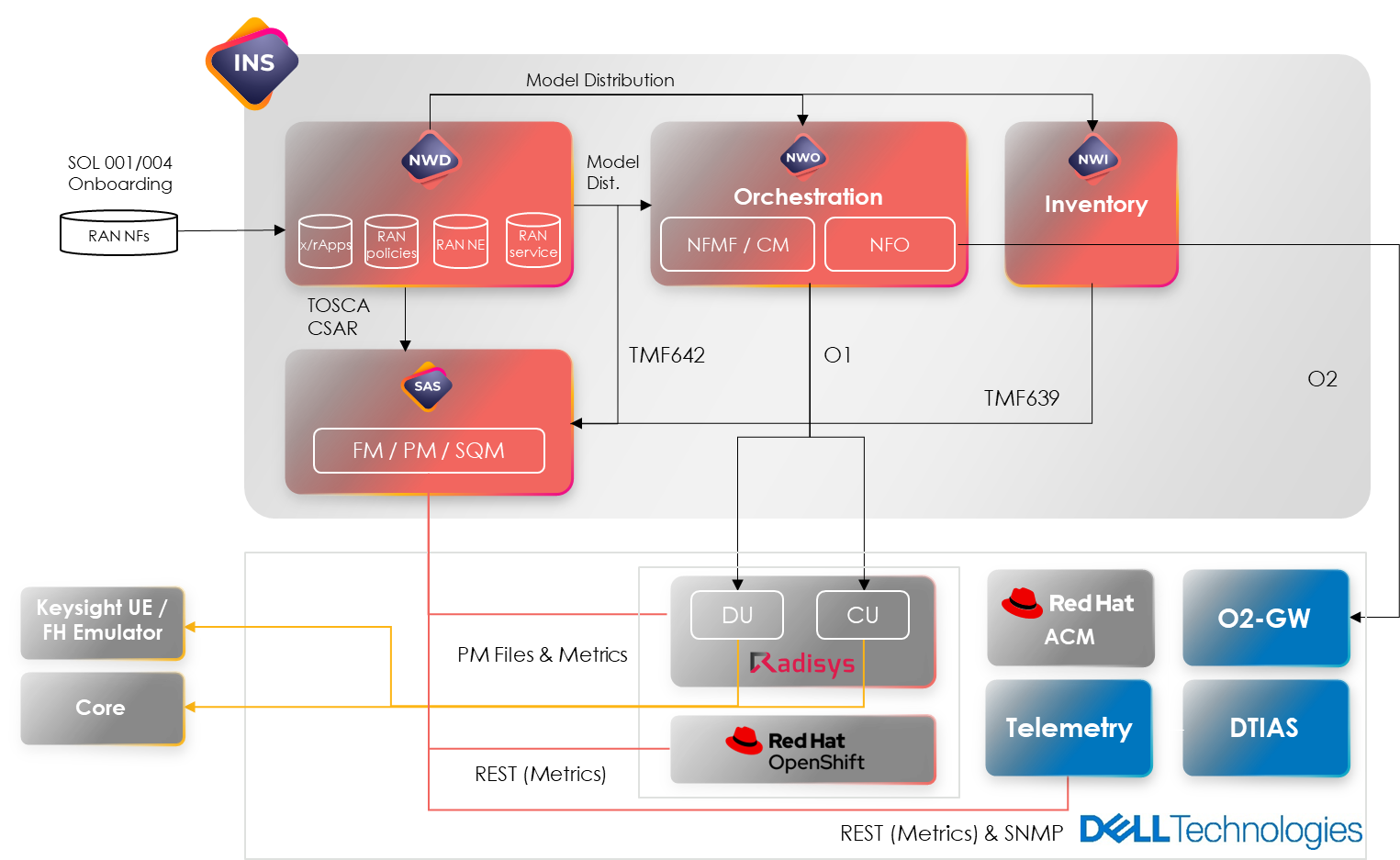

For this PoC, a joint blueprint was created, whereby Amdocs would manage SMO and system integration, with Dell overseeing O-Cloud and infrastructure (including bare metal) layers, and Radisys providing O-RAN CNFs. Additional software will include Red Hat® OpenShift®, a hybrid cloud application platform powered by Kubernetes, as a CaaS platform and Open Telemetry for performance metrics in CaaS.

Figure 3 Vodafone O-RAN PoC blueprint

Vodafone Proof of Concept use cases

The PoC aims to showcase the seamless integration of Amdocs SMO with Dell Telecom Infrastructure Automation Suite, enabling zero-touch deployment of a RAN site. The deployment takes the infrastructure from bare metal to a running cloud platform using a declarative approach. Once the site is deployed, Amdocs and Dell will demonstrate the end-to-end implementation through a data call. Dell Telecom Infrastructure Automation Suite and the assurance capabilities of Amdocs SMO will collect telemetry from the infrastructure, the CaaS layer, and the RAN network functions and deliver it to Amdocs SMO, enabling real-time monitoring of alarms and events. The setup is versatile, supporting both service assurance and closed-loop automation.

Roadmap to innovation

Looking ahead, Amdocs and Dell Technologies remain committed to evolving SMO and O-Cloud management in alignment with O-RAN standards, and empowering CSPs with the flexibility and agility they need for O-RAN deployment activities.

Amdocs SMO remains central to this goal, supporting a rich set of capabilities, including model-driven dynamic orchestration, service decomposition, network slicing, dynamic inventory, and closed-loop SLA assurance. Importantly, we’re also investing in specific O-RAN capabilities such as the O1, O2, R1, and A1 interfaces, as well as management of xApps and rApps and their respective ML models.

Meanwhile, Dell Telecom Infrastructure Automation Suite effectively manages the complete lifecycle of the O-Cloud, using the O2 API and RESTful APIs. Employing an open software framework with vendor-agnostic resource controllers, the Suite empowers CSPs to fully capitalize on the advantages of disaggregated infrastructure and cloud layers. It can also seamlessly configure the O-Cloud by orchestrating intricate dependencies, coordinating tasks across various infrastructure elements and cloud stacks.
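Orchestrating dependencies of this kind amounts to ordering tasks in a dependency graph. The sketch below uses Python's standard `graphlib` module (Python 3.9+) to group a hypothetical set of site bring-up tasks into waves, where every task in a wave depends only on tasks in earlier waves and can therefore run in parallel. The task names and their dependencies are illustrative assumptions, not the suite's actual workflow.

```python
from graphlib import TopologicalSorter

# Hypothetical site bring-up tasks, each mapped to the tasks it depends on.
SITE_TASKS = {
    "configure_bmc_and_bios": set(),
    "install_host_os": {"configure_bmc_and_bios"},
    "deploy_caas_cluster": {"install_host_os"},
    "enable_accelerator_operator": {"deploy_caas_cluster"},
    "register_o_cloud_with_smo": {"deploy_caas_cluster"},
    "deploy_ran_cnfs": {"enable_accelerator_operator", "register_o_cloud_with_smo"},
}


def deployment_waves(tasks):
    """Return lists of tasks that can run in parallel, in dependency order."""
    sorter = TopologicalSorter(tasks)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies form a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all predecessors of these tasks are done
        waves.append(ready)
        sorter.done(*ready)                 # mark the wave complete, unlocking successors
    return waves


for i, wave in enumerate(deployment_waves(SITE_TASKS), start=1):
    print(i, wave)
```

Here the accelerator and SMO-registration tasks land in the same wave because both depend only on the CaaS cluster, and the RAN CNFs wait for both.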

Even as Amdocs and Dell Technologies solidify our positions as key players in O-RAN development, we remain equally excited to find new ways to collaborate and innovate in the ever-evolving O-RAN management landscape.

Related Blog Posts

Dell Shifts vRAN into High Gear on PowerEdge with Intel vRAN Boost

Thu, 17 Aug 2023 18:33:05 -0000


What has passed

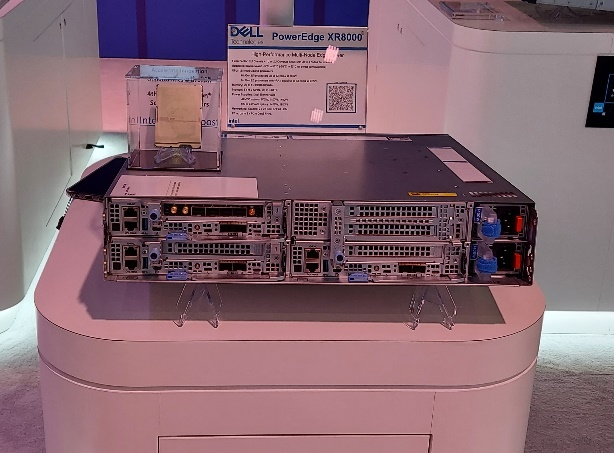

Mobile World Congress 2023 was an important event for both Dell Technologies and Intel that marked a true foundational turning point for vRAN viability. At this event, Intel launched its 4th Gen Intel Xeon Scalable processor, with Intel vRAN Boost, and Dell announced two new ruggedized server platforms, the PowerEdge XR5610 and XR8000, with support for vRAN Boost CPU SKUs.

The features and capabilities of the PowerEdge XR5610 and XR8000 have been highlighted in previous blogs, and both servers have been available to order since May 2023. These new ruggedized servers have been evaluated and adopted as Cloud RAN reference platforms by network equipment providers (NEPs) such as Samsung and Ericsson. Short-depth, NEBS-certified, and TCO-optimized, these servers are purpose-built for the demanding deployment environments of mobile operators and are now paired with the Intel vRAN Boost processor to provide a powerful and efficient alternative to classical appliance options.

What is now

Starting August 16, 2023, the 4th Gen Intel Xeon Scalable processor with Intel vRAN Boost is available to order with the PowerEdge XR5610 and XR8000. These two critical pieces of the vRAN puzzle have been brought together and are now available to order from our PowerEdge XR Rugged Servers page with the following CPU SKUs.

| CPU SKU | Cores | Base Freq. (GHz) | TDP (W) |
|---|---|---|---|
| 6433N | 32 | 2.0 | 205 |
| 5423N | 20 | 2.1 | 145 |
| 6423N | 28 | 2.0 | 195 |
| 6403N | 24 | 1.9 | 185 |

Table 1. Intel vRAN Boost SKUs available today from Dell

Additional details on these new CPU SKUs and all 4th Gen Intel® Xeon® Scalable processors can be found on the Intel ARK site.

These processors, with Intel vRAN Boost, integrate key acceleration blocks for 4G and 5G Radio Layer 1 processing into the CPU. These include:

- 5G Low Density Parity Check (LDPC) encoder/decoder

- 4G Turbo encoder/decoder

- Rate match/dematch

- Hybrid Automatic Repeat Request (HARQ) with access to DDR memory for buffer management

- Fast Fourier Transform (FFT) block providing DFT/iDFT for the 5G Sounding Reference Signal (SRS)

- Queue Manager (QMGR)

- DMA subsystem

One of the most interesting features of the vRAN Boost CPU is how software accesses this acceleration block. Although it is integrated on-chip with the CPU, the vRAN Boost block still presents itself to the cores and the OS as a PCIe device. The genius of this approach lies in software compatibility: virtual Distributed Unit (vDU) applications written for the previous-generation hardware access the new vRAN Boost block using the same standardized, open APIs that were developed for the previous-generation product. The result is a platform that can support past, present (and possibly future) generations of Intel’s vRAN-optimized hardware with the same software image.
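Because the integrated block is exposed as a PCIe device, the OS discovers it the same way it discovers an add-in card such as the ACC100. As a minimal, Linux-only illustration, the Python sketch below walks sysfs and lists PCI functions by vendor ID. It filters only on Intel's PCI vendor ID (0x8086); the specific device IDs of the ACC100 and the vRAN Boost block are not given here and should be taken from the platform documentation. This is not Intel or Dell tooling.

```python
from pathlib import Path

INTEL_VENDOR_ID = "0x8086"  # PCI vendor ID assigned to Intel


def list_pci_functions(root="/sys/bus/pci/devices", vendor=INTEL_VENDOR_ID):
    """Return (address, device_id) pairs for PCI functions from one vendor.

    On Linux, each PCI function appears under /sys/bus/pci/devices as an entry
    named by its address (e.g. 0000:51:00.0) whose 'vendor' and 'device'
    files contain hexadecimal IDs such as '0x8086'.
    """
    base = Path(root)
    if not base.is_dir():
        return []  # not Linux, or sysfs is not mounted
    found = []
    for entry in sorted(base.iterdir()):
        vendor_file, device_file = entry / "vendor", entry / "device"
        if not (vendor_file.is_file() and device_file.is_file()):
            continue
        if vendor_file.read_text().strip().lower() == vendor:
            found.append((entry.name, device_file.read_text().strip().lower()))
    return found


for address, device_id in list_pci_functions():
    print(address, device_id)
```

The `root` parameter exists so the function can be pointed at a copy of the sysfs tree, for example in tests.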

What is to come

Prior to vRAN Boost, the reference architecture for the vDU was a 3rd Gen Intel Xeon Scalable processor paired with a FEC/LDPC accelerator such as the Intel vRAN Accelerator ACC100 Adapter, and most of today’s vRAN deployments use this configuration. While the ACC100 meets the L1 acceleration needs of vRAN, it does so at a price: the space of a half-height, half-length (HHHL) PCIe card and an additional 54 W of power that must be supplied and cooled. In addition, occupying a PCIe slot reduces I/O expansion options and limits in-chassis scaling due to slot count. vRAN Boost alleviates both constraints.

With the new Intel vRAN Boost processors’ fully integrated acceleration, Intel has taken a huge step in closing the performance gap with purpose-built hardware, while remaining true to the “Open” in O-RAN.

Intel says that the new Intel vRAN Boost processor delivers up to 2x capacity and ~20% compute power savings compared to its previous-generation processor with ACC100 external acceleration. At the cell site, where every watt counts, operators are constantly exploring opportunities to reduce both power consumption and the associated “cooling tax” of keeping the hardware within its operational range, typically within a sealed environment.
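Taken together, the two figures compound. A quick back-of-the-envelope calculation, using only the numbers quoted above (up to 2x capacity at roughly 80% of the previous power), gives the implied change in capacity per watt; actual results depend on the scenario, as the words “up to” suggest.

```python
def capacity_per_watt_gain(capacity_ratio: float, power_ratio: float) -> float:
    """Relative capacity per watt: (new/old capacity) divided by (new/old power)."""
    return capacity_ratio / power_ratio


# Figures quoted above: up to 2x capacity with ~20% lower compute power (ratio 0.8).
gain = capacity_per_watt_gain(capacity_ratio=2.0, power_ratio=0.8)
print(f"Implied capacity per watt: {gain:.2f}x")  # Implied capacity per watt: 2.50x
```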

Dell and Intel have worked together to provide early-access XR5610s and XR8000s provisioned with Intel vRAN Boost to multiple partners and customers for integration, evaluation, and proofs of concept. One early evaluator, Deutsche Telekom, states:

“Deutsche Telekom recently conducted a performance evaluation of Dell’s PowerEdge XR5610 server, based on Intel’s 4th Gen Intel Xeon Scalable processor with Intel vRAN Boost. Testing under selected scenarios concluded a 2x capacity gain, using approximately 20% less power versus the previous generation. We aim to leverage these significant performance gains on our journey to vRAN.”

-- Petr Ledl, Vice President of Network Trials and Integration Lab and Access Disaggregation Chief Architect, Deutsche Telekom AG

With the telecom-optimized PowerEdge XR5610 and XR8000 and 4th Gen Intel Xeon Scalable processors with Intel vRAN Boost providing such a solid industry foundation, expect to see accelerated deployments of open, vRAN-based infrastructure solutions.

What is Happening in the Network Edge

Mon, 26 Jun 2023 10:59:44 -0000


Where is the Network Edge in Mobile Networks

The notion of ‘Edge’ can take on different meanings depending on the context, so it’s important to first define what we mean by Network Edge. Edge can be broadly classified into two categories: Enterprise Edge and Network Edge. The former refers to infrastructure hosted by the company using the service, while the latter refers to infrastructure hosted by the Mobile Network Operator (MNO) providing the service.

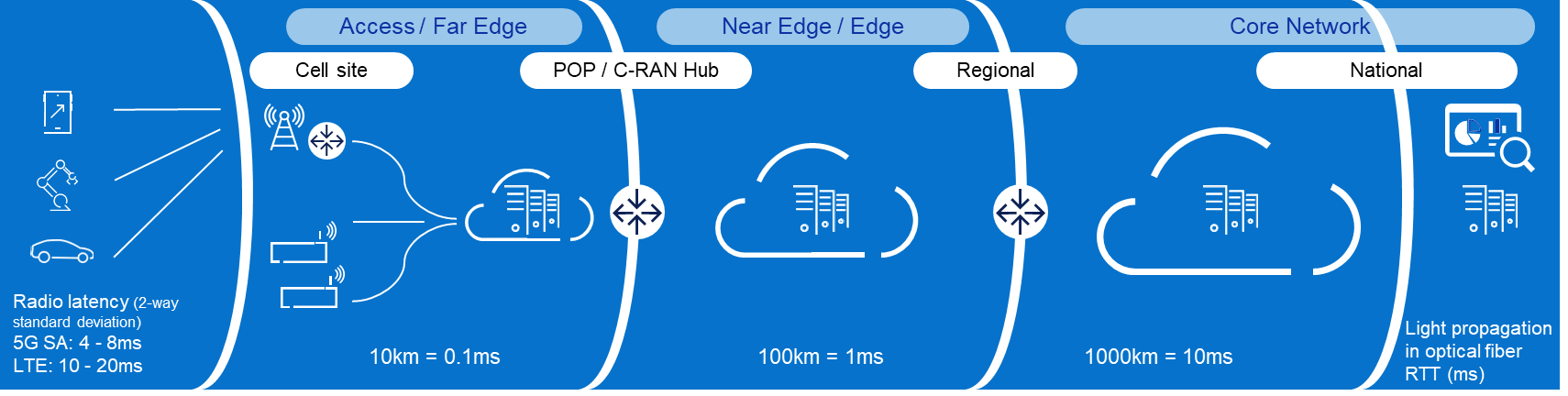

This article focuses on the Network Edge, which can be located anywhere from the Radio Access Network (RAN) to next to the Core Network (CN). Network Edge sites collocated with the RAN are often referred to as Far Edge.

What is in the Network Edge

In a 5G Standalone (5G SA) Network, a Network Edge site typically contains a cloud platform that hosts a User Plane Function (UPF) to enable local breakout (LBO). It may include a suite of consumer and enterprise applications, for example, those that require lower latency or more privacy. It can also benefit the transport network when large content such as Video-on-Demand is brought closer to the end users.
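Local breakout means the edge UPF steers selected traffic to a local data network instead of carrying it to the central core. In 3GPP terms this is commonly done with an uplink classifier (UL CL) that matches on destination; the Python sketch below reduces that idea to a destination-prefix lookup with the standard `ipaddress` module. The prefixes are hypothetical, and in a real network the steering rules are installed in the UPF by the SMF rather than hard-coded like this.

```python
import ipaddress

# Hypothetical destination prefixes served by applications at this Network Edge site.
EDGE_PREFIXES = [ipaddress.ip_network(p) for p in ("10.20.0.0/16", "192.0.2.0/24")]


def route_uplink(dst_ip: str) -> str:
    """Classify an uplink packet by destination: break out locally or send to the central core."""
    addr = ipaddress.ip_address(dst_ip)
    if any(addr in net for net in EDGE_PREFIXES):
        return "local-breakout"   # deliver to the edge application via the local UPF
    return "central-anchor"       # forward to the central UPF (PDU session anchor)


for dst in ("10.20.4.7", "192.0.2.15", "198.51.100.10"):
    print(dst, route_uplink(dst))
```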

Modern cloud platforms are envisioned to be open and disaggregated so that MNOs can rapidly onboard new applications from different Independent Software Vendors (ISVs), thus accelerating technology adoption. These modern cloud platforms are typically composed of Commercial Off-The-Shelf (COTS) hardware, multi-tenant Container-as-a-Service (CaaS) platforms, and multi-cloud Management and Orchestration solutions.

Similarly, modern applications are designed to be cloud-native to maximize service agility. By having microservices architectures and supporting containerized deployments, MNOs can rapidly adapt their services to meet changing market demands.

What contributes to Network Latency

The appeal of Network Edge or Multi-access Edge Computing (MEC) is commonly associated with lower latency or more privacy. While moving applications from beyond the CN to near the RAN does eliminate up to tens of milliseconds of delay, it is also important to understand that there are many other contributors to network latency which can be optimized. In fact, latency is added at every stage from the User Equipment (UE) to the application and back.

The RAN is typically the biggest contributor to network latency and jitter, the latter being a measure of fluctuations in delay. Accordingly, 3GPP has introduced many enhancements in 5G New Radio (5G NR) to reduce latency and jitter over the air interface. There are three primary categories where latency can be reduced:

- Transmission time: reduce symbol duration with higher subcarrier spacing or with mini-slots

- Waiting time: improve scheduling (optimize handshaking), simultaneous transmit/receive, and uplink/downlink switching with TDD

- Processing time: reduce UE and gNB processing and queuing with enhanced coding and modulation
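The effect of the first lever, shorter transmission time, follows from the 5G NR numerology in 3GPP TS 38.211: subcarrier spacing is 15 kHz × 2^μ, a 1 ms subframe holds 2^μ slots, and a slot carries 14 OFDM symbols with a normal cyclic prefix. The Python sketch below computes the slot duration and the duration of a 2-symbol mini-slot for μ = 0 to 3. Symbol durations are averaged over the slot, which glosses over the slightly longer cyclic prefix of some symbols.

```python
def nr_timing(mu: int, symbols: int = 14) -> dict:
    """Subcarrier spacing and transmission durations for NR numerology mu.

    Assumes a normal cyclic prefix: 14 OFDM symbols per slot and a 1 ms
    subframe containing 2**mu slots.
    """
    scs_khz = 15 * 2 ** mu
    slot_us = 1000 / 2 ** mu          # slot duration in microseconds
    symbol_us = slot_us / 14          # average symbol duration, cyclic prefix included
    return {
        "scs_khz": scs_khz,
        "slot_us": slot_us,
        "tx_us": symbols * symbol_us,  # full slot (14 symbols) or a mini-slot (fewer)
    }


for mu in range(4):
    full = nr_timing(mu)
    mini = nr_timing(mu, symbols=2)
    print(f"mu={mu}: SCS {full['scs_khz']} kHz, slot {full['slot_us']:.1f} us, "
          f"2-symbol mini-slot {mini['tx_us']:.1f} us")
```

For example, moving from 15 kHz to 120 kHz subcarrier spacing shrinks a slot from 1 ms to 0.125 ms, and a 2-symbol mini-slot at 120 kHz lasts under 18 µs.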

Transport latency is relatively simple to understand as it is mainly due to light propagation in optical fiber. The industry rule of thumb is 1 millisecond round trip latency for every 100 kilometers. The number of hops along the path also impacts latency as every transport equipment adds a bit of delay.
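The rule of thumb follows from the speed of light in glass: with a refractive index of roughly 1.5, light covers about 200 km per millisecond, so 100 km out and 100 km back takes about 1 ms. The Python sketch below encodes that rule and adds an optional per-hop equipment delay; the per-hop figure in the example is a hypothetical placeholder, since real values depend on the equipment.

```python
FIBER_KM_PER_MS = 200.0  # approximate one-way distance light travels in fiber per millisecond


def transport_rtt_ms(distance_km: float, hops: int = 0, per_hop_us: float = 0.0) -> float:
    """Round-trip transport latency: fiber propagation plus per-hop equipment delay."""
    propagation_ms = 2 * distance_km / FIBER_KM_PER_MS  # there and back
    equipment_ms = 2 * hops * per_hop_us / 1000.0       # each hop is crossed in both directions
    return propagation_ms + equipment_ms


print(transport_rtt_ms(100))                           # 1.0 (the rule of thumb)
print(transport_rtt_ms(300, hops=4, per_hop_us=25.0))  # 300 km plus four hypothetical 25 us hops
```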

Typically, the CN adds less than 1 millisecond of latency. The challenge for the CN is rather keeping latency low for mobile UEs by seamlessly changing anchors to the nearest edge UPF through a new procedure called ‘make before break’. Also, the UPF architecture and Gi/SGi services (e.g., Deep Packet Inspection, Network Address Translation, and Content Optimization) may add a few milliseconds to the overall latency, depending on whether these functions are integrated or independent.

Architectural and Business approaches for the Network Edge

The physical locations that host RAN and Network Edge functionalities are widely recognized to be some of the MNOs’ most valuable assets. Few other entities today have the real estate and associated infrastructure (e.g., power, fiber) to bring cloud capabilities this close to the end clients. Consequently, monetization of the Network Edge is an important component of most MNOs’ strategy for maximizing their investment in the mobile network and, specifically, in 5G. In almost all cases, the Network Edge monetization strategy includes making Network Edge available for Enterprise customers to use as an “Edge Cloud.” However, doing so involves making architectural and business model choices across several dimensions:

- Connectivity or Cloud: should the MNO offer a cloud service or just the connectivity to a cloud service provided by a third party (and potentially hosted at a third party’s site).

- aaS model: in principle, the full range of as-a-Service models is available to the MNO to offer at the network edge. This includes co-location services, Bare-Metal-as-a-Service, Infrastructure-as-a-Service (IaaS), Containers-as-a-Service (CaaS), and Platform and Software-as-a-Service (PaaS and SaaS). Going up this value chain (up being from co-lo to SaaS) allows the MNO to capture more of the value provided to the Enterprise. However, it also requires the MNO to take on significantly more responsibility and puts it in direct competition with well-established players in this space, e.g., the hyperscale cloud companies. The right mix of offerings (and it is invariably a mix) thus involves a complex set of technical and business case tradeoffs. The end result will be different for every MNO, and how each arrives there will also be unique.

- Management framework: our industry’s initial approach to exposing the Network Edge to enterprises involved a management framework tightly coupled to how the MNO manages its network functions (e.g., the ETSI MEC family of standards). However, this approach comes with several drawbacks from an Enterprise point of view. As a result, a loosely coupled approach, in which the Enterprise manages its Edge Cloud applications using typical cloud management solutions, appears to be gaining significant traction, with solutions such as Amazon’s Wavelength as an example. This approach, of course, has its own drawbacks, and managing the interplay between the two is an important consideration in Network Edge (and one that is intertwined with the selection of aaS model).

- Network-as-a-Service: a unique aspect of the Network Edge is the MNO’s ability to expose network information to applications, as well as to provide those applications with (highly curated) means of controlling the network. Whether and how this makes sense is again both a business case issue, for the MNO and the Enterprise, and a technical/architectural issue.

Certainly, the likely end state is a complex mixture of services and go-to-market models focused on the Enterprise (B2B) segment. The exposition of operational automation and the features of 5G designed to address this make it likely that this is a huge opportunity for MNOs. Navigating the complexities of this space requires a deep understanding of both what services the Enterprises are looking for and how they are looking to consume these. It also requires an architectural approach that can handle the variable mix of what is needed in a way that is highly scalable.

As the long-time leader in Enterprise IT services, Dell is uniquely positioned to address this space – stay tuned for more details in an upcoming blog!

Building the Network Edge

There are several factors to consider when moving workloads from central sites to edge locations. Limited space and power are at the top of the list. Because edge locations are far from the main cities and generally more exposed to the elements, they require a new class of denser, easier-to-service, and even ruggedized form factors. Thanks to the popularity of Open RAN and Enterprise Edge, there are already solutions in the market today that can also be used for Network Edge. Read more in the Edge blog series: Computing on the Edge | Dell Technologies Info Hub.

Higher deployment and operating costs are another major factor. The sheer number of edge locations, combined with their limited accessibility, makes them more expensive to build and maintain. The economics of the Network Edge therefore necessitate automation and pre-integration. Dell’s solution is a newly engineered cloud-native solution with automated deployment and lifecycle management at its core. More on this novel approach here: Dell Telecom MultiCloud Foundation | Dell USA.

Finally, running applications centrally costs less. Central sites have the advantage of pooling compute and sharing facilities such as power, connectivity, and cooling. It is therefore important to reduce overhead at the edge wherever possible, for example by opting for containerized rather than VM-based cloud platforms. Moreover, an open and disaggregated horizontal cloud platform not only allows multitenancy at edge locations, which significantly reduces overhead, but also enables application portability across the network to maximize efficiency.

The ideal situation is one where Open/Cloud RAN and Network Edge share sites, thus splitting several of the deployment and operations costs. Due to latency requirements, the Distributed Unit (DU) must be placed within 20 kilometers of the Radio Unit (RU). Latency requirements for the midhaul interface between the DU and the Central Unit (CU) are less stringent, so the CU can be placed roughly 80-100 kilometers from the DU. In addition, the Near-Real Time RAN Intelligent Controller (Near-RT RIC) and the related xApps must be placed within 10 ms RTT. This makes it possible to collocate Network Edge sites with the CU sites and the Near-RT RIC.
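These distance and latency limits can be captured as simple placement rules. The Python sketch below checks a candidate site layout against them, using the upper end of the 80-100 km range for the DU-CU distance. The data structure is a hypothetical illustration, and the 10 ms figure is treated as the RTT between the Near-RT RIC and the RAN nodes it controls, an interpretation the text leaves implicit.

```python
from dataclasses import dataclass

# Limits as stated in the text.
MAX_RU_DU_KM = 20.0     # fronthaul: DU within 20 km of the RU
MAX_DU_CU_KM = 100.0    # midhaul: upper end of the 80-100 km range
MAX_RIC_RTT_MS = 10.0   # Near-RT RIC and xApps within 10 ms RTT


@dataclass
class SiteLayout:
    ru_du_km: float
    du_cu_km: float
    ric_rtt_ms: float


def placement_issues(layout: SiteLayout) -> list[str]:
    """Describe every limit the layout exceeds (empty list if it fits)."""
    issues = []
    if layout.ru_du_km > MAX_RU_DU_KM:
        issues.append(f"RU-DU distance {layout.ru_du_km} km exceeds {MAX_RU_DU_KM} km")
    if layout.du_cu_km > MAX_DU_CU_KM:
        issues.append(f"DU-CU distance {layout.du_cu_km} km exceeds {MAX_DU_CU_KM} km")
    if layout.ric_rtt_ms > MAX_RIC_RTT_MS:
        issues.append(f"Near-RT RIC RTT {layout.ric_rtt_ms} ms exceeds {MAX_RIC_RTT_MS} ms")
    return issues


# A Network Edge site collocated with the CU and Near-RT RIC, 85 km from the DU.
print(placement_issues(SiteLayout(ru_du_km=12, du_cu_km=85, ric_rtt_ms=4)))  # []
```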

Future

Over the past few years, several MNOs have moved from having 2-3 national data centers (DCs) for their entire CN to deploying 5-10 regional DCs across which some network functions, such as the UPF, are distributed. One example of this is AT&T’s dozen “5G Edge Zones” introduced in major metropolitan areas: AT&T Launching a Dozen 5G “Edge Zones” Across the U.S. (att.com).

This approach already suffices for the majority of “low latency” use cases, and for smaller countries even the traditional 2-3 national DCs can offer sufficiently low transport latency. However, for critical use cases with more stringent latency requirements, where consistently very low latency is a must, moving the applications to Far Edge sites becomes a necessity, in tandem with 5G SA enhancements such as network slicing and an optimized air interface.

The challenge with consumer use cases such as cloud gaming is supporting the required service level (i.e., low latency) countrywide. Since enabling the network to support this requires a substantial initial investment, we are seeing the classic chicken-and-egg problem: independent software vendors opt not to develop these more demanding applications, while MNOs keep waiting for these “killer use cases” to justify the initial investment in the Network Edge. As a result, we expect geographically limited enterprise use cases to gain market traction first and serve as catalysts for initially limited Network Edge deployments.

For use cases where assured speeds and low latency are critical, end-to-end Network Slicing is essential. To adopt a new, more service-oriented approach, MNOs will need Network Edge and low-latency enhancements, together with Network Slicing, in their toolbox. For more on this approach and Network Slicing, please check out our previous blog To slice or not to slice | Dell Technologies Info Hub.

About the author: Tomi Varonen

Tomi Varonen is a Telecom Network Architect in Dell’s Telecom Systems Business Unit. He is based in Finland and works on Cloud, Core Network, and OSS&BSS customer cases in the EMEA region. Tomi has over 23 years of experience in the telecom sector in various technical and sales positions. He has wide expertise in end-to-end mobile networks and enjoys creating solutions for new technology areas. He is passionate about outdoor activities with family and friends, including skiing, golf, and bicycling.

About the author: Arthur Gerona

Arthur is a Principal Global Enterprise Architect at Dell Technologies. He is working on the Telecom Cloud and Core area for the Asia Pacific and Japan region. He has 19 years of experience in Telecommunications, holding various roles in delivery, technical sales, product management, and field CTO. When not working, Arthur likes to keep active and travel with his family.

About the author: Alex Reznik

Alex Reznik is a Global Principal Architect in Dell Technologies’ Telco Solutions Business organization. In this role, he is focused on helping Dell’s Telco and Enterprise partners navigate the complexities of Edge Cloud strategy and turning the potential of 5G Edge transformation into the reality of business outcomes. Alex is a recognized industry expert in the area of edge computing and a frequent speaker on the subject. He is a co-author of the book "Multi-Access Edge Computing in Action." From March 2017 through February 2021, Alex served as Chair of ETSI’s Multi-Access Edge Computing (MEC) ISG, the leading international standards group focused on enabling edge computing in access networks.

Prior to joining Dell, Alex was a Distinguished Technologist in HPE’s North American Telco organization. In this role, he was involved in various aspects of helping Tier 1 CSPs deploy state-of-the-art flexible infrastructure capable of delivering on the full promises of 5G. Prior to HPE, Alex was a Senior Principal Engineer/Senior Director at InterDigital, leading the company’s research and development activities in the area of wireless internet evolution. Since joining InterDigital in 1999, he has been involved in a wide range of projects, including leadership of 3G modem ASIC architecture, design of advanced wireless security systems, coordination of standards strategy in the cognitive networks space, development of advanced IP mobility and heterogeneous access technologies, and development of new content management techniques for the mobile edge.

Alex earned his B.S.E.E. Summa Cum Laude from The Cooper Union, S.M. in Electrical Engineering and Computer Science from the Massachusetts Institute of Technology, and Ph.D. in Electrical Engineering from Princeton University. He held a visiting faculty appointment at WINLAB, Rutgers University, where he collaborated on research in cognitive radio, wireless security, and future mobile Internet. He served as the Vice-Chair of the Services Working Group at the Small Cells Forum. Alex is an inventor on over 160 granted U.S. patents and has received numerous awards for innovation at InterDigital.