Red Hat OpenShift - Windows compute nodes

Wed, 06 Dec 2023 10:35:35 -0000

Red Hat® OpenShift® Container Platform is an industry-leading Kubernetes platform that enables a cloud-native development environment together with a cloud operations experience, giving you the ability to choose where you build, deploy, and run applications, all through a consistent interface. Powered by the open source-based OpenShift Kubernetes Engine, Red Hat OpenShift provides cluster management, platform services for managing workloads, application services for building cloud-native applications, and developer services for enhancing developer productivity.

Support for Windows containers

OpenShift Container Platform enables you to host and run Windows-based workloads on Windows compute nodes alongside the traditional Linux workloads that are hosted on Red Hat Enterprise Linux CoreOS (RHCOS) or Red Hat Enterprise Linux compute nodes. For more information, see Red Hat OpenShift support for Windows Containers.

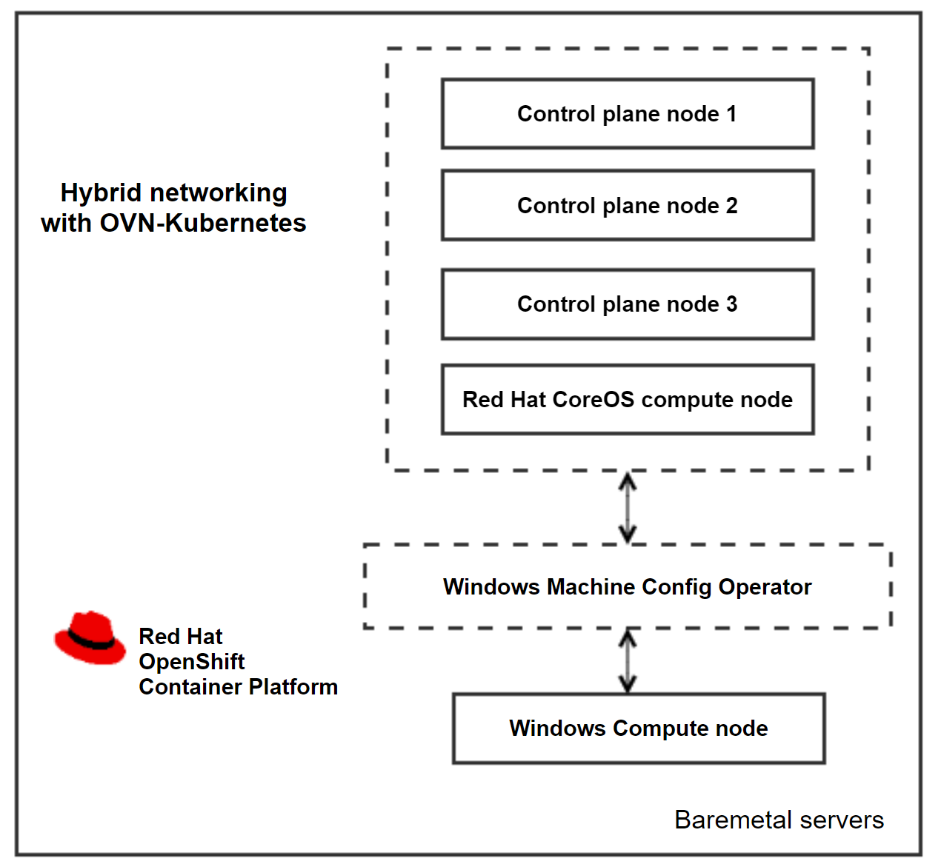

As a prerequisite for installing Windows workloads, the Windows Machine Config Operator (WMCO) must be installed on a cluster that is configured with hybrid networking using OVN-Kubernetes. The operator configures Windows compute nodes and orchestrates the process of deploying and managing Windows workloads on a cluster.

Open Virtual Network (OVN) is the only supported networking configuration for installing Windows compute nodes. OpenShift Container Platform uses the OVN-Kubernetes network plug-in as its default network provider. You can configure the OpenShift Networking OVN-Kubernetes network plug-in to enable Linux and Windows nodes to host Linux and Windows workloads respectively. For more information, see About the OVN-Kubernetes network plugin.
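As a rough illustration of how a Windows workload is steered to a Windows compute node, the following Pod manifest is a minimal sketch. The pod name, namespace, and command are hypothetical. The `os=Windows:NoSchedule` toleration assumes that the Windows nodes carry the taint described in the Red Hat WMCO documentation, and the container image tag is assumed to match the Windows Server version of the node.

```yaml
# Hypothetical example: a Windows container pinned to Windows compute nodes.
apiVersion: v1
kind: Pod
metadata:
  name: win-sample            # hypothetical name
  namespace: default          # hypothetical namespace
spec:
  # Schedule only on nodes that report a Windows operating system.
  nodeSelector:
    kubernetes.io/os: windows
  # Assumption: Windows nodes are tainted with os=Windows:NoSchedule.
  tolerations:
  - key: os
    value: Windows
    effect: NoSchedule
  containers:
  - name: servercore
    # Assumption: the tag must match the node's Windows Server release.
    image: mcr.microsoft.com/windows/servercore:ltsc2019
    command: ["powershell.exe", "-Command", "while ($true) { Start-Sleep -Seconds 3600 }"]
```

Linux workloads need no change: without the toleration, they are not scheduled on the tainted Windows nodes.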

Cluster architecture and components

Adding a Windows node

You need an existing cluster that was built using the IPI installation method or the Assisted Installer. For more information about deploying an OpenShift cluster on Dell bare-metal servers, see the Red Hat OpenShift Container Platform 4.12 on Dell Infrastructure Implementation Guide.

Create a custom manifest file to configure the Hybrid OVN-Kubernetes network during the cluster deployment by running the following commands:

    cat cluster-network-03-config.yml

    apiVersion: operator.openshift.io/v1
    kind: Network
    metadata:
      name: cluster
    spec:
      defaultNetwork:
        ovnKubernetesConfig:
          hybridOverlayConfig:
            hybridClusterNetwork:
            - cidr: 10.132.0.0/14
              hostPrefix: 23

To add the server to the cluster as a worker node, you need a bare-metal server with a Windows operating system. For the supported Windows versions, see Red Hat OpenShift 4.13 support for Windows Containers release notes.

- Open ports 22 and 10250 for SSH and for log collection on the Windows server.

- Create an administrator user. The administrator user’s private key is used in the secret as an authorized SSH key and to enable password-less authentication to the Windows server.

- Install the Windows Machine Config Operator on the cluster.

- In the openshift-windows-machine-config-operator namespace, create the secret from the administrator user’s private key.

- Specify the IPv4 or DNS address of the Windows instance and the administrator user in the configmap.
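The operator installation step in the list above can be sketched with the following manifests. This is an assumption-laden example modeled on the Operator Lifecycle Manager pattern in the Red Hat documentation: the `stable` channel, the `redhat-operators` catalog source, and automatic install plan approval are assumptions that should be checked against the target cluster.

```yaml
# Sketch: install the WMCO through Operator Lifecycle Manager.
apiVersion: v1
kind: Namespace
metadata:
  name: openshift-windows-machine-config-operator
  labels:
    openshift.io/cluster-monitoring: "true"
---
apiVersion: operators.coreos.com/v1
kind: OperatorGroup
metadata:
  name: windows-machine-config-operator
  namespace: openshift-windows-machine-config-operator
spec:
  # Limit the operator to its own namespace.
  targetNamespaces:
  - openshift-windows-machine-config-operator
---
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: windows-machine-config-operator
  namespace: openshift-windows-machine-config-operator
spec:
  channel: stable                      # assumed channel name
  installPlanApproval: Automatic       # assumed approval strategy
  name: windows-machine-config-operator
  source: redhat-operators             # assumed catalog source
  sourceNamespace: openshift-marketplace
```

The same result can be achieved from OperatorHub in the web console. The manifests are shown only because they make the required namespace explicit.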

When it starts, the WMCO looks for this secret and creates another user data secret with the data that is required to interact with the Windows server using the SSH protocol. After the SSH connection is established, the operator starts processing the Windows servers that are listed in the configmap and begins to transfer files and configure the nodes. The CSRs that are generated are auto-approved, and the Windows instance is added to the cluster.
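The secret and configmap that the operator reads might look like the following sketch. The object names `cloud-private-key` and `windows-instances` and the `private-key.pem` key follow the Red Hat documentation for the WMCO as best understood here and should be verified for the installed release. The IP address, the user name, and the key material are placeholders.

```yaml
# Sketch: SSH private key that the WMCO uses to reach the Windows server.
apiVersion: v1
kind: Secret
metadata:
  name: cloud-private-key                  # assumed name expected by the WMCO
  namespace: openshift-windows-machine-config-operator
type: Opaque
stringData:
  private-key.pem: |
    -----BEGIN OPENSSH PRIVATE KEY-----
    <placeholder: private key of the administrator user>
    -----END OPENSSH PRIVATE KEY-----
---
# Sketch: list of Windows instances to add as compute nodes.
apiVersion: v1
kind: ConfigMap
metadata:
  name: windows-instances                  # assumed name expected by the WMCO
  namespace: openshift-windows-machine-config-operator
data:
  # Key: IPv4 or DNS address of the instance (placeholder address).
  # Value: the administrator user that owns the authorized SSH key.
  192.0.2.10: |-
    username=Administrator
```

Each additional Windows server would be one more entry under `data`, keyed by its address.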

Environment overview

OpenShift Container Platform was hosted on Dell PowerEdge R650 servers with hybrid networking enabled using OVN-Kubernetes. The Dell-validated environment consisted of three compute nodes. The validation team added a Windows instance to the cluster as a fourth node. The following table shows the cluster version information:

| Component | Version |
| --- | --- |
| OpenShift cluster version | 4.13.21 |
| Kubernetes version | 1.26.9 |
| WMCO version | 8.1.0+0.1699557880.p |
| Windows instance version | Windows Server 2019 (Version 1809) |

References

Configuring hybrid networking - OVN-Kubernetes network plugin

Related Blog Posts

Dell ObjectScale on Red Hat OpenShift

Tue, 10 Oct 2023 09:55:24 -0000

Introduction

ObjectScale is a software-defined object storage offering from Dell Technologies. It is designed to deliver enterprise-grade, high-performance object storage in a Kubernetes-native environment.

ObjectScale has a layered architecture, with every function in the system built as an independent layer, making the functions horizontally scalable across all nodes and enabling high availability. The S3-compatible ObjectScale software forms the underlying cloud storage service, providing protection, geo-replication, and data access.

Red Hat® OpenShift® Container Platform is a Kubernetes distribution that provides production-oriented container and workload automation. Its declarative deployment approach, dynamic scaling, and self-healing capabilities make OpenShift a suitable platform to host ObjectScale.

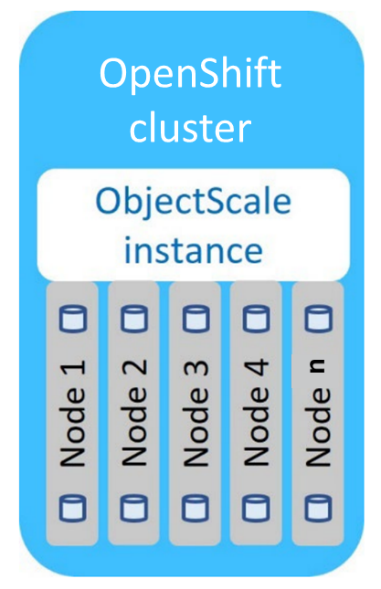

The following diagram shows the ObjectScale topology:

ObjectScale topology

Deployment overview

The deployment of ObjectScale consists of three steps:

- Installing the bare-metal CSI drivers

- Installing the ObjectScale instance

- Creating an ObjectStore

The bare-metal CSI drivers deployed as part of ObjectScale provide enhanced performance and serviceability. The ObjectScale instance provides an easy-to-use web portal for its configuration and management. Resources such as ObjectStores, user accounts, and buckets must be created before the storage is ready to be consumed.

ObjectStores are independent storage systems with an individualized life cycle. One or more ObjectStores are deployed by each ObjectScale instance. ObjectStores are created, updated, and deleted independently from all other ObjectStores, and managed by the shared ObjectScale instance. Cluster resources such as storage, CPU, and RAM are defined for each ObjectStore based on workload demand inputs that are specified at ObjectStore creation. Resources that are reserved for an ObjectStore at creation may be adjusted at any time.

The minimum requirements for each OpenShift compute node are:

- 4 physical CPU cores

- 1 x 960 GB (or larger) unused SSD

- 128 GB RAM

- 200 GB of free space in /var/lib/kubelet

- 5 unused disks per node of identical storage class (minimum for a single object store), preferably the same size

Dell PowerEdge R750 and R7525 servers hosting Red Hat OpenShift 4.8 are validated for ObjectScale deployment. The validated environment consisted of three compute nodes, each having 12 x 800 GB SSDs. The OpenShift NodePort service was used to access the ObjectScale UI.

Use cases

ObjectScale supports several modern and traditional use cases. Common use cases on OpenShift Container Platform are:

- ObjectScale for OpenShift internal image registry

- ObjectScale for OpenShift Quay

- ObjectScale for storing backups

- ObjectScale for building a data lake

- ObjectScale for Splunk SmartStore
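To make the first use case concrete, the following is a minimal sketch of how the OpenShift internal image registry could be pointed at an S3-compatible endpoint such as ObjectScale. The bucket name, region, endpoint URL, and credentials are hypothetical placeholders. The secret name and key names follow the OpenShift image registry documentation for user-supplied S3 credentials as best understood here, and the result was not part of the validation described in this post.

```yaml
# Sketch: S3 credentials for the image registry operator (placeholder values).
apiVersion: v1
kind: Secret
metadata:
  name: image-registry-private-configuration-user
  namespace: openshift-image-registry
stringData:
  REGISTRY_STORAGE_S3_ACCESSKEY: "<access-key>"
  REGISTRY_STORAGE_S3_SECRETKEY: "<secret-key>"
---
# Sketch: registry storage that targets an ObjectScale bucket.
apiVersion: imageregistry.operator.openshift.io/v1
kind: Config
metadata:
  name: cluster
spec:
  managementState: Managed
  storage:
    s3:
      bucket: registry-bucket                            # hypothetical bucket
      region: us-east-1                                  # placeholder region
      regionEndpoint: https://objectscale.example.com    # hypothetical endpoint
```

The bucket and the object user that owns the access key would need to exist in the ObjectStore before the registry configuration is applied.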

Conclusion

The ease of deployment, scalability, fault tolerance, and security capabilities of OpenShift make it a preferred choice for hosting ObjectScale to fulfill object storage demands. ObjectStores running inside OpenShift can be co-located and managed with the applications they support. This helps reduce CapEx and deployment costs while improving time-to-market.

Authors

Indira Kannamedi (indira_kannamedi@dell.com)

Nitesh Mehra (nitesh_mehra@dell.com)

Let Robin Systems Cloud Native Be Your Containerized AI-as-a-Service Platform on Dell PE Servers

Fri, 06 Aug 2021 21:31:26 -0000

Robin Systems has a most excellent platform that is well suited to simultaneously running a mix of workloads in a containerized environment. Containers offer isolation of varied software stacks. Kubernetes is the control plane that deploys the workloads across nodes and allows for scale-out, adaptive processing. Robin adds customizable templates and life cycle management to the mix to create a killer platform.

AI workloads can all run simultaneously in Robin. That includes machine learning with the likes of scikit-learn with Dask, H2O.ai, Spark MLlib, and PySpark, along with deep learning with TensorFlow, PyTorch, MXNet, Keras, and Caffe2. Nodes are identified by their resources during provisioning: cores, memory, GPUs, and storage.

Cultivated data pipelines can be constructed with a mix of components. Consider a use case that ingests from Kafka, stores to Cassandra, and then runs Spark MLlib to find loans submitted last week that will be denied. All that can be automated with Robin.

The as-a-service aspect for things like MLOps and AutoML can be implemented with a combination of Robin capabilities and other software to deliver a true AI-as-a-Service experience.

The nodes that run these workloads can support disaggregated compute and storage. A sample configuration might combine Dell PowerEdge C6520s for compute and R750s for storage. The compute servers are very dense, running four server hosts in 2U, and offer a full range of Intel Ice Lake processors. For storage nodes, the R750s can have onboard NVMe drives or SSDs (up to 28). For the OS image, a hot-swappable M.2 BOSS card with self-contained RAID 1 can be used for Linux with all 15G servers.