IIoT Analytics Design: How important is MOM (message-oriented middleware)?

Tue, 08 Dec 2020 17:45:45 -0000


Originally published on Aug 6, 2018 1:17:46 PM

Artificial intelligence (AI) is transforming the way businesses compete in today’s marketplace. Whether it’s improving business intelligence, streamlining supply chain or operational efficiencies, or creating new products, services, or capabilities for customers, AI should be a strategic component of any company’s digital transformation.

Deep neural networks have demonstrated astonishing abilities to identify objects, detect fraudulent behaviors, predict trends, recommend products, enable enhanced customer support through chatbots, convert voice to text and translate one language to another, and produce a whole host of other benefits for companies and researchers. They can categorize and summarize images, text, and audio recordings with human-level capability, but to do so they first need to be trained.

Deep learning, the process of training a neural network, can take days, weeks, or months, and effort and expertise are required to produce a neural network of sufficient quality to base your business or research decisions on its recommendations. Most successful production systems go through many iterations of training, tuning, and testing during development. Distributed deep learning can speed up this process, reducing the total time to tune and test so that your data science team can develop the right model faster, but it requires a method for aggregating knowledge between systems.

There are several evolving methods for efficiently implementing distributed deep learning, and the way in which you distribute the training of neural networks depends on your technology environment. Whether your compute environment is container native, high performance computing (HPC), or Hadoop/Spark clusters for Big Data analytics, your time to insight can be accelerated by using distributed deep learning. In this article we are going to explain and compare systems that use a centralized or replicated parameter server approach, a peer-to-peer approach, and finally a hybrid of these two developed specifically for Hadoop distributed big data environments.

Distributed Deep Learning in Container Native Environments

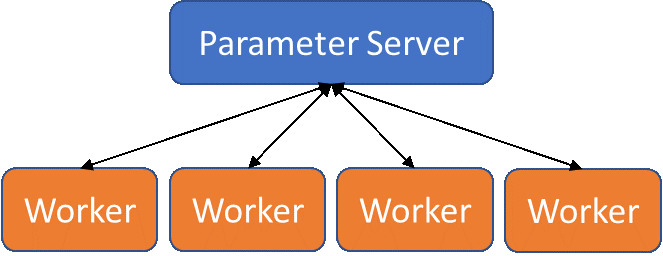

Container-native environments (e.g., Kubernetes, Docker Swarm, OpenShift) have become the standard for many DevOps teams, where rapid, in-production software updates are the norm and bursts of computation may be shifted to public clouds. Most deep learning frameworks support distributed deep learning for these types of environments using a parameter server-based model that allows multiple processes to look at training data simultaneously, while aggregating knowledge into a single, central model.

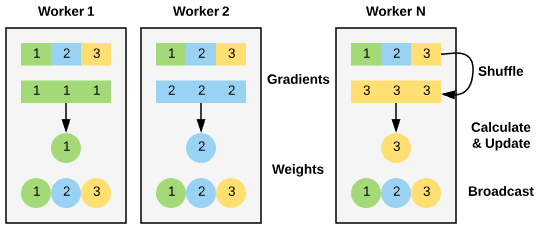

Parameter server-based training starts with specifying the number of workers (processes that will look at training data) and parameter servers (processes that will handle the aggregation of error reduction information, backpropagate those adjustments, and update the workers). Additional parameter servers can act as replicas for improved load balancing.

Parameter server model for distributed deep learning


Worker processes are given a mini-batch of training data to test and evaluate and, upon completing that mini-batch, report the differences (gradients) between produced and expected output back to the parameter server(s). The parameter server(s) then update the network and transmit copies of the updated model back to the workers to use in the next round.
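The worker/parameter-server exchange described above can be sketched in a few lines of NumPy. This is a minimal single-process illustration, not the API of any particular framework: the `ParameterServer` class, the `worker_gradient` helper, the linear-regression task, and all hyperparameters are assumptions chosen to keep the example small.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic, noise-free linear-regression data (an assumption for illustration).
true_w = np.array([2.0, -3.0, 0.5])
X = rng.normal(size=(512, 3))
y = X @ true_w


class ParameterServer:
    """Hypothetical parameter server: holds the central model and applies updates."""

    def __init__(self, dim, lr):
        self.weights = np.zeros(dim)
        self.lr = lr

    def pull(self):
        # Workers fetch a copy of the current model.
        return self.weights.copy()

    def push(self, gradients):
        # Average the gradients reported by all workers, then update the model.
        self.weights -= self.lr * np.mean(gradients, axis=0)


def worker_gradient(weights, X_batch, y_batch):
    """Gradient of mean squared error on one mini-batch."""
    error = X_batch @ weights - y_batch
    return 2.0 * X_batch.T @ error / len(y_batch)


def train(num_workers=4, batch_size=32, steps=200, lr=0.1):
    server = ParameterServer(dim=X.shape[1], lr=lr)
    for _ in range(steps):
        weights = server.pull()
        grads = []
        for _ in range(num_workers):
            # Each worker evaluates its own mini-batch against the same model copy.
            idx = rng.choice(len(X), size=batch_size, replace=False)
            grads.append(worker_gradient(weights, X[idx], y[idx]))
        server.push(grads)
    return server.weights


trained = train()
```

In a real deployment the workers and servers would be separate processes or containers exchanging tensors over the network; the loop over workers here only stands in for that parallelism.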

This model is ideal for container native environments, where parameter server processes and worker processes can be naturally separated. Orchestration systems, such as Kubernetes, allow neural network models to be trained in container native environments using multiple hardware resources to improve training time. Additionally, many deep learning frameworks support parameter server-based distributed training, such as TensorFlow, PyTorch, Caffe2, and Cognitive Toolkit.

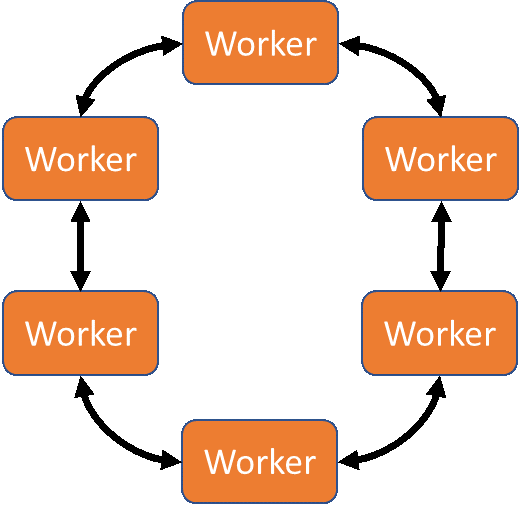

Distributed Deep Learning in HPC Environments

High performance computing (HPC) environments are generally built to support the execution of multi-node applications that are developed and executed using the single program, multiple data (SPMD) methodology, where data exchange is performed over high-bandwidth, low-latency networks, such as Mellanox InfiniBand and Intel Omni-Path Architecture (OPA). These multi-node codes take advantage of these networks through the Message Passing Interface (MPI), which abstracts communications into send/receive and collective constructs.

Deep learning can be distributed with MPI using a communication pattern called Ring-AllReduce. In Ring-AllReduce each process is identical, unlike in the parameter-server model where processes are either workers or servers. The Horovod package by Uber (available for TensorFlow, Keras, and PyTorch) and the mpi_collectives contributions from Baidu (available in TensorFlow) use MPI Ring-AllReduce to exchange loss and gradient information between replicas of the neural network being trained. This peer-based approach means that all nodes in the solution are working to train the network, rather than some nodes acting solely as aggregators/distributors (as in the parameter server model). This can potentially lead to faster model convergence.
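To make the communication pattern concrete, the following is a single-process simulation of Ring-AllReduce written with NumPy. It is a sketch for illustration only and does not use Horovod or MPI; the function name `ring_allreduce` and the toy gradient vectors are assumptions. Each simulated worker splits its gradient into as many chunks as there are workers, passes partial sums around the ring (scatter-reduce), and then circulates the completed chunks (all-gather).

```python
import numpy as np


def ring_allreduce(worker_grads):
    """Simulate a summing Ring-AllReduce across in-process 'workers'.

    worker_grads: list of equal-length 1-D arrays, one per worker.
    Returns a list in which every worker holds the element-wise sum.
    """
    n = len(worker_grads)
    # Each worker splits its own gradient vector into n chunks.
    chunks = [np.array_split(np.array(g, dtype=float), n) for g in worker_grads]

    # Phase 1: scatter-reduce. After n - 1 steps, worker i holds the
    # complete sum for chunk (i + 1) % n.
    for step in range(n - 1):
        # Snapshot outgoing chunks so all sends in a step happen "at once".
        sends = [(i, (i - step) % n, chunks[i][(i - step) % n].copy())
                 for i in range(n)]
        for sender, c, payload in sends:
            receiver = (sender + 1) % n
            chunks[receiver][c] = chunks[receiver][c] + payload

    # Phase 2: all-gather. Completed chunks travel around the ring and
    # overwrite the partial values held by each neighbor.
    for step in range(n - 1):
        sends = [(i, (i + 1 - step) % n, chunks[i][(i + 1 - step) % n].copy())
                 for i in range(n)]
        for sender, c, payload in sends:
            receiver = (sender + 1) % n
            chunks[receiver][c] = payload

    return [np.concatenate(c) for c in chunks]


# Four simulated workers, each with a different toy gradient vector.
grads = [np.arange(8, dtype=float) * (rank + 1) for rank in range(4)]
reduced = ring_allreduce(grads)
```

Every worker sends and receives only one chunk per step, so the data each worker transfers stays roughly constant as workers are added, which is one reason the pattern suits high-bandwidth HPC interconnects.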

Ring-AllReduce model for distributed deep learning


The Dell EMC Ready Solutions for AI, Deep Learning with NVIDIA allows users to take advantage of high-bandwidth Mellanox InfiniBand EDR networking, fast Dell EMC Isilon storage, accelerated compute with NVIDIA V100 GPUs, and optimized TensorFlow, Keras, or PyTorch with Horovod frameworks to help produce insights faster.

Distributed Deep Learning in Hadoop/Spark Environments

Hadoop and other Big Data platforms achieve extremely high performance for distributed processing but are not designed to support long-running, stateful applications. Several approaches exist for executing distributed training under Apache Spark. Yahoo developed TensorFlowOnSpark, accomplishing the goal with an architecture that leveraged Spark for scheduling TensorFlow operations and RDMA for direct tensor communication between servers.

BigDL is a distributed deep learning library for Apache Spark. Unlike Yahoo’s TensorFlowOnSpark, BigDL not only enables distributed training but is also designed from the ground up to work on Big Data systems. To enable efficient distributed training, BigDL takes a data-parallel approach with synchronous mini-batch SGD (stochastic gradient descent). Training data is partitioned into RDD samples and distributed to each worker. Model training is an iterative process that first computes gradients locally on each worker, using locally stored partitions of the training data and the model to perform in-memory transformations. Then an AllReduce function schedules workers with tasks to calculate and update weights. Finally, a broadcast syncs the distributed copies of the model with the updated weights.
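The three steps of each iteration (local gradients, AllReduce-style weight update, broadcast) can be sketched with plain NumPy. This is an illustration under stated assumptions and does not use the BigDL or Spark APIs: the in-memory `partitions` list stands in for RDD partitions, the slicing of gradients across tasks is one assumed way to realize the AllReduce step, and the regression task and hyperparameters are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic, noise-free regression data (an assumption for illustration).
true_w = np.array([1.0, -2.0, 3.0, 0.5])
X = rng.normal(size=(400, 4))
y = X @ true_w

NUM_WORKERS = 4
# Stand-in for RDD partitions: each worker keeps its own slice of the data.
partitions = list(zip(np.array_split(X, NUM_WORKERS),
                      np.array_split(y, NUM_WORKERS)))


def local_gradient(weights, X_part, y_part, batch_size=25):
    """Mean-squared-error gradient on a mini-batch drawn from one partition."""
    idx = rng.choice(len(X_part), size=batch_size, replace=False)
    error = X_part[idx] @ weights - y_part[idx]
    return 2.0 * X_part[idx].T @ error / batch_size


def train(steps=300, lr=0.1):
    weights = np.zeros(X.shape[1])  # model copy shared by all workers
    for _ in range(steps):
        # 1. Every worker computes a gradient from its local partition.
        grads = [local_gradient(weights, Xp, yp) for Xp, yp in partitions]

        # 2. AllReduce-style update: task k averages slice k of every
        #    worker's gradient and updates the matching slice of the weights.
        grad_slices = [np.array_split(g, NUM_WORKERS) for g in grads]
        weight_slices = np.array_split(weights, NUM_WORKERS)
        updated = []
        for k in range(NUM_WORKERS):
            mean_slice = np.mean([gs[k] for gs in grad_slices], axis=0)
            updated.append(weight_slices[k] - lr * mean_slice)

        # 3. Broadcast: the reassembled weights become the model every
        #    worker uses in the next iteration.
        weights = np.concatenate(updated)
    return weights


trained = train()
```

Because every worker waits for the broadcast before starting the next mini-batch, all model copies stay identical, which is what makes the scheme synchronous.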

BigDL implementation of AllReduce functionality


The Dell EMC Ready Solutions for AI, Machine Learning with Hadoop is configured to allow users to take advantage of the power of distributed deep learning with Intel BigDL and Apache Spark. It supports loading models and weights from other frameworks such as TensorFlow, Caffe, and Torch to then be leveraged for training or inferencing. BigDL is a great way for users to quickly begin training neural networks using Apache Spark, widely recognized for how simple it makes data processing.

One more note on Hadoop and Spark environments: The Intel team working on BigDL has built and compiled high-level pipeline APIs, built-in deep learning models, and reference use cases into the Intel Analytics Zoo library. Analytics Zoo is based on BigDL but makes it even easier to use through these high-level pipeline APIs, which are designed to work with Spark DataFrames, and through built-in models for tasks such as object detection and image classification.

Conclusion

Regardless of whether your preferred server infrastructure is container native, HPC clusters, or Hadoop/Spark-enabled data lakes, distributed deep learning can help your data science team develop neural network models faster. Our Dell EMC Ready Solutions for Artificial Intelligence can work in any of these environments to help jumpstart your business’s AI journey. For more information on the Dell EMC Ready Solutions for Artificial Intelligence, go to dellemc.com/readyforai.

Lucas A. Wilson, Ph.D. is the Chief Data Scientist in Dell EMC's HPC & AI Innovation Lab. (Twitter: @lucasawilson)

Michael Bennett is a Senior Principal Engineer at Dell EMC working on Ready Solutions.

Related Blog Posts

Navigating the modern data landscape: the need for an all-in-one solution

Mon, 18 Mar 2024 19:56:59 -0000


There are two revolutions brewing inside every enterprise. We are all very familiar with the first one - the frenzied rush to expand an organization's AI capabilities, which leads to an exponential growth in data creation, a rise in availability of high-performance computing systems with multi-threaded GPUs, and the rapid advancement of AI models. The situation creates a perfect storm that is reshaping the way enterprises operate. Then, there is a second revolution that makes the first one a reality – the ability to harness this awesome power and gain a competitive advantage to drive innovation. Enterprises are racing towards a modern data architecture that seeks to bring order to their chaotic data environment.

The Need For An All-In-One Solution

Data platforms are constantly evolving. Yet despite a plethora of options such as data lakes, data warehouses, cloud data warehouses, and even cloud data lakehouses, enterprises are still struggling. This is because the choices available today are suboptimal.

Cloud-native solutions offer simplicity and scalability, but migrating all data to the cloud can be a daunting task and can end up being significantly more expensive over the long term. Moreover, the potential loss of control over proprietary data, particularly in the realm of AI, is a major cause for concern. On the other hand, traditional on-premises solutions require significantly more expertise and resources to build and maintain. Many organizations simply lack the skills and capabilities needed to construct a robust data platform in-house.

A customer once told me – “We’ve heard from so many vendors but ultimately there is no easy button for us.”

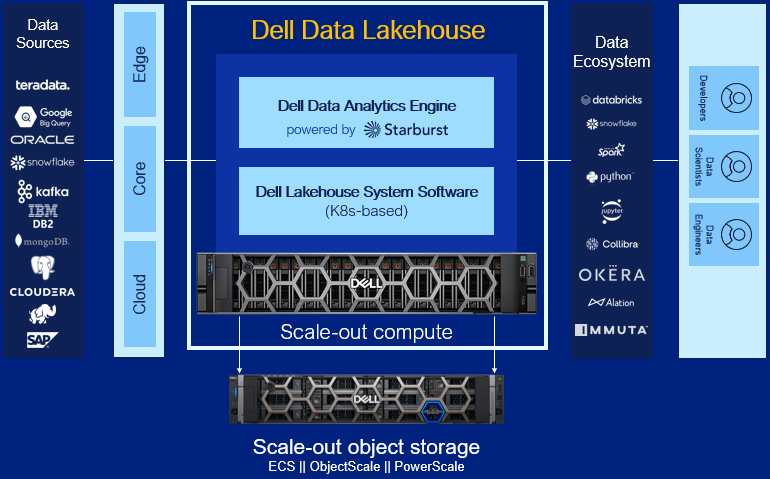

When Dell Technologies set out to build that easy button, we started with what enterprises needed most: infrastructure, software, and services, all seamlessly integrated. We created a tailor-made solution with right-sized compute and a highly performant query engine that is pre-integrated and pre-optimized to streamline IT operations. We incorporated built-in enterprise-grade security that can also integrate with third-party security tools. To enable rapid support, we staffed a bench of experts, offering end-to-end maintenance and deployment services. We also knew the solution needed to be future-proof, not only anticipating future innovations but also accommodating the diverse needs of users today. To support this idea, we chose open data formats, which means an organization’s data is no longer locked in to a proprietary format or vendor. To make the transition easier, the solution uses built-in enterprise-ready connectors that ensure business continuity. Ultimately, our goal was to deliver an experience that is easy to install, easy to use, easy to manage, easy to scale, and easy to future-proof.

Dell Data Lakehouse’s Core Capabilities

Let’s dig into each component of the solution.

- Data Analytics Engine, powered by Starburst: A high-performance distributed SQL query engine, built on Starburst and based on Trino, which can run fast analytic queries against data lakes, lakehouses, and distributed data sources at internet scale. It integrates global security with fine-grained access controls, supports ad hoc and long-running ELT workloads, and is a gateway to building high-quality data products and powering AI and analytics workloads. Dell’s Data Analytics Engine also includes exclusive features that help dramatically improve performance when querying data lakes. Stay tuned for more info!

- Data Lakehouse System Software: This new system software is the central nervous system of the Dell Data Lakehouse. It simplifies lifecycle management of the entire stack, drives down IT OpEx with pre-built automation and integrated user management, provides visibility into the cluster health and ensures high availability, enables easy upgrades and patches and lets admins control all aspects of the cluster from one convenient control center. Based on Kubernetes, it’s what converts a data lakehouse into an easy button for enterprises of all sizes.

- Scale-out Lakehouse Compute: Purpose-built Dell compute and networking hardware, matched to compute-intensive data lakehouse workloads, comes pre-integrated into the solution. Scale compute independently of storage by adding more compute nodes as needs grow.

- Scale-out Object Storage: Dell ECS, ObjectScale and PowerScale deliver cyber-secure, multi-protocol, resilient and scale-out storage for storing and processing massive amounts of data. Native support for Delta Lake and Iceberg ensures read / write consistency within and across sites for handling concurrent, atomic transactions.

- Dell Services: Accelerate AI outcomes with help at every stage from trusted experts. Align a winning strategy, validate data sets, quickly implement your data platform and maintain secure, optimized operations.

- ProSupport: Comprehensive, enterprise-class support on the entire Dell Data Lakehouse stack from hardware to software delivered by highly trained experts around the clock and around the globe.

- ProDeploy: Expert delivery and configuration assure that you are getting the most from the Dell Data Lakehouse on day one. With 35 years of experience building best-in-class deployment practices and tools, backed by elite professionals, we can deploy 3x faster¹ than in-house administrators.

- Advisory Services Subscription for Data Analytics Engine: Receive a pro-active, dedicated expert to maximize value of your Dell Data Analytics Engine environment, guiding your team through design and rollout of new use cases to optimize and scale your environment.

- Accelerator Services for Dell Data Lakehouse: Fast track ROI with guided implementation of the Dell Data Lakehouse platform to accelerate AI and data analytics.

Learn More

With the combination of these capabilities, Dell continues to innovate alongside our customers to help them exceed their goals in the face of data challenges. We aim to help our customers take advantage of the AI revolution and this rapid change in the market, so that they can harness the power of their data, gain a competitive advantage, and drive innovation. Enterprises are racing towards a modern data architecture, and it is critical that they don’t get stuck at the starting line.

For detailed information on this exciting product, refer to our technical guide. For other information, visit Dell.com/datamanagement.

Source

1 Based on a May 2023 Principled Technologies study “Using Dell ProDeploy Plus Infrastructure can improve deployment times for Dell Technology”

AI and Model Development Performance

Thu, 31 Aug 2023 20:47:58 -0000


There has been a tremendous surge of information about artificial intelligence (AI), and generative AI (GenAI) has taken center stage as a key use case. Companies are looking to learn more about how to build architectures to successfully run AI infrastructures. In most cases, creating a GenAI solution involves fine-tuning a pretrained foundation model and deploying it as an inference service. Dell recently published a design guide, Generative AI in the Enterprise – Inferencing, that provides an outline of the overall process.

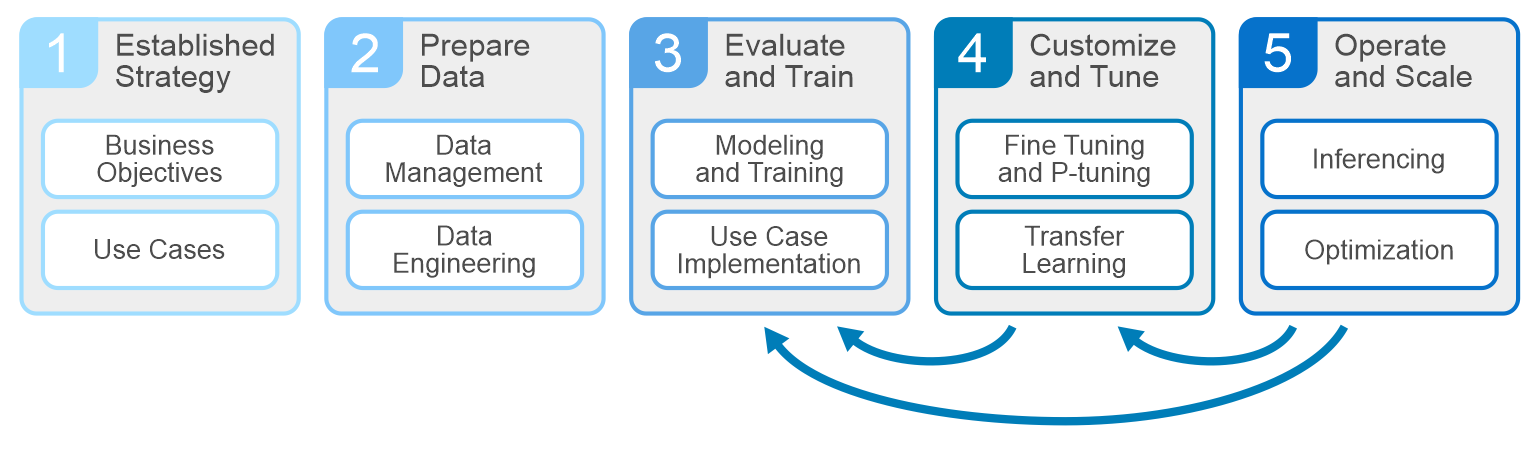

All AI projects should start with understanding the business objectives and key performance indicators. Planning, data prep, and training make up the other phases of the cycle. At the core of the development are the systems that drive these phases – servers, GPUs, storage, and networking infrastructures. Dell is well equipped to deliver everything an enterprise needs to build, develop, and maintain analytic models that serve business needs.

GPUs and accelerators have become common within AI infrastructures. They pull in data and train or fine-tune models within the computational capabilities of the GPU. As GPUs have evolved, so has their ability to handle larger models and parallel development cycles. This has left a lot of us wondering: how do we build an architecture that will support the model development our business needs? It helps to understand a few parameters.

Defining business objectives and use cases will help shape your architecture requirements.

- The size and location of the training data set

- Model size in number of parameters and type of model being trained/fine-tuned

- Training parallelism and time to complete the training/fine-tuning.

Answering these questions helps determine how many GPUs are needed to train/fine-tune the model. Consider two main factors in GPU sizing. First is the amount of GPU memory needed to store model parameters and optimizer state. Second is the number of floating-point operations (FLOPs) needed to execute the model. Both generally scale with model size. Large models often exceed the resources of a single GPU and require spreading a single model over multiple GPUs.
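The two sizing factors can be turned into a back-of-the-envelope calculation. The sketch below is not a Dell sizing tool; it rests on commonly used rules of thumb that are assumptions here: about 16 bytes of GPU memory per parameter for mixed-precision training with the Adam optimizer, a hypothetical 20% overhead for activations and buffers, about 6 FLOPs per parameter per training token for dense transformer models, and a GPU with 80 GB of memory. Substitute figures that match your own model and hardware.

```python
import math


def estimate_training_gpus(num_params, gpu_memory_gb,
                           bytes_per_param=16, activation_overhead=1.2):
    """Rough lower bound on GPUs needed just to hold training state.

    bytes_per_param=16 assumes mixed-precision Adam:
      2 (fp16 weights) + 2 (fp16 gradients)
      + 12 (fp32 master weights, momentum, variance).
    activation_overhead is a hypothetical multiplier for activations/buffers.
    """
    state_gb = num_params * bytes_per_param / 1e9
    total_gb = state_gb * activation_overhead
    return state_gb, math.ceil(total_gb / gpu_memory_gb)


def estimate_training_flops(num_params, num_tokens):
    """Approximate training compute for a dense transformer (~6 FLOPs/param/token)."""
    return 6 * num_params * num_tokens


# Hypothetical example: a 7-billion-parameter model on 80 GB GPUs,
# trained on one trillion tokens.
state_gb, gpus = estimate_training_gpus(num_params=7e9, gpu_memory_gb=80)
flops = estimate_training_flops(num_params=7e9, num_tokens=1e12)
print(f"model + optimizer state: {state_gb:.0f} GB, minimum GPUs: {gpus}")
print(f"approximate training compute: {flops:.2e} FLOPs")
```

The memory estimate gives a floor on GPU count; the FLOPs estimate, divided by the sustained throughput of the chosen GPUs, indicates how many more are needed to finish within the target training time.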

Estimating the number of GPUs needed to train/fine-tune the model helps determine the server technologies to choose. When sizing servers, it’s important to balance the right GPU density and interconnect, power consumption, PCI bus technology, external port capacity, memory, and CPU. Dell PowerEdge servers include a variety of options for GPU types and density. PowerEdge XE servers can host up to 8 NVIDIA H100 GPUs in a single server (see GenAI on PowerEdge XE9680), as well as the latest technologies, including NVLink, NVIDIA GPUDirect, PCIe 5.0, and NVMe disks. PowerEdge mainstream servers range from two to four GPU configurations, offering a variety of GPUs from different manufacturers. PowerEdge servers provide outstanding performance for all phases of model development. Visit Dell.com for more on PowerEdge Servers.

Now that we understand how many GPUs are needed and the servers to host them, it’s time to tackle storage. At a minimum, the storage should have the capacity to host the training data set, the checkpoints written during model training, and any other data that relates to the pruning/preparing phase. The storage also needs to deliver data at the rate at which the GPUs request it. That delivery rate is multiplied by model parallelism, or the number of models being trained in parallel, and therefore by the number of GPUs requesting data concurrently. Ideally, every GPU runs at 90% utilization or better to maximize our investment, so a storage system that supports high concurrency is well suited to these workloads.
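As a rough illustration of how the delivery rate multiplies with the number of GPUs, the following sketch computes the aggregate read throughput and the time to write a checkpoint. The function names and all input figures (per-GPU sample rate, average sample size, checkpoint size, write bandwidth) are hypothetical placeholders, not measured Dell results.

```python
import math


def required_read_gb_per_s(num_gpus, samples_per_s_per_gpu, avg_sample_bytes):
    """Aggregate read rate (GB/s) the storage must sustain to keep all GPUs fed."""
    return num_gpus * samples_per_s_per_gpu * avg_sample_bytes / 1e9


def checkpoint_write_seconds(checkpoint_gb, write_gb_per_s):
    """Time to write one checkpoint back to storage at a given write rate."""
    return checkpoint_gb / write_gb_per_s


# Hypothetical image-training job: 8 GPUs, each consuming 3,000 images/s
# of roughly 110 KB apiece.
read_rate = required_read_gb_per_s(num_gpus=8,
                                   samples_per_s_per_gpu=3000,
                                   avg_sample_bytes=110_000)

# Hypothetical 112 GB checkpoint written at 10 GB/s.
ckpt_time = checkpoint_write_seconds(checkpoint_gb=112, write_gb_per_s=10)

print(f"aggregate read throughput needed: {read_rate:.2f} GB/s")
print(f"checkpoint write time: {ckpt_time:.1f} s")
```

Doubling the number of GPUs, or training a second model in parallel, doubles the read rate the storage has to sustain, which is why concurrency matters more than a single-stream peak number.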

Tools such as FIO or its cousin GDSIO (used to understand speeds and feeds of the storage system) are great for gaining hero numbers or theoretical maximums for reads/writes, but they are not representative of performance requirements for AI development cycles. Data preparation and staging show up on the storage as random reads and writes, while during the training/fine-tuning phase, the GPUs concurrently stream reads from the storage system. Checkpoints throughout training are handled as writes back to the storage. These different points during the AI lifecycle require storage that can successfully handle these workloads at the scale determined by our model calculations and parallel development cycles.

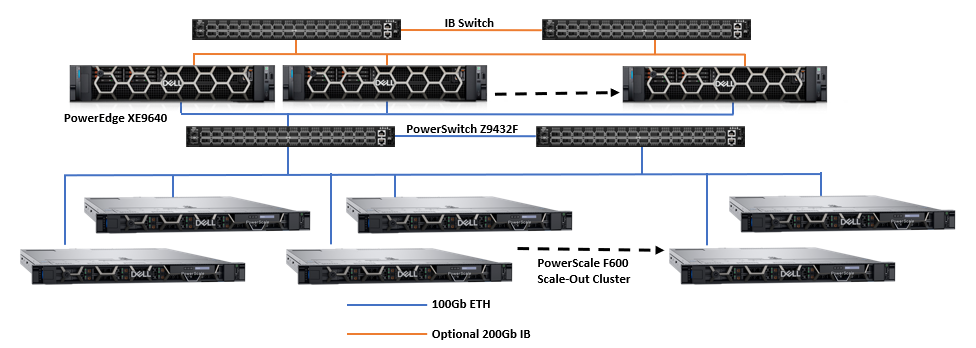

Data scientists at Dell put great effort into understanding how different kinds of model development affect server and storage requirements. For example, language models like BERT and GPT have little effect on storage performance and resources, whereas image sequencing and DLRM models place significant, or even worst-case, demands on storage performance and resources. For this reason, the Dell storage teams focus testing and benchmarking on AI deep learning workflows based on popular image models like ResNet, running on real GPUs, to understand the performance needed to deliver data to the GPU during model training. The following image shows an architecture designed with Dell PowerEdge servers and networking with PowerScale scale-out storage.

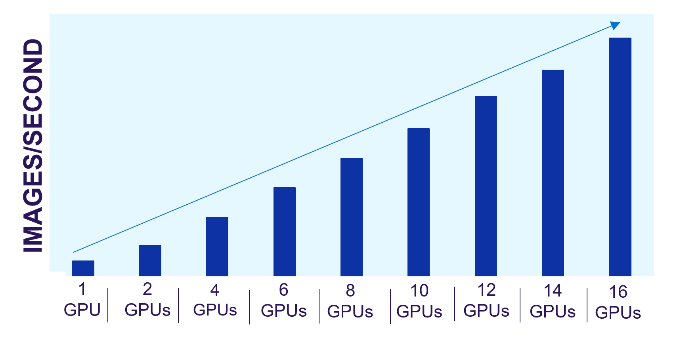

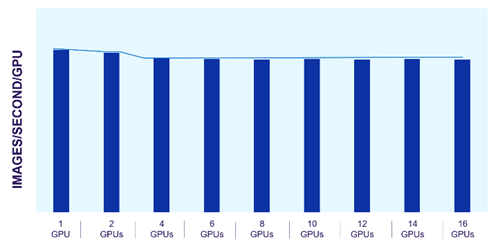

Dell PowerScale scale-out file storage is especially suited for these workloads. Each node in a PowerScale cluster delivers equivalent performance as the cluster and workloads scale. The following images show how PowerScale performance scales linearly as GPUs are increased, while the performance of each individual GPU remains constant. The scale-out architecture of PowerScale file storage easily supports AI workflows from small to large.

Figure 1. PowerScale linear performance

Figure 2. Consistent GPU performance with scale

The predictability of PowerScale allows us to estimate the storage resources needed for model training and fine-tuning. We can easily scale these architectures based on the model type and size along with the number and type of GPUs required.

Architecting for small and large AI workloads is challenging and takes planning. Understanding performance needs and how the components in the architecture will perform as the AI workload demand scales is critical.

Author: Darren Miller