Dell Validated Design Guides for Inferencing and for Model Customization – March ’24 Updates

Fri, 15 Mar 2024 20:16:59 -0000


Continuous Innovation with Dell Validated Designs for Generative AI with NVIDIA

Since Dell Technologies and NVIDIA introduced what was then known as Project Helix less than a year ago, so much has changed. The rate of growth and adoption of generative AI has been faster than probably any technology in human history.

From the onset, Dell and NVIDIA set out to deliver a modular and scalable architecture that supports all aspects of the generative AI life cycle in a secure, on-premises environment. This architecture is anchored by high-performance Dell server, storage, and networking hardware and by NVIDIA acceleration and networking hardware and AI software.

Since that introduction, the Dell Validated Designs for Generative AI have flourished, and have been continuously updated to add more server, storage, and GPU options, to serve a range of customers from those just getting started to high-end production operations.

A modular, scalable architecture optimized for AI

This journey was launched with the release of the Generative AI in the Enterprise white paper.

This white paper laid the foundation for a series of comprehensive resources aimed at integrating AI into on-premises enterprise settings, focusing on scalable and modular production infrastructure in collaboration with NVIDIA.

Dell, known for its expertise not only in high-performance infrastructure but also in curating full-stack validated designs, collaborated with NVIDIA to engineer holistic generative AI solutions that blend advanced hardware and software technologies. The dynamic nature of AI presents a challenge in keeping pace with rapid advancements, where today's cutting-edge models might become obsolete quickly. Dell distinguishes itself by offering essential insights and recommendations for specific applications, easing the journey through the fast-evolving AI landscape.

The cornerstone of the joint architecture is modularity, offering a flexible design that caters to a multitude of use cases, sectors, and computational requirements. A truly modular AI infrastructure is designed to be adaptable and future-proof, with components that can be mixed and matched based on specific project requirements. It can span the life cycle from model training, to model customization including various fine-tuning methodologies, to inferencing, where the models are put to work.

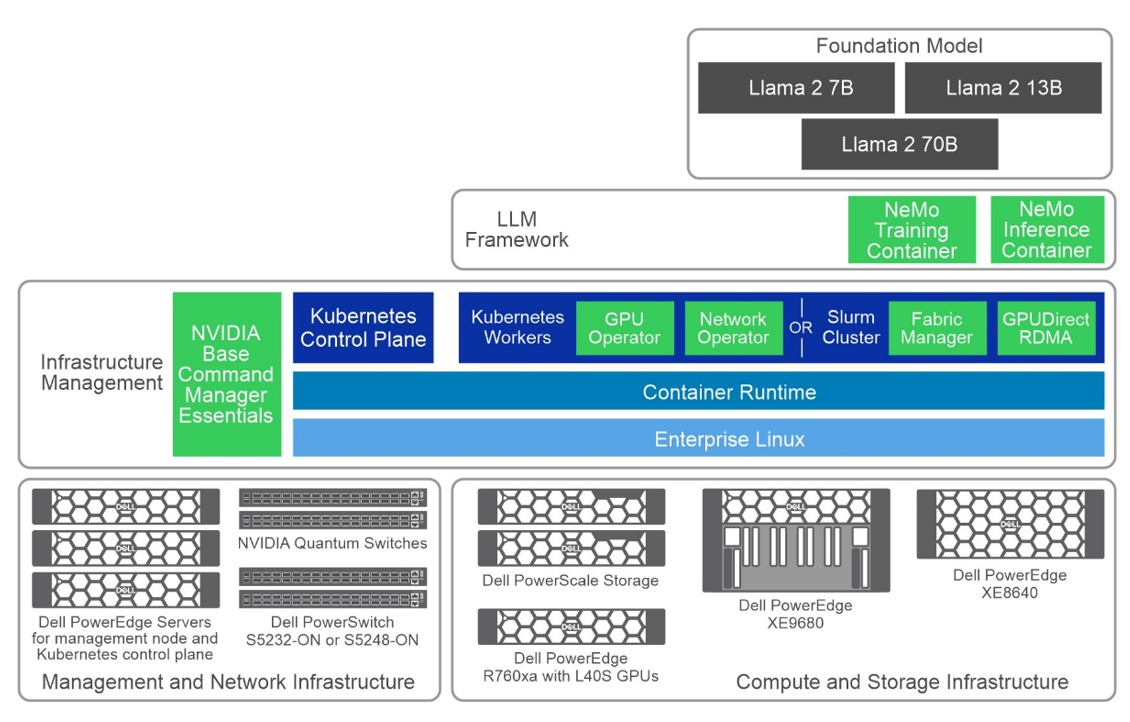

The following figure shows a high-level view of the overall architecture, including the primary hardware components and the software stack:

Figure 1: Common high-level architecture

Generative AI Inferencing

Following the introductory white paper, the first validated design guide released was for Generative AI Inferencing, in July 2023, anchored by the innovative concepts introduced earlier.

The complexity of assembling an AI infrastructure, often involving an intricate mix of open-source and proprietary components, can be formidable. Dell Technologies addresses this complexity by providing fully validated solutions where every element is meticulously tested, ensuring functionality and optimization for deployment. This validation gives users the confidence to proceed, knowing their AI infrastructure rests on a robust and well-founded base.

Key Takeaways

- In October 2023, the guide received its first update, broadening its scope with added validation and configuration details for Dell PowerEdge XE8640 and XE9680 servers. This update also introduced support for NVIDIA Base Command Manager Essentials and NVIDIA AI Enterprise 4.0, marking a significant enhancement to the guide's breadth and depth.

- The guide's evolution continues into March 2024 with its third iteration, which includes support for the PowerEdge R760xa servers equipped with NVIDIA L40S GPUs.

- The design now supports several options for NVIDIA GPU acceleration components across the multiple Dell server options. In this design, we showcase three Dell PowerEdge servers with several GPU options tailored for generative AI purposes:

- PowerEdge R760xa server, supporting up to four NVIDIA H100 GPUs or four NVIDIA L40S GPUs

- PowerEdge XE8640 server, supporting up to four NVIDIA H100 GPUs

- PowerEdge XE9680 server, supporting up to eight NVIDIA H100 GPUs

The choice of server and GPU combination is often a balance of performance, cost, and availability considerations, depending on the size and complexity of the workload.

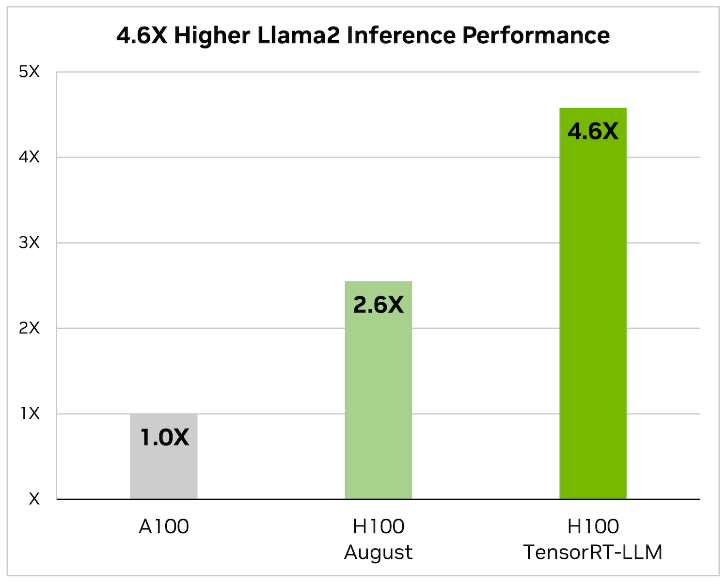

- This latest edition also saw the removal of NVIDIA FasterTransformer, replaced by TensorRT-LLM, reflecting Dell’s commitment to keeping the guide abreast of the latest and most efficient technologies. When it comes to optimizing large language models, TensorRT-LLM is the key. It ensures that models not only deliver high performance but also maintain efficiency in various applications.

The library includes optimized kernels, pre- and postprocessing steps, and multi-GPU/multi-node communication primitives. These features are specifically designed to enhance performance on NVIDIA GPUs.

It uses tensor parallelism for efficient inference across multiple GPUs and servers, without the need for developer intervention or model changes.
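To make the tensor parallelism idea concrete, here is a minimal sketch in plain Python. It splits a weight matrix column-wise across hypothetical "GPUs" (ordinary lists here), lets each shard compute its slice of the output, and concatenates the slices. This is an illustration of the general technique only, not TensorRT-LLM code; the function names are assumptions made for this example.

```python
def matmul(x, w):
    """Multiply a vector x (length n) by a matrix w (n rows, m columns)."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def split_columns(w, num_shards):
    """Split matrix w column-wise into num_shards equally sized shards."""
    cols = len(w[0])
    if cols % num_shards != 0:
        raise ValueError("column count must be divisible by num_shards")
    width = cols // num_shards
    return [[row[s * width:(s + 1) * width] for row in w] for s in range(num_shards)]


def tensor_parallel_matmul(x, w, num_shards):
    """Each shard computes its slice of the output independently.

    Concatenating the slices stands in for the gather step that real
    multi-GPU communication primitives would perform.
    """
    partials = [matmul(x, shard) for shard in split_columns(w, num_shards)]
    return [value for part in partials for value in part]


x = [1.0, 2.0]
w = [[1.0, 2.0, 3.0, 4.0],
     [5.0, 6.0, 7.0, 8.0]]

single_device = matmul(x, w)
two_way = tensor_parallel_matmul(x, w, num_shards=2)
print(single_device == two_way)  # the sharded result matches the unsharded one
```

Because each shard holds only a fraction of the weights, a model that does not fit on one GPU can still be served, at the cost of communication between shards.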

- Additionally, this update includes revisions to the models used for validation, ensuring users have access to the most current and relevant information for their AI deployments. The Dell Validated Design guide covers the following foundation models for inferencing on this infrastructure design with Triton Inference Server, including the newly added Mistral:

- Llama 2 7B, 13B, and 70B

- Mistral

- Falcon 180B

- Finally (and most importantly) performance test results and sizing considerations showcase the effectiveness of this updated architecture in handling large language models (LLMs) for various inference tasks. Key takeaways include:

- Optimized Latency and Throughput: The design achieved impressive latency metrics, crucial for real-time applications like chatbots, and high tokens per second, indicating efficient processing for offline tasks.

- Model Parallelism Impact: The performance of LLMs varied with adjustments in tensor and pipeline parallelism, highlighting the importance of optimal parallelism settings for maximizing inference efficiency.

- Scalability with Different GPU Configurations: Tests across various NVIDIA GPUs, including L40S and H100 models, demonstrated the design’s scalability and its ability to cater to diverse computational needs.

- Comprehensive Model Support: The guide includes performance data for the models listed above across different configurations, showcasing the design’s versatility in handling various LLMs.

- Sizing Guidelines: Based on performance metrics, updated sizing examples are available to help users determine the appropriate infrastructure for their specific inference requirements. These guidelines are very welcome.

All this highlights Dell’s commitment and capability to deliver high-performance, scalable, and efficient generative AI inferencing solutions tailored to enterprise needs.
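As a rough illustration of why model size drives the choice of server and GPU, the sketch below estimates the memory needed for model weights alone and the minimum number of GPUs required to hold them. It is back-of-the-envelope arithmetic, not the sizing method from the design guide. The assumptions are 16-bit weights (2 bytes per parameter), 80 GB per H100 and 48 GB per L40S (check the data sheet for the exact variant), and no allowance for KV cache, activations, or runtime overhead.

```python
import math

BYTES_PER_PARAM_FP16 = 2  # assumption: weights stored in 16-bit precision


def weight_memory_gb(params_billions, bytes_per_param=BYTES_PER_PARAM_FP16):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bytes_per_param


def min_gpus_for_weights(params_billions, gpu_memory_gb):
    """Lower bound on GPU count: weights only, no KV cache or activations."""
    return math.ceil(weight_memory_gb(params_billions) / gpu_memory_gb)


# Assumed per-GPU memory capacities in GB
H100_GB = 80
L40S_GB = 48

for name, size in [("Llama 2 7B", 7), ("Llama 2 13B", 13), ("Llama 2 70B", 70)]:
    print(name,
          f"{weight_memory_gb(size)} GB of weights,",
          "H100 GPUs >=", min_gpus_for_weights(size, H100_GB),
          "| L40S GPUs >=", min_gpus_for_weights(size, L40S_GB))
```

These figures are lower bounds. Real deployments need headroom beyond the weights, and tensor-parallel configurations typically use GPU counts that divide the model evenly, so the measured results in the design guide remain the authoritative reference.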

Generative AI Model Customization

The validated design guide for Generative AI Model Customization was first released in October 2023, anchored by the PowerEdge XE9680 server. This guide detailed numerous model customization methods, including the specifics of prompt engineering, supervised fine-tuning, and parameter-efficient fine-tuning.

Between October 2023 and March 2024, the Dell Validated Design Guide progressed from its initial release, through the addition of validated scenarios for multi-node SFT and Kubernetes in November 2023, to the March 2024 update, which brings updated performance test results and new support for PowerEdge R760xa servers, PowerEdge XE8640 servers, and PowerScale F710 all-flash storage.

Key Takeaways

- The validation aimed to test the reliability, performance, scalability, and interoperability of a system using model customization in the NeMo framework, specifically focusing on incorporating domain-specific knowledge into Large Language Models (LLMs).

- The process involved testing foundational models of sizes 7B, 13B, and 70B from the Llama 2 series. Various model customization techniques were employed, including:

- Prompt engineering

- Supervised Fine-Tuning (SFT)

- P-Tuning, and

- Low-Rank Adaptation of Large Language Models (LoRA)

- The design now supports several options for NVIDIA GPU acceleration components across the multiple Dell server options. In this design, we showcase three Dell PowerEdge servers with several GPU options tailored for generative AI purposes:

- PowerEdge R760xa server, supporting up to four NVIDIA H100 GPUs or four NVIDIA L40S GPUs. While the L40S is cost-effective for small to medium workloads, the H100 is typically used for larger-scale tasks, including SFT.

- PowerEdge XE8640 server, supporting up to four NVIDIA H100 GPUs.

- PowerEdge XE9680 server, supporting up to eight NVIDIA H100 GPUs.

As always, the choice of server and GPU combination depends on the size and complexity of the workload.

- The validation used both Slurm and Kubernetes clusters for computational resources and involved two datasets: the Dolly dataset from Databricks, covering various behavioral categories, and the Alpaca dataset from Stanford, consisting of 52,000 instruction-following records generated with an OpenAI model. Training was conducted for a minimum of 50 steps. The goal was to validate the system's capabilities and provide insights relevant to potential customer needs, rather than to achieve model convergence.

The validation results along with our analysis can be found in the Performance Characterization section of the design guide.
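To show why a parameter-efficient method such as LoRA is lighter than full fine-tuning, the sketch below counts trainable parameters for a single weight matrix. LoRA keeps the original d x k matrix frozen and trains two small matrices of shape d x r and r x k. The dimensions and rank used here (4096 and 8) are illustrative assumptions, not settings taken from the design guide.

```python
def full_finetune_params(d, k):
    """Trainable parameters when the whole d x k weight matrix is updated."""
    return d * k


def lora_params(d, k, rank):
    """Trainable parameters for LoRA: a d x rank matrix plus a rank x k matrix."""
    return rank * (d + k)


# Illustrative values only (assumptions, not from the guide)
d = k = 4096
rank = 8

full = full_finetune_params(d, k)
lora = lora_params(d, k, rank)
print(f"full fine-tuning: {full} trainable parameters")
print(f"LoRA (rank {rank}): {lora} trainable parameters")
print(f"LoRA share of full: {100 * lora / full:.2f}%")
```

Fewer trainable parameters mean less optimizer state and gradient memory per GPU. This is consistent with the remark above that the L40S suits small to medium workloads while the H100 is typically used for larger tasks such as SFT.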

What’s Next?

Looking ahead, you can expect even more innovation at a rapid pace with expansions to Dell’s leading-edge generative AI product and solutions portfolio.

For more information, see the following resources:

- Dell Generative AI Solutions

- Dell Technical Info Hub for AI

- Generative AI in the Enterprise white paper

- Generative AI in the Enterprise Inferencing design guide

- Generative AI in the Enterprise Model Customization design guide

- Dell Professional Services for Generative AI

~~~~~~~~~~~~~~~~~~~~~

Related Blog Posts

Dell Technologies Shines in MLPerf™ Stable Diffusion Results

Tue, 12 Dec 2023 14:51:21 -0000


Abstract

The recent release of MLPerf Training v3.1 results includes the newly launched Stable Diffusion benchmark. At the time of publication, Dell Technologies leads the OEM market in this benchmark, which measures performance for training a Generative AI foundation model. With the Dell PowerEdge XE9680 server submission, Dell Technologies is differentiated as the only vendor with a Stable Diffusion score for an eight-way system. The time to converge by using eight NVIDIA H100 Tensor Core GPUs is 46.7 minutes.

Overview

Generative AI workload deployment is growing at an unprecedented rate. Key reasons include increased productivity and the increasing convergence of multimodal input. Creating content has become easier and is becoming more plausible across various industries. Generative AI has enabled many enterprise use cases, and it continues to expand by exploring more frontiers. This growth can be attributed to higher-resolution text-to-image generation, text-to-video generation, and generation in other modalities. For these impressive AI tasks, the need for compute is even greater. Some of the more popular generative AI workloads include chatbots, video generation, music generation, 3D asset generation, and so on.

Stable Diffusion is a deep learning text-to-image model that accepts input text and generates a corresponding image. The output is credible and appears to be realistic. Occasionally, it can be hard to tell if the image is computer generated. Consideration of this workload is important because of the rapid expansion of use cases such as eCommerce, marketing, graphics design, simulation, video generation, applied fashion, web design, and so on.

Because these workloads demand intensive compute to train, the measurement of system performance during their use is essential. As an AI systems benchmark, MLPerf has emerged as a standard way to compare different submitters that include OEMs, accelerator vendors, and others in a like-to-like way.

MLPerf recently introduced the Stable Diffusion benchmark for v3.1 MLPerf Training. It measures the time to converge a Stable Diffusion workload to reach the expected quality targets. The benchmark uses the Stable Diffusion v2 model trained on the LAION-400M-filtered dataset. The original LAION-400M dataset has 400 million image and text pairs. A subset of those images (approximately 6.5 million) is used for training in the benchmark. The validation dataset is a subset of 30,000 COCO 2014 images. Expected quality targets are FID <= 90 and CLIP >= 0.15.
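The time-to-converge idea can be sketched as finding the first evaluation point at which both quality targets are met. The snippet below is a minimal illustration of that logic, not the MLPerf reference implementation. The thresholds come from the targets stated above, while the evaluation log is made up for the example and does not represent benchmark results.

```python
FID_TARGET = 90.0    # quality target: FID <= 90
CLIP_TARGET = 0.15   # quality target: CLIP >= 0.15


def meets_targets(fid, clip):
    """True when both quality targets are satisfied."""
    return fid <= FID_TARGET and clip >= CLIP_TARGET


def time_to_converge(evaluations):
    """Return the elapsed minutes of the first evaluation that meets both
    targets, or None if no evaluation does.

    evaluations: iterable of (elapsed_minutes, fid, clip) in time order.
    """
    for minutes, fid, clip in evaluations:
        if meets_targets(fid, clip):
            return minutes
    return None


# Hypothetical evaluation log (illustrative values, not real results)
hypothetical_log = [
    (10.0, 180.0, 0.08),
    (20.0, 110.0, 0.14),
    (30.0, 88.0, 0.16),
]
print(time_to_converge(hypothetical_log))
```

Both conditions must hold at the same evaluation point; a run that reaches the FID target but not the CLIP target has not converged.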

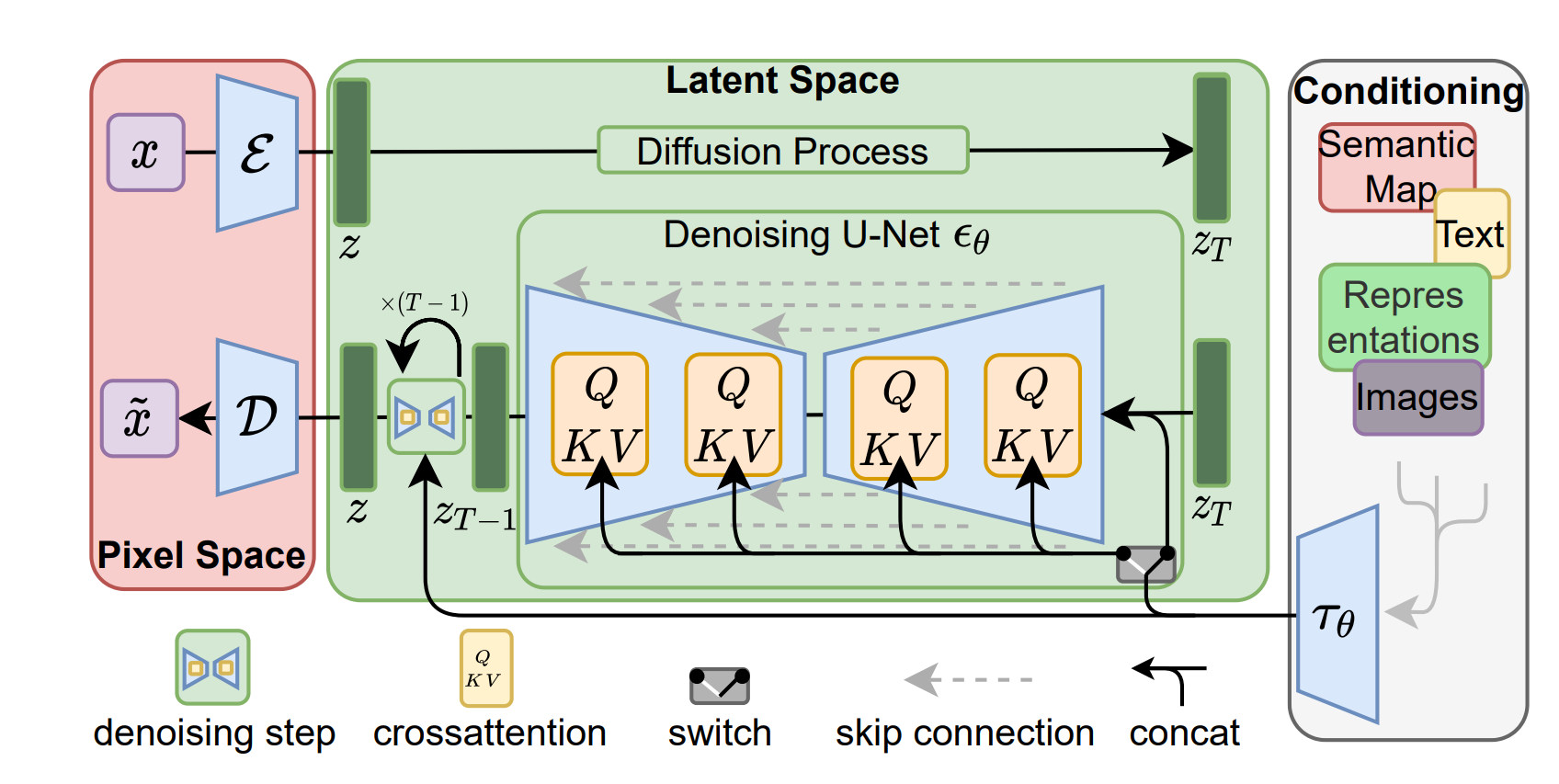

The following figure shows a latent diffusion model[1]:

Figure 1: Latent diffusion model

[1] Source: https://arxiv.org/pdf/2112.10752.pdf

Stable Diffusion v2 is a latent diffusion model that combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. MLPerf Stable Diffusion focuses on the U-Net denoising network, which has approximately 865 M parameters. There are some deviations from the v2 model. However, these adjustments are minor and encourage more submitters to make submissions with compute constraints.

The submission uses the NVIDIA NeMo framework, included with NVIDIA AI Enterprise, for secure, supported, and stable production AI. It is a framework to build, customize, and deploy generative AI models. It includes training and inferencing frameworks, guard railing toolkits, data curation tools, and pretrained models, offering enterprises an easy, cost effective, and a fast way to adopt generative AI.

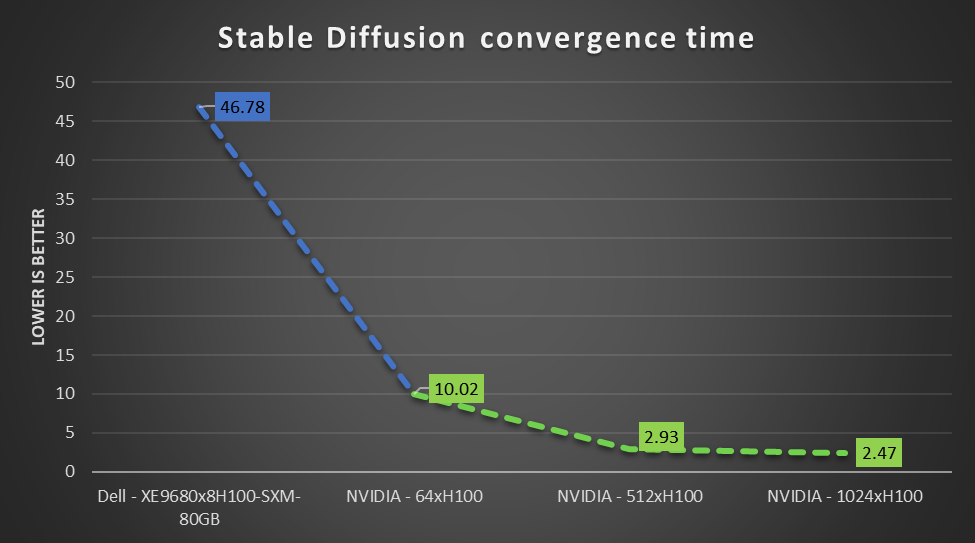

Performance of the Dell PowerEdge XE9680 server and other NVIDIA-based GPUs on Stable Diffusion

The following figure shows the performance of NVIDIA H100 Tensor Core GPU-based systems on the Stable Diffusion benchmark. It includes submissions from Dell Technologies and NVIDIA that use different numbers of NVIDIA H100 GPUs, ranging from eight GPUs (Dell submission) to 1024 GPUs (NVIDIA submission). The figure shows the expected performance of this workload and demonstrates that strong scaling is achievable with little scaling loss.

Figure 2: MLPerf Training Stable Diffusion scaling results on NVIDIA H100 GPUs from Dell Technologies and NVIDIA

End users can use state-of-the-art compute to derive faster time to value.
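One common way to quantify scaling loss is strong scaling efficiency: the ideal time (baseline time scaled by the increase in GPU count) divided by the measured time. The sketch below uses the eight-GPU time of 46.7 minutes quoted in the abstract as the baseline. The larger configuration and its time are hypothetical placeholders, not published results, and the function name is an assumption for this example.

```python
def strong_scaling_efficiency(base_gpus, base_minutes, gpus, minutes):
    """Ratio of ideal to measured time for a fixed-size problem.

    1.0 means perfect scaling; lower values indicate scaling loss.
    """
    ideal_minutes = base_minutes * base_gpus / gpus
    return ideal_minutes / minutes


BASE_GPUS = 8
BASE_MINUTES = 46.7  # eight-GPU time to converge quoted in the abstract

# Hypothetical larger run (placeholder values, not a published result)
hypothetical_gpus = 64
hypothetical_minutes = 7.0

efficiency = strong_scaling_efficiency(
    BASE_GPUS, BASE_MINUTES, hypothetical_gpus, hypothetical_minutes
)
print(f"{efficiency:.1%}")
```

To evaluate real submissions, substitute the GPU counts and times from the MLCommons results table for the placeholder values.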

Conclusion

The key takeaways include:

- The latest released MLPerf Training v3.1 measures Generative AI workloads like Stable Diffusion.

- Dell Technologies is the only OEM vendor to have made an MLPerf-compliant Stable Diffusion submission.

- The Dell PowerEdge XE9680 server is an excellent choice to derive value from Image Generation AI workloads for marketing, art, gaming, and so on. The benchmark results are outstanding for Stable Diffusion v2.

MLCommons Results

https://mlcommons.org/benchmarks/training/

The preceding graphs are MLCommons results for MLPerf IDs 3.1-2019, 3.1-2050, 3.1-2055, and 3.1-2060.

The MLPerf™ name and logo are trademarks of MLCommons Association in the United States and other countries. All rights reserved. Unauthorized use strictly prohibited. See www.mlcommons.org for more information.

Dell PowerEdge Servers Achieve Stellar Scores with MLPerf™ Training v3.1

Wed, 08 Nov 2023 17:43:48 -0000


Abstract

MLPerf is an industry-standard AI performance benchmark. For more information about the MLPerf benchmarks, see Benchmark Work | Benchmarks MLCommons.

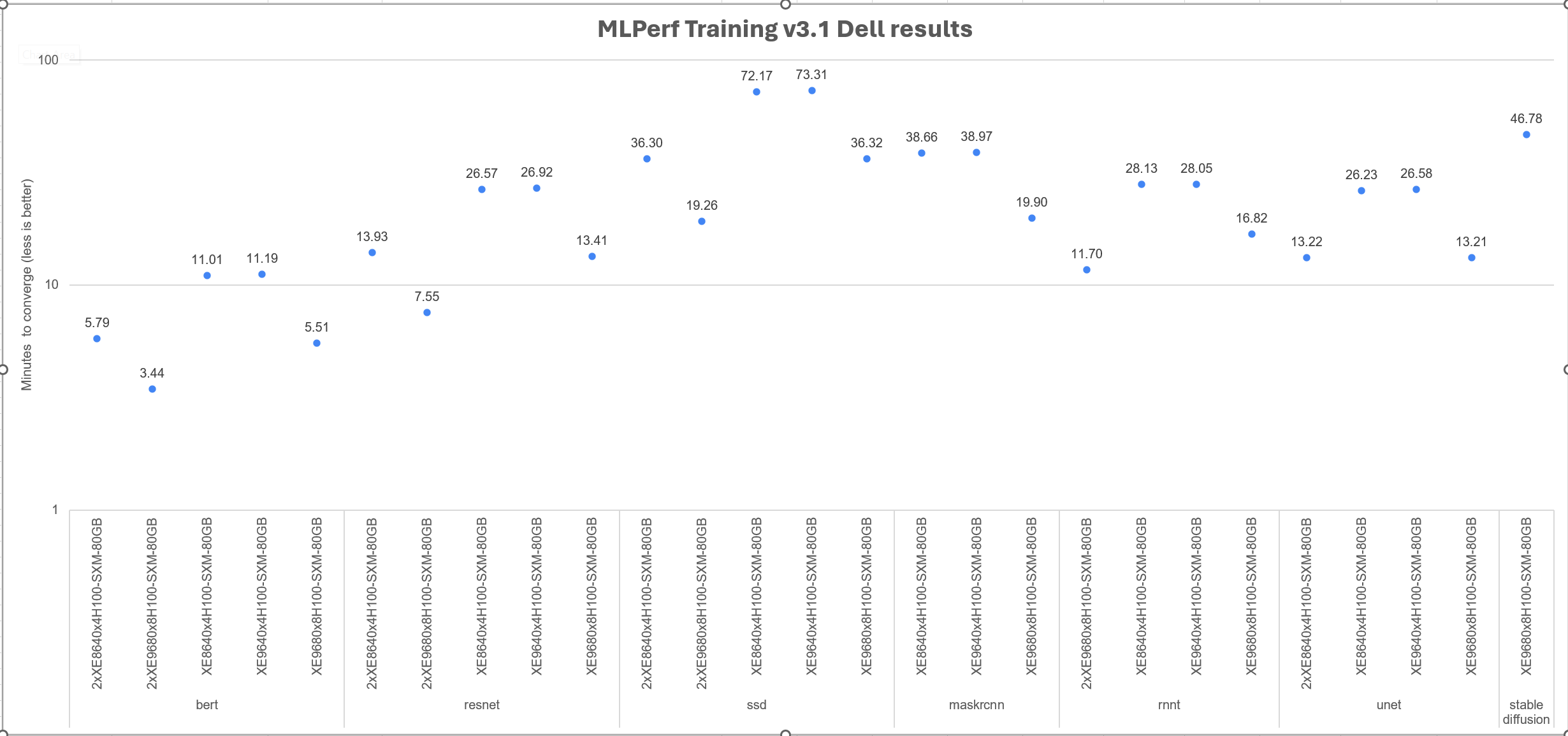

Today marks the release of a new set of results for MLPerf Training v3.1. The Dell PowerEdge XE9680, XE8640, and XE9640 servers in the submission demonstrated excellent performance. The tasks included image classification, medical image segmentation, lightweight and heavy-weight object detection, speech recognition, language modeling, recommendation, and text to image. MLPerf Training v3.1 results provide a baseline for end users to set performance expectations.

What is new with MLPerf Training 3.1 and the Dell Technologies submissions?

The following are new for this submission:

- For the benchmarking suite, a new benchmark was added: Stable Diffusion with the LAION-400M dataset.

- Dell Technologies submitted the newly introduced Liquid Assisted Air Cooled (LAAC) PowerEdge XE9640 system, which is a part of the latest generation Dell PowerEdge servers.

Overview of results

Dell Technologies submitted 30 results. These results were submitted using five different systems. We submitted results for the PowerEdge XE9680, XE8640, and XE9640 servers. We also submitted multinode results for the PowerEdge XE9680 and XE8640 servers. The PowerEdge XE9680 server was powered by eight NVIDIA H100 Tensor Core GPUs, while the PowerEdge XE8640 and XE9640 servers were powered by four NVIDIA H100 Tensor Core GPUs each.

Datapoints of interest

Interesting datapoints include:

- Our new Stable Diffusion results with the PowerEdge XE9680 server were submitted for the first time and are exclusive. Dell Technologies, NVIDIA, and Habana Labs are the only submitters to have made an official submission. This submission is important because of the explosion of Generative AI workloads. The submission uses the NVIDIA NeMo framework, included in NVIDIA AI Enterprise for secure, supported, and stable production AI.

- Dell PowerEdge XE8640 and XE9640 servers secured several top performer titles (#1 titles) among other systems equipped with four NVIDIA H100 GPUs. The tasks included language modeling, recommendation, heavy-weight object detection, speech to text, and medical image segmentation.

- A number of multinode results were submitted for the previous round, which can be compared with this round. PowerEdge XE9680 multinode results were submitted. Additionally, this round was the first time multinode results with the newer generation PowerEdge XE8640 servers were submitted. The results show near linear scaling. Furthermore, Dell Technologies is the only submitter in addition to NVIDIA, Habana Labs, and Intel making multinode, on-premises result submissions.

- The results for the PowerEdge XE9640 server with liquid assisted air cooling (LAAC) are similar to the PowerEdge XE8640 air-cooled server.

The following figure shows all the convergence times for Dell systems and corresponding workloads in the benchmark. Because different benchmarks are included in the same graph, the y axis is expressed logarithmically. Overall, these numbers show an excellent time to converge for the workload in question.

Figure 1. Logarithmic y axis: Overview of Dell MLPerf Training v3.1 results

Conclusion

We submitted compliant results for the MLCommons Training v3.1 benchmark. These results are based on the latest generation of Dell PowerEdge XE9680, XE8640, and XE9640 servers, powered by NVIDIA H100 Tensor Core GPUs. All results are stellar. They demonstrate that multinode scaling is near linear and that more servers can help to solve the same problem faster. These results allow end users to set performance expectations before deploying their compute-intensive training workloads. The workloads in the submission include image classification, medical image segmentation, lightweight and heavy-weight object detection, speech recognition, language modeling, recommendation, and text to image. Enterprises can enable and maximize their AI transformation efficiently with Dell Technologies solutions.

MLCommons Results

https://mlcommons.org/benchmarks/training/

The preceding graphs are MLCommons results for MLPerf IDs from 3.1-2005 to 3.1-2009.

The MLPerf™ name and logo are trademarks of MLCommons Association in the United States and other countries. All rights reserved. Unauthorized use strictly prohibited. See www.mlcommons.org for more information.