Accelerating HPC Workloads with NVIDIA A100 NVLink on Dell PowerEdge XE8545

Tue, 13 Apr 2021 14:25:31 -0000


NVIDIA A100 GPU

Three years after launching the Tesla V100 GPU, NVIDIA announced its latest data center GPU, the A100, built on the Ampere architecture. The A100 is available in two form factors, PCIe and SXM4, allowing GPU-to-GPU communication over PCIe or NVLink. The NVLink version is also known as the A100 SXM4 GPU and is available on the HGX A100 server board.

As you’d expect, the Innovation Lab tested the performance of the A100 GPU in a new platform. The new PowerEdge XE8545 4U server from Dell Technologies supports these GPUs with the NVLink SXM4 form factor and dual-socket AMD 3rd generation EPYC CPUs (codename Milan). This platform supports PCIe Gen 4 speed, up to 10 local drives, and up to 16 DIMM slots running at 3200 MT/s. Milan CPUs are available with up to 64 physical cores per CPU.

The PCIe version of the A100 can be housed in the PowerEdge R7525, which also supports AMD EPYC CPUs, up to 24 drives, and up to 16 DIMM slots running at 3200 MT/s. This blog compares the performance of the A100-PCIe system to the A100-SXM4 system.

Figure 1: PowerEdge XE8545 Server

A previous blog discussed the performance of the NVIDIA A100-PCIe GPU compared to its predecessor NVIDIA Tesla V100-PCIe GPU in the PowerEdge R7525 platform.

The following table shows the specifications of the NVIDIA A100 and V100 GPUs.

Table 1: NVIDIA A100 and V100 GPUs with PCIe and SXM4 form factors

| Form factor | PCIe | PCIe | SXM (NVIDIA NVLink) | SXM (NVIDIA NVLink) |
| --- | --- | --- | --- | --- |
| NVIDIA GPU type | A100 | V100 | A100 | V100 |
| GPU architecture | Ampere | Volta | Ampere | Volta |
| GPU memory | 40 GB | 32 GB | 40 GB | 32 GB |
| GPU memory bandwidth | 1555 GB/s | 900 GB/s | 1555 GB/s | 900 GB/s |
| Peak FP64 | 9.7 TFLOPS | 7 TFLOPS | 9.7 TFLOPS | 7.8 TFLOPS |
| Peak FP64 Tensor Core | 19.5 TFLOPS | N/A | 19.5 TFLOPS | N/A |
| GPU base clock | 765 MHz | 1230 MHz | 1095 MHz | 1290 MHz |
| GPU boost clock | 1410 MHz | 1380 MHz | 1410 MHz | 1530 MHz |
| NVLink speed | 600 GB/s | N/A | 600 GB/s | 300 GB/s |
| Max power consumption | 250 W | 250 W | 400 W | 300 W |

From Table 1, we see that the A100 offers about 73 percent higher memory bandwidth (1555 GB/s versus 900 GB/s) and roughly 24 to 39 percent higher peak double precision FLOPS when compared to the Tesla V100 GPU. While the A100-PCIe GPU consumes the same amount of power as the V100-PCIe GPU, the NVLink version of the A100 GPU consumes about 33 percent more power than the NVLink version of the V100 GPU (400 W versus 300 W).
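The generation-over-generation ratios can be recomputed directly from the Table 1 values. The following is a minimal sketch; the helper name `pct_gain` is ours and is not part of any vendor tool.

```python
# Values copied from Table 1 (A100 versus V100).
def pct_gain(new, old):
    """Return the percent increase of `new` over `old`."""
    return (new - old) / old * 100.0

mem_bw_gain = pct_gain(1555, 900)     # memory bandwidth in GB/s
fp64_gain_pcie = pct_gain(9.7, 7.0)   # peak FP64 in TFLOPS, PCIe cards
fp64_gain_sxm = pct_gain(9.7, 7.8)    # peak FP64 in TFLOPS, SXM cards
power_gain_sxm = pct_gain(400, 300)   # max power in W, SXM cards

for label, value in [
    ("Memory bandwidth", mem_bw_gain),
    ("Peak FP64 (PCIe)", fp64_gain_pcie),
    ("Peak FP64 (SXM)", fp64_gain_sxm),
    ("Max power (SXM)", power_gain_sxm),
]:
    print(f"{label}: +{value:.0f}%")
```

Note that the gain is expressed relative to the older part (the V100), which is the usual convention for "percent improvement".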

How are the GPUs connected in the PowerEdge servers?

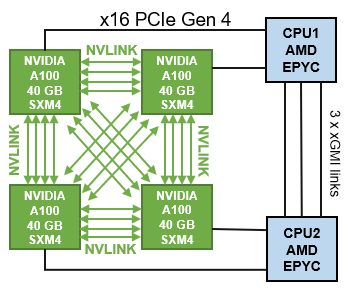

An understanding of the server architecture is helpful in determining the behavior of any application. The PowerEdge XE8545 server is an accelerator-optimized server with four A100-SXM4 GPUs connected with third-generation NVLink, as shown in the following figure.

Figure 2: PowerEdge XE8545 CPU-GPU connectivity

In the A100 GPU, each NVLink link supports a data rate of 4 x 50 Gbit/s in each direction. The total number of NVLink links increases from six in the V100 GPU to 12 in the A100 GPU, yielding 600 GB/s of total bidirectional bandwidth. Workloads that can take advantage of the higher GPU-to-GPU communication bandwidth can benefit from the NVLink links in the PowerEdge XE8545 server.
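The 600 GB/s figure follows from the per-link signaling rate. The sketch below works through the arithmetic; the A100 inputs come from the text above, while the V100 inputs (eight signal pairs per direction at 25 Gbit/s) are an assumption taken from NVIDIA's published second-generation NVLink figures rather than from this blog.

```python
def nvlink_total_gb_per_s(links, pairs_per_direction, gbit_per_pair):
    """Total bidirectional NVLink bandwidth in GB/s for one GPU."""
    # Gbit/s -> GB/s for one link in one direction.
    per_direction = pairs_per_direction * gbit_per_pair / 8
    # Both directions, summed across all links.
    return links * per_direction * 2

# A100: 12 links, 4 signal pairs per direction at 50 Gbit/s each.
a100_total = nvlink_total_gb_per_s(12, 4, 50)
# V100 (assumed NVLink 2 figures): 6 links, 8 pairs at 25 Gbit/s each.
v100_total = nvlink_total_gb_per_s(6, 8, 25)

print(a100_total, v100_total)
```

Both generations deliver 25 GB/s per link per direction; the A100 doubles the total by doubling the number of links.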

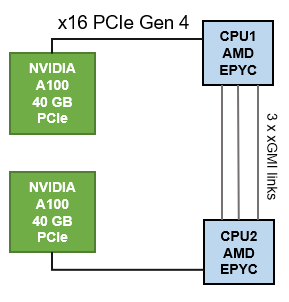

As shown in the following figure, the PowerEdge R7525 server can accommodate up to three PCIe-based GPUs; however, the configuration chosen for this evaluation used two A100-PCIe GPUs. With this option, the GPU-to-GPU communication must flow through the AMD Infinity Fabric inter-CPU links.

Figure 3: PowerEdge R7525 CPU-GPU connectivity

Testbed details

The following table shows the tested configuration details:

Table 2: Test bed configuration details

| Server | PowerEdge XE8545 | PowerEdge R7525 |
| --- | --- | --- |
| Processor | Dual AMD EPYC 7713, 64C, 2.8 GHz | Dual AMD EPYC 7713, 64C, 2.8 GHz |
| Memory | 512 GB (16 x 32 GB @ 3200 MT/s) | 1024 GB (16 x 64 GB @ 3200 MT/s) |
| Height of system | 4U | 2U |
| GPUs | 4 x NVIDIA A100 SXM4 40 GB | 2 x NVIDIA A100 PCIe 40 GB |
| Operating system | Red Hat Enterprise Linux release 8.3 (Ootpa) | Red Hat Enterprise Linux release 8.3 (Ootpa) |
| Kernel | 4.18.0-240.el8.x86_64 | 4.18.0-240.el8.x86_64 |
| BIOS settings | Sysprofile=PerfOptimized, LogicalProcessor=Disabled, NumaNodesPerSocket=4 | Sysprofile=PerfOptimized, LogicalProcessor=Disabled, NumaNodesPerSocket=4 |
| CUDA driver | 450.51.05 | 450.51.05 |
| CUDA Toolkit | 11.1 | 11.1 |
| GCC | 9.2.0 | 9.2.0 |
| MPI | OpenMPI - 4.0 | OpenMPI - 4.0 |

The following table lists the versions of the HPC applications that were used for the benchmark evaluation:

Table 3: HPC Applications used for the evaluation

Benchmark | Details |

HPL | xhpl_cuda-11.0-dyn_mkl-static_ompi-4.0.4_gcc4.8.5_7-23-20 |

HPCG | xhpcg-3.1_cuda_11_ompi-3.1 |

GROMACS | v2021 |

NAMD | Git-2021-03-02_Source |

LAMMPS | 29Oct2020 release |

Benchmark evaluation

High Performance Linpack

High Performance Linpack (HPL) is a standard HPC system benchmark that is used to measure the computing power of a server or cluster. It is also used as a reference benchmark by the TOP500 org to rank supercomputers worldwide. HPL for GPU uses double precision floating point operations. There are a few parameters that are significant for the HPL benchmark, as listed below:

- N is the problem size provided as input to the benchmark and determines the size of the dense N x N linear system that is solved by HPL. For a GPU system, the highest HPL performance is obtained when the problem size uses as much of the GPU memory as possible without exceeding it. For this study, we used HPL compiled with NVIDIA libraries as listed in Table 3.

- NB is the block size which is used for data distribution. For this test configuration, we used an NB of 288.

- P x Q is the process grid; the product of P and Q is equal to the total number of GPUs in the system.

- Rpeak is the theoretical peak of the system.

- Rmax is the maximum measured performance achieved on the system.
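As a rough illustration of how N relates to GPU memory and NB, the sketch below picks the largest multiple of NB whose N x N double-precision matrix fits in a chosen fraction of total GPU memory. The function name and the 95 percent fill fraction are assumptions for illustration; they are not the exact values used for the runs in this blog.

```python
import math

def hpl_problem_size(num_gpus, mem_gb_per_gpu, nb, fill=0.95):
    """Largest N (a multiple of nb) whose N x N FP64 matrix fits in
    `fill` of the combined GPU memory. `fill` is an assumed value."""
    usable_bytes = num_gpus * mem_gb_per_gpu * 1e9 * fill
    # Each FP64 matrix element occupies 8 bytes.
    n = int(math.sqrt(usable_bytes / 8))
    # Round down so that N is a whole number of NB-sized blocks.
    return (n // nb) * nb

n_xe8545 = hpl_problem_size(num_gpus=4, mem_gb_per_gpu=40, nb=288)
n_r7525 = hpl_problem_size(num_gpus=2, mem_gb_per_gpu=40, nb=288)
print(n_xe8545, n_r7525)
```

In practice the fill fraction is tuned downward until the run no longer exhausts GPU memory, since the benchmark also needs workspace beyond the matrix itself.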

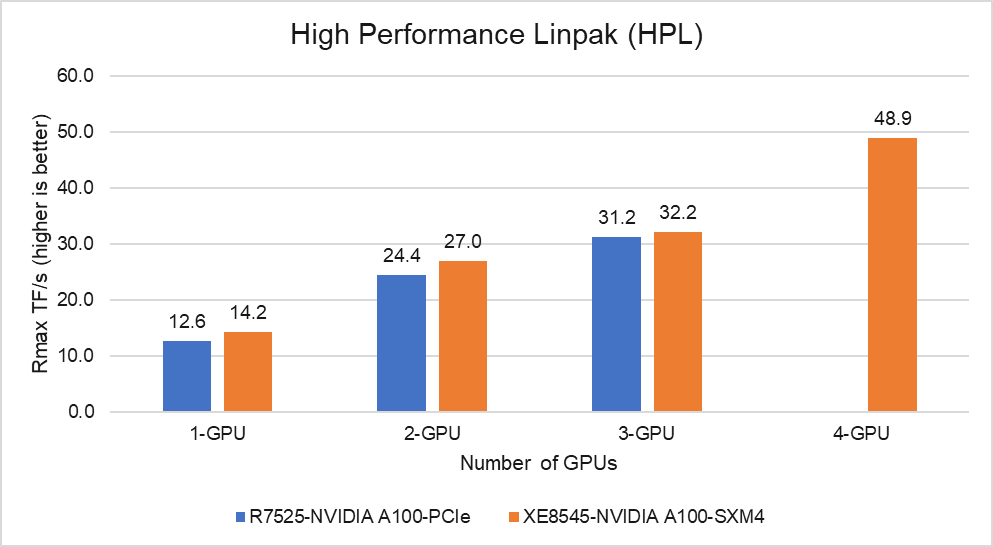

Figure 4: HPL Performance on the PowerEdge R7525 and XE8545 with NVIDIA A100-40 GB

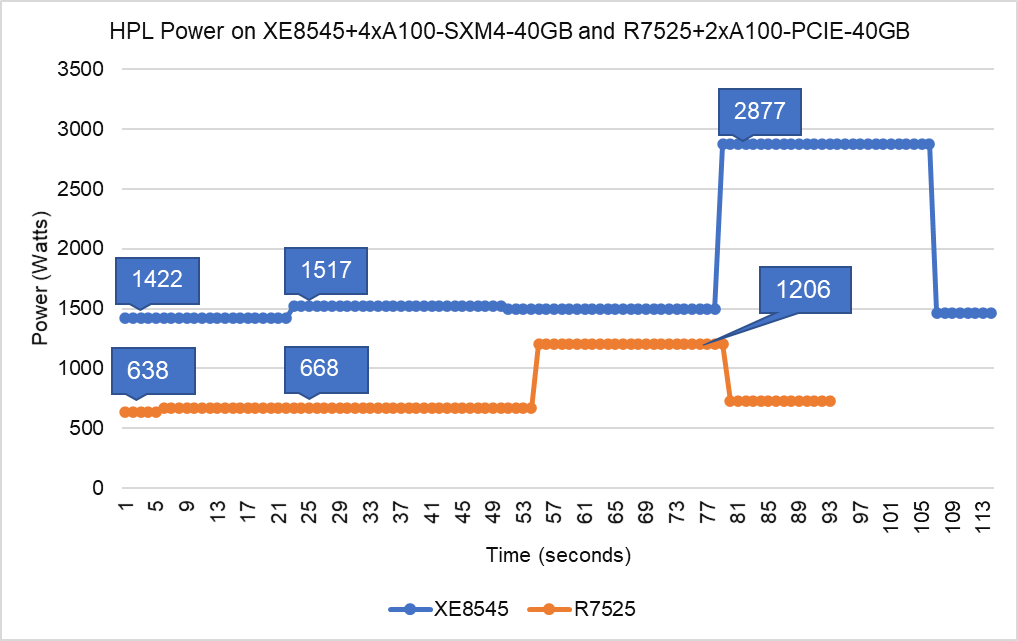

Figure 5: HPL Power Utilization on the PowerEdge XE8545 with four NVIDIA A100 GPUs and R7525 with two NVIDIA A100 GPUs

From Figure 4 and Figure 5, we can make the following observations:

- SXM4 vs PCIe: With one GPU, the NVIDIA A100-SXM4 GPU outperforms the A100-PCIe by 11 percent. The higher SXM4 GPU base clock frequency is the predominant factor contributing to the additional performance over the PCIe GPU.

- Scalability: The PowerEdge XE8545 server with four NVIDIA A100-SXM4-40GB GPUs delivers 3.5 times higher HPL performance compared to one NVIDIA A100-SXM4-40GB GPU. On the other hand, two A100-PCIe GPUs are 1.94 times faster than one on the R7525 platform. The A100 GPUs scale well on both platforms for the HPL benchmark.

- Higher Rpeak: The HPL code on A100 GPUs uses the new double-precision Tensor Cores, so the theoretical peak for each card is 19.5 TFLOPS, as opposed to 9.7 TFLOPS.

- Power: Figure 5 shows the power consumption of a complete HPL run with the PowerEdge XE8545 using 4 x A100-SXM4 GPUs and the PowerEdge R7525 using 2 x A100-PCIe GPUs. This was measured with iDRAC commands. The peak power consumption for the XE8545 is 2877 W, while the peak power consumption for the R7525 is 1206 W.
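The scalability and Rpeak observations above reduce to simple ratios. The sketch below computes the scaling efficiency from the quoted speedups and a GPU-only theoretical peak per server; the function names are ours, and the Rpeak figure deliberately ignores any CPU contribution.

```python
FP64_TENSOR_TFLOPS = 19.5  # per A100 GPU, from Table 1

def scaling_efficiency(speedup, num_gpus):
    """Fraction of ideal linear scaling achieved."""
    return speedup / num_gpus

def gpu_rpeak_tflops(num_gpus, per_gpu=FP64_TENSOR_TFLOPS):
    """GPU-only theoretical peak (CPU FLOPS are not included)."""
    return num_gpus * per_gpu

xe8545_eff = scaling_efficiency(3.5, 4)    # four A100-SXM4 GPUs
r7525_eff = scaling_efficiency(1.94, 2)    # two A100-PCIe GPUs

print(f"XE8545: {xe8545_eff:.1%} of linear, Rpeak {gpu_rpeak_tflops(4)} TFLOPS")
print(f"R7525:  {r7525_eff:.1%} of linear, Rpeak {gpu_rpeak_tflops(2)} TFLOPS")
```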

High Performance Conjugate Gradient

The TOP500 list has incorporated the High Performance Conjugate Gradient (HPCG) results as an alternate metric to assess system performance.

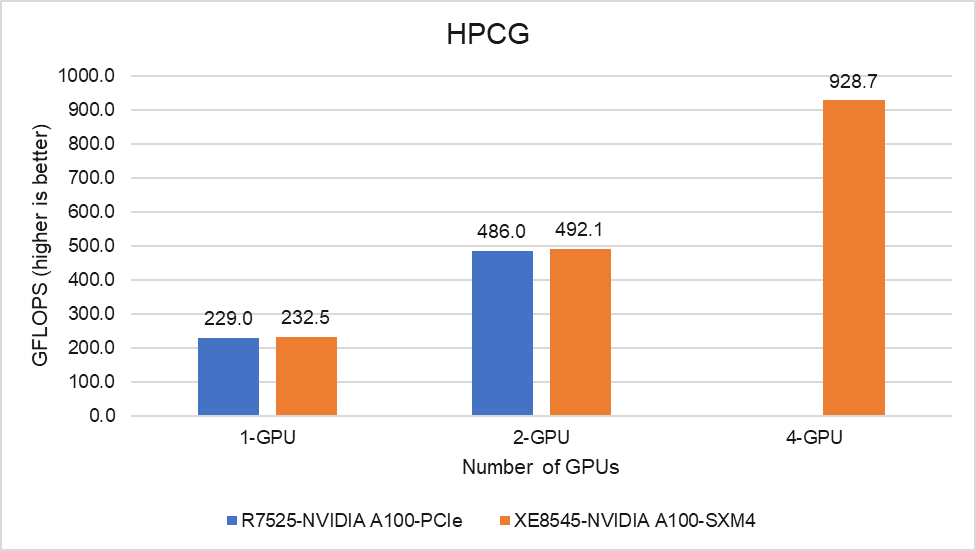

Figure 6: HPCG Performance on the PowerEdge R7525 and PowerEdge XE8545 Servers

Unlike HPL, HPCG performance depends heavily on the memory system and network performance when we go beyond one server. Because both the PCIe and SXM4 form factors of the A100 GPUs have the same memory bandwidth, there is no variation in the performance at a single node and HPCG performance scales well on both servers.

GROMACS

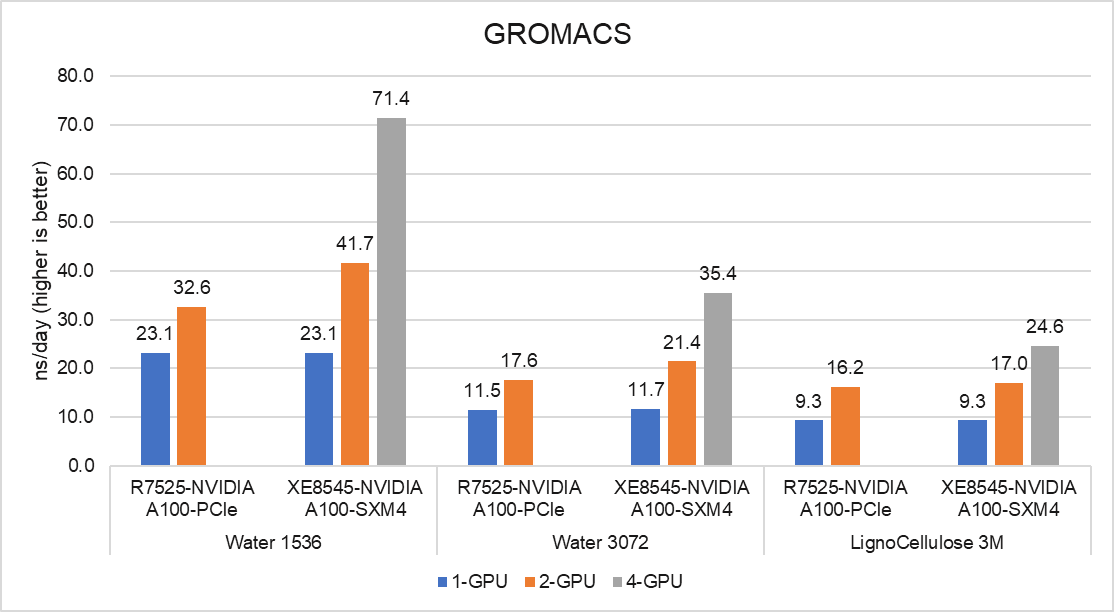

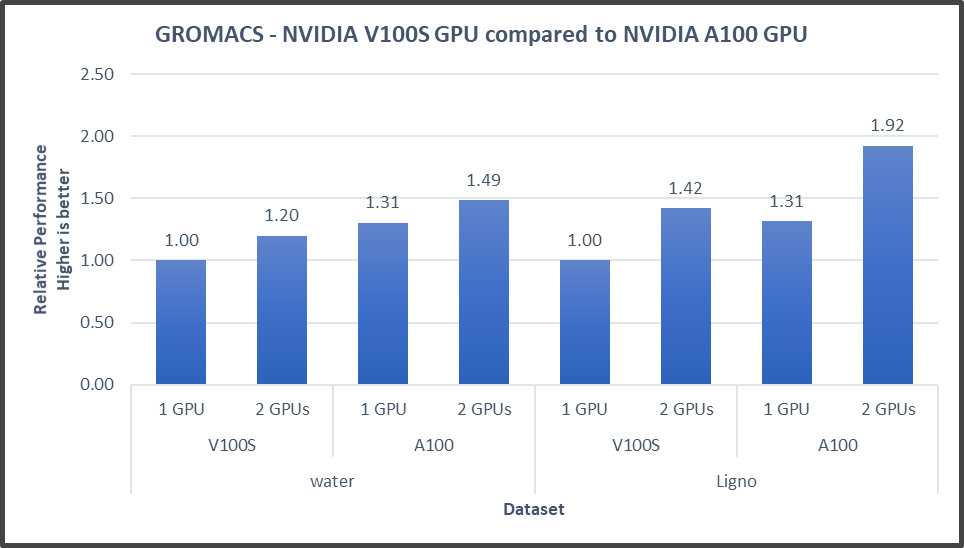

The following figure shows the performance results for GROMACS, a molecular dynamics application, on the PowerEdge R7525 and XE8545 servers. GROMACS 2021.0 was compiled with CUDA compilers and Open-MPI, as shown in Table 3.

Figure 7: GROMACS performance on the PowerEdge R7525 and PowerEdge XE8545 Servers

The GROMACS build included thread MPI (built in with the GROMACS package). Performance results are presented using the ns/day metric. For each test, the performance was optimized by varying the number of MPI ranks and threads, number of PME ranks, and different nstlist values to obtain the best performance result.
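A parameter sweep of this kind can be scripted by generating one `gmx mdrun` command line per combination. The sketch below only builds the command lines and does not run them; the input file name and the candidate values are hypothetical placeholders, not the settings used for the results in this blog.

```python
import itertools

def mdrun_commands(tpr, ntmpi_values, ntomp_values, npme_values, nstlist_values):
    """Build gmx mdrun command lines for every parameter combination."""
    commands = []
    for ntmpi, ntomp, npme, nstlist in itertools.product(
        ntmpi_values, ntomp_values, npme_values, nstlist_values
    ):
        # At least one rank must remain for particle-particle work.
        if npme >= ntmpi:
            continue
        commands.append([
            "gmx", "mdrun", "-s", tpr,
            "-ntmpi", str(ntmpi),      # thread-MPI ranks
            "-ntomp", str(ntomp),      # OpenMP threads per rank
            "-npme", str(npme),        # separate PME ranks
            "-nstlist", str(nstlist),  # pair-list update interval
            "-nb", "gpu",              # offload nonbonded work to the GPU
        ])
    return commands

# Hypothetical sweep values and input file name.
sweep = mdrun_commands("topol.tpr", (2, 4), (8, 16), (0, 1), (100, 200))
for cmd in sweep[:3]:
    print(" ".join(cmd))
```

Each command would then be run and the reported ns/day compared to select the best combination for a given dataset and GPU count.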

With one GPU in test, the performance of the SXM4 XE8545 server is similar to that of the PCIe R7525. With two GPUs in test, the SXM4 XE8545 performance is up to 28 percent better than the PCIe R7525. Datasets such as Water 1536 and Water 3072 demand more GPU-to-GPU communication, which is where the NVLink-connected SXM4 GPUs perform around 28 percent better. On the other hand, for datasets like LignoCellulose 3M, the two-GPU R7525 achieves the same per-GPU performance as the XE8545 but with the lower-power 250 W GPU, making it the more efficient solution.

LAMMPS

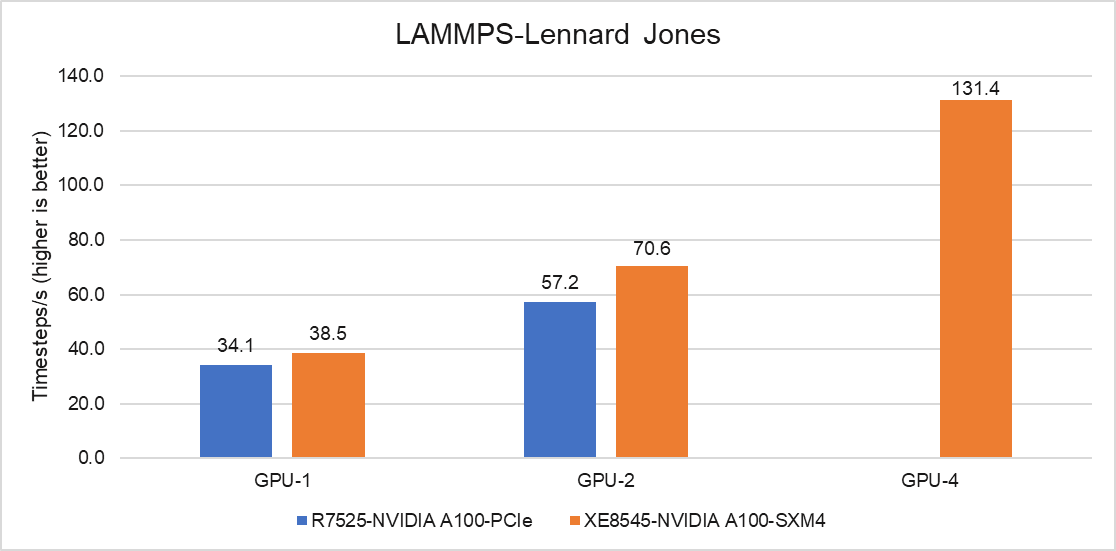

The following figure shows the performance results for LAMMPS, a molecular dynamics application, on the PowerEdge R7525 and XE8545 servers. The code was compiled with the KOKKOS package to run efficiently on NVIDIA GPUs, and Lennard Jones is the dataset that was tested with Timesteps/s as the metric for comparison.

Figure 8: LAMMPS performance on the PowerEdge R7525 and PowerEdge XE8545 Servers

With one GPU in test, the performance of the SXM4 XE8545 server is 13 percent higher than the PCIe R7525, and with two GPUs in test, a 23 percent performance improvement was measured. The PowerEdge XE8545 has an advantage because the GPUs can communicate with each other over NVLink without the intervention of a CPU, whereas the R7525 server with two GPUs is limited by its GPU-to-GPU communication path. The other factor contributing to better performance is the higher clock rate of the SXM4 A100 GPU.

Conclusion

In this blog, we discussed the performance of NVIDIA A100 GPUs on the PowerEdge R7525 server and the PowerEdge XE8545 server, which is the new addition from Dell Technologies. The A100 GPU has about 73 percent more memory bandwidth and higher double precision FLOPS compared to its predecessor, the V100 series GPU. For workloads that demand more GPU-to-GPU communication, the PowerEdge XE8545 server is an ideal choice. For data centers where space and power are limited, the PowerEdge R7525 server may be the right fit. The overall performance of the PowerEdge XE8545 server with four A100-SXM4 GPUs is 1.5 to 2.3 times faster than the PowerEdge R7525 server with two A100-PCIe GPUs.

In the future, we intend to evaluate the A100-80GB GPUs and NVIDIA A40 GPUs that will be available this year. We also plan to focus on a multi-node performance study with these GPUs.

Please contact your Dell sales specialist about the HPC and AI Innovation Lab if you would like to evaluate these GPU servers.

Related Blog Posts

New Frontiers—Dell EMC PowerEdge R750xa Server with NVIDIA A100 GPUs

Tue, 01 Jun 2021 20:18:04 -0000


Dell Technologies has released the new PowerEdge R750xa server, a GPU workload-based platform that is designed to support artificial intelligence, machine learning, and high-performance computing solutions. The dual socket/2U platform supports 3rd Gen Intel Xeon processors (code named Ice Lake). It supports up to 40 cores per processor, has eight memory channels per CPU, and up to 32 DDR4 DIMMs at 3200 MT/s DIMM speed. This server can accommodate up to four double-width PCIe GPUs that are located in the front left and the front right of the server.

Compared with the previous generation PowerEdge C4140 and PowerEdge R740 GPU platform options, the new PowerEdge R750xa server supports larger storage capacity, provides more flexible GPU offerings, and has improved thermal requirements.

Figure 1 PowerEdge R750xa server

The NVIDIA A100 GPUs are built on the NVIDIA Ampere architecture to enable double precision workloads. This blog evaluates the new PowerEdge R750xa server and compares its performance with the previous generation PowerEdge C4140 server.

The following table shows the specifications for the NVIDIA GPU that is discussed in this blog and compares the performance improvement from the previous generation.

Table 1 NVIDIA GPU specifications

| Specification (PCIe) | A100 | V100 | Improvement |
| --- | --- | --- | --- |
| GPU architecture | Ampere | Volta | - |
| GPU memory | 40 GB | 32 GB | 25% |
| GPU memory bandwidth | 1555 GB/s | 900 GB/s | 73% |
| Peak FP64 | 9.7 TFLOPS | 7 TFLOPS | 39% |
| Peak FP64 Tensor Core | 19.5 TFLOPS | N/A | - |
| Peak FP32 | 19.5 TFLOPS | 14 TFLOPS | 39% |
| Peak FP32 Tensor Core | 156 TFLOPS / 312 TFLOPS* | N/A | - |
| Peak Mixed Precision FP16 ops / FP32 Accumulate | 312 TFLOPS / 624 TFLOPS* | 125 TFLOPS | 5x |
| GPU base clock | 765 MHz | 1230 MHz | - |
| Peak INT8 | 624 TOPS / 1,248 TOPS* | N/A | - |
| GPU boost clock | 1410 MHz | 1380 MHz | 2.1% |
| NVLink speed | 600 GB/s | N/A | - |
| Maximum power consumption | 250 W | 250 W | No change |

\* With sparsity.

Test bed and applications

This blog quantifies the performance improvement of the GPUs with the new PowerEdge GPU platform.

We derived all results presented in this blog from a single-node PowerEdge R750xa server in the Dell HPC & AI Innovation Lab. This section describes the test bed and the applications that were evaluated as part of the study. The following table provides test environment details:

Table 2 Server configuration

| Component | Test Bed 1 | Test Bed 2 |
| --- | --- | --- |
| Server | Dell PowerEdge R750xa | Dell PowerEdge C4140 configuration M |
| Processor | Intel Xeon 8380 | Intel Xeon 6248 |
| Memory | 32 x 16 GB @ 3200 MT/s | 16 x 16 GB @ 2933 MT/s |
| Operating system | Red Hat Enterprise Linux 8.3 | Red Hat Enterprise Linux 8.3 |
| GPU | 4 x NVIDIA A100-PCIe-40 GB GPU | 4 x NVIDIA V100-PCIe-32 GB GPU |

The following table provides information about the applications and benchmarks used:

Table 3 Benchmark and application details

| Application | Domain | Version | Benchmark dataset |
| --- | --- | --- | --- |
| High-Performance Linpack | Floating point compute-intensive system benchmark | xhpl_cuda-11.0-dyn_mkl-static_ompi-4.0.4_gcc4.8.5_7-23-20 | Problem size is more than 95% of GPU memory |
| HPCG | Sparse matrix calculations | xhpcg-3.1_cuda_11_ompi-3.1 | 512 * 512 * 288 |
| GROMACS | Molecular dynamics application | 2020 | Ligno Cellulose, Water 1536, Water 3072 |
| LAMMPS | Molecular dynamics application | 29 October 2020 release | Lennard Jones |

LAMMPS

Large-Scale Atomic/Molecular Massively Parallel simulator (LAMMPS) is distributed by Sandia National Labs and the US Department of Energy. LAMMPS is open-source code that has different accelerated models for performance on CPUs and GPUs. For our test, we compiled the binary using the KOKKOS package, which runs efficiently on GPUs.

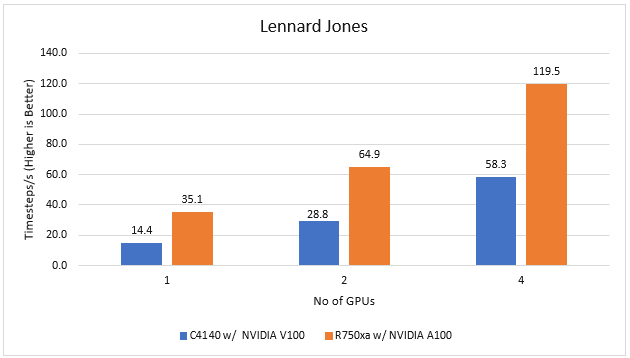

Figure 2 LAMMPS Performance on PowerEdge R750xa and PowerEdge C4140 servers

With the newer generation GPUs, single-GPU performance for this application improves by 2.4 times. The overall performance of a single server doubles with the PowerEdge R750xa server and NVIDIA A100 GPUs.

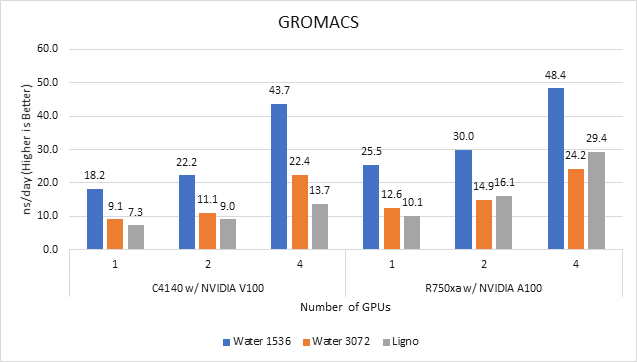

GROMACS

GROMACS is a free and open-source parallel molecular dynamics package designed for simulations of biochemical molecules such as proteins, lipids, and nucleic acids. It is used by a wide variety of researchers, particularly for biomolecular and chemistry simulations. GROMACS supports all the usual algorithms expected from modern molecular dynamics implementation. It is open-source software with the latest versions available under the GNU Lesser General Public License (LGPL).

Figure 3 GROMACS performance on PowerEdge C4140 and R750xa servers

With the newer generation GPUs, single-GPU performance for this application improved approximately 1.5 times across the datasets. The overall performance of a single server also improved 1.5 times with a PowerEdge R750xa server and NVIDIA A100 GPUs.

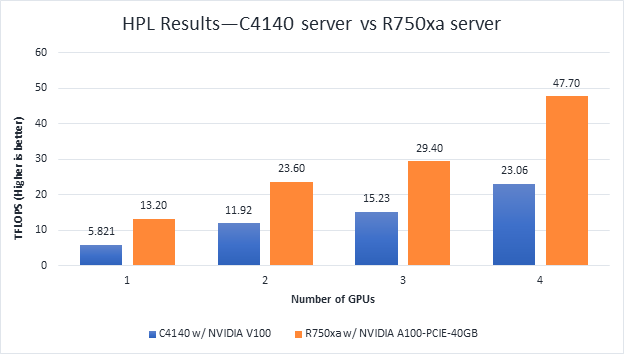

High-Performance Linpack

High-Performance Linpack (HPL) needs no introduction in the HPC arena. It is a widely used standard benchmark in the industry.

Figure 4 HPL Performance on the PowerEdge R750xa server with A100 GPU and PowerEdge C4140 server with V100 GPU

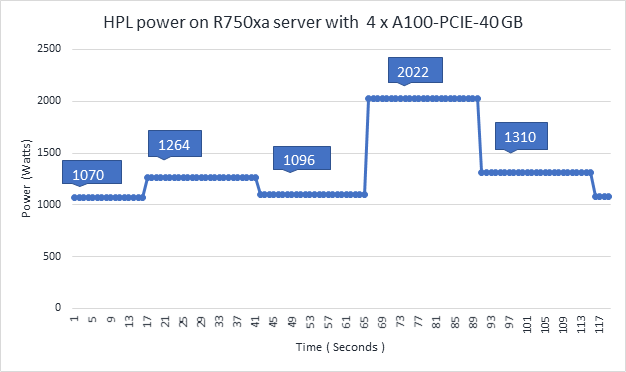

Figure 5 Power use of the HPL running on NVIDIA GPUs

From Figure 4 and Figure 5, the following results were observed:

- Performance: For the same GPU count, the NVIDIA A100 GPU demonstrates twice the performance of the NVIDIA V100 GPU. Larger memory, higher double precision FLOPS, and a newer architecture contribute to the improvement for the NVIDIA A100 GPU.

- Scalability: The PowerEdge R750xa server with four NVIDIA A100-PCIe-40 GB GPUs delivers 3.6 times higher HPL performance compared to one NVIDIA A100-PCIe-40 GB GPU. The NVIDIA A100 GPUs scale well inside the PowerEdge R750xa server for the HPL benchmark.

- Higher Rpeak: The HPL code on NVIDIA A100 GPUs uses the new double-precision Tensor Cores. The theoretical peak for each GPU is 19.5 TFLOPS, as opposed to 9.7 TFLOPS.

- Power: Figure 5 shows the power consumption of a complete HPL run with the PowerEdge R750xa server using four A100-PCIe GPUs. This result was measured with iDRAC commands, and the peak power consumption was observed as 2022 W. Based on our previous observations, we know that the PowerEdge C4140 server consumes approximately 1800 W of power.

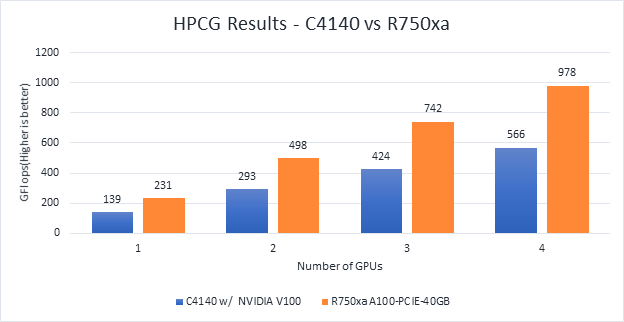

HPCG

Figure 6 Scaling GPU performance data for HPCG Benchmark

As discussed in other blogs, High Performance Conjugate Gradient (HPCG) is another standard benchmark that tests the data access patterns of sparse matrix calculations. From the graph, we see that HPCG scales well with GPU count and delivers a 1.6 times performance improvement over the previous generation PowerEdge C4140 server with NVIDIA V100 GPUs.

The 73 percent improvement in memory bandwidth of the NVIDIA A100 GPU over the NVIDIA V100 GPU contributes to the performance improvement.

Conclusion

In this blog, we introduced the latest generation PowerEdge R750xa platform and discussed the performance improvement over the previous generation PowerEdge C4140 server. The PowerEdge R750xa server is a good option for customers looking for an Intel Xeon scalable CPU-based platform powered with NVIDIA GPUs.

With the newer generation PowerEdge R750xa server and NVIDIA A100 GPUs, the applications discussed in this blog show significant performance improvement.

Next steps

In future blogs, we plan to evaluate NVLink bridge support, which is another important feature of the PowerEdge R750xa server and NVIDIA A100 GPUs.

HPC Application Performance on Dell PowerEdge R7525 Servers with NVIDIA A100 GPGPUs

Tue, 24 Nov 2020 17:49:03 -0000


Overview

The Dell PowerEdge R7525 server powered with 2nd Gen AMD EPYC processors was released as part of the Dell server portfolio. It is a 2U form factor rack-mountable server that is designed for HPC workloads. Dell Technologies recently added support for NVIDIA A100 GPGPUs to the PowerEdge R7525 server, which supports up to three PCIe-based dual-width NVIDIA GPGPUs. This blog describes the single-node performance of selected HPC applications with both one and two NVIDIA A100 PCIe GPGPUs.

The NVIDIA Ampere A100 accelerator is one of the most advanced accelerators available in the market, supporting two form factors:

- PCIe version

- Mezzanine SXM4 version

The PowerEdge R7525 server supports only the PCIe version of the NVIDIA A100 accelerator.

The following table compares the NVIDIA A100 GPGPU with the NVIDIA V100S GPGPU:

| GPGPU | NVIDIA A100 | NVIDIA A100 | NVIDIA V100S | NVIDIA V100S |
| --- | --- | --- | --- | --- |
| Form factor | SXM4 | PCIe Gen4 | SXM2 | PCIe Gen3 |
| GPU architecture | Ampere | Ampere | Volta | Volta |
| Memory size | 40 GB | 40 GB | 32 GB | 32 GB |
| CUDA cores | 6912 | 6912 | 5120 | 5120 |
| Base clock | 1095 MHz | 765 MHz | 1290 MHz | 1245 MHz |
| Boost clock | 1410 MHz | 1410 MHz | 1530 MHz | 1597 MHz |
| Memory clock | 1215 MHz | 1215 MHz | 877 MHz | 1107 MHz |
| MIG support | Yes | Yes | No | No |
| Peak memory bandwidth | Up to 1555 GB/s | Up to 1555 GB/s | Up to 900 GB/s | Up to 1134 GB/s |
| Total board power | 400 W | 250 W | 300 W | 250 W |

The NVIDIA A100 GPGPU brings innovations and features for HPC applications such as the following:

- Multi-Instance GPU (MIG)—The NVIDIA A100 GPGPU can be converted into as many as seven GPU instances, which are fully isolated at the hardware level, each using their own high-bandwidth memory and cores.

- HBM2—The NVIDIA A100 GPGPU comes with 40 GB of high-bandwidth memory (HBM2) and delivers bandwidth up to 1555 GB/s. Memory bandwidth with the NVIDIA A100 GPGPU is 1.7 times higher than with the previous generation of GPUs.

Server configuration

The following table shows the PowerEdge R7525 server configuration that we used for this blog:

Server | PowerEdge R7525 |

Processor | 2nd Gen AMD EPYC 7502, 32C, 2.5 GHz |

Memory | 512 GB (16 x 32 GB @3200MT/s) |

GPGPUs | Either of the following: 2 x NVIDIA A100 PCIe 40 GB 2 x NVIDIA V100S PCIe 32 GB |

Logical processors | Disabled |

Operating system | CentOS Linux release 8.1 (4.18.0-147.el8.x86_64) |

CUDA | 11.0 (Driver version - 450.51.05) |

gcc | 9.2.0 |

MPI | OpenMPI-3.0 |

HPL | hpl_cuda_11.0_ompi-4.0_ampere_volta_8-7-20 |

HPCG | xhpcg-3.1_cuda_11_ompi-3.1 |

GROMACS | v2020.4 |

Benchmark results

The following sections provide our benchmark results with observations.

High-Performance Linpack benchmark

High Performance Linpack (HPL) is a standard HPC system benchmark. This benchmark measures the compute power of the entire cluster or server. For this study, we used HPL compiled with NVIDIA libraries.

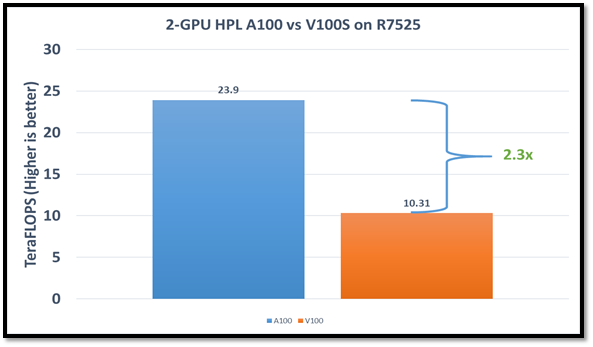

The following figure shows the HPL performance comparison for the PowerEdge R7525 server with either NVIDIA A100 or NVIDIA V100S GPGPUs:

Figure 1: HPL performance on the PowerEdge R7525 server with the NVIDIA A100 GPGPU compared to the NVIDIA V100S GPGPU

The problem size (N) is larger for the NVIDIA A100 GPGPU due to the larger capacity of GPU memory. We set the block size (NB) to 288 for the NVIDIA A100 GPGPU and to 384 for the NVIDIA V100S GPGPU.

The AMD EPYC processors provide options for multiple NUMA configurations. We found that four NUMA nodes per socket (NPS=4) gave the best performance; NPS=1 and NPS=2 lowered the performance by 10 percent and 5 percent, respectively. In a single PowerEdge R7525 node, the NVIDIA A100 GPGPU delivers 12 TFLOPS per card using this configuration without an NVLink bridge. The PowerEdge R7525 server with two NVIDIA A100 GPGPUs delivers 2.3 times higher HPL performance compared to the NVIDIA V100S GPGPU configuration. This performance improvement is credited to the new double-precision Tensor Cores that accelerate FP64 math.
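One way to see the role of the Tensor Cores is to compare the measured 12 TFLOPS per card against the two theoretical FP64 peaks of the A100. The sketch below does this; the peak values of 9.7 TFLOPS (standard FP64) and 19.5 TFLOPS (FP64 Tensor Core) are the published A100 figures, and the function name is ours.

```python
def hpl_efficiency(rmax_tflops, rpeak_tflops):
    """Ratio of measured HPL performance to a theoretical peak."""
    return rmax_tflops / rpeak_tflops

RMAX_PER_CARD = 12.0       # measured TFLOPS per A100, from the text above
FP64_PEAK = 9.7            # A100 standard FP64 peak, TFLOPS
FP64_TENSOR_PEAK = 19.5    # A100 FP64 Tensor Core peak, TFLOPS

vs_fp64 = hpl_efficiency(RMAX_PER_CARD, FP64_PEAK)
vs_tensor = hpl_efficiency(RMAX_PER_CARD, FP64_TENSOR_PEAK)

print(f"Versus standard FP64 peak: {vs_fp64:.0%}")
print(f"Versus FP64 Tensor Core peak: {vs_tensor:.0%}")
```

A ratio above 100 percent against the standard FP64 peak is only possible because the benchmark runs its matrix math on the FP64 Tensor Cores, which is why the higher figure is the appropriate Rpeak.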

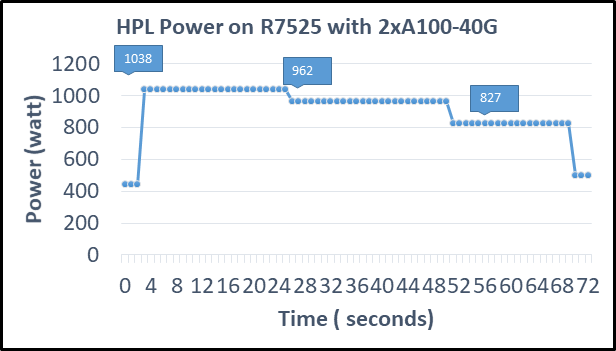

The following figure shows power consumption of the server while running HPL on the NVIDIA A100 GPGPU in a time series. Power consumption was measured with an iDRAC. The server reached 1038 Watts at peak due to a higher GFLOPS number.

Figure 2: Power consumption while running HPL

High Performance Conjugate Gradient benchmark

The High Performance Conjugate Gradient (HPCG) benchmark is based on a conjugate gradient solver, in which the preconditioner is a three-level hierarchical multigrid method using the Gauss-Seidel method.

As shown in the following figure, HPCG performs at a rate 70 percent higher with the NVIDIA A100 GPGPU due to higher memory bandwidth:

Figure 3: HPCG performance comparison

Because of the different memory sizes, the problem size used to obtain the best performance was 512 x 512 x 288 on the NVIDIA A100 GPGPU and 256 x 256 x 256 on the NVIDIA V100S GPGPU. For this blog, we used NUMA per socket (NPS)=4 and obtained results without an NVLink bridge. These results show that applications such as HPCG, which fit into GPU memory, can take full advantage of GPU memory and benefit from the higher memory bandwidth of the NVIDIA A100 GPGPU.
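To put the two problem sizes in perspective, the sketch below counts the grid points in each. It assumes the three dimensions are the grid that HPCG assigns to each GPU; the variable names are ours.

```python
from math import prod

a100_grid = (512, 512, 288)    # best-performing size on the A100
v100s_grid = (256, 256, 256)   # best-performing size on the V100S

a100_points = prod(a100_grid)
v100s_points = prod(v100s_grid)

print(f"A100:  {a100_points:,} grid points")
print(f"V100S: {v100s_points:,} grid points")
print(f"Ratio: {a100_points / v100s_points:.1f}x")
```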

GROMACS

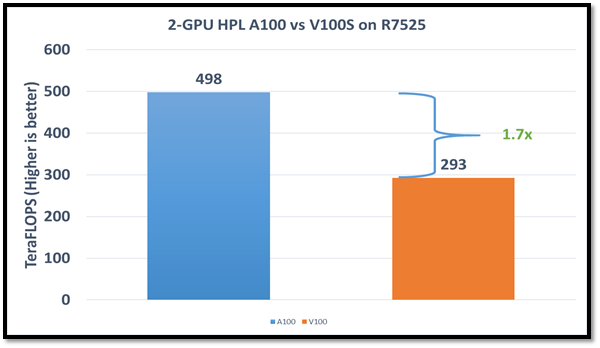

In addition to these two basic HPC benchmarks (HPL and HPCG), we also tested GROMACS, an HPC application. We compiled GROMACS 2020.4 with the CUDA compilers and Open MPI. The following figure shows the results:

Figure 4: GROMACS performance with NVIDIA GPGPUs on the PowerEdge R7525 server

The GROMACS build included thread MPI (built in with the GROMACS package). All performance numbers were captured from the output “ns/day.” We evaluated multiple MPI ranks, separate PME ranks, and different nstlist values to achieve the best performance. In addition, we used settings with the best environment variables for GROMACS at runtime. Choosing the right combination of variables avoided expensive data transfer and led to significantly better performance for these datasets.

GROMACS performance was based on a comparative analysis between NVIDIA V100S and NVIDIA A100 GPGPUs. Excerpts from our single-node multi-GPU analysis for two datasets showed a performance improvement of approximately 30 percent with the NVIDIA A100 GPGPU. This result is due to improved memory bandwidth of the NVIDIA A100 GPGPU. (For information about how the GROMACS code design enables lower memory transfer overhead, see Developer Blog: Creating Faster Molecular Dynamics Simulations with GROMACS 2020.)

Conclusion

The Dell PowerEdge R7525 server equipped with NVIDIA A100 GPGPUs shows exceptional performance improvements over servers equipped with previous versions of NVIDIA GPGPUs for applications such as HPL, HPCG, and GROMACS. Memory-bound applications such as HPCG and GROMACS in particular take advantage of the higher memory bandwidth available with NVIDIA A100 GPGPUs.