A Simple Poster at NVIDIA GTC – Running NVIDIA Riva on Red Hat OpenShift with Dell PowerFlex

Fri, 15 Mar 2024 21:45:09 -0000

A few months back, Dell and NVIDIA released a validated design for running NVIDIA Riva on Red Hat OpenShift with Dell PowerFlex. A simple poster—nothing more, nothing less—yet it can unlock much more for your organization. This design shows the power of NVIDIA Riva and Dell PowerFlex to handle audio processing workloads.

What’s more, it will be showcased as part of the poster gallery at NVIDIA GTC this week in San Jose, California. If you are at GTC, we strongly encourage you to join us during the Poster Reception from 4:00 to 6:00 PM. If you are unable to join us, you can view the poster online from the GTC website.

For those familiar with ASR, TTS, and NMT applications, you might be curious as to how we can synthesize these concepts into a simple poster. Read on to learn more.

NVIDIA Riva

For those not familiar with NVIDIA Riva, let’s start there.

NVIDIA Riva is an AI software development kit (SDK) for building conversational AI pipelines, enabling organizations to program AI into their speech and audio systems. It can be used as a smart assistant or even a note taker at your next meeting. Super cool, right?

Taking that up a notch, NVIDIA Riva lets you build fully customizable, real-time conversational AI pipelines, which is a fancy way of saying it allows you to process speech in a bunch of different ways including automatic speech recognition (ASR), text-to-speech (TTS), and neural machine translation (NMT) applications:

- Automatic speech recognition (ASR) – this is essentially dictation. Provide AI with a recording and get a transcript—a near perfect note keeper for your next meeting.

- Text-to-speech (TTS) – a computer reads what you type. In the past, this was often in a monotone voice. It’s been around for more than a couple of decades and has evolved rapidly with more fluid voices and emotion.

- Neural machine translation (NMT) – this is the translation of spoken language in near real-time to a different language. It is a fantastic tool for improving communication, which can go a long way in helping organizations extend business.

Each application is powerful in its own right, so think about what’s possible when we bring ASR, TTS, and NMT together, especially with an AI-backed system. Imagine a technical support system that could triage support calls, sound like an actual support engineer, and provide that support in multiple languages. In a word: ground-breaking.

NVIDIA Riva allows organizations to become more efficient in handling speech-based communications. When organizations become more efficient in one area, they can improve in other areas. This is why NVIDIA Riva is part of the NVIDIA AI Enterprise software platform, focusing on streamlining the development and deployment of production AI.

I make it all sound simple; however, those creating large language models (LLMs) around multilingual speech and translation software know it’s not so. That’s why NVIDIA developed the Riva SDK.

The operating platform also plays a massive role in what can be done with workloads. Red Hat OpenShift enables AI speech recognition and inference with its robust container orchestration, microservices architecture, and strong security features. This allows workloads to scale to meet the needs of an organization. As the success of a project grows, so too must the project.

Why Is Storage Important?

You might be wondering how storage fits into all of this. That’s a great question. NVIDIA Riva needs high-performance storage. After all, it’s designed to process and generate audio files, and doing that in near real time requires a highly performant, enterprise-grade storage system like Dell PowerFlex.

Additionally, AI workloads are becoming mainstream applications in the data center and should be able to run side by side with other mission-critical workloads utilizing the same storage. I wrote about this in my Dell PowerFlex – For Business-Critical Workloads and AI blog.

At this point you might be curious how well NVIDIA Riva runs on Dell PowerFlex. That is what a majority of the poster is about.

ASR and TTS Performance

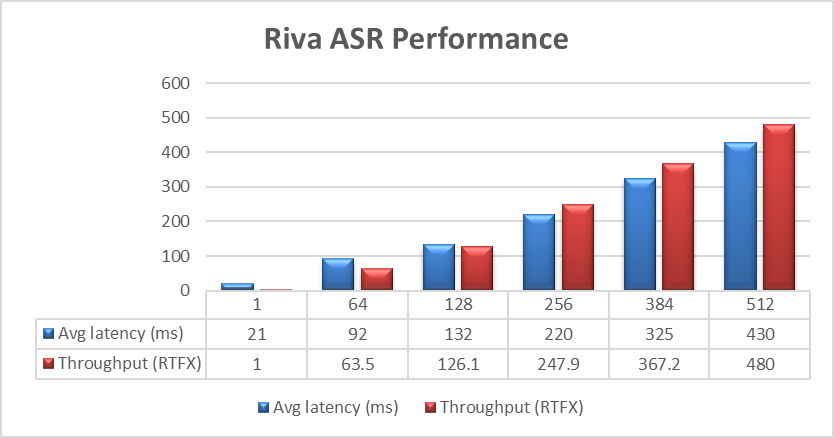

The Dell PowerFlex Solutions Engineering team did extensive testing using the LibriSpeech dev-clean dataset available from Open SLR. With this dataset, they performed automatic speech recognition (ASR) testing using NVIDIA Riva. Across the tests, the number of streams was increased from 1 to 64, 128, 256, 384, and finally 512, as shown in the following graph.

Figure 1. NVIDIA Riva ASR Performance

The objective of these tests is to have the lowest latency with the highest throughput. Throughput is measured in RTFX, which is the duration of audio transcribed divided by computation time. During these tests, the GPU utilization was approximately 48% without any PowerFlex storage bottlenecks. These results are comparable to NVIDIA’s own findings in the NVIDIA Riva User Guide.
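To make the RTFX definition concrete, here is a minimal Python sketch of the calculation. The function name and the sample numbers are illustrative assumptions, not values taken from the validated design.

```python
def rtfx(audio_seconds: float, compute_seconds: float) -> float:
    """Return RTFX: seconds of audio processed per second of computation."""
    if compute_seconds <= 0:
        raise ValueError("compute_seconds must be positive")
    return audio_seconds / compute_seconds


# Hypothetical run: 64 streams, each carrying 30 s of audio,
# all transcribed in 12 s of computation time.
total_audio_seconds = 64 * 30.0
print(rtfx(total_audio_seconds, 12.0))  # 160.0
```

A higher RTFX means more audio is handled per second of compute, which is why the tests look for high RTFX together with low latency.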

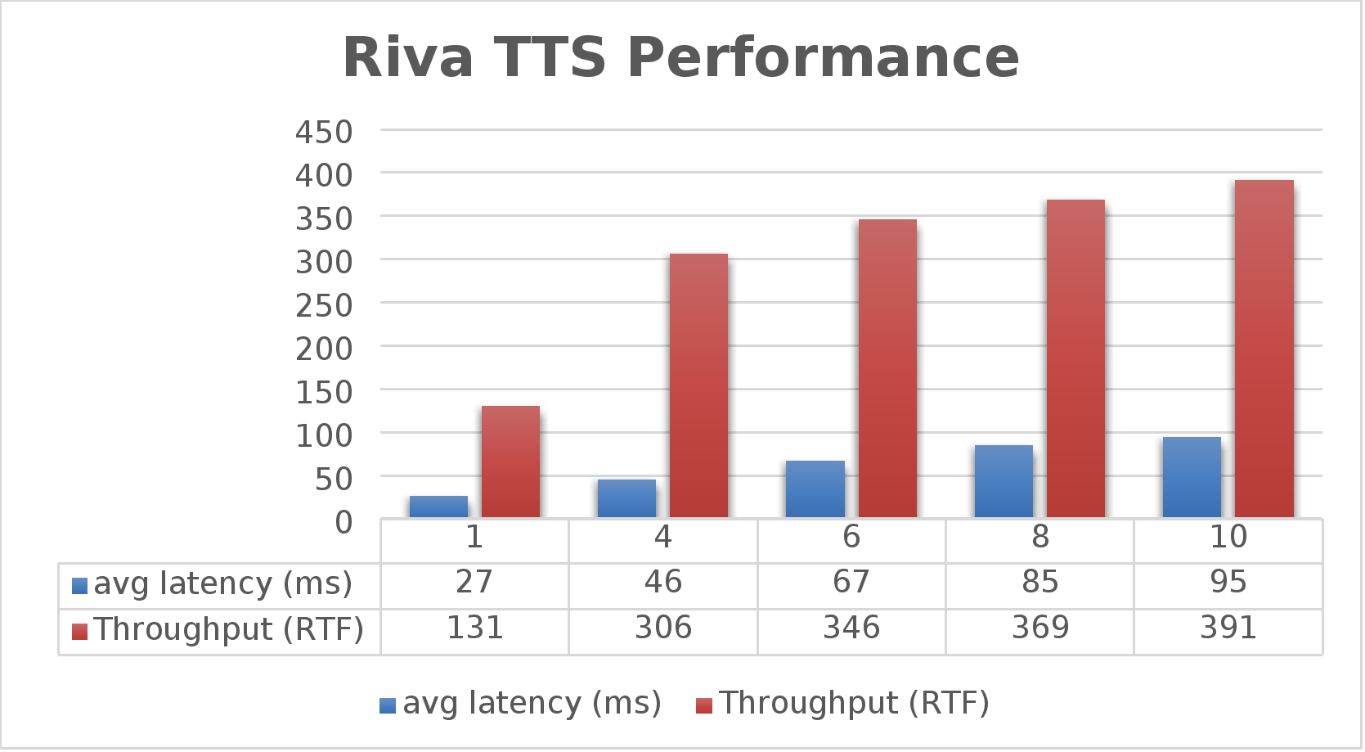

The Dell PowerFlex Solutions Engineering team went beyond just looking at how fast NVIDIA Riva could transcribe text, also exploring the speed at which it could convert text to speech (TTS). They validated this as well. Starting with a single stream, the number of streams was raised on successive runs to 4, 6, 8, and 10, as shown in the following graph.

Figure 2. NVIDIA Riva TTS Performance

Again, the goal is to have a low average latency with a high throughput. The throughput (RTFX) in this case is the duration of audio generated divided by computation time. As we can see, this results in an RTFX throughput of 391 with a latency of 91 ms at ten streams. It is also worth noting that during testing, GPU utilization was approximately 82% with no storage bottlenecks.

This is a lot of data to pack into one poster. Luckily, the Dell PowerFlex Solutions Engineering team created a validated architecture that details how all of these results were achieved and how an organization could replicate them if needed.

Now, to put all this into perspective, with PowerFlex you can achieve great results on both spoken language coming into your organization and converting text to speech. Pair this capability with some other generative AI (genAI) tools, like NVIDIA NeMo, and you can create some ingenious systems for your organization.

For example, if an ASR model is paired with a large language model (LLM) for a help desk, users could ask it questions verbally, and—once it found the answers—it could use TTS to provide them with support. Think of what that could mean for organizations.

It's amazing how a simple poster can hold so much information and so many possibilities. If you’re interested in learning more about the research Dell PowerFlex has done with NVIDIA Riva, visit the Poster Reception at NVIDIA GTC on Monday, March 18th from 4:00 to 6:00 PM. If you are unable to join us at the poster reception, the poster will be on display throughout NVIDIA GTC. If you are unable to attend GTC, check out the white paper, and reach out to your Dell representative for more information.

Authors: Tony Foster | Twitter: @wonder_nerd | LinkedIn

Praphul Krottapalli

Kailas Goliwadekar

Related Blog Posts

NVIDIA AI Enterprise on Red Hat OpenShift

Wed, 15 Nov 2023 14:20:48 -0000

Red Hat OpenShift Container Platform is an enterprise-grade Kubernetes platform for deploying and managing secure and hardened Kubernetes clusters at scale. This Kubernetes distribution enables users to easily configure and use GPU resources to accelerate deep learning (DL) and machine learning (ML) workloads.

The NVIDIA H100 Tensor Core GPU, an integral part of the NVIDIA data center platform, is a high-performance GPU that is designed and optimized for AI workloads that are intended for data center and cloud-based applications. The GPU features major advances to accelerate AI, HPC, memory bandwidth, interconnect, and communication at data center scale. For more information, see NVIDIA H100 Tensor Core GPU.

NVIDIA AI Enterprise

NVIDIA AI Enterprise is an end-to-end, secure, cloud-native suite of AI software that enables organizations to solve new challenges while increasing operational efficiency. NVIDIA AI Enterprise accelerates the data science pipeline and streamlines development and deployment of production AI, including generative AI, computer vision, speech AI, and more. For more information, see NVIDIA AI Enterprise.

NVIDIA NGC catalog

The NVIDIA NGC catalog is a curated set of GPU-optimized software for AI, HPC, and visualization. The NGC catalog simplifies building, customizing, and integrating GPU-optimized software into workflows on a variety of platforms, accelerating the time to solutions for users. The catalog includes containers, pre-trained models, Helm charts for Kubernetes deployments, and industry-specific AI toolkits. These toolkits consist of software development kits (SDKs) for NVIDIA AI Enterprise that can be deployed on OpenShift Container Platform.

Prerequisites for installing NVIDIA AI Enterprise on OpenShift Container Platform

- An OpenShift cluster with a minimum of three nodes, at least one of which has an NVIDIA-supported GPU. For the list of supported GPUs, see the NVIDIA Product Support Matrix.

- A service instance for licenses. This blog briefly describes how to deploy a containerized DLS instance on OpenShift Container Platform that serves licenses to the clients.

NVIDIA license system

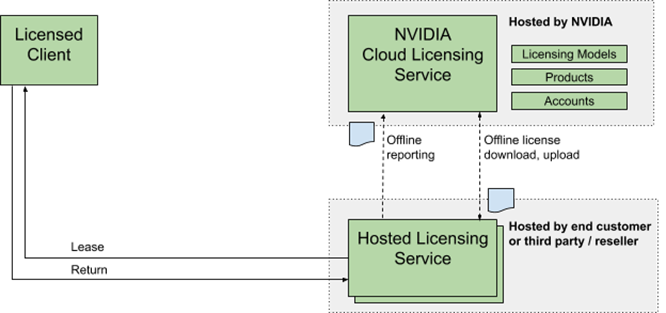

The NVIDIA license system is used to provide software licenses to licensed NVIDIA software products. The licenses are available from the NVIDIA Licensing Portal (access requires NVIDIA login credentials). The NVIDIA license system supports the following types of service instances: a Cloud License Service (CLS) instance that is hosted on the NVIDIA Licensing Portal, and a Delegated License Service (DLS) instance that is hosted on-premises at a location that is accessible from your private network, such as inside your data center.

A DLS instance is fully disconnected from the NVIDIA Licensing Portal. Licenses are downloaded from the portal and uploaded manually to the instance. The following figure depicts the flow:

Figure 1. NVIDIA DLS instance license system workflow

The following DLS software image types are available:

- A virtual appliance image to be installed in a virtual machine on a supported hypervisor.

- A containerized software image for bare-metal deployment on a supported container orchestration platform.

Setting up a DLS instance

1. Download the latest "NLS License Server (DLS) 2.1 for Container Platforms" software from the NVIDIA Licensing Portal.

2. To import DLS appliance and PostgreSQL, run the following commands:

podman load --input dls_appliance_2.1.0.tar.gz

podman load --input dls_pgsql_2.1.0.tar.gz

3. Upload the DLS appliance artifact and the PostgreSQL database artifact images to a private repository.

4. Edit the deployment files for the DLS appliance artifact and the PostgreSQL database artifact so that they pull these artifacts from the private repository.

You must provide an IP address for DLS_PUBLIC_IP. Optionally, you can edit the DLS default ports in the nls-si-0-deployment.yaml and nls-si-0-service.yaml deployment files. If a registry secret is required to pull the images from the private repository, edit the deployment files for the DLS appliance and the PostgreSQL database to reference the secret.

5. Create a Postgres instance by running the following command:

oc create -f directory/postgres-nls-si-0-deployment.yaml

6. Fetch the IP address of the Postgres pod that you created in the previous step, set the DLS_DB_HOST environment variable in the nls-si-0-deployment.yaml file to that IP address, and then create the DLS appliance deployment by running the following command:

oc create -f directory/nls-si-0-deployment.yaml

7. Access the DLS instance at https://<worker-node-ip>:30001. Register the default admin user dls_admin with a new password during the first login.

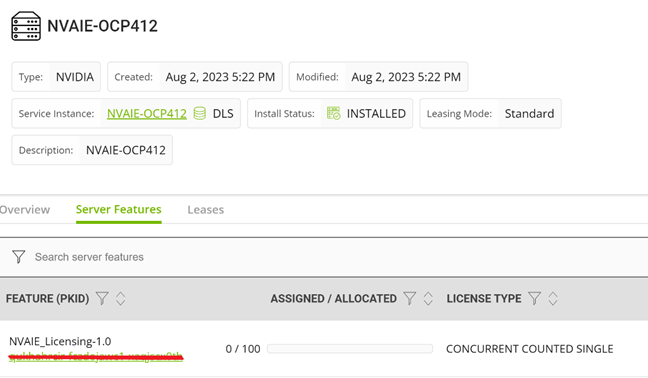

8. Create a license server on the NVIDIA Licensing Portal, and then add the licenses for the products that you want to allot to this license server.

Figure 2. Adding a license to the DLS instance

9. Register the on-premises DLS instance by uploading the DLS token file dls_instance_token_mm-dd-yyyy-hh-mm-ss.tok to the NVIDIA Licensing Portal. Bind the license server that you created in the preceding step to the registered service instance.

10. Download the license file license_mm-dd-yyyy-hh-mm-ss.bin from the license server on the portal and upload it to your on-premises DLS instance. The licenses on the server are made available to the DLS instance.

11. Generate the client configuration token file from the DLS instance. The client configuration token contains information about the service instance, license servers, and fulfillment conditions to be used to serve a license in response to a client request.

12. Copy the client configuration token to clients so that the service instance has the necessary information to serve licenses to clients.
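Step 6 above asks you to set DLS_DB_HOST in the DLS deployment file by hand. As a rough illustration of that edit, the following Python sketch sets an environment variable on every container of a Deployment manifest held as a dictionary. The trimmed manifest, the container name, and the IP address are hypothetical stand-ins; the real nls-si-0-deployment.yaml shipped by NVIDIA contains more fields and would first need to be loaded with a YAML parser.

```python
import copy
import json


def set_env(deployment: dict, name: str, value: str) -> dict:
    """Return a copy of a Deployment manifest with env var `name` set on every container."""
    patched = copy.deepcopy(deployment)
    for container in patched["spec"]["template"]["spec"]["containers"]:
        env = container.setdefault("env", [])
        for entry in env:
            if entry["name"] == name:
                entry["value"] = value  # overwrite an existing entry
                break
        else:
            env.append({"name": name, "value": value})  # or add a new one
    return patched


# Hypothetical, heavily trimmed stand-in for nls-si-0-deployment.yaml.
deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "nls-si-0"},
    "spec": {
        "template": {
            "spec": {
                "containers": [
                    {"name": "dls-appliance", "env": [{"name": "DLS_DB_HOST", "value": ""}]}
                ]
            }
        }
    },
}

postgres_pod_ip = "10.128.2.15"  # placeholder; use the IP of your Postgres pod
patched = set_env(deployment, "DLS_DB_HOST", postgres_pod_ip)
print(json.dumps(patched, indent=2))
```

The helper returns a patched copy rather than editing in place, so the original manifest stays available for comparison before you create the deployment.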

Installing NVIDIA AI Enterprise on OpenShift

1. Install the Node Feature Discovery (NFD) operator.

Install the NFD operator from the embedded Red Hat OperatorHub. After the operator is installed, create an NFD API so that the NFD operator can label the cluster nodes that have GPUs.

2. Install the NVIDIA GPU operator.

Install the NVIDIA GPU operator from the embedded Red Hat OperatorHub. The GPU operator enables Kubernetes cluster engineers to manage GPU nodes just like CPU nodes in the cluster. The operator installs and manages the life cycle of software components so that GPU-accelerated applications can be run on Kubernetes. This operator is installed in the nvidia-gpu-operator namespace by default.

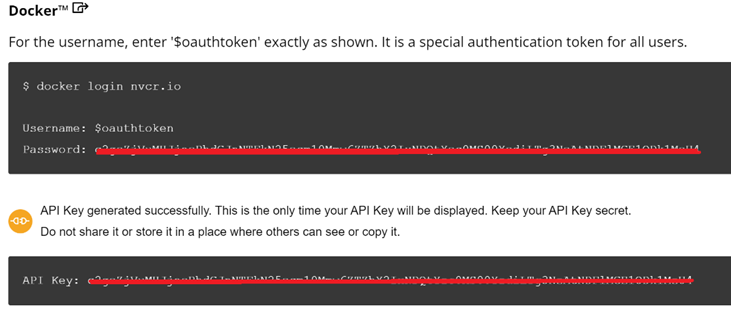

3. Create an NGC secret.

Create an image pull secret object in the nvidia-gpu-operator namespace. This object is for storing the NGC API key to authenticate your access to the NGC container registry. Generate the API key from the NGC catalog.

Use the following credentials for the NGC secret:

- Authentication type: Image registry credentials, with the registry server address nvcr.io/nvaie

- Username: $oauthtoken

- Password: the generated API key.

Figure 3. NGC secret

4. Create a ConfigMap with configuration data.

Create a configmap in the nvidia-gpu-operator namespace with the client configuration token as data.

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: licensing-config
    data:
      client_configuration_token.tok: >-
        eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9.eyJqdGkiOiIwY2QxZ<...>
      gridd.conf: '# empty file'

5. Create a Cluster Policy Custom Resource instance.

When you install the NVIDIA GPU operator in OpenShift Container Platform, a custom resource definition for a cluster policy is created. The policy configures the GPU stack that will be deployed, configuring the image names and repository, pod restrictions or credentials, and so on. When creating the cluster policy from the OpenShift web console, make the following customizations:

1. In the NVIDIA GPU/vGPU driver configuration section, enter the name of the configmap that you created with the client configuration token, and enable NLS.

2. Enable the deployment of the NVIDIA driver through the operator. The image repository is nvcr.io/nvaie.

3. Enter the NGC secret name in the driver configuration.

4. Specify the image name and NVIDIA vGPU driver version in the NVIDIA GPU/vGPU driver configuration section. Get this information from the NGC catalog, as shown in the following figure:

Figure 4. Configmap with Client configuration token

For a cluster on OpenShift Container Platform version 4.12, the NVIDIA GPU driver image is vgpu-guest-driver-3-1 and the version is 525.105.17. The GPU operator installs all the components that are required to set up the NVIDIA GPUs in the OpenShift cluster.
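For readers who prefer to script steps 3 through 5 rather than use the web console, the following Python sketch builds the three pieces as plain dictionaries and prints them as JSON, which Kubernetes accepts in place of YAML. Several things here are assumptions: the secret name ngc-secret is a hypothetical choice, the API key and token are placeholders, and the driver field names (repository, image, version, imagePullSecrets, licensingConfig) reflect our understanding of the GPU operator's ClusterPolicy resource and should be checked against the CRD installed in your cluster.

```python
import base64
import json

NAMESPACE = "nvidia-gpu-operator"  # default namespace named in step 2
REGISTRY = "nvcr.io/nvaie"         # registry server address from step 3


def ngc_pull_secret(api_key: str, name: str = "ngc-secret") -> dict:
    """Image pull secret for the NGC registry; the secret name is hypothetical."""
    auth = base64.b64encode(f"$oauthtoken:{api_key}".encode()).decode()
    docker_config = {
        "auths": {REGISTRY: {"username": "$oauthtoken", "password": api_key, "auth": auth}}
    }
    encoded = base64.b64encode(json.dumps(docker_config).encode()).decode()
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "type": "kubernetes.io/dockerconfigjson",
        "metadata": {"name": name, "namespace": NAMESPACE},
        "data": {".dockerconfigjson": encoded},
    }


def licensing_configmap(token: str, name: str = "licensing-config") -> dict:
    """ConfigMap carrying the client configuration token, as in step 4."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name, "namespace": NAMESPACE},
        "data": {"client_configuration_token.tok": token, "gridd.conf": "# empty file"},
    }


def driver_settings(secret_name: str, configmap_name: str) -> dict:
    """Driver portion of the cluster policy; field names are assumptions to verify."""
    return {
        "enabled": True,
        "repository": REGISTRY,
        "image": "vgpu-guest-driver-3-1",  # image quoted for OpenShift 4.12
        "version": "525.105.17",           # version quoted for OpenShift 4.12
        "imagePullSecrets": [secret_name],
        "licensingConfig": {"configMapName": configmap_name, "nlsEnabled": True},
    }


secret = ngc_pull_secret("<your-ngc-api-key>")
configmap = licensing_configmap("<client-configuration-token>")
driver = driver_settings(secret["metadata"]["name"], configmap["metadata"]["name"])
for obj in (secret, configmap, {"spec": {"driver": driver}}):
    print(json.dumps(obj, indent=2))
```

Keeping the secret and configmap names in one place avoids the most common mistake in this step, which is a cluster policy that refers to a name that does not exist in the namespace.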

Validation

Environment overview: The Dell OpenShift validation team used Dell PowerEdge servers hosting Red Hat OpenShift Container Platform 4.12 to validate NVIDIA AI Enterprise on OpenShift. The validated environment consisted of three compute nodes hosted on PowerEdge R760, R750, and R7525 servers, equipped with NVIDIA H100, A40, and A100 GPUs, respectively. For more information about deploying an OpenShift cluster on Dell-powered bare metal servers, see the Red Hat OpenShift Container Platform 4.12 on Dell Infrastructure Implementation Guide.

A containerized DLS instance is present on the same OpenShift cluster with all the required licenses.

The team created a TensorFlow pod using the "tensorflow-3-1" image from the nvcr.io/nvaie repository with the following pod manifest:

    apiVersion: v1
    kind: Pod
    metadata:
      name: gpu
    spec:
      nodeSelector:
        nvidia.com/gpu.product: NVIDIA-H100-PCIe
      containers:
      - image: nvcr.io/nvaie/tensorflow-3-1:23.03-tf1-nvaie-3.1-py3
        name: tensorflow
        command: ["/bin/sh","-c"]
        resources:
          limits:
            nvidia.com/gpu: 1
          requests:
            nvidia.com/gpu: 1
      restartPolicy: Never

The team then ran the ResNet-50 convolutional neural network with FP16 and FP32 precision from inside the TensorFlow pod, and both runs completed successfully.

To run the test, the team used the following commands:

cd /workspace/nvidia-examples/cnn

python resnet.py --layers 50 -b 64 -i 200 -u batch --precision fp16

python resnet.py --layers 50 -b 64 -i 300 -u batch --precision fp32

References

Red Hat OpenShift Container Platform 4.12 on Dell Infrastructure Implementation Guide

OpenShift on Bare Metal Deployment Guide

NVIDIA License System v3.2.0

NVIDIA User Guide

NVIDIA AI Enterprise with OpenShift

VMware Explore, PowerFlex, and Silos of Glitter: this blog has it all!

Fri, 18 Aug 2023 19:30:20 -0000

Those who know me are aware that I’ve been a big proponent of one platform that must be able to support multiple workloads—and Dell PowerFlex can. If you are at VMware Explore you can see a live demo of both traditional database workloads and AI workloads running on the same four PowerFlex nodes.

When virtualization took the enterprise by storm, a war was started against silos. First came servers, and the idea that we could consolidate them on a few large hosts with virtualization. This then rapidly moved to storage and continued to blast through every part of the data center. Yet today we still have silos. Mainly in the form of workloads, these hide in plain sight, disguised with other names like “departmental,” “project,” or “application group.”

Some of these workload silos are becoming even more stealthy and operate under the guise of needing “different” hardware or performance, so IT administrators allow them to operate in a separate silo.

That is wasteful! It wastes company resources, it wastes the opportunity to do more, and it wastes your time managing multiple environments. It has become even more of an issue with the rise of Machine Learning (ML) and AI workloads.

If you are at VMware Explore this year you can see how to break down these silos with Dell PowerFlex at the Kioxia booth (Booth 309). Experience the power of running ResNet-50 image classification and OLTP (Online Transactional Processing) workloads simultaneously, live from the show floor. And if that’s not enough, there are experts, and lots of them! You might even get the chance to visit with the WonderNerd.

This might not seem like a big deal, right? You just need a few specialty systems, some storage, and a bit of IT glitter… some of the systems run the databases, some run the ML workloads. Sprinkle some of that IT glitter and poof you’ve got your workloads running together. Well sort of. They’re in the same rack at least.

Remember: silos are bad. Instead, let’s put some PowerFlex in there! And put that glitter back in your pocket; this is a data center, not a five-year-old’s birthday party.

PowerFlex supports NVIDIA GPUs with MIG technology, which is part of NVIDIA AI Enterprise, so we can customize our GPU resources for the workloads that need them. (Yes, there is nothing that says you can’t run different workloads on the same hosts.) Plus, PowerFlex uses Kioxia PM7 series SSDs, so there are plenty of IOPS to go around while ensuring sub-millisecond latency for both workloads. This allows the data to be closer to the processing, maybe even on the same host.

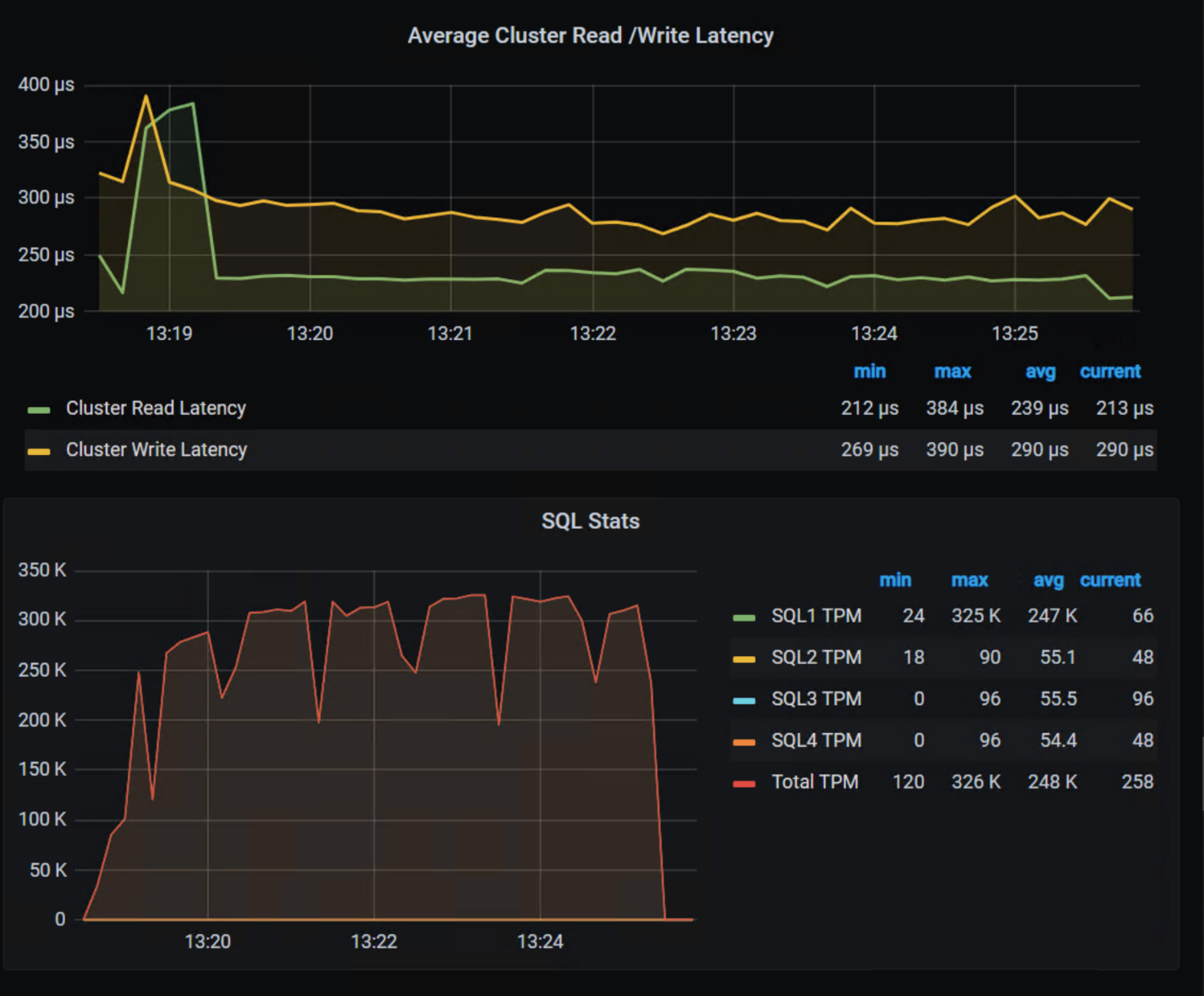

In our lab tests, we could push one million transactions per minute (TPM) with OLTP workloads while also processing 6620 images per second using a ResNet-50 model built on NVIDIA NGC containers. These numbers are important if you want to keep customers happy, especially as more and more organizations add AI/ML capabilities to their online apps, and more and more data is generated from all those new apps.

Here are the TPM results from the demo environment that is running our four SQL VMs. The TPMs in this test are maxing out around 320k and the latency is always sub-millisecond. This is the stuff you want to show off, not that pocket full of glitter.

Yeah, you can silo your environments and hide them with terms like “project” and “application group,” but everyone will still know they are silos.

We all started battling silos at the dawn of virtualization. PowerFlex with Kioxia drives and NVIDIA GPUs gives administrators a fighting chance to win the silo war.

You can visit the NVIDIA team at Lounge L3 on the show floor during VMware Explore. And of course, you have to stop by the Kioxia booth (309) to see what PowerFlex can do for your IT battles. We’ll see you there!

Author: Tony Foster

Location: The Land of Oz [-6 GMT]

Contributors: Kailas Goliwadekar, Anup Bharti