Blog

Blogs for Dell Technologies Computer Vision solutions.

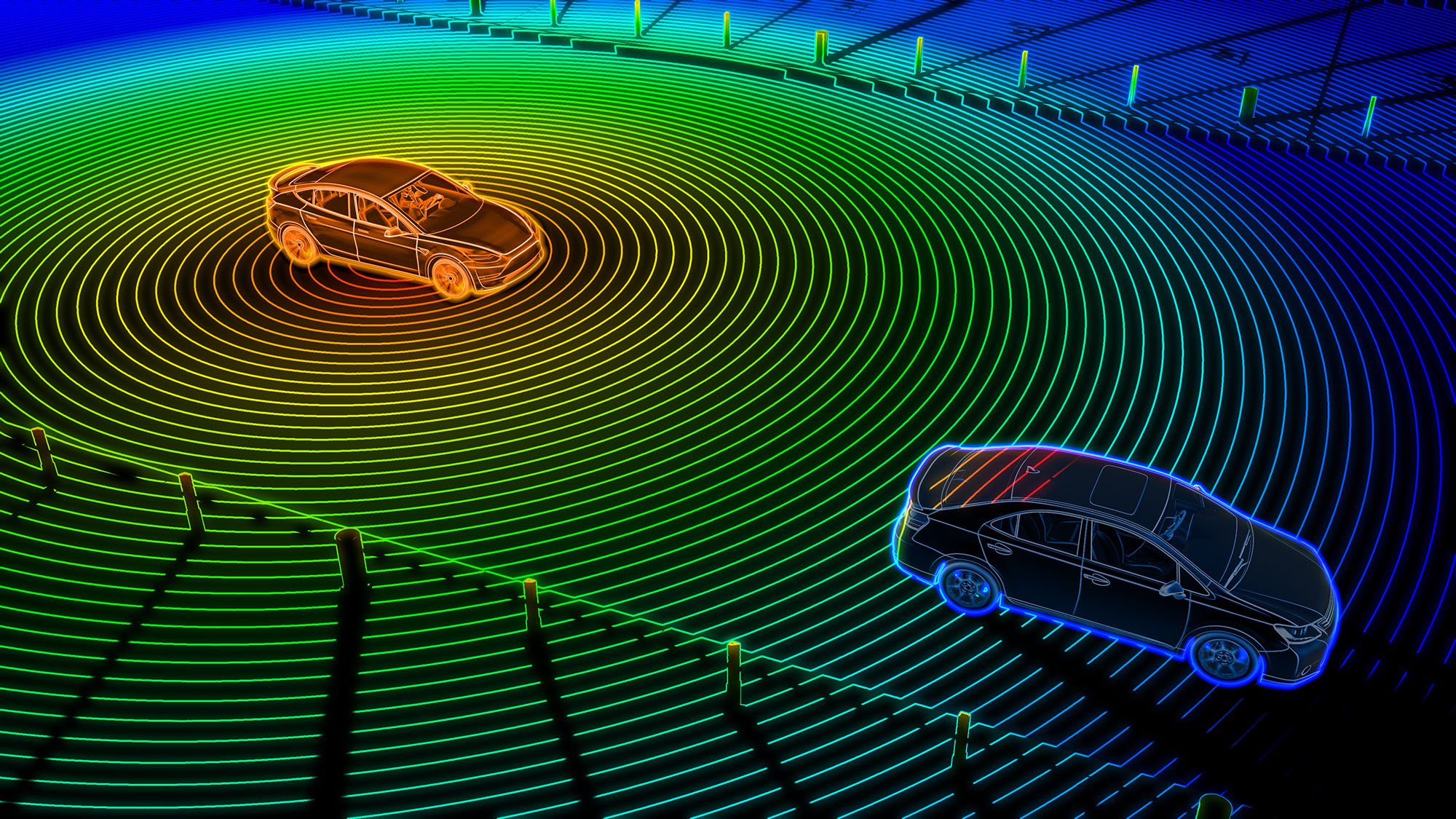

Synthetic Data Generation with Dell PowerEdge R760xa and NVIDIA Omniverse Platform

Tue, 14 May 2024 15:08:48 -0000

Developing Digital Twin 3D Models on Dell PowerEdge R760xa with NVIDIA Omniverse virtualized platform

Tue, 14 May 2024 15:16:38 -0000

Dell Integrated System for Microsoft Azure Stack HCI with Storage Spaces Direct

Fri, 01 Mar 2024 22:18:07 -0000

Deploy Virtualized NVIDIA Omniverse Environment with Dell PowerEdge R760xa and NVIDIA L40 GPUs

Tue, 14 May 2024 15:08:48 -0000

Optimizing Computer Vision Workloads: A Guide to Selecting NVIDIA GPUs

Fri, 27 Oct 2023 15:31:21 -0000

Who’s watching your IP cameras?

Thu, 20 Jul 2023 18:05:50 -0000

Insights into selecting a self-service UI framework for Ansible Automation

Wed, 03 Aug 2022 01:09:40 -0000

Automating the Installation of AWX Using Minikube and Ansible

Tue, 02 Aug 2022 19:38:15 -0000
