Mastering PowerStore

Fri, 03 Feb 2023 23:22:59 -0000


Introduction

Do you want to learn more about PowerStore but aren't sure where to start? Look no further! In this blog, we describe the top five PowerStore resources to get you started. These resources include:

- the PowerStore YouTube playlist

- the PowerStore Data Sheet, and

- the Introduction to PowerStore white paper

We look at the advantages of each resource, with an emphasis on its use cases and the value it provides to you.

PowerStore YouTube playlist

The journey towards PowerStore mastery begins at the PowerStore YouTube playlist. The playlist consists of 25 videos and provides an assortment of introduction videos, overviews, demos, lightboard sessions, and discussions of particular features of PowerStore.

To get acquainted with PowerStore at a high level, the introduction videos provide a broad survey of all things PowerStore, including key features, hardware components, architecture, services, performance, and the competitive advantages of PowerStore. These videos leave you wanting to hear more about the technologies and services that PowerStore has to offer.

To understand PowerStore features and services in more detail, the playlist contains another set of overview videos that go over key features and services, such as cybersecurity, hardware, migration, and cloud storage services. These videos also include a comprehensive demo that introduces the design of the PowerStore UI.

The videos also include lightboard sessions that have an instructional feel to them. These short-form lightboard sessions go over always-on data reduction, scale up and scale out, machine learning and AI, and PowerStore’s modern software architecture. They do a great job of communicating the technologies that PowerStore has to offer.

Finally, this playlist features discussion videos that further elaborate on VMware Virtual Volumes, AppsON, Anytime Upgrade, and intelligent automation. These take a more personal approach: the audience sees a back-and-forth discussion that raises, and answers, many of the questions viewers may be asking themselves.

PowerStore datasheet

The next resource for mastering PowerStore is the PowerStore Datasheet.

The PowerStore datasheet consolidates PowerStore into four pages and revolves around three key ideas. PowerStore is:

- adaptable - PowerStore can support any workload, is built for performance, scales up and scales out, and protects critical data.

- intelligent - PowerStore provides self-optimization, proactive health analytics, and programmable infrastructure.

- continuously modern - PowerStore offers an all-inclusive subscription, non-disruptive hardware updates, and an anytime upgrade advantage.

The datasheet also describes the ways you can migrate to PowerStore, and the services that Dell Technologies offers to make the transition to PowerStore as seamless as possible.

A PowerStore white paper: Introduction to the Platform

Building off of the PowerStore datasheet is the white paper Introduction to the Platform.

As the abstract states, this white paper details the value proposition, architecture, and various deployment considerations of the available PowerStore appliances. Where the previous resources discussed (videos and datasheet) provide immediate overviews and discussions to help you grasp PowerStore in a more consolidated way, the Introduction to the Platform white paper takes a deep dive into the details of PowerStore and is intended for IT administrators, storage architects, partners, Dell Technologies employees, and people who are considering PowerStore.

The white paper follows through on your PowerStore education with in-depth displays of the PowerStore value proposition, architecture, and hardware. The hardware section includes information about modules, power supplies, drives, expansion enclosures, and much more. It also includes a glossary of terms and definitions to help you further your understanding of PowerStore and storage as a whole.

Another helpful resource that complements the white paper is the PowerStore Installation and Service guide, available here. This document includes all customer-facing hardware procedures, is far more technical, and conveys the level of detail needed to further your knowledge of PowerStore.

PowerStore: Info Hub - Product Documentation & Videos

Hands-on Labs

Sometimes learning is done by doing. This resource does just that! After a proper introduction including videos, datasheet, and in-depth insights, this resource calls you to action. For PowerStore T, the Administration and Management Hands-on Lab and the Data Protection Hands-on Lab walk you through various aspects of PowerStore, and allow you to experience PowerStore’s UX/UI design and its capabilities firsthand.

The Administration and Management Hands-on Lab covers three modules:

- PowerStore Management - As the module name states, this module walks you through a wide array of management actions and functions you can perform. It lets you create clusters and manage devices in scale-out configurations.

- PowerStore Provisioning - This module guides you through step-by-step instructions on how to use the PowerStore Manager UI to provision volumes. PowerStore Manager’s intuitive and easy to use UI design is one of the differentiators that is highlighted in this module.

- PowerStore Monitoring - Finally, the third module walks you through PowerStore monitoring capabilities that include health analytics and warning messages that are delivered to the user.

In the Data Protection Hands-on Lab for PowerStore T, you can walk through various data protection features. There are four modules in this lab:

- PowerStore Protection Policies - allows you to work with PowerStore protection policies, which include scheduled snapshots and replication

- PowerStore Thin Clones - lets you configure and work with thin clones

- PowerStore Import - lets you configure an import session to PowerStore from another Dell system

- PowerStore Migration - shows how to initiate an internal migration between PowerStore systems in the same cluster

Administration and Management Hands-on Lab

The Dell Technologies Info Hub

The final resource to round out mastering PowerStore is this site itself! The Dell Info Hub contains a wide assortment of blogs regarding particular features, attributes, and releases. From this page, you can find white papers, blogs, and hands-on labs on a variety of PowerStore topics. Whether it's block storage, file storage, or updates, the Dell Info Hub provides all sorts of educational materials on all things PowerStore.

https://infohub.delltechnologies.com/t/storage/

Author: Hector Reyes, Analyst, Product Management

Related Blog Posts

Q1 2024 Update for Ansible Integrations with Dell Infrastructure

Tue, 02 Apr 2024 14:45:56 -0000


In this blog post, I am going to cover the new Ansible functionality for the Dell infrastructure portfolio that we released over the past two quarters. Ansible collections are now on a monthly release cadence, and you can bookmark the changelog pages from their respective GitHub pages to get updates as soon as they are available!

PowerScale Ansible collections 2.3 & 2.4

SyncIQ replication workflow support

SyncIQ is the native remote replication engine of PowerScale. Before seeing what is new in the Ansible tasks for SyncIQ, let’s take a look at the existing modules:

- SyncIQPolicy: Used to query, create, and modify replication policies, as well as to start a replication job.

- SyncIQJobs: Used to query, pause, resume, or cancel a replication job. Note that new SyncIQ jobs are started using the SyncIQPolicy module.

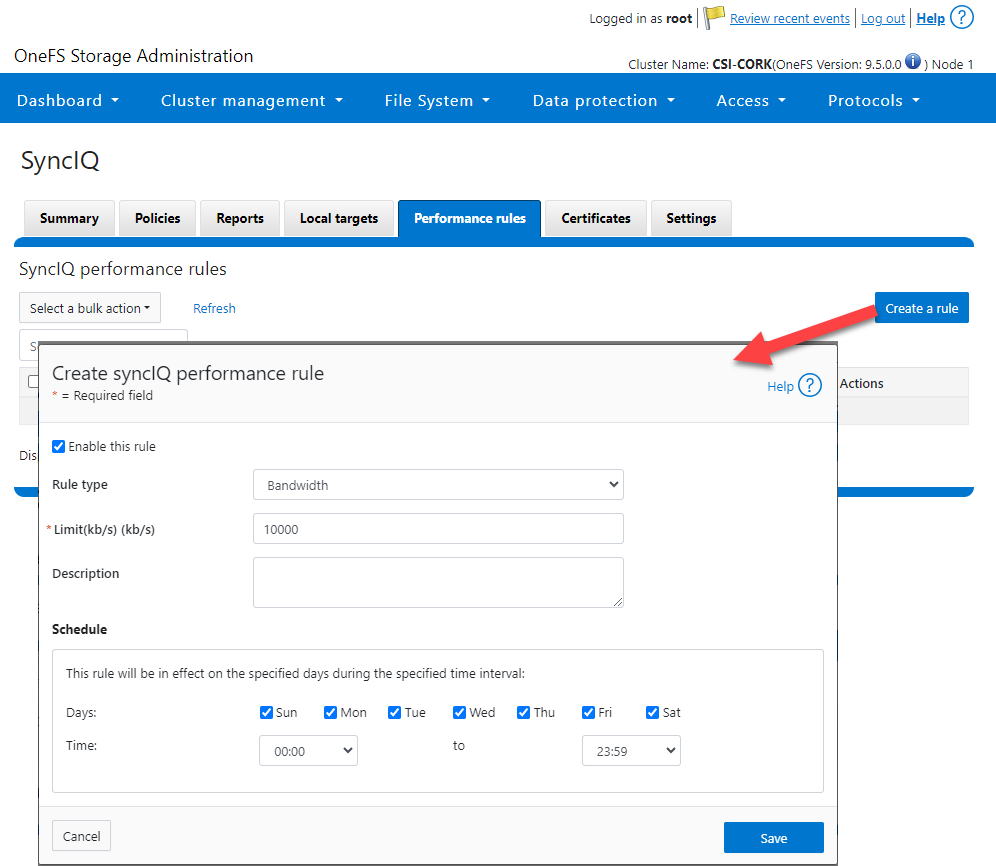

- SyncIQRules: Used to manage the replication performance rules that can be accessed as follows on the OneFS UI:

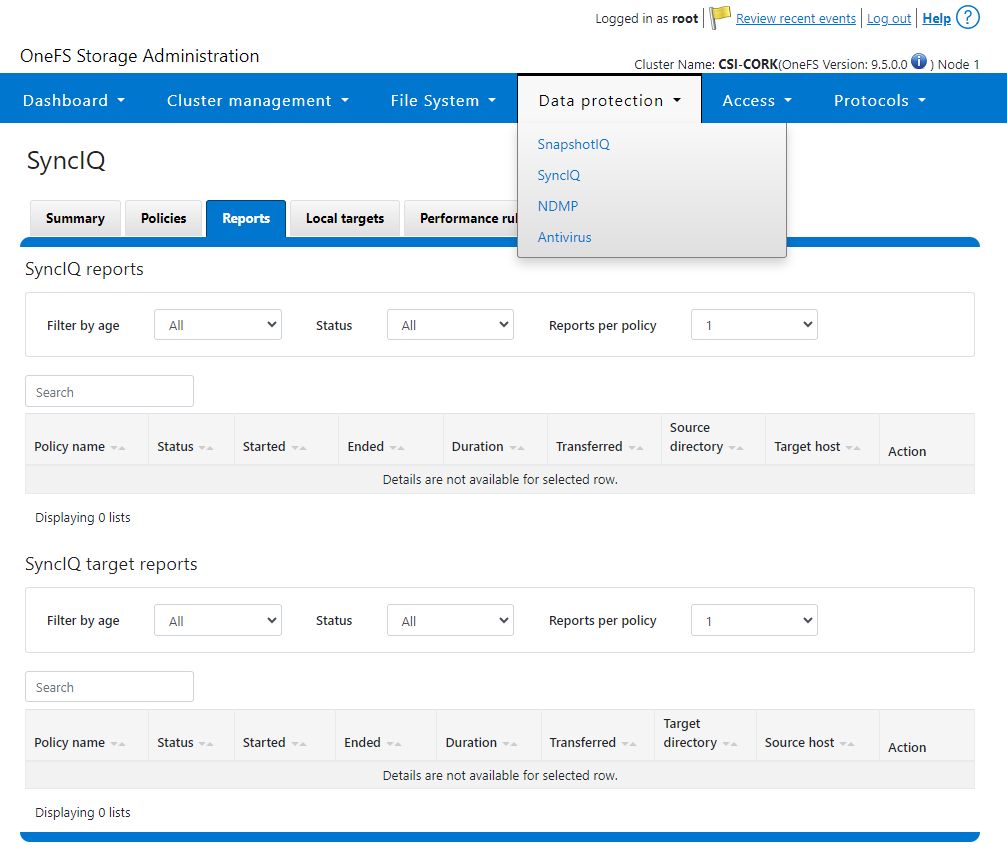

- SyncIQReports and SyncIQTargetReports: Used to manage SyncIQ reports. Following is the corresponding management UI screen where it is done manually:

Following are the new modules introduced to enhance the Ansible automation of SyncIQ workflows:

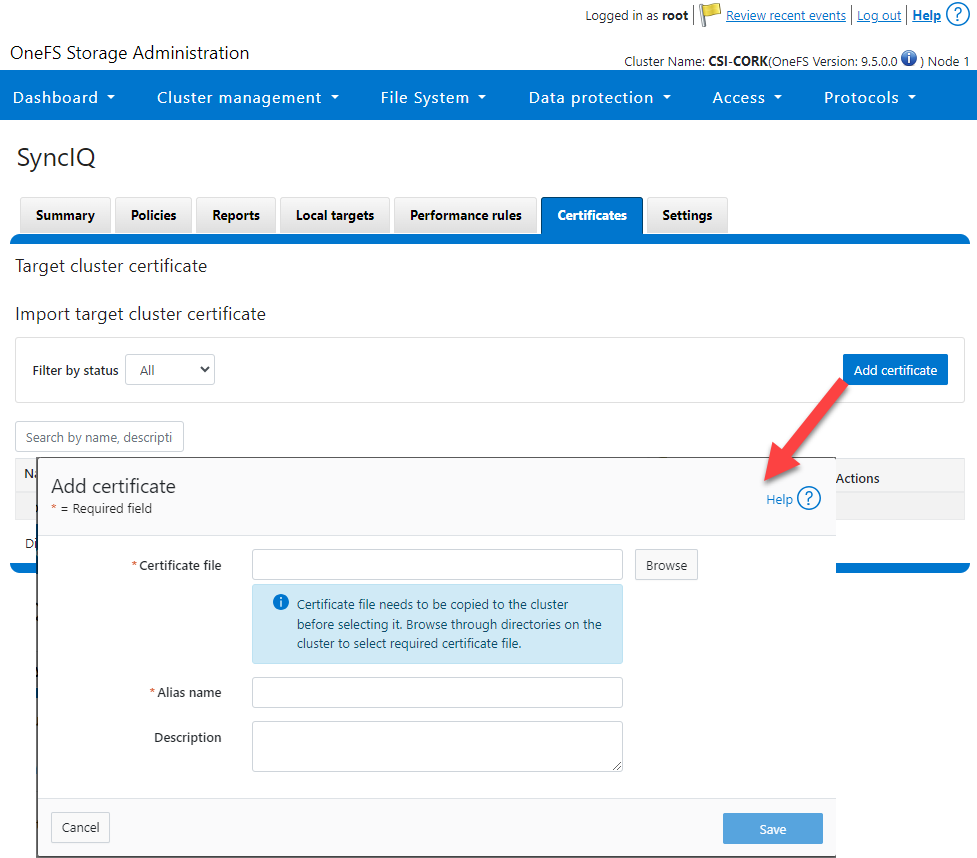

- SyncIQCertificate (v2.3): Used to manage SyncIQ target cluster certificates on PowerScale. Functionality includes getting, importing, modifying, and deleting target cluster certificates. Here is the OneFS UI for these settings:

- SyncIQ_global_settings (v2.3): Used to configure the SyncIQ global settings, which include the following:

Table 1. SyncIQ settings

| SyncIQ Setting (datatype) | Description |
| --- | --- |
| bandwidth_reservation_reserve_absolute (int) | The absolute bandwidth reservation for SyncIQ |
| bandwidth_reservation_reserve_percentage (int) | The percentage-based bandwidth reservation for SyncIQ |
| cluster_certificate_id (str) | The ID of the cluster certificate used for SyncIQ |
| encryption_cipher_list (str) | The list of encryption ciphers used for SyncIQ |
| encryption_required (bool) | Whether encryption is required or not for SyncIQ |
| force_interface (bool) | Whether the force interface is enabled or not for SyncIQ |
| max_concurrent_jobs (int) | The maximum number of concurrent jobs for SyncIQ |
| ocsp_address (str) | The address of the OCSP server used for SyncIQ certificate validation |
| ocsp_issuer_certificate_id (str) | The ID of the issuer certificate used for OCSP validation in SyncIQ |
| preferred_rpo_alert (bool) | Whether the preferred RPO alert is enabled or not for SyncIQ |
| renegotiation_period (int) | The renegotiation period in seconds for SyncIQ |
| report_email (str) | The email address to which SyncIQ reports are sent |
| report_max_age (int) | The maximum age in days of reports that are retained by SyncIQ |
| report_max_count (int) | The maximum number of reports that are retained by SyncIQ |
| restrict_target_network (bool) | Whether to restrict the target network in SyncIQ |
| rpo_alerts (bool) | Whether RPO alerts are enabled or not in SyncIQ |
| service (str) | Specifies whether the SyncIQ service is currently on, off, or paused |
| service_history_max_age (int) | The maximum age in days of service history that is retained by SyncIQ |
| service_history_max_count (int) | The maximum number of service history records that are retained by SyncIQ |
| source_network (str) | The source network used by SyncIQ |
| tw_chkpt_interval (int) | The interval between checkpoints in seconds in SyncIQ |
| use_workers_per_node (bool) | Whether to use workers per node in SyncIQ or not |
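Because every setting in Table 1 has a declared datatype, it can be handy to sanity-check a planned set of values before passing them to a playbook. Here is a minimal Python sketch of that idea. It is not part of the PowerScale collection: the function name `validate_synciq_settings` and the lookup dictionary are hypothetical, and the types are simply transcribed from Table 1.

```python
# Expected Python type for each setting, transcribed from Table 1.
# This dictionary is an illustration only, not an API of the collection.
SYNCIQ_SETTING_TYPES = {
    "bandwidth_reservation_reserve_absolute": int,
    "bandwidth_reservation_reserve_percentage": int,
    "cluster_certificate_id": str,
    "encryption_cipher_list": str,
    "encryption_required": bool,
    "force_interface": bool,
    "max_concurrent_jobs": int,
    "ocsp_address": str,
    "ocsp_issuer_certificate_id": str,
    "preferred_rpo_alert": bool,
    "renegotiation_period": int,
    "report_email": str,
    "report_max_age": int,
    "report_max_count": int,
    "restrict_target_network": bool,
    "rpo_alerts": bool,
    "service": str,
    "service_history_max_age": int,
    "service_history_max_count": int,
    "source_network": str,
    "tw_chkpt_interval": int,
    "use_workers_per_node": bool,
}


def validate_synciq_settings(settings):
    """Return a list of problems found; an empty list means the types match Table 1."""
    problems = []
    for name, value in settings.items():
        expected = SYNCIQ_SETTING_TYPES.get(name)
        if expected is None:
            problems.append(f"{name}: not a setting listed in Table 1")
        # bool is a subclass of int in Python, so reject True/False for int fields.
        elif expected is int and isinstance(value, bool):
            problems.append(f"{name}: expected int, got bool")
        elif not isinstance(value, expected):
            problems.append(
                f"{name}: expected {expected.__name__}, got {type(value).__name__}"
            )
    return problems


planned = {"max_concurrent_jobs": 16, "encryption_required": True, "service": "on"}
print(validate_synciq_settings(planned))
```

The check only covers datatypes. Allowed ranges and string values are left to the module and to OneFS itself.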

Additions to Info module

The following information fields have been added to the Info module:

- S3 buckets

- SMB global settings

- Detailed network interfaces

- NTP servers

- Email settings

- Cluster identity (also available in the Settings module)

- Cluster owner (also available in the Settings module)

- SNMP settings

- SyncIQ global settings

PowerStore Ansible collections 3.1: More NAS configuration

In this release of Ansible collections for PowerStore, new modules have been added to manage the NAS Server protocols like NFS and SMB, as well as to configure a DNS or NIS service running on PowerStore NAS.

Managing NAS Server interfaces on PowerStore

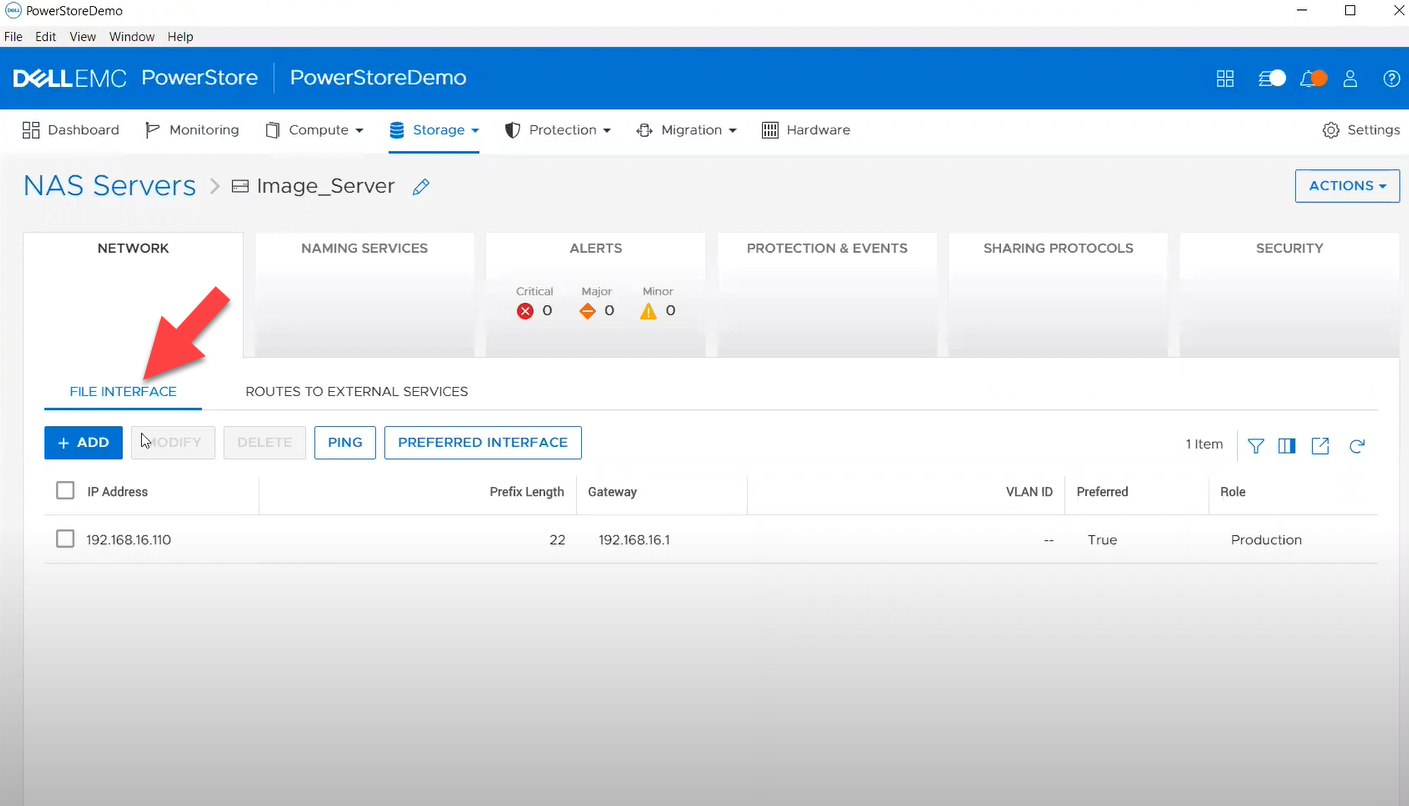

- file_interface - to enable, query, and modify PowerStore NAS interfaces. Some examples can be found here.

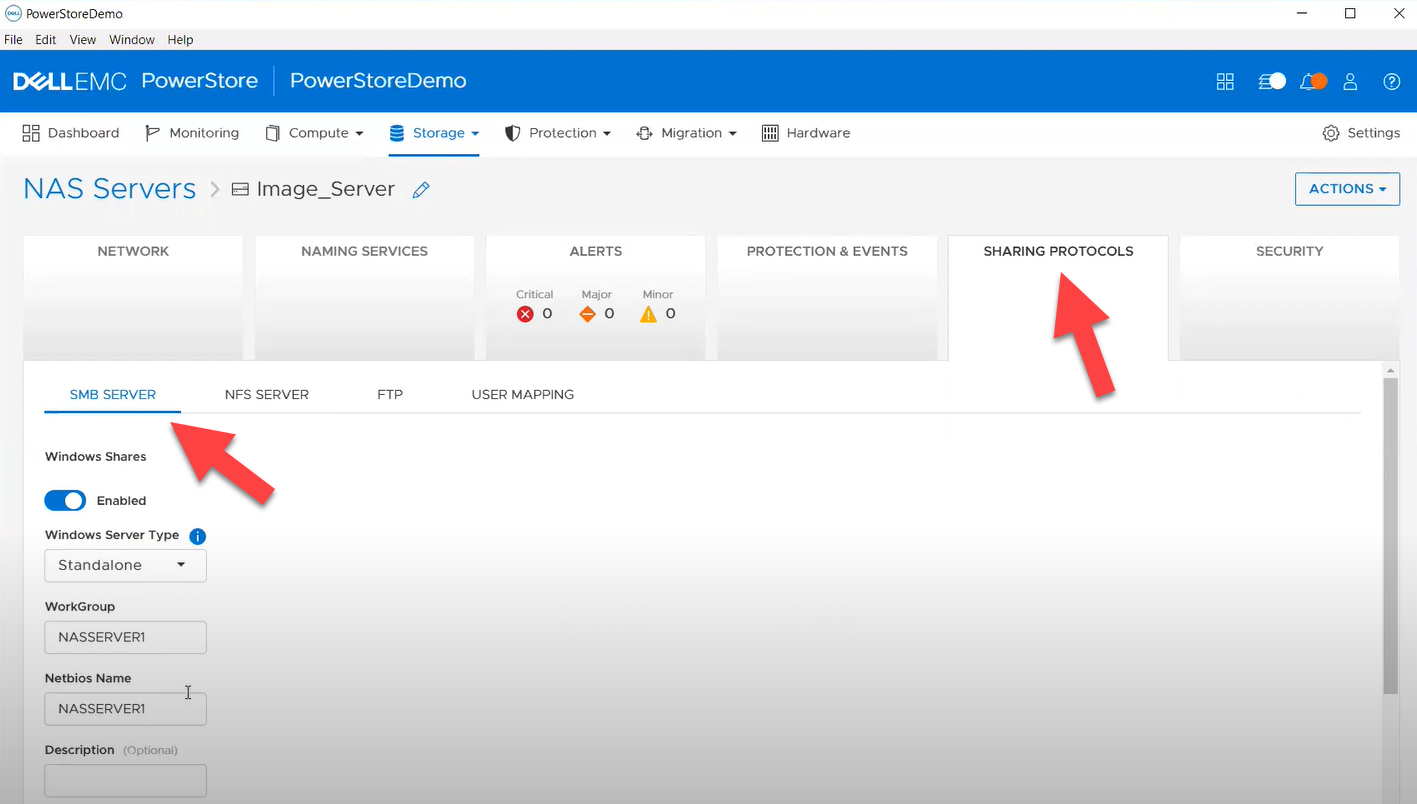

- smb_server - to enable, query, and modify SMB Shares on PowerStore NAS. Some examples can be found here.

- nfs_server - to enable, query, and modify NFS Server on PowerStore NAS. Some examples can be found here.

Naming services on PowerStore NAS

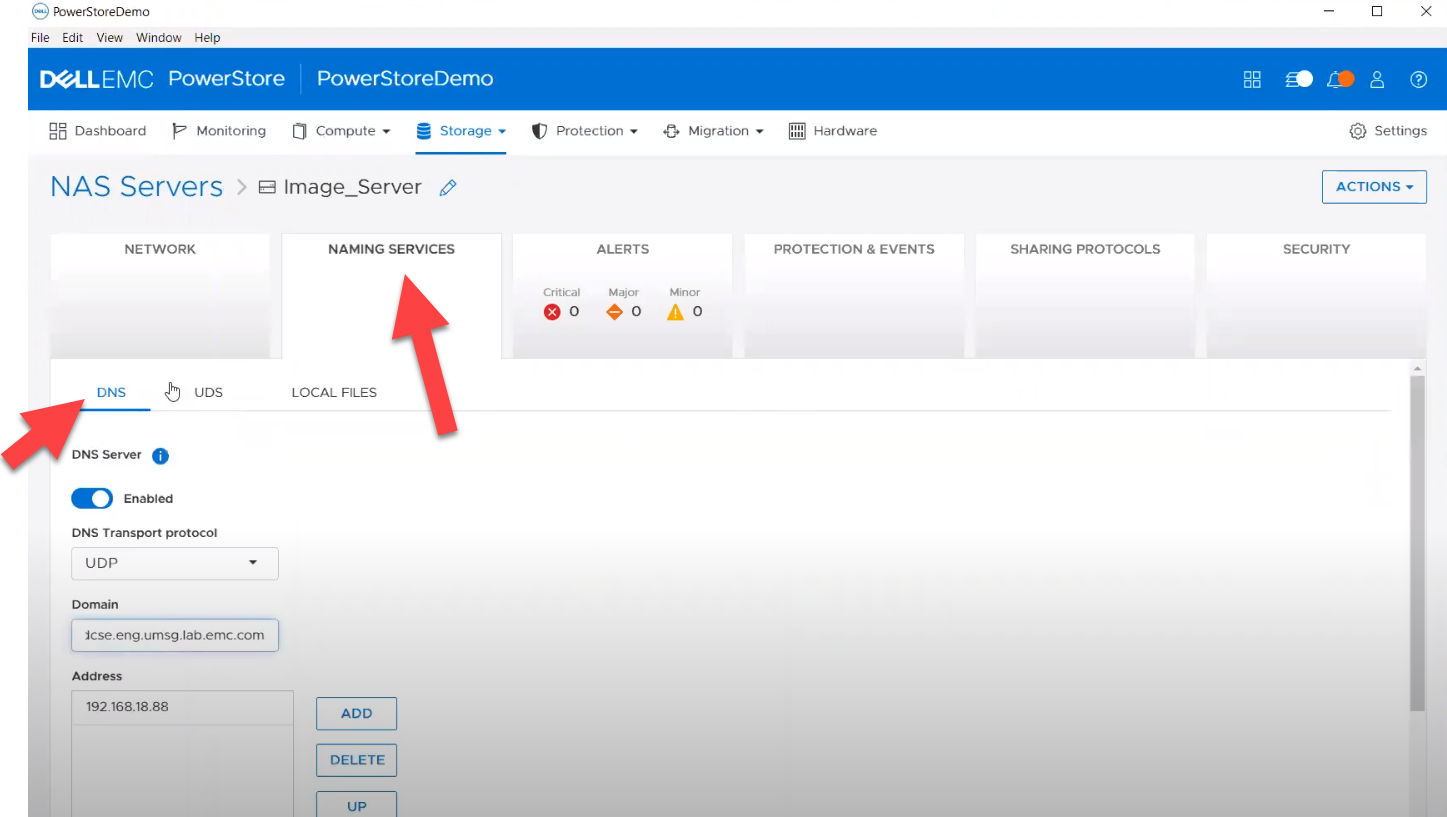

- file_dns - to enable, query, and modify File DNS on PowerStore NAS. Some examples can be found here.

- file_nis - to enable, query, and modify NIS on PowerStore NAS. Some examples can be found here.

- service_config - manage service config for PowerStore

The Info module is enhanced to list file interfaces, DNS Server, NIS Server, SMB Shares, and NFS exports. Also in this release, support has been added for creating multiple NFS exports with the same name but different NAS servers.

PowerFlex Ansible collections 2.0.1 and 2.1: More roles

In releases 1.8 and 1.9 of the PowerFlex collections, new roles have been introduced to install and uninstall various software components of PowerFlex to enable day-1 deployment of a PowerFlex cluster. In the latest 2.0.1 and 2.1 releases, more updates have been made to roles, such as:

- Updated config role to support creation and deletion of protection domains, storage pools, and fault sets

- New role to support installation and uninstallation of ActiveMQ

- Enhanced SDC role to support installation on ESXi, Rocky Linux, and Windows OS

OpenManage Ansible collections: More power to iDRAC

At the risk of repetition, OpenManage Ansible collections have modules and roles for both OpenManage Enterprise and the iDRAC/Redfish node interfaces. In the last five months, a plethora of new functionality (new modules and roles) has become available, especially for the iDRAC modules in the areas of security, user management, and license management. Following is a summary of the new features:

v9.1

- redfish_storage_volume now supports iDRAC8.

- dellemc_idrac_storage_module is deprecated and replaced with idrac_storage_volume.

v9.0

- Module idrac_diagnostics is added to run and export diagnostics on iDRAC.

- Role idrac_user is added to manage local users of iDRAC.

v8.7

- New module idrac_license to manage iDRAC licenses. With this module you can import, export, and delete licenses on iDRAC.

- idrac_gather_facts role enhanced to add storage controller details in the role output and provide support for secure boot.

v8.6

- Added support for the environment variables, `OME_USERNAME` and `OME_PASSWORD`, as fallback for credentials for all modules of iDRAC, OME, and Redfish.

- Enhanced both the idrac_certificates module and role to support the import and export of `CUSTOMCERTIFICATE`. Added support for the import operation of the `HTTPS` certificate with the SSL key.

v8.5

- redfish_storage_volume module is enhanced to support reboot options and job tracking operation.

v8.4

- New module idrac_network_attributes to configure the port and partition network attributes on the network interface cards.
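The environment-variable fallback added in v8.6 can be pictured with a few lines of Python. This is a sketch of the general pattern only, not the collection's actual implementation: the function name `resolve_ome_credentials` is hypothetical, and the only names taken from the release notes above are `OME_USERNAME` and `OME_PASSWORD`.

```python
import os


def resolve_ome_credentials(username=None, password=None, environ=None):
    """Prefer explicitly passed credentials; fall back to environment variables.

    Hypothetical helper for illustration. `environ` defaults to os.environ and
    can be replaced with a plain dict for testing.
    """
    environ = os.environ if environ is None else environ
    user = username if username is not None else environ.get("OME_USERNAME")
    pwd = password if password is not None else environ.get("OME_PASSWORD")
    if user is None or pwd is None:
        raise ValueError(
            "Credentials missing: pass them explicitly or set "
            "OME_USERNAME and OME_PASSWORD"
        )
    return user, pwd


# Explicit values win; anything left unset is read from the environment.
fake_env = {"OME_USERNAME": "admin", "OME_PASSWORD": "example-only"}
print(resolve_ome_credentials(environ=fake_env)[0])
```

Keeping credentials in the environment rather than in playbooks or inventory files is the usual motivation for this kind of fallback.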

Conclusion

Ansible is the most extensively used automation platform for IT Operations, and Dell Technologies provides an exhaustive set of modules and roles to easily deploy and manage server and storage infrastructure on-premises as well as in the cloud. With the monthly release cadence for both storage and server modules, you can get access to our latest feature additions even faster. Enjoy coding your Dell infrastructure!

Author: Parasar Kodati, Engineering Technologist, Dell ISG

When Performance Testing Your Storage, Avoid Zeros!

Tue, 20 Feb 2024 17:37:42 -0000


Storage benchmarking

Occasionally, Dell Technologies customers will want to run their own storage performance tests to ensure that their storage can meet the demands of their workload. Dell Technologies partners like Microsoft publish guidance on how to use benchmarking tools such as Diskspd to test various workloads. When running these tools on intelligent storage appliances like those offered by Dell Technologies, don’t forget to watch for how your test files are populated!

The first step in using performance benchmark tools is creating one or more test files for use when testing. The benchmark tool will then write and read data to and from these files, taking measurements to assess performance. An important detail that is often overlooked is how the test files are populated with data. If the files are not populated correctly, it can lead to misleading results and inaccurate conclusions.

We’ll use Diskspd as an example; however, please note that most tools have the same default behavior. When you run a Diskspd test, you specify several parameters, such as a test file location and size, IO block size, read/write ratio, queue depth, and so on.

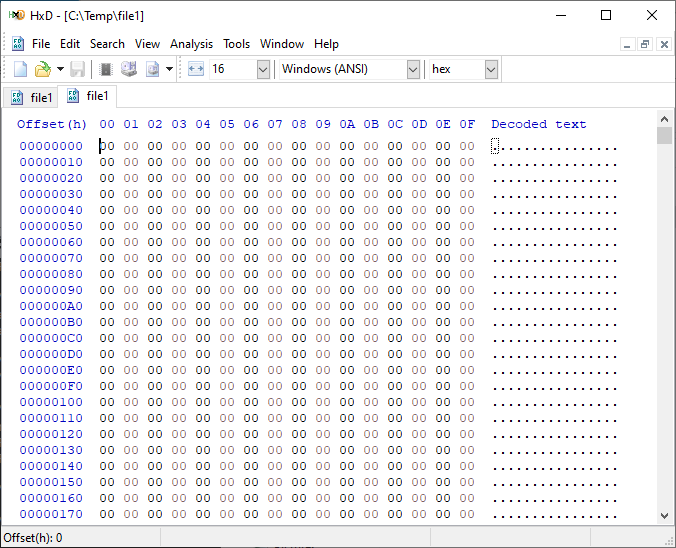

If we open a test file created with default parameters and examine it with a hexadecimal editor, this is what it looks like:

It is filled with nothing, 0x00 throughout the entire file – all “zeros”!
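If you would rather not page through a hexadecimal editor, a few lines of Python can perform the same check. This is a minimal sketch; the function name `is_zero_filled` and the file name in the usage note are our own, not part of any benchmark tool.

```python
def is_zero_filled(path, chunk_size=1024 * 1024):
    """Return True if every byte in the file is 0x00 (an empty file also returns True)."""
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                # Reached end of file without seeing a non-zero byte.
                return True
            # Counting zero bytes and comparing to the chunk length avoids a Python-level loop.
            if chunk.count(0) != len(chunk):
                return False
```

For example, `is_zero_filled("testfile.dat")` returning `True` for a freshly created test file (the file name here is hypothetical) would confirm the behavior described above.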

OK, so what is the problem?

When storage benchmarking tools create test files, they all use synthetic data for testing. This is fine when performing IO to a storage device with no “intelligence” built in because it will perform unaltered IO directly to the storage without the data content mattering. In the past, storage devices were simple and would read and write data as commanded, so the data content was irrelevant.

However, intelligent storage appliances such as those offered by Dell Technologies look at data differently. These products are built for efficiency and performance. Compression, deduplication, zero detection, and other optimizations may be used for space savings and performance. Since an empty file would obviously compress and deduplicate well, most of this IO will not access the disks in the same manner that a file of actual data would. It is also possible that other components in the data path would behave differently than normal when repeatedly presented with an identical piece of data.
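To get a feel for how differently zeros and real-looking data behave, compare how well each compresses. The sketch below uses Python's general-purpose `zlib` module purely as a stand-in. It is an assumption for illustration and says nothing about the specific algorithms any storage appliance uses.

```python
import os
import zlib


def compression_ratio(data):
    """Original size divided by zlib-compressed size."""
    return len(data) / len(zlib.compress(data))


size = 1024 * 1024  # 1 MiB sample buffer

zero_ratio = compression_ratio(bytes(size))          # buffer of 0x00 bytes
random_ratio = compression_ratio(os.urandom(size))   # random, effectively incompressible

print(f"zeros:  {zero_ratio:.0f}:1")
print(f"random: {random_ratio:.2f}:1")
```

The zero-filled buffer shrinks to a tiny fraction of its size, while the random buffer does not shrink at all. A data path that applies any comparable optimization has far less work to do for the zero-filled file.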

It is safe to assume that these optimizations likely exist on data being stored in the cloud as well. Many cloud providers use intelligent storage appliances or have developed proprietary software to optimize storage.

The bottom line is that your test is likely inaccurate and may not represent your storage performance under more realistic conditions. While no synthetic test can reproduce a real workload 100%, you should try to make it as realistic as possible.

Mitigations

Some tools can initialize the test files with random data. Diskspd, for example, has parameters that can be added to create a buffer of random data to be used to write to the files or specify a source file of data. Regardless of the method used, you should inspect the test files to make sure that at a minimum, random data is being used. Zero-filled files and repeating patterns should be avoided.
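If your tool of choice cannot populate its own files with random data, one option is to create the test file yourself beforehand. The following is a minimal sketch, assuming the benchmark tool can be pointed at an existing file; the function name `write_random_file` and the file name and size in the usage note are hypothetical.

```python
import os


def write_random_file(path, size_bytes, chunk_size=1024 * 1024):
    """Create (or overwrite) a file of size_bytes filled with random data."""
    remaining = size_bytes
    with open(path, "wb") as f:
        while remaining > 0:
            # Write in chunks so large files do not need to fit in memory.
            n = min(chunk_size, remaining)
            f.write(os.urandom(n))
            remaining -= n
```

For example, `write_random_file("testfile.dat", 10 * 1024**3)` would create a 10 GiB file. Whichever way the file is created, inspect it afterward to confirm that it really does contain non-repeating data.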

Random data also may not produce the expected behavior when compression and deduplication are in use, because purely random data is essentially incompressible and unique. More advanced testing tools such as vdbench let you target compression and deduplication ratios independently.
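The idea of targeting the two ratios independently can be sketched in Python. This is a rough approximation for illustration only and is not how vdbench generates its data: the function names are hypothetical, compressibility is approximated by padding random bytes with zeros, and deduplication is approximated by reusing a limited pool of unique blocks. The reduction an appliance actually reports depends on its own algorithms.

```python
import os


def make_block(block_size, compress_ratio):
    """Build one block: roughly 1/compress_ratio of it random, the rest zeros."""
    random_len = max(1, int(block_size / compress_ratio))
    return os.urandom(random_len) + bytes(block_size - random_len)


def generate_blocks(total_blocks, block_size, dedup_ratio, compress_ratio):
    """Yield total_blocks blocks drawn from about total_blocks/dedup_ratio unique ones."""
    unique_count = max(1, int(total_blocks / dedup_ratio))
    unique = [make_block(block_size, compress_ratio) for _ in range(unique_count)]
    for i in range(total_blocks):
        # Cycling through the pool means each unique block appears about dedup_ratio times.
        yield unique[i % unique_count]


# Example: 8 blocks of 4 KiB, aiming for roughly 2:1 dedup and 4:1 compression.
blocks = list(generate_blocks(8, 4096, dedup_ratio=2, compress_ratio=4))
print(len(blocks), "blocks,", len(set(blocks)), "unique")
```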

Tips

Here are a few more tips when benchmarking storage performance to try to make it as realistic as possible:

- Use datasets of comparable size to real data workloads. Smaller datasets may fit entirely in the cache and skew results.

- Use IO sizes and read/write ratios that match your workload. If you are unsure of what your workload looks like, your Dell Technologies representative can assist you.

- Test with “multiples”. Intelligent storage assumes multiple files, volumes, and hosts. At a minimum, use multiple files and volumes. When testing larger block sizes, you may need to use multiple hosts and multiple host bus adapters to generate enough IO to test the full bandwidth capabilities of the storage.

- Start with a light load and scale up. Begin with one file, one worker thread, and a queue depth of one. In general, modern storage is designed for concurrency. Some amount of concurrency will be required to fully use storage system resources. As you scale up, observe the behavior. Pay attention to the measured latency. At some point as you scale the test, latency will start to increase rapidly.

- Excessive latency indicates a bottleneck. Once latencies are excessive, you have encountered a bottleneck somewhere. “Excessive” is a relative term when it comes to storage latency and is determined by your workload and business needs. Scale the test only until the measured latency reaches, or just exceeds, your acceptable range. Further increasing the test load will result in diminishing returns.

- Make sure the entire test environment can drive the desired performance. The storage network and host configuration must be capable of the desired performance levels and configured properly.

- Beware of outdated guidance. There are still articles online that are over a decade old that reference testing methods and best practices that were developed when storage was based on spinning disks. Those assumptions may be inaccurate on the latest storage devices and storage network protocols.
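The "start light and scale up" and "excessive latency" tips can be put together in a small helper that picks an operating point from a series of test runs. This is a sketch under stated assumptions: the function name `pick_operating_point` is hypothetical, and the sample numbers are made up for illustration, not measurements from any Dell system.

```python
def pick_operating_point(samples, max_latency_ms):
    """Pick the best run whose latency is still acceptable.

    samples: list of (queue_depth, iops, latency_ms) tuples from successive runs.
    Returns the tuple with the highest IOPS at or under max_latency_ms, or None.
    """
    acceptable = [s for s in samples if s[2] <= max_latency_ms]
    return max(acceptable, key=lambda s: s[1]) if acceptable else None


# Made-up results from scaling queue depth: (queue_depth, iops, latency_ms).
samples = [
    (1, 9000, 0.11),
    (4, 34000, 0.12),
    (16, 110000, 0.15),
    (64, 150000, 0.43),
    (256, 155000, 1.65),  # IOPS barely improve while latency roughly quadruples
]

print(pick_operating_point(samples, max_latency_ms=0.5))
```

In this made-up series, the last step adds almost no throughput but pushes latency well past a 0.5 ms limit, which is the diminishing-returns pattern described above.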

Summary

Storage performance benchmarking can be interesting and provide useful data points. That said, what matters most is how the storage supports actual business workloads, and in particular your unique workload. As such, there is no true substitute for testing with your actual workload.

Selecting the proper storage fit for your environment can be challenging, and Dell Technologies has the expertise to help. Leveraging tools like CloudIQ and LiveOptics, Dell Technologies can help you analyze your storage performance, explain storage metrics, and make recommendations to increase storage efficiency.

Author: Doug Bernhardt, Sr. Principal Engineering Technologist