Q1 2024 Update for Ansible Integrations with Dell Infrastructure

Tue, 02 Apr 2024 14:45:56 -0000

In this blog post, I am going to cover the new Ansible functionality for the Dell infrastructure portfolio that we released over the past two quarters. Ansible collections are now on a monthly release cadence, and you can bookmark the changelog pages from their respective GitHub pages to get updates as soon as they are available!

PowerScale Ansible collections 2.3 & 2.4

SyncIQ replication workflow support

SyncIQ is the native remote replication engine of PowerScale. Before seeing what is new in the Ansible tasks for SyncIQ, let’s take a look at the existing modules:

- SyncIQPolicy: Used to query, create, and modify replication policies, as well as to start a replication job.

- SyncIQJobs: Used to query, pause, resume, or cancel a replication job. Note that new SyncIQ jobs are started using the SyncIQPolicy module.

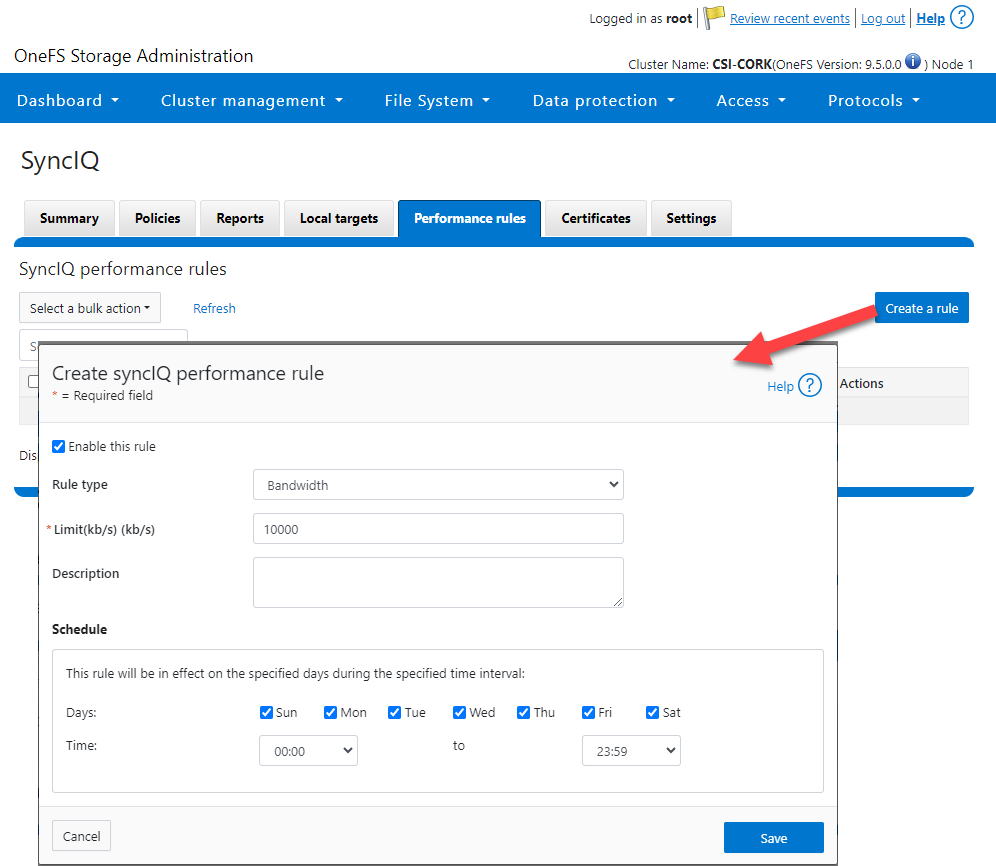

- SyncIQRules: Used to manage the replication performance rules that can be accessed as follows on the OneFS UI:

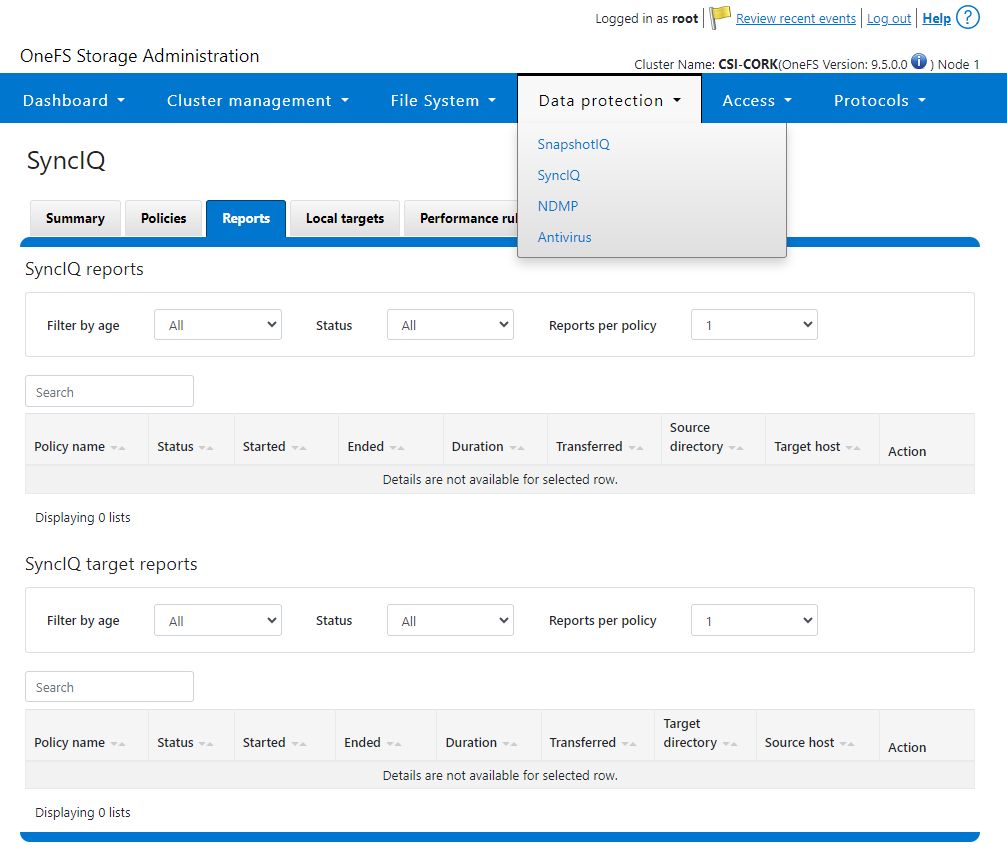

- SyncIQReports and SyncIQTargetReports: Used to manage SyncIQ reports. Following is the corresponding management UI screen where it is done manually:

Following are the new modules introduced to enhance the Ansible automation of SyncIQ workflows:

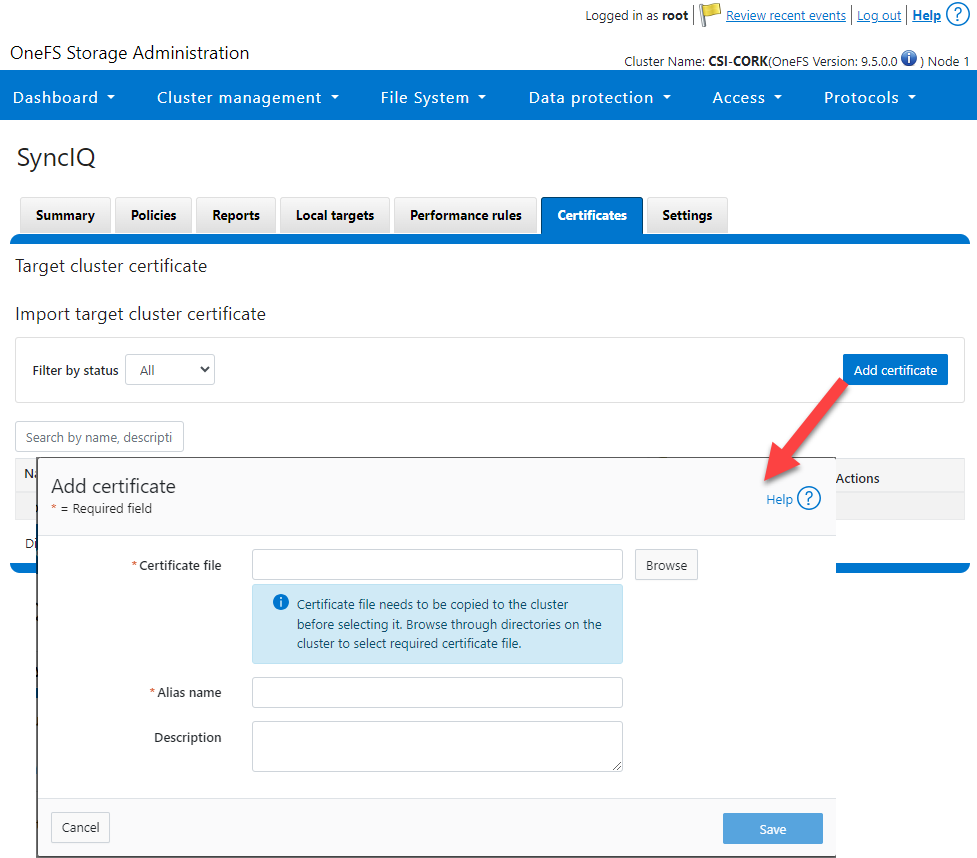

- SyncIQCertificate (v2.3): Used to manage SyncIQ target cluster certificates on PowerScale. Functionality includes getting, importing, modifying, and deleting target cluster certificates. Here is the OneFS UI for these settings:

- SyncIQ_global_settings (v2.3): Used to configure SyncIQ global settings, which include the following:

Table 1. SyncIQ settings

SyncIQ Setting (datatype) | Description |

bandwidth_reservation_reserve_absolute (int) | The absolute bandwidth reservation for SyncIQ |

bandwidth_reservation_reserve_percentage (int) | The percentage-based bandwidth reservation for SyncIQ |

cluster_certificate_id (str) | The ID of the cluster certificate used for SyncIQ |

encryption_cipher_list (str) | The list of encryption ciphers used for SyncIQ |

encryption_required (bool) | Whether encryption is required or not for SyncIQ |

force_interface (bool) | Whether the force interface is enabled or not for SyncIQ |

max_concurrent_jobs (int) | The maximum number of concurrent jobs for SyncIQ |

ocsp_address (str) | The address of the OCSP server used for SyncIQ certificate validation |

ocsp_issuer_certificate_id (str) | The ID of the issuer certificate used for OCSP validation in SyncIQ |

preferred_rpo_alert (bool) | Whether the preferred RPO alert is enabled or not for SyncIQ |

renegotiation_period (int) | The renegotiation period in seconds for SyncIQ |

report_email (str) | The email address to which SyncIQ reports are sent |

report_max_age (int) | The maximum age in days of reports that are retained by SyncIQ |

report_max_count (int) | The maximum number of reports that are retained by SyncIQ |

restrict_target_network (bool) | Whether to restrict the target network in SyncIQ |

rpo_alerts (bool) | Whether RPO alerts are enabled or not in SyncIQ |

service (str) | Specifies whether the SyncIQ service is currently on, off, or paused |

service_history_max_age (int) | The maximum age in days of service history that is retained by SyncIQ |

service_history_max_count (int) | The maximum number of service history records that are retained by SyncIQ |

source_network (str) | The source network used by SyncIQ |

tw_chkpt_interval (int) | The interval between checkpoints in seconds in SyncIQ |

use_workers_per_node (bool) | Whether to use workers per node in SyncIQ or not |
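To make the table concrete, here is a minimal sketch of a playbook that changes two of these settings. It assumes the module is exposed as dellemc.powerscale.synciq_global_settings and accepts service and encryption_required as inputs. The host and credential values are placeholders, so please check the module documentation for the parameters your collection version supports.

```yaml
---
# Sketch only: assumes the dellemc.powerscale collection (v2.3 or later) is installed.
# All values under vars are placeholders, not values from this post.
- name: Configure SyncIQ global settings on PowerScale
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    onefs_host: "10.0.0.10"      # placeholder cluster address
    api_user: "admin"            # placeholder
    api_password: "change-me"    # placeholder; prefer Ansible Vault
    verify_ssl: false
  tasks:
    - name: Turn the SyncIQ service on and require encryption
      dellemc.powerscale.synciq_global_settings:
        onefs_host: "{{ onefs_host }}"
        api_user: "{{ api_user }}"
        api_password: "{{ api_password }}"
        verify_ssl: "{{ verify_ssl }}"
        service: "on"
        encryption_required: true
      register: synciq_settings

    - name: Show what the module returned
      ansible.builtin.debug:
        var: synciq_settings
```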

Additions to Info module

The following information fields have been added to the Info module:

- S3 buckets

- SMB global settings

- Detailed network interfaces

- NTP servers

- Email settings

- Cluster identity (also available in the Settings module)

- Cluster owner (also available in the Settings module)

- SNMP settings

- SyncIQ global settings

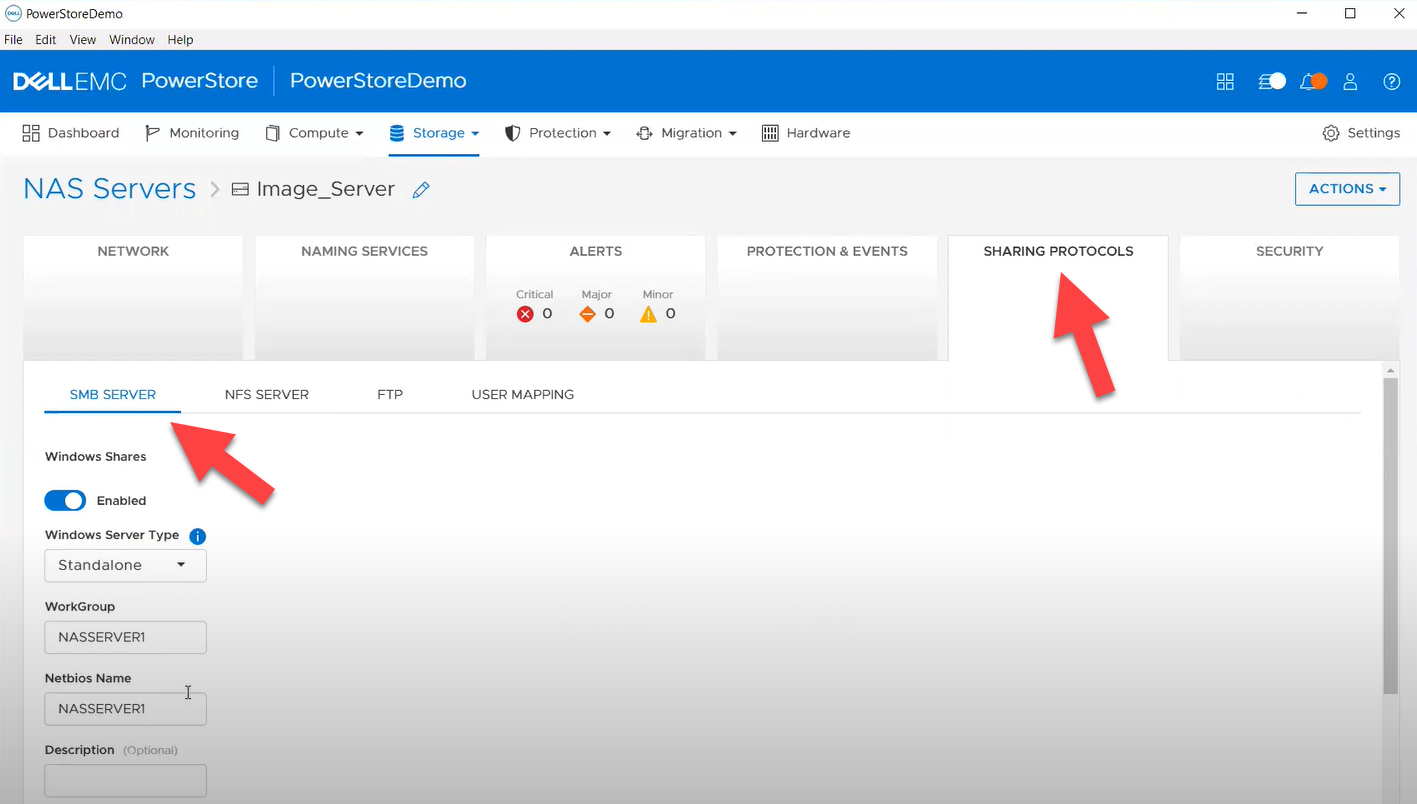

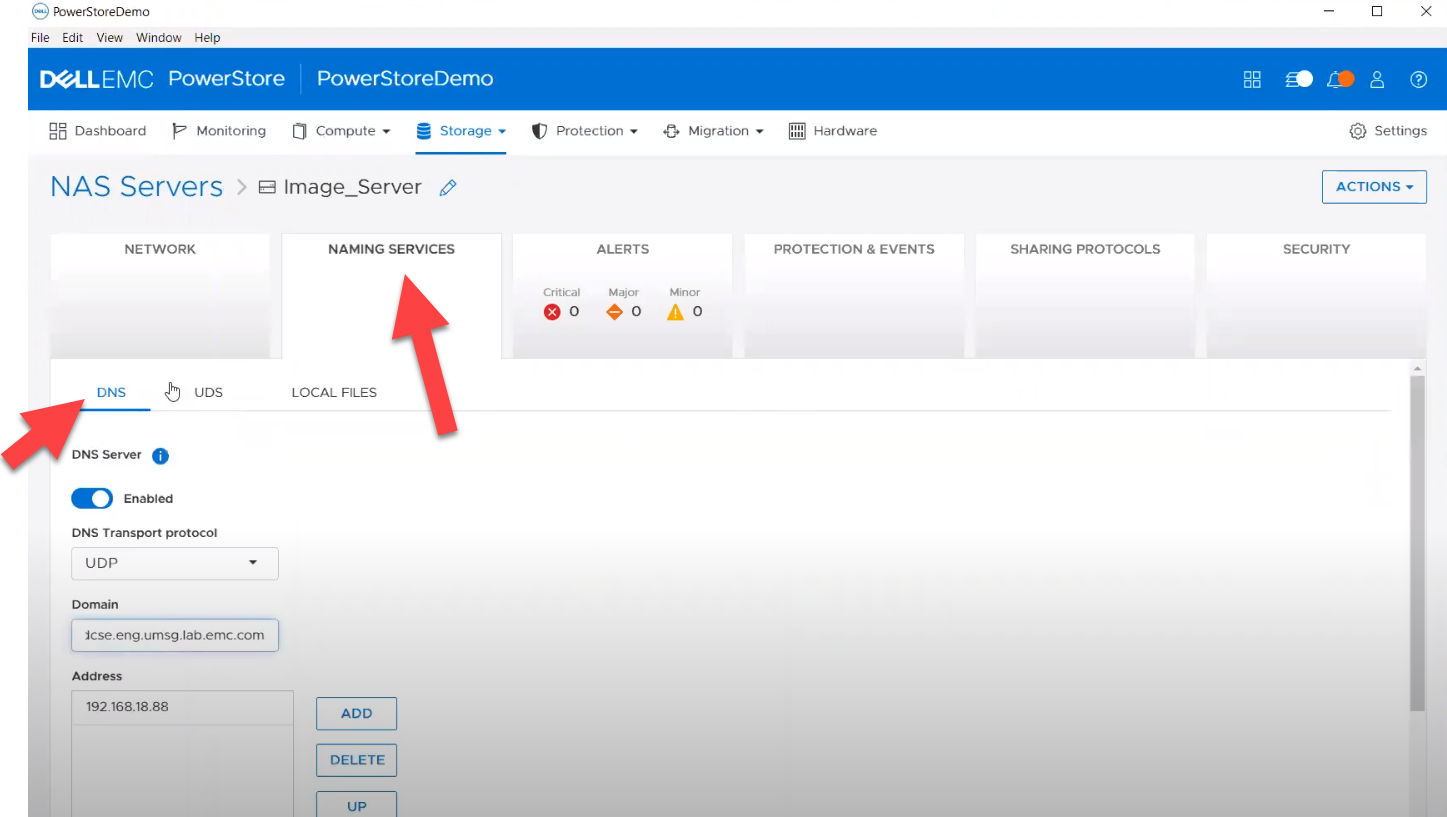
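As a rough illustration of how these additions might be queried, here is a sketch of an Info task. The gather_subset names below are my assumption of how the new fields are spelled in the module, so verify them against the Info module documentation. The connection variables are placeholders.

```yaml
---
# Sketch only: the subset names are assumptions to be checked against the Info module docs.
- name: Gather the newly supported details from PowerScale
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    onefs_host: "10.0.0.10"      # placeholder cluster address
    api_user: "admin"            # placeholder
    api_password: "change-me"    # placeholder; prefer Ansible Vault
    verify_ssl: false
  tasks:
    - name: Query several of the new information subsets in one call
      dellemc.powerscale.info:
        onefs_host: "{{ onefs_host }}"
        api_user: "{{ api_user }}"
        api_password: "{{ api_password }}"
        verify_ssl: "{{ verify_ssl }}"
        gather_subset:
          - s3_buckets
          - ntp_servers
          - email_settings
          - snmp_settings
          - synciq_global_settings
      register: cluster_info

    - name: Print the gathered details
      ansible.builtin.debug:
        var: cluster_info
```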

PowerStore Ansible collections 3.1: More NAS configuration

In this release of Ansible collections for PowerStore, new modules have been added to manage the NAS Server protocols like NFS and SMB, as well as to configure a DNS or NIS service running on PowerStore NAS.

Managing NAS Server interfaces on PowerStore

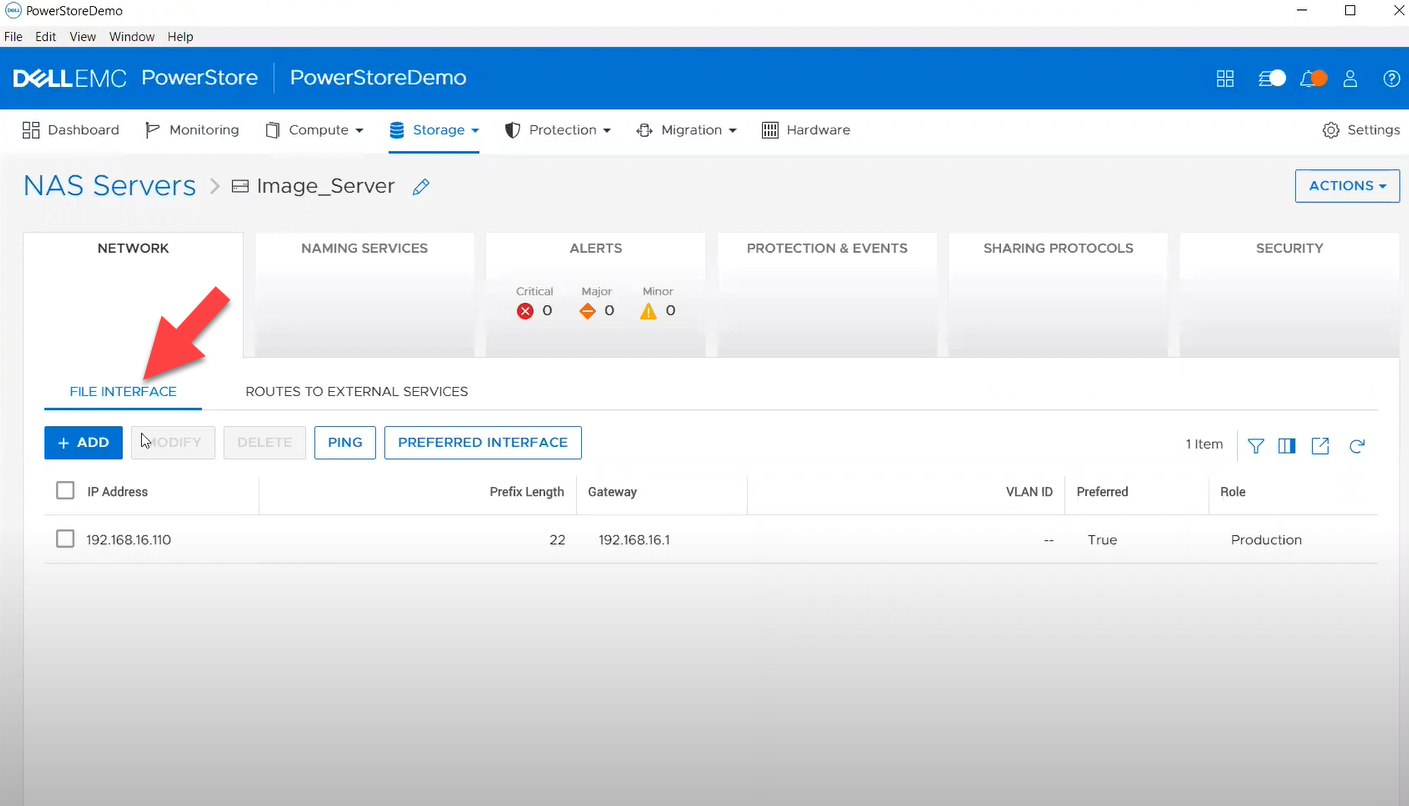

- file_interface - to enable, query, and modify PowerStore NAS interfaces. Some examples can be found here.

- smb_server - to enable, query, and modify the SMB Server on PowerStore NAS. Some examples can be found here.

- nfs_server - to enable, query, and modify NFS Server on PowerStore NAS. Some examples can be found here.

Naming services on PowerStore NAS

- file_dns – to enable, query, and modify File DNS on PowerStore NAS. Some examples can be found here.

- file_nis - to enable, query, and modify NIS on PowerStore NAS. Some examples can be found here.

- service_config - manage service config for PowerStore

The Info module is enhanced to list file interfaces, DNS Server, NIS Server, SMB Shares, and NFS exports. Also in this release, support has been added for creating multiple NFS exports with the same name on different NAS servers.

PowerFlex Ansible collections 2.0.1 and 2.1: More roles

In releases 1.8 and 1.9 of the PowerFlex collections, new roles have been introduced to install and uninstall various software components of PowerFlex to enable day-1 deployment of a PowerFlex cluster. In the latest 2.0.1 and 2.1 releases, more updates have been made to roles, such as:

- Updated config role to support creation and deletion of protection domains, storage pools, and fault sets

- New role to support installation and uninstallation of Active MQ

- Enhanced SDC role to support installation on ESXi, Rocky Linux, and Windows OS

OpenManage Ansible collections: More power to iDRAC

At the risk of repetition, OpenManage Ansible collections have modules and roles for both OpenManage Enterprise and the iDRAC/Redfish node interfaces. In the last five months, a plethora of new functionality (new modules and roles) has become available, especially for the iDRAC modules in the areas of security, user management, and license management. Following is a summary of the new features:

v9.1

- redfish_storage_volume now supports iDRAC8.

- dellemc_idrac_storage_volume is deprecated and replaced with idrac_storage_volume.

v9.0

- Module idrac_diagnostics is added to run and export diagnostics on iDRAC.

- Role idrac_user is added to manage local users of iDRAC.

v8.7

- New module idrac_license to manage iDRAC licenses. With this module you can import, export, and delete licenses on iDRAC.

- idrac_gather_facts role enhanced to add storage controller details in the role output and provide support for secure boot.

v8.6

- Added support for the environment variables, `OME_USERNAME` and `OME_PASSWORD`, as fallback for credentials for all modules of iDRAC, OME, and Redfish.

- Enhanced both the idrac_certificates module and role to support the import and export of `CUSTOMCERTIFICATE`, and added support for the import operation of the `HTTPS` certificate with the SSL key.

v8.5

- redfish_storage_volume module is enhanced to support reboot options and job tracking operation.

v8.4

- New module idrac_network_attributes to configure the port and partition network attributes on the network interface cards.

Conclusion

Ansible is the most extensively used automation platform for IT Operations, and Dell Technologies provides an exhaustive set of modules and roles to easily deploy and manage server and storage infrastructure on-prem as well as on Cloud. With the monthly release cadence for both storage and server modules, you can get access to our latest feature additions even faster. Enjoy coding your Dell infrastructure!

Author: Parasar Kodati, Engineering Technologist, Dell ISG

Related Blog Posts

Q3 2023: Updated Ansible Collections for Dell Portfolio

Fri, 29 Sep 2023 17:33:34 -0000

The Ansible collection release schedule for the storage platforms is now monthly, just like the openmanage collection, so starting this quarter, I will roll up the storage module features we released over the three months of the quarter. Over the past quarter, we made major enhancements to Ansible collections for PowerScale and PowerFlex.

Roll out PowerFlex with Roles!

We introduced Ansible Roles for the openmanage Ansible collection to gather and package multiple steps into a single small Ansible code block. In release v1.8 and 1.9 of Ansible Collections for PowerFlex, we are introducing roles for PowerFlex, targeting day-1 deployment as well as ongoing day-2 cluster expansion and management. This is a huge milestone for PowerFlex deployment automation.

Here is a complete list of the different roles and the tasks available under each role:

Role | Workflows |

SDC | |

SDS | |

MDM | |

Tie Breaker (TB) | |

Gateway | |

SDR | |

WebUI | |

PowerFlex Common | This role has installation tasks on a node and is common to all the components like SDC, SDS, MDM, and LIA on various Linux distributions. All other roles call upon these tasks with the appropriate Ansible environment variable. The vars folder of this role also has dependency installations for different Linux distros. |

My favorite roles are installation-related, where the role task reduces the Ansible code required to automate by an order of magnitude. For example, this MDM installation role automates 140 lines of Ansible automation:

    - name: "Install and configure powerflex mdm"
      ansible.builtin.import_role:
        name: "powerflex_mdm"
      vars:
        powerflex_common_file_install_location: "/opt/scaleio/rpm"
        powerflex_mdm_password: password
        powerflex_mdm_state: present

Other tasks under the role have a similar definition. And following the Ansible module pattern, just flipping the powerflex_mdm_state parameter to absent uninstalls MDM. For the sake of completeness, we provided separate tasks for configure and uninstall as part of every role.
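For illustration, the uninstall case might look like the following sketch. It simply mirrors the install task above with the state flipped. The play wrapper and the mdm host group are assumptions borrowed from the inventory layout used later in this post, and the password value is a placeholder.

```yaml
---
# Sketch only: same role call as the install task, with the state set to absent.
- name: Uninstall PowerFlex MDM
  hosts: mdm
  tasks:
    - name: "Uninstall powerflex mdm"
      ansible.builtin.import_role:
        name: "powerflex_mdm"
      vars:
        powerflex_mdm_password: password   # placeholder; prefer Ansible Vault
        powerflex_mdm_state: absent        # 'present' installs, 'absent' uninstalls
```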

Complete PowerFlex deployment

Now here is where all the roles come together. A complete PowerFlex install playbook looks remarkably elegant like this:

    ---
    - name: "Install PowerFlex Common"
      hosts: all
      roles:
        - powerflex_common

    - name: Install and configure PowerFlex MDM
      hosts: mdm
      roles:
        - powerflex_mdm

    - name: Install and configure PowerFlex gateway
      hosts: gateway
      roles:
        - powerflex_gateway

    - name: Install and configure PowerFlex TB
      hosts: tb
      vars_files:
        - vars_files/connection.yml
      roles:
        - powerflex_tb

    - name: Install and configure PowerFlex Web UI
      hosts: webui
      vars_files:
        - vars_files/connection.yml
      roles:
        - powerflex_webui

    - name: Install and configure PowerFlex SDC
      hosts: sdc
      vars_files:
        - vars_files/connection.yml
      roles:
        - powerflex_sdc

    - name: Install and configure PowerFlex LIA
      hosts: lia
      vars_files:
        - vars_files/connection.yml
      roles:
        - powerflex_lia

    - name: Install and configure PowerFlex SDS
      hosts: sds
      vars_files:
        - vars_files/connection.yml
      roles:
        - powerflex_sds

    - name: Install PowerFlex SDR
      hosts: sdr
      roles:
        - powerflex_sdr

You can define your inventory based on the exact PowerFlex node setup:

    node0 ansible_host=10.1.1.1 ansible_port=22 ansible_ssh_pass=password ansible_user=root
    node1 ansible_host=10.x.x.x ansible_port=22 ansible_ssh_pass=password ansible_user=root
    node2 ansible_host=10.x.x.y ansible_port=22 ansible_ssh_pass=password ansible_user=root

    [mdm]
    node0
    node1

    [tb]
    node2

    [sdc]
    node2

    [lia]
    node0
    node1
    node2

    [sds]
    node0
    node1
    node2

Note: You can change the defaults of each component installation by updating the corresponding /defaults/main.yml, which looks like this for SDC:

    ---
    powerflex_sdc_driver_sync_repo_address: 'ftp://ftp.emc.com/'
    powerflex_sdc_driver_sync_repo_user: 'QNzgdxXix'
    powerflex_sdc_driver_sync_repo_password: 'Aw3wFAwAq3'
    powerflex_sdc_driver_sync_repo_local_dir: '/bin/emc/scaleio/scini_sync/driver_cache/'
    powerflex_sdc_driver_sync_user_private_rsa_key_src: ''
    powerflex_sdc_driver_sync_user_private_rsa_key_dest: '/bin/emc/scaleio/scini_sync/scini_key'
    powerflex_sdc_driver_sync_repo_public_rsa_key_src: ''
    powerflex_sdc_driver_sync_repo_public_rsa_key_dest: '/bin/emc/scaleio/scini_sync/scini_repo_key.pub'
    powerflex_sdc_driver_sync_module_sigcheck: 1
    powerflex_sdc_driver_sync_emc_public_gpg_key_src: ../../../files/RPM-GPG-KEY-powerflex_2.0.*.0
    powerflex_sdc_driver_sync_emc_public_gpg_key_dest: '/bin/emc/scaleio/scini_sync/emc_key.pub'
    powerflex_sdc_driver_sync_sync_pattern: .*
    powerflex_sdc_state: present
    powerflex_sdc_name: sdc_test
    powerflex_sdc_performance_profile: Compact
    file_glob_name: sdc
    i_am_sure: 1
    powerflex_role_environment:

Please look at the structure of this repo folder when you set up your Ansible project so that you don’t miss, for example, the different levels of variables. I personally can’t wait to redeploy my PowerFlex lab setup both on-prem and on AWS with these roles. I will consider sharing any insights from that in a separate blog.

Ansible collection for PowerScale v2.0, 2.1, and 2.2

Following are the enhancements for Ansible Collection for PowerScale v2.0, 2.1, and 2.2:

- PowerScale is known for its extensive multi-protocol support, and S3 protocol enables use cases like application access to object storage with S3 API and as a data protection target. The new s3_bucket Ansible module now allows you to do CRUD operations for S3 buckets on PowerScale. You can find examples here.

- New modules for more granular NFS settings:

  - nfs_default_settings

  - nfs_global_settings

  - nfs_zone_settings

- The Info module has also been updated to fetch the above NFS settings.

- Support for map_root and map_non_root in the existing NFS Export (nfs) module for root and non-root access of the share. New examples have been added to the NFS module examples.

- Enhanced AccessZone module with:

  - Ability to reorder Access Zone Auth providers using the priority parameter of the auth_providers field elements, as shown in the following example:

        auth_providers:
          - provider_name: "System"
            provider_type: "file"
            priority: 2
          - provider_name: "ansildap"
            provider_type: "ldap"
            priority: 1

- AD module

  - The ADS module for Active Directory integration is updated to support Service Principal Names (SPN). An SPN is a unique identifier for a service instance in a network, typically used within Windows environments and associated with the Kerberos authentication protocol. Learn more about SPNs here.

  - Adding an SPN from AD looks like this:

        - name: Add an SPN
          dellemc.powerscale.ads:
            onefs_host: "{{ onefs_host }}"
            api_user: "{{ api_user }}"
            api_password: "{{ api_password }}"
            verify_ssl: "{{ verify_ssl }}"
            domain_name: "{{ domain_name }}"
            spns:
              - spn: "HOST/test1"
            state: "{{ state_present }}"

  - As you would expect, state: absent will remove the SPN. There is also a command parameter that takes two values: check and fix.
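Here is a hedged sketch of those two follow-up operations. It assumes that removal is expressed with a state key on the individual SPN entry and that the command parameter is spelled spn_command in the module. Both are assumptions on my part, so please confirm them in the ADS module documentation. The connection values and domain name are placeholders.

```yaml
---
# Sketch only: 'spn_command' and the per-SPN 'state' key are assumed spellings.
- name: SPN housekeeping on PowerScale
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    onefs_host: "10.0.0.10"        # placeholder cluster address
    api_user: "admin"              # placeholder
    api_password: "change-me"      # placeholder; prefer Ansible Vault
    verify_ssl: false
    domain_name: "ad.example.com"  # hypothetical AD domain
  tasks:
    - name: Remove an SPN
      dellemc.powerscale.ads:
        onefs_host: "{{ onefs_host }}"
        api_user: "{{ api_user }}"
        api_password: "{{ api_password }}"
        verify_ssl: "{{ verify_ssl }}"
        domain_name: "{{ domain_name }}"
        spns:
          - spn: "HOST/test1"
            state: "absent"
        state: "present"

    - name: Check for missing SPNs
      dellemc.powerscale.ads:
        onefs_host: "{{ onefs_host }}"
        api_user: "{{ api_user }}"
        api_password: "{{ api_password }}"
        verify_ssl: "{{ verify_ssl }}"
        domain_name: "{{ domain_name }}"
        spn_command: "check"       # use "fix" to repair what the check reports
        state: "present"
```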

- Network Pool module

- The SmartConnect feature of PowerScale OneFS simplifies network configuration of a PowerScale cluster by enabling intelligent client connection load-balancing and failover capabilities. Learn more here.

- The Network Pool module has been updated to support specifying SmartConnect Zone aliases (DNS names). Here is an example:

      - name: Network pool Operations on PowerScale
        hosts: localhost
        connection: local
        vars:
          onefs_host: '10.**.**.**'
          verify_ssl: false
          api_user: 'user'
          api_password: 'Password'
          state_present: 'present'
          state_absent: 'absent'
          access_zone: 'System'
          access_zone_modify: "test"
          groupnet_name: 'groupnet0'
          subnet_name: 'subnet0'
          description: "pool Created by Ansible"
          new_pool_name: "rename_Test_pool_1"
          additional_pool_params_mod:
            ranges:
              - low: "10.**.**.176"
                high: "10.**.**.178"
            range_state: "add"
            ifaces:
              - iface: "ext-1"
                lnn: 1
              - iface: "ext-2"
                lnn: 1
            iface_state: "add"
            static_routes:
              - gateway: "10.**.**.**"
                prefixlen: 21
                subnet: "10.**.**.**"
          sc_params_mod:
            sc_dns_zone: "10.**.**.169"
            sc_connect_policy: "round_robin"
            sc_failover_policy: "round_robin"
            rebalance_policy: "auto"
            alloc_method: "static"
            sc_auto_unsuspend_delay: 0
            sc_ttl: 0
            aggregation_mode: "roundrobin"
            sc_dns_zone_aliases:
              - "Test"

Ansible collection for PowerStore v2.1

This release of Ansible collections for PowerStore brings updates to two modules to manage and operate NAS on PowerStore:

- Filesystem - support for clone, refresh and restore. Example tasks can be found here.

- NAS server - support for creation and deletion. You can find examples of various Ansible tasks using the module here.

Ansible collection for OpenManage Enterprise

Here are the features that have become available over the last three monthly releases of the Ansible Collections for OpenManage Enterprise.

V8.1

- Support for subject alternative names while generating certificate signing requests on OME.

- Create a user on iDRAC using custom privileges.

- Create a firmware baseline on OME with the filter option of no reboot required.

- Retrieve all server items in the output for ome_device_info.

- Enhancement to add detailed job information for ome_discovery and ome_job_info.

V8.2

- redfish_firmware and ome_firmware_catalog modules are enhanced to support IPv6 addresses.

- Module to support firmware rollback of server components.

- Support for retrieving alert policies, actions, categories, and message id information of alert policies for OME and OME Modular.

- ome_diagnostics module is enhanced to update changed flag status in response.

V8.3

- Module to manage OME alert policies.

- Support for RAID6 and RAID60 for module redfish_storage_volume.

- Support for reboot type options for module ome_firmware.

Conclusion

Ansible is the most extensively used automation platform for IT Operations, and Dell provides an exhaustive set of modules and roles to easily deploy and manage server and storage infrastructure on-prem as well as on Cloud. With the monthly release cadence for both storage and server modules, you can get access to our latest feature additions even faster. Enjoy coding your Dell infrastructure!

Author: Parasar Kodati, Engineering Technologist, Dell ISG

Q2 2023 Release for Ansible Integrations with Dell Infrastructure

Thu, 29 Jun 2023 11:21:49 -0000

Thanks to the quarterly release cadence of infrastructure as code integrations for Dell infrastructure, we have a great set of enhancements and improved functionality as part of the Q2 release. The Q2 release is all about data protection and data security. Data services that come with the ISG storage portfolio deliver huge value in terms of built-in data protection, security, and recovery mechanisms. This blog provides a summary of what’s new in the Ansible collections for Dell infrastructure:

Ansible Modules for PowerStore v2.0.0

- A new module to manage Storage Containers. More in the subsection below.

- An easier way to get replication_session details. The replication_session module is updated to use a new replication_group parameter, which can take either the name or ID of the group to get replication session details.

Support for PowerStore Storage Containers

A storage container is a logical grouping of vVols on PowerStore. Learn more here. In v2.0 of Ansible Collections for PowerStore, we are introducing a new module to create and manage storage containers from within Ansible. Let’s start with the list of parameters for the Storage Container task:

Parameter name | Type | Description |

storage_container_id | string | Unique identifier of the storage container. Mutually exclusive with storage_container_name |

storage_container_name | string | Name of the storage container. Mutually exclusive with storage_container_id. Mandatory for creating a storage container. |

new_name | string | The new name of the storage container |

quota | int | The total number of bytes that can be provisioned/reserved against this storage container. |

quota_unit | string | Unit of the quota |

storage_protocol | string | The type of Storage Container. |

high_water_mark | int | This is the percentage of the quota that can be consumed before an alert is raised. |

force_delete | bool | This option overrides the error and allows the deletion to continue in case there are any vVols associated with the storage container. |

state | string | The state of the storage container after execution of the task. Choices: ['present', 'absent'] |

storage_container_destination_state | str | The state of the storage container destination after execution of the task. Required while deleting the storage container destination. Choices: [present, absent] |

storage_container_destination | dict | Dict containing the remote system and remote storage container. |

-- remote_system | str | The name/id of the remote system |

-- remote_address | str | The IP address of the remote array |

-- user | str | Username for the remote array |

-- password | str | Password for the remote array |

-- validate_certs | bool | Whether or not to verify the SSL certificate |

-- port | int | Port of the remote array (443) |

-- timeout | int | Time after which the connection will get terminated (120) |

-- remote_storage_container | str | The unique name/id of the destination storage container on the remote array |

Here are some YAML snippet examples to use the new module:

Task | Example |

Get a storage container | - name: Get details of a storage container (let me call this snippet <basic-sc-details> for reference) |

Create a new storage container | <basic-sc-details> quota: 10 |

Delete a storage container | <basic-sc-details> state: 'absent' |

Create a storage container destination | <basic-sc-details> storage_container_destination: "Destination_container" |
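Since the snippets in the table are abbreviated, here is a fuller sketch of what the create and delete tasks might look like. The storage container parameters come from the parameter table above. The module name dellemc.powerstore.storage_container, the connection parameters (array_ip, user, password, validate_certs), and the quota_unit value are my assumptions based on the usual conventions of the PowerStore collection, and every value is a placeholder.

```yaml
---
# Sketch only: module name, connection parameters, and all values are assumptions or placeholders.
- name: Manage a PowerStore storage container
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    array_ip: "10.0.0.20"              # placeholder PowerStore management address
    user: "admin"                      # placeholder
    password: "change-me"              # placeholder; prefer Ansible Vault
    validate_certs: false
    container_name: "Ansible_storage_container_1"   # hypothetical name
  tasks:
    - name: Create a storage container with a quota and an alert threshold
      dellemc.powerstore.storage_container:
        array_ip: "{{ array_ip }}"
        user: "{{ user }}"
        password: "{{ password }}"
        validate_certs: "{{ validate_certs }}"
        storage_container_name: "{{ container_name }}"
        quota: 10
        quota_unit: "GB"
        high_water_mark: 60
        state: "present"

    - name: Delete the storage container even if vVols are still associated
      dellemc.powerstore.storage_container:
        array_ip: "{{ array_ip }}"
        user: "{{ user }}"
        password: "{{ password }}"
        validate_certs: "{{ validate_certs }}"
        storage_container_name: "{{ container_name }}"
        force_delete: true
        state: "absent"
```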

Ansible Modules for PowerFlex v1.7.0

- A new module to create and manage snapshot policies

- An enhanced replication_consistency_group module to orchestrate workflows, such as failover and failback, that are essential for disaster recovery.

- An enhanced SDC module to assign a performance profile and option to remove an SDC altogether.

Create and manage snapshot policies

If you want to refresh your knowledge, here is a great resource to learn all about snapshots and snapshot policy setup on PowerFlex. In this version of Ansible collections for PowerFlex, we are introducing a new module for snapshot policy setup and management from within Ansible.

Here are the parameters for the snapshot policy task in Ansible:

Parameter name | Type | Description |

snapshot_policy_id | str | Unique identifier of the snapshot policy |

snapshot_policy_name | str | Name of the snapshot policy |

new_name | str | The new name of the snapshot policy |

access_mode | str | Defines the access for all snapshots created with this snapshot policy |

secure_snapshots | bool | Defines whether the snapshots created from this snapshot policy will be secure and not editable or removable before the retention period is complete |

auto_snapshot_creation_cadence | dict | The auto snapshot creation cadence of the snapshot policy. |

-- time | int | |

-- unit | str | |

num_of_retained_snapshots_per_level | list | The number of snapshots per retention level. There are one to six levels, and the first level has the most frequent snapshots. |

source_volume | list of dict | The source volume details to be added or removed. |

-- id | str | |

-- name | str | |

-- auto_snap_removal_action | | |

-- detach_locked_auto_snapshots | bool | Whether to detach the locked auto snapshots during the removal of the source volume. |

-- state | str | State of the source volume |

pause | bool | Whether to pause or resume the snapshot policy |

state | str | State of the snapshot policy after execution of the task |

And some examples of how the task can be configured in a playbook:

Get details of a snapshot policy | - name: Get snapshot policy details using name (let me call this code block <basic-policy-details> for reference) |

Create a policy | <basic-policy-details> |

Delete a policy | <basic-policy-details> state: "absent" |

Add source volumes to a policy | <basic-policy-details> source_volume: |

Remove source volumes from a policy | <basic-policy-details> source_volume: |

Pause/resume a snapshot policy | <basic-policy-details> pause: True # False to resume |
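To show how the parameters fit together, here is a sketch of creating and then pausing a policy. The parameter names come from the table above. The module name dellemc.powerflex.snapshot_policy, the connection parameters, and the access_mode and unit values are my assumptions, so check them against the module documentation. All values are placeholders.

```yaml
---
# Sketch only: module name, connection parameters, and values are assumptions or placeholders.
- name: Manage a PowerFlex snapshot policy
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    pflex_host: "powerflex.example.com"   # placeholder gateway or manager address
    pflex_user: "admin"                   # placeholder
    pflex_password: "change-me"           # placeholder; prefer Ansible Vault
    policy_name: "hourly_policy"          # hypothetical policy name
  tasks:
    - name: Create a snapshot policy that takes a snapshot every hour
      dellemc.powerflex.snapshot_policy:
        hostname: "{{ pflex_host }}"
        username: "{{ pflex_user }}"
        password: "{{ pflex_password }}"
        validate_certs: false
        snapshot_policy_name: "{{ policy_name }}"
        access_mode: "READ_WRITE"
        secure_snapshots: false
        auto_snapshot_creation_cadence:
          time: 1
          unit: "Hour"
        num_of_retained_snapshots_per_level:
          - 20                             # one retention level keeping 20 snapshots
        state: "present"

    - name: Pause the snapshot policy
      dellemc.powerflex.snapshot_policy:
        hostname: "{{ pflex_host }}"
        username: "{{ pflex_user }}"
        password: "{{ pflex_password }}"
        validate_certs: false
        snapshot_policy_name: "{{ policy_name }}"
        pause: true                        # set to false to resume
        state: "present"
```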

Failover and failback workflows for consistency groups

Today Ansible collections for PowerFlex already has the replication consistency group module to create and manage consistency groups, and to create snapshots of these consistency groups. Now we are also adding workflows that are essential for disaster recovery. Here is what the playbook tasks look like for various DR tasks:

Task | Syntax |

Code block: <Access details and name of consistency group> | gateway_host: "{{gateway_host}}" |

Failover the RCG | - name: Failover the RCG rcg_state: 'failover' |

Restore the RCG | - name: Restore the RCG |

Switch over the RCG | - name: Switch over the RCG rcg_state: 'switchover' |

Synchronization of the RCG | - name: Synchronization of the RCG rcg_state: 'sync' |

Reverse the direction of replication for the RCG | - name: Reverse the direction of replication for the RCG rcg_state: 'reverse' |

Force switch over the RCG | - name: Force switch over the RCG rcg_state: 'switchover' force: true |
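Put together with the access details, a failover followed by a restore might look like the sketch below. The rcg_state values for failover come from the table above. The 'restore' value, the rcg_name parameter, the state parameter, and the connection parameter names are my assumptions (the table's own access block uses gateway_host), so treat this as a starting point and confirm against the module documentation.

```yaml
---
# Sketch only: connection parameters, rcg_name, and the 'restore' value are assumptions.
- name: Disaster recovery workflow for a replication consistency group
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    gateway_host: "powerflex.example.com"   # placeholder
    pflex_user: "admin"                     # placeholder
    pflex_password: "change-me"             # placeholder; prefer Ansible Vault
    rcg_name: "rcg_test"                    # hypothetical consistency group name
  tasks:
    - name: Failover the RCG
      dellemc.powerflex.replication_consistency_group:
        hostname: "{{ gateway_host }}"
        username: "{{ pflex_user }}"
        password: "{{ pflex_password }}"
        validate_certs: false
        rcg_name: "{{ rcg_name }}"
        rcg_state: "failover"
        state: "present"

    - name: Restore the RCG after the failover
      dellemc.powerflex.replication_consistency_group:
        hostname: "{{ gateway_host }}"
        username: "{{ pflex_user }}"
        password: "{{ pflex_password }}"
        validate_certs: false
        rcg_name: "{{ rcg_name }}"
        rcg_state: "restore"
        state: "present"
```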

Ansible Modules for PowerScale v2.0.0

This release for Ansible Collections for PowerScale has enhancements related to the theme of identity and access management which is fundamental to the security posture of a system. We are introducing a new module, user_mappings which corresponds to the user mappings feature of OneFS.

New module for user_mappings

Let’s see some examples of creating and managing user_mappings:

Common code block: <user-mapping-access> | dellemc.powerscale.user_mapping_rules: onefs_host: "{{onefs_host}}" verify_ssl: "{{verify_ssl}}" api_user: "{{api_user}}" api_password: "{{api_password}}" |

Get user mapping rules of a certain order | - name: Get a user mapping rule <user-mapping-access> order: 1 |

Create a mapping rule | - name: Create a user mapping rule <user-mapping-access> operator: "insert" options: break_on_match: false group: true groups: true user: true user1: user: "test_user" user2: user: "ans_user" state: 'present' |

Delete a rule | <user-mapping-access> order: 1 state: "absent" |

As part of this effort the Info module also has been updated to get all the user mapping rules and LDAPs configured with OneFS:

- name: Get list of user mapping rules <user-mapping-access> gather_subset: - user_mapping_rules

- name: Get list of ldap of the PowerScale cluster <user-mapping-access> gather_subset: - ldap

Filesystem and NFS module enhancements

The Filesystem module continues the theme of access control and now allows you to pass a new value called ‘wellknown’ for the Trustee type when setting Access Control for the file system. This option provides access to all users. Here is an example:

    - name: Create a Filesystem
      filesystem:
        onefs_host: "{{onefs_host}}"
        api_user: "{{api_user}}"
        api_password: "{{api_password}}"
        verify_ssl: "{{verify_ssl}}"
        path: "{{acl_test_fs}}"
        access_zone: "{{access_zone_acl}}"
        access_control_rights:
          access_rights: "{{access_rights_dir_gen_all}}"
          access_type: "{{access_type_allow}}"
          inherit_flags: "{{inherit_flags_object_inherit}}"
          trustee:
            name: 'everyone'
            type: "wellknown"
        access_control_rights_state: "{{access_control_rights_state_add}}"
        quota:
          container: True
        owner:
          name: '{{acl_local_user}}'
          provider_type: 'local'
        state: "present"

The NFS module can now handle unresolvable hosts with a new parameter called ignore_unresolvable_hosts, which can be set to True (ignore them) or False (error out).
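As a sketch of how that parameter might be used, the task below creates an export and tolerates a client name that does not resolve. Only ignore_unresolvable_hosts comes from this post. The other NFS parameters (path, access_zone, read_only_clients, client_state) are how I recall the module's interface, and the path, client name, and connection values are placeholders.

```yaml
---
# Sketch only: everything except ignore_unresolvable_hosts is an assumption or placeholder.
- name: NFS export with unresolvable hosts ignored
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    onefs_host: "10.0.0.10"      # placeholder cluster address
    api_user: "admin"            # placeholder
    api_password: "change-me"    # placeholder; prefer Ansible Vault
    verify_ssl: false
  tasks:
    - name: Create an NFS export without failing on unresolvable clients
      dellemc.powerscale.nfs:
        onefs_host: "{{ onefs_host }}"
        api_user: "{{ api_user }}"
        api_password: "{{ api_password }}"
        verify_ssl: "{{ verify_ssl }}"
        path: "/ifs/data/ansible_export"       # hypothetical path
        access_zone: "System"
        read_only_clients:
          - "client1.example.com"              # hypothetical host that may not resolve
        client_state: "present-in-export"
        ignore_unresolvable_hosts: true        # false makes the task error out instead
        state: "present"
```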

Ansible Modules for Dell Unity v1.7.0

V1.7 of Ansible collections for Dell Unity follows the theme of data protection as well. We are introducing a new module for data replication and recovery workflows that are key to disaster recovery. The new replication_session module allows you to manage data replication sessions between two Dell Unity storage arrays. You can also use the module to initiate DR workflows such as failover and failback. Let’s see some examples:

Common code block to access a replication session: <unity-replication-session> | dellemc.unity.replication_session: unispherehost: "{{unispherehost}}" username: "{{username}}" password: "{{password}}" validate_certs: "{{validate_certs}}" name: "{{session_name}}" |

Pause and resume a replication session | - name: Pause (or resume) a replication session <unity-replication-session> pause: True # (False to resume) |

Failover the source to target for a session | - name: Failover a replication session <unity-replication-session> failover_with_sync: True force: True |

Failback the current session (that is in a failover state) to go back to the original source and target replication sessions | - name: Failback to original replication session <unity-replication-session> failback: True force_full_copy: True |

Sync the target with the source | - name: Sync a replication session <unity-replication-session> failover_with_sync: True sync: True |

Delete or suspend a replication session | - name: Delete a replication session <unity-replication-session> state: "absent" |

Ansible Modules for PowerEdge (iDRAC and OME)

When it comes to PowerEdge servers, the openmanage Ansible collection is updated every month! In my Q1 release blog post, I covered releases up to v7.3. If you noticed, we started talking about Roles! To make the iDRAC tasks easy to manage and execute, we started grouping iDRAC tasks into appropriate Ansible Roles. Since v7.3, three (number of months in a quarter!) more releases happened, each one adding new Roles to the mix. For a roll up of features in the last three months, here are the details:

New roles in v7.4, v7.5, and v7.6:

- dellemc.openmanage.idrac_certificate - Role to manage the iDRAC certificates - generate CSR, import/export certificates, and reset configuration - for PowerEdge servers.

- dellemc.openmanage.idrac_gather_facts - Role to gather facts from the iDRAC Server.

- dellemc.openmanage.idrac_import_server_config_profile - Role to import iDRAC Server Configuration Profile (SCP).

- dellemc.openmanage.idrac_os_deployment - Role to deploy the specified operating system and version on the servers.

- dellemc.openmanage.idrac_server_powerstate - Role to manage the different power states of the specified device.

- dellemc.openmanage.idrac_firmware - Firmware update from a repository on a network share (CIFS, NFS, HTTP, HTTPS, FTP).

- dellemc.openmanage.redfish_firmware - Update a component firmware using the image file available on the local or remote system.

- dellemc.openmanage.redfish_storage_volume - Role to manage the storage volume configuration.

- dellemc.openmanage.idrac_attributes - Role to configure iDRAC attributes.

- dellemc.openmanage.idrac_bios - Role to modify BIOS attributes, clear pending BIOS attributes, and reset the BIOS to default settings.

- dellemc.openmanage.idrac_reset - Role to reset and restart iDRAC (iDRAC8 and iDRAC9 only) for Dell PowerEdge servers.

- dellemc.openmanage.idrac_storage_controller - Role to configure the physical disk, virtual disk, and storage controller settings on iDRAC9 based PowerEdge servers.

OME module enhancements

- Plugin OME inventory enhanced to support the environment variables for the input parameters.

- ome_template module enhanced to include job tracking.

Other enhancements

- redfish_firmware module is enhanced to include job tracking.

- Updated the idrac_gather_facts role to use jinja template filters.

Author: Parasar Kodati