Hybrid Kubernetes Clusters with PowerStore CSI

Fri, 26 Apr 2024 17:47:47 -0000


In today’s world and in the context of Kubernetes (K8s), hybrid can mean many things. For this blog I am going to use hybrid to mean running both physical and virtual nodes in a K8s cluster. Often, when we think of a K8s cluster of multiple hosts, there is an assumption that they should be the same type and size. While that simplifies the architecture, it may not always be practical or feasible. Let’s look at an example of using both physical and virtual hosts in a K8s cluster.

Necessity is the mother of invention

When you need to get things done, often you will find a way to do it. This happened on a recent project at Dell Technologies where I needed to perform some storage testing with Dell PowerStore on K8s, but I didn’t have enough physical servers in my environment for both the control plane and the workload. I knew that I wanted to run my performance workload on my physical servers, and because the control plane workload would be light, I opted to run the control plane on virtual machines (VMs). The twist is that I also wanted additional worker nodes, and I didn’t have enough physical servers for those either. The goal was to run my performance workload on physical servers and allow everything else to run on VMs.

Dell PowerStore CSI to the rescue!

The performance workload running on my physical hosts was also using Fibre Channel storage. That would complicate things for workloads running on virtual machines if I had to present the storage uniformly to all the hosts. However, using the features of Dell PowerStore CSI and Kubernetes, I don’t need to do that. I can simply present Dell PowerStore storage with Fibre Channel to my physical hosts and run my workload there.

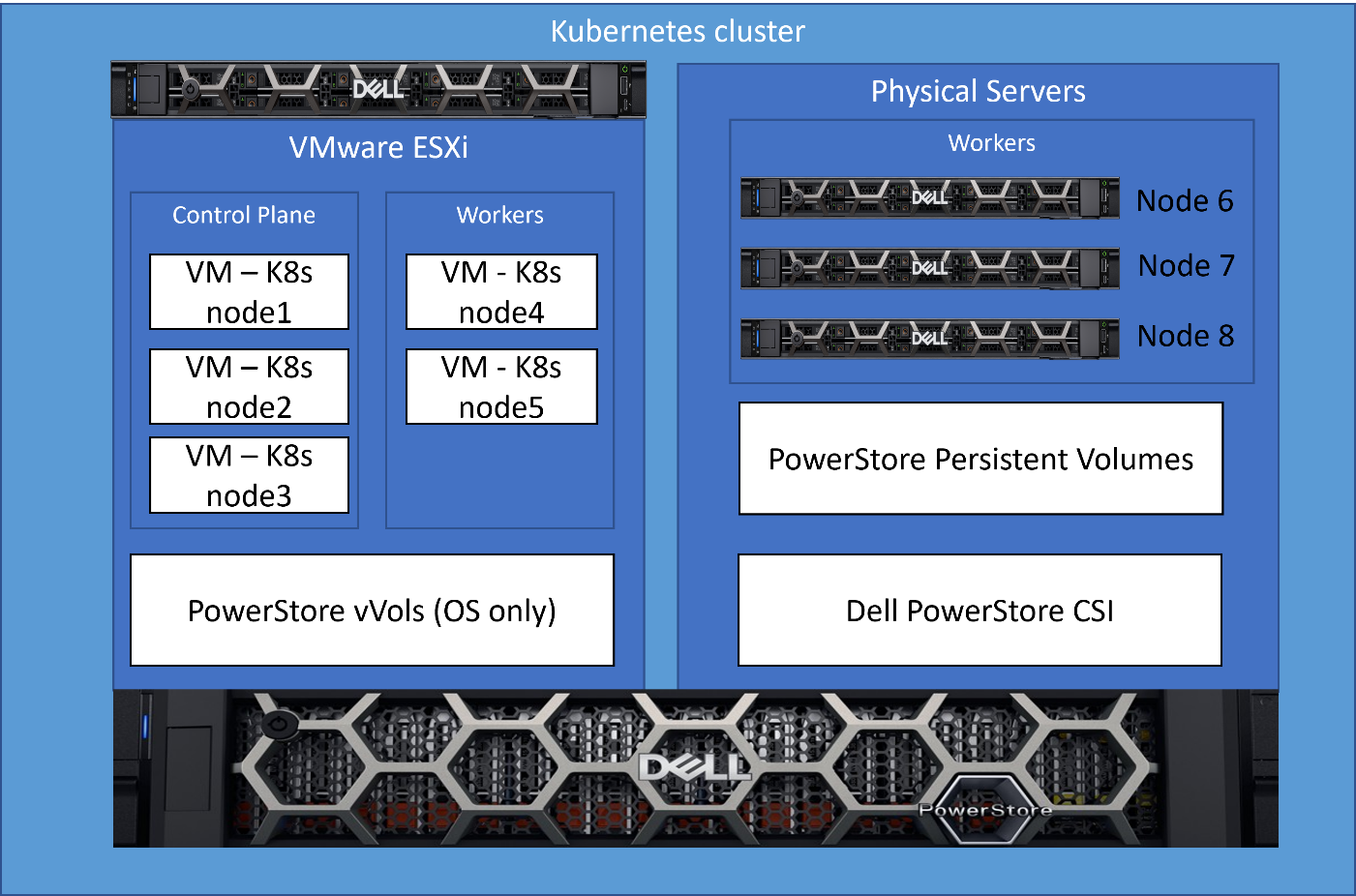

This diagram shows my infrastructure and key components. There is one physical server running VMware ESXi that hosts several VMs used for K8s nodes, and three other physical servers that run as physical nodes in the cluster.

What kind of mess is this?!?

As the reader, you’re probably thinking…what kind of hodge-podge maintenance nightmare is this? I have K8s nodes that aren’t all the same and then some hacked up solution to make it work?!? Well, it’s not a mess at all. Allow me to explain how it’s quite simple and elegant.

For those new to K8s, implementing something like this probably seems very complicated and hard to manage. After all, the workload should only run on the physical K8s nodes that are connected through Fibre Channel. Without K8s, Dell CSI, and the features they provide, it likely would be a mess of scripting and dependency checking.

An elegant solution!

In this solution, I combined the label and scheduling features of K8s with the node selection feature of the PowerStore CSI driver. The implementation is clean and easy to maintain, with no complicated scripts or configuration.

Step 1 – PowerStore CSI Driver configuration

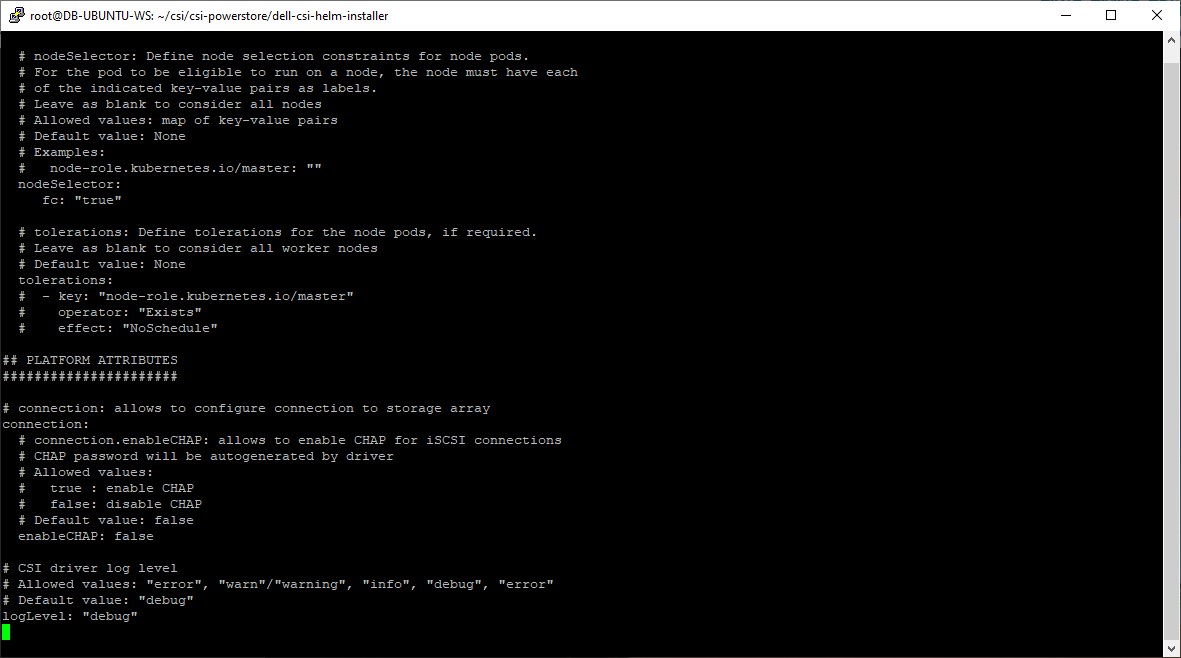

One of the features that the PowerStore CSI driver configuration supports is node selection: the ability to use K8s labels to select the nodes on which the K8s pods (in this case, the CSI driver pods) will run. As the figure shows, the driver configuration specifies that the PowerStore CSI driver should only run on nodes that carry the label “fc=true”. The label key and value can be anything; what matters is that the label applied to the nodes exactly matches the one in the driver configuration.

The following is an excerpt from the Dell PowerStore CSI configuration file showing how this is done.

This is a one-time configuration setting that is done during Dell CSI driver deployment.

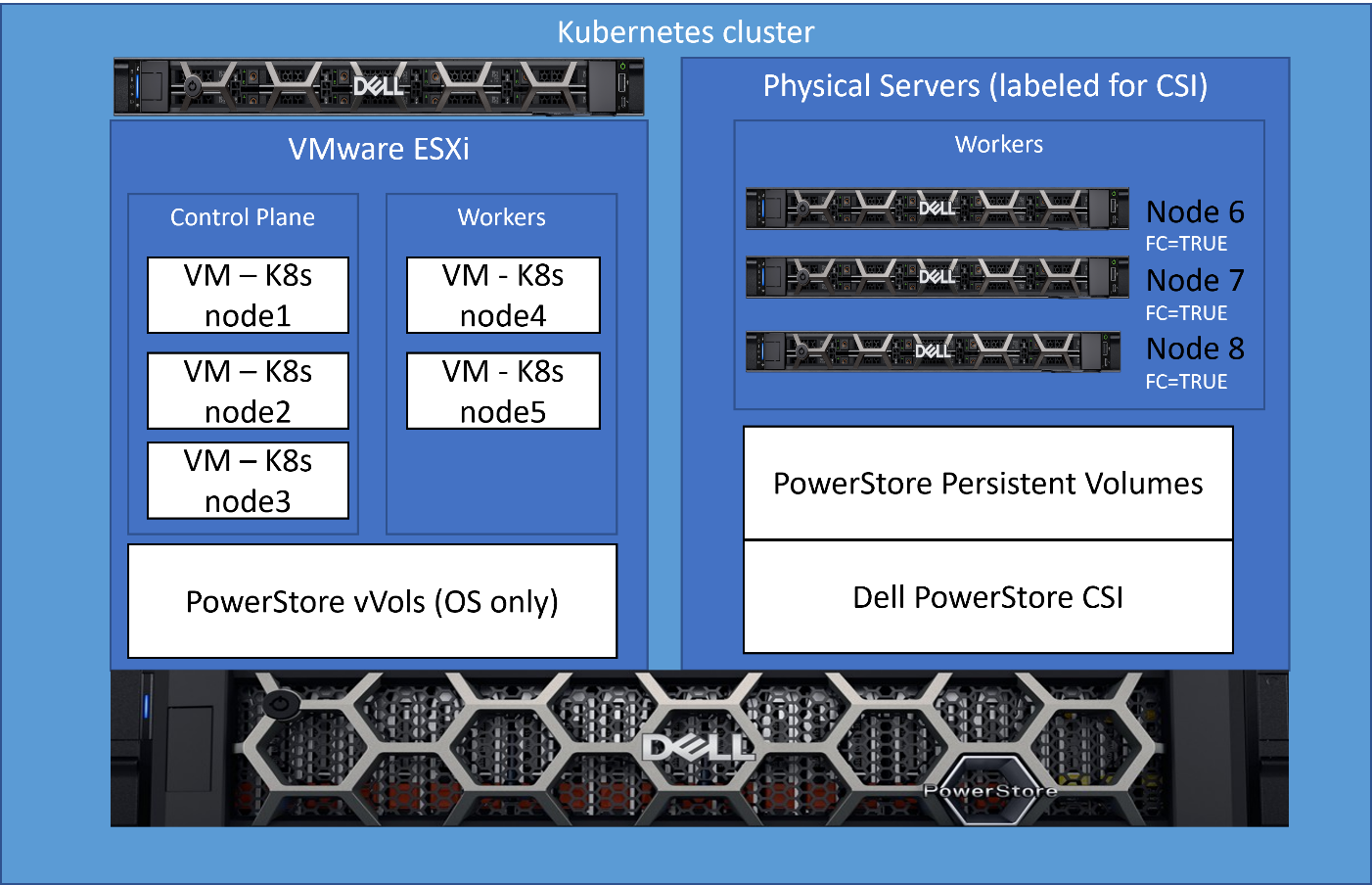
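To make the matching rule concrete, here is a minimal Python sketch of how a K8s node selector is evaluated: a node qualifies only if every key/value pair in the selector appears in the node's labels. This is a toy model for illustration, not the driver's or the scheduler's actual code, and the node names and inventory are hypothetical.

```python
def matches_node_selector(node_labels: dict, node_selector: dict) -> bool:
    """Return True when every selector key/value pair is present in the node's labels."""
    return all(node_labels.get(key) == value for key, value in node_selector.items())


# Hypothetical inventory: node names and labels are illustrative only.
nodes = {
    "physical-1": {"fc": "true"},
    "physical-2": {"fc": "true"},
    "physical-3": {"fc": "true"},
    "vm-worker-1": {},                # no label at all
    "vm-worker-2": {"fc": "false"},   # key present, value does not match
}

# The selector from the driver configuration described above.
driver_node_selector = {"fc": "true"}

# Nodes on which the CSI driver pods would be allowed to run.
driver_nodes = sorted(
    name for name, labels in nodes.items()
    if matches_node_selector(labels, driver_node_selector)
)
print(driver_nodes)
```

Note that a node with the right key but a different value ("fc=false") is excluded just like a node with no label, which is why the value on the node must match the configuration exactly.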

Step 2 – Label the physical nodes

The next step is to apply the label “fc=true” to the nodes that have a Fibre Channel configuration and where we want the CSI driver, and therefore the workload, to run. It’s as simple as running the command “kubectl label nodes <your-node-name> fc=true”. When this label is set, the CSI driver pods will only run on K8s nodes that carry this label.

The label only needs to be applied when you add new nodes to the cluster, and removed if you change the role of a node and take it out of this workload.
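If there are several physical nodes to label, the command can be generated per node. The following is a small Python sketch that only builds and prints the kubectl commands; it does not contact a cluster. The node names are hypothetical, and the helper functions are illustrative names, not part of any Dell or Kubernetes tooling. The trailing-dash form (`fc-`) is the standard kubectl syntax for removing a label.

```python
import shlex


def label_command(node_name: str, key: str = "fc", value: str = "true") -> list:
    """Build the kubectl argument list that applies key=value to a node."""
    return ["kubectl", "label", "nodes", node_name, f"{key}={value}"]


def unlabel_command(node_name: str, key: str = "fc") -> list:
    """Build the kubectl argument list that removes a label (trailing dash)."""
    return ["kubectl", "label", "nodes", node_name, f"{key}-"]


# Hypothetical names for the three physical nodes.
physical_nodes = ["physical-1", "physical-2", "physical-3"]

for node in physical_nodes:
    # Print the command instead of running it, so the sketch is safe to execute anywhere.
    print(shlex.join(label_command(node)))

# Taking a node out of the workload later would use the removal form.
print(shlex.join(unlabel_command("physical-3")))
```

To actually apply the labels, each argument list could be passed to `subprocess.run(..., check=True)` on a machine where kubectl is configured for the cluster.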

Step 3 – Let Kubernetes do its magic

Now, I leverage basic K8s functionality. Kubernetes resource scheduling evaluates the resource requirements for a pod and will only schedule on the nodes that meet those requirements. Storage volumes provided by the Dell PowerStore CSI driver are a dependency for my workload pods, and therefore, my workload will only be scheduled on K8s nodes that can meet this dependency. Because I’ve enabled the node selection constraint for the CSI driver only on physical nodes, they are the only nodes that can fill the PowerStore CSI storage dependency.

The result of this configuration is that the three physical nodes that I labeled are the only ones that will accept my performance workload. It’s a very simple solution that requires no complex scripting or configuration.
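The reasoning above can be sketched as a toy model in Python. It is a deliberate simplification of what the Kubernetes scheduler does, written only to show the dependency chain: a pod that needs a PowerStore volume is feasible only on nodes where the CSI driver runs, and the driver runs only on nodes labeled “fc=true”. The class names, node names, and pod names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    labels: dict = field(default_factory=dict)


@dataclass
class Pod:
    name: str
    needs_powerstore_volume: bool = False


# Assumption: mirrors the node selection setting of the CSI driver from Step 1.
DRIVER_NODE_SELECTOR = {"fc": "true"}


def driver_runs_on(node: Node) -> bool:
    """The CSI driver runs only on nodes whose labels satisfy the selector."""
    return all(node.labels.get(k) == v for k, v in DRIVER_NODE_SELECTOR.items())


def feasible_nodes(pod: Pod, nodes: list) -> list:
    """Names of the nodes that can satisfy the pod's storage dependency."""
    if not pod.needs_powerstore_volume:
        return [n.name for n in nodes]          # no storage constraint: any node
    return [n.name for n in nodes if driver_runs_on(n)]


cluster = [
    Node("physical-1", {"fc": "true"}),
    Node("physical-2", {"fc": "true"}),
    Node("physical-3", {"fc": "true"}),
    Node("vm-worker-1"),
    Node("vm-worker-2"),
]

perf_pod = Pod("perf-workload", needs_powerstore_volume=True)
other_pod = Pod("monitoring")

print(perf_pod.name, "->", feasible_nodes(perf_pod, cluster))
print(other_pod.name, "->", feasible_nodes(other_pod, cluster))
```

In this model the performance pod lands only on the labeled physical nodes, while a pod with no PowerStore dependency remains free to run anywhere, including the VMs.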

Here is that same architecture diagram showing the nodes that were labeled for the workload.

Kubernetes brings lots of exciting new capabilities that can provide elegant solutions to complex challenges. Our latest collaboration with Microsoft used this architecture. For complete details, see our latest joint white paper, Dell PowerStore with Azure Arc-enabled Data Services, which highlights performance and scale.

Also, for more information about Arc-enabled SQL Managed Instance and PowerStore, see:

- the Microsoft blog post: Performance benchmark of Azure Arc-enabled SQL Managed Instance

- the Microsoft digital events Microsoft Build and Azure Hybrid, Multicloud, and Edge Day

Author: Doug Bernhardt

Sr. Principal Engineering Technologist

Related Blog Posts

Exploring Amazon EKS Anywhere on PowerStore X – Part I

Wed, 19 Jan 2022 15:17:00 -0000


A number of years ago, I began hearing about containers and containerized applications. Kiosks started popping up at VMworld showcasing fun and interesting use cases, as well as practical uses of containerized applications. A short time later, my perception was that focus had shifted from containers to container orchestration and management or, simply put, Kubernetes. I got my first real hands-on experience with Kubernetes about 18 months ago when I got heavily involved with VMware’s Project Pacific and vSphere with Tanzu. The learning experience was great and it ultimately led to authoring a technical white paper titled Dell EMC PowerStore and VMware vSphere with Tanzu and TKG Clusters.

Just recently, a Product Manager made me aware of a newly released Kubernetes distribution worth checking out: Amazon Elastic Kubernetes Service Anywhere (Amazon EKS Anywhere). Amazon EKS Anywhere was preannounced at AWS re:Invent 2020 and announced as generally available in September 2021.

Amazon EKS Anywhere is a deployment option for Amazon EKS that enables customers to stand up Kubernetes clusters on-premises using VMware vSphere 7+ as the platform (bare metal platform support is planned for later this year). Aside from a vSphere integrated control plane and running vSphere native pods, the Amazon EKS Anywhere approach felt similar to the work I performed with vSphere with Tanzu. Control plane nodes and worker nodes are deployed to vSphere infrastructure and consume native storage made available by a vSphere administrator. Storage can be block, file, vVol, vSAN, or any combination of these. Just like vSphere with Tanzu, storage consumption, including persistent volumes and persistent volume claims, is made easy by leveraging the Cloud Native Storage (CNS) feature in vCenter Server (released in vSphere 6.7 Update 3). No CSI driver installation necessary.

Amazon EKS users will immediately gravitate towards the consistent AWS management experience in Amazon EKS Anywhere. vSphere administrators will enjoy the ease of deployment and integration with vSphere infrastructure that they already have on-premises. To add to that, Amazon EKS Anywhere is Open Source. It can be downloaded and fully deployed without software or license purchase. You don’t even need an AWS account.

I found PowerStore was a good fit for vSphere with Tanzu, especially the PowerStore X model, which has a built-in vSphere hypervisor, allowing customers to run applications directly on the same appliance through a feature known as AppsON.

The question that quickly surfaces is: What about Amazon EKS Anywhere on PowerStore X on-premises or as an Edge use case? It’s a definite possibility. Amazon EKS Anywhere has already been validated on VxRail. The AppsON deployment option in PowerStore 2.1 offers vSphere 7 Update 3 compute nodes connected by a vSphere Distributed Switch out of the box, plus support for both vVol and block storage. CNS will enable DevOps teams to consume vVol storage on a storage policy basis for their containerized applications, which is great for PowerStore because it boasts one of the most efficient vVol implementations on the market today. The native PowerStore CSI driver is also available as a deployment option. What about sizing and scale? Amazon EKS Anywhere deploys on a single PowerStore X appliance consisting of two nodes but can be scaled across four clustered PowerStore X appliances for a total of eight nodes.

As is often the case, I went to the lab and set up a proof-of-concept environment consisting of Amazon EKS Anywhere running on PowerStore X 2.1 infrastructure. In short, the deployment was wildly successful. I was up and running popular containerized demo applications in a relatively short amount of time. In Part II of this series, I will go deeper into the technical side, sharing some of the steps I followed to deploy Amazon EKS Anywhere on PowerStore X.

Author: Jason Boche

Twitter: (@jasonboche)

PowerStore validation with Microsoft Azure Arc-enabled data services updated to 1.25.0

Mon, 12 Feb 2024 20:04:34 -0000


Microsoft Azure Arc-enabled data services allow you to run Azure data services on-premises, at the edge, or in the cloud. Arc-enabled data services align with Dell Technologies’ vision, by allowing you to run traditional SQL Server workloads on Kubernetes, on your infrastructure of choice. For details about a solution offering that combines PowerStore and Microsoft Azure Arc-enabled data services, see the white paper Dell PowerStore with Azure Arc-enabled Data Services.

Dell Technologies works closely with partners such as Microsoft to ensure the best possible customer experience. We are happy to announce that Dell PowerStore has been revalidated with the latest version of Azure Arc-enabled data services, 1.25.0.

Deploy with confidence

One of the deployment requirements for Azure Arc-enabled data services is that you must deploy on one of the validated solutions. At Dell Technologies, we understand that customers want to deploy solutions that have been fully vetted and tested. Key partners such as Microsoft understand this too, which is why they have created a validation program to ensure that the complete solution will work as intended.

By working through this process with Microsoft, Dell Technologies can confidently say that we have deployed and tested a full end-to-end solution and validated that it passes all tests.

The validation process

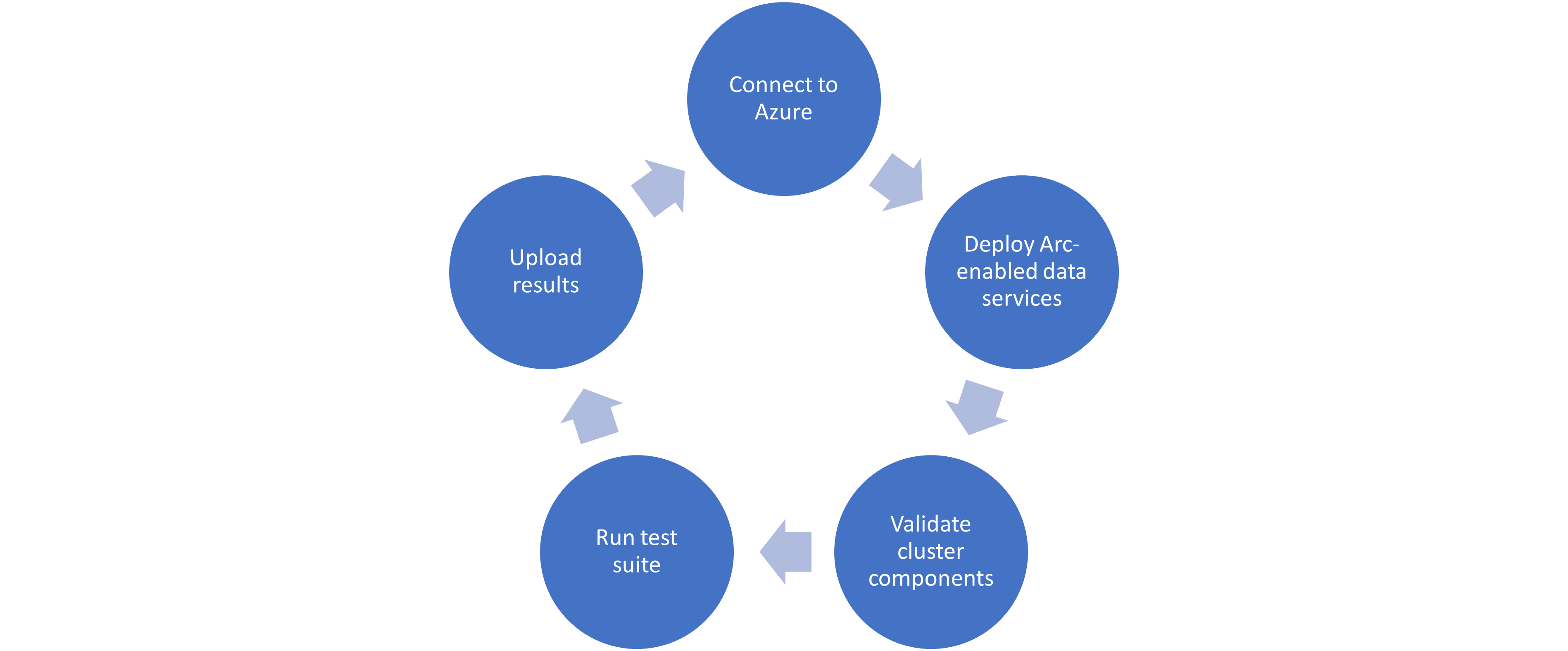

Microsoft has published tests for their continuous integration/continuous delivery (CI/CD) pipeline that partners and customers can run. For Microsoft to support an Arc-enabled data services solution, it must pass these tests. At a high level, these tests perform the following:

- Connect to an Azure subscription provided by Microsoft.

- Deploy the components for Arc-enabled data services, including SQL Managed Instance, using both direct and indirect connect modes.

- Validate Kubernetes (K8s), hosts, storage, container storage interface (CSI), and networking.

- Run Sonobuoy tests ranging from simple smoke tests to complex high-availability scenarios and chaos tests.

- Upload results to Microsoft for analysis.

When Microsoft accepts the results, they add the new or updated solution to their list of validated solutions. At that point, the solution is officially supported. This process is repeated as needed as new component versions are introduced. Complete details about the validation testing and links to the GitHub repositories are available here.

More to come

Stay tuned for more additions and updates from Dell Technologies to the list of validated solutions for Azure Arc-enabled data services. Dell Technologies is leading the way on hybrid solutions, proven by our work with partners such as Microsoft on these validation efforts. Reach out to your Dell Technologies representative for more information about these solutions and validations.

Author: Doug Bernhardt

Sr. Principal Engineering Technologist