
Deploying K3s


Preparing the deployment

To prepare the K3s deployment:

- Identify the appropriate version of the K3s binary file (for example, vX.YY.ZZ+k3s1) by consulting:

  - The "Installing SUSE Rancher on K3s" steps that are associated with your SUSE Rancher version.

  - "Releases" on the K3s GitHub download page.

- For the underlying operating system firewall service, do one of the following:

  - Enable and configure the necessary inbound ports. See K3s Resource Profiling.

  - Stop and completely disable the firewall service.
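If you keep the firewall enabled, the first option might look like the following sketch. It assumes that firewalld is the firewall service in use and that the port list below (commonly documented for a default K3s setup with Flannel VXLAN and embedded etcd) applies to your cluster. Verify the list against the K3s documentation for your version. The helper name `print_firewall_commands` is hypothetical, and the function only prints the commands so that you can review them before running anything.

```shell
# Hypothetical helper: print firewalld commands for a K3s server node.
# Assumed ports (verify for your K3s version and CNI):
#   6443/tcp      Kubernetes API server
#   10250/tcp     kubelet metrics
#   8472/udp      Flannel VXLAN
#   2379-2380/tcp embedded etcd (HA server nodes only)
K3S_PORTS="6443/tcp 10250/tcp 8472/udp 2379-2380/tcp"

print_firewall_commands() {
  for port in $K3S_PORTS; do
    echo "firewall-cmd --permanent --add-port=${port}"
  done
  # Reload so that the permanent rules become active.
  echo "firewall-cmd --reload"
}

print_firewall_commands
```

After reviewing the output, you could run the printed commands as root on each node.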

Installing K3s

To install the first K3s server on one of the nodes to be used for the Kubernetes control plane:

- Set the following variable with the version of K3s that you identified in Step 1 of Preparing the deployment:

K3s_VERSION=""

- Install the selected version of K3s with embedded etcd enabled:

curl -sfL https://get.k3s.io | \
INSTALL_K3S_VERSION=${K3s_VERSION} \
INSTALL_K3S_SKIP_SELINUX_RPM=true \
INSTALL_K3S_EXEC='server --cluster-init --write-kubeconfig-mode=644' \
sh -s -

Tip: The --cluster-init option enables embedded etcd instead of the default SQLite data store. Use etcd to address availability and to allow later scaling to a multi-node cluster.

- Monitor the progress of the installation:

watch -c "kubectl get deployments -A"

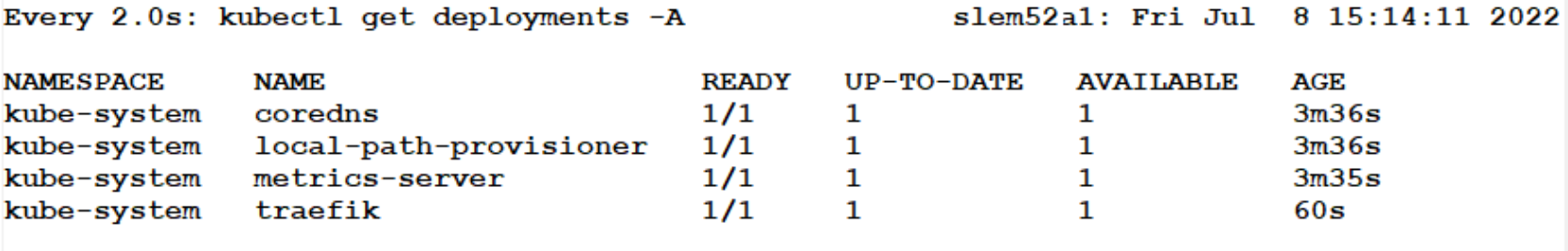

The K3s deployment is complete when elements of all the deployments (coredns, local-path-provisioner, metrics-server, and traefik) show at least "1" as "AVAILABLE," as shown in the following figure:

Figure 7. K3s complete deployment status

- Press Ctrl+c to exit the watch loop after all deployment pods are running.
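For unattended installations, the same completion check can be scripted instead of watched. The following is a minimal sketch, not part of the original procedure: the helper name `all_deployments_available` is hypothetical, and it assumes the column layout that `kubectl get deployments -A --no-headers` prints (NAMESPACE, NAME, READY, UP-TO-DATE, AVAILABLE, AGE).

```shell
# Hypothetical helper: read `kubectl get deployments -A --no-headers` output
# on stdin and succeed only if at least one deployment is listed and every
# deployment reports AVAILABLE >= 1 (assumed to be the fifth column).
all_deployments_available() {
  awk '
    { seen = 1; if ($5 + 0 < 1) bad = 1 }
    END { if (seen && !bad) exit 0; exit 1 }
  '
}

# Example usage (requires a running cluster):
#   until kubectl get deployments -A --no-headers | all_deployments_available; do
#     sleep 5
#   done
```

The loop in the usage comment polls every five seconds and ends when all deployments are available, which corresponds to the state shown in the figure.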

Deployment best practices

Follow these best practices to further optimize the deployment.

Availability

SUSE recommends a full HA K3s cluster for production workloads. The etcd key/value store (or database) requires that an odd number of servers (or control nodes) be allocated to the K3s cluster. In this case, add two additional control-plane servers for a total of three servers.
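The reason for the odd number can be illustrated with the usual quorum arithmetic for etcd: a cluster of N members needs floor(N/2) + 1 members to agree, so it tolerates N minus that number of failures. The following sketch uses hypothetical function names and only prints the arithmetic.

```shell
# quorum N: members that must agree in an etcd cluster of N members.
quorum() { echo $(( $1 / 2 + 1 )); }

# fault_tolerance N: members that can fail while quorum is still reachable.
fault_tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }

for n in 1 2 3 4 5; do
  echo "servers=$n quorum=$(quorum "$n") tolerated_failures=$(fault_tolerance "$n")"
done
```

Three servers tolerate one failure, and a fourth server does not raise that number, which is why an even server count adds cost without adding availability.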

- Deploy the same operating system on the new compute platform nodes, and then log in to the new nodes as root or as a user with sudo privileges.

- Run the following commands on each of the remaining control-plane nodes, setting the additional variables as appropriate for the cluster:

# Private IP preferred, if available
FIRST_SERVER_IP=""
# From /var/lib/rancher/k3s/server/node-token file on the first server
NODE_TOKEN=""
# Match the version of the first server
K3s_VERSION=""

- To install K3s, run:

curl -sfL https://get.k3s.io | \
INSTALL_K3S_VERSION=${K3s_VERSION} \
INSTALL_K3S_SKIP_SELINUX_RPM=true \
K3S_URL=https://${FIRST_SERVER_IP}:6443 \
K3S_TOKEN=${NODE_TOKEN} \
K3S_KUBECONFIG_MODE="644" \
INSTALL_K3S_EXEC='server' \
sh -

- Monitor the progress of the installation, as described in Step 3 of Installing K3s:

watch -c "kubectl get deployments -A"

- Press Ctrl+c to exit the watch loop after all deployment pods are running.

By default, the K3s server nodes are available to run non-control-plane workloads. In this case, the K3s default behavior is ideal for the SUSE Rancher server cluster because it does not require additional agent (worker) nodes to maintain a highly available SUSE Rancher server application.

Note: You can change this scenario to the normal Kubernetes default by adding a taint to each server node. For more information, see the official Kubernetes document Taints and Tolerations.
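As an illustration of the note above, the following sketch prints one `kubectl taint` command per server node. The taint key and effect are an assumption (they mirror the control-plane taint that kubeadm-based clusters apply), the helper name is hypothetical, and `server-1` through `server-3` are placeholder node names. Choose the taint that matches your own scheduling policy, and keep in mind that tainted server nodes no longer accept ordinary workloads, so agent nodes are then needed.

```shell
# Assumption: this key/effect mirrors the kubeadm control-plane default.
TAINT="node-role.kubernetes.io/control-plane:NoSchedule"

# Hypothetical helper: print one `kubectl taint` command per node name given.
print_taint_commands() {
  for node in "$@"; do
    echo "kubectl taint nodes ${node} ${TAINT}"
  done
}

# Placeholder node names; list the real ones with `kubectl get nodes`.
print_taint_commands server-1 server-2 server-3
```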

- (Optional) If agent nodes are required, run the following commands on each agent node to add it to the K3s cluster, using the same K3s_VERSION, FIRST_SERVER_IP, and NODE_TOKEN variable settings:

curl -sfL https://get.k3s.io | \
INSTALL_K3S_VERSION=${K3s_VERSION} \
INSTALL_K3S_SKIP_SELINUX_RPM=true \
K3S_URL=https://${FIRST_SERVER_IP}:6443 \
K3S_TOKEN=${NODE_TOKEN} \
K3S_KUBECONFIG_MODE="644" \
sh -
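Because the join commands fail in confusing ways when a variable is empty, a short pre-flight check before running the installer on additional server or agent nodes may help. The following is a sketch only: the function name `check_k3s_vars` is hypothetical, and the version pattern assumes the vX.YY.ZZ+k3sN form shown earlier, so other tag formats (for example, release candidates) would be rejected.

```shell
# Hypothetical pre-flight check for the variables used by the join commands.
# Arguments: K3s version, first server IP, node token.
check_k3s_vars() (
  version=$1
  first_server_ip=$2
  node_token=$3

  # Assumed version format: v<major>.<minor>.<patch>+k3s<n>
  if ! printf '%s\n' "$version" | grep -Eq '^v[0-9]+\.[0-9]+\.[0-9]+\+k3s[0-9]+$'; then
    echo "K3s_VERSION looks malformed: '${version}'" >&2
    exit 1
  fi
  if [ -z "$first_server_ip" ]; then
    echo "FIRST_SERVER_IP is empty" >&2
    exit 1
  fi
  if [ -z "$node_token" ]; then
    echo "NODE_TOKEN is empty" >&2
    exit 1
  fi
)

# Example usage before running the installer:
#   check_k3s_vars "${K3s_VERSION}" "${FIRST_SERVER_IP}" "${NODE_TOKEN}" || exit 1
```

The function body runs in a subshell, so a failed check returns a non-zero status without ending the calling shell session.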