PowerEdge MX Ethernet I/O modules support Fibre Channel (FC) connectivity in different ways:

- Direct Attach, also called F_Port

- NPIV Proxy Gateway (NPG)

- FIP Snooping Bridge (FSB)

- Internet Small Computer Systems Interface, or iSCSI

The method to implement depends on the existing infrastructure and application requirements. Consult your Dell representative for more information.

Configuring FC connectivity in SmartFabric mode is simple and is almost identical across the three connectivity types.

NPIV Proxy Gateway

NPIV Proxy Gateway (NPG) mode is the most common connectivity method. It is used when connecting PowerEdge MX to a storage area network (SAN) that hosts a storage array. NPG mode is simple to implement because it requires little configuration. The NPG switch converts FCoE from the server to native FC and aggregates the traffic into an uplink. The NPG switch is effectively transparent to the FC SAN, which “sees” the hosts themselves. This mode is supported only on the MX9116n FSE.

OS10 supports configuring N_Port mode on an Ethernet port that connects to converged network adapters (CNAs). A node port (N_Port) is a port on a network node that acts as a host or initiator device and is used in FC point-to-point or FC switched-fabric topologies. N_Port ID Virtualization (NPIV) allows multiple N_Port IDs to share a single physical N_Port.
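To illustrate how NPIV lets several logins share one physical N_Port, the following Python sketch models a fabric port handing out N_Port IDs. The first login on the link is a fabric login (FLOGI), and each additional login over the same link is a fabric discovery (FDISC) that receives its own ID. The class name, the WWPN strings, the domain and area values, and the sequential ID allocation are illustrative assumptions, not OS10 behavior.

```python
from dataclasses import dataclass, field


@dataclass
class FabricPort:
    """Hypothetical model of one fabric F_Port facing an NPG uplink."""
    domain: int                                   # assumed 8-bit domain ID of the FC switch
    area: int                                     # assumed 8-bit area ID for this port
    logins: dict = field(default_factory=dict)    # WWPN -> (login type, N_Port ID)

    def login(self, wwpn: str) -> int:
        """Return the 24-bit N_Port ID for wwpn, allocating one if needed."""
        if wwpn in self.logins:
            return self.logins[wwpn][1]
        # First login on the link is a FLOGI; later ones are FDISC (NPIV).
        kind = "FLOGI" if not self.logins else "FDISC"
        port_byte = len(self.logins)              # illustrative sequential allocation
        if port_byte > 0xFF:
            raise RuntimeError("no free N_Port IDs on this port")
        n_port_id = (self.domain << 16) | (self.area << 8) | port_byte
        self.logins[wwpn] = (kind, n_port_id)
        return n_port_id


uplink = FabricPort(domain=0x01, area=0x0A)
npg_id = uplink.login("20:00:aa:aa:aa:aa:aa:01")     # the NPG's own N_Port
host1_id = uplink.login("20:00:bb:bb:bb:bb:bb:01")   # server CNA proxied by the NPG
host2_id = uplink.login("20:00:cc:cc:cc:cc:cc:01")

for wwpn, (kind, fcid) in uplink.logins.items():
    print(f"{wwpn} {kind} 0x{fcid:06x}")
```

In this model, three distinct N_Port IDs end up sharing one physical link, which is why the SAN can "see" each host individually behind the gateway.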

In the deployment example shown below, MX9116n IOMs are configured as NPGs connected with pre-configured FC switches using port 1/1/44 on each MX9116n to allow connectivity to a Dell PowerStore 1000T storage array. Port-group 1/1/16 is configured as 4x 16 GFC to convert physical port 1/1/44 into 4x 16 GFC connections. MX9116n FSE universal ports 44:1 and 44:2 are used for FC connections and operate in N_Port mode to connect to the FC switches. The FC Gateway uplink type enables N_Port functionality on the MX9116n unified ports, converting FCoE traffic to native FC traffic and passing that traffic to a storage array through FC switches.
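The relationship between the port-group mode and the resulting interface names can be sketched in Python. The helper below is hypothetical: it only reproduces the naming used in this example (physical port 1/1/44 split into 44:1 through 44:4) and is not an OS10 command or API.

```python
def breakout_interfaces(physical_port: str, lanes: int) -> list[str]:
    """Return the sub-interface names created when a port is split into lanes."""
    if lanes < 1:
        raise ValueError("lanes must be at least 1")
    return [f"{physical_port}:{lane}" for lane in range(1, lanes + 1)]


# Port-group 1/1/16 in 4x 16 GFC mode splits physical port 1/1/44 four ways.
fc_ports = breakout_interfaces("1/1/44", 4)
print(fc_ports)

# Only the first two breakout interfaces carry FC uplinks in this example.
uplinks = fc_ports[:2]
print(uplinks)
```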

Direct attached (F_Port)

Direct Attached mode, or F_Port, is used when FC storage needs to be directly connected to the MX9116n FSE. The MX9116n supports the required FC services such as name server and zoning that are typical of standard FC switches.

This example demonstrates the direct attachment of the Dell PowerStore 1000T storage array. MX9116n FSE universal ports 44:1 and 44:2 are required for FC connections and operate in F_Port mode, which allows for an FC storage array to be connected directly to the MX9116n FSE. The uplink type enables F_Port functionality on the MX9116n unified ports, converting FCoE traffic to native FC traffic and passing that traffic to a directly attached FC storage array.

This mode is supported only on the MX9116n FSE.

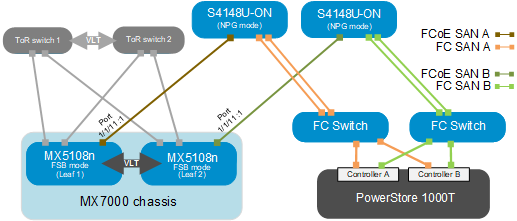

FCoE Transit or FIP Snooping Bridge

The FCoE Transit, or FIP Snooping Bridge (FSB), mode is used when connecting the Dell PowerEdge MX to an upstream switch, such as the Dell PowerSwitch S4148U-ON, that accepts FCoE and converts it to native FC. This mode is typically used when PowerEdge MX must connect to an existing FCoE infrastructure. In the following example, the PowerSwitch S4148U-ON receives FCoE traffic from the MX5108n Ethernet switch, converts that FCoE traffic to native FC, and passes that traffic to an external FC switch.

When operating in FSB mode, the switch snoops Fibre Channel over Ethernet (FCoE) Initialization Protocol (FIP) packets on FCoE-enabled VLANs, and discovers the following information:

- End nodes (ENodes)

- Fibre Channel forwarders (FCFs)

- Connections between ENodes and FCFs

- Sessions between ENodes and FCFs

Using the discovered information, the switch installs ACL entries that provide security and point-to-point link emulation. This mode is supported on both the MX9116n FSE and the MX5108n Ethernet Switch.
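As a rough illustration of how snooped information can drive filtering, the Python sketch below records FCFs and ENode sessions, then derives permit rules that allow FCoE frames only between an ENode's fabric-provided MAC address (FPMA) and the FCF it logged in to. The FPMA construction (a 24-bit FC-MAP followed by the 24-bit FC_ID, with 0x0EFC00 as the default FC-MAP) follows the FCoE standard. The class, method names, rule format, VLAN 30, and all MAC addresses are hypothetical and do not reflect how OS10 programs its ACLs.

```python
from dataclasses import dataclass, field

DEFAULT_FC_MAP = 0x0EFC00  # default FC-MAP value defined by the FCoE standard


def fpma(fc_id: int, fc_map: int = DEFAULT_FC_MAP) -> str:
    """Build a fabric-provided MAC address: FC-MAP (24 bits) + FC_ID (24 bits)."""
    value = (fc_map << 24) | (fc_id & 0xFFFFFF)
    raw = f"{value:012x}"
    return ":".join(raw[i:i + 2] for i in range(0, 12, 2))


@dataclass
class FipSnoopingTable:
    """Hypothetical per-VLAN state learned from snooped FIP frames."""
    vlan: int
    fcfs: set = field(default_factory=set)         # FCF MACs seen in advertisements
    sessions: dict = field(default_factory=dict)   # ENode MAC -> (FCF MAC, FPMA)

    def on_fcf_advertisement(self, fcf_mac: str) -> None:
        self.fcfs.add(fcf_mac)

    def on_login_accept(self, enode_mac: str, fcf_mac: str, fc_id: int) -> None:
        # Only trust accepts that come from an FCF discovered earlier.
        if fcf_mac not in self.fcfs:
            raise ValueError(f"login accept from unknown FCF {fcf_mac}")
        self.sessions[enode_mac] = (fcf_mac, fpma(fc_id))

    def permit_rules(self) -> list:
        """One permit rule per direction per session; all else is implicitly denied."""
        rules = []
        for fcf_mac, vn_mac in self.sessions.values():
            rules.append((self.vlan, vn_mac, fcf_mac))   # ENode -> FCF
            rules.append((self.vlan, fcf_mac, vn_mac))   # FCF -> ENode
        return rules


table = FipSnoopingTable(vlan=30)
table.on_fcf_advertisement("00:11:22:33:44:55")
table.on_login_accept("aa:bb:cc:00:00:01", "00:11:22:33:44:55", fc_id=0x010A01)
for rule in table.permit_rules():
    print(rule)
```

Restricting each session to one source and destination MAC pair is what gives the shared Ethernet segment the point-to-point character that FC expects.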

spanning-tree {vlan vlan-id priority priority-value}. Set the external switch to the lowest priority-value to force its assignment as the STP root bridge. For additional details on using STP with OS10 switches, see the Dell OS10 SmartFabric User Guide.

iSCSI

iSCSI is a transport layer protocol that embeds SCSI commands inside TCP/IP packets. TCP/IP transports the SCSI commands from the host (initiator) to the storage array (target). iSCSI traffic can run on a shared or dedicated network, depending on application performance requirements.

In the example below, MX9116n FSEs are connected to Dell PowerStore 1000T storage array controllers SP A and SP B through ports 1/1/41:1-2. If there are multiple paths from host to target, iSCSI can use a separate session for each path. Each path from the initiator to the target has its own session and connection. This connectivity method is often referred to as “port binding”.

Dell Technologies recommends that you use the port binding method for connecting the MX environment to the PowerStore 1000T storage array. Configure multiple iSCSI targets on the PowerStore 1000T and establish connectivity from the host initiators (MX compute sleds) to each of the targets. When Logical Unit Numbers (LUNs) are successfully created on the target, host initiators can connect to the target through an iSCSI session. For more information, see the Dell PowerStore T page.
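The one-session-per-path idea can be sketched in Python by pairing every host initiator port with every target portal. All addresses below are made-up placeholders rather than values from this deployment; 3260 is the standard iSCSI TCP port.

```python
from itertools import product

# Hypothetical host NIC addresses on one MX compute sled.
initiator_ports = ["192.168.10.11", "192.168.10.12"]
# Hypothetical target portals, one per storage controller (SP A and SP B).
target_portals = ["192.168.10.101:3260", "192.168.10.102:3260"]

# One session per (initiator port, target portal) path.
sessions = [
    {"session": n, "initiator": ini, "portal": tgt}
    for n, (ini, tgt) in enumerate(product(initiator_ports, target_portals), start=1)
]

for s in sessions:
    print(s)
```

With two initiator ports and two portals, this yields four independent sessions, so the loss of any single path leaves the others available to the host's multipathing layer.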

NVMe/TCP

OME-M 1.40.20 NVMe/TCP support

With the release of OME-M 1.40.20, the MX platform supports NVMe/TCP.

NVMe/TCP and SFSS solutions with PowerEdge MX require PowerEdge MX Baseline 22.03.00 (1.40.20), and are supported in Full Switch mode only. Converged FCoE and NVMe/TCP on the same IOM is not currently supported.

NVMe/TCP support on 25 GbE IOMs to include MX9116n FSE, MX7116n FEM, and MX5108n

NVMe/TCP and SFSS solutions with PowerEdge MX require PowerEdge MX Baseline 22.09.00 (2.00.00 and later) and are supported in SmartFabric mode and Full Switch mode.

When operating in SmartFabric Mode, the Storage - NVMe/TCP VLAN type for NVMe/TCP traffic is required.

Converged FCoE and NVMe/TCP on the same IOM is not currently supported.

NVMe/TCP support on 100 GbE solution with external Z9432F-ON FSE and MX8116n FEM, for operation at both 25 GbE and 100 GbE

NVMe/TCP and SFSS solutions with PowerEdge MX require PowerEdge MX Baseline 23.05.00 (2.10.00 and later) and are supported in Full Switch mode.

FCoE is not supported on the MX8116n based solution and therefore, converged FCoE and NVMe/TCP is not supported.

All network mezzanine cards supported on the MX8116n based solution are supported for NVMe/TCP and SFSS.
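The version and mode requirements listed in this section can be collected into a small lookup, sketched in Python below. The solution keys and function names are hypothetical, and treating the 1.40.20 release as applying to the 25 GbE IOMs is one reading of the text above rather than a confirmed statement. Confirm any real deployment against the support matrices.

```python
def parse_version(text: str) -> tuple:
    """Turn an OME-M version such as '2.10.00' into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


# (solution, minimum OME-M version, operating modes), as read from this section.
RULES = [
    ("25GbE", "1.40.20", {"Full Switch"}),
    ("25GbE", "2.00.00", {"SmartFabric", "Full Switch"}),
    ("100GbE", "2.10.00", {"Full Switch"}),
]


def nvme_tcp_supported(solution: str, ome_m_version: str, mode: str) -> bool:
    """Return True if any rule covers this solution, version, and mode."""
    version = parse_version(ome_m_version)
    return any(
        solution == rule_solution
        and version >= parse_version(minimum)
        and mode in modes
        for rule_solution, minimum, modes in RULES
    )


print(nvme_tcp_supported("25GbE", "1.40.20", "SmartFabric"))   # False
print(nvme_tcp_supported("25GbE", "2.00.00", "SmartFabric"))   # True
print(nvme_tcp_supported("100GbE", "2.00.00", "Full Switch"))  # False
```

The lookup does not model the FCoE restrictions: converged FCoE and NVMe/TCP on the same IOM remains unsupported regardless of version.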

For more information, refer to the following resources:

| Resource | Description |
| --- | --- |
| SFSS Deployment Guide | This document demonstrates the planning and deployment of SmartFabric Storage Software (SFSS) for NVMe/TCP. |
| NVMe/TCP Host/Storage Interoperability Simple Support Matrix | This document provides information about the NVMe/TCP Host/Storage Interoperability support matrix. |
| NVMe/TCP Supported Switches Simple Support Matrix | This document provides information about the NVMe/TCP Supported Switches Simple Support matrix. |