Assets

Archiving Snapshots with PowerScale OneFS SyncIQ

Mon, 08 Jul 2024 16:20:03 -0000

|Read Time: 0 minutes

Archiving Snapshots with PowerScale OneFS SyncIQ

PowerScale OneFS SyncIQ stands out as the ultimate choice for archiving snapshots efficiently and securely. This is vital for managing storage well and enhancing data lifecycles. By replicating snapshots in a sequential order, SyncIQ ensures that datasets stay consistent across clusters. Snapshots created on the target cluster reflect the data from the source cluster.

Configuring snapshot archiving on the target cluster is essential for the optimal use of OneFS SyncIQ, which requires a SnapshotIQ license. If snapshot archiving is not set up on the target cluster, only the most recent snapshot is available for failover. Yet, the expiration configuration allows you to set a limit on the number of snapshots. This management of snapshot numbers aids in the efficient utilization of storage resources, a vital aspect of storage management.

Introduction to Snapshot Archiving

Snapshot archiving plays a vital role in data protection. It allows organizations to store critical snapshots without crowding their main storage, which is crucial for proper administration and effective data compliance standards.

Benefits of Using OneFS SyncIQ

PowerScale OneFS SyncIQ brings several advantages to snapshot archiving. It delivers fast replication that grows with datasets. This is critical for expanding amounts of data.

SyncIQ excels at replicating unstructured data asynchronously, scaling as needed. This is vital for extensive backup and recovery needs. It supports different replication strategies, such as Initial, Incremental, and Differential, for efficient transfers. Also, it accommodates various deployment setups, ensuring it can match any organization's structure.

Administrators can set up job schedules based on recovery point objectives (RPOs) for consistent synchronization. For instance, customer data might sync every six hours, while HR data could sync every two days.

SnapshotIQ and SyncIQ Integration

Combining SnapshotIQ with SyncIQ offers a robust data protection solution. It ensures dependable storage management by seamlessly consolidating snapshots. These snapshots, powered by OneFS, are highly scalable. They take almost no time to create, thus does not hinder performance. This feature allows for an efficient record of snapshots over time.

SyncIQ stands out with its policy-driven approach to data replication. Thanks to its parallel architecture, it scales well as data volume increases. This ensures that Recovery Point Objectives (RPO) remain stable as data needs grow. You can customize the replication schedules to fit your organization's unique storage management requirements.

SyncIQ is notably adaptable, offering various deployment options for scenarios like disaster recovery, business continuity, and remote archiving. It is designed to work across different network types, employing both LAN and WAN optimizations for efficient data replication. This adaptability ensures effective replication regardless of the distance involved.

In summary, SnapshotIQ and SyncIQ integration deliver a thorough solution for data protection, streamlined storage management, and efficient snapshot consolidation.

Configuring SyncIQ Policies for Snapshot Archiving

Setting up SyncIQ policies properly is critical for efficient snapshot archiving. This task involves choosing the right source and target paths. It also includes enabling target cluster snapshot archiving when needed. With these steps, we can delve into making the most of SyncIQ's powerful tools.

Setting Source and Target Paths

Accurate setup of source and target paths is essential in SyncIQ. These paths show where data starts and where it's saved. This approach streamlines backup and recovery, making the process more efficient.

Enabling Target Snapshot Archiving

We recommended Activating target snapshot archiving for data safety and accessibility. It requires a SnapshotIQ license. For this feature, administrators may set a snapshot expiration to manage the snapshots maintained over time.

Detailed security settings enhance snapshot safety and SyncIQ replication. Using OneFS RBAC, organizations can tailor access levels, ensuring secure data management for backup and recovery processes.

Configuring Snapshot Alias pointers helps keep snapshots organized and readily accessible. The Snapshot Alias always points to the most recent SyncIQ snapshot. It updates with each policy run, maintaining current data links.

It's vital to keep snapshots in order, to maintain data integrity and support accurate data lifecycle management. With OneFS SyncIQ, the system sequentially replicates snapshots. This method creates an accurate record of changes across snapshots, aiding backup and recovery.

Admins have the option to set up recurring SyncIQ jobs with varied Recovery Point Objectives (RPO). This allows for different replication speeds based on the importance of the data. It means critical business data can be prioritized, enhancing data management efficiency.

The parallel architecture and scalable performance of PowerScale SyncIQ can manage vast amounts of data without splitting it into separate volumes. This keeps the replication process smooth and cohesive, essential for disaster recovery and ongoing operations.

In summary, OneFS SyncIQ's meticulous approach supports the reliable replication of snapshots. This method is crucial for data integrity and efficient lifecycle management. It offers strong protection for critical data, making it a key component in streamlined data management strategies.

Archiving Snapshots

Archiving snapshots through PowerScale OneFS SyncIQ is vital for keeping data safe and easily accessible. Here, we provide a detailed guide on archiving snapshots and offer solutions to common problems that you might face during the process.

To start, make sure you have a SnapshotIQ license on the target cluster. When you start syncing using SyncIQ policies, snapshots are created at the start and end of the job. However, for the smaller updates that follow, they're only taken once, at the end.

To start a SyncIQ policy with a specified snapshot, use the following command:

isi sync jobs start <policy-name> [--source-snapshot <snapshot>]

The command executes data replication based on the chosen SnapshotIQ snapshot since only SnapshotIQ snapshots can be selected. Snapshots created by a SyncIQ policy are incompatible. During the import of a snapshot for a policy, the system does not create a SyncIQ snapshot for that particular replication task.

When snapshots are replicated to the target cluster, by default, only the most recent snapshot is retained, and the naming convention on the target cluster is system generated. However, to prevent a single snapshot from being overwritten on the target cluster and the default naming convention, select the Enable capture of snapshots on the target cluster. When this checkbox is selected, specify a naming pattern and select the Snapshots do not expire option. Alternatively, specify a date for snapshot expiration. Limiting snapshots from expiring ensures that they are retained on the target cluster rather than overwritten when a newer snapshot is available.

By following the outlined strategies and best practices of OneFS SyncIQ, companies can reach highly effective snapshot archiving outcomes. This leads to superior data protection and backup operations. Ultimately, such an approach fosters efficient data management, which is critical for long-term gains and operational durability.

For replicating SnapshotIQ snapshots with SyncIQ, consider the following:

- Snapshot Replication: When SnapshotIQ snapshots take up too much space, they can be replicated to a remote cluster for backup or disaster recovery, freeing up space on the original cluster.

- Chronological Order: Snapshots must be replicated in chronological order to ensure the dataset, and its history is accurately copied from the source to the target cluster.

- Sequential Jobs: The replication process involves placing snapshots into sequential jobs, allowing the target cluster to create snapshots with deltas that reflect the most recent changes.

- Archival Process: It's important to configure and retain target snapshots before starting the archival process to avoid errors and ensure data integrity. After replication, the source cluster's snapshots can be deleted to create additional space.

For more information on snapshots and SyncIQ, see Introduction to SnapshotIQ and SyncIQ | Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations | Dell Technologies Info Hub.

Author: Aqib Kazi, Senior Principal Engineering Technologist

Dell PowerScale Source Based Routing Guide

Mon, 17 Jun 2024 19:34:13 -0000

|Read Time: 0 minutes

Welcome to the Dell PowerScale guide on Source-Based Routing (SBR). SBR is a cool way to ensure that data packets go where they need to, based on where they came from. This part of OneFS helps set up paths for data using unique routes for each subnet. We'll cover how to set up these pathways using firewall rules and ensure the right data gets priority.

Before the introduction of OneFS Release 9.8.0.0, SBR was not enabled by default. However, with the launch of OneFS Release 9.8.0.0, SBR has been set to enabled by default for all fresh PowerScale deployments, also known as Greenfield deployments. For existing OneFS clusters, referred to as Brownfield deployments, upgrading to OneFS Release 9.8.0.0 will retain the current SBR configuration. Additionally, OneFS Release 9.8.0.0 has expanded SBR's capabilities to include IPv6 support, whereas earlier versions were limited to IPv4 support only.

Understanding how SBR works is key to taking the best network action. Though SBR changes the default route, it respects the paths you set manually. It focuses on managing the data and responding to requests, not starting them, which can really change the way data moves out of your network.

SBR Overview

Source-Based Routing (SBR) uses the source IP address of IP packets to make smart routing choices. SBR's big plus is how it handles network traffic. To put it simply, it sends return traffic back the same way it came. This reduces pressure on other routes, allowing network traffic to flow better and more evenly. This smart approach to routing shows why knowing SBR's role is crucial for network traffic.

The term Source-Based Routing (SBR) implies that it directs traffic according to the originating IP address. Yet, in practice, SBR establishes default routes specific to each subnet. SBR operates by employing designated gateways for each individual subnet.

In the absence of an SBR configuration, the gateway with the highest priority, meaning the one with the smallest numerical value that can be accessed, is selected as the default route. When SBR is activated, if traffic originates from a subnet that the default gateway cannot access, firewall rules are implemented. These rules are incorporated using IPFW.

IPFW and SBR

IPFW, also known as IP firewall, is a firewall software component of some UNIX-like operating systems and plays a key role in Source-Based Routing (SBR). IPFW acts as a firewall and can also manage routing rules. In the context of SBR, it's used to dynamically create default routes for each subnet, which are essential for directing traffic efficiently within a network.

Although SBR and firewall functionalities are both part of the IPFW table, they operate independently within different partitions of the table, allowing for separate control over each feature. With SBR, IPFW helps direct traffic based on the source IP address. If a session is initiated from a source subnet, IPFW creates a rule that ensures that the traffic is routed through the appropriate gateway.

The process of adding IPFW rules is stateless, meaning it doesn't maintain any session information. This translates to creating per-subnet default routes based on the traffic's source IP address. While SBR does not eliminate the need for a default gateway, it effectively overrides the default gateway for traffic originating from subnets not covered by static routes.

In summary, IPFW is a versatile tool that provides firewall capabilities and supports SBR by managing routing rules to ensure that traffic is directed through the most appropriate pathways within a network.

Configuring source-based routing

In OneFS, to see if SBR is configured in your cluster, use the isi network external view command, which tells you whether SBR is set to True or False:

OneFS-1# isi network external view Client TCP Ports: 2049, 445, 20, 21, 80 Default Groupnet: groupnet0 SC Rebalance Delay: 0 Source Based Routing: False SC Server TTL: 900

On OneFS 8.x and newer releases, you can use both the CLI and WebUI to configure SBR. The CLI command is:

isi network external modify --sbr=[false|true]

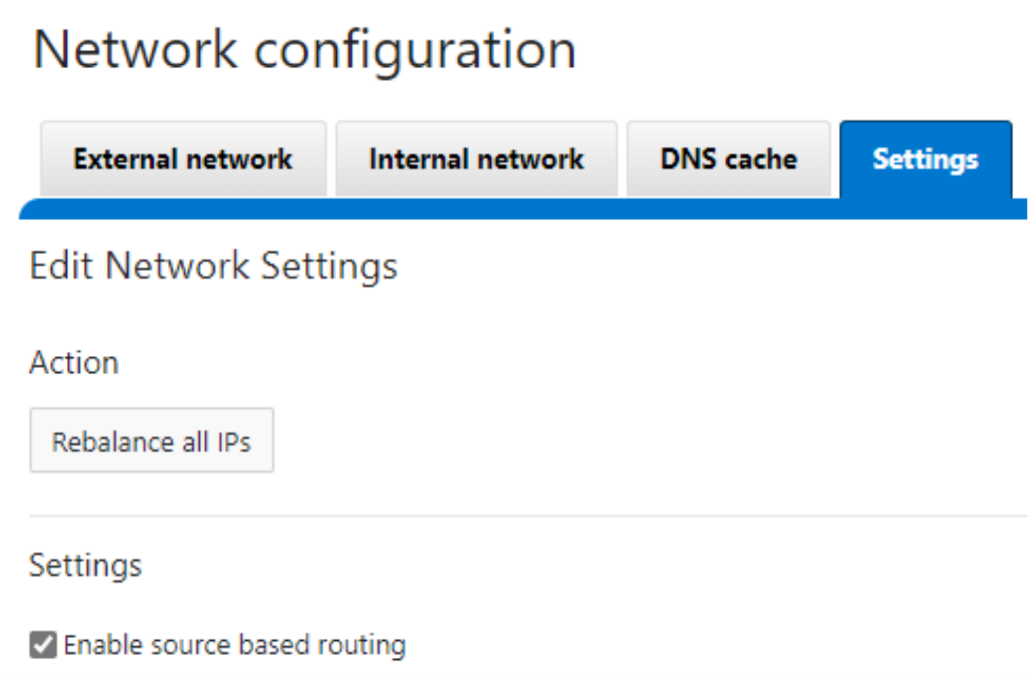

To configure SBR from the WebUI, navigate to Cluster Management > Network Configuration. Under the Settings tab, select the Enable source based routing checkbox, as shown here:

For more about SBR, including some important considerations, see Overview | Dell PowerScale: Network Design Considerations | Dell Technologies Info Hub.

Author: Aqib Kazi, Senior Principal Technical Marketing Engineer

Accelerating AI Innovation and Sustainability: The High-Density, High-Performance Dell PowerScale F910

Mon, 03 Jun 2024 16:31:19 -0000

|Read Time: 0 minutes

Accelerating AI Innovation and Sustainability: The High-Density, High-Performance Dell PowerScale F910

In the era of rapid technological advancement, enterprises face an unprecedented challenge: accelerating AI innovation while minimizing environmental impact. As the demand for AI processing power skyrockets, so does the associated energy consumption, thus leading to a significant increase in carbon footprint. Power consumption has become a recurring topic with the introduction of AI. The New York Times reports, A.I. Could Soon Need as Much Electricity as an Entire Country (nytimes.com). These challenges call for a modern solution bridging the gap between performance and sustainability.

Enter Dell’s PowerScale F910 platform, a high-density, high-performance node designed to accelerate AI innovation while dramatically reducing an enterprise’s carbon footprint. A cutting-edge platform, transforming the way organizations approach AI, reaching ambitious performance goals without compromising our environmental responsibility.

How much more density are we talking about? The F910 offers 20% more density per rack unit than the F710 which was released merely three months ago with extraordinary performance. Moreover, the F910 delivers density with performance, as it’s up to 2.2x faster to AI insights.

In this blog post, we’ll explore how our high-density, high-performance platform redefines the landscape of AI innovation and sustainability. Discover how your enterprise can leverage this groundbreaking technology to accelerate AI initiatives, drive business growth, and make a positive impact on the environment.

AI innovation and sustainability challenges

Many challenges arise as enterprises increasingly rely on AI to drive innovation and gain a competitive edge. One of the most significant challenges is the exponentially increased demand for AI processing power. As AI models become more sophisticated and data volumes explode, enterprises require hardware solutions to keep pace with these evolving needs.

However, with great processing power comes great energy consumption. The energy-intensive nature of AI workloads has led to a concerning rise in enterprises' carbon footprints. Data centers housing AI infrastructure consume vast amounts of electricity, often generated from non-renewable resources, contributing significantly to greenhouse gas emissions. The impact here is not only environment but it is also a risk to businesses as consumers, investors, and regulators who increasingly prioritize sustainability.

Moreover, AI hardware's high energy consumption translates into substantial operational costs for enterprises. The electricity required to power and cool AI systems can quickly eat into an organization’s bottom line, making it challenging to justify the ROI of AI initiatives. In fact, Forbes reports Generative AI Breaks The Data Center: Data Center Infrastructure And Operating Costs Projected To Increase To Over $76 Billion By 2028 (forbes.com).

To address these challenges, enterprises urgently need hardware platforms that deliver high performance while prioritizing energy efficiency. They require platforms that can handle the demanding workloads of AI innovation without compromising on sustainability goals. The industry is calling for a paradigm shift in AI hardware design that places equal emphasis on processing power and environmental responsibility.

Fortunately, as the AI factory, Dell Technologies has answered the call to balance AI innovation with sustainability, by developing the new PowerScale platform that tackles these challenges head-on. In leveraging cutting-edge technology and innovative design principles, our solution enables enterprises to accelerate AI innovation while significantly reducing their carbon footprint. In the next section, we’ll examine how our hardware platform revolutionizes the AI landscape and paves the way for a more sustainable future.

Introducing the PowerScale F910

At Dell, we understand the pressing demand for a hardware solution that can bridge the gap between AI innovation and sustainability. From this need, we’ve developed a cutting-edge hardware platform designed to address the challenges enterprises face in the AI landscape.

Our high-density, high-performance hardware platform is a testament to our commitment to push the boundaries of AI technology while prioritizing environmental responsibility. By leveraging state-of-the-art hardware and the technical innovations of OneFS, the F910 provides unparalleled processing power in a compact, energy-efficient package.

Overview

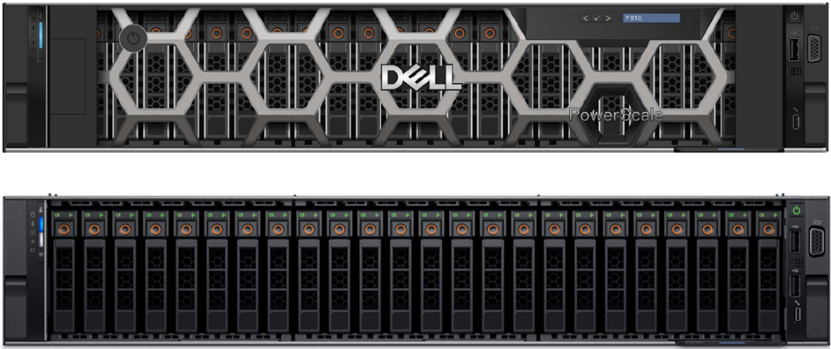

The F910's front panel has a bezel protecting the 24 NVMe SSD drives, as displayed in the image below.

The front panel has an LCD that offers a range of information and status updates. It also has an option to add a node to an existing PowerScale cluster. The LCD display is also used to view the node’s iDRAC IP address, MAC address, cluster name, asset tag, power output, and temperature information. Furthermore, the front panel has an LED for the status on the left. For example, a failed drive illuminates an amber LED.

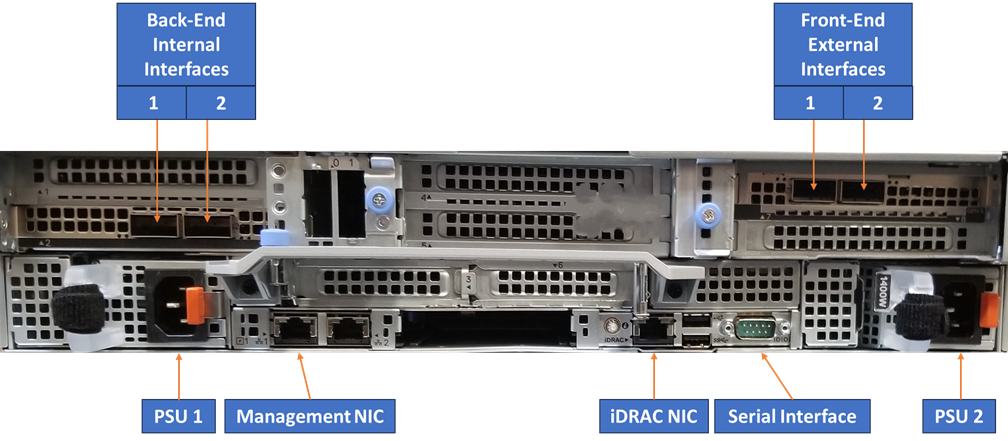

Moving on to the rear of the F910, we can see all the connections.

The power supplies are split across the backplane, allowing maximum airflow through the center of the chassis. The front-end and back-end network interfaces are on opposite sides, offering Ethernet connectivity. The other interfaces on the rear include iDRAC, serial, and management NICs.

The F910 nodes use NVMe SSDs. In a 6 RU rack configuration of 3 nodes, the F910 raw capacity spans a minimum of 276.5 TB to a maximum of 2.16 PB. The available drive capacities for the F910 are listed in the following table.

Non-SED Drive Capacities | SED-FIPS Drive Capacities | SED-Non-FIPS Drive Capacities |

3.84 TB | 3.84 TB | 15.36 TB |

7.68 TB | 7.68 TB | 30.72 TB QLC |

15.36 TB QLC | 15.36 TB QLC* | |

30.72 TB QLC | 30.72 TB QLC* |

*Future availability

For a new cluster deployment, a minimum of 3 F910 nodes is required to form a cluster. For existing cluster deployments, the F910 is node pool compatible with the F900, allowing the F910 to be added in a multiple of 1. If an existing cluster does not have any F900s, a minimum of 3 nodes is required to form a new node pool.

High density

In addition to being a high-performance platform, the F910 is the highest-density all-flash PowerScale node. We’ve engineered our system to pack not only an exceptional amount of computing power but also drive density, maximizing AI capabilities without the need for extensive physical infrastructure. This reduces the spatial footprint of AI hardware and minimizes the energy required for cooling and maintenance. See the table below to compare how the F910 compares to our other all-flash platforms.

Platform | Cluster Density per Rack Unit |

PowerScale F200 | 30.72 TB |

PowerScale F210 | 61 TB |

PowerScale F600 | 245 TB |

PowerScale F710 | 307 TB |

Isilon F810 | 231 TB |

PowerScale F910 | 360 TB |

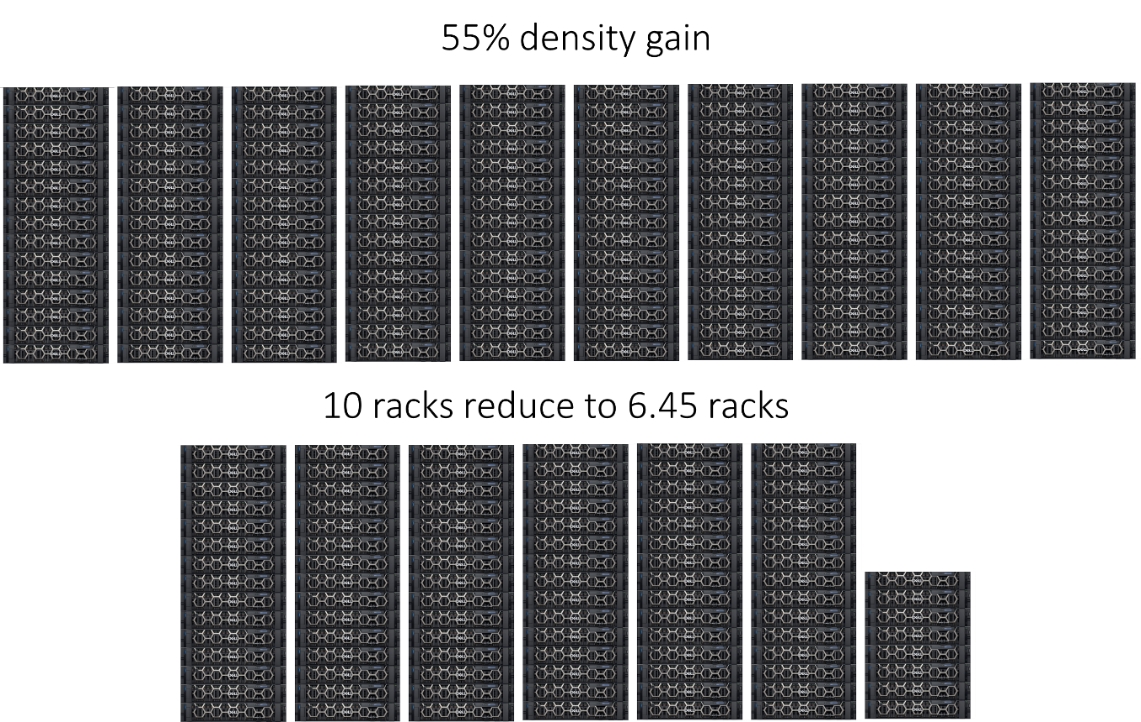

The F910 offers 20% more density compared to the F710. This number is further magnified if we compare the F910 to the F810, where the F910 offers a 55% gain! So now, let's characterize this into. What would look like in a data center? Let’s take a scenario where a data center currently has 10 racks, and each rack is filled to its current maximum capacity. With a 55% gain in density per rack unit, we can now fit 55% more computing and storage resources in each rack compared to the previous setup. If we take a simple scenario where a current data center has 10 racks, with 55% density gain, that's 10 ÷ 155% ≈ 6.45 racks.

In this scenario, the impact of a 55% gain in density per rack unit is still significant, as it allows the data center to reduce its rack consumption by 35%. Reducing physical space requirements can lead to cost savings, improved efficiency, and greater flexibility for future growth. Now, let’s visualize this in the image below.

Data reduction is enabled by default out of the box, further increasing the F910’s high density and capacity envelope. The inline data reduction process incorporates both compression and deduplication. When these elements are combined, they significantly boost the overall density of a cluster. As the density per Rack Unit (RU) increases, it decreases the Total Cost of Ownership (TCO) for the solution, reducing the carbon footprint.

High performance

The F910 achieves the ultimate performance envelope by taking advantage of hardware and software updates. PowerScale OneFS 9.7 and 9.8 introduced several performance-oriented updates.

Note: The PowerScale F910 requires OneFS 9.8 at minimum. The mention of OneFS 9.7 here is to understand the performance leap from previous OneFS releases.

OneFS 9.7 introduced a significant leap in performance by enhancing the following:

- Implementing a round-robin distribution strategy across thread groups has significantly reduced thread lock contention, increasing OneFS's overall efficiency and performance.

- Contention on turnstile locks has been reduced by increasing the value of Read-Write (RW) Lock retries, optimizing system performance.

- In the context of NVMe storage nodes, writing operations are strategically executed around the journal for newly allocated blocks, therefore maintaining high performance and preventing data processing and access delays.

OneFS 9.8 further pushes the pure software performance envelope, optimizing the OneFS 9.7 updates to further build on them. Additionally, OneFS 9.8 introduces enhancements to thread handling and lock management. Finally, general code updates have brought about a significant performance leap.

On the hardware front, the F910 leverages the PowerEdge platform for extreme performance. Powered by a dual-socket Intel® Xeon® Gold 6442Y Processor, it delivers higher core counts, faster memory speeds, and improved security features. The F910 features PCIe 5.0 technology, which doubles the bandwidth and reduces the latency of the previous generation, thus enabling faster data transfers and more efficient use of accelerators. Furthermore, the F910 takes advantage of the DDR5 RAM, offering greater speed and bandwidth. The table below summarizes the F910’s hardware specifications:

Attribute | PowerScale F910 Specification |

CPU | Dual Socket – Intel Sapphire Rapids 6442Y (2.6G/24C) |

Memory | Dual Rank DDR5 RDIMMs 512 GB (16 x 32 GB) |

Front-end networking | 2 x 100 GbE or 25 GbE |

Infrastructure networking | 2 x 100 GbE |

NVMe SSD drives | 24 |

The combination of hardware and software updates allows the F910 to tackle even the most challenging workloads, minimizing time for AI insights. Overall, the F910 delivers AI insights 2.2x faster than previous generations. Let’s take that into context for a minute. If learning an AI model takes 10 hours to complete, being able to do it 2.2 times faster means it would be finished in approximately 4.55 hours. That’s a significant improvement in efficiency and productivity. You’re saving approximately 5.45 hours that can be used for other AI models.

NVIDIA DGX SuperPOD certification

Dell PowerScale is the world’s first Ethernet-based storage solution certified on NVIDIA DGX SuperPOD. The collaboration between Dell and NVIDIA is designed to help customers achieve faster and more efficient AI storage. Dell PowerScale exceeds the performance benchmark requirements for DGX SuperPOD. Integrating PowerScale and DGX SuperPOD allows for handling vast amounts of data at unprecedented speeds, thereby accelerating the process of training AI models.

The PowerScale F910 expands the family of the already NVIDIA DGX SuperPOD-certified storage solution, while accelerating training times and balancing sustainability. For more on the PowerScale NVIDIA DGX SuperPOD certification, see h19971-powerscale-ethernet-superpod-certification.pdf (delltechnologies.com).

Services

Accelerate AI outcomes with help at every stage from Dell Professional services. Trusted experts work alongside you to align a winning strategy, validate data sets, implement, train and support GenAI models and close skills gaps to help you maintain secure and optimized F910 operations now and into the future. Furthermore, Dell services embeds sustainability throughout our services portfolio to proactively help customers approach the most pressing environmental challenges from sustainability to reducing waste.

Customer feedback

During our F910 beta program, partners and customers tested and validated the F910's performance. We wanted to confirm our performance and density gains in a real-world environment. More importantly, we wanted to know what the gains would be on an existing workload.

At the onset, after the first batch of tests, all of the initial feedback was consistent. To paraphrase,

“I know you all claimed a lot more performance, but I didn’t think it would be this good.”

We were ecstatic to hear that feedback. As the tests rolled on, more glowing reviews continued to come in.

In the end, John Lochausen, Technical Solutions Architect at World Wide Technology, summed up the sentiment best:

“We're hyper-focused on AI innovation in our AI Proving Ground, and the all-flash PowerScale F910 has exceeded our expectations. It doubles performance, reducing the power and energy costs required for the same workload, further advancing our customers' sustainability goals.”

Conclusion

In conclusion, what the F910 proves is that when it comes to AI innovation and sustainability, you can have your cake and eat it, too. Organizations can now accelerate AI innovation while accelerating sustainability. To summarize, the F910 checks all the modern AI workload requirements: High-Performance 🗹 High-Density 🗹 Power-Efficient 🗹 NVIDIA-Certified 🗹

For more on the PowerScale F910, see PowerScale All-Flash F210, F710, and F910 | Dell Technologies Info Hub

Author: Aqib Kazi, Senior Principal Engineering Technologist

Future-Proof Your Data: Airgap Your Business Continuity Dataset

Thu, 02 May 2024 17:49:35 -0000

|Read Time: 0 minutes

In today's digital age, the ransomware threat looms larger than ever, posing a significant risk to businesses worldwide. As someone deeply entrenched in the intricacies of data protection, I've seen firsthand how devastating data breaches can be. It's not just about the immediate loss of data: the ripple effects can disrupt business operations for weeks, if not months. Interestingly, while nearly 91% of organizations use some form of data backup, a staggering 72% have had to recover lost data from a backup in the past year. This highlights the critical need for robust business continuity plans that go beyond simple data backup.

With the rate of new business failures varying significantly by location, the importance of being prepared cannot be overstated. For instance, in the District of Columbia, 28% of businesses fail in their first year, partly due to inadequate data protection strategies. I aim to shed light on how a well-crafted business continuity dataset can protect your company against ransomware attacks. By understanding common pitfalls and leveraging PowerScale’s Cyber Protection Suite, businesses can ensure that their data remains secure and accessible in an airgap, even in the face of unforeseen disasters.

Understanding ransomware attacks and their impact on business continuity

Ransomware attacks are not just a temporary disruption but a significant threat to a company's ongoing ability to conduct business. As a cybersecurity enthusiast committed to sharing valuable insights, I've seen firsthand how these attacks can dismantle a business's operations overnight. It's crucial for organizations to understand the ramifications of ransomware and to implement strategies that mitigate these risks. Let’s delve into the cost of ransomware to businesses and examine some case studies that highlight the importance of being prepared.

The cost of ransomware to businesses

The financial implications of ransomware attacks on businesses are staggering. Beyond the demand for ransom payments, which can reach millions of dollars, hidden costs can be even more detrimental. I've seen businesses suffer from prolonged downtime, loss of productivity, and irreversible damage to their reputation. According to recent cybersecurity reports, businesses attacked by ransomware often face comprehensive audits, increased insurance premiums, and, in some cases, legal liabilities due to compromised data.

Moreover, the recovery process incurs significant IT expenditures, including forensic analysis, system upgrades, and employee training. With organizations being attacked by ransomware every 14 seconds, the urgency for resilient data protection strategies is clear. Understanding these costs underlines the importance of investing in proactive cybersecurity measures, underscoring that the expense of prevention pales when compared to the costs of recovery.

Recent ransomware attacks

In September 2023, the MGM Grand Hotel in Las Vegas, Nevada faced a significant ransomware attack that had widespread repercussions. The attack targeted MGM Resorts International properties across the U.S. Operational disruptions were severe, including the disabling of online reservation systems, malfunctioning digital room keys, and affected slot machines on casino floors. MGM Resorts’ websites were also taken down. The financial impact was substantial, costing the company approximately $100 million due to operational disruptions and recovery efforts. The perpetrators behind this attack were the ALPHV group (also known as BlackCat), which is believed to have links to the Russian government.

Also in September 2023, further down the Las Vegas Strip, Caesars Entertainment, one of the world’s largest casino companies, fell victim to a ransomware attack. The attack targeted their systems, resulting in unauthorized access to their network. The hackers exfiltrated data, including many customers’ driver’s licenses and Social Security numbers. Caesars confirmed the breach and subsequently paid a multi-million-dollar ransom to the cybercriminals.

These attacks serve as a stark reminder that even seemingly impenetrable organizations can fall victim to sophisticated cyber threats.

Key elements of effective business continuity plans

As I delve deeper into the significance of business continuity planning for mitigating ransomware risks, it's crucial to outline the key elements that make these plans effective. First and foremost, a comprehensive risk assessment stands at the foundation. It enables businesses to identify potential vulnerabilities and ransomware threats, ensuring that all angles are covered. Next, comes the development of a robust incident response plan. This detailed guide prepares organizations to react swiftly and efficiently during a ransomware attack, minimizing operational disruptions and financial losses.

Another essential component is securing data backups. These backups must be regularly stored in an airgap to prevent them from becoming ransomware targets. Communication plans must also be in place to ensure transparent and timely information sharing with all stakeholders during and after a ransomware incident. Lastly, continuous employee training on cybersecurity best practices helps strengthen the first line of defense against ransomware attacks.

Incorporating ransomware preparedness into business continuity plans

Incorporating ransomware preparedness into business continuity plans is not just an option — it's a necessity in today's digital age. To start, adjusting the incident response plan specifically for ransomware involves identifying the most critical assets and ensuring they are protected with the most robust defenses. This can include employing advanced threat detection tools and securing endpoints to limit the spread of an attack.

Next, understanding the specific recovery requirements for key operations is vital. It involves establishing clear recovery time objectives (RTOs) and recovery point objectives (RPOs) for all critical systems. This guides the priority of system restorations to ensure that the most essential services are brought back online first, to minimize the business impact.

Testing the business continuity plan against ransomware scenarios on a regular basis is indispensable. Simulated attacks provide invaluable insights into the effectiveness of the current strategy and reveal areas for improvement. It ensures that when a real attack occurs, the organization is not caught off guard.

How an airgap protects your data

An airgap, also known as an air wall or air gapping, is a network security measure employed on one or more computers to ensure that a secure computer network is physically isolated from unsecured networks, such as the public Internet or an unsecured local area network. This strategy seeks to ensure the total isolation of a given system electromagnetically, electronically, and physically. An airgapped computer or network has no network interface controllers connected to other networks, with a physical and logical airgap.

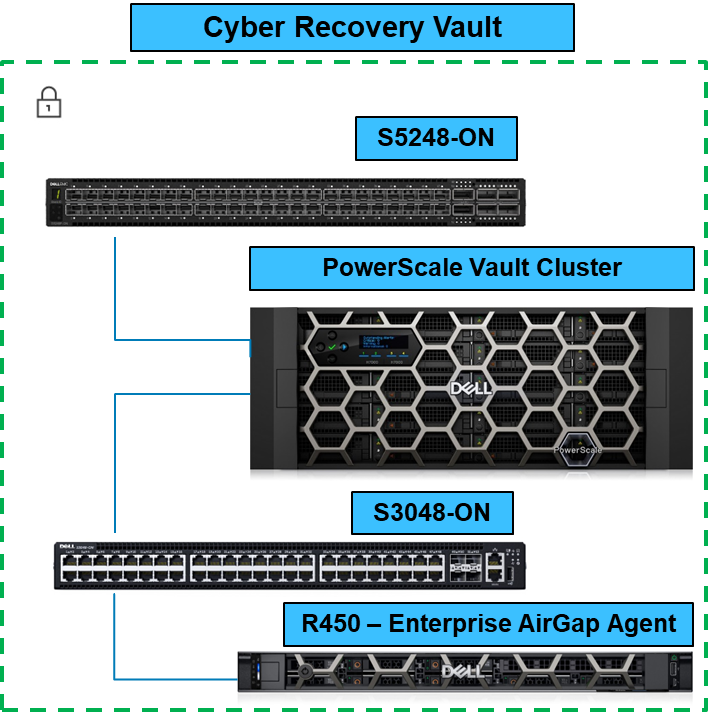

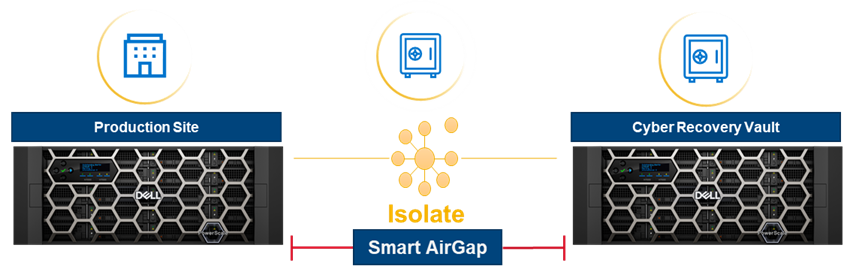

For example, data on a PowerScale cluster in an airgap is fully protected from ransomware attacks, because the malware does not have access to it. In the following figure, the PowerScale cluster resides in the Cyber Recovery Vault, which has no access to the outside world.

When the Business Continuity Dataset is copied to the PowerScale Vault Cluster, it is completely airgapped from the outside world. You can configure the solution to check for new dataset updates or simply have it remain as a vault.

In this blog, I explained how to airgap a PowerScale cluster. In future blogs, I’ll cover the entire PowerScale Cyber Protection Suite. For more information about the PowerScale Cyber Protection Suite, be sure to see PowerScale Cyber Protection Suite Reference Architecture | Dell Technologies Info Hub.

Author: Aqib Kazi Senior Principal Engineering Technologist

Dell PowerScale OneFS Introduction for NetApp Admins

Fri, 26 Apr 2024 17:09:51 -0000

|Read Time: 0 minutes

For enterprises to harness the advantages of advanced storage technologies with Dell PowerScale, a transition from an existing platform is necessary. Enterprises are challenged by how the new architecture will fit into the existing infrastructure. This blog post provides an overview of PowerScale architecture, features, and nomenclature for enterprises migrating from NetApp ONTAP.

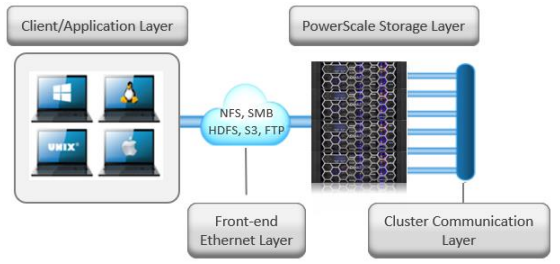

PowerScale overview

The PowerScale OneFS operating system is based on a distributed architecture, built from the ground up as a clustered system. Each PowerScale node provides compute, memory, networking, and storage. The concepts of controllers, HA, active/standby, and disk shelves are not applicable in a pure scale-out architecture. Thus, when a node is added to a cluster, the cluster performance and capacity increase collectively.

Due to the scale-out distributed architecture with a single namespace, single volume, single file system, and one single pane of management, the system management is far simpler than with traditional NAS platforms. In addition, the data protection is software-based rather than RAID-based, eliminating all the associated complexities, including configuration, maintenance, and additional storage utilization. Administrators do not have to be concerned with RAID groups or load distribution.

NetApp’s ONTAP storage operating system has evolved into a clustered system with controllers. The system includes ONTAP FlexGroups composed of aggregates and FlexVols across nodes.

OneFS is a single volume, which makes cluster management simple. As the cluster grows in capacity, the single volume automatically grows. Administrators are no longer required to migrate data between volumes manually. OneFS repopulates and balances data between all nodes when a new node is added, making the node part of the global namespace. All the nodes in a PowerScale cluster are equal in the hierarchy. Drives share data intranode and internode.

PowerScale is easy to deploy, operate, and manage. Most enterprises require only one full-time employee to manage a PowerScale cluster.

For more information about the PowerScale OneFS architecture, see PowerScale OneFS Technical Overview and Dell PowerScale OneFS Operating System.

Figure 1. Dell PowerScale scale-out NAS architecture

OneFS and NetApp software features

The single volume and single namespace of PowerScale OneFS also lead to a unique feature set. Because the entire NAS is a single file system, the concepts of FlexVols, shares, qtrees, and FlexGroups do not apply. Each NetApp volume has specific properties associated with limited storage space. Adding more storage space to NetApp ONTAP could be an onerous process depending on the current architecture. Conversely, on a PowerScale cluster, as soon as a node is added, the cluster is rebalanced automatically, leading to minimal administrator management.

NetApp’s continued dependence on volumes creates potential added complexity for storage administrators. From a software perspective, the intricacies that arise from the concept of volumes span across all the features. Configuring software features requires administrators to base decisions on the volume concept, limiting configuration options. The volume concept is further magnified by the impacts on storage utilization.

The fact that OneFS is a single volume means that many features are not volume dependent but, rather, span the entire cluster. SnapshotIQ, NDMP backups, and SmartQuotas do not have limits based on volumes; instead, they are cluster-specific or directory-specific.

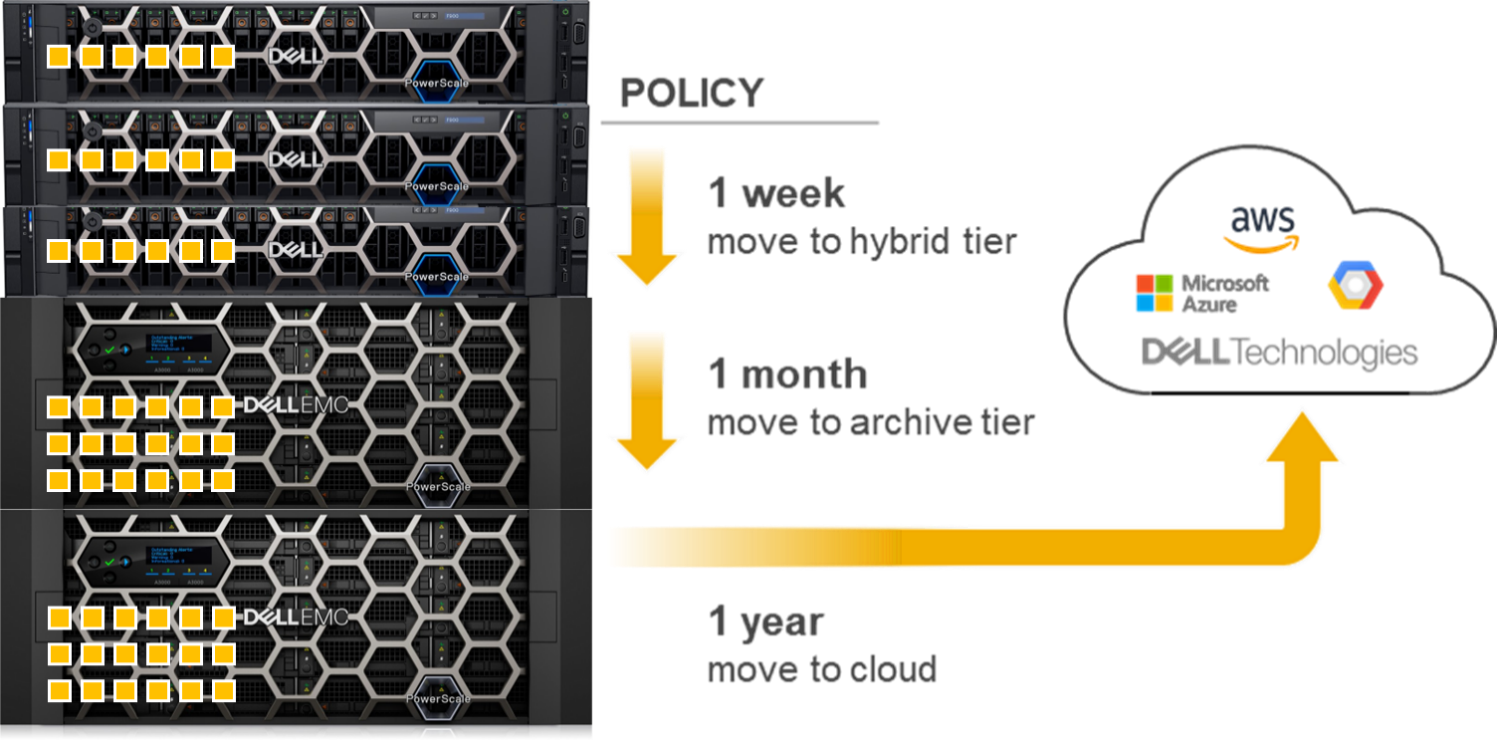

As a single-volume NAS designed for file storage, OneFS has the scalable capacity with ease of management combined with features that administrators require. Robust policy-driven features such as SmartConnect, SmartPools, and CloudPools enable maximum utilization of nodes for superior performance and storage efficiency for maximum value. You can use SmartConnect to configure access zones that are mapped to specific node performances. SmartPools can tier cold data to nodes with deep archive storage, and CloudPools can store frozen data in the cloud. Regardless of where the data is residing, it is presented as a single namespace to the end user.

Storage utilization and data protection

Storage utilization is the amount of storage available after the NAS system overhead is deducted. The overhead consists of the space required for data protection and the operating system.

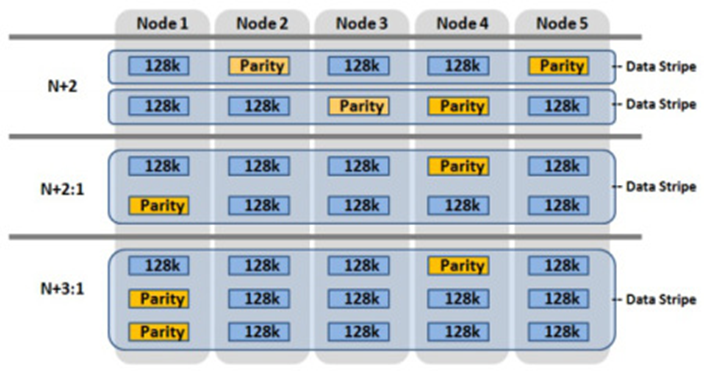

For data protection, OneFS uses software-based Reed-Solomon Error Correction with up to N+4 protection. OneFS offers several custom protection options that cover node and drive failures. The custom protection options vary according to the cluster configuration. OneFS provides data protection against more simultaneous hardware failures and is software-based, providing a significantly higher storage utilization.

The software-based data protection stripes data across nodes in stripe units, and some of the stripe units are Forward Error Correction (FEC) or parity units. The FEC units provide a variable to reformulate the data in the case of a drive or node failure. Data protection is customizable to be for node loss or hybrid protection of node and drive failure.

With software-based data protection, the protection scheme is not per cluster. It has additional granularity that allows for making data protection specific to a file or directory—without creating additional storage volumes or manually migrating data. Instead, OneFS runs a job in the background, moving data as configured.

Figure 2. OneFS data protection

OneFS protects data stored on failing nodes, or drives in a cluster through a process called SmartFail. During the process, OneFS places a device into quarantine and, depending on the severity of the issue, places the data on the device into a read-only state. While a device is quarantined, OneFS reprotects the data on the device by distributing the data to other devices.

NetApp’s data protection is all RAID-based, including NetApp RAID-TEC, NetApp RAID-DP, and RAID 4. NetApp only supports a maximum of triple parity, and simultaneous node failures in an HA pair are not supported.

For more information about SmartFail, see the following blog: OneFS Smartfail. For more information about OneFS data protection, see High Availability and Data Protection with Dell PowerScale Scale-Out NAS.

NetApp FlexVols, shares, and Qtrees

NetApp requires administrators to manually create space and explicitly define aggregates and flexible volumes. The concept of FlexVols, shares, and Qtrees are nonexistent in OneFS, as the file system is a single volume and namespace, spanning the entire cluster.

SMB shares and NFS exports are created through the web or command-line interface in OneFS. Both methods allow the user to create either within seconds with security options. SmartQuotas is used to manage storage limits, cluster-wide, across the entire namespace. They include accounting, warning messages, or hard limits of enforcement. The limits can be applied by directory, user, or group.

Conversely, ONTAP quota management is at the volume or FlexGroup level, creating additional administrative overhead because the process is more onerous.

Snapshots

The OneFS snapshot feature is SnapshotIQ, which does not have specified or enforced limits for snapshots per directory or snapshots per cluster. However, the best practice is 1,024 snapshots per directory and 20,000 snapshots per cluster. OneFS also supports writable snapshots. For more information about SnapshotIQ and writable snapshots, see High Availability and Data Protection with Dell PowerScale Scale-Out NAS.

NetApp Snapshot supports 255 snapshots per volume in ONTAP 9.3 and earlier. ONTAP 9.4 and later versions support 1,023 snapshots per volume. By default, NetApp requires a space reservation of 5 percent in the volume when snapshots are used, requiring the space reservation to be monitored and manually increased if space becomes exhausted. Further, the space reservation can also affect volume availability. The space reservation requirement creates additional administration overhead and affects storage efficiency by setting aside space that might or might not be used.

Data replication

Data replication is required for disaster recovery, RPO, or RTO requirements. OneFS provides data replication through SyncIQ and SmartSync.

SyncIQ provides asynchronous data replication, whereas NetApp’s asynchronous replication, which is called SnapMirror, is block-based replication. SyncIQ provides options for ensuring that all data is retained during failover and failback from the disaster recovery cluster. SyncIQ is fully configurable with options for execution times and bandwidth management. A SyncIQ target cluster may be configured as a target for several source clusters.

SyncIQ offers a single-button automated process for failover and failback with Superna Eyeglass DR Edition. For more information about Superna Eyeglass DR Edition, see Superna | DR Edition (supernaeyeglass.com).

SyncIQ allows configurable options for replication down to a specific file, directory, or entire cluster. Conversely, NetApp’s SnapMirror replication starts at the volume at a minimum. The volume concept and dependence on volume requirements continue to add management complexity and overhead for administrators while also wasting storage utilization.

To address the requirements of the modern enterprise, OneFS version 9.4.0.0 introduced SmartSync. This feature replicates file-to-file data between PowerScale clusters. SmartSync cloud copy replicates file-to-object data from PowerScale clusters to Dell ECS and cloud providers. Having multiple target destinations allows administrators to store multiple copies of a dataset across locations, providing further disaster recovery readiness. SmartSync cloud copy replicates file-to-object data from PowerScale clusters to Dell ECS and cloud providers. SmartSync cloud copy also pulls the replicated object data from a cloud provider back to a PowerScale cluster in file. For more information about SyncIQ, see Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations. For more information about SmartSync, see Dell PowerScale SmartSync.

Quotas

OneFS SmartQuotas provides configurable options to monitor and enforce storage limits at the user, group, cluster, directory, or subdirectory level. ONTAP quotas are user-, tree-, volume-, or group-based.

For more information about SmartQuotas, see Storage Quota Management and Provisioning with Dell PowerScale SmartQuotas.

Load balancing and multitenancy

Because OneFS is a distributed architecture across a collection of nodes, client connectivity to these nodes requires load balancing. OneFS SmartConnect provides options for balancing the client connections to the nodes within a cluster. Balancing options are round-robin or based on current load. Also, SmartConnect zones can be configured to have clients connect based on group and performance needs. For example, the Engineering group might require high-performance nodes. A zone can be configured, forcing connections to those nodes.

NetApp ONTAP supports multitenancy with Storage Virtual Machines (SVMs), formerly vServers and Logical Interfaces (LIFs). SVMs isolate storage and network resources across a cluster of controller HA pairs. SVMs require managing protocols, shares, and volumes for successful provisioning. Volumes cannot be nondisruptively moved between SVMs. ONTAP supports load balancing using LIFs, but configuration is manual and must be implemented by the storage administrator. Further, it requires continuous monitoring because it is based on the load on the controller.

OneFS provides multitenancy through SmartConnect and access zones. Management is simple because the file system is one volume and access is provided by hostname and directory, rather than by volume. SmartConnect is policy-driven and does not require continuous monitoring. SmartConnect settings may be changed on demand as the requirements change.

SmartConnect zones allow administrators to provision DNS hostnames specific to IP pools, subnets, and network interfaces. If only a single authentication provider is required, all the SmartConnect zones map to a default access zone. However, if directory access and authentication providers vary, multiple access zones are provisioned, mapping to a directory, authentication provider, and SmartConnect zone. As a result, authenticated users of an access zone only have visibility into their respective directory. Conversely, an administrator with complete file system access can migrate data nondisruptively between directories.

For more information about SmartConnect, see PowerScale: Network Design Considerations.

Compression and deduplication

Both ONTAP and OneFS provide compression. The OneFS deduplication feature is SmartDedupe, which allows deduplication to run at a cluster-wide level, improving overall Data Reduction Rate (DRR) and storage utilization. With ONTAP, the deduplication is enabled at the aggregate level, and it cannot cross over nodes.

For more information about OneFS data reduction, see Dell PowerScale OneFS: Data Reduction and Storage Efficiency. For more information about SmartDedupe, see Next-Generation Storage Efficiency with Dell PowerScale SmartDedupe.

Data tiering

OneFS has integrated features to tier data based on the data’s age or file type. NetApp has similar functionality with FabricPools.

OneFS SmartPools uses robust policies to enable data placement and movement across multiple types of storage. SmartPools can be configured to move data to a set of nodes automatically. For example, if a file has not been accessed in the last 90 days, in can be migrated to a node with deeper storage, allowing admins to define the value of storage based on performance.

OneFS CloudPools migrates data to a cloud provider, with only a stub remaining on the PowerScale cluster, based on similar policies. CloudPools not only tiers data to a cloud provider but also recalls the data back to the cluster as demanded. From a user perspective, all the data is still in a single namespace, irrespective of where it resides.

Figure 3. OneFS SmartPools and CloudPools

ONTAP tiers to S3 object stores using FabricPools.

For more information about SmartPools, see Storage Tiering with Dell PowerScale SmartPools. For more information about CloudPools, see:

- Dell PowerScale: CloudPools and Amazon Web Services

- Dell PowerScale: CloudPools and Amazon Web Services

- Dell PowerScale: CloudPools and Alibaba Cloud

- Dell PowerScale: CloudPools and Google Cloud

- Dell PowerScale: CloudPools and ECS

Monitoring

Dell InsightIQ and Dell CloudIQ provide performance monitoring and reporting capabilities. InsightIQ includes advanced analytics to optimize applications, correlate cluster events, and accurately forecast future storage needs. NetApp provides performance monitoring and reporting with Cloud Insights and Active IQ, which are accessible within BlueXP.

For more information about CloudIQ, see CloudIQ: A Detailed Review. For more information about InsightIQ, see InsightIQ on Dell Support.

Security

Similar to ONTAP, the PowerScale OneFS operating system comes with a comprehensive set of integrated security features. These features include data at rest and data in flight encryption, virus scanning tool, WORM SmartLock compliance, external key manager for data at rest encryption, STIG-hardened security profile, Common Criteria certification, and support for UEFI Secure Boot across PowerScale platforms. Further, OneFS may be configured for a Zero Trust architecture and PCI-DSS.

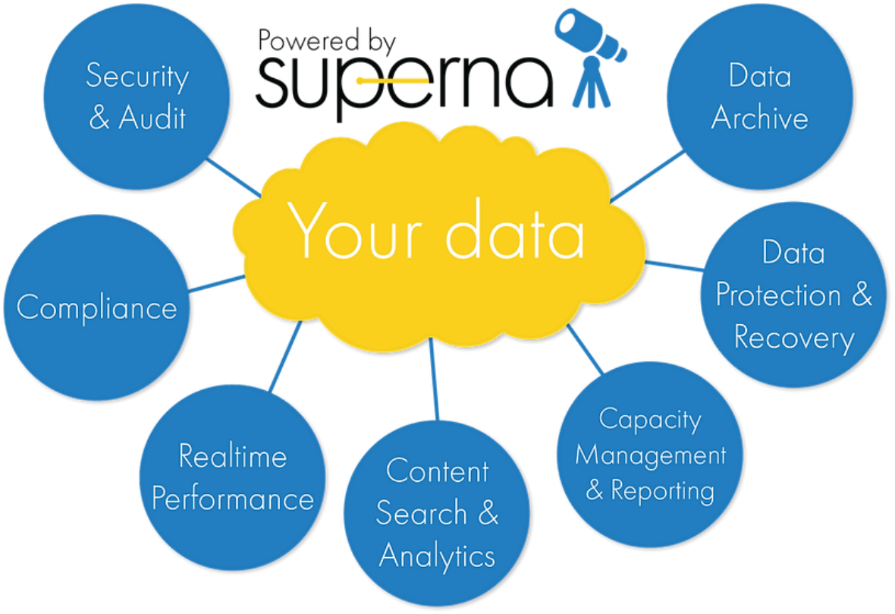

Superna security

Superna exclusively provides the following security-focused applications for PowerScale OneFS:

- Ransomware Defender: Provides real-time event processing through user behavior analytics. The events are used to detect and stop a ransomware attack before it occurs.

- Easy Auditor: Offers a flat-rate license model and ease-of-use features that simplify auditing and securing PBs of data.

- Performance Auditor: Provides real-time file I/O view of PowerScale nodes to simplify root cause of performance impacts, assessing changes needed to optimize performance and debugging user, network, and application performance.

- Airgap: Deployed in two configurations depending on the scale of clusters and security features:

- Basic Airgap Configuration that deploys the Ransomware Defender agent on one of the primary clusters being protected.

- Enterprise Airgap Configuration that deploys the Ransomware Defender agent on the cyber vault cluster. This solution comes with greater scalability and additional security features.

Figure 4. Superna security

NetApp ONTAP security is limited to the integrated features listed above. Additional applications for further security monitoring, like Superna, are not available for ONTAP.

For more information about Superna security, see supernaeyeglass.com. For more information about PowerScale security, see Dell PowerScale OneFS: Security Considerations.

Authentication and access control

NetApp and PowerScale OneFS both support several methods for user authentication and access control. OneFS supports UNIX and Windows permissions for data-level access control. OneFS is designed for a mixed environment that allows the configuration of both Windows Access Control Lists (ACLs) and standard UNIX permissions on the cluster file system. In addition, OneFS provides user and identity mapping, permission mapping, and merging between Windows and UNIX environments.

OneFS supports local and remote authentication providers. Anonymous access is supported for protocols that allow it. Concurrent use of multiple authentication provider types, including Active Directory, LDAP, and NIS, is supported. For example, OneFS is often configured to authenticate Windows clients with Active Directory and to authenticate UNIX clients with LDAP.

Role-based access control

OneFS supports role-based access control (RBAC), allowing administrative tasks to be configured without a root or administrator account. A role is a collection of OneFS privileges that are limited to an area of administration. Custom roles for security, auditing, storage, or backup tasks may be provisioned with RBACs. Privileges are assigned to roles. As users log in to the cluster through the platform API, the OneFS command-line interface, or the OneFS web administration interface, they are granted privileges based on their role membership.

For more information about OneFS authentication and access control, see PowerScale OneFS Authentication, Identity Management, and Authorization.

Learn more about PowerScale OneFS

To learn more about PowerScale OneFS, see the following resources:

- Dell PowerScale Info Hub

- PowerScale OneFS Technical Overview

- Dell PowerScale OneFS Operating System

- OneFS Smartfail blog post

- High Availability and Data Protection with Dell PowerScale Scale-Out NAS

- Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations

- Dell PowerScale: NDMP Technical Overview and Design Considerations

- Storage Quota Management and Provisioning with Dell PowerScale SmartQuotas

- PowerScale: Network Design Considerations

- Dell PowerScale OneFS: Data Reduction and Storage Efficiency

- Next-Generation Storage Efficiency with Dell PowerScale SmartDedupe

- Storage Tiering with Dell PowerScale SmartPools

- Dell PowerScale: CloudPools and Amazon Web Services

- Dell PowerScale: CloudPools and Microsoft Azure

- Dell PowerScale: CloudPools and Alibaba Cloud

- Dell PowerScale: CloudPools and Google Cloud

- Dell PowerScale: CloudPools and ECS

- CloudIQ: A Detailed Review

- InsightIQ (Dell Support page with documentation links)

- Dell PowerScale OneFS: Security Considerations

- PowerScale OneFS Authentication, Identity Management, and Authorization

- Superna website (supernaeyeglass.com)

Address your Security Challenges with Zero Trust Model on Dell PowerScale

Fri, 26 Apr 2024 16:48:47 -0000

|Read Time: 0 minutes

Dell PowerScale, the world’s most secure NAS storage array[1], continues to evolve its already rich security capabilities with the recent introduction of External Key Manager for Data-at-Rest-Encryption, enhancements to the STIG security profile, and support for UEFI Secure Boot across PowerScale platforms.

Our next release of PowerScale OneFS adds new security features that include software-based firewall functionality, multi-factor authentication with support for CAC/PIV, SSO for administrative WebUI, and FIPS-compliant data in flight.

As the PowerScale security feature set continues to advance, meeting the highest level of federal compliance is paramount to support industry and federal security standards. We are excited to announce that our scheduled verification by the Department of Defense Information Network (DISA) for inclusion on the DoD Approved Product List will begin in March 2023. For more information, see the DISA schedule here.

Moreover, OneFS will embrace the move to IPv6-only networks with support for USGv6-r1, a critical network standard applicable to hundreds of federal agencies and to the most security-conscious enterprises, including the DoD. Refreshed Common Criteria certification activities are underway and will provide a highly regarded international and enterprise-focused complement to other standards being supported.

We believe that implementing the zero trust model is the best foundation for building a robust security framework for PowerScale. This model and its principles are discussed below.

Supercharge Dell PowerScale security with the zero trust model

In the age of digital transformation, multiple cloud providers, and remote employees, the confines of the traditional data center are not enough to provide the highest levels of security. In the traditional sense, security was considered placing your devices in an imaginary “bubble.” The thought was that as long as devices were in the protected “bubble,” security was already accounted for through firewalls on the perimeter. However, the age-old concept of an organization’s security depending on the firewall is no longer relevant and is the easiest for a malicious party to attack.

Now that the data center is not confined to an area, the security framework must evolve, transform, and adapt. For example, although firewalls are still critical to network infrastructure, security must surpass just a firewall and security devices.

Why is data security important?

Although this seems like an easy question, it’s essential to understand the value of what is being protected. Traditionally, an organization’s most valuable assets were its infrastructure, including a building and the assets required to produce its goods. However, in the age of Digital Transformation, organizations have realized that the most critical asset is their data.

Why a zero trust model?

Because data is an organization’s most valuable asset, protecting the data is paramount. And how do we protect this data in the modern environment without data center confines? Enter the zero trust model!

Although Forrester Research first defined zero trust architecture in 2010, it has recently received more attention with the ever-changing security environment leading to a focus on cybersecurity. The zero trust architecture is a general model and must be refined for a specific implementation. For example, in September 2019, the National Institute of Standards and Technology (NIST) introduced its concept of Zero Trust Architecture. As a result, the White House has also published an Executive Order on Improving the Nation’s Cybersecurity, including zero trust initiatives.

In a zero trust architecture, all devices must be validated and authenticated. The concept applies to all devices and hosts, ensuring that none are trusted until proven otherwise. In essence, the model adheres to a “never trust, always verify” policy for all devices.

NIST Special Publication 800-207 Zero Trust Architecture states that a zero trust model is architected with the following design tenets:

- All data sources and computing services are considered resources.

- All communication is secured regardless of network location.

- Access to individual enterprise resources is granted on a per session basis.

- Access to resources is determined by dynamic policy—including the observable state of client identity, application/service, and the requesting asset—and may include other behavioral and environmental attributes.

- The enterprise monitors and measures the integrity and security posture of all owned and associated assets.

- All resource authentication and authorization are dynamic and strictly enforced before access is allowed.

- The enterprise collects as much information as possible related to the current state of assets, network infrastructure, and communications and uses it to improve its security posture.

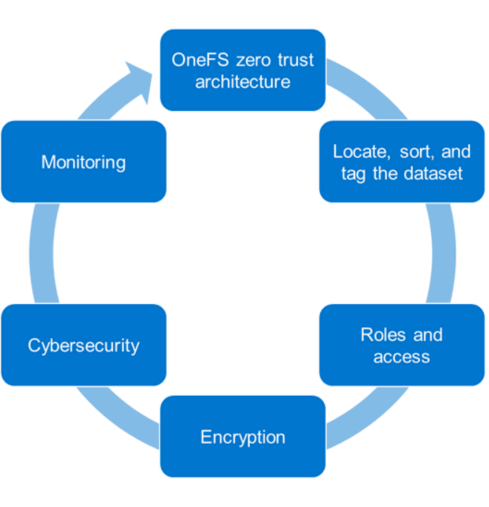

PowerScale OneFS follows the zero trust model

The PowerScale family of scale-out NAS solutions includes all-flash, hybrid, and archive storage nodes that can be deployed across the entire enterprise – from the edge, to core, and the cloud, to handle the most demanding file-based workloads. PowerScale OneFS combines the three layers of storage architecture—file system, volume manager, and data protection—into a scale-out NAS cluster. Dell Technologies follows the NIST Cybersecurity Framework to apply zero trust principles on a PowerScale cluster. The NIST Framework identifies five principles: identify, protect, detect, respond, and recover. Combining the framework from the NIST CSF and the data model provides the basis for the PowerScale zero trust architecture in five key stages, as shown in the following figure.

Let’s look at each of these stages and what Dell Technologies tools can be used to implement them.

1. Locate, sort, and tag the dataset

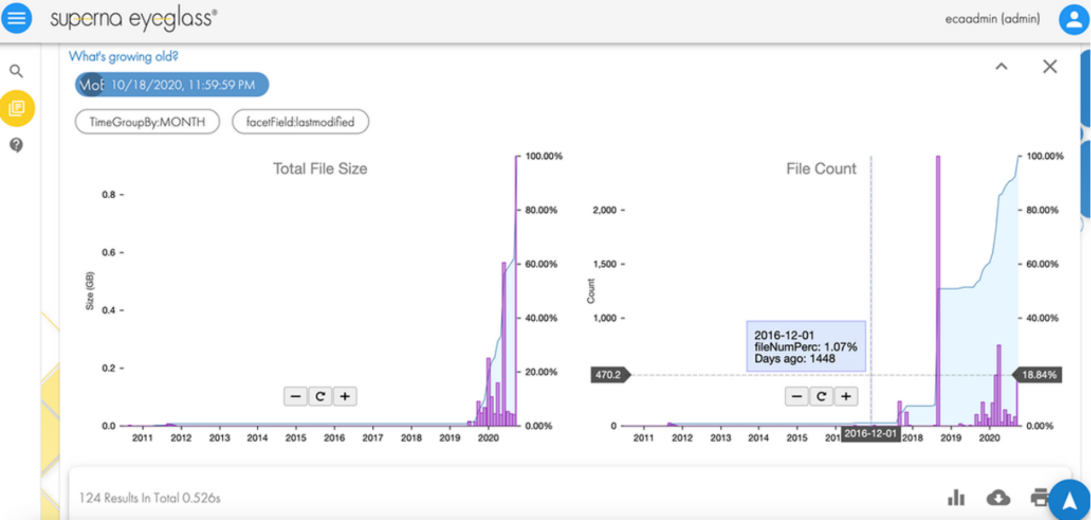

To secure an asset, the first step is to identify the asset. In our case, it is data. To secure a dataset, it must first be located, sorted, and tagged to secure it effectively. This can be an onerous process depending on the number of datasets and their size. We recommend using the Superna Eyeglass Search and Recover feature to understand your unstructured data and to provide insights through a single pane of glass, as shown in the following image. For more information, see the Eyeglass Search and Recover Product Overview.

2. Roles and access

Once we know the data we are securing, the next step is to associate roles to the indexed data. The role-specific administrators and users only have access to a subset of the data necessary for their responsibilities. PowerScale OneFS allows system access to be limited to an administrative role through Role-Based Access Control (RBAC). As a best practice, assign only the minimum required privileges to each administrator as a baseline. In the future, more privileges can be added as needed. For more information, see PowerScale OneFS Authentication, Identity Management, and Authorization.

3. Encryption

For the next step in deploying the zero trust model, use encryption to protect the data from theft and man-in-the-middle attacks.

Data at Rest Encryption

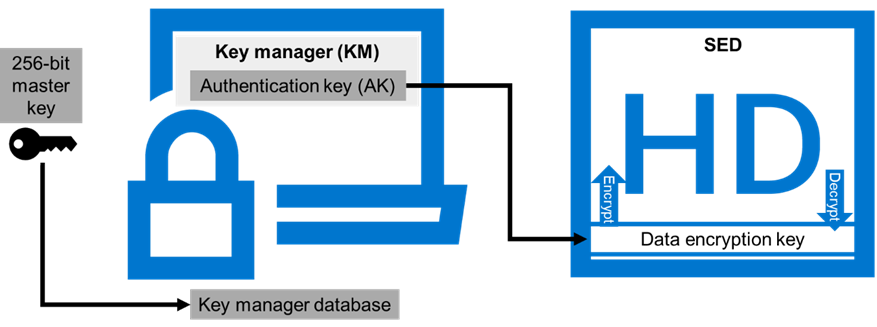

PowerScale OneFS provides Data at Rest Encryption (D@RE) using self-encrypting drives (SEDs), allowing data to be encrypted during writes and decrypted during reads with a 256-bit AES encryption key, referred to as the data encryption key (DEK). Further, OneFS wraps the DEK for each SED in an authentication key (AK). Next, the AKs for each drive are placed in a key manager (KM) that is stored securely in an encrypted database, the key manager database (KMDB). Next, the KMDB is encrypted with a 256-bit master key (MK). Finally, the 256-bit master key is stored external to the PowerScale cluster using a key management interoperability protocol (KMIP)-compliant key manager server, as shown in the following figure. For more information, see PowerScale Data at Rest Encryption.

Data in flight encryption

Data in flight is encrypted using SMB3 and NFS v4.1 protocols. SMB encryption can be used by clients that support SMB3 encryption, including Windows Server 2012, 2012 R2, 2016, Windows 10, and 11. Although SMB supports encryption natively, NFS requires additional Kerberos authentication to encrypt data in flight. OneFS Release 9.3.0.0 supports NFS v4.1, allowing Kerberos support to encrypt traffic between the client and the PowerScale cluster.

Once the protocol access is encrypted, the next step is encrypting data replication. OneFS supports over-the-wire, end-to-end encryption for SyncIQ data replication, protecting and securing in-flight data between clusters. For more information about these features, see the following:

- PowerScale: Solution Design and Considerations for SMB Environments

- PowerScale OneFS NFS Design Considerations and Best Practices

- PowerScale SyncIQ: Architecture, Configuration, and Considerations

4. Cybersecurity

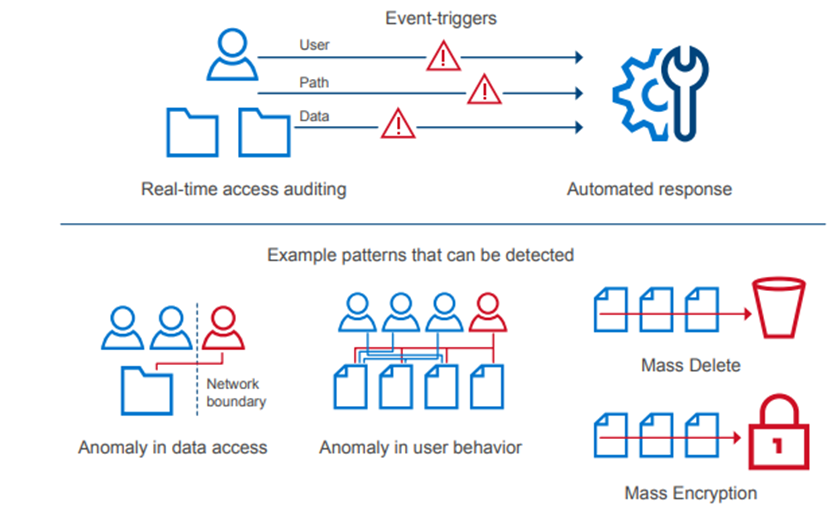

In an environment of ever-increasing cyber threats, cyber protection must be part of any security model. Superna Eyeglass Ransomware Defender for PowerScale provides cyber resiliency. It protects a PowerScale cluster by detecting attack events in real-time and recovering from cyber-attacks. Event triggers create an automated response with real-time access auditing, as shown in the following figure.

The Enterprise AirGap capability creates an isolated data copy in a cyber vault that is network isolated from the production environment, as shown in the following figure. For more about PowerScale Cyber Protection Solution, check out this comprehensive eBook.

5. Monitoring

Monitoring is a critical component of applying a zero trust model. A PowerScale cluster should constantly be monitored through several tools for insights into cluster performance and tracking anomalies. Monitoring options for a PowerScale cluster include the following:

- Dell CloudIQ for proactive monitoring, machine learning, and predictive analytics.

- Superna Ransomware Defender for protecting a PowerScale cluster by detecting attack events in real-time and recovering from cyber-attacks. It also offers AirGap.

- PowerScale OneFS SDK to create custom applications specific to an organization. Uses the OneFS API to configure, manage, and monitor cluster functionality. The OneFS SDK provides greater visibility into a PowerScale cluster.

Conclusion

This blog introduces implementing the zero trust model on a PowerScale cluster. For additional details and applying a complete zero trust implementation, see the PowerScale Zero Trust Architecture section in the Dell PowerScale OneFS: Security Considerations white paper. You can also explore the other sections in this paper to learn more about all PowerScale security considerations.

Author: Aqib Kazi

[1] Based on Dell analysis comparing cybersecurity software capabilities offered for Dell PowerScale vs competitive products, September 2022.

PowerScale Security Baseline Checklist

Fri, 26 Apr 2024 16:24:29 -0000

|Read Time: 0 minutes

As a security best practice, a quarterly security review is recommended. Forming an aggressive security posture for a PowerScale cluster is composed of different facets that may not be applicable to every organization. An organization’s industry, clients, business, and IT administrative requirements determine what is applicable. To ensure an aggressive security posture for a PowerScale cluster, use the checklist in the following table as a baseline for security.

This table serves as a security baseline and must be adapted to specific organizational requirements. See the Dell PowerScale OneFS: Security Considerations white paper for a comprehensive explanation of the concepts in the table below.

Further, cluster security is not a single event. It is an ongoing process: Monitor this blog for updates. As new updates become available, this post will be updated. Consider implementing an organizational security review on a quarterly basis.

The items listed in the following checklist are not in order of importance or hierarchy but rather form an aggressive security posture as more features are implemented.

Table 1. PowerScale security baseline checklist

Security Feature | Configuration | Links | Complete (Y/N) | Notes |

Data at Rest Encryption | Implement external key manager with SEDs | PowerScale Data at Rest Encryption |

|

|

Data in flight encryption | Encrypt protocol communication and data replication | PowerScale: Solution Design and Considerations for SMB Environments PowerScale OneFS NFS Design Considerations and Best Practices PowerScale SyncIQ: Architecture, Configuration, and Considerations |

|

|

Role-based access control (RBACs) | Assign the lowest possible access required for each role | Dell PowerScale OneFS: Authentication, Identity Management, and Authorization |

|

|

Multi-factor authentication | Dell PowerScale OneFS: Authentication, Identity Management, and Authorization Disabling the WebUI and other non-essential services |

|

| |

Cybersecurity |

|

| ||

Monitoring | Monitor cluster activity | Dell CloudIQ - AIOps for Intelligent IT Infrastructure Insights |

|

|

Secure Boot | Configure PowerScale Secure Boot | See PowerScale Secure Boot section |

|

|

Auditing | Configure auditing | File System Auditing with Dell PowerScale and Dell Common Event Enabler |

|

|

Custom applications | Create a custom application for cluster monitoring |

|

| |

Perform a quarterly security review | Review all organizational security requirements and current implementation. Check this paper and checklist for updates Monitor security advisories for PowerScale: https://www.dell.com/support/security/en-us |

|

| |

General cluster security best practices

| See the Security best practices section in the Security Configuration Guide for the relevant release at OneFS Info Hubs |

|

| |

Login, authentication, and privileges best practices |

|

| ||

SNMP security best practices |

|

| ||

SSH security best practices |

|

| ||

Data-access protocols best practices |

|

| ||

Web interface security best practices |

|

| ||

Anti-Virus |

|

| ||

Author: Aqib Kazi

PowerScale Security Baseline Checklist

Tue, 16 Apr 2024 22:36:48 -0000

|Read Time: 0 minutes

As a security best practice, a quarterly security review is recommended. Forming an aggressive security posture for a PowerScale cluster is composed of different facets that may not be applicable to every organization. An organization’s industry, clients, business, and IT administrative requirements determine what is applicable. To ensure an aggressive security posture for a PowerScale cluster, use the checklist in the following table as a baseline for security.

This table serves as a security baseline and must be adapted to specific organizational requirements. See the Dell PowerScale OneFS: Security Considerations | Dell Technologies Info Hub white paper for a comprehensive explanation of the concepts in the table below.

Further, cluster security is not a single event. It is an ongoing process: Monitor this blog for updates. As new updates become available, this post will be updated. Consider implementing an organizational security review on a quarterly basis.

The items listed in the following checklist are not in order of importance or hierarchy but rather form an aggressive security posture as more features are implemented.

Security feature | Configuration | References and notes | Complete (Y/N) | Notes |

Data at Rest Encryption | Implement external key manager with SEDs | Overview | Dell PowerScale OneFS: Security Considerations | Dell Technologies Info Hub |

|

|

Data in flight encryption | Encrypt protocol communication and data replication | Dell PowerScale: Solution Design and Considerations for SMB Environments (delltechnologies.com)

PowerScale OneFS NFS Design Considerations and Best Practices | Dell Technologies Info Hub

Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations | Dell Technologies Info Hub |

|

|

Role Based Access Control (RBAC) | Assign the lowest possible access required for each role | PowerScale OneFS Authentication, Identity Management, and Authorization | Dell Technologies Info Hub |

|

|

Multifactor authentication |

|

|

| |

Cybersecurity | PowerScale Cyber Protection Suite Reference Architecture | Dell Technologies Info Hub |

|

| |

Monitoring | Monitor cluster activity |

|

|

|

Cluster configuration backup and recovery | Ensure quarterly cluster backups | Backing Up and Restoring PowerScale Cluster Configurations in OneFS 9.7 | Dell Technologies Info Hub |

|

|

Secure Boot | Configure PowerScale Secure Boot | Overview | Dell PowerScale OneFS: Security Considerations | Dell Technologies Info Hub |

|

|

Auditing | Configure auditing |

|

| |

Custom applications | Create a custom application for cluster monitoring | GitHub - Isilon/isilon_sdk: Official repository for isilon_sdk |

|

|

SED and cluster Universal Key rekey | Set a frequency to automatically rekey the Universal Key for SEDs and the cluster | Cluster services rekey | Dell PowerScale OneFS: Security Considerations | Dell Technologies Info Hub |

|

|

Perform a quarterly security review | Review all organizational security requirements and current implementation. Check this paper and checklist for updates: |

|

| |

General cluster security best practices | See the best practices section of the Security Configuration Guide for the relevant release, at PowerScale OneFS Info Hubs | Dell US |

|

| |

Login, authentication, and privileges best practices |

|

| ||

SNMP security best practices |

|

| ||

SSH security best practices |

|

| ||

Data-access protocols best practices |

|

| ||

Web interface security best practices |

|

| ||

Anti-virus | PowerScale: AntiVirus Solutions | Dell Technologies Info Hub |

|

| |

Author: Aqib Kazi – Senior Principal Engineering Technologist

Securing PowerScale OneFS SyncIQ

Tue, 16 Apr 2024 17:55:56 -0000

|Read Time: 0 minutes

In the data replication world, ensuring your PowerScale clusters' security is paramount. SyncIQ, a powerful data replication tool, requires encryption to prevent unauthorized access.

Concerns about unauthorized replication

A cluster might inadvertently become the target of numerous replication policies, potentially overwhelming its resources. There’s also the risk of an administrator mistakenly specifying the wrong cluster as the replication target.

Best practices for security

To secure your PowerScale cluster, Dell recommends enabling SyncIQ encryption as per Dell Security Advisory DSA-2020-039: Dell EMC Isilon OneFS Security Update for a SyncIQ Vulnerability | Dell US. This feature, introduced in OneFS 8.2, prevents man-in-the-middle attacks and addresses other security concerns.

Encryption in new and upgraded clusters

SyncIQ is disabled by default for new clusters running OneFS 9.1. When SyncIQ is enabled, a global encryption flag requires all SyncIQ policies to be encrypted. This flag is also set for clusters upgraded to OneFS 9.1, unless there’s an existing SyncIQ policy without encryption.

Alternative measures

For clusters running versions earlier than OneFS 8.2, configuring a SyncIQ pre-shared key (PSK) offers protection against unauthorized replication policies.

By following these security measures, administrators can ensure that their PowerScale clusters are safeguarded against unauthorized access and maintain the integrity and confidentiality of their data.

SyncIQ encryption: securing data in transit

Securing information as it moves between systems is paramount in the data-driven world. Dell PowerScale OneFS release 8.2 has brought a game-changing feature to the table: end-to-end encryption for SyncIQ data replication. This ensures that data is not only protected while at rest but also as it traverses the network between clusters.

Why encryption matters

Data breaches can be catastrophic, and because data replication involves moving large volumes of sensitive information, encryption acts as a critical shield. With SyncIQ’s encryption, organizations can enforce a global setting that mandates encryption across all SyncIQ policies, to add an extra layer of security.

Test before you implement

It’s crucial to test SyncIQ encryption in a lab environment before deploying it in production. Although encryption introduces minimal overhead, its impact on workflow can vary based on several factors, such as network bandwidth and cluster resources.

Technical underpinnings

SyncIQ encryption is powered by X.509 certificates, TLS version 1.2, and OpenSSL version 1.0.2o6. These certificates are meticulously managed within the cluster’s certificate stores, ensuring a robust and secure data replication process.

Remember, this is just the beginning of a comprehensive guide about SyncIQ encryption. Stay tuned for more insights about configuration steps and best practices for securing your data with Dell PowerScale’s innovative solutions.

Configuration

Configuring SyncIQ encryption requires a supported OneFS release, certificates, and finally, the OneFS configuration. Before enabling SyncIQ encryption in production, test it in a lab environment that mimics the production setup. Measure the impact on transmission overhead by considering network bandwidth, cluster resources, workflow, and policy configuration.

Here’s a high level summary of the configuration steps:

- Ensure compatibility:

- Ensure that the source and target clusters are running OneFS 8.2 or later.

- Upgrade and commit both clusters to OneFS release 8.2 or later.

- Create X.509 certificates: