Dell PowerScale OneFS Introduction for NetApp Admins

Tue, 04 Apr 2023 17:15:00 -0000


For enterprises to harness the advantages of advanced storage technologies with Dell PowerScale, a transition from an existing platform is necessary. Enterprises are challenged by how the new architecture will fit into the existing infrastructure. This blog post provides an overview of PowerScale architecture, features, and nomenclature for enterprises migrating from NetApp ONTAP.

PowerScale overview

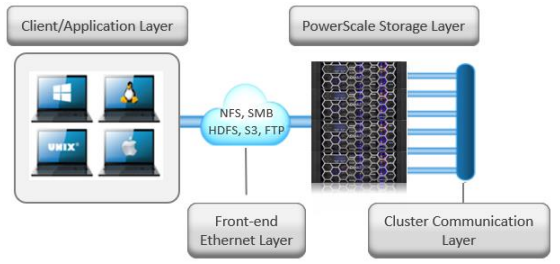

The PowerScale OneFS operating system is based on a distributed architecture, built from the ground up as a clustered system. Each PowerScale node provides compute, memory, networking, and storage. The concepts of controllers, HA, active/standby, and disk shelves are not applicable in a pure scale-out architecture. Thus, when a node is added to a cluster, the cluster performance and capacity increase collectively.

Because of the scale-out distributed architecture, with its single namespace, single volume, single file system, and single pane of management, system management is far simpler than with traditional NAS platforms. In addition, data protection is software-based rather than RAID-based, eliminating the associated complexities of configuration, maintenance, and additional storage utilization. Administrators do not have to be concerned with RAID groups or load distribution.

NetApp’s ONTAP storage operating system has evolved into a clustered system with controllers. The system includes ONTAP FlexGroups composed of aggregates and FlexVols across nodes.

OneFS is a single volume, which makes cluster management simple. As the cluster grows in capacity, the single volume automatically grows. Administrators are no longer required to migrate data between volumes manually. OneFS repopulates and balances data between all nodes when a new node is added, making the node part of the global namespace. All the nodes in a PowerScale cluster are equal in the hierarchy. Drives share data intranode and internode.

PowerScale is easy to deploy, operate, and manage. Most enterprises require only one full-time employee to manage a PowerScale cluster.

For more information about the PowerScale OneFS architecture, see PowerScale OneFS Technical Overview and Dell PowerScale OneFS Operating System.

Figure 1. Dell PowerScale scale-out NAS architecture

OneFS and NetApp software features

The single volume and single namespace of PowerScale OneFS also lead to a unique feature set. Because the entire NAS is a single file system, the concepts of FlexVols, shares, qtrees, and FlexGroups do not apply. Each NetApp volume has specific properties associated with limited storage space. Adding more storage space to NetApp ONTAP could be an onerous process depending on the current architecture. Conversely, on a PowerScale cluster, as soon as a node is added, the cluster is rebalanced automatically, leading to minimal administrator management.

NetApp’s continued dependence on volumes creates potential added complexity for storage administrators. From a software perspective, the intricacies that arise from the concept of volumes span across all the features. Configuring software features requires administrators to base decisions on the volume concept, limiting configuration options. The volume concept is further magnified by the impacts on storage utilization.

The fact that OneFS is a single volume means that many features are not volume dependent but, rather, span the entire cluster. SnapshotIQ, NDMP backups, and SmartQuotas do not have limits based on volumes; instead, they are cluster-specific or directory-specific.

As a single-volume NAS designed for file storage, OneFS combines scalable capacity and ease of management with the features that administrators require. Robust policy-driven features such as SmartConnect, SmartPools, and CloudPools enable maximum utilization of nodes for superior performance and storage efficiency. You can use SmartConnect to configure access zones that are mapped to nodes with specific performance characteristics. SmartPools can tier cold data to nodes with deep archive storage, and CloudPools can store frozen data in the cloud. Regardless of where the data resides, it is presented as a single namespace to the end user.

Storage utilization and data protection

Storage utilization is the amount of storage available after the NAS system overhead is deducted. The overhead consists of the space required for data protection and the operating system.

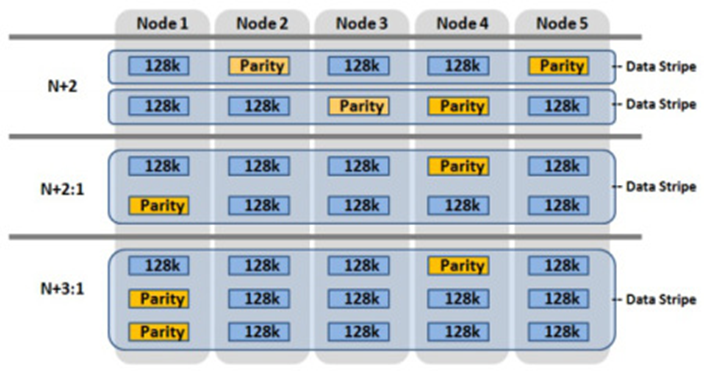

For data protection, OneFS uses software-based Reed-Solomon Error Correction with up to N+4 protection. OneFS offers several custom protection options that cover node and drive failures. The custom protection options vary according to the cluster configuration. OneFS provides data protection against more simultaneous hardware failures and is software-based, providing a significantly higher storage utilization.
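As a rough illustration of how protection overhead affects storage utilization, the following sketch computes the share of a stripe consumed by FEC units. It assumes a simplified model in which every stripe has a fixed number of data units and FEC units; the stripe widths and the `raw_tb` figure are hypothetical, and real OneFS layouts depend on cluster size, protection level, and operating system overhead.

```python
def protection_overhead(data_units: int, fec_units: int) -> float:
    """Fraction of each stripe consumed by FEC (parity) units."""
    if data_units < 1 or fec_units < 0:
        raise ValueError("need at least one data unit and no negative FEC count")
    return fec_units / (data_units + fec_units)


def usable_capacity_tb(raw_tb: float, data_units: int, fec_units: int) -> float:
    """Capacity left for data after protection overhead (simplified model)."""
    return raw_tb * (1 - protection_overhead(data_units, fec_units))


if __name__ == "__main__":
    # Hypothetical stripe layouts: (data units, FEC units).
    for data_units, fec_units in [(4, 1), (8, 2), (16, 4)]:
        overhead = protection_overhead(data_units, fec_units)
        usable = usable_capacity_tb(1000.0, data_units, fec_units)
        print(f"{data_units}+{fec_units}: overhead {overhead:.0%}, "
              f"usable {usable:.0f} TB of 1000 TB raw")
```

In this model, wider stripes with the same ratio of FEC units keep the overhead constant while tolerating more simultaneous failures.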

The software-based data protection stripes data across nodes in stripe units, and some of the stripe units are Forward Error Correction (FEC) or parity units. The FEC units provide the redundancy needed to reconstruct the data after a drive or node failure. Data protection can be customized to cover node loss or a hybrid of node and drive failures.
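To show how a parity unit lets lost data be rebuilt, here is a minimal sketch that uses single-parity XOR. This is deliberately simpler than the Reed-Solomon coding that OneFS uses (XOR tolerates only one lost unit per stripe), and the function names are illustrative rather than part of any OneFS interface.

```python
from functools import reduce
from typing import List


def xor_blocks(blocks: List[bytes]) -> bytes:
    """Bytewise XOR of equally sized blocks."""
    length = len(blocks[0])
    if any(len(block) != length for block in blocks):
        raise ValueError("all blocks must have the same length")
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def reconstruct(surviving_units: List[bytes], parity: bytes) -> bytes:
    """Rebuild the single missing data unit from the survivors and the parity unit."""
    return xor_blocks(surviving_units + [parity])


if __name__ == "__main__":
    stripe = [b"unit-A..", b"unit-B..", b"unit-C.."]  # one data unit per node
    parity = xor_blocks(stripe)                        # stored on a fourth node
    # Simulate losing the node that held the second unit.
    rebuilt = reconstruct([stripe[0], stripe[2]], parity)
    print(rebuilt == stripe[1])
```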

With software-based data protection, the protection scheme is not per cluster. It has additional granularity that allows for making data protection specific to a file or directory—without creating additional storage volumes or manually migrating data. Instead, OneFS runs a job in the background, moving data as configured.

Figure 2. OneFS data protection

OneFS protects data stored on failing nodes or drives in a cluster through a process called SmartFail. During the process, OneFS places a device into quarantine and, depending on the severity of the issue, places the data on the device into a read-only state. While a device is quarantined, OneFS reprotects the data on the device by distributing the data to other devices.

NetApp’s data protection is all RAID-based, including NetApp RAID-TEC, NetApp RAID-DP, and RAID 4. NetApp only supports a maximum of triple parity, and simultaneous node failures in an HA pair are not supported.

For more information about SmartFail, see the following blog: OneFS Smartfail. For more information about OneFS data protection, see High Availability and Data Protection with Dell PowerScale Scale-Out NAS.

NetApp FlexVols, shares, and Qtrees

NetApp requires administrators to manually create space and explicitly define aggregates and flexible volumes. The concepts of FlexVols, shares, and Qtrees do not exist in OneFS because the file system is a single volume and namespace that spans the entire cluster.

SMB shares and NFS exports are created through the web or command-line interface in OneFS. Either method lets the user create a share or export within seconds, with security options. SmartQuotas manages storage limits cluster-wide, across the entire namespace. Quotas can provide accounting, warning messages, or hard enforcement limits, and they can be applied by directory, user, or group.
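The three quota behaviors described above (accounting only, warning, and hard enforcement) can be sketched as a small decision function. This is a conceptual model only; the `DirectoryQuota` class, its field names, and the example path are assumptions made for illustration and do not reflect the SmartQuotas API or CLI.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DirectoryQuota:
    """Hypothetical quota record; leave both limits unset for accounting only."""
    path: str
    warn_bytes: Optional[int] = None   # exceeding this only triggers a warning
    hard_bytes: Optional[int] = None   # exceeding this rejects the write


def evaluate_write(quota: DirectoryQuota, used_bytes: int, write_bytes: int) -> str:
    """Return 'allow', 'warn', or 'deny' for a proposed write."""
    projected = used_bytes + write_bytes
    if quota.hard_bytes is not None and projected > quota.hard_bytes:
        return "deny"
    if quota.warn_bytes is not None and projected > quota.warn_bytes:
        return "warn"
    return "allow"


if __name__ == "__main__":
    gib = 1024 ** 3
    quota = DirectoryQuota("/ifs/data/engineering", warn_bytes=80 * gib, hard_bytes=100 * gib)
    print(evaluate_write(quota, used_bytes=70 * gib, write_bytes=5 * gib))
    print(evaluate_write(quota, used_bytes=79 * gib, write_bytes=5 * gib))
    print(evaluate_write(quota, used_bytes=99 * gib, write_bytes=5 * gib))
```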

Conversely, ONTAP quota management is at the volume or FlexGroup level, creating additional administrative overhead because the process is more onerous.

Snapshots

The OneFS snapshot feature is SnapshotIQ, which does not have specified or enforced limits for snapshots per directory or snapshots per cluster. However, the best practice is 1,024 snapshots per directory and 20,000 snapshots per cluster. OneFS also supports writable snapshots. For more information about SnapshotIQ and writable snapshots, see High Availability and Data Protection with Dell PowerScale Scale-Out NAS.

NetApp Snapshot supports 255 snapshots per volume in ONTAP 9.3 and earlier. ONTAP 9.4 and later versions support 1,023 snapshots per volume. By default, NetApp requires a space reservation of 5 percent in the volume when snapshots are used, requiring the space reservation to be monitored and manually increased if space becomes exhausted. Further, the space reservation can also affect volume availability. The space reservation requirement creates additional administration overhead and affects storage efficiency by setting aside space that might or might not be used.

Data replication

Data replication is required for disaster recovery, RPO, or RTO requirements. OneFS provides data replication through SyncIQ and SmartSync.

SyncIQ provides asynchronous data replication, whereas NetApp’s asynchronous replication, which is called SnapMirror, is block-based replication. SyncIQ provides options for ensuring that all data is retained during failover and failback from the disaster recovery cluster. SyncIQ is fully configurable with options for execution times and bandwidth management. A SyncIQ target cluster may be configured as a target for several source clusters.

SyncIQ offers a single-button automated process for failover and failback with Superna Eyeglass DR Edition. For more information about Superna Eyeglass DR Edition, see Superna | DR Edition (supernaeyeglass.com).

SyncIQ allows configurable options for replication down to a specific file, directory, or entire cluster. Conversely, NetApp’s SnapMirror replication starts at the volume at a minimum. The volume concept and dependence on volume requirements continue to add management complexity and overhead for administrators while also wasting storage utilization.

To address the requirements of the modern enterprise, OneFS version 9.4.0.0 introduced SmartSync. This feature replicates file-to-file data between PowerScale clusters. SmartSync cloud copy replicates file-to-object data from PowerScale clusters to Dell ECS and cloud providers, and it can also pull the replicated object data from a cloud provider back to a PowerScale cluster as files. Having multiple target destinations allows administrators to store multiple copies of a dataset across locations, providing further disaster recovery readiness. For more information about SyncIQ, see Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations. For more information about SmartSync, see Dell PowerScale SmartSync.

Quotas

OneFS SmartQuotas provides configurable options to monitor and enforce storage limits at the user, group, cluster, directory, or subdirectory level. ONTAP quotas are user-, tree-, volume-, or group-based.

For more information about SmartQuotas, see Storage Quota Management and Provisioning with Dell PowerScale SmartQuotas.

Load balancing and multitenancy

Because OneFS is a distributed architecture across a collection of nodes, client connectivity to these nodes requires load balancing. OneFS SmartConnect provides options for balancing the client connections to the nodes within a cluster. Balancing options are round-robin or based on current load. Also, SmartConnect zones can be configured to have clients connect based on group and performance needs. For example, the Engineering group might require high-performance nodes. A zone can be configured, forcing connections to those nodes.
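The two balancing approaches mentioned above can be sketched in a few lines. This is a generic illustration of round-robin and load-based selection, not SmartConnect's implementation; the node names and load values are hypothetical.

```python
from itertools import cycle
from typing import Dict, Iterator, List


def round_robin(nodes: List[str]) -> Iterator[str]:
    """Hand out nodes in a fixed rotation, one per new client connection."""
    return cycle(nodes)


def least_loaded(load_by_node: Dict[str, float]) -> str:
    """Pick the node that currently reports the lowest load."""
    return min(load_by_node, key=load_by_node.get)


if __name__ == "__main__":
    rotation = round_robin(["node1", "node2", "node3"])
    print([next(rotation) for _ in range(4)])

    # Hypothetical load figures (for example, CPU utilization per node).
    print(least_loaded({"node1": 0.70, "node2": 0.20, "node3": 0.50}))
```

Round-robin spreads connections evenly without measuring anything, while load-based selection reacts to the current state of the nodes at the cost of collecting that state.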

NetApp ONTAP supports multitenancy with Storage Virtual Machines (SVMs, formerly vServers) and Logical Interfaces (LIFs). SVMs isolate storage and network resources across a cluster of controller HA pairs. SVMs require managing protocols, shares, and volumes for successful provisioning. Volumes cannot be nondisruptively moved between SVMs. ONTAP supports load balancing using LIFs, but configuration is manual and must be implemented by the storage administrator. Further, it requires continuous monitoring because it is based on the load on the controller.

OneFS provides multitenancy through SmartConnect and access zones. Management is simple because the file system is one volume and access is provided by hostname and directory, rather than by volume. SmartConnect is policy-driven and does not require continuous monitoring. SmartConnect settings may be changed on demand as the requirements change.

SmartConnect zones allow administrators to provision DNS hostnames specific to IP pools, subnets, and network interfaces. If only a single authentication provider is required, all the SmartConnect zones map to a default access zone. However, if directory access and authentication providers vary, multiple access zones are provisioned, mapping to a directory, authentication provider, and SmartConnect zone. As a result, authenticated users of an access zone only have visibility into their respective directory. Conversely, an administrator with complete file system access can migrate data nondisruptively between directories.

For more information about SmartConnect, see PowerScale: Network Design Considerations.

Compression and deduplication

Both ONTAP and OneFS provide compression. The OneFS deduplication feature is SmartDedupe, which allows deduplication to run at a cluster-wide level, improving overall Data Reduction Rate (DRR) and storage utilization. With ONTAP, the deduplication is enabled at the aggregate level, and it cannot cross over nodes.

For more information about OneFS data reduction, see Dell PowerScale OneFS: Data Reduction and Storage Efficiency. For more information about SmartDedupe, see Next-Generation Storage Efficiency with Dell PowerScale SmartDedupe.

Data tiering

OneFS has integrated features to tier data based on the data’s age or file type. NetApp has similar functionality with FabricPools.

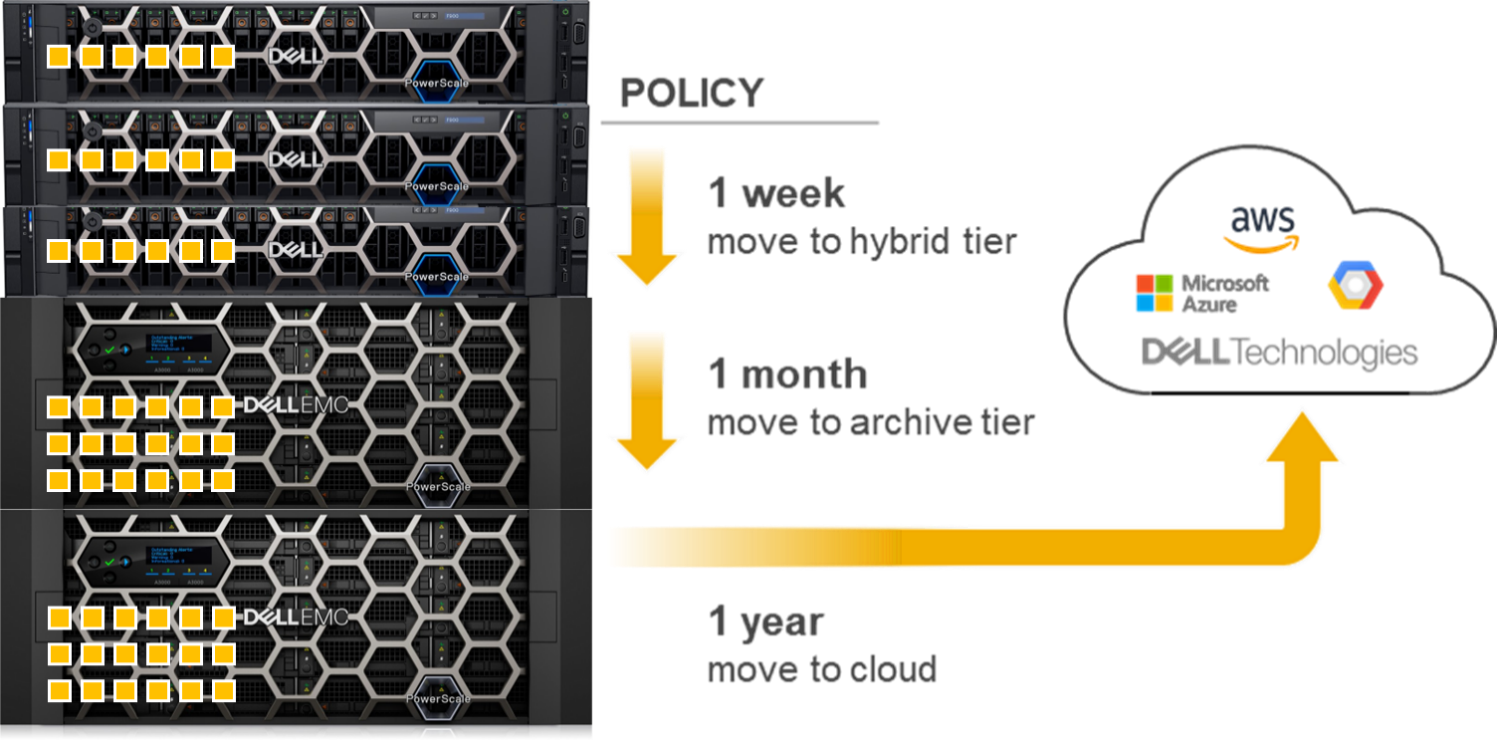

OneFS SmartPools uses robust policies to enable data placement and movement across multiple types of storage. SmartPools can be configured to move data to a set of nodes automatically. For example, if a file has not been accessed in the last 90 days, it can be migrated to a node with deeper storage, allowing admins to define the value of storage based on performance.
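The 90-day example above amounts to a simple age-based rule. The following sketch models that rule in isolation; the tier names and the `select_tier` function are assumptions for illustration, and actual SmartPools file pool policies are configured in OneFS rather than written as code.

```python
from datetime import datetime, timedelta


def select_tier(last_accessed: datetime, now: datetime, max_idle_days: int = 90) -> str:
    """Return the (hypothetical) tier a file belongs on, based on last access time."""
    idle_time = now - last_accessed
    return "archive" if idle_time > timedelta(days=max_idle_days) else "performance"


if __name__ == "__main__":
    now = datetime(2024, 1, 1)
    print(select_tier(now - timedelta(days=120), now))  # idle longer than 90 days
    print(select_tier(now - timedelta(days=10), now))   # recently accessed
```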

OneFS CloudPools migrates data to a cloud provider, with only a stub remaining on the PowerScale cluster, based on similar policies. CloudPools not only tiers data to a cloud provider but also recalls the data back to the cluster as demanded. From a user perspective, all the data is still in a single namespace, irrespective of where it resides.

Figure 3. OneFS SmartPools and CloudPools

ONTAP tiers to S3 object stores using FabricPools.

For more information about SmartPools, see Storage Tiering with Dell PowerScale SmartPools. For more information about CloudPools, see:

- Dell PowerScale: CloudPools and Amazon Web Services

- Dell PowerScale: CloudPools and Microsoft Azure

- Dell PowerScale: CloudPools and Alibaba Cloud

- Dell PowerScale: CloudPools and Google Cloud

- Dell PowerScale: CloudPools and ECS

Monitoring

Dell InsightIQ and Dell CloudIQ provide performance monitoring and reporting capabilities. InsightIQ includes advanced analytics to optimize applications, correlate cluster events, and accurately forecast future storage needs. NetApp provides performance monitoring and reporting with Cloud Insights and Active IQ, which are accessible within BlueXP.

For more information about CloudIQ, see CloudIQ: A Detailed Review. For more information about InsightIQ, see InsightIQ on Dell Support.

Security

Similar to ONTAP, the PowerScale OneFS operating system comes with a comprehensive set of integrated security features. These features include data-at-rest and data-in-flight encryption, a virus scanning tool, WORM SmartLock compliance, an external key manager for data-at-rest encryption, a STIG-hardened security profile, Common Criteria certification, and support for UEFI Secure Boot across PowerScale platforms. Further, OneFS may be configured for a Zero Trust architecture and PCI-DSS.

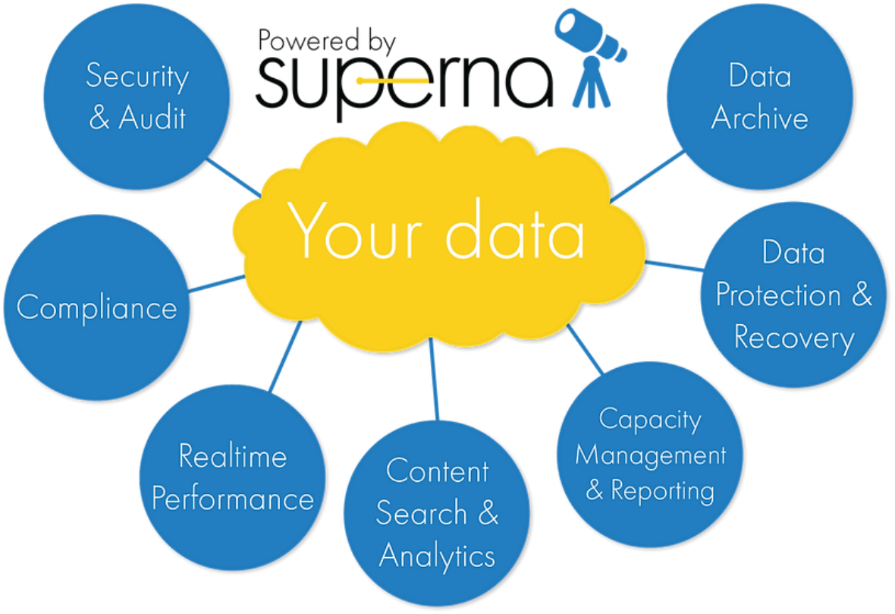

Superna security

Superna exclusively provides the following security-focused applications for PowerScale OneFS:

- Ransomware Defender: Provides real-time event processing through user behavior analytics. The events are used to detect and stop a ransomware attack before it occurs.

- Easy Auditor: Offers a flat-rate license model and ease-of-use features that simplify auditing and securing PBs of data.

- Performance Auditor: Provides real-time file I/O view of PowerScale nodes to simplify root cause of performance impacts, assessing changes needed to optimize performance and debugging user, network, and application performance.

- Airgap: Deployed in two configurations depending on the scale of clusters and security features:

  - Basic Airgap Configuration that deploys the Ransomware Defender agent on one of the primary clusters being protected.

  - Enterprise Airgap Configuration that deploys the Ransomware Defender agent on the cyber vault cluster. This solution comes with greater scalability and additional security features.

Figure 4. Superna security

NetApp ONTAP security is limited to the integrated features listed above. Additional applications for further security monitoring, like Superna, are not available for ONTAP.

For more information about Superna security, see supernaeyeglass.com. For more information about PowerScale security, see Dell PowerScale OneFS: Security Considerations.

Authentication and access control

NetApp and PowerScale OneFS both support several methods for user authentication and access control. OneFS supports UNIX and Windows permissions for data-level access control. OneFS is designed for a mixed environment that allows the configuration of both Windows Access Control Lists (ACLs) and standard UNIX permissions on the cluster file system. In addition, OneFS provides user and identity mapping, permission mapping, and merging between Windows and UNIX environments.

OneFS supports local and remote authentication providers. Anonymous access is supported for protocols that allow it. Concurrent use of multiple authentication provider types, including Active Directory, LDAP, and NIS, is supported. For example, OneFS is often configured to authenticate Windows clients with Active Directory and to authenticate UNIX clients with LDAP.

Role-based access control

OneFS supports role-based access control (RBAC), allowing administrative tasks to be configured without a root or administrator account. A role is a collection of OneFS privileges that are limited to an area of administration. Custom roles for security, auditing, storage, or backup tasks may be provisioned with RBACs. Privileges are assigned to roles. As users log in to the cluster through the platform API, the OneFS command-line interface, or the OneFS web administration interface, they are granted privileges based on their role membership.
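The relationship between users, roles, and privileges described above can be modeled as two lookups. The role names, privilege names, and users below are hypothetical placeholders chosen for this sketch; they are not actual OneFS role or privilege identifiers.

```python
from typing import Dict, Set

# Hypothetical roles, each a collection of privileges for one area of administration.
ROLES: Dict[str, Set[str]] = {
    "backup_operator": {"snapshot.create", "backup.run"},
    "security_auditor": {"audit.read"},
}

# Hypothetical role membership per user.
MEMBERSHIP: Dict[str, Set[str]] = {
    "alice": {"backup_operator"},
    "bob": {"backup_operator", "security_auditor"},
}


def privileges_for(user: str) -> Set[str]:
    """Union of the privileges of every role the user belongs to."""
    granted: Set[str] = set()
    for role in MEMBERSHIP.get(user, set()):
        granted |= ROLES.get(role, set())
    return granted


def is_allowed(user: str, privilege: str) -> bool:
    """A user may perform a task only if one of their roles grants the privilege."""
    return privilege in privileges_for(user)


if __name__ == "__main__":
    print(is_allowed("alice", "backup.run"))
    print(is_allowed("alice", "audit.read"))
    print(sorted(privileges_for("bob")))
```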

For more information about OneFS authentication and access control, see PowerScale OneFS Authentication, Identity Management, and Authorization.

Learn more about PowerScale OneFS

To learn more about PowerScale OneFS, see the following resources:

- Dell PowerScale Info Hub

- PowerScale OneFS Technical Overview

- Dell PowerScale OneFS Operating System

- OneFS Smartfail blog post

- High Availability and Data Protection with Dell PowerScale Scale-Out NAS

- Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations

- Dell PowerScale: NDMP Technical Overview and Design Considerations

- Storage Quota Management and Provisioning with Dell PowerScale SmartQuotas

- PowerScale: Network Design Considerations

- Dell PowerScale OneFS: Data Reduction and Storage Efficiency

- Next-Generation Storage Efficiency with Dell PowerScale SmartDedupe

- Storage Tiering with Dell PowerScale SmartPools

- Dell PowerScale: CloudPools and Amazon Web Services

- Dell PowerScale: CloudPools and Microsoft Azure

- Dell PowerScale: CloudPools and Alibaba Cloud

- Dell PowerScale: CloudPools and Google Cloud

- Dell PowerScale: CloudPools and ECS

- CloudIQ: A Detailed Review

- InsightIQ (Dell Support page with documentation links)

- Dell PowerScale OneFS: Security Considerations

- PowerScale OneFS Authentication, Identity Management, and Authorization

- Superna website (supernaeyeglass.com)

Related Blog Posts

Securing PowerScale OneFS SyncIQ

Tue, 16 Apr 2024 17:55:56 -0000


In the data replication world, ensuring your PowerScale clusters' security is paramount. SyncIQ, a powerful data replication tool, requires encryption to prevent unauthorized access.

Concerns about unauthorized replication

A cluster might inadvertently become the target of numerous replication policies, potentially overwhelming its resources. There’s also the risk of an administrator mistakenly specifying the wrong cluster as the replication target.

Best practices for security

To secure your PowerScale cluster, Dell recommends enabling SyncIQ encryption as per Dell Security Advisory DSA-2020-039: Dell EMC Isilon OneFS Security Update for a SyncIQ Vulnerability | Dell US. This feature, introduced in OneFS 8.2, prevents man-in-the-middle attacks and addresses other security concerns.

Encryption in new and upgraded clusters

SyncIQ is disabled by default for new clusters running OneFS 9.1. When SyncIQ is enabled, a global encryption flag requires all SyncIQ policies to be encrypted. This flag is also set for clusters upgraded to OneFS 9.1, unless there’s an existing SyncIQ policy without encryption.
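The rule for the global encryption flag can be written as a short predicate. This is only a restatement of the behavior described above under the assumption that each existing policy is simply encrypted or not; the function and parameter names are invented for the sketch.

```python
from typing import List


def global_encryption_flag(existing_policies_encrypted: List[bool]) -> bool:
    """Decide whether the global 'require encryption' flag ends up set.

    A new cluster has no policies, so the flag is set once SyncIQ is enabled.
    An upgraded cluster gets the flag unless some existing policy is unencrypted.
    """
    return all(existing_policies_encrypted)


if __name__ == "__main__":
    print(global_encryption_flag([]))             # new cluster, no policies yet
    print(global_encryption_flag([True, True]))   # upgrade, all policies encrypted
    print(global_encryption_flag([True, False]))  # upgrade, one unencrypted policy
```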

Alternative measures

For clusters running versions earlier than OneFS 8.2, configuring a SyncIQ pre-shared key (PSK) offers protection against unauthorized replication policies.

By following these security measures, administrators can ensure that their PowerScale clusters are safeguarded against unauthorized access and maintain the integrity and confidentiality of their data.

SyncIQ encryption: securing data in transit

Securing information as it moves between systems is paramount in the data-driven world. Dell PowerScale OneFS release 8.2 has brought a game-changing feature to the table: end-to-end encryption for SyncIQ data replication. This ensures that data is not only protected while at rest but also as it traverses the network between clusters.

Why encryption matters

Data breaches can be catastrophic, and because data replication involves moving large volumes of sensitive information, encryption acts as a critical shield. With SyncIQ’s encryption, organizations can enforce a global setting that mandates encryption across all SyncIQ policies, to add an extra layer of security.

Test before you implement

It’s crucial to test SyncIQ encryption in a lab environment before deploying it in production. Although encryption introduces minimal overhead, its impact on workflow can vary based on several factors, such as network bandwidth and cluster resources.

Technical underpinnings

SyncIQ encryption is powered by X.509 certificates, TLS version 1.2, and OpenSSL version 1.0.2o. These certificates are meticulously managed within the cluster’s certificate stores, ensuring a robust and secure data replication process.
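For readers less familiar with certificate-based TLS, the following sketch shows what "X.509 certificates plus TLS 1.2" looks like in a generic client written with Python's standard `ssl` module. It is not how SyncIQ is implemented or configured; the function name and the optional file path parameters are assumptions for illustration.

```python
import ssl
from typing import Optional


def build_mutual_tls_context(
    ca_file: Optional[str] = None,
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Create a client context that requires TLS 1.2+ and a verified peer certificate."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    context.verify_mode = ssl.CERT_REQUIRED  # peer must present a certificate we trust
    if ca_file:
        # Trust only certificates signed by this certificate authority.
        context.load_verify_locations(cafile=ca_file)
    if cert_file:
        # Present our own certificate and private key to the peer.
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context


if __name__ == "__main__":
    ctx = build_mutual_tls_context()
    print(ctx.minimum_version, ctx.verify_mode)
```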

Remember, this is just the beginning of a comprehensive guide about SyncIQ encryption. Stay tuned for more insights about configuration steps and best practices for securing your data with Dell PowerScale’s innovative solutions.

Configuration

Configuring SyncIQ encryption requires a supported OneFS release, certificates, and finally, the OneFS configuration. Before enabling SyncIQ encryption in production, test it in a lab environment that mimics the production setup. Measure the impact on transmission overhead by considering network bandwidth, cluster resources, workflow, and policy configuration.

Here’s a high-level summary of the configuration steps:

1. Ensure compatibility:

   - Ensure that the source and target clusters are running OneFS 8.2 or later.

   - Upgrade and commit both clusters to OneFS release 8.2 or later.

2. Create X.509 certificates:

   - Create X.509 certificates for the source and target clusters using publicly available tools.

   - The certificate creation process results in the following components:

     - Certificate Authority (CA) certificate

     - Source certificate and private key

     - Target certificate and private key

   Note: Some certificate authorities may not generate the public and private key pairs. In that case, manually generate a Certificate Signing Request (CSR) and obtain signed certificates.

3. Transfer certificates to clusters:

   - Transfer the certificates to each cluster.

4. Activate each certificate as follows:

   - Add the source cluster certificate under Data Protection > SyncIQ > Certificates.

   - Add the target server certificate under Data Protection > SyncIQ > Settings.

   - Add the Certificate Authority under Access > TLS Certificates and select Import Authority.

5. Enforce encryption:

   - Each cluster stores its certificate and its peer’s certificate.

   - The source cluster must store the target cluster’s certificate, and vice versa.

   - Storing the peer’s certificate creates a list of approved clusters for data replication.
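The last step above, where stored peer certificates act as a list of approved clusters, can be pictured as an allowlist keyed by certificate fingerprint. The sketch below is a conceptual model under that assumption; the `PeerCertificateStore` class is hypothetical and the byte strings stand in for real DER-encoded certificates.

```python
import hashlib
from typing import Set


def fingerprint(cert_der: bytes) -> str:
    """SHA-256 fingerprint of a DER-encoded certificate."""
    return hashlib.sha256(cert_der).hexdigest()


class PeerCertificateStore:
    """Hypothetical store of approved peer certificates, kept as fingerprints."""

    def __init__(self) -> None:
        self._approved: Set[str] = set()

    def add_peer(self, cert_der: bytes) -> None:
        self._approved.add(fingerprint(cert_der))

    def is_approved(self, cert_der: bytes) -> bool:
        return fingerprint(cert_der) in self._approved


if __name__ == "__main__":
    target_store = PeerCertificateStore()
    source_cert = b"placeholder bytes for the source cluster certificate"
    unknown_cert = b"placeholder bytes for an unknown cluster certificate"

    target_store.add_peer(source_cert)
    print(target_store.is_approved(source_cert))   # approved source cluster
    print(target_store.is_approved(unknown_cert))  # replication would be refused
```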

By following these steps, you can secure your data in transit between PowerScale clusters using SyncIQ encryption. Remember to customize the certificates and settings according to your specific environment and requirements.

For more detailed information about configuring SyncIQ encryption, see SyncIQ encryption | Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations | Dell Technologies Info Hub.

SyncIQ pre-shared key

A SyncIQ pre-shared key (PSK) is configured solely on the target cluster to restrict policies from source clusters without the PSK.

Use Cases: A PSK is recommended for environments that cannot use SyncIQ encryption, such as clusters running releases earlier than OneFS 8.2.

SmartLock Compliance: Not supported by SmartLock Compliance mode clusters; upgrading and configuring SyncIQ encryption is advised.

Policy Update: After updating source cluster policies with the PSK, no further configuration is needed. Use the isi sync policies view command to verify.

Remember, configuring the PSK will cause all replicating jobs to the target cluster to fail, so ensure that all SyncIQ jobs are complete or canceled before proceeding.
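The effect of a PSK on the target cluster can be sketched as a gate that rejects any policy that does not present the matching key. This is a conceptual model rather than the SyncIQ mechanism itself; the function name and the key values are placeholders.

```python
import hmac
from typing import Optional


def policy_accepted(target_psk: Optional[str], presented_psk: Optional[str]) -> bool:
    """Accept a replication policy only if it presents the target's pre-shared key."""
    if target_psk is None:
        return True   # no PSK configured on the target, nothing to check
    if presented_psk is None:
        return False  # source policy has not been updated with the PSK
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(target_psk.encode(), presented_psk.encode())


if __name__ == "__main__":
    print(policy_accepted("example-key", "example-key"))
    print(policy_accepted("example-key", None))
    print(policy_accepted("example-key", "wrong-key"))
```

The second case mirrors the warning above: once the target has a PSK, jobs from source policies that have not been updated with it fail.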

For more detailed information about configuring a SyncIQ pre-shared key, see SyncIQ pre-shared key | Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations | Dell Technologies Info Hub.

Resources

- SyncIQ encryption | Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations | Dell Technologies Info Hub

- SyncIQ pre-shared key | Dell PowerScale SyncIQ: Architecture, Configuration, and Considerations | Dell Technologies Info Hub

Author: Aqib Kazi, Senior Principal Engineering Technologist

Optimizing AI: Meeting Unstructured Storage Demands Efficiently

Thu, 21 Mar 2024 14:46:23 -0000


The surge in artificial intelligence (AI) and machine learning (ML) technologies has sparked a revolution across industries, pushing the boundaries of what's possible. However, this innovation comes with its own set of challenges, particularly when it comes to storage. The heart of AI's potential lies in its ability to process and learn from vast amounts of data, most of which is unstructured. This has placed unprecedented demands on storage solutions, becoming a critical bottleneck for advancing AI technologies.

Navigating the complex landscape of unstructured data storage is no small feat. Traditional storage systems struggle to keep up with the scale and flexibility required by AI workloads. Enterprises find themselves at a crossroads, seeking solutions that can provide scalable, affordable, and fault-tolerant storage. The quest for such a platform is not just about meeting current needs but also paving the way for the future of AI-driven innovation.

The current state of ML and AI

The evolution of ML and AI technologies has reshaped industries far and wide, setting new expectations for data processing and analysis capabilities. These advancements are directly tied to an organization's capacity to handle vast volumes of unstructured data, a domain where traditional storage solutions are being outpaced.

ML and AI applications demand unprecedented levels of data ingestion and computational power, necessitating scalable and flexible storage solutions. Traditional storage systems—while useful for conventional data storage needs—grapple with scalability issues, particularly when faced with the immense file quantities AI and ML workloads generate.

Although traditional object storage methods are capable of managing data as objects within a pool, they fall short when meeting the agility and accessibility requirements essential for AI and ML processes. These storage models struggle with scalability and facilitating the rapid access and processing of data crucial for deep learning and AI algorithms.

The need for a new kind of storage solution is evident because the current infrastructure cannot cope with silos of unstructured data. These silos make it challenging to access, process, and unify data sources, which in turn cripples the effectiveness of AI and ML projects. Furthermore, the maximum capacity of traditional storage, which tops out at tens of terabytes, is insufficient for AI-driven initiatives, which often require petabytes of data to train sophisticated models.

As ML and AI continue to advance, the quest for a storage solution that can support the growing demands of these technologies remains pivotal. The industry is in dire need of systems that provide ample storage and ensure the flexibility, reliability, and performance efficiency necessary to propel AI and ML into their next phase of innovation.

Understanding unstructured storage demands for AI

The advent of AI and ML has brought unprecedented advancements across industries, enhancing efficiency, accuracy, and the ability to manage and process large datasets. However, the core of these technologies relies on the capability to store, access, and analyze unstructured data efficiently. Understanding the storage demands essential for AI applications is crucial for businesses looking to harness the full power of AI technology.

High throughput and low latency

For AI and ML applications, time is of the essence. High throughput (the ability to process data at high speed) and low latency (the ability to access it with minimal delay) are non-negotiable requirements. These applications often involve complex computations performed on vast datasets, necessitating quick access to data to maintain a seamless process. For instance, in real-time AI applications such as voice recognition or instant fraud detection, any delay in data processing can critically impact performance and accuracy. Therefore, storage solutions must be designed to accommodate these needs, delivering data as swiftly as possible to the application layer.

Scalability and flexibility

As AI models evolve and the volume of data increases, the need for scalability in storage solutions becomes paramount. The storage architecture must accommodate growth without compromising on performance or efficiency. This is where the flexibility of the storage solutions comes into play. An ideal storage system for AI would scale in capacity and performance, adapting to the changing demands of AI applications over time. Combining the best of on-premises and cloud storage, hybrid storage solutions offer a viable path to achieving this scalability and flexibility. They enable businesses to leverage the high performance of on-premises solutions and the scalability and cost-efficiency of cloud storage, ensuring the storage infrastructure can grow with the AI application needs.

Data durability and availability

Ensuring the durability and availability of data is critical for AI systems. Data is the backbone of any AI application, and its loss or unavailability can lead to significant setbacks in development and performance. Storage solutions must, therefore, provide robust data protection mechanisms and redundancies to safeguard against data loss. Additionally, high availability is essential to ensure that data is always accessible when needed, particularly for AI applications that require continuous operation. Implementing a storage system with built-in redundancy, failover capabilities, and disaster recovery plans is essential to maintain continuous data availability and integrity.

In the context of AI where data is continually ingested, processed, and analyzed, the demands on storage solutions are unique and challenging. Key considerations include maintaining high throughput and low latency for real-time processing, establishing scalability and flexibility to adapt to growing data volumes, and ensuring data durability and availability to support continuous operation. Addressing these demands is critical for businesses aiming to leverage AI technologies effectively, paving the way for innovation and success in the digital era.

What needs to be stored for AI?

The evolution of AI and its underlying models depends significantly on various types of data and artifacts generated and used throughout its lifecycle. Understanding what needs to be stored is crucial for ensuring the efficiency and effectiveness of AI applications.

Raw data

Raw data forms the foundation of AI training. It's the unmodified, unprocessed information gathered from diverse sources. For AI models, this data can be in the form of text, images, audio, video, or sensor readings. Storing vast amounts of raw data is essential as it provides the primary material for model training and the initial step toward generating actionable insights.

Preprocessed data

Once raw data is collected, it undergoes preprocessing to transform it into a more suitable format for training AI models. This process includes cleaning, normalization, and transformation. As a refined version of raw data, preprocessed data needs to be stored efficiently to streamline further processing steps, saving time and computational resources.
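
As a rough illustration of the cleaning and normalization steps described above, the following minimal sketch uses only the Python standard library. The function names `clean` and `min_max_normalize` and the sample records are hypothetical and chosen for illustration; real pipelines typically rely on dedicated data-processing libraries.

```python
def clean(records):
    """Cleaning step: drop any record that contains a missing (None) value."""
    return [r for r in records if all(v is not None for v in r)]


def min_max_normalize(records):
    """Normalization step: scale each column into the [0, 1] range."""
    columns = list(zip(*records))
    lows = [min(c) for c in columns]
    highs = [max(c) for c in columns]
    return [
        [
            (v - lo) / (hi - lo) if hi > lo else 0.0  # constant column -> 0.0
            for v, lo, hi in zip(row, lows, highs)
        ]
        for row in records
    ]


# Hypothetical raw sensor readings; the second record has a missing value.
raw = [(10.0, 200.0), (20.0, None), (30.0, 400.0), (20.0, 300.0)]
preprocessed = min_max_normalize(clean(raw))
```

Storing `preprocessed` alongside `raw` means later training runs can skip the cleaning and scaling work, which is the time saving the paragraph above refers to.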

Training datasets

Training datasets are a selection of preprocessed data used to teach AI models how to make predictions or perform tasks. These datasets must be diverse and comprehensive, representing real-world scenarios accurately. Storing these datasets allows AI models to learn and adapt to the complexities of the tasks they are designed to perform.

Validation and test datasets

Validation and test datasets are critical for evaluating an AI model's performance. These datasets are separate from the training data and are used to tune the model's parameters and test its generalizability to new, unseen data. Proper storage of these datasets ensures that models are both accurate and reliable.
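
One common way to keep training, validation, and test data separate is a seeded random split. The sketch below is a simplified illustration, not a prescribed method; the function name `split_dataset`, the 80/10/10 proportions, and the seed are assumptions.

```python
import random


def split_dataset(samples, train_frac=0.8, val_frac=0.1, seed=42):
    """Shuffle once with a fixed seed, then cut into three disjoint subsets."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains becomes the test set
    return train, val, test


# Hypothetical dataset of 100 sample IDs.
train, val, test = split_dataset(range(100))
```

Persisting the three subsets, or the seed that produced them, keeps the test data unseen across repeated experiments.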

Model parameters and weights

An AI model learns to make decisions through its parameters and weights. These elements are fine-tuned during training and are crucial for the model's decision-making processes. Storing these parameters and weights allows models to be reused, updated, or refined without retraining from scratch.
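
To make the idea of reusing stored weights concrete, here is a minimal checkpoint sketch. It assumes weights small enough to serialize as JSON; the helper names, the file name, and the weight values are hypothetical, and production frameworks use their own binary checkpoint formats.

```python
import json
import tempfile
from pathlib import Path


def save_checkpoint(path, weights, step):
    """Write the weights and the training step to a JSON file."""
    path.write_text(json.dumps({"step": step, "weights": weights}))


def load_checkpoint(path):
    """Read a checkpoint back and return (weights, step)."""
    checkpoint = json.loads(path.read_text())
    return checkpoint["weights"], checkpoint["step"]


# Hypothetical weights for a tiny model, saved to a temporary directory.
original_weights = {"layer1": [0.1, -0.2], "bias": [0.05]}
with tempfile.TemporaryDirectory() as tmp:
    ckpt_path = Path(tmp) / "model_step_100.json"
    save_checkpoint(ckpt_path, original_weights, step=100)
    restored_weights, restored_step = load_checkpoint(ckpt_path)
```

Training can then resume from `restored_step` with `restored_weights` instead of starting over.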

Model architecture

The architecture of an AI model defines its structure, including the arrangement of layers and the connections between them. Storing the model architecture is essential for understanding how the model processes data and for replicating or scaling the model in future projects.

Hyperparameters

Hyperparameters are the configuration settings used to optimize model performance. Unlike parameters, hyperparameters are not learned from the data but set prior to the training process. Storing hyperparameter values is necessary for model replication and comparison of model performance across different configurations.
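
One possible way to store hyperparameters so that runs can be compared is to serialize them in a canonical form and derive a short identifier from the result. The sketch below illustrates that idea; the function name `config_id` and the hyperparameter values are hypothetical.

```python
import hashlib
import json


def config_id(hyperparams):
    """Return a short, stable ID for a hyperparameter set.

    Sorting the keys makes the ID independent of dictionary ordering.
    """
    canonical = json.dumps(hyperparams, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Hypothetical runs: run_a and run_b hold the same settings in a different order.
run_a = {"learning_rate": 0.001, "batch_size": 64, "epochs": 10}
run_b = {"epochs": 10, "batch_size": 64, "learning_rate": 0.001}
run_c = {"learning_rate": 0.01, "batch_size": 64, "epochs": 10}

same_config = config_id(run_a) == config_id(run_b)
different_config = config_id(run_a) != config_id(run_c)
```

Using the ID as part of a results file name is one way to tie stored metrics back to the exact configuration that produced them.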

Feature engineering artifacts

Feature engineering involves creating new input features from the existing data to improve model performance. The artifacts from this process, including the newly created features and the logic used to generate them, need to be stored. This ensures consistency and reproducibility in model training and deployment.

Results and metrics

The results and metrics obtained from model training, validation, and testing provide insights into model performance and effectiveness. Storing these results allows for continuous monitoring, comparison, and improvement of AI models over time.

Inference data

Inference data refers to new, unseen data that the model processes to make predictions or decisions after training. Storing inference data is key for analyzing the model's real-world application and performance and making necessary adjustments based on feedback.

Embeddings

Embeddings are dense representations of high-dimensional data in lower-dimensional spaces. They play a crucial role in processing textual data, images, and more. Storing embeddings allows for more efficient computation and retrieval of similar items, enhancing model performance in recommendation systems and natural language processing tasks.
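
The retrieval of similar items mentioned above is often done by comparing stored embeddings with cosine similarity. The sketch below shows the idea with three-dimensional toy vectors; the vectors, labels, and function names are hypothetical, and real systems usually use vector databases or approximate nearest-neighbor indexes.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query, store):
    """Return the key in `store` whose embedding is closest to `query`."""
    return max(store, key=lambda name: cosine_similarity(query, store[name]))


# Hypothetical stored embeddings.
embeddings = {
    "cat": [0.9, 0.1, 0.0],
    "dog": [0.8, 0.2, 0.1],
    "car": [0.0, 0.1, 0.9],
}
nearest = most_similar([1.0, 0.0, 0.0], embeddings)
```

Because the embeddings are stored, only the query needs to be embedded at lookup time.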

Code and scripts

The code and scripts used to create, train, and deploy AI models are essential for understanding and replicating the entire AI process. Storing this information ensures that models can be retrained, refined, or debugged as necessary.

Documentation and metadata

Documentation and metadata provide context, guidelines, and specifics about the AI model, including its purpose, design decisions, and operating conditions. Proper storage of this information supports ethical AI practices, model interpretability, and compliance with regulatory standards.

Challenges of unstructured data in AI

In the realm of AI, handling unstructured data presents a unique set of challenges that must be navigated carefully to harness its full potential. As AI systems strive to mimic human understanding, they face the intricate task of processing and deriving meaningful insights from data that lacks a predefined format. This section delves into the core challenges associated with unstructured data in AI, primarily focusing on data variety, volume, and velocity.

Data variety

Data variety refers to the myriad types of unstructured data that AI systems are expected to process, ranging from texts and emails to images, videos, and audio files. Each data type possesses its unique characteristics and demands specific preprocessing techniques to be effectively analyzed by AI models.

- Richer Insights but Complicated Processing: While the diverse data types can provide richer insights and enhance model accuracy, they significantly complicate the data preprocessing phase. AI tools must be equipped with sophisticated algorithms to identify, interpret, and normalize various data formats.

- Innovative AI Applications: The advantage of mastering data variety lies in the development of innovative AI applications. By handling unstructured data from different domains, AI can contribute to advancements in natural language processing, computer vision, and beyond.

Data volume

The sheer volume of unstructured data generated daily is staggering. As digital interactions increase, so does the amount of data that AI systems need to analyze.

- Scalability Challenges: The exponential growth in data volume poses scalability challenges for AI systems. Storage solutions must not only accommodate current data needs but also be flexible enough to scale with future demands.

- Efficient Data Processing: AI must leverage parallel processing and cloud storage options to keep up with the volume. Systems designed for high-throughput data analysis enable quicker insights, which are essential for timely decision-making and maintaining relevance in a rapidly evolving digital landscape.
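
As a back-of-the-envelope illustration of why storage must scale with future demand, the following sketch projects capacity under compound annual growth. The starting capacity, growth rate, and function name are hypothetical planning inputs, not measured figures.

```python
def project_capacity_tb(current_tb, annual_growth, years):
    """Project required capacity for each year, assuming compound growth."""
    return [
        round(current_tb * (1 + annual_growth) ** year, 1)
        for year in range(years + 1)
    ]


# Hypothetical: 500 TB today, growing 40% per year, projected over three years.
projection = project_capacity_tb(current_tb=500, annual_growth=0.40, years=3)
```

Under these assumed inputs, the required capacity nearly triples within three years, which is why the ability to add capacity incrementally matters.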

Data velocity

Data velocity refers to the speed at which new data is generated and the pace at which it needs to be processed to remain actionable. In the age of real-time analytics and instant customer feedback, high data velocity is both an opportunity and a challenge for AI.

- Real-Time Processing Needs: AI systems are increasingly required to process information in real-time or near-real-time to provide timely insights. This necessitates robust computational infrastructure and efficient data streaming technologies.

- Constant Adaptation: The dynamic nature of unstructured data, coupled with its high velocity, demands that AI systems constantly adapt and learn from new information. Maintaining accuracy and relevance in fast-moving data environments is critical for effective AI performance.

In addressing these challenges, AI and ML technologies are continually evolving, developing more sophisticated systems capable of handling the complexity of unstructured data. The key to unlocking the value hidden within this data lies in innovative approaches to data management where flexibility, scalability, and speed are paramount.

Strategies to manage unstructured data in AI

The explosion of unstructured data poses unique challenges for AI applications. Organizations must adopt effective data management strategies to harness the full potential of AI technologies. In this section, we delve into key strategies like data classification and tagging and the use of PowerScale clusters to efficiently manage unstructured data in AI.

Data classification and tagging

Data classification and tagging are foundational steps in organizing unstructured data and making it more accessible for AI applications. This process involves identifying the content and context of data and assigning relevant tags or labels, which is crucial for enhancing data discoverability and usability in AI systems.

- Automated tagging tools can significantly reduce the manual effort required to label data, employing AI algorithms to understand the content and context automatically.

- Custom metadata tags allow for the creation of a rich set of file classification information. This not only aids in the classification phase but also simplifies later iterations and workflow automation.

- Effective data classification enhances data security by accurately categorizing sensitive or regulated information, enabling compliance with data protection regulations.
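
The tagging ideas above can be sketched as a simple rule-based classifier that builds a metadata catalog. This is only an illustration: the extension map, the sensitive keywords, the paths, and the function name are all hypothetical, and it does not represent any PowerScale or OneFS API.

```python
from pathlib import PurePosixPath

# Hypothetical mapping from file extension to a data-type tag.
EXTENSION_TAGS = {
    ".jpg": "image",
    ".png": "image",
    ".wav": "audio",
    ".mp4": "video",
    ".txt": "text",
    ".csv": "tabular",
}


def tag_file(path, sensitive_keywords=("patient", "payroll")):
    """Assign a data-type tag and a sensitivity flag based on the file name."""
    p = PurePosixPath(path)
    return {
        "type": EXTENSION_TAGS.get(p.suffix.lower(), "unknown"),
        "sensitive": any(word in p.name.lower() for word in sensitive_keywords),
    }


# Hypothetical file paths; the catalog maps each path to its tags.
paths = [
    "/data/raw/scan_001.PNG",
    "/data/raw/patient_notes.txt",
    "/data/raw/readme.md",
]
catalog = {path: tag_file(path) for path in paths}
```

An AI-based tagger would replace the keyword rule with a model, but the resulting catalog of path-to-tag mappings would look much the same.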

Implementing these strategies for managing unstructured data prepares organizations for the challenges of today's data landscape and positions them to capitalize on the opportunities presented by AI technologies. By prioritizing data classification and leveraging solutions like PowerScale clusters, businesses can build a strong foundation for AI-driven innovation.

Best practices for implementing AI storage solutions

Implementing the right AI storage solutions is crucial for businesses seeking to harness the power of artificial intelligence. With the explosive growth of unstructured data, adhering to best practices that optimize performance, scalability, and cost is imperative. This section delves into key practices to ensure your AI storage infrastructure meets the demands of modern AI workloads.

Assess workload requirements

Before diving into storage solutions, one must thoroughly assess AI workload requirements. Understanding the specific needs of your AI applications—such as the volume of data, the necessity for high throughput/low latency, and the scalability and availability requirements—is fundamental. This step ensures you select the most suitable storage solution that meets your application's needs.

AI workloads are diverse, with each having unique demands on storage infrastructure. For instance, training a machine learning model could require rapid access to vast amounts of data, whereas inference workloads may prioritize low latency. An accurate assessment leads to an optimized infrastructure, ensuring that storage solutions are neither overprovisioned nor underperforming, thereby supporting AI applications efficiently and cost-effectively.
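
A workload assessment often starts with simple arithmetic. The sketch below estimates the sustained read throughput a training job would need if it reads the full dataset once per epoch within a time budget. All inputs and the function name are hypothetical, and the estimate ignores caching, data reuse, and checkpoint writes.

```python
def required_read_gb_per_s(dataset_tb, epochs, hours):
    """Sustained read rate in GB/s (decimal units) needed to read the
    dataset `epochs` times within `hours`."""
    total_gb = dataset_tb * 1000 * epochs
    return total_gb / (hours * 3600)


# Hypothetical job: 50 TB dataset, 10 epochs, 24-hour training window.
estimate = required_read_gb_per_s(dataset_tb=50, epochs=10, hours=24)
```

For these assumed inputs the result is a little under 6 GB/s, a figure that can then be compared against what a candidate storage configuration can sustain.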

Leverage PowerScale

For managing large volumes and varieties of unstructured data, PowerScale clusters offer a scalable and efficient solution. PowerScale nodes are designed to handle the complexities of AI and machine learning workloads, offering optimized performance, scalability, and data mobility. Clusters built from these nodes allow organizations to store and process vast amounts of data efficiently for a range of AI use cases, for the following reasons:

- Scalability is a key feature, with PowerScale clusters capable of growing with the organization's data needs. They support massive capacities, allowing businesses to store petabytes of data seamlessly.

- Performance is optimized for the demanding workloads of AI applications with the ability to process large volumes of data at high speeds, reducing the time for data analyses and model training.

- Data mobility across PowerScale clusters on-premises and in the cloud ensures that data can be accessed when and where needed, supporting various AI and machine learning use cases across different environments.

PowerScale clusters allow businesses to start small and grow capacity as needed, ensuring that storage infrastructure can scale alongside AI initiatives without compromising on performance. The ability to handle multiple data types and protocols within a single storage infrastructure simplifies management and reduces operational costs, making PowerScale nodes an ideal choice for dynamic AI environments.

Utilize PowerScale OneFS 9.7.0.0

PowerScale OneFS 9.7.0.0 is the latest version of the Dell PowerScale operating system for scale-out network-attached storage (NAS). OneFS 9.7.0.0 introduces several enhancements in data security, performance, cloud integration, and usability.

OneFS 9.7.0.0 extends and simplifies the PowerScale offering in the public cloud, providing more features across various instance types and regions. Some of the key features in OneFS 9.7.0.0 include:

- Cloud Innovations: Extends cloud capabilities and features, building upon the debut of APEX File Storage for AWS

- Performance Enhancements: Enhancements to overall system performance

- Security Enhancements: Enhancements to data security features

- Usability Improvements: Enhancements to make managing and using PowerScale easier

Employ PowerScale F210 and F710

PowerScale, through its continuous innovation, extends into the AI era by introducing the next generation of PowerEdge-based nodes: the PowerScale F210 and F710. These new all-flash nodes leverage the Dell PowerEdge R660 from the PowerEdge platform, unlocking enhanced performance capabilities.

On the software front, both the F210 and F710 nodes benefit from significant performance improvements in PowerScale OneFS 9.7. By combining these hardware and software advancements, the nodes address the most demanding workloads and are well suited to a wide range of use cases. For more information on the F210 and F710, see PowerScale All-Flash F210 and F710 | Dell Technologies Info Hub.

Ensure data security and compliance

Given the sensitivity of the data used in AI applications, robust security measures are paramount. Businesses must implement comprehensive security strategies that include encryption, access controls, and adherence to data protection regulations. Safeguarding data protects sensitive information and reinforces customer trust and corporate reputation.

Compliance with data protection laws and regulations is critical to AI storage solutions. As regulations can vary significantly across regions and industries, understanding and adhering to these requirements is essential to avoid significant fines and legal challenges. By prioritizing data security and compliance, organizations can mitigate risks associated with data breaches and non-compliance.

Monitor and optimize

Continuous monitoring and optimization of the storage environment are essential for maintaining high performance and efficiency. Monitoring tools can provide insights into usage patterns, performance bottlenecks, and potential security threats, enabling proactive management of the storage infrastructure.

Regular optimization efforts can help fine-tune storage performance, ensuring that the infrastructure remains aligned with the evolving needs of AI applications. Optimization might involve adjusting storage policies, reallocating resources, or upgrading hardware to improve efficiency, reduce costs, and ensure that storage solutions continue to effectively meet the demands of AI workloads.
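
As a very small example of proactive monitoring, the sketch below checks how full a mounted file system is and flags it when usage crosses a threshold. It uses only Python's standard `shutil.disk_usage` call; the 80% threshold, the function name, and the report layout are assumptions, and a real deployment would rely on the storage platform's own monitoring tools.

```python
import shutil


def capacity_report(path, warn_at=0.80):
    """Report the used fraction of the file system holding `path`
    and whether it has reached the warning threshold."""
    usage = shutil.disk_usage(path)
    used_fraction = usage.used / usage.total if usage.total else 0.0
    return {
        "path": path,
        "used_fraction": round(used_fraction, 4),
        "warn": used_fraction >= warn_at,
    }


# Check the file system that holds the current working directory.
report = capacity_report(".")
```

Running a check like this on a schedule and recording the results over time yields the usage patterns that inform later optimization decisions.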

By following these best practices, businesses can build and maintain a storage infrastructure that supports their current AI applications and is poised for future growth and innovation.

Conclusion

Navigating the complexities of unstructured storage demands for AI is no small feat. Yet, by adhering to the outlined best practices, businesses stand to benefit greatly. The foundational steps include assessing workload requirements, selecting the right storage solutions, and implementing robust security measures. Furthermore, integrating PowerScale nodes and a commitment to continuous monitoring and optimization are key to sustaining high performance and efficiency. As the landscape of AI continues to evolve, these practices will not only support current applications but also pave the way for future growth and innovation. In the dynamic world of AI, staying ahead means being prepared, and these strategies offer a roadmap to success.

Frequently asked questions

How big are AI data centers?

Data centers catering to AI, such as those by Amazon and Google, are immense, comparable to the scale of football stadiums.

How does AI process unstructured data?

AI processes unstructured data including images, documents, audio, video, and text by extracting and organizing information. This transformation turns unstructured data into actionable insights, propelling business process automation and supporting AI applications.

How much storage does an AI need?

AI applications, especially those involving extensive data sets, might require significant system memory, potentially 1 TB or more. That much memory allows entire data sets to be processed and statistically analyzed efficiently.

Can AI handle unstructured data?

Yes, AI is capable of managing both structured and unstructured data types from a variety of sources. This flexibility allows AI to analyze and draw insights from an expansive range of data, further enhancing its utility across diverse applications.

Author: Aqib Kazi, Senior Principal Engineer, Technical Marketing