Scaling and Optimizing ML in Enterprises

Tue, 16 May 2023 19:53:46 -0000


Summary

This joint paper, written by Dell Technologies, in collaboration with Intel®, describes the key hardware considerations when configuring a successful MLOps deployment and recommends configurations based on the most recent 15th Generation Dell PowerEdge Server portfolio offerings.

Today’s enterprises are looking to operationalize machine learning to accelerate and scale data science across the organization. This is especially the case as their needs grow to deploy, monitor, and maintain data pipelines and models. Cloud native infrastructure, such as Kubernetes, offers a fast and scalable means to implement Machine Learning Operations (MLOps) by using Kubeflow, an open source platform for developing and deploying Machine Learning (ML) pipelines on Kubernetes.

Dell PowerEdge R650 servers with 3rd Generation Intel® Xeon® Scalable processors deliver a scalable, portable, and cost-effective solution to implement and operationalize machine learning within the enterprise.

Key Considerations

- Portability. A single end-to-end platform to meet the machine learning needs of various use cases, including predictive analytics, inference, and transfer learning.

- Optimized performance. High-performance 3rd Generation Intel® Xeon® Scalable processors optimize performance for machine learning algorithms using AVX-512. Intel® performance optimizations built into Dell PowerEdge servers can help fine-tune large Transformer models across multi-node systems. These optimizations work in conjunction with open-source, cloud native MLOps tools and include Intel® and open-source software and hardware technologies such as the Kubernetes stack, AVX-512, Horovod for distributed training, and TensorFlow 2.10.0.

- Scalability. As the machine learning workload grows, additional compute capacity needs to be added to the cloud native infrastructure. Dell PowerEdge R750 servers with 3rd Generation Intel® Xeon® Scalable processors deliver an efficient and scalable approach to MLOps.
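Horovod, listed above for distributed training, runs data-parallel training: each worker computes gradients on its own shard of the data, the gradients are averaged across all workers with an all-reduce, and every worker then applies the same update. Horovod was designed around the ring all-reduce pattern. The following pure-Python simulation is a minimal sketch of that pattern for illustration only; it does not use Horovod's API, and `ring_allreduce_mean` is a hypothetical name.

```python
from typing import List


def ring_allreduce_mean(grads: List[List[float]]) -> List[List[float]]:
    """Simulate ring all-reduce across len(grads) workers; return each worker's averaged gradient."""
    if not grads:
        return []
    n = len(grads)
    length = len(grads[0])
    # Split the gradient vector into n contiguous chunks (some may be empty if length < n).
    bounds = [(length * k) // n for k in range(n + 1)]
    # chunks[w][k] is worker w's current copy of chunk k (slicing copies the input).
    chunks = [[g[bounds[k]:bounds[k + 1]] for k in range(n)] for g in grads]

    # Phase 1, reduce-scatter: in step s, worker w sends chunk (w - s) mod n to its
    # right-hand neighbour, which adds it to its own copy. After n - 1 steps,
    # worker w holds the complete sum for chunk (w + 1) mod n.
    for step in range(n - 1):
        messages = [((w + 1) % n, (w - step) % n, list(chunks[w][(w - step) % n])) for w in range(n)]
        for dst, k, data in messages:
            chunks[dst][k] = [a + b for a, b in zip(chunks[dst][k], data)]

    # Phase 2, all-gather: each fully reduced chunk travels once around the ring,
    # overwriting the partial copies held by the other workers.
    for step in range(n - 1):
        messages = [((w + 1) % n, (w + 1 - step) % n, list(chunks[w][(w + 1 - step) % n])) for w in range(n)]
        for dst, k, data in messages:
            chunks[dst][k] = data

    # Every worker now holds identical summed chunks; divide by n to get the mean.
    return [[x / n for k in range(n) for x in chunks[w][k]] for w in range(n)]


if __name__ == "__main__":
    # Two hypothetical workers, each holding a 4-element gradient.
    print(ring_allreduce_mean([[1.0, 2.0, 3.0, 4.0], [3.0, 4.0, 5.0, 6.0]]))
    # prints [[2.0, 3.0, 4.0, 5.0], [2.0, 3.0, 4.0, 5.0]]
```

In the ring pattern each worker sends and receives 2(n − 1) chunks of roughly L/n elements, so per-worker traffic stays close to 2L regardless of how many workers join; this is why adding data plane nodes does not multiply each node's network load.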

Recommended Configurations

| Cluster | Control Plane Nodes (three nodes required) | Data Plane Nodes (four or more nodes) |
|---|---|---|
| Functions | Kubernetes services | Develop, deploy, and run machine learning (ML) workflows |
| Platform | Dell PowerEdge R650 with up to 10x 2.5” NVMe Direct Drives | Dell PowerEdge R650 with up to 10x 2.5” NVMe Direct Drives |
| CPU | 2x Intel® Xeon® Gold 6326 processor (16 cores @ 2.9 GHz), or better | 2x Intel® Xeon® Platinum 8380 processor (40 cores @ 2.3 GHz), or 2x Intel® Xeon® Platinum 8368 processor (38 cores @ 2.4 GHz), or 2x Intel® Xeon® Platinum 8360Y processor (36 cores @ 2.4 GHz) |
| DRAM | 128 GB (16x 8 GB DDR4-3200) | 512 GB (16x 32 GB DDR4-3200) |
| Boot device | Dell Boot Optimized Server Storage (BOSS)-S2 with 2x 240 GB or 2x 480 GB Intel® SSD S4510 M.2 SATA (RAID1) | Dell Boot Optimized Server Storage (BOSS)-S2 with 2x 240 GB or 2x 480 GB Intel® SSD S4510 M.2 SATA (RAID1) |
| Storage adapter | Not required for all-NVMe configurations | Not required for all-NVMe configurations |
| Storage (NVMe) | 1x 1.6 TB Enterprise NVMe Mixed-Use AG Drive U.2 Gen4 | 1x 1.6 TB (or larger) Enterprise NVMe Mixed-Use AG Drive U.2 Gen4 |
| NIC | Intel® E810-XXVDA2 for OCP3 (dual-port 25GbE) | Intel® E810-XXVDA2 for OCP3 (dual-port 25GbE), or Intel® E810-CQDA2 PCIe (dual-port 100GbE) |
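Before deployment, an inventory can be checked against the node counts and per-node DRAM in the table above (16x 8 GB = 128 GB for control plane nodes, 16x 32 GB = 512 GB for data plane nodes). The following is a minimal sketch under stated assumptions: the `Node` record, the role labels, and `check_cluster` are hypothetical, and "three nodes required" is read as exactly three control plane nodes.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Node:
    """Hypothetical inventory record for one server in the MLOps cluster."""
    name: str
    role: str  # "control-plane" or "data-plane" (labels chosen for this sketch)
    dimm_count: int
    dimm_gb: int

    @property
    def dram_gb(self) -> int:
        return self.dimm_count * self.dimm_gb


# Per-node DRAM from the recommended configurations: 16x 8 GB and 16x 32 GB.
MIN_DRAM_GB = {"control-plane": 16 * 8, "data-plane": 16 * 32}


def check_cluster(nodes: List[Node]) -> List[str]:
    """Return human-readable deviations from the recommended node counts and DRAM sizes."""
    problems: List[str] = []
    control = [n for n in nodes if n.role == "control-plane"]
    data = [n for n in nodes if n.role == "data-plane"]
    if len(control) != 3:
        problems.append(f"expected 3 control-plane nodes, found {len(control)}")
    if len(data) < 4:
        problems.append(f"expected at least 4 data-plane nodes, found {len(data)}")
    for node in nodes:
        minimum = MIN_DRAM_GB.get(node.role)
        if minimum is None:
            problems.append(f"{node.name}: unknown role {node.role!r}")
        elif node.dram_gb < minimum:
            problems.append(f"{node.name}: {node.dram_gb} GB DRAM is below the recommended {minimum} GB")
    return problems


if __name__ == "__main__":
    cluster = [Node(f"cp{i}", "control-plane", 16, 8) for i in range(3)]
    cluster += [Node(f"dp{i}", "data-plane", 16, 32) for i in range(4)]
    print(check_cluster(cluster))  # prints [] for a cluster that matches the table
```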

Resources

Visit the Dell support page, or contact your Dell or Intel account team for a customized quote at 1-877-289-3355.

Related Documents

Powering AI using Red Hat OpenShift with Intel-based PowerEdge servers

Fri, 13 Oct 2023 14:42:09 -0000


End-to-End AI using OpenShift Overview

At the top of this webpage are three PDF files outlining test results and reference configurations for Dell PowerEdge servers using 3rd Generation and 4th Generation Intel® Xeon® Scalable processors. All testing was conducted in Dell Labs by Intel and Dell engineers in May and June of 2023.

- “Dell DfD E2E AI ICX” – highlights the recommended configurations for Dell PowerEdge servers using 3rd generation Intel Xeon processors.

- “Dell DfD E2E AI SPR” – highlights the recommended configurations for Dell PowerEdge servers using 4th generation Intel Xeon processors.

- “DfD – PowerEdge E2E AI Test Report” – highlights the results of performance testing on both configurations, with comparisons of their performance and power consumption.

Solution Overview

Red Hat OpenShift, the industry's leading hybrid cloud application platform powered by Kubernetes, brings together tested and trusted services to reduce the friction of developing, modernizing, deploying, running, and managing applications. OpenShift delivers a consistent experience across public cloud, on-premises, hybrid cloud, and edge architectures.[i]

Companies using OpenShift[ii]

- 50% of Fortune Global 500 aerospace and defense companies.

- 57% of Fortune Global 500 technology companies.

- 51% of Fortune Global 500 financial companies.

- 80% of Fortune Global 500 telecommunications companies.

- 54% of Fortune Global 500 motor vehicles and parts companies.

- 50% of Fortune Global 500 food and drug stores.

Benefits of Dell PowerEdge and Intel processors for AI on OpenShift

New server technologies let customers deploy solutions that use newly introduced functionality, and they also give customers an opportunity to review their current infrastructure and determine whether the new technology can increase performance and efficiency. With this in mind, Dell and Intel recently conducted natural language processing (NLP) AI performance testing of a Red Hat OpenShift solution on the new Dell PowerEdge R760 with 4th Generation Intel® Xeon® Scalable processors, and compared the results to the same solution running on the previous-generation R750 with 3rd Generation Intel® Xeon® Scalable processors, to determine whether customers could benefit from a transition.

Key changes in the 4th Generation Intel® Xeon® Scalable platform and software stack used for this test included:

- New Intel® Advanced Matrix Extensions (AMX) capabilities

- Improved Intel® Advanced Vector Extensions (AVX) performance

- The new open-source Intel® Extension for TensorFlow®
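Whether a given node exposes AVX-512 and AMX can be checked from the `flags` line of `/proc/cpuinfo` on Linux; AMX is present on 4th Generation Xeon Scalable processors but not on 3rd Generation parts, and the running kernel must support a feature for it to be listed. The following is a minimal sketch, assuming a Linux host: the flag names are the kernel's `/proc/cpuinfo` names, while `detect_features` and the feature labels are hypothetical.

```python
from typing import Dict

# Linux /proc/cpuinfo flag names for the instruction-set features discussed above.
FEATURE_FLAGS: Dict[str, str] = {
    "AVX-512 Foundation": "avx512f",
    "AVX-512 VNNI": "avx512_vnni",
    "AMX tiles": "amx_tile",
    "AMX BF16": "amx_bf16",
    "AMX INT8": "amx_int8",
}


def detect_features(cpuinfo_text: str) -> Dict[str, bool]:
    """Report which FEATURE_FLAGS appear in the first 'flags' line of /proc/cpuinfo text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            flags.update(value.split())
            break  # on a homogeneous server every logical CPU lists the same flags
    return {name: flag in flags for name, flag in FEATURE_FLAGS.items()}


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo", encoding="utf-8") as f:
            text = f.read()
    except OSError:
        text = ""  # not Linux, or /proc is not mounted
    for name, present in detect_features(text).items():
        print(f"{name}: {'yes' if present else 'no'}")
```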

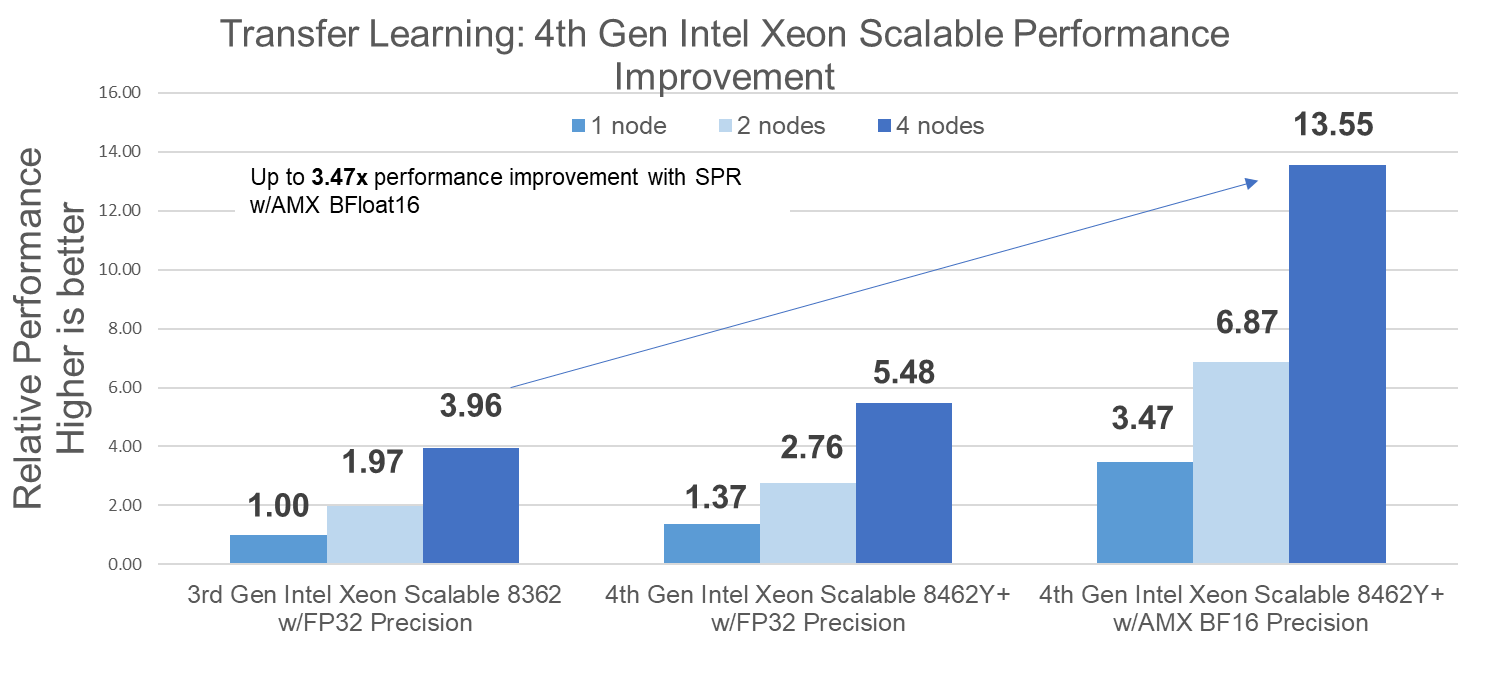

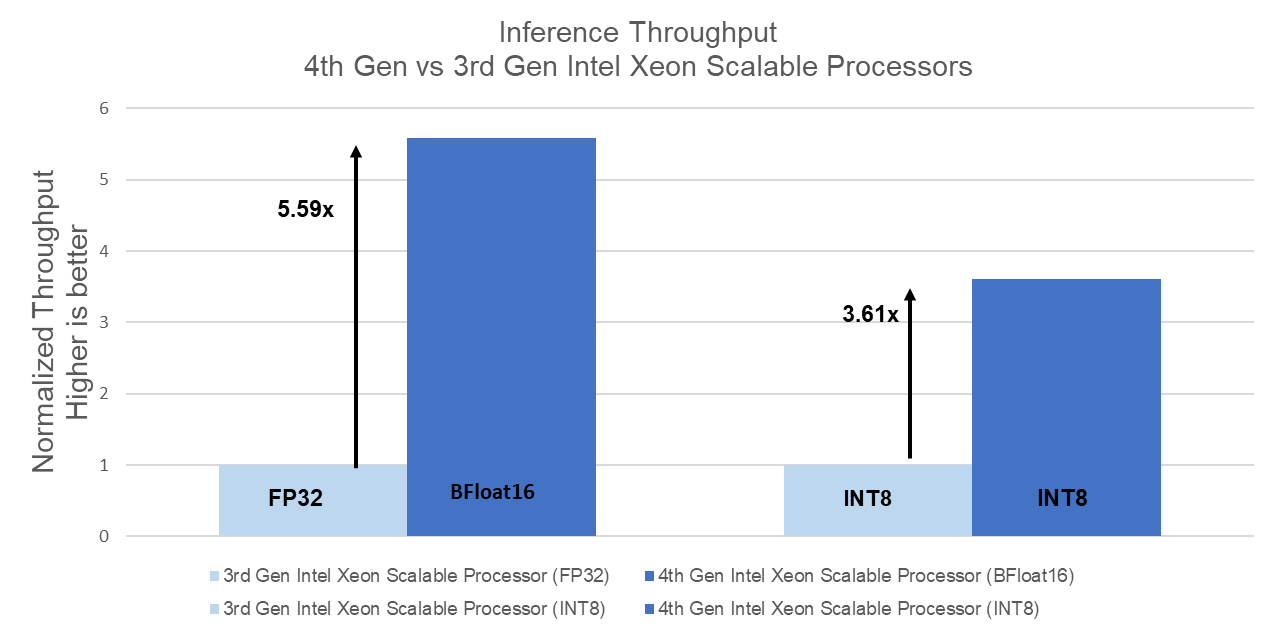

Raw performance: As noted in the report, our tests showed a 3.47x increase in transfer learning performance and a 5.59x increase in inferencing performance.

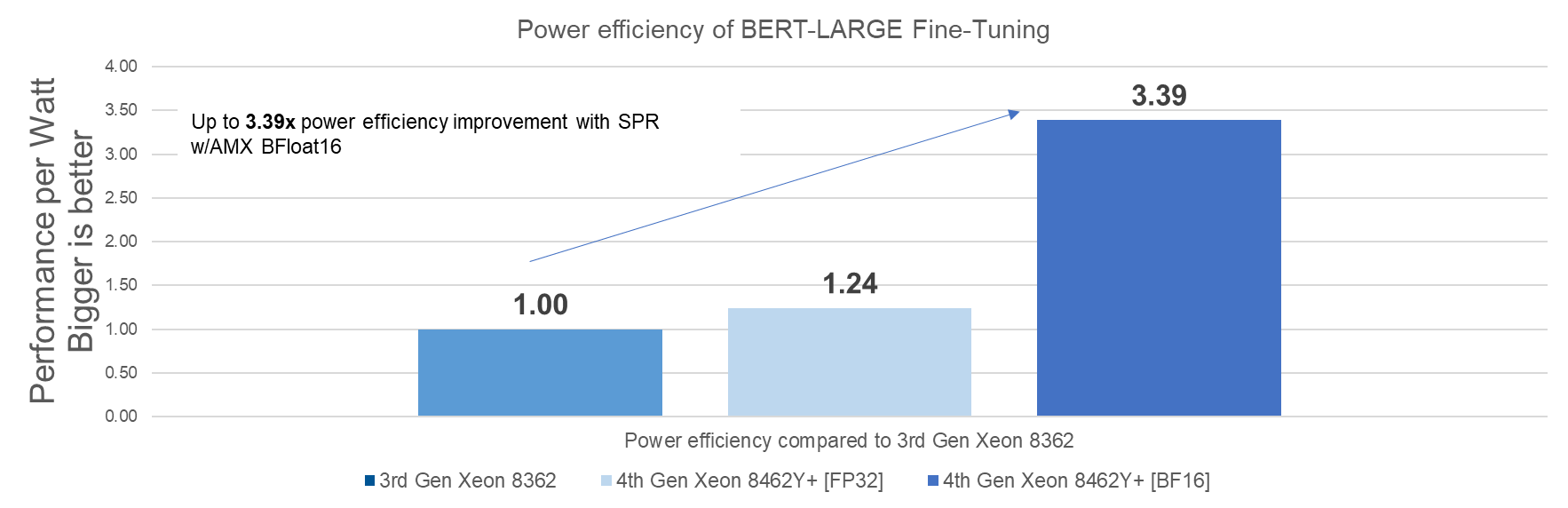

Relative power consumption: In addition to higher performance, the R760-based solution delivered up to 3.39x better performance per watt than the previous generation.
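Performance per watt is throughput divided by average power draw, and the relative figure is the ratio of the two systems' values. The following minimal sketch shows that arithmetic; the function names are hypothetical, and the report's exact measurement method (which power counters, averaging window) is not described here.

```python
def perf_per_watt(throughput: float, avg_power_watts: float) -> float:
    """Throughput (e.g. samples/s) delivered per watt of average power."""
    if avg_power_watts <= 0:
        raise ValueError("average power must be positive")
    return throughput / avg_power_watts


def relative_efficiency(new_tput: float, new_watts: float, old_tput: float, old_watts: float) -> float:
    """How many times better the new system's performance per watt is than the old one's."""
    return perf_per_watt(new_tput, new_watts) / perf_per_watt(old_tput, old_watts)


def implied_power_ratio(throughput_ratio: float, efficiency_ratio: float) -> float:
    """P_new / P_old implied by a throughput ratio and a perf-per-watt ratio from the same runs.

    (T_new / P_new) / (T_old / P_old) = E  rearranges to  P_new / P_old = (T_new / T_old) / E.
    """
    return throughput_ratio / efficiency_ratio
```

As an illustration of the arithmetic only: if the 3.47x transfer learning throughput and the 3.39x performance per watt came from the same runs, `implied_power_ratio(3.47, 3.39)` (about 1.02) would mean the R760 drew roughly 2% more power than the R750 while doing about 3.5x the work. The summary does not state that both "up to" figures come from the same runs, so treat this as a reading aid, not a result.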

Conclusion

Choosing the right combination of server and processor can increase performance and reduce cost. As this testing demonstrated, the Dell PowerEdge R760 with 4th Generation Intel® Xeon® Platinum 8462Y+ CPUs delivered up to 5.59x more throughput than the Dell PowerEdge R750 with 3rd Generation Intel® Xeon® Platinum 8362 CPUs, and provided up to 3.39x better power efficiency.

An efficient, scalable, and optimized way to run enterprise AI pipelines on Intel hardware, using a full end-to-end OpenShift stack with Kubeflow:

- Up to 3.47x better transfer learning (fine-tuning) throughput than 3rd Gen Intel Xeon Scalable processors, with linear scaling on 1, 2, and 4 nodes

- Up to 3.39x higher transfer learning power efficiency than 3rd Gen Intel Xeon Scalable processors

- Up to 5.59x better inferencing performance than 3rd Gen Intel Xeon Scalable processors with FP32 precision, using the same core count

- Up to 3.61x performance improvement over 3rd Gen Intel Xeon Scalable processors with INT8 precision, using the same core count
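The FP32 and INT8 results differ in numeric precision: INT8 inference stores tensors as 8-bit integers plus a scale factor, so matrix math can run on integer units such as AVX-512 VNNI or AMX INT8. The following is a minimal sketch of symmetric, per-tensor INT8 quantization, one common scheme; the report does not say which scheme or calibration was used, and production frameworks use more elaborate methods. The function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127] plus one scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid dividing by zero for all-zero input
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Approximate reconstruction of the original values."""
    return [x * scale for x in q]


if __name__ == "__main__":
    weights = [0.42, -1.3, 0.07, 0.9]  # hypothetical FP32 weights
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(q, scale, worst)  # rounding error is at most about scale / 2
```

The symmetric scheme keeps zero exactly representable and needs only one multiply to dequantize; the trade-off is that one large outlier inflates the scale and coarsens every other value in the tensor.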

[ii] Source: Fortune 500 subscription data as of 26 September 2022

Powering Your Elasticsearch on Kubernetes

Tue, 17 Jan 2023 08:32:07 -0000


Summary

This joint paper, written by Dell Technologies, in collaboration with Intel®, describes the key hardware considerations when configuring a successful Elasticsearch deployment and recommends configurations based on the most recent 15th Generation PowerEdge Server portfolio offerings.

Elasticsearch is a distributed, open-source search and analytics engine for all types of data, including textual, numerical, geospatial, structured, and unstructured data. This paper contains recommended configurations for Elasticsearch clusters on the Kubernetes platform (Red Hat OpenShift Container Platform with the Elastic Cloud on Kubernetes (ECK) operator) running on 15th Generation Dell PowerEdge servers with 3rd Generation Intel® Xeon® Scalable processors (Ice Lake).

Key Considerations

- Faster and scalable performance. Elasticsearch running on the latest Dell PowerEdge servers is built on high-performing Intel® architecture and configured with 3rd Generation Intel® Xeon® Scalable processors. Indexing is faster, and capacity can scale with your needs.

- Index more data. Elasticsearch can handle and store more data when DRAM capacity is increased and PCIe Gen 4 NVMe drives are attached to Dell PowerEdge servers.

- Reduced search times and more concurrent searches. As data grows and must be accessed across the cluster, data-access response times become critical, especially for real-time analytics applications. Intel® Ethernet network controllers, adapters, and accessories enable agility in the data center and support high throughput and low-latency response times.

- Easy and secure installation. The Elastic Cloud on Kubernetes (ECK) operator is an official Elasticsearch operator certified on Red Hat OpenShift Container Platform, providing easy deployment, management, and operation of Elasticsearch, Kibana, APM Server, Beats, and Enterprise Search on OpenShift clusters. Elasticsearch clusters deployed using this operator are secure by default (encryption enabled and strong passwords generated).

- Multiple data tiers. As data grows, costs do not have to. With multiple data tiers, capacity can be extended and storage costs driven lower without performance loss. Each tier can be scaled independently by using larger drives, more nodes, or both, depending on customer needs.
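With the ECK operator, each data tier maps naturally to one `nodeSet` in an `Elasticsearch` resource, with tier membership set through the `node.roles` setting (`data_hot`, `data_warm`, `data_cold`). The following minimal sketch builds such a manifest as a Python dictionary and prints it as JSON, which `oc apply -f -` accepts. Assumptions: the cluster name and version are hypothetical, storage and pod templates are omitted, and adding the `data_content` role to the hot nodes is this sketch's choice, not something the configuration table specifies.

```python
import json
from typing import Any, Dict, List


def eck_manifest(name: str, version: str, hot: int, warm: int = 0, cold: int = 0) -> Dict[str, Any]:
    """Build an ECK Elasticsearch resource with one nodeSet per data tier."""
    if hot < 3:
        raise ValueError("the recommended configuration calls for at least three master/ingest/hot nodes")
    node_sets: List[Dict[str, Any]] = [{
        "name": "hot",
        "count": hot,
        # data_content is an assumption of this sketch: a cluster that uses tier roles
        # also needs somewhere to place content (non-time-series) indices.
        "config": {"node.roles": ["master", "ingest", "data_hot", "data_content"]},
    }]
    if warm > 0:
        node_sets.append({"name": "warm", "count": warm, "config": {"node.roles": ["data_warm"]}})
    if cold > 0:
        node_sets.append({"name": "cold", "count": cold, "config": {"node.roles": ["data_cold"]}})
    return {
        "apiVersion": "elasticsearch.k8s.elastic.co/v1",
        "kind": "Elasticsearch",
        "metadata": {"name": name},
        "spec": {"version": version, "nodeSets": node_sets},
    }


if __name__ == "__main__":
    # Hypothetical cluster name and version; pipe the output to `oc apply -f -`.
    print(json.dumps(eck_manifest("es-tiered", "8.5.0", hot=3, warm=2, cold=2), indent=2))
```

Keeping each tier in its own `nodeSet` lets the warm and cold tiers be resized independently, which matches the independent scaling described above.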

Available Configurations

| Elasticsearch cluster on Kubernetes (Red Hat OpenShift) | OpenShift Control Plane Master Nodes (three nodes required) | Elasticsearch Master / Ingest / Hot Tier Data Nodes (minimum of three nodes required) | Elasticsearch Warm Tier Data Nodes (optional) | Elasticsearch Cold Tier Data Nodes (optional) |
|---|---|---|---|---|
| Functions | OpenShift services, Kubernetes services | Elasticsearch roles: master, ingest, hot tier data; additional services, such as Kibana | Elasticsearch role: warm tier data | Elasticsearch role: cold tier data |
| Platform | Dell PowerEdge R650 chassis with up to 10x 2.5” NVMe Direct Drives | Dell PowerEdge R650 chassis with up to 10x 2.5” NVMe Direct Drives | Dell PowerEdge R650 chassis with up to 10x 2.5” NVMe Direct Drives | Dell PowerEdge R750 chassis with up to 12x 3.5” HDD with RAID |
| CPU | 2x Intel® Xeon® Gold 6326 processor (16 cores @ 2.9 GHz) or better | 2x Intel® Xeon® Gold 6338 processor (32 cores @ 2.0 GHz) | 2x Intel® Xeon® Gold 5318Y processor (24 cores @ 2.1 GHz) | 2x Intel® Xeon® Gold 5318N processor (24 cores @ 2.1 GHz) |
| DRAM | 128 GB (16x 8 GB DDR4-3200) | 256 GB (16x 16 GB DDR4-3200) | 128 GB (16x 8 GB DDR4-3200) | 128 GB (16x 8 GB DDR4-3200) |
| Boot device | Dell BOSS-S2 with 2x 240 GB or 2x 480 GB M.2 SATA SSD (RAID1) | Dell BOSS-S2 with 2x 240 GB or 2x 480 GB M.2 SATA SSD (RAID1) | Dell BOSS-S2 with 2x 240 GB or 2x 480 GB M.2 SATA SSD (RAID1) | Dell BOSS-S2 with 2x 240 GB or 2x 480 GB M.2 SATA SSD (RAID1) |
| Storage adapter | Not needed for all-NVMe configurations | Not needed for all-NVMe configurations | Not needed for all-NVMe configurations | Dell PERC H755 SAS/SATA RAID adapter |
| Storage (NVMe / HDD) | 1x 1.6 TB Enterprise NVMe Mixed-Use AG Drive U.2 Gen4 | 2x (up to 10x) 3.2 TB Enterprise NVMe Mixed-Use AG Drive U.2 Gen4 | 10x 7.68 TB Enterprise NVMe Read-Intensive AG Drive U.2 Gen4 | Up to 12x 16 TB / 18 TB / 20 TB 12 Gbps SAS ISE 3.5” HDD, 7200 RPM |
| NIC | Intel® E810-XXVDA2 for OCP3 (dual-port 25GbE) | Intel® E810-XXVDA2 for OCP3 (dual-port 25GbE) | Intel® E810-XXVDA2 for OCP3 (dual-port 25GbE) | Intel® E810-XXVDA2 for OCP3 (dual-port 25GbE) |
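The drive counts and sizes in the table above translate into raw capacity per tier, which can then be discounted for replica copies and free-space headroom. The following is a rough sizing sketch, not a Dell sizing method: the `Tier` record and `usable_primary_tb` are hypothetical, node counts are illustrative, RAID and filesystem overhead on the cold tier are ignored, and the 15% default headroom is an assumption that loosely mirrors Elasticsearch's default 85% low disk watermark.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    """Hypothetical description of one Elasticsearch data tier."""
    name: str
    nodes: int
    drives_per_node: int
    drive_tb: float

    def raw_tb(self) -> float:
        return self.nodes * self.drives_per_node * self.drive_tb


def usable_primary_tb(tier: Tier, replicas: int = 1, headroom: float = 0.15) -> float:
    """Rough primary-data capacity: raw space minus free-space headroom, divided by copies per shard."""
    if replicas < 0 or not 0.0 <= headroom < 1.0:
        raise ValueError("replicas must be >= 0 and headroom must be in [0, 1)")
    return tier.raw_tb() * (1.0 - headroom) / (1 + replicas)


if __name__ == "__main__":
    # Drive counts and sizes from the table; node counts are hypothetical.
    tiers = [
        Tier("hot", 3, 10, 3.2),
        Tier("warm", 3, 10, 7.68),
        Tier("cold", 2, 12, 20.0),
    ]
    for t in tiers:
        print(f"{t.name}: raw {t.raw_tb():.1f} TB, ~{usable_primary_tb(t):.1f} TB primary data with 1 replica")
```

Because each tier is sized separately, growing the warm or cold tier (larger drives or more nodes) changes only that tier's numbers, which is the independent scaling the "Multiple data tiers" consideration describes.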

Note: This document may contain language from third-party content that is not under Dell Technologies’ control and is not consistent with current guidelines for Dell Technologies’ own content. When such third-party content is updated by the relevant third parties, this document will be revised accordingly.

Resources

For more information:

- Contact your Dell or Intel® account team for a customized quote, at 1-877-ASK-DELL (1-877-275-3355).

- See the following documents:

Elastic Cloud on Kubernetes is now a Red Hat OpenShift Certified Operator