Expanding GPU Choice with Intel Data Center GPU Max Series

Fri, 12 Jan 2024 18:03:05 -0000

This is part two, read part one here: https://infohub.delltechnologies.com/p/llama-2-on-dell-poweredge-xe9640-with-intel-data-center-gpu-max-1550/

| MORE CHOICE IN THE GPU MARKET

We are delighted to showcase our collaboration with Intel® to expand the options available in the GPU market with the Intel® Data Center GPU Max Series, now available in the Dell™ PowerEdge™ XE9640 and 760xa. The Intel® Data Center GPU Max Series is Intel®'s highest-performing GPU, with more than 100 billion transistors, up to 128 Xe cores, and up to 128 GB of high-bandwidth memory. It pairs with both the Dell™ PowerEdge™ XE9640, the first liquid-cooled 4-way GPU platform in a 2U server from Dell™, and the Dell™ PowerEdge™ 760xa, offering a wide range of choice and scalability in performance.

Dell™ recently announced partnerships with both Meta and Hugging Face to enable seamless support for enterprises to select, deploy, and fine-tune AI models for industry specific use cases, anchored by Llama-2 from Meta. We paired Dell™ PowerEdge™ XE9640 & 760xa with the Intel® Data Center GPU Max Series and tested the performance of the Llama-2 7B Chat model by measuring both the rate of token generation and the number of concurrent users that can be supported while scaling up to four GPUs.

Dell™ PowerEdge™ Servers and the Intel® Data Center GPU Max Series showcased strong scalability and met target end user latency goals.

“Scalers AI™ ran Llama-2 7B Chat with Dell™ PowerEdge™ Servers, powered by the Intel® Data Center GPU Max Series with optimizations from Intel® that enabled us to meet the end user latency requirements for our enterprise AI chatbot.”

Chetan Gadgil, CTO at Scalers AI

| LLAMA-2 7B CHAT MODEL

Large Language Models (LLMs), such as OpenAI GPT-4 and Google PaLM, are powerful deep learning architectures that have been pre-trained on large datasets and can perform a variety of natural language processing (NLP) tasks including text classification, translation, and text generation. In this demonstration, we have chosen to test Llama-2 7B Chat because it is an open source model that can be leveraged for various commercial use cases.

For inference testing in LLMs such as Llama-2 7B Chat, powerful GPUs such as the Intel® Data Center GPU Max Series are incredibly useful due to their parallel processing architecture which can support massive parameter sets and efficiently handle expanding datasets.

| PART II

In part I of our blog series on Intel® Data Center GPU Max Series, we put Intel® Data Center GPU Max 1550 to the test by running Llama-2 7B Chat and optimizing using Hugging Face Optimum with an Intel® OpenVINO™ backend in an FP32 format.

In part II of our blog series, we will focus on both the Intel® Data Center GPU Max 1550 and 1100 and leverage the lower precision INT8 format for enhanced performance using Intel® OpenVINO™. We will also use a new toolkit, Intel® BigDL, through which we will be able to run Llama-2 7B Chat on Intel® Data Center Max GPUs in the INT4 format.
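To make the motivation for lower precision concrete, the following minimal sketch estimates the weight-only memory footprint of a 7-billion-parameter model in each format. It assumes a nominal 7e9 parameters and ignores activations, the KV cache, and any quantization metadata (scales, zero points), so real footprints will differ somewhat; the function and variable names are illustrative and not part of any Intel® toolkit.

```python
# Rough weight-only memory estimate for a model stored at different precisions.
# Assumption: a nominal 7e9 parameters; activations, KV cache and quantization
# metadata are ignored, so these are lower bounds rather than measured values.
BITS_PER_PARAM = {"FP32": 32, "INT8": 8, "INT4": 4}
NUM_PARAMS = 7e9


def weight_memory_gb(num_params: float, bits: int) -> float:
    """Return the weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits / 8 / 1e9


footprints = {
    name: weight_memory_gb(NUM_PARAMS, bits)
    for name, bits in BITS_PER_PARAM.items()
}

for name, gigabytes in footprints.items():
    print(f"{name}: ~{gigabytes:.1f} GB of weights")
```

Under these assumptions, each halving of the bit width halves the amount of weight data that must be held in and read from GPU memory, which is one reason INT8 and INT4 can raise token throughput.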

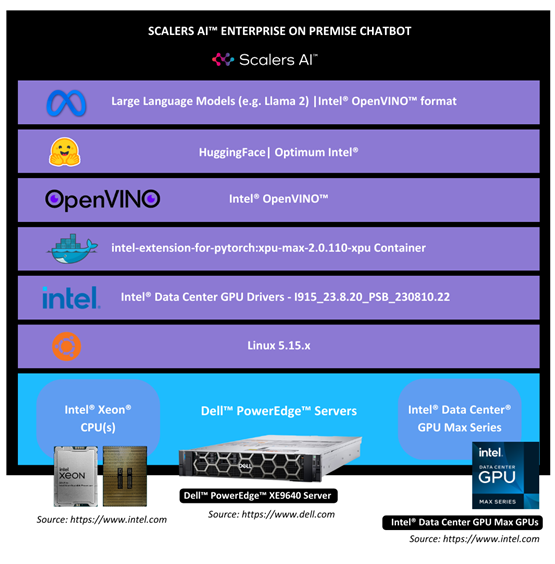

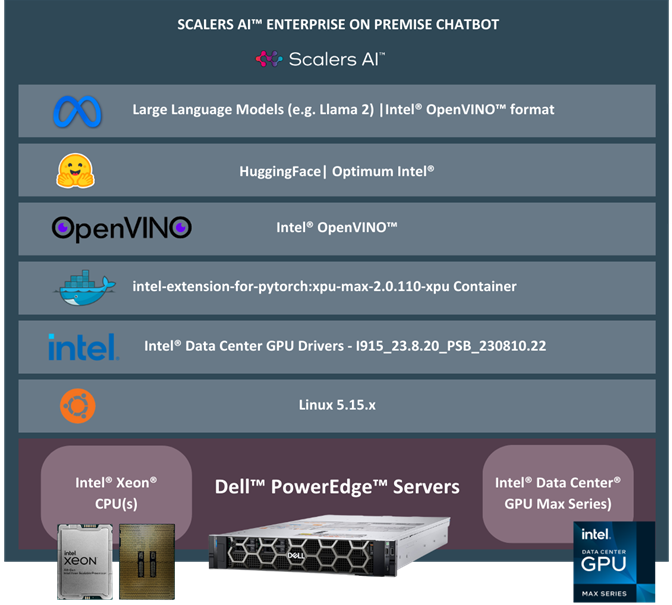

| ARCHITECTURES

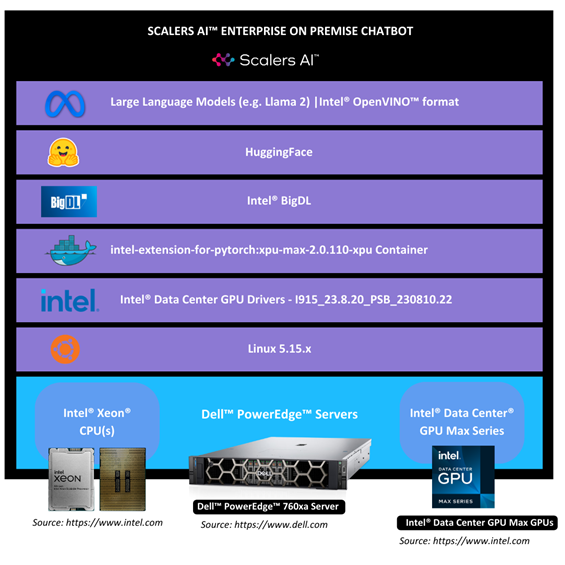

We initialized our testing environment with a Dell™ PowerEdge™ XE9640 server with four Intel® Data Center GPU Max 1550 GPUs, running Ubuntu 22.04. We paired the Intel® Data Center GPU Max 1100 GPUs with a Dell™ PowerEdge™ 760xa rack server.

To ensure maximum efficiency, we used Hugging Face Optimum, an extension of Hugging Face Transformers that provides a set of performance optimization tools to train and run models on targeted hardware. For the Intel® Data Center GPU Max Series, we selected the Optimum-Intel package, which integrates libraries provided by Intel® to accelerate end-to-end pipelines on Intel® hardware. Optimum-Intel allows you to convert your model to the Intel® OpenVINO™ IR format and attain enhanced performance using the Intel® OpenVINO™ runtime.

We also tested bigdl-llm, a library for running LLMs on Intel® hardware with support for PyTorch and lower precision formats. By using bigdl-llm, we are able to leverage INT4 precision on Llama-2 7B Chat.

The following architectures depict both scenarios:

1) Hugging Face Optimum

2) bigdl-llm

| SYSTEM SETUP

1. Installation of Drivers

To install drivers for the Intel® Data Center GPU Max Series, we followed the steps here.

2. Verification of Installation

To verify the installation of the drivers, we followed the steps here.

3. Installation of Docker

To install Docker on Ubuntu 22.04.3, we followed the steps here.

| RUNNING THE LLAMA-2 7B CHAT MODEL WITH OPTIMUM-INTEL

4. Set up a Docker container for all our dependencies to ensure seamless deployment and straightforward replication:

sudo docker run --rm -it --privileged --device=/dev/dri --ipc=host intel/intel-extension-for-pytorch:xpu-max-2.0.110-xpu bash

5. Install the Python dependencies that our Llama-2 7B Chat model requires:

pip install openvino==2023.2.0

pip install transformers==4.33.1

pip install optimum-intel==1.11.0

pip install onnx==1.15.0

6. Access the Llama-2 7B Chat model through HuggingFace:

huggingface-cli login

7. Convert the Llama-2 7B Chat Hugging Face model into the Intel® OpenVINO™ IR format with INT8 precision, using Optimum-Intel to export it:

from optimum.intel import OVModelForCausalLM

from transformers import AutoTokenizer

model_id = "meta-llama/Llama-2-7b-chat-hf"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = OVModelForCausalLM.from_pretrained(model_id, export=True, load_in_8bit=True)

model.save_pretrained("llama-2-7b-chat-ov")

tokenizer.save_pretrained("llama-2-7b-chat-ov")

8. Run the code snippet below to generate the text with the Llama-2 7B Chat model:

import time

from optimum.intel import OVModelForCausalLM

from transformers import AutoTokenizer, pipeline

model_name = "llama-2-7b-chat-ov"

input_text = "What are the key features of Intel's data center GPUs?"

max_new_tokens = 100

# Initialize and load tokenizer, model

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = OVModelForCausalLM.from_pretrained(model_name, ov_config= {"INFERENCE_PRECISION_HINT":"f32"}, compile=False)

model.to("GPU")

model.compile()

# Initialize HF pipeline

text_generator = pipeline( "text-generation", model=model, tokenizer=tokenizer, return_tensors=True, )

# Inference

start_time = time.time()

output = text_generator( input_text, max_new_tokens=max_new_tokens )

_ = tokenizer.decode(output[0]["generated_token_ids"])

end_time = time.time()

# Calculate number of tokens generated

num_tokens = len(output[0]["generated_token_ids"])

inference_time = end_time - start_time

token_per_sec = num_tokens / inference_time

print(f"Inference time: {inference_time} sec")

print(f"Token per sec: {token_per_sec}")

| RUNNING THE LLAMA-2 7B CHAT MODEL WITH BIGDL-LLM

1. Set up a Docker container for all our dependencies to ensure seamless deployment and straightforward replication:

sudo docker run --rm -it --privileged -u 0:0 --device=/dev/dri --ipc=host intelanalytics/bigdl-llm-xpu:2.5.0-SNAPSHOT bash

2. Access the Llama-2 7B Chat model through HuggingFace:

huggingface-cli login

3. Run the code snippet below to generate the text with the Llama-2 7B Chat model in INT4 precision:

import torch

import intel_extension_for_pytorch as ipex

import time

import argparse

from bigdl.llm.transformers import AutoModelForCausalLM

from transformers import LlamaTokenizer

model = AutoModelForCausalLM.from_pretrained("meta-llama/Llama-2-7b-chat-hf",

load_in_4bit=True,

optimize_model=True,

trust_remote_code=True,

use_cache=True)

model = model.to('xpu')

tokenizer = LlamaTokenizer.from_pretrained("meta-llama/Llama-2-7b-chat-hf", trust_remote_code=True)

with torch.inference_mode():

    # Move the encoded prompt to the same XPU device as the model

    input_ids = tokenizer.encode("What are the key features of Intel's data center GPUs?", return_tensors="pt").to('xpu')

    # ipex model needs a warmup, then inference time can be accurate

    output = model.generate(input_ids,

                            temperature=0.1,

                            max_new_tokens=100)

    # start inference

    start_time = time.time()

    output = model.generate(input_ids, max_new_tokens=100)

    end_time = time.time()

    # Copy the result to the CPU before converting it to NumPy

    num_tokens = len(output[0].detach().cpu().numpy().flatten())

inference_time = end_time - start_time

token_per_sec = num_tokens / inference_time

print(f"Inference time: {inference_time} sec")

print(f"Token per sec: {token_per_sec}")

| ENTER PROMPT

What are the key features of Intel® Data Center GPUs?

Output for Llama-2 OpenVINO INT8

Intel® Data Center GPUs are designed to provide high levels of performance and power efficiency for a wide range of applications, including machine learning, artificial intelligence, and high-performance computing. Some of the key features of Intel's data center GPUs include:

1. Many Cores: Intel's data center GPUs are designed with many cores, which allows them to handle large workloads and perform complex tasks quickly and efficiently.

2. High Memory Band

Output for Llama-2 BigDL INT4

Intel's data center GPUs are designed to provide high levels of performance, power efficiency, and scalability for demanding workloads such as artificial intelligence, machine learning, and high-performance computing. Some of the key features of Intel's data center GPUs include:

1. Architecture: Intel's data center GPUs are based on the company's own architecture, which is optimized for high-per

| PERFORMANCE RESULTS & ANALYSIS

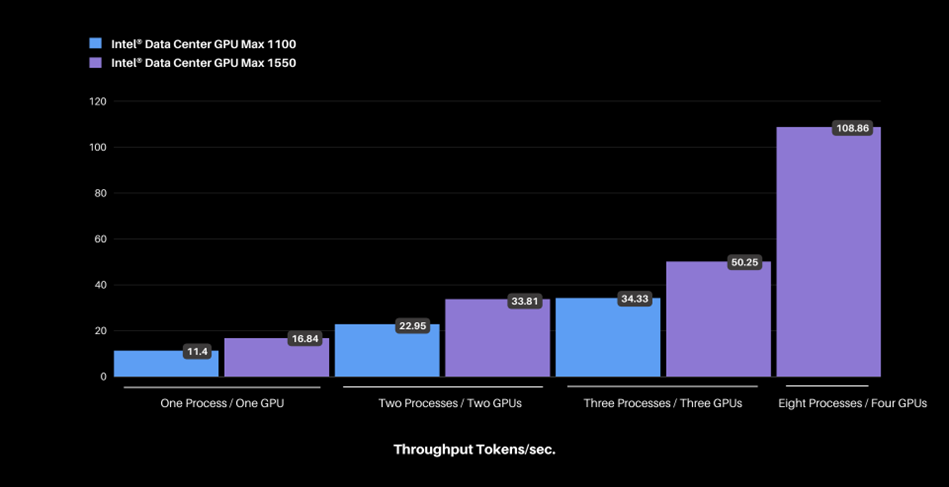

Llama-2 Intel® OpenVINO™ INT8 (Hugging Face Backend Intel® OpenVINO™)

Figure: Scaling Intel® Data Center GPU Max 1100 & 1550 and increasing concurrent processes measured in total throughput in tokens per second.

Using a machine with a single GPU and a single process, we achieved a throughput of ~11 tokens per second on the Intel® Data Center GPU Max 1100, which increased to ~109 tokens per second when scaling up to four Intel® Data Center GPU Max 1550 GPUs and eight processes. The latency per process remains well below the Scalers AI™ target of 100 milliseconds despite the increase in the number of concurrent processes.

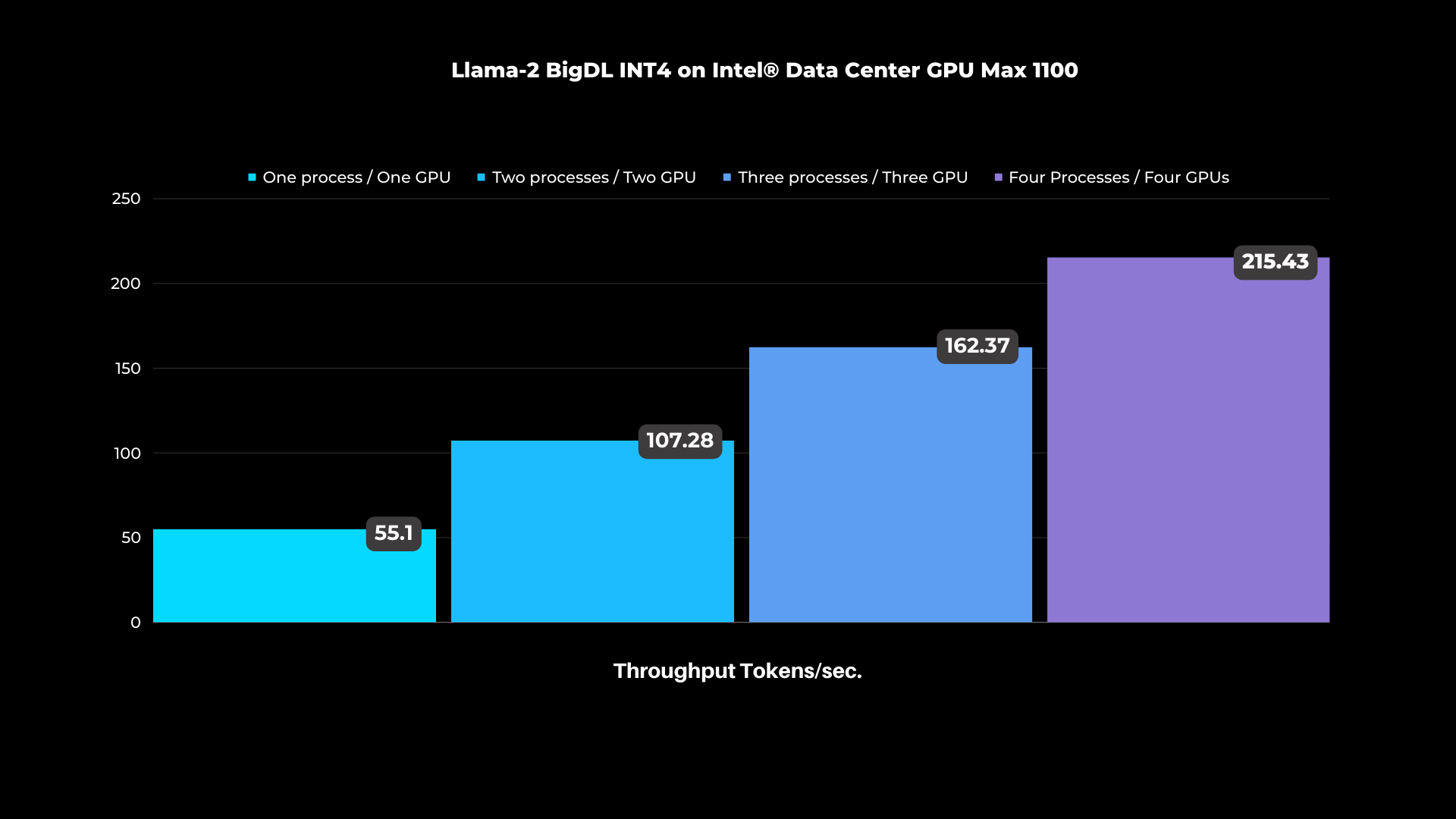

Llama-2 BigDL INT4 on Intel® Data Center GPU Max 1100

Figure: Scaling Intel® Data Center Max GPUs from one to four GPUs and increasing concurrent processes measured in total throughput in tokens per second.

Using a machine with a single GPU and a single process, we achieved a throughput of ~55 tokens per second on the Intel® Data Center GPU Max 1100, which increased to ~215 tokens per second when scaling up to four Intel® Data Center GPU Max 1100 GPUs and four processes.
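One way to read these two figures is as a scaling efficiency: the measured four-GPU throughput divided by four times the single-GPU throughput. The sketch below applies that definition to the approximate values quoted above; the helper name is illustrative, and the result is only as precise as the rounded inputs.

```python
def scaling_efficiency(base_tps: float, scaled_tps: float, scale_factor: int) -> float:
    """Ratio of measured throughput to ideal linear scaling (1.0 = perfectly linear)."""
    return scaled_tps / (base_tps * scale_factor)


# Approximate values quoted in the text for BigDL INT4 on the GPU Max 1100.
single_gpu_tps = 55.0   # one GPU, one process
four_gpu_tps = 215.0    # four GPUs, four processes

efficiency = scaling_efficiency(single_gpu_tps, four_gpu_tps, scale_factor=4)
print(f"Scaling efficiency from 1 to 4 GPUs: {efficiency:.1%}")
```

With these rounded inputs the ratio comes out just under 1, meaning throughput grows almost linearly with the number of GPUs.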

Our results demonstrate that Dell™ PowerEdge™ Servers with Intel® Data Center GPU Max Series are up to the task of running Llama-2 7B Chat and meeting end user experience targets.

*Performance varies by use case, model, application, hardware & software configurations, the quality of the resolution of the input data, and other factors. This performance testing is intended for informational purposes and not intended to be a guarantee of actual performance of an AI application.

| ABOUT SCALERS AI™

Scalers AI™ specializes in creating end-to-end artificial intelligence (AI) solutions to fast track industry transformation across a wide range of industries, including retail, smart cities, manufacturing, insurance, finance, legal and healthcare. Scalers AI™ industry offerings include custom large language models and multimodal platforms supporting voice, image, and text. As a full stack AI solutions company with solutions ranging from the cloud to the edge, our customers often need versatile common off the shelf (COTS) hardware that works well across a range of workloads.

| Dell™ PowerEdge™ XE9640 & 760xa Servers Key Specifications

MACHINE | Dell™ PowerEdge™ XE9640 Server |

Operating system | Ubuntu 22.04.3 LTS |

CPU | Intel® Xeon® Platinum 8468 |

MEMORY | 512Gi |

GPU | Intel® Data Center GPU Max 1550 |

GPU COUNT | 4 |

MACHINE | Dell™ PowerEdge™ 760xa Server |

Operating system | Ubuntu 22.04.3 LTS |

CPU | Intel® Xeon® Platinum 8480+ |

MEMORY | 1024Gi |

GPU | Intel® Data Center GPU Max 1100 |

GPU COUNT | 4 |

| HUGGING FACE OPTIMUM & INTEL® BIGDL

Learn more: https://huggingface.co, https://github.com/intel-analytics/BigDL

| TEST METHODOLOGY

The Llama-2 7B Chat INT8 model is exported into the Intel® OpenVINO™ format and then tested for text generation (inference) using Hugging Face Optimum. Hugging Face Optimum is an extension of Hugging Face Transformers and Diffusers that provides tools to export and run optimized models on various ecosystems, including Intel® OpenVINO™. We also tested bigdl-llm, a library for running large language models on Intel® hardware that supports PyTorch and offers lower precision formats. Using bigdl-llm, we are able to leverage INT4 precision on Llama-2 7B Chat.

For performance tests, 20 iterations were executed for each inference scenario. The first five iterations were treated as warm-up and discarded when calculating inference time (in seconds) and tokens per second. The time collected includes the tokenizer's encode and decode time as well as the LLM inference time.
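The warm-up handling described above can be sketched as follows. This is a minimal illustration, not the harness used for the published results: the function name is hypothetical, the sample timings are synthetic, and averaging the per-iteration tokens per second (rather than dividing total tokens by total time) is an assumption.

```python
from statistics import mean


def summarize_runs(inference_times, token_counts, warmup=5):
    """Discard the first `warmup` iterations and average the remainder."""
    if len(inference_times) != len(token_counts):
        raise ValueError("inference_times and token_counts must have equal length")
    if len(inference_times) <= warmup:
        raise ValueError("need more iterations than warm-up runs")
    times = inference_times[warmup:]
    tokens = token_counts[warmup:]
    # Assumption: tokens per second is computed per iteration, then averaged.
    tokens_per_sec = [n / t for n, t in zip(tokens, times)]
    return {
        "measured_runs": len(times),
        "mean_inference_time_s": mean(times),
        "mean_tokens_per_sec": mean(tokens_per_sec),
    }


# Synthetic data: five slower warm-up runs followed by 15 steady-state runs.
sample_times = [12.0] * 5 + [10.0] * 15
sample_tokens = [100] * 20
summary = summarize_runs(sample_times, sample_tokens)
print(summary)
```

Discarding the first runs keeps one-time costs, such as model compilation and cache population, out of the reported averages.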

Related Documents

Llama-2 on Dell PowerEdge XE9640 with Intel Data Center GPU Max 1550

Fri, 12 Jan 2024 18:04:24 -0000

Part two is now available: https://infohub.delltechnologies.com/p/expanding-gpu-choice-with-intel-data-center-gpu-max-series/

| MORE CHOICE IN THE GPU MARKET

We are delighted to showcase our collaboration with Intel® to introduce expanded options within the GPU market with the Intel® Data Center GPU Max Series, now accessible via Dell™ PowerEdge™ XE9640.

The Intel® Data Center GPU Max Series is Intel®'s highest-performing GPU, with more than 100 billion transistors, up to 128 Xe cores, and up to 128 GB of high-bandwidth memory. The Intel® Data Center GPU Max Series pairs with the Dell™ PowerEdge™ XE9640, Dell™'s first liquid-cooled 4-way GPU platform in a 2U server.

Dell™ recently announced partnerships with both Meta and Hugging Face to enable seamless support for enterprises to select, deploy, and fine-tune AI models for industry specific use cases anchored by Llama 2 7B Chat from Meta.

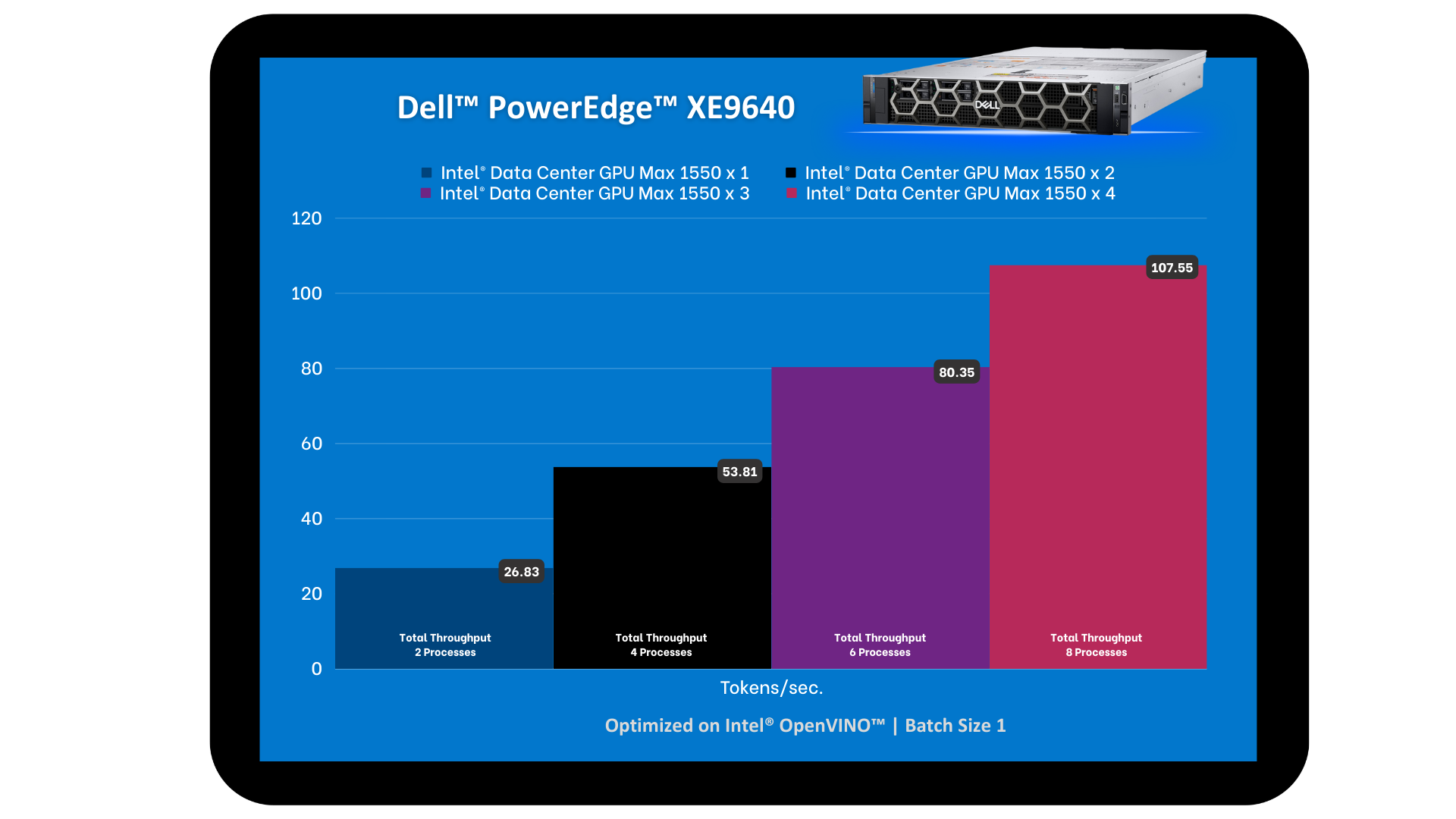

We put the Dell™ PowerEdge™ XE9640 and the Intel® Data Center GPU Max Series to the test with the Llama-2 7B Chat model, measuring the tokens generated per second and the number of concurrent users that can be supported while scaling up to four GPUs. The Dell™ PowerEdge™ XE9640 and the Intel® Data Center GPU Max Series showcased strong scalability and met target end user latency goals.

“Scalers AI™ ran eight concurrent processes of Llama-2 7B Chat with Dell™ PowerEdge™ XE9640 and Intel® Data Center GPU Max Series for a total throughput of >107 tokens per second, achieving our end user token latency target of 100 milliseconds”

Chetan Gadgil, CTO at Scalers AI

| LLAMA-2 7B CHAT MODEL

Large Language Models (LLMs), such as the models behind OpenAI ChatGPT, are powerful deep learning architectures that have been pre-trained on large datasets. We have chosen to test Llama-2 7B Chat because it is an open source model that can be leveraged for commercial use cases, such as coding, functional tasks, and even creative tasks.

For inference testing in Large Language Models such as Llama-2 7B Chat, GPUs are incredibly useful due to their parallel processing architecture which can handle Llama-2's massive parameter sets. To efficiently handle expanding datasets, powerful GPUs such as Intel® Data Center GPU Max 1550 are critical.

| ARCHITECTURE

We set up our testing environment on a Dell™ PowerEdge™ XE9640 with four Intel® Data Center GPU Max 1550 GPUs, running Ubuntu 22.04.

We used Hugging Face Optimum, an extension of Transformers that provides a set of performance optimization tools to train and run models on targeted hardware, ensuring maximum efficiency. For the Intel® Data Center GPU Max 1550, we selected the Optimum-Intel package. Optimum-Intel integrates libraries provided by Intel® to accelerate end-to-end pipelines on Intel® hardware. With Optimum-Intel, you can convert your model to the Intel® OpenVINO™ IR format and attain enhanced performance using the Intel® OpenVINO™ runtime.

Figure: Dell™ PowerEdge™ XE9640 (source: https://www.dell.com/) and Intel® Data Center GPU Max 1550 (source: https://www.intel.com)

| SYSTEM SETUP

1. Installation of Drivers

To install drivers for the Intel® Data Center GPU Max Series, we followed the steps here.

2. Verification of Installation

To verify the installation of the drivers, we followed the steps here.

3. Installation of Docker

To install Docker on Ubuntu 22.04.3, we followed the steps here.

| RUNNING THE LLAMA-2 7B CHAT MODEL

1. Set up a Docker container for all our dependencies to ensure seamless deployment and straightforward replication:

sudo docker run --rm -it --privileged --device=/dev/dri --ipc=host intel/intel-extension-for-pytorch:xpu-max-2.0.110-xpu bash

2. Install the Python dependencies that our Llama-2 7B Chat model requires:

pip install openvino==2023.2.0

pip install transformers==4.33.1

pip install optimum-intel==1.11.0

pip install onnx==1.15.0

3. Access the Llama-2 7B Chat model through HuggingFace:

huggingface-cli login

4. Convert the Llama-2 7B Chat Hugging Face model into the Intel® OpenVINO™ IR format, using Optimum-Intel to export it:

from optimum.intel import OVModelForCausalLM

from transformers import AutoTokenizer

model_id = "meta-llama/Llama-2-7b-chat-hf"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = OVModelForCausalLM.from_pretrained(model_id, export=True)

model.save_pretrained("llama-2-7b-chat-ov")

tokenizer.save_pretrained("llama-2-7b-chat-ov")

5. Run the code snippet below to generate the text with the Llama-2 7B Chat model:

import time

from optimum.intel import OVModelForCausalLM

from transformers import AutoTokenizer, pipeline

model_name = "llama-2-7b-chat-ov"

input_text = "What are the key features of Intel's data center GPUs?"

max_new_tokens = 100

# Initialize and load tokenizer, model

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = OVModelForCausalLM.from_pretrained(model_name, ov_config= {"INFERENCE_PRECISION_HINT":"f32"}, compile=False)

model.to("GPU")

model.compile()

# Initialize HF pipeline

text_generator = pipeline( "text-generation", model=model, tokenizer=tokenizer, return_tensors=True, )

# Inference

start_time = time.time()

output = text_generator( input_text, max_new_tokens=max_new_tokens )

_ = tokenizer.decode(output[0]["generated_token_ids"])

end_time = time.time()

# Calculate number of tokens generated

num_tokens = len(output[0]["generated_token_ids"])

inference_time = end_time - start_time

token_per_sec = num_tokens / inference_time

print(f"Inference time: {inference_time} sec")

print(f"Token per sec: {token_per_sec}")

| ENTER PROMPT

What are the key features of Intel® Data Center GPUs?

Output

Intel® Data Center GPUs are designed to provide high levels of performance and power efficiency for a wide range of applications including machine learning, artificial intelligence and high performance computing.

Some of the key features of Intel® Data Center GPUs include:

1. High performance Intel® Data Center GPUs are designed to provide high levels of performance for demanding workloads, such as deep learning and scientific simulations.

2. Power efficiency.

| PERFORMANCE RESULTS & ANALYSIS

Figure: Comparing GPU vs CPU Performance

While evaluating the GPU configurations, we observed that a machine with a single GPU achieved ~13 tokens per second per process across two concurrent processes, for a total throughput of ~27 tokens per second. With two GPUs, we noted ~13 tokens per second per process across four concurrent processes, for a total throughput of ~54 tokens per second. With four GPUs, we observed a total throughput of ~107 tokens per second while supporting eight concurrent processes. The latency per process remains well below the Scalers AI™ target of 100 milliseconds despite the increase in the number of concurrent processes.

As latency represents the time a user must wait before task completion, it is a critical metric for hardware selection on large language models. This evaluation underscores the significant impact of GPU parallelism on both throughput and user response time. The scalability from one GPU to four GPUs reflects a significant enhancement in computational power, enabling more concurrent processes at nearly the same latency.

Our results demonstrate that Dell™ PowerEdge™ XE9640 with four Intel® Data Center GPU Max 1550 is up to the task of running Llama-2 7B Chat and meeting end user experience targets.

Number of GPUs | Throughput (tokens/second) | Number of processes | Token latency (ms) |

1 | 26.83 | 2 | 74.55 |

2 | 53.81 | 4 | 74.34 |

3 | 80.35 | 6 | 74.68 |

4 | 107.55 | 8 | 74.38 |

Table: Results for different numbers of GPUs
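The columns of the table appear to be linked by a simple relationship: if each process emits one token every `latency` milliseconds, the total throughput is the number of processes multiplied by 1000 / latency. The sketch below checks that reading against the reported rows. Treating token latency as the per-process time per token is an assumption made here, not something the table states.

```python
# Rows copied from the table: (GPUs, reported tokens/s, processes, token latency in ms).
ROWS = [
    (1, 26.83, 2, 74.55),
    (2, 53.81, 4, 74.34),
    (3, 80.35, 6, 74.68),
    (4, 107.55, 8, 74.38),
]
TARGET_LATENCY_MS = 100.0  # end user token latency target quoted in the text


def throughput_from_latency(processes: int, latency_ms: float) -> float:
    """Total tokens/s if every process emits one token per `latency_ms`."""
    return processes * 1000.0 / latency_ms


derived = []
for gpus, reported_tps, processes, latency_ms in ROWS:
    estimate = throughput_from_latency(processes, latency_ms)
    derived.append(estimate)
    meets_target = latency_ms < TARGET_LATENCY_MS
    print(f"{gpus} GPU(s): reported {reported_tps:.2f}, derived {estimate:.2f} tokens/s, "
          f"meets {TARGET_LATENCY_MS:.0f} ms target: {meets_target}")
```

The derived values agree with the reported throughput to within rounding, which is consistent with latency staying flat while throughput grows with the number of processes.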

*Performance varies by use case, model, application, hardware & software configurations, the quality of the resolution of the input data, and other factors. This performance testing is intended for informational purposes and not intended to be a guarantee of actual performance of an AI application.

| ABOUT SCALERS AI™

Scalers AI™ specializes in creating end-to-end artificial intelligence (AI) solutions to fast track industry transformation across a wide range of industries, including retail, smart cities, manufacturing, insurance, finance, legal and healthcare. Scalers AI™ industry offerings include custom large language models and multimodal platforms supporting voice, image, and text. As a full stack AI solutions company with solutions ranging from the cloud to the edge, our customers often need versatile common off the shelf (COTS) hardware that works well across a range of workloads.

| Dell™ PowerEdge™ XE9640 Key specifications

MACHINE | Dell™ PowerEdge™ XE9640 |

Operating system | Ubuntu 22.04.3 LTS |

CPU | Intel® Xeon® Platinum 8468 |

MEMORY | 512Gi |

GPU | Intel® Data Center GPU Max 1550 |

GPU COUNT | 4 |

SOFTWARE STACK | Intel® OpenVINO™ 2023.2.0, transformers 4.33.1, optimum-intel 1.11.0, xpu-smi 1.2.22.20231025 |

| HUGGING FACE OPTIMUM

Learn more: https://huggingface.co

| TEST METHODOLOGY

The Llama-2 7B Chat FP32 model is exported into the Intel® OpenVINO™ format and then tested for text generation (inference) using Hugging Face Optimum. Hugging Face Optimum is an extension of Hugging Face transformers and Diffusers that provides tools to export and run optimized models on various ecosystems including Intel® OpenVINO™.

For performance tests, 20 iterations were executed for each inference scenario. The first five iterations were treated as warm-up and discarded when calculating inference time (in seconds) and tokens per second. The time collected includes the tokenizer's encode and decode time as well as the LLM inference time.

Read part two: https://infohub.delltechnologies.com/p/expanding-gpu-choice-with-intel-data-center-gpu-max-series/

Testing LLAMA-2 models on Dell PowerEdge R760xa with 5th Gen Intel Xeon Processors

Tue, 13 Feb 2024 04:08:19 -0000

This is part three, read part two here: https://infohub.delltechnologies.com/p/expanding-gpu-choice-with-intel-data-center-gpu-max-series/

| NEXT GENERATION OF INTEL® XEON® PROCESSORS

We are excited to showcase our collaboration with Intel® as we explore the capabilities of 5th Gen Intel® Xeon® Processors, now accessible via Dell™ PowerEdge™ R760xa, offering versatile AI solutions that can be deployed onto general purpose servers as an alternative to specialized hardware “accelerator” based implementations. Built upon the advancements of its predecessors, the latest generation of Intel® Xeon® Processors introduces advancements designed to provide customers with enhanced performance and efficiency. 5th Gen Intel® Xeon® Processors are engineered to seamlessly handle demanding AI workloads, including inference and fine-tuning on models containing up to 20 billion parameters, without an immediate need for additional hardware. Furthermore, the compatibility with 4th Gen Intel® Xeon® processors facilitates a smooth upgrade process for existing solutions, minimizing the need for extensive testing and validation.

Integrating 5th Gen Intel® Xeon® Processors with the Dell™ PowerEdge™ R760xa provides a wide range of options and scalability in performance.

Dell™ has recently established a strategic partnership with Meta and Hugging Face to facilitate the seamless integration of enterprise-level support for the selection, deployment, and fine-tuning of AI models tailored to industry-specific use cases, leveraging the Llama-2 7B Chat Model from Meta.

In a prior analysis, we integrated Dell™ PowerEdge™ R760xa with 4th Gen Intel® Xeon® Processors and tested the performance of the Llama-2 7B Chat model by measuring both the rate of token generation and the number of concurrent users that can be supported while scaling up to four accelerators. In this demonstration, we explore the advancements offered by 5th Gen Intel® Xeon® Processors paired with Dell™ PowerEdge™ R760xa, while focusing on the same task.

Dell™ PowerEdge™ Servers featuring 5th Gen Intel® Xeon® Processors demonstrated strong scalability and successfully achieved the targeted end user latency goals.

“Scalers AI™ ran Llama-2 7B Chat with Dell™ PowerEdge™ R760xa Server, powered by 5th Gen Intel® Xeon® Processors, enabling us to meet end user latency requirements for our enterprise AI chatbot.”

Chetan Gadgil, CTO at Scalers AI

| LLAMA-2 7B CHAT MODEL

For this demonstration, we have chosen to work with Llama-2 7B Chat, an open source large language model from Meta capable of generating text and code in response to given prompts. As a part of the Llama-2 family of large language models, Llama-2 7B Chat is pre-trained on 2 trillion tokens of data from publicly available sources and additionally fine-tuned on public instruction datasets and more than a million human annotations. This particular model is optimized for dialogue use cases, making it ideal for applications such as chatbots or virtual assistants that need to engage in conversational interactions.

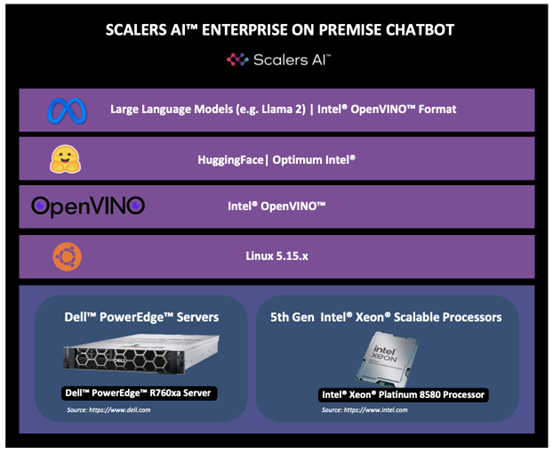

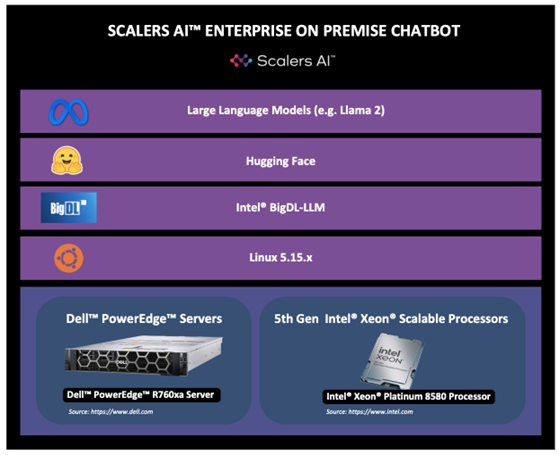

| ARCHITECTURES

We initialized our testing environment using a Dell™ PowerEdge™ R760xa rack server featuring 5th Gen Intel® Xeon® Processors and running Ubuntu 22.04. To ensure maximum efficiency, we used Hugging Face Optimum, an extension of Hugging Face Transformers that provides a set of performance optimization tools to train and run models on the targeted hardware. We specifically selected the Optimum-Intel package, the interface between the Hugging Face Transformers and Diffusers libraries and the tools and libraries provided by Intel® to accelerate end-to-end pipelines on Intel® architectures.

We also tested 5th Gen Intel® Xeon® Processors with bigdl-llm, a library for running LLMs on Intel® hardware with support for PyTorch and lower precision formats. By using bigdl-llm, we are able to leverage INT4 precision on Llama-2 7B Chat.

The following architectures depict both scenarios:

1) Hugging Face Optimum

2) BigDL-LLM

| TEST METHODOLOGY

For each iteration of our performance tests, we gave Llama-2 the following prompt: “Discuss the history and evolution of artificial intelligence in 80 words or less.” We then collected the response and recorded the total inference time in seconds and the tokens per second. We executed 25 iterations for each inference scenario (Hugging Face Optimum vs BigDL-LLM). The first five iterations were treated as warm-ups and discarded when calculating total inference time and tokens per second. Here, the total time collected includes both the tokenizer's encode and decode time and the LLM inference time.

We also scaled the number of processes from one to four to observe how total latency and tokens per second change as the number of concurrent processes is increased. In the hypothetical scenario of an enterprise chatbot, this analysis simulates engaging several different users having separate conversations with the chatbot at the same time, during which the chatbot should still deliver responses to each user in a reasonable amount of time. The total number of tests comes from running each inference scenario with a varying number of processes (1, 2, or 4 processes) and recording the performance (measured in throughput) of different model precision formats.
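The resulting test matrix can be enumerated with a few lines of Python. In this sketch, the pairing of each scenario with a single precision (INT8 for Optimum-Intel, INT4 for BigDL-LLM) follows the walkthroughs in this post and is an assumption about the full test plan, as is applying the 25-iteration count to every configuration; the variable names are illustrative.

```python
from itertools import product

# Assumption: one precision per scenario, matching the walkthroughs in this post.
SCENARIOS = {"Optimum-Intel": "INT8", "BigDL-LLM": "INT4"}
PROCESS_COUNTS = (1, 2, 4)
ITERATIONS = 25  # iterations executed per configuration (assumed per configuration)
WARMUP = 5       # leading iterations discarded as warm-up

test_matrix = [
    {"scenario": scenario, "precision": SCENARIOS[scenario], "processes": processes}
    for scenario, processes in product(SCENARIOS, PROCESS_COUNTS)
]
measured_per_config = ITERATIONS - WARMUP

for config in test_matrix:
    print(f"{config['scenario']} ({config['precision']}), "
          f"{config['processes']} process(es): {measured_per_config} measured iterations")
```

Laying the matrix out explicitly makes it easy to confirm that every combination of scenario and process count was covered before comparing throughput.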

| RUNNING THE LLAMA-2 7B CHAT MODEL WITH OPTIMUM-INTEL

1. Install the Python dependencies:

openvino==2023.2.0

transformers==4.36.2

optimum-intel==1.12.3

optimum==1.16.1

onnx==1.15.0

2. Request access to Llama-2 model through Hugging Face by following the instructions here:

https://huggingface.co/meta-llama/Llama-2-7b-chat-hf

Use the following command to login to your Hugging Face account:

huggingface-cli login

3. Convert the Llama-2 7B Chat HuggingFace model into Intel® OpenVINO™ IR with INT8 precision format using Optimum-Intel to export it:

from optimum.intel import OVModelForCausalLM

from transformers import AutoTokenizer

model_id = "meta-llama/Llama-2-7b-chat-hf"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = OVModelForCausalLM.from_pretrained(model_id, export=True, load_in_8bit=True)

model.save_pretrained("llama-2-7b-chat-ov")

tokenizer.save_pretrained("llama-2-7b-chat-ov")

4. Run the code snippet below to generate the text with the Llama-2 7B Chat model:

import time

from optimum.intel import OVModelForCausalLM

from transformers import AutoTokenizer, pipeline

model_name = "llama-2-7b-chat-ov"

input_text = "Discuss the history and evolution of artificial intelligence"

max_new_tokens = 100

# Initialize and load tokenizer, model

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = OVModelForCausalLM.from_pretrained(model_name, ov_config= {"INFERENCE_PRECISION_HINT":"f32"}, compile=False)

model.compile()

# Initialize HF pipeline

text_generator = pipeline( "text-generation", model=model, tokenizer=tokenizer, return_tensors=True, )

# Warmup

output = text_generator( input_text, max_new_tokens=max_new_tokens )

# Inference

start_time = time.time()

output = text_generator( input_text, max_new_tokens=max_new_tokens )

_ = tokenizer.decode(output[0]["generated_token_ids"])

end_time = time.time()

# Calculate number of tokens generated

num_tokens = len(output[0]["generated_token_ids"])

inference_time = end_time - start_time

token_per_sec = num_tokens / inference_time

print(f"Inference time: {inference_time} sec")

print(f"Token per sec: {token_per_sec}")

| RUNNING THE LLAMA-2 7B CHAT MODEL WITH BIGDL-LLM

1. Install the Python dependencies that our Llama-2 7B Chat model requires:

pip install bigdl-llm[all]==2.4.0

2. Request access to Llama-2 model through Hugging Face by following the instructions here:

https://huggingface.co/meta-llama/Llama-2-7b-chat-hf

Use the following command to login to your Hugging Face account:

huggingface-cli login

3. Run the code snippet below to generate the text with the Llama-2 7B Chat model in INT4 precision:

import torch

import intel_extension_for_pytorch as ipex

import time

import argparse

from bigdl.llm.transformers import AutoModelForCausalLM

from transformers import LlamaTokenizer

model = AutoModelForCausalLM.from_pretrained("meta-llama/Llama-2-7b-chat-hf", load_in_4bit=True, optimize_model=True, trust_remote_code=True, use_cache=True)

tokenizer = LlamaTokenizer.from_pretrained("meta-llama/Llama-2-7b-chat-hf", trust_remote_code=True)

with torch.inference_mode():

    input_ids = tokenizer.encode("Discuss the history and evolution of artificial intelligence", return_tensors="pt")

    # ipex model needs a warmup, then inference time can be accurate

    output = model.generate(input_ids, max_new_tokens=100)

    # start inference

    start_time = time.time()

    output = model.generate(input_ids, max_new_tokens=100)

    end_time = time.time()

num_tokens = len(output[0].detach().numpy().flatten())

inference_time = end_time - start_time

token_per_sec = num_tokens / inference_time

print(f"Inference time: {inference_time} sec")

print(f"Token per sec: {token_per_sec}")
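For decoder-only models such as Llama-2, Hugging Face `generate()` returns the prompt tokens followed by the newly generated ones, so `len(output[0])` counts both. A minimal sketch of counting only the new tokens follows, using plain Python lists as hypothetical stand-ins for the tensors above. `count_new_tokens` is an illustrative name and is not part of BigDL-LLM, and the token IDs are made up.

```python
def count_new_tokens(prompt_ids, output_ids):
    """Return how many tokens were generated beyond the prompt.

    Assumes output_ids starts with the prompt tokens, as is the case
    for decoder-only models.
    """
    if output_ids[:len(prompt_ids)] != prompt_ids:
        raise ValueError("output does not start with the prompt tokens")
    return len(output_ids) - len(prompt_ids)


# Hypothetical token IDs: a 4-token prompt followed by 3 generated tokens.
prompt_ids = [1, 3639, 278, 4955]
output_ids = prompt_ids + [310, 23116, 21082]

new_tokens = count_new_tokens(prompt_ids, output_ids)
print(f"Prompt tokens: {len(prompt_ids)}, new tokens: {new_tokens}")
```

With the tensors in the snippet above, the equivalent expression would be `output.shape[-1] - input_ids.shape[-1]`.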

| ENTER PROMPT

Discuss the history and evolution of artificial intelligence in 80 words or less.

Output for Llama-2 7B Chat - Using Optimum-Intel:

Artificial intelligence (AI) has a long history dating back to the 1950s when computer scientist Alan Turing proposed the Turing Test to measure machine intelligence. Since then, AI has evolved through various stages, including rule-based systems, machine learning, and deep learning, leading to the development of intelligent systems capable of performing tasks that typically require human intelligence, such as visual recognition, natural language processing, and decision-making.

Output for Llama-2 7B Chat - Using BigDL-LLM:

Artificial intelligence (AI) has a long history dating back to the mid-20th century. The term AI was coined in 1956, and since then, AI has evolved significantly with advancements in computer power, data storage, and machine learning algorithms. Today, AI is being applied to various industries such as healthcare, finance, and transportation, leading to increased efficiency and productivity.

| PERFORMANCE RESULTS & ANALYSIS

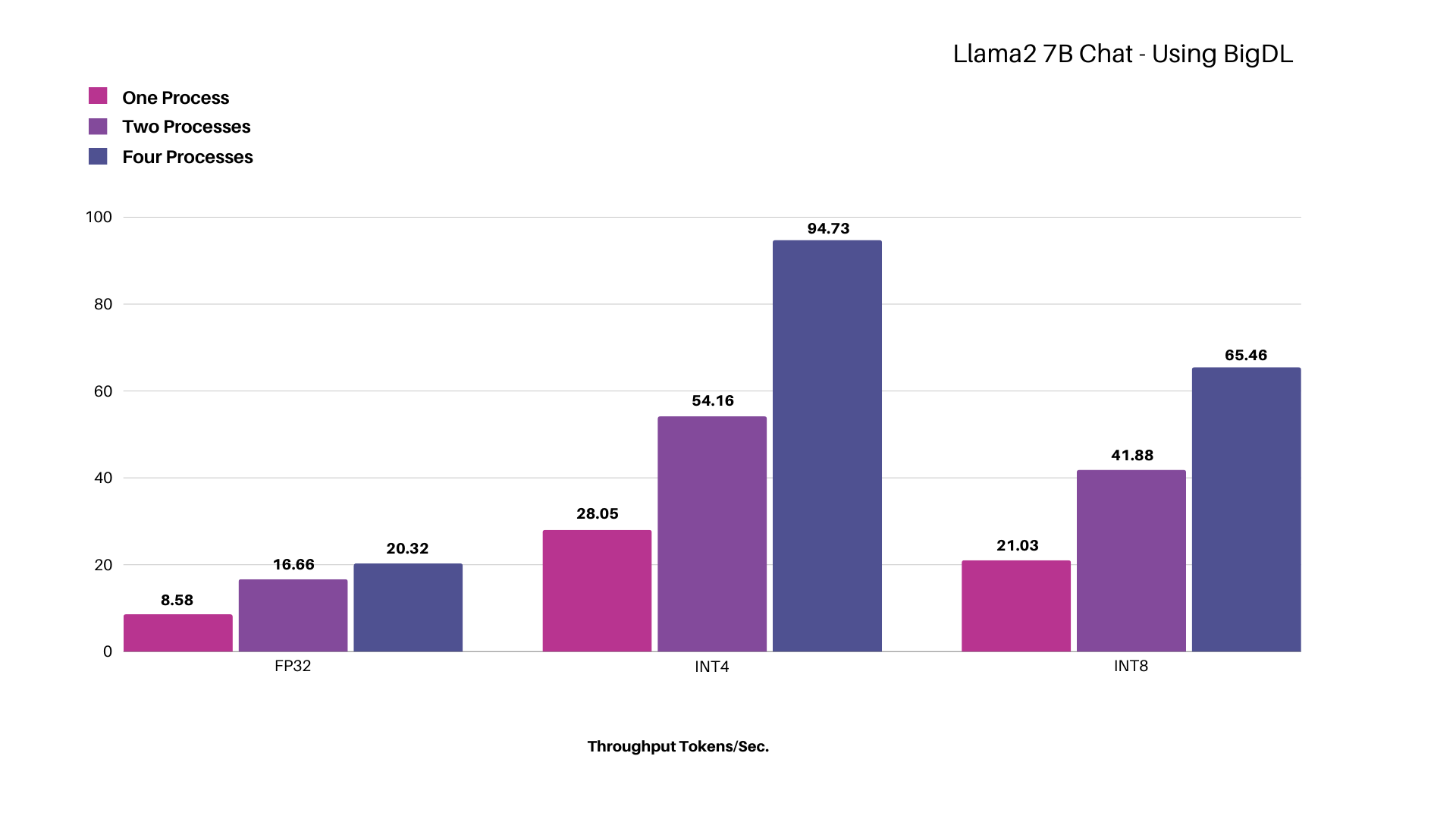

Testing Llama-2 7B Chat - Using BigDL-LLM

Figure: Total throughput, in tokens per second, on the Intel® Xeon® Platinum 8580 Processor for different model precisions as the number of concurrent processes scales up to four.

Using the Llama-2 7B Chat model with INT4 precision, we achieved a throughput of ~28 tokens per second with a single process, which increased to ~95 tokens per second when scaling up to four processes.
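To put these figures in context, the short sketch below derives the speedup, the scaling efficiency, and an approximate per-process token latency from the rounded INT4 numbers quoted above. `scaling_summary` is a hypothetical helper, and the latency estimate assumes that total throughput is shared evenly across processes, which may differ from how latency was measured in the original tests.

```python
def scaling_summary(single_tps, total_tps, num_processes):
    """Derive scaling metrics from throughput figures (tokens per second)."""
    speedup = total_tps / single_tps             # gain over a single process
    efficiency = speedup / num_processes         # 1.0 would be perfect linear scaling
    per_process_tps = total_tps / num_processes  # assumes an even split across processes
    ms_per_token = 1000.0 / per_process_tps      # approximate latency per token, per process
    return speedup, efficiency, ms_per_token


# Approximate INT4 figures from the text: ~28 tokens/s (1 process), ~95 tokens/s (4 processes).
speedup, efficiency, ms_per_token = scaling_summary(28, 95, 4)

print(f"Speedup: {speedup:.2f}x")                         # Speedup: 3.39x
print(f"Scaling efficiency: {efficiency:.0%}")            # Scaling efficiency: 85%
print(f"Approx. latency per token: {ms_per_token:.1f} ms")  # Approx. latency per token: 42.1 ms
```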

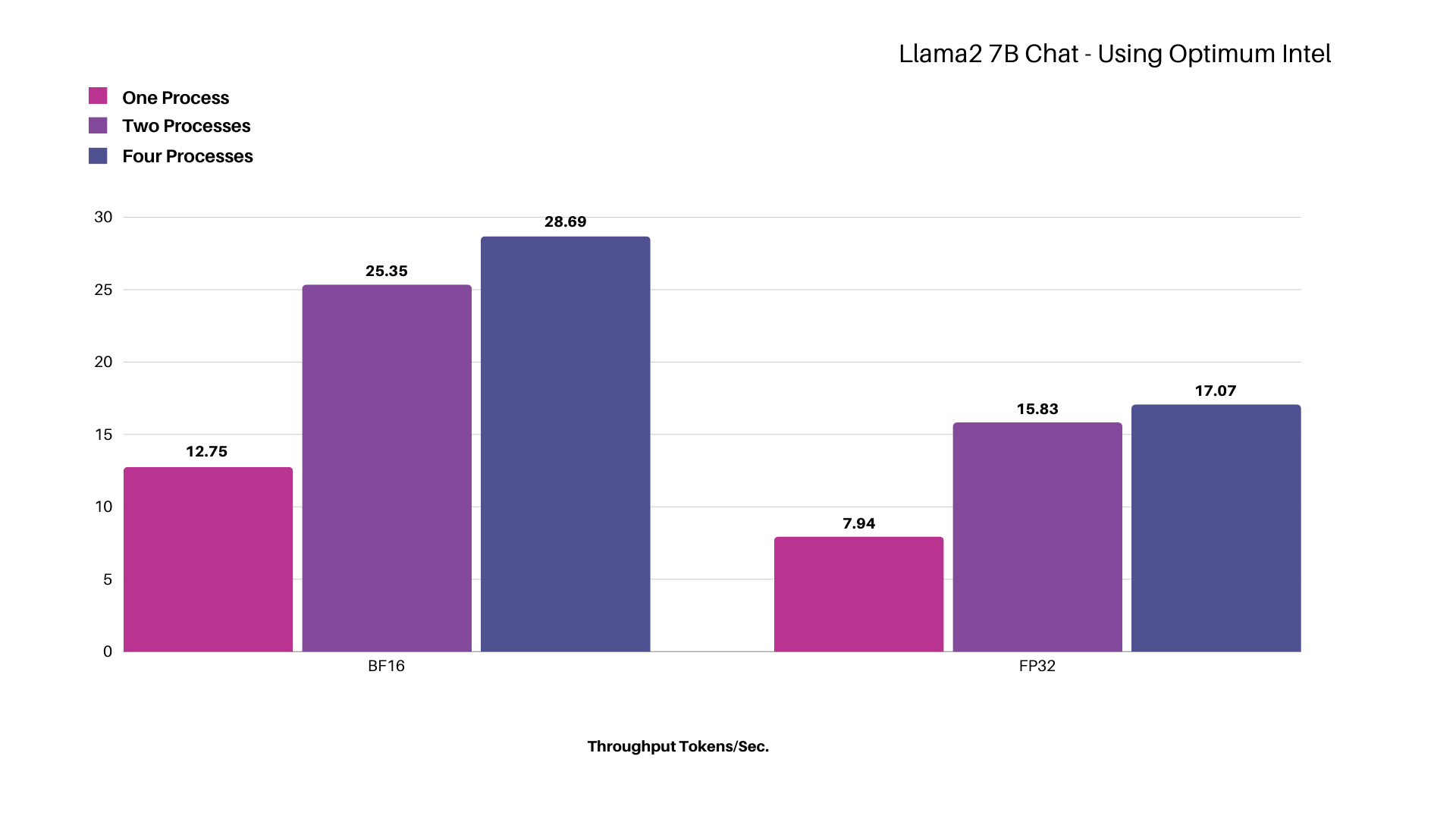

Testing Llama-2 7B Chat - Using Optimum-Intel

Figure: Total throughput, in tokens per second, on the Intel® Xeon® Platinum 8580 Processor for different model precisions as the number of concurrent processes scales up to four.

Using the Llama-2 7B Chat model with BF16 precision in a single process, we achieved a throughput of ~13 tokens per second, which increased to ~29 tokens per second when scaling up to four processes. The token latency per process remains well below the Scalers AI™ target of 100 milliseconds despite an increase in the number of concurrent processes.

Our results demonstrate that the Dell™ PowerEdge™ R760xa Server featuring the Intel® Xeon® Platinum 8580 Processor is up to the task of running Llama-2 7B Chat and meeting end user responsiveness targets for interactive applications such as chatbots. For batch processing tasks like report generation, where real-time response is not a major requirement, an enterprise can add more workload by scaling the number of concurrent processes.

*Performance varies by use case, model, application, hardware & software configurations, the quality of the resolution of the input data, and other factors. This performance testing is intended for informational purposes and not intended to be a guarantee of actual performance of an AI application.

| ABOUT SCALERS AI™

Scalers AI™ specializes in creating end-to-end artificial intelligence (AI) solutions to fast track industry transformation across a wide range of industries, including retail, smart cities, manufacturing, insurance, finance, legal and healthcare. Scalers AI™ industry offerings include custom large language models and multimodal platforms supporting voice, video, image, and text. As a full stack AI solutions company with solutions ranging from the cloud to the edge, our customers often need versatile, easily available (COTS) hardware that works well across a range of functionality, performance and accuracy requirements.

| Dell™ PowerEdge™ R760xa Server Key Specifications

| MACHINE | Dell™ PowerEdge™ R760xa Server |
|---|---|
| Operating system | Ubuntu 22.04.3 LTS |
| CPU | Intel® Xeon® Platinum 8580 Processor |
| Memory | 1024 GiB |

| HUGGING FACE OPTIMUM & INTEL® BIGDL-LLM

Learn more:

https://huggingface.co/docs/optimum/intel/index

https://github.com/intel-analytics/BigDL

| References