Cloud Vs On Premise: Putting Leading AI Voice, Vision & Language Models to the Test in the Cloud & On Premise

Thu, 14 Mar 2024 16:49:21 -0000


| DEPLOYING LEADING AI MODELS ON PREMISE OR IN THE CLOUD

The decision to deploy workloads either on premise or in the cloud hinges on four pivotal factors: economics, latency, regulatory requirements, and fault tolerance. Some might distill these considerations into a more colloquial framework: the laws of economics, the laws of the land, the laws of physics, and Murphy's Law. In this multi-part paper, we won't merely discuss these principles in theory. Instead, we'll delve deeper, testing and comparing leading AI models across voice, computer vision, and large language models both on premise and in the cloud.

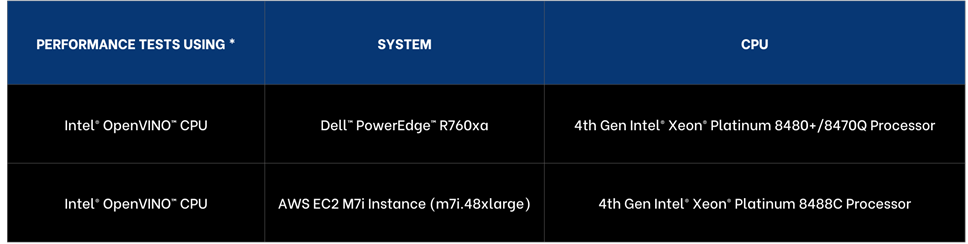

In part one, we’ll put leading CPUs to the test, with 4th Generation Intel® Xeon® Scalable Processors both in the cloud and on premise.

| LEVERAGING INTEL® DISTRIBUTION OF OPENVINO™ TOOLKIT & CORE PINNING FOR ENHANCED PERFORMANCE

To ensure enhanced performance across the cloud and on premise, we are using the Intel® Distribution of OpenVINO™ Toolkit because it optimizes AI models and runs across a broad range of platforms and leading AI frameworks.

To further enhance performance, we conducted core pinning, a process used in computing to assign specific CPU cores to specific tasks or processes.
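As a rough illustration of core pinning, the sketch below splits a machine's core IDs into non-overlapping groups and pins the calling process to one group. It is not the tooling used in these tests: the helper names `partition_cores` and `pin_current_process` are hypothetical, and `os.sched_setaffinity` is a Linux-only standard-library call, so the sketch simply reports failure on other platforms.

```python
import os


def partition_cores(total_cores: int, num_processes: int) -> list[list[int]]:
    """Split core IDs 0..total_cores-1 into contiguous, non-overlapping groups."""
    if num_processes < 1 or num_processes > total_cores:
        raise ValueError("need between 1 and total_cores processes")
    base, extra = divmod(total_cores, num_processes)
    groups, start = [], 0
    for i in range(num_processes):
        # The first `extra` groups take one additional core each.
        size = base + (1 if i < extra else 0)
        groups.append(list(range(start, start + size)))
        start += size
    return groups


def pin_current_process(cores: list[int]) -> bool:
    """Pin the calling process to `cores`; return False where unsupported."""
    if not hasattr(os, "sched_setaffinity"):  # only available on Linux
        return False
    os.sched_setaffinity(0, set(cores))  # pid 0 means the calling process
    return True
```

Each worker process would call `pin_current_process` with its own group, so that workers do not compete for the same cores.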

| AWS INSTANCE SELECTION

We selected the AWS EC2 M7i Instance, specifically the m7i.48xlarge model, part of Amazon's general-purpose instance family. It offers a substantial amount of computing resources, making it comparable to the Dell™ PowerEdge™ R760xa, the on-premise solution we selected.

- Processing Power and Memory: The m7i.48xlarge Instance is equipped with 192 virtual CPUs (vCPUs) and 768 GiB of memory. This high level of processing power and memory capacity is ideal for CPU-based machine learning.

- Networking and Bandwidth: This instance provides a bandwidth of 50 Gbps, facilitating efficient data processing and transfer, essential for high-transaction and latency-sensitive workloads.

- Performance Enhancement: The M7i Instances, including the m7i.48xlarge, are powered by custom 4th Generation Intel® Xeon® Scalable Processors, also known as Sapphire Rapids.

As of November 2023, the pricing for the AWS EC2 M7i Instance, specifically the m7i.48xlarge model, starts at US$9.6768 per hour.

| HARDWARE SELECTION CONSIDERATIONS

For the cloud instance, we selected the top AWS EC2 M7i Instance with 192 virtual cores. For on premise, the Dell™ PowerEdge™ portfolio offered more choice, and we selected a configuration with 112 physical cores (224 hyper-threaded cores). While cloud offerings provide significant choice, the Dell™ PowerEdge™ portfolio offered a wide choice of processors, memory, and networking.

In our analysis, we are providing performance insights as well as cost of compute comparisons. For deployment you will also want to consider the following factors:

- Operational expenditures including power and maintenance costs,

- Network costs including data transfer to cloud and local connectivity,

- Data storage costs including cloud cost versus local storage,

- Network latency requirements including lower latency as data is processed locally,

- Security and compliance costs.

| AI MODELS SELECTION

- Llama-2 7B Chat • OpenAI Whisper Base • YOLOv8n Instance Segmentation

To ensure we have a broad range of AI workloads tested on premise and in the cloud we opted for three of the leading models in their domains:

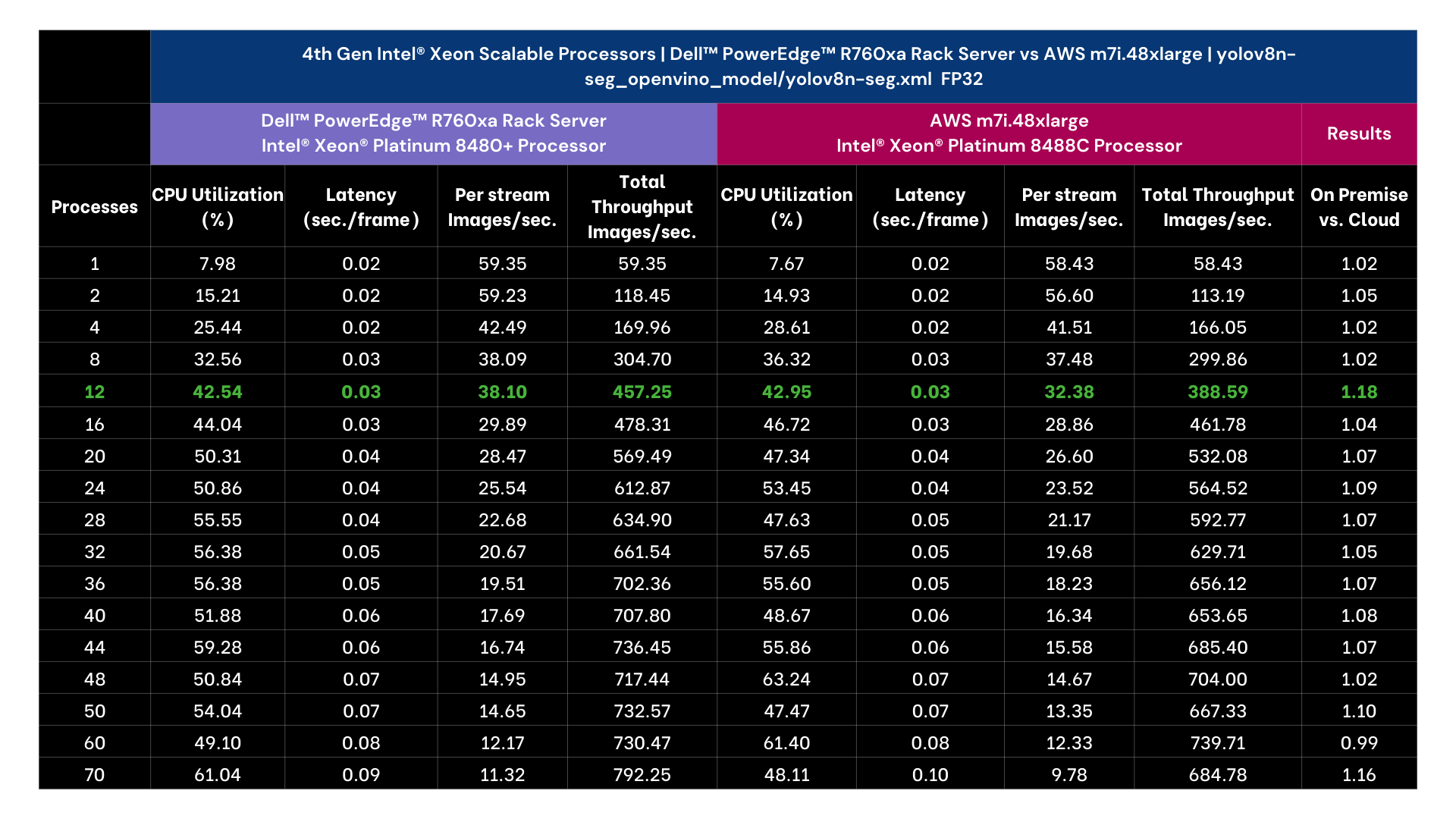

- VISION | YOLOv8n-seg

YOLOv8n-seg is a model variant of YOLOv8 that is designed for instance segmentation and has 3.2 million parameters for the nano version. Unlike basic object detection, instance segmentation identifies the objects in an image as well as the segments of each object, and provides outlines and confidence scores.

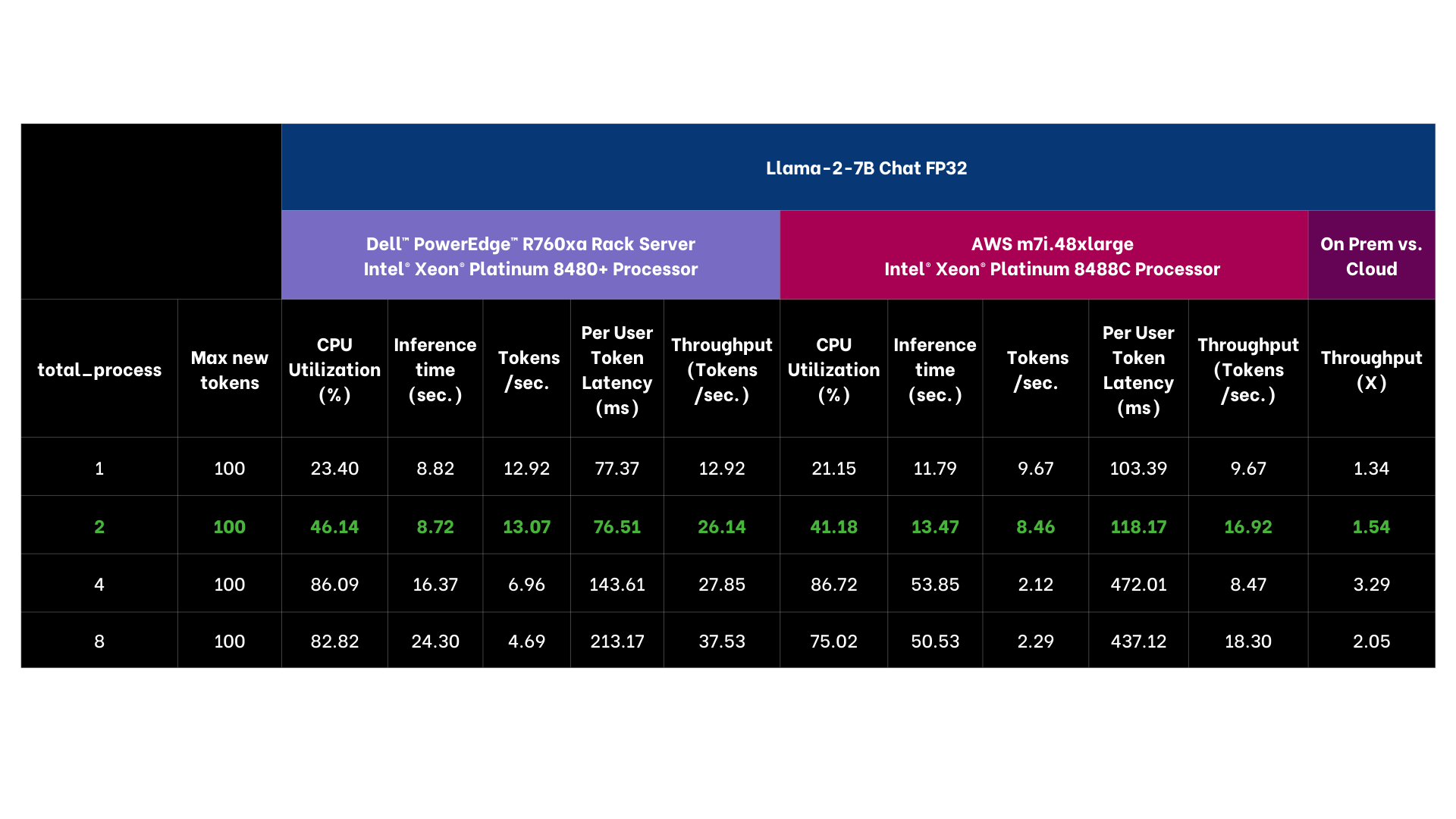

- LANGUAGE | Llama 2 7B Chat

Llama-2 7B-chat is a member of the Llama family of large language models offered by Meta, trained on 2 trillion tokens and well suited for chat applications.

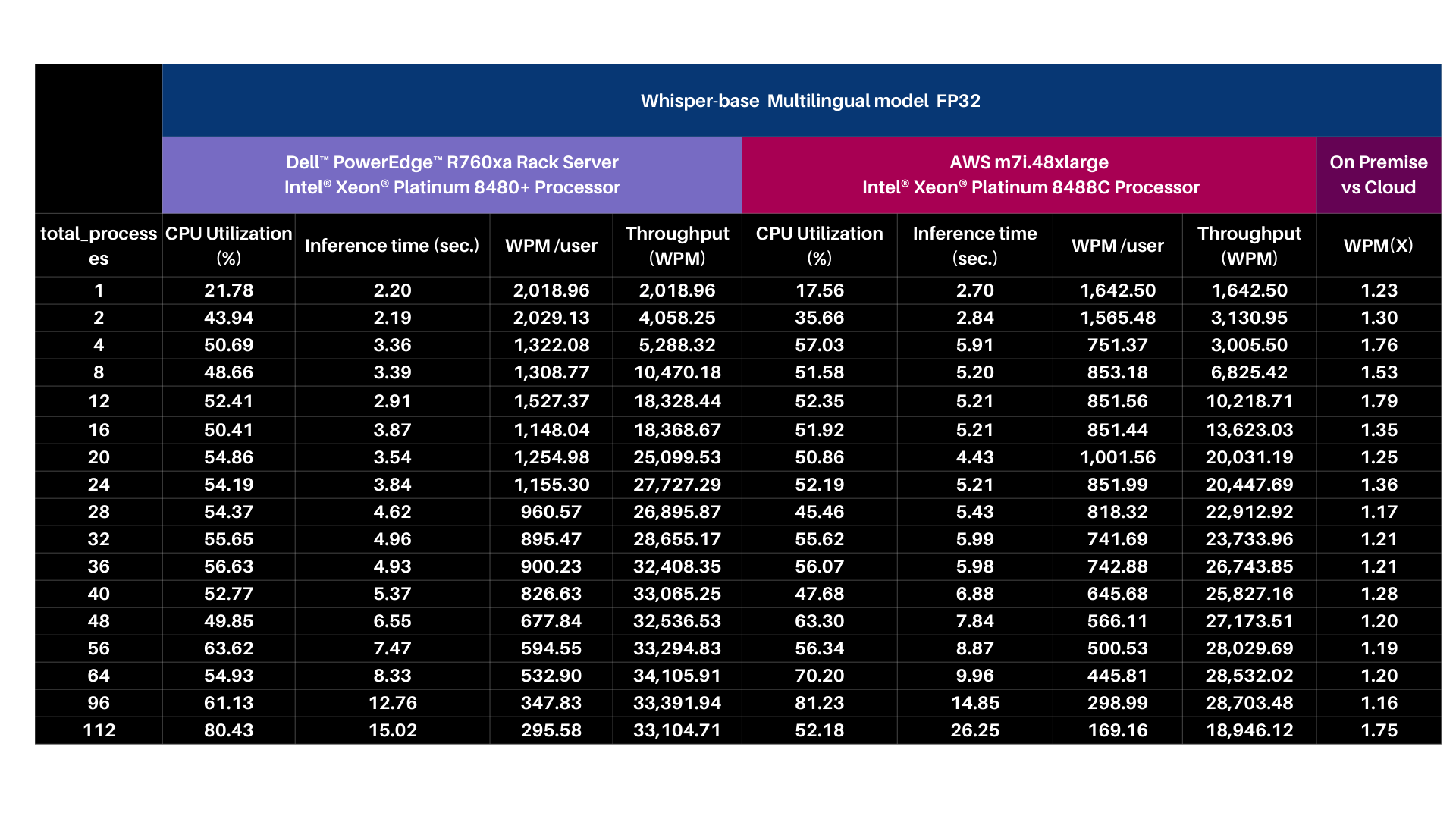

- VOICE | OpenAI Whisper base 74M

OpenAI Whisper is a deep learning model developed by OpenAI for speech recognition and transcription, capable of transcribing speech in English and multiple other languages and translating several non-English languages into English.

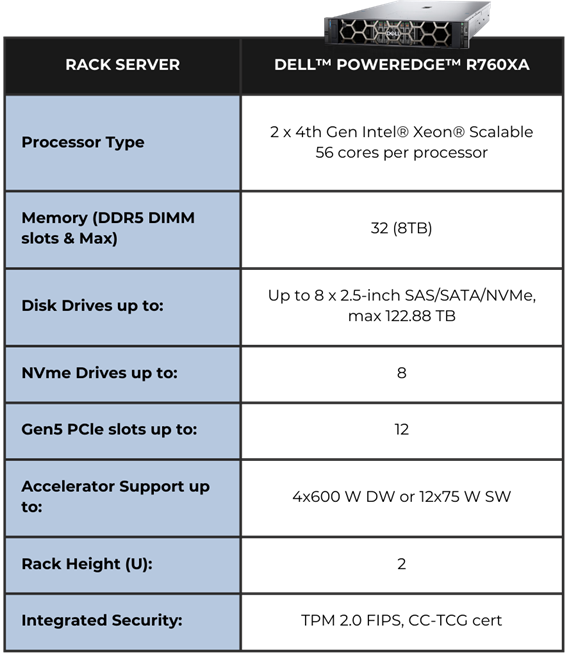

EDGE HARDWARE | DELL™ POWEREDGE™ R760XA RACK SERVER

The system we selected is Dell™ PowerEdge™ R760xa hardware powered by 4th Generation Intel® Xeon® Scalable Processors.

The air-cooled design with front-facing accelerators enables better cooling. The server offers a Cyber Resilient Architecture for Zero Trust IT environments.

Operations Security is integrated into every phase of Dell™ PowerEdge™ lifecycle, including protected supply chain and factory-to-site integrity assurance.

Silicon-based root of trust anchors provide end-to-end boot resilience, complemented by Multi-Factor Authentication (MFA) and role-based access controls to ensure secure operations. iDRAC delivers seamless automation and centralized one-to-many management.

*Performance varies by use case, model, application, hardware & software configurations, the quality of the resolution of the input data, and other factors. This performance testing is intended for informational purposes and not intended to be a guarantee of actual performance of an AI application.


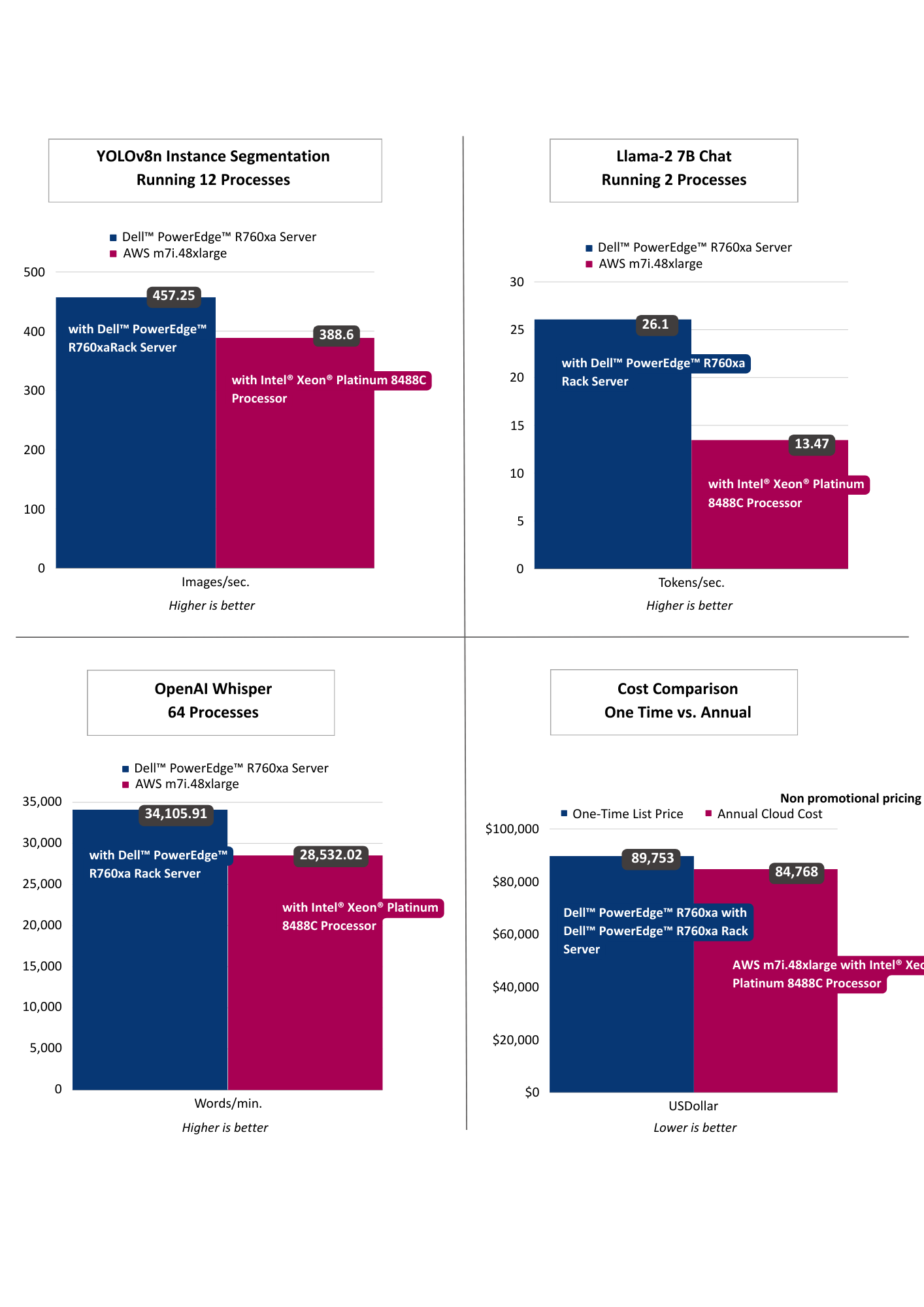

| PERFORMANCE INSIGHTS

For YOLOv8n Instance Segmentation, we report results at 12 processes, the threshold at which the targeted performance of >30 images per second was achieved. For Llama-2 7B Chat, we report results at 2 processes, which achieved the targeted user latency of under 100 ms per token. For OpenAI Whisper, we report results at 64 processes, targeting user reading speed. Across vision, language, and voice, the on-premise offering outperformed the cloud instance, including lower-latency AI performance. In a computational cost comparison, the on-premise solution offered a payback period of nearly a year based on dell.com pricing, indicating a TCO win for on premise as well.
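The payback arithmetic can be sketched as follows. This is a simplified illustration, not the cost model behind the figures in this paper: it compares only the purchase price of a server against the quoted m7i.48xlarge on-demand rate, assumes a configurable utilization, and ignores power, maintenance, networking, storage, and any throughput difference between the two systems. The function name and the server price passed to it are hypothetical.

```python
# On-demand price for m7i.48xlarge quoted in this paper (as of November 2023).
M7I_48XLARGE_USD_PER_HOUR = 9.6768


def payback_days(server_price_usd: float,
                 cloud_usd_per_hour: float = M7I_48XLARGE_USD_PER_HOUR,
                 utilization: float = 1.0) -> float:
    """Days of cloud usage whose cost equals the server purchase price.

    utilization is the fraction of each day the cloud instance would run.
    """
    if server_price_usd < 0 or cloud_usd_per_hour <= 0:
        raise ValueError("prices must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    daily_cloud_cost = cloud_usd_per_hour * 24 * utilization
    return server_price_usd / daily_cloud_cost


# Hypothetical server price, for illustration only (not a Dell quote).
example_days = payback_days(server_price_usd=50_000.0)
```

Lower utilization lengthens the payback period proportionally, which is why round-the-clock inference workloads tend to favor owned hardware in this kind of comparison.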

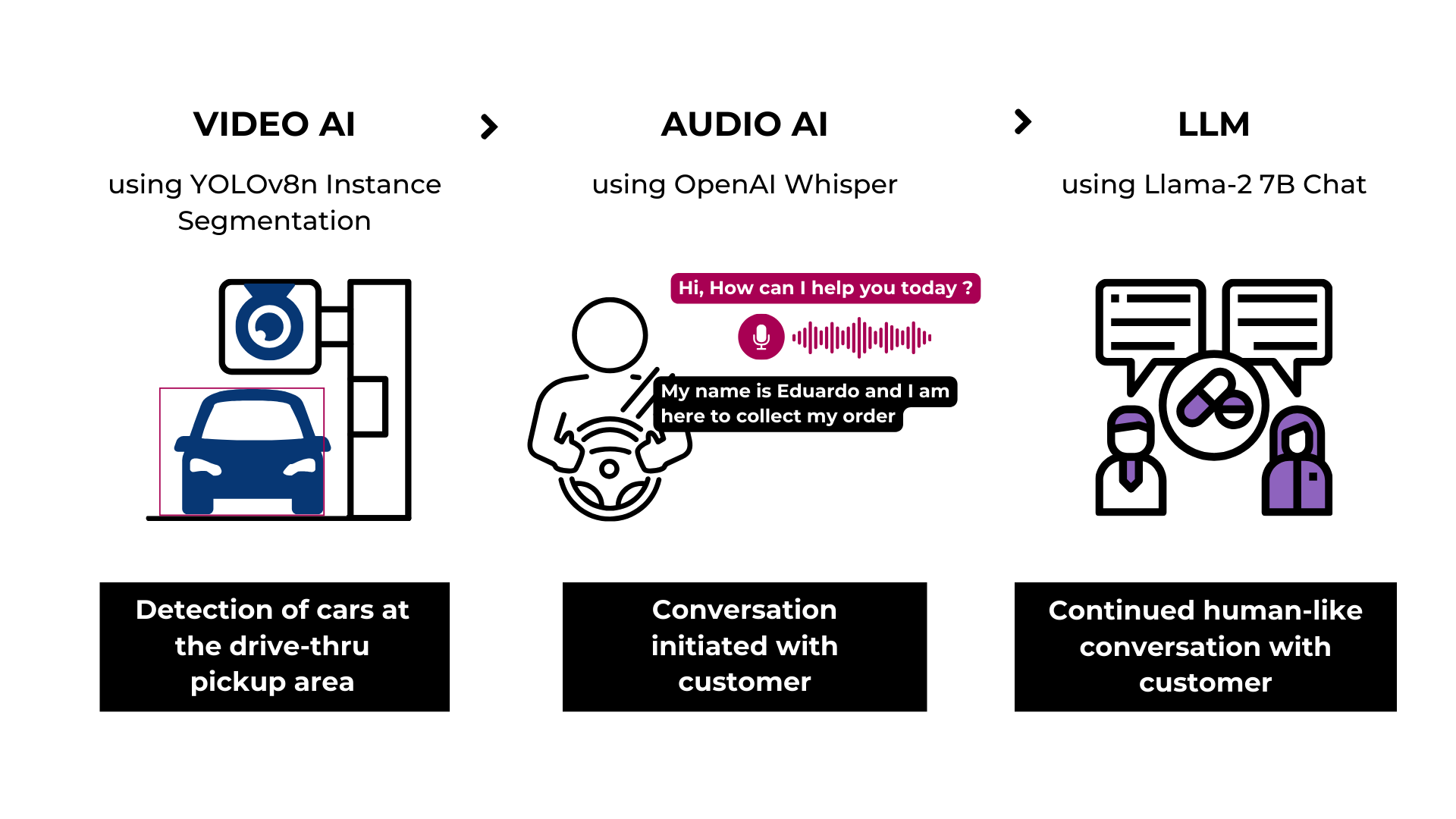

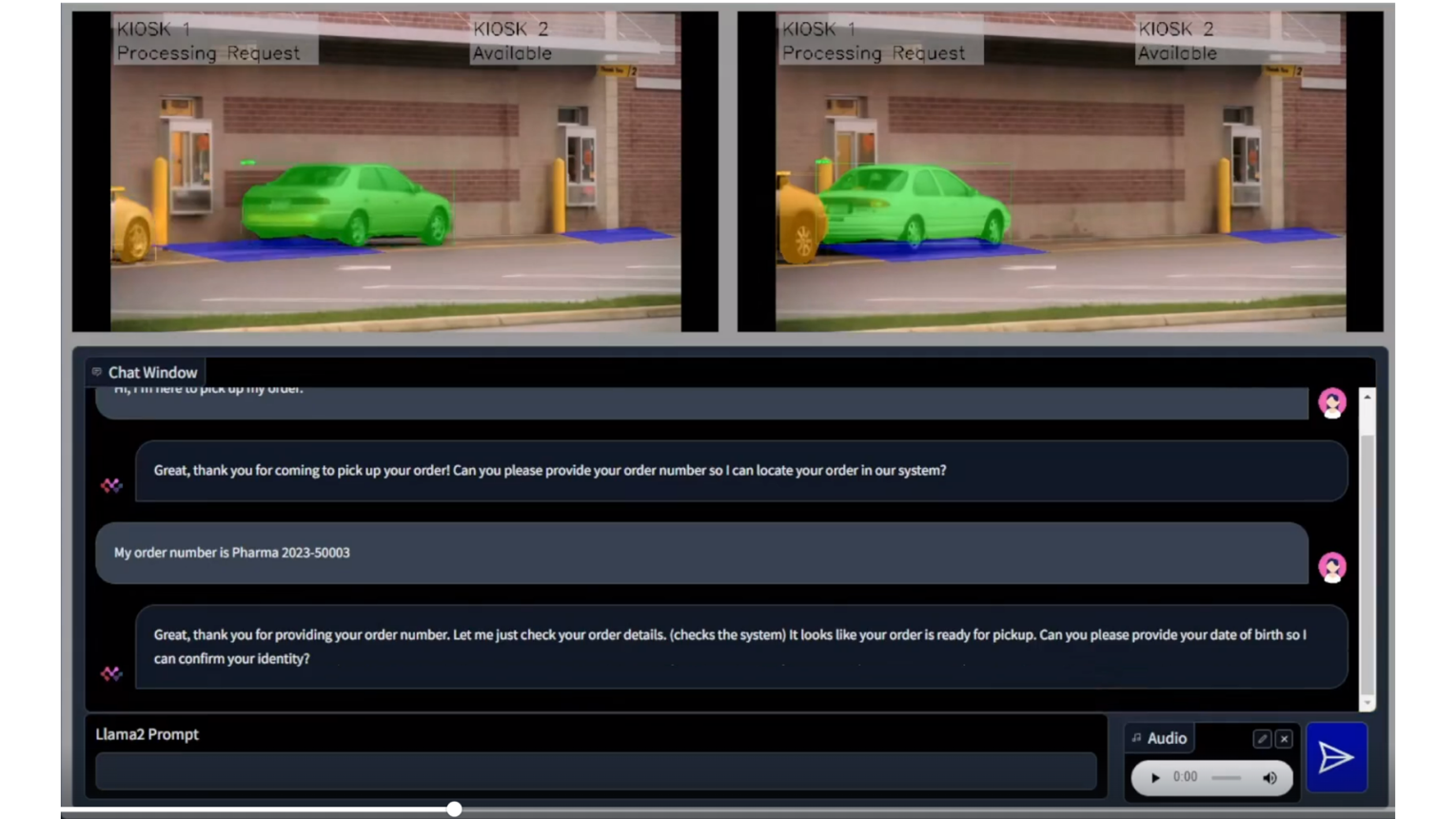

| RETAIL USE CASE

- Drive-thru Pharmacy Pick-up

To demonstrate the practical application of these models, we designed a solution architecture accompanied by a demo that simulates a drive-through pharmacy scenario. In this use case, the vision model identifies the car upon its arrival, the language model gathers the client's information, and communication is facilitated via the voice model. As you can discern, factors such as latency, privacy, security, and cost play crucial roles in this scenario, emphasizing the importance of the decision to deploy either in the cloud or on premise.

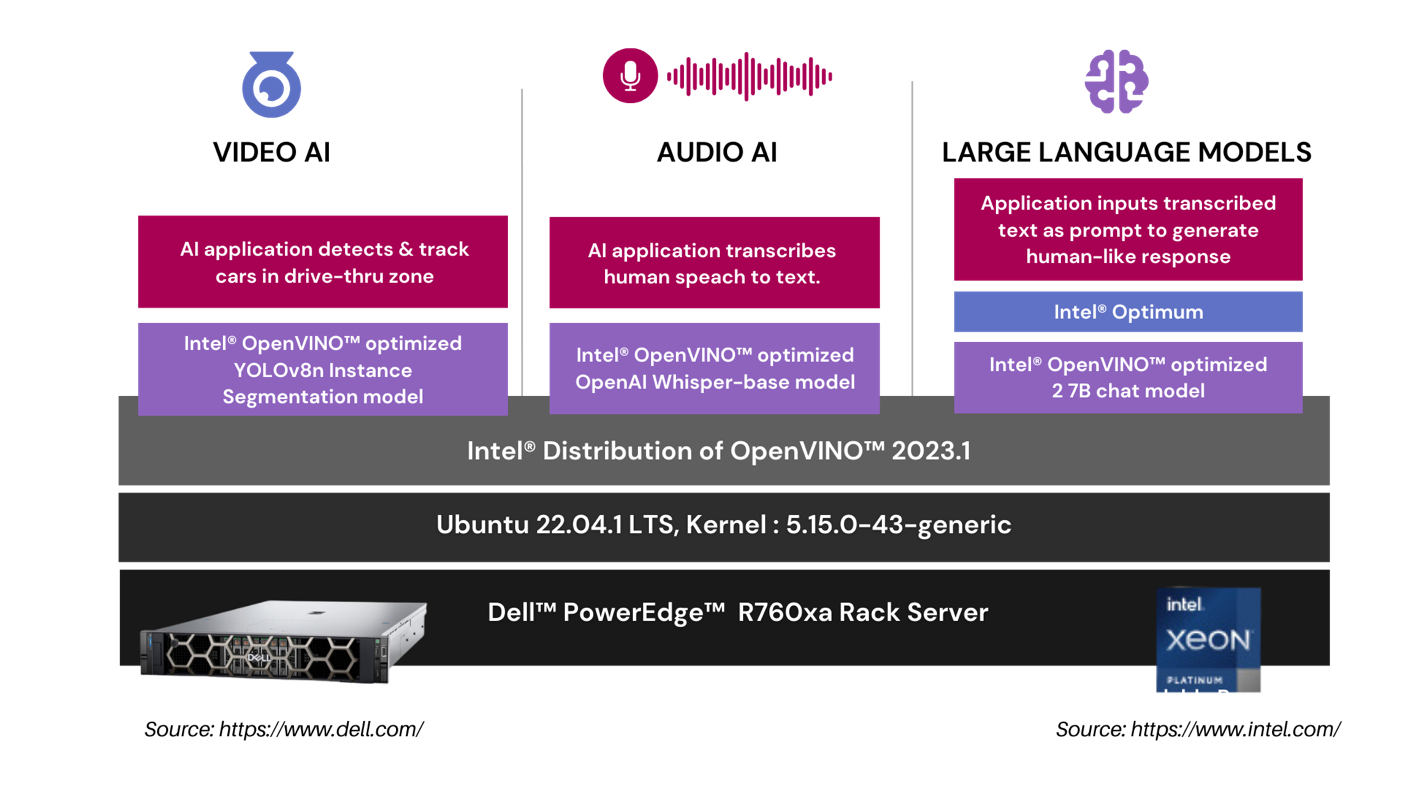

In our drive-thru pharmacy pick-up scenario, we utilize a comprehensive architecture to optimize the customer experience. The Video AI module employs an Intel® OpenVINO™ optimized YOLOv8n Instance Segmentation model to accurately detect and track cars in the drive-thru zone. The Audio AI segment captures and transcribes human speech into text using an Intel® OpenVINO™ optimized OpenAI whisper-base model. This transcribed text is then processed by our Large Language Models segment, where an application leverages the Intel® OpenVINO™ optimized Llama 2 7B Chat model to generate intuitive, human-like responses.

| RETAIL USE CASE ARCHITECTURE

| SUMMARY

In this analysis, we put the leading voice, language, and vision models to the test on Dell™ PowerEdge™ and AWS on CPUs. Dell™ PowerEdge™ R760xa Rack Server exceeded the cloud instances on all performance tests and offers a payback period of nearly one year based on Dell™ public pricing. The drive-through pharmacy use case showcased the advantages of an on premise deployment to maintain customer privacy, HIPAA compliance, and ensure fault tolerance and low latency. Finally, in both instances we showcased enhanced CPU performance with Intel® OpenVINO™ and core pinning. In part II, we’ll compare GPU workloads in the cloud versus on premise.

APPENDIX | PERFORMANCE TESTING DETAILS

Performance Insights | 4th Generation Intel® Xeon® Scalable Processors

- YOLOv8n Instance Segmentation with Intel® OpenVINO™ & Core Pinning

| Test Methodology

YOLOv8n Instance Segmentation FP32 model is exported into the Intel® OpenVINO™ format using ultralytics 8.0.43 library and then tested for object segmentation (inference) using Intel® OpenVINO™ 2023.1.0 runtime.

For performance tests, we used a source video of 53 sec duration with resolution of 1080p and a bitrate of 1906 kb/s. The initial 30 inference samples were treated as warm-up and excluded from calculating the average inference metrics. The time collected includes H264 encode-decode using PyAV 10.0.0 and model inference time.

Output | Video file with h264 encoding (without segmentation post processing)


Performance Insights | 4th Gen Intel® Xeon® Scalable Processors

- Llama 2 7B Chat with Intel® OpenVINO™ & Core Pinning

| Test methodology

The Llama-2 7B Chat FP32 model is exported into the Intel® OpenVINO™ format and then tested for text generation (inference) using Hugging Face Optimum 1.13.1. Hugging Face Optimum is an extension of Hugging Face Transformers and Diffusers that provides tools to export and run optimized models on various ecosystems, including Intel® OpenVINO™. For performance tests, 25 iterations were executed for each inference scenario; the initial 5 iterations were treated as warm-up and excluded when calculating inference time (in seconds) and tokens per second. The time collected includes tokenizer encode-decode time and LLM inference time.

Input | Discuss the history and evolution of artificial intelligence in 80 words.

Output | Discuss the history and evolution of artificial intelligence in 80 words or less.

Artificial intelligence (AI) has a long history dating back to the 1950s when computer scientist Alan Turing proposed the Turing Test to measure machine intelligence. Since then, AI has evolved through various stages, including rule-based systems, machine learning, and deep learning, leading to the development of intelligent systems capable of performing tasks that typically require human intelligence, such as visual recognition, natural language processing, and decision-making.

Base Model | https://huggingface.co/meta-llama/Llama-2-7b-chat-hf
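The measurement scheme described in the test methodology (25 iterations, first 5 discarded as warm-up) can be sketched with a small timing harness. This is an illustrative sketch rather than the benchmark code used for this paper: `benchmark` and its `generate` callback are hypothetical names, and `generate` stands in for one full tokenize, generate, and decode call that returns the number of tokens produced.

```python
import time
from statistics import mean
from typing import Callable


def benchmark(generate: Callable[[], int],
              iterations: int = 25,
              warmup: int = 5,
              clock: Callable[[], float] = time.perf_counter) -> dict:
    """Time `generate` repeatedly and report steady-state metrics.

    `generate` must return the number of tokens it produced.
    """
    if not 0 <= warmup < iterations:
        raise ValueError("warmup must be smaller than iterations")
    runs = []
    for _ in range(iterations):
        start = clock()
        tokens = generate()
        runs.append((clock() - start, tokens))
    measured = runs[warmup:]  # drop warm-up iterations
    total_time = sum(t for t, _ in measured)
    total_tokens = sum(n for _, n in measured)
    tokens_per_s = total_tokens / total_time
    return {
        "avg_inference_s": mean([t for t, _ in measured]),
        "tokens_per_s": tokens_per_s,
        "ms_per_token": 1000.0 / tokens_per_s,
    }
```

With this metric, the sub 100 ms per token target mentioned in the performance insights corresponds to more than 10 tokens per second for a single user stream.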


PERFORMANCE INSIGHTS | 4TH GEN INTEL® XEON® SCALABLE PROCESSORS

- OpenAI Whisper-base model with Intel® OpenVINO™ & Core Pinning

| Test methodology

The OpenAI Whisper base 74M FP32 model is exported into the Intel® OpenVINO™ format and then tested for inference using Intel® OpenVINO™. For performance tests, 25 iterations were executed for each inference scenario; the initial 5 iterations were treated as warm-up and excluded when calculating inference time (in seconds) and tokens per second. The time collected includes tokenizer encode-decode time and LLM inference time.

Input | MP3 file with 28.2 sec audio

Output | Generative AI has revolutionized the retail industry by offering a wide array of innovative use cases that enhance customer experiences and streamline operations. One prominent application of Generative AI is personalized product recommendations. Retailers can utilize advanced recommendation algorithms to analyze customer data and generate tailored product suggestions in real time. This not only drives sales but also enhances customer satisfaction by presenting them with items that align with their preferences and purchase history.

| 74 words transcribed.

Base Model | https://github.com/openai/whisper#available-models-and-languages
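For context on the test clip, the speaking rate implied by the input and output above (74 words in 28.2 seconds of audio) can be worked out directly. The helper name is hypothetical and the snippet only restates arithmetic on the two figures given in this section.

```python
def words_per_minute(words: int, audio_seconds: float) -> float:
    """Speaking rate of a clip, in words per minute."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return words / audio_seconds * 60.0


# Figures from this section: 74 transcribed words, 28.2 s of audio.
clip_wpm = round(words_per_minute(74, 28.2), 1)  # 157.4
```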


| About Scalers AI™

Scalers AI™ specializes in creating end-to-end artificial intelligence (AI) solutions to fast-track industry transformation across a wide range of industries, including retail, smart cities, manufacturing, insurance, finance, legal and healthcare. Scalers AI™ industry offerings include predictive analytics, generative AI chatbots, stable diffusion, image and speech recognition, and natural language processing. As a full stack AI solutions company with solutions ranging from the cloud to the edge, our customers often need versatile commercial off-the-shelf (COTS) hardware that works well across a range of workloads.

- Fast track development & save hundreds of hours in development with access to the solution code.

As part of this effort, Scalers AI™ is making the solution code available. Reach out to your Dell™ representative or contact Scalers AI™ at contact@scalers.ai for access to the GitHub repo.

Related Documents

Lab Insight: Dell CPU-Based AI PoC for Retail

Mon, 13 May 2024 20:45:53 -0000


Introduction

As part of Dell’s ongoing efforts to help make industry-leading AI workflows available to its clients, this paper outlines a sample AI solution for the retail market. The PoC leverages Dell™ technology to showcase an AI-powered inventory management application for retail organizations.

AI technology has been in development for some time, but recent technological advancements have greatly accelerated AI’s ability to provide value across a wide range of enterprise applications. AI solutions have become a key initiative for many organizations. While the advancement of AI technology provides the basis for a diverse set of AI-powered applications, the specific requirements of different verticals provide distinct hardware and software challenges. IT organizations might be unsure of the technical requirements for deploying such a solution. This uncertainty may be due to unfamiliarity with AI, as well as an expectation that AI applications will require specialized hardware, often with limited availability.

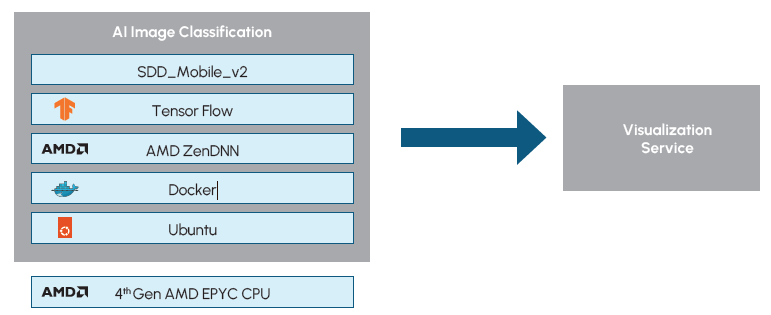

This paper covers a solution specifically designed to capture the requirements of a retail-based AI deployment using a standard AMD™ CPU for AI training and inference. The solution leverages hardware from Dell, AMD, and Broadcom™ to create a solution powerful enough to capture and analyze large-scale video data from cameras in retail environments, as well as flexible enough to scale to the unique needs of individual retail environments. Training of the model was achieved in two days, utilizing the same Dell PowerEdge server that is used for inferencing. The scalability of the solution was tested with up to 20 video streams. The PoC additionally demonstrates AI optimizations for AMD CPUs by utilizing AMD’s ZenDNN library. The utilization of the ZenDNN library, along with node pinning, resulted in an average throughput increase of 1.5x.

While the overall applications of AI in retail environments are much broader than the single inventory management solution outlined in this paper, the PoC demonstrates a framework for how IT organizations can quickly deploy an AI solution that delivers practical value in a retail environment by using readily available hardware.

Importance for the Retail Market

As with many other industries, the retail market has become increasingly data driven. Data can provide greater insight into areas such as customer behavior and product demand, as well as assist in optimizing operational areas such as procurement and inventory management. The emergence of AI technology provides even greater opportunity for valuable data-driven insights and optimizations within the retail industry.

Possibilities for retail-focused AI solutions include both customer experience (CX)-driven solutions and operations-focused applications. CX might be enhanced with personalized recommendation systems based on customer purchase trends, or virtual assistants capable of providing product recommendations for online retail experiences. Retail operations may be optimized through solutions such as AI-enhanced surveillance to detect fraud or theft, inventory management systems, or AI-powered product pricing systems.

These examples, as well as the more in-depth PoC study outlined in this paper, are a small subset of possible AI applications that may be implemented by retail organizations. While the exact solution implementations that are most appropriate may vary between organizations based on several factors such as location, size, type of goods sold, and distribution of online versus in-person sales, it is clear that AI applications can provide immense value in retail environments.

While a proactive approach to AI adoption may be beneficial to retail organizations, unfamiliarity with AI technology and the hardware and software components needed to deploy and optimize such solutions act as a barrier to adoption. The following solution demonstrates a PoC for an AI-powered retail inventory management system that can be quickly deployed and further expanded upon by retail organizations using commonly available hardware.

Solution Overview

The retail inventory management solution addresses a common challenge in retail environments: inventory distortion. Without accurate and timely inventory management, retail organizations can be challenged with stock levels that are either too low or too high. Both situations can prove to be costly. Too much inventory requires additional storage, commitment of capital, and potential waste of perishable items. Conversely, too little inventory can lead to customer dissatisfaction and loss of sales. In many cases, low inventory leads to customers purchasing at competing retailers and may lead to overall loss of brand loyalty. By utilizing computer vision and object detection AI models to monitor and track inventory, retailers can achieve real-time insights into their stock to balance their inventory more appropriately and provide valuable insights back to suppliers.

To demonstrate a real-world example solution of an AI application that could be deployed to address such retail challenges, Scalers AI™, in partnership with Dell, Broadcom, and The Futurum Group, implemented a PoC solution for a retail inventory management system. The solution was designed to capture data from store cameras and use an object-detection AI model to monitor and manage product stock levels. The solution was capable of detecting products on store shelves, keeping track of inventory, and raising alerts of low or out of stock items.

All of this was accomplished using standard Dell PowerEdge servers with 32 core 4th Gen AMD EPYC processors and Broadcom networking. No GPUs were required. The CPU-based solution was further optimized with AMD’s Zen Deep Neural Network (ZenDNN) library, which provides optimizations for deep learning inferencing on AMD CPU hardware. AMD’s ZenDNN optimizations delivered an average of 1.5x increased throughput performance to the PoC. By utilizing modest, CPU-based hardware, this PoC solution demonstrates a clear example of a readily deployable and broadly applicable AI retail solution.

To achieve the solution, store shelves were configured in zones with the product names and corresponding x,y coordinate pairs that indicated the shelf location. The products, location, and the maximum capacity for each item were stored as JSON objects.
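A zone definition of this kind might look like the sketch below. The actual schema used in the PoC is not shown in this paper, so the field names (`product`, `max_capacity`, `polygon`), the sample values, and the 25% low-stock threshold are all assumptions made for illustration.

```python
import json

# Hypothetical zone file: one JSON object per shelf zone.
ZONES_JSON = """
[
  {"product": "cereal", "max_capacity": 12,
   "polygon": [[100, 200], [400, 200], [400, 350], [100, 350]]},
  {"product": "coffee", "max_capacity": 8,
   "polygon": [[420, 200], [700, 200], [700, 350], [420, 350]]}
]
"""


def load_zones(text: str) -> list[dict]:
    """Parse the zone definitions from a JSON string."""
    return json.loads(text)


def stock_status(count: int, max_capacity: int,
                 low_fraction: float = 0.25) -> str:
    """Classify a zone from the number of detected items.

    low_fraction is an assumed threshold, not a value from the PoC.
    """
    if count <= 0:
        return "out_of_stock"
    if count / max_capacity <= low_fraction:
        return "low"
    return "ok"
```

In a pipeline like the one described, the per-zone `count` would come from the object-detection model's detections that fall inside each zone's coordinates.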

Solution Highlights


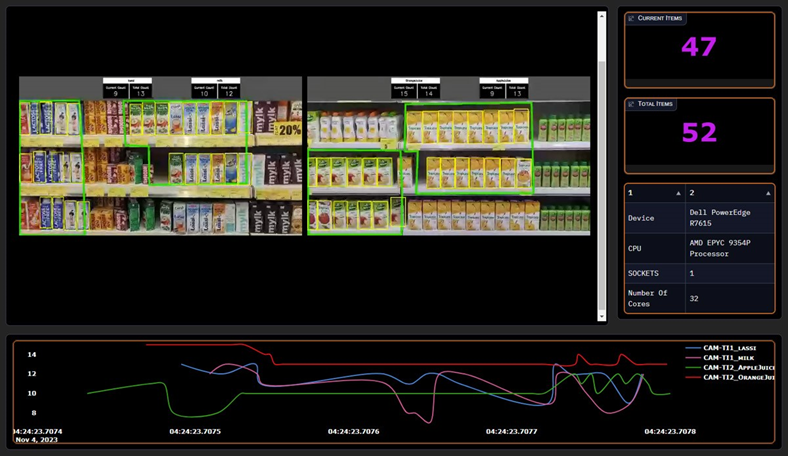

Figure 1: Visualization Dashboard.

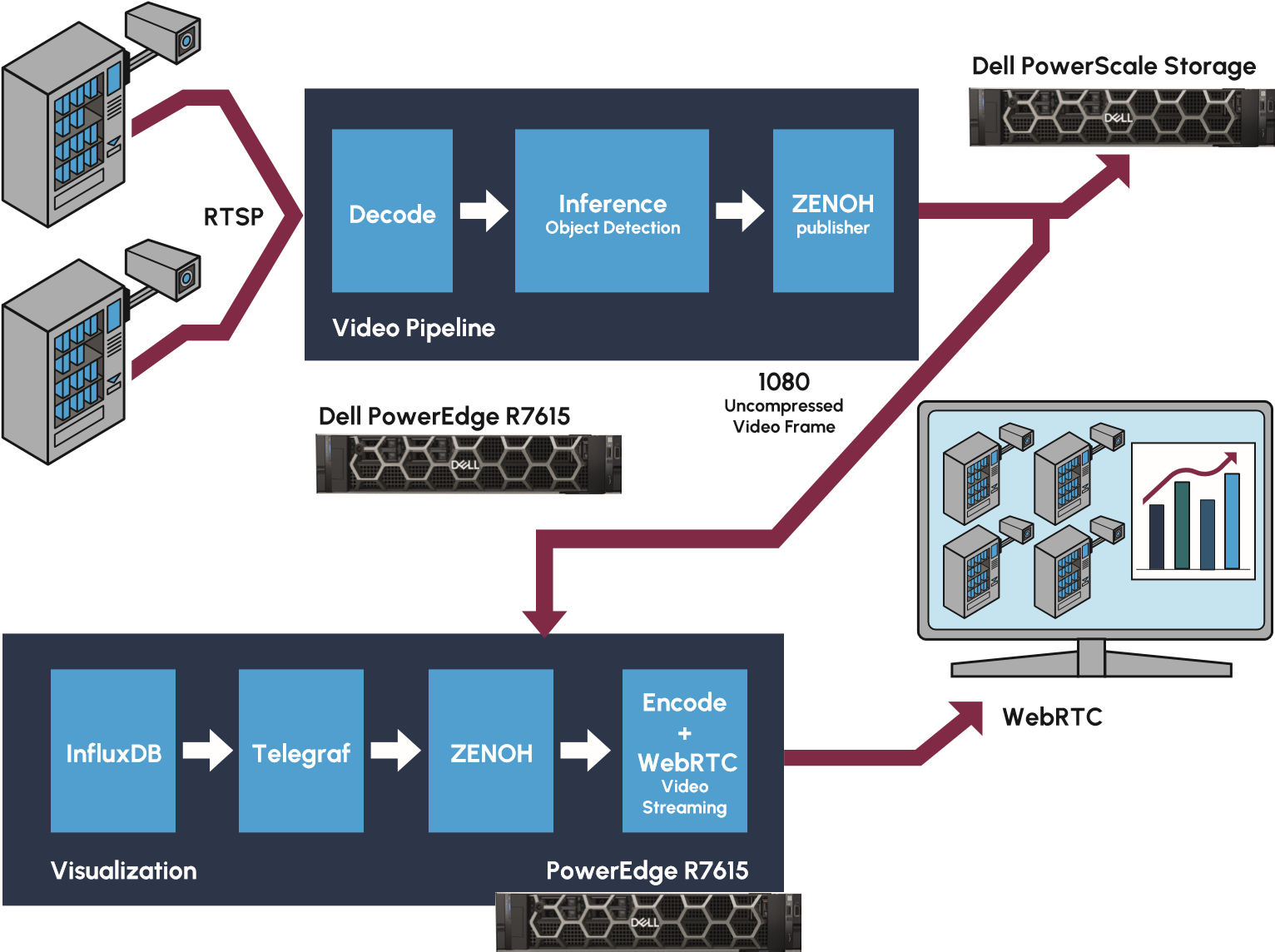

The identification and monitoring of products in each zone is achieved by capturing video data from store cameras into a video pipeline for processing. The live video stream is captured, decoded, and then inferenced using an object-detection AI model. The video pipeline is run on a typical Dell PowerEdge server without requiring any GPUs or specialized accelerators. The video streams can additionally be directed to Dell PowerScale NAS storage for long term retention. Zenoh (Zero Overhead Network Protocol) is then utilized for distribution to an additional Dell server running a visualization process. The visualization engine enables the video stream to be shared over the web for remote viewing and analysis. The visualization dashboard can be seen in Figure 1. Figure 2 depicts a high-level diagram of the solution pipeline.

Figure 2: Retail Inventory Management AI Pipeline (Source: Scalers AI)

By separating the architecture into two distinct pieces, with one server powering video decoding and object detection, and a separate server for the visualization process, the PoC provides a framework for a highly scalable solution. Traditional approaches would combine the processes into a single pipeline; however, that architecture can prove challenging to scale due to the different computational needs of the services. Utilizing a dual service approach provides greater flexibility to scale the processes as needed for retail organizations further expanding upon this PoC. Both the video pipeline and the visualization service can be scaled independently as requirements such as the number of video streams or application logic are adjusted. The dual service architecture and scalability of the overall solution is enabled by utilizing high speed Broadcom NetXtreme-E NICs which maintain high bandwidth between the video inferencing and visualization services.

Additional details about the implementation and performance testing of the PoC have been made available by Dell on GitHub.

The key hardware components used in the solution include the following: Dell PowerEdge R7615 Servers


Highlights for AI Practitioners

It is notable for AI practitioners that the project was not limited to the deployment and inferencing of the AI model. The solution additionally involved customization of the pre-trained base model using a process known as Transfer Learning. The solution began with the SSD_MobileNet_v2 model for object detection, which was an ideal model for this PoC as it provides a one-stage object detection model that does not require exceptional compute power. The model was then customized via Transfer Learning with the SKU110K image data set. The training process involved 23,000 images and resulted in a mean average precision (mAP) of 0.7. The training process was completed in approximately two days.

Figure 3: Object Detection Software Overview

It should also be noted that both the model training and deployment of the video pipeline solution were accomplished using the same 32 core Dell PowerEdge R7615 server. The PoC demonstrates the ability to achieve useful AI applications on CPU-based hardware that is commonly found in retail environments. The solution is further optimized for inferencing on AMD CPUs by utilizing AMD’s ZenDNN library and node pinning. The ZenDNN library provides performance tuning for deep learning inferencing on AMD CPUs while node pinning can further optimize the application by binding processes to dedicated compute resources.

The below table shows the ZenDNN parameter configurations used.

| Variable | Value | Notes |
| --- | --- | --- |
| TF_ENABLE_ZENDNN_OPTS | 0 | Sets native TensorFlow code path |
| ZENDNN_CONV_ALGO | 3 | Direct convolution algorithm with blocked inputs and filters |
| ZENDNN_TF_CONV_ADD_FUSION_SAFE | 0 | Default value |
| ZENDNN_TENSOR_POOL_LIMIT | 512 | Set to 512 to optimize for Convolutional Neural Network |
| OMP_NUM_THREADS | 32 | Sets threads to 32 to match # of cores |
| GOMP_CPU_AFFINITY | 0-31 | Binds threads to physical CPUs. Set to number of cores in the system |

Figure 4: ZenDNN Configurations
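One way to apply the settings in Figure 4 from Python is to place them in the process environment before the deep learning framework is imported, since OpenMP and library tuning variables are generally read when the runtime initializes. The sketch below assumes that approach; the PoC may instead have exported them in a shell or container definition. The variable names and values are taken from Figure 4, while the `apply_env` helper is hypothetical.

```python
import os

# Values copied from Figure 4; environment variables are always strings.
ZENDNN_ENV = {
    "TF_ENABLE_ZENDNN_OPTS": "0",
    "ZENDNN_CONV_ALGO": "3",
    "ZENDNN_TF_CONV_ADD_FUSION_SAFE": "0",
    "ZENDNN_TENSOR_POOL_LIMIT": "512",
    "OMP_NUM_THREADS": "32",       # one thread per core on the 32-core CPU
    "GOMP_CPU_AFFINITY": "0-31",   # bind threads to cores 0 through 31
}


def apply_env(settings: dict, environ=os.environ) -> None:
    """Copy settings into the environment mapping (os.environ by default)."""
    for name, value in settings.items():
        environ[name] = value


# Call apply_env(ZENDNN_ENV) before importing the inference framework.
```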

Key Highlights for AI Practitioners


Considerations for IT Operations

The hardware used in this AI application, including Dell PowerEdge R7615 servers with 4th Gen 32 core AMD EPYC 9354P Processors, Dell PowerScale NAS, Dell PowerSwitch Z9664, and Broadcom NetXtreme-E NICs, is familiar and available to IT operations, yet each component provides valuable characteristics needed to support this type of solution.

The Dell PowerEdge servers provide powerful 4th Generation AMD EPYC processors that are capable of supporting both the AI and application workloads, and the Dell PowerScale NAS provides a high-performance, highly scalable NAS storage system capable of handling large-scale video and image data. The solution is then tied together using Broadcom Ethernet capable of supporting the high bandwidth requirements of video streaming. Most notably, these components all provide scalability for IT organizations to further build out this application with more demanding requirements such as additional video streams or additional application logic.

Futurum Group Comment: The specific use of Dell PowerEdge R7615 servers should be noted, as it demonstrates the ability to run AI workloads on standard hardware, commonly deployed in retail environments. While not considered a high-end compute server, the R7615 servers with mid-range 32 core 9354P Processors proved capable of all processes including model training, inferencing, and the separate visualization engine. This enables retail IT organizations to deploy such solutions without acquiring GPUs or requiring the datacenter level cooling needed for higher end servers. Additionally, by separating the architecture into separate video and visualization pipelines, the solution can be scaled to meet the size and performance requirements of a broad range of retail environments.

The on-premises deployment of this solution additionally enables IT operations to achieve their data security and data privacy requirements. While public cloud has been utilized for many early iterations of AI applications, data privacy becomes a concern for many organizations as they build further AI applications leveraging private data. By deploying this, or similar, retail solutions on-premises, IT operations have greater control over the privacy of their data, which may include sensitive consumer or product information. The on-premises deployment of this solution also offers a potential economic advantage in its ability to avoid cloud storage costs when storing large capacities of video data. It additionally avoids the high networking requirements of uploading many video streams to the cloud.

Specifications of the Dell PowerEdge servers used in this PoC can be found in Figure 5.

| Component | Attribute | PowerEdge R7615 |
| --- | --- | --- |
| Device | Name | Dell PowerEdge R7615 |
| CPU | Model Name | AMD EPYC 9354P 32-Core Processor |
| CPU | Number of Cores per Socket | 32 |
| CPU | Number of Sockets | 1 |
| Memory | Size | 768 GB |
| Storage | Size | 1 TB |
| Network | | Broadcom NetXtreme-E BCM57508 |
| OS | Name | Ubuntu 22.04.3 LTS |
| OS | Kernel | 5.15.0-86-generic |

Figure 5: Dell PowerEdge Server Details

Key Highlights for IT Operations


Retail Solution Performance Observations

A key performance metric for the retail inventory management reference solution is the throughput of images per second as they are streamed by the in-store video cameras, decoded, and inferenced by the video pipeline. Video data is a common source for AI applications in the retail market, due to the prevalence of existing cameras deployed in stores, and the value of information that can be obtained by the video data. Because of this, the throughput performance insights gained from this PoC can translate to additional retail solutions that rely on image processing.

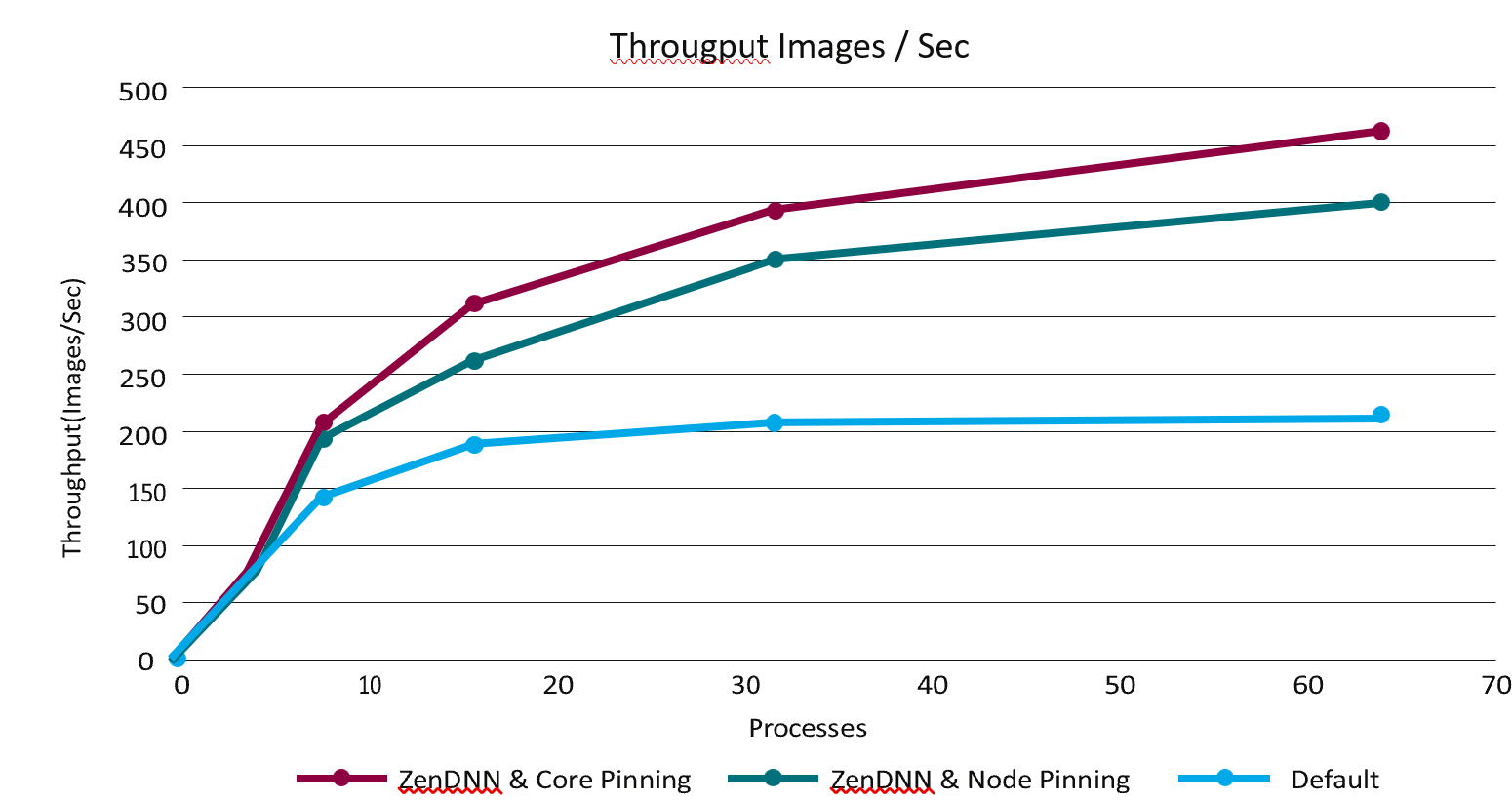

To examine the performance of the 32 core AMD EPYC 9354P processor for data capture and inferencing, the video pipeline was tested both with and without ZenDNN performance tuning, as well as with core pinning and node pinning. ZenDNN is a library that optimizes the performance of AMD processors for deep learning inferencing applications. Node pinning and core pinning are techniques that offer optimization by binding processes to specific NUMA nodes or cores. The tests were run with up to 64 processes running on a 32 core server. The results of this testing can be seen in Figure 6.

Figure 6: Throughput Performance


The performance results demonstrate that the use of ZenDNN with node pinning can provide a dramatic increase in throughput, with mostly lower CPU utilization. On average, ZenDNN with node pinning achieved a throughput increase of approximately 1.5x. Further throughput increases were additionally achieved by utilizing core pinning. Full results can be seen in Figure 7.

| Processes | ZenDNN, core pinning (images/sec) | ZenDNN, node pinning (images/sec) | ZenDNN CPU utilization (%) | ZenDNN off, default (images/sec) | ZenDNN off CPU utilization (%) | Difference, ZenDNN (node pinning) vs. default |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | 29.86 | 31.72 | 7.808695652 | 25.06 | 10.75217391 | 1.27 |
| 8 | 195.7 | 188.26 | 46.27717391 | 125.02 | 59.36684783 | 1.51 |
| 16 | 305.06 | 264.24 | 62.7548913 | 176.99 | 75.2388587 | 1.49 |
| 32 | 389.1 | 347.58 | 78.978125 | 204.98 | 83.00978261 | 1.7 |
| 64 | 460.88 | 392.32 | 93.09952446 | 214.43 | 91.55903533 | 1.83 |

Figure 7: Video Pipeline Throughput Test
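The "Difference" column in Figure 7 is the ratio of ZenDNN node-pinning throughput to default throughput, and the roughly 1.5x average quoted above follows from it. The short check below reproduces that arithmetic from the table values; the variable and function names are illustrative only.

```python
from statistics import mean

# (processes, ZenDNN node pinning images/sec, default images/sec) from Figure 7
FIGURE_7_ROWS = [
    (1, 31.72, 25.06),
    (8, 188.26, 125.02),
    (16, 264.24, 176.99),
    (32, 347.58, 204.98),
    (64, 392.32, 214.43),
]


def speedups(rows) -> dict:
    """Throughput ratio of ZenDNN (node pinning) over default, per row."""
    return {procs: round(node / default, 2) for procs, node, default in rows}


ratios = speedups(FIGURE_7_ROWS)
average_speedup = round(mean(ratios.values()), 2)  # 1.56
```

The ratio grows with the process count, from 1.27x at 1 process to 1.83x at 64 processes, so the benefit is largest when the CPU is most heavily loaded.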

The performance gains achieved with ZenDNN, core pinning, and node pinning demonstrate the ability to optimize CPUs for AI applications. Commonly, computationally demanding AI processes, such as the computer vision and object detection utilized in this PoC, are expected to require GPUs. Hardware alone, however, is not the only component that affects performance. Software such as ZenDNN plays a key role in optimizing the performance of the chosen hardware, as do configuration details such as utilizing core pinning or node pinning. By utilizing these configurations, organizations can achieve AI applications that meet their performance needs with a CPU-based solution utilizing readily available hardware.

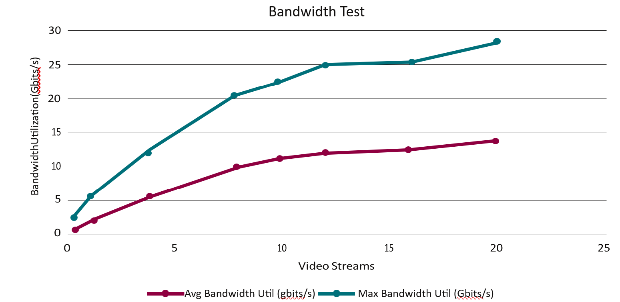

The PoC solution was additionally tested with an increasing number of video streams to assess the bandwidth of the networked video pipeline and visualization service. 1080p video was streamed to the video pipeline where it was decoded and inferenced. It was then transmitted and received by the visualization pipeline to be encoded and shared. The number of video streams was increased incrementally between 1 and 20 which resulted in an increasing bandwidth utilization. The bandwidth scaled from an average utilization of 1.65 Gbits/s and a max utilization of 3.4 Gbits/s with 1 stream, to an average utilization of 13.9 Gbits/s and a max utilization of 27.4 Gbits/s with 20 streams. An overview of the results can be seen in Figure 8.

Figure 8: Inventory Management System Bandwidth

Notably, the bandwidth does not increase linearly in relation to the number of streams, allowing the solution to scale as additional streams are needed. As the number of streams increases, however, the solution does experience a decrease in frames-per-second. While frames-per-second decreases, the overall utility of the solution is not significantly impacted. Higher frame rates are of greater importance when considering video with large amounts of motion, or when viewing quality is a major priority. In this particular solution, lower frame rates are acceptable as the focus is stationary store shelves, and real time viewing is not the primary use case. Full results of testing the networked solution, including both bandwidth utilization and frames per second, can be seen in Figure 9.

| Number of Streams | Avg FPS / Stream | Throughput (FPS) | Avg Bandwidth Util (Gbits/s) | Max Bandwidth Util (Gbits/s) | Avg CPU Util (%) | Avg Memory Util (GB) |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | 31.14 | 31.14 | 1.65 | 3.4 | 12.61 | 6.5 |
| 2 | 30.92 | 61.84 | 3.2 | 6.7 | 21.8 | 7.27 |
| 4 | 28.78 | 115.12 | 6.2 | 12.2 | 41.38 | 9.2 |
| 8 | 22.17 | 177.36 | 9.86 | 20.5 | 65.06 | 13.9 |
| 10 | 20.53 | 205.3 | 11.2 | 22.4 | 73.18 | 16.4 |
| 12 | 18.8 | 225.6 | 12.1 | 24.7 | 78.76 | 18.2 |
| 16 | 13.97 | 223.52 | 12.6 | 25.6 | 81.39 | 22.2 |
| 20 | 11.7 | 234 | 13.9 | 27.4 | 84.1 | 26.7 |

Figure 9: Inventory Management System Bandwidth Test
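The scaling behavior in Figure 9 can be summarized with a short script. The sketch below is illustrative only: the rows are copied from Figure 9, the names `RESULTS`, `LINK_CAPACITY_GBITS`, and `summarize` are hypothetical, and the 200 Gbit/s link capacity is an assumption based on the "up to 200GbE" controller rating mentioned in this paper rather than a measured line rate.

```python
# Rows from Figure 9: (streams, avg FPS per stream, avg bandwidth, max bandwidth), bandwidth in Gbit/s
RESULTS = [
    (1, 31.14, 1.65, 3.4),
    (2, 30.92, 3.2, 6.7),
    (4, 28.78, 6.2, 12.2),
    (8, 22.17, 9.86, 20.5),
    (10, 20.53, 11.2, 22.4),
    (12, 18.8, 12.1, 24.7),
    (16, 13.97, 12.6, 25.6),
    (20, 11.7, 13.9, 27.4),
]

# Assumption: the link runs at the controller's rated maximum of 200 Gbit/s.
LINK_CAPACITY_GBITS = 200.0


def summarize(results, link_capacity=LINK_CAPACITY_GBITS):
    """Derive throughput, per-stream bandwidth, and link headroom for each row."""
    base_avg_bw = results[0][2]  # average bandwidth of the single-stream run
    rows = []
    for streams, fps, avg_bw, max_bw in results:
        rows.append({
            "streams": streams,
            # Aggregate frames per second across all streams.
            "throughput_fps": round(streams * fps, 2),
            # Average bandwidth consumed by each stream.
            "avg_bw_per_stream": round(avg_bw / streams, 3),
            # Growth in average bandwidth relative to the single-stream run.
            "bw_growth": round(avg_bw / base_avg_bw, 2),
            # Share of the assumed link capacity used at peak.
            "peak_link_share_pct": round(100 * max_bw / link_capacity, 1),
        })
    return rows


if __name__ == "__main__":
    for row in summarize(RESULTS):
        print(row)
```

Under these assumptions, the 20-stream run uses about 8.4 times the average bandwidth of the single-stream run, and its peak of 27.4 Gbits/s is about 13.7% of a 200 Gbit/s link.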

The results of this performance testing demonstrate that the bandwidth of the networked servers is capable of scaling alongside more demanding video requirements. The separation of the video pipeline and the visualization service onto distinct servers allows the architecture to independently scale the compute resources for the two services. To capitalize on this architecture, however, the networking between the servers must provide adequate bandwidth between the services. To do so, the PoC solution utilizes Broadcom BCM57508 NetXtreme-E Ethernet controllers capable of supporting up to 200GbE. By utilizing a modular architecture that’s connected with scalable, high-bandwidth networking, the retail inventory management PoC provides a flexible starting point for retail organizations to scale to their individual needs, including the number of video streams, FPS requirements, and additional application logic.

Final Thoughts

With the rapid development of AI technology, the retail market presents many opportunities to deploy valuable new AI-powered applications. With the broad range of value that AI can bring to retail environments, both in improving CX and optimizing store operations, retail organizations should look to be proactive in adopting the emerging technology.

As a new technology, there are many unknowns and misconceptions for those in IT who may be unfamiliar with AI deployments, complicating and delaying new AI applications. A common challenge faced by IT is the expectation that AI applications will require specialized hardware solutions that are inaccessible. The AI-powered retail inventory management solution outlined in this paper serves as a demonstration of a broadly applicable AI solution for retail that can be deployed on off-the-shelf hardware solutions. The Dell hardware solutions used in the PoC deployment were demonstrated to handle the high-bandwidth video requirements as well as the AI modeling and inferencing requirements without the use of purpose-built accelerators, GPUs, or custom hardware.

The PoC solution outlined in this paper additionally serves as a reference for retail organizations to quickly deploy their own inventory management solution. While the solution discussed in this paper is limited to a PoC, it was designed with scalability in mind for organizations to further develop and scale a solution for their needs.

The use of an AI-powered inventory management system can provide real value and cost savings to organizations by avoiding over- or under-stocking products. By using readily available hardware and reference solutions, the barrier of entry for deploying such an AI solution is dramatically lowered, allowing retail organizations to achieve quicker deployments of new AI applications and quicker time to value.

CONTRIBUTORS

Mitch Lewis

Research Analyst | The Futurum Group

PUBLISHER Daniel Newman

CEO | The Futurum Group

CITATIONS

This paper can be cited by accredited press and analysts, but must be cited in-context, displaying author’s name, author’s title, and “The Futurum Group.” Non-press and non-analysts must receive prior written permission by The Futurum Group for any citations.

LICENSING

This document, including any supporting materials, is owned by The Futurum Group. This publication may not be reproduced, distributed, or shared in any form without the prior written permission of The Futurum Group.

DISCLOSURES

The Futurum Group provides research, analysis, advising, and consulting to many high-tech companies, including those mentioned in this paper. No employees at the firm hold any equity positions with any companies cited in this document.

A Path to Virtualization at the Edge

Thu, 14 Mar 2024 16:47:05 -0000

Get next-generation performance at the edge from the Dell PowerEdge XR family of servers

Executive Summary

Edge sensors and devices generate data on a massive scale. And much of the data is generated in rugged environments. Heavy machinery used in underground mining operations, for example, can be outfitted with smart sensors to monitor gas concentrations, air quality, and temperature. Once this data is captured by a high-performance edge server, an analytics application processes the data to generate real-time insights.

Prowess Consulting investigated options for organizations looking for rugged edge servers with the performance needed for compute-intensive analytics. We started by evaluating the Dell™ PowerEdge™ XR7620 server, a member of Dell Technologies’ PowerEdge XR rugged servers portfolio. We looked at performance, durability, and compliance with military and telecom industry standards.

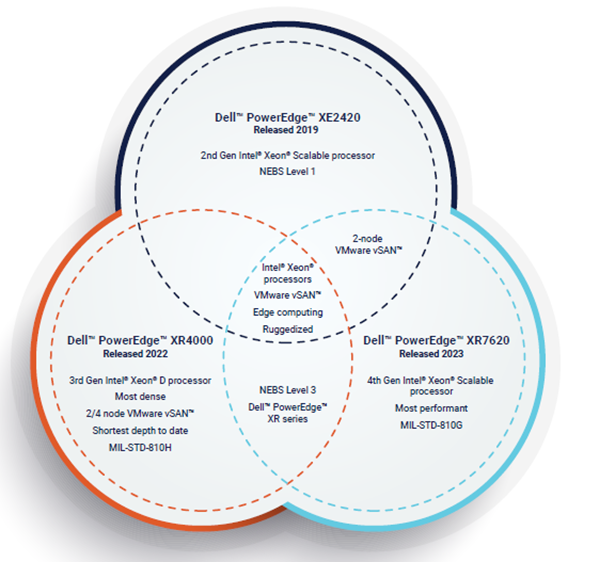

We then compared the PowerEdge XR7620 server to the PowerEdge XE2420 server, a previous-generation rugged edge server, and observed significant generational performance gains. Finally, we compared the PowerEdge XR7620 server to another member of the PowerEdge XR family, the PowerEdge XR4000 series servers. This helped us summarize key differences between the PowerEdge XR7620 server and the PowerEdge XR4000 series. We found that, for organizations looking for the ideal edge server, the PowerEdge XR7620 server delivers high performance, including excellent virtualization capabilities and VMware vSAN™ performance, whereas the PowerEdge XR4000 series servers deliver excellent density and deployment flexibility.

Life at the Edge

Modern businesses are processing more data at the edge. This brings a unique set of requirements for edge servers: the need for high performance, the ability for a server to fit into tiny spaces, and the ability to tolerate the extremes of remote field deployments whether on a manufacturing floor or in a busy retail environment.

Workloads like data analytics and AI/ML that process data at the edge drive the need for high performance. Decoupled from your data center, servers at the edge combat a host of environmental and logistical challenges. A factory that combines Internet of Things (IoT) and digital twin technologies to automate resource allocation and optimize efficiency through analytics and AI will need servers on the factory floor to generate actionable data. And that means exposure to heat, vibration, dust, and more.

How your organization addresses the dual considerations of performance and durability inherent to edge computing is key. Regardless of your solution, maximizing performance and safeguarding against harsh environments are critical.

The PowerEdge XR7620 Server: Performance and Durability at the Edge

Performance

Research by Prowess Consulting shows that the new PowerEdge XR7620 server, powered by 4th Gen Intel® Xeon® Scalable processors, can meet the challenges of ensuring performance and durability. The PowerEdge XR7620 server is a two-socket server featuring data center–level compute with high performance, high capacity, and reduced latency. Moreover, its rugged form factor ensures performance-protecting durability, from military deployments to the factory floor. The PowerEdge XR7620 server can process and analyze data at the point of capture for maximum impact when away from the data center. Given its high performance, the PowerEdge XR7620 server excels at tasks like virtualization.

The PowerEdge XR7620 server also offers compact GPU- and CPU-optimized variants to further customize performance.

Durability

The PowerEdge XR7620 server—like the entire PowerEdge XR family—is purpose-built to withstand the most extreme environments. It can handle dust, humidity, extreme temperatures, shocks, and more. And it’s both MIL-STD-810G and Network Equipment Building System (NEBS) Level 3, GR-3108 Class 1, tested[1]. This means the PowerEdge XR7620 server is compliant with edge-computing standards for both the telecom industry (NEBS Level 3) and military-related applications (MIL-STD-810G). These are foundational requirements, and we dove deeper into their importance.

NEBS Level 3

“NEBS describes the environment of a typical United States Regional Bell Operating Company (RBOC) central office. NEBS is the most common set of safety, spatial, and environmental design standards applied to telecommunications equipment in the United States. It is not a legal or regulatory requirement, but rather an industry requirement.”[2]

NEBS levels relate primarily to the telecom industry and are rated 1–3. Whereas NEBS Levels 1 and 2 are essentially office-based and targeted toward more controlled environments like data centers, NEBS Level 3 is the standard. It’s what telecom and network providers base their installation requirements on, as this level ensures equipment operability. It also requires the most time, effort, and cost in terms of design and maintenance.

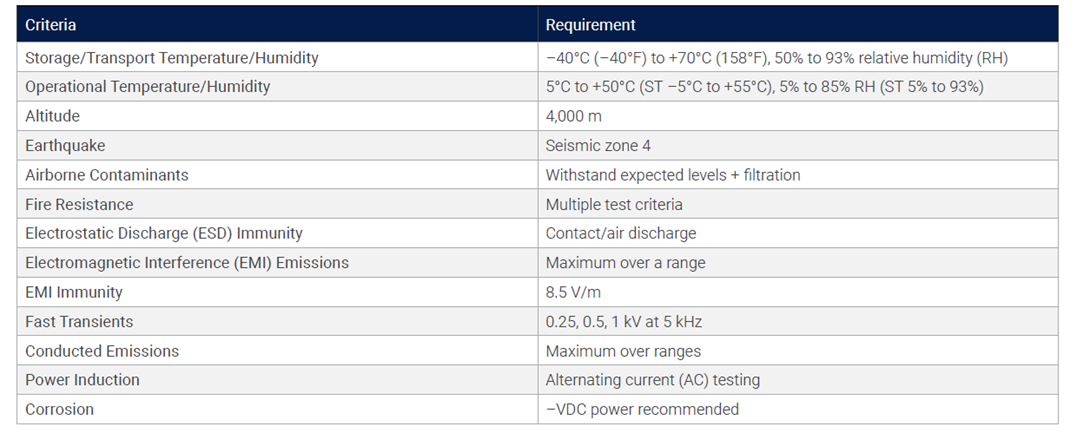

Table 1 illustrates the specific requirements for NEBS Level 3.

Table 1. NEBS Level 3 requirements[3]

MIL-STD

“This Standard contains materiel acquisition program planning and engineering direction for considering the influences that environmental stresses have on materiel throughout all phases of its service life. It is important to note that this document [the MIL-STD-810G standard] does not impose design or test specifications. Rather, it describes the environmental tailoring process that results in realistic materiel designs and test methods based on materiel system performance requirements.”[4]

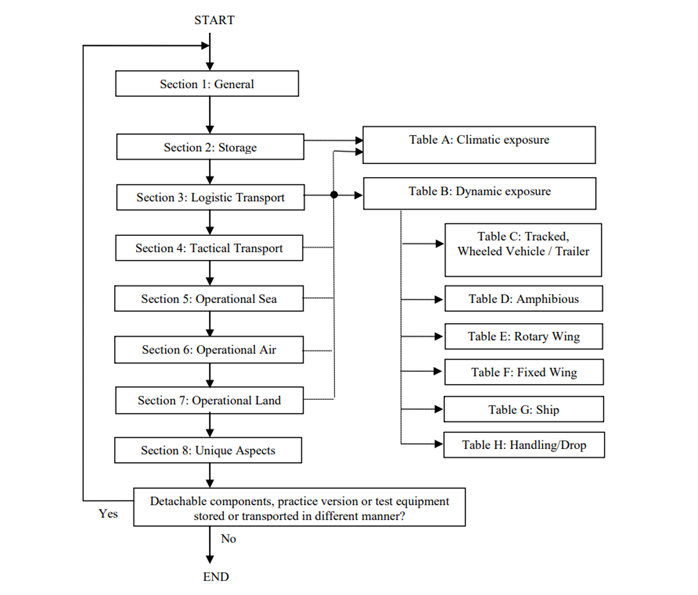

A military standard (MIL-STD) is a US defense standard that centers around ensuring standardization and interoperability for the products used by the US Department of Defense (DoD). There are different standards for specific use cases and industries, and the PowerEdge XR7620 server specifically addresses the 810G standard. The 810G standard centers around environmental engineering and testing, and it provides a rigorous framework—rather than universal guidelines—for vetting potential deployments through extensive testing.

Figure 1 shows a decision tree from the 810G standard guidelines that illustrates how rigorous and extensive the requirements for testing are to meet 810G compliance.

Figure 1. A decision tree from the MIL-STD-810G guidelines[5]

The PowerEdge XR7620 Server: A New Generation

Prowess Consulting examined the performance difference between the PowerEdge XR7620 server and the previous-generation PowerEdge XE2420 server. We began by comparing the processors between the generations.

The 4th Gen Intel Xeon Scalable processors that power the PowerEdge XR7620 server provide a number of benefits over the 2nd Gen Intel Xeon Scalable processors that power the PowerEdge XE2420 server. These benefits include:

- 1.53x average generation-on-generation performance improvement[6]

- Up to 1.60x higher input/output operations per second (IOPS) and up to 37% latency reduction for large-packet sequential reads using integrated Intel® Data Streaming Accelerator (Intel® DSA) versus the prior generation[7]

- Up to 95% fewer cores and 2x higher level-1 compression throughput using integrated Intel® QuickAssist Technology (Intel® QAT) versus the prior generation[8]

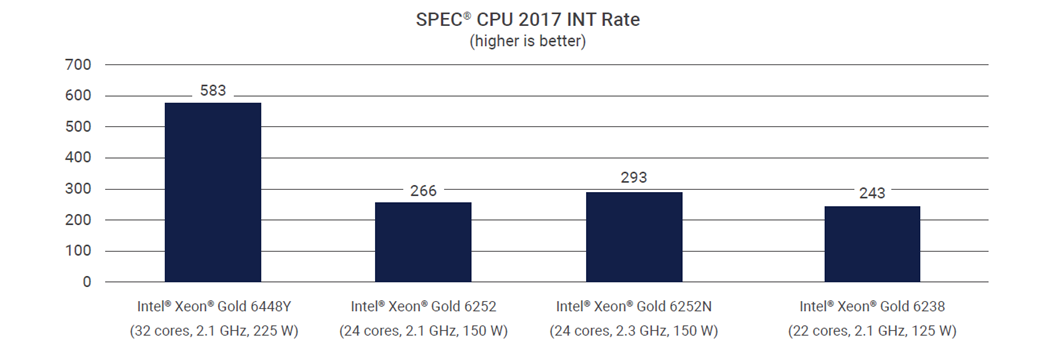

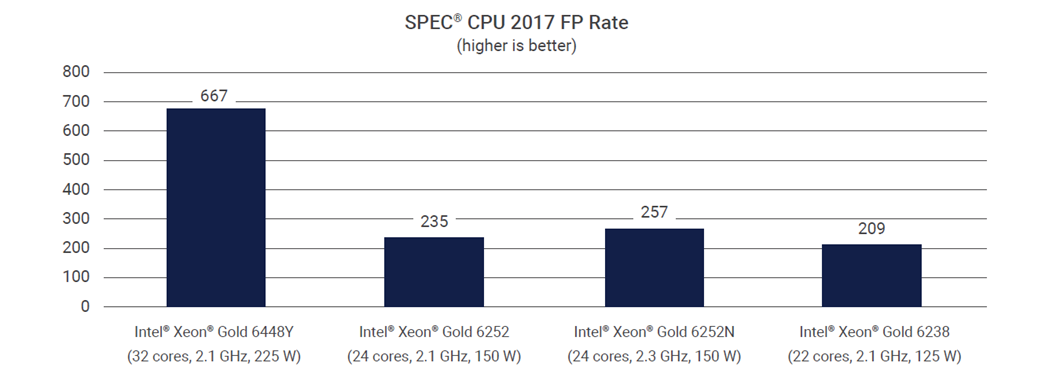

We then reviewed the top-line specs of the PowerEdge XE2420 server and the PowerEdge XR7620 server, shown in Table 3 in the Methodology section. These specs show a clear and consistent improvement between generations. Further analysis of SPEC® CPU 2017 Integer and Floating Point (FP) rates, which measure CPU throughput on integer and floating-point workloads, respectively, shows the same generational increase, with the PowerEdge XR7620 server and its 4th Gen Intel Xeon Scalable processors the clear winner. These results are shown in Figures 2 and 3.

Figure 2. SPEC® CPU INT Rate for the Dell™ PowerEdge™ XR7620 server (with an Intel® Xeon® Gold 6448Y processor) versus the PowerEdge XE2420 server (with Intel Xeon Gold 6252, Intel Xeon Gold 6252N, and Intel Xeon Gold 6238 processors)[9]

Figure 3. SPEC® CPU FP Rate for the Dell™ PowerEdge™ XR7620 server (with an Intel® Xeon® Gold 6448Y processor) versus the PowerEdge XE2420 server (with Intel Xeon Gold 6252, Intel Xeon Gold 6252N, and Intel Xeon Gold 6238 processors)[9]

This performance improvement between generations can also be seen by comparing VMware vSAN deployments. The PowerEdge XE2420 server and the PowerEdge XR7620 server can both implement two-node vSAN deployments. However, as noted previously, the PowerEdge XR7620 server will be more performant with those deployments. This higher level of performance doesn’t just come from the upgraded processor, either. The 4th Gen Intel Xeon Scalable processors in the PowerEdge XR7620 are optimized to take full advantage of the new features and software improvements in VMware vSphere® 8, including GPU- and CPU-based acceleration.

The PowerEdge XR Family

Before we examine the Dell PowerEdge XR family of servers in more detail, Figure 4 provides a quick visual reference of the servers discussed in this report.

Figure 4. Venn diagram of the Dell™ PowerEdge™ XE2420, XR7620, and XR4000 series servers

VMmark® Examination of PowerEdge XR7620 and PowerEdge XR4000 Series Servers

The PowerEdge XR7620 server is part of the PowerEdge XR family of servers, all of which are built to handle the most extreme environments while still delivering performance and reliability. We wanted to examine the PowerEdge XR7620 server alongside some of its “younger siblings,” the PowerEdge XR4000 series servers, and investigate the inter-generational differences. (While not discussed in this study, PowerEdge XR8000 series servers provide excellent flexibility and stability, and would be the “elder sibling” in the family.)

To do this, we analyzed VMmark® results for both the PowerEdge XR4510c (representing the PowerEdge XR4000 series) and the PowerEdge XR7620, shown in Table 4 in the Methodology section. VMmark is a tool for hardware vendors and others to measure the performance, scalability, and power consumption of virtualization platforms. VMmark allows for: benchmarking of virtual data center performance and power consumption; comparing performance and power consumption between different virtualization platforms; and examining how changes in hardware, software, or configuration affect performance within the virtualization environment.[10]

The VMmark results show the PowerEdge XR7620 server can achieve more performance across more tiles (fourteen versus four). These results also illustrate what can be achieved with a full, dual-socket server with the latest-generation processors in a short-depth, 2U ruggedized chassis at the edge. The 4th Gen Intel Xeon Scalable processors in the PowerEdge XR7620 server also account for the higher performance. While the PowerEdge XR7620 server’s overall performance wins are expected, the headline scores do not show how performant PowerEdge XR4000 series servers are at the edge. Given their smaller size and shorter form factor, the PowerEdge XR4000 series servers are very performant relative to size, and they are an excellent option when a smaller, denser, more flexible deployment is called for. Moreover, their redundancy lets them tolerate more hardware failures, making them resilient and durable.

Figure 5. Optional witness node on the Dell™ PowerEdge™ XR4000 series servers[11]

VMware vSAN is an “enterprise-class storage virtualization software that provides the simplest path to hyperconverged infrastructure (HCI) and multi-cloud.”[12] VMware vSAN is widely deployed, so we also compared vSAN deployments inter-generationally. While the PowerEdge XR7620 server (and PowerEdge XE2420 server, too) can implement two-node vSAN deployments, PowerEdge XR4000 series servers can implement four-node vSAN deployments. Additionally, the PowerEdge XR7620 server can also be deployed in a two-node architecture using a vSAN witness appliance to take advantage of the many benefits of vSAN—especially its performance benefits. While both servers take advantage of vSAN, the PowerEdge XR7620 server will offer more overall performance, whereas PowerEdge XR4000 series servers offer the highest density in the smallest form factor.

There is, however, another significant benefit to the upgraded PowerEdge XR7620 server: power savings and sustainability. As Table 4 in the Methodology section shows, the PowerEdge XR7620 configuration tested offers double the cores of the PowerEdge XR4510c configuration tested (128 versus 64) for less than double the average wattage (1,878.63 W versus 1,085.50 W), resulting in a smaller power draw per core when the PowerEdge XR7620 server is deployed at the edge. This lower power consumption per core leads to higher energy efficiency and power availability. It can also potentially lower total cost of ownership (TCO) and help meet your business’s sustainability goals.
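The per-core and per-kilowatt arithmetic behind this comparison can be checked with a short script. The sketch below is illustrative only: the figures are copied from Table 4 in the Methodology section, the names `SERVERS`, `efficiency`, and `compare` are hypothetical, and the score-per-kilowatt value is a simple ratio of the published score to average power, so it only approximates the published VMmark PPKW figures.

```python
# Figures copied from Table 4 (VMmark 3.1.1 results); cores are totals across the system under test.
SERVERS = {
    "XR4510c": {"avg_watts": 1085.50, "cores": 64, "score": 4.37, "tiles": 4},
    "XR7620": {"avg_watts": 1878.63, "cores": 128, "score": 11.48, "tiles": 14},
}


def efficiency(server):
    """Return average watts per core and VMmark score per kilowatt for one configuration."""
    kilowatts = server["avg_watts"] / 1000
    return {
        "watts_per_core": server["avg_watts"] / server["cores"],
        "score_per_kw": server["score"] / kilowatts,
    }


def compare(baseline, candidate):
    """Return how many times larger the candidate is than the baseline on each axis."""
    return {
        "core_ratio": candidate["cores"] / baseline["cores"],
        "watt_ratio": candidate["avg_watts"] / baseline["avg_watts"],
        "score_ratio": candidate["score"] / baseline["score"],
    }


if __name__ == "__main__":
    print(compare(SERVERS["XR4510c"], SERVERS["XR7620"]))
    for name, server in SERVERS.items():
        print(name, efficiency(server))
```

With these inputs, the XR7620 configuration has twice the cores for roughly 1.73 times the average wattage, which works out to about 14.7 W per core versus about 17.0 W per core for the XR4510c configuration.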

Potential PowerEdge XR Family Use Cases

The PowerEdge XR family of servers has use cases in retail, manufacturing, defense, and telecom. We explore two specific use cases in the following sections.

The PowerEdge XR7620 Server: Autonomous Driving

Let’s examine how the PowerEdge XR7620 server—which excels at virtualization—might perform in a real-world setting in the auto industry. As demand increases for technologies such as advanced driver assistance systems (ADAS) and autonomous driving capabilities, the industry needs more efficient development and testing. Virtualization is a key strategy for generating this efficiency, and it’s leading to a change in the way vehicles are designed, developed, manufactured, tested, and maintained.[13]

As software becomes increasingly essential to the average vehicle, updating that software as efficiently as possible becomes a customer pain point and a business requirement. Vast amounts of data are generated when physically testing the update process in the factory or out on the track. You’ll need a high-performance server to capture and process that data as it’s generated for the fastest analytics and most actionable insights possible. Moreover, the 4th Gen Intel Xeon Scalable processors in the PowerEdge XR7620 server are optimized to use the software upgrades in vSphere 8, allowing you to modernize your hardware and software as you replace aging assets, while increasing capacity.

Additionally, this server must be able to withstand the dust and temperature fluctuations of the factory, or the vibrations and humidity of the track, or a host of other adverse conditions. The PowerEdge XR7620 server meets both performance and durability needs, offering the levels of performance required for intense data analytics and the ruggedized form factor required at the edge.

PowerEdge XR4000 Series Servers: Telecom Deployments

Let’s take a proper look at PowerEdge XR4000 series servers now. If the PowerEdge XR7620 server is at home on the factory floor, then the PowerEdge XR4000 series server is at home under the cell tower. While the PowerEdge XR7620 server is built for durability, PowerEdge XR4000 series servers are especially rugged and come in Dell’s smallest form factor for flexibility and customization in the most difficult deployments. They are NEBS Level 3 and MIL-STD-810H tested.[14] Moreover, their four sleds in a single 2U chassis offer excellent scalability and portability when in the field. They have “rackable” and “stackable” configuration options for maximum deployment flexibility, and they support multiple configurations within each option. And PowerEdge XR4000 series servers do so while still offering the high performance needed for analytics and virtualization at the edge.

Finding an Edge Within the PowerEdge XR Family

While the PowerEdge XR family of servers all feature a ruggedized, short-depth form factor, there’s a spectrum of purpose-built options to consider, varying from maximum performance at one end to maximum density and durability at the other.

As our research shows, the PowerEdge XR7620 server is an excellent choice for maximum performance within the PowerEdge family of servers examined. It’s powered by the next-generation Intel Xeon Gold 6448Y processor, giving the PowerEdge XR7620 server excellent virtualization capabilities and vSAN performance. And the PowerEdge XR7620 server does all this in a ruggedized, short-depth form factor that provides the durability required for intense edge computing.

The PowerEdge XR7620 Server: Under the Hood

The performance of the PowerEdge XR7620 server shouldn’t be seen as a simple generational update. It owes some of its performance to the 4th Gen Intel Xeon Scalable processors and the Dell™ PowerEdge RAID Controller 12 (PERC 12).

Intel® Xeon® Gold 6448Y Processor

The Intel Xeon Gold 6448Y processor found in the PowerEdge XR7620 server is based on 4th Gen Intel Xeon Scalable processor architecture, representing a serious upgrade from 2nd and 3rd Gen processors in several ways. With double the cores, a higher max turbo frequency, and a larger cache than the previous model’s processor, the Intel Xeon Gold 6448Y processor is built for performance. Moreover, the processor features Intel DSA, which helps speed up data movement and improve transformation operations to increase performance for storage, networking, and data-intensive workloads.[15]

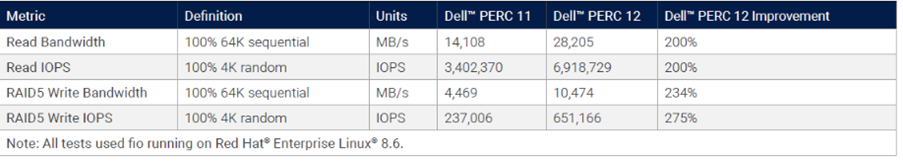

Dell™ PERC 12

PERC 12, Dell’s latest RAID controller, features the new Broadcom® SAS4116W series chip and offers increased capabilities compared with its predecessor, PERC 11. These capabilities include support for 24 gigabits per second (Gb/s) Serial-Attached SCSI (SAS) drives, increased cache memory speed, and a single front controller that supports both NVM Express® (NVMe®) and SAS. Table 2 shows the generational improvement between PERC 11 and PERC 12.[16]

Table 2. IOPS/bandwidth comparison between the Dell™ PERC 11 and PERC 12 controllers[16]

PowerEdge XR4000 Series Servers: Inside the Box

At the density end of the spectrum, we have the PowerEdge XR4000 series servers. These are Dell Technologies’ shortest-depth servers to date: modular 2U servers with a sled-based design for maximum flexibility. They come in two new 14”-depth form factors called “rackable” and “stackable,” and they offer rack or wall mounting options.

PowerEdge XR4000 series servers also feature an optional nano-server-sled that can serve as an in-chassis witness node for the vSAN cluster. This replaces the need for a virtual witness node and establishes a native, self-contained, two-node vSAN cluster—even in the 14” x 12” stackable configuration. You can choose between two and four nodes in a chassis while still using vSAN because of the in-chassis witness node. This makes virtual machine (VM) deployments possible where latency or bandwidth constraints previously prevented doing so. PowerEdge XR4000 series servers offer high-performance edge computing in a form factor small enough to fit in a backpack.[17] This form factor and size also lead to high computing density, which is the measurement of the amount of information that can be stored and processed in a given area to determine efficient use of space.

When Rugged Matters as Much as Performance

Our research concludes that the Dell PowerEdge XR family of servers is a great option for organizations looking for reliable, high-performing servers in ruggedized, short-depth form factors designed specifically for edge computing. Among the range of PowerEdge XR family servers examined by Prowess, the PowerEdge XR7620 server represents a solid upgrade from the previous generation, and is the performance-focused offering in the new PowerEdge XR family of servers. PowerEdge XR4000 series servers are the high-density, performant option when durability and space constraints are primary concerns.

Learn More

For more information on the Dell PowerEdge XR7620 server, see “Dell’s PowerEdge XR7620 for Telecom/Edge Compute” and the PowerEdge XR7620 server product page.

For more information on the new offerings in the PowerEdge XR family, see “Dell PowerEdge Gets Edgy with XR8000, XR7620, and XR5610 Servers.”

Methodology

Table 3 shows the configuration details for the comparison between the PowerEdge XE2420 server and the PowerEdge XR7620 server.

Table 3. Dell™ PowerEdge™ XR7620 server versus PowerEdge XE2420 server comparison

| Server | Dell™ PowerEdge™ XE2420 | Dell™ PowerEdge™ XR7620 |
| --- | --- | --- |
| Processor | 2nd Gen Intel® Xeon® Scalable processors | 4th Gen Intel® Xeon® Scalable processors |
| Cores per Processor | Up to 24 | Up to 32 |
| Number of Processors Supported | 2 | 2 |
| Memory | 16 x DDR4 RDIMM/LR-DIMM (12 DIMMs are balanced), up to 2,933 megatransfers per second (MT/s) | 16 x DDR5 DIMM slots, supports RDIMM 1 TB max, speeds up to 4,800 MT/s; supports registered error correction code (ECC) DDR5 DIMMs only |
| Drive Bays | Up to 4 x 2.5-inch SAS/SATA/NVMe® solid-state drives (SSDs); up to 6 Enterprise and Data Center SSD Form Factor (EDSFF) drives | Front bays: Up to 4 x 2.5-inch SAS/SATA/NVMe® SSDs, 61.44 TB max; up to 8 x E3.S NVMe® direct drives, 51.2 TB max |
| Dimensions | 2 x 2.5-inches or 4 x 2.5 with seven possible configurations | Rear-accessed configuration: / Front-accessed configuration: |
| Weight | 17.36 kg (38.19 pounds) to 18.93 kg (41.65 pounds), depending on configuration | Max 21.16 kg (46.64 pounds) |
| Form Factor | 2U rack | 2U rack |

Table 4 shows the configuration details for the VMmark comparison between the two PowerEdge XR family servers.

Table 4. VMmark® comparison between the Dell™ PowerEdge™ XR7620 server and the PowerEdge XR4510c server

| VMmark® 3.1.1 Results | | |
| --- | --- | --- |
| Summary | | |
| Category | Dell™ PowerEdge™ XR4510c[23] | Dell™ PowerEdge™ XR7620[24] |
| VMmark® 3 Average Watts | 1,085.50 | 1,878.63 |
| VMmark® 3 Applications Score | 4.93 | 14.08 |
| VMmark® 3 Infrastructure Score | 2.15 | 1.06 |
| VMmark® 3 Score | 4.37 | 11.48 |
| VMmark® 3 PPKW | 4.0285 at 4 tiles | 6.1093 at 14 tiles |
| Configuration | | |
| Server | Dell™ PowerEdge™ XR4510c[23] | Dell™ PowerEdge™ XR7620[24] |
| Nodes | 4 physical (with local hardware-based witness node) | 2 (with VMware vSAN™ witness appliance) |
| Storage | VMware vSAN™ 8.0, all-flash | VMware vSAN™ 8.0, all-flash |
| Hypervisor | VMware ESXi™ 8.0 GA, build 20513097 | VMware ESXi™ 8.0b, build 21203435 |
| Data Center Management Software | VMware vCenter Server® 8.0 GA, build 20519528 | VMware vCenter Server® 8.0c, build 21457384 |
| Number of Servers in System Under Test | 4 | 2 |
| Processor | Intel® Xeon® D-2776NT processor | Intel® Xeon® Gold 6448Y processor |
| Processor Speed (GHz)/Intel® Turbo Boost Technology Speed (GHz) | 2.10 GHz/3.20 GHz | 2.10 GHz/4.10 GHz |
| Total Sockets/Cores/Threads in Test | 4 sockets/64 cores/128 threads | 4 sockets/128 cores/256 threads |
| Memory Size (in GB, Number of DIMMs) | 512 GB, 4 | 2,048 GB, 16 |
| Memory Type and Speed | 128 GB 4Rx4 DDR4 3,200 MT/s LRDIMM | 128 GB DDR5 4Rx4 4,800 MT/s RDIMMs |

The analysis in this document was done by Prowess Consulting and commissioned by Dell Technologies.

Results have been simulated and are provided for informational purposes only. Any difference in system hardware or software design or configuration may affect actual performance.

Prowess Consulting and the Prowess logo are trademarks of Prowess Consulting, LLC.

Copyright © 2023 Prowess Consulting, LLC. All rights reserved.

Other trademarks are the property of their respective owners.

[1] Dell. “Dell’s PowerEdge XR7620 for Telecom/Edge Compute.” May 2023. https://infohub.delltechnologies.com/p/dell-s-poweredge-xr7620-for-telecom-edge-compute/.

[2] Cisco. “Cisco Firepower 4112, 4115, 4125, and 4145 Hardware Installation Guide.” June 2023. www.cisco.com/c/en/us/td/docs/security/firepower/41x5/hw/guide/install-41x5.html.

[3] Dell. “Computing on the Edge: NEBS Criteria Levels.” November 2022. https://infohub.delltechnologies.com/p/computing-on-the-edge-nebs-criteria-levels/.

[4] MIL-STD-810. “Environmental Engineering Considerations and Laboratory Tests.” May 2022. https://quicksearch.dla.mil/qsDocDetails.aspx?ident_number=35978.

[5] US Department of Defense. “Environmental Engineering Considerations And Laboratory Tests.” Revision G Change 1 (change incorporated). Figure 402-1. Life Cycle Environmental Profile Development Guide. April 2014. https://quicksearch.dla.mil/qsDocDetails.aspx?ident_number=35978 [then select the "Revision G Change 1 (change incorporated)" document].

[6] Intel. Performance Index (4th Gen Intel Xeon Scalable Processors, G1). Accessed May 2023. www.intel.com/PerformanceIndex.

[7] Intel. Performance Index (4th Gen Intel Xeon Scalable Processors, N18). Accessed May 2023. www.intel.com/PerformanceIndex.

[8] Intel. Performance Index (4th Gen Intel Xeon Scalable Processors, N16). Accessed May 2023. www.intel.com/PerformanceIndex.

[9] Data provided by Dell Technologies in May 2023.

[10] VMware. “VMmark.” Accessed June 2023. www.vmware.com/products/vmmark.html.

[11] Dell. "XR4000w Multi-Node Edge Server (Intel)." Accessed July 2023. https://www.dell.com/en-us/shop/ipovw/poweredge-xr4000w.

[12] VMware. “What Is VMware vSAN?” Accessed July 2023. www.vmware.com/products/vsan.html.

[13] Luxoft. “Achieving the benefits of SDVs using virtualization.” May 2023. www.luxoft.com/blog/virtualization-revolutionizing-software-defined-vehicles-development.

[14] Dell. “Dell PowerEdge XR4000 Specification Sheet.” Accessed June 2023. www.dell.com/en-us/dt/oem/servers/rugged-servers.htm#pdf-overlay=//www.delltechnologies.com/asset/en-us/solutions/oem-solutions/technical-support/dell-oem-poweredge-xr4000-spec-sheet.pdf.

[15] Intel. “Intel® Accelerator Engines.” Accessed June 2023. www.intel.com/content/www/us/en/products/docs/accelerator-engines/overview.html.

[16] Dell. “Dell PowerEdge RAID Controller 12.” May 2023. https://infohub.delltechnologies.com/p/dell-poweredge-raid-controller-12/.

[17] Dell. “VMmark on XR4000.” January 2023. https://infohub.delltechnologies.com/p/vmmark-on-xr4000/.

[18] Intel. “Intel Xeon D2776NT Processor.” Accessed June 2023. https://ark.intel.com/content/www/us/en/ark/products/226239/intel-xeon-d2776nt-processor-25m-cache-up-to-3-20-ghz.html.

[19] Dell. “Dell EMC PowerEdge XE2420 Technical Specifications.” Accessed June 2023. https://dl.dell.com/topicspdf/poweredge-xe2420_reference-guide_en-us.pdf.

[20] Dell. “PowerEdge XE2420 Specification Sheet.” Accessed June 2023. https://i.dell.com/sites/csdocuments/Product_Docs/en/PowerEdge-XE2420-Spec-Sheet.pdf.

[21] Intel. “Intel Xeon Gold 6448Y Processor.” Accessed June 2023. https://ark.intel.com/content/www/us/en/ark/products/232384/intel-xeon-gold-6448y-processor-60m-cache-2-10-ghz.html.

[22] Dell. “PowerEdge XR7620 Specification Sheet.” Accessed June 2023. www.delltechnologies.com/asset/en-us/products/servers/technical-support/poweredge-xr7620-spec-sheet.pdf.

[23] VMmark. “VMmark® 3.1.1 Results, November 29, 2022.” www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/vmmark/2022-11-29-Dell-PowerEdge-XR4510c-serverPPKW.pdf.

[24] VMmark. “VMmark® 3.1.1 Results, May 16, 2023.” www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/vmmark/2023-05-16-Dell-PowerEdge-XR7620.pdf.