Llama-2 on Dell PowerEdge XE9640 with Intel Data Center GPU Max 1550

Fri, 12 Jan 2024 18:04:24 -0000

|Read Time: 0 minutes

Part two is now available: https://infohub.delltechnologies.com/p/expanding-gpu-choice-with-intel-data-center-gpu-max-series/

|  |  |  |

| MORE CHOICE IN THE GPU MARKET

We are delighted to showcase our collaboration with Intel® to introduce expanded options within the GPU market with the Intel® Data Center GPU Max Series, now accessible via Dell™ PowerEdge™ XE9640.

The Intel® Data Center GPU Max Series is Intel® highest performing GPU with more than 100 billion transistors, up to 128 Xe cores, and up to 128 GB of high bandwidth memory. Intel® Data Center GPU Max Series pairs seamlessly with Dell™ PowerEdge™ XE9640, Dell™ first liquid-cooled 4-way GPU platform in a 2u server.

Dell™ recently announced partnerships with both Meta and Hugging Face to enable seamless support for enterprises to select, deploy, and fine-tune AI models for industry specific use cases anchored by Llama 2 7B Chat from Meta.

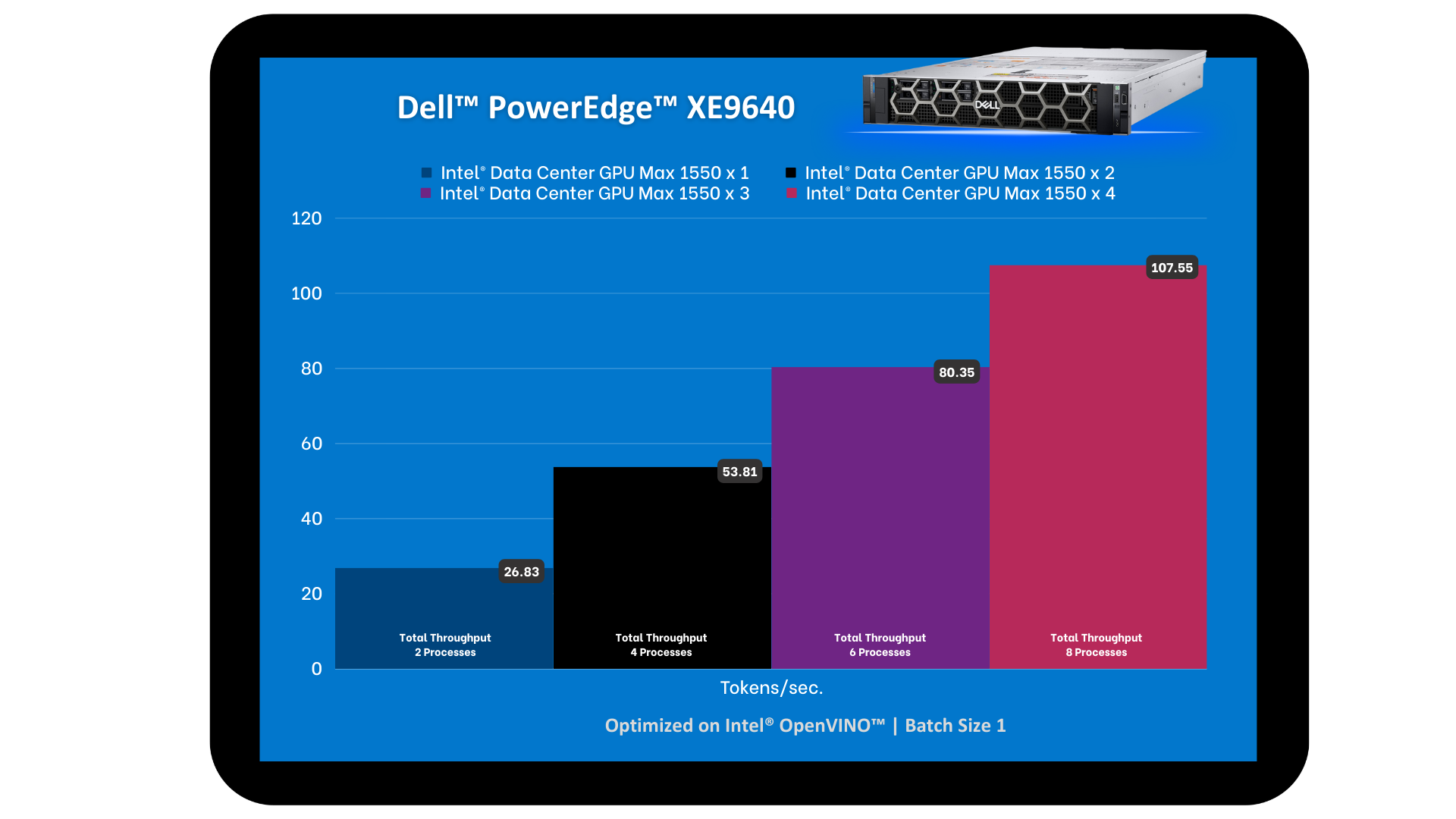

We put Dell™ PowerEdge™ XE9640 and Intel® Data Center GPU Max Series to the test with the Llama-2 7B Chat model. In doing so, we tested the tokens per second and the number of concurrent users that can be supported while scaling up to four GPUs. Dell™ PowerEdge™ XE9640 and Intel® Data Center GPU Max Series showcased a strong scalability and met target end user latency goals.

“Scalers AI™ ran eight concurrent processes of Llama-2 7B Chat with Dell™ PowerEdge™ XE9640 and Intel® Data Center GPU Max Series for a total throughput of >107 tokens per second, achieving our end user token latency target of 100 milliseconds”

Chetan Gadgil, CTO at Scalers AI

Chetan Gadgil, CTO at Scalers AI

| LLAMA-2 7B CHAT MODEL

Large Language Models (LLMs) are powerful deep learning architectures that have been pre-trained on large datasets such as OpenAI ChatGPT. We have chosen to test Llama-2 7B Chat because it is an open source model that can be leveraged for commercial use cases, such as coding, functional tasks, and even creative tasks.

For inference testing in Large Language Models such as Llama-2 7B Chat, GPUs are incredibly useful due to their parallel processing architecture which can handle Llama-2's massive parameter sets. To efficiently handle expanding datasets, powerful GPUs such as Intel® Data Center GPU Max 1550 are critical.

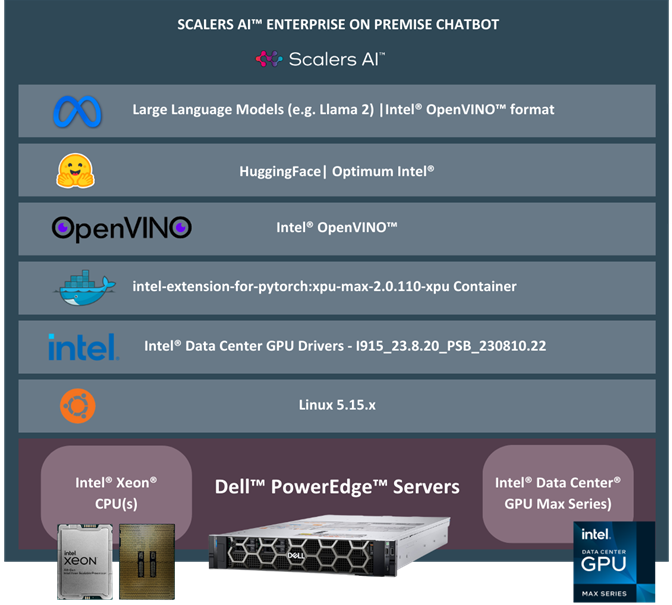

| ARCHITECTURE

We started our testing environment with Dell™ PowerEdge™ XE9640 with four Intel® Data Center GPU Max 1550, running on Ubuntu 22.04.

We used Hugging Face Optimum, an extension of Transformers that provides a set of performance optimization tools to train and run models on targeted hardware, ensuring maximum efficiency. For Intel® Data Center GPU Max 1550, we selected the Optimum-Intel package. Optimum-intel integrates libraries provided by Intel® to accelerate end-to-end pipelines on Intel®. With Optimum-intel you can optimize your model to Intel® OpenVINO™ IR format and attain enhanced performance using the Intel® OpenVINO™ runtime.

Dell™ PowerEdge™ XE9640 Intel® Data Center GPU Max 1550

Source: https://www.dell.com/ Source: https://www.intel.com

| SYSTEM SET-UP SETUP

1. Installation of Drivers

To install drivers for the Intel® Data Center GPU Max Series, we followed the steps here.

2. Verification of Installation

To verify the installation of the drivers, we followed the steps here.

3. Installation of Docker

To install Docker on Ubuntu 22.04.3., we followed the steps here.

| RUNNING THE LLAMA-2 7B CHAT MODEL

1. Set up a Docker container for all our dependencies to ensure seamless deployment and straightforward replication:

sudo docker run --rm -it --privileged --device=/dev/dri --ipc=host intel/intel-extension-for-pytorch:xpu-max-2.0.110-xpu bash

2. To install the Python dependencies, our Llama-2 7B Chat model requires:

pip install openvino==2023.2.0

pip install transformers==4.33.1

pip install optimum-intel==1.11.0

pip install onnx==1.15.0

3. Access the Llama-2 7B Chat model through HuggingFace:

huggingface-cli login

4. Convert the Llama-2 7B Chat HuggingFace model into Intel® OpenVINO™ IR format using Intel® Optimum to export it:

from optimum.intel import OVModelForCausalLM

from transformers import AutoTokenizer

model_id = "meta-llama/Llama-2-7b-chat-hf"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = OVModelForCausalLM.from_pretrained(model_id, export=True) model.save_pretrained("llama-2-7b-chat-ov")

tokenizer.save_pretrained("llama-2-7b-chat-ov")

5. Run the code snippet below to generate the text with the Llama-2 7B Chat model:

import time

from optimum.intel import OVModelForCausalLM

from transformers import AutoTokenizer, pipeline

model_name = "llama-2-7b-chat-ov"

input_text = "What are the key features of Intel's data center GPUs?"

max_new_tokens = 100

# Initialize and load tokenizer, model

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = OVModelForCausalLM.from_pretrained(model_name, ov_config= {"INFERENCE_PRECISION_HINT":"f32"}, compile=False)

model.to("GPU")

model.compile()

# Initialize HF pipeline

text_generator = pipeline( "text-generation", model=model, tokenizer=tokenizer, return_tensors=True, )

# Inference

start_time = time.time()

output = text_generator( input_text, max_new_tokens=max_new_tokens ) _ = tokenizer.decode(output[0]["generated_token_ids"])

end_time = time.time()

# Calculate number of tokens generated

num_tokens = len(output[0]["generated_token_ids"])

inference_time = end_time - start_time

token_per_sec = num_tokens / inference_time

print(f"Inference time: {inference_time} sec")

print(f"Token per sec: {token_per_sec}")

| ENTER PROMPT

What are the key features of Intel® Data Center GPUs?

Output

Intel® Data Center GPUs are designed to provide high levels of performance and power efficiency for a wide range of applications including machine learning, artificial intelligence and high performance computing.

Some of the key features of Intel® Data Center GPUs include:

1. High performance Intel® Data Center GPUs are designed to provide high levels of performance for demanding workloads, such as deep learning and scientific simulations.

2. Power efficiency.

| PERFORMANCE RESULTS & ANALYSIS

Figure: Comparing GPU vs CPU Performance

During the evaluation of the GPU configurations performance, we observed that a machine with a single GPU achieved a throughput of ~13 tokens per second across two concurrent processes. With two GPUs, we noted ~13 tokens per second across four concurrent processes for a total throughput of ~54 tokens per second. With four GPUs, we observed a total throughput of ~107 tokens per second supporting eight processes concurrently. The latency per process remains well below Scalers AI™ target of 100 milliseconds, despite an increase in the number of concurrent processes.

As latency represents the time a user must wait before task completion, it is a critical metric for hardware selection on large language models. This evaluation underscores the significant impact of GPU parallelism on both throughput and user response time. The scalability from one GPU to four GPUs reflects a significant enhancement in computational power, enabling more concurrent processes at nearly the same latency.

Our results demonstrate that Dell™ PowerEdge™ XE9640 with four Intel® Data Center GPU Max 1550 is up to the task of running Llama-2 7B Chat and meeting end user experience targets.

Number of GPUS | Throughput (Tokens/second) | Number of processes | Token Latency (ms) |

1 | 26.83 | 2 | 74.55 |

2 | 53.81 | 4 | 74.34 |

3 | 80.35 | 6 | 74.68 |

4 | 107.55 | 8 | 74.38 |

Table: Results after taking different number of GPUs

*Performance varies by use case, model, application, hardware & software configurations, the quality of the resolution of the input data, and other factors. This performance testing is intended for informational purposes and not intended to be a guarantee of actual performance of an AI application.

| ABOUT SCALERS AI™

Scalers AI™ specializes in creating end-to-end artificial intelligence (AI) solutions to fast track industry transformation across a wide range of industries, including retail, smart cities, manufacturing, insurance, finance, legal and healthcare. Scalers AI™ industry offerings include custom large language models and multimodal platforms supporting voice, image, and text. As a full stack AI solutions company with solutions ranging from the cloud to the edge, our customers often need versatile common off the shelf (COTS) hardware that works well across a range of workloads.

| Dell™ PowerEdge™ XE9640 Key specifications

MACHINE | Dell™ PowerEdge™ XE9640 |

Operating system | Ubuntu 22.04.3 LTS |

CPU | Intel® Xeon® Platinum 8468 |

MEMORY | 512Gi |

GPU | Intel® Data Center GPU Max 1550 |

GPU COUNT | 4 |

SOFTWARE STACK | Intel® OpenVINO® - 2023.2.0 transformers - 4.33.1 optimum-intel - 1.11.0" xpu-smi - 1.2.22.20231025 |

| HUGGING FACE OPTIMUM

Learn more: https://huggingface.co

| TEST METHODOLOGY

The Llama-2 7B Chat FP32 model is exported into the Intel® OpenVINO™ format and then tested for text generation (inference) using Hugging Face Optimum. Hugging Face Optimum is an extension of Hugging Face transformers and Diffusers that provides tools to export and run optimized models on various ecosystems including Intel® OpenVINO™.

For performance tests, 20 iterations were executed for each inference scenario out of which initial five iterations were considered as warm-up and were discarded for calculating Inference time (in seconds) and tokens per second. The time collected includes encode-decode time using tokenizer and LLM inference time.

Read part two: https://infohub.delltechnologies.com/p/expanding-gpu-choice-with-intel-data-center-gpu-max-series/