HPC Application Performance on Dell PowerEdge C6620 with INTEL 8480+ SPR

Thu, 09 Nov 2023 15:46:41 -0000


Overview

With a robust HPC and AI Innovation Lab at the helm, Dell continues to ensure that PowerEdge servers are cutting-edge pioneers in the ever-evolving world of HPC. The latest stride in this journey comes in the form of the Intel Sapphire Rapids processor, a powerhouse of computational prowess. When combined with the cutting-edge infrastructure of the Dell PowerEdge 16th generation servers, a new era of performance and efficiency dawns upon the HPC landscape. This blog post provides comprehensive benchmark assessments spanning various verticals within high-performance computing.

Dell Technologies’ goal is to help accelerate time to value for customers and to provide benchmark performance and scaling studies that help them plan their environments. By using Dell’s solutions, customers spend less time testing different combinations of CPU, memory, and interconnect, or choosing the CPU with the sweet spot for performance. Additionally, customers do not have to spend time deciding which BIOS features to tweak for best performance and scaling. Dell wants to accelerate the set-up, deployment, and tuning of HPC clusters so that customers get real value while running their applications and solving complex problems (such as weather modeling).

Testbed Configuration

This study conducted benchmarking on high-performance computing applications using Dell PowerEdge 16th generation servers featuring Intel Sapphire Rapids processors.

Benchmark Hardware and Software Configuration

Table 1. Test bed system configuration used for this benchmark study

| Component | Configuration |
|---|---|
| Platform | Dell PowerEdge C6620 |
| Processor | Intel Sapphire Rapids 8480+ |
| Cores/Socket | 56 (112 total) |
| Base Frequency | 2.0 GHz |
| Max Turbo Frequency | 3.80 GHz |
| TDP | 350 W |
| L3 Cache | 105 MB |
| Memory | 512 GB DDR5 4800 MT/s |
| Interconnect | NVIDIA Mellanox ConnectX-7 NDR 200 |
| Operating System | Red Hat Enterprise Linux 8.6 |
| Linux Kernel | 4.18.0-372.32.1 |
| BIOS | 1.0.1 |
| OFED | 5.6.2.0.9 |
| System Profile | Performance Optimized |
| Compiler | Intel oneAPI 2023.0.0 (Compiler 2023.0.0) |
| MPI | Intel MPI 2021.8.0 |
| Turbo Boost | ON |

| Application | Vertical Domain | Benchmark Datasets |
|---|---|---|
| OpenFOAM | Manufacturing - Computational Fluid Dynamics (CFD) | Motorbike 50 M, 34 M, and 20 M cell meshes |
| Weather Research and Forecasting (WRF) | Weather and Environment | CONUS v4.4 |
| Large-scale Atomic/Molecular Massively Parallel Simulator (LAMMPS) | Molecular Dynamics | Rhodo, EAM, Stillinger-Weber, Tersoff, HECBioSim, and AIREBO |
| GROMACS | Life Sciences – Molecular Dynamics | HECBioSim Benchmarks – 3M Atoms, Water, and PRACE Lignocellulose |
| CP2K | Life Sciences | H2O-DFT-LS-NREP-4,6 and H2O-64-RI-MP2 |

Performance Scalability for HPC Application Domain

Vertical – Manufacturing | Application - OPENFOAM

OpenFOAM is an open-source computational fluid dynamics (CFD) software package renowned for its versatility in simulating fluid flows, turbulence, and heat transfer. It offers a robust framework for engineers and scientists to model complex fluid dynamics problems and conduct simulations with customizable features. This study used OpenFOAM version 9, compiled with the Intel oneAPI 2023.0.0 compilers and Intel MPI 2021.8.0. For successful compilation and optimization with the Intel compilers, additional flags such as '-O3 -xSAPPHIRERAPIDS -m64 -fPIC' were added.

The motorbike tutorial case, from the simpleFoam solver category, was used to evaluate the performance of the OpenFOAM package on Intel 8480+ processors. Three grids of 20 M, 34 M, and 50 M cells were generated using the blockMesh and snappyHexMesh utilities of OpenFOAM. Each run used all cores (112 cores per node), and scalability tests were run from a single node to sixteen nodes for all three grids. The execution time of the steady-state simpleFoam solver was recorded as the performance metric.

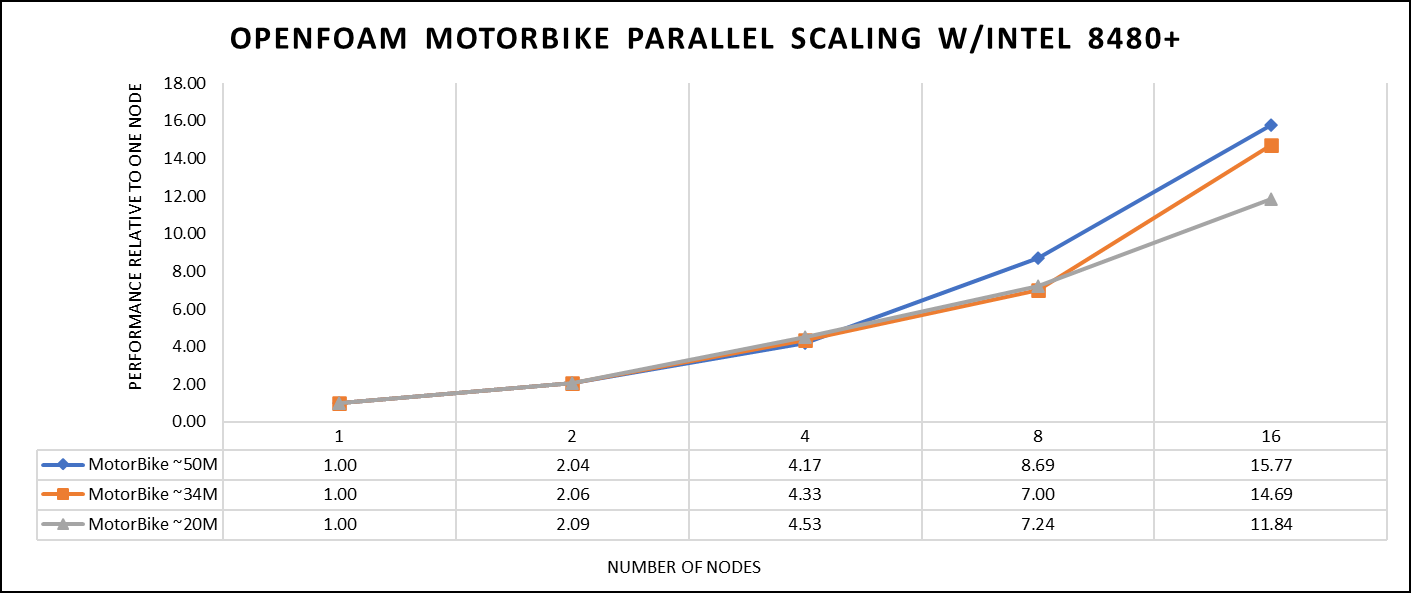

The figure below shows the application performance for all the datasets:

Figure 1. The scaling performance of the OpenFOAM Motorbike dataset using the Intel 8480+ processor, with a focus on performance compared to a single node.

The results are non-dimensionalized with the single-node result, with the scalability depicted in Figure 1. The Intel-compiled binaries of OpenFOAM show linear scaling from a single node to sixteen nodes on 8480+ processors for the largest dataset (50 M cells). For the 20 M and 34 M cell datasets, scaling was linear up to eight nodes and dropped off from eight to sixteen nodes.
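To make the normalization concrete, here is a minimal sketch of how solver times could be turned into speedup and parallel efficiency relative to a single node. The function name and the timings are placeholders invented for illustration; they are not measurements from this study.

```python
def relative_speedup(times_by_nodes):
    """Return {nodes: (speedup, efficiency)} relative to the single-node time.

    times_by_nodes maps a node count to a solver execution time in seconds
    and must contain an entry for 1 node.
    """
    base = times_by_nodes[1]
    result = {}
    for nodes, seconds in sorted(times_by_nodes.items()):
        speedup = base / seconds          # > 1 means faster than one node
        result[nodes] = (speedup, speedup / nodes)  # efficiency 1.0 = linear
    return result


# Placeholder timings in seconds, invented for illustration only.
example_times = {1: 1600.0, 2: 800.0, 4: 400.0, 8: 200.0, 16: 125.0}

for nodes, (speedup, efficiency) in relative_speedup(example_times).items():
    print(f"{nodes:>2} nodes: speedup {speedup:5.2f}, efficiency {efficiency:.0%}")
```

An efficiency close to 100% corresponds to the linear scaling described above; a drop at higher node counts corresponds to the reduced scalability seen for the smaller meshes.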

Smaller datasets can achieve satisfactory results with fewer processors and nodes. Increasing the node count, and therefore the processor count, increases inter-processor communication relative to the solver's computation time, which can extend the overall runtime. Consequently, higher node counts are more beneficial for larger datasets in OpenFOAM simulations.
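One way to see why the smaller meshes stop scaling earlier is to look at how many cells each core is left with as nodes are added. The sketch below is simple arithmetic on the mesh sizes and the 112 cores per node used in this study; the helper name is hypothetical, and an even decomposition across cores is assumed.

```python
CORES_PER_NODE = 112  # from the testbed table: 2 sockets x 56 cores


def cells_per_core(total_cells, nodes, cores_per_node=CORES_PER_NODE):
    """Average number of mesh cells per core, assuming an even decomposition."""
    return total_cells / (nodes * cores_per_node)


for mesh in (20_000_000, 34_000_000, 50_000_000):
    row = ", ".join(
        f"{n} nodes: {cells_per_core(mesh, n):,.0f}" for n in (1, 2, 4, 8, 16)
    )
    print(f"{mesh // 1_000_000} M cells -> {row}")
```

The fewer cells each core owns, the larger the share of time spent exchanging data with neighboring subdomains rather than computing.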

Vertical – Weather and Environment | Application - WRF

The Weather Research and Forecasting model (WRF) is at the forefront of meteorological and atmospheric research, with its latest version being a testament to advancements in high-performance computing (HPC). WRF enables scientists and meteorologists to simulate and forecast complex weather patterns with unparalleled precision. This study used WRF version 4.5, compiled with the Intel oneAPI 2023.0.0 compilers and Intel MPI 2021.8.0. For successful compilation and optimization with the Intel compilers, additional flags such as '-O3 -qopt-zmm-usage=high -xSAPPHIRERAPIDS -fpic' were used.

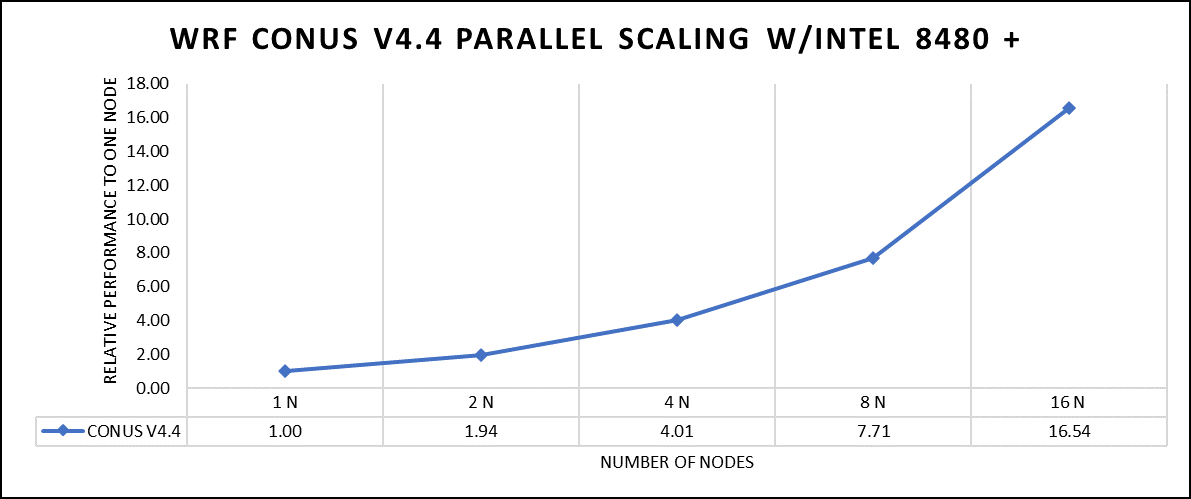

The dataset used in this study is CONUS v4.4, meaning the model's grid, parameters, and input data are set up to focus on weather conditions within the continental United States. This configuration is particularly useful for researchers, meteorologists, and government agencies who need high-resolution weather forecasts and simulations tailored to this specific geographic area. The configuration details, such as grid resolution, atmospheric physics schemes, and input data sources, can vary depending on the specific version of WRF and the goals of the modeling project. This study predominantly adhered to the default input configuration, making minimal alterations or adjustments to the source code or input file. Each run used all cores (112 cores per node). The scalability tests were run from a single node to sixteen nodes, and the performance metric, in seconds, was recorded.

Figure 2. The scaling performance of the WRF CONUS dataset using the Intel 8480+ processor, with a focus on performance compared to a single node.

The Intel-compiled binaries of WRF show linear scaling from a single node to sixteen nodes on 8480+ processors for the new CONUS v4.4 dataset. For the best performance with WRF, the impact of the tile size, the number of processes, and the number of threads per process should be carefully considered. Given that memory and DRAM bandwidth constrain the application, the team opted for the latest DDR5 4800 MT/s DRAM for test evaluations. Additionally, it is crucial to consider the BIOS settings, particularly Sub-NUMA configurations, as these settings can significantly influence the performance of memory-bound applications, potentially leading to improvements ranging from one to five percent.
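When exploring processes and threads per process, a useful starting point is the list of combinations that exactly fill a node. The following is a minimal sketch, assuming one software thread per physical core and the 112 cores per node used here; the function name is hypothetical, and which layout performs best for WRF still has to be measured.

```python
def hybrid_layouts(cores_per_node=112):
    """List (mpi_ranks_per_node, omp_threads_per_rank) pairs that use every core."""
    return [
        (cores_per_node // threads, threads)
        for threads in range(1, cores_per_node + 1)
        if cores_per_node % threads == 0
    ]


for ranks, threads in hybrid_layouts():
    print(f"{ranks:>3} MPI ranks/node x {threads:>3} OpenMP threads/rank")
```

Layouts whose ranks span more than one socket or NUMA domain are listed for completeness only; in practice they are usually the first candidates to rule out for memory-bound codes.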

For more detailed BIOS tuning recommendations, see the previous blog post on optimizing BIOS settings for optimal performance.

Vertical – Molecular Dynamics | Application – LAMMPS

LAMMPS, which stands for Large-scale Atomic/Molecular Massively Parallel Simulator, is a powerful tool for HPC. It is specifically designed to harness the immense computational capabilities of HPC clusters and supercomputers. LAMMPS allows researchers and scientists to conduct large-scale molecular dynamics simulations with remarkable efficiency and scalability. This study used the 15 June 2023 version of LAMMPS, compiled with the Intel oneAPI 2023.0.0 compilers and Intel MPI 2021.8.0. For successful compilation and optimization with the Intel compilers, additional flags such as '-O3 -qopt-zmm-usage=high -xSAPPHIRERAPIDS -fpic' were used.

The team opted for the default INTEL package, which offers optimized atom pair styles for vector instructions on Intel processors. The team also tried running some benchmarks which are not supported with the INTEL package to check the performance and scaling. The performance metric for this benchmark is nanoseconds per day where higher is considered better.
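For readers less familiar with the metric, the sketch below shows how nanoseconds per day follows from the step count, the timestep, and the wall-clock time. It assumes the timestep is expressed in femtoseconds (LAMMPS unit styles differ, so convert first if yours uses picoseconds); the function name and the example values are illustrative only.

```python
SECONDS_PER_DAY = 86_400
FS_PER_NS = 1_000_000


def ns_per_day(steps, timestep_fs, wall_seconds):
    """Simulated nanoseconds per day of wall-clock time (higher is better)."""
    simulated_ns = steps * timestep_fs / FS_PER_NS
    return simulated_ns * SECONDS_PER_DAY / wall_seconds


# Illustrative values only: 10,000 steps of 2 fs finished in 86.4 s.
print(f"{ns_per_day(10_000, 2.0, 86.4):.1f} ns/day")
```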

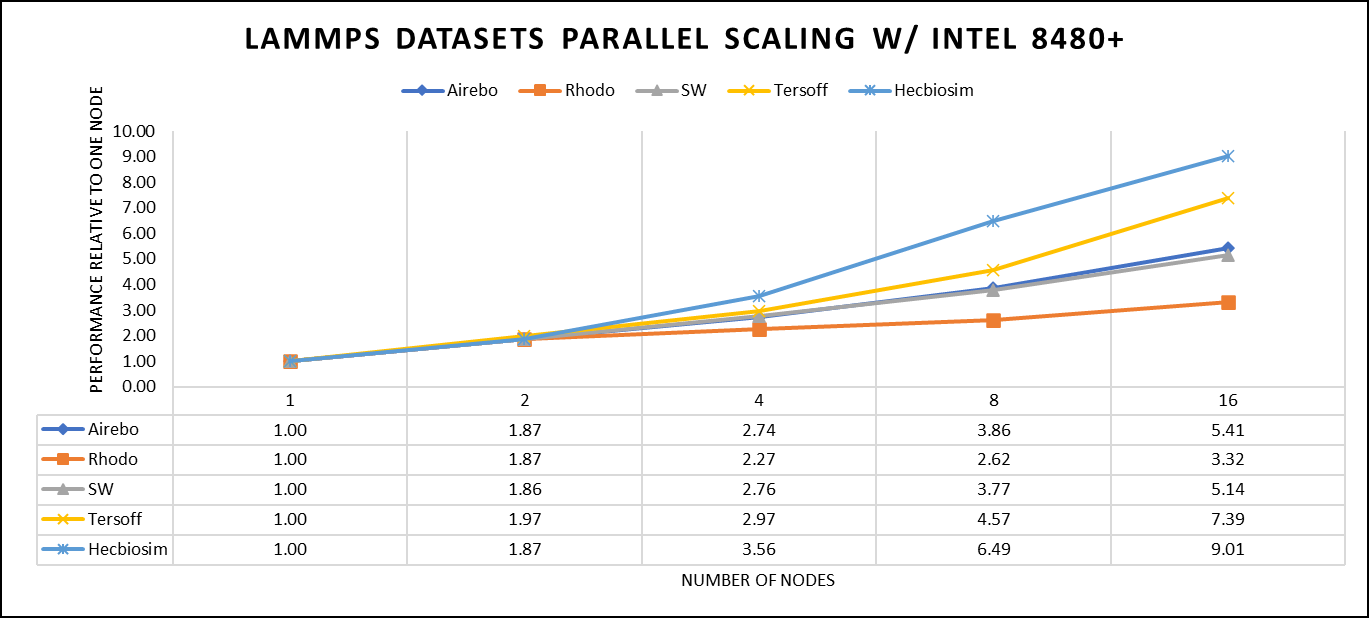

Two factors were considered when compiling data for comparison: the number of nodes and the core count. Below are the performance results observed on the 8480+ processor with 112 cores per node:

Figure 3. The scaling performance of the LAMMPS datasets using the Intel 8480+ processor, with a focus on performance compared to a single node.

Figure 3 shows the scaling of the different LAMMPS datasets. Scalability improves noticeably as the atom count and step size increase. Two of the datasets, EAM and HECBioSim, each contain over 3 million atoms, and both showed better scalability than the other datasets analyzed.

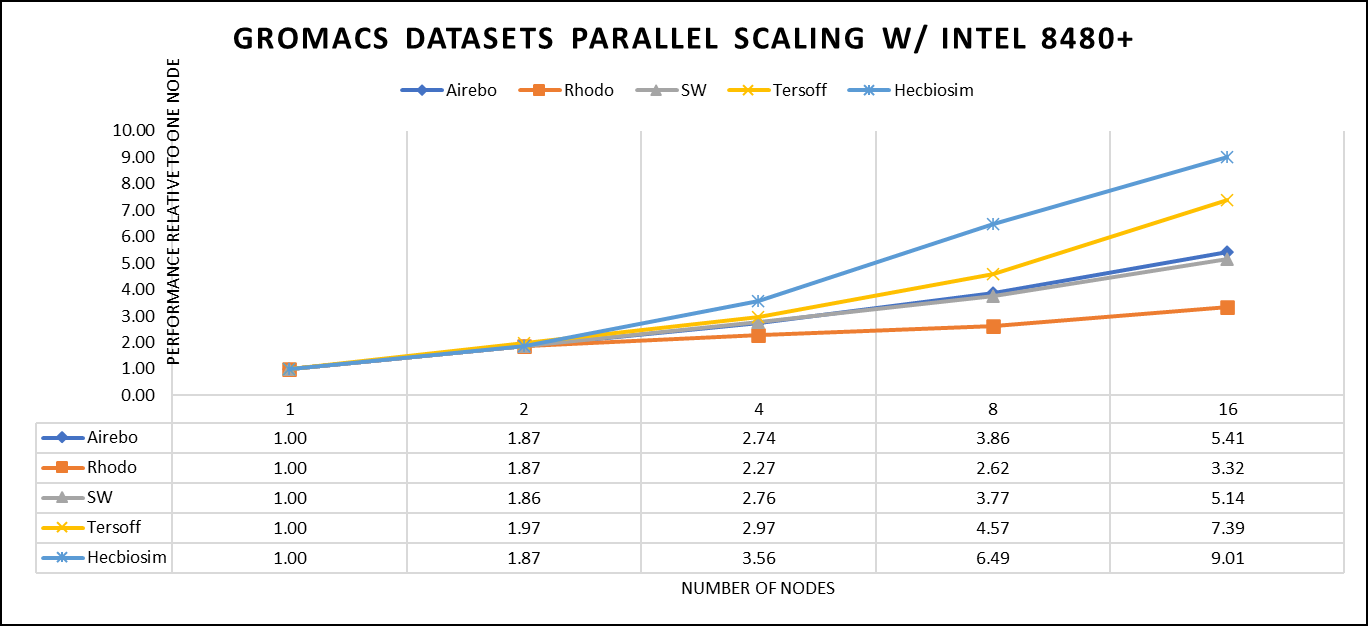

Vertical – Molecular Dynamics | Application - GROMACS

GROMACS, a high-performance molecular dynamics software, is a vital tool for HPC environments. Tailored for HPC clusters and supercomputers, GROMACS specializes in simulating the intricate movements and interactions of atoms and molecules. Researchers in diverse fields, including biochemistry and biophysics, rely on its efficiency and scalability to explore complex molecular processes. GROMACS is used for its ability to harness the immense computational power of HPC, allowing scientists to conduct intricate simulations that reveal critical insights into atomic-level behaviors, from biomolecules to chemical reactions and materials. This study used GROMACS version 2023.1, compiled with the Intel oneAPI 2023.0.0 compilers and Intel MPI 2021.8.0. For successful compilation and optimization with the Intel compilers, additional flags such as '-O3 -qopt-zmm-usage=high -xSAPPHIRERAPIDS -fpic' were used.

The team curated a range of datasets for the benchmarking assessments. First, the team included "water GMX_50 1536" and "water GMX_50 3072," which represent simulations involving water molecules. These simulations are pivotal for gaining insights into solvation, diffusion, and the water's behavior in diverse conditions. Next, the team incorporated "HECBIOSIM 14 K" and "HECBIOSIM 30 K" datasets, which were specifically chosen for their ability to investigate intricate systems and larger biomolecular structures. Lastly, the team included the "PRACE Lignocellulose" dataset, which aligns with the benchmarking objectives, particularly in the context of lignocellulose research. These datasets collectively offer a diverse array of scenarios for the benchmarking assessments.

The performance assessment was based on the measurement of nanoseconds per day (ns/day) for each dataset, providing valuable insights into the computational efficiency. Additionally, the team paid careful attention to optimizing the mdrun tuning parameters (for example, ntomp, dlb, tunepme, and nsteps) in every test run to ensure accurate and reliable results. The team examined the scalability by conducting tests spanning from a single node to sixteen nodes.
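As an illustration of where these tuning parameters appear, the sketch below assembles an mdrun command line from them. The `-ntomp`, `-dlb`, `-tunepme`/`-notunepme`, and `-nsteps` options are standard mdrun options; the binary name `gmx_mpi`, the input file name, and the values are assumptions for the example and not the settings used in this study.

```python
import shlex


def mdrun_command(tpr_file, ntomp, nsteps, dlb="auto", tunepme=True, binary="gmx_mpi"):
    """Build an mdrun argument list; the caller adds the MPI launcher in front."""
    if dlb not in ("auto", "no", "yes"):
        raise ValueError("dlb must be 'auto', 'no', or 'yes'")
    return [
        binary, "mdrun",
        "-s", tpr_file,                               # run input (tpr) file
        "-ntomp", str(ntomp),                         # OpenMP threads per MPI rank
        "-dlb", dlb,                                  # dynamic load balancing mode
        "-tunepme" if tunepme else "-notunepme",      # PME load tuning on or off
        "-nsteps", str(nsteps),                       # override the step count
    ]


# Hypothetical input file and values, for illustration only.
print(shlex.join(mdrun_command("benchmark.tpr", ntomp=1, nsteps=10000)))
```

Keeping the options in one helper makes it easier to sweep them systematically per dataset and node count rather than editing job scripts by hand.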

Figure 4. The scaling performance of the GROMACS datasets using the Intel 8480+ processor, with a focus on performance compared to a single node.

For ease of comparison across the various datasets, the relative performance has been combined into a single graph. However, each dataset behaves differently in terms of performance, as each uses different molecular topology input files (tpr) and configuration files.

The team achieved the expected linear performance scalability for GROMACS up to eight nodes. All cores in each server were used while running these benchmarks. The performance increases are close to linear across all the dataset types; however, there is a drop at higher node counts due to the smaller dataset size and the number of simulation iterations.

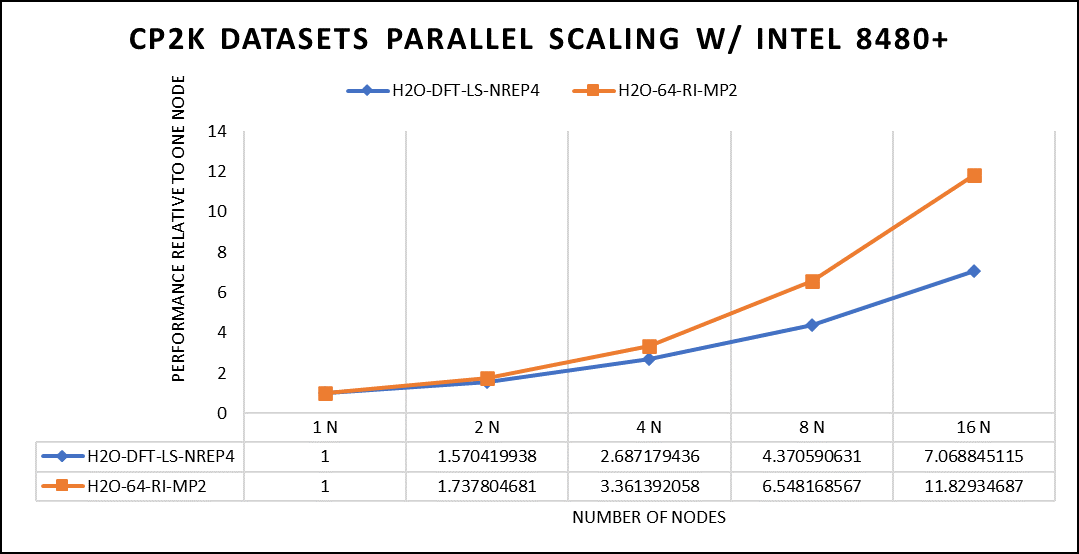

Vertical – Molecular Dynamics | Application – CP2K

CP2K is a versatile computational software package that covers a wide range of quantum chemistry and solid-state physics simulations, including molecular dynamics. It is not strictly limited to molecular dynamics but is instead a comprehensive tool for various computational chemistry and materials science tasks. While CP2K is widely used for molecular dynamics simulations, it can also perform tasks like electronic structure calculations, ab initio molecular dynamics, hybrid quantum mechanics/molecular mechanics (QM/MM) simulations, and more.

This study used CP2K version 2023.1, compiled with the Intel oneAPI 2023.0.0 compilers and Intel MPI 2021.8.0. For successful compilation and optimization with the Intel compilers, additional flags such as '-O3 -qopt-zmm-usage=high -xSAPPHIRERAPIDS -fpic' were used.

Focusing on high-performance computing (HPC), the team used specific datasets optimized for computational efficiency. The first dataset, "H2O-DFT-LS-NREP-4,6," was configured for HPC simulations and calculations, emphasizing the modeling of water (H2O) using Density Functional Theory (DFT). The appended "NREP-4,6" parameter settings were fine-tuned to ensure efficient HPC performance. The second dataset, "H2O-64-RI-MP2," was exclusively crafted for HPC applications and revolved around the examination of a system consisting of 64 water molecules (H2O). By employing the Resolution of Identity (RI) method with the Møller–Plesset perturbation theory of second order (MP2), this dataset demonstrated the significant computational capabilities of HPC for conducting advanced electronic structure calculations within a high-molecule-count environment. The team examined the scalability by conducting tests spanning from a single node to sixteen nodes.

Figure 5. The scaling performance of the CP2K datasets using the Intel 8480+ processor, with a focus on performance compared to a single node.

The datasets represent a single-point energy calculation employing linear-scaling Density Functional Theory (DFT). The system consists of 6144 atoms confined within a 39 Å^3 simulation box, which translates to 2048 water molecules. To adjust the computational workload, you can modify the NREP parameter within the input file.
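To get a feel for how NREP changes the workload, the sketch below scales the system size from the figures quoted above. It assumes that NREP replicates the base cell in each of the three dimensions, so the atom count grows with the cube of NREP; under that assumption, 6144 atoms at NREP=4 implies a base cell of 96 atoms (32 water molecules). The names are hypothetical, and the assumption should be checked against the benchmark input file.

```python
ATOMS_AT_NREP4 = 6144                       # quoted above for the NREP=4 case
ATOMS_PER_UNIT = ATOMS_AT_NREP4 // 4 ** 3   # 96 atoms, assuming cubic replication
ATOMS_PER_WATER = 3


def system_size(nrep):
    """Return (atoms, water_molecules) for a given NREP under the cubic assumption."""
    atoms = ATOMS_PER_UNIT * nrep ** 3
    return atoms, atoms // ATOMS_PER_WATER


for nrep in (4, 6):
    atoms, waters = system_size(nrep)
    print(f"NREP={nrep}: {atoms} atoms, {waters} water molecules")
```

Under this assumption, going from NREP4 to NREP6 makes the system roughly 3.4 times larger.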

Running NREP6 on a single node requires more than 512 GB of memory; if this requirement is not met, the run fails with a segmentation fault. These benchmarking efforts encompass configurations involving up to 16 computational nodes. Optimal performance is achieved when running NREP4 and NREP6 in hybrid mode, which combines Message Passing Interface (MPI) and Open Multi-Processing (OpenMP). This configuration exhibits the best scaling performance, particularly on four to eight nodes. However, scaling beyond eight nodes does not deliver a strictly linear performance improvement. Figure 5 depicts the outcomes when using pure MPI, using 112 cores with a single thread per core.

Conclusion

With equivalent core counts, the prior generation of Intel Xeon processors can match the performance of the Sapphire Rapids counterpart. However, achieving this level of performance necessitates doubling the number of nodes. Therefore, a single 350 W node equipped with the 8480+ processor can deliver performance comparable to two 500 W nodes with the 8358 processor. In addition to optimizing the BIOS settings as outlined in our Intel-focused blog, the team advises disabling Hyper-Threading specifically for the benchmarks discussed in this article. However, for different types of workloads, the team recommends conducting thorough testing and enabling Hyper-Threading if it proves beneficial. Furthermore, for this performance study, the team highly recommends using the Mellanox NDR 200 interconnect.
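As a rough worked example of the power comparison above, the sketch below divides the nominal power of the single 8480+ node by that of the two 8358 nodes. It uses only the wattage figures quoted in this conclusion, treats them as nominal values rather than measured power draw, and takes the "comparable performance" statement at face value; the function name is hypothetical.

```python
def nominal_power_ratio(new_nodes, new_watts, old_nodes, old_watts):
    """Ratio of total nominal power: new configuration / old configuration."""
    return (new_nodes * new_watts) / (old_nodes * old_watts)


# One 350 W node (8480+) versus two 500 W nodes (8358), as quoted above.
ratio = nominal_power_ratio(1, 350, 2, 500)
print(f"Nominal power ratio: {ratio:.0%} of the two-node configuration")
```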