Can CPUs Effectively Run AI Applications?

Fri, 03 Mar 2023 20:06:28 -0000


Because GPUs have inherent advantages in high-speed, large-scale matrix operations, developers have gravitated to them for AI training (developing the model) and inference (the model in execution).

With GPUs scarce because of the massive growth of AI applications, including recent advances in Stable Diffusion and large language models such as ChatGPT by OpenAI that have taken the world by storm, the question for many developers is:

Are CPUs up to the task of AI?

To answer the question, Dell Technologies and Scalers AI set up a Dell PowerEdge R760 server with 4th Gen Intel® Xeon® processors and integrated Intel® Deep Learning acceleration. Notably, we did not install a GPU on this server.

In this blog, Part One of a two-part series, we’ll put this latest Intel® Xeon® CPU, released this month by Intel®, to the test on AI inference. We’ll also run AI on video streams, one of the most common media for AI workloads, and pair the model with industry-specific application logic to showcase a real-world AI workload.

In Part Two, we’ll train a model using a technique called transfer learning. Most training is done on GPUs today, and transfer learning presents a great opportunity to leverage existing models while customizing them for targeted use cases.

The industry-specific use case

Scalers AI developed a smart city solution that uses artificial intelligence and computer vision to monitor traffic safety in real time. The solution identifies potential safety hazards, such as illegal lane changes on freeway on-ramps, reckless driving, and vehicle collisions, by analyzing video footage from cameras positioned at key locations.
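To make the idea of application logic layered on top of object detection concrete, here is a minimal sketch of one possible lane-change check. It is not the Scalers AI implementation. It assumes a tracker already supplies per-frame vehicle center points, and that the solid lane marking can be approximated by a straight line between two image points. The names `side_of_line` and `crossed_line` and all coordinates are hypothetical.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (x, y) in image pixels


def side_of_line(p: Point, a: Point, b: Point) -> float:
    """Signed cross product: positive on one side of line a->b, negative on the other."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def crossed_line(track: Sequence[Point], a: Point, b: Point) -> bool:
    """Return True if consecutive track points fall on opposite sides of line a->b.

    Assumption: the line is treated as infinite, so a real system would
    also restrict the check to the region where the marking is solid.
    """
    previous_side = None
    for point in track:
        side = side_of_line(point, a, b)
        if side == 0:
            continue  # exactly on the line; wait for the next point
        if previous_side is not None and (side > 0) != (previous_side > 0):
            return True
        previous_side = side
    return False


# Hypothetical lane marking: a vertical line at x = 15.
lane_a, lane_b = (15.0, 0.0), (15.0, 200.0)

changing_lanes = [(10.0, 100.0), (12.0, 80.0), (22.0, 60.0)]
staying_in_lane = [(10.0, 100.0), (11.0, 80.0), (10.5, 60.0)]

print(crossed_line(changing_lanes, lane_a, lane_b))
print(crossed_line(staying_in_lane, lane_a, lane_b))
```

A check like this runs once per tracked vehicle per frame on the CPU, alongside video decode and inference, which is why the full-application measurement matters as much as raw inference throughput.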

For comparison, we also set up the previous generation Dell PowerEdge R750 server and ran the AI inferencing object detection workload on both servers. What did we learn?

Dell PowerEdge R760 with 4th Gen Intel® Xeon® Processors and Intel® Deep Learning Boost delivered!

Let’s find out about the generational server comparison.

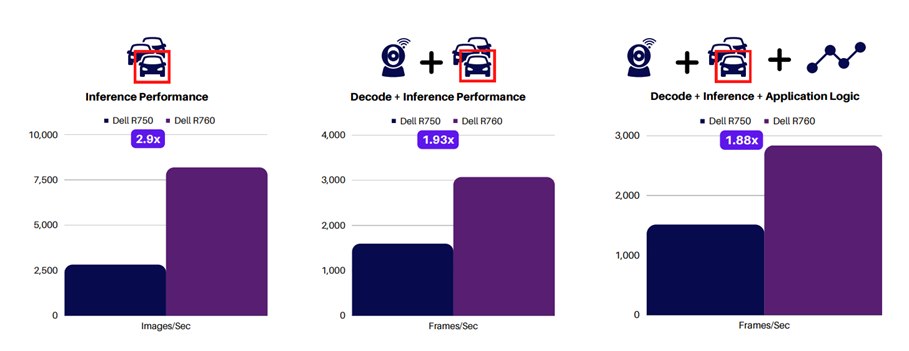

The following charts show the performance gain from the last gen to the current gen server. The graph on the left shows inference-only performance, while the middle graph adds video decode. Finally, the graph on the right shows the full application performance with the smart city solution application logic.

The performance claims are great. But what does this mean for my business?

The Dell PowerEdge R760 and Scalers AI smart city solution results show that, for a similar application, users can expect the Dell PowerEdge R760 server to perform real-time inferencing on up to 90 1080p video streams when deployed. The Dell PowerEdge R750 can handle up to 50 1080p video streams. Both results were achieved without a GPU. Although GPUs add AI computing capability, this study shows that they may not always be necessary, depending on your requirements, such as how many streams must be displayed concurrently.
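As a rough sizing aid, the stream counts above can be turned into a generational ratio and a server count for a given deployment. This is a back-of-the-envelope sketch: only the 90 and 50 stream figures come from the study, while the helper `servers_needed` and the 200-camera deployment are hypothetical, and a real deployment would need headroom and its own measurements.

```python
import math

# Stream counts reported in the study (1080p, real time, no GPU).
R760_STREAMS = 90
R750_STREAMS = 50


def servers_needed(total_streams: int, streams_per_server: int) -> int:
    """Hypothetical helper: whole servers required to cover total_streams."""
    if total_streams < 0 or streams_per_server <= 0:
        raise ValueError("stream counts must be non-negative and capacity positive")
    return math.ceil(total_streams / streams_per_server)


# Generational ratio in concurrent streams per server.
generational_ratio = R760_STREAMS / R750_STREAMS

# Illustrative deployment size, not taken from the study.
cameras = 200
r760_servers = servers_needed(cameras, R760_STREAMS)
r750_servers = servers_needed(cameras, R750_STREAMS)

print(f"R760 handles {generational_ratio:.1f}x the streams of R750")
print(f"{cameras} cameras: {r760_servers} x R760 or {r750_servers} x R750")
```

Under these assumptions, the newer server covers the same camera count with fewer machines, which is one way the per-server stream count feeds into cost.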

Given these results, Scalers AI confidently recommends using Dell PowerEdge R760 with 4th Gen Intel® Xeon® Processors and Intel® Deep Learning Boost for AI computer vision workloads, such as the Scalers AI Traffic Safety Solution using object detection, because it fulfills all application requirements.

Now that we have shown highly effective object detection on a CPU, what about a more complex, compute-intensive model such as segmentation?

Here we run the more complex segmentation model on 10 streams while displaying four of them.

As you can see, CPUs are up to the task of running AI inference on models such as object detection and segmentation. Perhaps more important for developers, they offer the flexibility to run the full workload on the same processor, thereby lowering the total cost of ownership (TCO).

With the rapid growth of AI, the ability to deploy on CPUs is a key differentiator for real-world use cases such as traffic safety. This frees up GPU resources for training and graphics use cases.

Check back for Part Two of this blog series, where we discuss training a model with transfer learning and put a CPU to the test there.

Resources

Interested in trying for yourself? Get access to the solution code!

To save developers hundreds of potential hours of development, Dell Technologies and Scalers AI are offering access to the solution code to fast-track development of AI workloads on next-generation Dell PowerEdge servers with 4th Gen Intel® Xeon® scalable processors.

For access to the code, reach out to your Dell representative or contact Scalers AI!

To learn more about the study discussed here, visit the following webpages:

• Myth-Busting: Can Intel® Xeon® Processors Effectively Run AI Applications?

• Accelerate Industry Transformation: Build Custom Models with Transfer Learning on Intel® Xeon®

• Scalers AI Performance Insights: Dell PowerEdge R760 with 4th Gen Intel® Xeon® Scalable Processors in AI

Authors:

Steen Graham, CEO at Scalers AI

Delmar Hernandez, Server Technologist at Dell Technologies