
VxRail dynamic node configuration


PowerFlex recommends dedicated data networks for communication between the VxRail dynamic nodes. After the VxRail dynamic node cluster is set up using the VxRail Manager wizard, configure the vDS and port groups for the dynamic nodes as recommended by PowerFlex.

Configure PowerFlex network

Use the following steps to configure the VMware vCenter network switch. This configuration creates the additional vDS that carries the storage data traffic used by the PowerFlex system.

Create vDS in VMware vCenter

To create vDS in VMware vCenter:

1. Log in to the VMware vSphere Web Client.

2. From the drop-down Menu select Networking. The Networking page is displayed.

3. Right-click the data center name and select Distributed Switch > New Distributed Switch.

- On the Name and location page, enter flex_dvswitch and then click Next.

- On the Select version page, keep the highest version, and click Next.

- Under configure settings:

- Set the Number of uplinks to 2.

- Enable Network I/O Control.

- Clear the default port group check box.

- Click Next.

- On the Ready to complete page, review the settings that you have selected, and click Finish.

4. Right-click the newly created dvswitch and select Settings > Edit Settings.

- Click Advanced and change the MTU to 9000.

- Select the required protocol under Discovery protocol.

- Change the Operation to Both.

- Click OK.

5. Create four port groups (flex_data1, flex_data2, flex_data3 and flex_data4) on the newly created vDS:

- Right-click the dvswitch that is created for PowerFlex network and select Distributed Port group > New Distributed Port group.

- In Select name and location page, enter flex_data1 and then click Next.

- Under Configuration settings, select VLAN in VLAN type and enter the appropriate VLAN ID. Click Next.

- Review the settings on the Ready to complete page, and then click Finish.

- Follow the preceding steps to create a port group for flex_data2, flex_data3, and flex_data4.

6. Add the VxRail dynamic nodes to the newly created vDS.

Create VMkernel interfaces

To create VMkernel interfaces for PowerFlex data1, data2, data3, and data4 networks:

1. In the vSphere Web Client, navigate to Menu > Hosts and Clusters.

2. Select one of the deployed VxRail dynamic nodes.

3. Go to Configure > Networking > VMkernel adapters.

- Click Add Networking.

- On the Select connection type page, select VMkernel Network Adapter and click Next.

- From the Select an existing network option, click Browse, and select flex_data1.

- Click OK, and then click Next.

- In the Port properties page, set MTU to 9000 if it is not already set, and then click Next.

- On the IPv4 settings page, select Use static IPv4 settings, enter the IP address and subnet for PowerFlex data1 network, and then click Next.

- Review the settings.

- Click Finish.

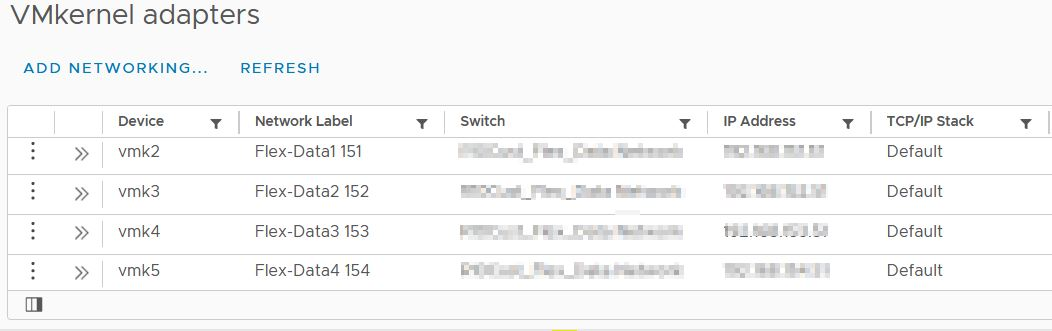

4. Repeat steps 1–3 for the flex_data2, flex_data3, and flex_data4 networks. The following figure shows the VMkernel adapters:

Figure 8. VMkernel adapters

5. Complete steps 1–4 for all the VxRail dynamic nodes.
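After the adapters are created, the MTU of each VMkernel interface can also be checked from the ESXi shell. The following is a minimal sketch and not part of the documented procedure. The helper name vmk_mtu_mismatches is hypothetical, and the sketch assumes that `esxcli network ip interface list` prints a `Name:` and an `MTU:` field for every interface.

```shell
# Hypothetical helper: reads `esxcli network ip interface list` output on
# stdin and prints the name of every VMkernel interface whose MTU differs
# from the expected value passed as $1.
# Assumption: each interface block contains "Name: <vmk>" and "MTU: <n>" lines.
vmk_mtu_mismatches() {
    awk -v want="$1" '
        $1 == "Name:" { name = $2 }                  # remember the current interface
        $1 == "MTU:" && $2 != want { print name }    # report a mismatching MTU
    '
}

# Example use on an ESXi host:
#   esxcli network ip interface list | vmk_mtu_mismatches 9000
```

Interfaces that are not on the PowerFlex data networks, such as a management interface left at its default MTU, are expected to appear in the output. Only the adapters on flex_data1 through flex_data4 need to be absent from it.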

Verify the network configuration

Perform the following steps to check the storage network configuration.

Before you start

Validate the network configuration on each node.

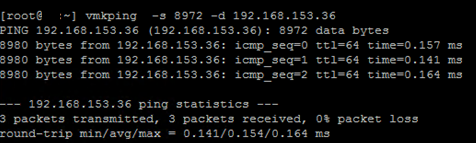

1. Log in to the node using SSH and run the following command:

vmkping -s 8972 -d xxx.xxx.xxx.xxx (replace xxx.xxx.xxx.xxx with the first PowerFlex storage VIP IP address)

vmkping -s 8972 -d xxx.xxx.xxx.xxx (replace xxx.xxx.xxx.xxx with the second PowerFlex storage VIP IP address)

2. Review the output and validate the ping communication.

WARNING: Do not proceed further until the ping test passes successfully. Double-check all network settings and ensure that jumbo frames are properly set up in the network.

3. Perform the preceding two steps for each of the nodes.
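The 8972-byte size used in the ping test is the 9000-byte MTU minus the 20-byte IPv4 header and the 8-byte ICMP header. Because -d sets the do-not-fragment flag, the ping succeeds only when jumbo frames work along the whole path. The sketch below wraps the same vmkping call in a loop over several addresses. The function names are hypothetical, and the addresses in the example are placeholders to be replaced with the PowerFlex storage VIPs.

```shell
# Largest ICMP payload that fits in one unfragmented IPv4 packet:
# MTU minus the 20-byte IPv4 header minus the 8-byte ICMP header.
icmp_payload_for_mtu() {
    echo $(( $1 - 28 ))
}

# Hypothetical wrapper: ping every address passed as an argument with the
# do-not-fragment flag set. Returns non-zero if any address fails.
check_jumbo_path() {
    size=$(icmp_payload_for_mtu 9000)
    rc=0
    for ip in "$@"; do
        if vmkping -s "$size" -d "$ip"; then
            echo "OK   $ip"
        else
            echo "FAIL $ip"
            rc=1
        fi
    done
    return $rc
}

# Example use on an ESXi host (placeholder addresses):
#   check_jumbo_path xxx.xxx.xxx.xxx yyy.yyy.yyy.yyy
```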

Figure 9. Network configuration

Perform the following steps to manually install the PowerFlex SDC (storage data client) driver on each VxRail dynamic CO node. This allows PowerFlex volumes to be mapped to the ESXi hosts.

Note: Most of the following steps are automated by using the Dell VSI plug-in. For more information, see Dell VSI capabilities for PowerFlex.

1. Copy the appropriate (based on ESXi version) PowerFlex SDC VIB file to each of the VxRail dynamic nodes. This solution requires the VIB for ESXi 7.x.

2. SSH to the node and run the following command to set the acceptance level:

esxcli software acceptance set --level=PartnerSupported

3. Install the SDC by running the following command:

esxcli software vib install -d /sdc-3.5.x.xxx-esx7.x-comp.zip

4. Reboot the ESXi node. For a first-time installation, the SDC will not automatically initialize. Further configuration and an additional reboot are required.

5. Once the host is up, run the following command to set the IP address of the MDM:

Note: See the local IP allocation table for the correct SDC, SDS, and MDM IPs.

esxcli system module parameters set -m scini -p "IoctlIniGuidStr=<GUID ID> IoctlMdmIPStr=<LIST_VIP_MDM_IPS>"

where:

- <LIST_VIP_MDM_IPS> is a comma-separated list of the MDM IP addresses or the virtual IP address of the MDM.

Separate the IP addresses of the same MDM cluster with a “,” symbol.

If connecting to more than one PowerFlex cluster, separate multiple MDM clusters with the “+” symbol.

- <GUID ID> is a user-generated GUID string. It can be generated with freely available tools, for example https://www.guidgen.com/.

Note: Include the IP addresses of as many potential manager MDMs as possible to make it easier to switch MDMs in the future. A total of eight IP addresses is supported. If an MDM virtual IP address is not in use, obtain the IP addresses of all the MDM managers.

6. Perform a reboot on the VxRail dynamic nodes.

7. Run the following command to verify that the driver is loaded:

vmkload_mod -l | grep scini

8. Perform the preceding steps for each of the VxRail dynamic nodes.

Note: For detailed information, see Install the SDC on an ESXi server and connect it to PowerFlex using esxcli.

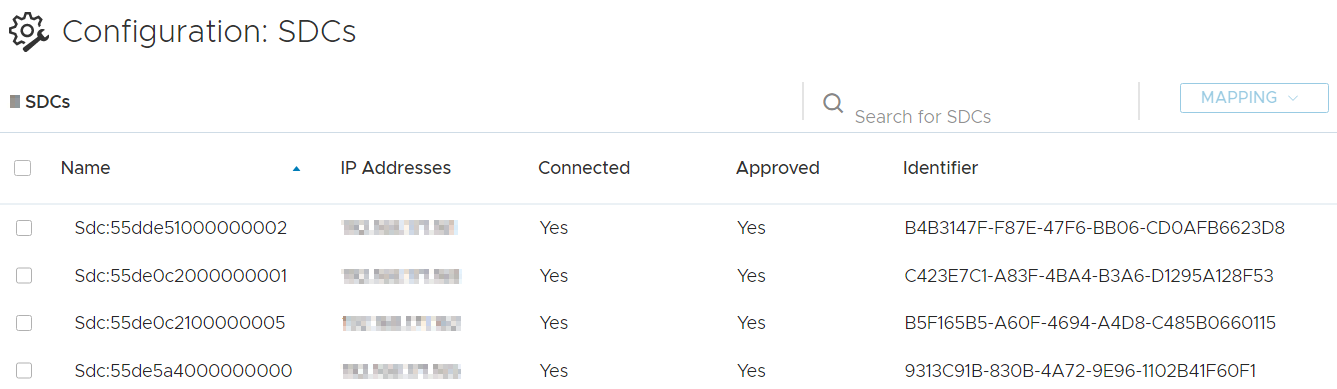

9. Verify the SDC connectivity and status from PowerFlex GUI:

- Log in to the PowerFlex GUI (PowerFlex presentation server) using MDM credentials.

- In the Configuration section, go to the SDC tab. Verify that the SDCs are listed, that their connected status shows Yes, and that each has a unique GUID as its identifier. The SDCs can be renamed for convenience. The following figure shows the SDC list:

Figure 10. SDCs
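The separator rules for the MDM address list in step 5 (a comma between addresses of the same MDM cluster, a “+” between clusters) are easy to get wrong when typed by hand. The following sketch builds the parameter string from its parts. The function names and the example addresses are hypothetical, and the sketch does not enforce the eight-address limit mentioned in the note.

```shell
# Join the IP addresses of one MDM cluster with commas.
join_cluster_ips() {
    out=""
    for ip in "$@"; do
        out="${out:+$out,}$ip"
    done
    echo "$out"
}

# Join already comma-joined cluster strings with "+".
join_clusters() {
    out=""
    for cluster in "$@"; do
        out="${out:+$out+}$cluster"
    done
    echo "$out"
}

# Print the scini parameter string. $1 = GUID, $2 = MDM IP string.
scini_params() {
    printf 'IoctlIniGuidStr=%s IoctlMdmIPStr=%s\n' "$1" "$2"
}

# Example use on an ESXi host (hypothetical addresses, GUID left as a placeholder):
#   ips=$(join_cluster_ips 192.168.151.10 192.168.152.10)
#   esxcli system module parameters set -m scini -p "$(scini_params "<GUID ID>" "$ips")"
```

After the parameters are set, `esxcli system module parameters list -m scini` shows the stored values, which can be compared against the local IP allocation table before rebooting.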
