OpenShift Virtualization with NVIDIA virtual GPU - Part 1

Thu, 15 Feb 2024 08:55:16 -0000


OpenShift Virtualization with NVIDIA virtual GPU

Red Hat OpenShift Virtualization enables users to run virtual machines (VMs) alongside containers on the same platform, simplifying management and reducing the complexity of maintaining separate infrastructures and management tools. OpenShift Virtualization unifies the operations and management of VMs and containers on the same platform, helping organizations to benefit from their existing virtualization investments. The seamless deployment of OpenShift Virtualization makes configuration quick and easy for administrators. An enhanced web console provides a graphical portal to manage these virtualized resources. For more information, see OpenShift Virtualization.

NVIDIA virtual GPU

NVIDIA virtual GPU (vGPU) products use NVIDIA GPU capabilities to accelerate compute-intensive workloads, including artificial intelligence/machine learning (AI/ML), data processing, scientific computing, and professional workstations, across on-premises, hybrid, and multicloud environments.

NVIDIA vGPU technology enables multiple VMs to access and share the resources of a single physical GPU through virtualization capabilities. You can install the NVIDIA vGPU software in data centers, cloud platforms, and virtual desktop infrastructure (VDI). The vGPU software stack divides the GPU, enabling efficient GPU resource sharing, improved performance for graphics-intensive applications within virtualized environments, and flexibility in allocating GPU resources to different VMs based on workload demands.

NVIDIA vGPU technology on OpenShift accelerates both containerized and VM-based workloads through the use of GPU devices. vGPU creates a mediated device (mdev) that represents a virtual GPU instance. The performance of the physical GPU is divided among these virtual devices and made available on OpenShift Container Platform. Although a VM can have multiple vGPU devices assigned to it, each vGPU device can be allocated to only one VM at a time.
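The allocation rule described above (a VM may hold several vGPU devices, but a given vGPU device belongs to only one VM at a time) can be illustrated with a small toy model. This is only a sketch of the rule itself, not of how OpenShift Virtualization or the NVIDIA software implements it; the class name `VGpuPool` and the device and VM names are hypothetical.

```python
class VGpuPool:
    """Toy model: each vGPU (mdev) is owned by at most one VM at a time."""

    def __init__(self, device_ids):
        # Map each vGPU device id to the VM that currently owns it (None = free).
        self._owner = {dev: None for dev in device_ids}

    def assign(self, device_id, vm_name):
        owner = self._owner[device_id]  # raises KeyError for an unknown device
        if owner is not None and owner != vm_name:
            raise ValueError(f"{device_id} is already allocated to {owner}")
        self._owner[device_id] = vm_name

    def release(self, device_id):
        # Free the device so that another VM can claim it.
        self._owner[device_id] = None

    def devices_of(self, vm_name):
        # A VM may own any number of devices.
        return sorted(dev for dev, vm in self._owner.items() if vm == vm_name)


pool = VGpuPool(["vgpu-0", "vgpu-1", "vgpu-2"])  # hypothetical device ids
pool.assign("vgpu-0", "vm-a")
pool.assign("vgpu-1", "vm-a")  # one VM, two vGPU devices: allowed
print(pool.devices_of("vm-a"))
```

Assigning `vgpu-0` to a second VM while `vm-a` still holds it raises `ValueError` in this model; it becomes assignable again only after `release`.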

Common use cases in OpenShift environments include AI/ML model training and inference, data processing, and complex simulations. Scalable and efficient GPU resource utilization can significantly improve performance.

NVIDIA GPU operator for OpenShift

The NVIDIA GPU operator for OpenShift is a Kubernetes operator that automates the deployment and management of the components of GPU-enabled workloads, including device drivers, container runtimes, and monitoring tools. The operator enables OpenShift Virtualization to attach GPUs or virtual GPUs to workloads running on OpenShift Container Platform. Users can easily provision and manage GPU-enabled VMs that run complex AI/ML workloads, and the operator can work in tandem with vGPU technology to streamline the management of GPU resources.

The GPU operator is responsible for configuring every node in the cluster with the required components to support GPU devices in Red Hat OpenShift. It is flexible enough to support heterogeneous clusters that may contain multiple GPU device types.

The GPU operator uses the Kubernetes operator framework to automate the deployment and management of all the NVIDIA software components on worker nodes, depending on which GPU workload is configured to run on those nodes. These components include the NVIDIA drivers (to enable CUDA), the Kubernetes device plug-in for GPUs, the NVIDIA Container Toolkit, automatic node labeling using GPU Feature Discovery, DCGM-based monitoring, and more.

Architecture

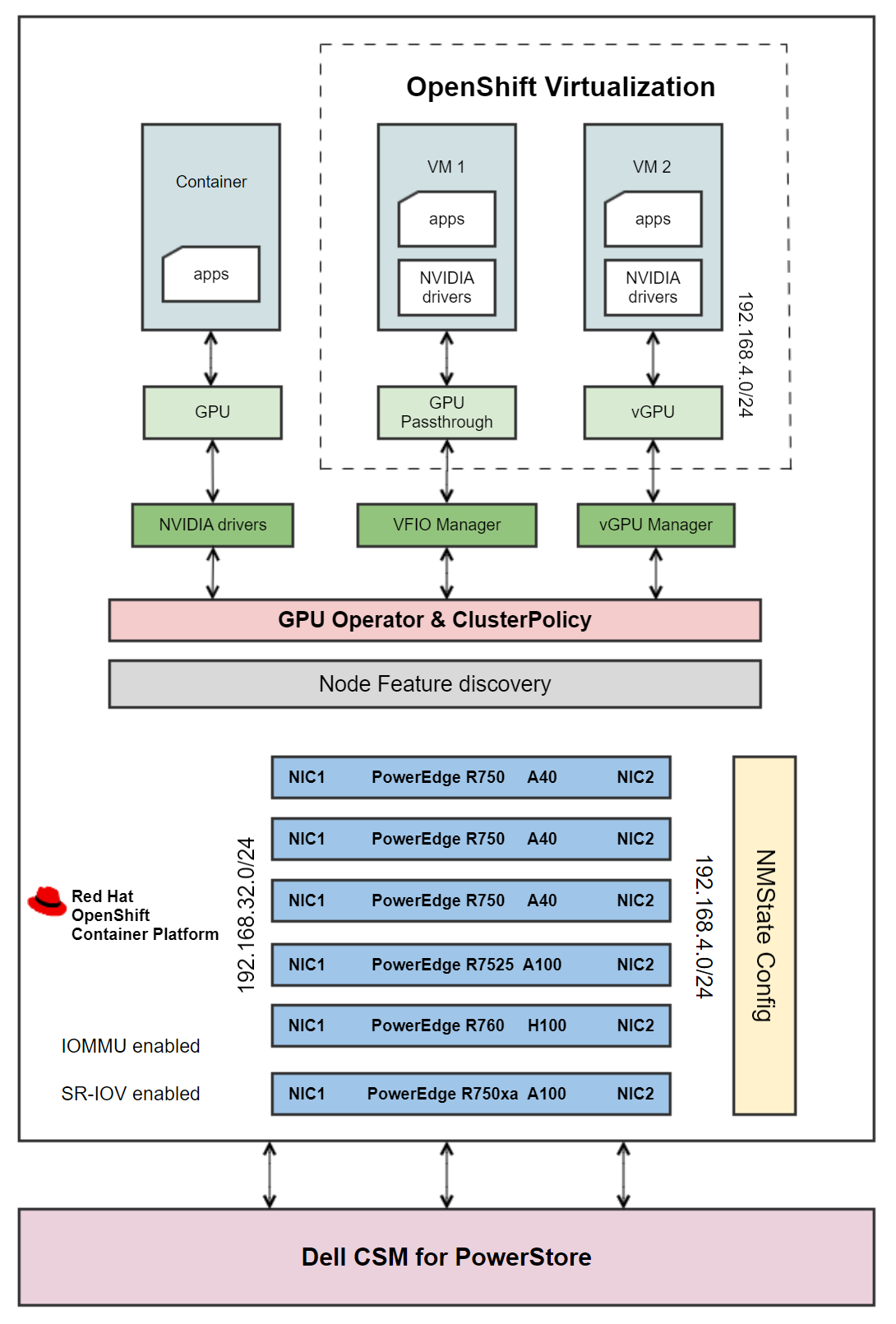

Figure 1 - Architecture diagram


A CSI-enabled storage provider is configured on the cluster to provision storage for VMs. The PowerStore 5000T standard deployment model provides organizations with all the benefits of a unified storage platform for block, file, and NFS storage, while also enabling flexible growth with the intelligent scale-up and scale-out capability of appliance clusters.

Dell Container Storage Modules (CSMs) enable simple and consistent integration and automation experiences, extending enterprise storage capabilities. Storage modules for Dell PowerStore expose enterprise features of storage arrays to Kubernetes, enabling developers to effortlessly leverage these features in their deployments, making PowerStore an ideal candidate for a VM storage solution. For information about deploying Dell CSI drivers on OpenShift, see Dell CSM.

OpenShift VMs are configured to use a separate network with a VLAN that is different from the MachineNetwork that is configured in the OpenShift cluster. To achieve isolation and security, create a network for VMs on a dedicated network interface on OpenShift nodes using an IP address range that does not overlap with the cluster’s MachineNetwork. The nodes are configured with a second network, and VMs are built on this network using the Kubernetes NMState operator. For more information, see OpenShift Virtualization Networking.
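As a quick sanity check on the non-overlap requirement, the following sketch uses Python's standard `ipaddress` module to compare two address ranges. The CIDR values are hypothetical placeholders, not the ranges used in this deployment.

```python
import ipaddress


def networks_overlap(machine_cidr, vm_cidr):
    """Return True if the VM network shares any addresses with the MachineNetwork."""
    machine_net = ipaddress.ip_network(machine_cidr)
    vm_net = ipaddress.ip_network(vm_cidr)
    return machine_net.overlaps(vm_net)


# Hypothetical example ranges; substitute the values from your own cluster.
machine_network = "192.168.10.0/24"
vm_network = "192.168.20.0/24"

if networks_overlap(machine_network, vm_network):
    print("VM network overlaps the MachineNetwork; choose a different range")
else:
    print("VM network is isolated from the MachineNetwork")
```

Running a check like this before defining the secondary network can catch an overlapping range early, before any node network configuration is applied.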

Further, the OpenShift worker nodes are enabled with:

- Single Root Input/Output Virtualization (SR-IOV): SR-IOV allows for more efficient use of network resources and improves the overall performance of network traffic in virtualized environments by enhancing how physical network devices are shared.

- Input/Output Memory Management Unit (IOMMU): IOMMU isolates and protects the memory spaces of devices such as network cards or GPUs and VMs. This isolation ensures that a malfunctioning or malicious device driver cannot access or corrupt the memory that is allocated to other devices or VMs. IOMMU is essential for SR-IOV, which allows a single physical device to appear as multiple virtual devices to different VMs, providing direct and isolated access to the VMs.
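On a Linux host, one way to see whether IOMMU is active is to look at the IOMMU groups that the kernel exposes under `/sys/kernel/iommu_groups`. The sketch below lists those groups and the devices in each; it is a minimal illustration that assumes shell access to the node, and the function name `list_iommu_groups` is hypothetical.

```python
from pathlib import Path


def list_iommu_groups(root="/sys/kernel/iommu_groups"):
    """Return {group number: [device names]}.

    The result is empty when the directory is missing or contains no groups,
    which typically indicates that IOMMU is not enabled.
    """
    base = Path(root)
    if not base.is_dir():
        return {}
    groups = {}
    for group_dir in base.iterdir():
        devices_dir = group_dir / "devices"
        # Each group is a numbered directory holding a "devices" subdirectory.
        if group_dir.name.isdigit() and devices_dir.is_dir():
            groups[int(group_dir.name)] = sorted(p.name for p in devices_dir.iterdir())
    return groups


if __name__ == "__main__":
    for number, devices in sorted(list_iommu_groups().items()):
        print(f"group {number}: {', '.join(devices)}")
```

An empty result suggests that IOMMU still needs to be enabled in the firmware settings or kernel arguments before devices can be passed through to VMs.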

An OpenShift worker node can run GPU-accelerated containers, GPU-accelerated VMs with GPU passthrough, or GPU-accelerated VMs with vGPU, but not a combination of these. The prerequisites for running containers and VMs with GPUs vary, with the primary difference being the required drivers. During the GPU operator deployment, OpenShift worker nodes are labeled with the details of the detected GPU devices. The labels are used for scheduling the pods that the GPU operator deploys. The ClusterPolicy custom resource (CR) that is included with the GPU operator installs the required drivers and components as determined by the node labels. For example, the data center driver is needed for containers, the vfio-pci driver is needed for GPU passthrough, and the NVIDIA vGPU Manager is needed for creating vGPU devices. For more information, see NVIDIA GPU Operator with OpenShift Virtualization.
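The relationship between workload type, node label, and driver can be summarized in a short sketch. The driver names come from the paragraph above. The label key `nvidia.com/gpu.workload.config` and its values `container`, `vm-passthrough`, and `vm-vgpu` are an assumption based on the NVIDIA GPU Operator documentation and should be verified against the operator version in use; the function name `node_label_patch` is hypothetical.

```python
# Assumed label key; verify against the NVIDIA GPU Operator documentation.
WORKLOAD_LABEL_KEY = "nvidia.com/gpu.workload.config"

# Workload type -> driver that the worker node needs (as described in the text).
REQUIRED_DRIVER = {
    "container": "NVIDIA data center driver",
    "vm-passthrough": "vfio-pci driver",
    "vm-vgpu": "NVIDIA vGPU Manager",
}


def node_label_patch(workload):
    """Build a merge-patch body that labels a node for exactly one workload type."""
    if workload not in REQUIRED_DRIVER:
        raise ValueError(f"unknown workload type: {workload!r}")
    return {"metadata": {"labels": {WORKLOAD_LABEL_KEY: workload}}}


for workload, driver in REQUIRED_DRIVER.items():
    print(f"{workload}: requires {driver}")
print(node_label_patch("vm-vgpu"))
```

Because a node carries a single value for this label, the sketch reflects the constraint that a worker node serves only one of the three workload types at a time.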

The architecture diagram shows how the GPU operator is configured to deploy different software components on worker nodes depending on what GPU workload is configured to run on those nodes:

- Containers: The GPU operator deploys the NVIDIA GPU device drivers, and a container is assigned a whole GPU. The GPU operator also installs components such as the NVIDIA Container Toolkit, NVIDIA device plug-in, CUDA validator, DCGM exporter, and operator validator.

- VMs with Passthrough GPU: The VFIO driver that is deployed by the GPU operator gives the VM direct and exclusive access to the GPU resources, dedicating an entire physical GPU to a VM. This configuration is commonly used in scenarios where a VM requires direct access to the full capabilities of a GPU, such as high-performance computing (HPC) workloads, gaming VMs, or other applications that demand GPU acceleration.

- VMs with vGPU: The vGPU manager enables virtualization of a physical GPU. A single GPU is sliced into multiple vGPU instances, allowing VMs to share the GPU resources.

For containerized workloads that do not require the capability of an entire GPU, you can configure an OpenShift cluster with Multi-Instance GPU (MIG). NVIDIA MIG technology partitions a single physical GPU into multiple smaller instances, and each instance can be allocated to a different container, providing isolation and resource allocation for different tasks. MIG is different from vGPU in that the isolation is implemented by the device firmware. Also, MIG uses hardware boundaries, whereas vGPU is a higher-level, software-only approach.

When the driver installation is complete, OpenShift Virtualization automatically creates vGPUs and PCI Host devices based on GPU device configuration information that is provided in the HyperConverged CR. These devices are then assigned to VMs.
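To make the shape of that configuration more concrete, the sketch below builds a fragment of a HyperConverged CR as a Python dictionary and prints it as JSON. The field names (`permittedHostDevices`, `mediatedDevices`, `mdevNameSelector`, `pciHostDevices`, `pciDeviceSelector`, `resourceName`) are an assumption based on the OpenShift Virtualization documentation and may differ between versions; every value passed in is a hypothetical placeholder, not a validated setting for any particular GPU.

```python
import json


def permitted_host_devices(mdev_name, mdev_resource, pci_selector, pci_resource):
    """Build a HyperConverged spec fragment exposing one vGPU type and one PCI device.

    Field names are assumed from the OpenShift Virtualization documentation;
    check them against the version installed on your cluster.
    """
    return {
        "spec": {
            "permittedHostDevices": {
                # vGPU (mediated) devices that VMs are allowed to request
                "mediatedDevices": [
                    {"mdevNameSelector": mdev_name, "resourceName": mdev_resource}
                ],
                # Whole GPUs exposed for PCI passthrough
                "pciHostDevices": [
                    {"pciDeviceSelector": pci_selector, "resourceName": pci_resource}
                ],
            }
        }
    }


# Placeholder values only; replace with the identifiers reported for your hardware.
fragment = permitted_host_devices(
    mdev_name="EXAMPLE-VGPU-TYPE",
    mdev_resource="example.com/EXAMPLE_VGPU",
    pci_selector="VENDOR:DEVICE",
    pci_resource="example.com/EXAMPLE_GPU",
)
print(json.dumps(fragment, indent=2))
```

A VM would then request one of the listed resource names to have the corresponding vGPU or passthrough device attached.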

For detailed instructions for installing OpenShift Virtualization on the hardware depicted in Figure 1, as well as component versions and GPU workload combinations that have been validated across nodes, see OpenShift Virtualization with NVIDIA virtual GPU - Part 2.

References

- NVIDIA GPU Operator with OpenShift Virtualization

- NVIDIA Virtual GPU (vGPU) Software Documentation

- Configuring virtual GPUs

- GPU Operator Component Matrix

- NVIDIA Virtual GPU Software Documentation