Reliability in Dell Technologies PowerEdge Servers

Thu, 25 Apr 2024 18:31:15 -0000

Introduction

Reliability is defined as the characteristic of a product or system that assures the performance of its intended function over time and assures operation in a defined environment without failure. Reliability is designed into PowerEdge servers, and it is constantly evaluated and improved throughout the product lifecycle. Full in-house test and analysis capabilities allow Dell Technologies to develop and implement robust product qualification and release procedures.

Dell Technologies Design Guidelines

Dell Technologies server design-to-criteria includes:

- Servers to operate continuously at 40 degrees C and 80% relative humidity, and allow for short-term excursions to 45 degrees C and 90% relative humidity

Note: 40C/85%RH capability is configuration specific, but the vast majority of PowerEdge server configurations allow for these conditions

- Additional design life margin, and accommodation for the potential of lifetime limited warranty

- Potential deployment in uncontrolled environments – locations with polluted air and dust

- Customer special requests – for example, higher shock and vibration tolerance

Dell Technologies Design for Reliability Process

The Dell Technologies Reliability Engineering team is part of the Server Product Development team and has developed a full suite of procedures. Many are based on industry standards which define DfR: Subsystem Qualification, Ongoing Reliability Testing, Validation, Shock and Vibration, and associated Failure Analysis requirements. This suite must be met and fulfilled before any product is released.

Figure: Dell Technologies environmental test chambers

Dell Technologies uses internally developed web-based design for reliability (DfR) tools for systems development. In addition to using these tools at Dell Technologies, we require that our supply base use these tools in their product development processes to ensure our suppliers also design in reliability.

Design for Reliability Starts at the Component Level

Dell Technologies reliability begins with choosing and approving component suppliers. Dell Technologies specifies JEDEC-qualified components from all suppliers (JEDEC is a global industry group that creates standards for a broad range of technologies). To ensure enterprise-class reliability, Dell Technologies may require qualification testing beyond the standard JEDEC suite depending on the nature of the component, in particular for components that are new, unique, different, and difficult (NUDD). Dell Technologies has specific qualification requirements for NUDDs.

Subsystem Level Comes Next

Dell Technologies defines qualification protocol for all subsystems (HDD, SSD, PSU, fans, memory, PCIe cards, PERC, and daughter cards) and ensures that the supply base executes to Dell Technologies requirements. Dell Technologies does this by:

- Defining test requirements, sample sizes, ramp rates, durations, and accept/reject criteria

- Working closely with Suppliers during their product development process

- Reviewing and approving results, and addressing qualification fails, if any

- Auditing product by conducting our own in-house testing as appropriate

- Auditing supplier Quality and Assembly/Test processes

- Requiring ongoing reliability testing (ORT) on all subsystems throughout their shipping life

The System is the Third Level of Reliability

Dell Technologies does extensive testing and analysis of all systems during development and prior to release:

- Dell Technologies has developed and refined a suite of multiple environment over-stress validation tests that it executes on every system during its development and prior to release

- Dell Technologies has a separate suite of shock and vibration tests, many of which are industry-standards-based, that we execute on every system prior to release

- Dell Technologies has full internal capability to analyze test fails in our own in-house Failure Analysis Labs

Dell Technologies Reliability is designed in and closes the loop: from the component level to subsystem level to system level. Our product qualification and release systems ensure that design criteria, including deployment life, additional deployment life margin, and accommodation for potential lifetime limited warranty, are met before product is launched. This qualification and release system is based on industry standards and on our own rigorous methods which have been developed and refined over multiple generations of PowerEdge products. This includes Ongoing Reliability Testing (ORT) on components and subsystems which is required to be implemented throughout the shipping life of PowerEdge servers.

Dell Technologies’ focus is on Design for Reliability - using a full suite of internally developed web-based tools, HW Validation Tests, and Shock and Vibration tests. Full in-house capabilities allow Dell Technologies to conduct all phases of product qualification and release in house, including multiple environment overstress tests, shock and vibration tests, and failure analysis.

Dell Technologies also conducts research on long term reliability of our products in expanded operating environments. This research, and associated multimillion-dollar investments in applied research facilities, allow Dell Technologies to continue to improve reliability on PowerEdge products.

Related Documents

Dell PowerEdge R7625 Rack Server & Emulex LPe36002 Host Bus Adapter: 64G Fibre Channel Microsoft SQL Server

Fri, 29 Mar 2024 16:19:02 -0000


Dell PowerEdge R7625 Rack Server & Emulex LPe36002 Host Bus Adapter

64G Fibre Channel Microsoft SQL Server Performance – NVMe/FC vs. SCSI/FC

Tolly Report #224107

Tolly test report demonstrating that Dell PowerEdge R7625 Rack Server outfitted with the Emulex LPe36002 Host Bus Adapter using NVMe/FC can improve application performance vs older generation SCSI/FC.

Executive Summary

New generation servers can bring higher performance across a range of areas. This is certainly the case with Dell’s 16th-generation server line. Similarly, newer protocols like NVM Express (NVMe) over Fibre Channel (FC) can provide greater throughput and efficiency than older SCSI over FC. Dell is unique in offering an end-to-end NVMe/FC connectivity solution in the mid-range storage marketplace with the PowerStore line.

Dell commissioned Tolly to benchmark the performance of the Broadcom Emulex LPe36002 64G Fibre Channel dual-port host bus adapter (HBA) running in the Dell PowerEdge R7625 Rack Server with AMD EPYC processors by testing using actual database applications rather than simulated I/O microbenchmarks. Testing focused on evaluating the database throughput, latency, and CPU efficiency of accessing Microsoft SQL Server 2019 for Linux systems over older SCSI/FC and newer NVMe/FC. Databases were stored on a Dell PowerStore 9200T storage appliance.

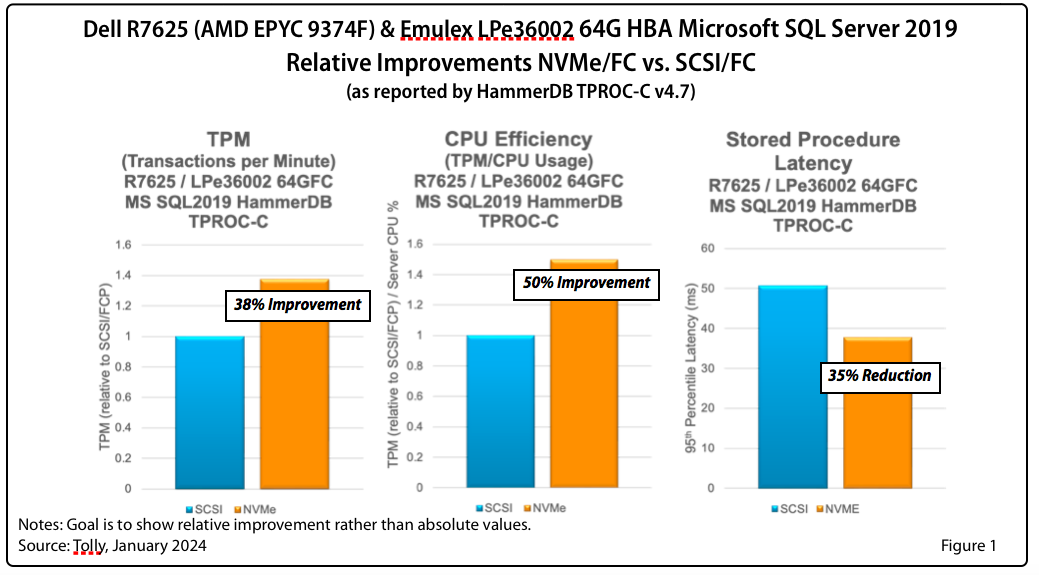

Tests showed significant improvements in transaction throughput, latency reduction, and CPU efficiency. See Figure 1 for a summary of relative improvements.

The Bottom Line

Dell PowerEdge R7625 with AMD EPYC processors & Emulex LPe36002 64G HBA using NVMe/FC:

1 | Improved database transactions by 38% |

2 | Reduced database stored procedure latency by 35% |

Overview

The goal of this test was to illustrate the performance benefits of using the newer, more-efficient NVMe/FC protocol in lieu of the older, less-efficient SCSI/FC protocol in conjunction with Emulex 64G FC HBAs running under Linux in a Dell PowerEdge R7625 Rack Server. (Dell sells the Emulex 64G FC HBA for the same price as the Emulex 32G FC HBA.)

The test was run using Microsoft SQL Server 2019 for Linux accessing the database via SCSI and then via NVMe.

While low-level component benchmarks are instructive, ultimately system architects are rightly most interested in how network-level improvements can translate into application performance improvements. This benchmarking was done with HammerDB which generates actual user transactions against an actual database. The test was focused on TPROC-C which is the HammerDB, database-oriented implementation of the de facto standard TPC-C online transaction processing benchmark.

Tests showed significant improvements in key benchmarks.

Test Results

Microsoft SQL Server 2019 for Linux

Transaction Processing. The NVMe/FC results were significantly better than the SCSI/FC results. When run over NVMe/FC, 38% more transactions per minute were processed.

CPU Efficiency. The NVMe/FC results were significantly better than the SCSI/FC results. When run over NVMe/FC, the CPU efficiency was improved by 50%.

P95 Stored Procedure Latency. Similarly, the NVMe/FC results were significantly better than the SCSI/FC results. When run over NVMe/FC, the latency was reduced by 35%.
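
The percentages above are relative changes against the SCSI/FC baseline. As a minimal sketch of that arithmetic, the snippet below uses hypothetical raw figures (the report excerpt here does not list the underlying transaction counts or latencies), chosen only so that the results match the stated 38% and 35%. The function name `percent_change` is likewise illustrative.

```python
def percent_change(baseline: float, measured: float) -> float:
    """Return the relative change of `measured` versus `baseline`, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (measured - baseline) / baseline * 100.0


# Hypothetical raw values, NOT taken from the report.
scsi_tpm, nvme_tpm = 1_000_000, 1_380_000   # transactions per minute
scsi_p95_ms, nvme_p95_ms = 20.0, 13.0       # P95 stored procedure latency

print(f"Transactions per minute: {percent_change(scsi_tpm, nvme_tpm):+.0f}%")
print(f"P95 latency:             {percent_change(scsi_p95_ms, nvme_p95_ms):+.0f}%")
```

A positive result means the NVMe/FC figure is higher than the baseline (desirable for throughput), and a negative result means it is lower (desirable for latency).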

Test Setup & Methodology

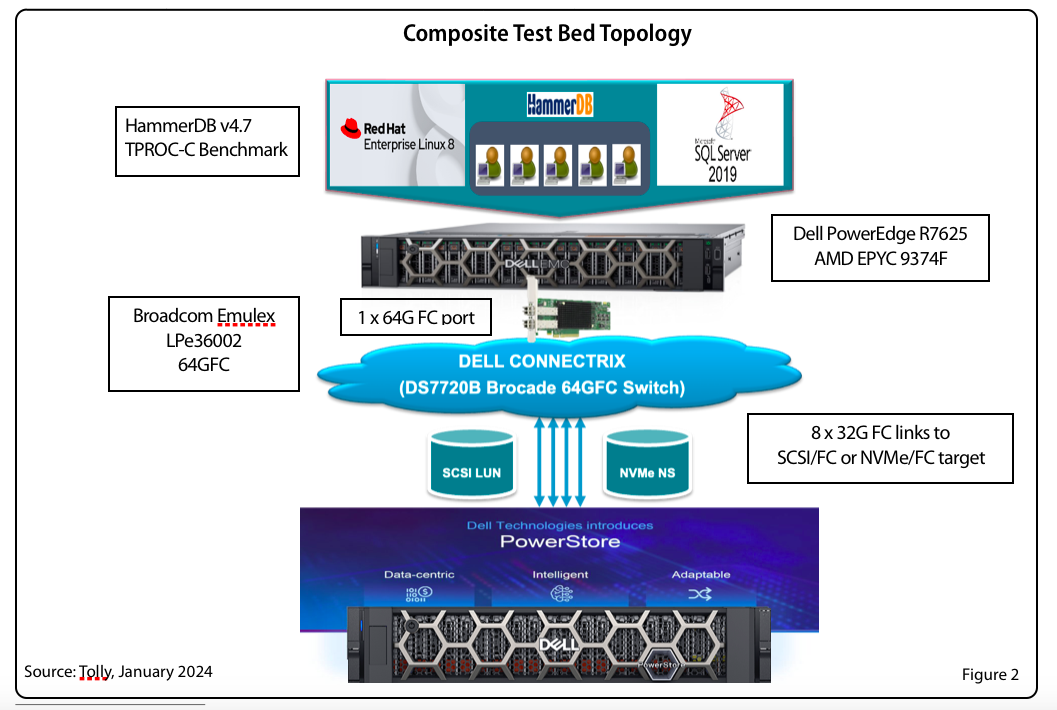

The HBA under test used current production drivers that are publicly available. Default settings were used. Details of the test environment and systems under test are found in Tables 1-5. Figure 2 shows a composite test environment.

Database Test

The goal of this test was to benchmark the database transaction performance of each HBA running the HammerDB “TPROC-C” workload which, as noted earlier, is the HammerDB database version of the Transaction Processing Performance Council’s TPC-C OLTP benchmark.

A Dell PowerEdge R7625 server, powered by AMD EPYC processors, was configured with the HBA under test. The Broadcom Emulex LPe36002 64G HBA connected to a Dell PowerStore 9200T via a Dell Connectrix 64G Fibre Channel switch. The test utilized a single 64G FC port of the Emulex HBA.

The server ran RHEL 8.9. SCSI Device Mapper and NVMe native multipath were enabled for the respective devices. NUMA was set to off and “transparent huge pages” was disabled.

For storage, the path selection policy for NVMe native multipath was set to “round-robin”. The path selector for SCSI Device Mapper multipath was set to “queue-length 0”.
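
On Linux, the NVMe native multipath I/O policy is exposed per subsystem through sysfs. The following is a minimal sketch, not part of the report's methodology, of how one might confirm that a host uses the round-robin policy described above. It assumes the standard `/sys/class/nvme-subsystem/nvme-subsys*/iopolicy` layout; the function name and the `sysfs_root` parameter are illustrative.

```python
from pathlib import Path


def read_nvme_iopolicies(sysfs_root: str = "/sys/class/nvme-subsystem") -> dict[str, str]:
    """Map each NVMe subsystem name to its current multipath I/O policy."""
    policies: dict[str, str] = {}
    root = Path(sysfs_root)
    if not root.is_dir():
        # No NVMe subsystems visible (or not a Linux host): nothing to report.
        return policies
    for subsys in sorted(root.glob("nvme-subsys*")):
        policy_file = subsys / "iopolicy"
        if policy_file.is_file():
            policies[subsys.name] = policy_file.read_text().strip()
    return policies


if __name__ == "__main__":
    for name, policy in read_nvme_iopolicies().items():
        note = "" if policy == "round-robin" else "  (differs from the tested setting)"
        print(f"{name}: {policy}{note}")
```

The SCSI side is configured separately through Device Mapper multipath (the `path_selector` option in `multipath.conf`), which this sketch does not inspect.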

This test was run using Microsoft SQL Server 2019 for Linux.

The open source HammerDB test tool was used to populate the database schema and run the workload.

Table 1. HBA Under Test

Vendor | Product Name | Firmware | Driver |

Broadcom | Emulex LPe36002 (64G) (PCIe 4.0) | 14.0.539.26 | 14.0.0.15 |

Table 2. Server Configuration

Vendor/System | Dell PowerEdge R7625 |

CPU | 2 socket AMD EPYC 9374F 32-Core Processor @ 3.8 GHz |

Number of CPUs | 128 logical processors. Profile: Performance, Logical Processors: Enabled, Sub Numa Clustering: Disabled |

Memory (RAM) | 256 GB |

Power Mode | Performance |

OS | Red Hat Ent. Linux 8.9 (RHEL8) |

Kernel | 4.18.0-425.3.1 |

Table 3. Microsoft Database Configuration

Database | Microsoft SQL Server 2019 for Linux |

Storage | Single volume, XFS |

Dataset Size | 100 GB |

DB Memory Allocation | 10G |

Table 4. Database Test Tool

Vendor | Open Source |

Application | HammerDB 4.7 |

TPROC-C settings | Total # of Warehouses = 1,000; Transactions per user = 1 million; Ramp-up time: 2 minutes; Run time: 5 minutes |

Table 5. Storage Configuration

Vendor/Device | Dell PowerStore 9200T v3.5 |

Ports | 8 x 32G FC |

Volume Size | 1,024GB volume each for NVMe/FC and SCSI/FC |

Namespace/LUN | 8 x 32G target ports (single namespace) |

Network Fabric | Dell Connectrix 64G FC switch v9.0.1a |

About AMD

For over 50 years, AMD has been at the forefront of driving innovation in high-performance computing, graphics, and visualization technologies. Their products are relied upon by billions of people, leading Fortune 500 businesses, and cutting-edge scientific research institutions worldwide. AMD's mission is to build exceptional products that accelerate next-generation computing experiences and power solutions for the world's most important challenges. Visit http://www.amd.com for more information about AMD.

Broadcom Emulex LPe36002

The Broadcom Emulex LPe36000-series Gen 7 Fibre Channel HBAs are designed for demanding mission-critical workloads and emerging applications. The family of adapters features Silicon Root of Trust security, designed to thwart firmware attacks aimed at enterprises and governments.

Gen 7 64G provides seamless backward compatibility to 32G and 16G networks.

Dell sells the LPe36002 64G HBA for the same price as the 32G model.

About Tolly

The Tolly Group companies have been delivering world-class IT services for over 30 years. Tolly is a leading global provider of third-party validation services for vendors of IT products, components and services.

You can reach the company by E-mail at sales@tolly.com, or by telephone at +1 561.391.5610.

Visit Tolly on the Internet at: http://www.tolly.com

Tolly Terms Of Usage

This document is provided, free-of-charge, to help you understand whether a given product, technology, or service merits additional investigation for your particular needs. Any decision to purchase a product must be based on your own assessment of suitability based on your needs. The document should never be used as a substitute for advice from a qualified IT or business professional. This evaluation was focused on illustrating specific features and/or performance of the product(s) and was conducted under controlled, laboratory conditions. Certain tests may have been tailored to reflect performance under ideal conditions; performance may vary under real-world conditions. Users should run tests based on their own real-world scenarios to validate performance for their own networks.

Reasonable efforts were made to ensure the accuracy of the data contained herein but errors and/or oversights can occur. The test/audit documented herein may also rely on various test tools the accuracy of which is beyond our control. Furthermore, the document relies on certain representations by the sponsor that are beyond our control to verify. Among these is that the software/hardware tested is production or production track and is, or will be, available in equivalent or better form to commercial customers. Accordingly, this document is provided "as is," and Tolly Enterprises, LLC (Tolly) gives no warranty, representation or undertaking, whether express or implied, and accepts no legal responsibility, whether direct or indirect, for the accuracy, completeness, usefulness, or suitability of any information contained herein. By reviewing this document, you agree that your use of any information contained herein is at your own risk, and you accept all risks and responsibility for losses, damages, costs, and other consequences resulting directly or indirectly from any information or material available on it. Tolly is not responsible for, and you agree to hold Tolly and its related affiliates harmless from any loss, harm, injury, or damage resulting from or arising out of your use of or reliance on any of the information provided herein.

Tolly makes no claim as to whether any product or company described herein is suitable for investment. You should obtain your own independent professional advice, whether legal, accounting or otherwise, before proceeding with any investment or project related to any information, products or companies described herein. When foreign translations exist, the English document is considered authoritative. To assure accuracy, only use documents downloaded directly from Tolly.com. No part of any document may be reproduced, in whole or in part, without the specific written permission of Tolly. All trademarks used in the document are owned by their respective owners. You agree not to use any trademark in or as the whole or part of your own trademarks in connection with any activities, products or services which are not ours, or in a manner which may be confusing, misleading, or deceptive or in a manner that disparages us or our information, projects or developments.

Dell PowerEdge 16G Server BIOS Settings for Optimized Performance: R7625, R6625, R7615, R6615, C6615

Tue, 26 Mar 2024 22:46:05 -0000


BIOS setting recommendations

The following tables provide the BIOS setting recommendations for the latest generation of PowerEdge servers:

Table 1. BIOS setting recommendations - System profile settings

| System setup screen | Setting | BIOS Defaults | SPEC cpu2017 int rate (General Purpose Performance) | SPEC cpu2017 fp rate | SPEC cpu2017 int speed | SPEC cpu2017 fp speed | Memory Throughput | HPC | Latency |

| System profile setting | System profile | Performance Per Watt | Custom | Custom | Custom | Custom | Custom | Custom | Custom |

| System profile setting[*] | CPU Power Management | OS DBPM | OS DBPM | OS DBPM | OS DBPM | OS DBPM | OS DBPM | Max Performance | Max Performance |

| System profile setting | Memory Frequency | Max Performance | Max Performance | Max Performance | Max Performance | Max Performance | Max Performance | Max Performance | Max Performance |

| System profile setting | Turbo Boost | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| System profile setting | C-States | Enabled | Enabled | Enabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| System profile setting | Write Data CRC | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Enabled | Disabled |

| System profile setting | Memory Patrol Scrub | Standard | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| System profile setting | Memory Refresh Rate | 1x | 1x | 1x | 1x | 1x | 1x | 1x | 1x |

| System profile setting | Workload Profile | not configured | not configured | not configured | not configured | not configured | not configured | HPL | not configured |

| System profile setting | PCI ASPM L1 Link Power Management | Enabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| System profile setting | Determinism Slider | Performance Determinism | Power Determinism | Power Determinism | Power Determinism | Power Determinism | Power Determinism | Power Determinism | Power Determinism |

| System profile setting | Power Profile Select | High Performance Mode | High Performance Mode | High Performance Mode | High Performance Mode | High Performance Mode | High Performance Mode | High Performance Mode | High Performance Mode |

| System profile setting | PCIE Speed PMM Control | Auto | Auto | Auto | Auto | Auto | Auto | Auto | (GEN 5) |

| System profile setting | EQ Bypass To Highest Rate | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| System profile setting | DF PState Frequency Optimizer | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| System profile setting | DF PState Latency Optimizer | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| System profile setting | DF CState | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| System profile setting | Host System Management Port (HSMP) Support | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| System profile setting | Boost FMax | 0-Auto | 0-Auto | 0-Auto | 0-Auto | 0-Auto | 0-Auto | 0-Auto | 0-Auto |

| System profile setting | Algorithm Performance Boost Disable (ApbDis) | Disabled | Disabled | Disabled | Enabled | Enabled | Disabled | Disabled | Enabled |

| System profile setting | ApbDis Fixed Socket P-State[2] | | | | P0 | P0 | | | P0 |

| System profile setting | Dynamic Link Width Management (DLWM) | Unforced | Unforced | Unforced | Unforced | Unforced | Unforced | Unforced | Forced x16 |

[*] For C6615, apply setting from Table 3.

[1] Depends on how system was ordered. Other System Profile defaults are driven by this choice and may be different than the examples listed. Select Performance Profile first, and then select Custom to load optimal profile defaults for further modification.

[2] Pstate field is dependent on Algorithm Performance Boost Disable (ApbDis) and is visible only when it is enabled.
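
When applying one of these profiles, it can help to see only the settings that differ from the BIOS defaults. The sketch below illustrates this for the Latency column using a subset of the rows in Table 1. The dictionary and function names are illustrative only, the setting names are the display names from the table (not BIOS attribute identifiers), and the subset is not exhaustive.

```python
# Subset of Table 1: setting -> (BIOS default, Latency recommendation).
TABLE_1_LATENCY_SUBSET = {
    "System profile": ("Performance Per Watt", "Custom"),
    "CPU Power Management": ("OS DBPM", "Max Performance"),
    "Memory Frequency": ("Max Performance", "Max Performance"),
    "Turbo Boost": ("Enabled", "Enabled"),
    "C-States": ("Enabled", "Disabled"),
    "Memory Patrol Scrub": ("Standard", "Disabled"),
    "PCI ASPM L1 Link Power Management": ("Enabled", "Disabled"),
    "Determinism Slider": ("Performance Determinism", "Power Determinism"),
    "Algorithm Performance Boost Disable (ApbDis)": ("Disabled", "Enabled"),
    "Dynamic Link Width Management (DLWM)": ("Unforced", "Forced x16"),
}


def changed_settings(table: dict[str, tuple[str, str]]) -> dict[str, tuple[str, str]]:
    """Return only the rows whose recommendation differs from the default."""
    return {
        name: (default, recommended)
        for name, (default, recommended) in table.items()
        if default != recommended
    }


if __name__ == "__main__":
    for name, (default, recommended) in changed_settings(TABLE_1_LATENCY_SUBSET).items():
        print(f"{name}: {default} -> {recommended}")
```

The same approach extends to any other workload column by swapping in that column's values.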

Table 2. BIOS setting recommendations – Memory, processor, and iDRAC settings

| System setup screen | Setting | BIOS Defaults | SPEC cpu2017 int rate (General Purpose Performance) | SPEC cpu2017 fp rate | SPEC cpu2017 int speed | SPEC cpu2017 fp speed | Memory Throughput | HPC | Latency |

| Memory settings | System Memory Testing | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Memory settings | DRAM Refresh Delay | Minimum | Performance | Performance | Performance | Performance | Performance | Performance | Performance |

| Memory settings | Correctable memory ECC SMI | Enabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Memory settings | Uncorrectable Memory Error (DIMM Self healing on uncorrectable memory) | Enabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Memory settings | Correctable Error Logging | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Processor settings | Logical Processor | Enabled | Enabled | Disabled[1] | Disabled[1] | Disabled[1] | Disabled[1] | Disabled[1] | Disabled[1] |

| Processor settings | Virtualization Technology | Enabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Processor settings | IOMMU Support | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| Processor settings | Kernel DMA Protection | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Processor settings | L1 Stream HW Prefetcher | Enabled | Enabled | Disabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| Processor settings | L2 Stream HW Prefetcher | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| Processor settings | L1 Stride Prefetcher | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| Processor settings | L1 Region Prefetcher | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| Processor settings | L2 Up Down Prefetcher | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| Processor settings | MADT Core Enumeration | Linear | Linear | Linear | Linear | Linear | Linear | Linear | Linear |

| Processor settings[*] | NUMA Node Per Socket | 1 | 4 | 4 | 4 | 1 | 4 | 4 | 4 |

| Processor settings | L3 cache as NUMA | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Processor settings | Secure Memory Encryption | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Processor settings | Minimum SEV no-ES ASID | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 |

| Processor settings | SNP Memory Coverage | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Processor settings | Secure Nested Paging | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Processor settings | Transparent Secure Memory Encryption | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled | Disabled |

| Processor settings | ACPI CST C2 Latency | 800 | 18 | 18 | 18 | 18 | 800 | 18 | 800 |

| Processor settings | Configurable TDP | Maximum | Maximum | Maximum | Maximum | Maximum | Maximum | Maximum | Maximum |

| Processor settings | x2APIC Mode | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled | Enabled |

| Processor settings | Number of CCDs per Processor | All | All | All | All | All | All | All | All |

| Processor settings | Number of Cores per CCD | All | All | All | All | All | All | All | All |

| iDRAC settings | Thermal Profile | Default | Maximum Performance | Maximum Performance | Maximum Performance | Maximum Performance | Maximum Performance | Maximum Performance | Maximum Performance |

[*] For C6615, apply setting from Table 3.

[1] Logical Processor (Hyper Threading) tends to benefit throughput-oriented workloads such as SPEC CPU2017. Many HPC workloads disable this option.

Table 3. BIOS setting recommendations specific to C6615 (apply remaining settings from Table 1 and 2)

| System setup screen | Setting | BIOS Defaults | SPEC cpu2017 int rate (General Purpose Performance) | SPEC cpu2017 fp rate | SPEC cpu2017 int speed | SPEC cpu2017 fp speed | Memory Throughput | HPC | Latency |

| Processor settings | NUMA Node per Socket | 1 | 1 | 1 | 1 | 1 | 2 | 1 | 2 |

| System profile setting | CPU Power Management | OS DBPM | OS DBPM | OS DBPM | OS DBPM | OS DBPM | OS DBPM | OS DBPM | OS DBPM |

iDRAC recommendations

We recommend the following for an iDRAC environment:

- Thermally challenged environments should increase fan speed through iDRAC Thermal Section.

- All Power Capping should be removed in performance-sensitive environments.

Glossary

System profile: (Default="Performance Per Watt")

To assist the average customer in setting each individual power/performance feature for their specific environment, a menu option is provided that can help a customer optimize the system for factors such as minimum power usage/acoustic levels, maximum efficiency, Energy Star optimization, and maximum performance.

Performance Per Watt OS mode optimizes the performance/watt efficiency with a bias towards performance. It is the favored mode for Energy Star. Note that this mode is slightly different than Performance Per Watt DAPC mode. In this mode, no bus speeds are derated, leaving the OS in charge of making those changes.

Custom allows the user to individually modify any of the low-level settings that are preset and unchangeable in any of the other four preset modes.

C-States

C-states reduce CPU idle power. There are three options in this mode: Enabled, Autonomous, and Disable.

Enabled: When “Enabled” is selected, the operating system initiates the C-state transitions. Some OS software, such as the intel_idle driver, may bypass the ACPI mapping.

Autonomous: When "Autonomous" is selected, HALT and C1 requests get converted to C6 requests in hardware.

Disable: When "Disable" is selected, only C0 and C1 are used by the OS. C1 gets enabled automatically when an OS autohalts.

CPU Power Management

CPU Power Management allows the selection of CPU power management methodology. Maximum Performance is typically selected for performance-centric workloads where it is acceptable to consume additional power to achieve the highest possible performance for the computing environment. This mode drives processor frequency to the maximum across all cores (although idled cores can still be frequency-reduced by C-States enforcement through BIOS or OS mechanisms if enabled). This mode also offers the lowest latency of the CPU Power Management Mode options, so it is always preferred for latency-sensitive environments. OS DBPM is another Performance Per Watt option that relies on the operating system to dynamically control individual core frequency. Both Windows and Linux can take advantage of this mode to reduce the frequency of idled or underutilized cores in order to save power. This will be Read-only unless System Profile is set to Custom.
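
With OS DBPM, frequency decisions are left to the operating system, so the OS-side policy matters as much as the BIOS choice. As a rough illustration on Linux, the sketch below summarizes which cpufreq scaling governor each CPU is using. It assumes the standard `/sys/devices/system/cpu/cpuN/cpufreq/scaling_governor` sysfs files are present (they may be absent when no cpufreq driver is loaded); the function name and `cpu_root` parameter are illustrative.

```python
from collections import Counter
from pathlib import Path


def governor_summary(cpu_root: str = "/sys/devices/system/cpu") -> Counter:
    """Count how many CPUs use each cpufreq scaling governor."""
    counts: Counter = Counter()
    # "cpu[0-9]*" skips sibling directories such as "cpufreq" and "cpuidle".
    for gov_file in Path(cpu_root).glob("cpu[0-9]*/cpufreq/scaling_governor"):
        counts[gov_file.read_text().strip()] += 1
    return counts


if __name__ == "__main__":
    summary = governor_summary()
    if not summary:
        print("No cpufreq governors found (OS may not be managing frequency).")
    for governor, cpus in summary.most_common():
        print(f"{governor}: {cpus} CPUs")
```

An empty result is one hint, though not proof, that the platform rather than the OS is controlling core frequency.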

Memory Frequency

Memory Frequency governs the BIOS memory frequency. The variables that govern maximum memory frequency include the maximum rated frequency of the DIMMs, the DIMMs per channel population, the processor choice, and this BIOS option. Additional power savings can be achieved by reducing the memory frequency at the expense of reduced performance. This will be Read-only unless System Profile is set to Custom.

Turbo Boost

Turbo Boost governs the Boost Technology. This feature allows the processor cores to be automatically clocked up in frequency beyond the advertised processor speed. The amount of increased frequency (or 'turbo upside') one can expect from an EPYC processor depends on the processor model, thermal limitations of the operating environment, and in some cases power consumption. In general terms, the fewer cores being exercised with work, the higher the potential turbo upside. The potential drawbacks for Boost are mainly centered on increased power consumption and possible frequency jitter that can affect a small minority of latency-sensitive environments. This will be Read-only unless System Profile is set to Custom.

Memory Patrol Scrub

Memory Patrol Scrubbing searches the memory for errors and repairs correctable errors to prevent the accumulation of memory errors. When set to Disabled, no patrol scrubbing will occur. When set to Standard Mode, the entire memory array will be scrubbed once in a 24-hour period. When set to Extended Mode, the entire memory array will be scrubbed more frequently to further increase system reliability. This will be Read-only unless System Profile is set to Custom.
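
To put Standard Mode in perspective, the average background scrub rate follows directly from the memory size and the 24-hour period. The sketch below works through that arithmetic for a 256 GiB system, which is an example size chosen for illustration; the function name is hypothetical, and the calculation assumes the scrub is spread evenly over the period.

```python
def patrol_scrub_rate_mib_s(memory_gib: float, period_hours: float = 24.0) -> float:
    """Average scrub rate in MiB/s needed to cover all memory once per period."""
    if period_hours <= 0:
        raise ValueError("period_hours must be positive")
    return memory_gib * 1024 / (period_hours * 3600)


# Example: 256 GiB scrubbed once every 24 hours.
print(f"{patrol_scrub_rate_mib_s(256):.2f} MiB/s")  # prints "3.03 MiB/s"
```

A more frequent scrub, as in Extended Mode, scales this rate up in proportion to the shorter period.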

Memory Refresh Rate

The memory controller will periodically refresh the data in memory. The frequency at which memory is normally refreshed is referred to as 1X refresh rate. When memory modules are operating at a higher-than-normal temperature, or to further increase system reliability, the refresh rate can be set to 2X; however, this may have a negative impact on memory subsystem performance under certain circumstances. This will be Read-only unless System Profile is set to Custom.

PCI ASPM L1 Link Power Management

When enabled, PCIe Active State Power Management (ASPM) can reduce overall system power while slightly reducing system performance.

Note: Some devices may not perform properly (they may hang or cause the system to hang) when ASPM is enabled; for this reason, L1 will only be enabled for validated qualified cards.

Determinism Slider

The Determinism Slider controls whether BIOS will enable determinism to control performance.

Performance Determinism: BIOS will enable 100% deterministic performance control.

Power Determinism: BIOS will not enable deterministic performance control.

Power Profile Select

High Performance Mode (default): Favors core performance. All DF P-States are available in this mode, and the default DF P-State and DLWM algorithms are active.

Efficiency Mode: Configures the system for power efficiency. Limits boost frequency available to cores and restricts DF P-States available in the system.

Maximum IO Performance Mode: Sets up Data Fabric to maximize IO sub-system performance.

Algorithm Performance Boost Disable (ApbDis)

When enabled, a specific hard-fused Data Fabric (SoC) P-state is forced for optimizing workloads sensitive to latency or throughput. For higher performance and power savings, when disabled, P-states will be automatically managed by the Application Power Management, allowing the processor to provide maximum performance while remaining within a specified power-delivery and thermal envelope.

ApbDis Fixed Socket P-State

This value defines the forced P-state when ApbDis is enabled.

Dynamic Link Width Management (DLWM)

DLWM reduces the XGMI link width between sockets from x16 to x8 (default) when no traffic is detected on the link. As with Data Fabric and Memory P-states, this feature is optimized to trade power between core and high IO/memory bandwidth workloads.

Forced: Force link width to x16, x8, or x2.

Unforced: Link width will be managed by DLWM engine.

System Memory Testing

System Memory Testing indicates whether or not the BIOS system memory tests are conducted during POST. When set to Enabled, memory tests are performed.

Note: Enabling this feature will result in a longer boot time. The extent of the increased time depends on the size of the system memory.

Dram Refresh Delay

Allowing the CPU memory controller to delay REFRESH commands can improve performance for some workloads. Minimizing the delay time ensures that the memory controller runs the REFRESH command at regular intervals. For Intel-based servers, this setting only affects systems configured with DIMMs that use 8 Gb density DRAMs.

Correctable Memory ECC SMI

Allows the system to log ECC-corrected DRAM errors to the System Event Log (SEL). Logging these rare errors can help identify marginal components; however, the system pauses for a few milliseconds after an error while the log entry is created. Latency-conscious customers may want to disable the feature. Spare Mode and Mirror Mode require this feature to be enabled.

DIMM Self Healing (Post Package Repair) on Uncorrectable Memory Error

Enable/Disable Post Package Repair (PPR) on Uncorrectable Memory Error.

Correctable Error Logging

Enable/Disable logging of correctable memory threshold error.

Logical Processor

Each processor core supports up to two logical processors. When set to Enabled, the BIOS reports all logical processors. When set to Disabled, the BIOS only reports one logical processor per core. Generally, a higher processor count results in increased performance for most multi-threaded workloads, and the recommendation is to keep this enabled. However, there are some floating point/scientific workloads, including HPC workloads, where disabling this feature may result in higher performance.
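The effect of this setting on the processor count seen by the operating system can be sketched as simple arithmetic. This is an illustrative sketch only; the function name and the example socket and core counts are assumptions, not values taken from a specific PowerEdge configuration.

```python
def reported_logical_processors(sockets: int,
                                cores_per_socket: int,
                                logical_processor_enabled: bool) -> int:
    """Return the number of logical processors the BIOS would report.

    Assumes every core supports two logical processors, as described above.
    """
    threads_per_core = 2 if logical_processor_enabled else 1
    return sockets * cores_per_socket * threads_per_core


# Hypothetical 2-socket system with 64 cores per socket.
print(reported_logical_processors(2, 64, True))   # Logical Processor Enabled
print(reported_logical_processors(2, 64, False))  # Logical Processor Disabled
```

Comparing the two counts against the thread scaling of a given workload is one way to decide whether the Disabled setting is worth testing.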

Virtualization Technology

When set to Enabled, the BIOS will enable processor Virtualization features and provide the virtualization support to the Operating System (OS) through the DMAR table. In general, only virtualized environments such as VMware® ESX™, Microsoft Hyper-V®, Red Hat® KVM, and other virtualized operating systems will take advantage of these features. Disabling this feature is not known to significantly alter the performance or power characteristics of the system, so leaving this option Enabled is advised for most cases.

IOMMU Support

Enable or Disable IOMMU support. Required to create IVRS ACPI Table.

Kernel DMA Protection

When set to Enabled, using IOMMU, BIOS & Operating System will enable direct memory access protection for DMA-capable peripheral devices. Enable IOMMU Support to use this option.

L1 Stream HW Prefetcher

When set to Enabled, the processor provides advanced performance tuning by controlling the L1 stream HW prefetcher setting. Use the recommended setting, and this option will allow for optimizing overall workloads.

L2 Stream HW Prefetcher

When set to Enabled, the processor provides advanced performance tuning by controlling the L2 stream HW prefetcher setting. Use the recommended setting, and this option will allow for optimizing overall workloads.

L1 Stride Prefetcher

When set to Enabled, the processor provides additional fetch to the data access for an individual instruction for performance tuning by controlling the L1 stride prefetcher setting. Use the recommended setting, and this option will allow for optimizing overall workloads.

L1 Region Prefetcher

When set to Enabled, the processor provides additional fetch to data along with the data access to the given instruction for performance tuning by controlling the L1 region prefetcher setting. Use the recommended setting, and this option will allow for optimizing overall workloads.

L2 Up Down Prefetcher

When set to Enabled, the processor uses memory access to determine whether to fetch next or previous for all memory accesses for advanced performance tuning by controlling the L2 up/down prefetcher setting. Use the recommended setting, and this option will allow for optimizing overall workloads.

MADT Core Enumeration

This field determines how BIOS enumerates processor cores in the ACPI MADT table. When set to Round Robin, processor cores are enumerated in a Round Robin order to evenly distribute interrupt controllers for the OS across all Sockets and Dies. When set to Linear, processor cores are enumerated across all Dies within a Socket before enumerating additional Sockets for a linear distribution of interrupt controllers for the OS.
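The difference between the two enumeration orders can be illustrated with a small model. The sketch below is an assumption made for illustration: it represents each core as a hypothetical (socket, die, core) tuple and uses one plausible interleaving for Round Robin. The exact order produced by the BIOS may differ.

```python
from typing import List, Tuple

Core = Tuple[int, int, int]  # (socket, die, core within die), hypothetical model


def linear_order(sockets: int, dies_per_socket: int, cores_per_die: int) -> List[Core]:
    """Enumerate every die of one socket before moving to the next socket."""
    return [(s, d, c)
            for s in range(sockets)
            for d in range(dies_per_socket)
            for c in range(cores_per_die)]


def round_robin_order(sockets: int, dies_per_socket: int, cores_per_die: int) -> List[Core]:
    """Rotate across sockets and dies so consecutive entries land on different ones."""
    return [(s, d, c)
            for c in range(cores_per_die)
            for d in range(dies_per_socket)
            for s in range(sockets)]


# Hypothetical topology: 2 sockets, 2 dies per socket, 2 cores per die.
print(linear_order(2, 2, 2)[:4])
print(round_robin_order(2, 2, 2)[:4])
```

In this model the first four Linear entries all belong to socket 0, while the first four Round Robin entries touch every socket and die once.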

NUMA Nodes Per Socket

This field specifies the number of NUMA nodes per socket. The Zero option is for 2-socket configurations.

L3 cache as NUMA Domain

This field specifies that each CCX within the processor will be declared as a NUMA Domain.

Secure Memory Encryption

This field enables or disables AMD secure encryption features such as Secure Memory Encryption (SME) and Secure Encrypted Virtualization (SEV). In addition to enabling this option, SME must be supported and activated by the operating system. Similarly, SEV must be supported and activated by the hypervisor. This option also determines whether other secure encryption features, such as TSME and SEV-SNP, can be enabled.

Minimum SEV non-ES ASID

This field sets the dividing line between the Address Space IDs (ASIDs) available to Secure Encrypted Virtualization (SEV) guests with Encrypted State (ES) and those available to non-ES guests. With SEV-ES, the register save state area is encrypted along with the entire guest memory area. The maximum number of ASIDs available depends on the installed CPU and memory configuration and can be 15, 253, or 509. The default value is 1. The value entered is the first non-ES ASID: non-ES ASIDs run from that value up to the maximum number of ASIDs available, and the ASIDs below it are ES ASIDs. A value of 1 therefore means that only non-ES ASIDs are available.

For example, if the maximum number of ASIDs is 15, the default value 1 gives 15 SEV non-ES ASIDs and 0 SEV ES ASIDs, while the value 4 gives 12 SEV non-ES ASIDs and 3 SEV ES ASIDs. If the maximum number of ASIDs is 509, the value 40 gives 470 SEV non-ES ASIDs and 39 SEV ES ASIDs.
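The ASID arithmetic can be checked with a short helper. This is a minimal sketch; the function name `split_sev_asids` and its error handling are assumptions for illustration and are not part of any Dell or AMD tool.

```python
from typing import Tuple


def split_sev_asids(max_asids: int, min_non_es_asid: int = 1) -> Tuple[int, int]:
    """Return (non_es_count, es_count) for a given Minimum SEV non-ES ASID.

    Non-ES ASIDs run from min_non_es_asid to max_asids inclusive;
    ES ASIDs are the ones below min_non_es_asid.
    """
    if not 1 <= min_non_es_asid <= max_asids:
        raise ValueError("Minimum SEV non-ES ASID must be between 1 and max_asids")
    non_es_count = max_asids - min_non_es_asid + 1
    es_count = min_non_es_asid - 1
    return non_es_count, es_count


# The three cases described in the text.
print(split_sev_asids(15, 1))    # (15, 0)
print(split_sev_asids(15, 4))    # (12, 3)
print(split_sev_asids(509, 40))  # (470, 39)
```

The two counts always add up to the maximum number of ASIDs, so raising the minimum non-ES ASID trades non-ES capacity for ES capacity one for one.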

Secure Nested Paging

This option enables or disables SEV-SNP, a set of additional security protections.

SNP Memory Coverage

This option selects the operating mode of the Secure Nested Paging (SNP) Memory and the Reverse Map Table (RMP). The RMP is used to ensure a one-to-one mapping between system physical addresses and guest physical addresses.

Transparent Secure Memory Encryption

This field enables or disables Transparent Secure Memory Encryption (TSME). TSME is always-on memory encryption that does not require operating system or hypervisor support. If the operating system supports SME, this field does not need to be enabled. If the hypervisor supports SEV, this field does not need to be enabled. Enabling TSME affects system memory performance.

ACPI CST C2 Latency

Enter a decimal value from 18 to 1000 microseconds. Larger C2 latency values reduce the number of C2 transitions and reduce C2 residency. Fewer transitions can help when performance is sensitive to the latency of C2 entry and exit. Higher residency can improve performance by allowing higher frequency boost, and it reduces idle core power. With Linux kernel 6.0 or later, the C2 transition cost is significantly reduced. The best value depends on kernel version, use case, and workload.
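When tuning this value on Linux, it can help to see which idle-state latencies the operating system currently reports. The sketch below reads the standard cpuidle sysfs files (`name` and `latency`, in microseconds) for one CPU. It assumes a Linux host that exposes cpuidle in sysfs; whether the reported C2 latency matches the BIOS value depends on the idle driver in use, which is an assumption to verify on the target system.

```python
from pathlib import Path
from typing import Dict


def read_cpuidle_latencies(
        cpuidle_dir: str = "/sys/devices/system/cpu/cpu0/cpuidle") -> Dict[str, int]:
    """Map each idle-state name to its exit latency in microseconds.

    Returns an empty dict if the directory does not exist
    (for example, on a non-Linux host).
    """
    latencies: Dict[str, int] = {}
    for state_dir in sorted(Path(cpuidle_dir).glob("state*")):
        name = (state_dir / "name").read_text().strip()
        latency_us = int((state_dir / "latency").read_text().strip())
        latencies[name] = latency_us
    return latencies


if __name__ == "__main__":
    for state_name, latency_us in read_cpuidle_latencies().items():
        print(f"{state_name}: {latency_us} us")
```

Running this before and after a BIOS change gives a quick check of what the kernel sees, alongside the workload measurements that determine the best value.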

Configurable TDP

Configurable TDP allows the reconfiguration of the processor Thermal Design Power (TDP) levels based on the power and thermal delivery capabilities of the system. TDP refers to the maximum amount of power the cooling system is required to dissipate.

Note: This option is only available on certain SKUs of the processors, and the number of alternative levels varies as well.

x2APIC Mode

Enable or Disable x2APIC mode. Compared to the traditional xAPIC architecture, x2APIC extends processor addressability and enhances interrupt delivery performance.

Number of CCDs per Processor

This field sets the number of CCDs enabled per processor.

Number of Cores per CCD

This field sets the number of cores enabled per CCD.

Authors: Charan Soppadandi, Chris Cote, Donald Russell, Kavya AR