OneFS System Configuration Auditing

Thu, 18 Apr 2024 04:55:18 -0000

Read Time: 0 minutes

OneFS auditing can detect potential sources of data loss, fraud, inappropriate entitlements, access attempts that should not occur, and a range of other anomalies that are indicators of risk. This can be especially useful when the audit associates data access with specific user identities.

In the interests of data security, OneFS provides “chain of custody” auditing by logging specific activity on the cluster. This includes OneFS configuration changes plus NFS, SMB, and HDFS client protocol activity, both of which are required for organizational IT security compliance under regulations and standards such as HIPAA, SOX, FISMA, MPAA, and more.

OneFS auditing uses Dell’s Common Event Enabler (CEE) to provide compatibility with external audit applications. A cluster can write audit events across up to five CEE servers per node in a parallel, load-balanced configuration. This allows OneFS to deliver an end-to-end, enterprise-grade audit solution that integrates efficiently with third-party solutions like Varonis DatAdvantage.

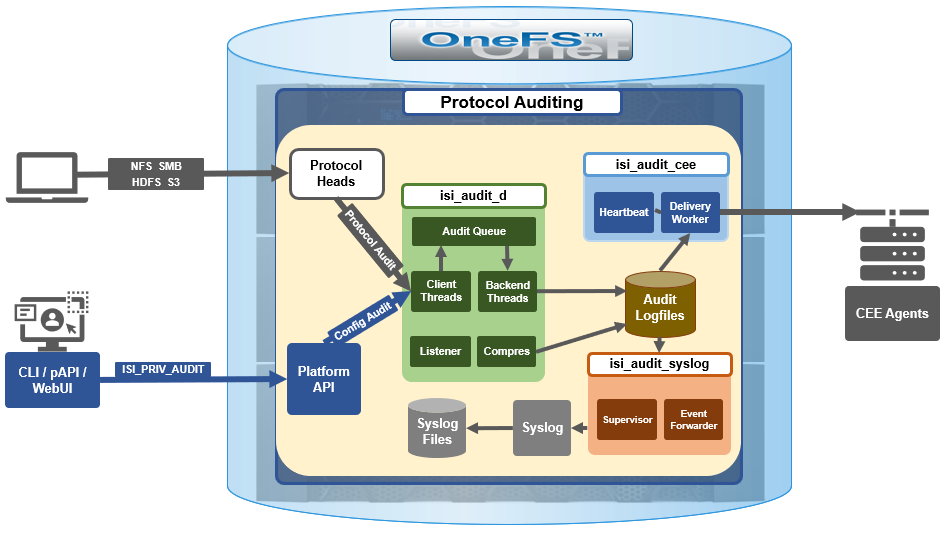

The following diagram outlines the basic architecture of OneFS audit:

Both system configuration changes and protocol activity can be easily audited on a PowerScale cluster. However, the protocol path is greyed out above, since it is outside the focus of this article. More information on OneFS protocol auditing can be found here.

As illustrated above, the OneFS audit framework is centered around three main services.

Service | Description |

isi_audit_cee | Service allowing OneFS to support third-party auditing applications. The main method of accessing protocol audit data from OneFS is through a third-party auditing application. |

isi_audit_d | Responsible for per-node audit queues and managing the data store for those queues. It provides a protocol on which clients may produce event payloads within a given context. It establishes a Unix domain socket for queue producers and handles writing and rotation of log files in /ifs/.ifsvar/audit/logs/node###/{config,protocol}/*. |

isi_audit_syslog | Daemon providing forwarding of audit config and protocol events to syslog. |

The basic configuration auditing workflow sees a cluster config change request come in via either the OneFS CLI, WebUI or platform API. The API handler infrastructure passes this request to the isi_audit_d service which intercepts it as a client thread and adds it to the audit queue. It is then processed and passed via a backend thread and written to the audit log files (IFS) as appropriate.

If audit syslog forwarding has been configured, IFS also passes the event to the isi_audit_syslog daemon, where a supervisor process instructs a writer thread to send it to the syslog which in turn updates its pertinent /var/log/ logfiles.

Similarly, if Common Event Enabler (CEE) forwarding has been enabled, IFS will also pass the request to the isi_audit_cee service, where a delivery worker thread will intercept it and send the event to the CEE server pool. The isi_audit_cee heartbeat task makes CEE servers available for audit event delivery: every ten seconds, the heartbeat task wakes up and sends a heartbeat to each CEE server in the configuration, and only after a CEE server has received a successful heartbeat will audit events be delivered to it. While CEE servers are available and events are in memory, an attempt will be made to deliver them. At shutdown, the audit log position is only saved if all the events have been delivered to CEE, since audit should not lose events. It isn't critical that all events are delivered at shutdown, because any unsaved events can be resent to CEE on the next start of isi_audit_cee, and CEE handles duplicates.
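The heartbeat-gated delivery described above can be pictured with a small Python toy model. This is only a sketch of the behavior as the text describes it, not the isi_audit_cee implementation: the class name, the server names, and the round-robin choice (standing in for "load-balanced") are all assumptions made for illustration.

```python
from collections import deque


class CeeDeliverySketch:
    """Toy model of heartbeat-gated audit event delivery (not OneFS code)."""

    HEARTBEAT_INTERVAL_SECONDS = 10  # interval stated in the text above

    def __init__(self, servers):
        # No server is eligible for delivery until a heartbeat succeeds.
        self.available = {server: False for server in servers}
        self.pending = deque()   # events held in memory
        self.delivered = []      # (server, event) pairs, in delivery order
        self.saved_position = 0  # last position persisted at shutdown

    def enqueue(self, event):
        self.pending.append(event)

    def heartbeat(self, probe):
        """Run one heartbeat pass; probe(server) returns True on success."""
        for server in self.available:
            self.available[server] = bool(probe(server))

    def deliver(self):
        """Deliver pending events to available servers; return the count sent."""
        ready = [s for s, ok in self.available.items() if ok]
        sent = 0
        while ready and self.pending:
            # Round-robin is an assumption standing in for "load-balanced".
            server = ready[sent % len(ready)]
            self.delivered.append((server, self.pending.popleft()))
            sent += 1
        return sent

    def shutdown(self):
        """Persist the log position only if nothing is left undelivered."""
        if not self.pending:
            self.saved_position = len(self.delivered)
        return self.saved_position


pool = CeeDeliverySketch(["cee-a", "cee-b"])      # hypothetical server names
for event in ("e1", "e2", "e3"):
    pool.enqueue(event)
print(pool.deliver())                             # nothing sent before a heartbeat
pool.heartbeat(lambda server: server == "cee-a")  # only cee-a responds
print(pool.deliver())                             # all pending events go to cee-a
print(pool.shutdown())                            # position saved: queue is empty
```

The point of the model is the ordering: events stay in memory until at least one server has passed a heartbeat, and the saved position only advances once the queue has drained.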

Within OneFS, all audit data is organized by topic and is securely stored in the file system.

# isi audit topics list

Name Max Cached Messages

-----------------------------

protocol 2048

config 1024

-----------------------------

Total: 2

Auditing can detect a variety of potential sources of data loss. These include unauthorized access attempts, inappropriate entitlements, plus a bevy of other fraudulent activities that plague organizations across the gamut of industries. Enterprises are increasingly required to comply with stringent regulatory mandates developed to protect against these sources of data theft and loss.

OneFS system configuration auditing is designed to track and record all configuration events that are handled by the API through the command-line interface (CLI).

# isi audit topics view config

Name: config

Max Cached Messages: 1024

Once enabled, system configuration auditing requires no additional configuration, and auditing events are automatically stored in the config audit topic directories. Audit access and management is governed by the ‘ISI_PRIV_AUDIT’ RBAC privilege, and OneFS provides a default ‘AuditAdmin’ role for this purpose.

Audit events are stored in a binary file under /ifs/.ifsvar/audit/logs. The logs automatically roll over to a new file after the size reaches 1 GB. The audit logs are consumable by auditing applications that support the Dell Common Event Enabler (CEE).

OneFS audit topics and settings can easily be viewed and modified. For example, to increase the configuration auditing maximum cached messages threshold to 2048 from the CLI:

# isi audit topics modify config --max-cached-messages 2048

# isi audit topics view config

Name: config

Max Cached Messages: 2048

Audit configuration can also be modified or viewed per access zone and/or topic.

Operation | CLI Syntax | Method and URI |

Get audit settings | isi audit settings view | GET <cluster-ip:port>/platform/3/audit/settings |

Modify audit settings | isi audit settings modify … | PUT <cluster-ip:port>/platform/3/audit/settings |

View JSON schema for this resource, including query parameters and object properties info. | | GET <cluster-ip:port>/platform/3/audit/settings?describe |

View JSON schema for this resource, including query parameters and object properties info. | | GET <cluster-ip:port>/platform/1/audit/topics?describe |

Configuration auditing can be enabled on a cluster from either the CLI or platform API. The current global audit configuration can be viewed as follows:

# isi audit settings global view

Protocol Auditing Enabled: No

Audited Zones: -

CEE Server URIs: -

Hostname:

Config Auditing Enabled: No

Config Syslog Enabled: No

Config Syslog Servers: -

Config Syslog TLS Enabled: No

Config Syslog Certificate ID:

Protocol Syslog Servers: -

Protocol Syslog TLS Enabled: No

Protocol Syslog Certificate ID:

System Syslog Enabled: No

System Syslog Servers: -

System Syslog TLS Enabled: No

System Syslog Certificate ID:

Auto Purging Enabled: No

Retention Period: 180

System Auditing Enabled: No

In this case, configuration auditing is disabled – its default setting. The following CLI syntax will enable (and verify) configuration auditing across the cluster:

# isi audit settings global modify --config-auditing-enabled 1

# isi audit settings global view | grep -i 'config audit'

Config Auditing Enabled: Yes
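When the same check needs to be scripted, the "Key: Value" layout of the view output is simple to parse. The following Python sketch assumes the output has already been captured as text (for example, over SSH); the function name is hypothetical, and the sample is an abridged copy of the output shown above.

```python
def parse_settings_view(output):
    """Turn 'Key: Value' lines into a dict, mapping Yes/No to booleans."""
    settings = {}
    for line in output.splitlines():
        # Split on the first colon only, so URI values keep their colons.
        key, sep, value = line.partition(":")
        key, value = key.strip(), value.strip()
        if not sep or not key:
            continue
        settings[key] = {"Yes": True, "No": False}.get(value, value)
    return settings


sample = """\
Protocol Auditing Enabled: No
CEE Server URIs: -
Hostname:
Config Auditing Enabled: Yes
Retention Period: 180
"""

settings = parse_settings_view(sample)
print(settings["Config Auditing Enabled"])
```

Values other than Yes and No are left as strings, so fields such as the retention period or an empty hostname are returned exactly as printed.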

To enable configuration change audit redirection to syslog:

# isi audit settings global modify --config-auditing-enabled true

# isi audit settings global modify --config-syslog-enabled true

# isi audit settings global view | grep -i 'config audit'

Config Auditing Enabled: Yes

Similarly, to disable configuration change audit redirection to syslog:

# isi audit settings global modify --config-syslog-enabled false

# isi audit settings global modify --config-auditing-enabled false

Configure audit:

2. # isi audit settings modify --add-cee-server-uris='http://seavee5.west.isilon.com:12228/cee'

4. # isi audit settings modify --add-audited-zones=auditgti

4'. Alternatively, to audit less, remove the System zone from the audited zones:

# isi audit settings modify --remove-audited-zones=System

Configure the access zone:

3. # isi zone zones create --all-auth-providers=true --audit-failure=all --audit-success=all --path=/ifs/data --name=auditgti

3'. Alternatively, to audit fewer event types:

# isi zone zones create --all-auth-providers=true --audit-failure=read,logon --audit-success=write,delete --path=/ifs/data --name=auditgti

Configure the network pool:

5. # isi network create pool --name=subnet0:auditpool --access-zone=auditgti --iface=<your interface> --range=<your range>

5'. The System zone can also be audited by default, in which case this step can be skipped.

Other settings:

# isi audit settings modify --hostname="<any name you want; this is simply inserted into the payload>"

# isi audit settings modify --cee-log-time="Protocol@1900-01-01 00:00:01"

The platform API can also be used to configure and manage auditing. For example, to enable configuration auditing on a cluster:

PUT /platform/1/audit/settings

Authorization: Basic QWxhZGRpbjpvcGVuIHN1c2FtZQ==

{

"config_auditing_enabled": true

}

Response example

The HTTP ‘204’ response code from the cluster indicates that the request was successful, and that configuration auditing is now enabled on the cluster. No message body is returned for this request.

204 No Content

Content-type: text/plain,

Allow: 'GET, PUT, HEAD'

Similarly, to modify the config audit topic’s maximum cached messages threshold to a value of ‘1000’ via the API:

PUT /platform/1/audit/topics/config

Authorization: Basic QWxhZGRpbjpvcGVuIHN1c2FtZQ==

{

"max_cached_messages": 1000

}

Again, no message body is returned from OneFS for this request.

204 No Content

Content-type: text/plain,

Allow: 'GET, PUT, HEAD'
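For scripted use, the two PUT requests above can be assembled with Python's standard library. The sketch below only builds the request objects and does not contact a cluster. The base URL, user name, and password are placeholders, and the helper name is hypothetical; both paths are assumed to sit under the /platform prefix used by the first request.

```python
import base64
import json
import urllib.request


def build_audit_put(base_url, path, body, username, password):
    """Build (but do not send) a JSON PUT request with HTTP basic auth."""
    credentials = base64.b64encode(f"{username}:{password}".encode()).decode()
    request = urllib.request.Request(
        url=base_url.rstrip("/") + path,
        data=json.dumps(body).encode("utf-8"),
        method="PUT",
    )
    request.add_header("Authorization", f"Basic {credentials}")
    request.add_header("Content-Type", "application/json")
    return request


BASE_URL = "https://cluster.example.com:8080"  # placeholder cluster address

enable_config_audit = build_audit_put(
    BASE_URL, "/platform/1/audit/settings",
    {"config_auditing_enabled": True}, "admin", "secret",  # placeholder login
)
set_cached_messages = build_audit_put(
    BASE_URL, "/platform/1/audit/topics/config",
    {"max_cached_messages": 1000}, "admin", "secret",
)

print(enable_config_audit.get_method(), enable_config_audit.full_url)
print(enable_config_audit.data.decode())
```

Passing either object to urllib.request.urlopen() would send it; on success the cluster is expected to answer with the 204 response shown above. Note that json.dumps() emits the lowercase true that JSON requires.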

Note that, in the unlikely event that a cluster experiences an outage during which it loses quorum, auditing will be suspended until it is regained. Events similar to the following will be written to the /var/log/audit_d.log file:

940b5c700]: Lost quorum! Audit logging will be disabled until /ifs is writeable again.

2023-08-28T15:37:32.132780+00:00 <1.6> TME-1(id1) isi_audit_d[6495]: [0x345940b5c700]: Regained quorum. Logging resuming.
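If these messages need to be picked out of a copy of the log, a simple scan for the two phrases is enough. The Python sketch below is an illustration only: the function name is hypothetical, and it assumes that each complete line begins with an ISO 8601 timestamp, as in the second message above. Lines without a parsable timestamp, such as the truncated first message, are reported with no time.

```python
from datetime import datetime


def quorum_transitions(lines):
    """Return (state, timestamp-or-None) for each quorum message found."""
    transitions = []
    for line in lines:
        if "Lost quorum" in line:
            state = "lost"
        elif "Regained quorum" in line:
            state = "regained"
        else:
            continue
        first_field = line.split(maxsplit=1)[0]
        try:
            when = datetime.fromisoformat(first_field)
        except ValueError:
            when = None  # line has no leading ISO 8601 timestamp
        transitions.append((state, when))
    return transitions


log_lines = [
    "940b5c700]: Lost quorum! Audit logging will be disabled until /ifs is writeable again.",
    "2023-08-28T15:37:32.132780+00:00 <1.6> TME-1(id1) isi_audit_d[6495]: "
    "[0x345940b5c700]: Regained quorum. Logging resuming.",
]

for state, when in quorum_transitions(log_lines):
    print(state, when)
```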

When it comes to reading audit events on the cluster, OneFS natively provides the handy ‘isi_audit_viewer’ utility. For example, the following audit viewer output shows the events logged when the cluster admin added the ‘/ifs/tmp’ path to the SmartDedupe configuration, and created a new user named ‘test1’:

# isi_audit_viewer

[0: Tue Aug 29 23:01:16 2023] {"id":"f54a6bec-46bf-11ee-920d-0060486e0a26","timestamp":1693350076315499,"payload":{"user":{"token": {"UID":0, "GID":0, "SID": "SID:S-1-22-1-0", "GSID": "SID:S-1-22-2-0", "GROUPS": ["SID:S-1-5-11", "GID:5", "GID:10", "GID:20", "GID:70"], "protocol": 17, "zone id": 1, "client": "10.135.6.255", "local": "10.219.64.11" }},"uri":"/1/dedupe/settings","method":"PUT","args":{}

,"body":{"paths":["/ifs/tmp"]}

}}

[1: Tue Aug 29 23:01:16 2023] {"id":"f54a6bec-46bf-11ee-920d-0060486e0a26","timestamp":1693350076391422,"payload":{"status":204,"statusmsg":"No Content","body":{}}}

[2: Tue Aug 29 23:03:43 2023] {"id":"4cfce7a5-46c0-11ee-920d-0060486e0a26","timestamp":1693350223446993,"payload":{"user":{"token": {"UID":0, "GID":0, "SID": "SID:S-1-22-1-0", "GSID": "SID:S-1-22-2-0", "GROUPS": ["SID:S-1-5-11", "GID:5", "GID:10", "GID:20", "GID:70"], "protocol": 17, "zone id": 1, "client": "10.135.6.255", "local": "10.219.64.11" }},"uri":"/18/auth/users","method":"POST","args":{}

,"body":{"name":"test1"}

}}

[3: Tue Aug 29 23:03:43 2023] {"id":"4cfce7a5-46c0-11ee-920d-0060486e0a26","timestamp":1693350223507797,"payload":{"status":201,"statusmsg":"Created","body":{"id":"SID:S-1-5-21-593535466-4266055735-3901207217-1000"}

}}

The audit log entries, such as those above, typically comprise the following components:

- Timestamp (Human readable)
- Unique Entry ID
- Timestamp (Unix Epoch Time)
- Node Number
- The user tokens of the person executing the command
  - User persona (Unix/Windows)
  - Primary group persona (Unix/Windows)
  - Supplemental group personas (Unix/Windows)
  - RBAC privileges of the person executing the command
- Interface used to generate the command
  - 10 = PAPI / WebUI
  - 16 = Console
  - 17 = SSH
- Access Zone that the command was executed against
- Where the user connected from
- The local node address where the command was executed
- Command
- Command arguments
- Command body
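To make these components concrete, the viewer output can be parsed with a few lines of Python. This is a sketch based only on the sample output above: it assumes each record starts with a "[N: date]" prefix followed by JSON that may continue on the following lines, and that a request and its response share the same id. The function names are hypothetical, and the sample record is abridged (the SID and group fields are dropped from the token).

```python
import json
import re
from datetime import datetime, timezone

RECORD_START = re.compile(r"^\[(\d+): [^\]]+\] (.*)$")
INTERFACES = {10: "PAPI / WebUI", 16: "Console", 17: "SSH"}  # codes listed above


def parse_audit_viewer(text):
    """Return one dict per '[N: date] {json}' record; JSON may span lines."""
    records, fragments = [], None
    for line in text.splitlines():
        match = RECORD_START.match(line)
        if match:
            if fragments is not None:
                records.append(json.loads("".join(fragments)))
            fragments = [match.group(2)]
        elif fragments is not None and line.strip():
            fragments.append(line.strip())
    if fragments is not None:
        records.append(json.loads("".join(fragments)))
    return records


def summarize(records):
    """Merge each request event with the response event sharing its id."""
    merged = {}
    for record in records:
        entry = merged.setdefault(record["id"], {})
        payload = record["payload"]
        if "method" in payload:  # request half of the pair
            token = payload["user"]["token"]
            micros = record["timestamp"]  # microseconds since the Unix epoch
            when = datetime.fromtimestamp(micros // 1_000_000, tz=timezone.utc)
            entry.update(
                when=when.replace(microsecond=micros % 1_000_000),
                method=payload["method"],
                uri=payload["uri"],
                body=payload.get("body"),
                interface=INTERFACES.get(token["protocol"], "unknown"),
                client=token["client"],
            )
        else:  # response half of the pair
            entry["status"] = payload.get("status")
    return list(merged.values())


SAMPLE = """\
[0: Tue Aug 29 23:01:16 2023] {"id":"f54a6bec-46bf-11ee-920d-0060486e0a26","timestamp":1693350076315499,"payload":{"user":{"token": {"UID":0, "GID":0, "protocol": 17, "zone id": 1, "client": "10.135.6.255", "local": "10.219.64.11" }},"uri":"/1/dedupe/settings","method":"PUT","args":{}
,"body":{"paths":["/ifs/tmp"]}
}}
[1: Tue Aug 29 23:01:16 2023] {"id":"f54a6bec-46bf-11ee-920d-0060486e0a26","timestamp":1693350076391422,"payload":{"status":204,"statusmsg":"No Content","body":{}}}
"""

for event in summarize(parse_audit_viewer(SAMPLE)):
    print(event["when"].isoformat(), event["interface"], event["method"],
          event["uri"], event["body"], "->", event["status"])
```

Pairing on the id gives one line per configuration change, showing who connected from where, what was sent, and how the cluster answered.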

The ‘isi_audit_viewer’ utility reads the ‘config’ log topic by default, but can also be used to read the ‘protocol’ log topic. Its CLI command syntax is as follows:

# isi_audit_viewer -h

Usage: isi_audit_viewer [ -n <nodeid> | -t <topic> | -s <starttime>|

-e <endtime> | -v ]

-n <nodeid> : Specify node id to browse (default: local node)

-t <topic> : Choose topic to browse.

Topics are "config" and "protocol" (default: "config")

-s <start> : Browse audit logs starting at <starttime>

-e <end> : Browse audit logs ending at <endtime>

-v verbose : Prints out start / end time range before printing

records

Note that, on large clusters with heavy audit write activity (up to hundreds of thousands of events), running the isi_audit_viewer utility across the cluster with ‘isi_for_array’ can potentially lead to memory starvation and other issues, especially if outputting to a directory under /ifs. As such, consider directing the output to a non-IFS location such as /var/temp. Also, the isi_audit_viewer ‘-s’ (start time) and ‘-e’ (end time) flags can be used to limit a search to a short window (for example, 1-5 minutes), helping reduce the volume of data returned.

In addition to reading audit events, the viewer is also a useful tool for troubleshooting auditing issues. Any errors that are encountered while processing audit events, or when delivering them to an external CEE server, are written to the log file ‘/var/log/isi_audit_cee.log’. Additionally, the protocol-specific logs will contain any issues that the audit filter encountered while collecting audit events.

Related Blog Posts

OneFS and HTTP Security

Mon, 22 Apr 2024 20:35:30 -0000

To enable granular HTTP security configuration, OneFS provides an option to disable nonessential HTTP components selectively. This can help reduce the overall attack surface of your infrastructure. Disabling a specific component’s service still allows other essential services on the cluster to continue to run unimpeded. In OneFS 9.4 and later, you can disable the following nonessential HTTP services:

Service | Description |

PowerScaleUI | The OneFS WebUI configuration interface. |

Platform-API-External | External access to the OneFS platform API endpoints. |

Rest Access to Namespace (RAN) | REST-ful access by HTTP to a cluster’s /ifs namespace. |

RemoteService | Remote Support and In-Product Activation. |

SWIFT (deprecated) | Deprecated object access to the cluster using the SWIFT protocol. This has been replaced by the S3 protocol in OneFS. |

You can enable or disable each of these services independently, using the CLI or platform API, if you have a user account with the ISI_PRIV_HTTP RBAC privilege.

You can use the isi http services CLI command set to view and modify the nonessential HTTP services:

# isi http services list

ID Enabled

------------------------------

Platform-API-External Yes

PowerScaleUI Yes

RAN Yes

RemoteService Yes

SWIFT No

------------------------------

Total: 5

For example, you can easily disable remote HTTP access to the OneFS /ifs namespace as follows:

# isi http services modify RAN --enabled=0

You are about to modify the service RAN. Are you sure? (yes/[no]): yes

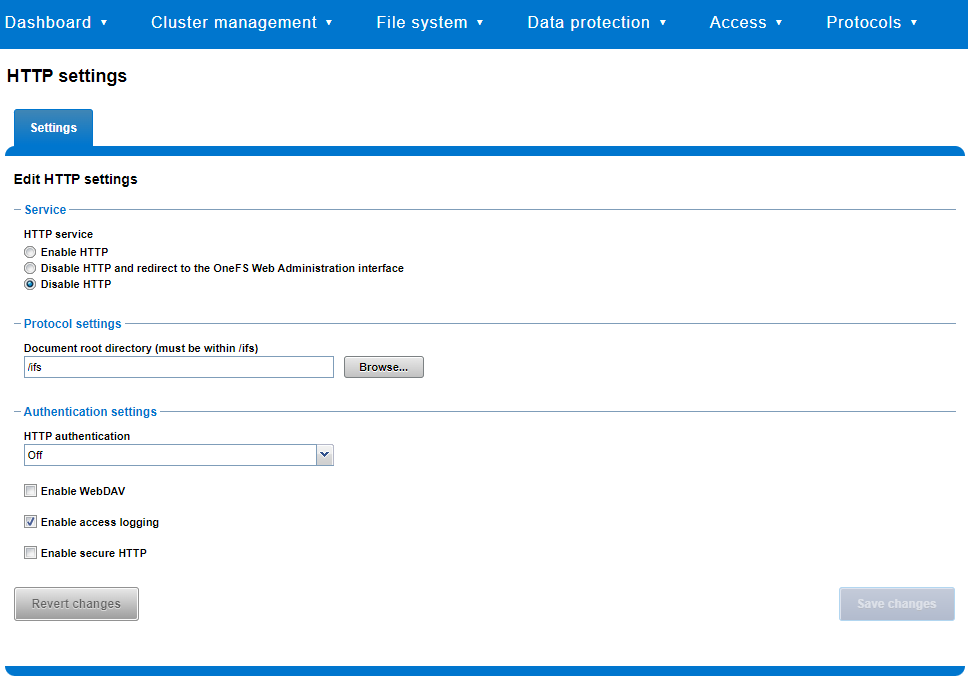

Similarly, you can also use the WebUI to view and edit a subset of the HTTP configuration settings, by navigating to Protocols > HTTP settings:

That said, the implications and impact of disabling each of the services is as follows:

Service | Disabling impacts |

WebUI | The WebUI is completely disabled, and access attempts (default TCP port 8080) are denied with the warning Service Unavailable. Please contact Administrator. If the WebUI is re-enabled, the external platform API service (Platform-API-External) is also started if it is not running. Note that disabling the WebUI does not affect the PlatformAPI service. |

Platform API | External API requests to the cluster are denied, and the WebUI is disabled, because it uses the Platform-API-External service. Note that the Platform-API-Internal service is not impacted if/when the Platform-API-External is disabled, and internal pAPI services continue to function as expected. If the Platform-API-External service is re-enabled, the WebUI will remain inactive until the PowerScaleUI service is also enabled. |

RAN | If RAN is disabled, the WebUI components for File System Explorer and File Browser are also automatically disabled. From the WebUI, attempts to access the OneFS file system explorer (File System > File System Explorer) fail with the warning message Browse is disabled as RAN service is not running. Contact your administrator to enable the service. This same warning also appears when attempting to access any other WebUI components that require directory selection. |

RemoteService | If RemoteService is disabled, the WebUI components for Remote Support and In-Product Activation are disabled. In the WebUI, going to Cluster Management > General Settings and selecting the Remote Support tab displays the message The service required for the feature is disabled. Contact your administrator to enable the service. In the WebUI, going to Cluster Management > Licensing and scrolling to the License Activation section displays the message The service required for the feature is disabled. Contact your administrator to enable the service. |

SWIFT | Deprecated object protocol and disabled by default. |
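The dependencies in this table can be summarized in a few lines of Python. The sketch below is a toy model of the table only and does not talk to a cluster: the function names are hypothetical, and the rules encoded are just the ones stated above (the WebUI needs both PowerScaleUI and Platform-API-External, re-enabling PowerScaleUI also starts Platform-API-External, and RAN and RemoteService gate specific WebUI components).

```python
def enable_service(enabled, name):
    """Return the enabled set after turning one service on."""
    updated = set(enabled) | {name}
    if name == "PowerScaleUI":
        # Per the table, re-enabling the WebUI also starts the external API.
        updated.add("Platform-API-External")
    return updated


def disable_service(enabled, name):
    """Return the enabled set after turning one service off."""
    return set(enabled) - {name}


def webui_features(enabled):
    """Report which WebUI-facing features are usable for an enabled set."""
    enabled = set(enabled)
    webui = {"PowerScaleUI", "Platform-API-External"} <= enabled
    return {
        "WebUI": webui,
        "File System Explorer": webui and "RAN" in enabled,
        "Remote Support": webui and "RemoteService" in enabled,
        "In-Product Activation": webui and "RemoteService" in enabled,
    }


defaults = {"Platform-API-External", "PowerScaleUI", "RAN", "RemoteService"}

print(webui_features(disable_service(defaults, "RAN")))
print(webui_features(disable_service(defaults, "Platform-API-External")))
```

The asymmetry is the part worth remembering: enabling PowerScaleUI brings Platform-API-External up with it, but enabling Platform-API-External on its own does not bring the WebUI back.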

You can use the CLI command isi http settings view to display the OneFS HTTP configuration:

# isi http settings view

Access Control: No

Basic Authentication: No

WebHDFS Ran HTTPS Port: 8443

Dav: No

Enable Access Log: Yes

HTTPS: No

Integrated Authentication: No

Server Root: /ifs

Service: disabled

Service Timeout: 8m20s

Inactive Timeout: 15m

Session Max Age: 4H

Httpd Controlpath Redirect: No

Similarly, you can manage and change the HTTP configuration using the isi http settings modify CLI command.

For example, to reduce the maximum session age from four to two hours:

# isi http settings view | grep -i age

Session Max Age: 4H

# isi http settings modify --session-max-age=2H

# isi http settings view | grep -i age

Session Max Age: 2H
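The duration values in this output (8m20s, 15m, 4H) are compact enough that a quick conversion to seconds can help when comparing settings. The Python sketch below handles only the three unit letters that appear in the output above (s, m, and H); whether OneFS accepts other units is not covered here, and the function name is hypothetical.

```python
import re

# Only the unit letters seen in the output above; anything else is rejected.
UNIT_SECONDS = {"s": 1, "m": 60, "H": 3600}


def duration_to_seconds(text):
    """Convert values such as '8m20s', '15m', or '4H' to seconds."""
    parts = re.findall(r"(\d+)([A-Za-z])", text)
    if not parts or "".join(number + unit for number, unit in parts) != text:
        raise ValueError(f"unrecognized duration: {text!r}")
    total = 0
    for number, unit in parts:
        if unit not in UNIT_SECONDS:
            raise ValueError(f"unsupported unit {unit!r} in {text!r}")
        total += int(number) * UNIT_SECONDS[unit]
    return total


for value in ("8m20s", "15m", "4H", "2H"):
    print(value, duration_to_seconds(value))
```

For instance, the service timeout of 8m20s works out to 500 seconds, and halving the session max age from 4H to 2H takes it from 14400 to 7200 seconds.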

The full set of configuration options for isi http settings includes:

Option | Description |

--access-control <boolean> | Enable Access Control Authentication for the HTTP service. Access Control Authentication requires at least one type of authentication to be enabled. |

--basic-authentication <boolean> | Enable Basic Authentication for the HTTP service. |

--webhdfs-ran-https-port <integer> | Configure Data Services Port for the HTTP service. |

--revert-webhdfs-ran-https-port | Set value to system default for --webhdfs-ran-https-port. |

--dav <boolean> | Comply with Class 1 and 2 of the DAV specification (RFC 2518) for the HTTP service. All DAV clients must go through a single node. DAV compliance is NOT met if you go through SmartConnect, or using 2 or more node IPs. |

--enable-access-log <boolean> | Enable writing to a log when the HTTP server is accessed for the HTTP service. |

--https <boolean> | Enable the HTTPS transport protocol for the HTTP service. |

--integrated-authentication <boolean> | Enable Integrated Authentication for the HTTP service. |

--server-root <path> | Document root directory for the HTTP service. Must be within /ifs. |

--service (enabled | disabled | redirect | disabled_basicfile) | Enable/disable the HTTP Service or redirect to WebUI or disabled BasicFileAccess. |

--service-timeout <duration> | The amount of time (in seconds) that the server will wait for certain events before failing a request. A value of 0 indicates that the service timeout value is the Apache default. |

--revert-service-timeout | Set value to system default for --service-timeout. |

--inactive-timeout <duration> | Get the HTTP RequestReadTimeout directive from both the WebUI and the HTTP service. |

--revert-inactive-timeout | Set value to system default for --inactive-timeout. |

--session-max-age <duration> | Get the HTTP SessionMaxAge directive from both WebUI and HTTP service. |

--revert-session-max-age | Set value to system default for --session-max-age. |

--httpd-controlpath-redirect <boolean> | Enable or disable WebUI redirection to the HTTP service. |

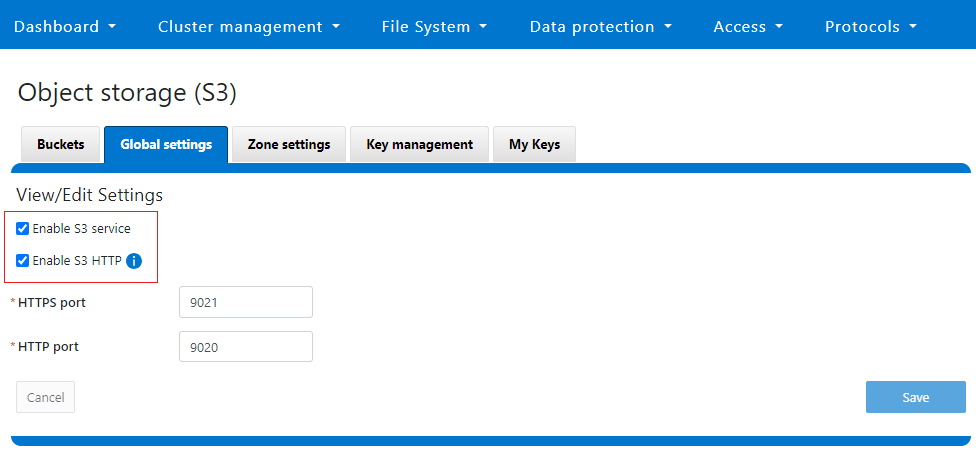

Note that while the OneFS S3 service uses HTTP, it is considered a tier-1 protocol, and as such is managed using its own isi s3 CLI command set and corresponding WebUI area. For example, the following CLI command forces the cluster to only accept encrypted HTTPS/SSL traffic on TCP port 9999 (rather than the default TCP port 9021):

# isi s3 settings global modify --https-only 1 –https-port 9921 # isi s3 settings global view HTTP Port: 9020 HTTPS Port: 9999 HTTPS only: Yes S3 Service Enabled: Yes

Additionally, you can entirely disable the S3 service with the following CLI command:

# isi services s3 disable The service 's3' has been disabled.

Or from the WebUI, under Protocols > S3 > Global settings:

Author: Nick Trimbee

OneFS and PowerScale F-series Management Ports

Mon, 22 Apr 2024 20:12:20 -0000

Another security enhancement that OneFS 9.5 and later releases bring to the table is the ability to configure 1GbE NIC ports dedicated to cluster management on the PowerScale F900, F710, F600, F210, and F200 all-flash storage nodes and P100 and B100 accelerators. Since these platforms were released, customers have been requesting the ability to activate the 1GbE NIC ports so that node management activity and front-end protocol traffic can be separated on physically distinct interfaces.

For background, since their introduction, the F600 and F900 have shipped with a quad port 1GbE rNDC (rack Converged Network Daughter Card) adapter. However, these 1GbE ports were non-functional and unsupported in OneFS releases prior to 9.5. As such, the node management and front-end traffic was co-mingled on the front-end interface.

In OneFS 9.5 and later, 1GbE network ports are now supported on all of the PowerScale PowerEdge based platforms for the purposes of node management, and are physically separate from the other network interfaces. Specifically, this enhancement applies to the F900, F600, F200 all-flash nodes, and P100 and B100 accelerators.

Under the hood, OneFS has been updated to recognize the 1GbE rNDC NIC ports as usable for a management interface. Note that the focus of this enhancement is on factory enablement and support for existing F600 customers that have the unused 1GbE rNDC hardware. This functionality has also been back-ported to OneFS 9.4.0.3 and later RUPs. Since the introduction of this feature, there have been several requests raised about field upgrades, but that use case is separate and will be addressed in a later release through scripts, updates of node receipts, procedures, and so on.

Architecturally, aside from some device driver and accounting work, no substantial changes were required to the underlying OneFS or platform architecture to implement this feature. This means that in addition to activating the rNDC, OneFS now supports the relocated front-end NIC in PCI slots 2 or 3 for the F200, B100, and P100.

OneFS 9.5 and later recognizes the 1GbE rNDC as usable for the management interface in the OneFS Wizard, in the same way it always has for the H-series and A-series chassis-based nodes.

All four ports in the 1GbE NIC are active, and for the Broadcom board, the interfaces are initialized and reported as bge0, bge1, bge2, and bge3.

The pciconf CLI utility can be used to determine whether the rNDC NIC is present in a node. If it is, a variety of identification and configuration details are displayed. For example, let’s look at the following output from a Broadcom rNDC NIC in an F200 node:

# pciconf -lvV pci0:24:0:0

bge2@pci0:24:0:0: class=0x020000 card=0x1f5b1028 chip=0x165f14e4 rev=0x00 hdr=0x00

class = network

subclass = ethernet

VPD ident = 'Broadcom NetXtreme Gigabit Ethernet'

VPD ro PN = 'BCM95720'

VPD ro MN = '1028'

VPD ro V0 = 'FFV7.2.14'

VPD ro V1 = 'DSV1028VPDR.VER1.0'

VPD ro V2 = 'NPY2'

VPD ro V3 = 'PMT1'

VPD ro V4 = 'NMVBroadcom Corp'

VPD ro V5 = 'DTINIC'

VPD ro V6 = 'DCM1001008d452101000d45'

We can use the ifconfig CLI utility to determine the specific IP/interface mapping on the Broadcom rNDC interface. For example:

# ifconfig bge0

TME-1: bge0: flags=8843<UP,BROADCAST,RUNNING,SIMPLEX,MULTICAST> metric 0 mtu 1500

TME-1: ether 00:60:16:9e:X:X

TME-1: inet 10.11.12.13 netmask 0xffffff00 broadcast 10.11.12.255 zone 1

TME-1: inet 10.11.12.13 netmask 0xffffff00 broadcast 10.11.12.255 zone 0

TME-1: media: Ethernet autoselect (1000baseT <full-duplex>)

TME-1: status: active

In this output, the first IP address of the management interface’s pool is bound to bge0, which is the first port on the Broadcom rNDC NIC.
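Since ifconfig reports the netmask in hexadecimal, it can be handy to convert it when cross-checking against the pool configuration. The short Python sketch below uses the standard ipaddress module on the values from the output above; the helper name is hypothetical.

```python
import ipaddress


def interface_network(address, hex_netmask):
    """Combine an address and an ifconfig-style hex netmask into an interface."""
    mask = ipaddress.IPv4Address(int(hex_netmask, 16))
    return ipaddress.ip_interface(f"{address}/{mask}")


iface = interface_network("10.11.12.13", "0xffffff00")
print(iface.netmask)                     # dotted-quad form of the mask
print(iface.network)                     # network in CIDR notation
print(iface.network.broadcast_address)   # should match the ifconfig broadcast
```

Here 0xffffff00 is 255.255.255.0, giving the 10.11.12.0/24 network, whose broadcast address of 10.11.12.255 agrees with the ifconfig output.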

We can use the isi network pools CLI command to determine the corresponding interface. Within the system zone, the management interface is allocated an address from the configured IP range within its associated interface pool. For example:

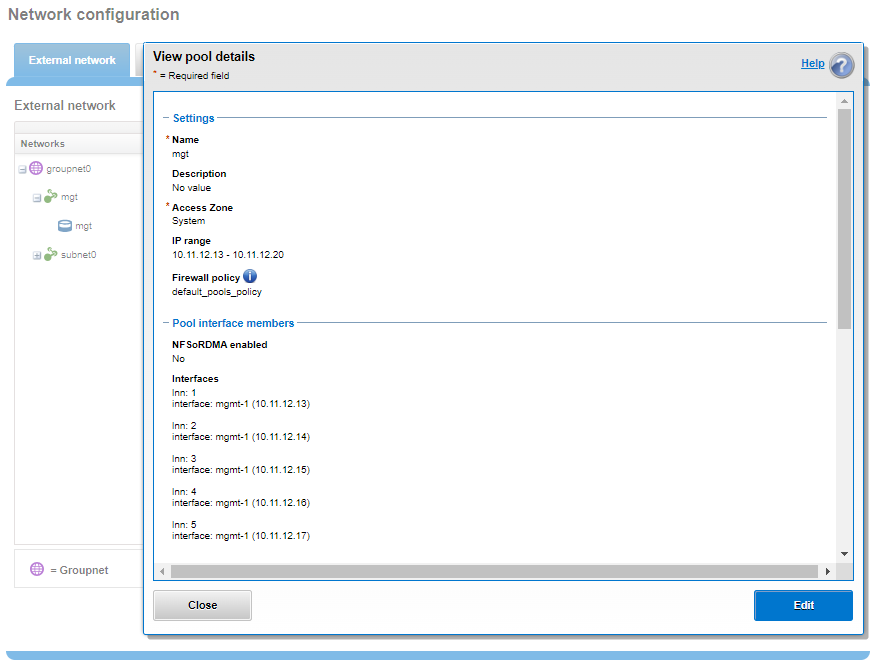

# isi network pools list ID SC Zone IP Ranges Allocation Method ---------------------------------------------------------------------------------------------- groupnet0.mgt.mgt cluster_mgt_isln.com 10.11.12.13-10.11.12.20 static # isi network pools view groupnet0.mgt.mgt | grep -i ifaces Ifaces: 1:mgmt-1, 2:mgmt-1, 3:mgmt-1, 4:mgmt-1, 5:mgmt-1

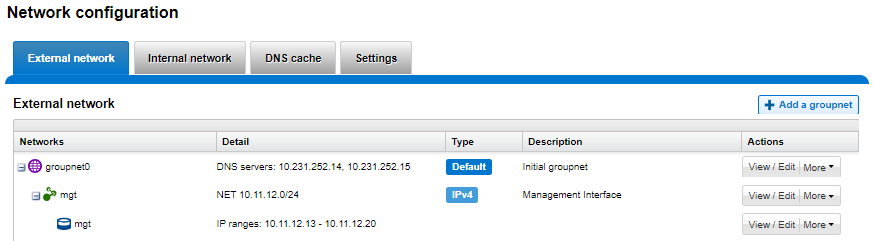

Or from the WebUI, under Network configuration > External network:

Drilling down into the mgt pool details shows the 1GbE management interfaces as the pool interface members:
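As a quick sanity check on a management pool, the interface members and IP range from the CLI output can be compared in Python. The sketch below assumes the "node:interface" and "first-last" formats shown in the isi network pools output above; the function names are hypothetical.

```python
import ipaddress


def parse_ifaces(value):
    """Map node number to interface names from '1:mgmt-1, 2:mgmt-1, ...'."""
    members = {}
    for item in value.split(","):
        node, _, name = item.strip().partition(":")
        members.setdefault(int(node), []).append(name)
    return members


def range_size(ip_range):
    """Count the addresses in an inclusive 'first-last' IP range."""
    first, _, last = ip_range.partition("-")
    return int(ipaddress.ip_address(last)) - int(ipaddress.ip_address(first)) + 1


ifaces = parse_ifaces("1:mgmt-1, 2:mgmt-1, 3:mgmt-1, 4:mgmt-1, 5:mgmt-1")
addresses = range_size("10.11.12.13-10.11.12.20")

print(len(ifaces), "nodes,", addresses, "addresses")
print("enough addresses:", addresses >= sum(len(v) for v in ifaces.values()))
```

In this example the pool has five member interfaces and eight addresses, so each management interface can be given a static address from the range.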

Note that the 1GbE rNDC network ports are solely intended as cluster management interfaces. As such, they are not supported for use with regular front-end data traffic.

The F900 and F600 nodes already ship with a four port 1GbE rNDC NIC installed. However, the F200, B100, and P100 platform configurations have also been updated to include a quad port 1GbE rNDC card. These new configurations have been shipping by default since January 2023. This required relocating the front end network’s 25GbE NIC (Mellanox CX4) to PCI slot 2 in the motherboard. Additionally, the OneFS updates needed for this feature have also now allowed the F200 platform to be offered with a 100GbE option too. The 100GbE option uses a Mellanox CX6 NIC in place of the CX4 in slot 2.

With this 1GbE management interface enhancement, the same quad-port rNDC card (typically the Broadcom 5720) that has been shipped in the F900 and F600 since their introduction, is now included in the F200, B100 and P100 nodes as well. All four 1GbE rNDC ports are enabled and active under OneFS 9.5 and later, too.

Node port ordering continues to follow the standard, increasing numerically from left to right. However, be aware that the port labels are not visible externally because they are obscured by the enclosure’s sheet metal.

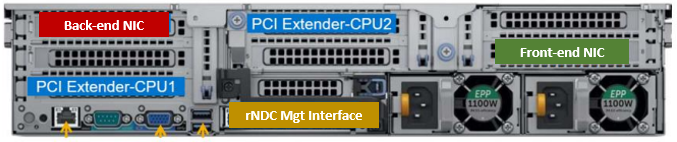

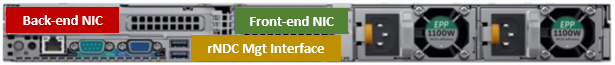

The following back-of-chassis hardware images show the new placements of the NICs in the various F-series and accelerator platforms:

F600

F900

For both the F600 and F900, the NIC placement remains unchanged, because these nodes have always shipped with the 1GbE quad port in the rNDC slot since their launch.

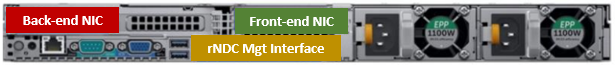

F200

The F200 sees its front-end NIC moved to slot 2, freeing up the rNDC slot for the quad-port 1GbE Broadcom 5720.

Because the B100 backup accelerator has a fibre-channel card in slot 2, it sees its front-end NIC moved to slot 3, freeing up the rNDC slot for the quad-port 1GbE Broadcom 5720.

Finally, the P100 accelerator sees its front-end NIC moved to slot 2, freeing up the rNDC slot for the quad-port 1GbE Broadcom 5720.

Note that, while there is currently no field hardware upgrade process for adding rNDC cards to legacy F200 nodes or B100 and P100 accelerators, this will be addressed in a future release.

Author: Nick Trimbee