Improved PowerEdge Server Thermal Capability with Smart Flow

Fri, 03 Mar 2023 20:12:37 -0000

Introduction

New PowerEdge Smart Flow chassis options increase airflow to support the highest core count CPUs and DDR5 in an air-cooled environment within current IT infrastructure.

What is Smart Flow?

One way to increase the thermal capacity of an air-cooled server is to increase airflow that exhausts heat generated by components. Dell PowerEdge addresses this in several ways: high-performance fans, air baffles to direct airflow within the chassis, and intelligent thermal controls that monitor temperature sensors and dynamically adjust fan speeds.
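As a rough illustration of why more airflow means more thermal capacity, the sketch below applies the standard sensible-heat relation (heat removed = air density × specific heat × volumetric flow × air temperature rise). The air properties are textbook approximations, and the airflow and temperature-rise inputs are hypothetical values chosen for illustration, not Dell measurements.

```python
# Rough sensible-heat estimate: heat carried away by an air stream.
# Air properties are approximate sea-level values; inputs are hypothetical.

AIR_DENSITY_KG_M3 = 1.2          # approximate density of air near 20 C
AIR_CP_J_PER_KG_K = 1005.0       # approximate specific heat of air
M3_PER_S_PER_CFM = 0.000471947   # 1 cubic foot per minute in m^3/s


def heat_removed_watts(airflow_cfm: float, delta_t_c: float) -> float:
    """Heat (W) removed by airflow_cfm of air that warms by delta_t_c degrees C."""
    volume_flow_m3_s = airflow_cfm * M3_PER_S_PER_CFM
    return AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * volume_flow_m3_s * delta_t_c


if __name__ == "__main__":
    # Hypothetical comparison: same air temperature rise, 20% more airflow.
    base = heat_removed_watts(100.0, 20.0)
    more = heat_removed_watts(120.0, 20.0)
    print(f"{base:.0f} W -> {more:.0f} W")
```

At a fixed air temperature rise, the heat removed scales linearly with airflow in this simplified model, which is why lowering intake impedance raises the thermal budget.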

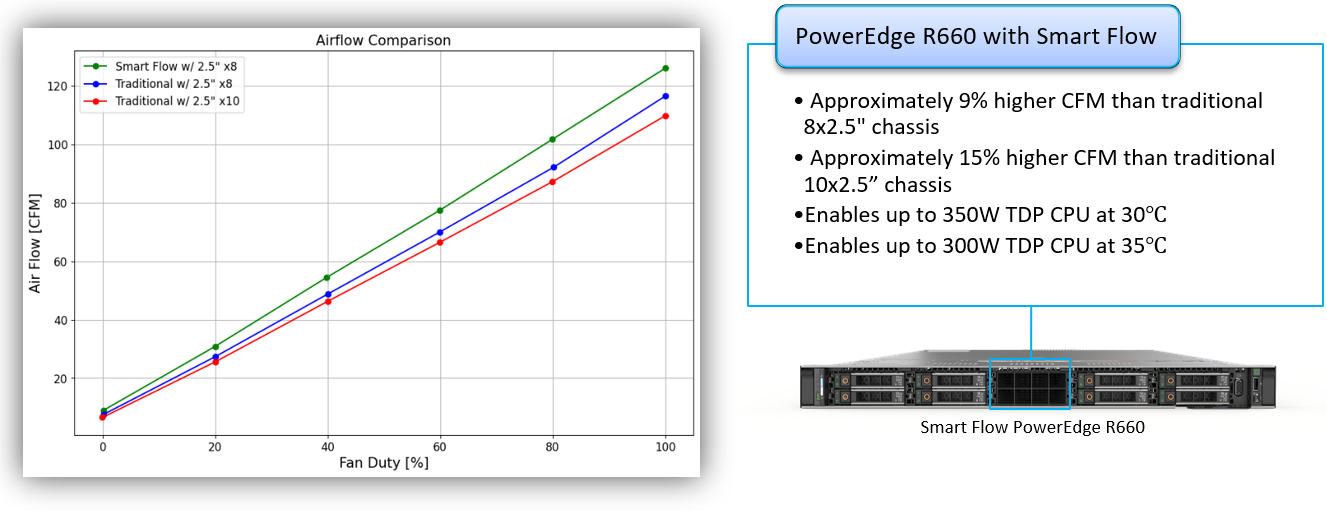

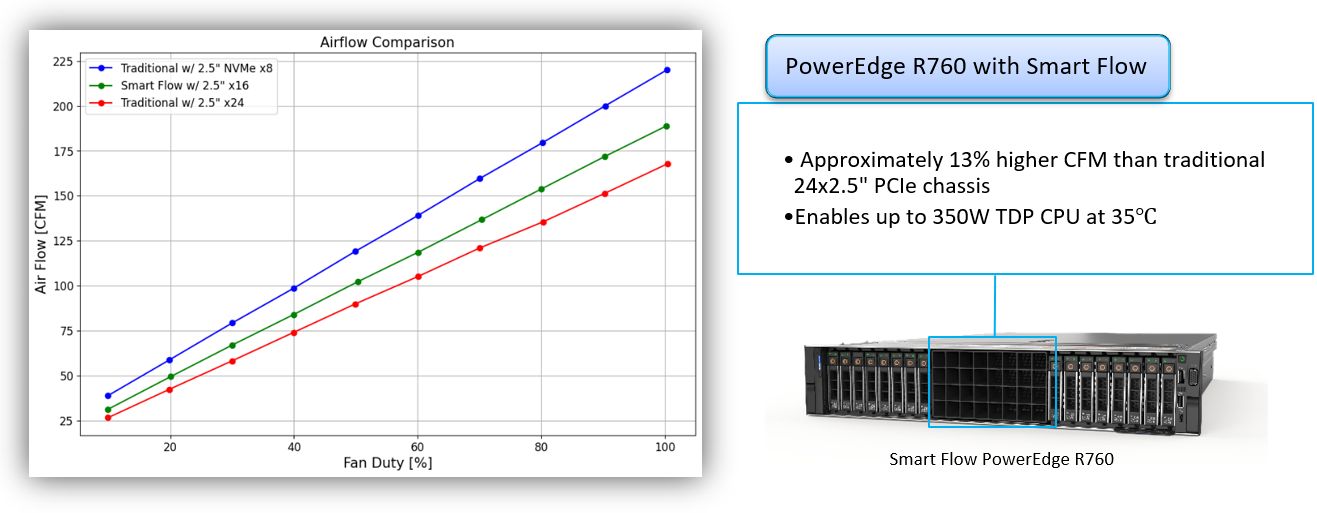

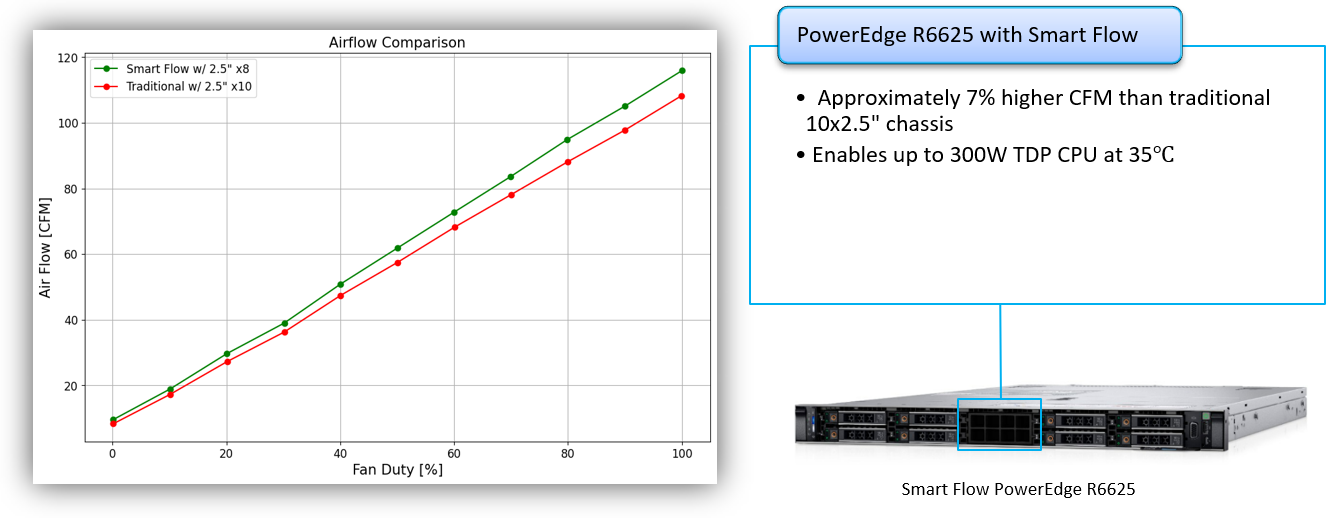

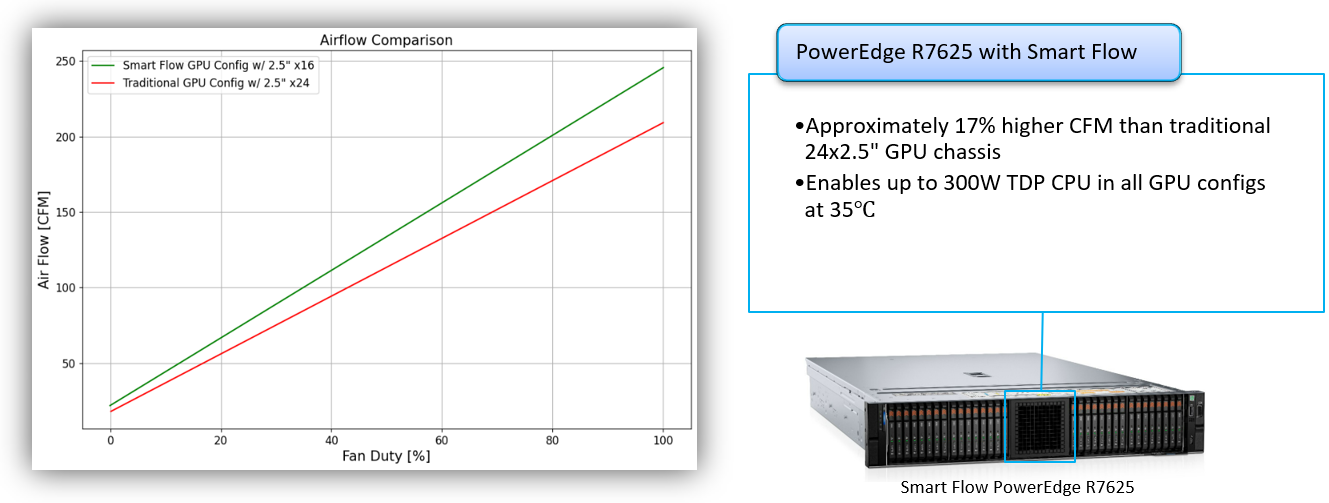

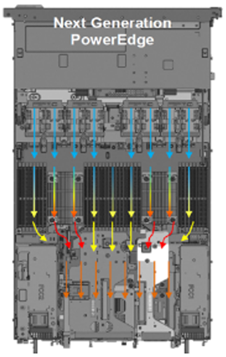

With Smart Flow, our thermal engineers have increased server thermal capacity by reducing impedance to fresh air intake on select server configurations. Servers with Smart Flow replace middle storage slots with centralized airflow inlets to maintain balanced airflow distribution within the server. This is made possible by new backplane configurations that allow larger air intake capacity. Smart Flow enables expanded CPU and memory configurations for lower storage needs in our next generation 1U and 2U air-cooled PowerEdge servers. Gains in thermal efficiency are also realized with Smart Flow implementations and will be explored in a subsequent paper. Examples for four different servers are shown here:

PowerEdge R660

Figure 1. PowerEdge R660 airflow increase with Smart Flow

PowerEdge R760

Figure 2. PowerEdge R760 airflow increase with Smart Flow

PowerEdge R6625

Figure 3. PowerEdge R6625 airflow increase with Smart Flow

PowerEdge R7625

Figure 4. PowerEdge R7625 airflow increase with Smart Flow

Conclusion

Dell PowerEdge Smart Flow increases select servers' thermal capacity, enabling high-power CPUs and GPUs, at increased ambient temperatures, for the most demanding workloads in air-cooled data centers.

Related Documents

Dell PowerEdge Servers Offer Comprehensive GPU Acceleration Options

Fri, 03 Mar 2023 19:57:27 -0000

Summary

The next generation of PowerEdge servers is engineered to accelerate insights by enabling the latest technologies. These technologies include next-gen CPUs bringing support for DDR5 and PCIe Gen 5 and PowerEdge servers that support a wide range of enterprise-class GPUs. Over 75% of next generation Dell PowerEdge servers offer support for GPU acceleration.

Accelerate insights

For the digital enterprise, success hinges on leveraging big, fast data. But as data sets grow, traditional data centers are starting to hit performance and scale limitations — especially when ingesting and querying real-time data sources. While some have long taken advantage of accelerators for speeding visualization, modeling, and simulation, today, more mainstream applications than ever before can leverage accelerators to boost insight and innovation. Accelerators such as graphics processing units (GPUs) complement and accelerate CPUs, using parallel processing to crunch large volumes of data faster. Accelerated data centers can also deliver better economics, providing breakthrough performance with fewer servers, resulting in faster insights and lower costs. Organizations in multiple industries are adopting server accelerators to outpace the competition — honing product and service offerings with data-gleaned insights, enhancing productivity with better application performance, optimizing operations with fast and powerful analytics, and shortening time to market by doing it all faster than ever before. Dell Technologies offers a choice of server accelerators in Dell PowerEdge servers so you can turbo-charge your applications.

Accelerated server architecture

Our world-class engineering team designs PowerEdge servers with the latest technologies for ultimate performance. Here’s how.

Industry enabled technologies

- Next Generation Intel and AMD Processors

- DDR5 Memory

- PCIe Gen5

- GPU Form Factor Options

Next generation air and Direct Liquid Cooling (DLC) technology

PowerEdge ensures no-compromise system performance through innovative cooling solutions while offering customers options that fit their facility or usage model.

- Innovations that extend the range of air-cooled configurations

- Advanced designs - airflow pathways are streamlined within the server, directing the right amount of air to where it is needed

- Latest generation fan and heat sinks – to manage the latest high-TDP CPUs and other key components

- Intelligent thermal controls – automatically adjust airflow during workload or environmental changes, seamless support for channel add-in cards, plus enhanced customer control options for temp/power/acoustics

- For high-performance CPU and GPU options in dense configurations, Dell DLC effectively manages heat while improving overall system efficiency

Our GPU partners

AMD

Dell Technologies and AMD have established a solid partnership to help organizations accelerate their AI initiatives. Together, our technologies provide the foundation for successful AI solutions that drive the development of advanced DL software frameworks. These technologies also deliver massively parallel computing in the form of AMD Graphics Processing Units (GPUs) for parallel model training and scale-out file systems to support the concurrency, performance, and capacity requirements of unstructured image and video data sets. With the AMD ROCm open software platform built for flexibility and performance, the HPC and AI communities can gain access to open compute languages, compilers, libraries, and tools designed to accelerate code development and solve the toughest challenges in the world today.

Intel

Dell Technologies and Intel are giving customers new choices in enterprise-class GPUs. The Intel Data Center GPUs are available with our next generation of PowerEdge servers. These GPUs are designed to accelerate AI inferencing, VDI, and model training workloads. And with toolsets like Intel® oneAPI and OpenVINO™, developers have the tools they need to develop new AI applications and migrate existing applications to run optimally on Intel GPUs.

NVIDIA

Dell Technologies solutions designed with NVIDIA hardware and software enable customers to deploy high-performance deep learning and AI-capable enterprise-class servers from the edge to the data center. This relationship allows Dell to offer Ready Solutions for AI and built-to-order PowerEdge servers with your choice of NVIDIA GPUs. With Dell Ready Solutions for AI, organizations can rely on a Dell-designed and validated set of best-of-breed technologies for software – including AI frameworks and libraries – with compute, networking, and storage. With NVIDIA CUDA, developers can accelerate computing applications by harnessing the power of the GPUs. Applications and operations (such as matrix multiplication) that are typically run serially in CPUs can run on thousands of GPU cores in parallel.

GPU options for next-generation PowerEdge servers

Turbo-charge your applications with performance accelerators available in select Dell PowerEdge tower and rack servers. The number and type of accelerators that fit in PowerEdge servers are based on the physical dimensions of the PCIe adapter cards and the GPU form factor.

| Brand | GPU Model | GPU Memory | Max Power Consumption | Form Factor | 2-way Bridge | Recommended Workloads |
|---|---|---|---|---|---|---|
| PCIe Adapter Form Factor | | | | | | |
| NVIDIA | A2 | 16 GB GDDR6 | 60W | SW, HHHL or FHHL | n/a | AI Inferencing, Edge, VDI |
| NVIDIA | A16 | 64 GB GDDR6 | 250W | DW, FHFL | n/a | VDI |
| NVIDIA | A40, L40 | 48 GB GDDR6 | 300W | DW, FHFL | Y, N | Performance graphics, Multi-workload |
| NVIDIA | A30 | 24 GB HBM2 | 165W | DW, FHFL | Y | AI Inferencing, AI Training |
| NVIDIA | A100 | 80 GB HBM2e | 300W | DW, FHFL | Y, Y | AI Training, HPC, AI Inferencing |
| NVIDIA | H100 | 80 GB HBM2e | 300 - 350W | DW, FHFL | Y | AI Training, HPC, AI Inferencing |
| AMD | MI210 | 64 GB HBM2e | 300W | DW, FHFL | Y | HPC, AI Training |
| Intel | Max 1100* | 48 GB HBM2e | 300W | DW, FHFL | Y | HPC, AI Training |
| Intel | Flex 140* | 12 GB GDDR6 | 75W | SW, HHHL or FHHL | n/a | AI Inferencing |
| SXM / OAM Form Factor | | | | | | |
| NVIDIA | HGX A100* | 80 GB HBM2 | 500W | SXM w/ NVLink | n/a | AI Training, HPC |
| NVIDIA | HGX H100* | 80 GB HBM3 | 700W | SXM w/ NVLink | n/a | AI Training, HPC |
| Intel | Max 1550* | 128 GB HBM2e | 600W | OAM w/ XeLink | n/a | AI Training, HPC |

\* Development or under evaluation

Intel 4th Gen Xeon featuring QAT 2.0 Technology Delivers Massive Performance Uplift in Common Cipher Suites

Sat, 27 Apr 2024 15:07:09 -0000

Intel QAT Hardware v2.0 acceleration running on 16G PowerEdge delivers on performance for ISPs - Lab Tested and Proven

Introduction

The Internet as we know it would simply not be possible without encryption technologies. This technology lets us perform secure communication and information exchange over public networks. If you buy a pair of shoes from an online retailer, the payment information you provide is encrypted with such a high level of security that extracting your credit card information from ciphertext would be nearly an impossible task for even a supercomputer. The shoes might not end up fitting, but if the requisite encryption and secure communication tech is properly implemented, your payment information remains a secret known only to you and the entity receiving payment.

This domain of security requires hardware that is up to the task of performing handshakes, key exchanges, and other algorithmic tasks at an expeditious speed.

As we’ll demonstrate through extensive testing and proven results in our lab, Intel’s QAT 2.0 Hardware Accelerator featured on 4th Gen Xeon processors is a performant and developer-friendly choice to supercharge your encryption workloads. This feature is readily available on our current products across the PowerEdge Server portfolio.

What is QAT?

QAT, or “QuickAssist Technology,” is an Intel technology that accelerates two common use cases: encryption and compression/decompression. In this tech note, we look at the encryption side of the QAT accelerator feature set and explore leveraging QAT to speed up cipher suites used in deployments of OpenSSL, a common software library used by a vast array of websites and applications to secure their communications.

But before we start, let’s briefly touch on the lineage and history of QAT. QAT was introduced back in 2007, initially available as a discrete add-in PCIe card. A little further on in its evolution, QAT found a home in Intel chipsets. Now, with the introduction of the 4th Gen Xeon processor, the silicon required to enable QAT acceleration has been added to the SoC. Having the hardware this close to the processor has increased performance and reduced the logistical complexity of having to source and manage an external device.

For a complete list of the QAT Hardware v2.0’s cryptosystem and algorithms support, see: https://github.com/intel/QAT_Engine/blob/master/docs/features.md#qat_hw-features

QAT hardware acceleration may not be the fastest way to accelerate every cipher or algorithm. With this in mind, QAT Hardware Acceleration (also called QAT_HW) can co-exist with QAT Software Acceleration (or QAT_SW). This configuration, while somewhat complex, is well supported by clear documentation. Fundamentally, it aims to extract maximum performance for all inputs given the resources available on the system: an algorithm bitmap is used to dynamically choose between and prioritize QAT_HW and QAT_SW, based on hardware availability and on which method offers the best performance.
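The bitmap idea can be sketched roughly as follows. This illustrates the selection logic only and is not the QAT_Engine implementation: the bit positions, the `Algo` names, and the `choose_backend` helper are hypothetical, and the real bit assignments and priority rules are defined in the QAT_Engine documentation.

```python
from enum import IntFlag


class Algo(IntFlag):
    """Hypothetical bit positions; the real engine defines its own."""
    RSA = 0x1
    ECDSA = 0x2
    ECDH = 0x4
    AES_GCM = 0x8


def choose_backend(algo: Algo, hw_bitmap: Algo, sw_bitmap: Algo,
                   hw_available: bool) -> str:
    """Pick a backend for one algorithm.

    Assumed policy: prefer hardware when the algorithm's bit is set in
    hw_bitmap and a device is present, then software acceleration when
    its bit is set in sw_bitmap, otherwise fall back to the default path.
    """
    if hw_available and (algo & hw_bitmap):
        return "QAT_HW"
    if algo & sw_bitmap:
        return "QAT_SW"
    return "default"


if __name__ == "__main__":
    hw = Algo.RSA | Algo.ECDSA      # offload public-key work to hardware
    sw = Algo.AES_GCM | Algo.RSA    # software acceleration as alternative
    for algo in (Algo.RSA, Algo.ECDSA, Algo.ECDH, Algo.AES_GCM):
        print(algo.name, choose_backend(algo, hw, sw, hw_available=True))
```

Keeping the policy in two bitmaps means that an algorithm can be moved between hardware and software acceleration by changing a configuration value rather than application code.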

Next we'll look at setting up QATlib and see what the performance looks like using OpenSSL Speed and a few common cipher suites.

Lab Test Setup and Notes

For this test we use a Dell PowerEdge R760. This is Dell’s mainstream 2U dual-socket 4th Gen Xeon offering, and it supports nearly all of Intel’s QAT-enabled CPUs. 4th Gen Xeon CPUs that feature on-chip QAT HW 2.0 have 1, 2, or 4 QAT endpoints per socket. We selected the Intel(R) Xeon(R) Gold 5420+ CPU, which features 1 QAT endpoint, for our testing. All else being equal, more endpoints allow more QAT hardware acceleration work to be done per socket, and therefore greater performance in QAT HW accelerated use cases.

As this is not a deployment guide, we simply use a RHEL 9.2 install as our operating system and run our tests on bare metal. Our primary resource for setting up QAT Hardware Version 2.0 Acceleration is the excellent QAT documentation found on Intel’s GitHub: https://intel.github.io/quickassist/index.html

Following the guide, we can simply install from RPM sources, ensure kernel drivers are loaded and we’re about ready to go.
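One way to confirm the "kernel drivers are loaded" step is to look for the QAT modules in `/proc/modules`. The sketch below assumes the in-kernel driver for 4th Gen Xeon, whose modules are named `intel_qat` and `qat_4xxx`; the helper names are hypothetical, and Intel's guide remains the authority on what a given release should load.

```python
from pathlib import Path

# Module names assumed for the in-kernel QAT driver on 4th Gen Xeon.
EXPECTED_MODULES = ("intel_qat", "qat_4xxx")


def loaded_modules(proc_modules_text: str) -> set[str]:
    """Return module names from text in /proc/modules format."""
    return {
        line.split()[0]
        for line in proc_modules_text.splitlines()
        if line.strip()
    }


def missing_qat_modules(proc_modules_text: str) -> list[str]:
    """List expected QAT modules that do not appear in the given text."""
    present = loaded_modules(proc_modules_text)
    return [name for name in EXPECTED_MODULES if name not in present]


if __name__ == "__main__":
    path = Path("/proc/modules")
    text = path.read_text() if path.exists() else ""
    missing = missing_qat_modules(text)
    print("QAT modules loaded" if not missing else f"missing: {missing}")
```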

Performance

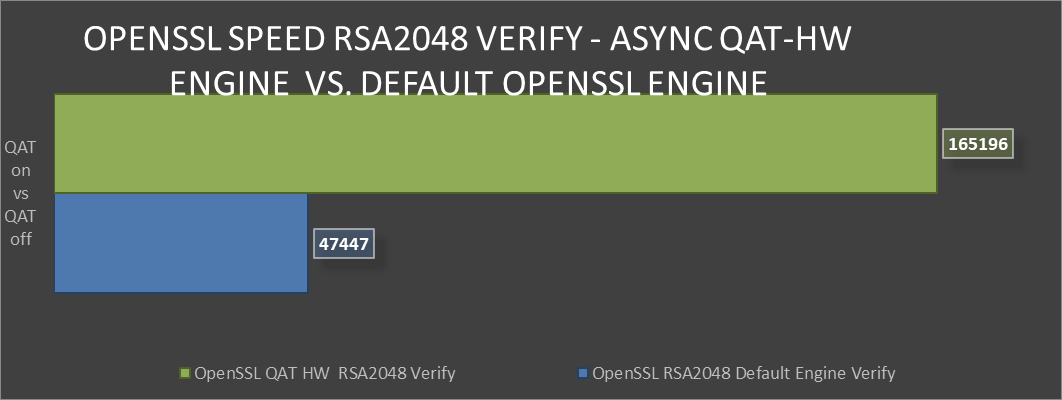

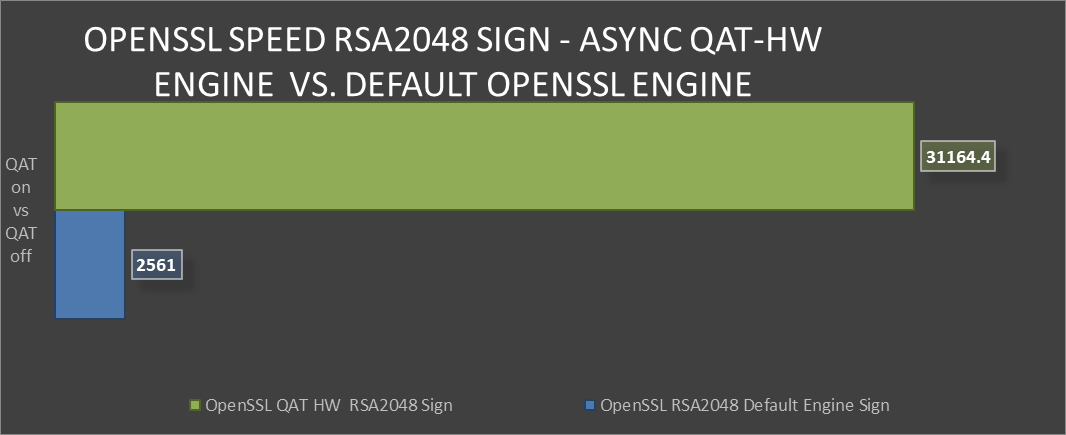

First up, we’ll take a look at probably the most common public-key (asymmetric) cryptosystem, RSA. On the Internet, RSA is used as a key exchange and signature method to secure communication and confirm identities. In these graphs, we compare the speed of the RSA sign and verify operations using asynchronous QAT_HW versus synchronous operation with QAT off (using OpenSSL’s default engine).
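For readers who want to set up a similar comparison, the sketch below builds the two `openssl speed` command lines. It assumes the QAT engine is registered under the id `qatengine`; the `speed_command` helper and the `async_jobs` value of 64 are illustrative assumptions, not the settings used for the results in this note.

```python
import shlex

QAT_ENGINE_ID = "qatengine"  # assumed engine id for Intel's QAT_Engine


def speed_command(algorithm: str, use_qat: bool, async_jobs: int = 64) -> list[str]:
    """Build an `openssl speed` argument list for one algorithm.

    Without QAT, the default engine runs synchronously. With QAT, the
    engine is selected and asynchronous jobs are enabled so that requests
    can be kept in flight on the accelerator.
    """
    cmd = ["openssl", "speed", "-elapsed"]
    if use_qat:
        cmd += ["-engine", QAT_ENGINE_ID, "-async_jobs", str(async_jobs)]
    cmd.append(algorithm)
    return cmd


if __name__ == "__main__":
    for algorithm in ("rsa2048", "ecdsap384"):
        for use_qat in (False, True):
            print(shlex.join(speed_command(algorithm, use_qat)))
```

The argument lists can be passed to `subprocess.run` on a system where OpenSSL and the QAT engine are installed.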

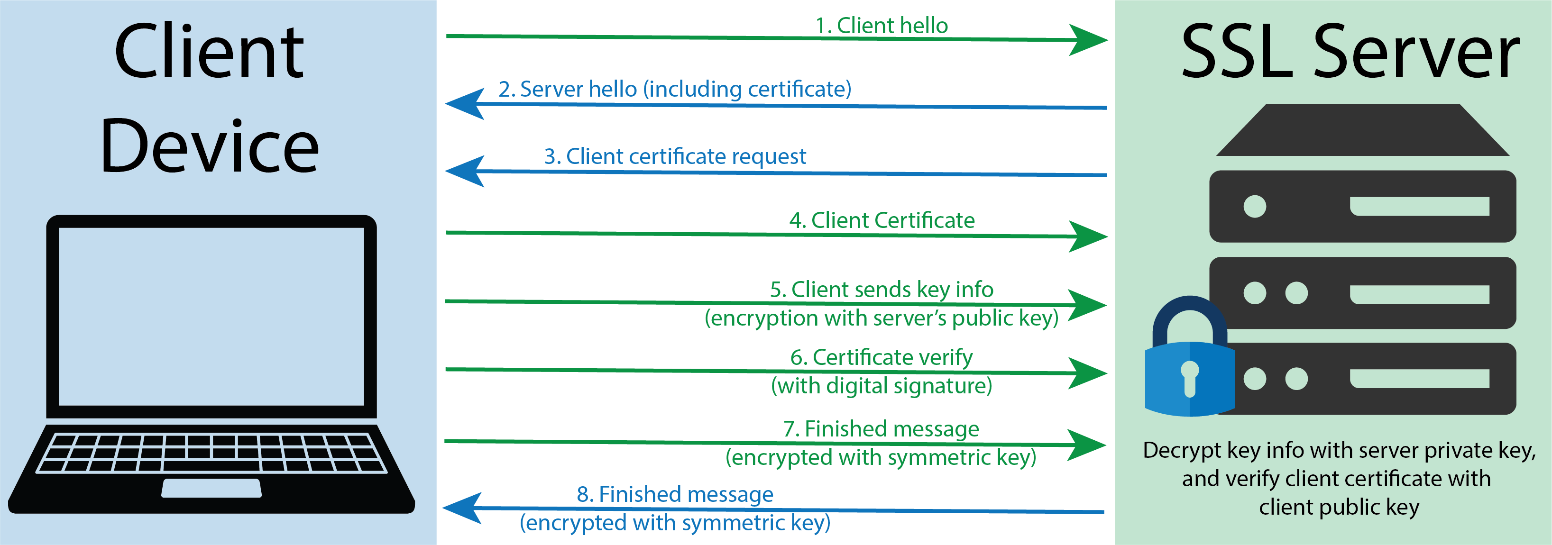

The following graphic shows a representation of a TLS handshake. This provides a bit of context concerning the role of the server in key exchange and handshakes.

TLS handshake representation

OpenSSL Speed RSA2048 Verify comparison

OpenSSL Speed RSA2048 Sign comparison

Greater than 240% performance increase in OpenSSL RSA Verify using QAT Hardware Acceleration Engine vs Default OpenSSL Engine.(1)

Testing in our labs shows that enabling QAT delivers an increase of more than 240% in RSA2048 verify operations. This improvement could translate into greater security capacity per node without the risk of a negative impact on QoS.

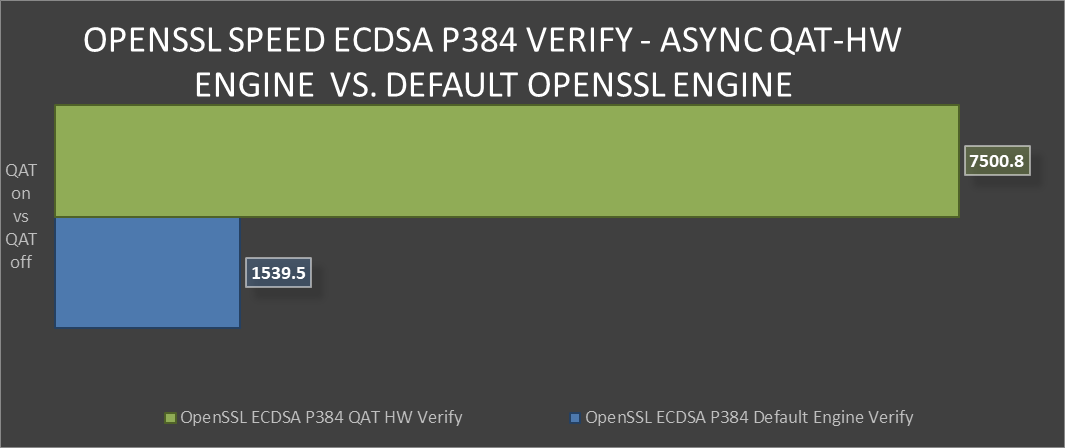

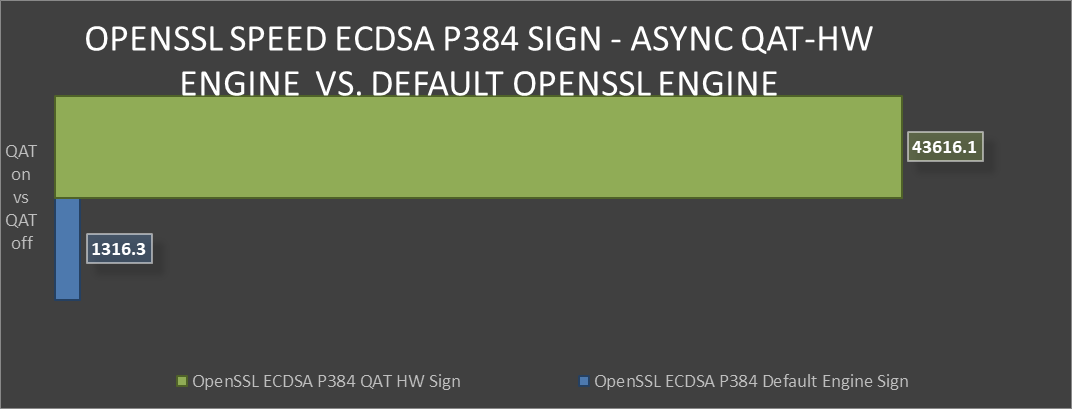

Next we’ll look at the industry-standard Elliptic Curve Digital Signature Algorithm (ECDSA), specifically P-384. QAT HW supports both P-256 and P-384, with both offering exceptional performance vs the default OpenSSL engine. ECDSA is commonly used by many Internet messaging apps to authenticate key agreement.

ECDSA example

OpenSSL Speed ECDSA P384 Verify comparison

OpenSSL Speed ECDSA P384 Sign comparison

Over 30x improvement in ECDSA P384 Sign in OpenSSL using QAT Hardware Acceleration Engine vs Default OpenSSL Engine.(2)

Both RSA and ECDSA provide the level of protection that today’s server security specialists require, although the two differ in many respects.

This vast performance improvement in secure key exchange offers more secure and uncompromised communication without degrading performance.
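The RSA result is quoted as a percentage increase and the ECDSA result as a multiple, so the two are not directly comparable at a glance. The small sketch below converts between the two forms, assuming that "performance increase" means the gain over the baseline (so a 100% increase equals 2x); the helper names are hypothetical.

```python
def percent_increase_to_factor(percent_increase: float) -> float:
    """Convert a percentage increase over baseline into a speedup factor."""
    return 1.0 + percent_increase / 100.0


def factor_to_percent_increase(factor: float) -> float:
    """Convert a speedup factor into a percentage increase over baseline."""
    return (factor - 1.0) * 100.0


if __name__ == "__main__":
    # Lower bounds quoted in this note: "greater than 240%" and "over 30x".
    print(f"240% increase = {percent_increase_to_factor(240.0):.1f}x")
    print(f"30x = {factor_to_percent_increase(30.0):.0f}% increase")
```

Under that assumption, a 240% increase corresponds to at least 3.4 times the baseline throughput, and 30x corresponds to a 2900% increase.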

Conclusion

Intel’s QAT 2.0 Hardware acceleration offers substantial performance improvements for algorithms found in commonly used cipher suites. Also, QAT’s ample documentation and long history of use, coupled with these new findings on performance, should remove any reservations that a customer might have about deploying these security accelerators. Security at the server silicon level is critical to a modern and uncompromised data center. There is definite value in deploying QAT, and customers have a clear path towards realizing accelerated performance in their data center environments.

Legal disclosures

- Based on August 2023 Dell labs testing subjecting the PowerEdge R760 to OpenSSL Speed test running synchronously with default engine vs asynchronous with QAT Hardware Engine. Actual results will vary.

- Based on August 2023 Dell labs testing subjecting the PowerEdge R760 to OpenSSL Speed test running synchronously with default engine vs asynchronous with QAT Hardware Engine. Actual results will vary.