Dell PowerEdge Servers Offer Comprehensive GPU Acceleration Options

Fri, 03 Mar 2023 19:57:27 -0000

Summary

The next generation of PowerEdge servers is engineered to accelerate insights by enabling the latest technologies. These technologies include next-generation CPUs that bring support for DDR5 and PCIe Gen 5, along with support for a wide range of enterprise-class GPUs. Over 75% of next-generation Dell PowerEdge servers offer support for GPU acceleration.

Accelerate insights

For the digital enterprise, success hinges on leveraging big, fast data. But as data sets grow, traditional data centers are starting to hit performance and scale limitations — especially when ingesting and querying real-time data sources. While some have long taken advantage of accelerators for speeding visualization, modeling, and simulation, today, more mainstream applications than ever before can leverage accelerators to boost insight and innovation. Accelerators such as graphics processing units (GPUs) complement and accelerate CPUs, using parallel processing to crunch large volumes of data faster. Accelerated data centers can also deliver better economics, providing breakthrough performance with fewer servers, resulting in faster insights and lower costs. Organizations in multiple industries are adopting server accelerators to outpace the competition — honing product and service offerings with data-gleaned insights, enhancing productivity with better application performance, optimizing operations with fast and powerful analytics, and shortening time to market by doing it all faster than ever before. Dell Technologies offers a choice of server accelerators in Dell PowerEdge servers so you can turbo-charge your applications.

Accelerated server architecture

Our world-class engineering team designs PowerEdge servers with the latest technologies for ultimate performance. Here’s how.

Industry enabled technologies

- Next Generation Intel and AMD Processors

- DDR5 Memory

- PCIe Gen5

- GPU Form Factor Options

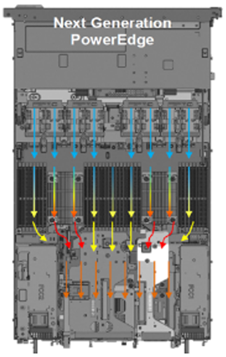

Next generation air and Direct Liquid Cooling (DLC) technology

PowerEdge ensures no-compromise system performance through innovative cooling solutions while offering customers options that fit their facility or usage model.

- Innovations that extend the range of air-cooled configurations

  - Advanced designs: airflow pathways are streamlined within the server, directing the right amount of air to where it is needed

  - Latest-generation fans and heat sinks: manage the latest high-TDP CPUs and other key components

  - Intelligent thermal controls: automatically adjust airflow during workload or environmental changes, with seamless support for channel add-in cards and enhanced customer control options for temperature, power, and acoustics

- For high-performance CPU and GPU options in dense configurations, Dell DLC effectively manages heat while improving overall system efficiency

Our GPU partners

AMD

Dell Technologies and AMD have established a solid partnership to help organizations accelerate their AI initiatives. Together, our technologies provide the foundation for successful AI solutions that drive the development of advanced DL software frameworks. These technologies also deliver massively parallel computing in the form of AMD Graphics Processing Units (GPUs) for parallel model training, and scale-out file systems to support the concurrency, performance, and capacity requirements of unstructured image and video data sets. With the AMD ROCm open software platform, built for flexibility and performance, the HPC and AI communities can gain access to open compute languages, compilers, libraries, and tools designed to accelerate code development and solve the toughest challenges in the world today.

Intel

Dell Technologies and Intel are giving customers new choices in enterprise-class GPUs. The Intel Data Center GPUs are available with our next generation of PowerEdge servers. These GPUs are designed to accelerate AI inferencing, VDI, and model training workloads. And with toolsets like Intel® oneAPI and OpenVINO™, developers have the tools they need to develop new AI applications and migrate existing applications to run optimally on Intel GPUs.

NVIDIA

Dell Technologies solutions designed with NVIDIA hardware and software enable customers to deploy high-performance deep learning and AI-capable enterprise-class servers from the edge to the data center. This relationship allows Dell to offer Ready Solutions for AI and built-to-order PowerEdge servers with your choice of NVIDIA GPUs. With Dell Ready Solutions for AI, organizations can rely on a Dell-designed and validated set of best-of-breed technologies for software – including AI frameworks and libraries – with compute, networking, and storage. With NVIDIA CUDA, developers can accelerate computing applications by harnessing the power of the GPUs. Applications and operations (such as matrix multiplication) that are typically run serially in CPUs can run on thousands of GPU cores in parallel.
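The matrix multiplication case mentioned above parallelizes well because every output cell can be computed without waiting for any other cell. The following is a minimal Python sketch of that decomposition, not CUDA code and not a performance benchmark. The function names `matmul_serial` and `matmul_by_cell` are hypothetical, and the thread pool merely stands in for the GPU threads that a real kernel would use.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def matmul_serial(a, b):
    """Reference triple loop: cells are computed one after another."""
    rows, inner, cols = len(a), len(b), len(b[0])
    out = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            for k in range(inner):
                out[i][j] += a[i][k] * b[k][j]
    return out


def matmul_by_cell(a, b, workers=4):
    """Compute every output cell as an independent task.

    Each (i, j) task reads only row i of `a` and column j of `b`, so no task
    depends on another. A GPU kernel exploits the same independence by
    assigning cells (or tiles of cells) to separate threads.
    """
    rows, inner, cols = len(a), len(b), len(b[0])

    def cell(index):
        i, j = index
        return sum(a[i][k] * b[k][j] for k in range(inner))

    # Row-major list of output coordinates: (0, 0), (0, 1), ..., (rows-1, cols-1)
    coords = list(product(range(rows), range(cols)))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        values = list(pool.map(cell, coords))

    # Fold the flat, row-major result list back into a matrix
    return [values[r * cols:(r + 1) * cols] for r in range(rows)]
```

Both functions return the same matrix; the second only makes the independence of the cells explicit. In Python, the thread pool does not deliver a real speedup for this arithmetic, so treat it as an illustration of the work split rather than of GPU performance.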

GPU options for next-generation PowerEdge servers

Turbo-charge your applications with performance accelerators available in select Dell PowerEdge tower and rack servers. The number and type of accelerators that fit in PowerEdge servers are based on the physical dimensions of the PCIe adapter cards and the GPU form factor.

| Brand | GPU Model | GPU Memory | Max Power Consumption | Form Factor | 2-way Bridge | Recommended Workloads |
|---|---|---|---|---|---|---|
| PCIe Adapter Form Factor | | | | | | |
| NVIDIA | A2 | 16 GB GDDR6 | 60W | SW, HHHL or FHHL | n/a | AI Inferencing, Edge, VDI |
| NVIDIA | A16 | 64 GB GDDR6 | 250W | DW, FHFL | n/a | VDI |
| NVIDIA | A40, L40 | 48 GB GDDR6 | 300W | DW, FHFL | Y, N | Performance graphics, Multi-workload |
| NVIDIA | A30 | 24 GB HBM2 | 165W | DW, FHFL | Y | AI Inferencing, AI Training |
| NVIDIA | A100 | 80 GB HBM2e | 300W | DW, FHFL | Y, Y | AI Training, HPC, AI Inferencing |
| NVIDIA | H100 | 80 GB HBM2e | 300 - 350W | DW, FHFL | Y | AI Training, HPC, AI Inferencing |
| AMD | MI210 | 64 GB HBM2e | 300W | DW, FHFL | Y | HPC, AI Training |
| Intel | Max 1100* | 48 GB HBM2e | 300W | DW, FHFL | Y | HPC, AI Training |
| Intel | Flex 140* | 12 GB GDDR6 | 75W | SW, HHHL or FHHL | n/a | AI Inferencing |
| SXM / OAM Form Factor | | | | | | |
| NVIDIA | HGX A100* | 80 GB HBM2 | 500W | SXM w/ NVLink | n/a | AI Training, HPC |
| NVIDIA | HGX H100* | 80 GB HBM3 | 700W | SXM w/ NVLink | n/a | AI Training, HPC |
| Intel | Max 1550* | 128 GB HBM2e | 600W | OAM w/ XeLink | n/a | AI Training, HPC |

\* Development or under evaluation
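One way to work with the table above is to treat it as data and filter it by workload and power envelope. The sketch below is a hypothetical helper, not a Dell tool: the `GpuOption` class, the `CATALOG` list, and the `shortlist` function are names invented for illustration, and only a subset of the rows is transcribed. For ranges such as "300 - 350W", the upper bound is used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuOption:
    brand: str
    model: str
    max_power_w: int   # upper bound of the "Max Power Consumption" column
    form_factor: str
    workloads: tuple   # entries from the "Recommended Workloads" column


# Subset of the PCIe adapter rows, transcribed from the table above
CATALOG = [
    GpuOption("NVIDIA", "A2", 60, "SW, HHHL or FHHL", ("AI Inferencing", "Edge", "VDI")),
    GpuOption("NVIDIA", "A16", 250, "DW, FHFL", ("VDI",)),
    GpuOption("NVIDIA", "A30", 165, "DW, FHFL", ("AI Inferencing", "AI Training")),
    GpuOption("NVIDIA", "H100", 350, "DW, FHFL", ("AI Training", "HPC", "AI Inferencing")),
    GpuOption("AMD", "MI210", 300, "DW, FHFL", ("HPC", "AI Training")),
    GpuOption("Intel", "Flex 140", 75, "SW, HHHL or FHHL", ("AI Inferencing",)),
]


def shortlist(catalog, workload, max_power_w):
    """Return the models recommended for `workload` that fit the power limit."""
    return [
        gpu.model
        for gpu in catalog
        if workload in gpu.workloads and gpu.max_power_w <= max_power_w
    ]


print(shortlist(CATALOG, "AI Inferencing", 200))
print(shortlist(CATALOG, "VDI", 300))
```

A filter like this narrows the list only by the two columns it reads. Physical fit (form factor and slot count) and server qualification still need to be checked against the specific PowerEdge model.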

Related Documents

The Latest GPUs of 2022

Mon, 16 Jan 2023 13:44:30 -0000

And How We Recommend Applying Them to Enable Breakthrough Performance

Summary

Dell Technologies offers a wide range of GPUs to address different workloads and use cases. Deciding on which GPU model and PowerEdge server to purchase, based on intended workloads, can become quite complex for customers looking to use GPU capabilities. It is important that our customers understand why specific GPUs and PowerEdge servers will work best to accelerate their intended workloads. This DfD informs customers of the latest and greatest GPU offerings in 2022, as well as which PowerEdge servers and workloads we recommend to enable breakthrough performance.

PowerEdge servers support various GPU brands and models. Each model is designed to accelerate specific demanding applications by acting as a powerful assistant to the CPU. For this reason, it is vital to understand which GPUs on PowerEdge servers will best enable breakthrough performance for varying workloads. This paper describes the latest GPUs as of Q1 2022, shown below in Figure 1, to help educate PowerEdge customers on which GPU is best suited for their specific needs.

| GPU Model | Number of Cores | Peak Double Precision (FP64) | Peak Single Precision (FP32) | Peak Half Precision (FP16) | Memory Size / Type | Memory Bandwidth | Power Consumption |
|---|---|---|---|---|---|---|---|
| A2 | 2560 | N/A | 4.5 TFLOPS | 18 TFLOPS | 16GB GDDR6 | 200 GB/s | 40-60W |
| A16 | 1280 x4 | N/A | 4.5 TFLOPS x4 | 17.9 TFLOPS x4 | 16GB GDDR6 x4 | 200 GB/s x4 | 250W |
| A30 | 3804 | 5.2 TFLOPS | 10.3 TFLOPS | 165 TFLOPS | 24GB HBM2 | 933 GB/s | 165W |
| A40 | 10752 | N/A | 37.4 TFLOPS | 149.7 TFLOPS | 48GB GDDR6 | 696 GB/s | 300W |
| MI100 | 7680 | 11.5 TFLOPS | 23.1 TFLOPS | 184.6 TFLOPS | 32GB HBM2 | 1.2 TB/s | 300W |
| A100 PCIe | 6912 | 9.7 TFLOPS | 19.5 TFLOPS | 312 TFLOPS | 80GB HBM2e | 1.93 TB/s | 300W |
| A100 SXM4 | 6912 | 9.7 TFLOPS | 19.5 TFLOPS | 312 TFLOPS | 40GB HBM2 | 1.55 TB/s | 400W |
| A100 SXM4 | 6912 | 9.7 TFLOPS | 19.5 TFLOPS | 312 TFLOPS | 80GB HBM2e | 2.04 TB/s | 500W |
| T4 | 2560 | N/A | 8.1 TFLOPS | 65 TFLOPS | 16GB GDDR6 | 300 GB/s | 70W |

Figure 1 – Table comparing 2022 GPU specifications
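The figures in Figure 1 can be combined into simple derived metrics. As a rough sketch, the snippet below computes peak FP32 throughput per watt for a few of the listed GPUs and the total board memory of the four-GPU A16. The names `FIGURE_1` and `fp32_gflops_per_watt` are hypothetical, and the inputs are peak specifications copied from the table, not measured results.

```python
# (peak FP32 TFLOPS, power consumption in watts), copied from Figure 1
FIGURE_1 = {
    "A30": (10.3, 165),
    "A40": (37.4, 300),
    "MI100": (23.1, 300),
    "A100 PCIe": (19.5, 300),
    "T4": (8.1, 70),
}


def fp32_gflops_per_watt(tflops, watts):
    """Peak FP32 GFLOPS delivered per watt of board power."""
    return tflops * 1000 / watts


# Rank the models from most to least FP32 throughput per watt
ranking = sorted(
    FIGURE_1,
    key=lambda model: fp32_gflops_per_watt(*FIGURE_1[model]),
    reverse=True,
)

for model in ranking:
    print(f"{model}: {fp32_gflops_per_watt(*FIGURE_1[model]):.1f} GFLOPS/W")

# The A16 row lists per-GPU values "x4"; the board total is four times each
a16_board_memory_gb = 4 * 16
print(f"A16 board memory: {a16_board_memory_gb} GB")
```

An FP32-only ranking ignores FP64 and FP16 tensor throughput, memory bandwidth, and features such as MIG, so it should be one input among several rather than a selection rule.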

NVIDIA A2

The NVIDIA A2 is an entry-level GPU intended to boost performance for AI-enabled applications. What makes this product unique is its extremely low power limit (40W-60W), compact size, and affordable price. These attributes position the A2 as the perfect “starter” GPU for users seeking performance improvements on their servers. To benefit from the A2's inferencing performance and entry-level specifications, we suggest attaching it to mainstream PowerEdge servers, such as the R750 and R7515, which can host up to 4x and 3x A2 GPUs, respectively. We also recommend it for edge and space- or power-constrained environments served by platforms such as the XR11, which can host up to 2x A2 GPUs. Customers can expect more PowerEdge support by H2 2022, including the PowerEdge R650, T550, R750xa, and XR12.

- Supported Workloads: AI Inference, Edge, VDI, General Purpose

- Recommended Workloads: AI Inference, Edge, VDI

- Recommended PowerEdge Servers: R750, R7515, XR11

NVIDIA A16

The NVIDIA A16 is a full-height, full-length (FHFL) GPU card that has four GPUs connected together on a single board through a Mellanox PCIe switch. The A16 is targeted at customers requiring high user density for VDI environments, because it shares incoming requests across four GPUs instead of just one. This will both increase the total user count and reduce queue times per request. All four GPUs have a high memory capacity (16GB GDDR6 for each GPU) and memory bandwidth (200GB/s for each GPU) to support a large volume of users and varying workload types. Lastly, the NVIDIA A16 has a large number of video encoders and decoders for the best user experience in a VDI environment.

To take full advantage of the A16's capabilities, we suggest attaching it to newer PowerEdge servers that support PCIe Gen4. For Intel-based PowerEdge servers, we recommend the R750 and R750xa, which support 2x and 4x A16 GPUs, respectively. For AMD-based PowerEdge servers, we recommend the R7515 and R7525, which support 1x and 3x A16 GPUs, respectively.

- Supported Workloads: VDI, Video Encoding, Video Analytics

- Recommended Workloads: VDI

- Recommended PowerEdge Servers: R750, R750xa, R7515, R7525

NVIDIA A30

The NVIDIA A30 is a mainstream GPU offering targeted at enterprise customers who seek increased performance, scalability, and flexibility in the data center. This powerhouse accelerator is a versatile GPU solution because it has excellent performance specifications for a broad spectrum of math precisions, including INT4, INT8, FP16, FP32, and FP64. The ability to use third-generation Tensor Cores and the Multi-Instance GPU (MIG) feature in unison further secures quality performance gains for big and small workloads. Lastly, it has an unusually low power budget of only 165W, making it a viable GPU for virtually any PowerEdge server.

Given that the A30 GPU was built to be a versatile solution for most workloads and servers, it balances both the performance and pricing to bring optimized value to our PowerEdge servers. The PowerEdge R750, R750xa, R7525, and R7515 are all great mainstream servers for enterprise customers looking to scale. For those requiring a GPU-dense server, the PowerEdge DSS8440 can hold up to 10x A30s and will be supported in Q1 2022. Lastly, the PowerEdge XR12 can support up to 2x A30s for Edge environments.

- Supported Workloads: AI Inference, AI Training, HPC, Video Analytics, General Purpose

- Recommended Workloads: AI Inference, AI Training

- Recommended PowerEdge Servers: R750, R750xa, R7525, R7515, DSS8440, XR12

NVIDIA A40

The NVIDIA A40 is an FHFL GPU offering that combines advanced professional graphics with HPC and AI acceleration to boost the performance of graphics and visualization workloads, such as batch rendering, multi-display, and 3D display. By providing support for ray tracing, advanced shading, and other powerful simulation features, this GPU is a unique solution targeted at customers that require powerful virtual and physical displays. Furthermore, with 48GB of GDDR6 memory, 10,752 CUDA cores, and PCIe Gen4 support, the A40 keeps massive datasets and graphics workload requests moving quickly.

To accommodate the A40's hefty power budget of 300W, we suggest customers attach it to a PowerEdge server with ample power to spare, such as the DSS8440. However, if the DSS8440 is not an option, the PowerEdge R750xa, R750, R7525, and XR12 are also compatible with the A40 GPU and will function well as long as their PSUs provide adequate power output. Lastly, populating the PowerEdge T550 with A40 GPUs is also a great option for customers who want to address visually demanding workloads outside the traditional data center.

- Supported Workloads: Graphics, Batch Rendering, Multi-Display, 3D Display, VR, Virtual Workstations, AI Training, AI Inference

- Recommended Workloads: Graphics, Batch Rendering, Multi-Display

- Recommended PowerEdge Servers: DSS8440, R750xa, R750, R7525, XR12, T550

NVIDIA A100

The NVIDIA A100 focuses on accelerating HPC and AI workloads. It introduces double-precision tensor cores that significantly reduce HPC simulation run times. Furthermore, the A100 includes Multi-Instance GPU (MIG) virtualization and GPU partitioning capabilities, which benefit cloud users looking to use their GPUs for AI inference and data analytics. The newly supported sparsity feature can also double the throughput of tensor core operations by exploiting the fine-grained structure in DL networks. Lastly, A100 GPUs can be inter-connected either by NVLink bridge on platforms like the R750xa and DSS8440, or by SXM4 on platforms like the PowerEdge XE8545, which increases the GPU-to-GPU bandwidth when compared to the PCIe host interface.

The PowerEdge DSS8440 is a great server for the A100, as it provides ample power and can hold the most GPUs. If the DSS8440 is not an option, we suggest using the PowerEdge XE8545, R750xa, or R7525. Please note that only the 80GB model is supported for PCIe connections, and be sure to provide plenty of power to accommodate the A100's 300W/400W power requirements.

- Supported Workloads: HPC, AI Training, AI Inference, Data Analytics, General Purpose

- Recommended Workloads: HPC, AI Training, AI Inference, Data Analytics

- Recommended PowerEdge Servers: DSS8440, XE8545, R750xa, R7525

AMD MI100

The AMD MI100 value proposition is similar to that of the A100 in that it will best accelerate HPC and AI workloads. At 11.5 TFLOPS, its FP64 performance is industry-leading for the acceleration of HPC workloads. Similarly, at 23.1 TFLOPS, the FP32 specifications are more than sufficient for any AI workload. Furthermore, the MI100 supports 32GB of high-bandwidth memory (HBM2) to enable a whopping 1.2TB/s of memory bandwidth. In a nutshell, this GPU is designed to tackle complex, data-intensive HPC and AI workloads for enterprise customers.

The AMD MI100 is qualified on both the Intel-based PowerEdge R750xa, which supports up to 4x MI100 GPUs, and the AMD-based PowerEdge R7525, which supports up to 3x MI100 GPUs. We highly recommend adopting a powerful PSU for either server, as the MI100 also has a massive power consumption of 300W.

- Supported Workloads: HPC, AI Training, AI Inference, ML/DL

- Recommended Workloads: HPC, AI Training, AI Inference
 
- Recommended PowerEdge Servers: R750xa, R7525
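The PSU guidance in the sections above comes down to simple arithmetic: the GPU count multiplied by the per-card power. The sketch below illustrates that calculation using only the maximum GPU counts and power figures quoted in this paper. The names `MAX_GPUS`, `GPU_POWER_W`, and `gpu_power_budget_w` are hypothetical, and the result covers the GPUs alone; CPUs, memory, storage, and fans add to the total, so this is not a substitute for a proper power sizing tool.

```python
# (server, GPU model) -> maximum GPU count, as stated in the sections above
MAX_GPUS = {
    ("R750xa", "A16"): 4,
    ("R7525", "A16"): 3,
    ("DSS8440", "A30"): 10,
    ("R750xa", "MI100"): 4,
    ("R7525", "MI100"): 3,
}

# Per-card power consumption in watts, from Figure 1
GPU_POWER_W = {"A16": 250, "A30": 165, "MI100": 300}


def gpu_power_budget_w(server, gpu, count=None):
    """Return the power drawn by the GPUs alone, in watts.

    If `count` is omitted, the server is assumed to be fully populated.
    """
    limit = MAX_GPUS[(server, gpu)]
    count = limit if count is None else count
    if not 0 < count <= limit:
        raise ValueError(f"{server} supports 1 to {limit} {gpu} GPUs, got {count}")
    return count * GPU_POWER_W[gpu]


print(gpu_power_budget_w("R750xa", "MI100"))   # fully populated R750xa
print(gpu_power_budget_w("DSS8440", "A30"))    # fully populated DSS8440
print(gpu_power_budget_w("R7525", "A16", 2))   # partially populated R7525
```

Raising an error for counts above the documented maximum keeps the helper from silently producing a budget for a configuration the paper does not describe.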

Conclusion

The GPUs we are recommending in this list offer a wide variety of features that are designed to accelerate a diverse range of server workloads. A PowerEdge server configured with the most appropriate GPU will enable intended customer workloads to use these features in concert with other system components to yield the best performance. We hope this discussion of the latest 2022 GPUs, as well as our recommendations for Dell PowerEdge servers and workloads, will help customers choose the most appropriate GPU for their data center needs and business goals.

Learn More

Dell PowerEdge Accelerated Servers and Accelerators Dell eBook

Demystifying Deep Learning Infrastructure Choices using MLPerf Benchmark Suite HPC at Dell

Are Rugged Compact Platforms Ready for Edge AI?

Thu, 14 Mar 2024 16:47:06 -0000

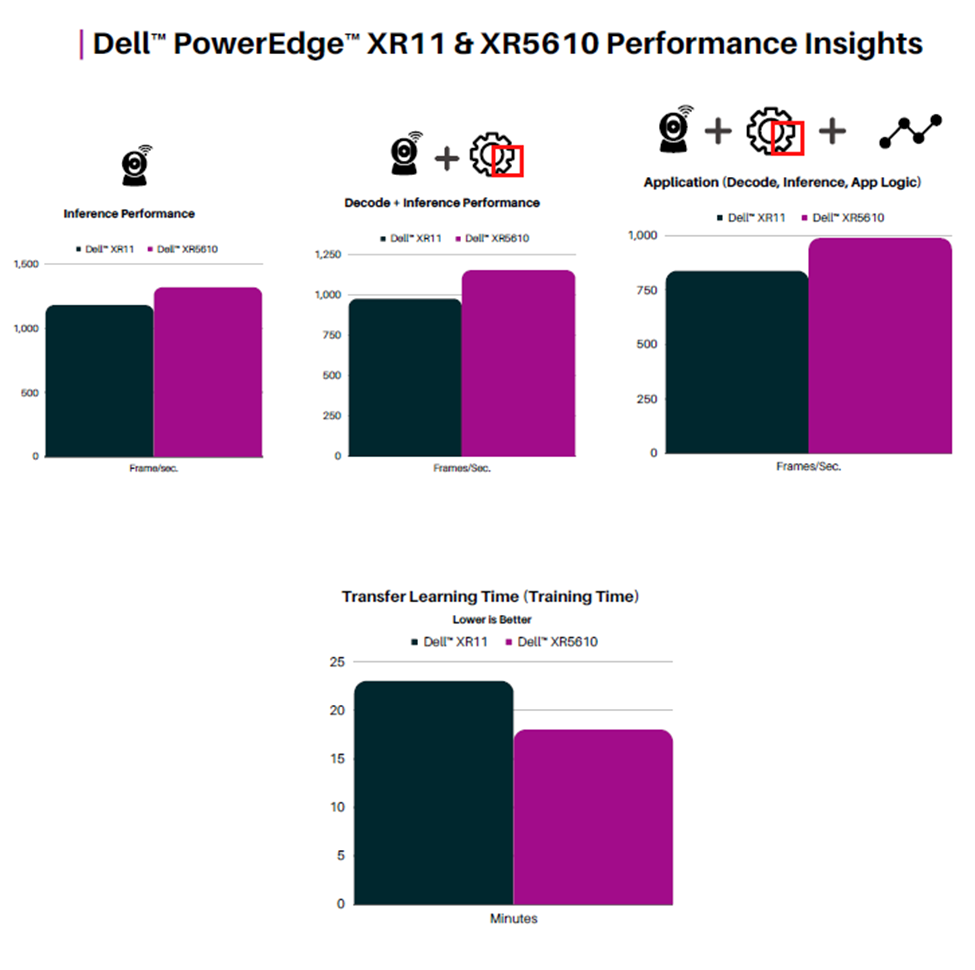

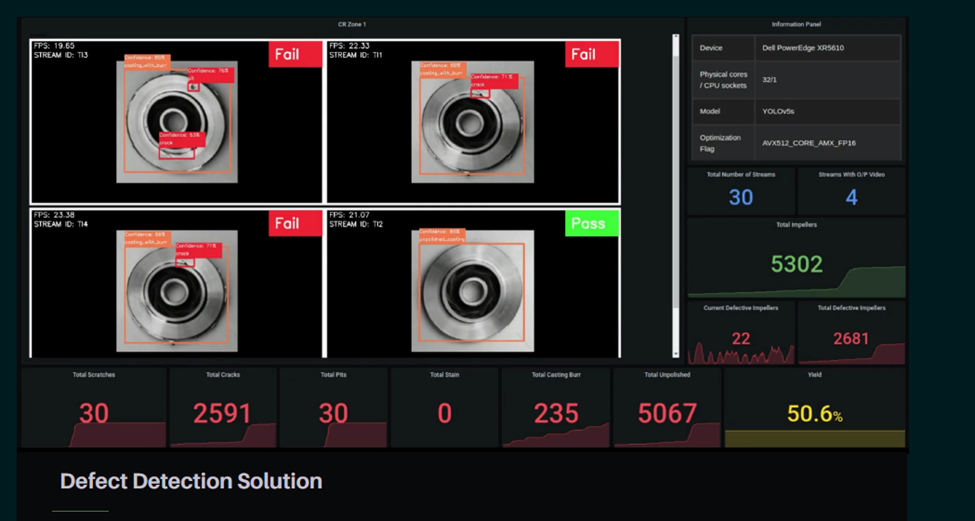

Scalers AI™ tested the Impellers Defect Inspection at the Edge on the Dell PowerEdge XR5610 server. Impellers are rotating components used in various industrial processes, including fluid handling in pumps and fans. Quality inspection of impellers is crucial to ensure their reliable performance and durability.

The Dell™ PowerEdge™ XR5610 server supports 50 simultaneous streams running AI defect detection in a single-CPU configuration, and the Dell™ PowerEdge™ XR portfolio offers scalability to four CPUs at near-linear scale. The solution delivers a 1.4x generation-over-generation performance improvement using Intel® Deep Learning Boost and Intel® OpenVINO™.

Smart Factory Solution | Defect Detection Solution

Dell™ PowerEdge™ XR5610 servers, equipped with 4th Gen Intel® Xeon® Scalable processors, are well suited to Edge AI applications, with both AI inference and training at the edge. The rugged form factor, extended temperature range, and scalability to four sockets enable compute to be deployed in the physical world closer to the point of data creation, allowing for near-real-time insights.
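To put the "near-linear" scaling claim into numbers, the following sketch estimates stream capacity as sockets are added. Only the figure of 50 streams per CPU comes from the test result above. The function name `estimate_streams` and the `efficiency_pct` parameter are assumptions made for illustration: the parameter models how much of a full CPU's stream count each additional socket contributes, and the source does not publish such a figure.

```python
def estimate_streams(sockets, per_cpu=50, efficiency_pct=100):
    """Estimate simultaneous AI inference streams for a multi-socket system.

    The first socket delivers the measured `per_cpu` streams. Each additional
    socket is assumed to contribute `efficiency_pct` percent of that figure
    (100 means perfectly linear scaling). Integer math avoids rounding noise.
    """
    if sockets < 1:
        raise ValueError("sockets must be at least 1")
    if not 0 <= efficiency_pct <= 100:
        raise ValueError("efficiency_pct must be between 0 and 100")
    extra = (sockets - 1) * per_cpu * efficiency_pct // 100
    return per_cpu + extra


print(estimate_streams(1))                      # measured single-CPU figure
print(estimate_streams(4))                      # perfectly linear upper bound
print(estimate_streams(4, efficiency_pct=95))   # hypothetical 95% scaling
```

With perfectly linear scaling, four sockets would handle 200 streams; any real deployment should be validated against the actual workload rather than this estimate.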

Fast-track development with access to the solution code:

As part of this effort, Scalers AI™ is making the solution code available, which can save hundreds of hours of development. Contact your Dell™ representative or Scalers AI™ at contact@scalers.ai for access.