Getting Started with Container Storage Interface (CSI) for Kubernetes Workloads

Fri, 27 Jan 2023 19:05:48 -0000

What is CSI in Kubernetes?

When containers (a lightweight way to package and deploy software) first gained traction, they were mostly used for stateless services that ran business logic without much data persistence. As more stateful applications were deployed in containers, the storage interface for these applications needed to be well defined in native Kubernetes constructs. This need gave rise to the CSI standard.

CSI stands for Container Storage Interface, an industry-standard specification that defines how storage providers can develop plugins that work across many container orchestration systems. Kubernetes is a common container orchestration system that makes heavy use of CSI. Kubernetes has had a GA (Generally Available) implementation of CSI since v1.13, released in December 2018.

How does CSI work?

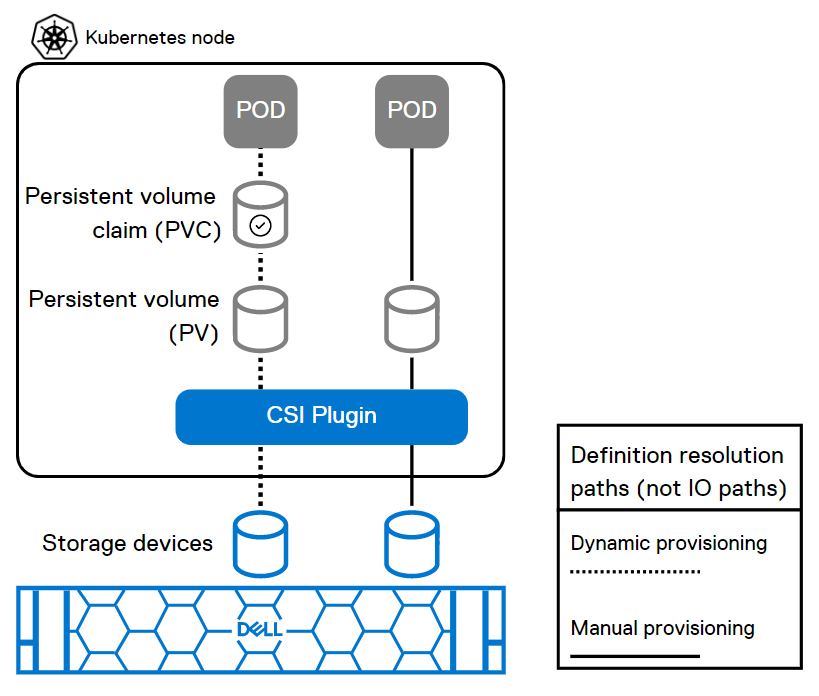

Part of the deployment declaration (manifest) of a containerized stateful service specifies the type of storage that the application needs. This can be done in two ways, dynamic provisioning and manual provisioning:

- For dynamic provisioning, the Pod (an application deployment unit) manifest points to a persistent volume claim object that references a storage class backed by the CSI plugin for the specific storage type. Kubernetes then automatically creates and connects a persistent volume of the specified storage class.

- For manual provisioning, the manifest points to an existing persistent volume that was created in advance using the storage class definition.
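The dynamic provisioning chain described above (Pod, then persistent volume claim, then storage class) can be sketched as a set of Kubernetes objects. This is a minimal illustration built as Python dictionaries and printed as JSON; the names `example-sc`, `csi.example.com`, `data-claim`, `demo-app`, the container image, and the 8Gi size are all hypothetical placeholders, not values from any particular CSI driver.

```python
import json

# Hypothetical placeholders: "example-sc", "csi.example.com", "data-claim",
# "demo-app", and the image name are illustrative only.
STORAGE_CLASS = {
    "apiVersion": "storage.k8s.io/v1",
    "kind": "StorageClass",
    "metadata": {"name": "example-sc"},
    "provisioner": "csi.example.com",  # name of the CSI driver (assumed)
    "reclaimPolicy": "Delete",
}

CLAIM = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "data-claim"},
    "spec": {
        # The claim names the storage class; no volume exists yet.
        "storageClassName": STORAGE_CLASS["metadata"]["name"],
        "accessModes": ["ReadWriteOnce"],
        "resources": {"requests": {"storage": "8Gi"}},
    },
}

POD = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "demo-app"},
    "spec": {
        "containers": [{
            "name": "app",
            "image": "registry.example.com/demo-app:latest",
            "volumeMounts": [{"name": "data", "mountPath": "/var/lib/data"}],
        }],
        # The Pod points at the claim, not at a specific volume.
        "volumes": [{
            "name": "data",
            "persistentVolumeClaim": {"claimName": CLAIM["metadata"]["name"]},
        }],
    },
}


def dynamic_provisioning_manifests():
    """Bundle the three objects into a single Kubernetes List object."""
    return {"apiVersion": "v1", "kind": "List", "items": [STORAGE_CLASS, CLAIM, POD]}


if __name__ == "__main__":
    print(json.dumps(dynamic_provisioning_manifests(), indent=2))
```

The point of the sketch is the direction of the references: the Pod only knows the claim name, and the claim only knows the storage class name, which leaves volume creation to the plugin behind that class.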

Here is a figure that illustrates the two use cases:

This figure shows how the Pod deployment manifest resolves to the storage devices through CSI for dynamic and manual provisioning.
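For the manual provisioning path, one common arrangement in Kubernetes is a pre-created persistent volume that carries a CSI driver name and a volume handle, plus a claim that names that volume explicitly. The sketch below assumes that arrangement; `precreated-pv`, `csi.example.com`, `existing-volume-id`, `example-sc`, and `static-claim` are hypothetical placeholders, and the helper is a simplified check, not the full Kubernetes binding logic.

```python
import json

# Hypothetical placeholders throughout; substitute values from a real driver.
PRECREATED_PV = {
    "apiVersion": "v1",
    "kind": "PersistentVolume",
    "metadata": {"name": "precreated-pv"},
    "spec": {
        "capacity": {"storage": "8Gi"},
        "accessModes": ["ReadWriteOnce"],
        "persistentVolumeReclaimPolicy": "Retain",
        "storageClassName": "example-sc",
        "csi": {
            "driver": "csi.example.com",           # CSI driver name (assumed)
            "volumeHandle": "existing-volume-id",  # ID of the volume on the array (assumed)
        },
    },
}

STATIC_CLAIM = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "static-claim"},
    "spec": {
        "storageClassName": "example-sc",
        "volumeName": "precreated-pv",  # pin the claim to the existing volume
        "accessModes": ["ReadWriteOnce"],
        "resources": {"requests": {"storage": "8Gi"}},
    },
}


def claim_targets_volume(claim, volume):
    """Simplified consistency check between a claim and a pre-created volume."""
    c, v = claim["spec"], volume["spec"]
    return (
        c.get("volumeName") == volume["metadata"]["name"]
        and c.get("storageClassName") == v.get("storageClassName")
        and set(c["accessModes"]) <= set(v["accessModes"])
    )


if __name__ == "__main__":
    print(json.dumps([PRECREATED_PV, STATIC_CLAIM], indent=2))
    print("claim targets volume:", claim_targets_volume(STATIC_CLAIM, PRECREATED_PV))
```

Here no new storage is created; the plugin is only asked to attach and mount the volume that the handle identifies.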

Key aspects of a CSI plugin

| Aspect | Description |
| --- | --- |
| Persistent Volume (PV) | A logical storage volume in Kubernetes that is made available inside a CO-managed container through CSI. |
| Persistent Volume Claim (PVC) | A request for storage resources, such as a persistent volume. |
| Block Volume | A volume that will appear as a block device inside the container. |
| Mounted Volume | A volume that will be mounted using the specified file system and appear as a directory inside the container. |
| CO (Container Orchestration system) | The container orchestration system, which communicates with plugins using CSI service RPCs (Remote Procedure Calls). |
| SP | Storage Provider, the vendor of a CSI plugin implementation. |
| RPC | Remote Procedure Call. |
| Node | A host where the user workload will be running, uniquely identifiable from the perspective of a plugin by a node ID. |
| Plugin | Also known as "plugin implementation," a gRPC endpoint that implements the CSI services. |
| Plugin Supervisor | A process that governs the lifecycle of a plugin; this may be the CO. |
| Workload | The atomic unit of "work" scheduled by a CO. This might be a container or a collection of containers. |
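To make the CO, plugin, and node roles in the table concrete, the following toy model walks through the order in which a CO might call a plugin's RPCs to get a volume into a workload. The RPC names in the comments (`CreateVolume`, `ControllerPublishVolume`, `NodeStageVolume`, `NodePublishVolume`) come from the CSI specification; everything else, including the `ToyPlugin` class, the paths, and the IDs, is a hypothetical in-memory stand-in. A real plugin serves these calls over gRPC, and some of them are optional depending on the capabilities the plugin advertises.

```python
from dataclasses import dataclass, field


@dataclass
class ToyPlugin:
    """In-memory stand-in for an SP's plugin (no gRPC, no real storage)."""
    volumes: dict = field(default_factory=dict)
    calls: list = field(default_factory=list)

    def create_volume(self, name: str, capacity_bytes: int) -> str:
        self.calls.append("CreateVolume")  # Controller service
        volume_id = f"vol-{len(self.volumes) + 1}"
        self.volumes[volume_id] = {"name": name, "capacity_bytes": capacity_bytes}
        return volume_id

    def controller_publish_volume(self, volume_id: str, node_id: str) -> None:
        self.calls.append("ControllerPublishVolume")  # attach volume to a node
        self.volumes[volume_id]["node_id"] = node_id

    def node_stage_volume(self, volume_id: str, staging_path: str) -> None:
        self.calls.append("NodeStageVolume")  # prepare volume once per node
        self.volumes[volume_id]["staging_path"] = staging_path

    def node_publish_volume(self, volume_id: str, target_path: str) -> None:
        self.calls.append("NodePublishVolume")  # expose volume to the workload
        self.volumes[volume_id]["target_path"] = target_path


def provision_for_workload(plugin, claim_name, capacity_bytes, node_id):
    """Play the CO role: call the plugin in the usual provisioning order."""
    volume_id = plugin.create_volume(claim_name, capacity_bytes)
    plugin.controller_publish_volume(volume_id, node_id)
    plugin.node_stage_volume(volume_id, f"/staging/{volume_id}")
    plugin.node_publish_volume(volume_id, f"/pods/{claim_name}/data")
    return volume_id


if __name__ == "__main__":
    plugin = ToyPlugin()
    provision_for_workload(plugin, "data-claim", 8 * 1024**3, "node-1")
    print(" -> ".join(plugin.calls))
```

The controller-side calls deal with the storage system as a whole, while the node-side calls run on the host where the workload is scheduled, which is why the node ID in the table matters to the plugin.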

Resources

You can explore the following resources to learn more about this topic:

- Demystifying CSI plug-in for PowerFlex (persistent volumes) with Red Hat OpenShift

- How to Build a Custom Dell CSI Driver

- Dell Ready Stack for Red Hat OpenShift Container Platform 4.6

- Solution Brief - Red Hat OpenShift Container Platform 4.10 on Dell Infrastructure

- Dell PowerStore with Azure Arc-enabled Data Services

- Persistent Storage for Containerized Applications on Kubernetes with PowerMax SAN Storage

- Amazon Elastic Kubernetes Service Anywhere on Dell PowerFlex

- What is Container Storage Interface (CSI) and how does Dell use it?

Authors: Ryan Wallner and Parasar Kodati

Related Documents

Accelerate Workload Performance with NVIDIA GPUs on VMware vSphere Tanzu and PowerEdge Servers

Wed, 21 Jun 2023 18:36:32 -0000

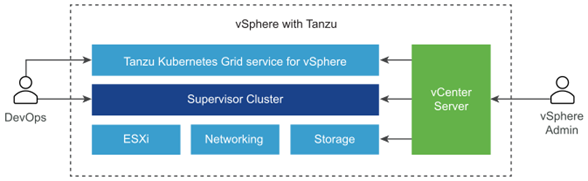

VMware vSphere with Tanzu

VMware vSphere with Tanzu transforms vSphere into a platform for running Kubernetes workloads natively on the hypervisor layer. When enabled on a vSphere cluster, vSphere with Tanzu provides the capability to run Kubernetes workloads directly on ESXi hosts and to create upstream Kubernetes clusters within dedicated resource pools. Tanzu Kubernetes Grid is an enterprise-ready Kubernetes runtime built to run any app consistently on any cloud. It runs the same Kubernetes workloads across the data center, public cloud, and edge, providing a consistent, secure experience with on-demand access while keeping workloads properly isolated.

Graphics Processing Units

Graphics processing units (GPUs) are dedicated parallel hardware accelerators originally designed for graphics-intensive processing. Today, GPUs have become an essential part of artificial intelligence workloads, especially machine learning and deep learning. Meanwhile, containers help organizations modernize applications and align them closely with current business needs (see Consolidate your VMs and Modernize Aging Environments with PowerEdge MX750c Compute Sleds and VMware Tanzu). It is only logical to let container-based applications and workloads use any accelerators that the hardware infrastructure can provide.

As investments in artificial intelligence grow and organizations explore using AI for critical business workloads, VMware vSphere with Tanzu containerized environments provide the ability to rapidly deploy applications. Although generic applications do well with standard containers, computationally demanding workloads can benefit tremendously from GPU accelerators, which process many computations concurrently. VMware Tanzu Kubernetes on NVIDIA GPUs enables enterprises to adopt and deploy artificial intelligence workloads, such as machine learning and deep learning.

NVIDIA Graphics Processing Units

The NVIDIA A100 Tensor Core GPU, based on the NVIDIA Ampere architecture, runs diverse compute-intensive applications at every scale in modern cloud data centers. These applications include AI deep learning (DL) training and inference, data analytics, scientific computing, genomics, edge video analytics and 5G services, graphics rendering, cloud gaming, and many more. Accelerating container workloads with NVIDIA GPUs helps these applications scale rapidly and perform well.

NVIDIA GPUs, with their parallel processing capabilities supported by thousands of cores, help accelerate a wide range of applications across industry segments such as:

- HPC: Deep Learning, Defense, Weather Forecasting, Bioscience research

- Consumer Applications: Transportation, Video Editing, 3D Graphics, Machine Learning

- Entertainment: Gaming, Visual Effects

- Automotive Sectors: Visual Data, Sensors, Automation

- Finance: Analytics, Security-Fraud Detection

Testing the benefits of workloads on containers with GPU acceleration

In this study, we ran ResNet-50, a deep learning image classification workload, on a Dell PowerEdge R750 server with an NVIDIA A100 Tensor Core GPU running VMware vSphere with Tanzu. NVIDIA vGPU software “creates virtual GPUs that can be shared across multiple virtual machines, accessed by any device, anywhere”. Companies can use NVIDIA vGPU software for a wide range of workloads. This approach combines the management and security benefits of virtualization with the performance of GPUs, from which many modern workloads can benefit. The results show that the PowerEdge R750 with an NVIDIA A100 Tensor Core GPU, in a VMware Tanzu Kubernetes environment with GPU virtualization, can flexibly apportion GPU compute capability across multiple machine learning workloads in VMware Tanzu Kubernetes clusters.
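As a rough illustration of how a containerized workload asks for GPU capacity, the sketch below builds a Pod manifest that requests one GPU. It assumes the cluster exposes GPUs to Kubernetes through the NVIDIA device plugin's `nvidia.com/gpu` extended resource; the Pod name, container name, and image are hypothetical, and the manifests actually used in the study are not shown in this article.

```python
import json


def gpu_workload_pod(name, image, gpus=1):
    """Build a Pod manifest that requests `gpus` GPUs (assumed resource name)."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [{
                "name": "trainer",  # hypothetical container name
                "image": image,
                # Extended resources such as GPUs are requested under limits.
                "resources": {"limits": {"nvidia.com/gpu": gpus}},
            }],
        },
    }


if __name__ == "__main__":
    # Hypothetical image reference for an image classification job.
    pod = gpu_workload_pod("resnet50-job", "registry.example.com/resnet50:latest")
    print(json.dumps(pod, indent=2))
```

With virtualized GPUs, how much physical GPU capacity sits behind each requested unit depends on how the platform administrator has configured the virtual GPU profiles, which is outside what this manifest expresses.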

Dell PowerEdge servers

Modern data centers require fast, flexible, cloud-enabled infrastructure to respond to complex compute demands. Dell PowerEdge servers provide a scalable business architecture, intelligent automation, and integrated security for workloads from traditional applications and virtualization to cloud-native workloads. The Dell PowerEdge difference is that we deliver the same user experience and the same integrated management experience across all our servers, so you have one way to patch, manage, update, refresh, and retire servers across the entire data center. PowerEdge servers also incorporate the embedded efficiencies of OpenManage systems management, which enable IT pros to focus more time on strategic business objectives and less time on routine IT tasks.

Dell PowerEdge R750

The Dell PowerEdge R750, powered by 3rd Generation Intel® Xeon® Scalable processors, is a dual-socket 2U rack server that optimizes application performance and acceleration and delivers outstanding performance for the most demanding workloads. It supports eight channels of memory per CPU and up to 32 DDR4 DIMMs at 3200 MT/s. To improve throughput, the PowerEdge R750 supports PCIe Gen 4 and up to 24 NVMe drives, with improved air-cooling features and optional Direct Liquid Cooling to support increasing power and thermal requirements. These features make the R750 an ideal server for data center standardization on a wide range of workloads, including database and analytics, high performance computing (HPC), traditional corporate IT, virtual desktop infrastructure, and AI/ML environments that require performance, extensive storage, and Data Processing Unit (DPU) and Graphics Processing Unit (GPU) support.

About the author: Thomas MM works in the technical marketing team that focuses on Dell PowerEdge with VMware software. With vast experience in the IT industry in various roles, Thomas specializes in PowerEdge servers and VMware software and works to create technical collateral that highlights the many unique benefits of running VMware software on PowerEdge servers for Dell and VMware customers and partners.

Powering Kafka with Kubernetes and Dell PowerEdge Servers with Intel® Processors

Mon, 29 Jan 2024 23:33:38 -0000

Kafka with Kubernetes

At the top of this webpage are three PDF files outlining test results and reference configurations for Dell PowerEdge servers using both 3rd Generation and 4th Generation Intel® Xeon® processors. All testing was conducted in Dell Labs by Intel and Dell engineers in October and November 2023.

- “Dell DfD Kafka ICX” – Highlights the recommended configurations for Dell PowerEdge servers using 3rd Generation Intel® Xeon® processors.

- “Dell DfD Kafka SPR” – Highlights the recommended configurations for Dell PowerEdge servers using 4th Generation Intel® Xeon® processors.

- “Dell DfD Kafka Kubernetes Test Report” – Highlights the results of performance testing on both configurations, with comparisons that demonstrate the performance differences between them.

Solution Overview

Apache Kafka, an open-source project of the Apache® Software Foundation, provides distributed event store and stream processing capabilities. Kafka uses a publish-subscribe model to enable efficient data sharing across multiple applications. Applications can publish messages to a pool of message brokers, which subsequently distribute the data to multiple subscriber applications in real time.
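The publish-subscribe idea can be sketched with a toy, in-memory broker. This is not the Kafka API or its wire protocol: `ToyBroker`, its methods, and the topic and subscriber names are all hypothetical. It only illustrates the model in which publishers append messages to a topic and each subscriber independently reads everything it has not yet seen.

```python
from collections import defaultdict


class ToyBroker:
    """Toy single-process broker: one append-only log per topic."""

    def __init__(self):
        self._logs = defaultdict(list)    # topic -> list of messages
        self._offsets = defaultdict(int)  # (topic, subscriber) -> next index to read

    def publish(self, topic, message):
        """Append a message to the topic and return its position in the log."""
        self._logs[topic].append(message)
        return len(self._logs[topic]) - 1

    def poll(self, topic, subscriber):
        """Return messages this subscriber has not read yet, then advance its offset."""
        log = self._logs[topic]
        start = self._offsets[(topic, subscriber)]
        self._offsets[(topic, subscriber)] = len(log)
        return log[start:]


if __name__ == "__main__":
    broker = ToyBroker()
    broker.publish("orders", "order-1")
    broker.publish("orders", "order-2")
    print(broker.poll("orders", "billing"))   # both messages
    print(broker.poll("orders", "shipping"))  # the same two messages, read independently
    broker.publish("orders", "order-3")
    print(broker.poll("orders", "billing"))   # only the new message
```

Because each subscriber tracks its own position, adding another subscriber does not change what the publisher does, which is the decoupling that makes the model useful for sharing data across many applications.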

Kafka is often deployed for mission-critical applications and streaming analytics, along with other use cases. These types of workloads require leading-edge performance, which places significant demand on hardware.

There are five major APIs in Kafka[i]:

- Producer API – Permits an application to publish streams of records.

- Consumer API – Permits an application to subscribe to topics and process streams of records.

- Connect API – Runs reusable producer and consumer connectors that link topics to existing applications.

- Streams API – Transforms input streams into output streams and produces the result.

- Admin API – Used to manage Kafka topics, brokers, and other Kafka objects.

Kafka with Dell PowerEdge and Intel processor benefits

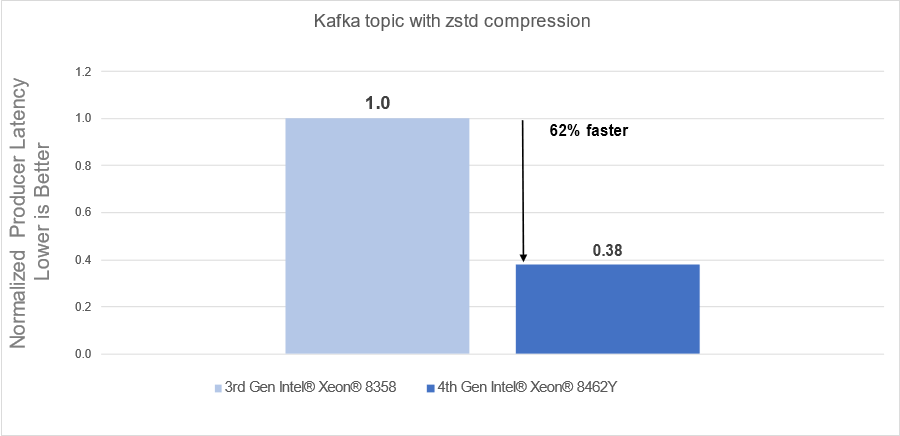

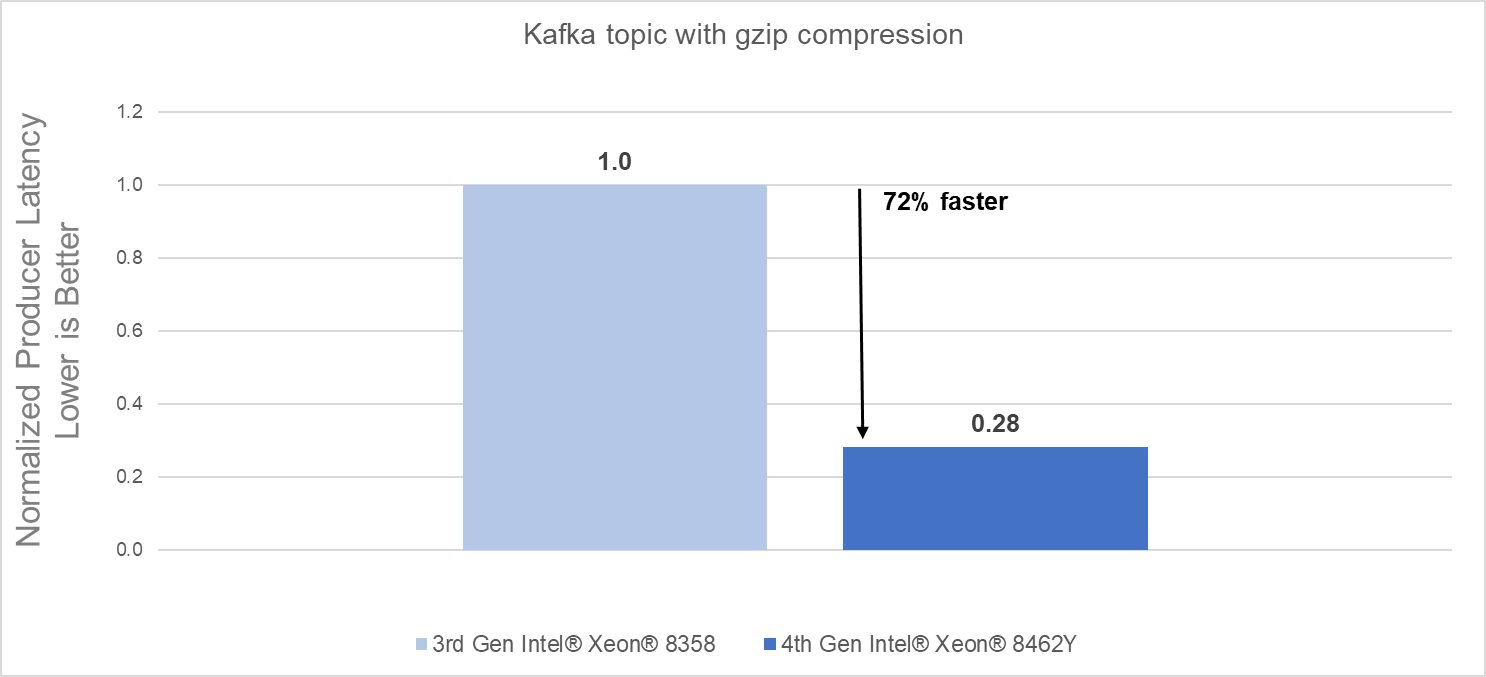

New server technologies let customers deploy solutions that use newly introduced functionality, and they also offer an opportunity to review current infrastructure and determine whether the new technology might increase performance and efficiency. Dell and Intel recently tested Kafka performance in a Kubernetes environment, measuring two compression engines on the new Dell PowerEdge R760 with 4th Generation Intel® Xeon® Scalable processors. They compared the results with the same solution running on the previous-generation R750 with 3rd Generation Intel® Xeon® Scalable processors to determine whether customers could benefit from a transition.

Some of the key changes incorporated into 4th generation Intel® Xeon® Scalable processors include:

- Intel® QuickAssist Technology (Intel® QAT) to accelerate data compression and encryption.

- Support for 4800 MT/s DDR5 memory.

Raw performance: As noted in the report, our tests showed a 72% decrease in producer latency with gzip compression and a 62% decrease in producer latency with zstd compression.
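To put the percentage decreases in perspective, the short calculation below converts a latency decrease into the remaining latency and the equivalent ratio of old to new latency. The 72% and 62% figures are the ones quoted above; the 100 ms baseline is a hypothetical round number chosen only for illustration, because the absolute latencies are in the test report and not in this article.

```python
def latency_after(baseline_ms, decrease_pct):
    """Latency that remains after a given percentage decrease."""
    return baseline_ms * (1 - decrease_pct / 100)


def old_to_new_ratio(decrease_pct):
    """How many times longer the old latency was than the new one."""
    return 1 / (1 - decrease_pct / 100)


if __name__ == "__main__":
    baseline_ms = 100.0  # hypothetical baseline, not a measured value
    for codec, decrease in (("gzip", 72), ("zstd", 62)):
        remaining = latency_after(baseline_ms, decrease)
        ratio = old_to_new_ratio(decrease)
        print(f"{codec}: {decrease}% decrease -> {remaining:.1f} ms of {baseline_ms:.0f} ms, "
              f"about {ratio:.2f}x lower latency")
```

Under that assumed baseline, a 72% decrease leaves 28 ms and a 62% decrease leaves 38 ms, which correspond to the older system taking roughly 3.57 and 2.63 times as long, respectively.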

Conclusion

Choosing the right combination of server and processor can increase performance and reduce processing time, allowing customers to react faster and process more data. As this testing demonstrated, the Dell PowerEdge R760 with 4th Generation Intel® Xeon® CPUs significantly outperformed the previous generation.

- The Dell PowerEdge R760 with 4th Generation Intel® Xeon® Scalable processors delivered:

  - 62% lower producer latency using zstd compression

  - 72% lower producer latency using gzip compression

- The benefits of 4th Generation Intel® Xeon® Scalable processors result from:

  - An innovative CPU microarchitecture that provides a performance boost

  - The introduction of DDR5 memory support

[i] https://en.wikipedia.org/wiki/Apache_Kafka