Dell Technologies PowerEdge MX 100 GbE solution with external Fabric Switching Engine

Mon, 26 Jun 2023 20:31:38 -0000


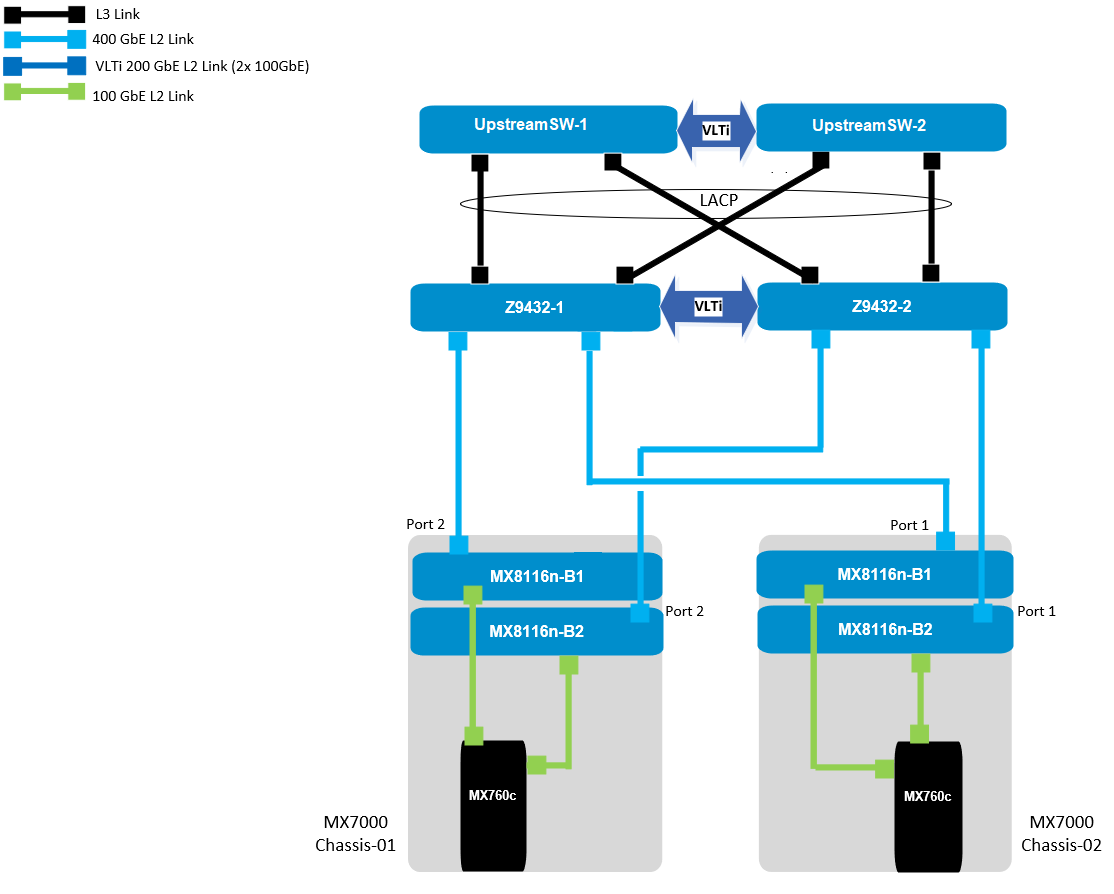

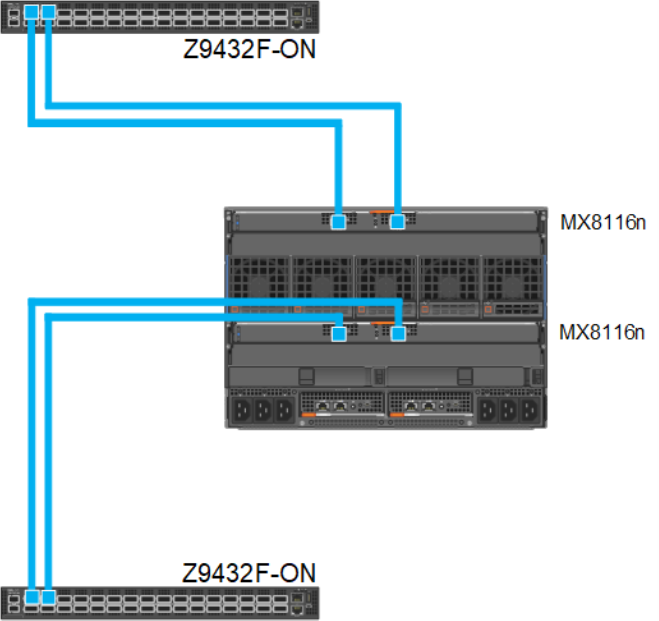

The Dell PowerEdge MX platform is advancing its position as a leading high-performance data center infrastructure by introducing a 100 GbE networking solution. This evolved networking architecture not only provides 100 GbE speed but also increases the number of MX7000 chassis supported within a Scalable Fabric. The 100 GbE networking solution introduces a new type of architecture, built around an external Fabric Switching Engine (FSE).

PowerEdge MX 100 GbE solution design example

For simplicity, the diagram shows only one connection on each MX8116n. For the complete port mappings, see the port-mapping section of the Dell PowerEdge Networking Deployment Guide (listed under References).

Figure 1. 100 GbE solution example topology

Components for 100 GbE networking solution

The key hardware components for 100 GbE operation within the MX platform are briefly described below.

Dell Networking MX8116n Fabric Expander Module

The MX8116n FEM includes two QSFP56-DD interfaces, each providing up to 4x 100 Gbps connections to the chassis. Internally, it provides 8x 100 GbE server-facing ports for 100 GbE NICs and 16x 25 GbE server-facing ports for 25 GbE NICs.

The MX7000 chassis supports up to four MX8116n FEMs in Fabric A and Fabric B.
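As a quick sanity check on the port counts above, the following minimal Python sketch totals the nominal bandwidth on each side of one MX8116n. The constant and function names are illustrative only, and the result is plain arithmetic on the stated port counts, not a statement about how traffic is actually scheduled.

```python
# Port counts taken from the MX8116n description above.
QSFP56_DD_PORTS = 2       # fabric expander ports per FEM
LINKS_PER_QSFP56_DD = 4   # up to 4x 100 Gbps per interface
LINK_SPEED_GBPS = 100


def uplink_capacity_gbps() -> int:
    """Nominal aggregate bandwidth of the two QSFP56-DD interfaces."""
    return QSFP56_DD_PORTS * LINKS_PER_QSFP56_DD * LINK_SPEED_GBPS


def server_facing_capacity_gbps(port_count: int, port_speed_gbps: int) -> int:
    """Nominal aggregate bandwidth of the internal server-facing ports."""
    return port_count * port_speed_gbps


print("QSFP56-DD side:", uplink_capacity_gbps(), "Gbps")
print("8x 100 GbE NIC ports:", server_facing_capacity_gbps(8, 100), "Gbps")
print("16x 25 GbE NIC ports:", server_facing_capacity_gbps(16, 25), "Gbps")
```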

Figure 2. MX8116n FEM

The following MX8116n FEM components are labeled in the preceding figure:

- Express service tag

- Power and indicator LEDs

- Module insertion and removal latch

- Two QSFP56-DD fabric expander ports

Dell PowerEdge MX760c compute sled

- The MX760c is ideal for dense virtualization environments and can serve as a foundation for collaborative workloads.

- Businesses can install up to eight MX760c sleds in a single MX7000 chassis and combine them with compute sleds from different generations.

- Single or dual CPU (up to 56 cores per processor/socket with 4x UPI @ 24 GT/s) and 32x DDR5 DIMM slots with eight memory channels.

- 8x E3.S NVMe (Gen5 x4), 6x 2.5" SAS/SATA SSDs, or 6x NVMe (Gen4) SSDs, and iDRAC9 with Lifecycle Controller.

Note: The 100 GbE Dual Port Mezzanine card is also available on the MX750c.

Figure 3. Dell PowerEdge MX760c sled with eight E3.S SSD drives

Dell PowerSwitch Z9432F-ON external Fabric Switching Engine

The Z9432F-ON provides state-of-the-art, high-density 100/400 GbE ports and a broad range of functionality to meet the growing demands of modern data center environments. Key features include:

- Compact 1RU design with an industry-leading density of 32 ports of 400 GbE in QSFP56-DD, 128 ports of 100 GbE, or up to 144 ports of 10/25/50 GbE (through breakout).

- Up to 25.6 Tbps non-blocking (full duplex) switching fabric that delivers line-rate performance under full load.

- L2 multipath support using Virtual Link Trunking (VLT) and Routed VLT.

- Scalable L2 and L3 Ethernet switching with QoS and a full complement of standards-based IPv4 and IPv6 features, including OSPF and BGP routing support.

Figure 4. Dell PowerSwitch Z9432F-ON
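The Z9432F-ON port and bandwidth figures quoted above are consistent with each other, as the short Python sketch below shows. It assumes that each 400 GbE port breaks out into four 100 GbE ports and that the full-duplex figure counts both directions. The variable names are illustrative, and the 144-port 10/25/50 GbE figure is not derived here.

```python
# Figures taken from the Z9432F-ON description above.
PORTS_400G = 32
PORT_SPEED_GBPS = 400
BREAKOUT_100G_PER_PORT = 4  # assumption: one 400 GbE port -> 4x 100 GbE

# Aggregate bandwidth in one direction, then doubled for full duplex.
one_way_gbps = PORTS_400G * PORT_SPEED_GBPS
full_duplex_gbps = 2 * one_way_gbps

# Number of 100 GbE ports available through breakout.
ports_100g = PORTS_400G * BREAKOUT_100G_PER_PORT

print(f"One direction: {one_way_gbps / 1000} Tbps")
print(f"Full duplex:   {full_duplex_gbps / 1000} Tbps")
print(f"100 GbE ports: {ports_100g}")
```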

Note: Mixed dual port 100 GbE and quad port 25 GbE mezzanine cards connecting to the same MX8116n are not a supported configuration.

100 GbE deployment options

There are four deployment options for the 100 GbE solution, and every option requires servers with a dual port 100 GbE mezzanine card. You can install the mezzanine card in mezzanine slot A, slot B, or both. When you use the Broadcom 575 KR dual port 100 GbE mezzanine card, set the Z9432F-ON port-group profile to unrestricted and configure the port mode as 100g-4x.

PowerSwitch CLI example:

port-group 1/1/1

profile unrestricted

port 1/1/1 mode Eth 100g-4x

port 1/1/2 mode Eth 100g-4x
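When several port-groups need the same settings, the command lines can be generated rather than typed by hand. The following is a minimal Python sketch that only reproduces the command pattern shown above. The helper name `render_port_group` is hypothetical, and the mapping of ports to port-groups beyond the 1/1/1 example is not covered here, so it must be taken from the switch documentation.

```python
from typing import Iterable, List


def render_port_group(
    port_group: str,
    ports: Iterable[str],
    profile: str = "unrestricted",
    mode: str = "Eth 100g-4x",
) -> List[str]:
    """Return the CLI lines for one port-group, in the order shown above."""
    lines = [f"port-group {port_group}", f"profile {profile}"]
    # One "port ... mode ..." line per member port of the port-group.
    lines += [f"port {port} mode {mode}" for port in ports]
    return lines


# Values from the CLI example in this article.
config = render_port_group("1/1/1", ["1/1/1", "1/1/2"])
print("\n".join(config))
```

The sketch produces text only. Review the output against the deployment guide before applying it to a switch.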

Note: In a 100 GbE solution deployment, a maximum of 14 chassis is supported in a single fabric, and a maximum of 7 chassis is supported in a dual fabric, when using the same pair of FSEs.
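The chassis limits in the note can be captured in a small planning check. The Python sketch below is illustrative only: the names `MAX_CHASSIS` and `chassis_count_supported` are hypothetical, and the limits are the two values stated in the note for one pair of FSEs.

```python
# Maximum chassis per pair of FSEs, as stated in the note above.
MAX_CHASSIS = {"single": 14, "dual": 7}


def chassis_count_supported(fabric_mode: str, chassis_count: int) -> bool:
    """Return True if the chassis count fits within the stated limit."""
    if fabric_mode not in MAX_CHASSIS:
        raise ValueError(f"unknown fabric mode: {fabric_mode!r}")
    return 1 <= chassis_count <= MAX_CHASSIS[fabric_mode]


print(chassis_count_supported("single", 14))  # within the single fabric limit
print(chassis_count_supported("dual", 8))     # exceeds the dual fabric limit
```

With two MX8116n per chassis in a single fabric and four per chassis in a dual fabric, both limits work out to the same total of 28 FEMs (14 x 2 and 7 x 4). This is an observation from the arithmetic, not a documented rule.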

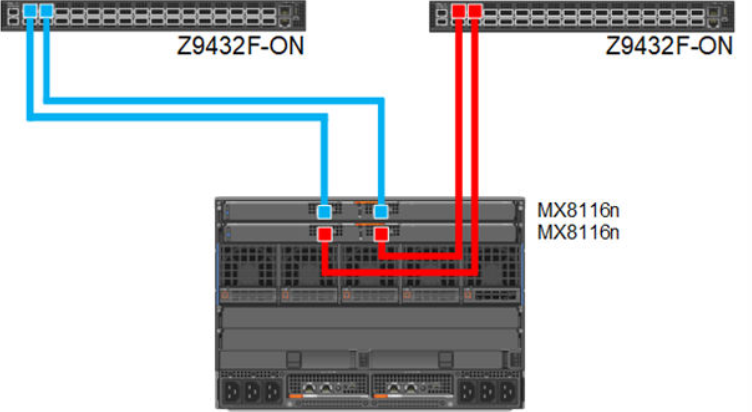

Single fabric

In a single fabric deployment, two MX8116n FEMs are installed in either Fabric A or Fabric B, and the 100 GbE mezzanine card is installed in the corresponding mezzanine slot (slot A or slot B) of each sled.

Figure 5. 100 GbE Single Fabric

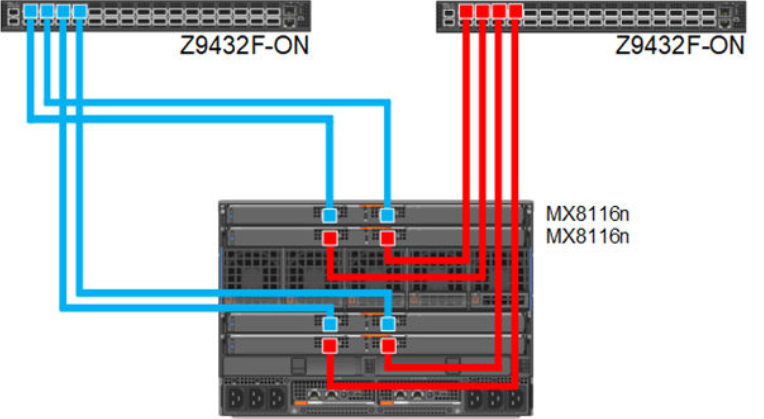

Dual fabric, combined fabrics

In this option, four MX8116n FEMs (2x in Fabric A and 2x in Fabric B) can be installed and combined to connect to the Z9432F-ON external FSE.

Figure 6. 100 GbE Dual Fabric combined Fabrics

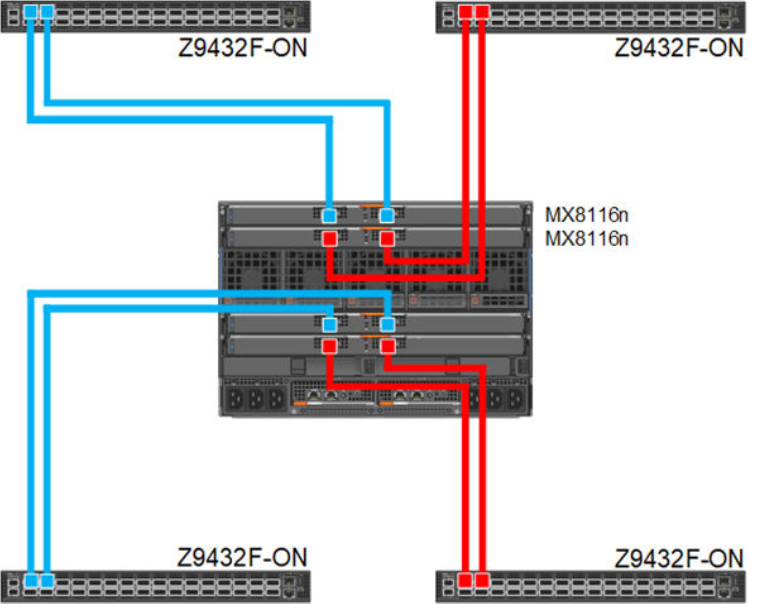

Dual fabric, separate fabrics

In this option, four MX8116n FEMs (2x in Fabric A and 2x in Fabric B) can be installed and connected to two different networks. In this case, the MX760c server module has two mezzanine cards, with each card connected to a separate network.

Figure 7. 100 GbE Dual Fabric separate Fabrics

Dual fabric, single MX8116n in each fabric, separate fabrics

In this option, two MX8116n FEMs (1x in Fabric A and 1x in Fabric B) can be installed and connected to two different networks. In this case, the MX760c server module has two mezzanine cards, each connected to a separate network.

Figure 8. 100 GbE Dual Fabric single FEM in separate Fabrics
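The four deployment options differ mainly in how many fabrics are populated, how many MX8116n FEMs sit in each fabric, and whether the fabrics connect to separate networks. The Python sketch below summarizes them as data for side-by-side comparison. The class and field names are illustrative, and the values are read from the descriptions above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentOption:
    name: str
    fabrics_used: int        # 1 = Fabric A or B, 2 = Fabric A and B
    fems_per_fabric: int     # MX8116n FEMs installed in each used fabric
    separate_networks: bool  # True if each fabric connects to its own network

    @property
    def total_fems(self) -> int:
        """Total MX8116n FEMs per chassis for this option."""
        return self.fabrics_used * self.fems_per_fabric


OPTIONS = [
    DeploymentOption("Single fabric", 1, 2, False),
    DeploymentOption("Dual fabric, combined fabrics", 2, 2, False),
    DeploymentOption("Dual fabric, separate fabrics", 2, 2, True),
    DeploymentOption("Dual fabric, single MX8116n in each fabric", 2, 1, True),
]

for option in OPTIONS:
    networks = "separate networks" if option.separate_networks else "one network"
    print(f"{option.name}: {option.total_fems} FEMs per chassis, {networks}")
```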

References

Dell PowerEdge Networking Deployment Guide

A chapter about 100 GbE solution with external Fabric Switching Engine