Dell Shifts vRAN into High Gear on PowerEdge with Intel vRAN Boost

Thu, 17 Aug 2023 18:33:05 -0000


What has passed

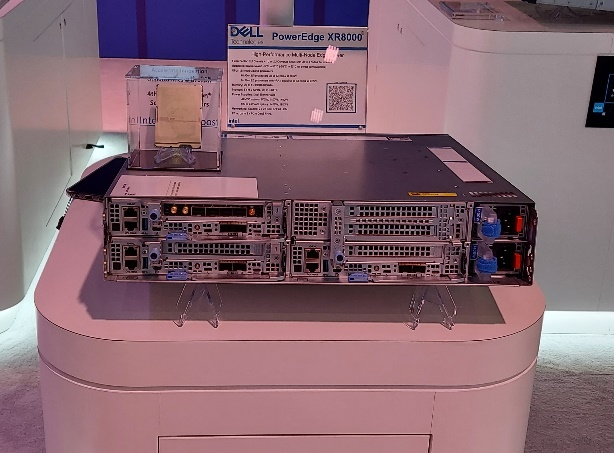

Mobile World Congress 2023 was an important event for both Dell Technologies and Intel that marked a true foundational turning point for vRAN viability. At this event, Intel launched its 4th Gen Intel Xeon Scalable processor, with Intel vRAN Boost, and Dell announced two new ruggedized server platforms, the PowerEdge XR5610 and XR8000, with support for vRAN Boost CPU SKUs.

The features and capabilities of the PowerEdge XR5610 and XR8000 have been highlighted in previous blogs and both have been available to order since May 2023. These new ruggedized servers have been evaluated and adopted as Cloud RAN reference platforms by NEPs such as Samsung and Ericsson. Short-depth, NEBS certified and TCO-optimized, these servers are purpose-built for the demanding deployment environments of Mobile Operators and are now married to the Intel vRAN Boost processor to provide a powerful and efficient alternative to classical appliance options.

What is now

Starting August 16, 2023, the 4th Gen Intel Xeon Scalable processor with Intel vRAN Boost is available to order with the PowerEdge XR5610 and XR8000. These two critical pieces of the vRAN puzzle have now been brought together and can be ordered from our PowerEdge XR Rugged Servers page with the following CPU SKUs:

| CPU SKU | Cores | Base Freq. (GHz) | TDP |
|---|---|---|---|
| 6433 N | 32 | 2.0 | 205 W |
| 5423 N | 20 | 2.1 | 145 W |
| 6423 N | 28 | 2.0 | 195 W |
| 6403 N | 24 | 1.9 | 185 W |

Table 1. Intel vRAN Boost SKUs available today from Dell
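
For power-constrained cell sites, one rough way to compare the SKUs in Table 1 is TDP per core. The short sketch below uses only the core counts and TDP values listed in the table. TDP is a thermal design figure rather than measured power draw, so the ranking is only a first-order guide, and the `watts_per_core` helper is a name introduced here purely for illustration.

```python
# Core counts and TDP values copied from Table 1.
SKUS = [
    ("6433 N", 32, 205),
    ("5423 N", 20, 145),
    ("6423 N", 28, 195),
    ("6403 N", 24, 185),
]


def watts_per_core(cores: int, tdp_watts: float) -> float:
    """Return TDP divided by core count (illustrative helper, not a Dell or Intel metric)."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return tdp_watts / cores


# Rank the SKUs from lowest to highest TDP per core.
ranked = sorted(SKUS, key=lambda sku: watts_per_core(sku[1], sku[2]))

for name, cores, tdp in ranked:
    print(f"{name}: {watts_per_core(cores, tdp):.2f} W per core")
```

Under this simple measure the highest core count SKU has the lowest TDP per core, although actual consumption depends on the workload and platform configuration.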

Additional details on these new CPU SKUs and all 4th Gen Intel® Xeon® Scalable processors can be found on the Intel Ark Site.

These processors, with Intel vRAN Boost, integrate key acceleration blocks for 4G and 5G Radio Layer 1 processing into the CPU. These include:

- 5G Low Density Parity Check (LDPC) encoder/decoder

- 4G Turbo encoder/decoder

- Rate match/dematch

- Hybrid Automatic Repeat Request (HARQ) with access to DDR memory for buffer management

- Fast Fourier Transform (FFT) block providing DFT/iDFT for the 5G Sounding Reference Signal (SRS)

- Queue Manager (QMGR)

- DMA subsystem

One of the most interesting features of the vRAN Boost CPU is how this acceleration block is accessed by software. Although it is integrated on-chip with the CPU, the vRAN Boost block still presents itself to the Cores/OS as a PCIe device. The genius of this approach is in software compatibility. Virtual Distributed Unit (vDU) applications written for the previous generation HW will access the new vRAN Boost block using the same standardized, open APIs that were developed for the previous generation product. This creates a platform that can support past, present (and possibly future) generations of Intel’s vRAN optimized HW with the same software image.
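
Because the integrated block appears to the OS as a PCIe device, it can in principle be discovered the same way as a discrete accelerator card. The sketch below is a minimal illustration for Linux that walks the sysfs PCI device directory and reports matching devices. The device IDs in `ASSUMED_ACCELERATOR_IDS` are assumptions that do not come from this post and should be checked against the accelerator driver documentation. The function name and the labels are hypothetical.

```python
from pathlib import Path

INTEL_VENDOR_ID = "0x8086"

# ASSUMPTION: these PCI device IDs are illustrative and are not stated in this
# post; verify them against the driver documentation before relying on them.
ASSUMED_ACCELERATOR_IDS = {
    "0x0d5c": "ACC100 (discrete PCIe card)",
    "0x57c0": "vRAN Boost (integrated)",
}


def find_accelerators(sysfs_root="/sys/bus/pci/devices",
                      device_ids=ASSUMED_ACCELERATOR_IDS):
    """Return (pci_address, label) pairs for Intel devices whose ID is in device_ids."""
    found = []
    root = Path(sysfs_root)
    if not root.is_dir():
        # Not a Linux host, or sysfs is not mounted: nothing to report.
        return found
    for dev in sorted(root.iterdir()):
        try:
            vendor = (dev / "vendor").read_text().strip().lower()
            device = (dev / "device").read_text().strip().lower()
        except OSError:
            # Skip entries that do not expose vendor/device files.
            continue
        if vendor == INTEL_VENDOR_ID and device in device_ids:
            found.append((dev.name, device_ids[device]))
    return found


if __name__ == "__main__":
    for address, label in find_accelerators():
        print(f"{address}: {label}")
```

The point of the sketch is that the discovery logic is identical for both generations and only the device ID differs, which is what lets the same software image address either form of the accelerator.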

What is to come

Prior to vRAN Boost, the reference architecture for a vDU was a 3rd Gen Intel Xeon Scalable processor paired with a FEC/LDPC accelerator, such as the Intel vRAN Accelerator ACC100 Adapter, and most of today’s vRAN deployments use this configuration. While the ACC100 does meet the L1 acceleration needs of vRAN, it does so at a price: the space of an HHHL PCIe card and an additional 54 W of power consumed (and cooled). In addition, occupying a PCIe slot reduces the remaining I/O expansion options and limits the ability to scale in-chassis due to slot count. Both constraints are alleviated with vRAN Boost.

With the new Intel vRAN Boost processors’ fully integrated acceleration, Intel has taken a huge step in closing the performance gap with purpose-built hardware, while remaining true to the “Open” in O-RAN.

Intel says that the new Intel vRAN Boost processor delivers up to 2x capacity and ~20% compute power savings compared to its previous generation processor with ACC100 external acceleration. At the Cell Site, where every watt is counted, operators are constantly exploring opportunities to reduce both power consumption and the associated “cooling tax” of keeping the HW in its operational range, typically within a sealed environment.
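
To see what those two figures imply together, the sketch below combines them into a single capacity-per-watt ratio. It assumes, purely for illustration, that the 2x capacity and ~20% power savings figures apply to the same workload at the same time. Both are quoted as upper bounds, so the result is a best-case estimate rather than a measured value. The function name is hypothetical.

```python
def capacity_per_watt_gain(capacity_ratio: float, power_saving_fraction: float) -> float:
    """Relative capacity-per-watt versus the baseline.

    capacity_ratio: new capacity divided by old capacity (2.0 for "2x").
    power_saving_fraction: fraction of power saved (0.20 for "~20%").
    """
    if capacity_ratio <= 0:
        raise ValueError("capacity_ratio must be positive")
    if not 0 <= power_saving_fraction < 1:
        raise ValueError("power_saving_fraction must be in [0, 1)")
    # New power is (1 - saving) times the old power, so the ratio of
    # capacity-per-watt is capacity_ratio / (1 - saving).
    return capacity_ratio / (1.0 - power_saving_fraction)


gain = capacity_per_watt_gain(2.0, 0.20)
print(f"Best-case capacity per watt: {gain:.2f}x the previous generation")
```

Under these assumptions, twice the capacity at roughly 80% of the power works out to about 2.5 times the capacity per watt.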

Dell and Intel have worked together to provide early-access XR5610s and XR8000s provisioned with Intel vRAN Boost to multiple partners and customers for integration, evaluation, and proofs of concept. One early evaluator, Deutsche Telekom, states:

“Deutsche Telekom recently conducted a performance evaluation of Dell’s PowerEdge XR5610 server, based on Intel’s 4th Gen Intel Xeon Scalable processor with Intel vRAN Boost. Testing under selected scenarios concluded a 2x capacity gain, using approximately 20% less power versus the previous generation. We aim to leverage these significant performance gains on our journey to vRAN.”

-- Petr Ledl, Vice President of Network Trials and Integration Lab and Access Disaggregation Chief Architect, Deutsche Telekom AG

With such a solid industry foundation of the telecom-optimized PowerEdge XR5610s/XR8000s, and 4th Gen Intel Xeon Scalable processors with Intel vRAN Boost, expect to see accelerated deployments of open, vRAN-based infrastructure solutions.