Dell PowerScale and Marvel Partner to Create Optimal Media Workflows

Tue, 01 Aug 2023 17:03:47 -0000


Now in its 9th generation, Emmy-award-winning Dell PowerScale storage has been field proven in media workflows for over two decades and is the world’s most flexible¹, efficient², and secure³ scale-out NAS solution.

Our partnership with Marvel Studios is a wonderful example of the innovations we collaborate on with leading media and entertainment companies around the world—with PowerScale as the preeminent storage solution that enables data-driven workflows to accelerate content-creation pipelines.

Hear about Marvel Studios’ implementation of PowerScale directly in this educational video series from The Advanced Imaging Society:

The PowerScale OneFS advantage

The underlying OneFS file system leverages the foundations of clustered high-performance computing to solve the challenges of data protection at scale and client accessibility in a massively parallelized way. In practice, a single namespace that easily scales out with nodes to increase performance and capacity is a fundamentally game-changing architecture.

Media workflows require broad access for applications and users to enable collaboration, balanced with security that doesn’t impede performance. Further, performance and access can’t be impeded during drive rebuilds after a hardware failure or during system upgrades, so that production work can continue uninterrupted while maintenance is performed in the background.

Maximizing uptime correlates with fundamental business needs including meeting project timelines and budgets while ensuring that personnel have access to the content at the required performance levels, even during a background maintenance activity.

As a sufficiently advanced enterprise-class solution, PowerScale incorporates these capabilities to eliminate complexity and provide for increased uptime through its self-healing and self-managing functionality. (For more information, see the PowerScale OneFS Technical Overview.) This takes many of the traditional storage management burdens off the administrator’s plate, lowering the overhead and time needed to maintain storage, which is often increasing in size and scale.

While the benefits of collaboration over Ethernet-based storage are inherent in PowerScale, the user experience is also paramount, and it depends on the performance of both the network and the underlying storage system. Operations such as playout and scrubbing need to perform reliably with no frame drops while providing response times that look and feel equivalent to working from the local workstation.

Having participated in the development of media storage solutions spanning SCSI, Fibre Channel, iSCSI, SAN, and Ethernet, I’ve been able to test and compare solutions over the years and have closely watched the evolution and trends of these protocols in relation to their ability to support media workflows.

In 2007, I demonstrated real-time color grading with uncompressed content over 1 Gb Ethernet, the first of its kind. At the time, using Ethernet-based storage for color grading was largely unheard of, with few applications supporting it. That was more of an exercise to showcase the art of the possible in comparison with Fibre Channel-based solutions. The wider adoption of Ethernet for this particular use case was not yet of high interest because Ethernet speeds still needed to evolve. However, 1 Gb Ethernet was very appropriate for compressed media workflows and rendering, which were well aligned with the high-performance, scale-out design of PowerScale.

As 10 Gb Ethernet speeds became prevalent, there was a significant uptick in the adoption of Ethernet-based storage compared to Fibre Channel-based solutions for media use cases. I also started to see more datasets being moved over Ethernet rather than by sneakernet (physically delivering drives and tapes between locations). This led to cost and time savings for project timelines and budgets, among other benefits.

Fast-forward to 2014, when, with the OneFS 7.1.1 version supporting SMB multi-channel, we were able to use two 10 Gb Ethernet connections to support a stream of full resolution uncompressed 4K, whereas a single 10 Gb connection was only capable of supporting 2K full resolution streams. This began an adoption trend of Ethernet solutions for 4K full-resolution workflows.

In 2017, with the release of the F800 All-Flash PowerScale and OneFS 8.1, 40 Gb Ethernet speeds were supported. The floodgates were opened for media workflows. Multiple full-resolution 2K and 4K workflows could run on a single shared OneFS namespace with uncompromised performance. Workload consolidation became possible and started to eliminate the need for multiple discrete storage solutions that were each supporting different parts of the pipeline, bringing them together under a single unified OneFS namespace to streamline environments.

Complete pipeline transformations were taking place and began to replace iSCSI and Fibre Channel-based solutions at an accelerated pace, as those solutions were siloed within workgroups and inflexible with the emerging needs of collaboration. When the PowerScale F900 NVMe solution supporting 100 Gb Ethernet came out in 2021, the technology was set to change the industry yet again.

With the increasing prevalence of 100 Gb Ethernet over these past few years, performance parity with Fibre Channel-based solutions to support full-resolution 4K, 8K, and all related media workflows in between is no longer in question. Native Ethernet-based solutions are preferred for many reasons—including cloud capability, scale, cost, and supportability—to facilitate unstructured media datasets, leveraging the abundance of network engineering talent in comparison to Fibre Channel-trained engineers.

With reliability, performance, and shared access for collaboration delivering uncompromised benefits, we now look to several PowerScale storage capabilities that enable rich media ecosystems to be further streamlined and flourish.

There are four additional key areas of focus and their underlying feature sets that are increasingly important to today’s media ecosystems. They encompass:

- Security, physical and logical

- API orchestration

- Data movement

- Quality of service

Security

- In relation to PowerScale being the world’s most secure scale-out NAS solution and in alignment with the Trusted Partner Network (TPN), the OneFS operating system meets or exceeds the Motion Picture Association (MPA) Content Security Best Practices (CSBP) in all relevant areas of the Content Security Model (CSM).⁴

- Further, I’m seeing greater adoption of self-encrypting drives (SEDs), which provide encryption at the physical layer. Security auditing and multi-factor authentication are among the features being employed to protect the logical layer. Specifically, auditing has been available and used for many years now to provide a range of benefits beyond security.

- The real-time audit logs can be parsed to provide performance introspection, analysis, and user-trend insights in addition to identifying abnormal data-access patterns. The logs can also be used to correlate with levels of access to specific projects and files, correlating back to business-level insights and reports as well.

- I’m also keen on mentioning the OneFS embedded firewall, which provides connection-level protection at the storage network layer. Firewalls are typically employed in front of the storage or further upstream on the network, so having an additional firewall within the storage that protects the network ports on the storage itself is a powerful layer of security.

For more information about OneFS security, see Dell PowerScale OneFS: Security Considerations.

Data orchestration

- Data orchestration is paramount to workflow automation. If aspects of workflows can be automated and don’t require an operator to make a decision, they should be automated to remove the possibility for operator error and streamline the environment to accelerate workflows where possible.

- Orchestration is enabled through API calls to integrate PowerScale with the environment’s application layers, which can take mundane repeatable tasks off the operator’s plate and increase workflow efficiency.

For more information about PowerScale data orchestration, see the OneFS documentation on Dell Support.

Data movement

- Data movement is integral to media workflows today, and for that we look to the high-performance, highly reliable SyncIQ protocol embedded in PowerScale OneFS. SyncIQ facilitates secure, parallelized transfer of datasets between PowerScale solutions, providing strong benefits for media workflows. Replication policies can be set up or initiated ad hoc to transfer datasets between PowerScale solutions over the network.

- With SyncIQ, PowerScale is both a storage platform and a data transfer engine, so additional servers and transfer applications, which would incur additional cost and management overhead, don’t need to be implemented in front of PowerScale.

The PowerScale Backup and Recovery Guide provides more information about data movement capabilities in OneFS.

Quality of service

- Quality of service is increasingly important and has always been of interest for media workflows. SmartQoS is an embedded OneFS feature that monitors front-end protocol traffic over NFS, SMB, and S3. It allows limits to be set for the number of protocol operations to tie them back to performance SLAs, prioritization of workloads, and support for throttling to prevent specific clients from saturating a connection. I’ve seen an unthrottled copy job use the available connection bandwidth and interrupt a playout, so that’s an example of a use case where SmartQoS can be applied.

- Clients can be logically grouped and monitored with all kinds of metrics being captured to quantify and profile workloads. Introspection into read and write latencies, IOPS, and many other metrics on a per-protocol, path, IP address, user, and group basis can be captured and correlated in real time. Metrics can be tracked and enforced to provide quality of service for specific classes of users and workflows, which can all be defined to manage workloads.
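To make the idea of a protocol-operations limit concrete, here is a minimal sketch of a generic token-bucket limiter. It is not how SmartQoS is implemented; the class name `OpsLimiter`, its parameters, and the explicit `now` timestamps are assumptions chosen purely for illustration.

```python
class OpsLimiter:
    """Generic token bucket: allows up to `ops_per_second` operations on
    average, with short bursts of up to `burst` operations.
    Illustrative only; not the SmartQoS implementation."""

    def __init__(self, ops_per_second, burst):
        self.rate = float(ops_per_second)
        self.capacity = float(burst)
        self.tokens = float(burst)   # start with a full bucket
        self.last = 0.0              # timestamp of the previous check

    def allow(self, now):
        """Return True if one operation may proceed at time `now` (seconds)."""
        elapsed = max(0.0, now - self.last)
        # Refill in proportion to elapsed time, capped at the burst size.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# A hypothetical copy job limited to 2 operations per second.
copy_job = OpsLimiter(ops_per_second=2, burst=2)
decisions = [copy_job.allow(t) for t in (0.0, 0.0, 0.0, 0.5, 0.5)]
print(decisions)
```

In this sketch the third back-to-back operation is refused until enough time has passed to earn another token, which is the behavior that keeps a bulk copy from starving a playout stream.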

For more information about quality of service in OneFS, see this blog post: OneFS SmartQoS.

Summary

The capabilities of PowerScale storage with OneFS are delivering unparalleled scale and feature benefits that elevate the capabilities of media entertainment use cases from the highest performance workflows to highly dense archives. Standardization on this enterprise-class, secure, and collaborative platform is the key to unlocking innovation and advancing your media pipelines.

1 Based on internal analysis of publicly available information sources, February 2023. CLM-0013892.

2 Based on Dell analysis comparing efficiency-related features: data reduction, storage capacity, data protection, hardware, space, lifecycle management efficiency, and ENERGY STAR certified configurations, June 2023. CLM-008608.

3 Based on Dell analysis comparing cyber-security software capabilities offered for Dell PowerScale vs. competitive products, September 2022.

4 Dell Technologies Executive Summary of Compliance with Media Industry Security Guidelines, https://www.delltechnologies.com/asset/en-ae/products/storage/briefs-summaries/tpn-executive-summary-compliance-statement.pdf.

Author: Brian Cipponeri, Global Solutions Architect

Dell Technologies – Unstructured Data Solutions

Related Blog Posts

OneFS NFS Locking and Reporting – Part 2

Mon, 13 Nov 2023 17:58:49 -0000


In the previous article in this series, we took a look at the new NFS locks and waiters reporting CLI command set and API endpoints. Next, we turn our attention to some additional context, caveats, and NFSv3 lock removal.

Before the NFS locking enhancements in OneFS 9.5, the legacy CLI commands were somewhat inefficient. Their output also included other advisory domain locks such as SMB, which made the output more difficult to parse. The table below maps the new 9.5 CLI commands (and corresponding handlers) to the old NLM syntax.

| Type / Command set | OneFS 9.5 and later | OneFS 9.4 and earlier |
|---|---|---|
| Locks | isi nfs locks | isi nfs nlm locks |
| Sessions | isi nfs nlm sessions | isi nfs nlm sessions |
| Waiters | isi nfs locks waiters | isi nfs nlm locks waiters |

Note that the isi_classic nfs locks and waiters CLI commands have also been deprecated in OneFS 9.5.

When upgrading to OneFS 9.5 or later from a prior release, the legacy platform API handlers continue to function both during and after the upgrade. Thus, any legacy scripts and automation are protected from this lock reporting deprecation. Additionally, while the new platform API handlers will work during a rolling upgrade in mixed mode, they will only return results for the nodes that have already been upgraded (‘high nodes’).

Be aware that the NFS locking CLI framework does not support partial responses. Therefore, if a node is down or the cluster has a rolling upgrade in progress, the alternative is to query the equivalent platform API endpoint instead.

Performance-wise, on very large busy clusters, there is the possibility that the lock and waiter CLI commands’ output will be sluggish. In such instances, the --timeout flag can be used to increase the command timeout window. Output filtering can also be used to reduce the number of locks reported.

When a lock is in a transition state, there is a chance that it may not have or report a version. In these instances, the Version field will be represented as a hyphen (-). For example:

# isi nfs locks list -v
Client: 1/TMECLI1:487722/10.22.10.250
Client ID: 487722351064074
LIN: 4295164422
Path: /ifs/locks/nfsv3/10.22.10.250_1
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:03:52
Version: -
---------------------------------------------------------------
Total: 1

This behavior should be experienced very infrequently. However, if it is encountered, simply execute the CLI command again, and the lock version should be reported correctly.
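For scripted checks, the following is a minimal sketch of how verbose lock output could be post-processed to spot locks whose version is not yet reported, so the caller knows to run the command again. It assumes the output has one `Key: value` field per line with a dashed separator between records; `parse_verbose_locks` and `locks_missing_version` are hypothetical helper names, not OneFS tooling.

```python
SAMPLE = """\
Client: 1/TMECLI1:487722/10.22.10.250
Client ID: 487722351064074
LIN: 4295164422
Path: /ifs/locks/nfsv3/10.22.10.250_1
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:03:52
Version: -
---------------------------------------------------------------
Total: 1
"""


def parse_verbose_locks(text):
    """Split verbose lock listing text into one dict per lock record."""
    records, current = [], {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("Total:"):
            continue
        if set(line) == {"-"}:          # dashed separator ends a record
            if current:
                records.append(current)
                current = {}
            continue
        key, sep, value = line.partition(": ")
        if sep:
            current[key] = value
    if current:                         # output without a trailing separator
        records.append(current)
    return records


def locks_missing_version(records):
    """Return records whose Version is absent or reported as '-'."""
    return [r for r in records if r.get("Version", "-") == "-"]


records = parse_verbose_locks(SAMPLE)
pending = locks_missing_version(records)
print(f"{len(pending)} of {len(records)} locks have no version yet")
```

If `pending` is non-empty, a wrapper script could simply wait briefly and list the locks again.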

When it comes to troubleshooting NFSv3/NLM issues, if an NFSv3 client is consistently experiencing NLM_DENIED or other lock management issues, this is often a result of incorrectly configured firewall rules. For example, take the following packet capture (PCAP) excerpt from an NFSv3 Linux client (the ‘V4’ in the excerpt refers to the NLM protocol version, not the NFS version):

21 08:50:42.173300992 10.22.10.100 → 10.22.10.200 NLM 106 V4 LOCK Reply (Call In 19) NLM_DENIED

Often, the assumption is that only the lockd or statd ports on the server side of the firewall need to be opened, and that the client always initiates the connection. However, this is not the case. Instead, the server will continually respond with a ‘let me get back to you’, then later reconnect to the client. As such, if the firewall blocks access to rpcbind, lockd, or statd on the client, connection failures will likely occur.

Occasionally, it does become necessary to remove NLM locks and waiters from the cluster. Traditionally, the isi_classic nfs clients rm command was used; however, that command has limitations and is fully deprecated in OneFS 9.5 and later. Instead, the preferred method is to use the isi nfs nlm sessions CLI utility in conjunction with various other ancillary OneFS CLI commands to clear problematic locks and waiters.

Note that the isi nfs nlm sessions CLI command, available in all current OneFS versions, is zone-aware. Its output identifies the client holding the lock with the zone ID number at the beginning. For example:

4/tme-linux1/10.22.10.250

This represents:

Zone ID 4 / Client tme-linux1 / IP address of cluster node holding the connection.
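As a small illustration, a session entry in this three-part form can be split into its components in a script. This is a sketch that assumes the `zone/client/ip` layout described here; `NlmSession` and `parse_nlm_session` are hypothetical names.

```python
from typing import NamedTuple


class NlmSession(NamedTuple):
    zone_id: int      # access zone ID
    client: str       # client hostname
    cluster_ip: str   # IP of the cluster node holding the connection


def parse_nlm_session(entry: str) -> NlmSession:
    """Split 'zone/client/ip' into typed fields, tolerating stray spaces."""
    zone, client, cluster_ip = entry.strip().split("/", 2)
    return NlmSession(int(zone), client.strip(), cluster_ip.strip())


session = parse_nlm_session("4/tme-linux1/10.22.10.250")
print(session.zone_id, session.client, session.cluster_ip)
```

The parsed zone ID, hostname, and cluster IP are the same three values that the delete step later in this procedure takes as arguments.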

A basic procedure to remove NLM locks and waiters from a cluster is as follows:

1. List the NFS locks and search for the pertinent filename.

In OneFS 9.5 and later, the locks list can be filtered using the --path argument.

# isi nfs locks list --path=<path> | grep <filename>

Be aware that the full path must be specified, starting with /ifs. There is no partial matching or substitution for paths in this command set.

For OneFS 9.4 and earlier, the following CLI syntax can be used:

# isi_for_array -sX 'isi nfs nlm locks list | grep <filename>'

2. List the lock waiters associated with the same filename using |grep.

For OneFS 9.5 and later, the waiters list can also be filtered using the --path syntax:

# isi nfs locks waiters --path=<path> | grep <filename>

With OneFS 9.4 and earlier, the following CLI syntax can be used:

# isi_for_array -sX 'isi nfs nlm locks waiters |grep -i <filename>'

3. Confirm the client and logical inode number (LIN) being waited upon.

This can be accomplished by querying the efs.advlock.failover.lock_waiters sysctl. For example:

# isi_for_array -sX 'sysctl efs.advlock.failover.lock_waiters'

[truncated output]

...

client = { '4/tme-linux1/10.20.10.200', 0x26593d37370041 }

...

resource = 2:df86:0218

Note that for sanity checking, the isi get -L CLI utility can be used to confirm the path of a file from its LIN:

# isi get -L <LIN>
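When the sysctl output is long, the client and resource values can be pulled out with a couple of regular expressions. The sketch below assumes lines shaped like the truncated excerpt in this step (`client = { '...', 0x... }` and `resource = ...`); the full output format is not shown here, so the patterns and the helper name `waiters_summary` are illustrative assumptions.

```python
import re

# Patterns modeled on the truncated excerpt only; the real output may
# contain additional fields that these expressions simply ignore.
CLIENT_RE = re.compile(r"client = \{ '([^']+)', (0x[0-9a-fA-F]+) \}")
RESOURCE_RE = re.compile(r"resource = ([0-9a-fA-F:]+)")

EXCERPT = """\
client = { '4/tme-linux1/10.20.10.200', 0x26593d37370041 }
resource = 2:df86:0218
"""


def waiters_summary(text):
    """Return (clients, resources) found in lock_waiters-style text."""
    clients = [m.group(1) for m in CLIENT_RE.finditer(text)]
    resources = [m.group(1) for m in RESOURCE_RE.finditer(text)]
    return clients, resources


clients, resources = waiters_summary(EXCERPT)
print(clients, resources)
```

Each resource value found this way can then be passed to isi get -L to confirm the file path.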

4. Remove the unwanted locks which are causing waiters to stack up.

Keep in mind that the isi nfs nlm sessions command syntax is access zone-aware.

List the access zones by their IDs.

# isi zone zones list -v | grep -iE "Zone ID|name"

Once the desired zone ID has been determined, the isi_run -z CLI utility can be used to specify the appropriate zone in which to run the isi nfs nlm sessions commands:

# isi_run -z 4 -l root

Next, the isi nfs nlm sessions delete CLI command will remove the specific lock waiter which is causing the issue. The command syntax requires specifying the client hostname and node IP of the node holding the lock.

# isi nfs nlm sessions delete --zone <AZ_zone_ID> <hostname> <cluster-ip>

For example:

# isi nfs nlm sessions delete --zone 4 tme-linux1 10.20.10.200
Are you sure you want to delete all NFSv3 locks associated with client tme-linux1 against cluster IP 10.20.10.200? (yes/[no]): yes

5. Repeat the commands in steps 1 and 2 to confirm that the desired NLM locks and waiters have been successfully culled.

BEFORE applying the process....

# isi_for_array -sX 'isi nfs nlm locks list |grep JUN' TME-1: 4/tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_27JUN2017 TME-1: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017 TME-2: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_27JUN2017 TME-2: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017 TME-3: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_27JUN2017 TME-3: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017 TME-4: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_27JUN2017 TME-4: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017 TME-5: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_27JUN2017 TME-5: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017 TME-6: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_27JUN2017 TME-6: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017 # isi_for_array -sX 'isi nfs nlm locks waiters |grep -i JUN' TME-1: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017 TME-1: 4/ tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017 TME-2 exited with status 1 TME-3 exited with status 1 TME-4 exited with status 1 TME-5 exited with status 1 TME-6 exited with status 1

AFTER...

TME-1# isi nfs nlm sessions delete --hostname=tme-linux1 --cluster-ip=192.168.2.214
Are you sure you want to delete all NFSv3 locks associated with client tme-linux1 against cluster IP 192.168.2.214? (yes/[no]): yes

TME-1# isi_for_array -sX 'sysctl efs.advlock.failover.locks |grep 2:ce75:0319'
TME-1 exited with status 1
TME-2 exited with status 1
TME-3 exited with status 1
TME-4 exited with status 1
TME-5 exited with status 1
TME-6 exited with status 1

TME-1# isi_for_array -sX 'isi nfs nlm locks list |grep -i JUN'
TME-1 exited with status 1
TME-2 exited with status 1
TME-3 exited with status 1
TME-4 exited with status 1
TME-5 exited with status 1
TME-6 exited with status 1

TME-1# isi_for_array -sX 'isi nfs nlm locks waiters |grep -i JUN'
TME-1 exited with status 1
TME-2 exited with status 1
TME-3 exited with status 1
TME-4 exited with status 1
TME-5 exited with status 1
TME-6 exited with status 1
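The before/after comparison can also be checked programmatically. The sketch below groups per-node output lines of the kind shown in this example and treats "exited with status 1" as "grep matched nothing on that node". The line layout is taken from the transcript above; the function name `summarize` and the sample strings are assumptions for illustration.

```python
import re
from collections import defaultdict

# "<node>: <zone>/<client>/<ip> <path>" as seen in the transcript above.
LOCK_LINE = re.compile(
    r"^(?P<node>\S+):\s+(?P<client>\d+/\s*\S+)\s+(?P<path>/ifs/\S+)$"
)
# grep exits with status 1 when it finds no matching lines.
EMPTY_LINE = re.compile(r"^(?P<node>\S+) exited with status 1$")


def summarize(output):
    """Map each node name to the list of locked paths it reported."""
    per_node = defaultdict(list)
    for raw in output.splitlines():
        line = raw.strip()
        match = LOCK_LINE.match(line)
        if match:
            per_node[match["node"]].append(match["path"])
            continue
        match = EMPTY_LINE.match(line)
        if match:
            per_node.setdefault(match["node"], [])
    return dict(per_node)


BEFORE = """\
TME-1: 4/tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_27JUN2017
TME-1: 4/tme-linux1/192.168.2.214 /ifs/tmp/TME/sequences/mncr_fabjob_seq_file_28JUN2017
TME-2 exited with status 1
"""
AFTER = """\
TME-1 exited with status 1
TME-2 exited with status 1
"""

before, after = summarize(BEFORE), summarize(AFTER)
all_clear = all(not paths for paths in after.values())
print(before, all_clear)
```

A cleanup script could loop until `all_clear` is true for both the locks and the waiters listings.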

OneFS NFS Locking

Mon, 13 Nov 2023 17:56:59 -0000


Included among the plethora of OneFS 9.5 enhancements is an updated NFS lock reporting infrastructure, command set, and corresponding platform API endpoints. This new functionality includes enhanced listing and filtering options for both locks and waiters, based on NFS major version, client, LIN, path, creation time, etc. But first, some backstory.

The ubiquitous NFS protocol underwent some fundamental architectural changes between its versions 3 and 4. One of the major differences concerns the area of file locking.

NFSv4 is the most current major version of the protocol, natively incorporating file locking and thereby avoiding the need for any additional (and convoluted) RPC callback mechanisms necessary with prior NFS versions. With NFSv4, locking is built into the main file protocol and supports new lock types, such as range locks, share reservations, and delegations/oplocks, which emulate those found in Windows and SMB.

File lock state is maintained at the server under a lease-based model. A server defines a single lease period for all states held by an NFS client. If the client does not renew its lease within the defined period, all states associated with the client's lease may be released by the server. If released, the client may either explicitly renew its lease or simply issue a read request or other associated operation. Additionally, with NFSv4, a client can elect whether to lock the entire file or a byte range within a file.
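The lease-based model can be illustrated with a small simulation. This is a conceptual sketch only, not OneFS or NFS server code: the class `LeaseTable`, its method names, and the 90-second lease are assumptions, and conflict checking between clients is deliberately left out.

```python
class LeaseTable:
    """Toy model of lease-based lock state: one lease per client covers
    all of that client's locks. Illustrative only."""

    def __init__(self, lease_seconds):
        self.lease_seconds = lease_seconds
        self._expiry = {}   # client -> time at which its lease lapses
        self._locks = {}    # client -> set of (path, offset, length)

    def renew(self, client, now):
        """Explicit renewal; also creates the lease on first contact."""
        self._expiry[client] = now + self.lease_seconds
        self._locks.setdefault(client, set())

    def lock(self, client, path, now, offset=0, length=None):
        """Record a lock; length=None means the whole file.
        Any operation from the client implicitly renews its lease."""
        self.renew(client, now)
        self._locks[client].add((path, offset, length))

    def expire(self, now):
        """Release all state for clients whose lease has lapsed."""
        expired = [c for c, t in self._expiry.items() if t <= now]
        for client in expired:
            del self._expiry[client]
            del self._locks[client]
        return expired

    def held(self, client):
        return set(self._locks.get(client, ()))


table = LeaseTable(lease_seconds=90)
table.lock("client-a", "/ifs/data/a.mov", now=0)
table.lock("client-b", "/ifs/data/b.mov", now=0, offset=0, length=4096)
table.renew("client-a", now=60)      # client-a stays in touch
released = table.expire(now=100)     # client-b never renewed
print(released, table.held("client-a"))
```

Here client-b loses its byte-range lock once its lease lapses, while client-a keeps its whole-file lock because it renewed in time.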

In contrast to NFSv4, the NFSv3 protocol is stateless and does not natively support file locking. Instead, the ancillary Network Lock Manager (NLM) protocol supplies the locking layer. Since file locking is inherently stateful, NLM itself is considered stateful. For example, when an NFSv3 filesystem mounted on an NFS client receives a request to lock a file, it generates an NLM remote procedure call instead of an NFS remote procedure call.

The NLM protocol itself consists of remote procedure calls that emulate the standard UNIX file control (fcntl) arguments and outputs. Because a process blocks waiting for a lock that conflicts with another lock holder – also known as a ‘blocking lock’ – the NLM protocol has the notion of callbacks from the file server to the NLM client to notify that a lock is available. As such, the NLM client sometimes acts as an RPC server in order to receive delayed results from lock calls.

| Attribute | NFSv3 | NFSv4 |
|---|---|---|
| State | Stateless - A client does not technically establish a new session if it has the correct information to ask for files and so on. This allows for simple failover between OneFS nodes using dynamic IP pools. | Stateful - NFSv4 uses sessions to handle communication. As such, both client and server must track session state to continue communicating. |
| Presentation | User and Group info is presented numerically - Client and Server communicate user information by numeric identifiers, allowing the same user to appear as different names between client and server. | User and Group info is presented as strings - Both the client and server must resolve the names of the numeric information stored. The server must look up names to present while the client must remap those to numbers on its end. |
| Locking | File Locking is out of band - uses NLM to perform locks. This requires the client to respond to RPC messages from the server to confirm locks have been granted, etc. | File Locking is in band - No longer uses a separate protocol for file locking, instead making it a type of call that is usually compounded with OPENs, CREATEs, or WRITEs. |
| Transport | Can run over TCP or UDP - This version of the protocol can run over UDP instead of TCP, leaving handling of loss and retransmission to the software instead of the operating system. We always recommend using TCP. | Only supports TCP - Version 4 of NFS has left loss and retransmission up to the underlying operating system. Can batch a series of calls in a single packet, allowing the server to process all of them and reply at the end. This is used to reduce the number of calls involved in common operations. |

Since NFSv3 is stateless and lock state lives in the separate NLM layer, recovering from failures like client and server outages and network partitions requires more complexity. If an NLM server crashes, NLM clients that are holding locks must reestablish them on the server when it restarts. The NLM protocol deals with this by having the status monitor on the server send a notification message to the status monitor of each NLM client that was holding locks. The initial period after a server restart is known as the grace period, during which only requests to reestablish locks are granted. Thus, clients that reestablish locks during the grace period are guaranteed to not lose their locks.
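The grace-period rule can be sketched as a tiny model. This is a conceptual illustration under stated assumptions, not NLM or OneFS code: the class `LockServer`, the result strings, and the 45-second grace period are all hypothetical, and a reclaim that arrives after the grace period is simply treated like a normal request.

```python
class LockServer:
    """Toy model of a lock server that has just restarted."""

    def __init__(self, started_at, grace_seconds):
        self.grace_ends = started_at + grace_seconds
        self.locks = {}   # path -> client currently holding the lock

    def request(self, client, path, now, reclaim=False):
        in_grace = now < self.grace_ends
        # During the grace period only reclaims of pre-crash locks pass.
        if in_grace and not reclaim:
            return "denied_grace_period"
        holder = self.locks.get(path)
        if holder is not None and holder != client:
            return "denied"
        self.locks[path] = client
        return "granted"


server = LockServer(started_at=0, grace_seconds=45)
results = [
    server.request("new-client", "/ifs/f", now=10),                # fresh lock, too early
    server.request("old-client", "/ifs/f", now=10, reclaim=True),  # reestablish
    server.request("new-client", "/ifs/f", now=50),                # conflicts with reclaim
    server.request("new-client", "/ifs/g", now=50),                # normal service resumed
]
print(results)
```

The ordering shows why the grace period protects existing holders: the new client cannot slip in ahead of the client that held the lock before the restart.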

When an NLM client crashes, ideally any locks it was holding at the time are removed from the pertinent NLM server(s). The NLM protocol handles this by having the status monitor on the client send a message to each server's status monitor once the client reboots. The client reboot indication informs the server that the client no longer requires its locks. However, if the client crashes and fails to reboot, the client's locks will persist indefinitely. This is undesirable for two primary reasons:

- Resources are indefinitely leaked.

- Eventually, another client will want to get a conflicting lock on at least one of the files the crashed client had locked and, as a result, the other client is postponed indefinitely.

Therefore, having NFS server utilities to swiftly and accurately report on lock and waiter status and utilities to clear NFS lock waiters is highly desirable for administrators – particularly on clustered storage architectures.

Prior to OneFS 9.5, the old NFS locking CLI commands were somewhat inefficient and also showed other advisory domain locks, which rendered the output somewhat confusing. The following table shows the new CLI commands (and corresponding handlers) which replace the older NLM syntax.

| Type / Command set | OneFS 9.4 and earlier | OneFS 9.5 |
|---|---|---|
| Locks | isi nfs nlm locks | isi nfs locks |
| Sessions | isi nfs nlm sessions | isi nfs nlm sessions |
| Waiters | isi nfs nlm locks waiters | isi nfs locks waiters |

In OneFS 9.5 and later, the old API handlers will still exist to avoid breaking existing scripts and automation; however, the old CLI command syntax is deprecated and will no longer work.

Be aware that the isi_classic nfs locks and waiters CLI commands have also been disabled in OneFS 9.5. Attempts to run these will yield the following warning message:

# isi_classic nfs locks

This command has been disabled. Please use isi nfs for this functionality.

The new isi nfs locks CLI command output includes the following locks object fields:

| Field | Description |
|---|---|
| Client | The client host name, Fully Qualified Domain Name, or IP |
| Client_ID | The client ID (internally generated) |
| Created | The UNIX Epoch time that the lock was created |
| ID | The lock ID (ID necessary for platform API sorting, not shown in CLI output) |
| LIN | The logical inode number (LIN) of the locked resource |
| Lock_type | The type of lock (shared, exclusive, none) |
| Path | Path of locked file |
| Range | The byte range within the file that is locked |
| Version | The NFS major version: v3 or v4 |

Note that the ISI_NFS_PRIV RBAC privilege is required in order to view the NFS locks or waiters via the CLI or PAPI. In addition to ‘root’, the cluster’s ‘SystemAdmin’ and ‘SecurityAdmin’ roles contain this privilege by default.

Additionally, the new locks CLI command sets have a default timeout of 60 seconds. If the cluster is very large, the timeout may need to be increased for the CLI command. For example:

# isi --timeout <timeout value> nfs locks list

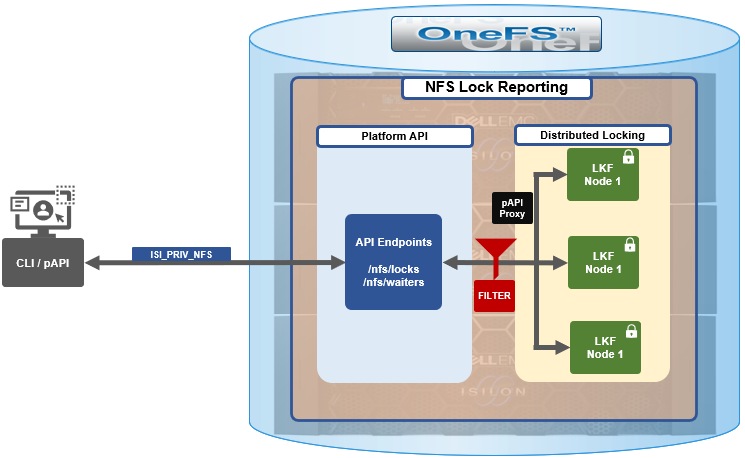

The basic architecture of the enhanced NFS locks reporting framework is as follows:

The new API handlers leverage the platform API proxy, yielding increased performance over the legacy handlers. Additionally, updated syscalls have been implemented to facilitate filtering by NFS service and major version.

Since NFSv3 is stateless, the cluster does not know when a client has lost its state unless it reconnects. For maximum safety, the OneFS locking framework (lk) holds locks forever. The isi nfs nlm sessions CLI command allows administrators to manually free NFSv3 locks in such cases, and this command remains available in OneFS 9.5 as well as prior versions. NFSv3 locks may also be leaked on delete, since a valid inode is required for lock operations. As such, lkf has a lock reaper which periodically checks for locks associated with deleted files.

In OneFS 9.5 and later, current NFS locks can be viewed with the new isi nfs locks list command. This command set also provides a variety of options to limit and format the display output. In its basic form, this command lists each client and the path of the file it has locked. For example:

# isi nfs locks list
Client                                     Path
-------------------------------------------------------------------
1/TMECLI1:487722/10.22.10.250              /ifs/locks/nfsv3/10.22.10.250_1
1/TMECLI1:487722/10.22.10.250              /ifs/locks/nfsv3/10.22.10.250_2
Linux NFSv4.0 TMECLI1:487722/10.22.10.250  /ifs/locks/nfsv4/10.22.10.250_1
Linux NFSv4.0 TMECLI1:487722/10.22.10.250  /ifs/locks/nfsv4/10.22.10.250_2
-------------------------------------------------------------------
Total: 4

To include more information, the -v flag can be used to generate a verbose locks listing:

# isi nfs locks list -v
Client: 1/TMECLI1:487722/10.22.10.250
Client ID: 487722351064074
LIN: 4295164422
Path: /ifs/locks/nfsv3/10.22.10.250_1
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:03:52
Version: v3
---------------------------------------------------------------
Client: 1/TMECLI1:487722/10.22.10.250
Client ID: 5175867327774721
LIN: 42950335042
Path: /ifs/locks/nfsv3/10.22.10.250_1
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:10:31
Version: v3
---------------------------------------------------------------
Client: Linux NFSv4.0 TMECLI1:487722/10.22.10.250
Client ID: 487722351064074
LIN: 429516442
Path: /ifs/locks/nfsv3/10.22.10.250_1
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:19:48
Version: v4
---------------------------------------------------------------
Client: Linux NFSv4.0 TMECLI1:487722/10.22.10.250
Client ID: 487722351064074
LIN: 4295426674
Path: /ifs/locks/nfsv3/10.22.10.250_2
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:17:02
Version: v4
---------------------------------------------------------------
Total: 4

The previous syntax returns more detailed information for each lock, including client ID, LIN, path, lock type, range, created date, and NFS version.

The lock listings can also be filtered by client or client-id. Note that the --client option must be the full name in quotes:

# isi nfs locks list --client="full_name_of_client/IP_address" -v

For example:

# isi nfs locks list --client="1/TMECLI1:487722/10.22.10.250" -v
Client: 1/TMECLI1:487722/10.22.10.250
Client ID: 5175867327774721
LIN: 42950335042
Path: /ifs/locks/nfsv3/10.22.10.250_1
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:10:31
Version: v3

Additionally, be aware that the CLI does not support partial names, so the full name of the client must be specified.

Filtering by NFS version can be helpful when attempting to narrow down which client has a lock. For example, to show just the NFSv3 locks:

# isi nfs locks list --version=v3
Client                         Path
-------------------------------------------------------------------
1/TMECLI1:487722/10.22.10.250  /ifs/locks/nfsv3/10.22.10.250_1
1/TMECLI1:487722/10.22.10.250  /ifs/locks/nfsv3/10.22.10.250_2
-------------------------------------------------------------------
Total: 2

Note that the --version flag supports both v3 and nlm as arguments and returns the same v3 output in either case. For example:

# isi nfs locks list --version=nlm
Client                         Path
-------------------------------------------------------------------
1/TMECLI1:487722/10.22.10.250  /ifs/locks/nfsv3/10.22.10.250_1
1/TMECLI1:487722/10.22.10.250  /ifs/locks/nfsv3/10.22.10.250_2
-------------------------------------------------------------------
Total: 2

Filtering by LIN or path is also supported. For example, to filter by LIN:

# isi nfs locks list --lin=42950335042 -v
Client: 1/TMECLI1:487722/10.22.10.250
Client ID: 5175867327774721
LIN: 42950335042
Path: /ifs/locks/nfsv3/10.22.10.250_1
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:10:31
Version: v3

Or by path:

# isi nfs locks list --path=/ifs/locks/nfsv3/10.22.10.250_2 -v
Client: Linux NFSv4.0 TMECLI1:487722/10.22.10.250
Client ID: 487722351064074
LIN: 4295426674
Path: /ifs/locks/nfsv3/10.22.10.250_2
Lock Type: exclusive
Range: 0, 92233772036854775807
Created: 2023-08-18T08:17:02
Version: v4

Be aware that the full path must be specified, starting with /ifs. There is no partial matching or substitution for paths in this command set.

Filtering can also be performed by creation time, for example:

# isi nfs locks list --created=2023-08-17T09:30:00 -v

Note that when filtering by created, the output will include all locks that were created before or at the time provided.
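The "before or at" behavior is easy to misread as "created since", so the following minimal Python sketch illustrates the comparison described above. It is only a model of the semantics, not how OneFS implements the filter. The helper name created_at_or_before is hypothetical, and the LIN and timestamp values are taken from the verbose listing earlier in this article.

```python
from datetime import datetime


def created_at_or_before(locks, cutoff):
    """Return the locks whose creation time is <= cutoff (inclusive)."""
    cutoff_dt = datetime.fromisoformat(cutoff)
    return [
        lock for lock in locks
        if datetime.fromisoformat(lock["created"]) <= cutoff_dt
    ]


# LIN and creation time of the four sample locks listed earlier.
SAMPLE_LOCKS = [
    {"lin": "4295164422", "created": "2023-08-18T08:03:52"},
    {"lin": "42950335042", "created": "2023-08-18T08:10:31"},
    {"lin": "429516442", "created": "2023-08-18T08:19:48"},
    {"lin": "4295426674", "created": "2023-08-18T08:17:02"},
]

# A cutoff equal to a lock's creation time still includes that lock.
matches = created_at_or_before(SAMPLE_LOCKS, "2023-08-18T08:10:31")
print([lock["lin"] for lock in matches])
```

Under this model, a cutoff of 2023-08-17T09:30:00 would match none of the four sample locks, because all of them were created on the following day.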

The --limit argument can be used to curtail the number of results returned, and it can be used in conjunction with all the other query options. For example, to limit the output of the NFSv4 locks listing to one lock:

# isi nfs locks list --version=v4 --limit=1

The filter options are mutually exclusive, with the exception of version, which can be combined with any of the other filter options, for example by filtering on both created and version.

This can be helpful when troubleshooting and trying to narrow down results.
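The combination rules above can be captured in a small helper that assembles the command line and rejects invalid combinations before anything is run. This is a minimal Python sketch. The function build_locks_list_args is hypothetical, and it only covers the flags shown in this article (--client, --lin, --path, --created, --version, and --limit). The client ID filter is left out because its exact flag spelling is not shown here.

```python
# Filters that cannot be combined with one another.
EXCLUSIVE_FILTERS = ("client", "lin", "path", "created")
# Version values that appear in the examples in this article.
KNOWN_VERSIONS = ("v3", "v4", "nlm")


def build_locks_list_args(version=None, limit=None, **filters):
    """Build an argument list for `isi nfs locks list`.

    At most one of the exclusive filters may be given; version and
    limit may accompany any of them.
    """
    unknown = set(filters) - set(EXCLUSIVE_FILTERS)
    if unknown:
        raise ValueError(f"unsupported filter(s): {sorted(unknown)}")
    chosen = {k: v for k, v in filters.items() if v is not None}
    if len(chosen) > 1:
        raise ValueError(
            f"filters are mutually exclusive: {sorted(chosen)}")
    args = ["isi", "nfs", "locks", "list"]
    if version is not None:
        if version not in KNOWN_VERSIONS:
            raise ValueError(f"unknown version: {version}")
        args.append(f"--version={version}")
    for name, value in chosen.items():
        args.append(f"--{name}={value}")
    if limit is not None:
        args.append(f"--limit={int(limit)}")
    return args


# Version combined with created, as described above.
print(" ".join(build_locks_list_args(
    version="v3", created="2023-08-17T09:30:00")))
```

Returning a list rather than a single string keeps the client name, which can contain spaces, as one argument if the list is later passed to a process launcher.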

In addition to locks, OneFS 9.5 also provides the isi nfs locks waiters CLI command set. Note that waiters are specific to NFSv3 clients, and the CLI reports any v3 locks that are pending and not yet granted.

Because NFSv3 is stateless, a cluster does not know when a client has lost its state unless the client reconnects. For maximum safety, lk holds locks forever, and the isi nfs nlm command allows administrators to manually free locks in such cases. Locks may also be leaked on delete, since a valid inode is required for lock operations. Thus, lkf has a lock reaper which periodically checks for locks associated with deleted files. Pending NFSv3 locks can be listed with the waiters command:

# isi nfs locks waiters

The waiters CLI syntax uses a similar range of query arguments as the isi nfs locks list command set.

In addition to the CLI, the platform API can also be used to query both NFS locks and NFSv3 waiters. For example, using curl to view the waiters via the OneFS pAPI:

# curl -k -u <username>:<passwd> "https://localhost:8080/platform/protocols/nfs/waiters"

{
  "waiters" : [
    {
      "client" : "1/TMECLI1487722/10.22.10.250",
      "client_id" : "4894369235106074",
      "created" : "1668146840",
      "id" : "1 1YUIAEIHVDGghSCHGRFHTiytr3u243567klj212-MANJKJHTTy1u23434yui-ouih23ui4yusdftyuySTDGJSDHVHGDRFhgfu234447g4bZHXhiuhsdm",
      "lin" : "4295164422",
      "lock_type" : "exclusive",
      "path" : "/ifs/locks/nfsv3/10.22.10.250_1",
      "range" : [0, 92233772036854775807 ],
      "version" : "v3"
    }
  ],
  "total" : 1
}

Similarly, using the platform API to show locks filtered by client ID:

# curl -k -u <username>:<passwd> "https://<address>:8080/platform/protocols/nfs/locks?client=<client_ID>"

For example:

# curl -k -u <username>:<passwd> "https://localhost:8080/platform/protocols/nfs/locks?client=1/TMECLI1487722/10.22.10.250"

{
  "locks" : [
    {
      "client" : "1/TMECLI1487722/10.22.10.250",
      "client_id" : "487722351064074",
      "created" : "1668146840",
      "id" : "1 1YUIAEIHVDGghSCHGRFHTiytr3u243567FCUJHBKD34NMDagNLKYGHKHGKjhklj212-MANJKJHTTy1u23434yui-ouih23ui4yusdftyuySTDGJSDHVHGDRFhgfu234447g4bZHXhiuhsdm",
      "lin" : "4295164422",
      "lock_type" : "exclusive",
      "path" : "/ifs/locks/nfsv3/10.22.10.250_1",
      "range" : [0, 92233772036854775807 ],
      "version" : "v3"
    }
  ],
  "total" : 1
}

Note that, as with the CLI, the platform API does not support partial name matches, so the full name of the client must be specified.
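For scripted use of the platform API, the query URL can be built and the response summarized programmatically. The following is a minimal Python sketch under several assumptions: the response is a JSON object with a "locks" array in the shape of the example above, the "created" field is a Unix epoch timestamp in seconds, and the API accepts a percent-encoded client name (the curl examples in this article pass it unencoded). The helper names locks_url and summarize_locks are hypothetical, and the sample response is trimmed to a few fields.

```python
import json
from datetime import datetime, timezone
from urllib.parse import quote


def locks_url(host, client, port=8080):
    """Build the locks query URL for a full client name.

    Percent-encoding is an assumption; it guards against the spaces
    found in NFSv4 client names.
    """
    encoded = quote(client, safe="")
    return (f"https://{host}:{port}"
            f"/platform/protocols/nfs/locks?client={encoded}")


def summarize_locks(response_text):
    """Return (client, path, created-as-UTC-ISO) for each lock."""
    body = json.loads(response_text)
    rows = []
    for lock in body.get("locks", []):
        # Assumes "created" is epoch seconds, delivered as a string.
        created = datetime.fromtimestamp(
            int(lock["created"]), tz=timezone.utc)
        rows.append((lock["client"], lock["path"], created.isoformat()))
    return rows


# Trimmed copy of the example response above.
SAMPLE_RESPONSE = """
{
  "locks" : [
    {
      "client" : "1/TMECLI1487722/10.22.10.250",
      "created" : "1668146840",
      "path" : "/ifs/locks/nfsv3/10.22.10.250_1",
      "version" : "v3"
    }
  ],
  "total" : 1
}
"""

print(locks_url("localhost", "Linux NFSv4.0 TMECLI1:487722/10.22.10.250"))
for row in summarize_locks(SAMPLE_RESPONSE):
    print(row)
```

Because partial names are not matched, the client string passed to locks_url should be the complete name exactly as reported by the locks listing.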