Build a Continuous Innovation Machine

Sat, 10 Sep 2022 01:07:11 -0000

|Read Time: 0 minutes

Adopt a proven IT foundation that’s ready for anything and stops at nothing.

Perpetual motion, cold fusion, time travel, jetpacks, and many other hypothetical ideas continue to capture the imagination, even though they don’t actually exist. Each one promises to solve a slew of seemingly intractable problems with a single, elegant solution.

Those of us who work in IT know that there is no one solution that solves every problem. The technology landscape is incredibly dynamic, and the solution that’s just right for one workload today might not be the best match tomorrow—and will never be the right solution for another workload with different characteristics and requirements. The only constant in today’s enterprise data estates—encompassing multiple devices, data centers, clouds, and edges—is the relentless flow of change.

So how can you plan your IT strategy when every day launches you into uncharted digital territory?

At Dell Technologies, we believe that the best way to get from where you are to where you need to be is to understand that the route you take will not be a straight line from A to B. It will be a unique path with twists and turns determined by the demands and requirements of your customers, your industry, and your business. And all that can all change in an instant. Adopting a data-driven approach to modernization focuses on building an IT foundation that’s ready for anything and stops at nothing.

This continuous innovation machine is not a single solution, but an approach to IT that recognizes when you don’t know where the future will take you, you need a well-oiled machine that can help you chart a course to the future, navigating an evolving landscape at a rapid pace. The continuous innovation machine is a technology foundation that works together seamlessly to power your business today and can scale, evolve, and adapt quickly so you can take advantage of new opportunities as they come along.

Dell Technologies can help you on your way with benchmarked and proven solutions that help you innovate, adapt, and grow.

Adaptive compute

Be ready for what’s next and address evolving compute demands with a platform engineered to optimize the latest technology advancements while easily scaling to address your data at the point of need. For example, Dell PowerEdge servers have been tested and proven to deliver:

- 28% faster performance1

- 71% cost reduction2

- 37% higher virtual machine density3

Autonomous infrastructure

Respond rapidly to business opportunities with intelligent systems that work together and independently, delivering to the parameters that you set. Dell Technologies innovations can ease management tasks with:

- 46 seconds versus 42+ minutes to update multiple servers4

- 99.1% less hands-on deployment time5

- 17,280X more efficient reporting6

Proactive resilience

Build resilience into your digital transformation with an infrastructure designed for secure interactions and the capability to predict potential threats. Dell Technologies delivers:

- Built-in cybersecurity and a protected supply chain7

- Layered and pervasive security to combat sophisticated threats7

- Zero Trust to meet the challenge of ever-changing threats8

Be ready for anything

Be ready to drive innovation into new frontiers with an IT foundation that delivers critical capabilities across your environment. Dell Technologies delivers lab-tested, benchmarked, and third-party-proven benefits to help you adopt solutions that are just right for today and are ready to help you innovate, adapt, and grow into the future—wherever it might take you.

Learn more:

[1] Dell Technologies Direct from Development, Intel Xeon E-2300 Processor Series, and How They Improve Performance, Features, and Security For Next-Generation PowerEdge Rack and Tower Servers, 2021.

[2] Dell Technologies Direct from Development, Persistent Memory for PowerEdge Servers, 2021.

[3] A Principled Technologies report, Get more from Dell PowerEdge R750xs servers with 3rd Generation Intel Xeon Scalable processors, September 2021.

[4] A Principled Technologies report, Automate high-touch server lifecycle management tasks with OpenManage Enterprise integrations and plugins, March 2021.

[5] A Principled Technologies report, Reduce hands-on deployment times to near zero with iDRAC9 automation, February 2020.

[6] Tolly test report commissioned by Dell Technologies, iDRAC9 Telemetry Streaming, February 2020.

[7] Dell Technologies infographic, Dell PowerEdge Cyber Resilient Architecture 2.0, May 2021.

[8] Dell Technologies infographic, Zero Trust. Verified Trust, January 2021.

Related Blog Posts

MLPerf™ Inference v4.0 Performance on Dell PowerEdge R760xa and R7615 Servers with NVIDIA L40S GPUs

Fri, 05 Apr 2024 17:41:56 -0000

|Read Time: 0 minutes

Abstract

Dell Technologies recently submitted results to the MLPerf™ Inference v4.0 benchmark suite. This blog highlights Dell Technologies’ closed division submission made for the Dell PowerEdge R760xa, Dell PowerEdge R7615, and Dell PowerEdge R750xa servers with NVIDIA L40S and NVIDIA A100 GPUs.

Introduction

This blog provides relevant conclusions about the performance improvements that are achieved on the PowerEdge R760xa and R7615 servers with the NVIDIA L40S GPU compared to the PowerEdge R750xa server with the NVIDIA A100 GPU. In the following comparisons, we held the GPU constant across the PowerEdge R760xa and PowerEdge R7615 servers to show the excellent performance of the NVIDIA L40S GPU. Additionally, we also compared the PowerEdge R750xa server with the NVIDIA A100 GPU to its successor the PowerEdge R760xa server with the NVIDIA L40S GPU.

System Under Test configuration

The following table shows the System Under Test (SUT) configuration for the PowerEdge servers.

Table 1: SUT configuration of the Dell PowerEdge R750xa, R760xa, and R7615 servers for MLPerf Inference v4.0

Server | PowerEdge R750xa | PowerEdge R760xa | PowerEdge R7615 |

MLPerf Version | V4.0

| ||

GPU | NVIDIA A100 PCIe 80 GB | NVIDIA L40S

| |

Number of GPUs | 4 | 2 | |

MLPerf System ID | R750xa_A100_PCIe_80GBx4_TRT | R760xa_L40Sx4_TRT | R7615_L40Sx2_TRT

|

CPU | 2 x Intel Xeon Gold 6338 CPU @ 2.00GHz | 2 x Intel Xeon Platinum 8470Q | 1 x AMD EPYC 9354 32-Core Processor |

Memory | 512 GB | ||

Software Stack | TensorRT 9.3.0 CUDA 12.2 cuDNN 8.9.2 Driver 535.54.03 / 535.104.12 DALI 1.28.0 | ||

The following table lists the technical specifications of the NVIDIA L40S and NVIDIA A100 GPUs.

Table 2: Technical specifications of the NVIDIA A100 and NVIDIA L40S GPUs

Model | NVIDIA A100 | NVIDIA L40S | ||

Form factor | SXM4 | PCIe Gen4 | PCIe Gen4 | |

GPU architecture | Ampere | Ada Lovelace | ||

CUDA cores | 6912 | 18176 | ||

Memory size | 80 GB | 48 GB | ||

Memory type | HBM2e | HBM2e | ||

Base clock | 1275 MHz | 1065 MHz | 1110 MHz | |

Boost clock | 1410 MHz | 2520 MHz | ||

Memory clock | 1593 MHz | 1512 MHz | 2250 MHz | |

MIG support | Yes | No | ||

Peak memory bandwidth | 2039 GB/s | 1935 GB/s | 864 GB/s | |

Total board power | 500 W | 300 W | 350 W | |

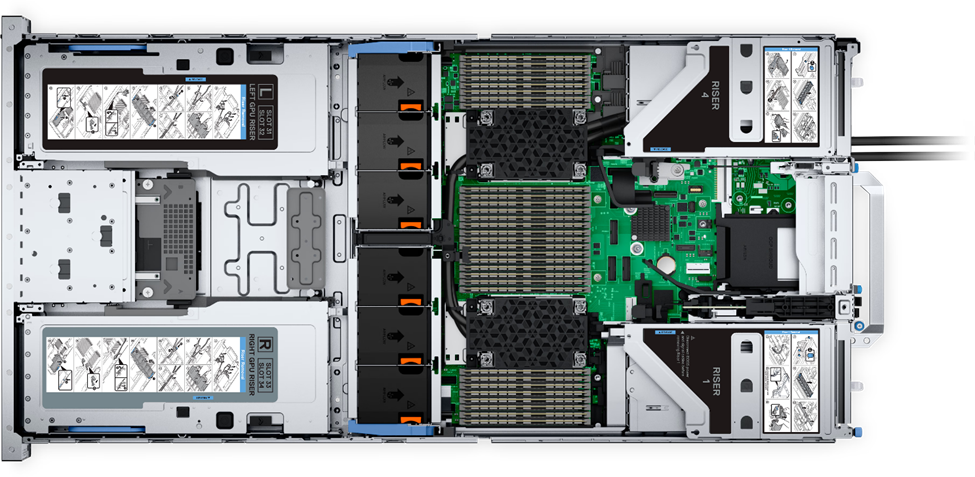

Dell PowerEdge R760xa server

The PowerEdge R760xa server shines as an Artificial Intelligence (AI) workload server with its cutting-edge inferencing capabilities. This server represents the pinnacle of performance in the AI inferencing space with its processing prowess enabled by Intel Xeon Platinum processors and NVIDIA L40S GPUs. Coupled with NVIDIA TensorRT and CUDA 12.2, the PowerEdge R760xa server is positioned perfectly for any AI workload including, but not limited to, Large Language Models, computer vision, Natural Language Processing, robotics, and edge computing. Whether you are processing image recognition tasks, natural language understanding, or deep learning models, the PowerEdge R760xa server provides the computational muscle for reliable, precise, and fast results.

Figure 1: Front view of the Dell PowerEdge R760xa server

Figure 2: Top view of the Dell PowerEdge R760xa server

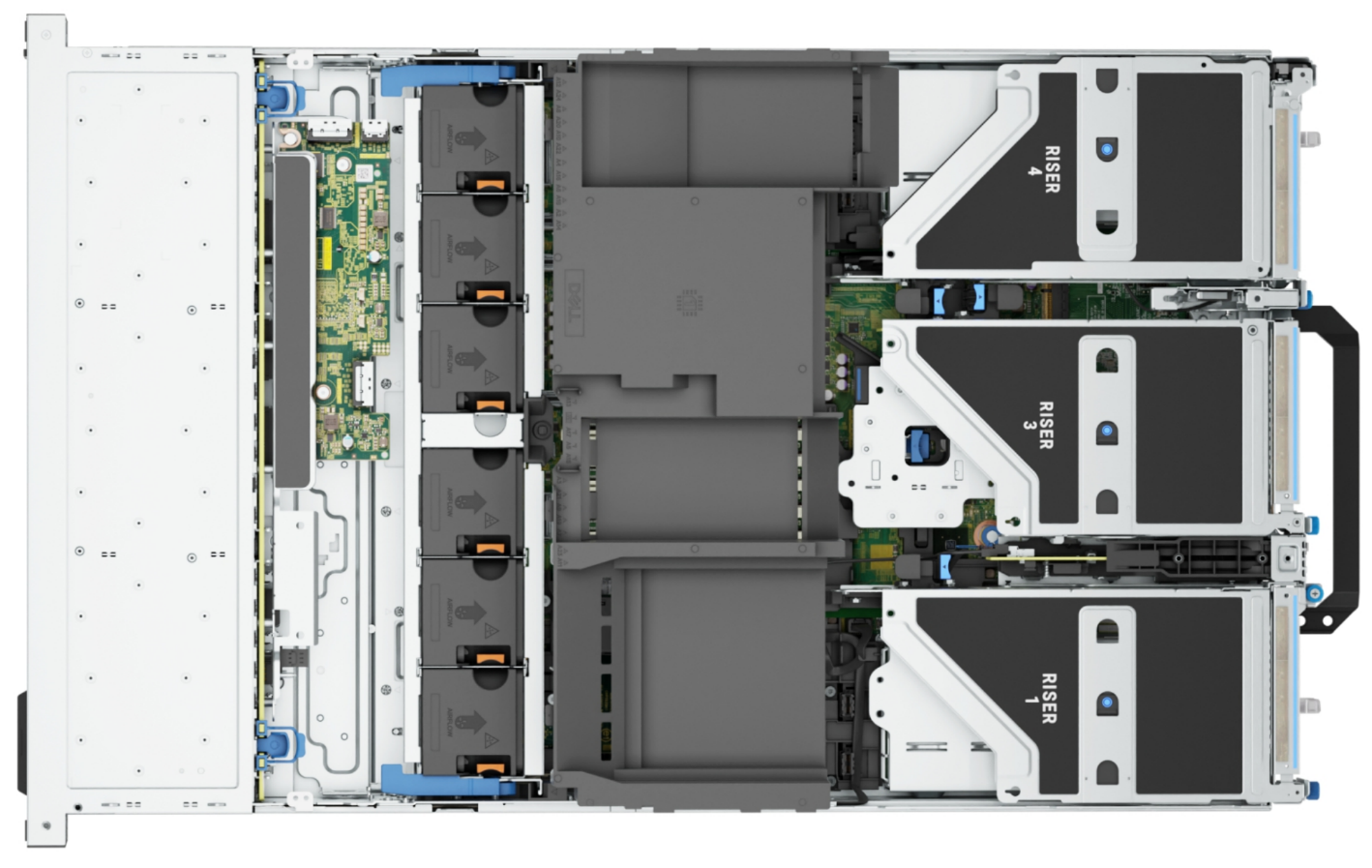

Dell PowerEdge R7615 server

The PowerEdge R7615 server stands out as an excellent choice for AI, machine learning (ML), and deep learning (DL) workloads due to its robust performance capabilities and optimized architecture. With its powerful processing capabilities including up to three NVIDIA L40S GPUs supported by TensorRT, this server can handle complex neural network inference and training tasks with ease. Powered by a single AMD EPYC processor, this server performs well for any demanding AI workloads.

Figure 3: Front view of the Dell PowerEdge R7615 server

Figure 4: Top view of the Dell PowerEdge R7615 server

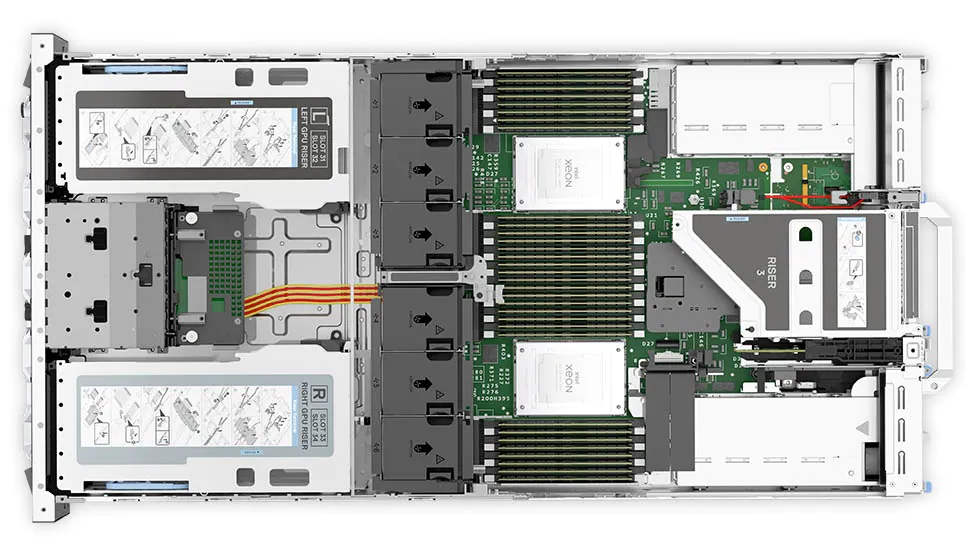

Dell PowerEdge R750xa server

The PowerEdge R750xa server is a perfect blend of technological prowess and innovation. This server is equipped with Intel Xeon Gold processors and the latest NVIDIA GPUs. The PowerEdge R760xa server is designed for the most demanding AI, ML, and DL workloads as it is compatible with the latest NVIDIA TensorRT engine and CUDA version. With up to nine PCIe Gen4 slots and availability in a 1U or 2U configuration, the PowerEdge R750xa server is an excellent option for any demanding workload.

Figure 5: Front view of the Dell PowerEdge R750xa server

Figure 6: Top view of the Dell PowerEdge R750xa server

Performance results

Classical Deep Learning models performance

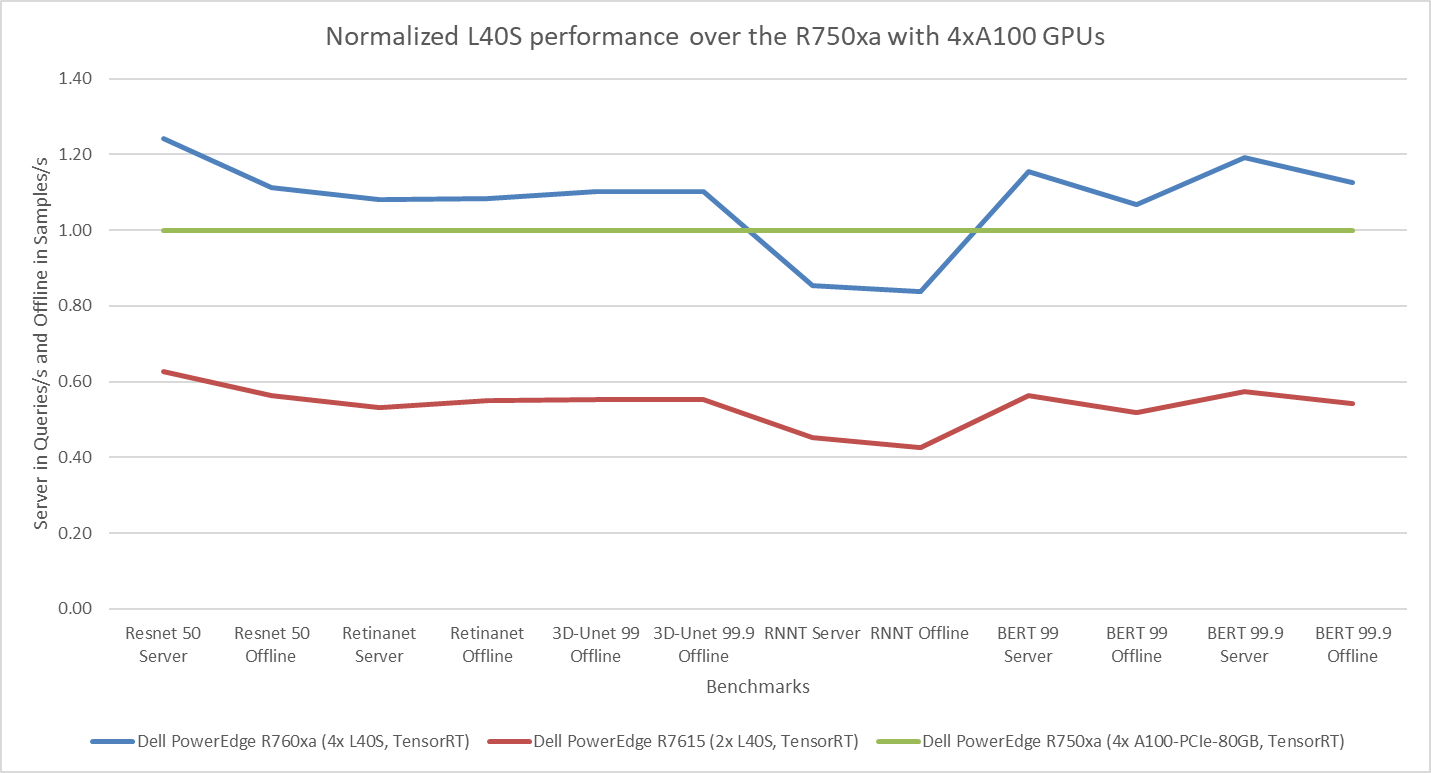

The following figure presents the results as a ratio of normalized numbers over the Dell PowerEdge R750xa server with four NVIDIA A100 GPUs. This result provides an easy-to-read comparison of three systems and several benchmarks.

Figure 7: Normalized NVIDIA L40S GPU performance over the PowerEdge R750xa server with four A100 GPUs

The green trendline represents the performance of the Dell PowerEdge R750xa server with four NVIDIA A100 GPUs. With a score of 1.00 for each benchmark value, the results have been divided by themselves to serve as the baseline in green for this comparison. The blue trendline represents the performance of the PowerEdge R760xa server with four NVIDIA L40S GPUs that has been normalized by dividing each benchmark result by the corresponding score achieved by the PowerEdge R750xa server. In most cases, the performance achieved on the PowerEdge R760xa server outshines the results of the PowerEdge R750xa server with NVIDIA A100 GPUs, proving the expected improvements from the NVIDIA L40S GPU. The red trendline has also been normalized over the PowerEdge R750xa server and represents the performance of the PowerEdge R7615 server with two NVIDIA L40S GPUs. It is interesting that the red line almost mimics the blue line. This result suggests that the PowerEdge R7615 server, despite having half the compute resources, still performs comparably well in most cases, showing its efficiency.

Generative AI performance

The latest submission saw the introduction of the new Stable Diffusion XL benchmark. In the context of generative AI, stable diffusion is a text to image model that generates coherent image samples. This result is achieved gradually by refining and spreading out information throughout the generation process. Consider the example of dropping food coloring into a large bucket of water. Initially, only a small, concentrated portion of the water turns color, but gradually the coloring is evenly distributed in the bucket.

The following table shows the excellent performance of the PowerEdge R760xa server with the powerful NVIDIA L40S GPU for the GPT-J and Stable Diffusion XL benchmarks. The PowerEdge R760xa takes the top spot in GPT-J and Stable Diffusion XL when compared to other NVIDIA L40S results.

Table 3: Benchmark results for the PowerEdge R760xa server with the NVIDIA L40S GPU

Benchmark | Dell PowerEdge R760xa L40S result (Server in Queries/s and Offline in Samples/s) | Dell’s % gain to the next best non-Dell results (%) |

Stable Diffusion XL Server | 0.65 | 5.24 |

Stable Diffusion XL Offline | 0.67 | 2.28 |

GPT-J 99 Server | 12.75 | 4.33 |

GPT-J 99 Offline | 12.61 | 1.88 |

GPT-J 99.9 Server | 12.75 | 4.33 |

GPT-J 99.9 Offline | 12.61 | 1.88 |

Conclusion

The MLPerf Inference submissions elicit insightful like-to-like comparisons. This blog highlights the impressive performance of the NVIDIA L40S GPU in the Dell PowerEdge R760xa and PowerEdge R7615 servers. Both servers performed well when compared to the performance of the Dell PowerEdge R750xa server with the NVIDIA A100 GPU. The outstanding performance improvements in the NVIDIA L40S GPU coupled with the Dell PowerEdge server position Dell customers to succeed in AI workloads. With the advent of the GPT-J and Stable diffusion XL Models, the Dell PowerEdge server is well positioned to handle Generative AI workloads.

Q1 2024 Update for Ansible Integrations with Dell Infrastructure

Tue, 02 Apr 2024 14:45:56 -0000

|Read Time: 0 minutes

In this blog post, I am going to cover the new Ansible functionality for the Dell infrastructure portfolio that we released over the past two quarters. Ansible collections are now on a monthly release cadence, and you can bookmark the changelog pages from their respective GitHub pages to get updates as soon as they are available!

PowerScale Ansible collections 2.3 & 2.4

SyncIQ replication workflow support

SyncIQ is the native remote replication engine of PowerScale. Before seeing what is new in the Ansible tasks for SyncIQ, let’s take a look at the existing modules:

- SyncIQPolicy: Used to query, create, and modify replication policies, as well as to start a replication job.

- SyncIQJobs: Used to query, pause, resume, or cancel a replication job. Note that new synciq jobs are started using the synciqpolicy module.

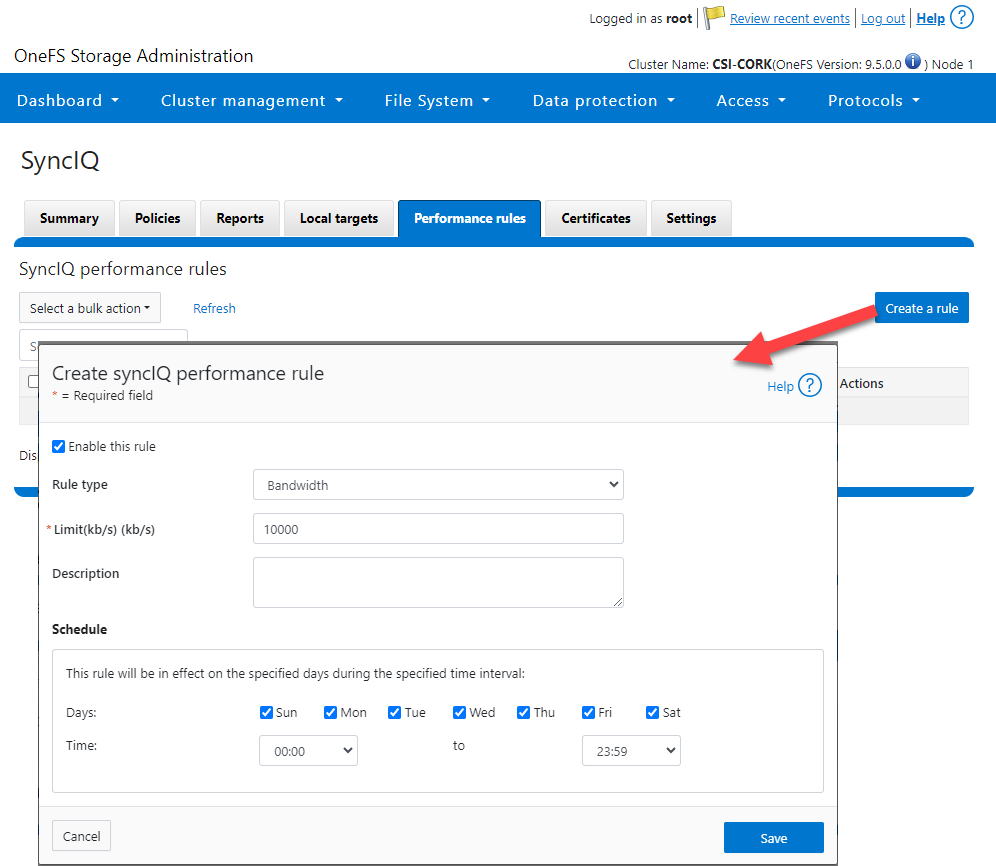

- SyncIQRules: Used to manage the replication performance rules that can be accessed as follows on the OneFS UI:

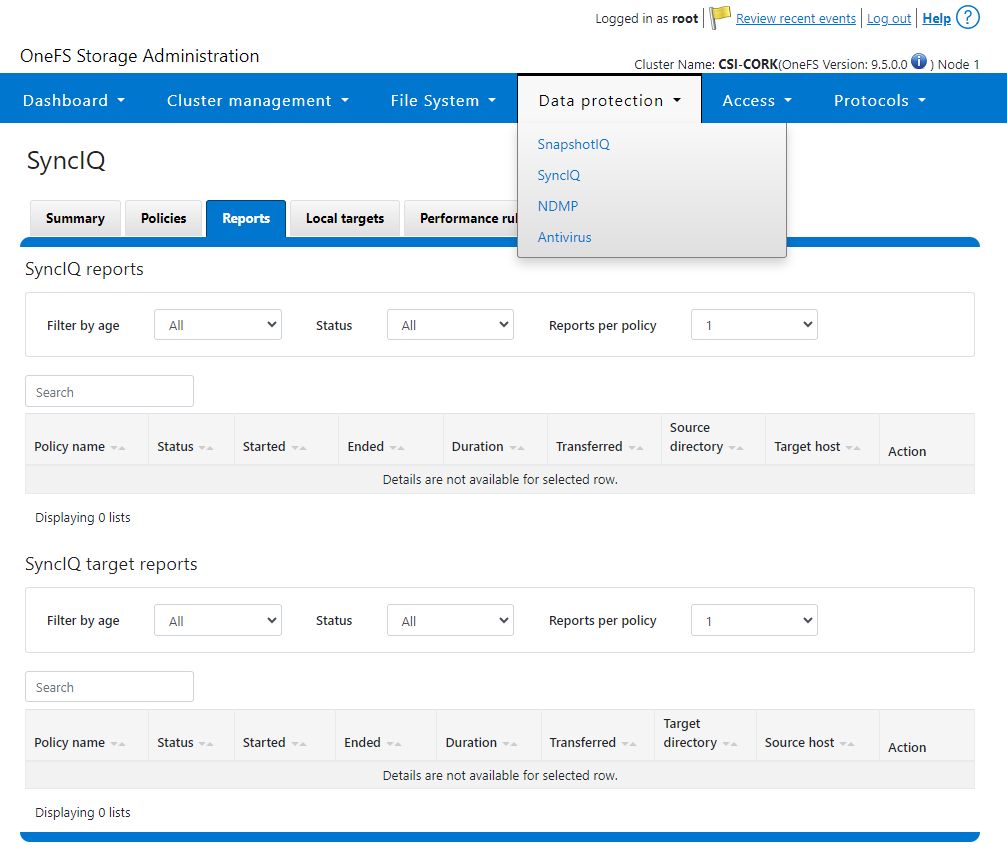

- SyncIQReports and SyncIQTargetReports: Used to manage SyncIQ reports. Following is the corresponding management UI screen where it is done manually:

Following are the new modules introduced to enhance the Ansible automation of SyncIQ workflows:

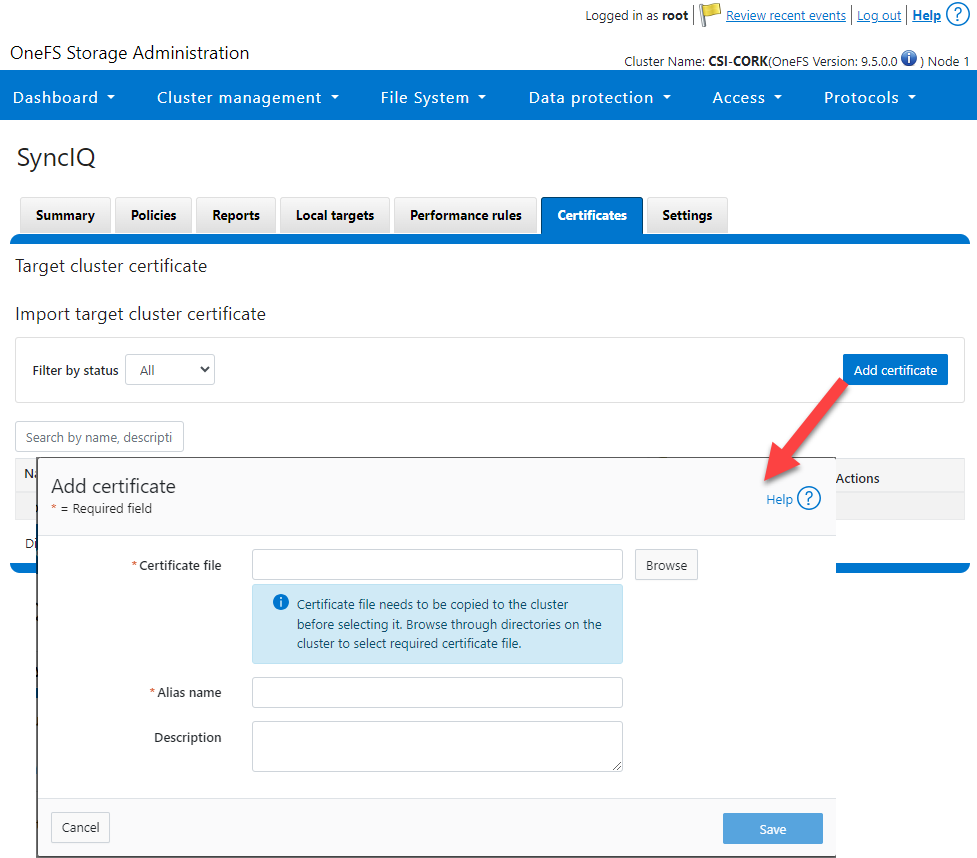

- SyncIQCertificate (v2.3): Used to manage SyncIQ target cluster certificates on PowerScale. Functionality includes getting, importing, modifying, and deleting target cluster certificates. Here is the OneFS UI for these settings:

- SyncIQ_global_settings (v2.3): Used to configure SyncIQ global settings that are part of the include the following:

Table 1. SyncIQ settings

SyncIQ Setting (datatype) | Description |

bandwidth_reservation_reserve_absolute (int) | The absolute bandwidth reservation for SyncIQ |

bandwidth_reservation_reserve_percentage (int) | The percentage-based bandwidth reservation for SyncIQ |

cluster_certificate_id (str) | The ID of the cluster certificate used for SyncIQ |

encryption_cipher_list (str) | The list of encryption ciphers used for SyncIQ |

encryption_required (bool) | Whether encryption is required or not for SyncIQ |

force_interface (bool) | Whether the force interface is enabled or not for SyncIQ |

max_concurrent_jobs (int) | The maximum number of concurrent jobs for SyncIQ |

ocsp_address (str) | The address of the OCSP server used for SyncIQ certificate validation |

ocsp_issuer_certificate_id (str) | The ID of the issuer certificate used for OCSP validation in SyncIQ |

preferred_rpo_alert (bool) | Whether the preferred RPO alert is enabled or not for SyncIQ |

renegotiation_period (int) | The renegotiation period in seconds for SyncIQ |

report_email (str) | The email address to which SyncIQ reports are sent |

report_max_age (int) | The maximum age in days of reports that are retained by SyncIQ |

report_max_count (int) | The maximum number of reports that are retained by SyncIQ |

restrict_target_network (bool) | Whether to restrict the target network in SyncIQ |

rpo_alerts (bool) | Whether RPO alerts are enabled or not in SyncIQ |

service (str) | Specifies whether the SyncIQ service is currently on, off, or paused |

service_history_max_age (int) | The maximum age in days of service history that is retained by SyncIQ |

service_history_max_count (int) | The maximum number of service history records that are retained by SyncIQ |

source_network (str) | The source network used by SyncIQ |

tw_chkpt_interval (int) | The interval between checkpoints in seconds in SyncIQ |

use_workers_per_node (bool) | Whether to use workers per node in SyncIQ or not |

Additions to Info module

The following information fields have been added to the Info module:

- S3 buckets

- SMB global settings

- Detailed network interfaces

- NTP servers

- Email settings

- Cluster identity (also available in the Settings module)

- Cluster owner (also available in the Settings module)

- SNMP settings

- SynciqGlobalSettings

PowerStore Ansible collections 3.1: More NAS configuration

In this release of Ansible collections for PowerStore, new modules have been added to manage the NAS Server protocols like NFS and SMB, as well as to configure a DNS or NIS service running on PowerStore NAS.

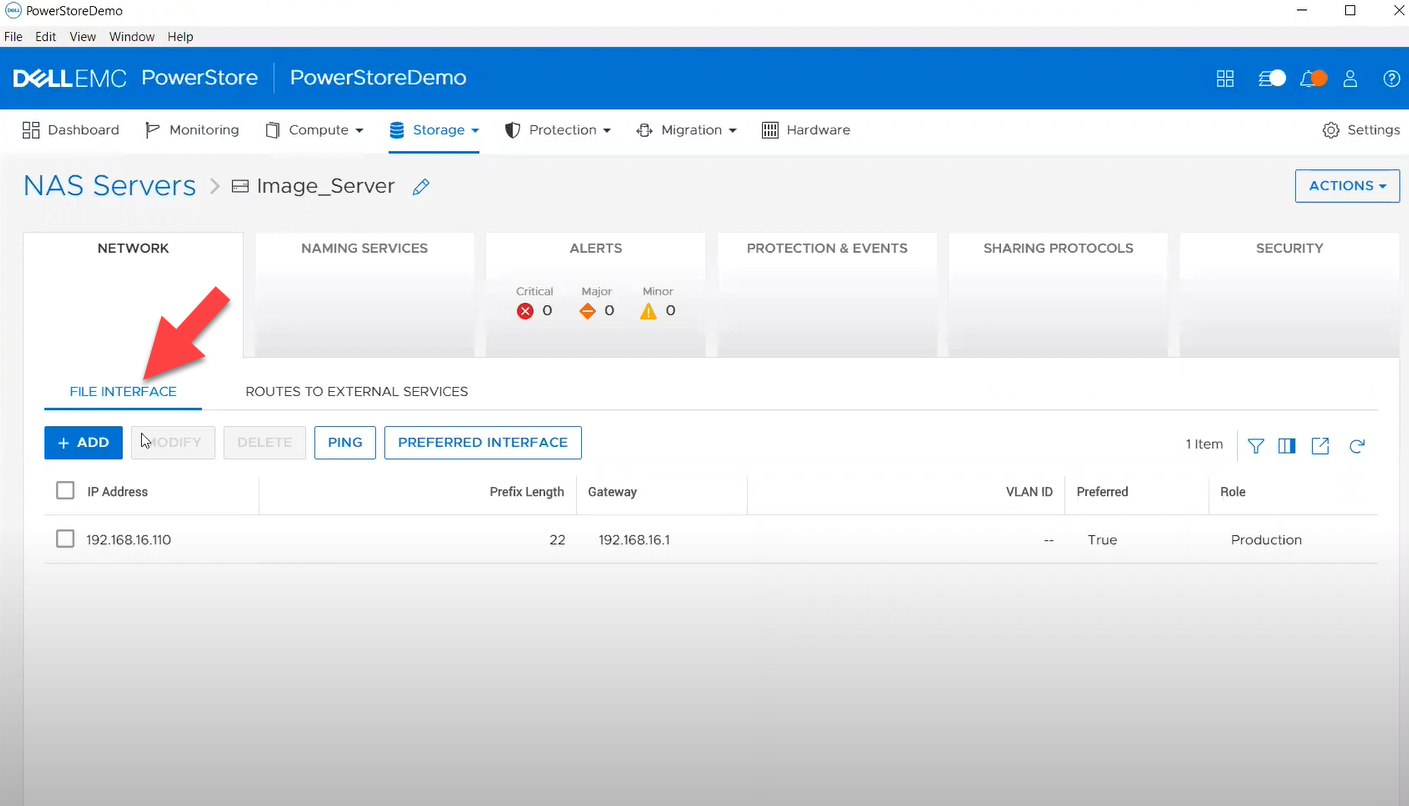

Managing NAS Server interfaces on PowerStore

- file_interface - to enable, query, and modify PowerStore NAS interfaces. Some examples can be found here.

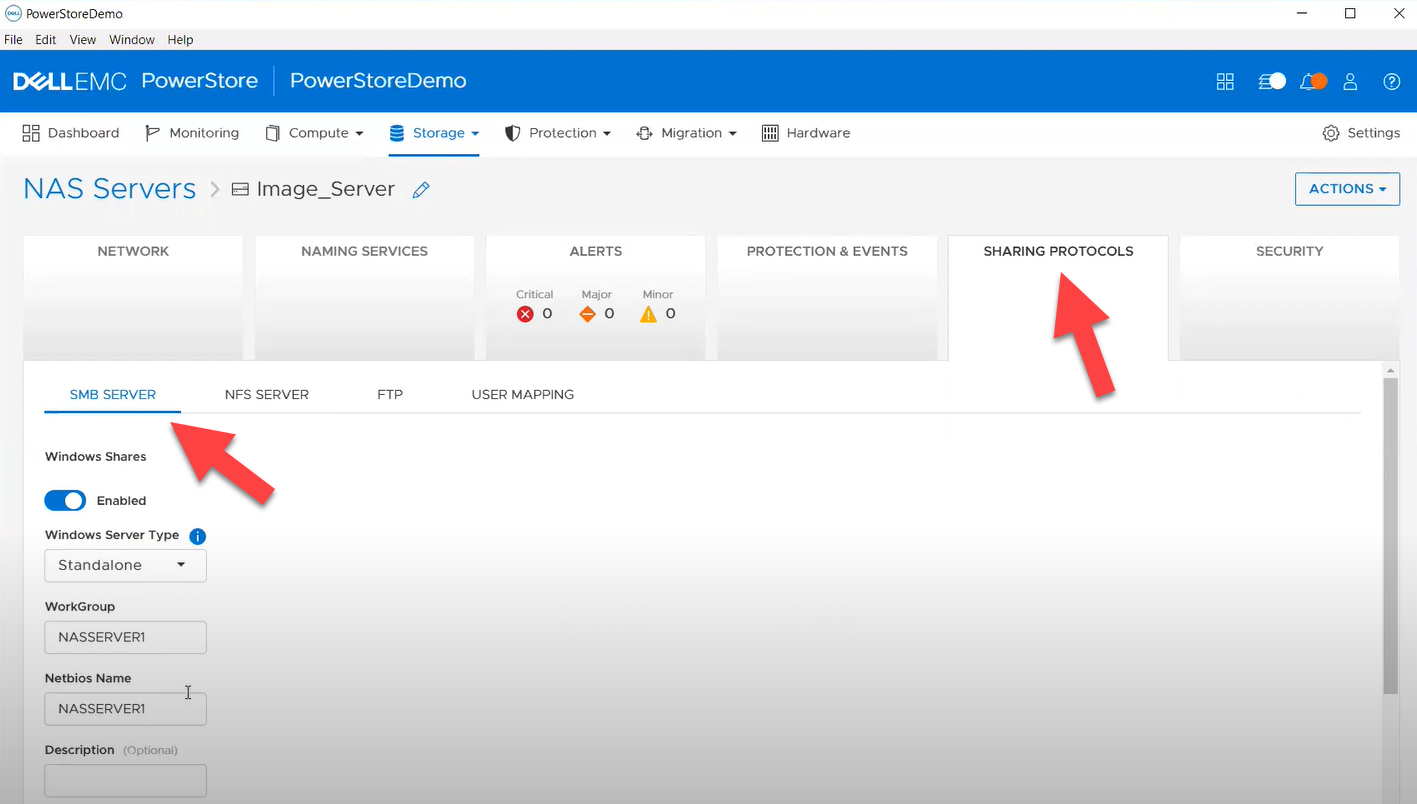

- smb_server - to enable, query, and modify SMB Shares on PowerStore NAS. Some examples can be found here.

- nfs_server - to enable, query, and modify NFS Server on PowerStore NAS. Some examples can be found here.

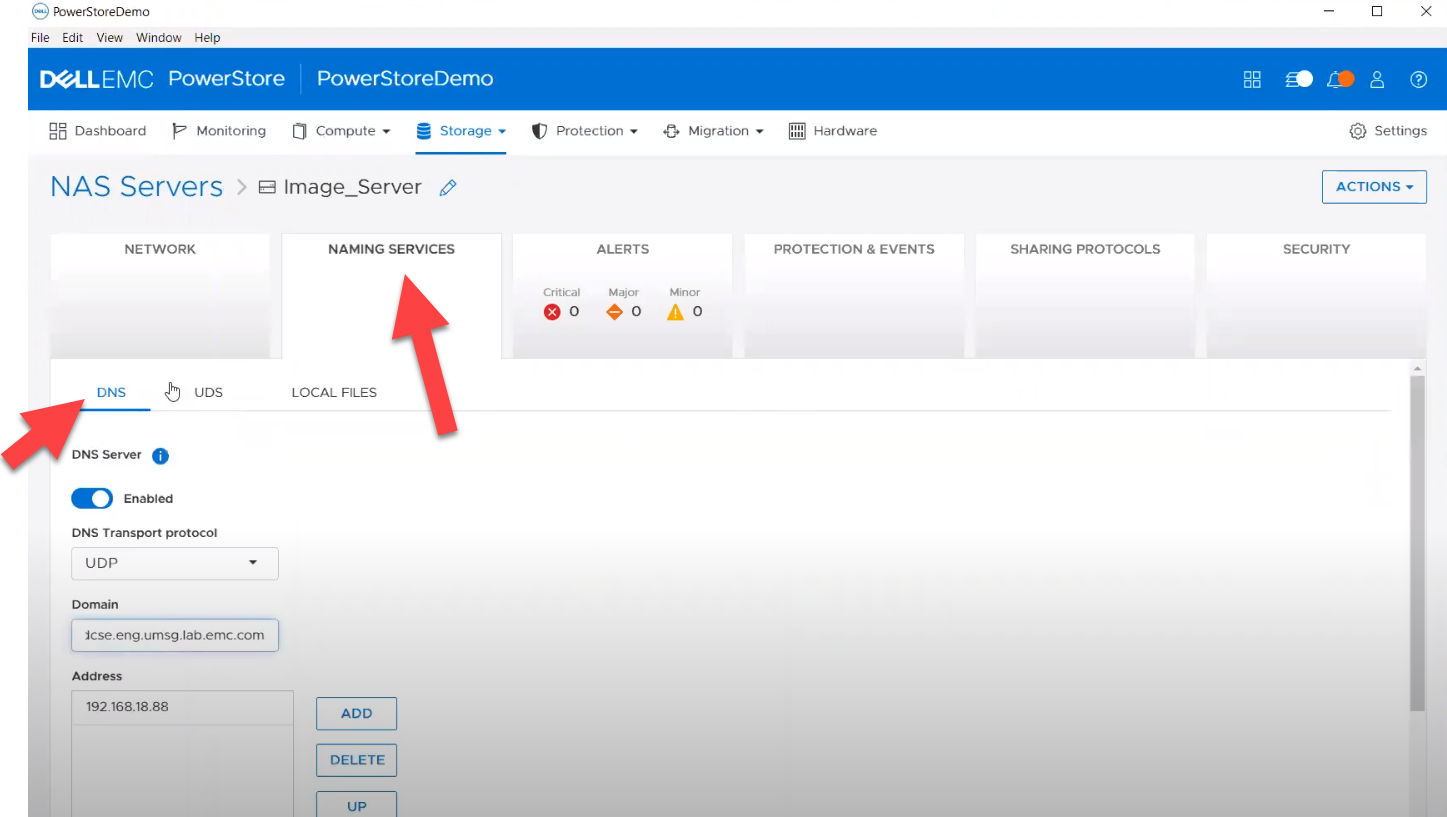

Naming services on PowerStore NAS

- file_dns – to enable, query, and modify File DNS on PowerStore NAS. Some examples can be found here.

- file_nis - to enable, query, and modify NIS on PowerStore NAS. Some examples can be found here.

- service_config - manage service config for PowerStore

The Info module is enhanced to list file interfaces, DNS Server, NIS Server, SMB Shares, and NFS exports. Also in this release, support has been added for creating multiple NFS exports with same name but different NAS servers.

PowerFlex Ansible collections 2.0.1 and 2.1: More roles

In releases 1.8 and 1.9 of the PowerFlex collections, new roles have been introduced to install and uninstall various software components of PowerFlex to enable day-1 deployment of a PowerFlex cluster. In the latest 2.0.1 and 2.1 releases, more updates have been made to roles, such as:

- Updated config role to support creation and deletion of protection domains, storage pools, and fault sets

- New role to support installation and uninstallation of Active MQ

- Enhanced SDC role to support installation on ESXi, Rocky Linux, and Windows OS

OpenManage Ansible collections: More power to iDRAC

At the risk of repetition, OpenManage Ansible collections have modules and roles for both OpenManage Enterprise as well as iDRAC/Redfish node interfaces. In the last five months, a plethora of a new functionalities (new modules and roles) have become available, especially for the iDRAC modules in the areas of security and user and license management. Following is a summary of the new features:

V9.1

- redfish_storage_volume now supports iDRAC8.

- dellemc_idrac_storage_module is deprecated and replaced with idrac_storage_volume.

v9.0

- Module idrac_diagnostics is added to run and export diagnostics on iDRAC.

- Role idrac_user is added to manage local users of iDRAC.

v8.7

- New module idrac_license to manage iDRAC licenses. With this module you can import, export, and delete licenses on iDRAC.

- idrac_gather_facts role enhanced to add storage controller details in the role output and provide support for secure boot.

v8.6

- Added support for the environment variables, `OME_USERNAME` and `OME_PASSWORD`, as fallback for credentials for all modules of iDRAC, OME, and Redfish.

- Enhanced both idrac_certificates module and role to support the import and export of `CUSTOMCERTIFICATE`, Added support for import operation of `HTTPS` certificate with the SSL key.

v8.5

- redfish_storage_volume module is enhanced to support reboot options and job tracking operation.

v8.4

- New module idrac_network_attributes to configure the port and partition network attributes on the network interface cards.

Conclusion

Ansible is the most extensively used automation platform for IT Operations, and Dell Technologies provides an exhaustive set of modules and roles to easily deploy and manage server and storage infrastructure on-prem as well as on Cloud. With the monthly release cadence for both storage and server modules, you can get access to our latest feature additions even faster. Enjoy coding your Dell infrastructure!

Author: Parasar Kodati, Engineering Technologist, Dell ISG