
RAG overview


Retrieval-augmented generation (RAG) is an AI framework that lets LLMs draw on additional domain-specific data to generate more accurate answers without being retrained.

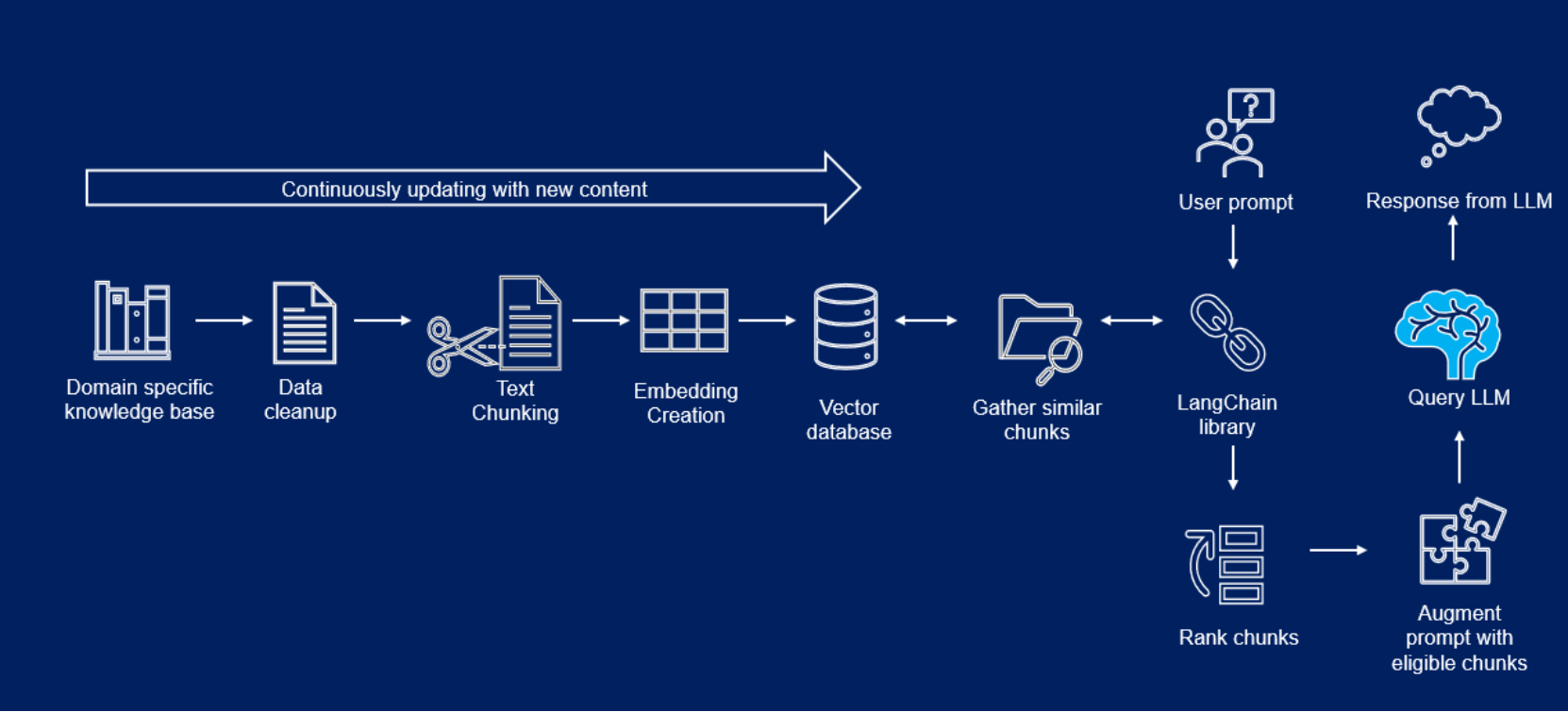

Figure 1. RAG architecture

In a RAG-based framework, domain-specific knowledge sources, such as PDF documents, are cleaned, split into chunks, and embedded into a vector store. When a user submits a query, the query is converted to an embedding, and a semantic search is performed against the embeddings in the vector store. The relevant chunks are gathered and passed, along with the user query, as extended context to an LLM such as Llama 2. The LLM then answers the query based on that context and its pretrained capabilities.
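The retrieval flow described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory `store` list stands in for a vector store, the sample chunks are hypothetical, and the final prompt is printed rather than sent to an LLM.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a term-count vector (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, store: list, k: int = 2) -> list:
    """Return the k chunks whose embeddings are most similar to the query."""
    q = embed(query)
    ranked = sorted(store, key=lambda item: cosine_similarity(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def build_prompt(query: str, context_chunks: list) -> str:
    """Combine the retrieved chunks and the user query into one LLM prompt."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )


# Hypothetical knowledge-base chunks (in practice, extracted from documents).
chunks = [
    "The warranty period for the server is three years.",
    "Firmware updates are applied through the management console.",
    "Support tickets are answered within one business day.",
]

# "Vector store": each chunk is kept alongside its embedding.
store = [(c, embed(c)) for c in chunks]

query = "How long is the server warranty?"
top = retrieve(query, store, k=1)
prompt = build_prompt(query, top)
print(prompt)
```

In a real deployment, the embedding function, vector store, and LLM call would each be replaced by a production component; only the overall shape (embed, search, assemble context, generate) carries over.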

The following list describes components from Figure 1.

- LangChain: LangChain is an open-source orchestration framework for developing applications that use LLMs. It is available as both Python and JavaScript libraries. LangChain’s tools and APIs simplify the process of building LLM-driven applications such as chatbots and virtual agents.

  LangChain serves as a generic interface for nearly any LLM, providing a centralized development environment to build LLM applications and integrate them with external data sources and software workflows.

- Llama 2: Llama 2 is an open-access pretrained LLM from Meta that is freely available for research and commercial use. It was trained on 40 percent more data than its predecessor, Llama 1, and has twice the context length (4096 tokens compared to 2048). As a result, Llama 2 is better equipped to understand context and generate relevant, accurate information. This solution uses Llama-2-13b, which is more powerful and provides better responses to user queries than Llama-2-7b because of its larger number of weights.

- PGVector: Vector stores are databases designed to store and retrieve vector embeddings efficiently and to perform semantic searches. PGVector is a PostgreSQL extension that provides powerful functionalities for working with vectors in high-dimensional space. It introduces a dedicated datatype, operators, and functions that enable efficient storage, manipulation, and analysis of vector data directly within the PostgreSQL database.

- Redis: Redis is a popular in-memory data structure store that can also serve as a vector store, offering an efficient way to query and retrieve relevant information from large amounts of data. It can store embeddings together with metadata for later use by LLMs.

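To make the idea of semantic search in a vector store more concrete, the following sketch computes three common vector distance measures in plain Python. For reference, pgvector exposes these measures as the SQL operators `<->` (L2 distance), `<#>` (negative inner product), and `<=>` (cosine distance). The sketch does not connect to a database, and the vectors and document names are hypothetical two-dimensional examples; real embeddings have hundreds or thousands of dimensions.

```python
import math


def l2_distance(a, b):
    """Euclidean (L2) distance; pgvector operator: <->."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def negative_inner_product(a, b):
    """Negative inner product; pgvector operator: <#>."""
    return -sum(x * y for x, y in zip(a, b))


def cosine_distance(a, b):
    """Cosine distance (1 - cosine similarity); pgvector operator: <=>."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


# Hypothetical stored embeddings, keyed by document name.
docs = {
    "a": [1.0, 0.0],
    "b": [0.0, 1.0],
    "c": [3.0, 4.0],
}
query_vec = [1.0, 0.0]

# Nearest-neighbor search: order documents by cosine distance to the query.
nearest = sorted(docs, key=lambda name: cosine_distance(query_vec, docs[name]))
print(nearest)
```

Cosine distance ignores vector length, so document "c" ranks ahead of "b" even though it lies farther from the query in L2 terms. Which measure fits best depends on how the embedding model was trained.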