Design Guide—Dell Validated Design for Analytics—Data Lakehouse


Workload design


Overview

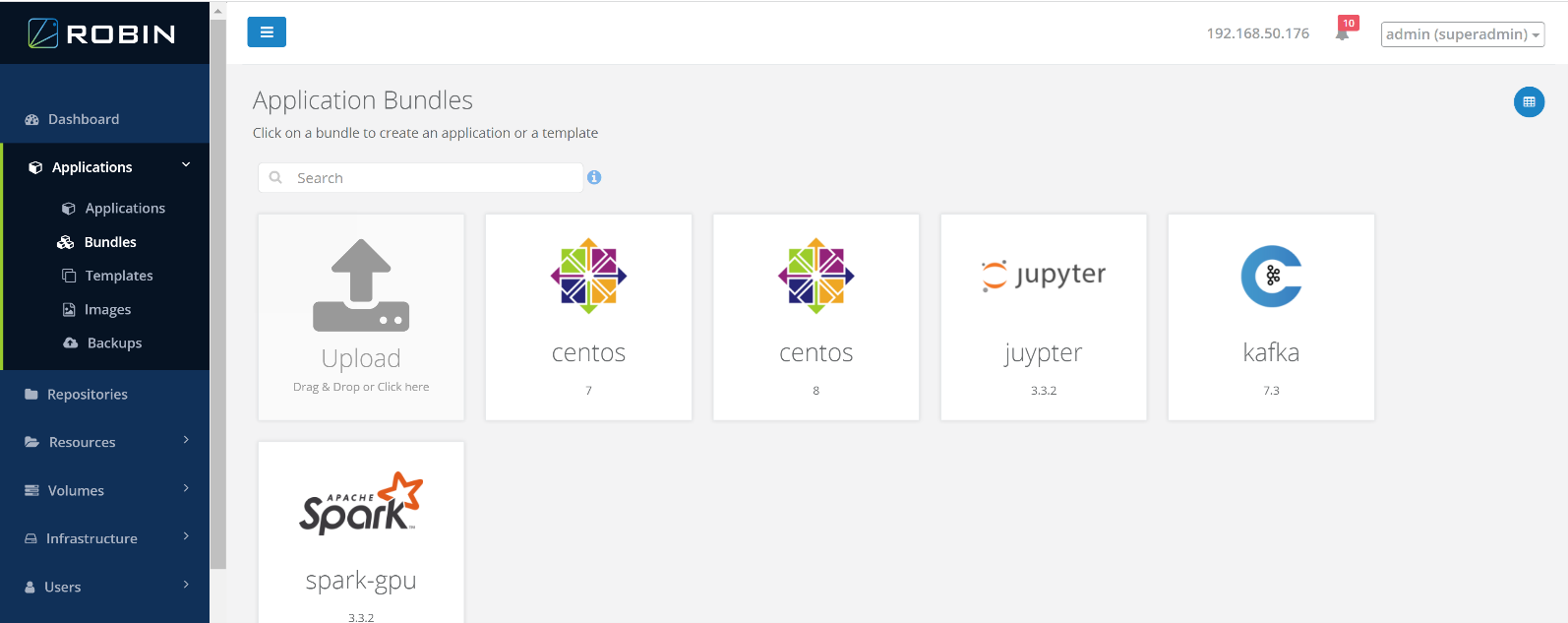

Platform orchestration relies on the Symcloud Application Workflow Manager, and applications are deployed through the Symcloud application bundle framework. In this framework, an application bundle specifies all the resources necessary to deploy an application. Once a bundle is installed, it appears in the application catalog, where platform users can deploy it. Figure 5 shows the main Symcloud application catalog screen.

Note: The application bundles shown are examples of applications that the Symcloud Platform can deploy. They are not standard bundles available from Symcloud.

Figure 5. Application bundles catalog

When a bundle is deployed, the Symcloud scheduler provisions the required compute, storage, accelerator, and network resources and then starts the application pods. Because the platform is aware of the entire application, the scheduler can use advanced placement techniques, including data locality, data affinity, and infrastructure awareness. During deployment, users can specify deployment and runtime preferences on the launch screen.
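To make the placement idea concrete, the following is a toy sketch of locality-aware placement with a simple anti-affinity rule (one pod per node). It is not the Symcloud scheduler's algorithm; the `Node` fields, the `place_pods` function, and the ranking rule are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    # Hypothetical view of a cluster node; field names are illustrative only.
    name: str
    free_cores: int
    free_memory_gb: int
    has_local_data: bool = False


def place_pods(nodes, pod_count, cores, memory_gb):
    """Pick one node per pod (anti-affinity), preferring nodes that hold the data."""
    # Keep only nodes that can fit a single pod's resource request.
    candidates = [
        n for n in nodes
        if n.free_cores >= cores and n.free_memory_gb >= memory_gb
    ]
    # Rank: data-local nodes first, then nodes with the most free cores.
    candidates.sort(key=lambda n: (not n.has_local_data, -n.free_cores, n.name))
    if len(candidates) < pod_count:
        raise ValueError("not enough eligible nodes for the requested pods")
    return [n.name for n in candidates[:pod_count]]
```

A real scheduler weighs many more signals (storage, accelerators, network topology); the sketch only shows how knowledge of the whole application, such as the pod count and where the data lives, can drive the ranking.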

The primary component of an application bundle is a manifest file, which acts as a blueprint for the application. It describes the application components, any dependencies between services, resource requirements, affinity and anti-affinity rules, and custom actions required for application management. The bundle also contains icons and any supporting scripts, and it specifies the container images that the application requires. Bundles are packaged as compressed tar archives.
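As a rough illustration of the packaging step, the sketch below builds a gzip-compressed tar archive from a bundle directory using the Python standard library. The layout shown (`manifest.yaml`, `icons/`, `scripts/`) and the file contents are hypothetical placeholders, not the actual bundle format, and the platform may expect a different compression scheme or directory structure.

```python
import tarfile
import tempfile
from pathlib import Path


def package_bundle(bundle_dir: Path, archive_path: Path) -> list[str]:
    """Pack every file under bundle_dir into a gzip-compressed tar archive.

    Returns the sorted member names stored in the archive.
    """
    with tarfile.open(archive_path, "w:gz") as tar:
        for path in sorted(bundle_dir.rglob("*")):
            if path.is_file():
                # Store paths relative to the bundle root.
                tar.add(path, arcname=path.relative_to(bundle_dir).as_posix())
    with tarfile.open(archive_path, "r:gz") as tar:
        return sorted(tar.getnames())


def build_example_bundle(workdir: Path) -> list[str]:
    # Hypothetical layout: file names and contents are placeholders only.
    bundle_dir = workdir / "example-bundle"
    (bundle_dir / "icons").mkdir(parents=True)
    (bundle_dir / "scripts").mkdir()
    (bundle_dir / "manifest.yaml").write_text("name: example\n")
    (bundle_dir / "icons" / "app.png").write_bytes(b"")
    (bundle_dir / "scripts" / "postcreate.sh").write_text("#!/bin/sh\n")
    return package_bundle(bundle_dir, workdir / "example-bundle.tar.gz")
```

Calling `build_example_bundle(Path(tempfile.mkdtemp()))` writes `example-bundle.tar.gz` next to the bundle directory and returns the archived member names.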

Detailed information about creating and managing application bundles is available in the Robin Hyperconverged Kubernetes Bundle Building Guide.

Dell Technologies implemented and deployed workload bundles as part of the solution verification process. The following sections describe the implementation of two of these bundles.

Spark bundle

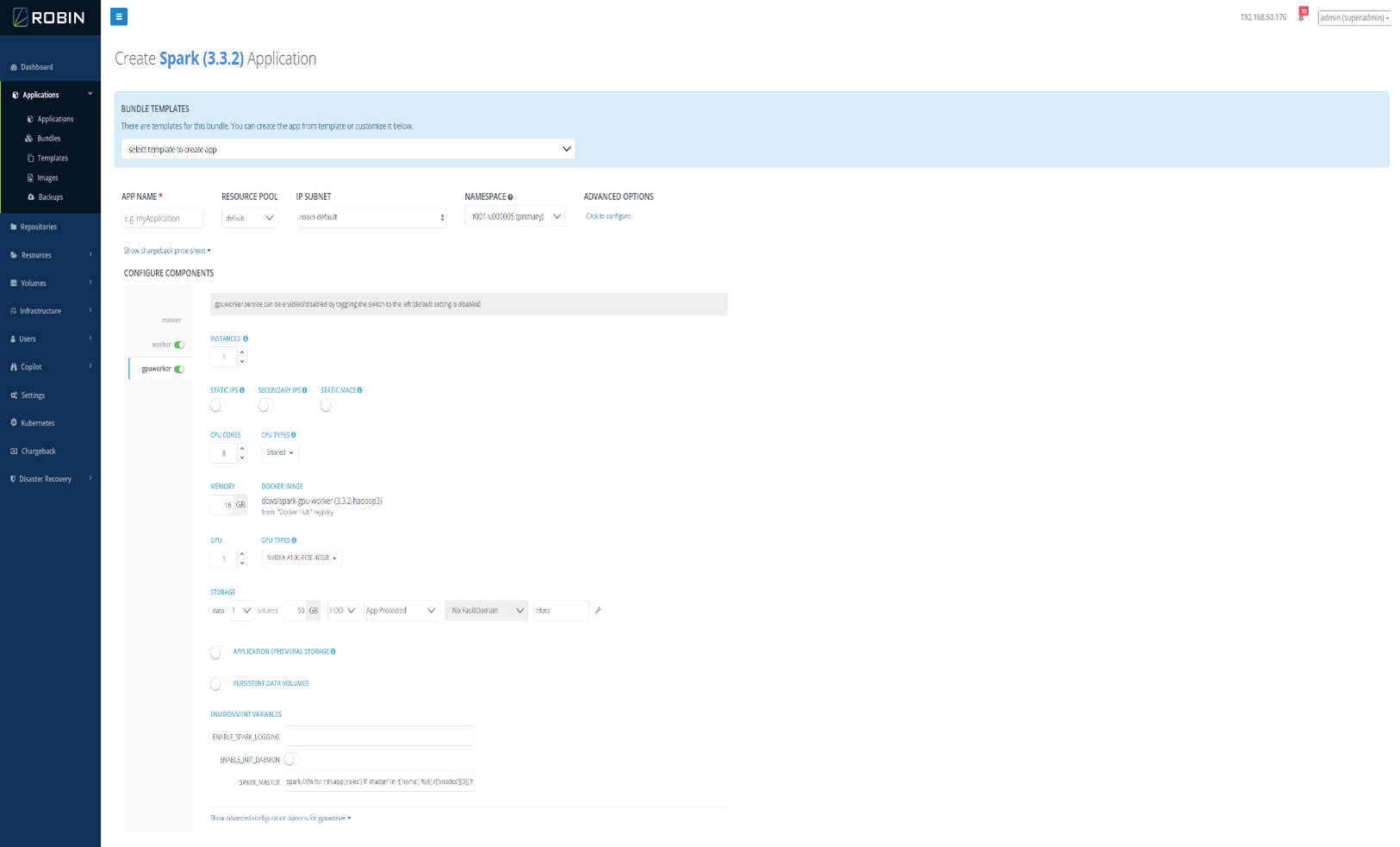

The Spark application bundle deploys an Apache Spark 3.3.2 standalone cluster. Figure 6 shows the application creation screen for the Spark bundle.

Figure 6. Spark 3.3.2 application creation screen

This bundle includes:

- Apache Spark 3.3.2

- Hadoop client libraries (for HDFS access)

- Hadoop AWS libraries (for S3 access)

- Delta Lake 2.3.0 libraries

- NVIDIA CUDA 11.4 (for GPU support)

- NVIDIA RAPIDS Accelerator for Apache Spark (for Spark GPU support)

At application creation time, the number of Spark workers and the number of Spark GPU workers can each be specified. The bundle also allows per-worker resources to be specified, including the number of cores and the amount of memory.
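For a sense of how a job might use the components listed above, the sketch below assembles client-side Spark properties for a standalone cluster with Delta Lake and, optionally, the RAPIDS Accelerator. The property keys are standard Spark, Delta Lake, and RAPIDS settings, but the helper names, the master URL, and the sizing values are assumptions. The bundle may already preconfigure some of these, and GPU scheduling in standalone mode also needs worker-side resource settings that are not shown here.

```python
def spark_submit_conf(master_url, worker_cores, worker_memory_gb, use_gpu=False):
    """Build Spark properties for a job; all argument values are illustrative."""
    conf = {
        "spark.master": master_url,
        "spark.executor.cores": str(worker_cores),
        "spark.executor.memory": f"{worker_memory_gb}g",
        # Enable Delta Lake SQL support and its catalog implementation.
        "spark.sql.extensions": "io.delta.sql.DeltaSparkSessionExtension",
        "spark.sql.catalog.spark_catalog":
            "org.apache.spark.sql.delta.catalog.DeltaCatalog",
    }
    if use_gpu:
        conf.update({
            # Load the RAPIDS Accelerator plugin and turn on GPU SQL execution.
            "spark.plugins": "com.nvidia.spark.SQLPlugin",
            "spark.rapids.sql.enabled": "true",
            # Assumption: one GPU per executor, shared by all of its task slots.
            "spark.executor.resource.gpu.amount": "1",
            "spark.task.resource.gpu.amount": str(1 / worker_cores),
        })
    return conf


def to_cli_args(conf):
    """Render the properties as repeated --conf arguments for spark-submit."""
    args = []
    for key, value in sorted(conf.items()):
        args += ["--conf", f"{key}={value}"]
    return args
```

For example, `to_cli_args(spark_submit_conf("spark://spark-master:7077", 4, 16, use_gpu=True))` yields arguments that could be passed to `spark-submit`; `spark-master` is a placeholder host name.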

Kafka bundle

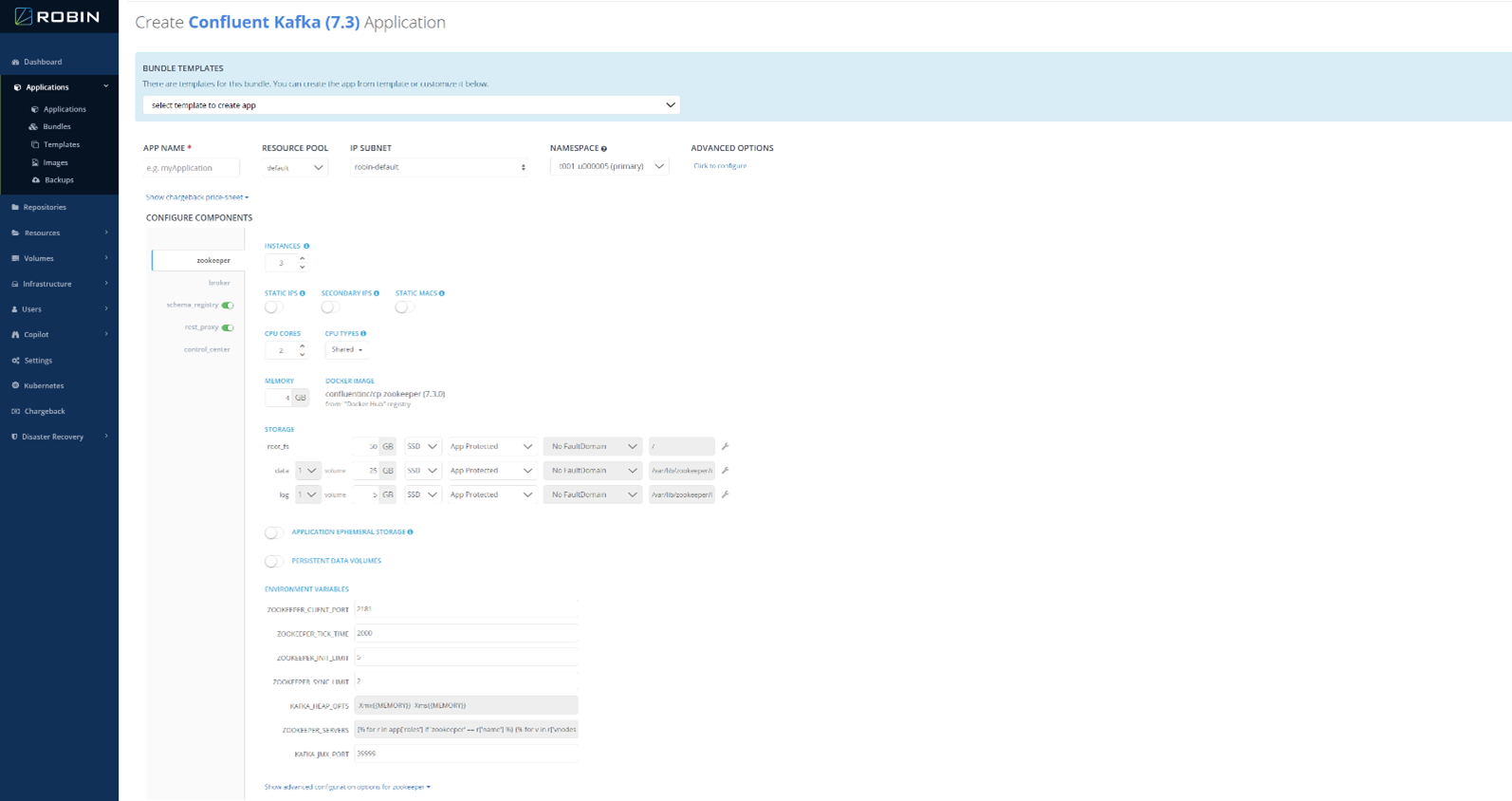

The Kafka application bundle deploys a Confluent Kafka 7.3 cluster. Figure 7 shows the application creation screen for the Kafka bundle.

Figure 7. Kafka 7.3 application creation screen

This bundle includes the standard Kafka components:

- Kafka Broker

- Kafka Schema Registry

- Kafka REST proxy

- Confluent Control Center

- ZooKeeper (for runtime support)

At application creation time, the number of brokers can be specified. The bundle also allows per-broker resources to be specified, including the number of cores and the amount of memory. As part of the broker configuration, the location and amount of storage for the Kafka queues can also be specified.
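The broker settings described above correspond loosely to entries in each broker's properties file. The sketch below renders such entries for a given number of brokers. The property keys are standard Kafka broker settings, but the function names, paths, and ZooKeeper address are hypothetical, and how the bundle actually translates its storage options into broker configuration is an assumption.

```python
def broker_properties(broker_id, log_dir, retention_bytes_per_partition,
                      zookeeper_connect):
    """Render a minimal set of broker properties as text."""
    props = {
        "broker.id": str(broker_id),
        # Directory (or comma-separated directories) where topic data is stored.
        "log.dirs": log_dir,
        # Size-based retention applies per partition, so it is not a hard cap
        # on the total storage a broker consumes.
        "log.retention.bytes": str(retention_bytes_per_partition),
        "zookeeper.connect": zookeeper_connect,
    }
    return "\n".join(f"{key}={value}" for key, value in props.items()) + "\n"


def cluster_properties(num_brokers, log_dir, retention_bytes_per_partition,
                       zookeeper_connect):
    """Produce one properties document per broker, with ids 0..num_brokers-1."""
    return [
        broker_properties(broker_id, log_dir, retention_bytes_per_partition,
                          zookeeper_connect)
        for broker_id in range(num_brokers)
    ]
```

For instance, `cluster_properties(3, "/data/kafka", 1073741824, "zookeeper:2181")` returns three property documents that differ only in `broker.id`.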