Dell infrastructure

Infrastructure overview

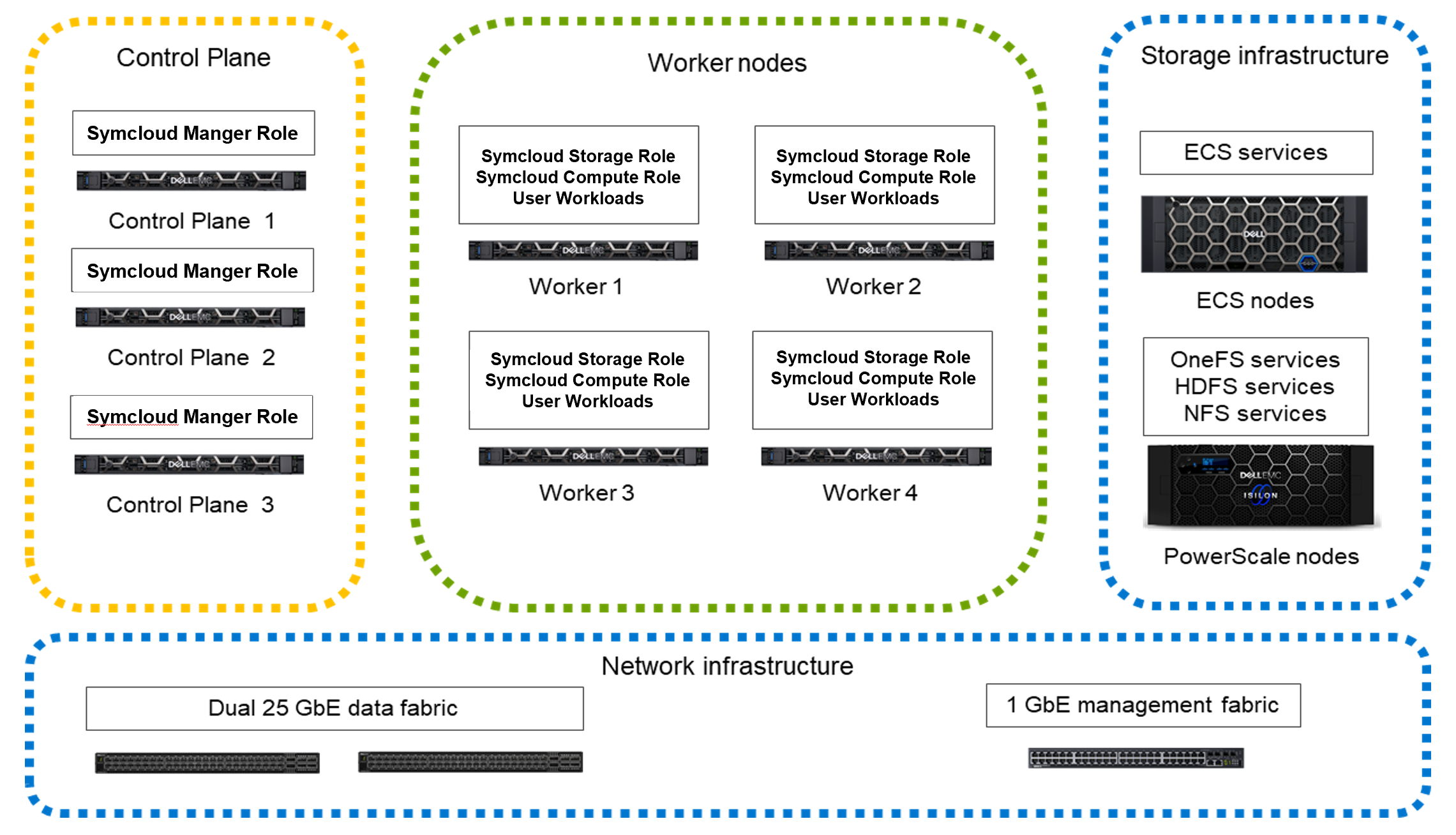

Dell infrastructure provides the compute, memory, storage, and network resources for the platform. Figure 2, Lakehouse platform on Dell infrastructure, illustrates the required infrastructure components and their roles in the cluster.

Figure 2. Lakehouse platform on Dell infrastructure

- Control nodes

- Control nodes support the core platform services that are defined in the Symcloud Platform Manager role.

- Worker nodes

- Worker nodes support the platform runtime services and customer workloads. The runtime services are defined in the Symcloud Platform Compute and Storage roles, while user deployments define the workloads. Supported nodes include standard and compute accelerated nodes.

- Storage infrastructure

- Storage infrastructure provides the lakehouse storage layer. PowerScale and ECS configurations are provided. You can use either or both.

- Network infrastructure

- Network infrastructure provides the required connectivity between the server and storage infrastructure and the on-premises network.

The architecture is flexible and supports a wide range of infrastructure configurations. Dell Technologies recommends the following configurations for a general-purpose lakehouse environment running analytics workloads. Individual node configurations include node-specific guidance. More guidance for cluster level sizing and scaling is provided in Sizing the solution. Design guides for individual solutions on the platform may include more specific recommendations for those solutions.

Server infrastructure

The server infrastructure provides compute, memory, and some of the storage resources that are required to run customer workloads. A wide variety of PowerEdge server configurations are possible. The recommendations here support a wide variety of workloads typical in a lakehouse architecture implementation.

Lakehouse control plane node

Lakehouse control plane nodes support the core platform services that are defined in the Symcloud Platform Manager role. Dell recommends the configuration in Lakehouse control plane node configuration as a starting point for these nodes.

Table 2. Lakehouse control plane node configuration

| Machine function | Component |
| --- | --- |
| Platform | PowerEdge R660 server |
| Chassis | 2.5 in chassis with up to 10 hard drives (SAS or SATA), two CPU slots, and PERC 12 |
| Chassis configuration | Riser configuration 2, three 16-channel, low-profile slots (two Gen5 and one Gen4) |
| Power supply | Dual hot-plug, fault-tolerant (1+1), 1100 W mixed mode (100-240 Vac), Titanium, normal airflow (NAF) power supplies |
| Processor | Intel Xeon Gold 6426Y 2.5 G, 16 C/32 T, 16 GT/s, 38 M cache, turbo, HT (185 W) DDR5-4800 |
| Memory capacity | 128 GB (eight 16 GB RDIMM, 3200 MT/s, dual rank) |
| Internal RAID storage controllers | Dell PERC H965i with rear load bracket |
| Disk - SSD | Two 1.6 TB 2.5 in hot-plug, SAS, mixed-use, up to 24 Gbps 512e Federal Information Processing Standard (FIPS), three drive writes per day (DWPD) SSDs |
| Network interface controllers | NVIDIA ConnectX-6 Lx dual port 10/25 GbE SFP28 adapter, PCIe low profile |

Dell Technologies recommends the disk volume and partition layouts for this set of machines that are listed in Lakehouse control plane node volumes and Lakehouse control plane node partitions.

Table 3. Lakehouse control plane node volumes

| Usage | Volume type | Physical disks | Volume ID |
| --- | --- | --- | --- |
| Operating system and Symcloud Platform | RAID 1 | Two 1.6 TB SAS SSDs | 0 |

Table 4. Lakehouse control plane node partitions

| Mount point | Size | File system type | Volume ID | Partition type | Description |
| --- | --- | --- | --- | --- | --- |
| /boot | 1024 MB | XFS | 0 | Primary | This partition contains BIOS start-up files that must be within first 2 GB of disk. |
| /boot/efi | 650 MB | VFAT | 0 | Extended | This partition contains EFI start-up files. |
| / | 200 GB | XFS | 0 | LVM | This partition contains the root file system. |
| /var | 60 GB | XFS | 0 | LVM | This partition contains variable data like system logging files, databases, mail and printer spool directories, transient, and temporary files. |
| /var/lib/docker | 400 GB | XFS | 0 | LVM | This partition contains the container images. |
| /home | 300 GB | XFS | 0 | LVM | This partition contains the user home directories. |
| /home/robinds | 40 GB | XFS | 0 | LVM | This partition contains the Symworld home directory. |
| /home/robinds/var/lib/pgsql | 80 GB | XFS | 0 | LVM | This partition contains the PostgreSQL database that Symworld uses. |
| /home/robinds/var/log | 60 GB | XFS | 0 | LVM | This partition contains the Symworld log files. |
| /home/robinds/var/crash | 100 GB | XFS | 0 | LVM | This partition contains the Symworld crash files. |

Three control plane nodes are required for production clusters to provide high availability for the control plane. For pilot testing, the control plane services that are defined in the Symcloud Platform Manager role can be assigned to worker nodes instead of using dedicated control plane nodes.

Memory, storage, and processor have been sized to support all the required services in a production deployment.

The configuration includes two network ports to support high-availability (HA) networking. These ports can be from a single network card, or a pair of network cards for additional adapter level HA.

Two SSDs in a RAID 1 configuration are used for the operating system volume. The home directories are allocated in a separate small partition since user files should not be stored on infrastructure nodes. Most of the storage is allocated to the /var partition for runtime files.

You can use LVM to adjust the storage allocation between /, /home, and /var for specific needs.
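Before adjusting LVM allocations, it can help to confirm that the planned layout still fits on the RAID 1 volume. The following Python sketch totals the partition sizes from the control plane partition table and reports the remaining headroom. It assumes decimal units (1 TB = 1000 GB) and ignores file system and RAID metadata overhead. The function names and the `rebalance` helper are illustrative only and are not part of any Dell or Symcloud tool.

```python
# LVM partition sizes in GB, taken from the control plane partition table.
LVM_PARTITIONS_GB = {
    "/": 200,
    "/var": 60,
    "/var/lib/docker": 400,
    "/home": 300,
    "/home/robinds": 40,
    "/home/robinds/var/lib/pgsql": 80,
    "/home/robinds/var/log": 60,
    "/home/robinds/var/crash": 100,
}

# Non-LVM boot partitions in MB, from the same table.
BOOT_PARTITIONS_MB = {"/boot": 1024, "/boot/efi": 650}

# RAID 1 mirrors two 1.6 TB SSDs, so usable capacity equals one drive.
# Assumption: decimal units and no formatting overhead.
VOLUME_CAPACITY_GB = 1600


def allocated_gb(lvm_gb, boot_mb):
    """Total space claimed by all partitions, in GB."""
    return sum(lvm_gb.values()) + sum(boot_mb.values()) / 1000


def headroom_gb(lvm_gb, boot_mb, capacity_gb=VOLUME_CAPACITY_GB):
    """Unallocated space left on the volume, in GB (negative if overcommitted)."""
    return capacity_gb - allocated_gb(lvm_gb, boot_mb)


def rebalance(lvm_gb, source, target, amount_gb):
    """Return a new layout with amount_gb moved from one mount point to another."""
    if lvm_gb[source] < amount_gb:
        raise ValueError(f"{source} has less than {amount_gb} GB allocated")
    layout = dict(lvm_gb)
    layout[source] -= amount_gb
    layout[target] += amount_gb
    return layout


if __name__ == "__main__":
    print(f"Allocated: {allocated_gb(LVM_PARTITIONS_GB, BOOT_PARTITIONS_MB):.1f} GB")
    print(f"Headroom:  {headroom_gb(LVM_PARTITIONS_GB, BOOT_PARTITIONS_MB):.1f} GB")
```

Moving space between logical volumes, for example with `rebalance(LVM_PARTITIONS_GB, "/home", "/var", 100)`, leaves the total allocation unchanged, so the headroom check only needs to be repeated when a partition grows without another shrinking.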

General-purpose lakehouse worker node

Lakehouse worker nodes support the platform runtime services and customer workloads. The runtime services are defined in the Symcloud Platform Compute and Storage roles. User deployments define the workloads. Dell Technologies recommends the configuration in General-purpose lakehouse worker node configuration as a starting point for general-purpose workloads.

Table 5. General-purpose lakehouse worker node configuration

| Machine function | Component |
| --- | --- |
| Platform | PowerEdge R660 server |
| Chassis | 2.5 in chassis with up to eight SAS or SATA hard drives, three PCIe slots, and two CPUs |
| Chassis configuration | Riser configuration 2, three low-profile 16-channel slots (two Gen5 and one Gen4) |
| Power supply | Dual hot-plug, fully redundant (1+1), 2400 W, mixed mode power supplies |
| Processor | Dual Intel Xeon Gold 6438Y 2 G, 32 C/64 T, 16 GT/s, 60 M cache, turbo, HT (205 W) DDR5-4800 |
| Memory capacity | 512 GB (sixteen 32 GB RDIMM, 4800 MT/s, dual rank) |
| Internal RAID storage controllers | Dell PERC HBA355i with rear load bracket |
| Disk - SSD | Six 3.84 TB hot-plug, SAS, mixed-use, up to 24 Gbps, 512e 2.5 in, Federal Information Processing Standard (FIPS) Self-Encrypting Drives (SEDs) |
| Boot optimized storage cards | BOSS-N1 controller card with two M.2 960 GB SSDs (RAID 1) |
| Network interface controllers | NVIDIA ConnectX-6 Lx dual port 10/25 GbE SFP28 adapter, PCIe low profile |

Dell Technologies recommends the disk volume and partition layouts for this set of machines that are listed in General-purpose lakehouse worker node volumes and General-purpose lakehouse worker node partitions.

Table 6. General-purpose lakehouse worker node volumes

| Usage | Volume type | Physical disks | Volume ID |
| --- | --- | --- | --- |
| Operating system | RAID 1 | Two M.2 960 GB SSDs | 0 |
| Symcloud Storage | No RAID | Six 3.84 TB SAS SSDs | 1-6 |

Table 7. General-purpose lakehouse worker node partitions

| Mount point | Size | File system type | Volume ID | Partition type | Description |
| --- | --- | --- | --- | --- | --- |
| /boot | 1024 MB | XFS | 0 | Primary | This partition contains BIOS start-up files that must be within first 2 GB of disk. |
| /boot/efi | 650 MB | VFAT | 0 | Extended | The partition contains EFI start-up files. |
| / | Around 100 GB | XFS | 0 | LVM | This partition contains the root file system. |
| /home | 400 GB | XFS | 0 | LVM | This partition contains the user home directories. |
| /var | 400 GB | XFS | 0 | LVM | This partition contains variable data like system logging files, databases, mail and printer spool directories, transient, and temporary files. |
| None | Six 3.84 TB | Symcloud Storage | 1-6 | Raw partitions | Symcloud Storage manages these partitions. |

Dell Technologies recommends four worker nodes for a minimum deployment.

The configuration includes two network ports to support high-availability (HA) networking. These ports can be from a single network card, or a pair of network cards for additional adapter level HA.

Two M.2 960 GB SSDs in a RAID 1 configuration are used for the operating system volume. The home directories are allocated in a separate small partition since user files are not stored at the operating system level on production nodes. Most of the storage is allocated to the /var partition for runtime files. You can use LVM to adjust the storage allocation between /, /home, and /var for specific needs.

Six SSDs are allocated for use by Symcloud Storage. The services to support this storage are deployed with the Symcloud Storage role. This storage is exposed to workloads running on the cluster through the Kubernetes CSI interface. The recommended configuration provides approximately 23 TB of storage per node. This capacity is enough for typical runtime storage in a lakehouse environment where the bulk of the data is stored on external storage. The external storage can be either HDFS provided by PowerScale, or object storage provided by ECS. If more local storage is needed, up to two more SSDs can be added, and drive sizes can be increased.
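The per-node figure of approximately 23 TB follows directly from the drive count and drive size, and the same arithmetic extends to the minimum four-node cluster. The Python sketch below works through it. The `replicas` parameter is an assumption added for illustration: Symcloud Storage supports replication, but this guide does not state a replication factor, and the calculation ignores any metadata or formatting overhead.

```python
DRIVE_TB = 3.84       # size of each Symcloud Storage SSD
DEFAULT_DRIVES = 6    # drives per node in the recommended configuration
MAX_DRIVES = 8        # six drives plus "up to two more" in this chassis
MIN_WORKERS = 4       # recommended minimum number of worker nodes


def node_raw_tb(drives=DEFAULT_DRIVES, drive_tb=DRIVE_TB):
    """Raw local storage per worker node, in TB."""
    return drives * drive_tb


def cluster_raw_tb(nodes=MIN_WORKERS, drives=DEFAULT_DRIVES, drive_tb=DRIVE_TB):
    """Raw local storage across all worker nodes, in TB."""
    return nodes * node_raw_tb(drives, drive_tb)


def cluster_effective_tb(nodes=MIN_WORKERS, drives=DEFAULT_DRIVES, replicas=1):
    """Capacity left after keeping `replicas` copies of every volume.

    Assumption: the replication factor is chosen by the administrator and
    overhead other than replication is ignored.
    """
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    return cluster_raw_tb(nodes, drives) / replicas


if __name__ == "__main__":
    print(f"Per node (6 drives):    {node_raw_tb():.2f} TB")
    print(f"Per node (8 drives):    {node_raw_tb(MAX_DRIVES):.2f} TB")
    print(f"Four nodes, raw:        {cluster_raw_tb():.2f} TB")
    print(f"Four nodes, 2 replicas: {cluster_effective_tb(replicas=2):.2f} TB")
```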

Memory has been sized to support all the required services in a production deployment with enough headroom for user workloads. The most common change is to increase the memory size to support more containers or workloads requiring more memory.

The processors have been chosen to support compute intensive AI and ML workloads and include dual Intel Advanced Vector Extensions (AVX) units for maximum compute speed. Other processor choices are possible but should be made with memory requirements and overall power consumption in mind.

GPU-accelerated lakehouse worker node

GPU-accelerated lakehouse worker nodes support the platform runtime services and customer workloads that benefit from GPU acceleration. Symcloud Platform Compute and Storage roles define the runtime services, while user deployments define the workloads. Dell Technologies recommends the configuration in GPU-accelerated lakehouse worker node configuration as a starting point for GPU-accelerated workloads.

Table 8. GPU-accelerated lakehouse worker node configuration

| Machine function | Component |
| --- | --- |
| Platform | PowerEdge R760 server |
| Chassis | 2.5 in chassis with up to 16 SAS or SATA drives, Smart Flow, front PERC 12, two CPUs |
| Chassis configuration | Riser configuration 5, full-length, with two full-height 16-channel slots (Gen4), two low-profile 16-channel slots (Gen4), one full-height 16-channel slot (Gen5), and one full-height, double-wide, 16-channel, GPU-capable slot (Gen5) |
| Power supply | Dual, hot-plug, 2400 W redundant, configuration D, mixed-mode power supplies |
| Processor | Intel Xeon Gold 6438Y 2 G, 32 C/64 T, 16 GT/s, 60 M cache, turbo, HT (205 W) DDR5-4800 |
| Memory capacity | 512 GB (sixteen 32 GB RDIMM, 3200 MT/s, dual rank) |
| Internal RAID storage controllers | Dell PERC H965i with rear load bracket |
| Disk - SSD | Six 3.84 TB hot-plug, SAS, mixed-use, up to 24 Gbps, 512e 2.5 in, Federal Information Processing Standard (FIPS) Self-Encrypting Drives (SEDs) |
| Boot optimized storage cards | BOSS-N1 controller card + with two M.2 960 GB SSDs (RAID 1) |
| Network interface controllers | NVIDIA ConnectX-6 Lx dual port 10/25 GbE SFP28 adapter, PCIe low profile |
| GPU, FPGA, or acceleration cards | NVIDIA Ampere A30, PCIe, 165 W, 24 GB passive, double wide, full-height GPU with cable |

Dell Technologies recommends the disk volume and partition layouts for this set of machines that are listed in GPU-accelerated lakehouse worker node volumes and GPU-accelerated lakehouse worker node partitions.

Table 9. GPU-accelerated lakehouse worker node volumes

| Usage | Volume type | Physical disks | Volume ID |
| --- | --- | --- | --- |
| Operating system | RAID 1 | Two 960 GB SSDs | 0 |
| Symcloud Storage | No RAID | Six 3.84 TB SAS SSDs | 1-6 |

Table 10. GPU-accelerated lakehouse worker node partitions

| Mount point | Size | File system type | Volume ID | Partition type | Description |
| --- | --- | --- | --- | --- | --- |
| /boot | 1024 MB | XFS | 0 | Primary | This partition contains BIOS start-up files that must be within first 2 GB of disk. |
| /boot/efi | 650 MB | VFAT | 0 | Extended | The partition contains EFI start-up files. |
| / | Around 100 GB | XFS | 0 | LVM | This partition contains the root file system. |
| /home | 300 GB | XFS | 0 | LVM | This partition contains the user home directories. |
| /var | 400 GB | XFS | 0 | LVM | This partition contains variable data like system logging files, databases, mail and printer spool directories, transient, and temporary files. |
| None | Six 3.84 TB | Symcloud Storage | 1-6 | Raw partitions | Symcloud Storage manages these partitions. |

GPU accelerated worker nodes have capabilities that are similar to general purpose worker nodes, while adding GPU acceleration. Dell Technologies recommends four worker nodes for a minimum deployment. You can use any mix of general purpose and GPU-accelerated nodes.

The configuration includes two network ports to support high-availability (HA) networking. These ports can be from a single network card, or a pair of network cards for additional adapter level HA.

Two M.2 960 GB SSDs in a RAID 1 configuration are used for the operating system volume. The home directories are allocated in a separate small partition since user files are not stored at the operating system level on production nodes. Most of the storage is allocated to the /var partition for runtime files. You can use LVM to adjust the storage allocation between /, /home, and /var for specific needs.

Six SSDs are allocated for use by Symcloud Storage. The services to support this storage are deployed with the Symcloud Storage role. This storage is exposed to workloads running on the cluster through the Kubernetes CSI interface. The recommended configuration provides approximately 23 TB of storage per node. This capacity is enough for typical runtime storage in a lakehouse environment where the bulk of the data is stored on external storage. The external storage can be either HDFS provided by PowerScale, or object storage provided by ECS. If more local storage is needed, up to 10 more SSDs can be added, and drive sizes can be increased.

Memory has been sized to support all the required services in a production deployment with enough headroom for user workloads. The most common change is to increase the memory size to support more containers or workloads requiring more memory.

The processors have been chosen to support compute intensive AI and ML workloads and include dual Intel Advanced Vector Extensions (AVX) units for maximum compute speed. Other processor choices are possible but should be made with memory requirements and overall power consumption in mind.

The GPU has been chosen to support Spark acceleration of SQL and dataframe operations using the NVIDIA RAPIDS Accelerator for Apache Spark. These workload operations are typical in a lakehouse environment. One or two GPUs can be used in this configuration. AI and ML workloads may benefit from alternative GPU models.
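As an illustration of how a Spark job might opt in to GPU acceleration, the Python sketch below assembles a small set of Spark properties and renders them as `spark-submit` arguments. The property names are standard Spark and RAPIDS Accelerator settings. The values, the helper function names, and the choice of four concurrent tasks per GPU are assumptions for illustration, not tuned recommendations from this guide. The sketch also assumes that the RAPIDS Accelerator JAR is already available to the driver and executors.

```python
def rapids_spark_conf(gpus_per_executor=1, tasks_per_gpu=4):
    """Build Spark properties that enable the RAPIDS Accelerator plugin.

    Assumption: the values are illustrative and should be tuned per workload.
    """
    if gpus_per_executor < 1 or tasks_per_gpu < 1:
        raise ValueError("GPU and task counts must be positive")
    return {
        # Load the RAPIDS Accelerator as a Spark plugin.
        "spark.plugins": "com.nvidia.spark.SQLPlugin",
        # Allow SQL and DataFrame operations to run on the GPU.
        "spark.rapids.sql.enabled": "true",
        # GPUs requested by each executor.
        "spark.executor.resource.gpu.amount": str(gpus_per_executor),
        # Fraction of a GPU per task, so several tasks can share one GPU.
        "spark.task.resource.gpu.amount": str(1 / tasks_per_gpu),
    }


def to_submit_args(conf):
    """Render a property dictionary as spark-submit --conf arguments."""
    args = []
    for key in sorted(conf):
        args += ["--conf", f"{key}={conf[key]}"]
    return args


if __name__ == "__main__":
    print(" ".join(to_submit_args(rapids_spark_conf())))
```

Keeping the properties in a dictionary makes it straightforward to pass the same settings to a `SparkSession` builder or to a job template instead of the command line.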

Storage infrastructure

Lakehouse storage can use PowerScale with the HDFS protocol, or ECS for object storage. You can use either or both systems depending on workload requirements. Server internal storage provides runtime storage that applications require. The Symcloud Storage software manages runtime storage.

ECS and PowerScale are deployed as cluster-level systems. The node recommendations here can be used as guidance for new clusters, verification of compatibility with existing clusters, or expansion of existing clusters.

PowerScale infrastructure

Dell Technologies recommends the configuration in PowerScale configuration for storage in clusters using PowerScale for their primary lakehouse storage using HDFS.

Table 11. PowerScale configuration

| Machine function | Component |
| --- | --- |
| Model | PowerScale H7000 (hybrid) |
| Chassis | 4U node |
| Nodes per chassis | Four |
| Node storage | Twenty 12 TB 3.5 in. 4Kn SATA hard drives |
| Node cache | Two 3.2 TB SSDs |
| Usable capacity per chassis | 600 TB |
| Front-end networking | Two 25 GbE (SFP28) |
| Infrastructure (back-end) networking | Two InfiniBand QDR or two 40 GbE (QSFP+) |
| Operating system | OneFS 9.4.0.12 |

The recommended configuration is sized for typical usage as lakehouse HDFS storage.

Two Ethernet network ports per node are included for connection to the Cluster data network or a PowerScale storage network. Two additional network ports are included for connection to the PowerScale back-end network. These additional ports can be either InfiniBand QDR or 40 GbE, depending upon on-site preferences.

One PowerScale H7000 chassis supports four PowerScale H7000 nodes. This configuration provides approximately 720 TB of usable storage. At 85% utilization, 600 TB of HDFS storage is a good guideline for available storage per chassis.
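The planning figure above can be reproduced with a few lines of arithmetic. The Python sketch below applies the 85% utilization target to the usable capacity of one chassis and estimates how many chassis a given data set would need. The function names and the example data set size are hypothetical, and the estimate does not account for growth, snapshots, or other uses of the PowerScale cluster.

```python
import math

USABLE_TB_PER_CHASSIS = 720   # approximate usable capacity of one H7000 chassis
TARGET_UTILIZATION = 0.85     # planning utilization stated in this guide
PLANNING_TB_PER_CHASSIS = 600 # rounded guideline used for sizing
NODES_PER_CHASSIS = 4


def planning_capacity_tb(usable_tb=USABLE_TB_PER_CHASSIS,
                         utilization=TARGET_UTILIZATION):
    """Capacity available for HDFS data at the target utilization, in TB."""
    return usable_tb * utilization


def chassis_needed(dataset_tb, per_chassis_tb=PLANNING_TB_PER_CHASSIS):
    """Smallest number of chassis whose planning capacity covers the data set."""
    if dataset_tb <= 0:
        raise ValueError("dataset_tb must be positive")
    return math.ceil(dataset_tb / per_chassis_tb)


if __name__ == "__main__":
    print(f"720 TB at 85%: {planning_capacity_tb():.0f} TB (rounded down to 600 TB)")
    dataset_tb = 1500  # hypothetical lakehouse data set size
    count = chassis_needed(dataset_tb)
    print(f"{dataset_tb} TB needs {count} chassis ({count * NODES_PER_CHASSIS} nodes)")
```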

This configuration assumes that the PowerScale nodes are primarily used for HDFS storage. If the PowerScale nodes are used for other storage applications or clusters, you must account for it in the overall cluster sizing. You can also use other PowerScale H7000 drive configurations.

ECS node

Dell Technologies recommends the configurations in ECS EX500 node configuration or ECS EXF900 node configuration for storage in clusters using ECS for their primary lakehouse storage using the s3a:// protocol.

Table 12. ECS EX500 node configuration

| Machine function | Component |
| --- | --- |
| Model | ECS EX500 |
| Chassis | 2U node |
| Nodes per rack | 16 |
| Node storage | 960 GB SSD |
| Node cache | N/A |
| Usable capacity per chassis | Slightly less than 384 TB |
| Front-end networking | Two 25 GbE (SFP28) |
| Infrastructure (back-end) networking | Two 25 GbE (SFP28) |

The ECS EX500 configuration provides a good balance of storage density and performance for lakehouse usage.

Two Ethernet network ports per node are included for connection to the Cluster data network or an ECS storage network. Two additional network ports are included for connection to the ECS back-end network.

Table 13. ECS EXF900 node configuration

| Machine function | Component |
| --- | --- |
| Model | ECS EXF900 |
| Chassis | 2U node |
| Nodes per rack | 16 |
| Node storage | 184 TB (twenty-four 7.68 TB NVMe drives) |
| Node cache | N/A |
| Usable capacity per chassis | Slightly less than 184 TB |
| Front-end networking | Two 25 GbE (SFP28) |
| Infrastructure (back-end) networking | Two 25 GbE (SFP28) |

The ECS EXF900 configuration is an all-flash configuration and provides the highest performance for lakehouse usage.

Two Ethernet network ports per node are included for connection to the Cluster data network or an ECS storage network. Two additional network ports are included for connection to the ECS back-end network.
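Workloads reach ECS object storage through the Hadoop S3A connector, which is configured with a handful of `fs.s3a.*` properties. The Python sketch below builds those properties and prefixes them for use in a Spark configuration. The property names are standard Hadoop S3A settings. The endpoint URL, credentials, and function names are hypothetical placeholders, and the choice of path-style access is an assumption that depends on how DNS is set up for the ECS endpoint.

```python
def s3a_properties(endpoint, access_key, secret_key, use_tls=True):
    """Build Hadoop S3A properties for an S3-compatible endpoint such as ECS.

    Assumption: path-style access is used; virtual-hosted style requires
    wildcard DNS for the endpoint.
    """
    return {
        "fs.s3a.endpoint": endpoint,
        "fs.s3a.access.key": access_key,
        "fs.s3a.secret.key": secret_key,
        "fs.s3a.path.style.access": "true",
        "fs.s3a.connection.ssl.enabled": "true" if use_tls else "false",
    }


def as_spark_conf(hadoop_props):
    """Prefix Hadoop properties so Spark passes them to the Hadoop configuration."""
    return {f"spark.hadoop.{key}": value for key, value in hadoop_props.items()}


if __name__ == "__main__":
    props = s3a_properties(
        endpoint="https://ecs.example.internal:9021",  # hypothetical endpoint
        access_key="EXAMPLE_ACCESS_KEY",               # placeholder credential
        secret_key="EXAMPLE_SECRET_KEY",               # placeholder credential
    )
    for key, value in sorted(as_spark_conf(props).items()):
        # Avoid printing secrets in real deployments; shown here for clarity only.
        print(f"{key}={value}")
```

With properties like these in place, a job would address data with URIs of the form `s3a://bucket-name/path`. In practice, credentials are better supplied through a secret store or credential provider than embedded in job configuration.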

Server storage

The worker nodes include local SSD storage for use as runtime storage for workloads. This storage is exposed to pods running on the cluster through the Kubernetes CSI interface. Persistent and ephemeral volumes are supported. Symcloud Storage manages these storage devices through the Symcloud Storage role.

Symcloud Storage and the associated CSI driver support advanced features including:

- Replication for data availability

- Encryption for data security

- Compression and thin provisioning for storage efficiency

- Snapshots

- Backup and recovery

The Kubernetes storage class defines some of these features, while others are accessible through the Symcloud management interface. More details about the Symcloud Storage capabilities can be found in the Symcloud document, Robin Cloud Native Storage Overview.
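To show how a workload might request runtime storage through the CSI interface, the Python sketch below generates a standard Kubernetes PersistentVolumeClaim manifest as JSON, which `kubectl apply -f` accepts alongside YAML. The claim structure follows the core Kubernetes API. The storage class name `symcloud-example`, the claim name, and the requested size are hypothetical. The actual storage class names and the features each one enables depend on the Symcloud Storage deployment.

```python
import json


def pvc_manifest(name, size_gi, storage_class, access_mode="ReadWriteOnce"):
    """Build a PersistentVolumeClaim manifest for a CSI-backed storage class."""
    if size_gi <= 0:
        raise ValueError("size_gi must be positive")
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": [access_mode],
            # Hypothetical class name; features such as replication or
            # encryption would be selected by the class definition.
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": f"{size_gi}Gi"}},
        },
    }


if __name__ == "__main__":
    manifest = pvc_manifest(
        name="spark-scratch",            # hypothetical claim name
        size_gi=500,                     # hypothetical size
        storage_class="symcloud-example" # hypothetical storage class
    )
    print(json.dumps(manifest, indent=2))
```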

The recommended configuration provides approximately 23 TB of storage per node. This capacity is enough for typical runtime storage in a lakehouse environment where the bulk of the data is on external storage. Alternative configurations, including dedicated storage nodes, are possible. Some analytics workloads, like databases, may require additional storage for persistent data outside the lakehouse storage.

Network infrastructure

The network is designed to meet the needs of a high performance and scalable cluster, while providing redundancy and access to management capabilities. The architecture is a leaf and spine model that is based on Ethernet networking technologies. It uses PowerSwitch S5248F-ON switches for the leaves and PowerSwitch Z9432F-ON switches for the spine.

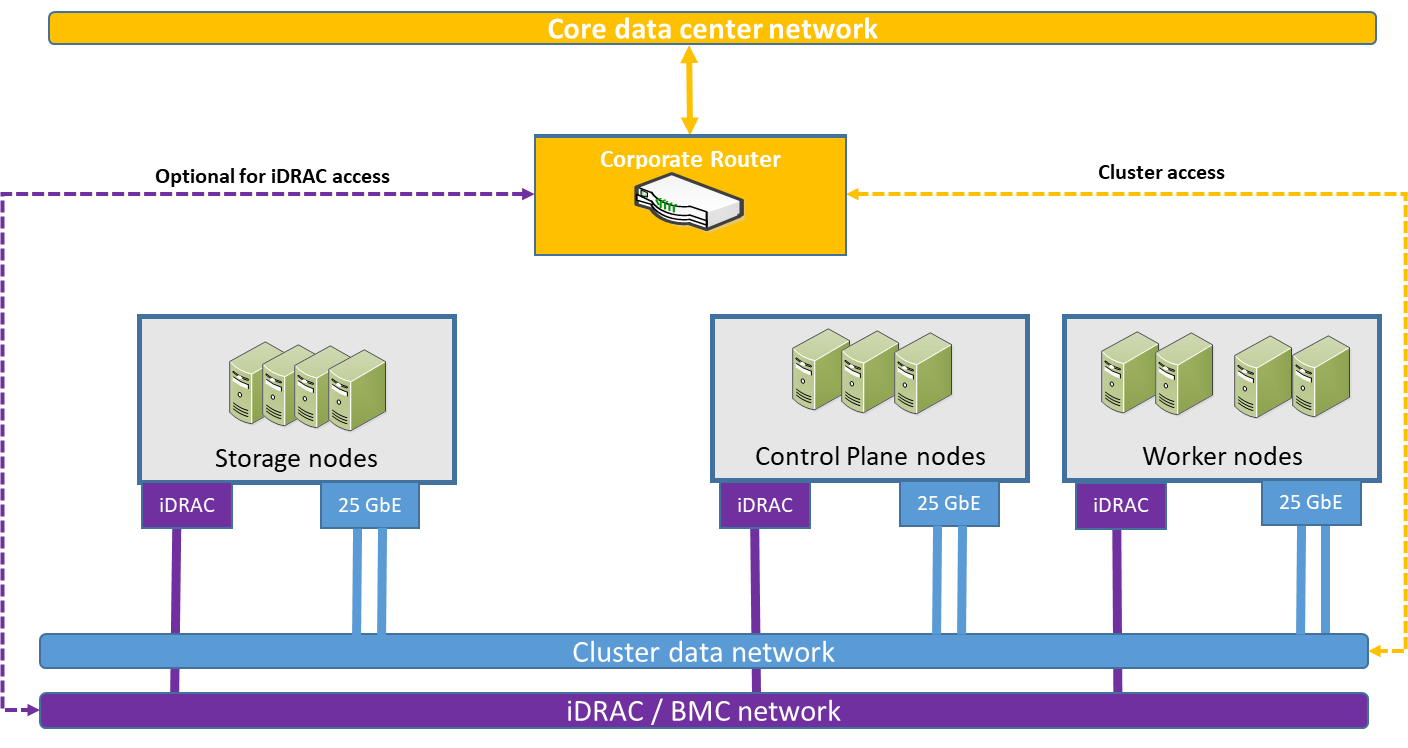

Physical networking in this architecture is straightforward since most of the advanced capabilities of the system are implemented using software defined networking. The logical network is described in Container platform implementation. This architecture has three physical networks, as shown in Physical network infrastructure.

- iDRAC (or BMC) network

- The iDRAC (or BMC) network is a secured and isolated network for switch and server hardware management, including access to the iDRAC9 module and Serial-over-LAN. This network optionally connects to corporate network management allowing more direct access to the hardware infrastructure. Each node on this network is assigned an individual IP address from the management address space.

- Cluster data network

- The Cluster data network is the primary network for internode communication between all server and storage nodes. Each server node on this network is assigned a single IP address on this network.

- Core data center network

- The Core data center network is the existing enterprise network. The Cluster data network is interfaced with this network through switching and routing allowing cluster services to be exposed to system users.

Figure 3. Physical network infrastructure

Management network fabric

The management network uses a PowerSwitch S3148 1 GbE switch for iDRAC connectivity and chassis management.

Cluster data network fabric

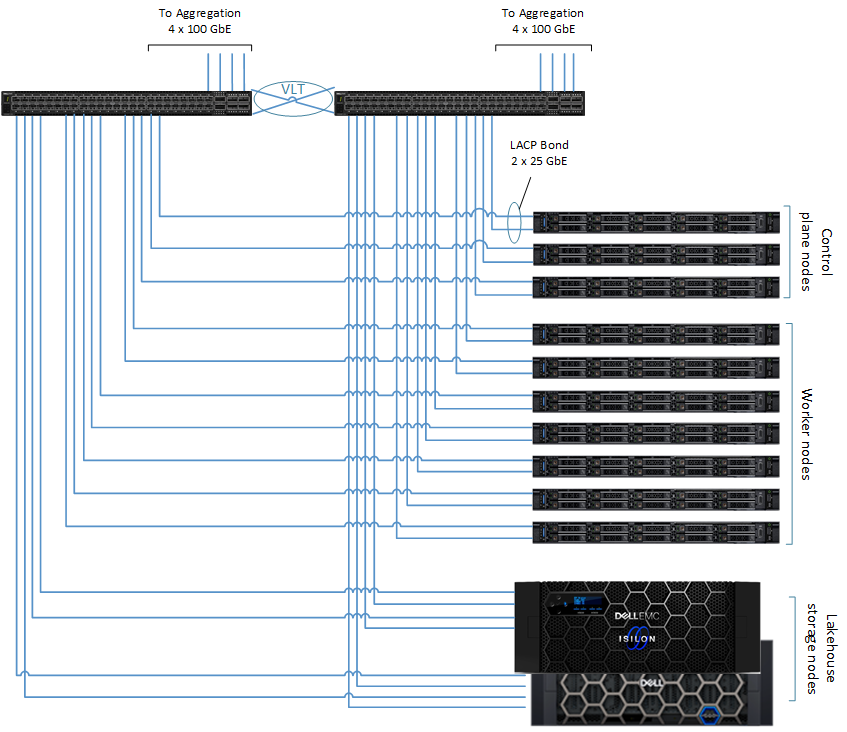

The Cluster data network uses a scalable, resilient, nonblocking fabric with a leaf-spine design as shown in Cluster data network connections. Each node on this network is connected to two S5248F-ON leaf switches with 25 GbE network interfaces. The switches run Dell SmartFabric OS10. SmartFabric OS10 enables multilayered disaggregation of network functions that are layered on an open-source Linux-based operating system.

On the server side, the two network connections are bonded and have a single IP address assigned.

On the switch side, the network design employs a Virtual Link Trunking (VLT) connection between the two leaf switches.

VLT technology enables a server to uplink multiple physical trunks into more than one S5248F-ON switch by treating the uplinks as one logical trunk. In a VLT environment, a connected pair of switches acts as a single switch to a connecting server while all paths remain active. This makes it possible to achieve high throughput while still providing resiliency against hardware failures. VLT replaces Spanning Tree Protocol (STP)-based networks, providing both redundancy and full bandwidth utilization using multiple active paths.

The VLT configuration in this design uses four 100 GbE ports between the two Top of Rack (ToR) switches in each pair. The remaining 100 GbE ports can be used for high-speed connectivity to spine switches, or directly to the data center core network infrastructure.

Figure 4. Cluster data network connections