Who’s watching your IP cameras?

Thu, 20 Jul 2023 18:05:50 -0000

Introduction

In today’s world, the deployment of security cameras is a common practice. In some public facilities like airports, travelers can be in view of a security camera 100% of the time. Security guards watching banks of video panels fed by hundreds of security cameras are quickly being replaced by computer vision systems powered by artificial intelligence (AI). Today’s advanced analytics can be performed on many camera streams in real time without a human in the loop. These systems not only enhance personal safety but also provide other benefits, including better passenger and shopping experiences.

Modern IP cameras are complex devices. In addition to recording video streams at increasingly higher resolutions (4K is now common), they can also encode those streams and send them over standard Internet Protocol (IP) networks to downstream systems for additional analytic processing and, eventually, archiving. Some cameras on the market today have enough onboard computing power and storage to evaluate AI models and perform analytics right on the camera.

The Problem

The development of IP-connected cameras provided great flexibility in deployment by eliminating the need for specialized cables. IP cameras are so easy to plug into existing IT infrastructure that almost anyone can do it. However, since most camera vendors use a modified version of an open-source Linux operating system, IT and security professionals are realizing that there are hundreds or thousands of customized Linux servers mounted on walls and ceilings all over their facilities. Whether you are responsible for fewer than 10 cameras at a small retail outlet or more than 5,000 at an airport facility, the question remains: “How much exposure to cyber-attacks do all those cameras create?”

The Research

To understand the potential risk posed by IP cameras, we assembled a lab environment with multiple camera models from different vendors. Some cameras were thought to be up to date with the latest firmware, and some were not.

Working in collaboration with the Secureworks team and their suite of vulnerability and threat management tools, we assessed a strategy for detecting IP camera vulnerabilities. Our first choice was to use the Secureworks Taegis™ VDR vulnerability scanning software to scan our lab IP network and discover any camera vulnerabilities. VDR provides a risk-based approach to managing vulnerabilities, driven by automated and intelligent machine learning.

We planned to discover the cameras with older firmware and document their vulnerabilities. Then we would have the engineers upgrade all firmware and software to the latest patches available and rescan to see if all the vulnerabilities were resolved.
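The scan, patch, and rescan plan amounts to comparing two sets of findings. The following is a minimal sketch of that comparison, not a description of how VDR reports results. It assumes each scan has been exported as a collection of `(host, finding_id)` pairs; the function name and the pair format are hypothetical.

```python
def compare_scans(before, after):
    """Compare two scans, each given as (host, finding_id) pairs.

    Returns the findings that disappeared after patching, the ones that
    are still present, and any that appeared only in the second scan.
    """
    before, after = set(before), set(after)
    return {
        "resolved": sorted(before - after),
        "remaining": sorted(before & after),
        "new": sorted(after - before),
    }
```

The "remaining" group is the one to take to the camera vendor, since those findings survived the latest available firmware.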

Findings

Once the Secureworks Edge agent was set up in the lab, we could easily add all the IP ranges that might be connected to our cameras. All the cameras on those networks were identified by Secureworks VDR and automatically added to the VDR AWS cloud-based reporting console.
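To make "scanning an IP range" concrete, here is a rough sketch of the simplest form of discovery: expand each range into host addresses and check which ones accept connections on ports a camera typically exposes. VDR performs its own discovery and this is not its method. The port list, function names, and the injectable `connect` parameter are assumptions made for this illustration.

```python
import ipaddress
import socket

# Ports often exposed by IP cameras (an assumption for this sketch):
# FTP, HTTP, HTTPS, and RTSP.
CAMERA_PORTS = (21, 80, 443, 554)


def expand_ranges(cidr_ranges):
    """Return every usable host address in the given CIDR ranges."""
    hosts = []
    for cidr in cidr_ranges:
        network = ipaddress.ip_network(cidr, strict=False)
        hosts.extend(str(address) for address in network.hosts())
    return hosts


def open_ports(host, ports=CAMERA_PORTS, timeout=1.0,
               connect=socket.create_connection):
    """Return the ports on `host` that accept a TCP connection."""
    found = []
    for port in ports:
        try:
            connection = connect((host, port), timeout=timeout)
        except OSError:
            continue  # closed, filtered, or unreachable
        connection.close()
        found.append(port)
    return found
```

Calling `open_ports(host)` for each address returned by `expand_ranges([...])` yields a first inventory of candidate devices. Only probe networks you are authorized to scan.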

Discovering Camera Vulnerabilities

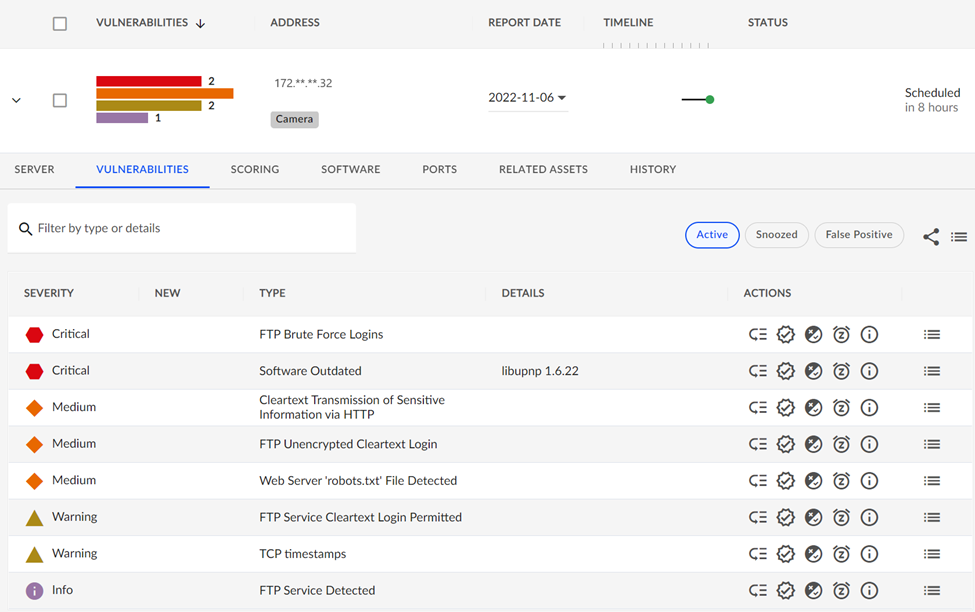

The results of the scans were surprising. Almost all discovered cameras had some Critical issues identified by the VDR scanning. In one case, even after a camera was upgraded to the latest firmware available from the vendor, VDR still found Critical software and configuration vulnerabilities, two of which are described below.

One of the remaining Critical issues was an insecure FTP username and password that had not been changed from the vendor’s default settings before the camera was put into service. Procedural lapses like this should not happen, but they inevitably do. The VDR scan caught the mistake easily, so this common cybersecurity risk could be dealt with. The issue is unrelated to firmware: vendors should not ship cameras with a well-known FTP login, and users are responsible for hardening the login before deployment.
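A default-credential check of this kind can be approximated with Python’s standard `ftplib`. The sketch below is illustrative only and is not how VDR detects the issue. The credential pairs are hypothetical placeholders, and the `ftp_factory` parameter is an assumption added so the logic can be exercised without a live camera.

```python
import ftplib

# Hypothetical placeholder pairs; substitute the defaults documented by
# your own camera vendors.
DEFAULT_CREDENTIALS = [("admin", "admin"), ("root", "root")]


def accepted_default_logins(host, credentials=DEFAULT_CREDENTIALS,
                            timeout=5.0, ftp_factory=ftplib.FTP):
    """Return the credential pairs that the FTP service on `host` accepts."""
    accepted = []
    for user, password in credentials:
        ftp = ftp_factory()
        try:
            ftp.connect(host=host, port=21, timeout=timeout)
            ftp.login(user=user, passwd=password)
            accepted.append((user, password))
        except ftplib.all_errors:
            pass  # unreachable, connection refused, or login rejected
        finally:
            ftp.close()
    return accepted
```

Any non-empty result means the camera still accepts a well-known login and should be hardened. Run a check like this only against devices you are responsible for.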

Another Critical issue of the kind you can expect when dealing with IP cameras was an outdated library dependency found on the camera. The library is required by the vendor software but was not updated when the latest camera firmware patches were applied.

Camera Administration Consoles

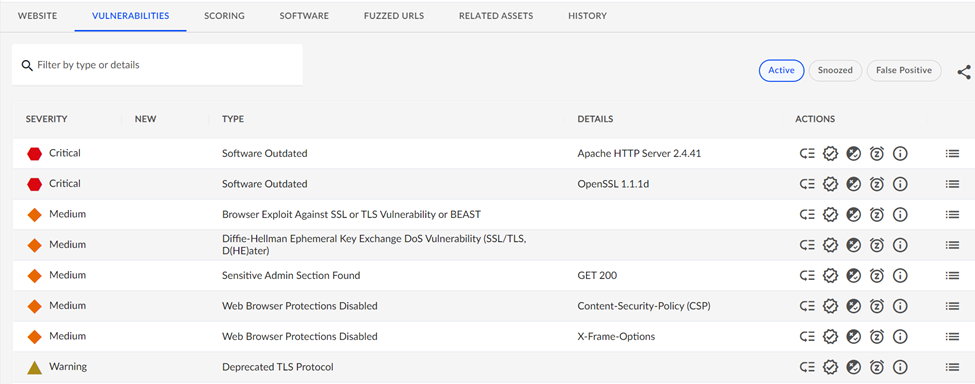

The VDR tool will also detect if a camera is exposing any HTTP sites/services and look for vulnerabilities there. Most IP cameras ship with an embedded HTTP server so administrators can access the cameras' functionality and perform maintenance. Again, considering the number of deployed cameras, this represents a huge number of websites that may be susceptible to hacking. Our testing found some examples of the type of issues that a camera’s web applications can expose:

The scan of this device found an older version of the Apache web server software and outdated SSL libraries in use for the camera’s website. This should be considered a critical vulnerability.
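One simple signal of outdated web software is the `Server` header that many embedded HTTP servers return. As a hedged sketch (a dedicated scanner does far more than this), the code below parses product and version tokens from such a header and flags those below a minimum. The threshold values and function names are hypothetical and should come from your own patch policy.

```python
import re

# Hypothetical minimum acceptable versions; set these from your own policy.
MINIMUM_VERSIONS = {"Apache": (2, 4, 0), "OpenSSL": (3, 0, 0)}

# Matches tokens such as "Apache/2.2.15"; trailing letters are ignored.
_TOKEN = re.compile(r"([A-Za-z][\w.-]*)/(\d+(?:\.\d+)*)")


def parse_server_header(value):
    """Map each product token in a Server header to a numeric version tuple."""
    return {
        name: tuple(int(part) for part in version.split("."))
        for name, version in _TOKEN.findall(value)
    }


def outdated_products(server_header, minimums=MINIMUM_VERSIONS):
    """Return the products whose reported version is below the minimum."""
    found = parse_server_header(server_header)
    return sorted(
        name for name, version in found.items()
        if name in minimums and version < minimums[name]
    )
```

A missing or uninformative header proves nothing, because many servers hide or truncate it, so treat this as a quick triage step and not as a substitute for a full scan.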

Conclusion

In this article, we have tried to raise awareness of the significant cybersecurity risk that IP cameras pose to organizations, both large and small. Providing effective video recording and analysis capabilities involves much more than simply mounting cameras on the wall and walking away. IT and security professionals must ask, “Who’s watching our IP cameras?” Each camera should be continuously patched to the latest version of firmware and software, and scanned with a tool like Secureworks VDR. If vulnerabilities still exist after scanning and patching, it is critical to engage with your camera vendor to remediate the issues, which may adversely impact your organization if neglected. Someone will be watching your IP cameras; let’s ensure their interests don’t conflict with yours.

Dell Technologies is at the forefront of delivering enterprise-class computer vision solutions. Our extensive partner network and key industry stakeholders have allowed us to develop an award-winning process that takes customers from ideation to full-scale implementation faster and with less risk. Our outcomes-based process for computer vision delivers:

- Increased operational efficiencies: Leverage all the data you’re capturing to deliver high-quality services and improve resource allocation.

- Optimized safety and security: Provide a safer, more real-time aware environment.

- Enhanced experience: Provide a more positive, personalized, and engaging experience for customers and employees.

- Improved sustainability: Measure and lower your environmental impact.

- New revenue opportunities: Unlock more monetization opportunities from your data with more actionable insights.

Where to go next...

Dell Technologies Workload Solutions for Computer Vision

Virtualized Computer Vision for Smart Transportation with Genetec

Virtualized Computer Vision for Smart Transportation with Milestone