Cloud vs Edge: Putting Cutting-Edge AI Voice, Vision, & Language Models to the Test in the Cloud & Edge

Thu, 14 Mar 2024 16:49:21 -0000

| DEPLOYING LEADING AI MODELS ON THE EDGE OR IN THE CLOUD

The decision to deploy workloads at the edge or in the cloud is often a contest shaped by four pivotal factors: economics, latency, regulatory requirements, and fault tolerance. Some might distill these considerations into a more colloquial framework: the laws of economics, the laws of the land, the laws of physics, and Murphy's Law. In this multi-part paper, we won't merely discuss these principles in theory. Instead, we'll delve deeper, testing and comparing leading AI models across voice, computer vision, and large language models both at the edge and in the cloud.

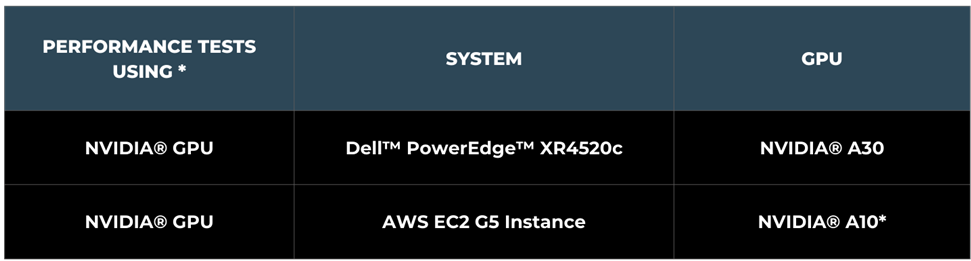

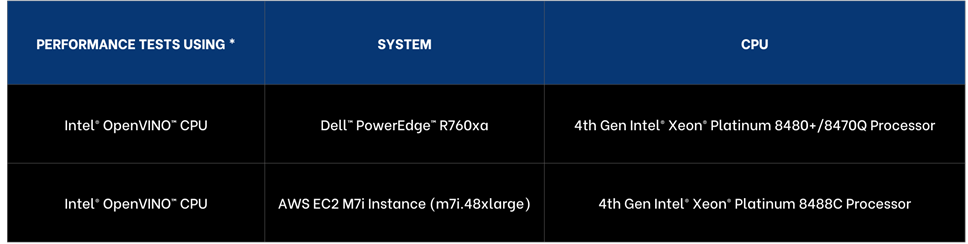

In part one we put the leading CPUs to the test, with 4th Generation Intel® Xeon® Scalable processors both in the cloud and at the edge. In part two we’ll put NVIDIA® GPUs to the test.

| GPU AVAILABILITY IN THE CLOUD & AT THE EDGE

GPU instances are widely available in cloud environments, but only recently have purpose-built platforms for the edge offered the similarly high-performing GPUs needed for advanced AI workloads at the edge.

In this paper we’ll evaluate leading vision, voice, and language models at the edge and in the cloud on NVIDIA® GPUs.

| AI MODELS SELECTION

- Llama-2 7B Chat • OpenAI Whisper base • YOLOv8n Instance Segmentation

To ensure we have a broad range of AI workloads tested at the edge and the cloud we opted for three of the leading models in their domains:

- VISION | YOLOv8n Instance Segmentation

YOLOv8n Instance Segmentation is designed for instance segmentation. Unlike basic object detection, instance segmentation identifies the objects in an image as well as the segments of each object, and provides outlines and confidence scores.

- LANGUAGE | Llama-2 7B Chat

Llama-2 7B Chat is a member of the Llama family of large language models offered by Meta. It was trained on 2 trillion tokens and is well suited for chat applications.

- VOICE | OpenAI Whisper base 74M

Whisper is a deep learning model developed by OpenAI for speech recognition and transcription. It can transcribe speech in English and multiple other languages, and it can translate several non-English languages into English.

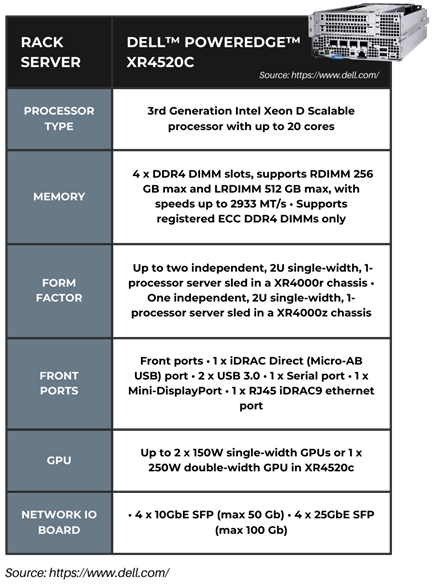

| EDGE HARDWARE • DELL™ POWEREDGE™ XR4520C

The system we selected is the Dell™ PowerEdge™ XR4520c, which is purpose-built for the edge. It is the shortest-depth server available to date that delivers high-performance compute, and it supports NVIDIA® GPUs, specifically the A30 Tensor Core series. It is designed for edge workloads including computer vision, video surveillance, and point-of-sale applications. Its rackable 2U chassis supports up to four separate server nodes, and the system offers up to 45 TB of storage per sled. The Dell™ PowerEdge™ XR4520c supports a temperature range of -5°C to 55°C and is MIL-STD-810H compliant, making it well suited to the harsh environments at the edge. Its NEBS Level 3 compliance meets rigorous standards for performance, reliability, and environmental resilience, leading to a more stable and reliable network.

| AWS INSTANCE SELECTION

We selected AWS EC2 G5 instances, specifically the g5.8xlarge instance, which is powered by NVIDIA® A10G Tensor Core GPUs and built for AWS cloud workloads including rich AI support. We chose it as the nearest comparison to the NVIDIA® A30 used in the Dell™ PowerEdge™ XR4520c, because the A30 is currently unavailable in the AWS portfolio. As of November 2023, pricing for the AWS EC2 G5 instance starts at US$2.449 per hour.

| HARDWARE SELECTION CONSIDERATIONS

We selected the best comparable offerings. The Dell™ PowerEdge™ portfolio offered NVIDIA® A30 GPUs, while AWS offered enhanced NVIDIA® A10G Tensor Core GPUs. While cloud offerings provide significant choice, the Dell™ PowerEdge™ portfolio offered a great choice of processors, memory, and networking.

In our analysis we are providing performance as well as cost of compute comparisons. For deployment you will also want to consider the following factors:

- Operational expenditures including power and maintenance costs.

- Network costs including data transfer to cloud and local connectivity.

- Data storage costs including cloud cost versus local storage.

- Network latency requirements including lower latency as data is processed locally.

- Security and compliance costs

*Performance varies by use case, model, application, hardware & software configurations, the quality of the resolution of the input data, and other factors. This performance testing is intended for informational purposes and not intended to be a guarantee of actual performance of an AI application.

| PERFORMANCE INSIGHTS

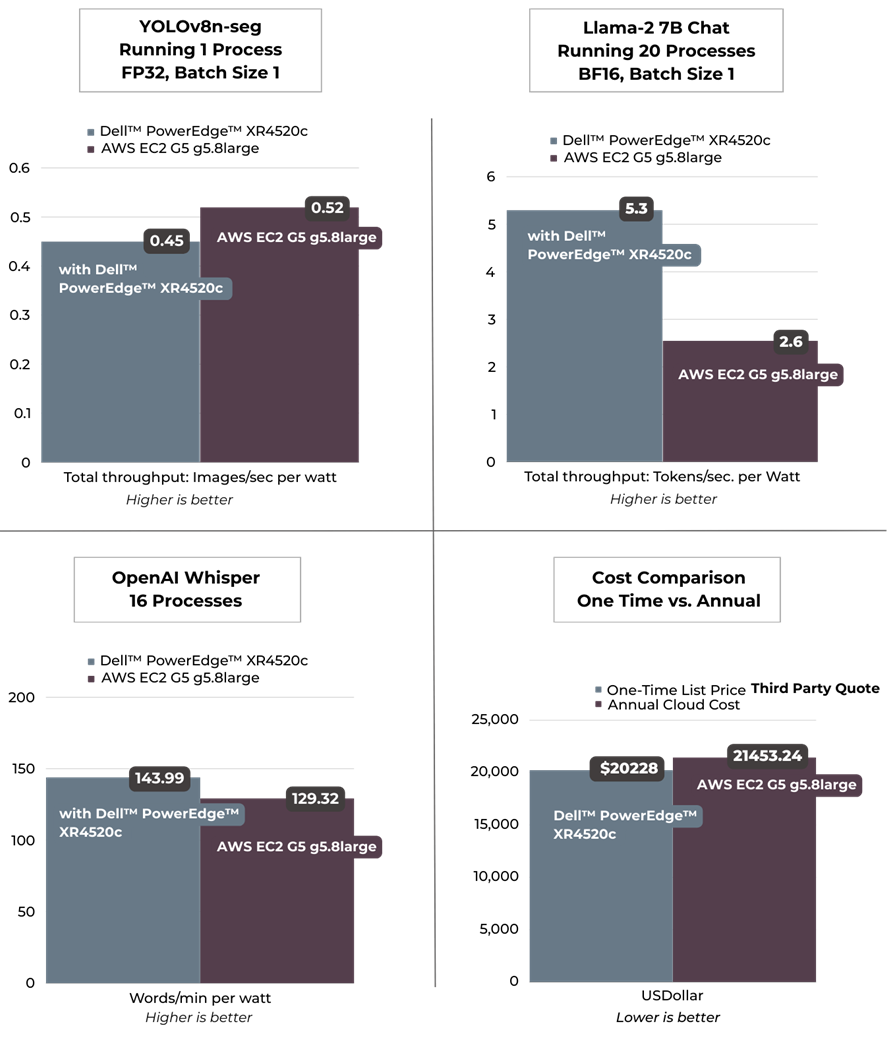

For YOLOv8n Instance Segmentation, we selected the single-process results because they delivered the best images-per-second performance. For Llama-2 7B Chat, we selected the results with 20 processes because that configuration met our target of sub-100 ms per-token user latency. For OpenAI Whisper, we selected the results with 16 processes to target user reading speed. On language performance and on voice performance per watt, the edge offering exceeded the cloud instance, while also offering lower-latency AI performance. The cloud instance came out ahead on vision and on raw voice performance. From a computational cost comparison, the on-premise solution offered a payback period of nearly a year, indicating a TCO win for the edge.

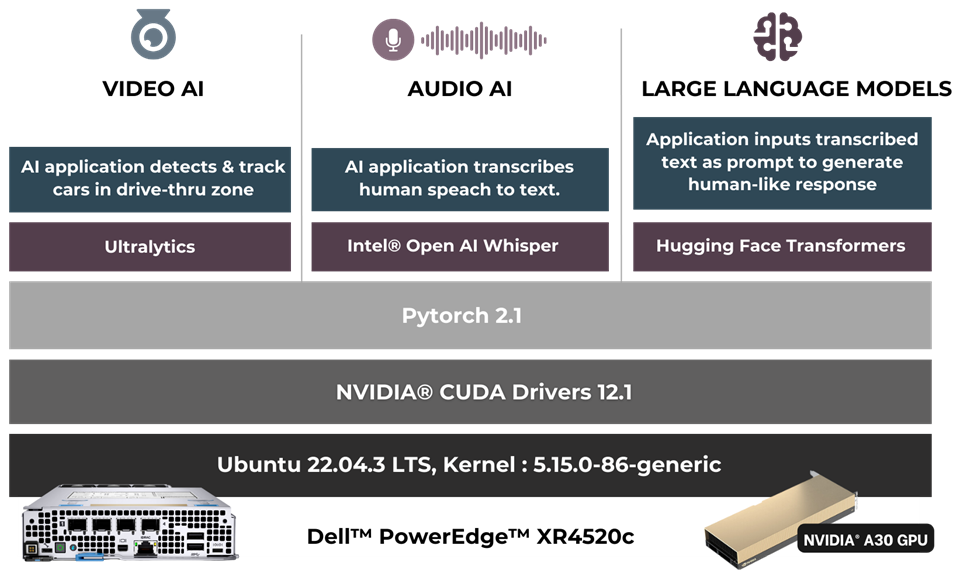

| RETAIL USE CASE

- Drive-thru Pharmacy Pick-up

To demonstrate the practical application of these models, we designed a solution architecture accompanied by a demo that simulates a drive-through pharmacy scenario. In this use case, the vision model identifies the car upon its arrival, the language model gathers the client's information, and communication is facilitated via the voice model. As you can discern, factors such as latency, privacy, security, and cost play crucial roles in this scenario, emphasizing the importance of the decision to deploy either in the cloud or at the edge.

In our drive-thru pharmacy pick-up scenario, we utilize a comprehensive architecture to optimize the customer experience. The Video AI module employs the YOLOv8n Instance Segmentation model to accurately detect and track cars in the drive-thru zone. The Audio AI segment captures human speech and transcribes it using the optimized Whisper-base model. This transcribed text is then processed by our Large Language Models segment, where an application leverages the optimized Llama-2 7B Chat model to generate intuitive, human-like responses.

| RETAIL USE CASE ARCHITECTURE

| SUMMARY

In this analysis, we put the leading voice, language, and vision models to the test on Dell™ PowerEdge™ and AWS instances. The Dell™ PowerEdge™ XR4520c exceeded the cloud instance on LLM performance and on performance per watt for voice, while the AWS instance offered superior performance on computer vision. The Dell™ PowerEdge™ XR4520c offers a payback period of nearly one year based on Dell™ third-party pricing. The pharmacy drive-through use case showcased the advantages of an edge deployment for maintaining customer privacy and HIPAA compliance and for ensuring fault tolerance and low latency.

APPENDIX | PERFORMANCE TESTING DETAILS

- Performance Insights | Yolov8n Instance Segmentation

| Test Methodology

The YOLOv8n-seg FP32 model was tested using ultralytics 8.0.43 library. A 53 second video with a resolution of 1080p and a bitrate of 1906 kb/s was employed for the performance tests. The first 30 inference samples were used as a warm-up phase and were not included in calculating the average inference metrics. The recorded time includes H264 encode-decode using PyAV 10.0.0 and model inference time.
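The warm-up handling described above can be sketched as a small timing harness. This is a minimal illustration, not the code used for the published results: `process_frame` is a hypothetical callable standing in for decode, inference, and encode of one frame, and the default of 30 warm-up samples mirrors the methodology text.

```python
import time


def summarize(timings, warmup=30):
    """Return (average seconds per sample, samples per second), skipping warm-up."""
    measured = list(timings)[warmup:]
    if not measured:
        raise ValueError("need more samples than warm-up iterations")
    avg_seconds = sum(measured) / len(measured)
    return avg_seconds, 1.0 / avg_seconds


def time_frames(process_frame, frames):
    """Time a hypothetical per-frame callable once for every frame."""
    timings = []
    for frame in frames:
        start = time.perf_counter()
        process_frame(frame)  # stands in for decode + inference + encode
        timings.append(time.perf_counter() - start)
    return timings
```

Calling `summarize(time_frames(process_frame, frames))` would then yield the average per-frame time and the corresponding images per second for the samples after warm-up.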

Input | Video file: Duration: 53.3 sec, h264, 1920x1080, 1906 kb/s, 30 fps

Output | Video file with h264 encoding (without segmentation post processing)

Base Model: https://docs.ultralytics.com/tasks/segment/#models

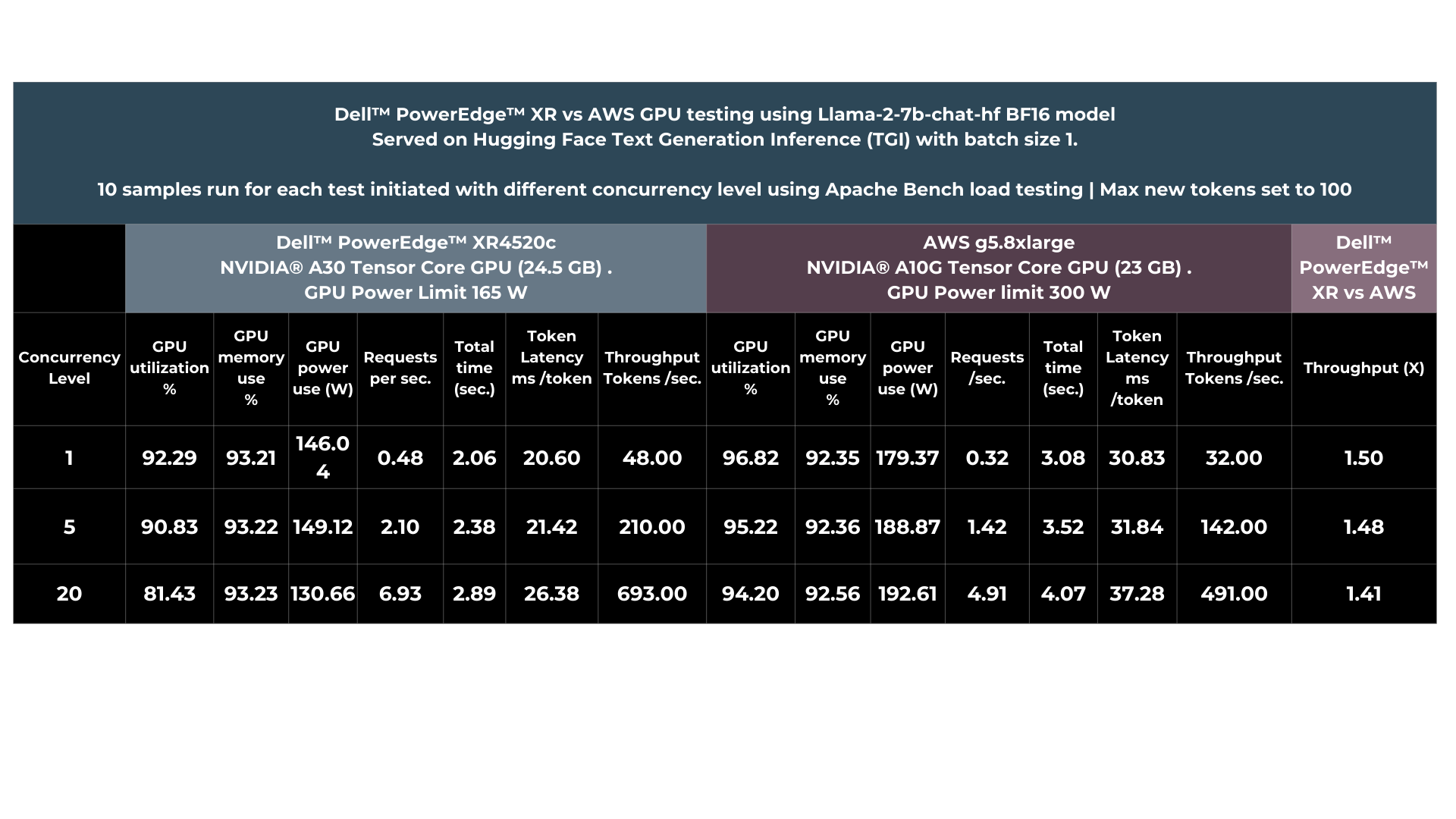

| PERFORMANCE INSIGHTS LANGUAGE • CLOUD VS. EDGE

- Llama 2 7B Chat

| Test Methodology

For tests on NVIDIA® GPU, Llama-2-7B-chat-hf BF16 model was served on Text Generation Inference v1.1.0 server (TGI server). The model was loaded into NVIDIA® GPU by TGI server and Apache Bench was used for load testing.

The test involved initiating concurrent requests using Apache Bench. For each concurrency level, performance results were collected for ten samples. The first five requests were treated as a warm-up phase and were excluded from the calculation of the average inference time (in seconds) and the average time per token.
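As a rough illustration of this kind of load test, the sketch below issues batches of concurrent requests from Python threads and averages the time per token after discarding warm-up rounds. It is not the Apache Bench setup used for the published numbers. `send_request` is a hypothetical callable that performs one request and returns the number of generated tokens, and grouping the samples into rounds is an assumption about how the warm-up was applied.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def timed_call(send_request):
    """Run one request and return (latency in seconds, tokens generated)."""
    start = time.perf_counter()
    tokens = send_request()
    return time.perf_counter() - start, tokens


def average_time_per_token(send_request, concurrency, rounds=10, warmup_rounds=5):
    """Average seconds per token across measured rounds of concurrent requests."""
    per_token = []
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        for round_index in range(warmup_rounds + rounds):
            futures = [pool.submit(timed_call, send_request) for _ in range(concurrency)]
            results = [future.result() for future in futures]
            if round_index >= warmup_rounds:  # skip warm-up rounds
                per_token.extend(latency / tokens for latency, tokens in results)
    return sum(per_token) / len(per_token)
```

A result below 0.1 from `average_time_per_token` would correspond to the sub-100 ms per-token target mentioned in the performance insights.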

Input | Discuss the history and evolution of artificial intelligence in 80 words.

Output | Discuss the history and evolution of artificial intelligence in 80 words or less. Artificial intelligence (AI) has a long history dating back to the 1950s when computer scientist Alan Turing proposed the Turing Test to measure machine intelligence. Since then, AI has evolved through various stages, including rule-based systems, machine learning, and deep learning, leading to the development of intelligent systems capable of performing tasks that typically require human intelligence, such as visual recognition, natural language processing, and decision-making.

Base Model | https://huggingface.co/meta-llama/Llama-2-7b-chat-hf

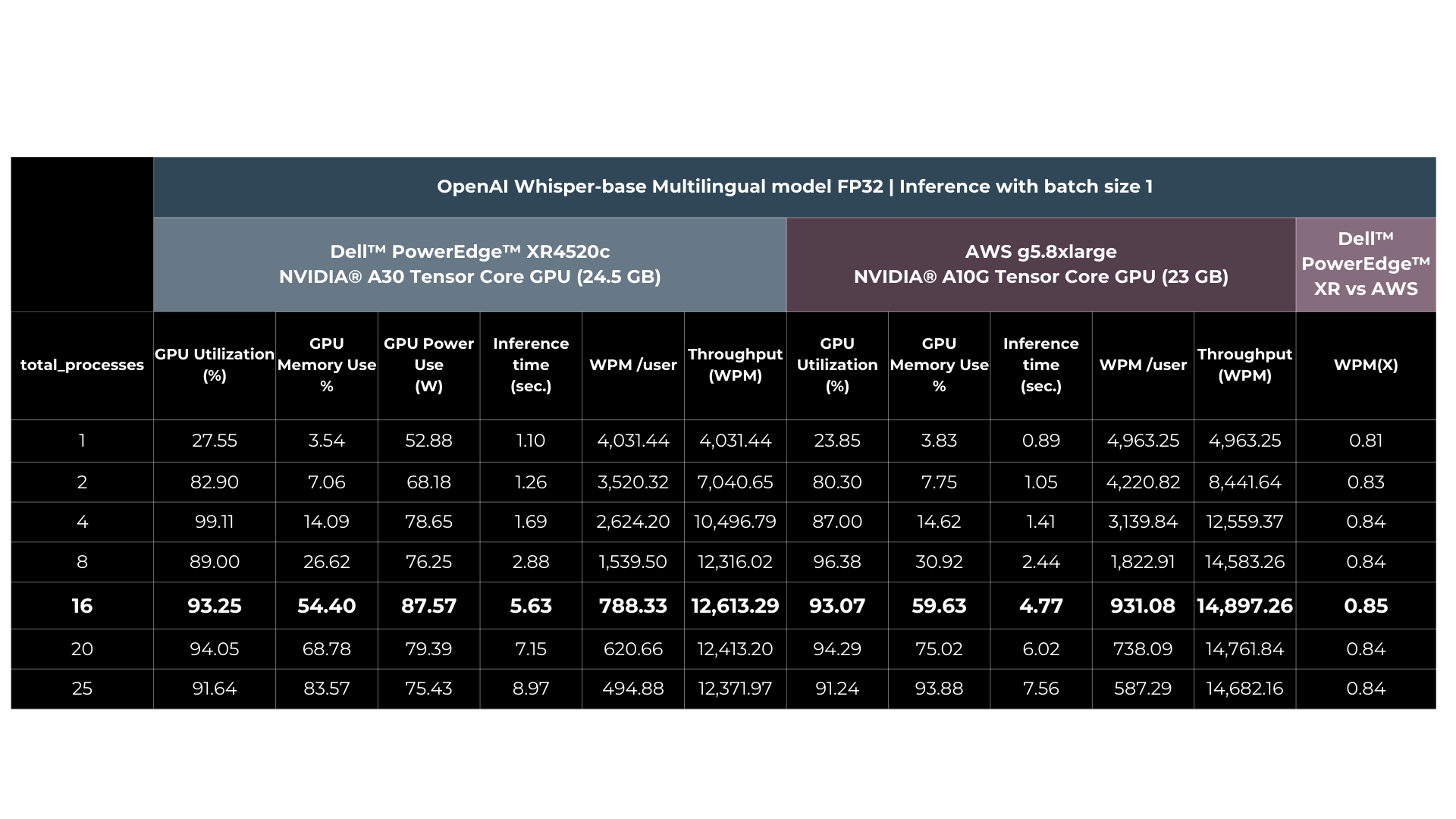

| PERFORMANCE INSIGHTS VOICE • CLOUD VS. EDGE

- OpenAI Whisper-base model

| Test Methodology

The OpenAI Whisper base 74M FP32 multilingual model was tested for inference using the OpenAI-Whisper v20231117 Python package. For performance tests, an audio clip of 28.2 seconds with a bitrate of 32 kb/s was employed. Twenty-five iterations were executed for each test scenario; the first 5 iterations were treated as warm-up and excluded when calculating the average inference time (in seconds) and tokens per second. The time collected includes encode-decode time using the tokenizer and the model inference time.

Input | MP3 file with 28.2 sec audio

Output | Generative AI has revolutionized the retail industry by offering a wide array of innovative use cases that enhance customer experiences and streamline operations. One prominent application of Generative AI is personalized product recommendations. Retailers can utilize advanced recommendation algorithms to analyze customer data and generate tailored product suggestions in real time. This not only drives sales but also enhances customer satisfaction by presenting them with items that align with their preferences and purchase history.

| 74 words transcribed

Base Model | https://github.com/openai/whisper#available-models-and-languages

| About Scalers AI™

Scalers AI™ specializes in creating end-to-end artificial intelligence (AI) solutions to fast-track industry transformation across a wide range of industries, including retail, smart cities, manufacturing, insurance, finance, legal, and healthcare. Scalers AI™ industry offerings include predictive analytics, custom large language models, and multi-modal offerings across voice, vision, and language. Because we are a full-stack AI solutions company with solutions ranging from the cloud to the edge, our customers often need versatile commercial off-the-shelf (COTS) hardware that works well across a range of workloads.

- Fast-track development and save hundreds of hours of development with access to the solution code.

As part of this effort, Scalers AI™ is making the solution code available.

Reach out to your Dell™ representative or contact Scalers AI™ at contact@scalers.ai for access to the GitHub repo.

Related Documents

Cloud Vs On Premise: Putting Leading AI Voice, Vision & Language Models to the Test in the Cloud & On Premise

Thu, 14 Mar 2024 16:49:21 -0000

| DEPLOYING LEADING AI MODELS ON PREMISE OR IN THE CLOUD

The decision to deploy workloads either on premise or in the cloud hinges on four pivotal factors: economics, latency, regulatory requirements, and fault tolerance. Some might distill these considerations into a more colloquial framework: the laws of economics, the laws of the land, the laws of physics, and Murphy's Law. In this multi-part paper, we won't merely discuss these principles in theory. Instead, we'll delve deeper, testing and comparing leading AI models across voice, computer vision, and large language models both on premise and in the cloud.

In part one, we'll put leading CPUs to the test, with 4th Generation Intel® Xeon® Scalable Processors both in the cloud and on premise.

| LEVERAGING INTEL® DISTRIBUTION OF OPENVINO™ TOOLKIT & CORE PINNING FOR ENHANCED PERFORMANCE

To ensure enhanced performance across the cloud and on premise, we are using the Intel® Distribution of OpenVINO™ Toolkit because it offers enhanced optimizations for AI models and runs across a broad range of platforms and leading AI frameworks.

To further enhance performance, we conducted core pinning, a process used in computing to assign specific CPU cores to specific tasks or processes.
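To make the idea of core pinning concrete, here is a minimal sketch using Python's standard `os.sched_setaffinity`, which is available on Linux. The paper does not state which pinning mechanism was used, so this is only one possible approach, and the `partition_cores` helper for splitting cores between processes is a hypothetical name introduced for illustration.

```python
import os


def partition_cores(cores, num_processes):
    """Split core IDs into num_processes contiguous groups of near-equal size."""
    cores = list(cores)
    size, extra = divmod(len(cores), num_processes)
    groups, start = [], 0
    for index in range(num_processes):
        end = start + size + (1 if index < extra else 0)
        groups.append(cores[start:end])
        start = end
    return groups


def pin_current_process(cores):
    """Restrict the calling process to the given cores (Linux only)."""
    allowed = os.sched_getaffinity(0)
    requested = set(cores) & allowed  # ignore cores this process cannot use
    if not requested:
        raise ValueError("none of the requested cores are available")
    os.sched_setaffinity(0, requested)
    return os.sched_getaffinity(0)
```

In a multi-process benchmark, each worker could call `pin_current_process` with its own group from `partition_cores` so that workers do not compete for the same cores.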

| AWS INSTANCE SELECTION

We selected the AWS EC2 M7i instance, specifically the m7i.48xlarge model, part of Amazon's general-purpose instance family. It offers a substantial amount of computing resources, making it comparable to the Dell™ PowerEdge™ R760xa, the on-premise solution we selected.

- Processing Power and Memory: The m7i.48xlarge Instance is equipped with 192 virtual CPUs (vCPUs) and 768 GiB of memory. This high level of processing power and memory capacity is ideal for CPU-based machine learning.

- Networking and Bandwidth: This instance provides a bandwidth of 50 Gbps, facilitating efficient data processing and transfer, essential for high-transaction and latency-sensitive workloads.

- Performance Enhancement: The M7i Instances, including the m7i.48xlarge, are powered by custom 4th Generation Intel® Xeon® Scalable Processors, also known as Sapphire Rapids.

As of November 2023, the pricing for the AWS EC2 M7i Instance, specifically the m7i.48xlarge model, starts at US$9.6768 per hour.

| HARDWARE SELECTION CONSIDERATIONS

For the cloud instance, we selected the top AWS EC2 M7i instance with 192 virtual cores. For on premise, the Dell™ PowerEdge™ portfolio offered more choice, and we selected a configuration with 112 physical cores and 224 hyper-threaded cores. While cloud offerings provide significant choice, the Dell™ PowerEdge™ portfolio offered a great choice of processors, memory, and networking.

In our analysis, we are providing performance insights as well as cost of compute comparisons. For deployment you will also want to consider the following factors:

- Operational expenditures including power and maintenance costs,

- Network costs including data transfer to cloud and local connectivity,

- Data storage costs including cloud cost versus local storage,

- Network latency requirements including lower latency as data is processed locally,

- Security and compliance costs.

| AI MODELS SELECTION

- Llama-2 7B Chat • OpenAI Whisper Base • YOLOv8n Instance Segmentation

To ensure we have a broad range of AI workloads tested on premise and in the cloud we opted for three of the leading models in their domains:

- VISION | YOLOv8n-seg

YOLOv8n-seg is a model variant of YOLOv8 that is designed for instance segmentation and has 3.2 million parameters for the nano version. Unlike basic object detection, instance segmentation identifies the objects in an image as well as the segments of each object, and provides outlines and confidence scores.

- LANGUAGE | Llama 2 7B Chat

Llama-2 7B-chat is a member of the Llama family of large language models offered by Meta, trained on 2 trillion tokens and well suited for chat applications.

- VOICE | OpenAI Whisper base 74M

OpenAI Whisper is a deep learning model developed by OpenAI for speech recognition and transcription, capable of transcribing speech in English and multiple other languages and translating several non-English languages into English.

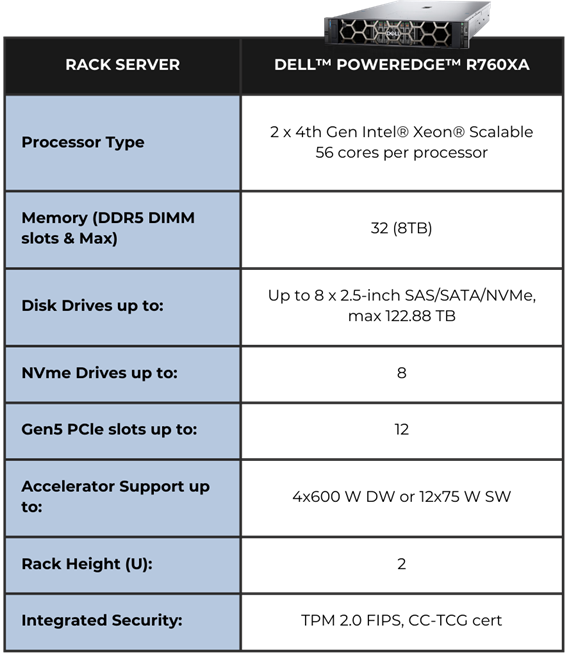

EDGE HARDWARE | DELL™ POWEREDGE™ R760XA RACK SERVER

The system we selected is Dell™ PowerEdge™ R760xa hardware powered by 4th Generation Intel® Xeon® Scalable Processors.

The air-cooled design with front-facing accelerators enables better cooling. The server also provides a Cyber Resilient Architecture for Zero Trust IT environments.

Operations security is integrated into every phase of the Dell™ PowerEdge™ lifecycle, including a protected supply chain and factory-to-site integrity assurance.

Silicon-based root of trust anchors provide end-to-end boot resilience, complemented by Multi-Factor Authentication (MFA) and role-based access controls to ensure secure operations. iDRAC delivers seamless automation and centralized one-to-many management.

*Performance varies by use case, model, application, hardware & software configurations, the quality of the resolution of the input data, and other factors. This performance testing is intended for informational purposes and not intended to be a guarantee of actual performance of an AI application.

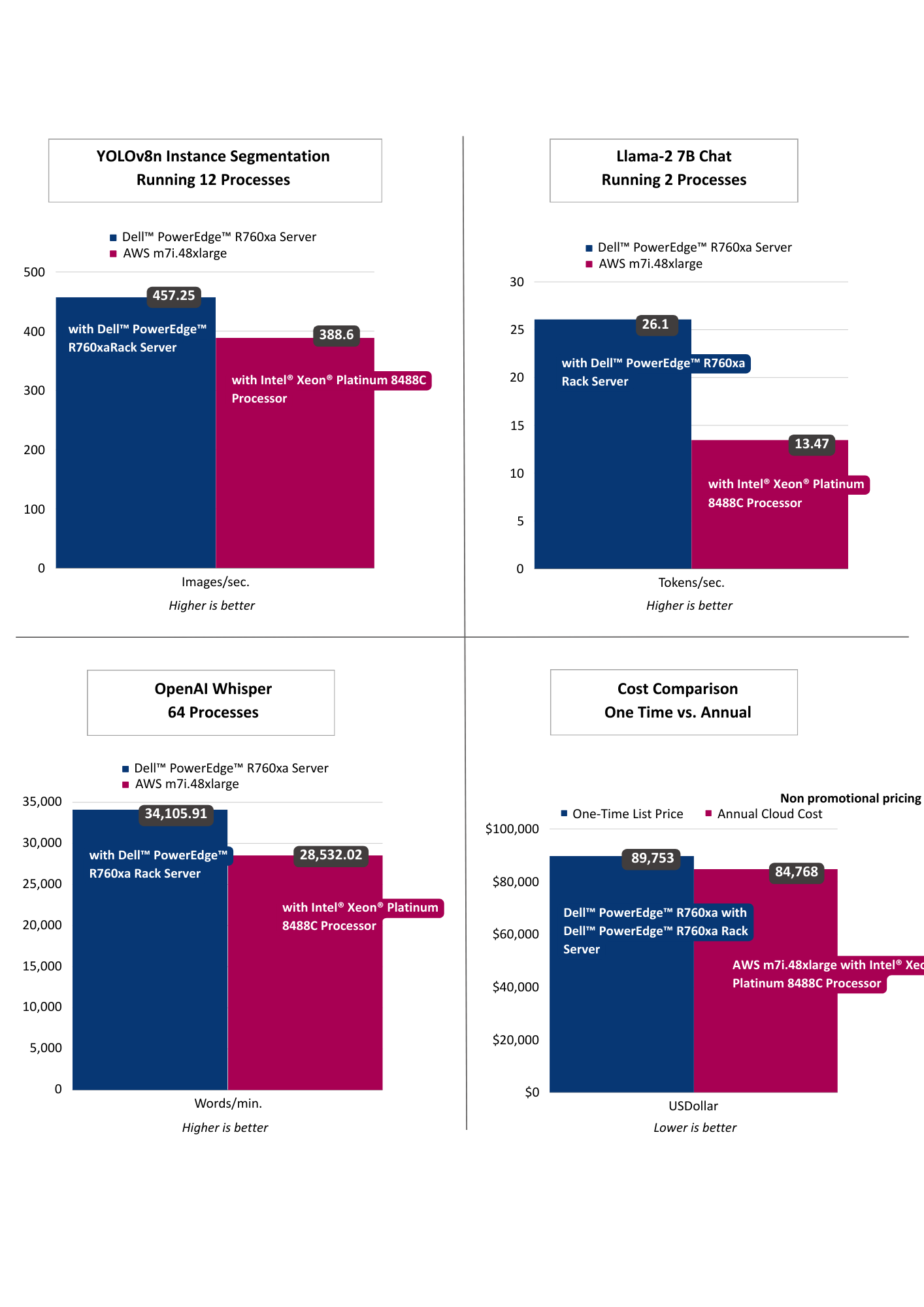

| PERFORMANCE INSIGHTS

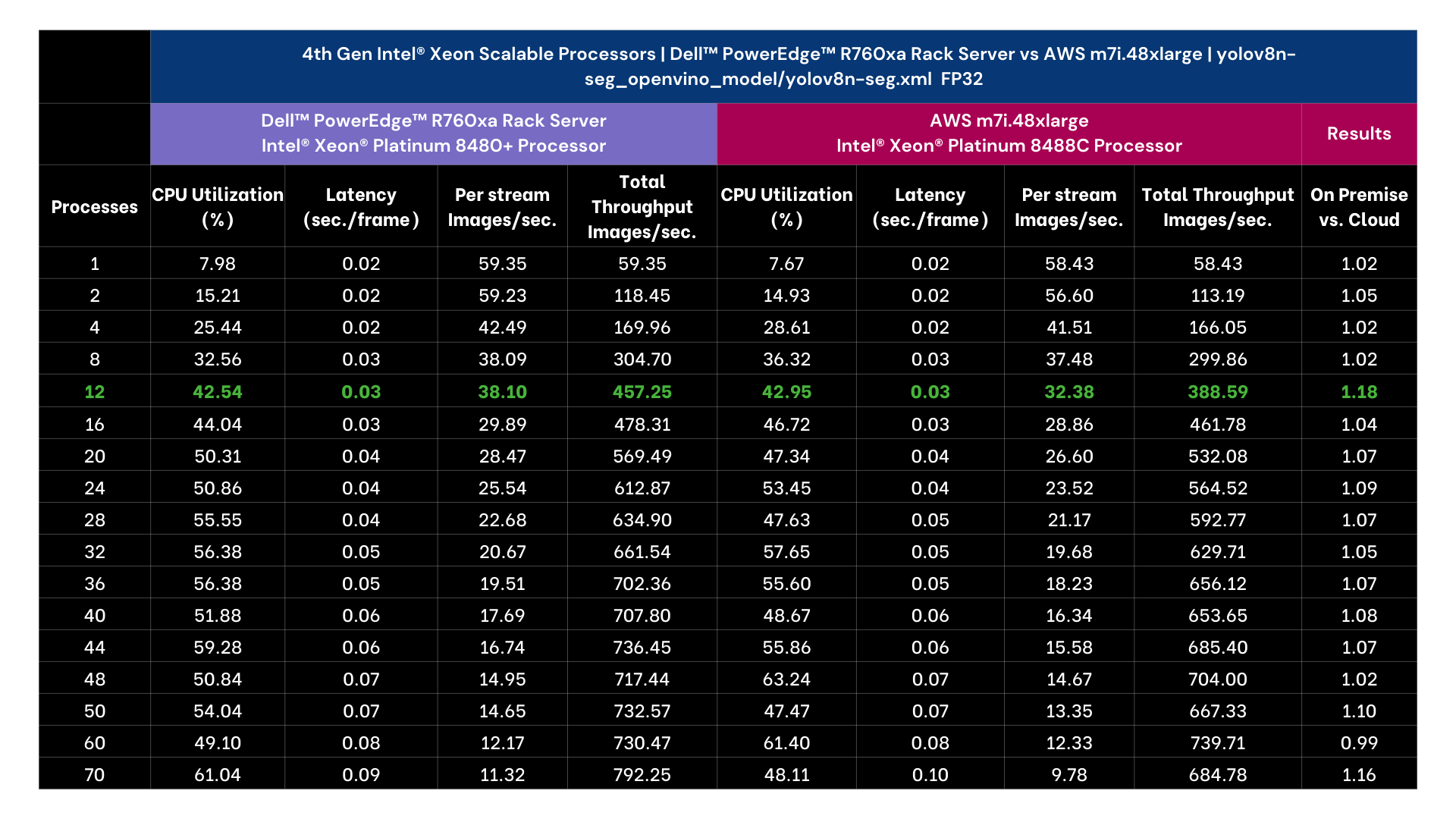

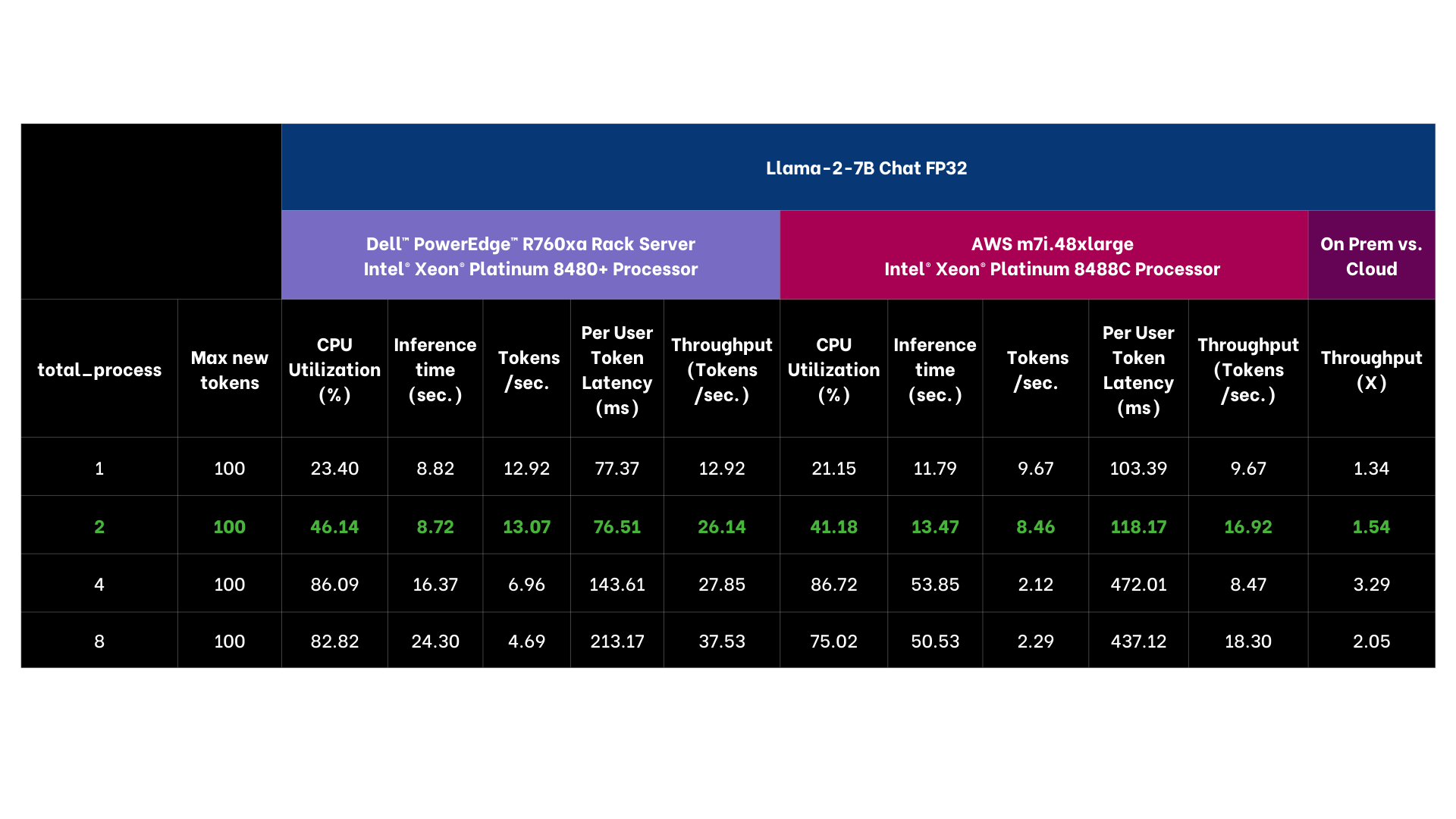

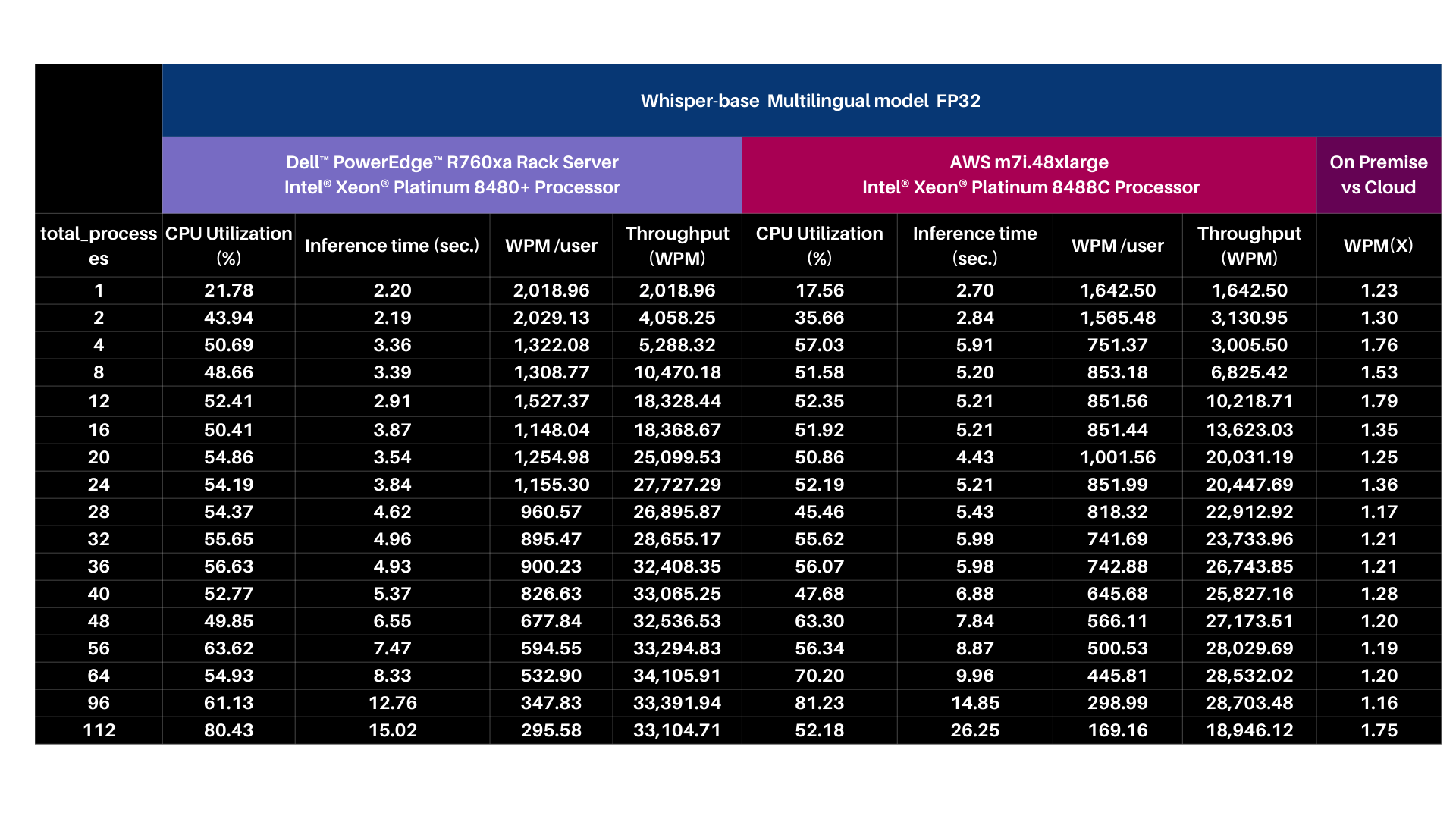

For YOLOv8n Instance Segmentation, we selected the results with 12 processes because that threshold achieved the targeted performance of more than 30 images per second. For Llama-2 7B Chat, we selected the results with 2 processes because that configuration achieved the targeted sub-100 ms per-token user latency. For OpenAI Whisper, we selected the results with 64 processes to target user reading speed. Across vision, language, and voice, the on-premise offering exceeded the cloud instance, including offering lower-latency AI performance. From a computational cost comparison, the on-premise solution offered a payback period of nearly a year based on dell.com pricing, indicating a TCO win for on premise as well.
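The payback arithmetic can be sketched as follows. This is a simplified illustration under stated assumptions: it uses the m7i.48xlarge hourly price quoted earlier, assumes the instance runs continuously, and ignores operating costs and performance differences. The server price is a hypothetical placeholder and not a figure from this paper.

```python
HOURS_PER_YEAR = 24 * 365


def annual_cloud_cost(hourly_rate_usd, utilization=1.0):
    """Yearly cloud compute cost for an instance running at the given utilization."""
    return hourly_rate_usd * HOURS_PER_YEAR * utilization


def payback_years(hardware_cost_usd, hourly_rate_usd, utilization=1.0):
    """Years until cumulative cloud spend equals the up-front hardware cost."""
    return hardware_cost_usd / annual_cloud_cost(hourly_rate_usd, utilization)


M7I_48XLARGE_HOURLY_USD = 9.6768         # price quoted in the instance selection section
HYPOTHETICAL_SERVER_COST_USD = 80_000.0  # placeholder only, NOT a quoted Dell price

print(f"Annual cloud cost: ${annual_cloud_cost(M7I_48XLARGE_HOURLY_USD):,.2f}")
print(f"Payback: {payback_years(HYPOTHETICAL_SERVER_COST_USD, M7I_48XLARGE_HOURLY_USD):.2f} years")
```

A fuller comparison would also normalize for the throughput each system delivers and add the power, maintenance, network, and storage costs listed under hardware selection considerations.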

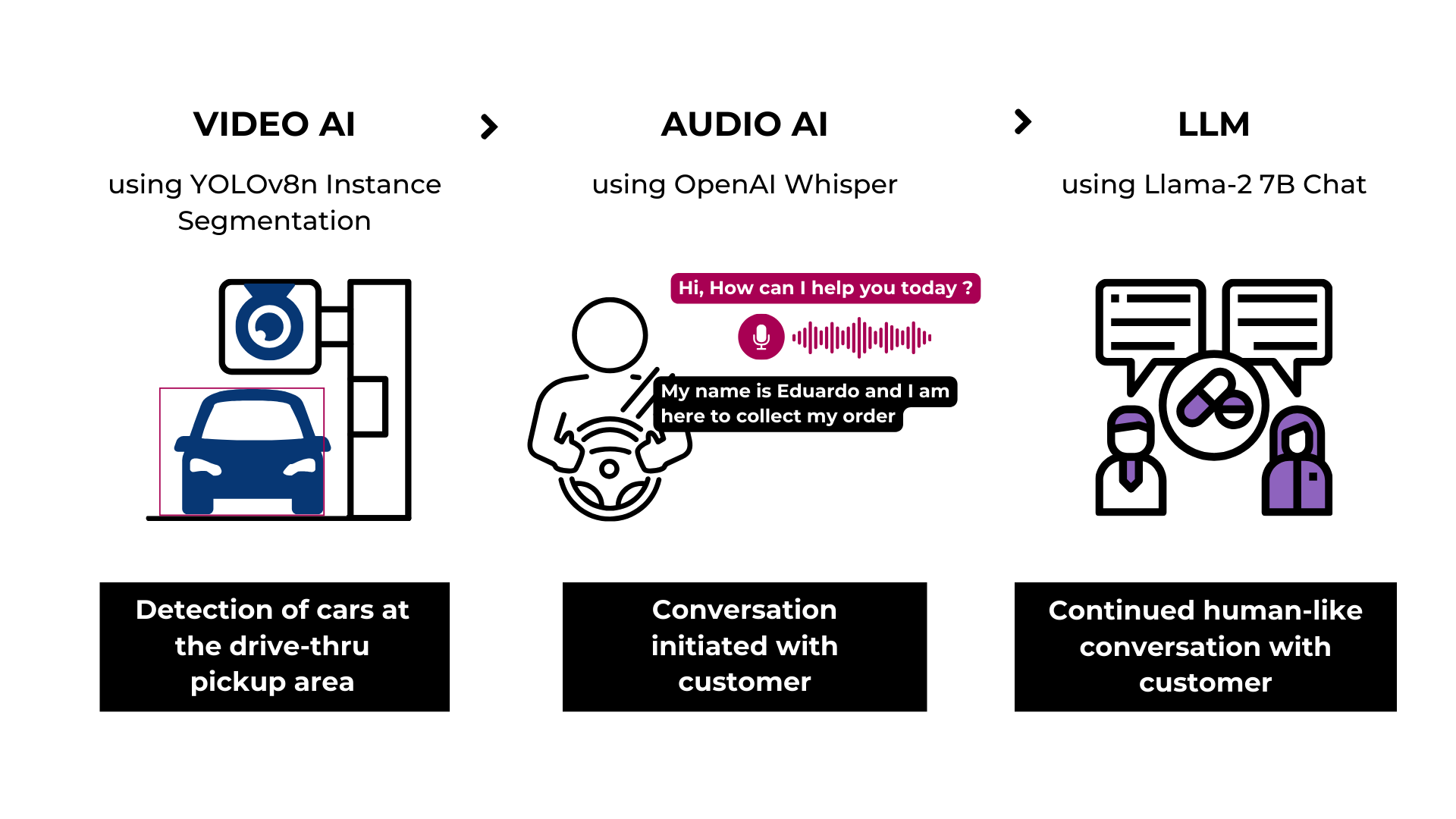

| RETAIL USE CASE

- Drive-thru Pharmacy Pick-up

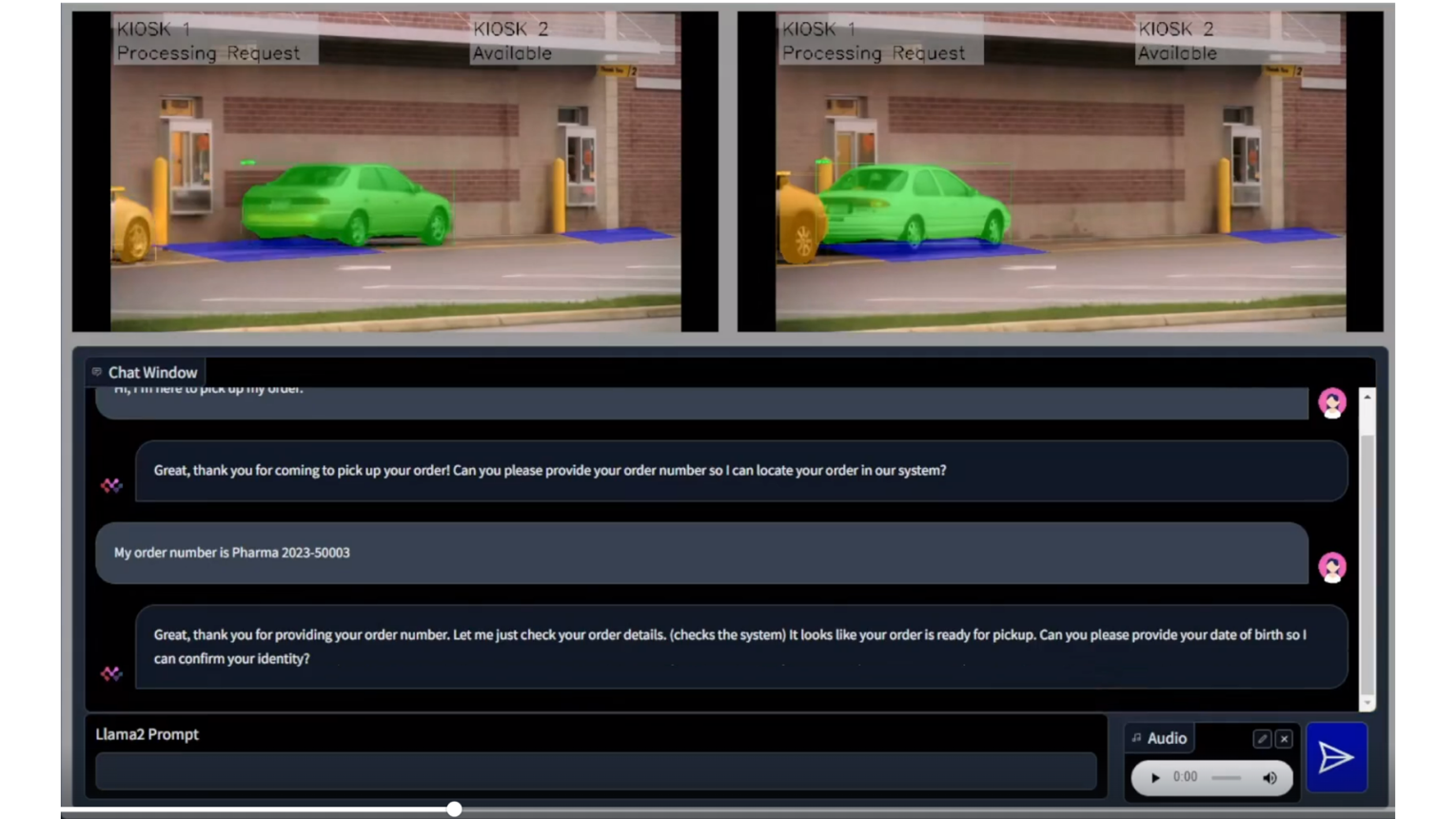

To demonstrate the practical application of these models, we designed a solution architecture accompanied by a demo that simulates a drive-through pharmacy scenario. In this use case, the vision model identifies the car upon its arrival, the language model gathers the client's information, and communication is facilitated via the voice model. As you can discern, factors such as latency, privacy, security, and cost play crucial roles in this scenario, emphasizing the importance of the decision to deploy either in the cloud or on premise.

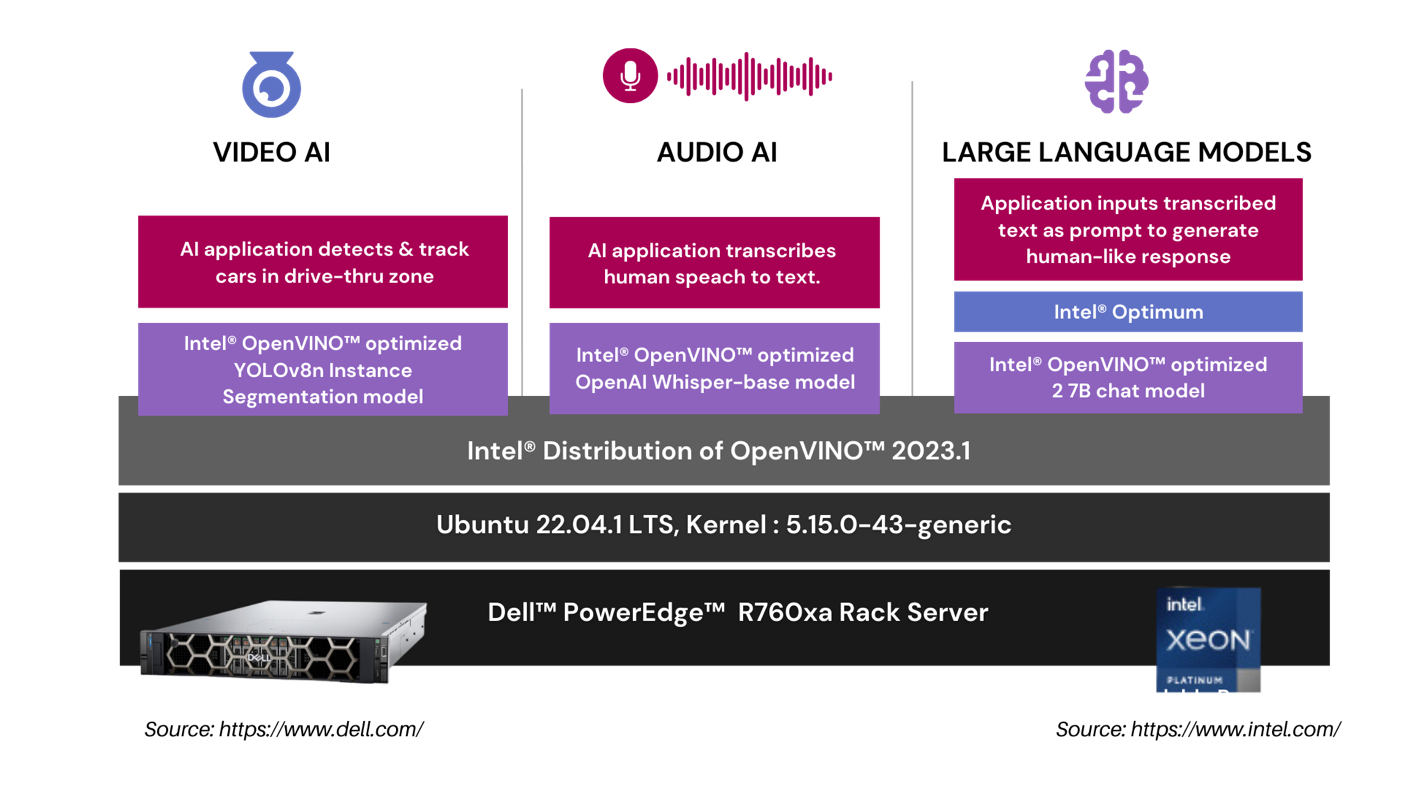

In our drive-thru pharmacy pick-up scenario, we utilize a comprehensive architecture to optimize the customer experience. The Video AI module employs an Intel® OpenVINO™ optimized YOLOv8n Instance Segmentation model to accurately detect and track cars in the drive-thru zone. The Audio AI segment captures and transcribes human speech into text using an Intel® OpenVINO™ optimized OpenAI Whisper-base model. This transcribed text is then processed by our Large Language Models segment, where an application leverages the Intel® OpenVINO™ optimized Llama 2 7B Chat model to generate intuitive, human-like responses.

| RETAIL USE CASE ARCHITECTURE

| SUMMARY

In this analysis, we put the leading voice, language, and vision models to the test on Dell™ PowerEdge™ and AWS on CPUs. The Dell™ PowerEdge™ R760xa Rack Server exceeded the cloud instance on all performance tests and offers a payback period of nearly one year based on Dell™ public pricing. The drive-through pharmacy use case showcased the advantages of an on-premise deployment for maintaining customer privacy and HIPAA compliance and for ensuring fault tolerance and low latency. Finally, in both instances we showcased enhanced CPU performance with Intel® OpenVINO™ and core pinning. In part II, we'll compare GPU workloads in the cloud versus on premise.

APPENDIX | PERFORMANCE TESTING DETAILS

Performance Insights | 4th Generation Intel® Xeon® Scalable Processors

- Yolov8n Instance Segmentation with Intel® OpenVINO™ & Core Pinning

| Test Methodology

YOLOv8n Instance Segmentation FP32 model is exported into the Intel® OpenVINO™ format using ultralytics 8.0.43 library and then tested for object segmentation (inference) using Intel® OpenVINO™ 2023.1.0 runtime.

For performance tests, we used a source video of 53 sec duration with resolution of 1080p and a bitrate of 1906 kb/s. The initial 30 inference samples were treated as warm-up and excluded from calculating the average inference metrics. The time collected includes H264 encode-decode using PyAV 10.0.0 and model inference time.

Output | Video file with h264 encoding (without segmentation post processing)

Performance Insights | 4th Gen Intel® Xeon® Scalable Processors

- Llama 2 7B Chat with Intel® OpenVINO™ & Core Pinning

| Test methodology

The Llama-2 7B Chat FP32 model is exported into the Intel® OpenVINO™ format and then tested for text generation (inference) using Hugging Face Optimum 1.13.1. Hugging Face Optimum is an extension of Hugging Face Transformers and Diffusers that provides tools to export and run optimized models on various ecosystems, including Intel® OpenVINO™. For performance tests, 25 iterations were executed for each inference scenario; the initial 5 iterations were treated as warm-up and excluded when calculating inference time (in seconds) and tokens per second. The time collected includes encode-decode time using the tokenizer and LLM inference time.

Input | Discuss the history and evolution of artificial intelligence in 80 words.

Output | Discuss the history and evolution of artificial intelligence in 80 words or less.

Artificial intelligence (AI) has a long history dating back to the 1950s when computer scientist Alan Turing proposed the Turing Test to measure machine intelligence. Since then, AI has evolved through various stages, including rule-based systems, machine learning, and deep learning, leading to the development of intelligent systems capable of performing tasks that typically require human intelligence, such as visual recognition, natural language processing, and decision-making.

Base Model | https://huggingface.co/meta-llama/Llama-2-7b-chat-hf

PERFORMANCE INSIGHTS | 4TH GEN INTEL® XEON® SCALABLE PROCESSORS

- OpenAI Whisper-base model with Intel® OpenVINO™ & Core Pinning

| Test methodology

The OpenAI Whisper base 74M FP32 model is exported into the Intel® OpenVINO™ format and then tested for inference using Intel® OpenVINO™. For performance tests, 25 iterations were executed for each inference scenario; the initial 5 iterations were treated as warm-up and excluded when calculating inference time (in seconds) and tokens per second. The time collected includes encode-decode time using the tokenizer and the model inference time.

Input | MP3 file with 28.2 sec audio

Output | Generative AI has revolutionized the retail industry by offering a wide array of innovative use cases that enhance customer experiences and streamline operations. One prominent application of Generative AI is personalized product recommendations. Retailers can utilize advanced recommendation algorithms to analyze customer data and generate tailored product suggestions in real time. This not only drives sales but also enhances customer satisfaction by presenting them with items that align with their preferences and purchase history.

| 74 words transcribed.

Base Model | https://github.com/openai/whisper#available-models-and-languages

| About Scalers AI™

Scalers AI™ specializes in creating end-to-end artificial intelligence (AI) solutions to fast-track industry transformation across a wide range of industries, including retail, smart cities, manufacturing, insurance, finance, legal, and healthcare. Scalers AI™ industry offerings include predictive analytics, generative AI chatbots, stable diffusion, image and speech recognition, and natural language processing. Because we are a full-stack AI solutions company with solutions ranging from the cloud to the edge, our customers often need versatile commercial off-the-shelf (COTS) hardware that works well across a range of workloads.

- Fast-track development and save hundreds of hours of development with access to the solution code.

As part of this effort, Scalers AI™ is making the solution code available. Reach out to your Dell™ representative or contact Scalers AI™ at contact@scalers.ai for access to the GitHub repo.

Delivering Choice for Enterprise AI: Multi-Node Fine-Tuning on Dell PowerEdge XE9680 with AMD Instinct MI300X

Wed, 15 May 2024 21:26:35 -0000

In this blog, Scalers AI and Dell have partnered to show you how to use domain-specific data to fine-tune the Llama 3 8B Model with BF16 precision on a distributed system of Dell PowerEdge XE9680 Servers equipped with AMD Instinct MI300X Accelerators.

| Introduction

Large language models (LLMs) have been a significant breakthrough in AI and have demonstrated remarkable capabilities in understanding and generating human-like text across a wide range of domains. The first step in approaching an LLM-assisted AI solution is generally pre-training, during which an untrained model learns to anticipate the next token in a given sequence using information acquired from various massive datasets. Pre-training is followed by fine-tuning, which involves adapting the pre-trained model to a domain-specific task by updating a task-specific layer on top.

Fine-tuning, however, still requires a lot of time, computation, and RAM. One approach to reducing computation time is distributed fine-tuning, which allows computational resources to be more efficiently utilized by parallelizing the fine-tuning process across multiple GPUs or devices. Scalers AI showcased various industry-leading capabilities of Dell PowerEdge XE9680 Servers paired with AMD Instinct MI300X Accelerators on a distributed fine-tuning task by uncovering these key value drivers:

- Developed a distributed fine-tuning software stack on the flagship Dell PowerEdge XE9680 Server equipped with eight AMD Instinct MI300X Accelerators.

- Fine-tuned Llama 3 8B with BF16 precision using the PubMedQA medical dataset on two Dell PowerEdge XE9680 Servers, each equipped with eight AMD Instinct MI300X Accelerators.

- Deployed the fine-tuned model in an enterprise chatbot scenario and conducted side-by-side tests with Llama 3 8B.

- Released distributed fine-tuning stack with support for Dell PowerEdge XE9680 Servers equipped with AMD Instinct MI300X Accelerators and NVIDIA H100 Tensor Core GPUs to offer enterprise choice.

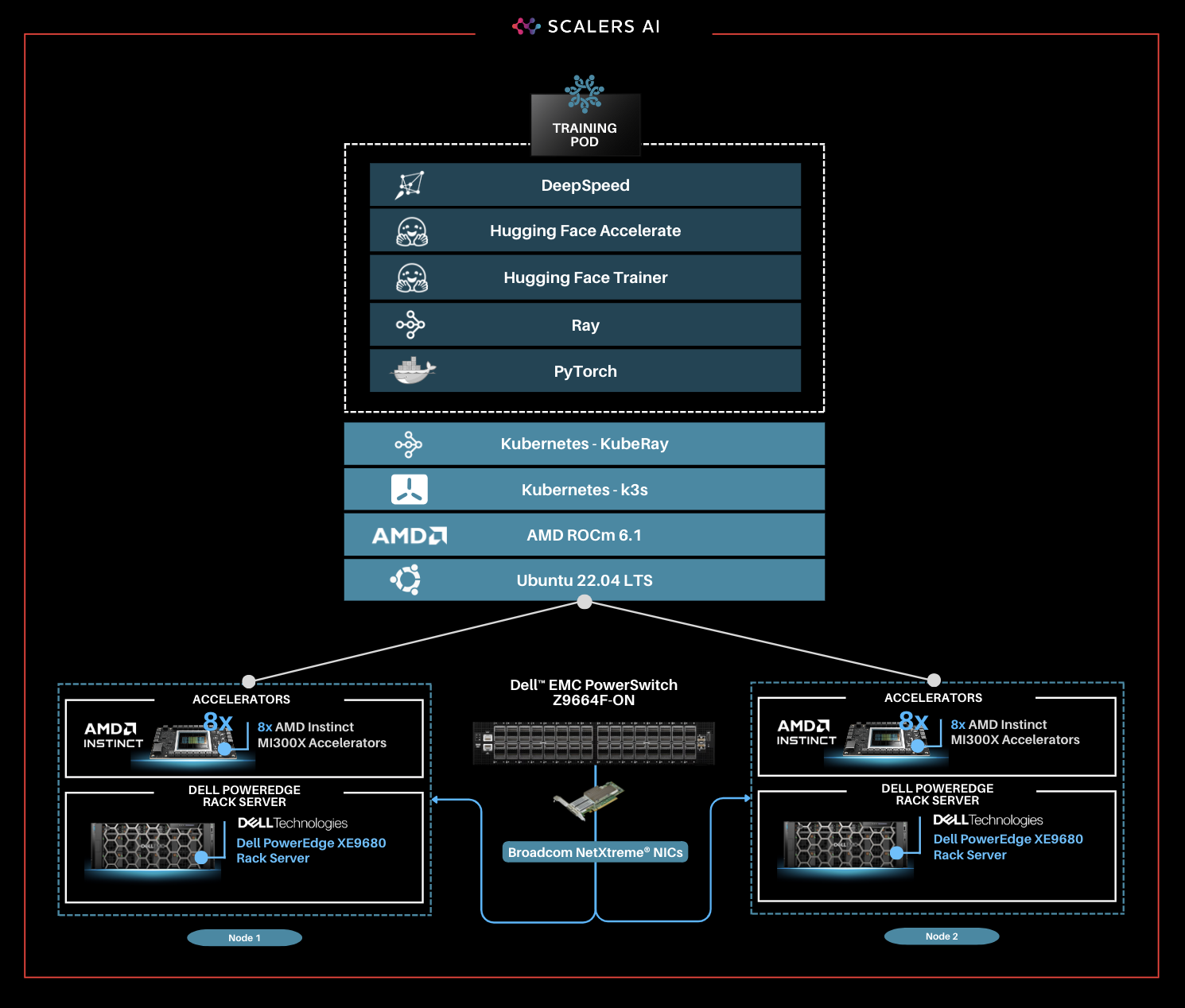

| The Software Stack

This solution stack leverages Dell PowerEdge Rack Servers, coupled with Broadcom Ethernet NICs for providing high-speed inter-node communications needed for distributed computing as well as Kubernetes for scaling. Each Dell PowerEdge server contains AI accelerators, specifically AMD Instinct Accelerators to enhance LLM fine-tuning.

The architecture diagram provided below illustrates the configuration of two Dell PowerEdge XE9680 servers with eight AMD Instinct MI300X accelerators each.

Leveraging Dell PowerEdge, Dell PowerSwitch, and high-speed Broadcom Ethernet network adapters, the software platform integrates Kubernetes (K3S), Ray, Hugging Face Accelerate, and Microsoft DeepSpeed with other AI libraries and drivers, including AMD ROCm™ and PyTorch.

| Step-by-Step Guide

Step 1. Set up the distributed cluster.

Follow the k3s setup and pass additional parameters to the k3s installation script. This involves configuring flannel, the networking fabric for Kubernetes, with a user-specified network interface and the "host-gw" backend for networking. Helm, the package manager for Kubernetes, is then used, and AMD plugins are added to grant the cluster pods access to the AMD Instinct MI300X GPUs.

Step 2. Install KubeRay and configure Ray Cluster.

The next steps include installing KubeRay, a Kubernetes operator, using Helm. The core of KubeRay comprises three Kubernetes Custom Resource Definitions (CRDs):

- RayCluster: This CRD enables KubeRay to fully manage the lifecycle of a RayCluster, automating tasks such as cluster creation, deletion, and autoscaling, while ensuring fault tolerance.

- RayJob: KubeRay streamlines job submission by automatically creating a RayCluster when needed. Users can configure RayJob to initiate job deletion once the task is completed, enhancing operational efficiency.

- RayService: RayService is made up of two parts: a RayCluster and a Ray Serve deployment graph. RayService offers zero-downtime upgrades for RayCluster and high availability.

```shell
helm repo add kuberay https://ray-project.github.io/kuberay-helm/
helm install kuberay-operator kuberay/kuberay-operator --version 1.0.0
```

This RayCluster consists of a head node and one worker node. In a YAML file, the head node is configured to run Ray with specified parameters, including the dashboard host and the number of GPUs, as shown in the excerpt below. Here, the worker node is under the name "gpu-group".

```yaml
...
headGroupSpec:
  rayStartParams:
    dashboard-host: "0.0.0.0"
    # setting num-gpus on the rayStartParams enables
    # head node to be used as a worker node
    num-gpus: "8"
...
```

The Kubernetes service is also defined to expose the Ray dashboard port for the head node. The deployment of the Ray cluster, as defined in a YAML file, will be executed using kubectl.

```shell
kubectl apply -f cluster.yml
```

Step 3. Fine-tune Llama 3 8B Model with BF16 Precision.

You can either create your own dataset or select one from HuggingFace. The dataset must be available as a single JSON file in the format shown below.

{"question":"Is pentraxin 3 reduced in bipolar disorder?", "context":"Immunologic abnormalities have been found in bipolar disorder but pentraxin 3, a marker of innate immunity, has not been studied in this population.", "answer":"Individuals with bipolar disorder have low levels of pentraxin 3 which may reflect impaired innate immunity."}
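Before submitting a job, it can help to check that every record carries the three fields shown in the example above. The sketch below is a hypothetical helper, not part of the released stack. It validates one parsed record at a time and makes no assumption about how records are laid out within the file.

```python
import json

REQUIRED_FIELDS = ("question", "context", "answer")


def validate_record(record):
    """Return a list of problems found in one dataset record (empty if valid)."""
    if not isinstance(record, dict):
        return ["record is not a JSON object"]
    problems = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if not isinstance(value, str) or not value.strip():
            problems.append(f"missing or empty field: {field}")
    return problems


# The example record from the text above, parsed and checked.
example = json.loads(
    '{"question":"Is pentraxin 3 reduced in bipolar disorder?", '
    '"context":"Immunologic abnormalities have been found in bipolar disorder '
    'but pentraxin 3, a marker of innate immunity, has not been studied in this population.", '
    '"answer":"Individuals with bipolar disorder have low levels of pentraxin 3 '
    'which may reflect impaired innate immunity."}'
)
print(validate_record(example))
```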

Jobs will be submitted to the Ray Cluster through the Ray Python SDK utilizing the Python script, job.py, provided below.

    # job.py
    from ray.job_submission import JobSubmissionClient

    # Replace <Head Node IP> with your head node IP or hostname
    client = JobSubmissionClient("http://<Head Node IP>:30265")

    # Two shell commands run in sequence: create the dataset, then fine-tune.
    # Adjacent string literals are joined, so comments stay outside the command.
    fine_tuning = (
        "python3 create_dataset.py "
        "--dataset_path /train/dataset.json "
        "--prompt_type 5 "
        "--test_split 0.2 ; "
        "python3 train.py "
        "--num-devices 16 "  # number of GPUs available
        "--batch-size-per-device 12 "
        "--model-name meta-llama/Meta-Llama-3-8B-Instruct "  # model name
        "--output-dir /train/ "
        "--hf-token <HuggingFace Token>"
    )
    submission_id = client.submit_job(entrypoint=fine_tuning)

    print("Use the following command to follow this Job's logs:")
    print(f"ray job logs '{submission_id}' --address http://<Head Node IP>:30265 --follow")

This script initializes the JobSubmissionClient with the head node address and builds the job's entrypoint command. The command sets parameters such as prompt_type, which determines how each question-answer datapoint is formatted before it is fed to the model, along with the batch size per device and the number of devices used for training. The script then submits the job with these parameters.
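To make the `--prompt_type` and `--test_split` flags more concrete, the sketch below formats records into prompts and splits them into training and test sets. It is illustrative only: the template is hypothetical (the template actually selected by `--prompt_type 5` is not shown in this post), and `format_record` and `split_dataset` are assumed names rather than functions from `create_dataset.py`.

```python
import random

# Hypothetical template; the real one chosen by --prompt_type is not shown here.
PROMPT_TEMPLATE = (
    "Context: {context}\n"
    "Question: {question}\n"
    "Answer: {answer}"
)


def format_record(record):
    """Render one question/context/answer record as a single prompt string."""
    return PROMPT_TEMPLATE.format(**record)


def split_dataset(records, test_split=0.2, seed=0):
    """Shuffle deterministically and return (train, test) lists."""
    if not 0.0 <= test_split < 1.0:
        raise ValueError("test_split must be in [0, 1)")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = int(round(len(shuffled) * test_split))
    return shuffled[n_test:], shuffled[:n_test]


records = [
    {"question": f"Q{i}", "context": f"C{i}", "answer": f"A{i}"}
    for i in range(10)
]
train, test = split_dataset(records, test_split=0.2)
print(len(train), len(test))  # 8 2
print(format_record(records[0]))
```

With `test_split=0.2`, two of every ten records are held out for evaluation and the rest are used for training.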

The submitted job runs in two phases. The first phase generates the fine-tuning dataset, which is stored in a specified format. Configurations such as the prompt used and the ratio of training to testing data can be set here. The second phase fine-tunes the model. For this phase, you can specify the number of GPUs to use, the batch size for each GPU, the model name as listed on Hugging Face, the Hugging Face API token, and the number of epochs.

Finally, run job.py to submit the job and start fine-tuning.

    python3 job.py

The fine-tuning jobs can be monitored using Ray CLI and Ray Dashboard.

- Using Ray CLI:

  - Retrieve the submission ID for the desired job.

  - Use the command below to track job logs.

        ray job logs <Submission ID> --address http://<Head Node IP>:30265 --follow

    Be sure to replace <Submission ID> and <Head Node IP> with the appropriate values.

- Using Ray Dashboard:

  - To check the status of fine-tuning jobs, visit the Jobs page on your Ray Dashboard at <Head Node IP>:30265 and select the specific job from the list.
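The same monitoring can be scripted with the Ray Python SDK. The sketch below polls `get_job_status` on a `JobSubmissionClient` until the job reaches a terminal state. The helper `wait_for_job`, its polling interval, and its timeout are assumptions for illustration and are not part of the reference code.

```python
import time

# Terminal job states reported by Ray Jobs.
TERMINAL_STATES = {"SUCCEEDED", "FAILED", "STOPPED"}


def wait_for_job(client, submission_id, poll_seconds=10.0,
                 timeout_seconds=3600.0, sleep=time.sleep):
    """Poll client.get_job_status until the job finishes; return the final state name."""
    waited = 0.0
    while True:
        status = client.get_job_status(submission_id)
        # Accept either an enum member or a plain string.
        name = str(getattr(status, "value", status))
        if name in TERMINAL_STATES:
            return name
        if waited >= timeout_seconds:
            raise TimeoutError(f"job {submission_id} still {name} after {waited:.0f}s")
        sleep(poll_seconds)
        waited += poll_seconds


# Usage against a live cluster (placeholders must be replaced):
# from ray.job_submission import JobSubmissionClient
# client = JobSubmissionClient("http://<Head Node IP>:30265")
# final_state = wait_for_job(client, "<Submission ID>")
# print(final_state)
# print(client.get_job_logs("<Submission ID>"))
```

Passing the client in as an argument keeps the helper independent of any one cluster address and makes it easy to exercise with a stub.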

The reference code for this solution can be found here.

| Industry Specific Medical Use Case

Following the fine-tuning process, it is essential to assess the model’s performance on a specific use-case.

For our evaluation, this solution uses the PubMedQA medical dataset to fine-tune a Llama 3 8B model with BF16 precision. The process was conducted on a distributed setup, using a batch size of 12 per device, with training performed over 25 epochs. Both the base model and the fine-tuned model are deployed in the Scalers AI enterprise chatbot to compare performance. The example below prompts the chatbot with a question from the MedMCQA dataset available on Hugging Face, for which the correct answer is “a.”

As shown on the left, the response generated by the base Llama 3 8B model is unstructured and vague, and returns an incorrect answer. On the other hand, the fine-tuned model returns the correct answer and also generates a thorough and detailed response to the instruction while demonstrating an understanding of the specific subject matter, in this case medical knowledge, relevant to the instruction.
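As a side note on the training configuration, the per-device batch size translates into a global batch size once the devices are counted. The sketch below works through that arithmetic for 12 samples per device on the 16 devices passed to `train.py`. It assumes plain data-parallel training with no gradient accumulation, which this post does not state, and the sample count of 1,000 is a placeholder rather than the size of the dataset used here.

```python
import math


def global_batch_size(per_device_batch, num_devices, grad_accum_steps=1):
    """Samples consumed per optimizer step across all devices."""
    return per_device_batch * num_devices * grad_accum_steps


def optimizer_steps(num_train_samples, global_batch, epochs):
    """Total optimizer steps, keeping the last partial batch of each epoch."""
    return math.ceil(num_train_samples / global_batch) * epochs


gbs = global_batch_size(per_device_batch=12, num_devices=16)
print(gbs)  # 192

# Placeholder sample count, for illustration only.
print(optimizer_steps(num_train_samples=1000, global_batch=gbs, epochs=25))  # 150
```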

| Enterprise Choice in Industry Leading Accelerators

To deliver enterprise choice, this distributed fine-tuning software stack supports both AMD Instinct MI300X Accelerators and NVIDIA H100 Tensor Core GPUs. Below, we show a visualization of the unified software and hardware stacks, running seamlessly with the Dell PowerEdge XE9680 Server.

“Scalers AI is thrilled to offer choice in distributed fine-tuning across both leading AI GPUs in the industry on the flagship PowerEdge XE9680.” - CEO at Scalers AI

| Summary

Dell PowerEdge XE9680 Server, featuring AMD Instinct MI300X Accelerators, provides enterprises with cutting-edge infrastructure for creating industry-specific AI solutions using their own proprietary data. In this blog, we showcased how enterprises deploying applied AI can take advantage of this unified AI ecosystem by delivering the following critical solutions:

- Developed a distributed fine-tuning software stack on the flagship Dell PowerEdge XE9680 Server equipped with eight AMD Instinct MI300X Accelerators.

- Fine-tuned Llama 3 8B with BF16 precision using the PubMedQA medical dataset on two Dell PowerEdge XE9680 Servers each equipped with eight AMD Instinct MI300X Accelerators.

- Deployed the fine-tuned model in an enterprise chatbot scenario and conducted side-by-side tests with the base Llama 3 8B model.

- Released distributed fine-tuning stack with support for Dell PowerEdge XE9680 Servers equipped with AMD Instinct MI300X Accelerators and NVIDIA H100 Tensor Core GPUs to offer enterprise choice.

Scalers AI is excited to see continued advancements from Dell and AMD on hardware and software optimizations in the future, including an upcoming RAG (retrieval augmented generation) offering running on the Dell PowerEdge XE9680 Server with AMD Instinct MI300X Accelerators at Dell Tech World ’24.

| References

AMD products: AMD Library, https://library.amd.com/account/dashboard/

Nvidia images: Nvidia.com

Copyright © 2024 Scalers AI, Inc. All Rights Reserved. This project was commissioned by Dell Technologies. Dell and other trademarks are trademarks of Dell Inc. or its subsidiaries. AMD, Instinct™, ROCm™, and combinations thereof are trademarks of Advanced Micro Devices, Inc. All other product names are the trademarks of their respective owners.

***DISCLAIMER - Performance varies by hardware and software configurations, including testing conditions, system settings, application complexity, the quantity of data, batch sizes, software versions, libraries used, and other factors. The results of performance testing provided are intended for informational purposes only and should not be considered as a guarantee of actual performance.