PowerProtect Kubernetes Advanced Asset Source Configuration

Mon, 07 Aug 2023 23:05:40 -0000

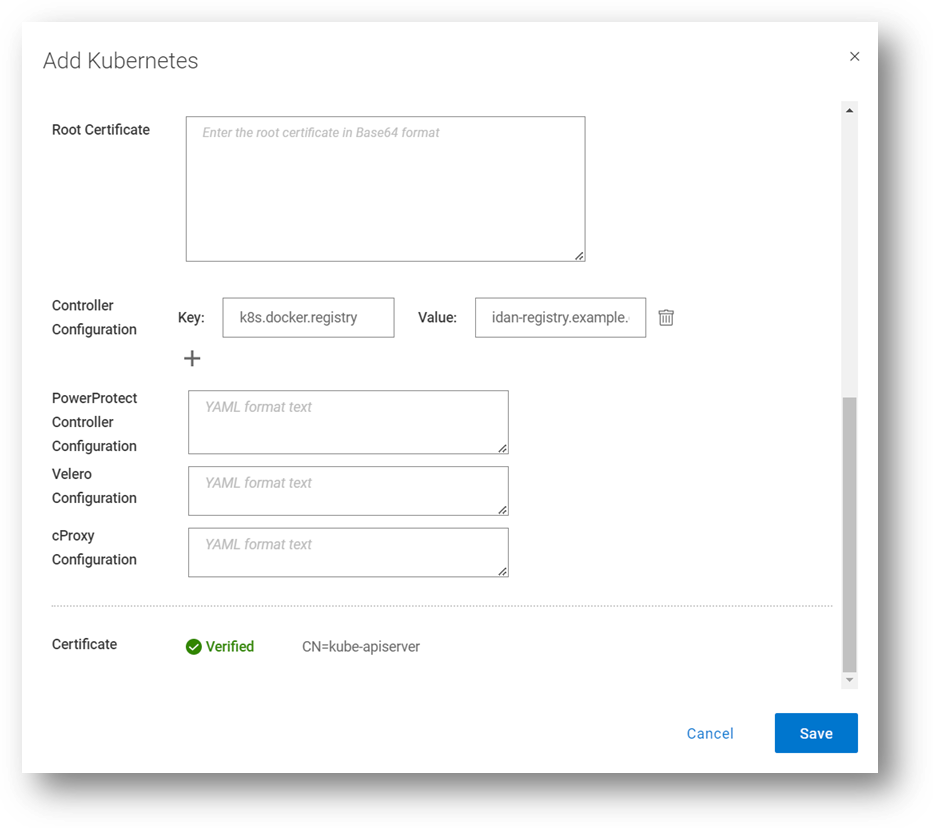

In this blog, we’ll go through some of the advanced options we’re enabling as part of the Kubernetes (K8s) asset source configuration in PowerProtect Data Manager (PPDM). These advanced parameters can be specified when adding a new K8s asset source or when modifying an existing one. Let’s look at some use cases and how PPDM can help.

Use Case 1: Internal registry

For the first use case, let’s look at the advanced controller configuration. This configuration applies to the powerprotect-controller pod and lets you set key-value pairs. There are nine pairs documented in the PowerProtect Kubernetes User Guide, but we will focus on the most important ones in this blog.

The first key-value pair defines an internal registry from which the container images are pulled. By default, the required images are pulled from Docker Hub. For example:

Key: k8s.docker.registry

Value: idan-registry.example.com:8446

The value represents the FQDN of the registry, including the port as needed. Note that if the registry requires authentication, the k8s.image.pullsecrets key-value pair can be specified.

By the way, I’ve discussed the Root Certificate option in previous blogs. Take a look at PowerProtect Data Manager – How to Protect AWS EKS Workloads? and PowerProtect Data Manager – How to Protect GKE Workloads?.

Use Case 2: Exclude resources from metadata backup

The second use case we’ll look at enables the exclusion of Kubernetes resource types from metadata backup. It accepts a comma-separated list of resources to exclude. For example:

Key: k8s.velero.exclude.resources

Value: certificaterequests.cert-manager.io

Use Case 3: PowerProtect Affinity Rules for Pods

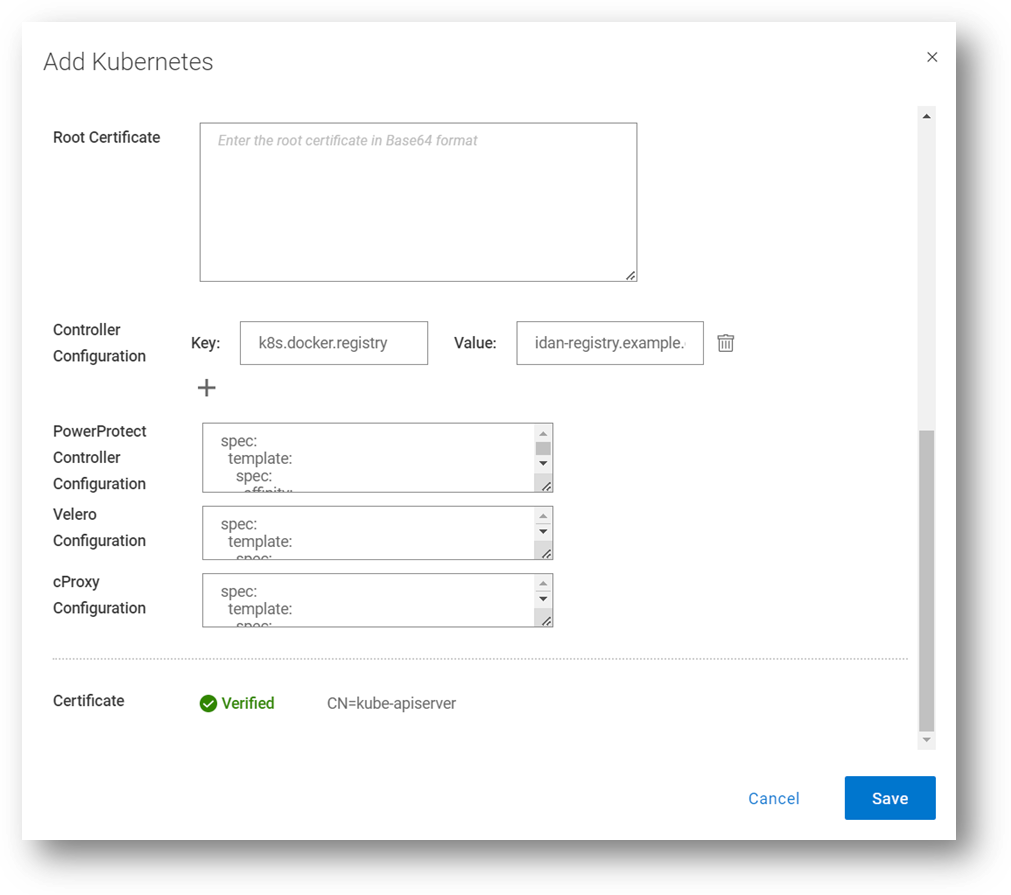

Another useful advanced option is the ability to customize the configuration of any or all PowerProtect-related pods: powerprotect-controller, Velero, and cProxy. The third use case we’ll cover is Affinity Rules.

Example 1 – nodeAffinity

The first example is nodeAffinity, which allows you to assign any PowerProtect pod to a node with a specific node label.

This case may be suitable when you need to run the PowerProtect pods on specific nodes. For example, perhaps only some of the nodes have 10Gb connectivity to the backup VLAN, or only some of the nodes have connectivity to PowerProtect DD.

In the following example, any node with the app=powerprotect label can run the configured pod. This example uses the requiredDuringSchedulingIgnoredDuringExecution node affinity option, which means that the scheduler won’t place the pod on a node unless the rule is met.

Note: The pod configuration must be entered in YAML format.

The configured pod is patched with the following configuration:

    spec:
      template:
        spec:
          affinity:
            nodeAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                nodeSelectorTerms:
                - matchExpressions:
                  - key: app
                    operator: In
                    values:
                    - powerprotect

Here’s another example, but this time with the preferredDuringSchedulingIgnoredDuringExecution node affinity option enabled. This means the scheduler tries to find a node that meets the rule, but if a matching node is not available the scheduler still schedules the pod.

    spec:
      template:
        spec:
          affinity:
            nodeAffinity:
              preferredDuringSchedulingIgnoredDuringExecution:
              - weight: 1
                preference:
                  matchExpressions:
                  - key: app
                    operator: In
                    values:
                    - powerprotect

Here we can see how it is configured through the PowerProtect Data Manager UI, when registering a new K8s asset source, or when editing an existing one. In this screenshot, I’m updating the configuration for all the PowerProtect pods (powerprotect-controller, Velero, and cProxy), but it’s certainly possible to make additional config changes on any of these PowerProtect pods.
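The required and preferred options can also be combined in a single nodeAffinity block, which is standard Kubernetes behavior. The following is only a sketch: it assumes the PPDM pod configuration accepts both rule types together, and the network=10g label is a hypothetical label I made up to represent nodes with 10Gb connectivity to the backup VLAN.

```yaml
spec:
  template:
    spec:
      affinity:
        nodeAffinity:
          # Hard rule: only nodes labeled app=powerprotect are eligible.
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: app
                operator: In
                values:
                - powerprotect
          # Soft rule: among eligible nodes, prefer those labeled network=10g.
          # The label name and value are hypothetical, for illustration only.
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            preference:
              matchExpressions:
              - key: network
                operator: In
                values:
                - "10g"
```

With this combination, a pod is never placed on a node without the app=powerprotect label, and the weight (valid range 1 to 100) only influences the choice among nodes that already satisfy the hard rule.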

Example 2 – nodeSelector

A much simpler option for node selection is nodeSelector. The pods are scheduled only on nodes that carry all of the specified labels.

    spec:
      template:
        spec:
          nodeSelector:
            app: powerprotect

Example 3 – nodeName

In this example, we’ll examine an alternative way of assigning one of the PowerProtect pods to a specific worker node. With nodeName, the pod is bound directly to the named node instead of being matched by labels.

    spec:
      template:
        spec:
          nodeName: workernode01

Example 4 – Node Anti-affinity

The final example we’ll look at for nodeAffinity is anti-affinity, which uses operators such as NotIn or DoesNotExist. Anti-affinity is useful when the PowerProtect pods should be scheduled only on nodes that do not have a specific label or a certain role.

    spec:
      template:
        spec:
          affinity:
            nodeAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                nodeSelectorTerms:
                - matchExpressions:
                  - key: app
                    operator: NotIn
                    values:
                    - powerprotect
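For completeness, here is a sketch of the DoesNotExist operator, which matches on the absence of a label key rather than on its value. I’m using node-role.kubernetes.io/control-plane as the key, which is an assumption about how your cluster labels its control plane nodes (kubeadm-based clusters typically do); substitute whichever role label applies in your environment.

```yaml
spec:
  template:
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              # Assumed label key; check the labels on your own nodes.
              - key: node-role.kubernetes.io/control-plane
                # DoesNotExist takes no values list: it matches any node
                # that does not carry this label key at all.
                operator: DoesNotExist
```

Note that DoesNotExist (like Exists) must not include a values field, unlike In and NotIn.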

Use Case 4: Multus and custom DNS configuration

Another popular use case is Multus and custom DNS configuration. Multus is a Container Network Interface (CNI) plugin for Kubernetes that enables attaching multiple network interfaces to pods. I won’t elaborate too much on Multus’ features and capabilities here, but I’ll show some examples of how to customize the PowerProtect pod specs to accept multiple NICs and custom DNS configuration.

Example 1 – dnsConfig

    spec:
      template:
        spec:
          dnsConfig:
            nameservers:
            - "10.0.0.1"
            searches:
            - lab.idan.example.com
            - cluster.local
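In standard Kubernetes, the dnsConfig entries are merged with whatever the pod’s dnsPolicy generates (ClusterFirst by default). If you want the pod to use only the servers you list, Kubernetes supports setting dnsPolicy to "None". The sketch below assumes the PPDM pod configuration passes dnsPolicy through like any other pod spec field, which I have not verified; the ndots value is just an illustrative choice.

```yaml
spec:
  template:
    spec:
      # With dnsPolicy "None", Kubernetes ignores the cluster DNS settings
      # and requires dnsConfig to supply at least one nameserver.
      dnsPolicy: "None"
      dnsConfig:
        nameservers:
        - "10.0.0.1"
        searches:
        - lab.idan.example.com
        - cluster.local
        options:
        # Illustrative resolver option, not a PPDM requirement.
        - name: ndots
          value: "2"
```

Keep in mind that with dnsPolicy set to "None", cluster-internal service names resolve only if the listed nameserver can answer or forward those queries.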

Example 2 – Multus

    metadata:
      annotations:
        k8s.v1.cni.cncf.io/networks: macvlan-conf
    spec:
      template:
        spec:
          dnsConfig:
            nameservers:
            - "10.0.0.1"
            searches:
            - lab.idan.example.com
            - cluster.local
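The macvlan-conf name in the annotation refers to a Multus NetworkAttachmentDefinition that has to exist in the cluster already. Here is a minimal sketch of what such a definition could look like, modeled on the Multus quickstart. The parent interface (eth1) and the IP range are hypothetical values for a backup network, so adjust them to your environment.

```yaml
# Create this in the namespace of the pod that references it,
# unless the annotation uses the <namespace>/<name> form.
apiVersion: k8s.cni.cncf.io/v1
kind: NetworkAttachmentDefinition
metadata:
  name: macvlan-conf
spec:
  # The CNI configuration is passed to Multus as a JSON string.
  config: |
    {
      "cniVersion": "0.3.1",
      "type": "macvlan",
      "master": "eth1",
      "mode": "bridge",
      "ipam": {
        "type": "host-local",
        "subnet": "192.168.50.0/24",
        "rangeStart": "192.168.50.100",
        "rangeEnd": "192.168.50.150"
      }
    }
```

Once the definition exists and the annotation is applied, the pod receives a second interface from this network in addition to its default cluster interface.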

Always remember: documentation is your friend! The PowerProtect Data Manager Kubernetes User Guide has useful information for any PPDM deployment with K8s.

Thanks for reading, and feel free to reach out with any questions or comments.

Idan

Author: Idan Kentor