Virtualization with Layer 2 over Layer 3

Tue, 28 Mar 2023 12:49:05 -0000


IT organizations must transform to meet the increasingly complex challenges in data center networking. Virtualization and software-defined data center (SDDC) in hyperconverged services are key components for today's data centers.

IT organizations making this transformation must interconnect their data centers. Virtualization and other high-value services are creating the need for logically connected, geographically isolated data centers. Dell Technologies has the infrastructure architectures to facilitate these requirements.

With the Dell SmartFabric OS10 operating system, Dell introduces a network virtualization solution built on two popular tunneling technologies: Virtual Extensible LAN (VXLAN) and Generic Routing Encapsulation (GRE).

The Border Gateway Protocol (BGP) Ethernet Virtual Private Network (EVPN) for VXLAN solution runs on Dell PowerSwitch switches and PowerEdge servers. BGP EVPN for VXLAN serves as a network virtualization overlay that extends Layer 2 connectivity across data centers. This simplifies the deployment of virtualization and supports capabilities such as vMotion and vSAN, along with efficient resource sharing overall.

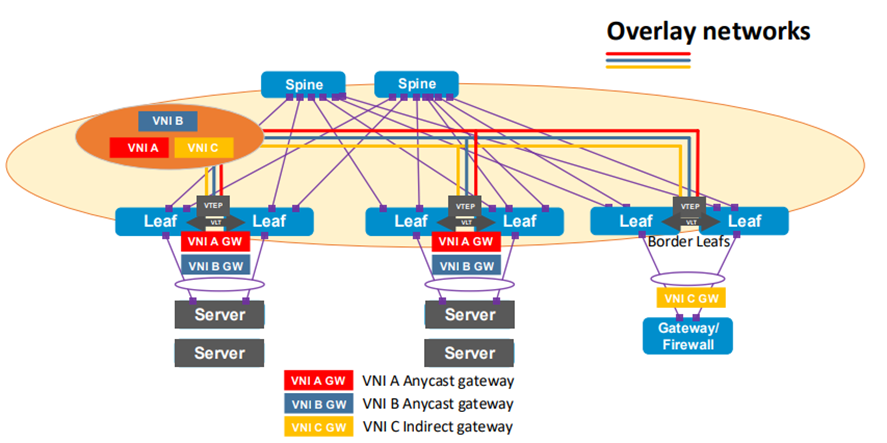

Figure 1. BGP EVPN for VXLAN network diagram overview
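To make the overlay idea concrete, the sketch below packs and parses the 8-byte VXLAN header that precedes each tunneled Layer 2 frame. The field layout follows RFC 7348. The function names and the example VNI value are illustrative assumptions, not part of the Dell solution.

```python
import struct

VXLAN_UDP_PORT = 4789         # IANA-assigned UDP destination port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08   # "I" flag: the VNI field carries a valid value


def build_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for a 24-bit VXLAN Network Identifier."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) + reserved (24 bits)
    # Word 2: VNI (24 bits) + reserved (8 bits)
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from the first 8 bytes of a VXLAN payload."""
    _, second_word = struct.unpack("!II", header[:8])
    return second_word >> 8


if __name__ == "__main__":
    header = build_vxlan_header(10100)   # hypothetical VNI for one tenant segment
    print(header.hex(), parse_vni(header))
```

Each Layer 2 segment stretched between data centers maps to one VNI, which is how the overlay keeps tenant segments apart on a shared Layer 3 underlay.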

GRE is an IP encapsulation protocol that tunnels any Layer 3 protocol, including IP itself, over a point-to-point interconnection between two sites.
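As a rough illustration of that encapsulation, the sketch below adds and removes the basic 4-byte GRE header defined in RFC 2784. It assumes no optional checksum, key, or sequence fields, and it omits the outer IP header (IP protocol number 47) that a real tunnel endpoint would also add. The function names are hypothetical.

```python
import struct

ETHERTYPE_IPV4 = 0x0800   # GRE protocol type when the tunneled packet is IPv4


def gre_encapsulate(inner_packet: bytes, protocol_type: int = ETHERTYPE_IPV4) -> bytes:
    """Prefix a packet with a basic GRE header (no checksum, version 0)."""
    flags_and_version = 0x0000
    return struct.pack("!HH", flags_and_version, protocol_type) + inner_packet


def gre_decapsulate(gre_payload: bytes) -> tuple[int, bytes]:
    """Return (protocol_type, inner_packet) from a basic GRE payload."""
    flags_and_version, protocol_type = struct.unpack("!HH", gre_payload[:4])
    if flags_and_version != 0:
        raise ValueError("this sketch handles only the basic 4-byte GRE header")
    return protocol_type, gre_payload[4:]


if __name__ == "__main__":
    tunneled = gre_encapsulate(b"inner-ip-packet")
    print(gre_decapsulate(tunneled))
```

The protocol type field is what lets GRE carry different Layer 3 protocols: the receiving endpoint reads it to decide how to handle the inner packet.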

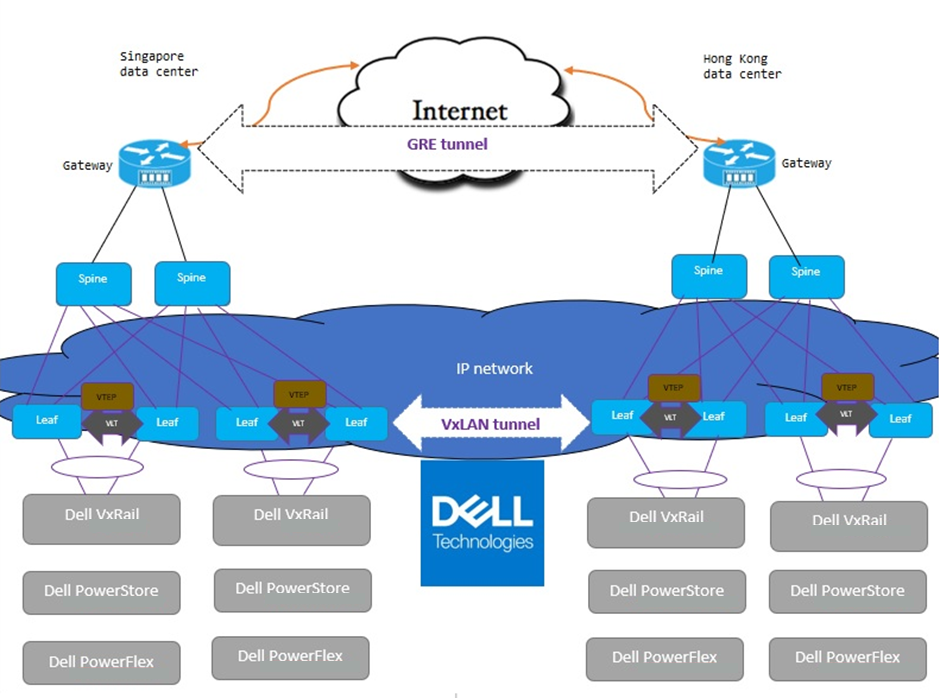

With BGP EVPN VXLAN and GRE, any organization can interconnect their data centers across public internet in a secure (encrypted) manner. For example, data centers can securely connect over a local connection network—even if they are geographically distant or in different countries. The following figure illustrates that changing the infrastructure to leverage the existing Dell products portfolio on the production environment (VxRail, PowerStore, and PowerFlex) does not impact performance.

Figure 2. Data center interconnection over public internet with BGP EVPN for VXLAN and GRE tunneling
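One practical consequence of tunneling is reduced packet size inside the tunnel. The sketch below estimates the largest inner IP packet that fits without fragmentation. It assumes IPv4 transport, no optional header fields, and VXLAN traffic carried inside the GRE tunnel. The post does not state the exact encapsulation order, so treat the combined figure as an illustration only.

```python
# Header sizes in bytes for the IPv4 case, without optional fields.
INNER_ETHERNET = 14   # inner Ethernet header carried inside VXLAN (no 802.1Q tag)
VXLAN = 8
UDP = 8
IPV4 = 20
GRE = 4               # basic GRE header without checksum, key, or sequence number

VXLAN_OVERHEAD = INNER_ETHERNET + VXLAN + UDP + IPV4   # 50 bytes
GRE_OVERHEAD = GRE + IPV4                              # 24 bytes


def inner_mtu(transport_mtu: int, vxlan: bool = True, gre: bool = True) -> int:
    """Largest inner IP packet that fits in the transport MTU without fragmentation."""
    overhead = (VXLAN_OVERHEAD if vxlan else 0) + (GRE_OVERHEAD if gre else 0)
    return transport_mtu - overhead


if __name__ == "__main__":
    print(inner_mtu(1500, gre=False))   # VXLAN only
    print(inner_mtu(1500))              # VXLAN carried inside GRE
```

Under these assumptions, a 1500-byte internet path leaves 1450 bytes for workloads behind VXLAN alone and 1426 bytes when GRE is added, which is why overlay designs commonly raise the underlay MTU where the path allows it.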

BGP EVPN for VXLAN and GRE tunneling provides the following benefits:

- Increases scalability in virtualized cloud environments

- Optimizes existing infrastructure with virtualization, scalability, flexibility, and mobility across data centers

- Maintains the security of the data center

Contact Dell Technologies for more details on this solution. Dell Technologies is excited to partner with you to deliver high-value services for your business.

Resources

For more information, refer to the following sections of the VMware Cloud Foundation on VxRail Multirack Deployment Using BGP EVPN Configuration and Deployment Guide:

Related Blog Posts

Be more agile with EVPN Multihoming (MH)

Thu, 04 Jan 2024 16:51:10 -0000


Let’s talk about enhancing your basic EVPN fabric. In a typical data center EVPN fabric, an end host uses dual-homed connections to the leaf or Top of Rack (ToR) switches.

The ToRs are usually a pair of switches configured with multi-chassis link aggregation (MC-LAG) to provide end-host link redundancy if one of the ToRs fails.

These links are Layer 2, with spanning tree deployed on the fabric. Spanning tree typically blocks half of the links to avoid network loops. As a result, the fabric bandwidth is cut in half. This only happens when the LAG consists of single links, as demonstrated in Figure 2.

However, if there were a way to attain link redundancy, flexibility, and full link bandwidth utilization, things could be more interesting in the EVPN landscape.

Dell Enterprise SONiC 4.2 brings EVPN multihoming (MH) into the data center. It is a standards-based replacement for multi-chassis link aggregation (MC-LAG) and legacy stacking technology.

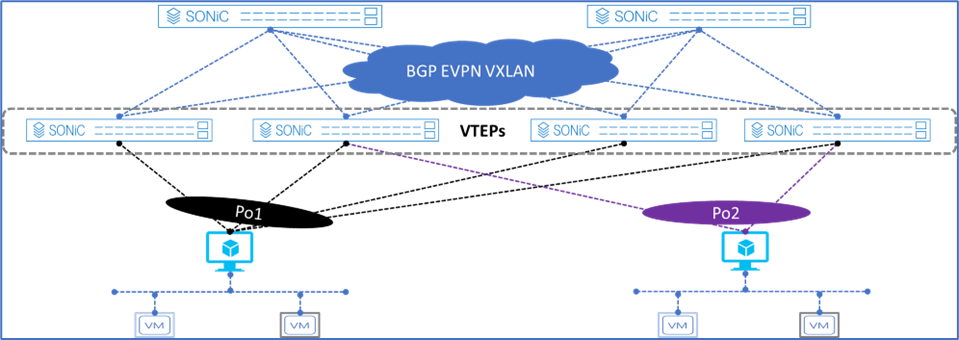

Figure 1. Dell Enterprise SONiC EVPN MH

Figure 1 shows the supported Dell Enterprise SONiC EVPN MH deployment. It shows the maximum number of VTEPs that can be connected to a single end host.

These connections are independent: each link in the link aggregation group (LAG) can connect to a different upstream switch, and these upstream switches do not have to be interconnected.

Deployment simplicity is the main benefit of EVPN MH, as all the connections only have to be connected from the end-host or server to the switches.

Achieve end host enhanced connectivity and link efficiency with EVPN MH

In an EVPN fabric, especially a data center fabric, the end hosts or servers are dual-homed to a pair of Top of Rack (ToR) switches that provide link redundancy. This deployment is common, and it uses MC-LAG.

The other deployment option is known as stacking. This option involves several switches stacked together, with a primary switch acting as the controller of the stack. All end hosts or servers are connected to each of the switches that are part of the stack.

Note: A stack consisting of a single switch is also possible, but rarely deployed.

Both deployments offer link and device redundancy, but they have some limitations that EVPN MH can overcome. The benefits and limitations for each deployment option are described in the following lists.

MC-LAG deployment

- A minimum of two ToR/Leaf switches is required

- A single switch deployment is not supported

- An end host or server can connect to at most two ToR/Leaf switches at any given time

- All connections from the end-host or server are Layer 2 based

Stacking deployment

- A maximum of eight switches are stacked with one primary or controller switch

- Specific types of stacking cables are required to form the stack

- A single switch deployment is not supported

- All end hosts or servers connect to each switch that is part of the stack to maintain link redundancy, which complicates cable management

- All connections from the end-host or server are Layer 2 based

EVPN multihoming deployment

- A minimum of one ToR/Leaf switch is required

- An end host or server can connect to up to four separate ToR/Leaf switches (VTEPs) at any given time

- All links from the end-host or server to the VTEPs are active
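The switch-count limits in the three lists above can be captured in a small lookup, sketched below. The numbers come directly from the lists as written. The names `AttachmentLimits`, `LIMITS`, and `is_supported` are hypothetical helpers for illustration, not part of Dell Enterprise SONiC.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AttachmentLimits:
    min_switches: int   # fewest switches the deployment option supports
    max_switches: int   # most switches a host (or stack) can span


# Values taken from the deployment lists above.
LIMITS = {
    "mc-lag": AttachmentLimits(min_switches=2, max_switches=2),
    "stacking": AttachmentLimits(min_switches=2, max_switches=8),
    "evpn-mh": AttachmentLimits(min_switches=1, max_switches=4),
}


def is_supported(mode: str, switch_count: int) -> bool:
    """Check whether a switch count falls within the listed limits for a mode."""
    limits = LIMITS[mode]
    return limits.min_switches <= switch_count <= limits.max_switches


if __name__ == "__main__":
    for mode in LIMITS:
        print(mode, [n for n in range(1, 9) if is_supported(mode, n)])
```

Written this way, the comparison shows that EVPN multihoming is the only option of the three that covers both a single-switch deployment and a host attached to more than two independent switches.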

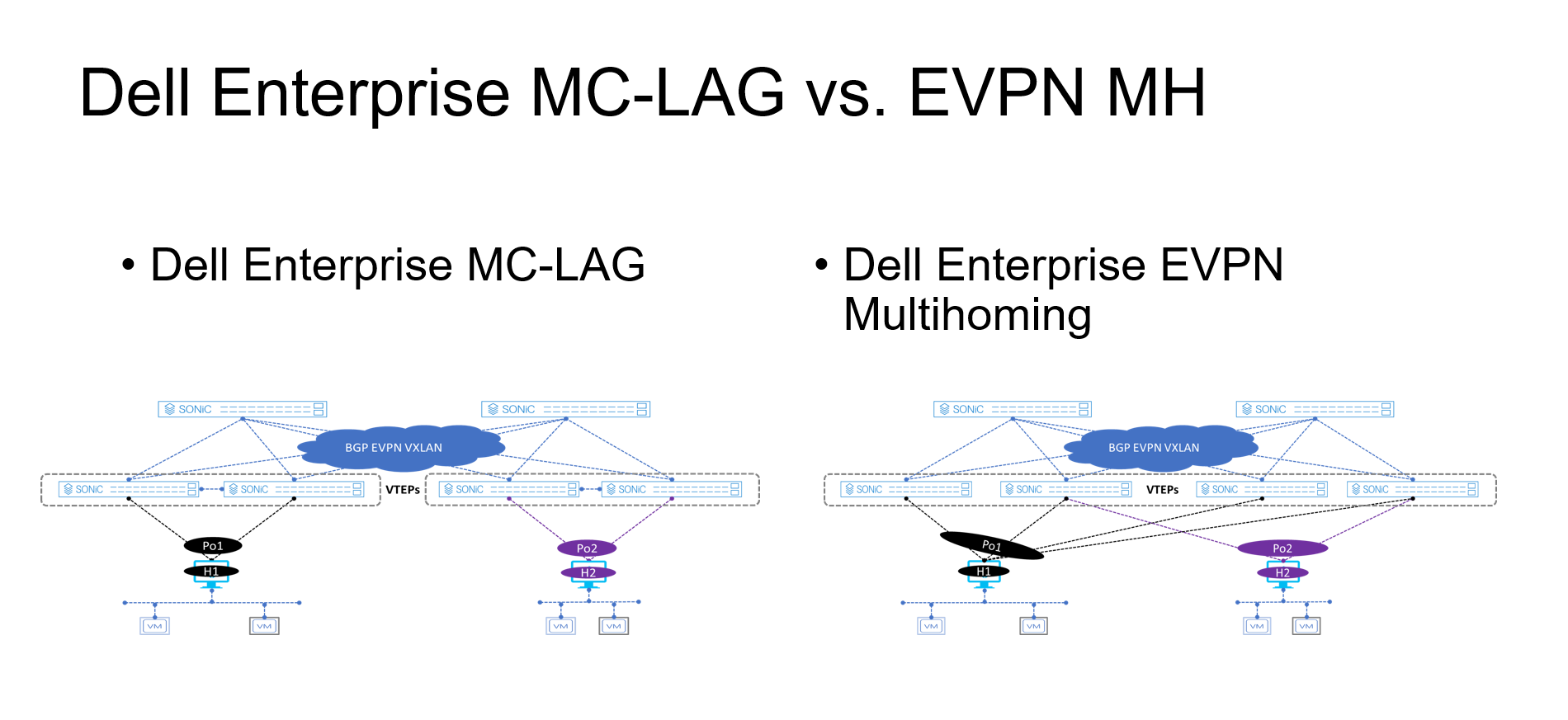

Figure 2. MC-LAG vs. EVPN multihoming deployment

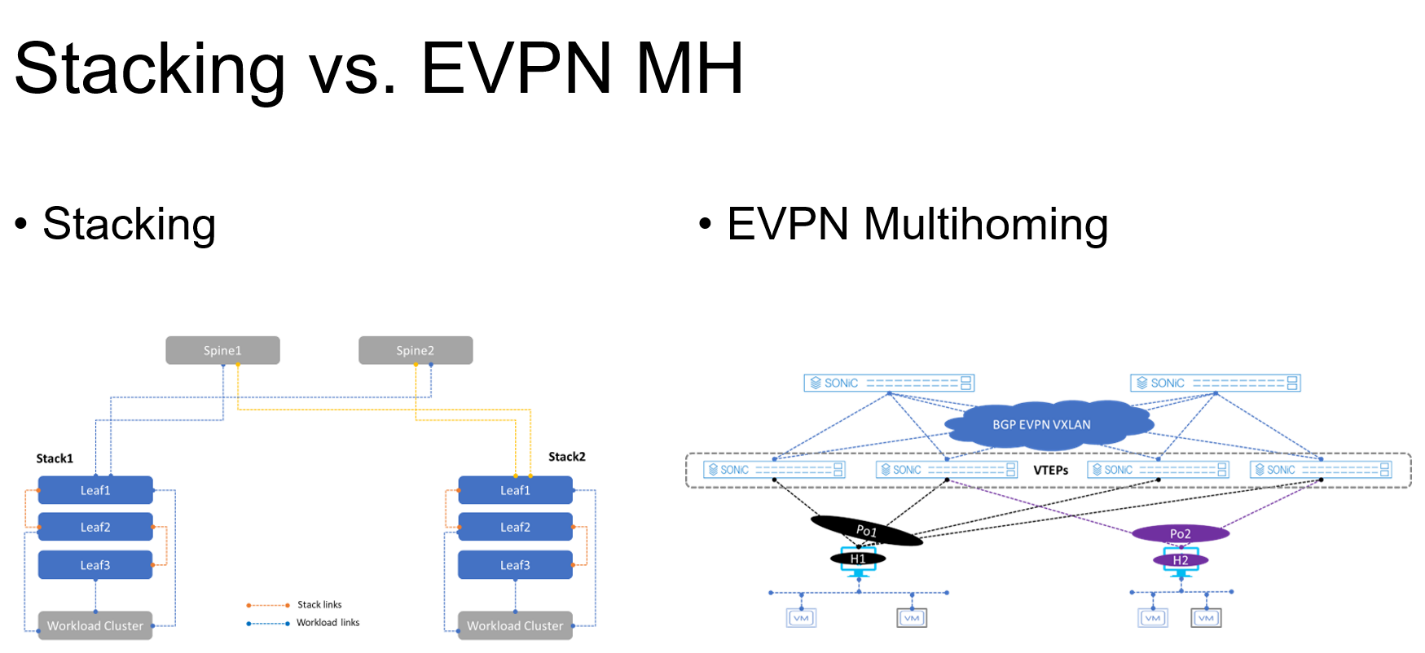

Figure 3. Stacking vs. EVPN multihoming deployment

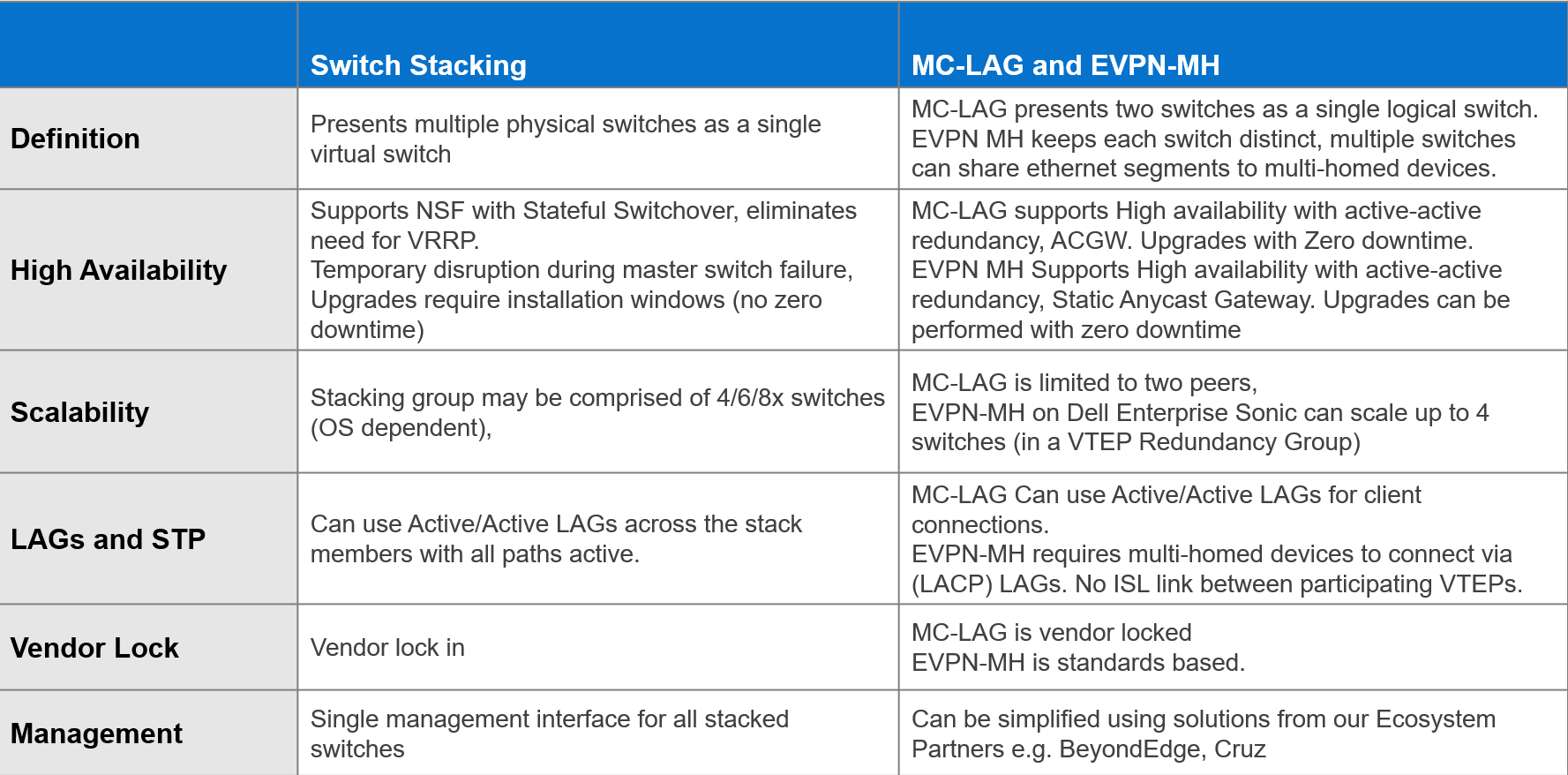

The advantages offered by EVPN multihoming are clear when compared with the traditional stacking and MC-LAG. Table 1 summarizes these differences.

Table 1. Stacking compared to MC-LAG and EVPN-MH

EVPN offers an upgrade to the legacy Layer 2 VPN technology. EVPN should be considered each time a new fabric is deployed, especially when virtualization is one of the workloads.

Dell Enterprise SONiC 4.2 makes adopting EVPN in the data center even simpler.

Additional resources

Dell Enterprise SONiC 4.2.0 User Guide (login required)

New Deployment Option for SmartFabric Services with VxRail

Fri, 15 Sep 2023 20:06:45 -0000


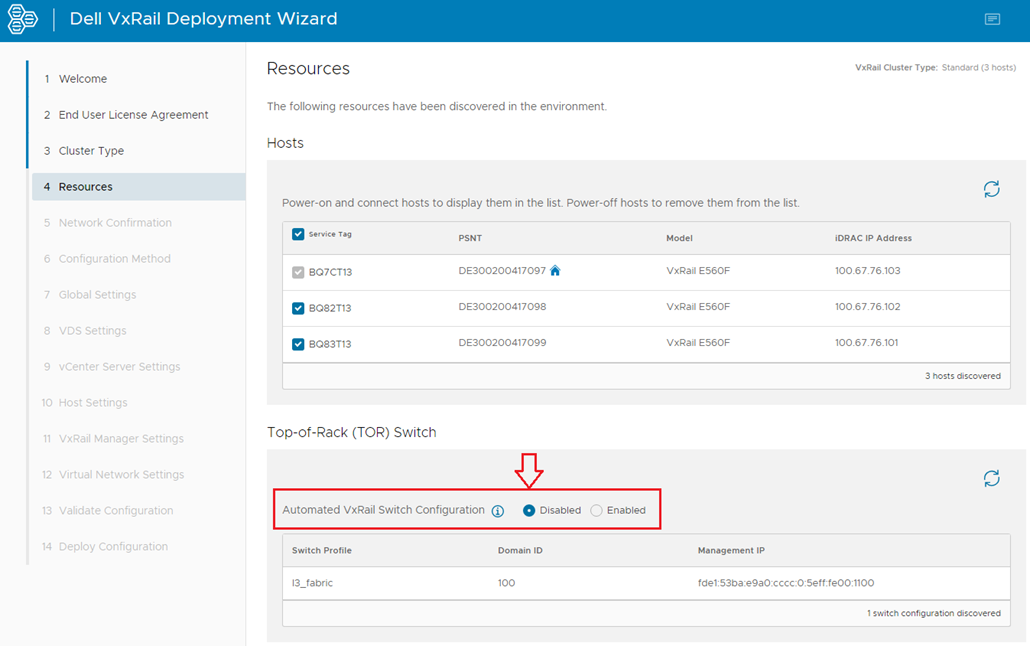

VxRail 7.0.400 introduces an option that opens up new functionality for SmartFabric Services (SFS) switches with VxRail deployments. This new option allows you to choose whether automated SmartFabric switch configuration is enabled or disabled during VxRail deployment.

In VxRail versions earlier than 7.0.400, automated SmartFabric switch configuration during deployment is always enabled, and there is no option to disable it. The automation configures VxRail networks on the SmartFabric switches during deployment, but it limits the ability to support SFS with VxRail in a wide range of network environments after deployment. The limitation arises because the automation applies SFS-specific internal settings to VxRail Manager, and these settings restrict the ability to expand the network or add products in some environments.

Starting with VxRail 7.0.400, the VxRail Deployment Wizard provides an option to set Automated VxRail Switch Configuration to Disabled. Dell Technologies recommends disabling the automation, as shown below.

With automated switch configuration disabled, the VxRail Deployment Wizard skips the switch configuration step. The advantage of bypassing this automation during deployment is that SFS and VxRail can be supported across a wide array of network environments after deployment. It also simplifies future VxRail upgrades.

Disabling automated switch configuration only affects SmartFabric switch automation during VxRail deployment or when adding nodes to an existing VxRail cluster after deployment. The SFS UI is used to place VxRail node-connected ports in the correct networks instead of the automation.

Automated SmartFabric switch configuration after VxRail is deployed is still supported by registering the VxRail vCenter Server with OpenManage Network Integration (OMNI). After registration, networks that are created in vCenter continue to be automatically configured on the SmartFabric switches by OMNI.

In summary, for new deployments with SFS and VxRail 7.0.400 or later, it is recommended that you disable the automated switch configuration during deployment. This action will give you more flexibility when expanding your network in the future.

Resources

Dell Networking SmartFabric Services Deployment with VxRail 7.0.400